Abstract

In order to use a value retrieved from a Knowledge Graph (KG) for some computation, the user should, in principle, ensure that s/he trusts the veracity of the claim, i.e., considers the statement as a fact. Crowd-sourced KGs, or KGs constructed by integrating several different information sources of varying quality, must be used via a trust layer. The veracity of each claim in the underlying KG should be evaluated, considering what is relevant to carrying out some action that motivates the information seeking. The present work aims to assess how well Wikidata (WD) supports the trust decision process implied when using its data. WD provides several mechanisms that can support this trust decision, and our KG Profiling, based on WD claims and schema, elaborates an analysis of how multiple points of view, controversies, and potentially incomplete or incongruent content are presented and represented.

Keywords

Introduction – WD as information source

Wikidata [26] (WD) is currently one of the most extensive publicly available Knowledge Graphs (KGs). Numerous other websites are regularly using it, services (search engines, personal assistants, libraries, and museums [9,27]), applications (Daimler, Lufthansa, Novartis, data journalists, …), and research projects [11]. It is regarded by many as part of the Semantic Web (e.g., [16]).

In some applications using KGs [3], one needs to obtain the value of some property of an entity (item in WD parlance) to make some computation. For example, finding the capital city of a country or province; obtaining a person’s birth or death date; determining the author of some artifact; obtaining some physical property of a known substance; etc. By design, WD contains statements based on claims about items. In principle, there is no guarantee of the truthfulness of claims.

Suppose a person wants to claim some inheritance from Ana Maria Imeni by being a descendant of Menotti Garibaldi, the son of the famous revolutionary Giuseppe Garibaldi. According to WD, there are two claims for who his mother was; see Fig. 1. Which one is true? Can a person have two mothers? If yes, under which circumstances?

Menotti Garibaldi mothers.

We have argued elsewhere [22,23] that crowdsourced KGs, or KGs constructed by integrating several different information sources of varying quality, must be used via a Trust Layer. Given the goal of carrying out some action, this layer is where a decision is made about the veracity of each relevant claim in the underlying KG, necessary to carry out that action. Consequently, the data user should have their own trust criteria to accept a claim as valid and use it in an intended computation. This additional layer, with some generalizations, has already been proposed regarding statements in WD, and more generally about the Semantic Web, [2]. It has also been recognized in the context of polyvocal KGs [25] that incorporate multiple voices (or points-of-view) about a subject.

The present work aims to assess how well WD supports the trust decision process implied when using its data. The remaining sections detail WD structural and ontological features and our KG Profiling approach used to evaluate those in WD to support trust decisions through a Trust Layer. We examine the explicit support for disagreements about the veracity of statements. We also look at incompleteness and how well constraint violations capture them. We investigate the support for detecting incongruences and what additional information it provides to resolve them in a trust decision. We also look closely at the support for provenance from a broader perspective. Finally, we present an analysis of the observations made on how well WD supports a trust decision based on the mechanisms mentioned earlier, discuss related work, and point to ongoing and future work.

The two main components of interest in WD are Entities and Properties. Entities are uniquely identified by an Item ID (aka QNode) and properties by a Property ID (aka PNode). Figure 2 about

An example of a WD statement, composed by a claim (inside the green rectangle) with qualifications and reference.

Given an item, WD allows it to be the subject of multiple statements with different values for the same predicate, i.e., expressing different perspectives about a subject even if they contradict one another. While this may be entirely appropriate in some cases (e.g.,

The intended semantics are further characterized by specifying constraints over these properties, which formulate restrictions on how these properties should be used within statements in WD.1

Another constraint of interest is

Although, there are situations when no context applies (e.g., for pure inverse-functional properties such as

Sometimes an item should have only one value related to a property, which ideally is expressed through the

Brazil’s population of 2020 and 2021 years where 2021 (most recent available) is marked as preferred (green).

In other situations, multiple values in a query result are not necessarily controversial. This can be the result of an improperly (incompletely) formulated query or the result of a shortcoming in the data present in the KG. For instance, if someone is interested in who is the

Provenance in WD is given mainly through references. A referrer is any property whose type is

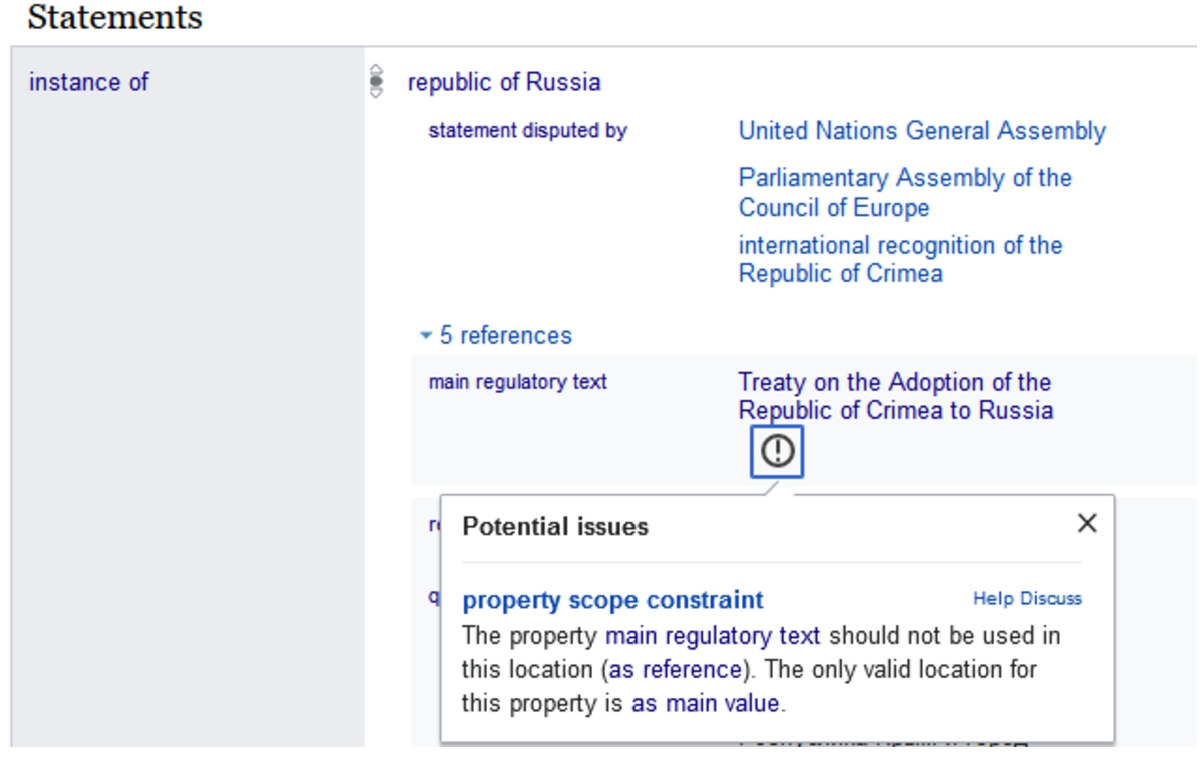

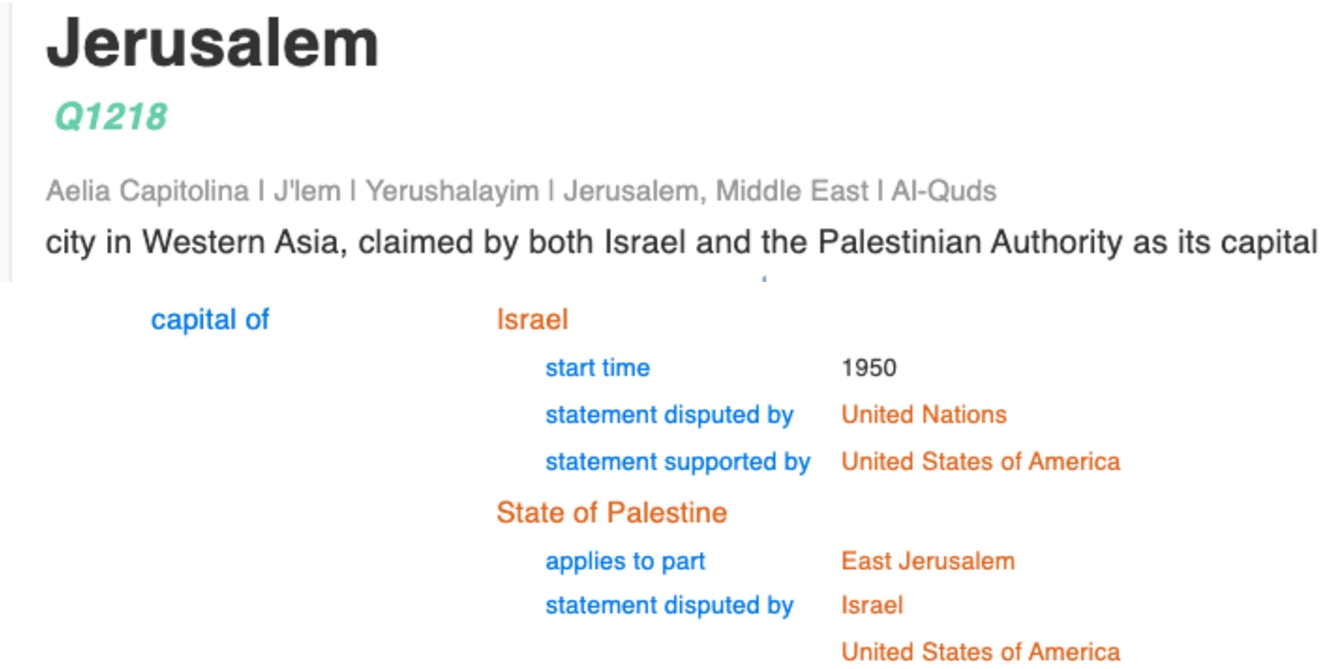

In addition to these structural mechanisms, WD also provides ontological support recording controversial statements by explicitly marking them with the

An example of a dispute with multiple points of view.

Interestingly, however, there are many cases in which a disagreement is recorded using this qualifier, but no alternative value is claimed. For example, the

An example of an one-sided disagreement.

According to the Cambridge Dictionary [6], a controversy is a disagreement, often a public one, that involves different ideas or opinions about something; Merriam–Webster’s [7] definition is a discussion marked especially by the expression of opposing views, and the Oxford English Dictionary [8] defines it as disagreement, typically when prolonged, public, and heated. From these definitions, one could interpret the occurrence of statements with multiple different values for the same item in a KG as indicators of potential controversies. A more careful examination of such occurrences indicates the existence of three different types of situations – incompleteness, incongruences, and controversies. We discuss each one next.

Following the dictionary definitions of controversy given previously, one can rephrase them, for our purposes, as an incongruence that also involves a discussion and disagreement among authors of the statements involved, possibly over an extended period. For crowdsourced online resources such as WD and Wikipedia, a controversy is manifested through the talk pages associated with an entry, as well as by patterns in their edit history (see [5,20,21,29]). Such analysis is outside the scope of this paper and is left for future work; in this respect, we will focus on incomplete and incongruent content in WD.

We have already pointed out that to use a value stated in a WD claim for some computation, the user should, in principle, ensure that s/he trusts the veracity of the claim, i.e., considers the statement a fact. This points to the need for an additional layer above the KG, which we call the Trust Layer, where trust policies and decision criteria are represented. The trust decision is made (for each user) based on the intended use (action) for the data retrieved from WD and additional information about the context in which the statement should be considered. This additional information comes in three forms, in WDs data model [16]:

Qualifiers, statements about statements, better characterize the relation being asserted. For example, ≪

WD statements have a rank, which can be

Statements can also have references (or sources) to support their veracity. A reference can be a URL of some external source or a link to a WD item that supports the claim being made. References cannot be associated with qualifiers of a statement since the WD data model does not follow a multi-layer graph model [1].

Even though the trust process applies to statements that assert a single value for a property of an item, it is more crucial in situations where there are multiple statements about a property of an item each with a different value, and a single value is needed for some computation. In such cases, beyond applying the trust process to each asserted value, the user is further confronted with the additional decision of which value to choose.

Given the additional information, the user must apply their trust policies, taking them into account to decide whether to accept or reject the claim’s veracity. An important criterion often used in trust policies is provenance – e.g., who made a claim, or how the claim was established. Provenance may be given through references or through some of the qualifiers (e.g.,

Unfortunately, the id of the user who asserted a statement is not directly available as an item in WD itself, although it can be retrieved by accessing the underlying database (Wikibase). This significantly hampers the ability to build a trust layer on top of WD alone, as trust policies relying on the authorship of a statement (claim) would not be able to be evaluated.

This paper will not elaborate further on how such a Trust Layer could be defined and implemented. Rather, we analyze using our KG Profiling approach the current status of WD to support a trust process. This support entails properly recording multiple points of view, explicitly representing controversies, characterizing potentially incomplete or incongruent content, and providing provenance information.

KG Profiling focuses is on computing frequencies and statistical measures to understand the KG characteristics in terms of the structure and the contents [15]. It can help the initial KG exploratory stages in order to identify whether the dataset can satisfy the current information need or complementary resources are necessary. Our KG Profiling approach aims to:

Compute the usage of the disputed by qualifier (P1310) by predicate to explicitly express disagreements. Compute violations of the required qualifier constraint by predicate to identify incomplete statements. Compute missing required qualifiers to identify which contexts are affected by incompleteness. Compute ranking usage by predicate to identify community consensus coverage. Compute statements with multiple values and no qualification by predicate to identify potential incongruities. Compute reference usage by predicate to identify provenance coverage.

This section presents WD profiling data concerning incompleteness, incongruences, ranking, references, and provenance. This data was compiled using the DWD dump provided by the KG Center at ISI,4

First, we present some general statistics about WD to give an overview of this KG. There are 559,038,971 claims using 9,653 properties as predicates, and 141,983,745 qualifications are associated with claims using 9,906 properties as qualifiers. There are 10,089 properties, and 90,00% of them have a

All data collected, statistics, scripts, and queries used for conducting this research can be found in

Property scope constraint distribution

We observe that only 363 properties have a

Accumulated Frequency, in all tables, is the sum of the previously reported frequency.

While it is difficult to provide a definitive explanation why, it is possible to detect that some users who participated in the discussion about the creation of this property (

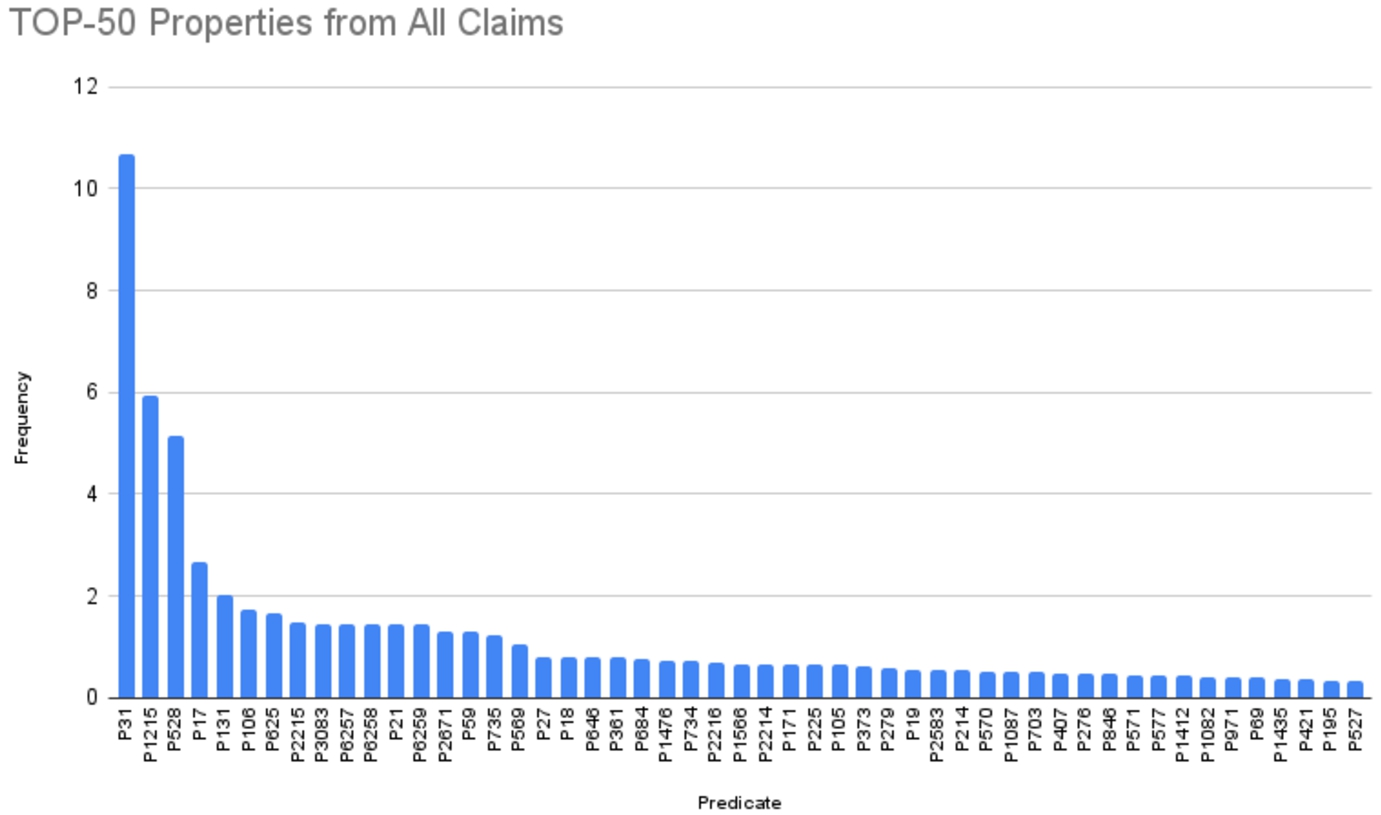

Top-50 properties used as predicates from claims.

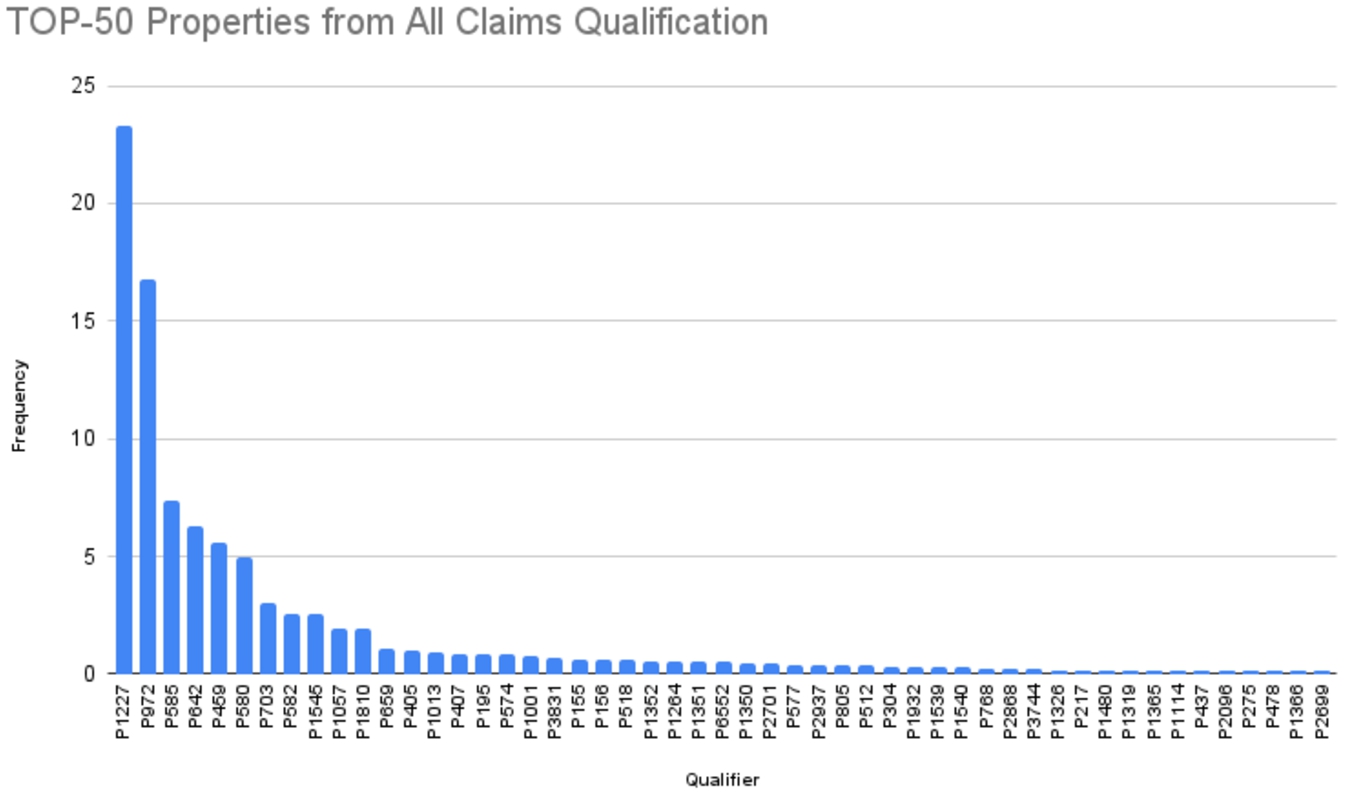

Top-50 properties used as qualifiers from qualifications.

Top-10 predicates in all claims. The frequency is relative to the total number of statements

Top-10 qualifiers in all qualifications. The frequency is relative to the total number of qualifications

We identified that only 21,00% of WD claims have qualifications. Due to its crowdsourced and distributed authoring model, incompletenesses often creep into the WD KG [19,24].

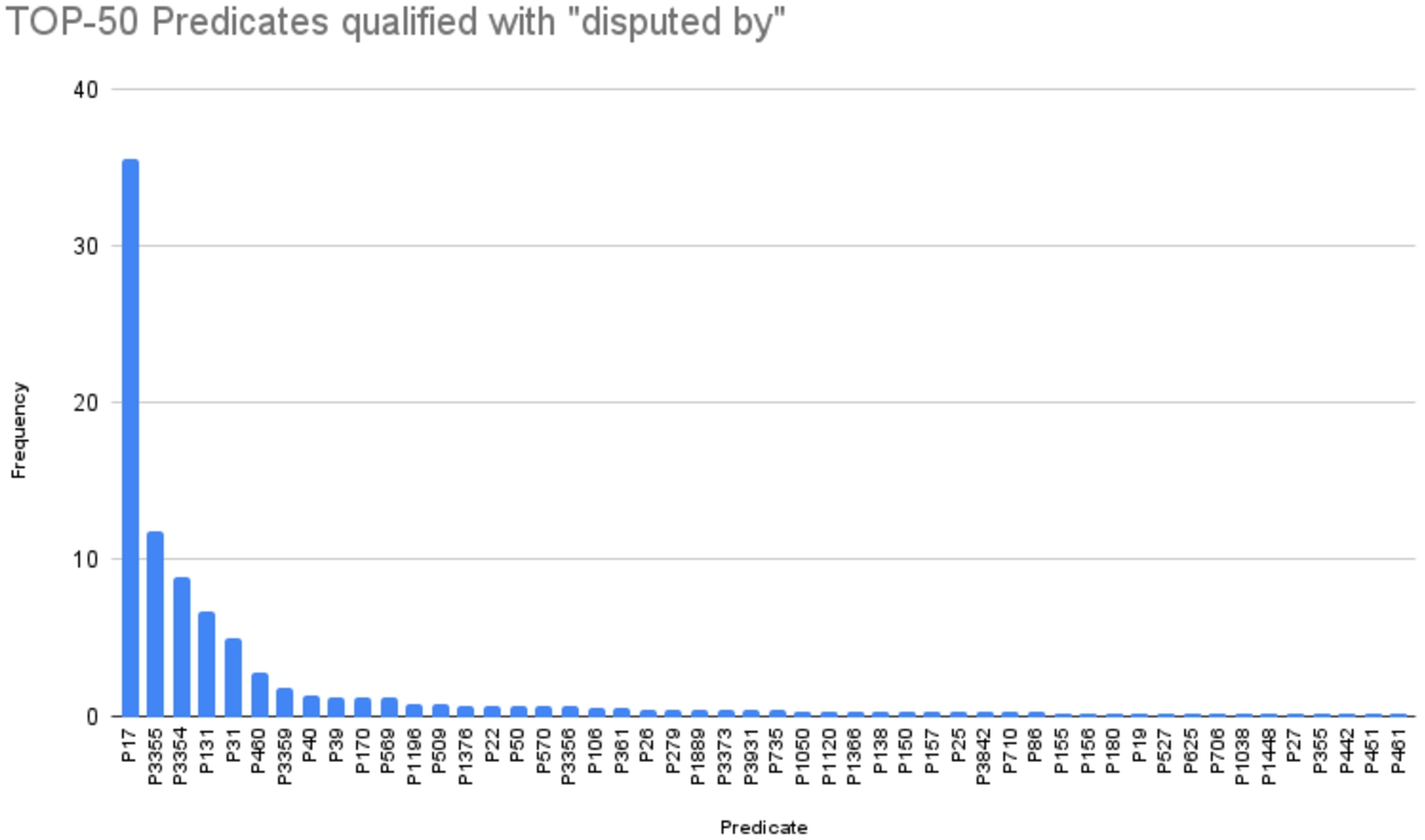

We examine here the detailed record of disagreements via the use of the

Disputed by frequencies

Next, we present statistics about explicit disputes in WD. There are 157710

According to the Property Talk page

Top-50 most frequent properties in claims qualified with

Property types for the top-10 claims predicates qualified with

This profiling information indicates that only a tiny fraction of statements in WD record explicit disagreements (0,0003%), perhaps unsurprisingly involving topics that are already controversial in the physical world. The statements capturing these disagreements often do not use the available properties in a way that can support a user in deciding which statement to accept as a fact if they must do so. This is further hampered by limitations of the WD data model, for example, needing to give some provenance information (or reference) to a qualifier statement (since qualifiers cannot be qualified). For instance, it might be essential to have a reference to the qualifier statement

Qualifiers and constraints are essential mechanisms to provide a context in WD. Looking at all statements, there are 141,983,745 qualifications over 116,211,413 claims, using 5,082 properties as predicates; qualifications were associated with claims using 9,905 properties as qualifiers. This means that only roughly 21,00% of 559,038,971 statements provide some context information using qualifiers.

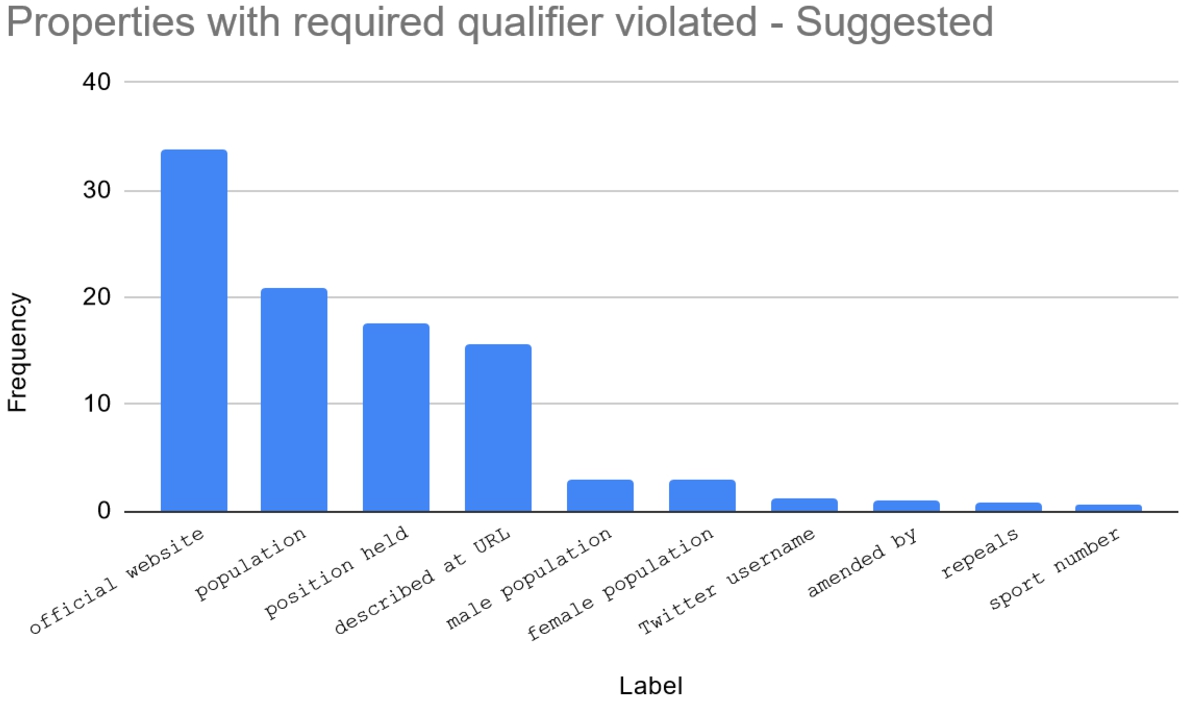

One can identify contextually incomplete statements by looking at violations of the required qualifier constraint. A

We found 17,934,765 claims using predicates with mandatory required qualifier and 7,824,405 with suggested required qualifier constraint. Among them, there are 359,445 with mandatory required qualifier violation, representing 2% of the first group and 0,0643% of all claims. In comparison, 3,448,673 violated the suggested required qualifier constraint of their predicates, meaning nearly 44% of the second group and 0,62% of all claims. Claims with these constraint violation are those where at least one missing qualifier was found. Figures 10 and 11 show the top-10 most frequent predicates used in those claims.

Top-10

Top-10

There were 395,604

Top-10 missing qualifiers mentioned in required qualifier mandatory constraint. The frequency is relative to the total number of qualifications absence. Highlighted predicates involve time

Top-10 missing qualifiers mentioned in required qualifier suggested constraint. The frequency is relative to the total number of qualifications absence. Highlighted predicates involve time

The qualifiers

Some properties, defined as having a

Missing restrictive qualifiers from mandatory required qualifiers constraints

In the analyzed dataset, there were 5,480,866 preferred ranked claims, nearly 1,00% of all claims, and only 71,609 have the qualifier

According to the Property Talk page

Property types and frequency for the top-10 preferred ranked claims the frequency is relative to the total number of preferred claims

Ranking statements, as preferred, can be regarded as a mechanism to indicate a community consensus about the veracity (or at least the likelihood) of a statement. From the trust process point of view, it is a proxy for a more detailed determination process that each user goes through and does not consider for what purpose that claim will be used.

This is supported by observing, for example, that nearly 72,00% of ranked statements (for

The statistics show that this mechanism is seldom used (1,00% of the statements) and even less frequently justified. Consequently, if a user must choose among multiple possible values for a property, s/he would rarely be able to rely on ranking. Furthermore, little support is given to help them determine if the criteria used by the community match their own for the intended action.

Incongruences among statements where multiple values are present for the same property of the same WD item should be detected after evaluating their values and contextual information. In the analysed dataset there are many instances of statements that assert multiple different values for the same property of the same item and do not provide any qualifier to differentiate among them. Such statements indicate either a gap in the recorded information in WD or potential incongruences.

Multiple value statements statistics

Next, we present statistics about multiple value statements in WD and property constraints:

There are 132,552,453 statements with multiple values for the same item and the same property corresponding to 23,71% of all statements.

We separate this set into statements with and without qualifiers. Of these, 91,148,503 have at least one qualification (approximately 16.30% of all statements).

Overall, 1,333,012 statements with multiple values, representing 0.24% of all statements, violate one or more required qualifier constraints.

Among the top-10 qualifier absences there are 3 time-related properties:

In a best-case scenario, where one assumes that all violations have been corrected, only 16.54% of all statements with multiple values would provide some contextual information. There are still 41,403,950 statements; 31,30% of those with multiple values (7,41% of all statements) do not have any qualifications.

We computed

Top-10 required qualifiers in multiple valued statements. The frequency is relative to the total number of all statements with multiple values. Highlighted predicates involve time

Top-10

We also checked the

Top-10

It is important to note that not all cases where multiple values are present, are inconsistent, as there may be a functional dependency or logical implication between them. Even though the statements are fully qualified and referred to, the incongruence may be only detected while applying the trust process to each asserted value according to task-specific criteria. Suppose a person has a biological mother and a foster (legally defined) mother. Two claims using predicate

We focus only on properties used as reference qualifiers in qualifications since, as such, they serve to provide provenance information.

Among the top-10 properties indicating the use of a source as qualifiers, shown in Table 11 and representing 2,11% of all qualifications, seven have all three possible scopes (

Top-10 properties to indicate a source used as qualifiers. Frequency is calculated based on qualifications using a property of type Wikidata property to indicate a source

Top-10 properties to indicate a source used as qualifiers. Frequency is calculated based on qualifications using a property of type Wikidata property to indicate a source

Because the ISI dump does not include references, we extracted them using the WD Virtuoso SPARQL EnPoint12

Top-10 properties used as referrers in references. External identifiers are highlighted

Data type distribution of referrers.

It should be noted that provenance, in its broader sense, includes not only the sources but also the activities and other elements that are involved in the creation of an artifact (see, for example, the Prov-DM data model13

Piscopo and Simperl (2019) [19] analyzed 28 previous papers about WD quality metrics using different dimensions and, similarly to Bizer et al. [2], they also considered that (i) data quality depends on the task and (ii) its relevance to a task is user-subjective. Although the authors stated that completeness is the dimension covered by the most significant number of papers, different from those, we chose to focus on qualification completeness based on required qualifiers violations. Such constraint violations may lead to incongruence and controversies in interpreting the data since it affects claims contextualization.

The authors of [24] developed a framework to detect and analyze low-quality statements in WD. They propose three metrics to identify WD Quality: Community-based indicators, Deprecation-based indicators, and Constraints-based indicators. Differently from those concerned with WD data quality in general, our analysis aims to detect WD incongruences, incompleteness, and controversy that affect criteria often used in trust policies, such as provenance and timeliness.

Regarding Provenance, [18] developed an approach to evaluate the relevance and authoritativeness of Wikidata references based on two complementary methods: microtask crowdsourcing and machine learning. Our analysis is complementary, looking at constraint violations and analysis of types of references.

WD Property Talk pages have useful information such as the property’s current usage, query examples, and report about constraint violations. WD also has definitions of schemas in the form of shapes (subgraph patterns to describe a concept) using ShEx shape expressions. Some schemas, besides predicates with

In [12], the authors proposed a set of SPARQL queries, formalizing all current WD property constraint specifications. These queries can be used to verify if a statement violates a constraint in online mode. Our profiling approach was developed using the Kypher language in the KGTK toolkit [14]. Still, we used the same query logic to verify some constraint violations of interest among all WD claims in batch mode. We complement our data using SPARQL queries to extract references data from an EndPoint.

Statistical methods to automatically build knowledge graphs create a simplified representation of knowledge where diverse perspectives tend to be resolved as conflicts. This approach introduces bias and loses traceability [25]. According to them, data is created based on a particular perspective or view. Data models that advocate being perspective-aware should be able to trace provenance in a broader sense, including creation and transformations.

WD, as a crowdsourcing effort where a voice corresponds to the data contributor perspective, enables the representation of provenance of claims at the individual level. Still, references are associated only with the data provider. The viewpoint of data contributors is unknown, and rank annotation of some statements reflects the consensus from a particular domain community. Complementary to this perspective, another viewpoint must be considered when building a contextualized knowledge graph to support the Trust Layer: the data consumer. The Trust layer will apply trust policies to this perspective over data representing different viewpoints.

Bizer et al. [2] argued that web information should have its quality assessed according to task-specific criteria before being used. Their work is supported by Quality-based information filtering policies, that is, policies that users may choose for deciding if some piece of web information may be accepted or rejected to accomplish a specific task. They developed the WIQA – Information Quality Assessment Framework composed of a set of software components dealing with uncertain information. Their proposed quality layer is similar to what we envisage for the trust layer for KGs to support the trust decision process implied when using its data.

Generating statistics on specific domains may have different characteristics from those we use in our approach. In [17], in addition to their tool to visualize research profile, the authors also present how WD has been used to store and disseminate bibliometric information and present scientometric statistics extracted from this information. Our approach can be applied to any sub-domain present in WD, such as Academics, Astronomy, Genomics, Biology in general, etc. Since the goal is to analyze WD support for the trust process through multiple points of view, controversies, and potentially incomplete or incongruent content.

Before conclusion, we go over again, in Table 13, our main findings from WD Profiling about WD mechanisms and data to support trust process.

WD profiling summarization

WD profiling summarization

To gain some insight on what these statistics tell us, there are several factors to consider, to whit:

Some statements are missing qualifications because of a simple lack of information at creation time, and authors expect that either themselves or other users will eventually fill in this information when it becomes available, similar to Wikipedia missing links in page contents;

Constraints for some properties are possibly mis-formulated – i.e., their intended semantics need to be understood by the user community that creates statements. In some sense, constraints reflect a community consensus about the semantics of properties that all users may not share. Furthermore, constraints do admit violations in some exceptional cases;

Some properties require qualification, but this has yet to be captured in constraints when they were defined, despite the editorial process. This can also happen if the understanding of the semantics of the property evolves due to new knowledge being brought in or created;

Finally, users are simply ignorant that certain properties should be qualified (although the editing interface warns them of this, and they are flagged in the standard WD browser;

Incorrect or incomplete import scripts to automate the ingestion of new sources or unavailability of that information in the imported source.

We summarize here our observations about the various mechanisms provided by WD to support a trust decision about one of its claims.

Although the explicit recording of disagreement may seem like a natural way to capture these real-world situations in WD, only a negligible fraction of statements and a very small number of properties are present using this mechanism. The properties used in these statements involve mostly territory, medicine, diseases, and relations among people. The analysis also highlights a shortcoming of the WD data model, which prevents a qualifier from being further qualified or having a reference. A trust decision, therefore, cannot verify, for example, if some provenance information supports a qualification.

Considering that qualifications provide context information, we observe that only 21,00% of statements are qualified. Regarding

This means that approximately 75,20% of statements do not have any context information via qualifiers. For those, any support for a trust decision would come from provenance information via authorship, which we already indicated that it is not provided in WD or through references.

We could not determine the total number of referenced statements in WD for computational reasons, as we had to extract those from the full dump. However, we can make some approximations based on the proportion of statements excluded from the ISI dump we used for all the other statistics. For this dump, it left out approximately 50,00% of all statements by ignoring scholarly and review articles and their sub-classes. Applying this proportion to the total reported in Section 4.5, we estimate there are 51,870,340 references, of which only 43,70% refer to WD items, amounting to 22,667,338 references. As a lower bound, if we assume all of those references hypothetically apply to only those statements without qualification (which we know is not the case), this would still represent only 5,40% of all such statements. Therefore it is safe to conclude that at least 69,60% of all WD statements do not have any support for trust decisions.

As already pointed out throughout the paper, author information about statements is unavailable as part of the KG in WD. This would be the only possible information for those statements without qualifiers or references that can provide information to the trust process and therefore has a significant negative impact on WD’s support for trust decisions.

Statements with multiple values for the same property for the same item can represent potential incongruences. For such statements, the trust decision must, in addition, filter each value claimed and often choose one of them to perform a computation. Again in a best-case scenario in which all constraint violations were to be corrected by providing missing values, there would still be 41,403,950 statements, 31,30% of those with multiple values (7,41% of all statements) without any qualifications.

It is worth noting that temporal information, often essential to properly determine the context of the veracity of a claim, is missing in 57,00% of claims that violate a time-related

WD provides no, or at best very little, support to detect implicit disagreements, differentiate them from incomplete data, and support trust decisions.

In situations with multiple values, the user has to decide which value to choose beyond applying the trust process to each asserted value. WD provides the concept of ranking to aid the user in making this decision. We identified a rarified occurrence of ranked statements and even fewer with a qualifier to specify a

The analysis shows that provenance is also available through qualifiers, and other types of properties related to methods and processes should also be considered as provenance. Even though 43,70% of references point to WD Items, there is still a large number of reference information pointing to external data sources using identifiers (24,70%), URLs (29,70%), and strings (1,90%). These external references typically require additional (often non-trivial) processing to be used in a trust decision about the claim where it is used as a reference. Furthermore, the WD model does not allow references to be associated with qualifiers since it is not a multi-layered model [1].

Looking at tools provided by Wikidata to support the trust process, one can look at ways to retrieve statements with preferred rank in case of multiple value statements. One such way proposed in WD is to use, when querying WD, statements annotated with preferred rank to retrieve the best non-deprecated ranked statement for a given property using the prefix

Another tool is that constraint violations can be visually verified using the standard WD browser interface, but it is very complex to infer this information at query time. A SPARQL query example for required qualifier violations, extracted from [12], is illustrated in.17

From these analyses, it is possible that WD’s support for trust decisions about its statements is low and could be improved significantly. Including a warning mark to indicate claims that do not have provenance data (in qualifiers or references) as well as allowing the author of the claim to be viewed (whether human user or bot) could encourage data completion.

Any open KG constructed collaboratively by contributors with different levels of domain knowledge and world views can suffer from wrong, biased, outdated, incomplete, and inconsistent content. The knowledge retrieved from a KG to be used to accomplish a specific task has to be more contextualized as possible to support the trust process. Here we adopted the context definition from [13]: By context, we refer to the scope of truth, and thus talk about the context in which some data are held to be true.

After analyzing the current status of WD, we realized that given the characteristics of the data model (such as the lack of references for qualifiers, incorrect specification of property scope in addition to the absence and ambiguities of definitions in constraints) and in the data (violations of constraints that generate incompleteness and possible interpretation errors) it is necessary to have an additional and separate layer, that will be user and task-dependent. And, to provide all information required by this layer, the knowledge retrieved from the KG should be explicitly contextualized, at least in terms of Provenance, Temporal, and Location dimensions.

As part of ongoing and future work, we are looking at ways to improve the ontology and the data model of a KG to support a trust layer better [10]. This process will necessarily involve human participation through knowledge engineers, helped by automated and semi-automated tools. Even though this paper has explicitly focused on WD, we are looking at the problem from the point of view of KGs in general. The design of a trust layer itself is left as future subsequent work.

KG Summarization & Profiling [4], based on instances or schema, can assist knowledge engineers in evaluating context dimensions already present in the KG. For each property in the role of Qualifier or Referrer, the knowledge engineer would identify which context dimensions it belongs to. It can also help formulate semantic rules interpretations for qualifiers’ existence, absence, and possible claim contradictions.

Identifying context dimensions is challenging since any information that characterizes an element can, in principle, be considered as context. Knowledge engineers should build on top of an existing KG a set of mappings and rules that makes contextual dimensions explicit about transforming a standard KG into a Contextual KG (CKG).