Abstract

Using knowledge graph embedding models (KGEMs) is a popular approach for predicting links in knowledge graphs (KGs). Traditionally, the performance of KGEMs for link prediction is assessed using rank-based metrics, which evaluate their ability to give high scores to ground-truth entities. However, the literature claims that the KGEM evaluation procedure would benefit from adding supplementary dimensions to assess. That is why, in this paper, we extend our previously introduced metric Sem@

Introduction

A knowledge graph (KG) is commonly seen as a directed multi-relational graph in which two nodes can be linked through potentially several semantic relationships. More formally, a knowledge graph

Excerpt of a KG containing some influential political figures and relations holding between them.

KGs are inherently incomplete, incorrect, or overlapping and thus major refinement tasks include entity matching, question answering, and link prediction [48,72]. The latter is the focus of this paper. Link prediction (LP) aims at completing KGs by leveraging existing facts to infer missing ones. In the LP task, one is provided with a set of incomplete triples, where the missing head (resp. tail) needs to be predicted. This amounts to holding a set of triples

The performance of KGEM for LP is evaluated using rank-based metrics such as Hits@

Few works propose to go beyond the mere traditional quantitative performance of KGEMs and address their ability to capture the semantics of the original KG,

More specifically, our goal in this work is to assess the ability of popular KGEMs to capture the semantic profile of relations in an LP task. To this end, we address three research questions. RQ1: how semantic-aware are agnostic KGEMs? RQ2: how should the evaluation of KGEM semantic awareness adapt to the typology of KGs? RQ3: does the evaluation of KGEM semantic awareness offer different conclusions on the relative superiority of some KGEMs compared to rank-based metrics?

Accordingly, the main contributions of this work are:

To evaluate KGEM semantic awareness on any kind of KG, we extend a previously defined semantic-oriented metric and tailor it to support a broader range of KGs.

We perform an extensive study of the semantic awareness of state-of-the-art KGEMs on mainstream KGs. We show that most of the observed trends apply at the level of families of models.

We perform a dynamic study of how KGEM semantic awareness and rank-based performance evolve along training epochs. We show that a trade-off may exist for most KGEMs.

Our study supports the view that agnostic KGEMs are quickly able to infer the semantics of KG entities and relations.

The remainder of the paper is structured as follows. Related work is presented in Section 2. Section 3 outlines the main motivations for assessing the semantic awareness of KGEMs. Section 4 subsequently presents Sem@

Link prediction using knowledge graph embedding models

Several LP approaches have been proposed to complete KGs. Symbolic approaches relying on rule-based [16,17,31,38,47] or path-based reasoning [12,32,79] are somewhat popular but are not considered in the present work. Instead, KGEMs are the focus of this paper.

In particular, this work is concerned with assessing the semantic awareness of agnostic KGEMs. Agnostic KGEMs are defined as KGEMs that rely solely on the structure of the KG to learn entity and relation representations. Their semantic awareness is defined as their ability to assign higher scores to entities whose types belong to the domain and range of relations. Agnostic KGEMs differ according to several criteria, such as the nature of the embedding space or the type of scoring function [25,54,70,72]. In this work, KGEMs are considered with respect to three main families of models that are traditionally distinguished in the literature.

Combining embeddings and semantics

The possibility of using additional semantic information has been extensively studied in recent works [10,23,30,37,46,71,78]. In general, the semantic information stems directly from an ontology, originally defined by Gruber as an “explicit specification of a conceptualization” [18]. Ontologies formally describe a specific application domain of interest (

A significant part of the literature incorporates such semantic information to constrain the negative sampling procedure and generate meaningful negative triples [23,30]. For instance, type-constrained negative sampling (TCNS) [30] replaces the head or the tail of a triple with a random entity belonging to the same type (
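The idea of type-constrained corruption can be illustrated with a minimal sketch. It assumes that each entity has a single known type stored in a hypothetical `entity_type` dictionary; the function name `corrupt_tail_same_type` and the toy entities are illustrative only and are not taken from [30].

```python
import random


def corrupt_tail_same_type(triple, entity_type, rng):
    """Replace the tail with a random entity sharing the original tail's type.

    Returns None when no other entity has that type.
    """
    head, relation, tail = triple
    # Sorted so that the sampling is reproducible for a given seed.
    candidates = sorted(
        e for e, t in entity_type.items()
        if t == entity_type[tail] and e != tail
    )
    if not candidates:
        return None
    return (head, relation, rng.choice(candidates))


# Hypothetical toy data (assumption, not from the datasets of this paper).
entity_type = {
    "BarackObama": "Person",
    "AngelaMerkel": "Person",
    "Germany": "Country",
    "France": "Country",
}
rng = random.Random(0)
negative = corrupt_tail_same_type(
    ("AngelaMerkel", "livesIn", "Germany"), entity_type, rng
)
print(negative)
```

A practical sampler would typically also check that the corrupted triple is not already present in the KG; this check is omitted here for brevity.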

Semantic information can also be embedded in the model itself. In fact, some KGEMs leverage ontological information such as entity types and hierarchy.

Embedding models project entities and relations of a KG into a vector space. Thus, the semantics of the original KG may not be fully preserved [23,49]. As stated by Paulheim [49], because embeddings are not meant to preserve the semantics of the KG, they are not interpretable and this can severely hinder explainability in domains such as recommender systems. Consequently, Paulheim [49] advocates for

Evaluating KGEM performance for link prediction

KGEM performance is evaluated in two stages. During the validation phase, KGEM performance is evaluated on the validation set

In both cases, the rank of the ground-truth entity from the test (resp. validation) set is used to compute aggregated rank-based metrics based on the top-

KGEM performance is almost exclusively assessed using the following rank-based metrics: Hits@
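For reference, the two most common rank-based metrics can be sketched as follows. This is a minimal illustration of the standard definitions of MRR and Hits@K computed from a list of ground-truth ranks; the helper names and the pessimistic tie-breaking rule are assumptions made for the example, not the evaluation code used in this paper.

```python
def rank_of(scores, target):
    """1-based rank of `target` when candidates are sorted by decreasing score.

    Ties are resolved pessimistically: every other candidate with a score
    greater than or equal to the target's score is ranked before it.
    """
    target_score = scores[target]
    better_or_equal = sum(
        1 for entity, score in scores.items()
        if entity != target and score >= target_score
    )
    return better_or_equal + 1


def mean_reciprocal_rank(ranks):
    """Average of the reciprocal ranks of the ground-truth entities."""
    return sum(1.0 / r for r in ranks) / len(ranks)


def hits_at_k(ranks, k):
    """Proportion of ground-truth entities ranked in the top k."""
    return sum(1 for r in ranks if r <= k) / len(ranks)


ranks = [1, 3, 12]
print(mean_reciprocal_rank(ranks))  # (1 + 1/3 + 1/12) / 3
print(hits_at_k(ranks, 3))          # two of the three ranks are <= 3
```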

As mentioned in Section 1, these metrics present some caveats. LP is often used to complete knowledge graphs, where the Open World Assumption (OWA) prevails. KGs are incomplete and, due to the OWA, an unobserved triple used as a negative one can still be positive. It follows that traditional evaluation methods based on rank-based metrics may systematically underestimate the true performance of a KGEM [73].

In addition, the aforementioned rank-based metrics have intrinsic and theoretical flaws, as pointed out in several works [5,19,64]. For example, Hits@

Recent works recommend using adjusted versions of the aforementioned metrics. The Adjusted Mean Rank (AMR) proposed in [1] compares the mean rank against the expected mean rank under a model with random scores. In [5], Berrendorf
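The comparison of the mean rank against its expectation under random scores can be sketched as a simple ratio. The sketch below assumes that, for a query with n candidates, the expected rank of the ground truth under a uniformly random ordering is (n + 1) / 2; the function name and the exact normalization are assumptions and should be checked against [1].

```python
def adjusted_mean_rank(ranks, num_candidates):
    """Mean rank divided by its expectation under uniformly random scores.

    ranks[i] is the 1-based rank of the ground truth for query i;
    num_candidates[i] is the number of candidates ranked for that query.
    """
    if len(ranks) != len(num_candidates):
        raise ValueError("ranks and num_candidates must have the same length")
    mean_rank = sum(ranks) / len(ranks)
    # Expected rank of one item placed uniformly at random among n positions.
    expected_mean_rank = sum((n + 1) / 2 for n in num_candidates) / len(num_candidates)
    return mean_rank / expected_mean_rank


print(adjusted_mean_rank([1, 3, 12], [100, 100, 100]))
```

Under this reading, a value close to 1 indicates a model that does no better than random scoring, and values closer to 0 indicate better rankings.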

In Section 2.2, several approaches incorporating the semantics of entities and relations into the embeddings were mentioned. However, in such cases, the use of semantic information such as entity types and the hierarchy of classes is intended to improve KGEM performance in terms of the aforementioned rank-based metrics only. The underlying semantics of KGs is considered as an additional source of information during training, but the ability of KGEMs to generate predictions in accordance with these semantic constraints is never directly addressed. This encourages further assessment of the semantic capabilities of KGEMs, as first suggested in [21]. In our work, we directly address this issue by assessing to what extent KGEMs are able to give high scores to triples whose head (resp. tail) belongs to the domain (resp. range) of the relation. When such information is not available –

Motivations and problem formulation

Motivating example

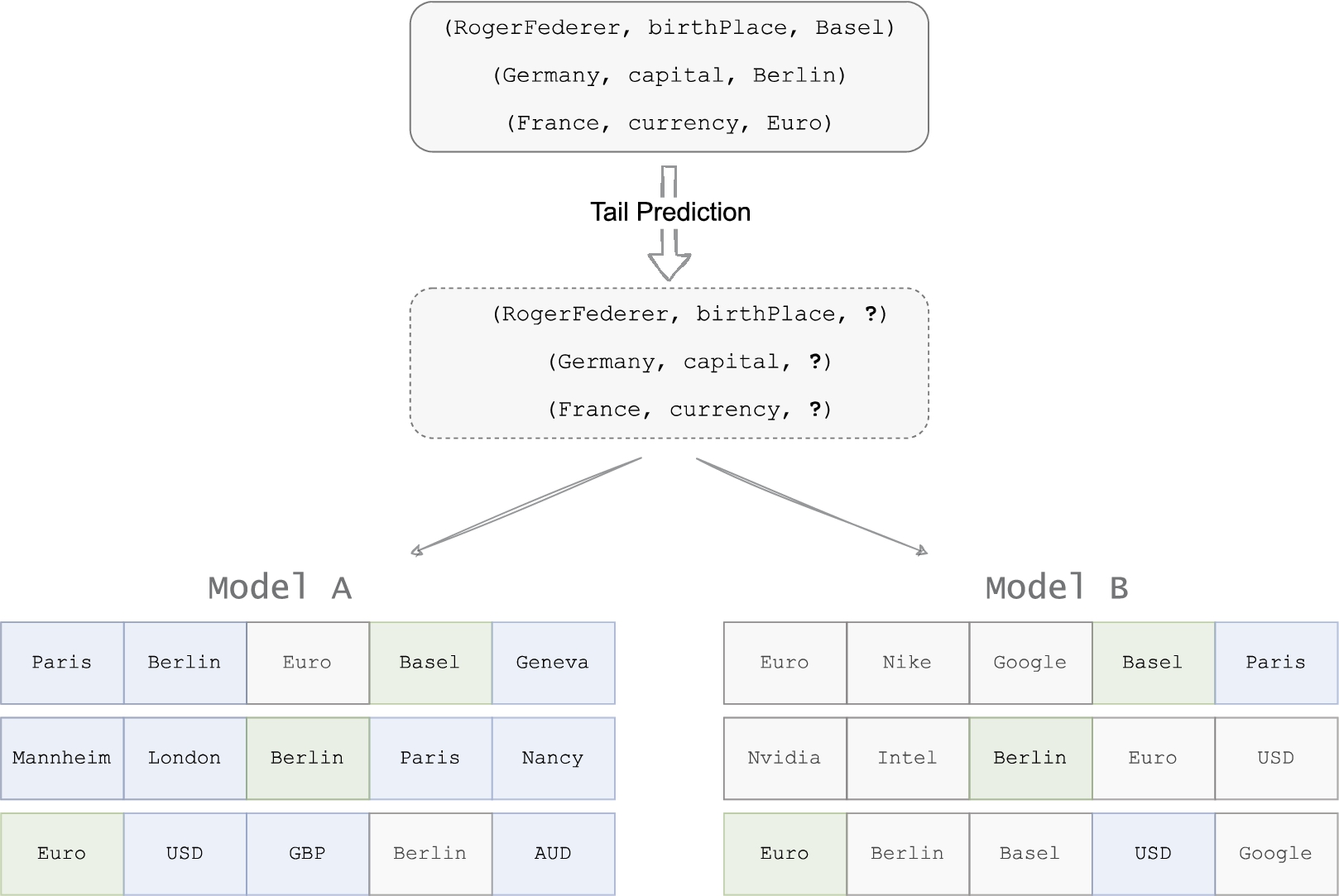

To motivate the use of Sem@

Motivating example. Tail prediction is performed for the three test triples contained in the upper insert. Model A and Model B output scores for each possible entity and only the top-five ranked tail candidates are depicted here. Model A and Model B have the same Hits@1, Hits@3 and Hits@5 values. But Model A has better semantic capabilities. Green, blue and white cells respectively denote the ground-truth entity, entities other than the ground-truth and semantically valid, and entities other than the ground-truth and semantically invalid.

The traditional evaluation of KGEMs solely based on rank-based metrics can be flawed for several reasons. First, KGEMs benchmarked on the same test sets can exhibit very similar results. Using only rank-based metrics, the final choice only depends on the best achieved MRR and/or Hits@

Measuring KGEM semantic awareness with Sem@K

The standard LP evaluation protocol consists in reporting aggregated results, considering the rank-based metrics presented in Section 2.3. As mentioned above, these metrics only provide a partial picture of KGEM performance [5]. To give a more comprehensive assessment of KGEMs, we aim at assessing their semantic awareness using our proposed metric called Sem@
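The general idea of measuring semantic validity among top-ranked candidates can be sketched as follows. This is an illustrative reading only: it assumes that a candidate is semantically valid when at least one of its types belongs to the classes expected by the relation (its domain for head prediction, its range for tail prediction). The names `sem_at_k`, `entity_types` and `expected_classes`, as well as the toy data, are hypothetical and do not reproduce the exact definition used in this paper.

```python
def sem_at_k(ranked_candidates, entity_types, expected_classes, k):
    """Fraction of the top-k candidates with at least one expected type."""
    top_k = ranked_candidates[:k]
    if not top_k:
        return 0.0
    valid = sum(
        1 for entity in top_k
        if entity_types.get(entity, set()) & expected_classes
    )
    return valid / len(top_k)


# Hypothetical toy data (assumption).
entity_types = {
    "Germany": {"Country"},
    "France": {"Country"},
    "Berlin": {"City"},
    "AngelaMerkel": {"Person"},
}
ranked_tails = ["Germany", "AngelaMerkel", "France", "Berlin"]
expected = {"Country"}  # assumed range of the relation being predicted

print(sem_at_k(ranked_tails, entity_types, expected, 1))
print(sem_at_k(ranked_tails, entity_types, expected, 3))
```

In an evaluation setting, such per-query values would be averaged over all head and tail prediction queries of the test set.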

Typology of KGs and their respective adequacy for the presented Sem@K versions

This version of Sem@

A note on Sem@K, untyped entities, and the Open World Assumption

Traditional KGEM evaluation can be performed in all situations, regardless of whether entities are typed or whether the KG comes with a proper schema. When measuring the semantic capability of KGEMs –

Under the OWA, an untyped entity should not count in the calculation of Sem@
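One possible way to keep untyped entities out of the calculation is sketched below. It assumes that an untyped candidate is simply skipped, so that it counts neither as valid nor as invalid; this treatment, the function name and the toy data are assumptions made for illustration and may differ from the procedure adopted in this paper.

```python
def sem_at_k_skip_untyped(ranked_candidates, entity_types, expected_classes, k):
    """Semantic validity over the typed entities among the top-k candidates.

    Untyped candidates are ignored. Returns None when none of the top-k
    candidates is typed, since the proportion is then undefined.
    """
    typed = [e for e in ranked_candidates[:k] if entity_types.get(e)]
    if not typed:
        return None
    valid = sum(1 for e in typed if entity_types[e] & expected_classes)
    return valid / len(typed)


# Hypothetical toy data (assumption): "UnknownEntity" has no type.
entity_types = {"Germany": {"Country"}, "AngelaMerkel": {"Person"}}
ranked_tails = ["Germany", "UnknownEntity", "AngelaMerkel"]

print(sem_at_k_skip_untyped(ranked_tails, entity_types, {"Country"}, 3))
```

Counting the untyped entity as invalid would instead give 1/3 in this example, which penalizes the model for a candidate whose validity is simply unknown.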

Sem@K [ext] for schemaless KGs

Not all KGs come with a proper schema,
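When no schema is available, one way to approximate domains and ranges is to rely on the entities already observed with each relation. The sketch below assumes that a candidate is considered valid for tail (resp. head) prediction if it appears as a tail (resp. head) of the relation in a reference set of triples; the function names and toy triples are hypothetical, and the exact definition of the schemaless variant should be taken from the paper itself.

```python
from collections import defaultdict


def observed_heads_and_tails(triples):
    """Collect, for each relation, the entities observed as heads and as tails."""
    heads, tails = defaultdict(set), defaultdict(set)
    for head, relation, tail in triples:
        heads[relation].add(head)
        tails[relation].add(tail)
    return heads, tails


def sem_at_k_ext(ranked_candidates, observed, k):
    """Fraction of the top-k candidates already observed on the predicted side."""
    top_k = ranked_candidates[:k]
    if not top_k:
        return 0.0
    return sum(1 for e in top_k if e in observed) / len(top_k)


# Hypothetical toy triples (assumption).
train = [
    ("AngelaMerkel", "livesIn", "Germany"),
    ("BarackObama", "livesIn", "USA"),
    ("Germany", "hasCapital", "Berlin"),
]
heads, tails = observed_heads_and_tails(train)
ranked_tails = ["Germany", "Berlin", "USA", "AngelaMerkel"]

print(sem_at_k_ext(ranked_tails, tails["livesIn"], 3))
```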

Sem@K [wup]: A hierarchy-aware version of Sem@K

Sem@

To leverage such a semantic relatedness between concepts in Sem@

Several similarity measures

Considering the example in Fig. 3, using the Wu-Palmer score in the calculation of Sem@
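The Wu-Palmer score itself can be sketched on a small class hierarchy. The sketch uses the standard formulation, in which the similarity of two classes is twice the depth of their deepest common ancestor divided by the sum of their depths. It assumes a single-rooted tree with single inheritance and a root of depth 1; the `parent` dictionary is a hypothetical toy hierarchy, not the DBpedia excerpt of Fig. 3, and the way the score enters the hierarchy-aware metric is not reproduced here.

```python
def ancestors(cls, parent):
    """Path from `cls` up to the root, starting with `cls` itself."""
    path = [cls]
    while cls in parent:
        cls = parent[cls]
        path.append(cls)
    return path


def depth(cls, parent):
    """Depth of `cls`, with the root at depth 1 (an assumed convention)."""
    return len(ancestors(cls, parent))


def wu_palmer(c1, c2, parent):
    """Wu-Palmer similarity between two classes of a single-rooted tree."""
    path1 = ancestors(c1, parent)
    path2 = set(ancestors(c2, parent))
    # path1 is ordered bottom-up, so the first shared class is the deepest one.
    lcs = next(c for c in path1 if c in path2)
    return 2 * depth(lcs, parent) / (depth(c1, parent) + depth(c2, parent))


# Hypothetical toy hierarchy (assumption): child -> parent.
parent = {
    "Agent": "Thing",
    "Person": "Agent",
    "Politician": "Person",
    "Artist": "Person",
    "Place": "Thing",
}

print(wu_palmer("Politician", "Artist", parent))
print(wu_palmer("Politician", "Place", parent))
```

Sibling classes such as the two subclasses of the person class obtain a high score, whereas classes that only share the root obtain a low one, which is the graded behaviour a hierarchy-aware metric can exploit.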

Excerpt from the DBpedia class hierarchy.

Datasets

In order to draw reliable and general conclusions, a broad range of KGs are used in our experiments. They have been chosen due to their mainstream adoption in recent research works around KGEMs for LP and the fact that they have different characteristics (

The statistics of the datasets FB15K237-ET, DB93K, YAGO3-37K and YAGO4-19K used in our experiments are provided in Table 2. As discussed in Section 4.2, to create an experimental evaluation setting as unbiased and flawless as possible, the schema-defined KGs used in the experiments are filtered to keep typed entities only. This way, Sem@

SPARQL queries were fired against DBpedia as of November 9, 2022.

We built

Statistics of the schema-defined, hierarchical KGs used in the experiments

A second group of datasets used in these experiments does not come with an ontological schema. In particular, this means that relations do not have a clearly defined domain or range. Although Codex-S and Codex-M are based on the Wikidata schema, which does possess property constraints linking subject types to value type constraints, we limit ourselves to KGs that represent this information with

Statistics of the schemaless KGs used in the experiments

In this work, the semantic awareness of the most popular semantically agnostic KGEMs is analyzed. More specifically, the translational models TransE [7] and TransH [74], the semantic matching models DistMult [81], ComplEx [66] and SimplE [29], and the convolutional models ConvE [13], ConvKB [42], R-GCN [57] and CompGCN [68] are considered. Note that in the analysis of the results in Section 6, a distinction will be made between pure convolutional KGEMs (ConvE, ConvKB) and GNNs (R-GCN, CompGCN). Although the latter have convolutional layers, they are able to capture long-range interactions between entities due to their ability to consider k-hop neighborhoods. The characteristics of the models used in our experiments are mentioned hereinafter and summarized in Table 4.
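To make the difference between the first two families concrete, the scoring functions of TransE and DistMult can be sketched in their usual formulations: TransE scores a triple by the negative distance between the translated head and the tail, and DistMult by a trilinear product. The sketch assumes real-valued embeddings given as plain Python lists and an L2 norm for TransE; these choices and the toy vectors are assumptions and do not reflect the experimental configuration of this paper.

```python
import math


def transe_score(h, r, t):
    """Negative L2 distance between h + r and t (higher means more plausible)."""
    return -math.sqrt(sum((hi + ri - ti) ** 2 for hi, ri, ti in zip(h, r, t)))


def distmult_score(h, r, t):
    """Trilinear product of head, relation and tail embeddings."""
    return sum(hi * ri * ti for hi, ri, ti in zip(h, r, t))


# Hypothetical toy embeddings (assumption).
h = [1.0, 2.0]
r = [0.5, 1.0]
t_close = [1.5, 3.0]  # equals h + r, so the TransE distance is zero
t_far = [1.5, 0.0]

print(transe_score(h, r, t_close), transe_score(h, r, t_far))
print(distmult_score(h, r, t_close))
```

Both functions score each triple independently of the rest of the graph, in contrast to GNN-based encoders that aggregate information from multi-hop neighborhoods.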

Summary of the KGEMs used in the experiments

For the sake of comparisons, MRR, Hits@K and Sem@

In the following, we perform an extensive analysis of the results obtained using the KGEMs and KGs described above. For the sake of clarity, the complete range of tables and plots is placed in Appendices B and C, respectively. When necessary to support our claims, some of them are duplicated in the body text.

Semantic awareness of KGEMs

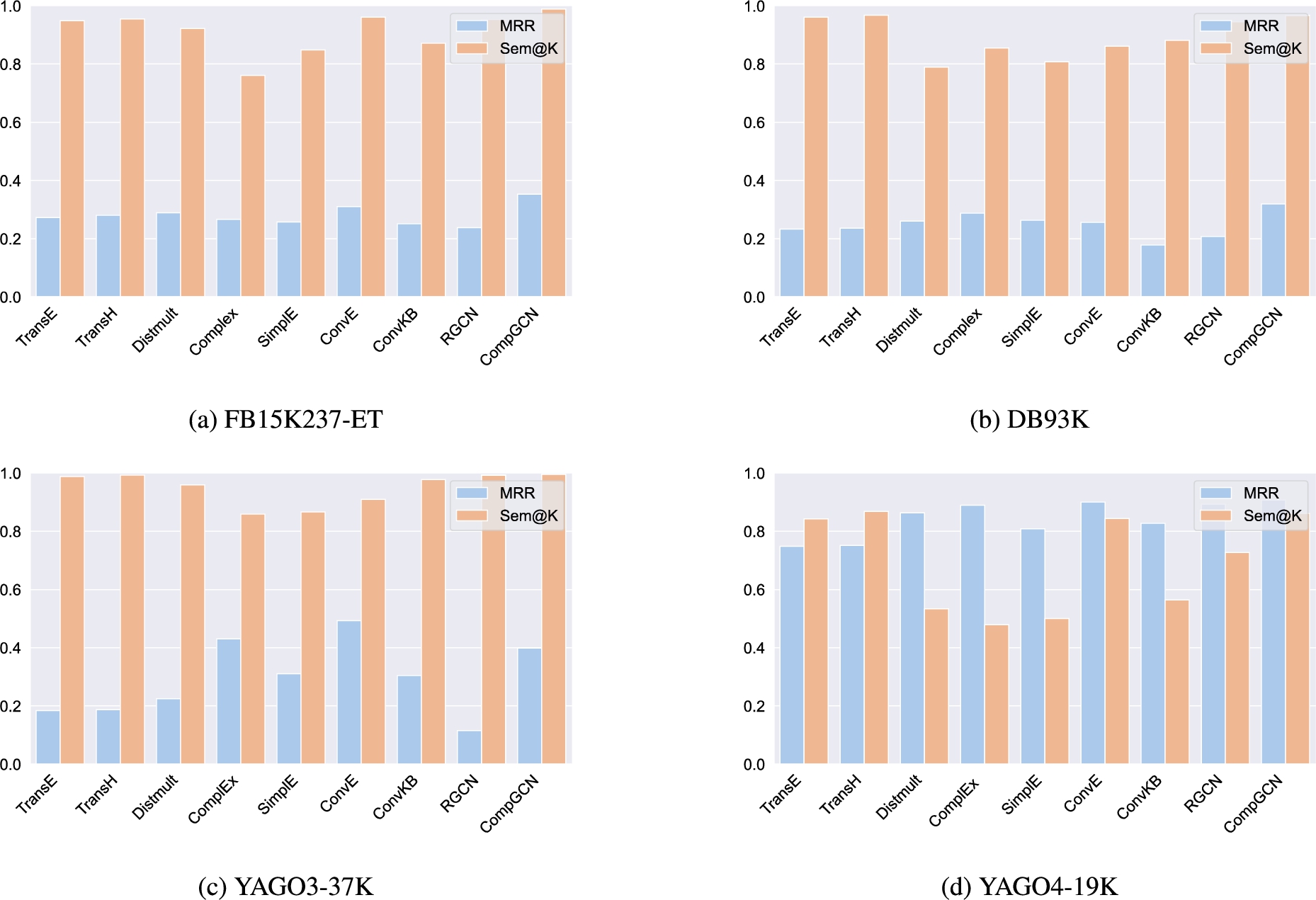

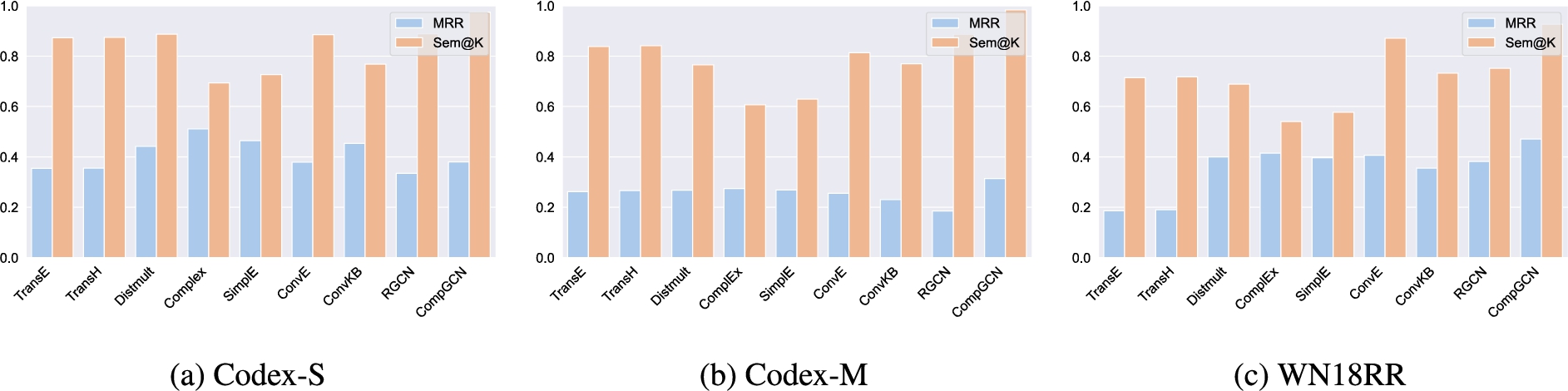

This section draws on the Sem@

A major finding is that models performing well with respect to rank-based metrics are not necessarily the most competitive when it comes to their semantic capabilities. On YAGO3-37K (see Table 5) for instance, ConvE showcases impressive MRR and Hits@K values. However, it is far from being the best KGEM in terms of Sem@

From a coarse-grained viewpoint, conclusions about the relative superiority of models, whether in terms of rank-based metrics or of semantic awareness, can be generalized at the level of model families. For example, semantic matching models (DistMult, ComplEx, SimplE) globally achieve better MRR and Hits@

MRR and Sem@10 results achieved at the best epoch in terms of MRR for each model and on each schema-defined dataset.

MRR and Sem@10 results achieved at the best epoch in terms of MRR for each model and on each schemaless dataset.

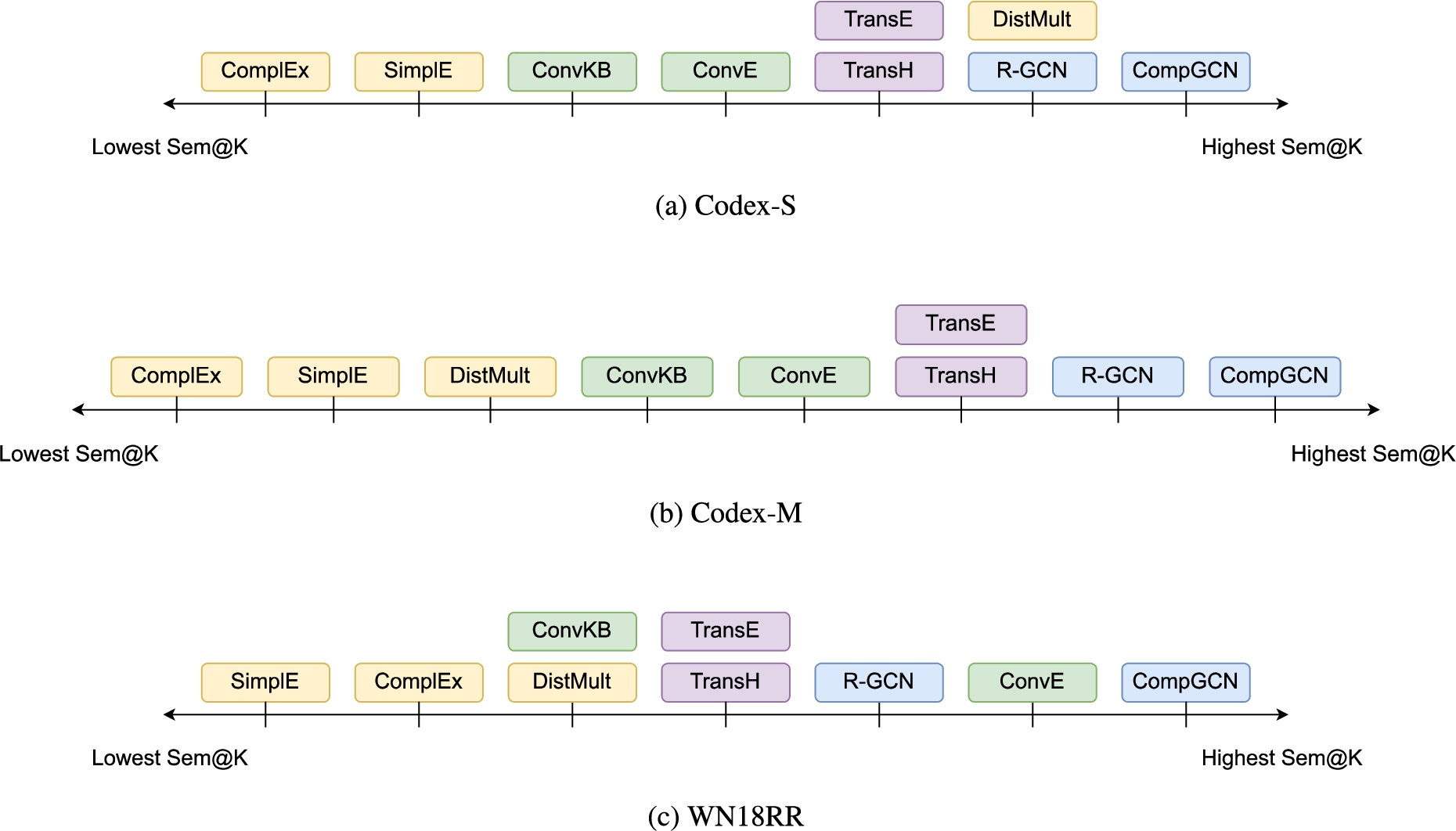

Sem@

Sem@

Therefore, it seems that translational models are better able to recover the semantics of entities and relations and thus to properly predict entities that are in the domain (resp. range) of a given relation, while semantic matching models might sometimes be better at predicting entities already observed in the domain (resp. range) of a given relation (

Interestingly, CompGCN, which is by far the most recent and sophisticated model used in our experiments, outperforms all the other models in terms of semantic awareness: with very limited exceptions, it is the best in terms of Sem@

Our experimental results suggest that the structure of GNNs is able to encode the latent semantics of the entities and relations of the graph. This ability may be attributed to the fact that, contrary to translational models, which only model the local neighborhood of each triple, GNNs update entity embeddings (and relation embeddings in the case of CompGCN) based on the information found in the extended, k-hop neighborhood of the central node. While translational and semantic matching models treat each triple independently, GNNs model interactions between entities over a wide range of semantic relations. It is likely that this extended neighborhood comprises signals or patterns that help the model infer the classes of entities, thus providing very promising semantic capabilities in all experimental conditions.

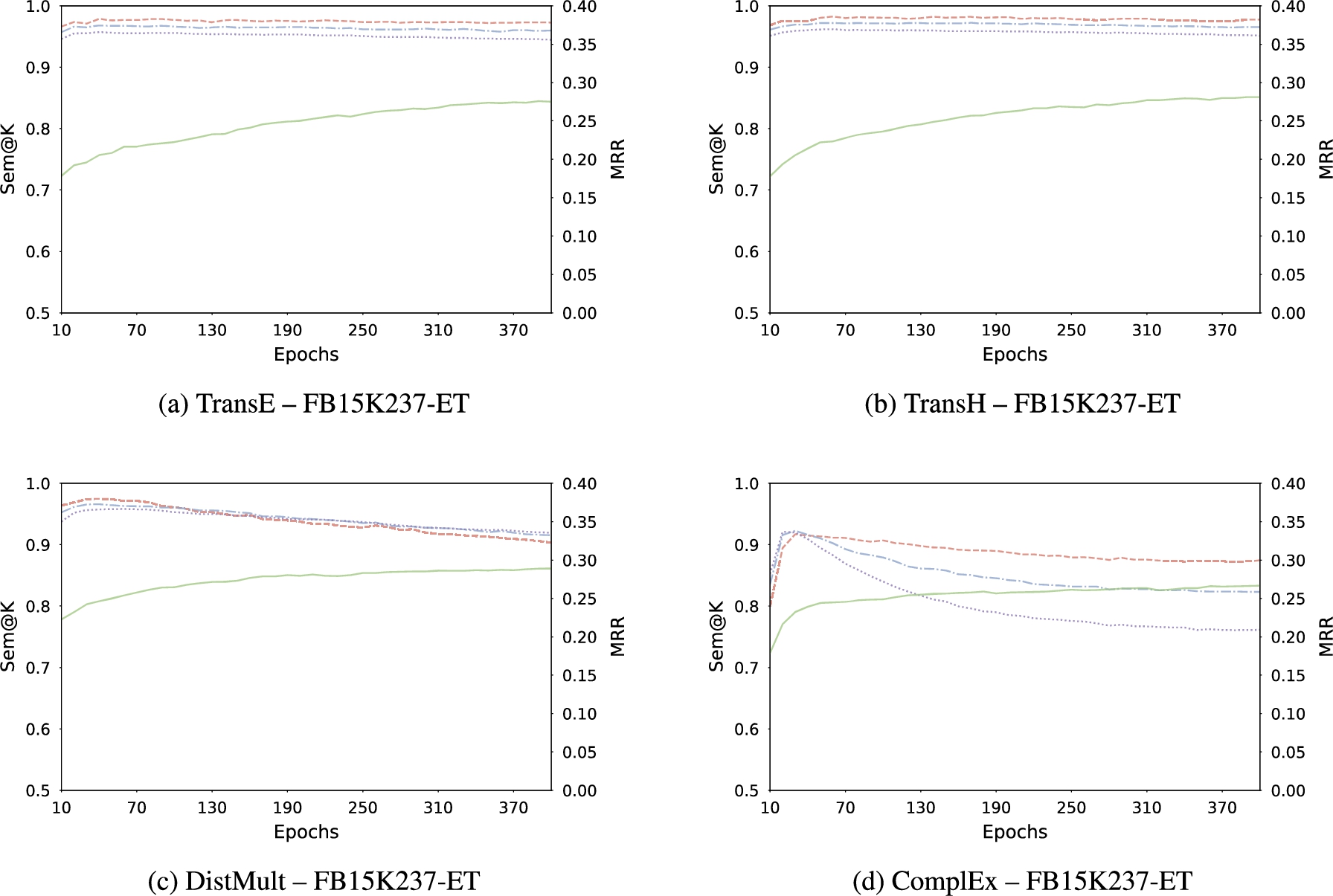

Evolution of MRR (green —), Sem@1 (red - -), Sem@3 (blue - · -), and Sem@10 (purple

For certain models, performance in terms of rank-based metrics and semantic capabilities improve jointly. For others, the improvement in rank-based metrics comes at the expense of semantic awareness. Interestingly, trends emerge relative to families of models. First, we observe that a trade-off exists for semantic matching models. Results are particularly striking on FB15K237-ET (see Fig. 8), where it is obvious that after reaching the best Sem@

Plots of the joint evolution of MRR and Sem@

Top-ten ranked entities for head and tail predictions at epochs 30 and 400 for a sample triple from YAGO3-37K. Green, blue and white cells respectively denote the ground-truth entity, entities other than the ground-truth and semantically valid, and entities other than the ground-truth and semantically invalid. In this case, semantic validity is based on the domain and range of the relation

As reported in Table 1, KGs based upon a schema and a class hierarchy are candidates for the computation of all the versions of Sem@

Discussion

Three major research questions have been formulated in Section 1. Based on the analysis presented in Section 6, we discuss each research question individually. We ultimately discuss the potential for further considerations of semantics into KGEMs.

RQ1: How semantic-aware are agnostic KGEMs?

From a coarse-grained viewpoint, we noted that KGEMs trained in an agnostic way prove capable of giving higher scores to semantically valid triples. However, disparities exist between models. Interestingly, these disparities seem to derive from the family to which each model belongs. Globally, translational models and GNNs (represented by R-GCN and CompGCN in this work) provide promising results. These two families of KGEMs appear better able than semantic matching models (DistMult, ComplEx, SimplE) to recover the semantics of entities and relations and to give higher scores to semantically valid triples. In fact, semantic matching models are almost systematically the worst performing models in terms of semantic awareness. From a dynamic standpoint, it is worth noting that KGEMs reach high semantic capabilities during the first epochs of training. In most cases, the optimal semantic awareness is even attained during these first epochs.

RQ2: How should the evaluation of KGEM semantic awareness adapt to the typology of KGs?

Drawing on the initial version of Sem@

RQ3: Does the evaluation of KGEM semantic awareness offer different conclusions on the relative superiority of some KGEMs?

A major finding is that models performing well with respect to rank-based metrics are not necessarily the most competitive regarding their semantic capabilities. We previously noted that translational models globally showcase better Sem@

The answers provided to the research questions also raise new questions. As evidenced in Table 8 and Table 9, some KGs are more challenging with regard to Sem@

Recall that this work is motivated by the possibility of going beyond a mere assessment of KGEM performance regarding rank-based metrics. We showed that these metrics only evaluate one aspect of such models and thus provide only a partial view of the quality of KGEMs. Our proposal for further assessing KGEM semantic capabilities aims at diving deeper into their predictive expressiveness and measuring to what extent their predictions are semantically valid. However, this second evaluation component does not shed full light on the respective peculiarities of KGEMs. Other evaluation components may be added, such as the storage and computational requirements of KGEMs [51,69] and the environmental impact of their training and testing procedure [50]. Furthermore, the explainability of KGEMs is another dimension that deserves great attention [55,83].

Towards further considerations of semantics in knowledge graph embedding models

The Sem@

It should be noted that, because only domains and ranges are considered, Sem@

KGEMs evaluated in this work are all agnostic to ontological information in their design. However, some models that consider or ensure specific ontological or logical properties exist. For example, HAKE [84] is constructed with the purpose of preserving hierarchies, Logic Tensor Networks [3] are designed to ensure logical reasoning, and the training of TransOWL and TransROWL [11] is enriched with additional triples deduced from,

Conclusion

In this work, we consider the link prediction task and extend our previously introduced Sem@

In future work, we will conduct experiments considering a broader array of KGEM families (

Acknowledgements

This work is supported by the AILES PIA3 project (see

Hyperparameters

Results achieved with the best reported hyperparameters

Evolution of MRR and Sem@K values with respect to the number of epochs

The evolution of MRR and Sem@