Abstract

We present an approach to publish Linked Data on the Web with quantifiable integrity using Web technologies, and in which rational agents are incentivised to contribute to the integrity of the link network. To this end, we introduce self-verifying resource representations, that include Linked Data Signatures whose signature value is used as a suffix in the resource’s URI. Links among such representations, typically managed as web documents, contribute therefore to preserving the integrity of the resulting document graphs. To quantify how well a document’s integrity can be relied on, we introduce the notion of trust scores and present an interpretation based on hubs and authorities. In addition, we present how specific agent behaviour may be induced by the choice of trust score regarding their optimisation, e.g., in general but also using a heuristic strategy called Additional Reach Strategy (ARS). We discuss our approach in a three-fold evaluation: First, we evaluate the effect of different graph metrics as trust scores on induced agent behaviour and resulting evolution of the document graph. We show that trust scores based on hubs and authorities induce agent behaviour that contributes to integrity preservation in the document graph. Next, we evaluate different heuristics for agents to optimise trust scores when general optimisation strategies are not applicable. We show that ARS outperforms other potential optimisation strategies. Last, we evaluate the whole approach by examining the resilience of integrity preservation in a document graph when resources are deleted. To this end, we propose a simulation system based on the Watts–Strogatz model for simulating a social network. We show that our approach produces a document graph that can recover from such attacks or failures in the document graph.

Introduction

How do we know whether data on the Web can be trusted if, after the publication of data on the Web, the agent controlling the published resource can modify or delete the resource at will? No commonly accepted mechanism ensures that the published data is not altered after it has been first made accessible on the Web. In addition, declaring authorship of information on the Web is not required by design: There is no standardised mechanism to incentivise agents to publish information that they would want to be held accountable for. In other words, it is hard to build trust on the Web, as anybody can publish anything on the Web without being held accountable; and later modify or delete it. In this paper, we want to work towards trust starting with the notion of integrity, and present a decentralised technical solution that makes interlinked data tamper-evident and at the same time authorship traceable, while incentivising agents to contribute to the integrity of the link network.

The notion of integrity has several meanings [37]: While integrity can mean the adherence to high (e.g. moral) standards, which we cannot check for data on the Web, we apply a technical definition, where integrity refers to an “unimpaired condition” [37]. Yet, it can be argued that with traceable authorship, people publish data that adheres to higher standards, as they can get held accountable, thus we also indirectly address the former point. This paper is now about quantifying the degree of the unimpaired condition of data that can be verified to be from a certain user. By knowing that the data is unlikely to have changed and knowing by who the data is, we achieve reliability and thus trustworthiness.

That information on the Web is reliable forms the foundation of many applications: In e-commerce, a recent study [1] by McKinsey & Company, a management consulting firm, found that more than half of consumers from the Millennial generation always search for background information prior to buying a fashion product. In supply chains, item documentation offers significant benefits regarding traceability of faulty products, and thus increased efficiency and reduced disruptions [22]. In research, e.g. regarding COVID-19 vaccination intention, information from experts and health authorities as well as local newspapers have a positive impact, while alternative information sources and misleading claims pose a noticeable threat to vaccination intention [23]. In social applications, chats and forums in all flavors, interaction is built on the sharing of posts, comments or messages. Without these resources being reliable, accountability on the Web is missing and human interaction may suffer from fake news or even personal harassment. For example, with Twitter, a centralised micro-blogging platform, posts are not editable and the idea of the possibility of editing posts sparked fierce discussion in the Twitter community.1

As a solution, one may be tempted to envision a trusted third party handle all data publication and access control, similar to social media content with Facebook as the third party. This approach not only compromises data sovereignty but simply transfers the problem to the central agent. No such central agent is trusted by all agents on the Web. As an alternative solution, one may be tempted to envision a decentralised approach, e.g. a public blockchain to store hashes of the published data as proof of existence, which does not rely on trust in one specific agent. Such a system is for example explored in [8]. However, the blockchain approach introduces an additional system that has proven to be very expensive and inefficient due to the consensus mechanism. Our approach on the other hand provides a mechanism to publish trustworthy data on the Web only using Web technologies, without compromising data sovereignty or introducing an additional system.

Our work can be seen in context of ongoing and heatedly debated standardisation efforts at the W3C around Verificable Credentials (VCs),2

In this paper, we present an approach to publish Linked Data on the Web with quantifiable integrity, only using Web technologies, and in which rational agents are incentivised to contribute to the integrity of the link network. As such, the foundation of our approach is the Web architecture [30] and Linked Data [6]. The Solid Protocol [12] allows for adding access control. Our approach then consists in the following contributions:

The notion of self-verifying resource representations, which we first showcased in [10], where the RDF that describes a resource is signed digitally using Linked Data Signatures (LDS) 3 and RDF-star,6

If the description changes, the change becomes evident as the hash of the description does not match the hash in the URI any longer. If a Signed URI is part of another signed resource’s description, those links in the descriptions form a directed acyclic document graph, in which changes to one resource representation need to get propagated to all those linking to the representation. Thus, transitively the integrity of the graph can get established.

The definition of trust scores to quantify how well a resource representation’s integrity is preserved by the document graph’s link structure.

The definition of heuristics that help an agent determine to which other Signed URI to link under resource constraints.

The evaluation of different graph metrics as trust scores, using which we show that Kleinberg’s hubs and authorities [32] can serve as a trust score that lets agents, who want to maximise the trustworthiness of their own documents, contribute to the integrity preservation via the document graph’s link structure.

The evaluation of different heuristics for agents to determine the next link to set, using which we show that our Additional Reachability Strategy (ARS) outperforms other heuristics.

The evaluation of the whole approach by looking at the resilience of the integrity preservation of the document graph in the presence of deleted resources and resources going offline (failures as imagined by Guéret et al. in [24]), using which we show that the graph can indeed recover.

A simulation system based on the Watts–Strogatz model [50] and situated in a social network setting for conducting some of the evaluations.

The paper is structured as follows: In Section 2, we give the foundations for our work including the foundations of the methods used in the evaluations. In Section 3, we survey related research. In Section 4, we provide a running example, illustrate the overall architecture and introduce some helpful terminology. In Sections 5, 6, 7 and 8, we introduce our approach. In Section 9, we evaluate our approach regarding constructive agent behaviour, heuristic score optimization performance and score resilience in the document graph. In Section 10, we conclude.

In this section we present the technological underpinnings of our contributions, which build on Linked Data and related technologies. Moreover, we build on approaches to analyse the web, which we present subsequently.

Linked Data

This section clarifies the notion of Linked Data in the context of the paper. The Linked Data Principles form the technological foundation which is build on and extended by the Social Linked Data (Solid) project. The Linked Data principles outline a lightweight standard to semantically describe and publish data in a decentralised fashion on the Web. Defined by Sir Tim Berners-Lee in [6], the four principles are:

Use URIs as names for things. Use HTTP URIs so that people can look up those names. When someone looks up a URI, provide useful information, using the standards (RDF∗, SPARQL). Include links to other URIs. so that they can discover more things.

URI, resource and resource representation

Adhering to the Linked Data principles, we use Uniform Resource Identifiers (URIs) [7] as names for things which can be virtual, physical or abstract. The thing that is identified by a URI is also called a resource. A resource’s state may be described by the resource’s representation [21] which is retrieved when the resource’s URI is dereferenced. We define a resource’s representation according to [21]:

(Resource Representation).

A resource representation is a sequence of bytes, described by representation metadata. The representation of a resource is retrieved upon dereferencing the URI identifying the resource.

When dereferencing a resource’s URI yields a representation, the resource is called an information resource. A resource where no representation can be retrieved is called to a non-information resource. Typically, non-information resources are described along side with information resources in an information resource’s representation. For example,

is a non-information resource identifying Tim Berners-Lee. Dereferencing the URI yields the representation of the corresponding information resource

i.e. the profile card where one might find information on the non-information resource. More formal definitions are provided in Section 2.1.3. As representations of information resources that are available on the Web are commonly referred to as Web documents, the terms document, information resource and information resource representation are used synonymously in the remainder of the paper, similar to [27].

A URI [7] is structured as follows:

Square brackets indicate optional parts. Query and fragment are optional parameters that are included when necessary or desired for the resource to be served as intended. The authority and path identify the address at which the resource is hosted. The scheme determines how the subsequent characters are to be interpreted. For example, a

HTTP

For interacting with a resource or, more specifically, its representation, we use the Hypertext Transfer Protocol (HTTP) [19]. With HTTP, two parties communicate with each other: a client, the requesting party, and the server, the responding party. We use the HTTP POST method to create and the HTTP GET method to retrieve a resource’s representation as specified in [20]. For example, when accessing a URI with HTTP GET, the client initiates the communication with the host server identified from the authority and path of the URI. The server processes the request and responds by serving a representation of the requested resource to the client. The resource is served in a data format that has been negotiated between client and server during the communication. One family of such formats is described by the Resource Description Framework (RDF).

RDF

We create resource representations using graphs expressed according to the Resource Description Framework (RDF) [17]. Formal definitions in the realm of RDF are adopted from and stay close to the definitions of [27].

RDF specifies a graph-based data model: An RDF graph is defined as a set of triples. A triple consists of a subject, predicate, and object. In each position, a URI may identify the associated resource. In subject and object position so-called blank nodes may serve as graph-local identifiers. In object position, so-called literals represent values, e.g. numbers or strings. Literals, blank nodes, and URIs are called RDF terms. RDF terms, triples, and graphs are formally defined as follows:

(RDF Terms, Triple, Graph).

The set of RDF terms consists of the set of URIs

For example (using CURIEs for prefix abbreviations, # denoting a comment), the RDF graph

indicates that Tim Berners-Lee is a Person. Such representation may, for example, be retrieved upon dereferencing the URI of the profile card, the information resource mentioned in Section 2.1.1.

(Information Resource, Dereferencing).

Let

As described in Section 2.1.1, a non-information resource is typically associated to an information resource. This association is also referred to as correspondence.

(Correspondence).

The function

For example,

the non-information resource identifying Tim Berners-Lee has the corresponding information resource of

An RDF graph may include URIs that correspond to information resources other than the graph’s corresponding information resource itself. In other words, the graph links to other information resources whose representations, i.e. RDF graphs, may link to more information resources and so on. Thus, a directed graph of interlinked information resources, i.e. “Web documents”, is formed. Such graph is thus referred to as document graph.

(Document Graphs).

Let the set of information resources referenced in an RDF graph G be defined as

To reiterate in other words: The terms document, information resource and information resource representation are used synonymously (as mentioned in Section 2.1.1). This is especially intuitive when considering Web documents. A Web document is an information resource and its representation is the document’s content which in turn comprises the document itself. So when a URI identifies a Web document, that document is retrieved upon dereferencing the URI. Links in that document link to other Web documents, thus forming the document graph.

RDF-star

RDF-star [28] (sometimes also RDF∗) extends the RDF abstract syntax to support easier modelling of statements about other statements. In particular, RDF-star allows for quoting triples, i.e. referencing without asserting, using additional notation of

indicating that the profile card includes the statement that Tim Berners-Lee is a Person. At the same time, the “outer” statement does not make an assumption about the truth-value of the quoted statement. It only asserts that the profile card includes such a statement. Whether or not Tim Berners-Lee is actually a Person is not asserted. Quoted triples are referentially opaque (in contrast to reified triples), i.e. the quoted triple is considered one entity instead of a composition of its single terms.

Social Linked Data – the solid protocol

Tim Berners-Lee’s endeavor to re-decentralise the Web manifests in the Social Linked Data (Solid) Project [36,43]. The goal is to decouple applications from the data they consume, to break up centralised data silos and to give users back control over their data. To this end, the Solid Protocol [12] specifies a set of conventions and recommendations, building on the Linked Data Principles and existing W3C standards. For managing data in a decentralised manner and for building applications consuming this distributed data, the protocol connects mature Web technologies like the Resource Description Framework (RDF), RESTful HTTPS APIs borrowing from the Linked Data Platform [46] and an adaptation of Open ID Connect [16].

Users or, more general, agents store their data as web-accessible resources in so-called Pods, personal online data storages, where they control who has access to what part of their data. Pods are basically personal Web servers with user-defined access control. Each agent controls one or more Pods, where among its other data the agent’s personal profile information is stored. An agent is identified by its WebID [44] which points to the location of the agent’s profile information. Using its WebID, the agent is able to authenticate to a Pod when accessing a resource. On the Pod, user-defined access control lists (ACL) [11] determine if the accessing agent is authorized to perform its desired action (read, write, append or defining access control).

Additionally, agents are able to exchange messages using Linked Data Notifications (LDNs) [13]. Each Pod exposes an inbox, where any agent can post LDNs to, e.g. to request access to a specific resource or to announce the creation of a new resource.

Linked Data Signatures

Linked Data Signatures (LDS) are a way of modelling a cryptographic signature of an RDF graph or dataset. The distinguishing factor from a typical signature of a plain string or byte array is that the LDS is RDF syntax agnostic. It does not matter in which specific RDF syntax (Turtle, JSON-LD, Quads, …) the resource representation is available. The syntax of the original representation which the signature value has been calculated on does not need to match the RDF syntax of the representation to be verified. Any specific RDF syntax produces the same hash and thus can be verified with the information modelled by the LDS.

An LDS includes information on the calculation of the signature value. It specifies, e.g.,

the canonicalization algorithm used to normalize the RDF graph, e.g. Hogan’s algorithm [29] the message digest algorithm to calculate the hash, e.g. SHA-256 [38] the signature algorithm to sign that hash, e.g. Elliptic Curve Digital Signature Algorithm (ECDSA) [2] with curve P-256, such that the signature value can be verified the cryptographic key (or a link to the key) to be used for verification the signature value to be verified

In addition, the LDS typically includes when and by whom the signature was created. LDS are not yet standardized, i.e. there exists no recommendation on how to connect the signed data to the LDS. Nonetheless, LDS are listed as a valid signature scheme in the Verifiable Credential (VC) data model [47], a W3C recommendation. We examine the usage of LDS in the VC data model in Section 2.4. We present the usage of LDS in our approach in Section 5.1; for an example see Listing 2.

Verifiable Credential (VC) data model

The W3C recently released the Verifiable Credential (VC) data model [47] as a recommendation for sharing verifiable claims. The VC data model focuses on modelling credentials and claims in particular. Various options for signature schemes are mentioned, including LDS among other non-linked-data schemes. We summarize the most important aspects of the VC data model to our understanding from [47].

The VC data model is composed of three main components: the claim, the credential (metadata) and the proof. These components are explained best with a simple example:

The statement that Alice is a student of a university is called a claim. The university accrediting Alice being a student is called a credential. The university’s signature on the credential is called a proof.

All three elements of claim, credential and proof form a VC. The VC data model is defined in JSON-LD to allow for adoption of in both JSON and RDF-based implementations.

Regarding the data model from an RDF perspective, the VC data model relies on RDF datasets, i.e. a composition of RDF graphs. Claims and associated credential metadata form the so-called credential graph. The credential graph links to the so-called proof graph formed by the credential’s proof information, i.e. the digital signature. In the credential graph, claims represent the actual statements which the VC is created for. The credential metadata represent information on the credential itself, e.g. which agent or entity issued the credential, when the credential was issued, which type the credential is of and what the subjectof the credential is. The proof graph consists of all information associated with the digital signature, e.g. an LDS, that asserts the credentials validity. Figure 1 illustrates the structure of a VC: With the VC data model, the credential links to the proof, i.e. the signature. There does not exist a link from the signature to the data that is signed.

A verifiable credential and its components in the style of [47]: claim (yellow), credential (metadata) (rose) and proof (green).

Listing 1 shows a VC in JSON-LD format using a Linked Data Signature. Just from looking at the data, it is impossible to directly extract which algorithms for canonicalisation, digest and verification should be used. These are defined by the type of the signature, i.e. Ed25519Signature2020, and need to be looked up at the corresponding specification.

Example of a verifiable credential

The Web is comprised of interlinked Web resources, e.g. human-understandable Web pages and machine-readable Web documents like RDF graphs and datasets. In this paper, we focus on a document graph on the Web as specified in Definition 5.

Hubs & authorities

The notion of hubs and authorities have originally been defined in the context of the HITS algorithm [32] for calculating a relevance score for pages and documents on the Web. The definition of hubs, authorities and their corresponding scores are adapted from the original definitions found in [32]:

(Hubs, Hub Score).

Hubs are documents that reference highly ranked authorities. Let the hub score be

A document’s hub score is the sum of all the authority scores of documents it points to. Not only does the hub score take the number of referenced documents into account, but it also reflects the degree to which a document references authorities, i.e. other documents that are referenced by other hubs. In other words, highly ranked hubs exhibit a considerable overlap in the sets of documents they link to.

(Authorities, Authority Score).

Authorities are documents that are referenced by highly ranked hubs. Let the authority score be

A document’s authority score is the sum of all the hub scores of the documents that point to it. Not only does the authority score take the number of documents referencing the document into account, but it also reflects the degree to which a document is referenced by hubs, i.e. other documents that reference other authorities. In other words, highly ranked authorities exhibit a considerable overlap in the sets of documents that reference them.

PageRank

The PageRank [39] is a score that assesses the popularity of Web pages. The idea of the PageRank can be formulated as “Popular pages link popular pages”. The definition of the PageRank is adapted from its original definition as presented in [39] and its calculation via the power method [4]:

(PageRank).

The PageRank of a document u is calculated iteratively until convergence by

In each iteration the PageRank of each document is updated based the PageRank of documents linking to the document. The random surfer is introduced to solve the drainage of PageRank by sinks, i.e. documents with no out-going links [39]. The random surfer jumps from any document to another document in the graph based on a predefined damping factor d. Additionally, the random surfer jumps from a document without outgoing links to any other document in the document graph, by default with uniform probability.

Analysis of agent behaviour on the web

Watts–Strogatz model

The Watts–Strogatz model [50] generates graphs that exhibit the so-called small-world properties of short average path lengths and high clustering of graph nodes. One representative of such small-world networks are social agent networks [50]. The Watts–Strogatz model is thus used in Section 9.3 to create agent networks based on which agent interaction is modelled in the evaluation simulations.

The small-world networks generated by the Watts–Strogatz model are a type of network whose structural properties, e.g. shortest path length or clustering, lie in between the properties of regular graphs and random graphs. In a regular graph, each node is connected to the same number of other nodes, while in a random graph, each node is randomly connected to other nodes. To achieve such structural middle ground, the model’s graph generation is based on rewiring connections between nodes of an initially regular graph. Dependent on a specified probability, each connection between two nodes is rewired.

To create a network using the Watts–Strogatz model, the number of nodes, their number of connections and the rewiring probability need to be specified. A regular graph is generated with the specified number of nodes where each node is connected to the specified number of other nodes. Then, the rewiring procedure is conducted per connection between two nodes in the graph. The thus created graph can be interpreted as a social network of agents, where the graph nodes represent agents and the connections between nodes represent agent interaction, e.g. being friends or chatting with each other. Using such networks, agent interaction can be modelled.

Basic concepts from Game Theory

We examine the behaviour of agents on the Web that is induced by our proposed approach. To analyse more complex agent behaviour, e.g. when agents interact repeatedly, we take inspiration from the analysis of repeated games in Game Theory. We use this technique in Section 9.1.3 to analyse agent behaviour when there is no obvious answer to how an agent may behave given a specified approach to trust scores. This section summarizes the foundations of repeated games in game theory, based on [42].

Agent behaviour is assumed to be rational with the goal of maximising a favorable payoff in a game. The way agents choose to behave within the rules of the game is defined as a strategy. Each agent has its own set of strategies. A game is thus defined as a set of agents and their set of strategies, i.e. their possibilities to play the game according to the rules. Each combination of strategies across all agents, i.e. a scenario that results from the combination of the agents’ behaviour, is associated with an outcome for each agent. Each agent tries to maximise its payoff (also referred to utility) received by an outcome. The fact that an agent maximises its own payoff exclusively affects the decision making of all other agents. A state where no agent is able to receive a better payoff be deviating from its current strategy, given that the strategies of the others remain unchanged, is called a Nash equilibrium.

An agent’s dominant strategy is a strategy that yields the best outcome for that agent independent of the other agents’ strategies. There exists no incentive for that agent to deviate from its dominant strategy regardless of what other agents decide to do. If for each agent there exists a dominant strategy, the resulting Nash equilibrium is called a Nash equilibrium in dominant strategies.

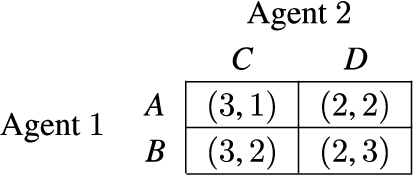

A common tool to represent agents’ payoffs, in a game of two agents, for each strategy they may choose provides the payoff matrix. Agent 1’s possible strategies are denoted by the rows and agent 2’s possible strategies are denoted by the columns. In each tile, the first element of the tuple denotes the potential payoff for agent 1 and the second element of the tuple denotes the potential payoff for agent 2. As illustrated by Fig. 2, dominant strategies as well as Nash equilibria can be identified by simple inspection.

A payoff matrix where agent 1 is indifferent between its strategies A and B, and agent 2 has a dominant strategy D over its other strategy C, resulting in the two Nash equilibria (A,D) and (B,D).

In a game that is played once, called a stage game, all agents choose their strategy once and receive the corresponding outcome. A repeated game is a repetition of a stage game, where outcomes are added up. The agents need to take the impact of their current action on future actions of other players into account. Repeated games can be categorised into finitely repeated games and infinitely repeated games. In a finitely repeated game, the expected payoff of an agent’s strategy is the sum of outcomes in each round. In an infinitely repeated game, the expected payoff of an agent is calculated by discounting future outcomes:

where δ is the discount factor, t the index of iteration and

Related research

Our approach aims to contribute integrity of Linked Data on the Web. Hence, we survey related work in the area of Linked Data integrity and Web analysis.

Linked data integrity

Integrity of Linked Data is typically verified by calculating and comparing cryptographic hashes of the underlying RDF graphs. Our approach relies on Hogan’s algorithm [29] for canonicalising and hashing RDF graphs as it is able to handle blank nodes most gracefully. Various alternative algorithms for generating cryptographic hashes of RDF graphs have been presented [5,14,34,45,48].

Trusty URIs [34,35] aim to make digital resources verifiable, immutable and permanent by extending the usual URI scheme with a cryptographic hash of the resource as a URI suffix. A resource is directly verifiable from its Trusty URI as an accessing agent can already expect a specific content to be served from the hash in the URI. However, our approach builds on and extends the conceptional idea of Trusty URIs with digital signatures to Signed URIs.

Nanopublications [33] aim at publishing data on the Web as verifiable, immutable, and permanent digital artifacts. The approach uses Trusty URIs such that links among nanopublications contribute to data integrity. With only hash values ensuring integrity of data, however, authorship of publications is not preserved. The centralised yet distributed nanopublication-server-network ensures publications’ discoverability, permanence and immutability. However, in our approach, which is fully decentralised, each agent may host their own server, e.g. a Solid Pod, to self-sovereignly make their resources available at Signed URIs.

Similar in data model and serialization to Trusty URIs, a draft specification by the Internet Engineering Task Force (IETF) for Hashlinks,7

The application of asymmetric cryptography to compute signatures of RDF graphs to secure communication among agents is presented in [31]. The integrity of messages are preserved through calculation of digital signatures over the message content. By adding the previous messages to the new message’s content, a chain of iteratively signed messages is created in a local RDF graph. However, our approach builds on interlinked documents are distributed across the Web. Additionally, our approach considers confidentiality of data through access control in Solid Pods.

The W3C recently released the Verifiable Credential (VC) data model [47] as a recommendation for sharing verifiable claims. One possible signature scheme in the data model is the LDS, among other non-linked-data schemes. The VC data model focuses on modelling credentials and claims in particular whereas we concentrate more on the signature and less on content modeling. Thus, we do not use the VC data model in particular but use the more broadly applicable LDS for providing verifiability for any Linked Data and not just credential models. LDS are not yet standardized and, in fact, were in recent discussion: A proposal for standardization of LDS8

Resource Integrity Proofs data model [3] aims to enable hash-based integrity verification of digital resources. The authors suggest to include integrity information like hashes and algorithms in the resource itself as meta-data. They also propose a combination with distributed ledger technology to create persistent proofs of existence of resources stored outside of the distributed ledger. Approaches following such a strategy are examined in [18,49] and for example applied in a simple supply chain use-case in [8,9]. In contrast, our approach does not use a distributed ledger to achieve data integrity. Instead, our approach relies on digital signatures in URIs and their transitive nature in a distributed document graph on the Web.

In the realm of Web analysis, and as mentioned in Section 2.5, PageRank [39] and HITS [32] are two well known methods for calculating a relevance score for pages and documents on the Web. More specifically, they analyse the link structure of the graph that is formed by interlinked Web pages. Our approach founds its trust scores on the notion of hubs and authorities from [32].

An alternative algorithm for dynamic and collaborative computation of page-rank-based scores of Web pages distributed in a P2P network is presented in [41]. It is extended in [40] with statistical methods for detecting and accounting for malicious agent behaviour during the score calculation. An open Web is assumed that lacks mechanisms to ensure data integrity or confidentiality unlike to our approach that allows for both integrity verification and privacy of information.

A general framework for propagation of trust and distrust in a directed graph of entities is introduced in [25]. Prediction of trust scores is based on individual trust scores for and of entities. The framework focuses on a reputation network of agents with individual scores to quantify trust in other people. Only focusing on trust as a given, underlying mechanisms to achieve such trust are not examined. In contrast, our work provides a technical approach to achieve trust in the integrity of documents on the Web.

Analysing the Web graph, [24] examines the robustness of the Web of data. More specifically, a method to analyse and improve the physical and semantic structure of the Web is presented. We took inspiration for the evaluation from the method presented in [24], which laments that there are too few links on the Web. Our approach however incentivises the creation of links between documents, thus contributing to the robustness of the document graph. We use their method of targeted attacks is used to quickly disconnect the document graph during the evaluation of the proposed approach.

Exemplary architecture and terminology

Assume a decentralised social network on the Web, e.g. a Solid-based micro-blogging application where agents create and interlink posts of social banter, as illustrated by Fig. 3. These posts are interlinked by nature of the underlying social application: A post may reference another post as a comment or a simple reference. Each agent stores its posts in its own Pod where access control on the posts can be defined as desired by the agent. Not all posts may be available to everyone, access may be restricted to a certain group of agents.

A post is a resource with a self-verifying representation (presented in Section 5): It is described in RDF including a Linked Data Signature and is available at a Signed URI, which includes the signature’s value as a suffix. Upon dereferencing the Signed URI, the integrity of the resource and the corresponding creator can be verified. The resource is said to be modification-detectable, or tamper-evident.

A modification to a resource refers to the resource being updated “in-place”, i.e. the representation is updated but it is still available at the same URI. With a modification, there is no statement made if the resource representation can still be verified. In contrast, with an update of a resource, the verification of its representation still yields a valid result. An update of a resource thus refers to a new resource with corresponding self-verifying resource representation being created.

Consider a group of agents forming a social agent network: Alice, Bob, Charlie and Dave. Alice, Bob and Charlie form a group where they share posts only they interact with, i.e. respond to. Dave is a close friend of Bob but does not know or interact with the others.

An illustration of the system architecture: user’s store their interlinked resources in their corresponding pods. The solid-based application consumes the RDF resource representation via HTTP as linked data. Each document exhibits a hub score (HS) and authority score (AS), calculated on the transitive closure of the document graph. Assume for this figure that Dave joined the group chat of the others and comments the latest post, created by Bob in reply to Alice, ignoring Charlie’s post.

We call such assemblies of interacting agents interaction channel of size n: An interaction channel of size n is an abstract space where a group of n agents actively interacts with each other, e.g. create and interlink posts. An interaction channel may be comparable to an online chat room of n actively participating agents. In the remainder of this work, we distinguish between interaction channels of size two, i.e. a pairwise agent interaction channel, and interaction channels of size greater than two, i.e. a multi-agent interaction channel.

The number of passively consuming, i.e. posts reading but not creating, agents is irrelevant to the definition of an interaction channel. In other words, it does not matter to the interaction channel if the posts are publicly available or are confidential, i.e. only readable by actively participating agents. Instead, this decision is a design choice of the social Web application (and ultimately of the agents themselves). We investigate the effects of the decision on confidentiality as an parameter in our final evaluation of our approach (presented in Section 9.3).

Assume Charlie creates a new post in the multi-agent interaction channel with Alice and Bob. Agents are notified about a newly created document using Linked Data Notifications (LDNs). Of course, other means of advertising a newly created resource are also possible but not applied here. Independent from such options, resources are discoverable by Graph Traversal.

As Charlie’s post is a comment to a post created by Alice, Charlie’s post includes a link to Alice’s post. We refer to such a link, which exists because of the natural linking of resource contents, as a content-based link. As the created resources have self-verifying representations, links among resource contribute to their integrity (presented in Section 6): In our case, Alice would update her resource, then, she would have to ask Charlie to also update her resource, such that the update becomes undetectable. The more resource representations link to a particular resource, the more resource representations need to be updated and, presumably, the more users would need agree to do so. The social Web application may desire an even more interlinked Web of resources in order to protect against unwanted undetectable resource updates (presented in Section 6.2). To this end, it may require a specific number of links to be included in any resource. The application may even impose additional constraints on a resource, e.g. which resources to link or which shape it should fit. If a resource does not satisfy the application’s requirement it is considered untrustworthy by the application and thus ignored. We focus on the requirement of additional links, which we call application-based links. To put a number on the integrity, i.e. trustworthiness, of resources, the social Web application may consider calculating a score, which we call trust score (presented in Section 7). It captures on how well the application deems the resource to be secured against unwanted undetectable updates. We assume that agents using the application would like their resources to be considered well-secured, i.e. trustworthy. Based on that assumption, agents may include additional links in their resources that do not necessarily contribute to the content of the resource but mainly to the resource’s scores as considered by the application used. With these additional links, agents aim to increase the trust scores of their resources (presented in Sections 9.1 and 9.2). We thus call such links score-based links. We investigate the effects of an application deciding to simply rely on content-based links or to apply trust scores as an parameter in our evaluation (presented in Section 9.3).

As the agents continue to collaborate on the social Web application, a Web of resources with self-verifying representations is created.

We introduce self-verifying resource representations as we presented them in [10]: A resource is identified by an URI. Dereferencing that URI yields an RDF representation of the corresponding resource. A self-verifying resource representation consists of two components:

an RDF resource representation, which must include an LDS a Signed URI, which the resource is made available at

Such self-verifying resource representation is also called modification-detectable, or tamper-evident: Any modification to the resource’s representation becomes evident by comparing the hash calculated from the retrieved representation and the hash derived from the signature provided in the Signed URI. At the same time, the agent that created the corresponding LDS is authenticated.

Linked data signature using RDF-star

To sign the RDF representation of a resource, a Linked Data Signature (LDS) is created:

the RDF representation is canonicalised, e.g. using Hogan’s algorithm [29]. the message digest of the canonical RDF representation is calculated, e.g. using SHA-256 [38] the hash of the canonical RDF representation is signed, e.g. using the Elliptic Curve Digital Signature Algorithm (ECDSA) [2] with curve P-256.

The specific algorithms used are to be documented in the LDS, as illustrated by Listing 2, such that a verifying agent is able to verify the LDS.

LDS are listed as one possible signature scheme in the Verifiable Credentials (VC) data model [47], which is heavily influenced by the JSON-LD syntax. Examining, from a pure RDF perspective, how LDS are defined and used in the VC data model, we took issue in To avoid these issues, we model LDS using RDF-star: RDF-star allows for quoting triples, i.e. referencing without asserting. We argue that the LDS only provides meta-information on the signed triples and does not necessarily have to assert those triples. This may be useful, for instance, when the truth-value of the signed triples can change over time: a digital student id card becomes invalid once the student graduates. The signature, however, is always valid as it makes no statement about the truth-value of the signed triples.

Example of a self-verifying resource representation, in our example available at https://solid.charly.me/msg__0x8402f...01b234. It is comprised of the asserted triples (see

A Signed URI is a URI that includes the hexadecimal value of the resource representation’s signature as a suffix, delimited by two underscores, e.g. for Listing 2:

we first remove the signature suffix from the base URI prior to resolving relative URIs in the RDF graph. We then check if the signature value is verifiable using the quoted triples and the specified algorithms. Next, we check if all triples quoted by the Linked Data Signature are in fact asserted.

The latter check is optional in cases where assertion of signed triples is irrelevant.

An agent accessing a resource at a Signed URI can not only verify the content of the RDF representation from its Linked Data Signature but also expect that specific content signed by a specific creator to be served. Signed URIs allow agents to reference a specific resource in a specific state from another resource’s representation.

A web of self-verifying resource representations

In a Web of self-verifying resource representations, links among representations contribute the integrity of the resources’ representations as those links include the signatures of the corresponding resources. When a resource’s representation changes, its signature value changes, the existing Signed URI mismatches, and the modification becomes detectable. For an undetectable update of a resources’ representation, a new Signed URI is to be created and all resources that transitively reference the original resource have to be updated with respectively. In turn, those resources need to be updated with new Signed URIs as well and so on. Since on the Web, resources are typically under control of many different users, all those other users whose resources link to the original resource have to agree on such updates. Otherwise, some evidence will remain.

Thus, the more interconnected resources are, the more resources need to be iteratively updated. Additionally, the more agents are involved, the more agents need to be convinced to put up with the effort to update their resources. This way, contributions to such a Web contribute to preserving the integrity of existing self-verifying resource representations.

The Web of resource representations is a directed graph, where the resources9

More specifically, their corresponding information resources.

Using self-verifying resource representations, this document graph implicitly contributes to the integrity of its documents by making them discoverable via graph traversal and subsequently verifiable for any agent. The document graph forms a directed acyclic graph (DAG). No cycle exists in the document graph; consider a trivial example: Document (a) is referenced by document (b) by a link using (a)’s Signed URI. So, (b)’s signature includes (a)’s signature. If document (a) was to reference (b), (a)’s signature would include (b)’s signature and, transitively, its own signature. This is not probable as this would indicate a collision in the hash or signature functions.

The documents can still be modified or deleted by the controlling agent. When an agent is to update a document, the modification of the existing content entails an update of the Signed URI. To do so unnoticed by other agents is only possible when the original document has not yet been referenced by other documents from other agents as agents traversing the graph expect a specific content when dereferencing the original Signed URI. If the document already has been linked from another agent’s document, it is not possible for the first agent to modify its document unnoticed. Instead, the agent needs to create a new document and truthfully announce it as an updated version. It is then at the decision of agents whose documents reference the original to reference the new version in their documents accordingly or to keep the original reference. The iterative nature of document updates becomes apparent.

Undetectable document updates remain possible if and only if all agents whose documents reference the original document directly or transitively update their documents accordingly to reference the respective newly created documents. Moreover, all involved agents need to delete their original documents such that no trace of the original document sub-graph remains. If only one agent keeps one document that includes a reference to some original document, and if the agent is able to provide a replica of that document, the agent can prove that there has been an iterative update process.

Social applications on such a web

With self-verifying resource representations, any resource modification or update is detectable as long as at least one agent decides to not delete the references to the original representation. Only if all agents involved agree to the update, no evidence of the original resource may remain. Considering our exemplary application environment, i.e. a typical public online forum where agents interact in multiple public groups (see Section 4), this would mean that if all agents of one public group chat agree to an update, no evidence of the original messages persists.

The applications will abstract away most of the technicalities regarding a resource update, even a agreed non-evident one. For example, regarding the simple publication of a document at a Signed URI, the user will not notice at all that there is some magic happening in the background that ensures the document’s integrity. Moreover for a document update, the application will also handle and abstract away setting links to the new and links to the old document appropriately and as desired. For a user, such details regarding publication and updates are hidden.

While such agreed-upon non-evident updates may be acceptable for some applications, others may not desire such behaviour due to stricter data integrity requirements. In such applications, preserving the integrity of resources is the overall objective. To this end, it may require a specific number of links to be included in any resource representation. The application may even impose additional constraints on a resource representation, e.g. which resources to link or which shape it should fit. If a resource representation does not satisfy the application’s requirement it is considered untrustworthy by the application and thus ignored. We focus on the requirement of additional links, which we call application-based links.

As a dense document graph contributes more to the integrity of the documents than a sparse document graph, an application may impose an additional semantic constraint on the application-based links: The documents referenced by the application-based links should be “unrelated” to the document at hand. In our example, a post in a specific interaction channel should reference posts from other interaction channels as it is not directly related to those other posts.

By connecting otherwise unrelated documents, the number of agents involved with a specific document is potentially increased. With more documents connected and more agents involved, non-evident document updates become more difficult: All references of the original need to be discovered iteratively by the involved agents. The document graph needs to be searched “upstream” for incoming references of any document to be updated. In large-scale document graphs, the discovery of all such documents and references may become impractical. In addition, the corresponding agents need to be requested to update their documents accordingly. Moreover, some of these agents may be indifferent towards the update process, i.e. those agents who are involved due to the application-based links. Since updating their documents accordingly and subsequently searching for and notifying all other referencing agents would impose quite the effort on them, these agents have ceteris paribus no incentive to update their document. By introducing application-based links, the effort for non-evident document updates, i.e. the chance that some evidence of that process remains, is increased. To put a number on the achieved integrity, i.e. trustworthiness, of resources, the social Web application may consider calculating a score, which we call trust score.

Quantifying integrity of resource representations on the web

The following terms regarding document integrity are introduced: A document’s integrity preservation describes how well its integrity is preserved by the document graph. A document’s integrity contribution describes how much it contributes to the integrity of the document graph. Subsequently, the quantification of document integrity refers to measuring the effort needed for a document update in terms of number of documents to update. Due to the iterative nature of digital signatures, the transitive closure

(Integrity Preservation, Integrity Contribution).

Document u’s in-degree

The quantification of document integrity provides a basis for trust in documents, where trust refers to the corresponding document’s security against undesired access, modification or deletion as perceived by an agent. When a document’s integrity is highly preserved, it can be assumed that the document is unlikely to be updated without evidence and hence can be relied on. The integrity preservation of a document can serve as an indicator of how trustworthy an agent would consider that document.

We introduce the notion of trust scores:

(Trust Score, Trustworthiness, Gravitas).

Trust scores are document-specific values that provide a basis for interpretation regarding trustworthiness and gravitas of that particular document. The trustworthiness of a document describes how well that document may be trusted by an agent. The gravitas of a document describes the document’s influence on the trustworthiness of a second document.

In general, trust scores provide a qualitative ordering instead of a quantifiable measurement of document integrity. While quantitative measurements of document integrity can be interpreted as trust scores, trust scores may additionally rely on other properties. These additional properties may not impact the integrity of a document directly but may be relevant to the integrity of the document graph, e.g. the interconnectedness of documents within the graph.

We introduce trust scores based on hubs and authorities and a practical heuristic strategy to optimize the scores. We will show in Section 9.1 that optimizing hub- and authority-based trust scores results in an incentive for agents to constructively contribute to the document graph and its integrity preservation. In Section 9.2, we provide experimental evidence that the proposed optimization heuristic outperforms alternative heuristic strategies.

Hub- and authority-based trust scores

Adapting the notion of hubs and authorities from [32], hub score and authority score can be interpreted as trust scores for resources with self-verifying representations on the Web.

Recall the definition of the hub score (Definition 6): A document’s hub score is the sum of all the authority scores of documents it points to. The hub score of a document can be interpreted as to how much it contributes to preserving the integrity of the document graph, receiving more weight from highly ranked authorities. The hub score of a document can hence be interpreted as its gravitas. Not only does the hub score take the number of referenced documents into account, but it also reflects the degree to which a document references authorities, i.e. other documents that are referenced by other hubs. In other words, highly ranked hubs exhibit a considerable overlap in the sets of documents they link to.

Recall the definition of the authority score (Definition 7): A document’s authority score is the sum of all the hub scores of the documents that point to it. The authority score of a document can be interpreted as to how well its integrity is preserved by the document graph, receiving more weight from highly ranked hubs. The authority score of a document can hence be interpreted as its trustworthiness. Not only does the authority score take the number of documents referencing the document into account, but it also reflects the degree to which a document is referenced by hubs, i.e. other documents that reference other authorities. In other words, highly ranked authorities exhibit a considerable overlap in the sets of documents that reference them.

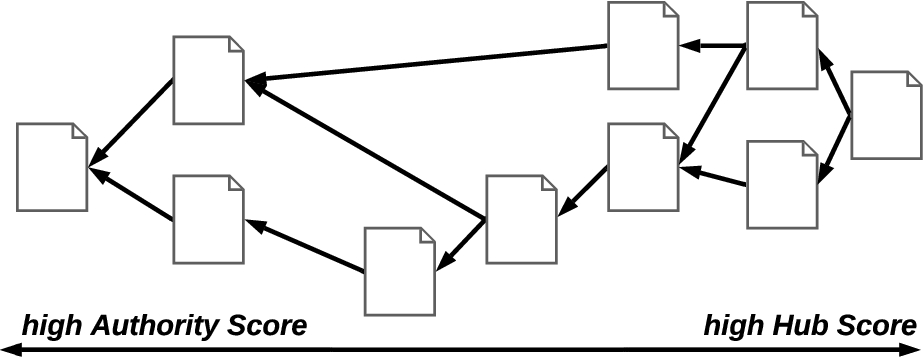

Due to the iterative character of digital signatures, hub and authority scores are calculated on the document graph’s transitive closure. Figure 4 illustrates the distribution of scores within a document graph.

As documents that are deep in the DAG exhibit a significant overlap in the set of documents referencing them on the transitive closure of the document graph, they exhibit a high authority score. As such documents do not link many other documents, their hub score is low. Inversely, as documents that have been recently been added exhibit a significant overlap in the set of documents they link to on the transitive closure of the document graph, they exhibit a high hub score. As such documents are not linked to by a lot of other documents, their authority score is low.

A document graph with qualitative distribution of the trust scores calculated on its transitive closure.

This interdependence of hub and authority scores fosters interconnectivity among the documents. It is also the reason why a document that is referenced by a lot of documents with a low hub score does not necessarily receive a high authority score. Since the referencing documents are not well connected with other documents, and hence exhibit the low hub score, the resulting authority score of the referenced document will be rather low. The interpretations of gravitas and trustworthiness based on hubs and authorities can thus be described as follows: A document’s gravitas is dependent its own interconnectedness and the interconnectedness of the documents it links to. A document’s trustworthiness is dependent on the interconnectedness of the documents it is linked by. Or short: “Trust is based on the interconnectedness of documents.”

Trust scores allow agents to determine which resources they would like to rely on in an application context, i.e., which resources are more trustworthy than others to be relied. When a resource is considered not trustworthy enough, the resource would simply not be considered for usage by an agent. Trust scores can thus serve as a mechanism to application designers for formulating incentives regarding agent behaviour: When agents rely on trust scores, agent behaviour may be influenced towards optimising these trust scores. In an application context for example, an agent would like its documents to be highly trustworthy such that other agents using the application consider the documents trustworthy enough to be used. The agent would like the trustworthiness of its documents to be reflected by the trust scores specified by the application. To this end, an agent would exploit the trust scores as much as possible to make its own documents seem most trustworthy.

Of course, an agent can always choose to rely on other trust scores than the ones defined by the application or to ignore them entirely. However, some trust scores may be more suitable than others in specific application contexts. Application-defined trust scores establish a common ground for agents interaction based on the recommendation of the application designer. Moreover, when an agent relies on different trust scores than the rest of the agents, the agent would optimise its chosen trust scores instead of the commonly used ones. This will reduce the trustworthiness of its documents from the perspective of the other agents. When an agent chooses to ignore the trust scores of its own documents, it does only effect the trust scores of its own documents. It does not have negative effects on the trust scores of other agents.

Application designers can leverage the characteristics of trust scores to incentivise participation and contribution to the integrity of and trust in their application. Assuming agents that optimise the trust scores of their documents to maximise their trustworthiness, agent behaviour is influenced depending on which trust score is chosen by the application designer.

For an agent, the main question regarding the optimisation is the following: “Which document or documents should a new document reference, such that its own trust scores, i.e. trustworthiness or gravitas, are high.” With a high trustworthiness of the just published document, the agent has already reached its goal. With a high gravitas of the just published document, the agent can include a reference to another document such that that document’s trustworthiness is increased.

This allows an application designer to choose the application’s trust scores such that contributing to the integrity and trust in the document graph leads to high trustworthiness or gravitas of newly created documents. Subsequently, an optimisation rule can be deducted from the choice of trust scores. That is, which resources an agent should reference in a new resource to maximise the trust scores. Such references are thus a specific type of application-based links that is referred to as score-based links.

General score optimization

For an agent, the main question regarding score optimization is the following: “How can I make my documents regarded as trustworthy (enough), i.e. high authority score, for other agents to rely on them?” With hub- and authority-based trust scores, however, it is not possible to have a newly published document with high authority score as no incoming links exist. Instead, with a high gravitas of the just published document, the agent would be able to increase the trustworthiness of already existing documents referenced from the new document. When the gravitas of a new document is maximised, it contributes most to the trustworthiness of referenced documents.

Looking at Fig. 5, it is quite obvious that documents with an in-degree of zero, that reference all the other documents transitively, exhibit a high hub score. Subsequently, those documents contribute most to the authority score of the documents deeper in the DAG.

A document graph with trust scores calculated on its transitive closure. The top value indicates the hub score, the bottom value indicates the authority score of a document. For a new document, it remains the question which existing documents to reference best.

For an agent publishing a new document, this means that the new document should reference as much other documents as possible. This way, it contributes most to the authority score of the referenced documents, and especially, to the referenced documents of the agent itself. The easiest way to achieve this, is by simply referencing all documents with an in-degree of zero from the new document. Thereby, the new document references all documents in the DAG transitively. In other words, all documents in the transitive closure of the document graph are referenced.

However, including references to all documents with an in-degree of zero may not an option, e.g. when considering a large-scale document graph like the Web. The decision problem arises which document or documents to reference best; based on the information available to a particular agent, i.e. the documents that the agent has access to. Similarly, Web applications, e.g. our exemplary forum application from Section 4, may require resources to have a specific shape, where to number of outgoing links is restricted. For example, an application designer wants to leverage the integrity preserving nature of Signed URIs but, at the same time, needs to balance this desire with the responsiveness and performance of the web application. To this end, the allowed number of outgoing links is limited to a use-case specific number. As a specific example: Ten links may be handled by the application in under 2 seconds which may be the maximum of response delay desired for the application.

As brute-forcing the optimal choice is infeasible at large-scale and simply not allowed in restricted application environments, we propose the Additional Reachability Strategy (ARS) as an optimization heuristic to iteratively choose the next best document to reference. Assume a new document v being added to the document graph. Iteratively, ARS chooses as next reference the existing document which provides the highest number of not yet reachable documents from v. Implicitly, already reachable vertices are excluded since they yield an additional reachability of zero. In Section 9.2, we compare ARS to other potential heuristic strategies and show that ARS outperforms it competitors.

Evaluation

We evaluate three aspects of our approach:

First, we evaluate our proposed approach to trust scores which induce agent behaviour towards optimising such scores. We assess the strategy that an agent would choose to maximise the trustworthiness of its own documents, given an approach to trust scores. We evaluate three possible options: Degree-based, PageRank-based and hub- and authority-based trust scores. We show that hub- and authority-based trust scores provide a dominant strategy and thus an incentive for agents to contribute to the document graph and its integrity preservation.

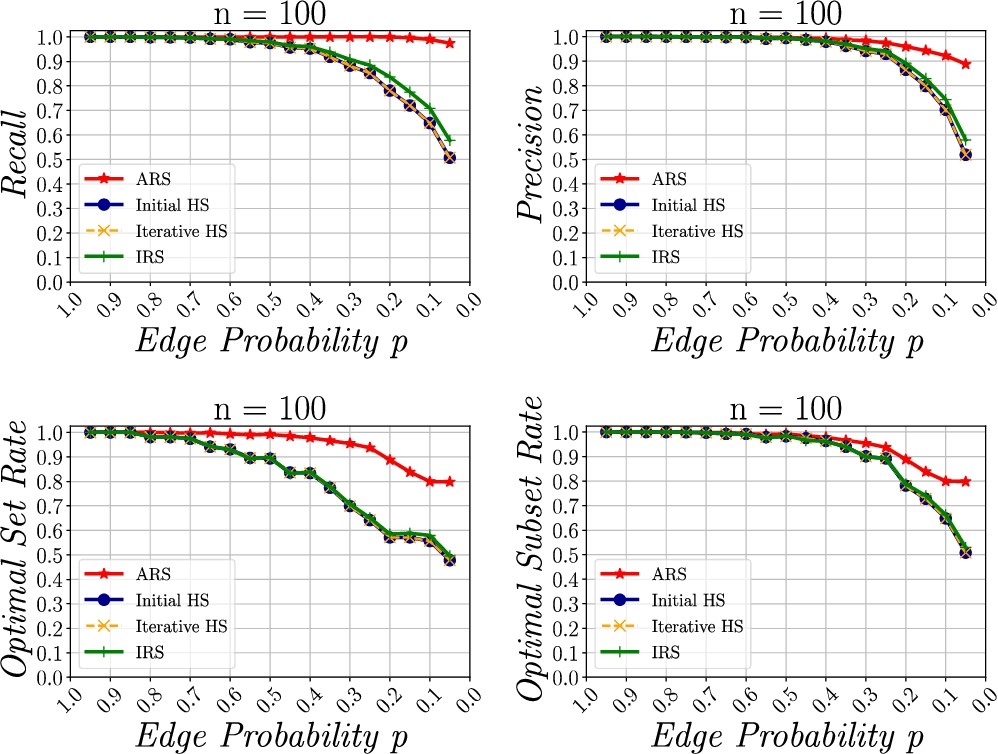

Second, we evaluate our proposed Score Optimisation Strategy for finding the next best link for a new document. We assess four decision heuristics, i.e. the Initial Max Hub Score (Init HS), the Iterative Max Hub Score (Iter HS), the Initial Reachability (IRS), and the Additional Reachability (ARS). We compare the performance of these heuristics using four performance metrics, i.e. precision, recall, optimal set rate and optimal subset rate. We show that ARS exhibits significantly better performance than other heuristic score optimization strategies.

Third, we evaluate our approach in our exemplary application environment. We assess how the authority score distribution within the document graph changes when iteratively documents are deleted until the graph becomes weakly disconnected. We further examine extend to which the score distribution is able to recover upon reconnection of the graph. We introduce a model of our exemplary application to simulate an agent network in a social Web application based on the Watts–Strogatz model [50]. We present a method to simulate agent activity within the social application, i.e. creating and interlinking resources. We then artificially delete resources until the graph becomes disconnected. The agents make an effort to connect the graph again and continue their usual behaviour. We define two evaluation parameters which yield five simulation cases. On one hand, we examine the influence of access control, i.e. the case where resources in an interaction channel are visible and thus linkeable only be members of the interaction channel, and transparency, i.e. the case where resources in an interaction channel are visible and thus linkeable to any agent. On the other hand, we examine the influence of different link structures within the document graph, i.e. a case where only content-based links exist, a case where only score-based links exist and a case where both types of links exist. We show that authority scores in a document graph are able to recover from the graph becoming weakly disconnected, even in access-controlled environments.

Choice of trust scores based on the induced agent behaviour

Trust scores allow agents to determine which resources are more trustworthy than others to be relied on for usage in an application context. Subsequently, agent behaviour may be influenced towards optimising these trust scores. Trust scores can thus serve as a mechanism to application designers for formulating incentives regarding agent behaviour. The evolution of the document graph underlying an application can thus be described based on the induced agent behaviour.

We first present three options for trust scores. Then, we define our methodology for evaluation. And lastly, we provide the results of our analysis.

Mathematical modeling of trust scores

Three approaches to trust scores are examined: The first approach produces simple degree-based trust scores. The second approach is based on the PageRank [39]. The third approach is adapting the notion of hubs and authorities of [32]. The approaches to trust scores can be summarized by their motto as follows:

Degree-based approach: “Integrity is trust.” PageRank: “Highly trusted documents reference highly trusted documents.” Hubs and Authorities: “Trust is based on the interconnectedness of documents.”

An overview of the iterative formula for modelling trust scores according to the three approaches is provided in Table 1.

Overview of mathematical modelling of trust scores (∗ iterative formula after convergence)

Overview of mathematical modelling of trust scores (∗ iterative formula after convergence)

Degree-based approach When integrity preservation is the only factor influencing trust in information resources, the quantitative measures of resource integrity may be interpreted directly as trust scores. The in-degree of a document is then interpreted as the trustworthiness of the document. The higher the in-degree of a document, the more trustworthy that document is. The in-degree of a document is not affected by the in-degree of referencing documents but only the existence of the reference. Thus, the gravitas of a document is equivalent to the existence of a reference to another document, i.e. it is 1 if a reference exists and 0 if there no reference exists.

PageRank As an alternative use case for the PageRank, [39] explicitly states that the PageRank may be an indicator of the trustworthiness of a web page. The idea is adopted for documents published on the Web with self-verifying representations. The interpretation of the PageRank as a trust score can be formulated as “Highly trusted documents reference highly trusted documents”. When a highly trustworthy document references another document, the gravitas of that document leads to additionally increased trust in the referenced document. Highly trusted documents contribute with their gravitas to the trustworthiness and thus gravitas of other documents. In the PageRank-based approach, the trustworthiness as well as the gravitas of a document is determined by its PageRank.

Recall the definition of the PageRank (Definition 8). From the iterative calculation formula, the converged PageRank

The notion of a random surfer and its damping factor d is not directly compatible with the interpretation of the PageRank as a trust score. While for the popularity of a Web page it may be acceptable to assume that an agent randomly jumps to another web page, it is hard to find a reasonable interpretation of the random surfer regarding trust scores. Hence, when interpreting the PageRank as a trust score, the damping factor should specified such that the random surfer is prohibited from jumping to a random document in the graph. Then, the random surfer is only allowed to jump from a sink document to a random document in the graph. Since this is done with uniformly distributed probability, a base value is distributed to all documents in the graph. This can be interpreted as a base trust level that is present in the document graph, even on documents that have no incoming links. This way, the problem of sinks is solved and the PageRank calculation results in reasonable values. Accepting the interpretation of the random surfer as a base trust level, the PageRank may serve as a trust score. The PageRank reflects the trustworthiness of a document and the sum of its gravitas at the same time.

Hubs and authorities Adapting the notion of hubs and authorities of [32], hub score and authority score can be interpreted as trust scores for documents published on the Web with self-verifying representations.

Recall the definition of hubs (Definition 6) and authorities (Definition 7): A document’s hub score is the sum of all the authority scores of information resources it points to. The hub score of a document can be interpreted as to how much it contributes to preserving the integrity of the document graph, receiving more weight from highly ranked authorities. The hub score of a document can hence be interpreted as its gravitas. A document’s authority score is the sum of all the hub scores of the information resources that point to it. The authority score of a document can be interpreted as to how well its integrity is preserved by the document graph, receiving more weight from highly ranked hubs. The authority score of a document can hence be interpreted as its trustworthiness.

Obviously, hub scores and authority scores are coupled: The trustworthiness of a document is determined by the gravitas of documents referencing that document. Inversely, the gravitas of a document is determined by the trustworthiness of other documents referenced by that document.

This interpretation of gravitas may seem counterintuitive at first glance because a document’s own trustworthiness does not seem to influence its gravitas. Yet, the hub score of a document is dependent on the authority score of the referenced documents which in turn is dependent on the hub score of the documents referencing them. The hub score of a document is implicitly dependent on other hubs referencing the same documents. Hence, highly ranked hubs exhibit a considerable overlap in the set of documents they link to. The interdependence of the scores therefore fosters interconnectivity among the documents.

Referencing a lot of documents alone does not automatically make a document a highly ranked hub. It is referencing a lot of documents that other hubs reference as well. Only if a document is well interconnected with the other documents, it is able to aggregate the enough gravitas to contribute considerably to another documents trustworthiness. Similarly, a document referenced by one hub is also not automatically a highly ranked authority. Only if it is referenced by more other hubs, its authority score will increase. [32] describes the behavior of hubs mutatis mutandis as “Hubs pull together authorities and allow to rule out unrelated pages of large in-degree”.

The interpretations of gravitas and trustworthiness based on hubs and authorities can thus be refined as follows: A documents gravitas is dependent its own interconnectedness and the interconnectedness of the documents it links to. A documents trustworthiness is dependent on the interconnectedness of the documents it is linked by.

We assess the suitability of the proposed approaches by formal analysis. In particular, we examine the extend to which the modeling of the trust scores induces agent behaviour that contributes to the integrity of the document graph. We assume that an agent tries to maximise the trustworthiness of its own documents and, to this end, includes or excludes links to other documents in new documents it creates. This includes the assumption of rational agents.

The goal of the analysis to find a strategy for an agent to maximise the trustworthiness of its documents. We first try to find a dominant strategy by formally analysing the calculation formula of each trust score approach. Recall Section 2.6.2: A strategy is called dominant if it defines an optimal behaviour for an agent regardless of the behaviour of other agents. As all the agents have the same action set, and thus same strategies, finding a dominant strategy for an agent results in finding a Nash equilibrium in dominant strategies. In the case that we are not able find a Nash equilibrium in dominant strategies that way, we try to find any Nash equilibrium using a Game Theoretic approach: The iterative interaction of agents is represented as a repeated game where agents may collaborate to achieve an overall better outcome for oneself.

Results

Degree-based approach “Integrity is trust.”

This approach relies fundamentally on Definition 10: Trustworthiness is only based on the incoming references of a document. Because the trust score of a document is not tied to its out-degree, there exists no score-inherent incentive to include outgoing links in a document. This means that there is no score-inherent incentive to reference other agents documents and to contribute to the integrity and trust of the document graph. Yet, from a game theoretic perspective, agents may want to collaborate and reference each others documents to increase the trustworthiness of their documents.

Formal Analysis. An agent can only influence the trustworthiness of its own documents by documents it has created itself as

The payoff matrix of the degree-based example (

Game Theoretic Assessment. Despite the lack of a score-inherent incentive to contribute to the integrity of the document graph, agents may want to collaborate and mutually reference each others documents to increase the trustworthiness of their documents. Consider the following example of two agents. It suffices to examine a two agent game as a game with more agents would just result in parallel pairwise versions of this game. In a nutshell, the game resembles a tit for tat between two agents. Each agent has already published a document, i.e. agent 1 published document 1 and agent 2 published document 2. Each agent is to publish a new document, i.e. agent 1 publishes document 3 and agent 2 publishes document 4. The agents can choose between the same set of strategies when publishing their documents. Strategy O refers to an agent only referencing its own existing document. Strategy B refers to an agent referencing both existing documents. Figure 6 depicts the contribution of the newly published documents to the trustworthiness of documents 1 and 2, i.e.

Consider the following case: The new document of agent 1 references the existing documents of agent 1 and 2, i.e. agent 1 chooses strategy B, while the new document of agent 2 only references the existing document of agent 2, i.e. agent 2 chooses strategy O. The resulting payoff is

Both agents are indifferent between strategies O and B because they receive the same payoff regardless of their own action. Instead, their payoff depends only on the other agent’s strategy. In the stage game, all agents are indifferent regarding their own strategy. Thus, we have no dominant strategy for an agent but found two Nash equilibria

Considering a repeated game, which corresponds to agents repeatedly adding new resources to the document graph. The agents are able to collaborate to achieve an overall better outcome. In a finitely repeated game, it is best to collaborate to achieve the outcome of

Implications on Graph Evolution. It is best for agents to mutually reference each others documents such that the trustworthiness of all documents is increased. In a document graph, this translates to simply referencing all documents transitively. This is achieved by including references to all documents with an in-degree of zero in a newly published document.