Abstract

Maintenance of assets is a multi-million dollar cost each year for asset-intensive organisations in the defence, manufacturing, resource and infrastructure sectors. These costs are tracked through maintenance work order (MWO) records. MWO records contain structured data for dates, costs, and asset identification and unstructured text describing the work required, for example ‘replace leaking pump’. Our focus in this paper is on data quality for maintenance activity terms in MWO records (e.g.

We present two contributions in this paper. First, we propose a reference ontology for maintenance activity terms. We use natural language processing to identify seven core maintenance activity terms and their synonyms from 800,000 MWOs. We provide elucidations for these seven terms. Second, we demonstrate use of the reference ontology in an application-level ontology using an industrial use case. The end-to-end NLP-ontology pipeline identifies data quality issues in 55% of the MWO records for a centrifugal pump over 8 years. For the 33% of records where a verb was not provided in the unstructured text, the ontology can infer a relevant activity class.

The selection of the maintenance activity terms is informed by the ISO 14224 and ISO 15926-4 standards and conforms to ISO/IEC 21838-2 Basic Formal Ontology (BFO). The reference and application ontologies presented here provide an example for how industrial organisations can augment their maintenance work management processes with ontological workflows to improve data quality.

Introduction

Maintenance of assets is a significant cost input for the manufacturing, resources, defence and infrastructure sectors. Maintenance costs typically range between 20–60% of operational expenditure depending on industry and asset type [60]. There has been significant effort in the last decade to move from reactive to preventative and predictive maintenance strategies propelled by developments in sensing, WiFi, cloud computing, and data analytics. However, generating value from analytics using these platforms has often proved challenging [1,6,67]. In part, this is due to the way in which data describing what maintenance work was actually done, to what item, when it was done and what it cost, is captured and stored [9,56] in maintenance work order (MWO) records; that is, as unstructured free text.

MWOs are the equipment equivalent of health records for humans. Tens to hundreds of thousands of MWOs are generated a year on a moderately complex site or asset system. A MWO is an information artifact that is generated in industrial organisations (that are mature enough to maintain a maintenance strategy) to inform technicians that work needs to be done. MWOs can be generated automatically (by a Computerised Maintenance Management System) in the case of preventative maintenance, or manually by a technician in response to an observation or event. These MWO records contain an unstructured text field with a short description of the job (e.g., “replace all damaged idlers” or “inspect / refurb scrapers”). Each record also has structured data fields for a unique work order number, asset functional location, dates for the proposed and actual start and end date of the work, budget and actual costs, as well as fields for metadata about the record such as when it was generated and closed. Computer readability of the unstructured text in MWOs using natural language processing (NLP) is currently an active area of research and development [7,12].

Our focus in this paper is on data quality of the maintenance activity term in the MWO unstructured text field. This activity is described by verbs such as

Data quality of MWOs has been a topic of organisational behaviour research for many years [37,40,64]. However, the problem is unresolved, and data quality is cited as an issue affecting maintenance and warranty management improvement programs [18,33,47]. Labour productivity in maintenance is now a focus of senior management attention [60]. The time taken by experienced maintenance planners and reliability engineers to manually read and process MWOs to get information for their analysis is a significant impediment to improving their productivity [15]. Machine augmentation of this basic task is required.

Our interest is in improving data quality using ontology-based reasoning on MWO unstructured texts pre-processed with natural language processing techniques. Currently, questions such as ‘was pump 101 actually replaced on 12/1/20?’ are resolved manually by a reliability engineer [10,54]. Such analysis requires the reliability engineer to examine MWO records one at a time as described in [3]. However, the reasoning used to answer a number of these questions can be formalised into a set of rules [3]. This opens the door to the use of ontologies as part of the MWO data quality improvement process. The specific focus of this paper is an ontology for maintenance activity terms.
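To illustrate how one such question might be formalised as a rule, the sketch below encodes a single hypothetical check in Python. The rule (an extracted ‘replace’ activity counts as evidence of a replacement only if a material, i.e. parts, cost was charged), the `WorkOrder` fields and the `replacement_is_supported` function are our assumptions for illustration; they are not the rules of [3] or of the ontology presented later.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class WorkOrder:
    """Hypothetical minimal MWO record; field names are illustrative only."""
    order_id: str
    description: str           # unstructured free text
    activity: Optional[str]    # activity term extracted by NLP, if any
    material_cost: float       # actual parts cost


def replacement_is_supported(wo: WorkOrder) -> bool:
    """Hypothetical rule: accept a record as evidence that the item was
    replaced only if its activity is 'replace' and parts were charged."""
    return wo.activity == "replace" and wo.material_cost > 0


claimed = WorkOrder("WO-1", "replace leaking pump", "replace", 0.0)
print(replacement_is_supported(claimed))  # False: no parts were charged
```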

We propose a solution to improve MWO data quality using a reference ontology and an application-level ontology. The reference ontology contains a holistic view of the types of activities conducted by maintenance personnel. Elucidations based on ontological analysis are provided for seven core maintenance activity terms. The application-level ontology uses the reference ontology and real-world MWO data to perform the necessary reasoning tasks for our use case. This ontology examines each MWO and determines if the maintenance activity word used in the MWO is consistent with the other information in the maintenance record.

If the activity described in a MWO’s unstructured text matches the record’s structured data, then we can be more certain that the activity as described actually occurred in practice. This information can then be used with confidence for a number of important downstream analytics tasks, including calculating reliability metrics such as mean-time-to-failure, root cause analysis following major undesirable events, and assessing the effectiveness of maintenance strategy [33,42,43,46].

The paper is organised as follows. Section 2 describes previous ontology development and industry reference data models in the maintenance area and provides details on MWOs and their contents. The use case, including instance data, is presented in Section 3. Section 4 describes steps in the process of identifying seven maintenance activity classes and their elucidations. Ontology design and competency questions are presented in Section 5 and the performance of the ontology is assessed in the evaluation in Section 6. Finally, Section 7 critically examines the performance of the ontology and identifies opportunities for further work.

Background

Maintenance

Maintenance is defined by an International Standard as “the actions intended to retain an item in, or restore it to, a state in which it can perform a required function” [20]. We note from this that the notion of an action or activity is central to the concept of maintenance.

In mature maintenance organisations, each maintainable item has an associated maintenance strategy. The maintenance strategy determines what work should be done, when to do it and at what level in the asset hierarchy it should be performed. Deciding on maintenance strategy is a well-established process based on failure modes and effects analysis and reliability-centred maintenance (RCM). These are described in international standards such as [21,52]. The ‘what strategy?’ decision is informed by factors such as the safety, environmental, production or cost consequences of an item’s failure, whether loss of function is technically and cost effective to observe, and how long it takes from observation of deterioration to the failure event. RCM decision logic guides the user towards one of the following strategies: a) use based, also known as fixed interval restoration/repair/inspect strategy, b) strategy based on condition monitoring and inspections, c) failure-finding and d) run-to-failure [17]. Colloquially, these are classified into preventative and corrective maintenance strategies, with

Review of previous ontology work on maintenance activity terms

There is increasing interest in automating tedious data processing tasks that require the input of experienced people, and in using ontologies to automatically process equipment maintenance and failure data for decision support, so as to standardise the decisions made when analysing the data [15,33,42,46]. Interest is high in industries such as defence, aerospace, oil and gas, and manufacturing, and this is compelling groups to come together to consider standardisation as they recognise the scale of the task and the challenge of going it alone. In 2021, ISO/IEC 21838-1 for Top Level Ontologies [26] was issued alongside ISO/IEC 21838-2 [27] describing Basic Formal Ontology (BFO). Standardisation of two further top-level ontologies, the Descriptive Ontology for Linguistic and Cognitive Engineering (DOLCE) and TUpper, is in progress. This standardisation process is a key plank in industry acceptance of ontologies. There is a surge of activity to develop industry-relevant, domain-specific ontologies that are aligned to one of these top-level ontologies. Of relevance to this paper is the work of the Industrial Ontology Foundry (IOF) to publish a domain ontology for manufacturing aligned to BFO. The IOF promotes a principles-based approach to the design of ontologies for design, maintenance, supply chain, production, and lifecycle management of equipment. A number of the terms and relations necessary to support description of maintenance activities are already formalised in the IOF [59].

While a number of papers have been published on maintenance ontologies, these have, in the main, been developed using a top-down approach. Some have tried to capture maintenance in general [30,35] while others have focused more specifically on various processes in maintenance such as maintenance work management [19], failure modes and effects analysis [16], fault classification from warranty records [48], modelling maintenance strategies [38], and criticality assessment [44]. Dealing with real-life maintenance data has not been the primary motivation for ontology development. Instead, conceptual models have dominated, with limited use cases selected to support and illustrate specific reasoning and conceptualisation challenges. There have been some exceptions. For example, in the automotive industry, Rajpathak and General Motors have used natural language processing and ontologies to extract data from hundreds of thousands of unstructured warranty records [46,48,49], and there is similar interest in the analysis of safety data from accident databases [57]. In the oil and gas industry, there is emerging work on using ontology patterns to ingest instance data from MWOs and failure modes and effects analysis records into ontologies for use in reasoning about the effectiveness of maintenance strategy for a complex operating asset [32].

When looking for previous ontology work specifically on maintenance activity classes, we located a list of 16 activity classes drawn from a data set of 654 activities/tasks for a wire harness assembly case study [41]. However, these activities are defined using natural language phrases such as ‘laying cable flat’ and ‘inserting into the tube or sleeve’ and are specific to that case study. The activity classes identified are not generic to maintenance.

Maintenance work order records

A MWO record is generated every time work is done, or needs to be done, on an asset. Hundreds of thousands of these MWOs are generated every year in asset-intensive organisations, and they are the only digital record of maintenance events over the life of an asset: what work was done, when, to what item, and how much it cost. The fields in a MWO record of primary interest to reliability engineers are described in Table 1. The functional location is the asset identifier, the work order description contains the unstructured text of interest in our work, the work order type identifies whether the work is preventative or corrective, and there are fields for the start and end dates and various budget and actual costs. Other fields, not shown, include metadata for the record.
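The Table 1 fields can be represented as a simple record type. The sketch below is a minimal Python illustration only; the class name `MaintenanceWorkOrder`, its field names and the preventative/corrective enumeration are assumptions chosen for readability, not a CMMS schema, and the example values are invented.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum
from typing import Optional


class WorkOrderType(Enum):
    PREVENTATIVE = "preventative"
    CORRECTIVE = "corrective"


@dataclass
class MaintenanceWorkOrder:
    """Illustrative MWO record holding the fields discussed in Table 1."""
    work_order_number: str
    functional_location: str        # asset identifier
    description: str                # unstructured text, e.g. 'replace leaking pump'
    order_type: WorkOrderType
    actual_start: date
    actual_finish: Optional[date]   # None while the order is still open
    budget_cost: float
    actual_labour_cost: float
    actual_material_cost: float

    def duration_days(self) -> Optional[int]:
        """Calendar days between actual start and finish, if finished."""
        if self.actual_finish is None:
            return None
        return (self.actual_finish - self.actual_start).days

    def total_actual_cost(self) -> float:
        return self.actual_labour_cost + self.actual_material_cost


# Invented example values, for illustration only.
wo = MaintenanceWorkOrder("WO-0001", "FL-PUMP-01", "inspect / refurb scrapers",
                          WorkOrderType.PREVENTATIVE, date(2020, 3, 2),
                          date(2020, 3, 4), 500.0, 320.0, 150.0)
print(wo.duration_days(), wo.total_actual_cost())  # 2 470.0
```

Note that the record carries no field for the item, the activity or the reason for the work; those have to be recovered from `description`.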

Examples of other fields of interest in MWO records

The reader will note from Table 1 that there are no dedicated fields for key information such as “what specific item needs work?”, “what work needs to be done?” and “why does it need to be done?”. The answers to these questions have to be inferred from a 4–8 word phrase captured in the ‘Work description’ field. A typical example, drawn from [13], is ‘change out leaking engine’. This phrase is illustrative of MWOs in general in that it contains an activity word ‘change out’, a state or problem word ‘leaking’ and an item identifier ‘engine’. Work to develop pipelines that use natural language processing to separate the words in these MWOs into classes is very active [7,13,42,46,55,62]. Once extracted, the information in these activity, item, and state fields is being used for visualisation and analysis to support a number of maintenance and reliability decisions [3,48,53,54]. However, one challenge for all users is the large number of terms in all these classes resulting from synonyms, misspellings, different tenses, abbreviations, and jargon.
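To make the activity/state/item split concrete, the sketch below tags a description by greedy longest match against a tiny hand-built lexicon. This is a deliberate simplification and not the approach of the cited pipelines, which use trained models; the `LEXICON` entries and the `tag` function are hypothetical.

```python
from typing import Dict, List, Tuple

# Hypothetical lexicon mapping token sequences to entity classes.
LEXICON: Dict[Tuple[str, ...], str] = {
    ("change", "out"): "Activity",
    ("replace",): "Activity",
    ("inspect",): "Activity",
    ("leaking",): "State",
    ("damaged",): "State",
    ("engine",): "Item",
    ("pump",): "Item",
}
MAX_LEN = max(len(key) for key in LEXICON)


def tag(description: str) -> List[Tuple[str, str]]:
    """Greedy longest-match tagging; unknown tokens are skipped."""
    tokens = description.lower().split()
    spans: List[Tuple[str, str]] = []
    i = 0
    while i < len(tokens):
        # Try the longest candidate first so 'change out' is not split.
        for n in range(min(MAX_LEN, len(tokens) - i), 0, -1):
            key = tuple(tokens[i:i + n])
            if key in LEXICON:
                spans.append((" ".join(key), LEXICON[key]))
                i += n
                break
        else:
            i += 1  # no lexicon entry starts here
    return spans


print(tag("change out leaking engine"))
# [('change out', 'Activity'), ('leaking', 'State'), ('engine', 'Item')]
```

A fixed lexicon like this breaks down on exactly the synonyms, misspellings and abbreviations noted above, which is why trained models are used in practice.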

Work on defining classes for types of maintenance

Lists of maintenance activity terms from international standards

In this work we draw on two international standards, ISO 14224 (2016) and ISO 15926-4, for their maintenance activity terms and definitions. The terms, definitions, and their alignment between the two standards are shown in Table 2. The following sections briefly introduce the two standards.

The ISO 14224 standard (Petroleum, petrochemical and natural gas industries – Collection and exchange of reliability and maintenance data for equipment) [23] is an influential international standard originally developed for the oil and gas industry but now widely used in other process and heavy industry sectors. This standard was originally developed in the early 1980s due to work by the oil and gas sector seeking to standardise maintenance and failure data collection for an Offshore Reliability Data handbook called OREDA [58]. Data quality is a key consideration in OREDA because the data in this handbook is so widely used by industry for safety-critical design decisions [50]. ISO 14224 has been through 5 editions and contains widely-used lists for equipment hierarchies, failure modes, mechanisms and causes, and a set of maintenance activity terms. We list the activity terms and their definitions from ISO 14224 in Table 2.

ISO 15926-4 activity class hierarchy

The ISO/TS 15926-4 Standard (Industrial automation systems and integration – Integration of life-cycle data for process plants including oil and gas production facilities – Part 4: Initial reference data) is a data model developed for the engineering design community particularly in the process sector. It includes a number of reference data models for activities, rotating equipment, static equipment, instrumentation, units of measure, valves etc. In the activity data model there are 1795 activity terms organised into a deep taxonomy (7 levels) from a superclass set of 22 terms. These terms are: acting, absorbing, affirming, behaving, being, choosing, competing, consigning, directing, disjoining, drawing, happening, occurring, passing-letting, process, pushing, reacting, reducing, refusing, relieving, squeezing, watching. Maintenance activity terms relating specifically to interactions with equipment such as

Mapping of activity super-class terms from ISO/TS 15926-4 (industrial automation systems and integration – integration of life-cycle data for process plants including oil and gas production facilities – part 4: initial reference data) showing their maintenance-related subclass terms

The descriptions for maintenance activities drawn from ISO 14224 and ISO 15926-4 are outlined in Table 2. In these descriptions we can see there are two motivations for a maintenance activity. The first is to ‘change (or restore) the state’ of the item. The second is a ‘discovery’ process to determine if work is required. Under the ‘change of state’ notion, either: a) the state of the item is known to be deteriorated and needs restoration, e.g., for repair, adjust, and corrective replacement; or b) work is required as a result of pre-determined strategy at a predetermined interval regardless of the state of the item, e.g., preventative replacement, fixed interval calibration, test and service. Under the ‘discovery’ notion, the state of the item is unknown, but a problem is suspected. The state of the item needs to be determined, e.g., by a diagnostic activity such as a test or inspection.

However, we note that these two standards do not agree on several of the term definitions or the same set of terms. For example, the terms

Classification workflow capturing logic to distinguish between maintenance activity terms.

To move forward in the presence of this partial agreement on terms and their definitions in the ISO 14224 and 15926-4 Standards, we have captured the general concepts in Table 2 in the classification workflow shown in Fig. 1. In this workflow, diamonds contain questions for the user to answer and, depending on the yes/no answer, an inference about the nature of the activity is shown in a parallelogram. In developing this workflow, we focus on information of potential value to reasoning, as follows:

Is the activity initiated by a preventative maintenance strategy or is it corrective maintenance?

Is the item performing its desired function?

Does the activity involve an action that restores function?

Does the activity change the function and/or capability of the item?

Is the state of the item known or unknown?
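One way to read these questions is as a decision function over a few yes/no facts about an activity. The sketch below is hypothetical: Fig. 1 is not reproduced here, so the question order, the `ActivityContext` and `classify` names, and the output labels (taken from the ‘change of state’ and ‘discovery’ notions described above) are our assumptions rather than the published workflow.

```python
from dataclasses import dataclass
from typing import Optional

# Output labels paraphrase the notions in the text; they are not class names.
CHANGE_OF_FUNCTION = "change of function or capability"
PREDETERMINED = "change of state: pre-determined strategy"
DISCOVERY = "discovery: determine the item's state"
RESTORATION = "change of state: restoration"
UNCLASSIFIED = "unclassified"


@dataclass
class ActivityContext:
    preventative: bool                # initiated by a preventative strategy?
    item_functioning: Optional[bool]  # None when the item's state is unknown
    restores_function: bool           # does the action restore function?
    changes_function: bool            # does it change function/capability?


def classify(ctx: ActivityContext) -> str:
    """Hypothetical ordering of the workflow questions."""
    if ctx.changes_function:
        return CHANGE_OF_FUNCTION
    if ctx.preventative:
        # Pre-determined work is done at an interval regardless of state.
        return PREDETERMINED
    if ctx.item_functioning is None:
        # A problem is suspected but the state is unknown.
        return DISCOVERY
    if not ctx.item_functioning and ctx.restores_function:
        return RESTORATION
    return UNCLASSIFIED


print(classify(ActivityContext(False, False, True, False)))
# change of state: restoration
```

Checking the preventative question before the state question reflects the statement that pre-determined work is carried out regardless of the item's state.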

A corpus of +800,000 unstructured MWO descriptions from the rail, process and mining sectors was processed using NLP to identify activity words used by maintenance and operations personnel (this is described in Section 4.1.1). The use case for demonstrating reasoning using this ontology is real-world industrial data for a centrifugal pump system in a process plant. The general subunit breakdown of a centrifugal pump system is given in Table 4, while Table 5 contains a set of MWOs for a single functional location for a single pump in a process plant from 2012–2020. There are hundreds of other similar pumps in this facility. Columns 2–3 and 6–8 in Table 5 are representative of a data set a reliability engineer would normally work with. These fields are usually of most interest to the reliability engineer and are often the most readily available, as reports downloadable as CSV files from the computerised maintenance management system (CMMS). The first column contains an ID to assist us with discussion of the data set and its analysis. The second column contains a date; in this case we have selected the date on which the work was started. The third column contains the unstructured text describing the work and is subject to natural language processing. The sixth column describes the work order type as either corrective or preventative, and the final two columns contain the labour and material costs. Referring to Table 1, only one of labour hours or labour costs is required; in this case the records include labour cost, while material cost is equivalent to the actual parts cost.
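Because these reports are typically downloaded as CSV files, a first processing step can be as simple as reading the export into typed records. The sketch below uses Python's standard `csv` module; the column headers, the `load_work_orders` function and the two sample rows are invented for illustration and will differ from any real CMMS export.

```python
import csv
import io
from datetime import date
from typing import Dict, List

# Invented sample export; headers and values are illustrative only.
SAMPLE_EXPORT = """\
ID,Start date,Description,Work order type,Labour cost,Material cost
1,2020-01-06,replace leaking pump,Corrective,400.00,900.00
2,2020-02-10,78W inspect pump,Preventative,120.00,0.00
"""


def load_work_orders(text: str) -> List[Dict[str, object]]:
    """Parse a CSV export into dictionaries with typed values."""
    records: List[Dict[str, object]] = []
    for row in csv.DictReader(io.StringIO(text)):
        records.append({
            "id": int(row["ID"]),
            "start": date.fromisoformat(row["Start date"]),
            "description": row["Description"].strip(),
            "order_type": row["Work order type"].strip().lower(),
            "labour_cost": float(row["Labour cost"]),
            "material_cost": float(row["Material cost"]),
        })
    return records


for record in load_work_orders(SAMPLE_EXPORT):
    print(record["id"], record["order_type"], record["description"])
```

Only the description column needs NLP; the remaining columns can be parsed directly and later compared with what the text says.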

Equipment subdivision for a centrifugal pump-motor system into subunits and maintainable items

Example of a data set of maintenance work orders for a single pump over the period of 2012–2020. Abbreviations used: D&E – driver and electrical, PT – power transmission, PU – pump unit, C&M – control and monitoring, LS – lubrication system, P&V – piping and valves

Table 5 contains two columns not found in the CMMS. The fourth and fifth columns are calculated and capture the outputs of processing the unstructured text with an NLP pipeline. This processing depends on lexical normalisation [63] and on annotation of a large corpus of MWOs [62] from fixed and mobile plant assets, which has been used to train a deep learning model. The outputs from this processing that are of interest to the work in this paper are the extraction of the

Over the 7.5-year period there have been 36 MWOs raised against the pumping system in this functional location. The largest share (16 out of 36) relate to the pressure switch, which is part of the Control and Monitoring subunit. The pump has been replaced once, in 2017, at a material cost of

The MWOs include both preventative and corrective work. Preventative work is associated with a semi-structured form in the unstructured text field, usually starting with a periodic interval such as ‘78W’ for 78 weeks. Preventative work passes through the planning and scheduling process in the maintenance work management system [31]. Corrective work orders are urgent and need to be actioned immediately by shift maintainers, whereas preventative work orders go into the maintenance management process to be dealt with by maintenance planners and then scheduled into a weekly maintenance plan. In general, there is a desire to move towards maintainers spending their time on preventative work, with corrective work orders generated from preventative inspections. Corrective work is costly and disruptive and typically represents a failure of strategy, except where it is associated with a deliberate run-to-failure strategy.
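The interval prefix on preventative work orders can be picked out with a regular expression. In this sketch only the ‘W’ (weeks) unit is attested in the text above; the other unit letters (D, M, Y) and the `interval_prefix` function are assumptions. A detected prefix is best treated as a hint to cross-check against the structured work order type field, not as a substitute for it.

```python
import re
from typing import Optional, Tuple

# Leading interval such as '78W'; unit letters other than W are assumed.
INTERVAL_PREFIX = re.compile(r"^\s*(\d+)\s*([DWMY])\b", re.IGNORECASE)


def interval_prefix(description: str) -> Optional[Tuple[int, str]]:
    """Return (count, unit) if the description starts with an interval code."""
    match = INTERVAL_PREFIX.match(description)
    if match is None:
        return None
    return int(match.group(1)), match.group(2).upper()


print(interval_prefix("78W inspect pump"))      # (78, 'W')
print(interval_prefix("replace leaking pump"))  # None
```

The trailing `\b` stops a token such as ‘78Wk’ from being read as an interval, at the cost of missing that spelling if a site uses it.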

Finally, of note is that some MWOs (e.g., 6, 12, and 19 in Table 5) have

There are many data models to describe how asset systems can be decomposed into a functional or physical breakdown. A detailed review of these is beyond the scope of this paper. Our taxonomy is informed by the ISO 14224 [23] hierarchy of maintainable item, subunit, and equipment unit. The maintainable items have been grouped into subunits as shown in Table 4. This structure mirrors one commonly found in the asset registers and computerised maintenance management systems of our industry partners. We use the information on where maintainable items sit in the hierarchy to support reasoning in the activity ontology.
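The item-to-subunit lookup needed for this reasoning can be sketched as a reverse index over the hierarchy. The subunit names below follow the abbreviations in the Table 5 caption; apart from the pressure switch, which the use case places in the Control and Monitoring subunit, the item assignments and the `build_item_index`/`subunit_of` functions are hypothetical placeholders rather than the contents of Table 4.

```python
from typing import Dict, List, Optional

# Subunit -> maintainable items for one equipment unit (illustrative).
CENTRIFUGAL_PUMP_SYSTEM: Dict[str, List[str]] = {
    "Driver and electrical": ["electric motor"],     # hypothetical
    "Power transmission": ["coupling"],              # hypothetical
    "Pump unit": ["impeller", "mechanical seal"],    # hypothetical
    "Control and monitoring": ["pressure switch"],   # per the use case
    "Lubrication system": ["oil reservoir"],         # hypothetical
    "Piping and valves": ["isolation valve"],        # hypothetical
}


def build_item_index(hierarchy: Dict[str, List[str]]) -> Dict[str, str]:
    """Invert subunit -> items into item -> subunit, rejecting duplicates."""
    index: Dict[str, str] = {}
    for subunit, items in hierarchy.items():
        for item in items:
            key = item.lower()
            if key in index:
                raise ValueError(f"{item!r} is listed under {index[key]!r} and {subunit!r}")
            index[key] = subunit
    return index


def subunit_of(item: str, index: Dict[str, str]) -> Optional[str]:
    return index.get(item.strip().lower())


ITEM_INDEX = build_item_index(CENTRIFUGAL_PUMP_SYSTEM)
print(subunit_of("Pressure switch", ITEM_INDEX))  # Control and monitoring
```

Raising on duplicates enforces the tree assumption that each maintainable item sits in exactly one subunit.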

In this section, we present a reference ontology containing the types of activities that are typically performed in maintenance. This reference ontology contains elucidations for seven core maintenance activities. Given the inconsistencies in the existing standards presented in Section 2.6, we decided to extract reference-level activity terms from NLP analysis of +800,000 real-world MWO records. We then use the existing standards to distinguish between these terms and inform their elucidations. The methods that we use to extract the activity terms and group them according to seven core activities are described in Section 4.1. Elucidations for these activity terms, and the ontological choices underpinning them, are provided in Section 4.2. The purpose of the reference ontology is to provide a set of terms and loose definitions to be used in future ontological analysis by the wider research community. We demonstrate the use of this reference ontology as an input to our application-level model described in Section 5.

Developing notions for maintenance activity terms

Step 1: Activity term identification

To generate a holistic view of the types of activities performed by maintenance technicians, we used a corpus of +800,000 maintenance work orders from heavy mobile equipment, fixed plant, and rail assets. The work order data is similar to the sample data shown in Table 5. Initially we explored using off-the-shelf part-of-speech (POS) taggers (NLTK and spaCy) to extract verbs automatically. However, these taggers failed to extract verbs in most cases, due in part to the informal grammar inherent in these short maintenance work descriptions [7,12]. There are currently no off-the-shelf POS taggers pre-trained or configured for maintenance work orders. To overcome this limitation, we applied named entity recognition to our corpus using a pre-trained neural model fine-tuned on annotated maintenance work descriptions extracted from these asset types. This pre-trained entity recognition model, created by the UWA NLP-TLP group [66], is the product of 3 years of research effort involving schema development [13], lexical normalization [2], and collaborative annotation [62] of MWOs. A description of the end-to-end pipeline is available [61]. The entity recognition model is fine-tuned on a set of 6,000 high-quality, gold-standard, annotated MWOs and extracts notions such as

The processing steps are described below.

Apply the pre-trained NER model to our corpus of +800,000 unstructured maintenance work orders, filtering the extracted classes to tokens tagged as ‘Activity’ (i.e., activity terms). This produces a list of more than 470,000 activity terms including duplicates, synonyms and misspellings, of which 10,860 are unique terms.

Perform frequency analysis over the extracted activity terms to identify high-frequency occurrences.

Retain the highest-frequency activity terms that together account for 99% of all occurrences; these constitute our primary collection of terms. Because of the very long tail of low-frequency words, this results in a list of only 109 terms. In the final list of 109 terms the top two terms by frequency of occurrence (

Examine the resulting list of 108 terms, manually identify misspellings and run a script to 1) normalise words such as replaced, replacing, repl, relace and replacement to replace, 2) change past tense to present, and 3) remove the ‘re’ prefix so that, for example, reweld, re-seal and resecure become weld, seal and secure. The resulting 108 terms are discussed below.
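The steps above can be sketched in Python as three small functions. Several assumptions apply: the NER output is assumed to be BIO-tagged tokens (labels such as ‘B-Activity’, ‘I-Activity’, ‘O’), whereas the text states only that tokens are tagged ‘Activity’; the variant and prefix tables contain only the examples given in the text; and the function names are hypothetical. Following the order of the steps, normalisation is applied after the frequency cut-off. The prefix table is an explicit list rather than a generic ‘re’ rule, because a generic rule would wrongly turn ‘replace’ and ‘repair’ into ‘place’ and ‘pair’.

```python
from collections import Counter
from typing import Dict, Iterable, List, Tuple


def bio_to_spans(tokens: List[str], tags: List[str]) -> List[Tuple[str, str]]:
    """Group BIO-tagged tokens into (text, label) spans."""
    spans: List[Tuple[str, str]] = []
    current: List[str] = []
    label = ""
    for token, tag in zip(tokens, tags):
        if tag.startswith("I-") and tag[2:] == label:
            current.append(token)          # continue the open span
            continue
        if current:                        # close any open span
            spans.append((" ".join(current), label))
        if tag.startswith(("B-", "I-")):   # open a new span
            current, label = [token], tag[2:]
        else:                              # 'O': outside any entity
            current, label = [], ""
    if current:
        spans.append((" ".join(current), label))
    return spans


# Only the examples given in the text; a real table would be much longer.
VARIANTS: Dict[str, str] = {
    "replaced": "replace", "replacing": "replace", "repl": "replace",
    "relace": "replace", "replacement": "replace",
}
RE_PREFIXED: Dict[str, str] = {"reweld": "weld", "re-seal": "seal", "resecure": "secure"}


def normalise(term: str) -> str:
    t = term.strip().lower()
    t = VARIANTS.get(t, t)
    return RE_PREFIXED.get(t, t)


def top_by_coverage(terms: Iterable[str], coverage: float = 0.99) -> List[str]:
    """Most frequent terms, kept until they cover `coverage` of all occurrences."""
    counts = Counter(terms)
    total = sum(counts.values())
    kept: List[str] = []
    running = 0
    for term, n in counts.most_common():
        if running >= coverage * total:
            break
        kept.append(term)
        running += n
    return kept


tags = ["B-Activity", "I-Activity", "B-State", "B-Item"]
spans = bio_to_spans(["change", "out", "leaking", "engine"], tags)
print([text for text, lab in spans if lab == "Activity"])  # ['change out']

raw = ["replaced"] * 60 + ["replace"] * 30 + ["inspect"] * 8 + ["reweld"] * 2
top = top_by_coverage(raw, coverage=0.95)
print(list(dict.fromkeys(normalise(t) for t in top)))  # ['replace', 'inspect']
```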

Step 2: Synonym grouping

A number of high-frequency activity terms are immediately apparent on examination of the NLP pipeline results.

The team drew on subject matter experts to group the remaining words into the maintenance activity classes described in the paragraph above, which align with terms proposed by ISO 14224 and ISO 15926-4 shown in Table 2. In performing this synonym grouping, consideration was given to a) the semantics of the activity word, b) mention of the class as part of maintenance strategy development as described by reliability-centred maintenance (RCM) philosophy and standards [39,52], and c) association of the verb with either preventative or corrective work. This work produced eight synonym groups: seven maintenance-related activity terms and one group associated with supporting activities. The resulting list of maintenance activity terms and their semantic variants is presented in Table 6.
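Once terms have been assigned to groups, term frequencies can be rolled up to group level, in the spirit of the bracketed counts in Table 6. In the sketch below the grouping is illustrative only (apart from ‘change out’, taken from the example in Section 2, the synonyms are hypothetical), the counts are invented, and the check that a term belongs to at most one group is our assumption about how such a grouping should behave. `group_frequencies` is a hypothetical helper.

```python
from collections import Counter
from typing import Dict, List

# Illustrative grouping; the published groups are listed in Table 6.
SYNONYM_GROUPS: Dict[str, List[str]] = {
    "replace": ["replace", "change out", "swap"],
    "inspect": ["inspect", "check"],
    "supporting activities": ["clean up"],
}


def group_frequencies(term_counts: Dict[str, int],
                      groups: Dict[str, List[str]]) -> Dict[str, int]:
    """Sum term counts per synonym group; ungrouped terms go to 'unassigned'."""
    index: Dict[str, str] = {}
    for group, terms in groups.items():
        for term in terms:
            if term in index:
                raise ValueError(f"{term!r} is in both {index[term]!r} and {group!r}")
            index[term] = group
    totals: Counter = Counter()
    for term, count in term_counts.items():
        totals[index.get(term, "unassigned")] += count
    return dict(totals)


counts = {"replace": 50, "change out": 10, "inspect": 20, "check": 5, "paint": 1}
print(group_frequencies(counts, SYNONYM_GROUPS))
# {'replace': 60, 'inspect': 25, 'unassigned': 1}
```

Reporting an explicit ‘unassigned’ bucket makes it easy to see how much usage the grouping has not yet covered.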

Maintenance activity terms and semantic variants identified using NLP from the top 1% of words by frequency of occurrence (shown in []) in the +800,000 corpus

We propose

We propose

We consider

We have removed

We have moved

Supporting activities are not the primary focus of this work, but we have defined an initial set of supporting activity categories into which the corresponding verbs in Table 6 have been grouped. These are

Combining the grouping of NLP-extracted terms with the standards literature identified a set of core maintenance activity types for this ontology. In addition, we identified distinctions between these activity types that, in practice, have not been captured in the definitions in the standards. Although these seven core concepts are fixed, the label used to describe each one can be adjusted to suit a specific organisation by editing the annotations.

Table 7 provides a subject matter expert natural language description, a semi-formal description and an elucidation for each of the seven maintenance activity terms. The elucidations refer to concepts defined by BFO, IOF Core, and the maintenance state ontology [68] using

Subject Matter Expert (SME) natural language descriptions, semi-formal descriptions and elucidations for maintenance activity terms

In this section, we discuss the elucidations given in Table 7 and the ontological choices underpinning these elucidations. These elucidations draw on our previous work on

First, we describe the elucidation for the

The semi-formal description for a

An

An

An

The final maintenance activity type is a

Reference ontology implementation

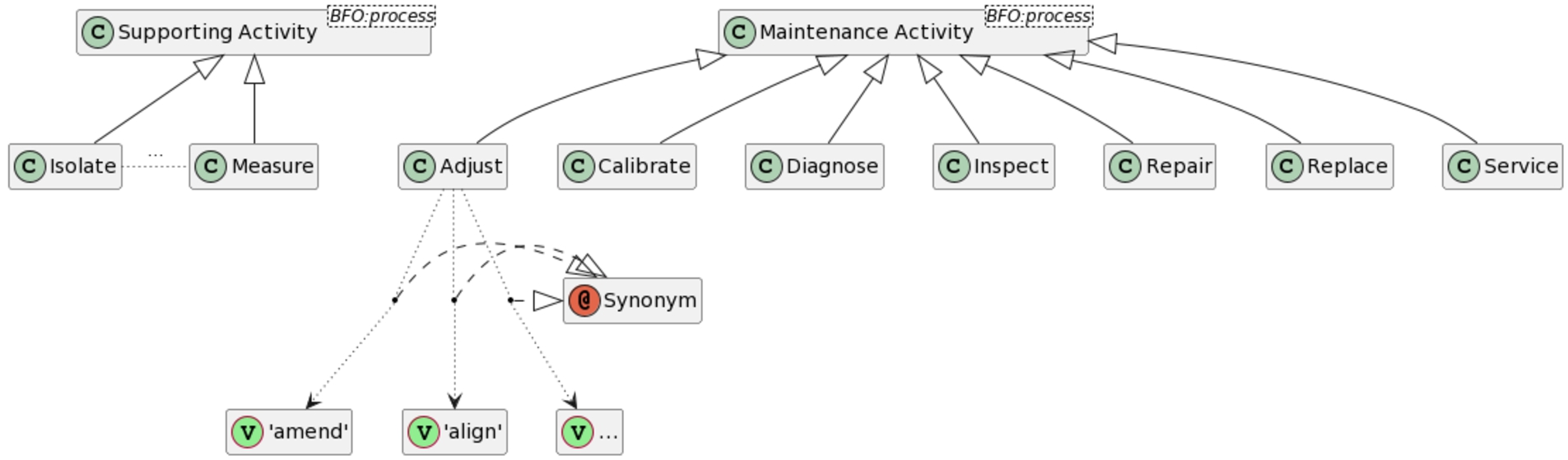

A conceptual diagram of the maintenance activity reference ontology with example links to synonyms (synonyms are listed in Table 6).

The reference ontology is implemented in OWL and is available at Possibly the maintenance community within a specific organisation which could tailor the synonym allocations through data profiling on their own MWO records, for example.

In this section we define an application-level ontology to answer a set of competency questions (Section 5.1) related to the use case described in Section 3. The purpose of this model is to meet a real industrial need while making use of the reference-level terms and ontological analysis described in Section 4. As discussed, the quality of maintenance work order data is an unsolved problem, and good-quality data is essential for downstream statistical tasks such as mean-time-to-failure analyses. However, maintenance engineers rarely spend time checking the quality of this data, as there is no widely accepted, repeatable way to perform this task. This model uses SWRL rules and ontological classification to provide a structured and repeatable way for organisations to assess the quality of maintenance work orders that reflects the logic currently used by engineers performing this task in industry.

Competency questions

The application-level ontology is designed to perform a specific reasoning task to meet a real industrial need. The work order data described in our use case (Section 3) contains seven distinct activity words. These are

Is the information in a MWO record indicating a

Is the information in a MWO record indicating a

Is the information in a MWO record indicating a

What is the likely activity type for a record in which no discernible activity term was recorded?

Application-level ontology implementation

This ontology was developed making use of the reference ontology described in Section 4. The ontology consists of eight modules, as shown in Fig. 3. A description of each of these modules is contained in Table 8. This ontology can also be found on GitHub at

We have developed a set of modular, inter-operable ontologies aligned to a top-level ontology (BFO) guided by the processes described in [65]. The diagram in Fig. 3 should be read from top to bottom, first

Import structure of application-level ontology for assessing MWO data quality.

Purpose of each module in the application-level ontology for assessing MWO data quality

A conceptual diagram of information content entities for work order records in the application-level ontology.

At the core of this application-level ontology is an

As shown in Table 5, a

In this ontology,

Where a

Where a

Finally, we are choosing not to link the

A conceptual diagram of NLP identified properties for work order descriptions in the application-level ontology.

As discussed, the goal of this application-level ontology is to check whether the activity described in the MWO’s unstructured text field matches the information in the rest of the ontology. To achieve this, we store the

Note that the OWL implementation provided does not use annotation properties, because of errors that occurred when using the tools. Repeatedly saving the file caused the annotation properties to shift into data or object properties in ways that broke the outcomes of the reasoning. The authors therefore defined them as data or object properties to avoid these recurring issues. We assume that other tools would not exhibit the same issues, and that the properties could then be defined as annotation properties, as described here.
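Before the NLP-extracted activity can be reconciled with the ontology, the extracted verb has to be mapped to a reference activity class. The sketch below illustrates that normalisation step with a synonym lookup. The synonym entries and the function name `normalise_activity` are hypothetical placeholders, not the terms and synonyms elucidated in the reference ontology; a record with no recognised verb yields `None`, which corresponds to the records for which the ontology must infer the activity itself.

```python
from typing import Optional

# HYPOTHETICAL synonym table (surface verb -> reference activity class).
# The actual terms and synonyms are those of the reference ontology in Section 4.
SYNONYMS = {
    "replace": "Replace",
    "change": "Replace",
    "renew": "Replace",
    "repair": "Repair",
    "fix": "Repair",
    "inspect": "Inspect",
    "check": "Inspect",
}


def normalise_activity(nlp_verb: Optional[str]) -> Optional[str]:
    """Map an NLP-extracted verb to an activity class.

    Returns None when no verb was extracted or the verb is not a known
    synonym; such records are left for the ontology to classify.
    """
    if not nlp_verb:
        return None
    return SYNONYMS.get(nlp_verb.strip().lower())


print(normalise_activity("Change"))  # Replace
print(normalise_activity(None))      # None
```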

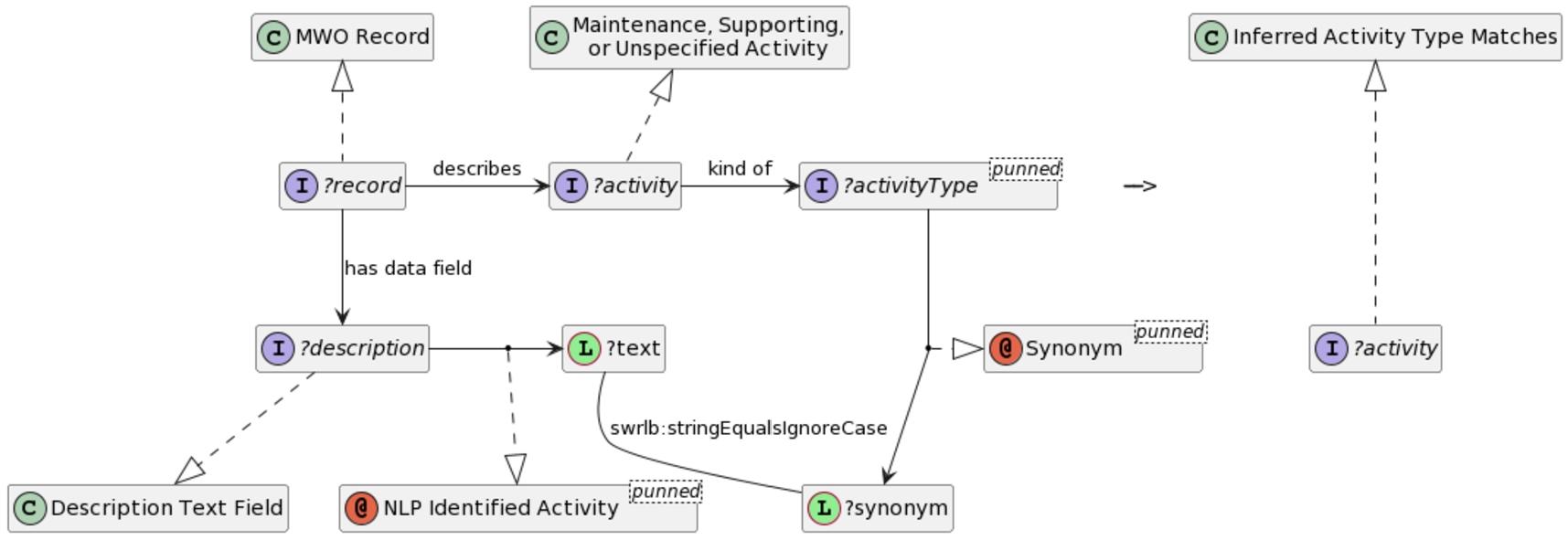

SWRL rule reconciling NLP extracted activity term with the ontology. Elements representing variables have names beginning with a question mark (‘?’).

Using the inferred maintenance activity, we can then compare the NLP-identified activity with the result of the workflow in Fig. 1; the corresponding rules are discussed in Section 5.3. If there is no
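The comparison between the NLP-identified activity and the workflow result can be pictured as assigning a status marker to each activity. The sketch below is a plain-Python illustration under our own assumptions: the status strings, the `compare` function, and the treatment of disjunctive results (such as repair or replace) are hypothetical and are not a transcription of the SWRL rules.

```python
from typing import FrozenSet, Optional


def compare(nlp_activity: Optional[str], inferred: FrozenSet[str]) -> str:
    """Return a status marker for one work-order activity.

    `inferred` holds one class for a concrete classification, or several
    for a disjunctive one such as {"Repair", "Replace"}.
    """
    if not inferred:
        return "unclassified"      # no rule fired for this record
    if nlp_activity is None:
        return "no NLP term"       # nothing in the text to cross-check
    if nlp_activity not in inferred:
        return "non-match"         # text disagrees with the other fields
    # The term is among the inferred classes; certain only if it is the sole one.
    return "match" if len(inferred) == 1 else "uncertain"


print(compare("Replace", frozenset({"Replace"})))            # match
print(compare("Repair", frozenset({"Repair", "Replace"})))   # uncertain
```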

To realise the logic contained in the workflow in Fig. 1, we need to know the type of equipment being worked on (or, more specifically, which subunit from Table 4 the item fits into). In the MWO structured data, there is a functional location field (a

A conceptual diagram of items and subunits in the application-level ontology.

The only structured information in the MWO record that indicates the item being acted upon is the

Thus far, we have shown how we model the structured information from the work orders as

The conceptual diagram in Fig. 8 shows how we model the inference of the activity type and its relation to a MWO record. In the figure, our

A conceptual diagram of maintenance work order execution processes and activities in the application-level ontology.

The actual maintenance activity classification is inferred using SWRL rules that capture the logic of the workflow shown in Fig. 13. To support this inference, there are several supporting elements, including additional classifiers and rules, that surface information used by other rules or for subsequent querying. The primary rules and supporting elements are captured in the Maintenance Activity Classification Rules module, described in Table 8.

There are two main categories of additional activity classifiers: intermediate classifiers, and final classifiers. The latter correspond to classifications inferred at the leaf nodes of the workflow (refer Fig. 1), while the former may be inferred at non-leaf nodes. Furthermore, some of the final classifiers are intended for querying purposes (namely those of the

The intermediate classifiers are based on the subsets of activity classes they encompass and include:

While the

The final classifiers include a pair of disjunctive classifiers, which approximate the decision logic as closely as possible when the MWO records and the explicit knowledge in the ontology do not provide enough information for a complete classification. In addition, marker classes indicate the status (i.e., match, non-match, uncertain) of the classification result. These include:

Additional equipment classifiers

For ease of writing the SWRL rules, some additional classifiers are used to identify particular subsets of equipment subunits required by the rules; these were identified while capturing the expert knowledge used by the classification rules. The classes are quite general and apply orthogonal classifications without restricting the primary subunit classification. As such, they should be broadly applicable to other categories of equipment or, at the very least, to classes of pump other than centrifugal pumps. This should limit the number of classes required when defining activity classification rules for broader classes of equipment than those illustrated here. For example, it does not matter if a particular class of pump does not include a subunit of a particular type; more fine-grained distinctions would be needed only if there were a contradiction in the types of subunit relevant to particular actions. While it is possible to state the class expressions directly in the rules, named classes are more flexible, convenient, and manageable. The classes include:
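The idea of named, orthogonal equipment classifiers can be sketched as sets of subunits that a rule tests against, rather than repeating the subunit list in every rule. In the sketch below, the classifier names `HasConsumables` and `HasSettings` and their memberships are hypothetical; the subunit names only loosely follow ISO 14224-style pump subunits and are not the classes defined in the ontology.

```python
from typing import Dict, Set

# HYPOTHETICAL named classifiers: each groups the subunits that some rule
# cares about. Subunit names loosely follow ISO 14224-style pump subunits.
EQUIPMENT_CLASSIFIERS: Dict[str, Set[str]] = {
    "HasConsumables": {"Lubrication system", "Pump unit"},
    "HasSettings": {"Control and monitoring", "Lubrication system"},
}


def classifiers_for(subunit: str) -> Set[str]:
    """Return every named classifier whose member set contains `subunit`.

    A subunit may fall under several classifiers (they are orthogonal) and
    its primary subunit classification is left unchanged.
    """
    return {name for name, members in EQUIPMENT_CLASSIFIERS.items()
            if subunit in members}


# A rule can then test one named class instead of listing subunits.
print(sorted(classifiers_for("Lubrication system")))  # ['HasConsumables', 'HasSettings']
```

Adding another class of equipment would then mean extending the member sets, not rewriting each rule that refers to them.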

Item material and service costs

The decision logic for classifying the activities relies quite heavily on the material costs of the work that was performed. These costs are proxies used to evaluate whether work was performed and whether it was of the expected type. According to the workflow of Fig. 13, there are two different material costs that are evaluated

Of course, the item cost and service cost information is not available in the current data. However, this information could be derived from other sources, from data profiling, or by other means of estimating the costs associated with activities related to equipment. If available, such information can be incorporated, without modifying the rules, to improve the granularity of the activity classification. Since we do not currently have this detailed information, we assume that the current data is indicative of the maintenance activities performed on this organisation's centrifugal pumps and set default values accordingly: the material item cost is set at $150 and the item service cost at $1.
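To make the role of the two default values concrete, the sketch below places a record's material cost into one of three bands using the $150 item cost and $1 service cost given above. The band names, the `cost_band` function, and the use of inclusive comparisons are assumptions of this illustration; the exact comparisons made by the workflow rules are not reproduced here.

```python
# Default thresholds taken from the text; whether the comparisons are
# inclusive (>=) is an ASSUMPTION of this sketch.
ITEM_COST = 150.0     # material item cost
SERVICE_COST = 1.0    # item service cost


def cost_band(material_cost: float,
              item_cost: float = ITEM_COST,
              service_cost: float = SERVICE_COST) -> str:
    """Place a work order's material cost in one of three assumed bands."""
    if material_cost >= item_cost:
        return "item"      # enough to have bought a whole item
    if material_cost >= service_cost:
        return "service"   # some parts or consumables, less than an item
    return "none"          # no material cost recorded


print(cost_band(40.0))                    # service
print(cost_band(100.0, item_cost=80.0))   # item
```

Passing an item-specific `item_cost` refines the outcome without changing the function itself, mirroring the point that better cost information could be supplied without modifying the rules.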

Relying on material costs is unlikely to be the most accurate way to identify replacements in general, so the possibility of avoiding them is discussed in Section 7.2.

SWRL rules

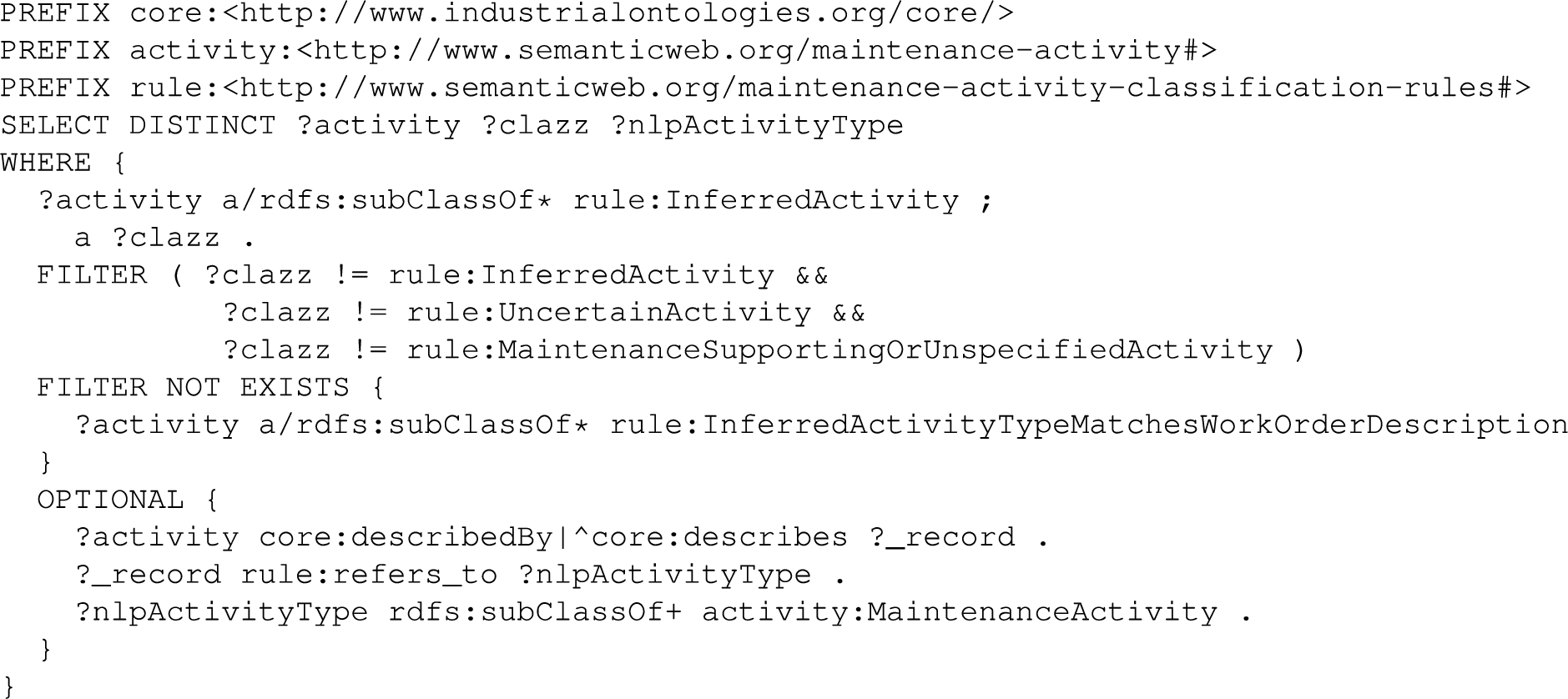

In total there are 13 rules for classifying activities according to the leaf nodes of the workflow (Fig. 13), 4 additional rules inferring intermediate classifications, and 2 rules for final comparison of classification to the NLP Identified Activity type. Figure 9 illustrates a simple rule for identifying the corrective maintenance branch of the workflow.
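A SWRL rule has an antecedent (body) and a consequent (head): when the body holds for some individuals, the head is asserted. The sketch below mimics that pattern in Python for a corrective-action rule. The `WorkOrder` fields are hypothetical stand-ins for the structured MWO fields, and the antecedent used here (a positive labour cost and a corrective maintenance type) is our reading of the workflow, not a transcription of Fig. 9.

```python
from dataclasses import dataclass, field
from typing import Set


@dataclass
class WorkOrder:
    # HYPOTHETICAL field names standing in for structured MWO fields.
    labour_cost: float
    maintenance_type: str                       # e.g. "corrective" or "preventive"
    inferred: Set[str] = field(default_factory=set)


def corrective_action_rule(wo: WorkOrder) -> bool:
    """If the antecedent holds, add the consequent class; report whether it fired.

    Like a SWRL rule, this only ever adds a classification and never retracts one.
    """
    if wo.labour_cost > 0 and wo.maintenance_type == "corrective":
        wo.inferred.add("CorrectiveAction")
        return True
    return False


wo = WorkOrder(labour_cost=120.0, maintenance_type="corrective")
corrective_action_rule(wo)
print(wo.inferred)  # {'CorrectiveAction'}
```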

Rule for inferring the Corrective Action classification based on the information in the MWO record.

Intermediate classifications cannot be fully relied upon because some activity classes fall under both the corrective and preventative maintenance categories. This application ontology does not address the ontological question of whether the activity individual is a corrective or a preventative occurrence; it merely uses the classifications as indicators. Therefore, the actual maintenance type of the associated MWO record is used to ensure that the final classifications follow the correct path of the tree where activity classes of either category are involved. Capturing this check in a rule also makes it easier to reuse the pattern in other, more complex, rules. Figure 10 illustrates a subsequent terminal rule that identifies the activity as repair or replace, alongside a marker classification indicating that it is uncertain as it cannot fully distinguish between the
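The sketch below illustrates the shape of such a terminal rule: it checks the record's actual maintenance type directly and, when it fires, returns a disjunctive class together with an "uncertain" marker. The function name, its parameters, and the cost condition that triggers it are hypothetical assumptions for illustration only; the conditions in the rule of Fig. 10 may differ.

```python
from typing import FrozenSet, Optional, Tuple

REPAIR_OR_REPLACE = frozenset({"Repair", "Replace"})


def repair_or_replace_rule(
    maintenance_type: str,
    material_cost: float,
    service_cost: float = 1.0,
    item_cost: float = 150.0,
) -> Optional[Tuple[FrozenSet[str], str]]:
    """HYPOTHETICAL terminal rule returning (disjunctive class, marker).

    The record's own maintenance type is checked directly, rather than an
    intermediate classifier, because some activity classes may be either
    corrective or preventative.
    """
    if maintenance_type != "corrective":
        return None
    # ASSUMED condition: materials were used, but too little to be sure a
    # whole item was bought, so repair and replace cannot be told apart.
    if service_cost <= material_cost < item_cost:
        return REPAIR_OR_REPLACE, "uncertain"
    return None


print(repair_or_replace_rule("corrective", 60.0))
```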

Rule inferring the

The rule for the final cross-check for matching the activity classification is shown in Fig. 11.

Rule inferring the match between a concrete activity classification and the NLP Identified Activity type.

SPARQL Query for retrieving the activities that did

The query identifies all the activity individuals that have an inferred activity classification, a
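The SPARQL query itself is not reproduced in this text. As a stdlib stand-in, the sketch below applies a comparable selection over plain Python records, assuming the query retrieves activities whose inferred classification differs from the NLP-identified activity. The record layout, the key names `id`, `nlp` and `inferred`, and the toy records are hypothetical.

```python
from typing import Any, Dict, List

Record = Dict[str, Any]


def non_matching(records: List[Record]) -> List[str]:
    """IDs of records whose inferred activity class differs from the NLP term.

    Records lacking either value are skipped, as a SPARQL basic graph
    pattern would skip individuals missing one of the required triples.
    """
    return [
        r["id"]
        for r in records
        if r.get("inferred") is not None
        and r.get("nlp") is not None
        and r["inferred"] != r["nlp"]
    ]


# Toy records for illustration only.
records = [
    {"id": "wo1", "nlp": "Replace", "inferred": "Replace"},
    {"id": "wo2", "nlp": "Repair", "inferred": "Replace"},
    {"id": "wo3", "nlp": None, "inferred": "Inspect"},
]
print(non_matching(records))  # ['wo2']
```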

We verify this ontology using the OOPS! Ontology Pitfall Scanner [45]. Issues identified by the scanner with a severity of “important” or “critical” have been considered and addressed where appropriate for this ontology.

We validate this ontology by 1) comparing the results of classification of the activity term in each work order by the ontology and subject matter expert (the agreement is either yes, no or partial), and 2) using a confusion matrix to examine the performance of the ontology and subject matter expert in identifying a data quality issue. A data quality issue is identified when the activity term in the original text of the work order disagrees with the result of ontology and/or subject matter expert’s reasoning. The results of the classification activity are shown in Table 9 and the confusion matrix is shown in Table 10.

In order to assess performance, a ground truth for the activity term needs to be established. This was done through consultation with reliability engineers whose job is to manually review these work orders and extract activity terms to support their analysis, as discussed in Section 1. This reasoning process is described below.

When reviewing maintenance work order records, these subject matter experts use the data in the work order fields, their intuition and other explicit information (e.g., parts and labour costs) to determine what activity was actually performed. An example of the logic used by engineers to assess if the description provided by the author of the maintenance work order is consistent with data in other fields of the work order is shown in Fig. 13. First, the item that work was performed on is identified. This might be at the equipment level, e.g., pump, or at the maintainable item level, e.g., pressure switch. Items at the maintainable item level can be located in their respective sub-classes using standardised asset class hierarchies such as that shown in Table 4.
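The first step of the engineers' reasoning, locating the item in an asset class hierarchy, amounts to a lookup from a mentioned item to either the equipment level or a subunit. The sketch below shows that lookup; the entries in `ITEM_TO_SUBUNIT` and `EQUIPMENT_TERMS` are illustrative placeholders, not the contents of Table 4.

```python
from typing import Optional

# ILLUSTRATIVE placeholders for a maintainable-item -> subunit hierarchy;
# the mapping actually used is the one in Table 4.
ITEM_TO_SUBUNIT = {
    "pressure switch": "Control and monitoring",
    "coupling": "Power transmission",
    "impeller": "Pump unit",
    "mechanical seal": "Pump unit",
}
EQUIPMENT_TERMS = {"pump", "centrifugal pump"}


def locate(item: str) -> Optional[str]:
    """Return 'Equipment' for an equipment-level mention, the subunit for a
    known maintainable item, or None if the item is not in the hierarchy."""
    key = item.strip().lower()
    if key in EQUIPMENT_TERMS:
        return "Equipment"
    return ITEM_TO_SUBUNIT.get(key)


print(locate("Pressure Switch"))  # Control and monitoring
print(locate("Pump"))             # Equipment
```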

Classification workflow capturing the logic used by a reliability engineer when interpreting text in maintenance work order records from commonly available information.

Next, a check is made to see whether the labour cost field has a value greater than zero dollars. This is necessary to determine whether the proposed activity occurred. While reliability engineers are interested in both cases, our interest from a data quality perspective is in MWOs that are actioned. The next decision point is determining if the
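The labour-cost check described above partitions the records into those that were actioned and those that were not. A minimal sketch follows; the record layout and key names are hypothetical, and treating a missing or empty labour cost as zero is an assumption of this illustration.

```python
from typing import Any, Dict, List, Tuple

Record = Dict[str, Any]


def _labour(record: Record) -> float:
    # A missing or empty labour cost is treated as zero (ASSUMPTION).
    return record.get("labour_cost") or 0.0


def split_by_labour(records: List[Record]) -> Tuple[List[Record], List[Record]]:
    """Split records into (actioned, not_actioned) on labour cost > 0."""
    actioned = [r for r in records if _labour(r) > 0]
    not_actioned = [r for r in records if _labour(r) <= 0]
    return actioned, not_actioned


done, not_done = split_by_labour([
    {"id": "wo1", "labour_cost": 250.0},
    {"id": "wo2", "labour_cost": 0.0},
    {"id": "wo3"},
])
print([r["id"] for r in done])  # ['wo1']
```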

Table 9 is used to identify data quality issues in each record. For each record, the table shows a) the activity term in the original MWO unstructured text, b) the classification by a subject matter expert (SME), and c) the activity classification inferred by the ontology and rules. The fifth column shows the agreement between the SME and the ontology as Yes (Y), No (N) or Partial (P). Partial captures the situation in which the ontology identifies two possible activities, one of which was identified by the SME, for example

Results of activity classification by the ontology and rules comparing the ontology outputs with the SME classification showing agreement as yes (Y), no (N) and partial (P). The table also includes outputs from the NLP on the original record including activity, item and corrective and preventative (C, P) status

A summary of the data quality issues identified by the ontology is shown as a confusion matrix in Table 10. Of the 36 records in this pump MWO data set from 2012–2020, 20 records (55%) were identified by both the ontology and the expert as having data quality (DQ) issues. A further 14 records were identified by both as having no DQ issues, and for the remaining 2 records the ontology and the expert disagree. An analysis of the maintenance activity class identified by the ontology compared with the class described in the original (NLP-processed) record is shown in Table 11.
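The confusion-matrix tally and the overall agreement can be computed as in the sketch below, treating the expert's judgement as ground truth and "has a DQ issue" as the positive class. The function names are ours; the only figures used are the counts reported above (20 records where both flag an issue, 14 where neither does, 36 in total).

```python
from collections import Counter
from typing import Iterable, Tuple


def confusion(pairs: Iterable[Tuple[bool, bool]]) -> Counter:
    """Tally (expert_flags_issue, ontology_flags_issue) pairs into TP/TN/FP/FN.

    Positive means "has a data quality issue", with the expert as ground truth.
    """
    counts: Counter = Counter()
    for expert, ontology in pairs:
        if expert and ontology:
            counts["TP"] += 1
        elif not expert and not ontology:
            counts["TN"] += 1
        elif ontology:
            counts["FP"] += 1   # ontology flags an issue the expert does not
        else:
            counts["FN"] += 1   # expert flags an issue the ontology misses
    return counts


def agreement(tp: int, tn: int, total: int) -> float:
    """Fraction of records on which expert and ontology agree."""
    return (tp + tn) / total


print(round(agreement(20, 14, 36), 3))  # 0.944
```

The summary above does not state how the two disagreeing records split between false positives and false negatives, so precision and recall are not computed here.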

Confusion matrix: true positive – the expert and the ontology both identify a data quality (DQ) issue. True negative – the expert and the ontology both identify there is no DQ issue. False positive – the ontology identifies a DQ issue but the expert does not. False negative – the expert identifies a DQ issue but the ontology does not

A comparison of the text in the original record (rows) with the classification provided by the ontology (columns) for each activity class

Is the information in a MWO record indicating a

All original records with terms

Is the information in a MWO record indicating a

This is discussed in Section 7.1.

Is the information in a MWO record indicating a

There is high agreement between the expert and ontology with the original text on the activity

What is the likely activity type for a record in which no discernible activity term was recorded?

This is discussed in Section 7.1.

What worked well?

What could be improved?

What insights did we get into maintenance activity classification?

One insight gained in this work is the value of determining if maintenance activities are corrective or preventative. This information is contained in the

Another insight is the importance of an item’s state in distinguishing between activity types. To realise this, we link our elucidations to our previous work on

In our use case and this application-level ontology, we do not have an item’s state information available to us, as condition and performance data are captured in a different database rather than in the MWO record. However, with the increased emphasis on condition monitoring of assets and process instrumentation of systems, this information could become available, although it would require data from databases beyond the CMMS. Future work should look at how to use this process and condition data to assist with the decisions made in this application-level ontology. Digital twins will need to incorporate changes in state resulting from maintenance activities into their models.

Choice of upper ontology

In Section 4.2 we discussed the limitation we encountered with using BFO for the notion of

The authors of this paper are part of the IOF Maintenance Working Group, and this relationship was the primary reason for selecting BFO as the upper ontology. Our interest is in understanding how this upper ontology performs on a range of use cases requiring reference and application ontologies and real industry data, and in using these experiences to encourage further development of BFO. We are also interested in how other ontologies perform and are also active in using the emerging ISO/CD TR 15926-14 ontology for maintenance data [16].

Conclusion

There is significant opportunity to use ontologies as an integral part of the data cleaning process for maintenance work order records. This paper focuses specifically on data quality checks on what maintenance activity was performed based on information contained in the MWO. Understanding these maintenance actions on a specific piece of equipment is analogous to a doctor being able to determine all the procedures and prescriptions a patient has had from historical medical records. In doing so, we cannot afford to rely only on the words used by the data generator to describe the

The generation of instance data for the reasoning in the application-level ontology is dependent on having a suitable NLP pipeline. Work in NLP for MWOs is an active area of research. While the pipeline used here is state of the art for extracting

The ontology community has a tendency to regard processes as failures if they perform less than perfectly. For improving the data quality of MWOs, however, the task is so large and laborious, and the consequences of poor data quality so significant yet so difficult to quantify, that any automation and standardisation is a worthwhile improvement. This work is a stepping stone towards the use of ontologies for cleaning and processing maintenance work orders in industry, a necessary step in the digitisation journey that modern industry players are now on.