Abstract

News consumption has shifted over time from traditional media to online platforms, which use recommendation algorithms to help users navigate through the large incoming streams of daily news by suggesting relevant articles based on their preferences and reading behavior. In comparison to domains such as movies or e-commerce, where recommender systems have proved highly successful, the characteristics of the news domain (e.g., high frequency of articles appearing and becoming outdated, greater dynamics of user interest, less explicit relations between articles, and lack of explicit user feedback) pose additional challenges for the recommendation models. While some of these can be overcome by conventional recommendation techniques, injecting external knowledge into news recommender systems has been proposed in order to enhance recommendations by capturing information and patterns not contained in the text and metadata of articles, and hence, tackle shortcomings of traditional models. This survey provides a comprehensive review of knowledge-aware news recommender systems. We propose a taxonomy that divides the models into three categories: neural methods, non-neural entity-centric methods, and non-neural path-based methods. Moreover, the underlying recommendation algorithms, as well as their evaluations are analyzed. Lastly, open issues in the domain of knowledge-aware news recommendations are identified and potential research directions are proposed.

Keywords

Introduction

In the past two decades, there has been a shift in individuals’ news consumption, from traditional media, such as printed newspapers or radio and TV news broadcasts, to online media platforms, in the form of news websites and aggregation services, or social media. News platforms use a form of a recommender system to help users navigate through the overwhelming amount of news published daily by suggesting relevant articles based on their interests and reading behavior. Recommender systems have proven successful over time in numerous domains [124], ranging from music [31,106], movies [7,110], or books recommendation [36,62,77], to e-commerce [141,142,149], travel and tourism [15], or research paper recommendation [5].

In comparison to these domains, news recommendation poses additional challenges which hinder a direct transfer of traditional recommendation techniques [132]. Firstly, the relevance of news changes quickly within short periods of time and is highly dependent on the time sensitiveness and popularity of articles [84,120]. Secondly, articles may be semantically related and users’ interests evolve dynamically over time, meaning that it is not trivial to accurately capture the preferences of individual users [120]. Thirdly, common limitations of recommender systems (i.e. the cold-start problem, data sparsity, scalability), are further intensified by the greater item churn of news [39], the fact that usually user profiles are constrained to a single session [44], and that their feedback is typically collected implicitly, from their reading behavior rather than explicitly provided during a session [101]. Additionally, news articles contain a large number of knowledge entities and common sense knowledge, which are not incorporated in conventional news recommendation methods [170].

Enhancing classic information retrieval and recommendation methods with external information from knowledge bases has been proposed as a potential solution for some of the aforementioned shortcomings of recommender systems in the news domain. Knowledge graphs are directed, labeled heterogeneous graphs which describe real-world entities and their interrelations [126]. Knowledge-aware recommender systems inject information contained in knowledge graphs or domain-specific ontologies to capture information and reveal patterns that are not contained directly in an item’s features [66]. In the case of news recommendation, such knowledge-enhanced models have been developed to capture the semantic meaning and relatedness of news, remove ambiguity, handle named entities, extend text-level information with common sense knowledge, discover knowledge-level connections between news, and overcome cold-start and data sparsity issues.

Previous works provide overviews of this field from two directions. On the one hand, surveys such as [66] or [59], focus on knowledge-aware recommender systems applied to a variety of domains, such as movies, books, music, or products. Although a few of the discussed models come from the news domain, none of these works extensively review how external knowledge can be used to enhance news recommendation. On the other hand, a vast number of surveys analyze the news recommendation problem from various angles, including challenges and algorithmic approaches [11,14,47,84,94,95,120,132], performance comparison in online news recommendation challenges [43,44], user profiling [67], news features-based methods [129], or impact on content diversity [114]. However, the focus of these studies is not on the use of external knowledge resources. In contrast to existing studies, this survey focuses on categorizing and examining knowledge-aware news recommender systems, developed either specifically for or evaluated also on the news domain, as a solution for enhancing recommendations and overcoming limitations of traditional recommendation models. The analysis of such systems covers both a review of the algorithmic approaches used for computing recommendations, as well as a comparison of evaluation methodologies and a discussion of limitations and research gaps.

The contributions of the paper are threefold:

We propose a

This survey aims to provide a

We examine the limitations of existing models and open issues in the field of knowledge-aware news recommender systems, and we identify eight potential

The rest of the article is structured as follows. Section 2 introduces recommender systems and outlines challenges specific to the news domain, while Section 3 outlines the methodology used in this survey, including the search strategy, the sources, the inclusion and exclusion criteria, as well as the study execution process. Section 4 covers related work in news and knowledge-aware recommender systems. Section 5 introduces and defines commonly used notations and concepts, and analyses different aspects of knowledge-aware news recommenders. Section 6 classifies and discusses knowledge-aware news recommender systems, whereas Section 7 investigates various evaluation approaches adopted by the different models. Section 8 discusses open issues identified in the field. We close with a short summary in Section 9.

Challenges in news recommendation

Recommender systems consist of techniques that filter information and generate recommendations of items deemed potentially interesting for users, based on their preferences and past behavior, in order to help individuals overcome information overload [136]. User’s preferences are learned using either explicit (e.g. ratings) or implicit (e.g. browsing history) feedback [79]. Recommender systems are generally categorized into collaborative filtering, content-based, and hybrid methods, based on the underlying algorithm. Collaborative filtering systems recommend items liked in the past by users with similar preferences to the current user [1]. In content-based algorithms, the recommendations depend only on the user’s past ratings of items, meaning that the suggested items will have similar characteristics to the ones preferred in the past by the current user [1]. Hybrid models combine one or more types of recommendation approaches to alleviate the weaknesses of a single technique, such as the cold-start problem (which refers to the difficulty in the computation of the recommendations for new items, without ratings, or new users, without a profile) or the over-specialization issue (i.e. the lack of diversity and serendipity in results) [18].

The unique characteristics of news not only distinguish them from items in domains such as online retail, movies, music, or tourism, where recommender systems have already proven successful, but also impede the straightforward application of conventional recommendation algorithms to the task of news recommendation. A large quantity of news is published every day, with articles being continuously updated. Such a

Furthermore, the user’s interests evolve over time as individuals display both

In addition to the previously described challenges, in the news domain, users are usually not required to sign in and create profiles in order to read articles.

Another related challenge is that the users rarely provide explicit feedback in terms of likes and ratings, and, unlike for, e.g., online retail, there is no difference between

Furthermore, users often read multiple news stories in a sequence [132]. Although

News articles often describe events that occur in the world, which can be represented in terms of

Additionally, news recommendations can also be subjected to

Another significant challenge for the news domain is the existence of

Methodology

As aforementioned, this survey aims to provide a comprehensive review of knowledge-aware news recommender systems. The following subsections will describe the methodology used for conducting the study. More specifically, we firstly present our search strategy, including the platforms and queries used to retrieve relevant publications. Afterwards, we discuss the criteria for including and excluding papers from our study, followed by the description of the selection process.

Search strategy

The search strategy of our survey consists in defining a set of queries for retrieving relevant publications from a list of sources. The results are then de-duplicated, as explained in the following paragraphs.

Search queries

We defined two queries, targeting the task of

Search strings used in the search process

Search strings used in the search process

The following bibliographic databases and archives constitute the sources used for the literature search: (i) DBLP1

The results collected from the previously specified sources are then merged and de-duplicated in a threefold process. Firstly, for all the publications retrieved during the keyword-based search, we gather the associated bibtex files produced by each of the digital libraries and store them using the Zotero7

Selection inclusion and exclusion criteria

The publications retrieved during the keyword-based search need to be further filtered in order to eliminate false positives, which are irrelevant for the current survey. Consequently, a pre-defined set of inclusion criteria (Section 3.2.1) are applied to the retrieved papers in two stages, as described in Section 3.2.2.

Selection criteria

The list of inclusion criteria displayed in Table 2 was developed based on the goals of the survey in order to filter out irrelevant publications. Each criterion is composed of both an

Selection process

The study selection process is composed of two phases.8 The corresponding spreadsheets for both phases are available at:

Number of papers in different phases of the selection process

This section gives an overview of surveys published in the areas of news recommendation and knowledge-aware recommender systems.

News recommender systems

Several surveys on news recommender systems and corresponding issues have been conducted. A comparison and evaluation of content-based news recommenders are performed in [11]. Borges and Lorena [14] first provide a high-level overview of recommender systems in general, including similarity measures and evaluation metrics, followed by an in-depth analysis of six models applied in the news domain. A more general overview and comparison of the mechanisms and algorithms used by news recommendation approaches, as well as corresponding strengths and weaknesses, is provided by Dwivedi and Arya [47].

Özgöbek et al. [120] identify the challenges specific to the news domain and discuss twelve recommendation models according to the targeted problems, without considering evaluation approaches. In contrast to these studies, Karimi et al. [84] provide a comprehensive review of news recommender systems, not only by taking into account a large number of challenges and algorithmic approaches proposed as a solution, but also by discussing approaches and datasets used in evaluating such systems, as well as proposing future research directions from the perspectives of algorithms and data, and the aspect of evaluation methodologies.

Li et al. [94] review issues characterizing the field of personalized news recommendation and investigate existing approaches from the perspectives of data scalability, user profiling, as well as news selection and ranking. Additionally, the authors conduct an empirical study on a collection of news articles gathered from two news websites in order to examine the influence of different methods of news clustering, user profiling, and feature representation on personalized news recommendation. More recently, Li and Wang [95] analyzed state-of-the-art technologies proposed for personalized news recommendation, by classifying them according to seven addressed news characteristics, namely data sparsity, cold-start, rich contextual information, social information, popularity effect, massive data processing, and privacy problems. Furthermore, they discuss the advantages and disadvantages of different kinds of data used in personalized news recommendation, as well as open issues in the field [95].

In comparison to the previous general studies, Harandi and Gulla [67] investigate and categorize approaches used for user profiling in news recommendation according to the problems addressed and the types of features used. Additionally, Qin and Lu [129] survey feature-based news recommendation techniques, which they categorize into location-based, time-based (i.e. further classified into real-time and session-based), and event-based methods.

Lastly, Feng et al. [52] conduct a systematic literature review of research published in the area of news recommendation in the past two decades. They firstly classify and discuss challenges from this domain according to the three main types of recommendation techniques. Various recommendation frameworks are then categorized according to application domain, such as social media-based, semantics-based, and mobile-based systems. Even though Feng et al. [52] briefly review a small number of semantic-based recommenders, their analysis is limited to older models, and does not include any of the newer approaches of the past five years. Furthermore, the authors briefly examine evaluation approaches and datasets used, before discussing which of the numerous challenges of news recommendation have been addressed by the surveyed recommenders [52].

Although these surveys provide comprehensive overviews of news recommendation methods, domain-specific challenges, and evaluation methodologies, they do not discuss knowledge-aware models or the latest state-of-the-art recommendation methods. In contrast, our survey focuses solely on news recommender systems that incorporate external knowledge to enhance the recommendations and to overcome the limitations of conventional recommendation techniques.

Knowledge-aware recommender systems

Knowledge graphs, a type of directed heterogeneous networks, describe real-world entities (represented as nodes) and multiple kinds of relations between them (represented as edges), either spanning multiple domains (e.g. Freebase [12], DBpedia [92], YAGO [151], Wikidata [167], Microsoft Satori [10]) or focusing on a particular field (e.g. Bio2RDF [6]) [49,126]. In addition, such graphs can capture higher-order relations connecting entities with several related attributes [66].

This strong representation ability of knowledge graphs has attracted the attention of the research community working on developing and improving recommender systems for several reasons. Firstly, using knowledge graphs as side information in recommendation models can help diminish common limitations, such as data sparsity and the cold-start problem [66]. Secondly, the precision of recommendations can be improved by extracting latent semantic connections between items, while the diversity of results can be increased by extending the user’s preferences taking into account the variety of relations between items encoded in a knowledge graph [59,183]. Another advantage of using knowledge graphs as background information is improving the explainability of recommendations, to ensure trustworthy recommendation systems, by considering the connections between a user’s previously liked items and the generated suggestions, represented as paths in the knowledge graph [183].

Guo et al. [66] provide a detailed review and analysis of knowledge graph-based recommender systems, which are classified into three categories, according to the strategy employed for utilizing the knowledge graph, namely embedding-based, path-based and unified methods. In addition to comparing the algorithms used by the three types of methods, the authors also analyze how knowledge graphs are used to create explainable recommendations. Lastly, the survey clusters relevant works according to their application and introduces the datasets commonly used for evaluation in each category [66].

Recent advancements in deep learning techniques for graph data, in the form of Graph Neural Networks (GNN) [183,196], have given rise to new knowledge-aware, deep recommender systems. Gao et al. [59] are the first to provide a comprehensive overview of Graph Neural Network-based Knowledge-Aware Deep Recommender (GNN-KADR) systems, in which they analyze recommendation techniques, discuss how challenges such as scalability or personalization are addressed, and briefly summarize the domain-specific datasets and metrics used for evaluation, before suggesting a number of directions for future research.

Gao et al. [59] categorize GNN-KADRs depending on the type of graph neural network components used for recommendation. More specifically, graph neural networks are comprised of an aggregator, that combines the feature information of a node’s neighborhood to obtain the context representation, and an updater, which uses this contextual information together with the input information for a given graph node in order to compute its new embedding. According to Gao et al. [59], aggregators are divided into relation-unaware (i.e. the relation information between nodes is not encoded in the context representation) and relation-aware aggregators (i.e. the information contained in different relations is considered in the context representation). The latter category is further split into relation-aware subgraph aggregator and relation-aware attentive aggregator, depending on how the relations in the knowledge graph are modeled in the framework [59]. The first subcategory creates multiple subgraphs for each relation type found in a node’s neighborhood graph, while the second encodes the semantic information contained in the edges of the knowledge graph using weights which measure how related different knowledge triples are to the target node [59]. Similarly, updaters are also categorized into three clusters, namely context-only updaters (i.e. only the node’s context representation is used to produce its new embedding), single-interaction updaters (i.e. both the target node’s current embedding, as well as its context representation are used to obtain its updated representation), and multi-interaction updaters (i.e. different binary operators combine multiple single-interaction updaters), where the first two groups of updaters are more often encountered [59].

GNN-based recommender systems are investigated also by Wu et al. [183], who classify the recommendation models based on whether the models consider the item’s ordering (i.e. general vs. sequential methods) and on the type of information used (i.e. without side information, social network-enhanced, and knowledge graph-enhanced). According to the proposed taxonomy of Wu et al. [183], knowledge-aware models can be found only in the group of general recommender systems. In this category, four representative recommendation frameworks are examined from the aspects of graph simplification, multi-relation propagation, and user integration.

The research commentary of Sun et al. [155] consists of an extensive, systematic survey of recent advancements in recommender systems that use side information. The models, mostly hybrid techniques, are analyzed from two perspectives. On the one hand, Sun et al. [155] categorize the models according to the evolution of fundamental methodological approaches into memory-based and model-based frameworks, where the latter category is further split into latent factor models, representation learning models and deep learning models. On the other hand, the recommender systems are classified based on the evolution of side information used for recommendation, into models using structural data and models using non-structural data. The first group includes information in the form of flat features, network features, feature hierarchies, and knowledge graphs, whereas the second consists of text, image, and video features [155].

In the surveys discussed above, knowledge-aware news recommender systems are rarely analyzed. In comparison to these works, the current survey focuses on the investigation of approaches for injecting external knowledge only into the news recommendation model. To this end, it provides a categorization and an extensive overview of the knowledge-aware recommender systems developed either for or evaluated also in the news domain.

Definitions and categorization

This section firstly introduces and defines commonly used concepts and notations. Afterwards, it provides an overview of knowledge-aware news recommender systems according to multiple criteria.

Definitions

Firstly, a minimal set of concepts and notations referred to in the rest of the article are defined. Bold uppercase characters denote matrices, while bold lowercase characters generally indicate vectors. The notations used throughout this article are illustrated in Table 4, unless specified otherwise.

Commonly used notations

Commonly used notations

An

In the recent years, knowledge graphs comprising a large number of instances have been developed and utilized also in news recommender systems.

A

In the scope of this paper, we consider concepts in knowledge graphs

The

Knowledge-aware news recommendation models can be investigated according to multiple criteria, ranging from the used knowledge resource to target function types or addressed challenges.

Types of recommendation techniques

News recommendation systems generally adopt one of the three main techniques for predicting whether a user will interact with a certain article, namely content-based, collaborative filtering, and hybrid. However, content-based approaches are the most widely used in the field of news recommendation [84].

Knowledge base

The knowledge resources used by knowledge-aware recommender systems can be grouped into domain ontologies and knowledge graphs. In the remainder of the paper, these will be referred to as

In the latter category, one can distinguish between open source and commercial knowledge graphs. In the first subgroup, cross-domain knowledge graphs such as Wikidata, DBpedia, and Freebase are widely used in news recommender systems. Freebase [12] was initially launched by Metaweb in 2007, and later acquired by Google in 2010, before being shut down in 2015 [126]. The latest version of Freebase, available at Google’s Data Dumps10

WordNet [111], a large English lexicon containing nouns, verbs, adjectives, and adverbs grouped into synsets (i.e. sets of synonyms), which are further interconnected via semantic relations of antonymy, hyponymy, meronymy, troponomy, or entailment, is often used in knowledge-aware news recommender systems for word sense disambiguation. More specifically, each word in WordNet is associated with a set of senses, which denote the set of possible meanings that the word might have. For example, the noun “Jupiter” can refer to either the planet in the solar system or the supreme god of the Romans. WordNet 3.014

In the subgroup of commercial knowledge bases, Satori [10], the knowledge graph proposed by Microsoft, is the most often used one, especially by recent deep learning-based news recommender systems. Although very little information about the data contained in Satori is publicly available, it was estimated to contain in 2012 approximately 300 million entities and 800 million relations [126].

News recommendation models use knowledge bases by exploiting their different structures in order to extract either semantic, structural, or both types of information. A few knowledge-aware news recommender systems exploit only the semantic information contained in a knowledge graph or ontology, by extracting concepts or entities that appear in a news article, which will be denoted as

Target function

Two main target functions can be distinguished in news recommendation models, namely click-through rate (CTR) prediction and item ranking. Models classified in the first group aim to predict the probability that the user will click on the target article, whereas methods in the second group recommend the top

Addressed challenge

In addition to enhancing the accuracy of recommendations, knowledge-aware news recommender systems aim to address different challenges of the news domain or limitations posed by conventional recommendation techniques. Several news articles, written in different manners, using semantically related terms, can describe the same piece of news, and numerous words have different meanings depending on the context in which they are used. While humans can easily distinguish ambiguous words, or words connected via certain semantic relations, such as synonyms, this constitutes a challenge for recommendation models using text representations. Knowledge-aware recommender systems propose to remove such ambiguity from text by representing an article using only disambiguated knowledge entities or concepts from a controlled vocabulary, instead of all the terms. In turn, this leads to faster computations, since the model is required to consider a limited number of concepts or entities, which is significantly smaller than the total number of words contained in an article. Moreover, the semantic meaning of news, as well as the semantic relatedness of concepts (i.e. news describing similar or related concepts might indicate different interests of a user) can be captured by further considering the relations between the different concepts found in an article.

News articles contain a large number of named entities, used to denote information regarding the events described, such as the location, actors involved, time, or what the event refers to. However, named entities are not taken into account in traditional text-based recommendation models. In contrast, knowledge-aware techniques handle named entities by extracting them from the text and enriching them with external information encoded in knowledge graphs. Furthermore, using external information for recommendation can help overcome the data sparsity and cold-start problems, as articles can be connected using relations in the knowledge graph between the entities extracted from text, such that new items without user feedback can also be included in the recommendations.

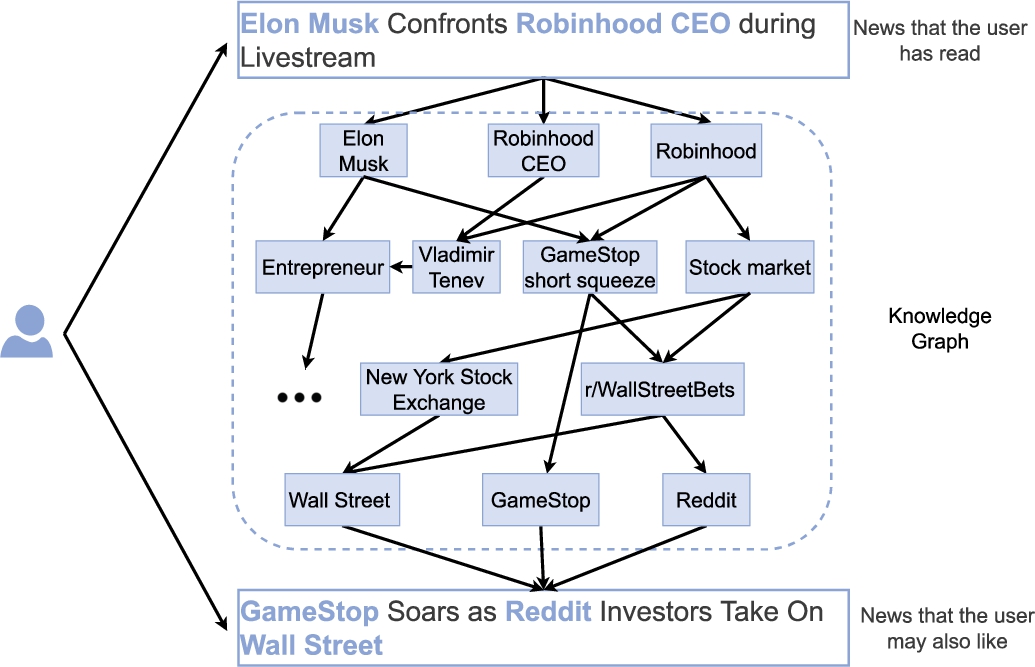

Moreover, injecting external knowledge into the recommendation model has three additional benefits. Firstly, it extends text-level information with common sense knowledge which is encoded in knowledge graphs but cannot be extracted only from an article’s text. For example, a user reading the titles of the news articles in Fig. 1 will probably know that

Illustration of a knowledge graph-enhanced news recommender system (reproduced from [170]).

Overview of knowledge-aware news recommendation approaches. We present the model’s category, abbreviated name, publishing year, type of recommendation technique, external knowledge resource and its used structures, target function, and challenges addressed by injecting external knowledge in the recommendation model. “Accuracy” is not explicitly mentioned as a challenge, as all discussed models aim to improve recommendations on this measure. The following abbreviations are used: NNECM = Non-Neural, Entity-Centric Methods, NNPM = Non-Neural, Path-Based Methods, NM = Neural Methods, RS = Recommender System, CB = Content-Based, CF = Collaborative Filtering, H = Hybrid, DO = Domain Ontology

Overview of knowledge-aware news recommendation approaches. We present the model’s category, abbreviated name, publishing year, type of recommendation technique, external knowledge resource and its used structures, target function, and challenges addressed by injecting external knowledge in the recommendation model. “Accuracy” is not explicitly mentioned as a challenge, as all discussed models aim to improve recommendations on this measure. The following abbreviations are used: NNECM = Non-Neural, Entity-Centric Methods, NNPM = Non-Neural, Path-Based Methods, NM = Neural Methods, RS = Recommender System, CB = Content-Based, CF = Collaborative Filtering, H = Hybrid, DO = Domain Ontology

(Continued)

(Continued)

Knowledge-aware news recommendation systems can be classified into different categories based on how external knowledge is injected in the recommendation model, on the used structures of the knowledge base, as well on how latent representations of users and articles are computed. Our proposed taxonomy, illustrated in Fig. 2, distinguishes between the methods based on how the latent representations are generated from entities and/or concepts in a knowledge base, i.e., Non-Neural Methods and methods based on neural networks (Section 6.3). We further split Non-Neural Methods into Entity-Centric (Section 6.1) and Path-Based (Section 6.2), depending on whether the recommendation approach defines the similarity between users and news articles based on distances between the concepts or entities from the knowledge base. To support readers reviewing the literature, the surveyed models are listed in Table 5 according to the aforementioned criteria.

The categorization of knowledge-aware news recommender systems. We divide existing frameworks into three categories, based firstly on how latent representations of user and news article profiles are generated, and secondly, on the type of similarity measure used.

Factorization models constitute some of the state-of-the-art recommendation techniques in various fields [9,87,147], and have already been adopted in the area of news recommendation [131,185]. Moreover, latent factor models have also been adapted to support knowledge graphs in hybrid knowledge-aware recommendation engines [30,146,154,191]. Nonetheless, as it can be observed from Table 5, factorization models are rarely used by knowledge-aware news recommender systems. More specifically, only one model from the 39 surveyed ones uses matrix factorization (see Section 6.3). Hence, we have not added a dedicated subcategory for such methods to our taxonomy.

Recommenders based on factorization models are collaborative filtering-based approaches. However, this recommendation technique is the least adopted one in the domain of news recommendation [132]. Raza et al. [132] have shown that content-based methods are the most widely used recommendation techniques in this field, followed by hybrid approaches. This phenomenon can be explained by the challenges faced by recommender systems in the news domain, explained in Section 2, such as the lack of explicit feedback (i.e. ratings), or limited amount of data available for user profiling. In turn, this affects collaborative-filtering approaches such as factorization models, which rely on a large amount of information regarding the user-item interactions in order to generate accurate recommendations.

Another categorization of knowledge graph-based recommender systems divides models into different categories based on how they utilize the knowledge graph information, namely into embedding-based methods, path-based methods, and unified methods [66]. Embedding-based methods encode the knowledge graph information by means of knowledge graph embeddings and directly use it to enhance the representations of users or items. This category is further split into models that construct knowledge graphs of items and their relations, extracted from a dataset or external knowledge base, and those that build user-item graphs, in which the users, items, and their attributes form the graph’s nodes, while user-related and attribute-related relations constitute the edges [66]. In our survey, this category overlaps with the neural-based models, which use a form of knowledge graph embedding, as it will be explained in Section 6.3. However, we do not further differentiate between neural-based recommenders depending on how the underlying knowledge graph is created.

Guo et al.’s [66] second category of path-based methods includes those recommenders that leverage connectivity patterns of entities in the user-item graph. In this context, this category has similarities with our proposed non-neural, path-based methods. However, in our case, the connectivity patterns are exploited from any source of structured knowledge base and are not restricted to user-item graphs.

The third category proposed by Guo et al. [66], namely unified methods, incorporates models that combine the first two types of techniques by leveraging both the connectivity information, as well as the semantic representation of entities and relations. This class, containing the RippleNet [168] and RippleNet-agg [169] models, overlaps again with our neural-based methods due to the neural nature of latent representations used to profile items and users.

Lastly, Sun et al. [155] classify recommender systems on two dimensions. The first dimension is concerned with the recommendation technique and differentiates between memory-based methods, latent factor models, representation learning models, and deep learning models. The second dimension focuses on the type of side information used, namely structural data (i.e. flat, network, hierarchical features, and knowledge graphs) and non-structural data, in the form of text, image, and video features. According to this categorization scheme, models classified by Sun et al. [155] under deep learning methods that use knowledge graphs as side information would correspond to neural-based methods in our taxonomy.

The following subsections analyze the knowledge-aware recommender systems presented in Table 5 according to the taxonomy introduced above. For each category of models, the overall framework, as well as representative models are investigated.

Recommender systems classified in this category represent the profiles of users and news articles using latent representations generated from concepts and/or entities in a knowledge base using non-neural methods. Generally, such representations are computed using a Vector Space Model [140], most often variants of the Term Frequency-Inverse Document Frequency (TF-IDF) model [139], modified to take into consideration side information from a knowledge base. The similarity between articles and the preference of a user for a candidate article are determined using different semantic or non-semantic similarity metrics.

Overall framework

Non-neural, entity-centric methods first create a vector representation of both the target article and the user profile, where the latter consists of the user’s reading history. Afterwards, the models compute the similarity between the two representations and recommend a list of the top

Representative models

In this subsection, we discuss 12 representative non-neural, entity-centric recommendation techniques.

Cantandor et al. [21,23] developed a

A personalized content retrieval approach assigns a relevance measure

Following the construction of the runtime context, Cantandor et al. [23] introduce a semantic preference spreading strategy which expands the user’s initial preferences through semantic paths towards other concepts in the ontology. This contextual activation of user preferences constitutes an approximation of conditional probabilities. According to this formulation, the probability that concept

Consequently, the semantic spreading mechanism requires weighting every semantic relation

The context-aware personalized recommendation model computes the relevance measure of an item

The weights spreading strategy addresses both the cold-start and the data sparsity problems, whereas incorporating contextual information captures the changing utility of a news article to a user based on temporary circumstances. While this model applies to single users, Cantandor et al. [23] also employ a

Semantic Communities of Interest are derived from the users’ relations at different semantic levels [23]. More specifically, each ontology concept

Cantandor et al. [23] propose two recommendation models that use the extracted latent communities of interests among users. On the one hand, model

Here

On the other hand, model

The same context-aware and multi-facet, group-oriented hybrid recommendations are also adopted by Cantandor et al. [24] to generate

Concept Frequency – Inverse Document Frequency (

In the CF-IDF recommender, each user’s interests are represented as a vector of CF-IDF weights

The CF-IDF weights are computed similarly to TF-IDF weights. Firstly, the

A major difference between the TF-IDF and CF-IDF lies in the fact that the latter considers only the ontology concepts contained in the text, instead of all the terms. Therefore, it assigns a larger value to the concepts deemed more important, and results in faster computations, as it considers a smaller amount of elements during similarity computations. In turn, this also implies that CF-IDF can assign a single representation to multi-word expressions (e.g. “Elon Musk” is one concept), compared to TF-IDF which would compute an embedding for each word individually (e.g. for “Elon” and for “Musk”). Consequently, if the concepts are disambiguated, CF-IDF can handle ambiguous terms, in contrast to TF-IDF.

The Synset Frequency – Inverse Document Frequency (

The synsets in both profiles are weighted using SF-IDF weights, obtained from TF-IDF by replacing terms with synsets

However, SF-IDF yields a limited understanding of the semantics of news. Therefore,

Hence, the item’s and user’s profiles,

Furthermore, SF-IDF+ not only uses extended synsets instead of synsets, as is the case for the SF-IDF model, but also assigns different weights

A similar strategy to the one proposed in SF-IDF+ is also adopted by The Bing similarity is comparable to the Normalized Google Distance [34].

The SF-IDF+ profiles and weights are built and calculated according to Eqs (12)-(15). For the Bing component, new user and item profiles are built using sets of named entities extracted from the text with a named entity recognizer, denoted as follows:

Subsequently, the Bing search engine is used to compute the page count

The SF-IDF+ similarity

This approach of enhancing semantics-driven recommender systems with named entity similarities using the Bing page counts are prototypical also for other models, such as

An approach combining CF-IDF and SF-IDF, which aims to address the ambiguity problem by representing news articles using key concepts, synonyms, and synsets from a domain ontology, is represented by the

In Eq. (22),

In Eq. (23), the vectors

The

In comparison to item profiles of models such as CF-IDF (Eq. (8)), in Eq. (24) the total number of concepts appearing in an article’s profile is represented by the number of distinct concepts

The enclosure similarity between two concepts

The Ranked Semantic Recommendation (

Each concept

Another assumption underlying RSR [78] is that the more articles containing concept

The final rank of every concept in the user’s extended profile, denoted

A min-max normalization is applied to the extended user profile to ensure that the ranks are in the range

The Ranked Semantic Recommendation 2 (

Another difference to RSR is that RSR2 uses different weight values to determine the concepts’ ranks. The rank of a concept in the extended article representation

Non-neural, entity-centric news recommendation techniques are summarized in terms of three aspects:

Path-based methods (non-neural)

The profiles of users and news articles in non-neural, path-based recommendation methods are represented using concepts or entities from a knowledge base. Similar to the models in the previous section, some of the recommendations approaches classified here generate latent representations of these concepts or entities using non-neural methods. However, in contrast to non-neural, entity-centric recommenders, path-based ones define the user-item and item-item similarities using metrics that take into account the distance between concepts and/or entities from the knowledge base.

Overall framework

The majority of methods in this category represent a news article as a set of tuples consisting of the concepts contained in an ontology and their corresponding weights. Formally, this can be written as

Representative models

In the following, we investigate six representative recommendation techniques for this category.

Three types of partial matches between concepts were defined by Maidel et al. [105] based on hierarchical distance. A

A similarity score

A different approach is adopted in

The centrality score ensures that concepts with shorter and stronger connections to the top-ranked concept will be assigned higher importance than those situated further away in the ontology or having weaker relations. The centrality weight is complemented by the prestige of a concept in the ontology, a method that ranks the concepts based on their incoming relations. The more a concept is referred to via different relations by another concept (i.e. the larger its in-degree), the higher its prestige in the ontology. Consequently, the final importance score of a concept is computed as the product of centrality and prestige (denoted as rank in Eq. (30)), weighted by a constant value

The final weight

Similar to SF-IDF, the Semantic Similarity (

Furthermore, a subset is created from

The final similarity score of an unread article is given by the sum of all combinations’ similarity rank

The WordNet taxonomy constitutes a hierarchy of “is-a” relationships between its nodes which, in turn, constitute synsets. As such, Capelle et al. [26] propose five semantic similarity measures to calculate the similarity rank

The two remaining metrics, of Leacock and Chodorow [90]

Similar to Bing-SF-IDF+,

Lastly, the Bing and the SS components are combined in the final BingSS similarity score using a weighted average with predefined weight

The similarity between the profile of a target news article and user is computed in the following way [130]:

According to Eq. (44), two concepts

In contrast to the previous models,

The shortest distance between two entities over the knowledge graph represents the shortest path length between the corresponding nodes, mathematically denoted as

Lastly, the similarity between the two articles is computed as the pair-wise shortest distance over the union of their subgraphs [83], as shown in Eq. (46).

This method provides a symmetric average minimum row-wise distance which places higher importance on the entity pairs with the highest likelihood of co-occurrence in news article. Additionally, a weighted shortest distance between the articles could be used by weighting the edges of the subgraphs and computing the sum of all the weights of the traversed edges [83]. For the weighted SED algorithm, different weighting schemes could be used, including the relation weighting scheme, which assigns edge weights based on the number of shared neighbors of two entity nodes from an article.

Summary

Non-neural, path-based knowledge-aware news recommender systems are summarized from the following perspectives:

Neural network-based methods

In recent years, the rapid advancements in the field of deep learning have also led to a paradigm shift in the domain of news recommendation. State-of-the-art knowledge-aware recommendation models combine latent representations of news articles, generated using neural networks, with external information contained in knowledge graphs, encoded by means of knowledge graph embeddings, defined below. Given a dimensionality

Frameworks classified in this category generally use a knowledge distillation process to incorporate side information in their recommendations. Firstly, named entities are extracted from news articles using a named entity recognizer. Secondly, these are connected to their corresponding nodes in a knowledge graph using an entity linking mechanism. Thirdly, one or multiple subgraphs are constructed using the linked entities, their relations, and neighbors from the knowledge graph. Afterwards, the obtained graphs are projected into a continuous, lower-dimensional space to compute a representation for their nodes and edges. Thus, these models use both the structural and semantic information encoded in knowledge graphs to represent news. Figure 3 exemplifies this process.

Illustration of the knowledge distillation process used by neural-based recommendation models (reproduced from [170]).

In contrast to models from the previous categories, the neural-based recommenders we reviewed for this survey predict the probability that a user will click on a target article, namely the click-through rate. We consider several factors underlying these recommendation models:

The architectures of 11 neural-based news recommendation frameworks are discussed in this section.

The Collaborative Entity Topic Ranking (

The user behavior component takes as input the user-news interaction matrix

The

The user-item interaction matrix is factorized into a matrix

In the following step, topic analysis is conducted at the entity level, where entities belonging to the same topic are sampled from a Gaussian distribution [193]. The third module learns knowledge graph embeddings with the TransR model [98]. The probability of observing a quadruple

The

The inner-circle in Fig. 4 exemplifies this concept. DKN takes as input the embedding of

Illustration of ripple sets of

One of the input elements to the recommendation model is constituted by the embedding of an entity’s context, defined in the following manner.

The

The first level in DKN’s architecture is represented by a knowledge-aware convolutional neural network (KCNN), namely the convolutional neural network (CNN) framework proposed by Kim [85] for sentence representation learning extended to incorporate symbolic knowledge in the text representations. Firstly, the entity embeddings

Secondly, the matrices containing word embeddings

The word-aligned KCNN applies multiple filters of varying sizes to extract patterns from the titles of news, followed by max-over-time pooling and concatenation of features to obtain the final representation

Additionally, DKN employs an attention network to capture the diverse interests of users in different news topics by dynamically aggregating a user’s history according to the current candidate article [170]. The second level of the DKN framework concatenates the embeddings of a target news

Given the normalized attention weights, a user

Lastly, DKN [170] predicts the click probability of user

The recommendation model of

Secondly, the item-level attention model computes the final representation of news article

The attention weights of words are calculated as shown in Eq. (56), while those of entities and context can be computed analogously.

Thirdly, the user-level self-attention module computes the final representation of the user

Fourthly, the vector representation of the user and the target news article are combined using a multi-head attention module [163] with ten parallel attention layers. Lastly, the output of the fourth module is passed through a fully connected layer to calculate the user’s probability of clicking the candidate article.

A different approach is constituted by

Giving the knowledge graph

The

The user’s interests in certain entities are extended from the initial set along the edges of the knowledge graph, as shown in Fig. 4. The further the hop, the weaker the user’s potential preference in the corresponding ripple set becomes since entities that are too distant from the user’s initial interests might introduce noise in the recommendations. This behavior is exemplified in Fig. 4 by the fading color of the concentric circles denoting ripple sets. The closer a neighboring entity is to the center seed, the more related the two are assumed to be. In practice, this is controlled by the number

In the first step, RippleNet calculates the probability

The 1-order response

Equations (60) and (61) theoretically illustrate the preference propagation mechanism of RippleNet, through which the user’s interests are spread from the initial set

An inward aggregation version of this model, denoted

In RippleNet-agg, higher-order proximity information is captured by encoding the ripple sets in the final prediction function at the item-end, compared to the user-end, as it was the case in the original RippleNet model. To this end, the topological proximity structure of a news article

Lastly, the representations of the entity

Although the aggregation function in Eq. (62) is represented by the sum operation followed by a nonlinear transformation

In contrast to previous models, the Multi-task feature learning approach for Knowledge graph Recommendation (

MKR is composed of three modules. The recommendation component uses as input two raw feature vectors

The features of news article

The module outputs the probability of user

The goal of the KGE module is to learn the vector representation of the tail entity of triples in the knowledge graph. For a triple

The two task-specific modules are connected using cross&compress units which adaptively control the weights of knowledge transfer between the two tasks. The unit takes as input an article

Afterwards, the compress operation projects the cross features matrix back into the latent feature spaces

Although such units are able to extract high-order interactions between items and entities from the two distinct tasks, Wang et al. [172] only employ them in the model’s lower layers for two main reasons. On the one hand, the transferability of features decreases as tasks become more distinct in higher layers. On the other hand, both item and user features, as well as entity and relation features blend together in deeper layers of the framework, which deems them unsuitable for sharing as they lose explicit association.

The Interaction Graph Neural Network (

The knowledge-based component jointly learns knowledge-level and semantic-level representations of news, similar to KCNN. More specifically, the embedding matrices of words, entities, and contextual entities are stacked before applying multiple filters and a max-pooling layer to compute the representation of news. In contrast to DKN, in IGNN the embeddings of entities and context, obtained with TransE [13], are not projected into the word vector space before stacking. However, as observed by Wang et al. [170], this simpler approach disregards the fact that the word and entity embeddings are learned using distinct models, and hence, are situated in different feature spaces. In turn, this means that all three types of embeddings need to have the same dimensionality in order to be fed through the convolutional layer. Nonetheless, this might be detrimental in practice, if the ideal vector sizes for the word and entity representations differ.

Higher-order latent collaborative information from the user-item interactions is extracted using embedding propagation layers that integrate the message passing mechanism of GNNs [128] using the IDs of the user and candidate news as input. This strategy is based on the assumption that if several users read the same two news articles, this is an indication of collaborative similarity between the pair of news, which can then be exploited to propagate information between users and news. The propagation layers inherit the two main components of GNNs, namely message passing and message aggregation. The former passes the information from news

The latter component aggregates the information propagated from the user’s neighborhood with the current representation of the user, before passing it through a LeakyReLU transformation function, namely

The KCNN results in a content-based representation of news and of users, where the latter is the result of a mean pooling function applied to the embeddings of the user’s previously read articles. Similarly, the

In addition to using side information to extract latent interactions among news, the Self-Attention Sequential Knowledge-aware Recommendation system (

The encoder of the sequential-aware component of Saskr is composed of an embedding layer, followed by multi-head self-attention and a feed-forward network. The embedding layer projects an article’s body in a

The article’s embedding can be obtained in two ways. On the one hand, it can be computed as the sum of the pre-trained embeddings of its words, weighted by the corresponding TF-IDF weights, as

These representations are then fed into a multi-head self-attention module [163], to obtain the intermediate vector

Given the embedding

The knowledge-aware module uses external knowledge from a knowledge graph to detect connections between news. The knowledge-searching encoder extracts entities from the body of articles and links them to predefined entities in a knowledge graph for disambiguation purposes. The set of identified entities is additionally expanded with 1-hop neighboring entities. The contextual entities are embedded using word embeddings pre-trained with a directional skip-gram model [150]. The resulting contextual-entity embedding matrix

The final recommendation score for candidate news article

Liu et al. [100] propose a Knowledge-aware Representation Enhancement model for news Documents (

In Eq. (73),

The next, context embedding layer encodes the dynamic context of entities from a news article, determined by their position, frequency, and category. The entity’s position in the article (i.e. in the title or body) is encoded using a bias vector

The entities’ representations are aggregated into a single vector in the information distillation layer, by means of an attention mechanism that takes into account both the context-enhanced entity vectors and the original embedding of an article to compute its final representation. More specifically, the attention weights

The knowledge-aware document vector

In addition to injecting external knowledge into the recommendation model, the Topic-Enriched Knowledge Graph Recommendation System (

Firstly, the KG-based news modeling layer is composed of three encoders and outputs a vector representation for each given article. The word-level news encoder learns news representations using their titles without considering latent knowledge features. The first layer of the encoder projects the titles’ sequence of words into a lower-dimensional space, while the bidirectional GRU (Bi-GRU) layer encodes the contextual information of a news title. Bi-GRU obtains the hidden state of an article by concatenating the outputs of the forward and backward gated recurrent units (GRUs) [91]. This is followed by an attention layer which extracts more informative features from the vector representations by giving higher importance to more relevant words. Hence, the final representation of news article

The knowledge encoder extracts topic information from the news titles through three layers [91]. The concept extraction layer links each news title with corresponding concepts in a knowledge graph using an “is-a” relation. Afterwards, the concept embedding layer maps the extracted concepts to a high-dimensional vector space, while the self-attention network computes a weight for each word in the news title according to the associated concept and topic. For example, in the news title from Fig. 1,

The third, KG-level news encoder firstly performs a knowledge distillation process. The resulting subgraph is enriched with 2-hop neighbors of the extracted entities, as well as with topical information distilled by the knowledge encoder [91]. Therefore, not only are knowledge entities from the text disambiguated and their contextual information is taken into account but also adding topical relations among entities decreases data sparsity by connecting nodes not previously related in the knowledge graph. The topic and knowledge-aware news representation vector are computed with a graph neural network [171]. The final news embeddings are obtained by concatenating the word-level and KG-level representations.

Secondly, the attention layer computes the final user embedding by dynamically aggregating each clicked news with respect to the candidate news. This step is accomplished as in DKN, by feeding the concatenated embedding vectors of the user’s click history and the candidate news into a DNN. Lastly, the user’s probability of clicking on the target article is computed in the scoring layer using the dot product of the user’s and article’s feature vectors.

In the next step, the words

The textual-level and semantic-level article embeddings are concatenated and integrated with user features to obtain the final article embedding

Neural-based news recommendation systems are summarized by focusing on four distinguishing aspects:

Evaluation approaches

This section analyses approaches used for evaluating the surveyed knowledge-aware news recommender systems, as well as potential limitations concerning the reproducibility and comparability of experiments.

Evaluation methodologies

The type of evaluation methodology depends on the target function of the recommendation models and the user data. In this context, the surveyed recommender systems were typically evaluated either through offline experiments based on historical data, through online studies on real-world websites, or in laboratory studies. Frameworks based on an item-ranking target function usually use an online setting or laboratory experiment. In these scenarios, participants are asked to annotate news articles recommended to them by the model based on their relevance to the user’s profile. In turn, the user profile is either created during the experiment or predefined and assigned to the participants by the evaluators. Once the annotations are obtained, the performance of the model is evaluated by comparing the predicted recommendations against the truth values provided by the annotators. In contrast, systems that target the click-through rate are evaluated through experiments in an offline setting, using data comprising of logs representing users’ historical interactions with sets of news.

Table 6 provides an overview of evaluation settings in terms of datasets and metrics used. As it can be observed there, all models are evaluated using different types of information retrieval accuracy measures, such as precision, recall, F1-score, or specificity. The performance of some of the more recent, neural-based systems is also evaluated in terms of rank-based measures, such as Normalized Discounted Cumulative Gain, Hit Rate, or Mean Reciprocal Rank. Generally, these metrics are computed at different positions in the recommendation list to observe the recommender’s performance based on the length of the results list. Moreover, for non-neural, entity-centric methods, the authors use statistical hypothesis tests, such as the Student’s

Overview of evaluation settings. We list the model’s category and abbreviated name, datasets used, and reported evaluation metrics and setup information. The abbreviations used in the table are the following: Eval. = Evaluation, Acc = Accuracy, P = Precision, R = Recall, F1 = F1-score, NDGC = Normalized Discounted Cumulative Gain, RMSE = Root Mean Square Error, MAE = Mean Absolute Error, ROC = Receiver Operating Characteristic, PR curves = Precision-Recall curves, AUC = Area Under the Curve, NDPM = Normalized Distance-Based Performance measure, HR = Hit Rate, MRR = Mean Reciprocal Rank, Kappa = Kappa statistics, Student’s t -test = One-tailed two-sample paired Student t -test, ESI-RR = Expected Self-Information with Rank and Relevance-sensitivity [56], EILD-RR = Expected Intra-List Diversity with Rank and Relevance sensitivity [162], PROCS = processing steps, DS = data split, PARAMS = parameters, All available = PROCS + DS + PARAMS + CODE

Overview of evaluation settings. We list the model’s category and abbreviated name, datasets used, and reported evaluation metrics and setup information. The abbreviations used in the table are the following: Eval. = Evaluation, Acc = Accuracy, P = Precision, R = Recall, F1 = F1-score, NDGC = Normalized Discounted Cumulative Gain, RMSE = Root Mean Square Error, MAE = Mean Absolute Error, ROC = Receiver Operating Characteristic, PR curves = Precision-Recall curves, AUC = Area Under the Curve, NDPM = Normalized Distance-Based Performance measure, HR = Hit Rate, MRR = Mean Reciprocal Rank, Kappa = Kappa statistics, Student’s

(Continued)

In comparison to the relatively uniform usage of evaluation metrics, the type of datasets used for evaluation varies significantly among recommender systems. The majority of non-neural models can be clustered into two groups, depending on the dataset used for their evaluation. As shown in Table 6, semantic-aware context recommenders are evaluated using the News@hand architecture described in [22]. Most of the remaining models are incorporated in the Hermes News Portal [54]. The authors of a few of the path-based, non-neural recommender systems construct their own datasets using news articles collected from websites such as the New York Times, or Sina News,16

Overview of evaluation datasets. We list the model’s category and abbreviated name, the dataset’s source, language, time frame, as well as the number of users, items, and interactions. An entry annotated with “*” denotes that the statistics were approximated by us based on the data provided by the authors. The abbreviations used in the table are the following: # = number of, N/A = not applicable

(Continued)

The datasets used to evaluate neural-based frameworks consist of user interaction logs gathered from websites such as Bing News,22

As it can be further observed in Table 7, which summarizes the statistics of the used evaluation datasets, the number of users and items contained in these datasets varies widely. Non-neural models are evaluated on small datasets, usually with less than 1000 articles, with the exception of the semantic contextualization systems, tested with nearly 10,000 items. In contrast, neural-based methods are mostly evaluated on over 1 million click logs from more than 100,000 users and items.

Another critical finding is that in many cases, datasets are not described clearly enough. In a fourth of the cases, the data source is not specified. Moreover, the language of the dataset is rarely mentioned explicitly. While the language can easily be deduced from monolingual news websites, this does not hold true for international news platforms, leading to an unknown language in more than half of the cases.

Table 6 also lists the type of information provided by each model with regards to the evaluation setup. Replicating experiments requires not only access to the data used, but also knowledge of how the data was split and processed for training and evaluation, and which values were used for the different parameters and hyperparameters of the model. Moreover, differences in the models’ implementation, especially of neural-based models, can further influence the results obtained when reproducing experiments. Hence, access to the original implementation constitutes an important factor for the comparability and reproducibility of results.

However, as it can be observed in the last column of Table 6, only 5 out of the 40 surveyed papers provide all this information. For both sub-categories of non-neural models, generally only the data split and some of the parameters or processing steps are specified. Even when some processing steps are explained in the paper, not enough details are provided regarding how procedures such as named entity recognition or entity linking were performed. Moreover, systems in these categories each propose their own evaluation setups, without following a uniform procedure.

In contrast, most papers describing a neural-based framework offer extensive details regarding their evaluation settings and model architecture. This phenomenon could be explained in two ways. On the one hand, since all neural-based models are deep learning architectures, hyperparameters play a central role in their performance. On the other hand, most of the approaches in this family have been published in the recent past, and there has been a trend in the recent years in the academic community to make implementation details available when publishing a research paper in order to facility reproducibility.

Nonetheless, important aspects which would increase the comparability of experiments are still neglected in some works. Often, the entity extraction and linking processes are not thoroughly explained, meaning that if no implementation details are available, it would be impossible to reproduce the exact steps of the original experiments. Nonetheless, all the previously discussed knowledge-aware news recommender systems use a form of named entity recognition and linking in order to identify entities and concepts in the news articles and to map them to a knowledge base. However, almost none of the papers explicitly mention how these steps are performed and implemented. Other important steps required by any news recommender system, such as general text pre-processing, which can heavily influence the data representation, and ultimately, the generated recommendations, are also not discussed. In addition to the recommendation module itself, such steps constitute important dimensions that may differ between systems, and in turn, choices in their design and implementation may lead to great differences in performance. In Saskr, for example, the authors offer few details on the construction of the news-specific knowledge graph used, and no specification of the news data source.

Overview of evaluated features and components. We report the model’s category and abbreviated name, the features evaluated and the components evaluated during an ablation study, if one was conducted. The abbreviations used in the table are the following: dim. = dimension, emb. = embedding, init. = initialization, # = number of

Overview of evaluated features and components. We report the model’s category and abbreviated name, the features evaluated and the components evaluated during an ablation study, if one was conducted. The abbreviations used in the table are the following: dim. = dimension, emb. = embedding, init. = initialization, # = number of

(Continued)

Another significant factor to be considered in the evaluation and comparison of recommender systems is how different model features and components affect its performance. All of the surveyed papers compare their knowledge-aware news recommendation models against baselines which do not incorporate side information in order to illustrate the gains of a knowledge-enhanced system. In addition to evaluating a model against baselines and state-of-the-art systems, it is also necessary to understand the effect of different features and modules on the recommender’s performance. To this end, the choice of knowledge resource is critical for a knowledge-aware model. However, none of the papers compare their model’s performance using different knowledge bases to determine the extent to which the resource itself influences results.

As it can be seen in Table 8, only 6 out of 16 papers describing non-neural, entity-centric systems evaluate their model’s parameters. In these cases, the threshold values determining which articles are suggested to the user are empirically tested. In the case of non-neural, path-based recommenders, only the authors of ePaper [105], BingSS [27], Kumar and Kulkarni [88] and SED [83] evaluate the influence of different parameters or user profile initialization on the model’s performance. In comparison, the evaluation of neural-based systems involves parameters sensitivity analysis, as well as experiments with different initialization, training or embedding strategies. Such extensive experiments could also be influenced by the type of models, since neural network architectures comprise of several components, and are more sensitive to hyperparameters and design choices than models from the first two categories.

An additional finding is that few works perform an ablation study to determine the contribution of each component to the overall system. In the case of semantic-aware context recommenders, the authors analyze variants of the model obtained by removing either the contextualization of user preferences, the extension of user and news profiles, or both. Werner and Cruz [179] investigate different similarity metrics and recommendation techniques, while BKSport’s authors examine the performance of the recommender when taking into account only semantic similarities, only content similarities, or both. Colombo-Mendoza et al. [35] similarly experiment with two network-based feature learning algorithms and different similarity metrics. Wang et al. [170] remove not only the knowledge component during the ablation study, but also experiments with different types of knowledge graph embedding models. Additionally, DKN’s performance was tested using different transformation functions, as well as with and without the attention module. The authors of MKR evaluate the contribution of its cross&compress units by replacing them with different modules, while those of IGNN examine the effectiveness of the embedding propagation layers by comparing different model variants which use them either to enhance the news, the user, or both representations. Lee et al. evaluate the improvements of using side information in TEKGR by analyzing the effect of its KG-level and knowledge encoders. Similarly, KRED’s authors conduct an ablation study in which they remove each of KRED’s entity representation, context embedding and information distillation layers. KCNR’s performance is analyzed with and without the user preference prediction module, and the influence of the preference propagation in the knowledge graph by considering different

Overall, this investigation of evaluation approaches shows that there is no unified evaluation methodology to produce comparable experiments. Moreover, the datasets used for evaluation are freely chosen by the authors, and there is no clear benchmark set of datasets used by all the systems. Although an effort has been made in recent years to provide more details on the evaluation setup, model architectures and choice of parameters, there is often still too little information specified for critical processing steps. In conclusion, we argue that only some of the most recent, neural-based approaches could be replicated given the available data, whereas the remaining methods cannot be reproduced in accordance to the original implementations. In this context, knowledge-aware news recommendation models lack reproducibility and comparability, standards which have been strongly encouraged in other fields of machine learning.

Open issues and future directions

Existing works have already established a strong foundation for knowledge-aware news recommender systems. In this section, we firstly discuss which fundamental challenges of news recommendation have already been addressed by knowledge-aware models, then identify and elaborate on several open issues in the field, and propose promising research directions.

In addition to general challenges for recommender systems, such as the cold-start, data sparsity, personalization, diversity, or privacy issues [143], news recommenders systems face additional domain-specific challenges, as explained in Section 2. Data sparsity and cold-start problems have clearly been addressed by the injection of external information from a knowledge base into the recommendation module, as such information enriches the initial data available about items and users. Similarly, the

However, the backbone of knowledge-aware recommender systems is represented by the recognition and disambiguation of named entities in the articles, a plain task for humans, but difficult for automatic systems. While knowledge representations obtained from identified entities in the text have been claimed to provide a solution for many shortcomings of traditional recommender systems, this is only true if such entities have been firstly disambiguated correctly and knowledge about them has been created and stored in knowledge bases. In turn, this passes the problem to the entity linking and knowledge graph construction components, which are most often not thoroughly discussed by the existing works, as shown in Section 7.3. Moreover, these components themselves are being subjected to the same challenges of resolving ambiguities and discovering knowledge-level connections as a knowledge-unaware recommender system. If such knowledge is extracted automatically, the problem is pushed further down the pipeline, whereas if human experts are involved, the challenge becomes how to create structured knowledge in the face of the high churn of the news domain. Overall, these problems have not been tackled in the existing literature, although they pose significant problems for deploying a high-performing system as an effective solution in the real world. Therefore, we strongly believe that future research should not only be concerned with achieving increased performance on benchmark datasets while ignoring the additional challenges that a real-world application of the recommender system would pose, but should instead also try to address these open questions.

Several challenges have only been partly addressed by the surveyed works. For example, recommenders that construct user and item profiles based on ontological concepts take into account a smaller amount of data than those that use the full-text of news articles. Therefore, computations are faster and the negative effect of the large volume of data characterizing news recommendations is diminished. However, as it will be explained in Section 8.2, it remains unclear what is

Nevertheless, a large number of news-specific challenges are not yet tackled by knowledge-aware news recommender systems. Issues related to the

In the remainder of this section we elaborate on the identified open issues and propose future research directions.

Comparability of evaluations

Zhang et al. [194] have observed that the entire field of recommender systems lacks a unified evaluation methodology or benchmark datasets, which are common, e.g. in the domains of computer vision or natural language processing to ensure a fair comparison of models. A similarly troubling finding with regards to the reproducibility of research published in the area of recommender systems has been discussed by Dacrema et al. [37]. The authors compared numerous works published in recent years at prestigious conferences in the domain of neural, collaborative filtering-based recommendation approaches and found that less than a half could be reproduced. Moreover, the majority of the proposed methods were equally good or even outperformed by simpler methods, due to methodological issues such as the choice of baselines, propagation of weak baselines, or the poor tuning of these baselines [37].

The findings of Section 7.3 have shown that currently, knowledge-aware news recommender systems also hardly produce comparable experiments. While neural-based methods have a higher degree of reproducibility, the other models do not provide enough details on their evaluation methodology in order to be accurately replicated and verified. Another important observation is that none of the deep learning models have been compared against recommenders from the non-neural approaches. Nonetheless, comparability of evaluations is essential for benchmarking different models, which in turn, drives advancements in the field. Therefore, we argue that the field of knowledge-aware news recommender systems needs a stricter and more unified evaluation approach, including common benchmark datasets, clear processing steps, unification of evaluation metrics, usage of comparable resources and hyperparameters of pre-trained models, and ablation studies.

As discussed above, while natural language processing methods, in particular entity recognition and linking, play a crucial role in processing new texts, their effect is rarely documented. In the realm of ablation studies, we would encourage to investigate those effects more thoroughly (e.g. by exploiting different entity linking methods), and to also investigate interaction effects between the natural language processing methods, recommendation methods, and the knowledge resource used.

Overall, all these steps would ensure that models are not only fairly and transparently compared against each other without great variations in parameter settings, but would also indicate whether the improvement of a new model over the state-of-the-art results is determined by the system’s architecture, or simply, by a better-tuned set of hyperparameters. Similar studies that can serve as an example for the field of news recommendation have been conducted for graph neural networks [48] or knowledge graph embeddings [138].