Abstract

Going from natural language directions to fully specified executable plans for household robots involves a challenging variety of reasoning steps. In this paper, a processing pipeline to tackle these steps for natural language directions is proposed and implemented. It uses the ontological Socio-physical Model of Activities (SOMA) as a common interface between its components. The pipeline includes a natural language parser and a module for natural language grounding. Several reasoning steps formulate simulation plans, in which robot actions are guided by data gathered using human computation. As a last step, the pipeline simulates the given natural language direction inside a virtual environment. The major advantage of employing an overarching ontological framework is that its asserted facts can be stored alongside the semantics of directions, contextual knowledge, and annotated activity models in one central knowledge base. This allows for unified and efficient knowledge retrieval across all pipeline components. It also provides flexibility and reasoning capabilities, because symbolic knowledge is combined with annotated sub-symbolic models.

Introduction

Performing household activities such as cooking and cleaning has, until recently, been the exclusive province of human participants. However, robotic agents that can perform tasks of increasing complexity are slowly changing this state of affairs, creating new opportunities in the domain of household robotics. Most commonly, any robot activity starts with the robot receiving directions or commands for that specific activity. Today, this is mostly done via programming languages or pre-defined user interfaces, but this is changing rapidly. For everyday activities, instructions could be given either verbally by a human or through written texts such as recipes and procedures found in online repositories, e.g., wikiHow. From the perspective of the robot, these instructions tend to be vague and imprecise, as natural language generally employs ambiguous, abstract, and non-verbal cues. Often, instructions omit vital semantic components such as determiners, quantities, or even the objects they refer to [38,44]. Still, asking a human to, for instance, “take the cup to the table” will typically result in a satisfactory outcome. Humans succeed despite many uncertain variables in the environment. For example, the cups might all have been put away in a cupboard, or the path to the table could be blocked by chairs.

In comparison, artificial agents lack the same depth of symbol grounding between linguistic cues and real world objects, as well as the capacity for insight and prospection to reason about instructions in relation to the real world and the changing states of that world. In order to turn an underspecified text, issued or taken from the web, into a detailed robotic action plan, various processing steps are necessary, some based on symbolic reasoning and some on numeric simulations or data sets. The research question in this paper is therefore the following: how can ontological knowledge be used to extract and evaluate parameters from a natural language direction in order to simulate it formally? The solution proposed in this work is the Deep Language Understanding (DLU) processing pipeline. This pipeline employs the ontological Socio-physical Model of Activities (SOMA) [6], which serves not only to define the interfaces of the multi-component pipeline but also to connect numeric data and simulations with symbolic reasoning processes.

Scope of DLU

Directions (instructions delivered textually) and commands (instructions delivered verbally) are special kinds of instructions, as they always demand an action. In contrast, other instructions can also be descriptions or specifications of circumstances. For example, “take the cup to the table” demands that the addressee perform an action. Sentences such as “knives must be placed to the right of the plate” or “the onions should be brown after 20 minutes” might entail directions or commands, but they do not explicitly call for action. In this work, the focus lies on directions as an important special case of instructions.

More specifically, DLU only handles directions in which a trajector needs to be moved by an agent along a trajectory, thus directions which can be described using a

Following convention, image-schematic notions are written in small capitals.

Example directions that DLU does not handle and corresponding explanations

DLU also only handles directions in isolation. That is, a direction may refer to a previous direction, or a previous direction may set up a pre-condition for it. In either case, SCG will not know about it and will not provide a correct semantic specification as described in Section 3.2.

The context in DLU is always the physical context, that is, a scene in the form of a semantic map as discussed in Section 3.3. No other context is taken into account: neither previous instructions, nor social interactions, nor information from other knowledge graphs.

Another important point is that DLU assumes error-free communication. Consider the faulty direction “take the chair to the table”, issued when the user actually meant the cup. DLU will not correct it, mostly because of the isolation and context restrictions described above.

To evaluate the DLU pipeline, the direction “take the cup to the table” is used, as it has several interesting properties:

(a) “Take” makes no specific assumptions about the means, but together with “to” it entails a movement along a trajectory.
(b) “The cup” could easily be replaced with other items, based on similar functional properties or affordances.
(c) “To the table” does not explicitly specify that the cup should be placed on top of it, only that it needs to be close to it.
(d) While two items are involved in the direction, one remains stationary.
(e) The task is rather forgiving in terms of precision. Covering a pot requires placing a lid exactly on top of it, whereas placing the cup on the table allows for more freedom in location (albeit not in orientation).
(f) Taking a cup to a table is usually a mundane exercise for humans.

The direction is thus prototypical of everyday activities and of the directions DLU handles.

In summary, this work builds upon the existing SOMA ontology [6], the semantic parser SCG [9,10], and the human computation game Kitchen Clash [34]. A pipeline is built around these three modules to parse directions into semantic specifications. To ground the directions inside a simulated physical context, this work introduces a prototype heuristic for grounding. Additionally, this work generalizes data collected using Kitchen Clash, stores it inside an ontology for task-specific retrieval, and successfully simulates the generated task plans in a kitchen environment in Unity.

Research on natural language understanding for artificial agents dates back at least to the late 1940s. Recent examples include various artificial neural networks such as BERT [17] and GPT-2 [41], which can extract information from written text, answer questions, or tell stories when presented with a short teaser. These and similar machine learning techniques power chatbots and virtual assistants that rely on natural language understanding to answer questions

E.g., Alexa (

The transition from symbols in language to actions and objects in the real world is difficult partly because the deep understanding of natural language is not a direct mapping from either syntax or grammar. Instead, semantics appears in the form of linguistic constructs that correspond to particular mental patterns of meaning [19]. A theory of how these constructs manifest in the cognitive sphere can be built by grounding it in the embodied experiences of agents, which offers a seamless transition to simulations and robotics research. Following embodied theories of cognition [47], recorded human activity, simulation, and robot action execution can serve as a foundation for constructing meaningful ontological structures. One proposal for how experiences turn into meaningful constructs is through conceptual patterns called image schemas [26]. They are spatiotemporal relationships that, to varying degrees, capture the specifics of particular static relationships and dynamic transformations of objects, agents, and environments. Relationships like movement between points (SPG), relative distance in the

DLU takes first steps towards using image-schematic relationships as ontological micro-theories included in SOMA. The purpose is to ground robotic action plans in the large body of linguistic research on embodied semantics. Motivated by Bergen and Chang’s embodied construction grammar [5], the theories define slots, such as the trajector of an SPG, which can be filled using parameters (cf. Section 3.5). To extract the theories from natural language, the SCG parser [10] is employed.

While this application is a novel research contribution, employing ontologies for knowledge representation and reasoning in autonomous robot control is an established field of research. It has seen developments in both service and industrial robotics (see [31] for an overview). One example in the industrial robotics domain is the ROSETTA project [32,49]. The initial scope of this project was the reconfiguration and adaptation of robot-based manufacturing cells. The authors have since extended their activity modeling to cope with a wider range of industrial tasks. Other authors have focused on modeling industrial task structure, part geometry features, or task teaching from examples (e.g., [3,33,35]). These industrial tasks tend to be more structured and less demanding in terms of flexibility than the everyday activities that are the focus of this paper.

Another ontology is described by Eberhart et al. [18], who use a domain specific ontology to model instructions for cognitive tasks. In contrast to this work, they do not simulate instructions and work in a more abstract scenario. However, their ontology is similar in spirit to some modules in SOMA [6] as both provide models of task instructions.

An approach to activity modeling in the service robotics domain is presented by Tenorth and Beetz [50]. The scope of their work is similar to this work, as the authors also consider how activity knowledge can fill knowledge gaps in abstract instructions given to a robotic agent performing everyday activities. However, the work presented here has a wider scope, as it also considers how knowledge can be used to integrate numeric approaches with reasoning. Another difference is that Tenorth and Beetz’ modeling makes no distinction between physical and social context. It is therefore less expressive than SOMA, which is used here.

A more general approach to activity modeling for robotic agents is presented by the IEEE-RAS working group Ontologies for Robotics and Automation (ORA) [45]. The group has the goal of defining a standard ontology for various sub-domains of robotics, including a model for object manipulation tasks. It has defined a core ORA ontology [39], as well as additional modules for industrial tasks such as kitting [20].

Interactive task learning, the work by Kirk et al. [27], also presented by Gluck and Laird [23], is similar to DLU in that it also simulates natural language instructions. In contrast to DLU, its focus is on task learning for puzzles and games, while DLU focuses on robust task execution for household tasks. Learning in DLU is mostly an offline process, seeded, for example, using human computation.

The architecture of the implemented natural language understanding pipeline DLU. A user supplies a direction to be performed in a given scene. The semantic parser SCG (b) parses the direction into a semantic specification (c). Together with the context information (d), the semantic specification is grounded (f) in the scene. Subsequent reasoning steps create action plans (g) and simulate them (h). Interoperability between the modules is achieved with the help of SOMA (a). The data flow between Kitchen Clash [34] and the DLU ontology and from the DLU to the action creation is slightly unexpected: in DLU, the models derived via human computation in Kitchen Clash are stored inside the ontology. They are retrieved during the action creation to facilitate human-like behavior. Note: This diagram represents the actual use of data, not the implementation: the output of (f) is available for (e), but currently not exploited there. Dashed modules: previous work. Dashed white arrows: ontological annotations.

The DLU processing pipeline consists of the interconnected components depicted in Fig. 1. In this section, the most important components are described.

DLU starts by parsing a sentence into semantic specifications (c) (Fig. 1) based on SOMA (a) using the Streaming Construction Grammar (SCG) parser (b) [10]. Next, DLU determines referents (f) for the extracted semantic specifications in a pre-defined semantic map (d) of a kitchen scene. Additionally, the pipeline extracts image schema theories (e) from the semantic specifications. Each image schema theory has a set of parameters which DLU fills with values. These values are either references to objects or other image schemas, or they are values from evaluating pre-trained models. For example, one model discussed below contains information on where glasses can be placed on top of a table. Eventually, all knowledge from the previous steps – parsing, grounding, theory extraction – is combined into a sequence of trajectories (g), which DLU executes inside a game engine (h).
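The data flow above can be pictured as a chain of stages that each enrich a shared state. The following is a minimal sketch of that idea only. The `PipelineState` class, the stage functions, and the triple and identifier names are hypothetical stand-ins, not the DLU implementation. In DLU, the real components exchange SOMA-based descriptions rather than Python objects.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

Triple = Tuple[str, str, str]


@dataclass
class PipelineState:
    """Hypothetical container for what each stage hands to the next."""
    direction: str
    semantic_spec: List[Triple] = field(default_factory=list)    # (c)
    groundings: Dict[str, str] = field(default_factory=dict)     # (f) symbol -> object id
    theories: List[str] = field(default_factory=list)            # (e)
    trajectories: List[List[str]] = field(default_factory=list)  # (g)


Stage = Callable[[PipelineState], PipelineState]


def run_pipeline(direction: str, stages: List[Stage]) -> PipelineState:
    """Apply the stages in order, each one enriching the shared state."""
    state = PipelineState(direction)
    for stage in stages:
        state = stage(state)
    return state


# Toy stand-ins for the parser, grounding, theory extraction, and planning.
def parse(state: PipelineState) -> PipelineState:
    state.semantic_spec = [("take", "trajector", "cup"), ("take", "goal", "table")]
    return state


def ground(state: PipelineState) -> PipelineState:
    semantic_map = {"cup": "Cup_1", "table": "Table_1"}  # hypothetical scene (d)
    for _, _, symbol in state.semantic_spec:
        state.groundings[symbol] = semantic_map[symbol]
    return state


def extract(state: PipelineState) -> PipelineState:
    state.theories = ["SPG", "Proximal"]
    return state


def plan(state: PipelineState) -> PipelineState:
    # Placeholder "trajectory": only the moved object and the goal landmark.
    state.trajectories = [[state.groundings["cup"], state.groundings["table"]]]
    return state
```

For example, `run_pipeline("take the cup to the table", [parse, ground, extract, plan])` yields a state whose `trajectories` field is `[["Cup_1", "Table_1"]]`. Passing the stages as a list mirrors the modular design: any single stage can be swapped out without touching the others.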

SOMA and other ontologies

Across the DLU pipeline, the Socio-physical Model of Activities (SOMA) is employed as a common interface. SOMA is based on the DOLCE+DnS Ultralite (DUL) foundational framework and its plugin, the Information Objects ontology (IOLite) [6,22,29]. Consequently, SOMA has two knowledge branches, one physical and one social [6]. This leads to a distinction between objects and actions in the physical branch on the one hand, and roles and tasks in the social branch on the other. Beßler et al. explain that axiomatizations in the physical branch express physical contexts, which can be classified by axiomatizations in the social branch [6]. For example, a cup and its properties as a designed physical artifact would be described using parts of the physical branch. Its potential usage or affordances, however, would be axiomatized within the social branch. SOMA is built out of multiple modules for different aspects. For example, the SAY module defines the linguistic theories and knowledge required by SCG, and the HOME module defines typical household objects. SOMA is well suited for DLU, as it is designed to describe actions with high precision.

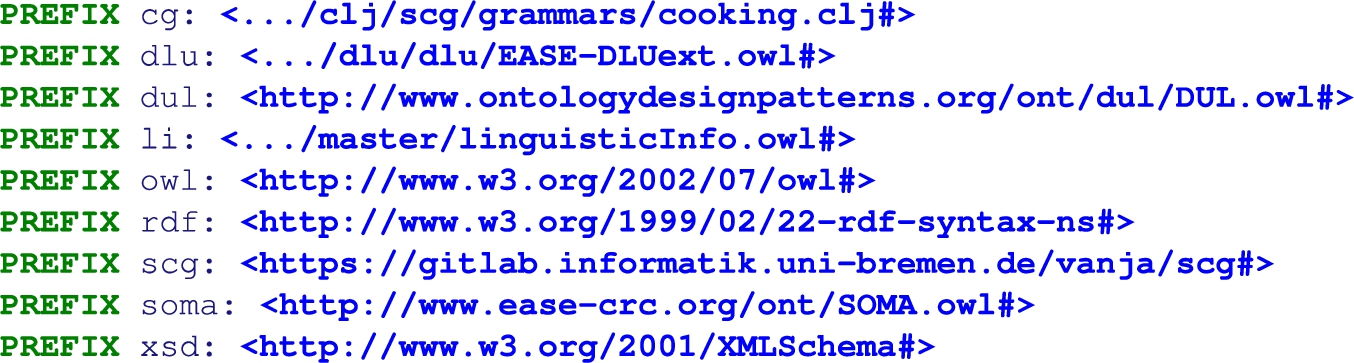

To keep ontology prefix definitions in the article to a minimum, all prefixes used are defined in Listing 1.

All prefixes for ontology namespaces used in this paper. Some are shortened for legibility, the full list is available online

To extract a semantic specification from a sentence, the Streaming Construction Grammar (SCG) parser [10] is employed. Given a textual input, it produces a set of triples which describe the sentence’s syntax as well as its semantics. The underlying grammar maps the semantics onto SOMA concepts where possible,

If a mapping is not possible, the output will be less detailed.

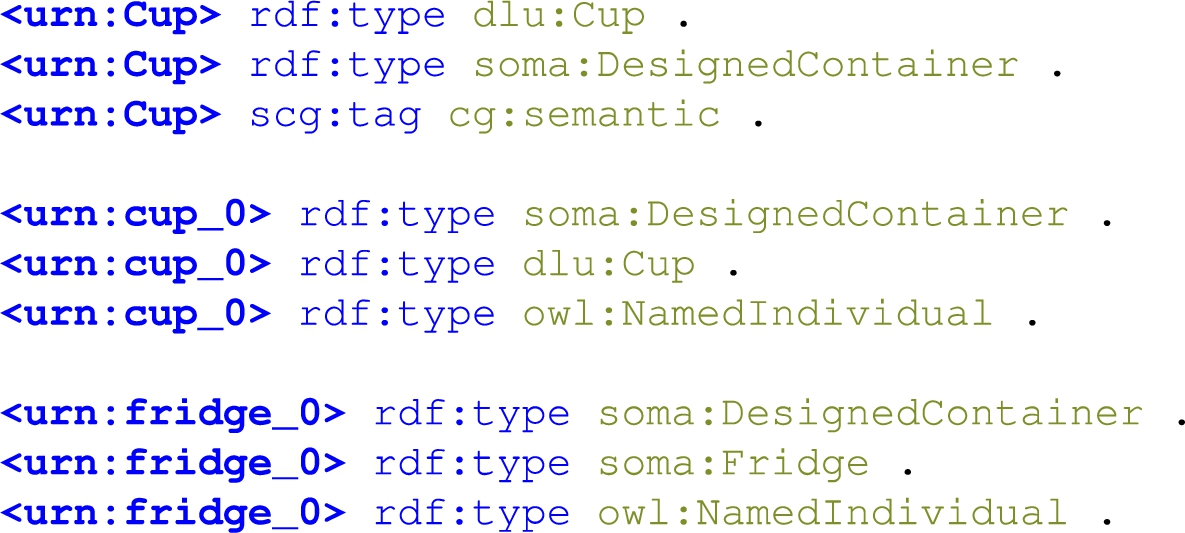

An excerpt from SCG output: semantic triples involving a cup in n3/turtle-notation. The term

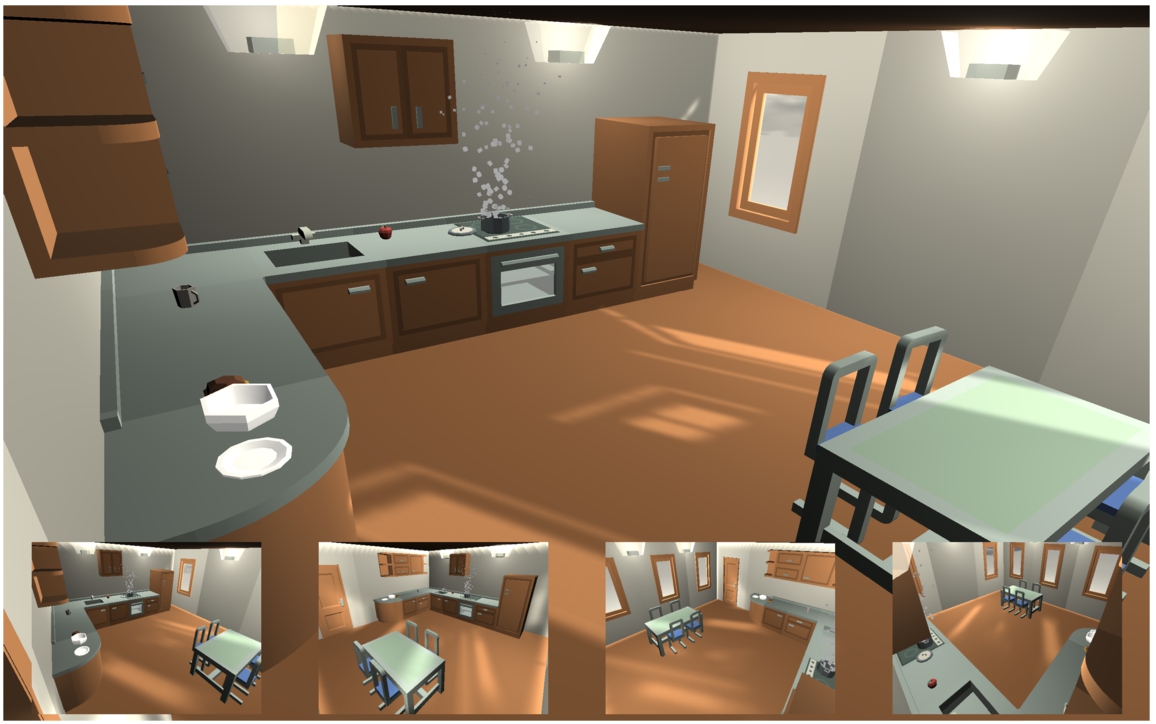

The kitchen scene. All objects – chairs, bowls, pots, cupboards, etc. – have semantic labels aligned with SOMA and unique identifiers, e.g.,

For a direction in the scope of DLU to be executed, an environment with objects to manipulate is mandatory. This environment is referred to as the context or the scene. Figure 2 shows the kitchen scene in which actions in DLU are simulated. In the semantic map, each object in the kitchen scene is already annotated with a unique identifier and semantic labels aligned with SOMA.

The concepts are from the DLU ontology, which is a descendant of SOMA.

In DLU, the parts of the semiotic triangle (symbols, concepts, and objects) are defined in SOMA. The symbols are part of the natural language direction, e.g., the word “cup”. The objects are the entities in the kitchen scene, e.g., the table entity with its mesh, textures, and defined physical properties. The mapping from the symbols to the concepts is performed by SCG. With the semantic map, the mapping from the objects to the concepts is given a priori.

The missing link, the mapping between objects and symbols, is called grounding in DLU. It is also known as referencing or can be described as framing. One might wonder why this link needs to be established at all, since symbols are already connected to objects via concepts, and vice versa. However, these indirect links are not sufficient to fully disambiguate a sentence in a given context. For example, suppose one cup in a kitchen scene is on top of a shelf and another stands on a countertop. Then “take the cup to the table” might refer to either. Yet the context should make clear which cup is meant, as otherwise the speaker would have given more specific instructions. To resolve such ambiguities, the direct mapping between specific objects and symbols must be found.

Grounding is implemented using an ad-hoc heuristic in DLU. The implementation is a prototype placeholder for future sophisticated methods. The heuristic designed for DLU is: a symbol references an object if the mean path depth of their common types to
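The description of the heuristic is cut off in this text, so the following is only a hedged sketch of one reading of it. Candidate pairs are symbol-object pairs that share at least one assigned type. Each candidate is scored by the mean depth of the shared types, with depth assumed here to be measured from the root of the type hierarchy, and the highest-scoring objects win. The class hierarchy, object identifiers, and the `ground_symbol` function are all hypothetical. Ties are returned as a list of candidates rather than broken arbitrarily.

```python
from statistics import mean
from typing import Dict, List, Optional, Set

# Hypothetical type hierarchy: each class points to its direct superclass.
PARENT: Dict[str, Optional[str]] = {
    "Thing": None,
    "PhysicalObject": "Thing",
    "DesignedContainer": "PhysicalObject",
    "Crockery": "DesignedContainer",
    "Cup": "Crockery",
    "Table": "PhysicalObject",
}


def depth(cls: str) -> int:
    """Number of subclass steps from cls up to the root (assumed reference point)."""
    d = 0
    while PARENT[cls] is not None:
        cls = PARENT[cls]
        d += 1
    return d


def ground_symbol(symbol_types: Set[str], scene: Dict[str, Set[str]]) -> List[str]:
    """Return the scene objects whose types shared with the symbol have the
    highest mean depth; all tied objects are returned, sorted by id."""
    scores: Dict[str, float] = {}
    for obj, obj_types in scene.items():
        common = symbol_types & obj_types
        if common:  # only symbol-object pairs with shared assigned types
            scores[obj] = mean(depth(t) for t in common)
    if not scores:
        return []
    best = max(scores.values())
    return sorted(obj for obj, s in scores.items() if s == best)
```

With a hypothetical scene `{"Cup_1": {"Cup"}, "Cup_2": {"Cup"}, "Table_1": {"Table"}, "Bowl_1": {"Crockery"}}`, a symbol typed `{"Cup", "Crockery"}` grounds to `["Cup_1", "Cup_2"]`. The more specific shared type `Cup` outranks the generic `Crockery`. However, the two cups stay ambiguous, which is exactly the case the type-based links alone cannot resolve.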

Semantic specifications for the term referred to as

SELECT statement to select all symbol-object pairs with the same assigned object types.

The Prolog predicate

Candidate groundings for the symbol

“Take the cup to the table” is a

An extract of the semantic specification of “take the cup to the table”. A full image can be found online at

The direction “take the cup to the table” evokes a SPG schema [26], denoted by a

The initial roles for the SPG schema evoked by “take the cup to the table”

By having theories reference other theories, the tree of theory references can be traversed to filter those theories which lead towards our goal state. In the example sentence, this applies only to the SPG theory, which references the proximal theory. The proximal theory satisfies the terminal scene of the direction and has no further references. Thus, it is possible to infer that DLU only needs to process the SPG and the proximal theory itself to calculate the required actions to reach the goal of the direction.
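Traversing the tree of theory references can be sketched as a plain graph search. This is a minimal sketch, assuming theories are identified by strings and references are stored as an adjacency list. The identifiers (`SPG_1`, `Proximal_1`, and the unrelated `Container_1`) are hypothetical. The function returns the chain of theories from a root to the theory that satisfies the terminal scene, or an empty list if that theory is unreachable.

```python
from typing import Dict, List, Set, Tuple

# Hypothetical reference graph: theory -> theories it references.
REFS: Dict[str, List[str]] = {
    "SPG_1": ["Proximal_1"],
    "Proximal_1": [],      # satisfies the terminal scene, no further references
    "Container_1": [],     # extracted, but not on the way to the goal
}


def theories_to_goal(root: str, goal: str, refs: Dict[str, List[str]]) -> List[str]:
    """Depth-first search for the chain of theory references from root to goal."""
    stack: List[Tuple[str, List[str]]] = [(root, [root])]
    seen: Set[str] = set()
    while stack:
        node, path = stack.pop()
        if node == goal:
            return path
        if node in seen:
            continue
        seen.add(node)
        for nxt in refs.get(node, []):
            stack.append((nxt, path + [nxt]))
    return []
```

Here `theories_to_goal("SPG_1", "Proximal_1", REFS)` returns `["SPG_1", "Proximal_1"]`. These are the only two theories that need further processing for the example direction.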

There are several accounts of what “actions” are (e.g., [21]). In this work, an atomic action is the process of a trajector following a trajectory. It is possible to generate such actions from the extracted schemas in order to reach the goal of the direction. For this, a distinction between two types of theories has to be made: resolvable and executable theories. While this is mostly an implementation detail, it allows for picking theories from which actions can be built: each executable theory eventually describes a trajectory, while each resolvable theory adds information to such trajectories.

In “take the cup to the table”, there is one executable theory, the SPG mentioned above. Eventually, it should describe a trajectory from the original location of the cup to a pose somewhere in the proximity of the table, as is defined by the proximal theory. Finding this trajectory requires two steps: first, resolving the proximal theory to a proper pose, then using the initial pose and the resolved pose to plan a path between the two.
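The split between executable and resolvable theories, and the two steps above, can be illustrated as follows. This sketch is not DLU's class design. The class names, the position-only `Pose` (orientation omitted), and the pluggable `planner` argument are assumptions for illustration. A resolvable theory yields a concrete pose. An executable theory first resolves its goal if necessary and then asks a planner for a path.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple, Union

Pose = Tuple[float, float, float]  # x, y, z only; orientation omitted for brevity


@dataclass
class ResolvableTheory:
    """Adds information to a trajectory, e.g. a proximal theory yielding a pose."""
    name: str
    resolve: Callable[[], Pose]


@dataclass
class ExecutableTheory:
    """Eventually describes a trajectory, e.g. an SPG schema."""
    trajector: str
    source: Pose
    goal: Union[Pose, ResolvableTheory]

    def to_trajectory(self, planner: Callable[[Pose, Pose], List[Pose]]) -> List[Pose]:
        # Step 1: resolve the goal reference to a concrete pose if needed.
        goal = self.goal.resolve() if isinstance(self.goal, ResolvableTheory) else self.goal
        # Step 2: plan a path between the initial pose and the resolved pose.
        return planner(self.source, goal)
```

For instance, `ExecutableTheory("Cup_1", (0.0, 0.0, 0.9), ResolvableTheory("Proximal_1", lambda: (2.0, 1.0, 0.8)))` produces `[(0.0, 0.0, 0.9), (2.0, 1.0, 0.8)]` with the trivial planner `lambda a, b: [a, b]`. All poses here are made up.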

One can see the proximity theory as a specialization of Johnson’s

The complete dataset can be found online:

GMMs with 1–6 Gaussians, using different covariance types. Lowest BIC was 143.82 for three Gaussians with spherical covariances. Adapted from

The positions of the collisions of glasses with the table surface in VR, together with the resulting GMM. The collisions were projected onto a common horizontal plane and are shown from above. The table width and length are normalized to
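The model selection described in the figure captions (GMMs with one to six Gaussians and different covariance types, chosen by lowest BIC) relies on the criterion BIC = p ln(n) − 2 ln L̂. Here p is the number of free parameters, n is the number of samples, and L̂ is the maximized likelihood. The sketch below only computes and compares BIC values. It assumes the total log-likelihood of each candidate has already been obtained by fitting, for example with EM. The parameter counts follow the usual conventions for the four covariance types, and the log-likelihood values in the usage note are made up.

```python
import math
from typing import Dict, Tuple


def n_parameters(k: int, d: int, covariance_type: str) -> int:
    """Free parameters of a k-component GMM in d dimensions."""
    cov = {
        "spherical": k,                # one variance per component
        "diag": k * d,                 # one variance per axis and component
        "tied": d * (d + 1) // 2,      # one full covariance shared by all components
        "full": k * d * (d + 1) // 2,  # one full covariance per component
    }[covariance_type]
    means = k * d
    weights = k - 1                    # mixture weights must sum to one
    return cov + means + weights


def bic(log_likelihood: float, k: int, d: int, covariance_type: str, n: int) -> float:
    """Bayesian information criterion; lower is better."""
    return n_parameters(k, d, covariance_type) * math.log(n) - 2.0 * log_likelihood


def select_model(fits: Dict[Tuple[int, str], float], d: int, n: int) -> Tuple[int, str]:
    """Pick the (k, covariance_type) whose total log-likelihood gives the lowest BIC."""
    return min(fits, key=lambda key: bic(fits[key], key[0], d, key[1], n))
```

With 2D collision points (d = 2), n = 50, and hypothetical log-likelihoods `{(1, "spherical"): -100.0, (3, "spherical"): -60.0}`, the three-component model wins. A six-component full-covariance model that improves the log-likelihood by only one unit would lose, because of its penalty term.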

Having distinct models for proximity relations between cups and tables, between lamps and chairs, between houses and trees, etc., offers a way to select the correct model that is required. To achieve this in DLU, the model of proximity relations between cups and tables is stored in the ontology as an instance of a
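Selecting a model by the types of the objects involved can be sketched with a small store keyed by (trajector type, landmark type). This is a hedged sketch. A Python dict stands in for the model instances annotated in the ontology, the `PARENT` links and function names are hypothetical, and the fallback to superclasses is an assumption. It shows how a model learned for one kind of object (e.g., glasses) could serve a related one (e.g., cups). Models are serialized with Python's `pickle`.

```python
import pickle
from typing import Any, Dict, Optional, Tuple

# Hypothetical superclass links used to fall back to more generic models.
PARENT: Dict[str, Optional[str]] = {
    "Cup": "Crockery", "Glass": "Crockery", "Crockery": None, "Table": None,
}

# Hypothetical store: (trajector type, landmark type) -> pickled model,
# standing in for model individuals annotated in the ontology.
STORE: Dict[Tuple[str, str], bytes] = {}


def store_model(trajector: str, landmark: str, model: Any) -> None:
    STORE[(trajector, landmark)] = pickle.dumps(model)


def retrieve_model(trajector: str, landmark: str) -> Any:
    """Walk up the trajector's superclasses until a stored model is found."""
    t: Optional[str] = trajector
    while t is not None:
        blob = STORE.get((t, landmark))
        if blob is not None:
            # Only unpickle data from a trusted store: pickle can execute code.
            return pickle.loads(blob)
        t = PARENT.get(t)
    return None
```

After `store_model("Crockery", "Table", {"n_components": 3})`, a request for `("Cup", "Table")` falls back to the crockery model, while `("Cup", "Chair")` returns `None`.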

A full example can be found at

The standard way to serialize Python objects,

After a candidate model for the proximity theory has been selected, it is evaluated to sample a goal pose for the cup. This pose replaces the reference to the proximity theory in the SPG (cf. Listing 5). As a final step, a trajectory between the start and goal poses is generated. Currently, this is a linear interpolation between the two poses. DLU also provides an occupancy grid of the scene, which allows for more sophisticated path planning in the future, e.g., using the A* algorithm.
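The two final steps, sampling a goal position from the proximity model and linearly interpolating towards it, can be sketched in plain Python. This is a sketch under stated assumptions. The model is taken to be a 2D GMM with spherical covariances over table-surface coordinates. The component weights, means, and the table height in the usage note are hypothetical. The transform from table coordinates into the world frame is omitted, as is orientation.

```python
import random
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]


def sample_spherical_gmm(weights: Sequence[float],
                         means: Sequence[Tuple[float, float]],
                         sigmas: Sequence[float],
                         rng: random.Random) -> Tuple[float, float]:
    """Draw one 2D point: pick a component by weight, then sample around its mean."""
    k = rng.choices(range(len(weights)), weights=weights)[0]
    mx, my = means[k]
    return rng.gauss(mx, sigmas[k]), rng.gauss(my, sigmas[k])


def linear_path(start: Point, goal: Point, steps: int) -> List[Point]:
    """Evenly spaced waypoints from start to goal, both endpoints included."""
    if steps < 1:
        raise ValueError("steps must be at least 1")
    return [tuple(s + (g - s) * i / steps for s, g in zip(start, goal))
            for i in range(steps + 1)]
```

A possible use is `x, y = sample_spherical_gmm([0.5, 0.3, 0.2], [(0.3, 0.2), (0.7, 0.5), (0.4, 0.8)], [0.05, 0.05, 0.05], random.Random(0))`, followed by `linear_path(start, (x, y, 0.75), 20)`. Seeding the generator keeps simulation runs reproducible.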

Physics engines are popular tools to simulate real-life phenomena. Computational fluid dynamics (CFD) solvers such as OpenFOAM [53] or Autodesk CFD

Autodesk Inc., Autodesk CFD,

NVIDIA Corp., PhysX SDK,

Roboti LLC, MuJoCo,

Unity Technologies, Unity,

In DLU, Unity3D is used to perform naive physics simulations. It executes the actions described above in the scene and records the actual trajectories of all objects in the kitchen scene. After a simulation run, the results are evaluated visually until automatic evaluations are developed, as described in the future work in Section 5.1.

As discussed in Section 1.1, the direction “take the cup to the table” is used to evaluate the DLU pipeline. The evaluation criterion is that the final simulation matches common-sense expectations. In future work, DLU can be used as a platform to compare different evaluation strategies. One possible strategy is briefly discussed in Section 5.1, but such strategies are generally out of the scope of this paper.

DLU is built upon a few assumptions. First, it assumes error-free natural language directions and the existence of a semantic map. Further, it expects that each direction maps to an action as defined above and can be achieved by moving objects along a trajectory in the environment. It also presumes that a real robot can perform that action when given a trajectory and a trajector. This allows the simulation to leave out the robot; instead, objects “fly” around. For the model of proximity relations between glasses and tables, it is assumed that collision events are a sufficiently good indicator of proximity. Lastly, the hypothesis is that once a sufficiently large set of functional relationships and image-schematic micro-theories is modeled in DLU, the pipeline will scale up and be able to understand more directions.

When the DLU pipeline is evaluated under these assumptions and given the above criterion, “take the cup to the table” results in a simulation that human observers, such as DLU’s authors, would consider an appropriate execution of the task. Still, individual components of the pipeline can be observed in isolation to reveal their strengths and weaknesses. SOMA and SCG have been evaluated by their authors [6,9].

The grounding step (Section 3.4) works for many objects in the scene, given the parser provides enough information. With insufficient or imprecise information, for example, labeling all objects as generic

In the schema or theory extraction step, only those theories for which queries were written can be found. To date, these are the ones which are relevant in “take the cup to the table”, namely SPG,

Conclusion and future work

This paper introduced DLU, a natural language processing pipeline which is capable of simulating natural language directions using SOMA as a common interface for individual components. On the basis of “take the cup to the table”, this work showed that the architecture is successful in simulating an everyday activity task.

DLU runs on a live instance at

A video demonstration is available at

The external modules, SOMA and SCG, are under active development and as they progress to become more advanced and complete, DLU benefits by being able to parse more directions and harvest better ontological descriptions. For the grounding module, other systems to replace the heuristic are currently being evaluated. Possible options are a system to ground unknown synonyms [43] and end-to-end machine learning models to ground directions such as “go to the red pole” [13,28].

Other future work includes building models on different relations between different objects to scale up the knowledge base, e.g.,

As introduced in Section 4, one future plan for DLU is to use it as a platform to compare different evaluation strategies. Simulating tasks is much cheaper and faster than performing them on a real robot platform, and the modular design of DLU allows exchanged components to be evaluated individually. But a simulation alone is not enough. The simulation might place a cup inside the sink or put it into the fridge rather than on top of a table. To distinguish such outcomes, another algorithm must rate the resulting trajectory data as successful or not. To the best of the authors’ knowledge, no generic criterion currently exists to determine whether an arbitrary task was executed successfully. DLU can assist in developing and testing such evaluation approaches.
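To make the gap concrete, the following is the kind of hand-written, task-specific check that would work for this one direction but does not generalize. This sketch is not part of DLU. It assumes that axis-aligned bounding boxes are available with z pointing up, and that the recorded position is the bottom of the moved object. The function names and the tolerance value are hypothetical.

```python
from typing import List, Tuple

Point = Tuple[float, float, float]


def on_top_of(final: Point, table_min: Point, table_max: Point, tol: float = 0.02) -> bool:
    """True if the point lies inside the table's x/y footprint and at most tol
    above its top surface (axis-aligned bounding box, z up)."""
    x, y, z = final
    return (table_min[0] <= x <= table_max[0]
            and table_min[1] <= y <= table_max[1]
            and table_max[2] <= z <= table_max[2] + tol)


def rate_trajectory(trajectory: List[Point], table_min: Point, table_max: Point) -> bool:
    """Rate a recorded trajectory by its final position only."""
    return bool(trajectory) and on_top_of(trajectory[-1], table_min, table_max)
```

This rule accepts a cup ending on the table and rejects one ending in the sink. However, every other direction would need its own rule, which is why a generic criterion is desirable.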

A possible platform to process the output of DLU with a trajectory transformer and to compare the result with the semantic embedding from a sentence transformer. The similarity between the outputs of the transformers would be a good measure for simulation correctness. To build a good trajectory transformer model, data from human computation will be vital in the training process. Bold line: this work. Dashed line: future work.

One possible platform the authors envision could draw on recent advances in transformers [51] and is sketched in Fig. 5. A sentence transformer [42] provides a semantic embedding, a vector that encodes the semantics of a sentence. Analogously, a similar model, say a trajectory transformer, could represent the essence of the trajectories recorded using DLU or on a real robot. Such a trajectory transformer could be trained alongside a sentence transformer using data gathered by human computation. The outputs of the two transformers should then match and could be compared using common methods in transformer training, e.g., cosine similarity. This way, DLU could serve as a platform to compare different components for language understanding and, by replacing the approach presented here, also to compare evaluation strategies.
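The comparison step of this envisioned platform reduces to a cosine similarity between two embedding vectors. The sketch below shows only that step. The embeddings themselves would come from the trained transformers, which do not exist yet, so the vectors and outcome labels in the usage note are toy values. The `best_match` helper is a hypothetical addition that picks the recorded outcome closest to the sentence embedding.

```python
import math
from typing import Dict, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors: 1 = same direction, 0 = orthogonal."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    if norm == 0.0:
        raise ValueError("a zero vector has no direction")
    return dot / norm


def best_match(sentence_vec: Sequence[float],
               trajectory_vecs: Dict[str, Sequence[float]]) -> str:
    """Return the label of the trajectory embedding most similar to the sentence."""
    return max(trajectory_vecs,
               key=lambda label: cosine_similarity(sentence_vec, trajectory_vecs[label]))
```

For example, `best_match([1.0, 0.0], {"on_table": [0.9, 0.1], "in_sink": [0.1, 0.9]})` returns `"on_table"`.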


Acknowledgements

The authors wish to thank Susanne Putze, Dmitry Alexandrovsky, Nina Wenig, the reviewers Stefano Borgo and Christopher Myers, and five anonymous reviewers for their valuable feedback. Stefano Borgo provided examples to help us narrow down the scope of DLU; these are discussed in Section 1.1. The research reported in this paper has been (partially) supported by the FET-Open Project #951846 “MUHAI – Meaning and Understanding for Human-centric AI” funded by the EU Program Horizon 2020 as well as the German Research Foundation DFG, as part of Collaborative Research Center (Sonderforschungsbereich) 1320 “EASE – Everyday Activity Science and Engineering”, University of Bremen (
