Abstract

Ontology Design Patterns (ODPs) have become an established and recognised practice for guaranteeing good quality ontology engineering. There are several ODP repositories where ODPs are shared, as well as ontology design methodologies recommending their reuse. Performing rigorous testing is also recommended for supporting ontology maintenance and validating the resulting resource against its motivating requirements. Nevertheless, it is less than straightforward to find guidelines on how to apply such methodologies for developing domain-specific knowledge graphs. ArCo is the knowledge graph of Italian Cultural Heritage and has been developed by using eXtreme Design (XD), an ODP- and test-driven methodology. During its development, XD has been adapted to the needs of the CH domain, e.g. gathering requirements from an open, diverse community of consumers; a new ODP has been defined and many have been specialised to address specific CH requirements. This paper presents ArCo and describes

Keywords

Introduction

Museums, libraries, archives, private collections and other cultural institutions have the essential mission to preserve the cultural objects they collect. Hence, data about these objects are of utmost importance, since they make it possible to preserve the memory of the objects, their life cycle, and their artistic, social, and historical context. If data are shared, they can be used as a means of enhancing cultural properties, by spreading knowledge on cultural heritage and widening its potential consumers. Cultural Heritage (CH) data can have various types of consumers such as citizens, students, scholars, scientists, managers, public administrations and companies. Consequently, they can impact different domains such as tourism, research, management, teaching, etc. Moreover, cultural institutions and research organisations can mutually benefit from the data they publish, especially by creating connections between their knowledge bases. The Linked Data paradigm has shown its effectiveness in supporting this practice [2], and its adoption in the Cultural Heritage domain is leading to a significant transformation in the management of CH data [14,16,18,39,41].

Italian Cultural Heritage is an important part of the world’s CH. According to UNESCO, Italy is the country with the highest number of heritage sites in the world [9].

In [9] we introduce ArCo,2 Architecture of Knowledge,

from Italian

ArCo KG (composed of the ArCo ontology network and LOD data) is available at MiBAC’s official SPARQL endpoint.

Created with

LODE:

Besides the relevance of the produced resource, described in [9], the ArCo project contributes to advancing the state of the art in knowledge graph engineering, with a special focus on the CH domain, by sharing its “behind the scenes”, i.e. the intellectual and methodological processes performed, the adopted design principles and the lessons learned, all of which constitute the main focus of this paper. The development of ArCo KG follows a pattern-based ontology design methodology named eXtreme Design (XD) [4,6], and has contributed to extending and improving it, as discussed in Section 3.

ArCo KG is an evolving creature, and so is the methodology it follows, i.e. XD. New requirements are continuously collected, incremental versions are regularly released, and its methodological approach is discussed with the community, and possibly refined and evolved. ArCo’s implementation of XD is discussed on a dedicated mailing list.

There are several valuable existing models for representing Cultural Heritage data and publishing them as LOD. The Europeana Data Model (EDM) [41] and the CIDOC Conceptual Reference Model (CRM) [19] are two prominent examples. EDM defines a basic set of classes and properties for describing cultural objects, which are used to aggregate CH data into the Europeana portal.

As compared to EDM and CIDOC CRM, ArCo KG aims at modelling the Cultural Heritage universe of discourse with a much finer grain and by addressing a wider variety of concepts, ranging from cultural properties’ metadata (e.g. authors, creation date, current location, style) to research findings and theories (e.g. scientific processes performed for analysing a cultural property, theories and foundations about possible former settlements in an archaeological site).

By formalising cultural properties, the events they participate in, the types of places they are located in, the processes they are involved in, etc. ArCo KG provides the CH and the Semantic Web communities with a set of ontology patterns to encode CH knowledge graphs. The ultimate goal is to enable researchers and scholars to make new findings about cultural entities, and to develop new theories based on observations performed on knowledge graphs modelled by means of ArCo ontologies.

ArCo ontologies are aligned to EDM and CIDOC CRM, in order to facilitate linking and reuse by aggregators. Alignment and differences between ArCo ontologies, EDM and CIDOC CRM are discussed in detail in Section 4 and in Section 8.1. Nevertheless, ArCo ontologies make a different foundational commitment from CIDOC CRM and EDM. The foundations of ArCo KG are: (i) the theory of Constructive Descriptions and Situations (cDnS) [23] and (ii) the reflection of an epistemological perspective on cultural properties. These are discussed in detail in Section 4 and

Section 5. Informally, ArCo KG is

an extension of the eXtreme Design methodology for dealing with Cultural Heritage ontology projects (or for knowledge domains with characteristics similar to CH)

an architectural ontology pattern for implementing large ontology networks

a detailed explanation of the foundations of ArCo ontologies

a detailed description of the main modelling issues addressed by ArCo and the related implemented ODPs

a formal evaluation of ArCo ontologies based on both established structural metrics [13,27,44,49,58,63,66], and XD-based unit testing

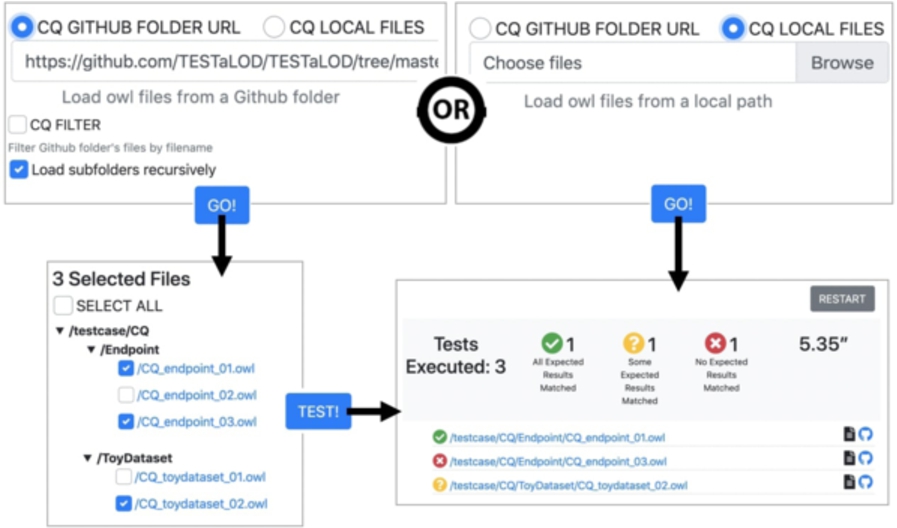

a tool (TESTaLOD) for supporting XD-based regression tests

a thorough description of the experience in applying XD to the development of ArCo KG.

After Section 2, which describes the General Catalogue of Italian Cultural Heritage, Section 3 explains the eXtreme Design methodology and discusses how we applied and extended it in the context of the ArCo project. Section 4 provides details about the foundations of ArCo ontologies and lays the basis for discussing, in Section 5, the main modelling issues addressed in ArCo ontologies and how they have been matched to existing Ontology Design Patterns. Section 6 evaluates the ArCo knowledge graph. Section 7 summarises the lessons learned so far from the experience of developing ArCo, and Section 8 discusses relevant related work. Finally, Section 9 wraps up the paper and points out some ongoing and future work.

Building a knowledge graph and its reference ontology network requires understanding the domain and the ontological commitment that its conceptualisation conveys, and transforming the available data into linked entities that comply with the resulting ontologies. There may be different scenarios in terms of what is available at the beginning of a knowledge graph project, but one of the most common situations is having a (set of) database(s) where the data are stored and maintained. Along with a continuous interaction with the administrators of the databases and the domain experts, these resources are to be analysed in order to extract the (often implicit) conceptual model of the domain that they encode. The main data sources of ArCo KG have been an XML database of catalogue records and a set of PDF documents describing catalogue standards.

Cataloguing cultural heritage is the process of identifying and describing, through metadata, entities that are considered cultural properties by virtue of their historic, artistic, archaeological and ethnoanthropological interest. In Italy, the Italian Ministry of Cultural Heritage and Activities (MiBAC), regions and local agencies are in charge of cooperatively cataloguing the Italian cultural heritage they own, with the aim of safeguarding, enhancing and making publicly available data on cultural heritage.

The General Catalogue of Italian Cultural Heritage

ICCD coordinates these cataloguing activities by maintaining the General Catalogue of Italian Cultural Heritage (GC), which is the official institutional database of Italian cultural heritage, promoting integrated management of data coming from all over Italy and from diverse institutions and local contexts.

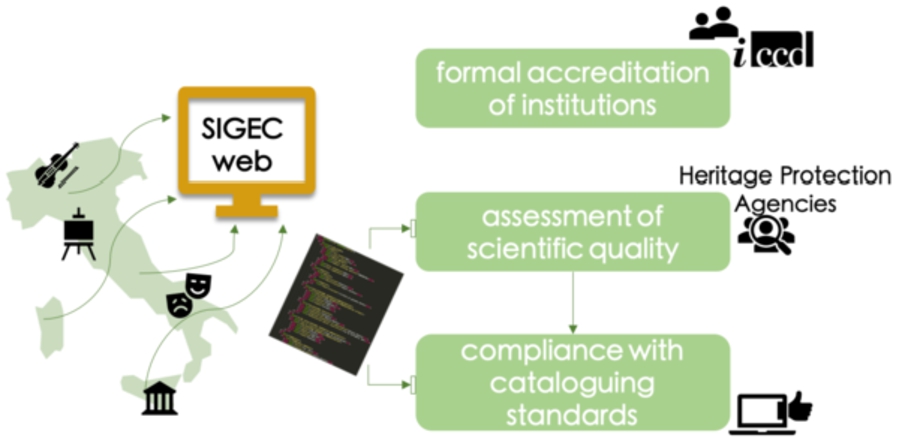

The General Catalogue is built upon a collaborative platform, named SIGECweb, to which national or regional, public or private, institutional organisations that administer cultural properties can submit their catalogue records, i.e. files containing data on cultural properties and compliant with predetermined standards and guidelines (see Section 2.2). Only users from institutions that are formally authorised by ICCD can access and contribute to SIGECweb, with specific profiles (e.g. administrator, cataloguer). The highly reliable provenance of the database is guaranteed by (i) an accreditation process that allows only authorised entities to contribute to the platform, (ii) a data validation phase performed by heritage protection agencies that assess the scientific quality of catalogue records, and (iii) an automatic data validation phase based on compliance with specific cataloguing standards (see Fig. 1). Based on its authoritativeness, it is assumed to be a high-quality database in terms of accuracy and completeness.

Accreditation and validation process for contributing to SIGECweb.

SIGECweb currently contains 2,735,343 catalogue records, 831,114 of which are publicly accessible through the General Catalogue. The privacy level associated with the remaining records prevents them from being openly published, since they refer to properties that are either private, or at risk (e.g. items in unguarded buildings), or still requiring a scientific assessment by accredited institutions.

In order to guarantee high quality, consistency and interoperability between data

accessible through the General Catalogue, ICCD defines a set of standards

(

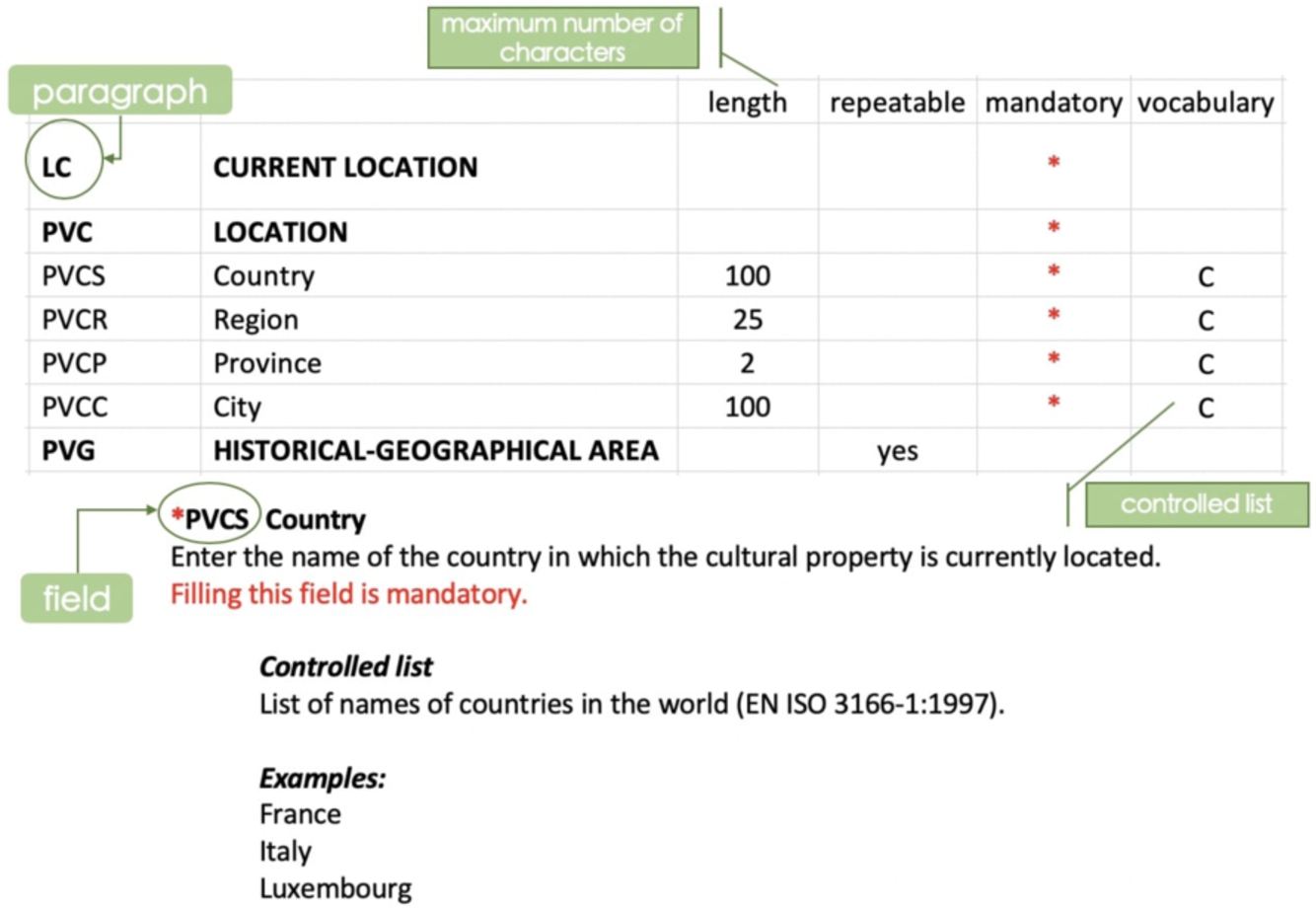

An example of the structure of an ICCD cataloguing standard.

Each cataloguing standard consists of a PDF document. These standards are gradually being published in XML Schema Definition (XSD) format as well.

ICCD collects catalogue records about 9 categories of cultural properties, which

generalise over 30 different more specific types: archaeological, architectural and

landscape, demo-ethno-anthropological, photographic, musical, natural, numismatic,

scientific and technological, historical and artistic properties. For each of the 30

types, a specific cataloguing standard has been defined, while a

Currently, an effort is being made by ICCD in publishing on GitHub16

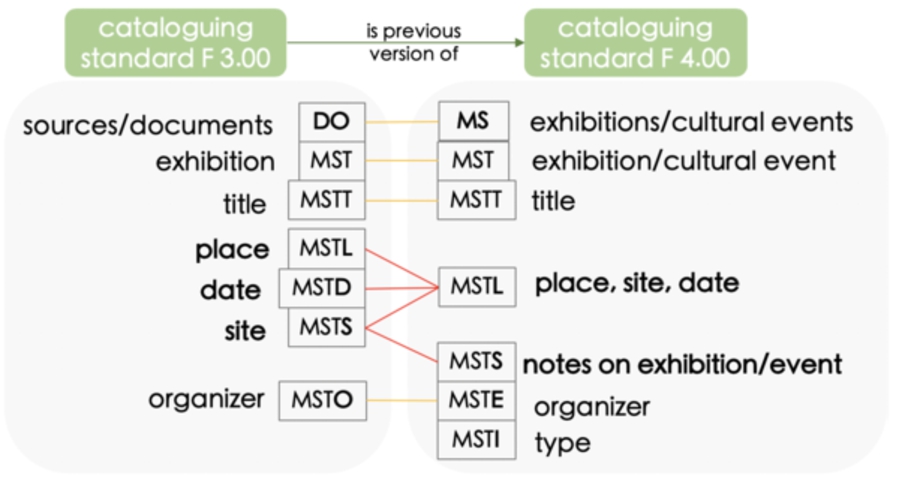

ICCD has been sharing cataloguing standards since 1990: they have undergone changes and updates, regarding both their structure and rules for compilation. Previous and current versions include: 1.00 and 2.00 (1990–2000), 3.00 (2002–2004), 3.01 (2005–2010), 4.00 (since 2015).

The ICCD cataloguing standard

Although catalogue records submitted to SIGECweb are subject to a validation process, the collaborative nature of the platform means that they are not error-free. There are cases of: mandatory fields that are not properly filled, thus producing an error code; catalogue records containing values alternative to those provided by controlled lists, hence undermining data homogeneity; use of non-standard formats (e.g. for dates); minor bugs and typing errors. ICCD is continuously working to improve the collection process, in order to minimise these situations.

Moreover, catalogue standards themselves could be improved in their structure, in order to maximise data mining from catalogue records: there are still many fields allowing for long descriptive texts, from which structured high-quality information could be derived and extracted.

Applying eXtreme Design principles to model the Cultural Heritage domain

In order to develop ArCo ontologies, which cope with a huge and complex domain such as Cultural Heritage, we use ontology design patterns (ODPs) [30,36]. Ontology patterns provide solutions to recurrent modelling issues. Their adoption guarantees a high overall ontology quality and favours its reusability [3].

The use of design patterns in ontology engineering is less evident than in software engineering. Software design patterns are such a standard practice that many programming languages have built-in types implementing, or inspired by, them, e.g. Observer in Java and Iterable both in Java and in Python. ODPs instead, although recognised as good practices in general, are still far from achieving a clear standard reference in the knowledge engineering community, and very far from being common practice in the Linked Data community. Their introduction in the Semantic Web is relatively recent [22] and to date there is still a lack of tooling to ease their adoption. A very recent and promising contribution to fill this gap is CoModIDE [59], a Protégé plugin. At the time of ArCo development the tool was not available; we plan to test and use it in future developments.

XD is an ontology design methodology that puts the reuse of ODPs at its core both as a principle and as an explicit activity. It provides guidelines for such an activity. Experiments have demonstrated its positive impact on ontology engineering and ontology quality [6,59].

XD is inspired by eXtreme Programming (XP) [60], an agile software development methodology that aims at minimising the impact of changes at any stage of the development, and producing incremental releases based on customer requirements and their prioritisation. Although the two approaches share the same principles and general guidelines, in practice they diverge in focus, mainly due to the core differences between software systems and ontologies. XD is test-driven and, like XP, applies the divide-and-conquer approach. Also, XD adopts pair design (as opposed to pair programming). The intensive use of ODPs, modular design, and a collaborative approach are the main characterising principles of the method. Further details on the relation between XP and XD, and a thorough description of XD, are given in [52].

The XD methodology as implemented for the ArCo knowledge graph.

As depicted in Fig. 4, after the project initiation, XD is

executed by iterating a set of steps, each involving one or more a a a an

In XD,

the design team works in parallel and interactively with the testing team: the same

requirements are used as input by the design team, for producing the ontology, and by the

testing team, for translating them into unit tests.

Figure 5 depicts a simple example that will be used to illustrate the main steps of the methodology.

An example of a user story translated into CQs, and of the matching between a CQ and an ODP.

Each ontology project will define its own

conventions.

In addition to deriving CQs, the design team interacts with the customer team in order to identify possible general constraints that may accompany them. General constraints express possible inferences or other rules that apply to the concepts that the story involves. In the example of Fig. 5, the following general constraints can be drawn: “An artist is a person” and “A person cannot be a role”. General constraints are the natural language counterpart of axioms that will be formalised in the ontology. The CQs and the general constraints define the ontology requirements, hence contributing to assess the ontological commitment, as far as the ontology domain tasks and scope are concerned. From the XD perspective, the ontological commitment includes both meta-level aspects, such as adopting a 3D or 4D view, and functional/application aspects, which draw the boundaries of the scope of the ontology. ArCo ontological foundations are discussed in Section 4.
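To make the step from general constraints to axioms more concrete, the following is a minimal Python sketch, not the formalisation used in ArCo. It encodes “An artist is a person” as a subclass axiom and “A person cannot be a role” as a disjointness axiom over plain dictionaries and sets; the class names and helper functions are illustrative assumptions, and a real project would state these axioms in OWL and check them with a reasoner.

```python
# Toy axioms corresponding to the two general constraints in the text.
# Class names are illustrative, not ArCo identifiers.
SUBCLASS_OF = {"Artist": "Person"}          # "An artist is a person"
DISJOINT = {frozenset({"Person", "Role"})}  # "A person cannot be a role"


def superclasses(cls):
    """Return cls together with all its ancestors along SUBCLASS_OF."""
    found = [cls]
    while found[-1] in SUBCLASS_OF:
        found.append(SUBCLASS_OF[found[-1]])
    return set(found)


def inferred_types(asserted):
    """Close a set of asserted types under the subclass axioms."""
    closed = set()
    for cls in asserted:
        closed |= superclasses(cls)
    return closed


def violations(asserted):
    """List the disjointness axioms violated by an individual's types."""
    types = inferred_types(asserted)
    return [pair for pair in DISJOINT if pair <= types]


# An individual typed as Artist is inferred to be a Person as well.
artist_types = inferred_types({"Artist"})
# Typing the same individual as Artist and Role clashes with disjointness.
clash = violations({"Artist", "Role"})
```

In this sketch the first constraint produces an inference (the extra type `Person`), while the second one produces a consistency check, which mirrors the two kinds of rules that general constraints are said to express.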

A positive test means that some error has been

raised. Performing regression testing means re-running all

previously defined unit tests and integration tests to check whether the ontology

performs the same after a change, and to appropriately fix it, if it is not the

case.
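As a rough illustration of regression testing in this sense, the Python sketch below re-runs a small suite of stored tests on two versions of a toy dataset and reports the tests that passed before a change and fail after it. It is a sketch under stated assumptions: the triples, the `ex:` property names and the test names are hypothetical, and the tests are plain Python functions rather than the SPARQL-based tests that an actual XD project would run.

```python
def run_suite(triples, suite):
    """Run every test in the suite and return {test name: passed?}."""
    return {name: check(triples) == expected
            for name, (check, expected) in suite.items()}


def regressions(before, after, suite):
    """Names of tests that passed on `before` but fail on `after`."""
    old, new = run_suite(before, suite), run_suite(after, suite)
    return sorted(name for name in suite if old[name] and not new[name])


def values(triples, subject, predicate):
    """All objects of the triples matching a subject and a predicate."""
    return {o for s, p, o in triples if s == subject and p == predicate}


# Each test pairs a check with its expected result (hypothetical names).
SUITE = {
    "cq_location": (lambda g: values(g, "ex:venus", "ex:hasLocation"),
                    {"ex:galleriaBorghese"}),
    "cq_author": (lambda g: values(g, "ex:venus", "ex:hasAuthor"),
                  {"ex:canova"}),
}

v1 = {("ex:venus", "ex:hasLocation", "ex:galleriaBorghese"),
      ("ex:venus", "ex:hasAuthor", "ex:canova")}
# v2 simulates a change that renamed a property without updating the tests.
v2 = {("ex:venus", "ex:locatedIn", "ex:galleriaBorghese"),
      ("ex:venus", "ex:hasAuthor", "ex:canova")}

broken = regressions(v1, v2, SUITE)
```

Here `broken` lists only the location test, which signals that either the ontology change or the stored test has to be fixed before the new version is released.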

[...] affect the overall

shape of the ontology, and dictate ‘how the ontology should look like’. [...] An

ontology that has a simple modular architecture is composed of a set of ontologies,

called modules, plus one ontology that imports all the modules.
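The simple modular architecture described in the quotation can be pictured as an import graph. The following Python sketch, in which all module names are placeholders rather than ArCo module names, computes the transitive imports of each module and identifies the one ontology that imports all the others.

```python
# Hypothetical import graph: module -> modules it directly imports.
IMPORTS = {
    "root": {"module_a", "module_b", "module_c"},
    "module_a": {"module_c"},
    "module_b": set(),
    "module_c": set(),
}


def import_closure(module, imports):
    """All modules reachable from `module` by following imports."""
    seen, stack = set(), [module]
    while stack:
        for dep in imports[stack.pop()]:
            if dep not in seen:
                seen.add(dep)
                stack.append(dep)
    return seen


def importing_all(imports):
    """Modules whose import closure covers every other module."""
    everything = set(imports)
    return [m for m in sorted(imports)
            if import_closure(m, imports) == everything - {m}]


roots = importing_all(IMPORTS)
```

A check of this kind can be useful when modules are added over time, since it verifies that a single entry point still gives access to the whole network.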

Another challenge concerns the collection of requirements. Cultural heritage data are relevant for potentially many diverse consumers and applications. Our primary source of data is a catalogue; however, our ultimate goal is to conceptualise the Cultural Heritage domain at large, going far beyond the cataloguing perspective. This means that ArCo needs to draw its requirements from a plethora of diverse potential consumers, who can enter the process at any time, posing new requirements. XD is adequate to support evolving requirements, but how to gather requirements from an evolving community is unclear.

A third challenge concerns testing. In this case, given the dimension and complexity of ArCo ontologies we soon realised that systematic testing, as recommended by XD, needed proper tool support, which was unavailable to the best of our knowledge. We have developed TESTaLOD to serve this purpose, which is presented in Section 6.1.

When the project started, its main

With this approach, the customer team became an evolving creature, which over time will grow by involving all representatives of potential producers and consumers of CH data. At the moment, ArCo is collecting requirements from private companies, public administrations, researchers and creative developers. These requirements are collected in the form of small stories (according to XD). A story is a non-structured text, exemplifying some scenario or reporting real use cases. Stories are submitted by the customer team through a Google Form, with a maximum of 250 characters.

Requirements coming from user stories, as well as those extracted from ICCD standards, are translated into Competency Questions. All CQs, and related SPARQL queries, that have so far guided ArCo KG design and testing are available online.

As an example, we report one of the stories collected through the Google form:

This story requires addressing the following CQs: “What are the types of cultural properties located in a certain area?”, “Which is the current location of a cultural property?”, “Which are the geographic coordinates of the current location of a cultural property?”, “Which is the type of a cultural property?”. Other stories concerned: linking cultural properties to multimedia resources, such as photographic documentation; describing specific attributes of drawings or music heritage; tracking over time the availability of cultural properties that have been confiscated from organised crime; relating catalogue records to heritage protection agencies.
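The CQs listed above can be read as queries that the resulting knowledge graph must be able to answer. As a hedged illustration, the Python sketch below answers three of them over a handful of toy triples; the identifiers, property names and coordinate values are invented for the example and do not come from ArCo data, where the same questions would be expressed as SPARQL queries.

```python
# Toy triples; all identifiers and values are made up for illustration.
TRIPLES = [
    ("ex:property1", "ex:hasType", "ex:Painting"),
    ("ex:property1", "ex:hasCurrentLocation", "ex:site1"),
    ("ex:property2", "ex:hasType", "ex:Coin"),
    ("ex:property2", "ex:hasCurrentLocation", "ex:site2"),
    ("ex:site1", "ex:inArea", "ex:areaA"),
    ("ex:site2", "ex:inArea", "ex:areaB"),
    ("ex:site1", "ex:lat", 41.9),
    ("ex:site1", "ex:long", 12.5),
]


def objects(subject, predicate):
    return [o for s, p, o in TRIPLES if s == subject and p == predicate]


def subjects(predicate, obj):
    return [s for s, p, o in TRIPLES if p == predicate and o == obj]


def current_location(prop):
    """CQ: which is the current location of a cultural property?"""
    return objects(prop, "ex:hasCurrentLocation")


def coordinates(prop):
    """CQ: which are the coordinates of its current location?"""
    return [(lat, lon)
            for site in current_location(prop)
            for lat in objects(site, "ex:lat")
            for lon in objects(site, "ex:long")]


def types_in_area(area):
    """CQ: what are the types of cultural properties located in an area?"""
    props = [p for site in subjects("ex:inArea", area)
             for p in subjects("ex:hasCurrentLocation", site)]
    return sorted({t for p in props for t in objects(p, "ex:hasType")})
```

For instance, `types_in_area("ex:areaA")` traverses area, site and property in turn, which shows that this CQ needs the location and the type of a property to be modelled as connected pieces of the same graph.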

Handling large ontologies is a non-trivial challenge for ontology engineers, reasoners and users. A modular approach, as opposed to a monolithic design (i.e. one ontology module addressing all CQs), favours readability, reusability and maintainability of an ontology [50,61]. Ontology modules are meant to identify conceptually coherent subparts of the domain. In this respect, XD lacks explicit guidelines on how to approach a modular design of potentially large, networked ontologies. Based on our experience in designing the architecture of the ArCo ontology network, we provide a set of guidelines as well as an architectural pattern that can be applied in other contexts with characteristics similar to those of the ArCo project.

ArCo ontology network, currently including seven modules:

We name the architectural pattern implemented by the ArCo ontology network

a root module acts as the

a second layer of the network is composed of the main

a leaf module contains foundational concepts such as the part-whole relation, agent,

physical object, role, etc. i.e. which are not domain-specific. This module is

imported by all main thematic modules. In ArCo this is the

The implementation of the

In the context of ArCo, at a very early stage of development, we could leverage and

analyse the ICCD catalogue, its data and standards, as well as the user stories provided

by the customer team. In agreement with ICCD domain experts, we first focused on the

A first observation is that catalogue records contain: (i) data directly describing a

cultural property and its contexts (e.g. techniques and materials, related exhibitions,

surveys); (ii) data about catalogue records themselves (e.g. when they were created, by

whom, their version, etc.); (iii) data about other entities referring to cultural

properties (e.g. inventories, documentation, bibliography). Based on this observation, a

main thematic module (

Cultural properties, which are the main subjects of study of the CH domain, are described by means of measurable, intrinsic aspects such as length, weight, materials and conservation status, as well as properties deriving from an interpretation process, such as authorship attribution and dating. This conceptual distinction led us to define two additional thematic modules of the network:

Finally, it results fairly evident that the

The foundational concepts captured by the

Finally, the

Representative competency questions answered by ArCo ontology network

Ontology Design Patterns (ODPs) are established solutions to modelling problems (i.e.

requirements) that emerge from, and evolve through, applied and theoretical results. ODPs

can have relations among them, including subsumption, overlap, merge, etc. (cf. [30]). ODP subsumption is at the core of many formal

ontology issues concerning the usefulness of foundational ontologies [22,28]. For example, the

competency question

When dealing with an ontology project as complex as ArCo, we need a good deal of generalisation that provides a shared modeling style to its data. The details of the ontological choices made against requirements are presented in Section 5.

Foundational commitment in ArCo: DOLCE-Zero

The ODPs implemented in ArCo ontologies are mostly taken from a set of interrelated

foundational ODPs, inspired by DOLCE UltraLite+DnS (DUL)37

DUL is a commonly used foundational ontology that commits to (i) DOLCE [28] distinctions: objects vs. events vs. qualities (specific attributes of objects and events) vs. qualia (dimensional representations of qualities), and to (ii) D&S (Descriptions and Situations) [23,47] distinctions for entities including situations vs. descriptions vs. concepts (see Section 4.2 for details), e.g. types, topics, roles, tasks, quality types, parameters, reified relations and classes, etc.

DOLCE-Zero contains a small set of classes on top of DUL, relaxing ambiguity resolution

when needed. In particular, it introduces four “union classes”:

DOLCE-Zero unions are formalised as follows:

However, DOLCE (and its OWL implementation in DUL) has disjoint classes for physical vs. social objects vs. spatial regions, since the authors wanted to pursue a multiplicative approach, i.e. ideally we would need to introduce an entity in the universe of discourse for each distinct entity, e.g. Uffizi-as-building, Uffizi-as-museum, Uffizi-as-organisation. However, refactoring existing datasets in order to enforce multiplicativism is not simple in practice, since we do not always know the context allowing for establishing the distinction. In addition, the co-predicative nature of certain entities is built in the cognitive intentionality of speakers or data modellers, and it would be very difficult to change the data. The result of those pragmatic issues is that it would be dangerous to choose one particular meaning (e.g. Uffizi-as-building), because that choice might induce an inconsistency if data refer e.g. to the director of Uffizi (i.e. Uffizi-as-museum).

As a matter of fact, those distinctions are seldom represented in lightweight ontologies and natural language lexicons: trying to push multiplicativism would lead to debatable inconsistencies, as argued in [51], which reports a large-scale experiment that uses DOLCE-Zero to detect millions of inconsistencies in the DBpedia knowledge graph. In that experiment, DOLCE-Zero union classes have avoided a much larger number of inconsistencies, which cannot be fixed. Of course, for integrity checking, we could resort at any time to data analytics that test multiplicativism for e.g. a fragment of the DBpedia or ArCo knowledge graphs.

ArCo foundational distinctions have a similar foundational commitment as DOLCE-Zero, so

using more specific DUL’s distinctions only when necessary. This commitment is implemented

in

A cluster of foundational requirements in ArCo is about events, states, actions, and

their expressions, types and interpretations. Literature on these notions is heterogeneous

[12], applying pragmatical, logical, and

philosophical criteria, often mingled, to draw distinctions. As an example of the

underlying problems, we can distinguish (i) a

A related problem is that ArCo requirements (as most ontology design projects for the

Semantic Web) need to represent n-ary relations (with Cf. the

W3C Working Group Note at

While this is a representation problem, rather than an ontological one, there is ample evidence (see e.g. [15,24,31,33]) that similar cognitive constructions (intensions, logically speaking) apply to events, states, actions, event types, action schemas, frames (in the sense of [21]), and relations as first-order objects.

Based on this assumption, a useful generalisation can be applied, treating those entities as either (i) reified (extensional) relationships, e.g. the situation of Canova’s Venus Victrix being located at Galleria Borghese in Rome since 1838, or (ii) reified (intensional) relations, e.g. the Attribution frame representing the authorship attribution to cultural properties, as made by an interpreter based on some criteria.
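The idea of a reified (extensional) relationship can be sketched in a few lines of Python. The example below, which is only an illustration and not ArCo's actual model, represents the located-at situation mentioned above as a single object with role-labelled participants and a start year, and then flattens it into binary triples. The class, the `ex:` identifiers and the role names are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class Situation:
    """A reified n-ary relationship with role-labelled participants."""
    identifier: str
    frame: str                                  # the relation it instantiates
    participants: Tuple[Tuple[str, str], ...]   # (role, entity) pairs
    start_year: Optional[int] = None


def to_triples(situation):
    """Flatten a situation into binary triples about one shared node."""
    triples = [(situation.identifier, "rdf:type", situation.frame)]
    triples.extend((situation.identifier, role, entity)
                   for role, entity in situation.participants)
    if situation.start_year is not None:
        triples.append(
            (situation.identifier, "ex:startYear", situation.start_year))
    return triples


# Hypothetical encoding of the Venus Victrix example from the text.
location = Situation(
    identifier="ex:venus-location-1",
    frame="ex:LocationSituation",
    participants=(
        ("ex:hasCulturalProperty", "ex:venusVictrix"),
        ("ex:hasSite", "ex:galleriaBorghese"),
        ("ex:hasCity", "ex:rome"),
    ),
    start_year=1838,
)
location_triples = to_triples(location)
```

All resulting triples share the situation node as their subject, which is how a relation with more than two arguments, including its temporal index, can be expressed with binary predicates only.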

That generalisation is applied quite often in ontology design. ODPs such as

The generalisation implements the cognitive assumption of extensional relations in the

world being “framed” or “schematised” through observation, interpretation, diagnosis,

norm, expectation, etc. In other words, framing applies a conceptual construction to a set

of sensory perceptions, given data, reported facts, etc. The correspondence between frames

and their occurrences leads to assigning contextual roles to participants (e.g. being a

ArCo adopts the framing patterns to the representation of cultural properties, using the

class

While situations allow to generalise over any relational concept, regardless of their

arity, semantic web practices sometimes prefer binary predicates (called

For instance, agencies related to a cultural property (e.g. a

Other foundational event-like notions

Other event-like notions in foundational and cultural ontologies can be aligned as

subclasses of

CIDOC CRM E5 Event, subclass of E4 Period, is defined as

As a second example, DOLCE [28] notion of Event

(a.k.a. Perdurant or Occurrent) is defined axiomatically as the class of entities that

“happen in time”, i.e. some of their proper parts/phases may not be present at each time

they are present (extreme cases include instantaneous and stationary states).

Participation is defined in DOLCE for all events. An extension of DOLCE [47] axiomatises also intensional relations (called

This stance, besides cognitive results, is also inspired by Davidson [15], who provides a solid ground for events as first-order entities, corresponding to (reified) relationships.

Finally, as discussed in Section 4.5, a constructive

stance is immediately applicable to the

Epistemological stance in ArCo

Related to the previous section, ArCo applies a distinction between three epistemological

levels:

For example,

The epistemological stance in ArCo involves the distinction between: (i) catalogue

versions, e.g. when someone adds data in a catalogue record, (ii) interpretations, e.g. when

a catalogue record reports an attribution, and (iii) current factual data, e.g. the

authoritative data for a cultural property at the current state of the art. When the

catalogue record is the only source for a knowledge graph, we depend on its versions to

reconstruct the epistemological trajectory of

However, the vision of ArCo goes well beyond reengineering traditional catalogues, and its epistemological stance accommodates a more complex knowledge graph hosting interpretations with different provenance and reliability, different reporting sources, and potentially conflicting factual data, thus enabling cultural knowledge graphs as investigation tools for researchers.

ArCo top level hierarchy and distinctions

The most general Cultural Heritage concept modelled in ArCo is

The root of ArCo’s hierarchy (depicted in Fig. 7) is the

class

The taxonomy of cultural properties.

The ICCD standards extensively address different aspects of the cultural heritage domain in order to define the structure and content of catalogue records. Catalogue records can describe 30 types of cultural properties (cf. Section 2), each showing distinguishing features. ArCo’s further specialisations in the top level hierarchy are inspired by these distinctions. We plan to reflect additional classifications as provided by official national or international standards.

We report the definitions for the specific classes, according to ICCD standards.

In this section, we: (i) illustrate some of the main modelling issues that have emerged from ArCo’s requirements, along with the modelling solutions adopted for addressing them; (ii) use (i) as driving examples to describe the process of matching requirements to Ontology Design Patterns (ODPs), introduced in Section 3, as part of the XD methodology.

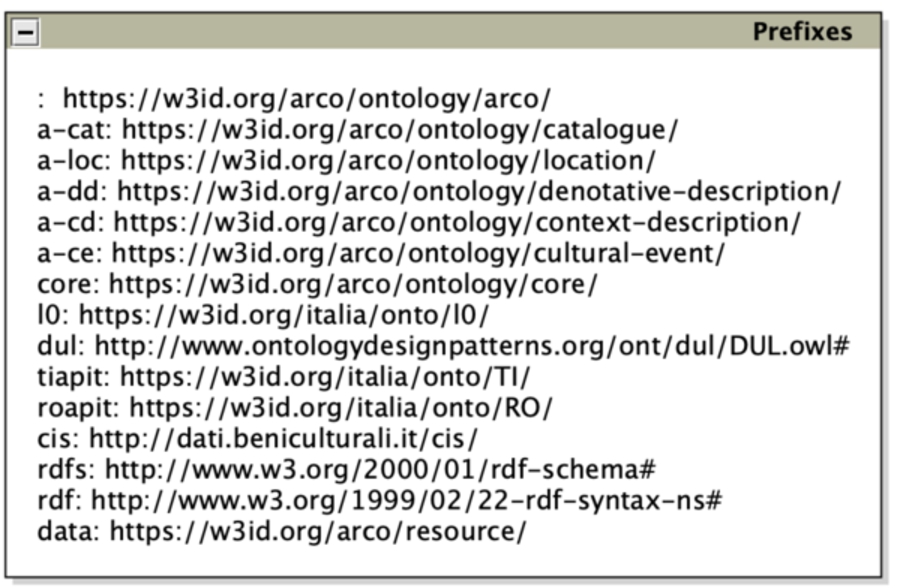

Figure 8 shows all the prefixes used in the next diagrams, designed according to Graffoo notation.

Prefixes used in the next figures.

Dynamic concepts, such as situations that change over time, are present in almost every domain. There are different patterns that model dynamic situations: in this subsection, we exemplify ArCo approach to dynamicity with catalogue records and cultural property locations, which may both evolve over time.

A catalogue record is then a fluent entity, an information object that changes as the description of its denoted cultural property changes (cf. Section 4.5 about the difference between factual and reporting situations).

Every change of a catalogue record produces an information object, which is a new version of the catalogue record and includes the reporting of a new situation involving the same persistent entity. Nevertheless, the catalogue record finds its persistence in describing the same real-world object, i.e. the same cultural property, independently of the different versions of its content and of the changes that the reported entity undergoes over time. Thus, the catalogue record is represented as a persistent information object, and is related to its versions, which are information objects reflecting changes of its content over time.
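The relation between a persistent catalogue record and its ordered versions can be sketched as follows. This is a simplified Python illustration under our own assumptions: the class names, field names and sample content are hypothetical and do not reproduce ArCo classes or properties.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class RecordVersion:
    """One version of a catalogue record, linked to the version before it."""
    label: str
    content: dict
    previous: Optional["RecordVersion"] = None


@dataclass
class CatalogueRecord:
    """Persistent information object describing one cultural property."""
    describes: str
    versions: List[RecordVersion] = field(default_factory=list)

    def add_version(self, label, content):
        """Append a new version that follows the current one, if any."""
        version = RecordVersion(label, content, self.current())
        self.versions.append(version)
        return version

    def current(self):
        return self.versions[-1] if self.versions else None

    def history(self):
        """Versions from oldest to newest, rebuilt by following `previous`."""
        chain, version = [], self.current()
        while version is not None:
            chain.append(version)
            version = version.previous
        return list(reversed(chain))


record = CatalogueRecord(describes="ex:property1")
v1 = record.add_version("v1", {"author": "unknown"})
v2 = record.add_version("v2", {"author": "ex:someArtist"})
labels = [version.label for version in record.history()]
```

The record keeps pointing to the same cultural property while its content changes from one version to the next, and the `previous` links give the versions the sequential order that the text describes.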

Information Realization and Sequence ODPs reused for modeling catalogue records.

The

Figure 9a depicts catalogue record modeling with the reused

ODPs. A catalogue record is represented by the class

In Fig. 9b we can see an instance of this model. The

Time indexed situation ODP implemented for modelling different types of locations of a cultural property.

Figure 10a shows the class

Figure 10b depicts one of the time-indexed typed locations

of a

A cultural property can be involved in many different situations during its life: it can be commissioned, bought or obtained, used (e.g. a garment worn by one person), it can be part of a collection or of a photographic or numismatic series, it can change its availability as a result of theft, destruction or rescue, etc. Each situation defines a contextual relation between the cultural property and the other entities involved. The

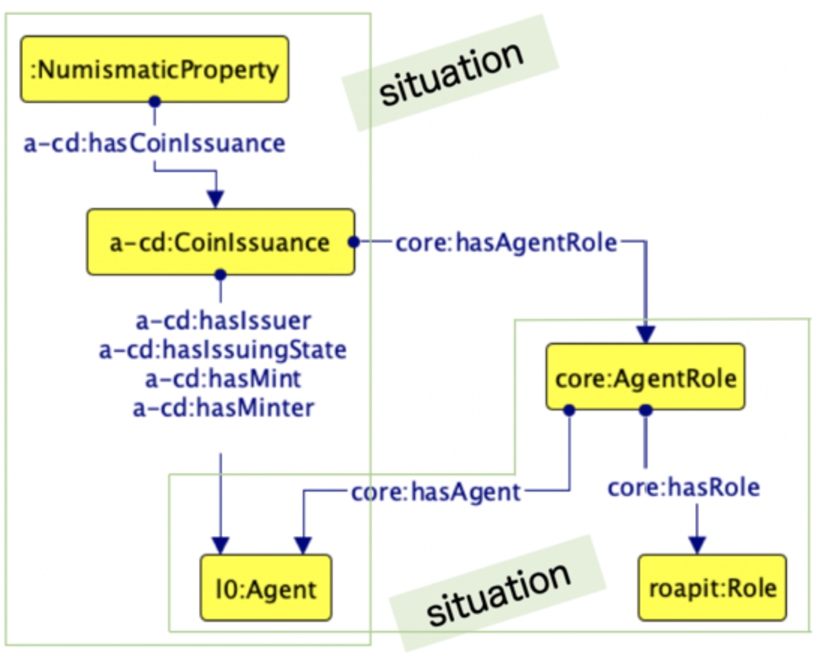

For example, when a coin is issued, many entities play a role in that context: the cultural property itself, the issuer, the issuing State, the mint and the minter. The “coin issuance” is a situation representing the relation that keeps together all these entities for that purpose. Figure 11 shows how we model the

Situation ODP reused for representing the coin issuance.

Situation ODP reused for the authorship attribution.

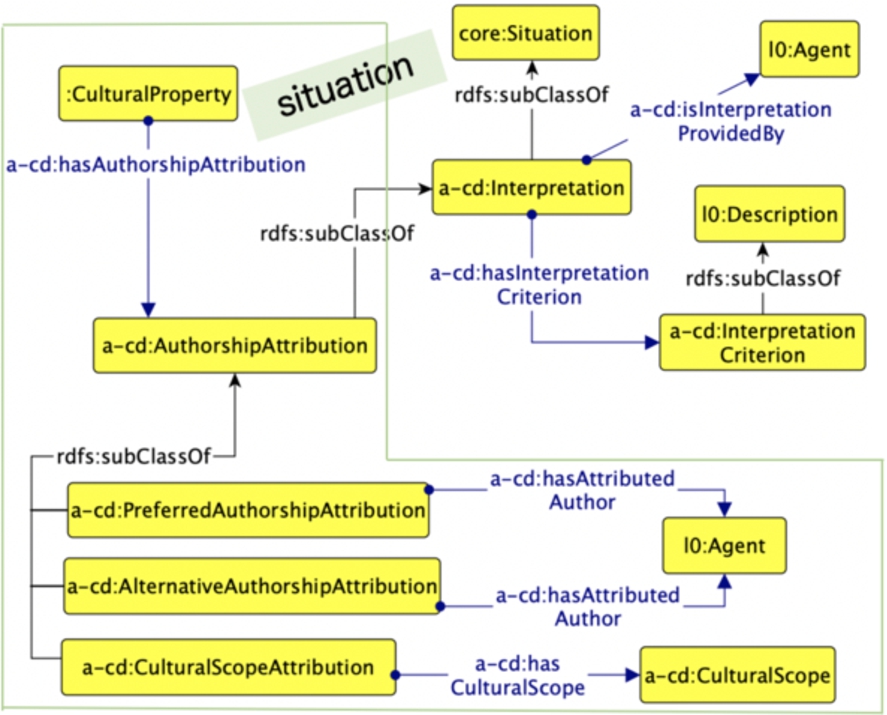

A central situation in which a cultural property can be involved is the authorship

attribution, a specific type of

Let us take as an example a coin55

Different technical characteristics of a cultural property can be specified, in order to describe its technical status: the constituting materials (e.g. wood, clay), the employed techniques (e.g. oil-painting, melting), the shape (e.g. square, octagon), the file format for a digital photograph (e.g. “.gif”, “.jpeg”), the prevalent colour of a garment, etc.

All these concepts (i.e. material, technique, shape)

A specific set of

The D&S pattern reused and specialised for modelling technical descriptions and status of a cultural entity.

Figure 14a shows how we model the

Let us take a compass by an Italian workshop of the 19th century58

The involvement of a cultural property in an exhibition during its life cycle would be

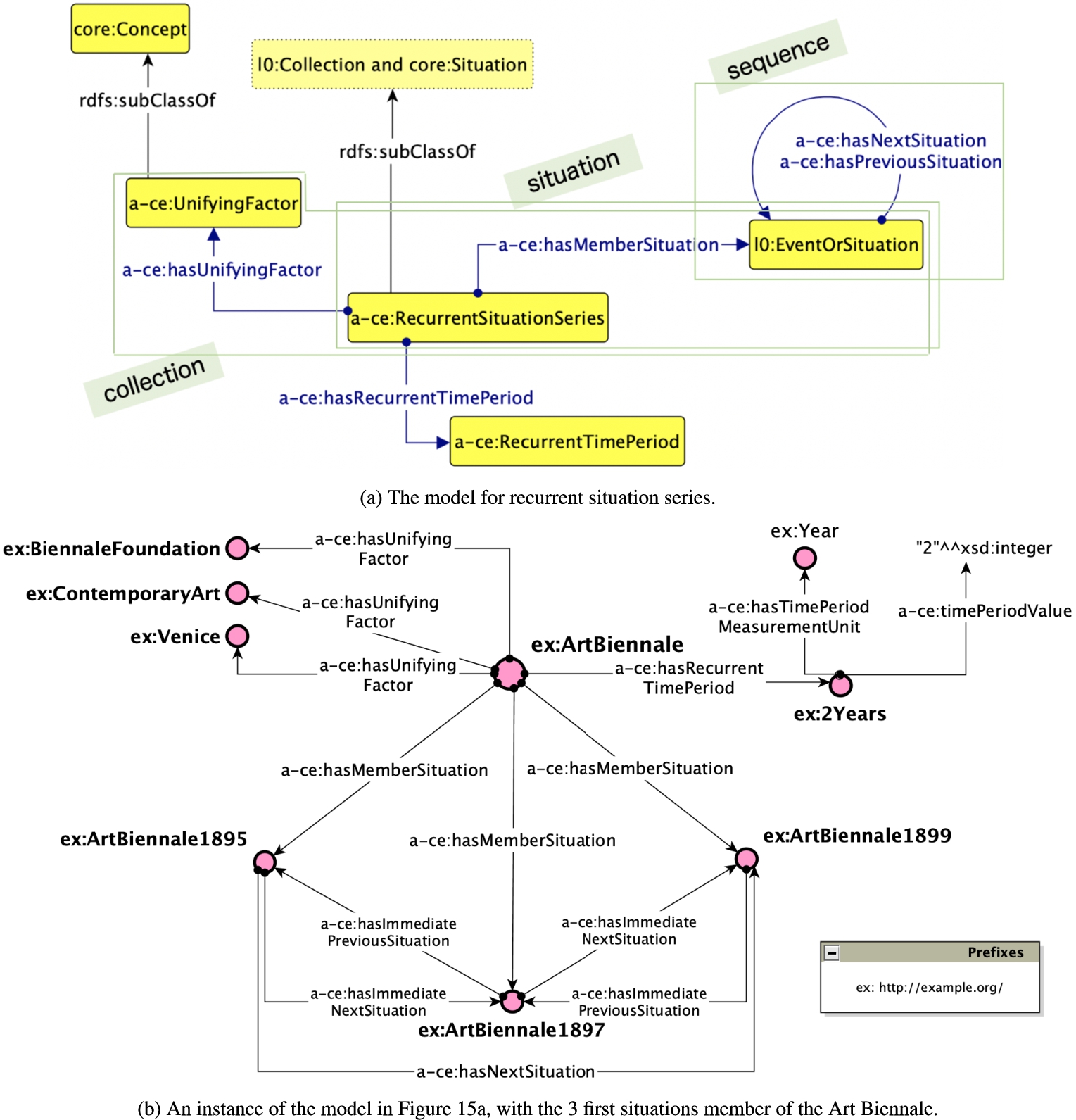

referred to, in everyday language, as a cultural event. When we informally refer to the

repetition of a cultural event (e.g. different editions of an annual painting award), we

use the term

As these particular series of situations unfold, we can recognise a pattern in their iteration: an exhibition that has different editions over the years usually plans consecutive editions at regular time intervals (e.g. one edition per year). Moreover, it is possible to identify attributes that give all occurrences a unity: a general topic that does not change (e.g. contemporary art), a place that hosts the situation (e.g. Venice), etc.
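The two ingredients described above, a regular recurrence interval and a set of factors that unify all occurrences, can be sketched in Python as follows. This is only an illustration under stated assumptions: the class name, the field names and the edition years are hypothetical and are not taken from the ArCo ontology or data.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class RecurrentSituationSeries:
    """A series of recurring situations (hypothetical class name)."""
    name: str
    # Attributes that stay the same across all editions.
    unifying_factors: Dict[str, str]
    edition_years: List[int] = field(default_factory=list)

    def recurrence_interval(self) -> Optional[int]:
        """Return the gap in years if editions are evenly spaced, else None."""
        years = sorted(self.edition_years)
        gaps = {later - earlier for earlier, later in zip(years, years[1:])}
        return gaps.pop() if len(gaps) == 1 else None


# Made-up example: an annual exhibition with a fixed topic and place.
series = RecurrentSituationSeries(
    name="example exhibition series",
    unifying_factors={"topic": "contemporary art", "place": "Venice"},
    edition_years=[2001, 2002, 2003],
)
irregular = RecurrentSituationSeries(
    name="irregular series",
    unifying_factors={"topic": "contemporary art"},
    edition_years=[2001, 2002, 2004],
)
```

Here `series.recurrence_interval()` yields `1`, while `irregular.recurrence_interval()` yields `None` because the gaps between editions differ.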

The new pattern Recurrent Situation Series as implemented in ArCo.

Recurrent situations are usually modelled as a special type of events (cf. Wikidata60

We represent, as depicted in Fig. 15a, recurrent situation

series (

In Fig. 15b we can see an instance of this pattern. The

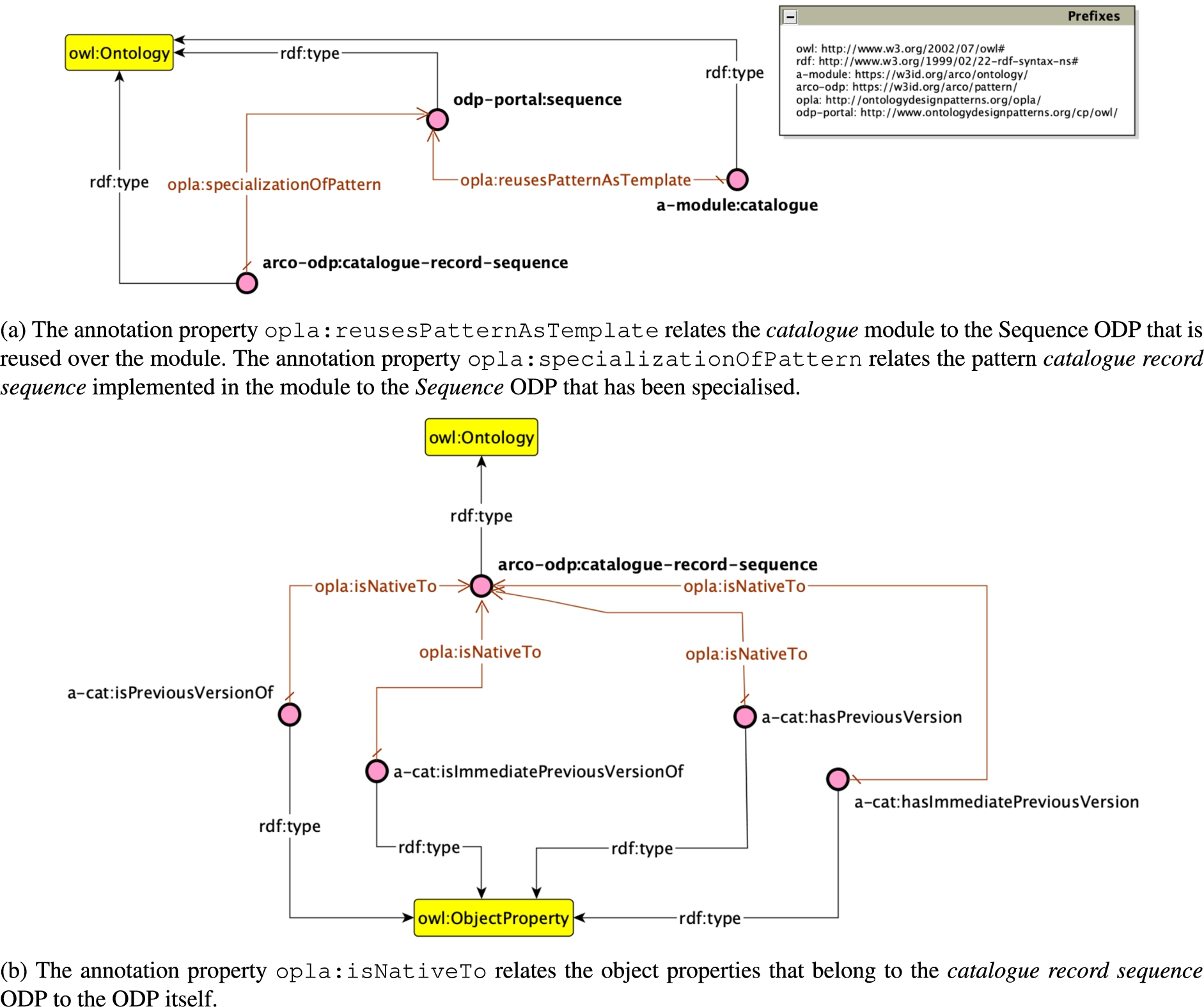

Ontologies and ODPs can be reused by following two main approaches, depending on the conditions and requirements of a project: direct and indirect reuse [54].

Annotating reused patterns supports the identification of ontology alignments, which is a tedious, non-trivial task. In fact, ODP annotations may ease the process of understanding and exploring an ontology. These assumptions have driven the development of the simple Ontology Pattern Language annotation (OPLa)

An example of a reused ODP annotated with OPLa ontology.

ArCo is evaluated along different dimensions: the functional, logical, and structural dimensions identified by [27]. The functional dimension is related to the intended use of a given knowledge graph (KG) and of its components, i.e. their function in a context. It is a core dimension for ontology testing: it allows ontology designers to assess the ability of an ontology to address requirements and cover the domain. The logical dimension measures whether an ontology can be successfully processed by a reasoner (inference engine, classifier, etc.). Finally, the structural dimension of a KG

(The authors of [27] refer to ontologies in their analysis. In the scope of this paper we generalise their results to knowledge graphs, since we also compute the distribution of the instances across classes.)

For instance, the entities representing the concepts of dating and of attributing an author to a cultural property should be disjoint, since no individual can be a dating and an authorship attribution at the same time. To validate the ontology against this requirement, the testers inject into the KG an individual belonging to both

Demo:

Source code:

Workflow implemented by TESTaLOD based on the user interface.

Let us consider as an example the competency question “Which archival set (fonds, series, subseries) a cultural property is member of?”, and suppose that we want to verify whether our ontology models information on the membership of cultural properties in archival record sets. The test case for running this test will be an OWL file, annotated with the following properties:

In order to allow TESTaLOD to automatically run this test, two new annotation

properties73

The link specification files for ArCo, CIDOC CRM, and EDM are published with the DOIs

The terminological coverage as recorded for ArCo, EDM, and CIDOC CRM.

For assessing the structural dimension of ArCo KG we use different metrics that have been defined and used in literature [13,27,44,49,58,63,66]. First, we compute base metrics that record quantitative aspects of ArCo knowledge graph: classes and their instances, properties, axioms, etc. Then, we compute schema and graph metrics aimed at assessing (i) the richness, width, depth, and inheritance at the schema level and (ii) the cohesion, coupling, multihierarchical degree, and extensional coverage of the ontologies. Those parameters are used for understanding the quality of ArCo expressed in terms of (i) flexibility, (ii) transparency, (iii) cognitive ergonomics, and (iv) compliance to expertise. These quality properties have been defined in [27]: (i) flexibility is the property of an ontology to be easily adapted to multiple views; (ii) transparency is the property of an ontology to be analysed in detail, with a rich formalisation of conceptual choices and motivation; (iii) cognitive ergonomics is the property of an ontology to be easily understood, manipulated, and exploited by its consumers; and (iv) compliance to expertise is the property of an ontology to be compliant with the knowledge it is supposed to model.
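To make the schema-level metrics more concrete, the following Python sketch computes a few of them on a toy class hierarchy. The definitions used here are common readings of the metric names (for example, inheritance richness as the average number of subclass links per class) and are assumptions, not necessarily the exact formulas adopted in [27] or by the tools used for ArCo. The toy class names are made up; only the class counts passed to `external_class_ratio` are taken from the figures reported in this section.

```python
from typing import Dict, List

# Toy class hierarchy: class name -> list of direct superclasses.
# All names are made up for illustration.
SUPERCLASSES: Dict[str, List[str]] = {
    "Thing": [],
    "Object": ["Thing"],
    "Situation": ["Thing"],
    "Artwork": ["Object"],
    "Exhibition": ["Situation"],
    "ExhibitedArtwork": ["Artwork", "Situation"],
}


def inheritance_richness(hierarchy: Dict[str, List[str]]) -> float:
    """Average number of subclass links per class (assumed definition)."""
    links = sum(len(parents) for parents in hierarchy.values())
    return links / len(hierarchy)


def depth(cls: str, hierarchy: Dict[str, List[str]]) -> int:
    """Length of the longest superclass chain from cls up to a root."""
    parents = hierarchy[cls]
    if not parents:
        return 0
    return 1 + max(depth(parent, hierarchy) for parent in parents)


def multi_parent_share(hierarchy: Dict[str, List[str]]) -> float:
    """Fraction of classes with more than one direct superclass.

    Used here only as a rough proxy for tangledness (an assumption).
    """
    multi = sum(1 for parents in hierarchy.values() if len(parents) > 1)
    return multi / len(hierarchy)


def external_class_ratio(external: int, total: int) -> float:
    """Number of external classes normalised by the total number of classes."""
    return external / total


max_depth = max(depth(cls, SUPERCLASSES) for cls in SUPERCLASSES)
arco_ratio = external_class_ratio(38, 340)  # counts reported for ArCo
edm_ratio = external_class_ratio(3, 41)     # counts reported for EDM
```

Normalising the raw count of external classes by the total number of classes is what makes the values comparable across ontologies of very different sizes.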

Table 2

The numbers of triples and individuals for EDM were retrieved by querying the SPARQL endpoint of Europeana (i.e.

We use CIDOC CRM v6.2.1 and EDM v5.2.4.

Comparison of base knowledge graph metrics as computed for ArCo v0.1, ArCo v0.5, ArCo v1.0, CIDOC CRM, and EDM, respectively. ArCo v1.0 is the latest release of the knowledge graph

Schema and graph metrics with corresponding quality properties addressed and values recorded. Values are reported for ArCo v0.1, ArCo v0.5, ArCo v1.0, CIDOC CRM, and EDM. ArCo v1.0 is the latest release of the knowledge graph

We focus on ArCo KG v1.0, which counts 20,030,941 individuals, to analyse the distribution of those individuals across classes. Such an analysis allows us to understand how individuals are organised in the knowledge graph with respect to concepts, which suggests possible compliance to expertise. In fact, it provides an indication of the recall of classes over the entities of the domain (i.e. the individuals). In this case, recall is meant as extensional coverage, computed as the average number of entities captured by ontology classes. It is worth noting that compliance to expertise has a strong functional characterisation, which we investigate further by analysing the functional dimension. Nevertheless, the distribution of the instances across classes is a fair structural metric, as it provides us with a tool for empirically validating whether dense areas (the most populated parts of the ontology) correspond to ontology design patterns. The use of patterns is among the indicators suggested by [27] for measuring the quality properties of transparency and cognitive ergonomics. Figure 19 shows the top-50 ranked classes based on the number of individuals they have in the knowledge graph. The ranking including all the classes can be retrieved by querying the knowledge graph. The result set with the ranking of all classes is available at

Top-50 ranked classes according to the number of individuals they have in the knowledge graph.

A high degree of modularity in an ontology is an indicator of transparency and flexibility. The ArCo ontology network is highly modularised; however, addressing transparency and flexibility meaningfully requires an appropriate design of the ontology modules. We compute the following metrics to assess the quality of ArCo modules.

Results of the module metrics

Table 4 reports the values recorded for the aforementioned module metrics, computed for each ontology module of ArCo. Module metrics are obtained by using the Tool for Ontology Module Metrics. The specific version of the tool we used can be downloaded from

In the context of ArCo’s project, performing the testing activities initially resulted in a significant manual effort, for both annotating and running the unit tests. For this reason, TESTaLOD has been designed and implemented. The successful execution of inference verification, error provocation, and competency question verification is an indicator of (i) computational integrity and efficiency, and (ii) compliance to expertise. The former suggests that the ontology can be successfully processed by a reasoner. The latter suggests that ArCo KG is compliant with its collected requirements. Finally, the terminological coverage measured for ArCo (i.e. 0.72) shows very good results. In fact, the comparison with the results obtained for the Europeana Data Model (EDM) (i.e. 0.07) and for CIDOC CRM (i.e. 0.2) supports the claim that the expressiveness provided by such existing reference ontologies is not completely suitable for addressing ArCo’s requirements.

The analysis of the structural dimension shows that ArCo KG provides a larger terminological component than CIDOC CRM and EDM, with 3,416 logical axioms, 340 classes, etc. ArCo is a massive knowledge graph counting 172,580,211 triples that describe 20,030,941 individuals. Nevertheless, ArCo is smaller than Europeana, which in turn counts 2,836,270,332 triples describing 415,410,190 individuals. This is to be expected, first because ArCo is much younger than Europeana. Additionally, we remark that ArCo organises knowledge about Italian cultural properties only, whereas Europeana contains structured knowledge about digital artifacts provided by 28 EU countries. If we then analyse the indicators obtained, they suggest good transparency. In fact, we record:

39.55 axioms per class (i.e. axiom/class ratio), which is similar to that recorded for CIDOC CRM (41.7) and much higher than the number recorded for EDM (7.3);

an inheritance richness (2.48) comparable to CIDOC CRM (1.17) and EDM (0.32). This is a good indication of how well knowledge is grouped into different categories and subcategories in the ontology. Hence, it suggests a deep (or vertical) ontology, which, in turn, may indicate that the ontology covers a specific domain in a detailed manner;

a higher NoC (i.e. number of external classes) value for ArCo (38) than for CIDOC CRM (0) and EDM (3). However, this result should be contextualised with respect to the total number of classes (340, 84, and 41 for ArCo, CIDOC CRM, and EDM, respectively). Accordingly, we record 0.1, 0 and 0.07 external classes on average for ArCo, CIDOC CRM and EDM. This indicator suggests, besides transparency, low coupling;

a high degree of relatedness among the different classes, i.e. strong cohesion. In

fact, the classes are organised in a hierarchy with (i) a low depth (i.e.

Low coupling (i.e. NoC) and high cohesion (NoR, NoL, and ADIT-LN) also suggest flexibility, i.e. the property of adapting or changing the ontology with limited side-effects. The property of cognitive ergonomics (i.e. property of a knowledge graph to be easily understood, manipulated, and exploited by final users) is suggested by:

a lower class/property ratio for ArCo (0.44, on a scale ranging from 0 to 1) than for CIDOC CRM (0.74) and EDM (0.84);

a low depth and breadth of the inheritance tree (i.e. 3.93 as ADIT-LN, 5 as max depth, 5.75 as average breadth, and 34 as max breadth). According to these indicators, ArCo has an inheritance tree similar to that of EDM. The inheritance tree of CIDOC CRM, instead, is slightly different, as it shows higher values for depth and lower values for breadth. This means that ArCo has a more compact inheritance tree than CIDOC CRM;

a moderate tangledness (i.e. 0.56 on a scale ranging from 0 to 1) compared to CIDOC CRM (0.18) and EDM (0.07). This suggests that the inheritance tree is more complex (a number of classes have multiple superclasses) than that of CIDOC CRM and EDM. We recall that this moderate complexity is only structural and derives from functional requirements. Nevertheless, the high number of annotation axioms (8,374) facilitates user readability. This value is much higher than (i) the total number of classes and properties in the ontology and (ii) that recorded for CIDOC CRM (2,589) and EDM (125);

the use of patterns. In this regard, it is worth noting that patterns identify dense areas within the knowledge graph. In fact, most of the top-ranked classes among the most instantiated (cf. Fig. 19) identify patterns, such as those described in Section 5. Significant examples are

With respect to the evolution of ArCo KG during the design process, we record a significant growth of the knowledge graph from v0.1 to v0.5. For example, the number of axioms, classes, and object properties changes from 715, 54, and 38 in v0.1 to 9,564, 329, and 332 in v0.5, respectively. This observation is confirmed by the fact that the knowledge graph counts

Module metrics suggest that all modules are modelled by following a similar design principle: identifying small and highly cohesive partitions as basic building blocks for ontology design. This result is fully compliant with the pattern-based approach adopted for modelling ArCo. As a matter of fact, the atomic size values we record are low and they differ only slightly from one module to another, i.e. ranging from 4.85 (core module) to 6.63 (context description module). The appropriateness values recorded are optimal (

Developing a KG using XD: Lessons learned

This project led us to reflect on both strong and weak points of the methodology applied, thus suggesting possible improvements for the future. In particular, in this section we want to focus on two key aspects of eXtreme Design methodology: (re)using patterns and test-driven design. Finally, we discuss how involving the community let us collect a wider set of requirements.

Reusing existing ontologies and patterns

eXtreme Design is a methodology that encourages the reuse of Ontology Design Patterns (ODPs), as common modelling solutions to classes of problems recurring in ontology design. Patterns to be reused can be selected both from dedicated catalogues (such as the

Even if ODP catalogues are a relevant support for pattern-based ontology design, there is a lack of well-documented and well-maintained high-quality ontology design patterns, as well as of tools supporting ODP-driven ontology engineering [5], which could guide the user in the selection of ODPs, e.g. by recommending possible ODPs to be reused for a certain modelling requirement. Additionally, using available ontologies as input to generate new ontologies is a difficult process, far from being automated [8,42,64], and can be hampered by scarcely documented ontologies and by large ontologies with a high number of classes, properties and axioms. Moreover, the context, intended usage and semantic meaning of the ontology entities must be considered carefully, which is time-consuming. Issues in reusing existing ontologies seem to be confirmed by [1], which observes a lack of explicit alignments between ontological entities in Linked Open Data, while the high number of top-level classes may suggest a high number of conceptual duplicates.

Ontology reuse would benefit from annotations about the ODPs implemented by ontologies: [37] proposes a simple representation language for ontology design patterns (OPLa ontology), which makes use of OWL annotation properties for documenting ODPs. OPLa certainly helps fill a gap, but its expressiveness needs improvement. ArCo ontologies have been annotated with OPLa, but we soon realised that we were missing many relevant attributes of, and relations between, patterns that could be annotated and therefore possibly detected later by other parties.

As described in Section 5, during ArCo KG development we incrementally selected CQs from the available list and then matched them with one or more existing ODPs. In this process, we also inspected state-of-the-art ontologies, such as CIDOC CRM (footnote 75), EDM (footnote 74), BIBFRAME,

Testing an ontology network that is periodically released in unstable and incremental versions can be a time-consuming and repetitive activity and, if performed manually, an error-prone one. Tests need to be run in order to validate our ontology, by translating competency questions into SPARQL queries, verifying expected inferences and provoking expected errors. Each time the ontologies change (e.g. a new version models new information), new tests are created, and all previous tests must be executed again and, if needed, updated, in order to identify possible new bugs.

While performing testing in the context of ArCo KG, we realised that tools automating it would have been of great support to the testing team. Building TESTaLOD (described in Section 6.1) helped us execute tests over new versions of the ontology network, allowing for automatic regression tests. At the moment, TESTaLOD only addresses CQ-based testing with the corresponding SPARQL queries. Tests for inference verification and error provocation are executed externally. Moreover, the creation and annotation of test cases is not automated. We believe that developing tools that support the (semi-)automatic creation of unit tests is of paramount importance for raising the overall quality of released knowledge graphs. TESTaLOD only scratches the surface of a possible tool suite for automating many activities of ODP-based and test-driven methodologies such as XD.
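The regression loop described above (re-running every existing test whenever the ontology network changes) can be sketched with a small, self-contained Python example. It is a stand-in under explicit assumptions: the knowledge graph is a plain set of triples, the competency question is expressed as a Python function instead of a SPARQL query, and consistency checking is reduced to one disjointness rule. None of the identifiers below come from ArCo or TESTaLOD; they are hypothetical.

```python
from typing import Callable, FrozenSet, List, NamedTuple, Set, Tuple

Triple = Tuple[str, str, str]

# Toy knowledge graph; every identifier is made up for illustration.
KG: Set[Triple] = {
    ("letter-1", "type", "CulturalProperty"),
    ("letter-1", "isMemberOf", "fonds-A"),
    ("fonds-A", "type", "ArchivalRecordSet"),
}

# Pairs of classes declared disjoint in the toy schema.
DISJOINT: Set[FrozenSet[str]] = {frozenset({"Dating", "AuthorshipAttribution"})}


def types_of(kg: Set[Triple], subject: str) -> Set[str]:
    return {o for s, p, o in kg if s == subject and p == "type"}


def is_consistent(kg: Set[Triple]) -> bool:
    """False if any individual is typed with two disjoint classes."""
    subjects = {s for s, _, _ in kg}
    return not any(pair <= types_of(kg, s) for s in subjects for pair in DISJOINT)


class KGTest(NamedTuple):
    name: str
    check: Callable[[Set[Triple]], bool]


def cq_archival_membership(kg: Set[Triple]) -> bool:
    """CQ verification: which archival set is 'letter-1' a member of?"""
    answers = {o for s, p, o in kg if s == "letter-1" and p == "isMemberOf"}
    return answers == {"fonds-A"}


def error_provocation_disjointness(kg: Set[Triple]) -> bool:
    """Inject an individual typed with both disjoint classes; expect a clash."""
    injected = kg | {("x", "type", "Dating"), ("x", "type", "AuthorshipAttribution")}
    return is_consistent(kg) and not is_consistent(injected)


SUITE: List[KGTest] = [
    KGTest("cq-archival-membership", cq_archival_membership),
    KGTest("error-provocation-disjointness", error_provocation_disjointness),
]


def run_suite(kg: Set[Triple], suite: List[KGTest]) -> List[str]:
    """Re-run every test against kg and return the names of failing tests."""
    return [test.name for test in suite if not test.check(kg)]


failures = run_suite(KG, SUITE)
# Simulate a new release that accidentally drops the membership triple.
regressed = KG - {("letter-1", "isMemberOf", "fonds-A")}
regression_failures = run_suite(regressed, SUITE)
```

Running the whole suite on each release is what turns individual unit tests into regression tests: here the second run reports the competency question test as failing, while the first reports no failures.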

Extended customer team for Cultural Heritage LOD projects

In ontology engineering methodologies, domain experts are the main actors and the main source of requirements and validation tests: they make a crucial contribution, especially in defining the domain and task requirements that guide the ontology design and testing phases [46]. User stories (then translated into Competency Questions) were used as a

Whilst not denying the key role played by ICCD domain experts in eliciting requirements, by means of cataloguing standards, catalogue records and discussions on specific topics and issues, we believe that the Cultural Heritage (CH) domain has a specificity in its users: the community interested in CH data for different purposes is wide and diverse, involving domain experts, researchers, art critics, students, ordinary citizens, institutions and companies owning and managing CH data or data on related domains (e.g. tourism), public administrations and private companies offering services related to the CH domain, etc.

Cultural Heritage is usually managed with a top-down approach, where professionals and data owners (Galleries, Libraries, Archives, Museums, etc.) are in charge of defining standards and means for describing, representing and making available data on cultural heritage. More rarely, end-users are involved in this process. Instead, institutions aiming at enhancing cultural heritage would benefit from a bottom-up approach, alongside a top-down one, for collecting requirements from the community that consumes their data.

Linked Open Data projects can help bring domain experts closer to their potentially wide and diverse audience, and promote interactions between them. In carrying out the ArCo project, considering the characteristics of the CH domain and of CH users, we involved a wider community in the requirements and feedback collection phase. Launching an Early Adoption Program, and involving the community in the unstable and incremental phases of the project, allowed us to capture a wider range of perspectives and requirements. For example, Synapta,

With ArCo’s EAP, we experimented with the involvement of private and public organisations, and extended XD for this purpose by identifying a set of tools (web forms, mailing lists, GitHub issue tracker) and practices (webinars, meetups) that could support collecting requirements from such a diverse community. We believe that collecting requirements from a very diverse community is relevant for the CH domain but can also apply to other contexts; hence, methodologies and possible supporting tools should consider this aspect, so far neglected to the best of our knowledge, among their key requirements.

The Semantic Web and LOD principles have changed how cultural institutions manage and publish their data, how machines and users can access linked and enriched data on Cultural Heritage (CH), and have widened the possibility of reuse and generation of new knowledge starting from existing data. Ontologies make it possible to go beyond traditional CH data production and publication, providing users with new, more intelligent and eventually personalised Web applications and services, and with more and richer data [39].

LOD and ontologies for Cultural Heritage

Projects such as LODLAM92

Publishing and interconnecting data is leading to the creation of international CH portals [39], such as Europeana,

Along with the publication of LOD collections, ontologies representing the CH domain are being developed, and some of them are becoming widely adopted standards, e.g. the Europeana Data Model (footnote 74) (EDM) [41] and CIDOC Conceptual Reference Model (CRM) (footnote 75) [19]. In addition to them, many other ontologies model specific domains that can be relevant to CH (e.g. the PRO ontology

ArCo substantially contributes to the existing LOD CH cloud with a huge amount of invaluable data on Italian cultural properties, and with an ontology network tackling overlooked modelling issues.

There is quite a variety of knowledge associated with works of art and their dynamics, including their dating, authorship attribution, history, maintenance, symbolic and cultural interpretation, catalogue reporting, etc. The design of CH knowledge dynamics therefore requires sufficient flexibility, which ArCo derives from its constructive stance, described in Sections 4.2 and 4.5.

We provide here some cases, where ArCo supplies modelling solutions that are not easily obtained in other widely adopted CH ontologies (see also some related foundational differences in Section 4.4).

As an example, the painting “Woman Portrait” by Caspar Netscher (17th century) is associated with several types of locations: it is now located at the Uffizi in Florence, it was stored in 1942 at Poppi Castle, it was involved (hence temporarily moved) in an exhibition at Pitti Palace in Florence in 1773 (cf. Fig. 20).

The painting “Woman Portrait” by Caspar Netscher (17th century) and the different (types of) locations it is associated with.
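The example above can be written down as a small Python sketch of time-indexed, typed locations. The data structure is hypothetical and is not the ArCo model: the class and field names are invented, the type label "current" is only an illustrative choice, and the single-year validity intervals are an assumption, since the text gives only a year for the storage and the exhibition and no date for the current location.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class TimeIndexedTypedLocation:
    """A location that holds for a property, with a type and a validity."""
    cultural_property: str
    place: str
    location_type: str                # illustrative labels, e.g. "storage"
    years: Optional[Tuple[int, int]]  # inclusive validity; None if not stated


LOCATIONS: List[TimeIndexedTypedLocation] = [
    TimeIndexedTypedLocation("Woman Portrait", "Uffizi, Florence", "current", None),
    TimeIndexedTypedLocation("Woman Portrait", "Poppi Castle", "storage", (1942, 1942)),
    TimeIndexedTypedLocation(
        "Woman Portrait", "Pitti Palace, Florence", "exhibition", (1773, 1773)
    ),
]


def locations_in(year: int, locations: List[TimeIndexedTypedLocation]):
    """Locations whose stated validity interval contains the given year."""
    return [
        loc for loc in locations
        if loc.years is not None and loc.years[0] <= year <= loc.years[1]
    ]


def places_by_type(location_type: str, locations: List[TimeIndexedTypedLocation]):
    """Places that played the given role for the property."""
    return [loc.place for loc in locations if loc.location_type == location_type]


stored_1942 = [loc.place for loc in locations_in(1942, LOCATIONS)]
exhibition_places = places_by_type("exhibition", LOCATIONS)
```

Because the type and the validity interval belong to the location situation itself, both "where was it in a given year" and "where has it been exhibited" can be answered without reconstructing them from a chain of move events.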

CIDOC CRM allows us to encode the data about all these locations by means of a “move” event that is both temporally and geographically indexed. This representation lacks the means to express (i) the knowledge about the type or motivation of a specific location, e.g. production, exhibition, storage, etc.; (ii) the temporal validity of that location, which is different from the time at which a moving event occurs; (iii) in addition, location types can be further characterised by other data or entities specific to them. In ArCo it is possible to represent the moving event with its temporal and spatial indexing, as well as the (functional) type of its locations with their own temporal validity, and their specificities. We also remark that CIDOC CRM events are defined as

Other examples of ArCo design patterns motivated by its constructive stance include: the

modelling of catalogue records as entities of the CH domain representing

We will call the first of these levels

ArCo distinguishes these three levels (cf. Section 4.5), by providing models for: the denotative representation of a cultural property (materials, conservation status, inscriptions, measurements, etc.); the process of cataloguing (catalogue records as documents describing cultural properties, cataloguing agents, etc.); the interpretation process, with agents involved and criteria guiding it (

Both EDM and CIDOC CRM distinguish between the cultural object and possible information

resources related to it:

For example, a musical instrument would be of type

Specific types of cultural properties share some features (e.g. location, dating, author), but ArCo also needs to address further modelling issues, overlooked by other ontologies so far, that relate to specific cultural properties, such as the diagnosis of a paleopathology and the interpretation of sex and age at death in anthropological material, other types of surveys on archaeological objects (e.g. laboratory tests), the coin issuance, the Hornbostel-Sachs classification of musical instruments, musicians and musical ensembles, recurrent art exhibitions, etc.

For example, let us take a modelling problem that a cultural institution publishing data on anthropological materials may need to address: it has been estimated that the discovered anthropological materials (teeth, mandible and radius) are from a female individual who died at a young age. The assignments of the attributes “female” and “young age” would be modelled in CIDOC as

Even in the case of features shared by most cultural properties, in many cases CIDOC lacks the expressiveness needed for modelling ArCo data without missing information. For example, according to CIDOC, changes of the physical location of a cultural property are represented by move events, and we can only know from and to where the cultural property was moved, and when the move happened, while there is no means to express e.g. the role that a specific location played during a time interval, with respect to a specific cultural property. EDM specialises

For each module of ArCo, a separate file stores its alignments, e.g.

Other alignments include: BIBFRAME (footnote 88), FRBR (footnote 89), FaBiO

As discussed in [17], when building a knowledge graph (KG) for publishing its data, a cultural institution makes a first relevant choice: it can publish Linked Open Data by building and using its own infrastructure, give its data to a cultural heritage data aggregator such as Europeana, or invest in infrastructure for publishing its data as well as in the whole process for producing them, by using the ontology model of an aggregator. In making this choice, a cultural heritage administrator is influenced by political, economic and technical aspects.

An aggregator provides a single point of access to different collections from many cultural institutions, giving visibility and guaranteeing respective enrichment and interoperability. Nevertheless, the adopted ontologies only capture a subset and a simplified encoding of the available information about a cultural property because they prefer a

In our opinion, when possible, it is preferable for an institution to carry out the whole process of data production and publication and to release data that is as rich as possible, while guaranteeing interoperability with aggregators and the publication of simplified versions or subsets of its data through them: this is the approach followed by ArCo. This choice allows a cultural institution to clearly define its requirements regardless of which data can be published through an aggregator and with which ontology. Moreover, such an approach better supports an open requirements collection, as it does not limit the commitment of the developed ontologies to the institutional guidelines. Having full control over the ontological commitment minimises the loss of information contained in the input data, which helps to reach a wide audience of diverse users.

Conclusion

This paper presents how ArCo, a knowledge graph of Italian Cultural Heritage, has been designed, following the principles of the XD methodology. There are other valuable LOD resources containing and describing the Italian CH. Nevertheless, ArCo KG has a prominent role in this domain, not only because it injects in LOD a huge amount of high-quality data, extracted from the official institutional database of Italian Cultural Heritage (General Catalogue), but also because the expressiveness of its ontologies facilitates the adoption of its LOD by scholars and researchers in humanities and beyond, to make discoveries and find new patterns. The expected impact of ArCo KG on the general CH domain is motivated by a set of new requirements, addressed by its ontologies, which have been overlooked so far. These requirements emerged both from the richness of details provided by the General Catalogue records as well as from a growing community of consumers and producers of CH LOD.

ArCo KG can have an impact on the general Semantic Web community as well, since it is designed by following a robust methodology, based on the reuse of ontology design patterns, including extensive testing, detailed documentation and tutorial material, and formal evaluation: thus, it is a well-documented case study of the application of a methodology of ontology engineering (eXtreme Design), and can be used as a reference example by other researchers that are approaching knowledge graph engineering.

ArCo KG is still evolving and growing, and can be further improved and enriched. We plan to extend our ontologies, in order to model other aspects not addressed by the current version, e.g. some specific characteristics of naturalistic heritage, like slides and phials associated to an

ArCo KG will be enriched by using NLP techniques to extract structured data from the many textual metadata contained in the catalogue records (e.g. generic narrative descriptions of the cultural properties, historical and biographical data about authors, etc.). Additional effort is being put into completing the translation of the data into other languages, starting from English, with an automated bootstrap to be refined by the community. Finally, ArCo’s project has highlighted the need for tools that facilitate reuse and testing, both in general and specifically for the CH domain.