Abstract

Cooperative Intelligent Transport Systems (C-ITS) play an important role for providing the means to collect and exchange spatio-temporal data via V2X-based communication between vehicles and the infrastructure, which will become a central enabler for road safety of (semi)-autonomous vehicles. The Local Dynamic Map (LDM) is a key concept for integrating static and streamed data in a spatial context. The LDM has been semantically enhanced to allow for an elaborate domain model that is captured by a mobility ontology, and for queries over data streams that cater for semantic concepts and spatial relationships. Our approach for semantic enhancement is in the context of ontology-mediated query answering (OQA) and features conjunctive queries over DL-LiteA ontologies that support window operators over streams and spatial relations between spatial objects. In this paper, we show how this approach can be extended to address a wider range of use cases in the three C-ITS scenarios traffic statistics, traffic events detection, and advanced driving assistance systems. We define for the mentioned use cases requirements derived from necessary domain-specific features and report, based on them, on extensions of our query language and ontology model. The extensions include temporal relations, numeric predictions and trajectory predictions as well as optimization strategies such as caching. An experimental evaluation of queries that reflect the requirements has been conducted using the real-world traffic simulation tool PTV Vissim. It provides evidence for the feasibility/efficiency of our approach in the new scenarios.

Introduction

The development in (semi)-autonomous vehicles leads to an extensive communication between vehicles and the infrastructure, which is covered by Cooperative Intelligent Transport Systems (C-ITS). These systems produce temporal data (e.g., traffic light signal phases) and geospatial data (e.g., GPS positions), which are exchanged in vehicle-to-vehicle, vehicle-to-infrastructure, and combined communications (V2X). This aids to improve road safety by analyzing traffic scenes that could lead to accidents (e.g., red light violations), and to reduce emissions by optimizing traffic flow (e.g., dissolve traffic jams). A key technology for this is the Local Dynamic Map (LDM) [8] as an integration platform for static, semi-static, and dynamic information in a spatial context.

In previous work, we have semantically enhanced the LDM to allow for an elaborate domain model that is captured by a mobility ontology, and for queries over data streams that cater for semantic concepts and spatial relationships [36]. Our approach is based on ontology-mediated query answering (OQA) and features conjunctive queries (CQs) over

In this paper, we continue the work in [34,36,37] with the goal of showing how spatial-stream OQA can be used to address a wider set of C-ITS scenarios. For achieving this, the approach in [36] is extended with new domain-specific features beyond “generic” spatial-stream OQA. In cooperation with ITS domain experts from Siemens, the C-ITS scenarios – traffic statistics, events detection, and advanced driving assistance systems (ADAS) – were defined and used to single out requirements derived from a domain-specific list of features. We then formulate, for each use case, requirements that should be covered by our approach. The focus of the new, more specific features will be on temporal relations, e.g., we outline the field of V2X integration using LDMs and provide details on our ontology-based LDM (Section 2); we define three scenarios, use cases, desired features, and requirements (Section 3); we conducted expert interviews to obtain feedback on the scenarios, use cases, and query features (Section 4); we present our current approach including data model, query language, and evaluation strategy (Section 5). we report on the implementation of our approach in a prototype and comment on the implementation details (Section 6); we evaluate our work regarding the set of features and requirements based on a traffic simulation and assess the results (Section 7). we list related work, and evaluate existing stream reasoning systems, wherein we compare the performance of our prototype to the systems C-SPARQL [14] and CQELS [55] (Section 8).

In Section 9, we discuss lessons learned and conclude with ongoing and future work.

This article is a revised and extended version of our preliminary work [34], which we have enriched by the following extensions:

a report on interviews of C-ITS experts regarding the spatial-QA approach for real-world ITS applications, whose suggestions have been incorporated to improve the approach (Section 4); a further version of the LDM ontology (Section 2) that builds on the Sensor-Observation-Sampling-Actuator (SOSA) vocabulary [48], following an expert suggestion; a complexity assessment of the query answering approach (Section 5); an outline of optimization strategies for the query rewriting and evaluation covering related parameters for the strategies (Section 5); an increased set of experiments taking the optimization strategies into account (Section 6); a quantitative and qualitative comparison with existing stream reasoning systems (Section 8); and a discussion of the lessons learned (Section 9).

Furthermore, we have enhanced Sections 5 and 6 with the purpose of adding clarity and self-containment to the paper. For this, we have added definitions and details on the semantics and the initial algorithm for the query answering approach from our initial work [36].

C-ITS data integration and query answering

Our setting is the ongoing efforts in data integration and querying in the C-ITS domain. The base technologies for C-ITS are already available and experimentally deployed in infrastructure projects as in [8]. The communication technology is based on the IEEE 802.11p standard, and the data integration effort is the Local Dynamic Map (LDM); there are starting points for this work. IEEE 802.11p allows wireless access in vehicular environments, called V2X communications, which enables messaging between vehicles and the infrastructure. The messages are broadcast every 100 ms by traffic participants, i.e., vehicles and roadside ITS stations, to update other participants about their current states [8]. The main standardized message types are [3–5]:

CAMs (Cooperative Awareness Messages) provide high frequency status updates of a vehicle’s position, speed, and might include vehicle type, model, and turn signals; MAPs (Map Data Messages) describe the detailed topology of an intersection, including its lanes, their connections, and assigned traffic light (TL) signal groups; SPaTs (Signal Phase and Timing Messages) contain the projected TL signal phases (e.g., green, yellow, and red) for each lane; DENMs (Decentralized Environmental Notification Messages) informing whether specific events like road works or a traffic jam occur in a designated area. CPMs (Collective Perception Messages) are aimed to complement CAMs with information from the surroundings that is collected by the LIDAR and RADAR sensors of a vehicle or by an ITS station and partially shared with other traffic participants. For instance, a temporary obstacle such as a parked car can be detected by a vehicle and forwarded to the other participants; PAMs (Precise Awareness Messages) are lightweight correction messages, which are used to correct a vehicle’s positions regarding a fixed “virtual anchor”, since in urban areas the GPS position could be imprecise.

Local dynamic map

The V2X technology does not yet consider the integration of the different types of messages. As a comprehensive integration effort, the EU SAFESPOT project [8] introduced the concept of an LDM, which acts as an integration platform to combine static geographic information system (GIS) maps, with dynamic environmental objects (e.g., vehicles or pedestrians) [1,2]. The integration is motivated by advanced safety applications, which need an “overall” understanding of a traffic environment. The LDM consists of the following four layers (see Fig. 1):

Permanent static: the first layer contains static information obtained from GIS maps and includes roads, intersections, and points-of-interest (POIs);

The four layers of a LDM [8]. (a) The lightweight and (b) SOSA-based LDM ontology (partial renderings).

Transient static: the second layer extends the static map by detailed local traffic informations such as fixed ITS stations, landmarks, and intersection features like lanes;

Transient dynamic: the third layer contains temporary regional information like weather, road or traffic conditions (e.g., traffic jams), and traffic light signal phases;

Highly dynamic: the fourth layer contains dynamic information of road users taken from V2X messages or in-vehicle sensors like the GPS module.

Recent research by Netten et al. [63], and Shimada et al. [76] suggested that an LDM can be built on top of a spatial relational RDBMS enhanced with streaming capabilities. Netten et al. recognize that an LDM should be represented by a world model, world objects, and data sinks on the streamed input [63]. However, an elaborate domain model captured by an LDM ontology and extended query processing or rule evaluation methods over spatial data streams were still missing in the current approaches. An ontology-based LDM has advantages regarding the maintainability and understandability of the model, since dependencies between the concepts are clearly defined and easy extendable without altering the underlying database (DB).

With the support of Siemens and AIST domain experts, we defined two ontologies capturing the main elements of an LDM. In our second modelling, we took the recommendations of the interviewed experts (see Section 4) into account and extended the initial ontology with the “standard” vocabulary of the Sensor-Observation-Sampling-Actuator (SOSA) ontology [48]. This includes concepts such as

Available at http://www.kr.tuwien.ac.at/research/projects/loctrafflog/LocalDynamicMapITS-v0.5-Lite.owl and http://www.kr.tuwien.ac.at/research/projects/loctrafflog/LocalDynamicMapSOSA-v0.1-Lite.owl.

Lightweight LDM ontology Our initial ontology is intended to make querying ITS streams simple, but still capturing the main concepts of a LDM. It follows a layered approach starting with a separation between the top concepts as follows:

We also have defined “domain specific” roles and attributes such as

Extended SOSA ontology In the following, we give an outline of the main concepts of the extension of our initial ontology, aligning it with the SOSA ontology:

We have incorporated the “standard” roles and attributes of the SOSA ontology. This includes the central relations between

The previously defined “domain specific” roles and attributes such as

We illustrate according to Fig. 2(b) a possible query template for

OWL 2 QL Both of the above LDM ontologies are represented in

The OQA component is central part regarding the usage of a semantically enhanced LDM, since it allows us to access the streamed data in the LDM.

The following query detects red-light violations on intersections by searching for vehicles (in y) with an aggregated trajectory and speed above 30 km/h in a 8 secs window, projecting 3 secs into the future (represented as a negative distance), which move on lanes (in x) during the time intervals, where the signal phases of these lanes will turn to “Stop”, i.e., red, in a 10 secs window with a 5 secs look into the future to capture the current/next changes in the signal phases:

Query

the relation

Note that we added for readability the implicit definitions of

In this section, we present three application scenarios that are used to define requirements and features split into three complexity levels. On the infrastructure side, we have C-ITS (roadside) stations that receive nearby V2X messages and send messages to inform other participants on their current state, i.e., the traffic light phases. Other participants such as vehicles share their states such as their current speed, acceleration, and position. On the vehicle side, ADAS perceive driving environments and make safe driving decisions to improve safety of autonomous vehicles. The ADAS use sensors such as Lidar/Radar or cameras, and process the sensor data to avoid accidents by detecting pedestrians, vehicles, or other obstacles [81]. The sensor data can be linked to our ontology-based LDM and enables the system to represent the driving environments.

Scenario description

First, we give an overview of the three scenarios.

S1: Traffic statistics The focus of this scenario is on the collection of statistical data that concerns stops, throughput, traffic distribution, or types of participants by aggregating the streaming data on specific intersections. Regarding this scenario, we have identified the following use cases and related challenges:

Object level: for a single vehicle or station, the average speed, acceleration, number of stops, as well as the temperature could be collected;

Road/Intersection level: on this level, besides calculating a summary of road/lane level indicators such as average throughput, waiting time, the amount of stops, also matrices regarding transfers (e.g. how many cars head straight on), modality, and type mix, (e.g. which vehicle classes are present) could be determined;

Network level: on the network level, intersections are represented by nodes connected by roads. We could collect statistical summaries of indicators on intersections. For instance, estimating the transfer times and traffic flow between intersections.

S2: Hazardous events detection An important C-ITS application is road safety [8], where a reliable event detection is central to find unexpected, hazardous events. This is a more challenging case, since it requires the combination of the topology, vehicle maneuvers, and temporal relations that might be evaluated over longer and shorter periods. We identified the following events as possibly hazardous:

Simple vehicle maneuvers: the following maneuvers are relevant for this case and are directly extractable from trajectories: (1) quick slow down/speed up; (2) drive straight on, turn left, turn right; (3) stop, unload, park;

Complex vehicle maneuvers: the aim is to detect lane changes, overtakes, and u-turns, which are complex maneuvers, composed of simpler maneuvers;

Red-light violation: in Example 2.2, a description of the detection of red-light violations is given;

Vehicle breakdown/accident: this event is based on the stop maneuvers, where we identify vehicles that are not moving and are inside a dangerous area of an intersection. This case can be extended to several vehicles;

Traffic congestions: this is a more complex event, where short and long term observations must be combined. Queuing cars could indicate a congestion and be detected by checking the stop maneuvers of several vehicles that are behind each other, but not stopped by a longer red light phase.

S3: ADAS ADAS features are an important step towards fully automated driving by enabling the vehicle to take control of speed or breaking, where drivers still have the “full” control over the vehicle. The following challenges come for ADAS:

Self monitoring: self-monitoring is a central requirement of ADAS, where intelligent speed adaptation is an important feature to improve roadway safety; Obstructed view: this concerns dangerous situations where a vehicle might collide with another vehicle, since they have no visual contact due to an obscured view (e.g., buildings). The crossing of the predicted trajectories of two vehicles has to be verified to provide a simple collision detection. Traffic rules: the embedding of traffic rules like checking of traffic rules such as right-of-way rules could become an important requirement for autonomous driving.

Features for spatial-stream QA

The eight “standard” requirements for stream processing identified by [78], namely volume, velocity, variety, incompleteness, noise, timely fashion, fine-grained information access, complex domain models, and user intention, as well as the three entailment levels identified by [31] for stream reasoning systems, namely stream-, window-, and graph-level entailment, are not discussed here; they should hold for C-ITS stream systems as well. Besides the generic features F1, F2, F3, and F9, we also focus on domain specific features that are mapped to requirements crucial for enabling the above scenarios. For this, we distinguish for each feature three levels of fulfillment: basic (L1), enhanced (L2), and advanced (L3). We have identified the following feature sets:

F1 – Time model: possible time models are point-based (L1), and interval-based (L2), where L1 is the “simplest” representation. Also belonging to L2, on point-based data, applying aggregations can be represented by intervals based on point-based data items. If we apply an interval-based model, temporal relations (L3) such as Allen’s Time Interval Algebra [6] with operators like before that can be used for querying and inference.

F2 – Process paradigm: queries that are processed in a pull-based (L1) manner should be the baseline. Push-based processing (L2) in particular with sliding windows is already more challenging. If we allow a combined (L3) processing, we could treat high velocity, resp. low velocity, atoms by push-based, resp. as pull-based queries.

F3 – Query features: we consider “basic” query features that are include in a standard query language such as SPARQL or SQL. These features are part of L1 and include selection, projection, join, and filter, as well as time- and triple-based window operators. Combining the basic features by query nesting, unions of CQs, and allowing inter-stream joins belongs to L2.

F4 – Numerical aggregations: aggregations can be “simple” functions such as sum or average on either a set or multiset (bag) of data items (L1). L2 extends L1 by statistical functions such applying the mean, median, or mode, as well as standard deviation, variance, and range. Note that the aggregations are mainly over multisets, since we often have data items of different objects in a single stream.

F5 – Spatial aggregations: a wide range of spatial aggregations can be applied to geometric objects like points and lines (L1) and the aggregation functions need to take the peculiarities of geometries into account, e.g., convex vs. concave objects. L2 adds the computation of spatial relations using a simple point-set model [44] or the more detailed 9-Intersection model (L2) based on the aggregated objects. Smoothing and simplification of complex objects could also be included,which leads to L3.

F6 – Numerical predictions: predictions allow the generation of unknown data items projecting from the past into the future. Several prediction functions such as moving average (L1) or exponential smoothing (L2) regression should be available. Depending on the task, also more complex machine learning methods could be envisioned (L3).

F7 – Trajectory predictions: we predict a vehicle’s movement, by linearly projecting the trajectory into the future (L1). More accurate results could be achieved by (1) a “point-to-curve” aggregation, and (2) calculating possible paths using a road graph (L2), and the usage of machine learning for trajectory predictions (L3).

F8 – Spatial matching: basic spatial matching is the extraction of specific features such as angles from the objects (L1). Advanced features include the matching of complex geometries such as road graphs (L2).

F9 – Advanced reasoning: include the different forms of reasoning ranging from rule-based to ontological reasoning that reaches beyond the expressivity of query answering in OWL 2 QL [52]. The underlying language can include “simple” implications as

Requirement matrix (level L1/L2/L3 implies required, blank is not required, P is possibly required)

Requirement matrix (level L1/L2/L3 implies required, blank is not required, P is possibly required)

In Table 1, we show the requirements that are derived by analyzing each scenario and use case with respect to the needed features. The requirements build the base line for the implementation and a later experimental assessment. In case of single features, we only distinguish between L1 to L3 for required, blank for not required, and “P” for possibly required. For instance, in S2.2 for F1, a point-based time model (L1) suffices for detecting left/right turns; however, if we want to detect u-turns, an interval-based time model in combination with temporal relations (L2) will be needed. Furthermore, push-based queries are desired for swift reaction on changes.

The requirements of advanced reasoning (F9) start with QA using OWL2 QL as the baseline. This allows one to access the streams and to enrich them with a domain model. However, in some use cases the expressive power of QA is too limited, since more ‘advanced” features are needed. For instance, if a use case requires to take the road network and reachability into account, OWL2 RL has the expressive power for capturing it. If a use case requires to model traffic rules and traffic regulations, one needs, besides reachability, also constraints and NAF (as provided by ASP). For instance, a warning has to be generated if two traffic lights of crossing lanes are green at the same time.

In our previous work of [36], we already cover level L1 for the features F1 to F5, but aim in this work to introduce new features such as time intervals, temporal relations, and unions of CQs. F6, F7, and F8 are entirely new features.

Expert interviews

We conducted four long interviews with ITS experts, who play different roles in the field. The first two experts work in industry and the other two experts come from academia. The interviews were conducted as guideline-based expert interview [19]. Following the guidelines of [19], we left the interviewee the freedom to choose the topic he/she prefers to discuss. However, we had prepared a set of questions, which we asked if the interviewee heads into the direction of a particular topic, e.g., the query language.

Importantly, guideline-based expert interviews are a standard practice of qualitative research in social sciences [60]. Maccoby et al. [56] define an interview as an “interchange in which one person attempts to elicit information or expressions of opinion or belief from another person or persons.” If complex topics are to be investigated, long interviews can be a better choice compared to other (quantitative) methods in filling a knowledge gap; they allow to obtain a more profound insight into the positions of the experts [61]. Other approaches such as questionnaire-based interviews are more appropriate if many interviewees are participating and a quantitative but not qualitative analysis has to be conducted.

Furthermore, we encountered limited availability of experts (for time and business reasons) that have also a good understanding of the methods and techniques surroundings.

The main goal of the interviews is to answer the following general question:

“How suitable is our spatial-stream QA for real-world ITS applications?”

The above question can be split into more specific parts:

Q1: “What are important technologies/developments for future ITS systems?”

Q2: “How well is the LDM suited for the integration of vehicle/traffic control sensor data such as V2X messages?”

Q3: “Is the presented ontology and the approach of ontology-based data access suitable to realise an LDM?”

Q4: “We present three scenarios with several use cases, how relevant are they? Are different use cases needed?”

Q5: “We present different query features, such as trajectory predictions, how important are these features in your opinion? Should they be extended?”

Q6: “We present you an example for our queries for detecting red-light violations; how well is this query comprehensible for you? Do you believe another query language is better suited for this?”

In the remainder of this section, we give a summary of the interviews that were conducted with each expert. We do not transcribe the full interview, which range from 30 minutes to 1.5 hours, but only present a summarized version of each interview. In the Conclusion, we shall come back to recommendations and suggestions from the experts consulted for future work.

Expert 1 The first expert is an initiators of the V2X technology development in Europe. He was responsible, in a large ITS company, for the standardization of V2X messages in different committees of standardization organizations such as ETSI, ISO, and SAE. Additionally, he was participating in several large research projects such as DRIVE C2X.3

First, the expert pointed out that there are not only CAM, SPaT, MAP, and DENM messages, but also messages designed for public transport signal requests called SRM/SSN, as well as position correction messages for autonomous vehicles. The latter are important since in inner-cities, the exact postion in combination with high-resolution maps are crucial for safety. An important task arising form this challenge is the continuous matching of the vehicle position to the high-resolution map. He stated that autonomous vehicles, even with advanced sensors, will in the near future not be able to drive autonomous and safely in inner-cities with complex intersections, in particular when the weather is unpredictable. He then said that messages like the CAM or DENM need to be send near real-time to the surroundings, hence the existing 4G and upcoming 5G mobile standards are not suitable for this purpose, and the standard IEEE 802.11p (also called ITS-G5) is better suited, since it is based on WLAN technology and already available for low latency (in ms) communication. However, existing WLAN technology can not be used, since these protocols require sessions and authentication with a base station, which would cause a long delay for fast moving vehicles. He gave more details on DENM messages, which inform the surrounding on dangerous events, and noted that DENM messages a fired based on triggering conditions that have specific probabilities assigned. The triggering conditions are defined by the standardization bodies, and vehicle manufacturer have to implement them accordingly. Furthermore, he highlighted that more development is needed to protect vulnerable road users, which requires the integration of bluetooth-based communication such as ZigBee, and also the sharing of data with infrastructure sensors such as mounted Lidar/Radar stations.

Second, we discussed the LDM, and he identified the LDM as an integration platform for the V2X messages, where each vehicle has its own proprietary representation of the LDM, since the ETSI standard defines only the interfaces to access it, but not its internal structure. He doubts though that a common definition of the LDM is needed, since every manufacturer should implement it on their own, where only the dynamic elements could be encoded as a snapshot (in a standardized data model) and exchanged with the surrounding. One discussed use case is the exchange of snapshots, where legacy vehicles and/or vulnerable road users are on the road, hence only fixed Lidar/Radar stations could detect them sending their snapshots to other V2X-based vehicles, since the quality of fixed Lidar/Radar stations should be more accurate than of the moving ones. Currently several companies develop Collective Perception Messages (CPMs) [70], which figure as an exchange of the mentioned Lidar/Radar data. A future development could foster the exchange of sensor data by vehicles using the CPMs.

Third, we discussed the usage of ontologies and OQA for realizing the LDM, which he regarded initially sceptical since scalability might be an issue. After explaining the intention of OQA this skepticism was in parts redressed. Regarding the modelling of the ontology, he stated that users do not care how the (ontological) model is shaped, more important is the query language, since users will directly be confronted with it. He identified that the query engine and the stream DB could be migrated and deployed on roadside ITS stations, where the user could cover custom use cases by writing and applying queries.

Fourth, he reviewed the query features, where he explained that an interval-based time model and push-based processing is favourable since more information can be extracted, and in C-ITS applications due to low latency push-based queries should be supported, which could include some buffering techniques so not every change will be computed. He pointed out that “native” query languages such as SQL might be too complicated for writing queries, and a simplified, object-oriented representation (similar to CQ) could be favourable. While discussing the example query, we observed that he understands and reads queries as rules. He noted also that, if the query get complex as in the second scenario, rules might be easier usable to express problems, since they are more capturing “the way people think”. He also stated that the aggregation feature can be also used to guarantee privacy, since on aggregated values single vehicles are not distinguishable anymore. Furthermore aggregations can be used to calculate journey times in a road network. Finally, he agreed that predictions are a crucial feature for accident prevention, hence it should be more elaborated.

Expert 2 The second interviewed expert is the head of a traffic engineering department in a large ITS company and is responsible for managing R&D projects.

The expert described that historically traffic management was a closed, autarkic system, where traffic data was collected in a slow process using radar, road loops, and cameras. She identified V2X as an important step towards collecting real-time data on traffic and vehicles, where besides the mentioned CAM, SPaT, and DENM messages, Collective Perception Messages (CPMs) will play an important role, since they allow one to exchange locally perceived objects by a vehicles sensors, and exchange the object data with other V2X vehicles. She believed that the LDM could be used in combination with CPMs, where a CPM could be aligned with a vehicle’s own LDM. However, she saw no immediate demand for the use of an ontology-based extension of a LDM and the use of spatial-stream queries to access the (streaming) data, since they use their own tools and languages for processing V2X messages. She commented though, that an ontology-enhanced LDM could be used as a data integration platform, which could be used for future data analytics tasks.

Expert 3 The interviewed expert is an associate professor in an European university, where he works in the fields where reasoning and learning over streams is applied to safe autonomous systems including robots, boats, and drones.

First, we discussed the LDM, and he noted that in robotics similar techniques have been used for long, but with the additional challenge that these dynamic maps have to be built on-the-fly and cooperatively. An interesting challenge arises if different agents have a local representation of an LDM, and for coordination a global perspective has to be constructed based upon them. He identified another important task in this context, which is the matching of identical entities (in the sense of a physical grounding of the same entity) detected by different agents/sensors, which they solved by using bridge-rules.

Second, we discussed the LDM ontology, where he identified that meta-data is important, and a possibility of capturing uncertainty should be part of an ontology. Uncertainty introduces the additional challenge that measured observations, e.g., speed, are not crisp anymore, but are inside confidence intervals were the observation holds. He also stated that by using an average or most-likely values instead of intervals, we encounter a loss of information. This uncertainty also can occur in the classification of objects, wherein an instance of a vehicle is detected, but it turns out to be a bike. After discussing our query rewriting technique, he described their approach in robotics, which aims at matching the data to the query (or formula), instead of rewriting queries and checking for instances in the DB. The data is processed/transformed until it matches the queries. If new streamed data appears, they continuously try to match the streamed data to the queries.

Third, he reviewed the query and could understand most under certain assumptions (i.e., that aggregations are grouped), and he believed that including predictions are an important extension, which they consider for their approach as well. Since predictions need an underlying model, he sees that it is important to re-evaluate and monitor the predictions to detect concept drifts. He also commented on the semantics of query language and windows, where it is important to define when to forget data in the stream, and how long a data item holds into the future. Regarding the language features, he noted that intervals are good way to also express temporal uncertainty. Further it would be crucial to respect the underlying time model, which could be dense continuous or discrete. If someone assumes the finite number of changes assumption, it is possible to map the observation from the continuous into the discrete space. Finally, he believed that numerical aggregations and predictions could be enriched by different types of filters, whereby he mentioned that Kalman or particle filters [80] are often used in robotics.

Expert 4 This interviewed expert is a senior researcher in the fields of stream reasoning, graph DBs, and autonomous driving in an European university, and has also been involved in the development of stream reasoning tools.

First, we discussed the LDM, which he saw as a suitable approach to integrate map and streaming data. He pointed out though that classical DB technology as B+ trees [29] are not suited for spatial-stream data, but advances in the field of moving object DBs [45] result in efficient query languages and spatio-temporal indexing methods. Furthermore, the LDM does not consider a 3D representation of maps, which is crucial if the map is used in the context of autonomous driving, since detected objects of a image recognition step (e.g., building), need to be anchored in a 3D map.

Second, he evaluated the LDM ontology, where he agreed on the modelling regarding map features, but criticized that the LDM ontology misses to concept of sensors, resp., observations, which is crucial in C-ITS applications. He noted that elements such as

Third, he reviewed the query language, and easily understood the presented query (for red-light violations). He noted that the definition of the ontology and spatial atoms are clear, but the stream atoms need a formal grammar to capture an atoms parameterization such as

Fourth, he criticized that the scenario S3 is too generic, and should be narrowed to more specific tasks in the domain of autonomous driving; he suggested to include motion planing as a replacement for the generic scenario.

First, we introduce the data model and the definition of a spatial-stream knowledge base, which leads to our main focus of spatial-stream queries. Then, we introduce the “standard” query rewriting and extend it with temporal relations. Finally, we describe how the queries are evaluated putting the focus on stream aggregation and predictions. We start from previous work in [36], which introduced spatial ontology-mediated query answering over Mobility Streams using

Data model and knowledge base

Our data model is point-based and captures the valid time, extracted from the V2X messages, saying that some data item is valid at that time point. Importantly, while evaluating a query, the model can change (temporary) to an interval-based model that results from the window and aggregation functions. To capture streaming data, we introduce the timeline

For the timeline

A “slower” stream

We consider a vocabulary of individual names

inclusion assertions of the form

functionality assertions of the form

membership assertions of the form

We extend

The extension with streaming consists of the following axiom schemes

A TBox may contain

Finally, a spatial-stream knowledge base is a tuple

Semantics Without a spatial-stream extension, we keep the given semantics of

First, we give a semantics to

Second, we give an initial streaming semantics by interpreting the streamed KB over the full timeline. The timeline is captured by a finite sequence

The semantics of the

Our query language is based on conjunctive queries (CQs) and adds spatial-stream capabilities (as shown in Example 2.2). A spatial-stream CQ each atom each atom each atom each atom

The “historic” window operator

Query rewriting by stream aggregation

For the evaluation of spatial-stream CQs, we have to extend OQA to handle spatial and streaming data, which is not considered in the standard approach as [26]. In detail, we aim at answering pull-based queries at a single time point

In the rest of this subsection, we give a detailed outline of evaluating EAQs following [36].

Epistemic aggregate queries (EAQs) Introduced by [27], EAQs are defined over bags of numeric and symbolic values, called groups, denoted as

We simplified EAQs of [27] by omitting ψ and consider only aggregates with a single variable.

A

Then, the set of epistemic

Lifting EAQs to streams Our approach is to evaluate the EAQs over filtered and merged temporal ABoxes, which are created according to the window operator and

In a stream setting, a current window with a past window with a future window with the entire history of a stream resulting in

We obtain KB

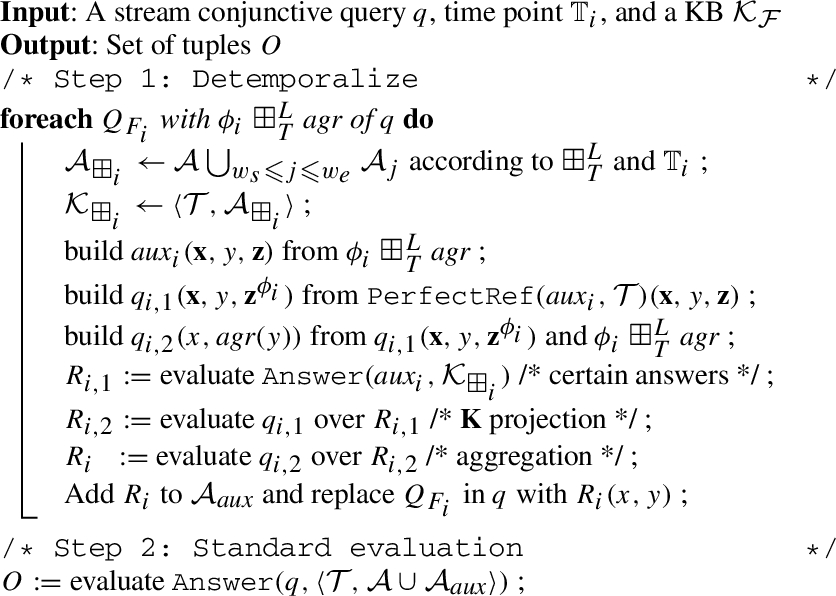

Naive algorithm Finally, we introduce the algorithm

calculate the epistemic answer for each stream atom over the different windowed ABoxes and store the result in an auxiliary ABox using fresh atoms. Furthermore, replace each stream atom with a new auxiliary atom;

calculate the certain answers over

We omit the details of different aggregate functions used in

At first sight, spatial and temporal relations could be treated similarly. As shown in [36], we are able to evaluate spatial relations regarding their Point-Set Topological Relations. This amounts to pure set theoretic operations on point sets using the function

Interpretation of IA relations IA relations can be interpreted over the sets of intervals

From timestamps to intervals Time intervals are not directly represented in our streamed data, but are an intermediate product of the EAQ evaluation and are used to annotate the resulting objects. After the evaluation of an EAQ, the results (also called answers) are aggregated data items, hence each single timestamp is not “meaningful” anymore; we need to assign time intervals to the answers, which are taken either from the window itself, or are extracted from the aggregated data items.

We already have introduced the function v that assigns to each element of

The function

More sophisticated approaches could include a segmentation of the data items, thus creating different fragmented sub-intervals.

Query evaluation by hypertree decomposition

The four types of query atoms need different evaluation techniques over separate DB entities. Ontology atoms are evaluated over the static ABox A using a “standard”

For temporal atoms, we consider three techniques, where the first is only suitable for time points and the others are suitable for time intervals:

Adding the filter conditions to the rewritten query for delimiting possible time points;

Rewriting each IA relation of

Full IA-based reasoning.

In the third technique, we would have to provide full IA reasoning. This includes the closure of the IA graph by applying the (predefined) transitive rules on all intervals derived from an EAQ. Then, the derived intervals with the annotated objects from the IA graph are extracted, which hold according to the queried relations in

In [36], we introduced two spatial query evaluation strategies assuming two restrictions:

no bounded variables occur in spatial atoms, and the CQ is acyclic.

Queries with bound variables in spatial atoms are unlikely to occur, since we introduced a separation between spatial objects and their spatial extension, where the former is shown in the results and the later is used for filtering. Acyclicity, roughly speaking, is given if a CQ has no proper cycle between join variables.

The main strategy is based on the decomposition of the query hypergraph and the derived join plan, is well-suited for implementing spatial-stream CQs, as it gives us fine-grained caching, full control over the evaluation, and possibly the handling of different DB entities. Details are given in the standard DB literature such as [58].

The main steps of our query evaluation strategy are as follows. First, we construct the acyclic hypergraph The following example is a simplified version of Hypergraph of

Then, we build the join tree

In this section, we address the computational cost of our spatial-stream QA approach, where we refer to the following complexity classes:

DL-Lite The DL-Lite family of languages such as DL-Lite

R

and

The languages above have combined complexity in

The motivation for our work was to extend

Spatial extensions Dealing with spatial queries, three aspects have to be considered regarding data complexity. First, we restrict the input to a finite (active) domain. This restriction is due to the structure of Boolean fan-in circuits, where each relation is encoded by input nodes concerning all domain elements. Finiteness is guaranteed, if we encode geometries as sets of points, which we call admissible geometries. The binary (spatial) relation between two admissible geometries can be defined according to pure set operations of these points, e.g., the

Stream relations As is with spatial data, the first concern regards the finiteness of the domain, since our timeline

Temporal relations Earlier in this section, we introduced two possible strategies for rewritting temporal IA relations of the form

Query structure and decompositions A large body of works have been dedicated to connecting hypergraphs, (acyclic) DB schemes, and join trees, an overview is given in [30,58]. For decomposing a query q, the query hypergraph

Note that there are different notions of acyclicity, i.e., α, β, and γ-acyclicity; we focus in this work on α-acyclicity, which can be efficiently tested by the GYO-reduction (cf. [30,58]). We say that a CQ is acyclic, if its hypergraph is α-acyclic. A specific join tree

Aggregations and predictions

In this section, we give more details on the aggregation/prediction functionalities, where normal objects, spatial objects, and constant values need different types of aggregate functions.

For normal objects and constant values, we allow the aggregate functions Order of items: Simple aggregations: Descriptive statistics (DS): Predictions: we apply predefined regression methods to predict values from existing (time-series) data items inside a window. Model building (i.e., the training) and prediction should be fast, hence we support the following lightweight methods: (a) (b) (c) (d)

Since the order of items is lost due to the bag semantics, the temporal annotations (e.g.,

For spatial objects, geometric aggregate functions are applied to the bag of data items applying the function obtaining a simplified geometry using smoothing, and calculating the angles between the lines of the simplified geometry;

For the trajectory computation, besides a simple linear, also a curvature-based model could be applied. To improve the accuracy of the model, we could use the speed of the last data points, so a speed-up or slow down would be taken into account. Since this extension needs further investigation, it will be considered for future work.

Optimization techniques

We outline three suitable optimization techniques for the query processing, namely by (a) optimizing the query rewriting itself with the aim of generating small rewritings and keeping the number of generated CQs low; (b) the caching of static sub-queries for faster re-evaluation of the full query on recurring evaluations; and (c) the parallelization of the stream sub-queries for improving the performance for streamed, no-cachable data. A fourth optimization techniques that focuses on improving the query decomposition with the aim of finding larger sub-queries for reducing in-memory joins is out of the scope of this work and needs further investigation.

Query rewriting Optimizations for DL-Lite query rewriting is a well studied topic. As recognized in [66], the original PerfectRef algorithm suffers from producing large rewrittings, since an exhaustive factorization to guarantee completeness, leads to unnecessarily large unions of CQ. Significant improvements regarding PerfectRef were presented with REQUIEM [66], where the factorization was replaced with functional terms, and in Presto [75] were unnecessary existential joins are eliminated by computing the most-general subsumees and producing a non-recursive Datalog program instead of an union of CQ as the rewriting. Finally, the most recent optimization technique, called tree-witness-based rewriting [49], which boils down to introducing fresh constant symbols (nulls) for non-ABox elements of the canonical model to allow for matches of the query in the extended (canonical) model. The tree-witness-based rewriting was implemented in [74], where also additional optimization techniques such as pushing joins into unions, eliminating sub-queries, and self-join elimination were added. Our approach is based on the PerfectRef algorithm, which is implemented with minor optimizations in the query rewriter of

structure of the ontology;

structure of the query; and

number of existentally-quantified query atoms.

Caching For RDBMS, several approaches for caching were developed over the years. Traditionally, caching between client- and server systems (with a DB present) was page-based (i.e., groups of related tuples), where full pages are cached on the client and requested from the server if missing. Tuple-based caching introduces a smaller data granularity, where individual tuples are cached on the client. Both caching approaches can implement different cache replacement policies such as First in First Out (FIFO), Least Recently Used (LRU), or Most Recently Used (MRU) [58]. More sophisticated caching approaches, called “semantic caching”, introduce “semantic spaces” used for caching, which allow a segmentation of the domain space of the attributes of a (DB) relation [71].

System architecture.

Our approach for caching the sub-queries relates to “semantic caching”. However, the segmentation of the attributes/tuples is given (a) by the separation between static and stream query atoms, and (b) by the structure of the TBox, i.e., the concept/role hierarchies as well as the domain range of roles of a query atom. Note that our segmentation does not consider spatial data, which also could be used for caching using the Euclidean distance for segmentation. For a cache-based optimization, we define the following parameters:

caching strategies as mentioned above;

cache size and location, i.e., in-memory caching or integrated caching as used by

cache invalidation based on the stream update frequency;

number and size of static/spatial sub-queries; and

ratio between static/spatial and streamed sub-queries.

Query parallelization Similar to caching techniques, parallel query execution is a standard RDBMS query evaluation technique. We focus in our work on intra-query parallelism and do not cover inter-query parallelism, since we restrict ourselves to execute a single query at the time for evaluating our approach. Intra-query parallelism can be broken down to inter-operator and intra-operator parallelism, where we omit intra-operator executions, which would allow for the execution of multiple processes of a single operator such as merge-join [43]. Inter-operator execution of different operators is, besides caching, the central optimization method for our evalution of queries. Inter-operator execution can be implemented by vertical, i.e., pipeline-based execution of the operators, or by horizontal (also called bushy) execution. The horizontal, inter-operator execution fits well to our hypertree-based approach, since it can be used for a horizontal separation into sub-queries if there are no dependencies, i.e., hyper-edges, between them. Note that a sub-query in our case is always a set of operators, hence we separate sets and not single operators. Due to the labelling of hyper-edges by type (i.e., streamed, static, or spatial), we can identify the sub-queries that contain stream atoms, and execute them in-parallel. Upon completion, the results can be propagated along the join tree, as long as the other branches of the join tree are already executed. For an optimization by parallelization, we define the following parameters:

number and size of stream sub-queries;

ratio between static/spatial and streamed sub-queries; and

shape of join tree, i.e., the depth or the average or maximum branching factor of a join tree.

We have implemented a prototype of our spatial-stream OQA approach in

The query parser and decomposer component is used for parsing the input spatial-stream CQ, and then decomposing the query hypertree using Gottlob et al.’s [32] implementation.9

The standard query evaluator performs the DL-Lite

A

query rewriting using

The stream aggregator detemporalizes for each stream sub-CQ

extracting the data items according to the defined window size;

evaluating

evaluating

applying the prediction function on

applying the grouping/aggregation function on

The stream predictor is integrated in the stream aggregator, wherein predictions are applied on the collected data items after they are grouped. We provide standard implementations for the functions

The spatial evaluator evaluates the different spatial relations based on the grouped/aggregated data items. For performance reasons, we do not compile the spatial relation to SQL, but evaluate them in-memory using the functions of the

The temporal evaluator supports the mentioned IA filtering technique, since temporal relations can be directly rewritten into SQL by encoding the relations as joins, where each relation is encoded as a filter on the start/end points of the aggregated data items. The second technique, IA reasoning, is planned for future work.

Note that we designed the prediction function as an integrated part of the stream evaluator. However, predictions could also be treated as external data streams, generating data items continuously. This would be an appealing extension, but would change the query evaluation process and needs further investigation. The same considerations would apply to the trajectory predictions. The aggregate function

Optimizations In Section 5.8, we outlined suitable optimization techniques, where the techniques regarding query rewriting come at no cost, since it is part of the rewriter component. For instance, the query rewriter of

We have implemented an initial caching technique, which is based on the separation of static/spatial and streamed sub-queries. Our implementation applies in-memory storage and mixes a LRU policy for the static sub-queries and a FIFO policy for streamed sub-queries, where data streams naturally capture a FIFO policy.

As already mentioned before, our approach favours a vertical and inter-operator parallelism, since our hypertree-based query decomposition results in a join tree, which allows us to extract stream sub-queries that are independent of each other. After the query is parsed, the query evaluator collects all independent stream sub-queries, execute them in-parallel on

We evaluated our platform regarding the requirements/features (cf. Table 1) derived from the use cases. The requirements are encoded into a set of queries that include the introduces features of Section 3.2. The lightweight LDM ontology, queries, the experimental setup, log files, and collected results, as well as the implementation are available on the evaluation website.12

Four intersection scenario.

For having realistic traffic data, we generated our streaming data with the microscopic traffic simulation tool

intersections, roads, lanes, signal groups, and vehicles as concept assertions;

geometries for each lane, road, etc. as attribute assertions; and

lane connectivity, signal group assignments, etc. as role assertions.

Based on the requirements, we derived a set of queries to assess each scenario, where each query aims at answering a specific problem of the use case taking the set of features into account. We use a more compact representation, where the commas between atoms are conjunctions and disjunctions are explicitly declared using the

For the use case S1.1 (object statistics), query

For the use case S2.1 (simple maneuvers), query

For the use case S3.1 (self monitoring), we aim to detect with

In S3.3 (traffic rules), our ego vehicle approaches an uncontrolled intersection at the same time with other vehicles. According to (local) traffic regulations, preference (shown Fig. 6) is given to (1) the vehicle on the main road, (2) the one that is not changing lanes, and (3) the vehicle approaching from the right. Vehicle C has preference over A and B, where B would have preference over A, but the preference can not be given, since A is on a single-lane road. We can express the traffic regulations with the following Datalog rules:

Use case traffic rules.

The atom

Results (t in secs) for scenario with (l)ow, (m)edium, and (h)eavy traffic, where Y means results with caching and parallelization, and N means results non-optimizations; d (%) is the average of deviations between optimized/non-optimized runs

We conducted our experiments on a Mac OS X 10.14.4 system with an Intel Core i7 2.9 GHz, 8 GB of RAM, and a 250 GB SSD. The average of 21 runs for query rewriting time and evaluation time was calculated. The results are shown in Table 2, where the average evaluation time (AET) t is measured in seconds for three traffic densities and four update delays in ms. The columns represent the number of sub-queries

The new experiments confirm results of [36] with closer to “real-world” queries and simulation data. The AET ranges between 0.86 s and 2.06 s with the exception of use case S3.3, which emulates rules using unions of CQs. Query

The added functions for statistics, matching, and predictions, i.e.,

Discussion on optimizations A second set of experiments highlights the effect of the optimizations based on caching and sub-query parallelization. The results are shown in the second row of each query in Table 2. The optimizations improve the orginal AET ranging between 0.49 s and 1.05 s, to the new results with a minimal AET of 0.37 s (in

The performance gain in AET is shown in percentage terms for each traffic density in the columns 9, 14, 19, and 20, which includes the calculated average of the other three columns. We observe that the gain for each query is almost uniform over the traffic densities, and the variation is between the queries (shown in column 20), and smaller between the four delays (shown in row 23). Regarding the results in column 20, the queries

the rewriting of the ontology of sub-queries into views is only done in the initial run, since afterwards the results are taken from the cache;

the artificial delay of 150 ms for evaluating consecutive stream sub-queries is dropped, since this evaluation is newly conducted in parallel.

Note that query

Feature coverage

As shown with the queries, we covered in the implementation all initial levels (L1) of features that are defined in the scenarios/uses cases. We support temporal relations based on a (partial) interval-based data model (F1) that are evaluated by pull-based queries (F2). Then, we allow temporal relations and nested queries that include unions of CQs (F3). However, we have not yet implemented the IA reasoning for temporal relations, since an in-memory evaluation of the transitive rules completing the IA graph needs further investigation. Regarding F4 and F5, we have implemented the initial set of numerical, descriptive statistical, and spatial aggregation functions. For F6, we covered

Summary of expert evaluation

Overall, the experts confirmed that (a) the extension to time intervals and temporal relations, and (b) the inclusion of prediction capabilities are important extensions of the initial spatial-stream OQA approach. However, it turned out that the experts identified several limitations and interesting extensions.

The first limitation regards the LDM ontology, which should be aligned with the SSN ontology [46] to include patterns such as

The first extension regards Collective Perception Messages [70], which could be added as a new type of message used to share local sensor data. The second extension addresses Scenario 3, which could be extended with use cases that are taken from motion planing tasks in autonomous driving. The third extension identifies Kalman filters [80] and top-k aggregates [47] as more powerful aggregation functions. The fourth extension is likely the furthest reaching, since the ontology and query language (with the underlying semantics) could support uncertainty on the level of (a) data items and (b) of TBox assertions, e.g., inclusion assertions. The fifth extensions describes the handling of data items inside windows, where adaptably forgetting data items and extending their validity into future could be added to have a more flexible query language. The last extension is again more generic and captures the use of LDMs in an autonomous agent-based scenario, which would lead to the challenge of aligning different LDMs to a global dynamic map.

Related work and system comparison

First, we give a general overview on related work, and will discuss in-depth related systems in the sections afterwards, where we provide an qualitative and quantitive comparison with selected systems.

Overview

In a more formal setting, stream processing started with Data stream management systems (DSMSs) such as STREAM [11], Aurora [28], and TelegraphCQ [57]. Notably in STREAM, the authors introduced the Continuous Query Language (CQL) [11], which provides an explicit operational semantics based on stream-to-relation, relation-to-relation, and relation-to-stream operators. Furthermore, three kinds of window operators, namely partition-based, time-based, and tuple-based windows were applied in CQL.

With the need for more complex domain models, such as provided by background KBs, streams were lifted to a “semantic” level, leading to the development of RDF stream processing engines, such as Streaming SPARQL [20], C-SPARQL [14], SPARQLstream [24], and CQELS [55]. These systems proposed processing of RDF streams integrated with other Linked Data sources and background KBs. The C-SPARQL framework also has been extended to support deductive and inductive reasoning [13]. A system for efficient spatio-temporal query execution was proposed in [69], which supports spatial operators as well as aggregate functions over temporal features. EP-SPARQL [9] and LARS [17] propose languages that extend SPARQL respectively CQs with stream reasoning, but translate KBs into more expressive and less efficient logic programs. Regarding spatial RDF stream processing, a few SPARQL extensions were proposed, such as SPARQL-ST [67] and st-SPARQL [54].

In [12],

Qualitative comparison of F1–F9 on selected systems, where ∗ indicates comments in the system descriptions; Ext, Pre, and Lmt means evaluation by using of external atoms, a preprocessing step needed, and with limited coverage

Qualitative comparison of F1–F9 on selected systems, where ∗ indicates comments in the system descriptions; Ext, Pre, and Lmt means evaluation by using of external atoms, a preprocessing step needed, and with limited coverage

There is a wide range of systems for stream processing, stream reasoning, and event detection available. For a comparison with our approach, we focus on systems that fulfill the following criteria: (1) ability to handle streaming and/or temporal data, either by having a window operator, or supporting incremental updates; (2) ability to provide reasoning, either based on ontology-, rule-, or query rewriting-based methods; and (3) a maintained implementation of the system has to be available.

As commented in Section 3, we will not re-evaluate the eight “standard” requirements of [78], and the three entailment levels for stream reasoning systems of [31]. Still, this work overlaps on some features with the comprehensive study of Margara et al. [59], where the authors compare systems according to the criteria:

continuous queries (F2/F3), background data (F9), time model (F1), reasoning (F9), and temporal operators (F1).

The other criteria in [59], namely data transformation, uncertainty management, historical data, quality of service, and parallel/distributed processing are not investigated in the context of this work.

The comparison in Table 3 shows the evaluation of the selected systems on the generic features F1 (Time model), F2 (Process paradigm), F3 (Query features), and F9 (Advanced reasoning), as well as the ITS domain specific features F4 (Numerical aggregations), F5 (Spatial aggregations), F6 (Numerical predictions), F7 (Trajectory predictions), and F8 (Spatial matching). We separate the table into two groups, where the first group represents query-based systems, and the second group rule-based systems. In the following, we give details on the systems that are compared.

C-SPARQL

C-SPARQL was introduced by Della Valle et al. [14] and was one of the first contributions in the area of stream processing with reasoning extensions. The main intention of this work lies in bridging the gap between the world of stream processing systems, i.e., stream RDBMS, and the Semantic Web taking RDF and SPARQL into account. C-SPARQL includes (a) a language for continuous queries over streams of RDF data, (b) an evaluation engine for this language, whereby C-SPARQL has the distinguishing features of (i) supporting timestamped RDF triples, (ii) supporting continuous queries over streams, and (iii) defining ad-hoc, explicit operators for data aggregation. The results of C-SPARQL queries are continuously updated as new data items appear on the stream, hence an efficient evaluation of sliding windows is possible.

CQELS

Similar to C-SPARQL, CQELS [55] also offers a query language and processing engine for answering queries over a combination of static and stream RDF data. While C-SPARQL adopts a “black box” approach, i.e. static and stream query elements are first divided and sent to the respective underlying stream and RDF query engines, CQELS, on the other hand, takes a “white box” approach by natively implementing the required query operators for the RDF-based data model, both for streams and static data. This native approach enables better performance and can dynamically adapt to changes in the input data. Another difference to C-SPARQL is that CQELS takes an eager query execution strategy: input data is processed as soon as it arrives in the system, contrary to the periodic evaluation of C-SPARQL which is triggered periodically, regardless of the input data throughput.

ETALIS with EP-SPARQL

The ETALIS system [9,10] was applied to the ITS domain and offers the combination of Datalog-style rules with a background KB. In ETALIS a Prolog-based language is used to express complex event patterns with predicates like

INSTANS

INSTANS [73] is an event processing engine based on handling multiple interconnected SPARQL queries with updates. It supports continuous evaluation of incoming RDF data using an encoding of SPARQL queries into Rete-like structures. INSTANS supports stateless/stateful filters using its internal memory, enrichment, (de-)composition, aggregation and pattern matching on the streamed events. The authors have implemented their approach, where they provide a conversion of the SPARQL queries to Rete rules, which then are evaluated on the Rete [38] rule engine

SPARQLstream/Morph-streams

SPARQLstream [24] extends standards SPARQL with time windows over streams similar to C-SPARQL, but adds the relation-to-stream operator for dealing with relational streams. SPARQLstream was further extended to Morph-streams [25], where OBDA techniques are applied to access the underlying streams, which then are stored in a stream processing system (SPS). R2RML mappings are used to create virtual streams on-the-fly, which can be accessed in their SPARQL extension. The authors implemented and tested their query rewriting techniques for different SPS, namely for SNEE, Esper, and Global Sensor Networks.

Clingo with multi-shot ASP

Multi-shot Answer Set Programming (ASP) is an extension of existing ASP solving techniques, which deals in a reactive fashion if new information arrives at the logic program, instead of solving the program from scratch. Clingo 4 [40] supports natively multi-shot solving by offering high-level constructs and control capacities via the scripting languages Lua and Python. The authors introduced the

LARS with ticker/laser

The LARS framework [16] is a recent development in stream reasoning that considers lifting ASP to streams with generic windows for capturing data snapshots with the aim of generalizing time-, tuple- and partition-based window functions. To this end, the ASP syntax is extended with window operators and with temporal operators for evaluating truth at every, some, and a specific (exact) time point in a stream; variables ranging over domain constants or time points are allowed in rules. Semantically, answer sets of ASP programs are naturally generalized to answer streams of LARS programs. Fragments of LARS programs have been implemented in Ticker [18] and Laser [15], based on plain LARS rules.

In Ticker,14

Laser15

The STARQL framework of Özçep et al. [64] is an effort of streamifying ODBA by introducing an extensible query language, hence it is closely related to our approach. It uses a first-order logic fragment for temporal reasoning over sequences of ABoxes. The framework extends the first-order query rewriting of DL-Lite with intra-ABox reasoning. In a second extension of ONTOP, the authors added Metric Temporal Logic (MTL) to allow querying of log data using LTL operators that are extended with time intervals [22,23]. For this purpose, they introduced datalogMTL, which combines non-recursive Datalog with the MTL operators.

C-SPARQL encoding (left) and CQELS encoding (right) of query

RDFox [62] is the combination of a scalable main-memory RDF store that supports materialisation and parallel Datalog reasoning, which also includes SPARQL query answering. The Datalog materialisation is based on a novel parallel reasoning algorithm extending the well-known DRed algorithm, computing incremental updates very efficiently on its internal triple store and thus is well suited to handle data streams.

TrOWL

TrOWL [72] is an incremental DL reasoner over the expressive OWL2 DL language. It handles streams of KBs instead of using fixed time windows over streams, hence allowing to add and remove axioms from the KB on-the-fly. The authors applied syntactic approximation to reduce the reasoning complexity, which guarantees soundness but looses completeness in certain cases. TrOWL provides justifications for the following entailments: atomic concept subsumption, atomic class assertion and atomic object property assertion.

Feature comparison

Based on the literature review and discussions with the authors of the systems, we conducted a feature evaluation of the above systems. In Table 3, we summarized the reviews and discussions, where we use the underlying specifications for the three levels of fulfillment: basic, enhanced, and advanced. For F1, the basis level matches to a point-based time model, the enhanced level would relate to an interval-based model, and the advanced level would include AIA over an interval-based model. For F2, the basic level includes pull-based, the enhanced level push-based queries, and the advanced level the combination of pull- and push-based queries. In F3 and F9, we list the query, rule, or ontology language and the possibility to allow windows, but do not classify the fulfillment level. For F4 to F8, we evaluate how a specific feature is covered by the basic fulfillment levels, since most systems are generic reasoners, and are not intended to support ITS-specific features.

As shown in Table 3, for F1 the ONTOP- and LARS-based systems offer similar or richer query- and ontology languages, since these systems allow the use of LTL and MTL operators. For F2, our work is on a par with most presented systems, except the two push-based systems CQELS and INSTANS, which support a higher reactiveness, since they support an eager query execution strategy. For F4 and F5, our work covers the widest range of numerical aggregation and prediction functions, with the exception of STARQL, which covers similar functionalities. One of the motivation of this work are the coverage of F6 to F8; hence our work is the only approach that supports spatial aggregations and predictions.

Performance comparison

We conducted our experiments again on a Mac OS X 10.14.4 system with an Intel Core i7 2.9 GHz, 8 GB of RAM, and a 250 GB SSD. We calculated the average of 50 runs for query evaluation time with warm starts, hence, we did not restart the systems over 50 runs in each experiment.

For the experiments, we compared our prototype on two selected queries with the state-of-the-art systems C-SPARQL and CQELS, which support limit reasoning but are designed to deal with high velocity and volume streams. The comparison of all presented queries is not feasible, since C-SPARQL and CQELS do not support natively the features spatial/temporal relations, spatial aggregation, and inline predictions. Hence, we selected the two queries

Results (t in secs) for query

and

with the scenarios (l)ow, (m)edium, and (h)eavy traffic

Results (t in secs) for query

The results of our experiments are shown in Table 4 where t is the AET in seconds for different traffic densities and update delays in ms. The baseline systems C-SPARQL and CQELS outperform our prototype in the range between 70 ms in

After profiling the runtime of our prototype, we noticed that approximately 200 ms are lost by establishing a connecting to

This work was sparked by the need of applying spatial-stream query answering as an effort to integrate and access streamed mobility data, e.g., vehicle movements, in a spatial context over the complex C-ITS domain. In [36] we have introduced simple aggregate queries over streams, which often do not suffice to capture more complex C-ITS use cases. In this paper, we presented an extension with temporal relations and numerical/trajectory predictions, which allows us to query complex mobility patterns such as traffic statistics, or complex events such as detecting (potential) accidents. Based on the newly developed scenarios of traffic statistics, event detection, and advanced driving assistance systems (ADAS), we have defined a set of domain-specific features such as trajectory computation, which are matched with the scenarios/use cases to define key requirements. Given the new features, we extended the LDM ontology, the spatial-stream query language, and extended the methods used for query answering accordingly. We also redesigned the architecture and optimized the system by pre-compiling the static query elements and executing stream atoms in parallel. The experimental evaluation provides evidence for an improved performance of approx. 40% and an evaluation time below 700 ms. This indicates that potentially the feasibility and efficiency of our approach in the mentioned scenarios is given.

Lessons learned The presented approach of spatial-stream query answering is well-suited for data integration and query answering in the C-ITS domain. The concept of a LDM was a good starting point, since it has been developed and standardized by the C-ITS community and was already extended by Netten et al. to an ontology-like model [63]. In particular, Semantic Web Technologies play to their strengths in easily modelling a complex domain such as C-ITS, and allowing the (expert) user to formulate powerful queries on top of the streams that are integrated by the ontology. This can be seen by the new scenario ADAS, where small modifications of the ontology and new queries open up a new application field. However, our approach of using OQA revealed some limitations that are discussed in the expert summary.

Using spatial-stream CQs for capturing the scenarios worked out to our satisfaction in most of the use cases. But as illustrated with use cases S2.4, S2.5, and S3.3, our language reached its limits regarding “usability” and also “expressivity”. If we have a larger set of rules as in S3.3 (even without transitivity), the conversion to unions of CQs becomes cumbersome and inefficient (AET is between 2.46 s and 3.34 s), hence rule engines such as in Ticker [18] or Laser [15] might be better suited. In S1.3 and S2.5, we can see that qualitative temporal relations like

Furthermore, the usage of CQs with sub-queries can be directly transferred to SPARQL queries; the underlying evaluation system would have to be adjusted to deal with the mobility-specific features.

Outlook We believe that stream processing/reasoning methods could well be applied to the mentioned C-ITS scenarios, which was confirmed by the experts; they also acknowledged that the new features such as time intervals with temporal relations and prediction capabilities are important extensions of the initial spatial-stream OQA approach.

Nevertheless, the experts identified practical and theoretical extensions that should be addressed in future research. On the practical side, they suggested that the LDM ontology could be (and has been) combined with the SSN ontology [46], where Collective Perception Messages [70] could be used to integrate local sensor data of other vehicles. This could be further elaborated such that the different LDMs (of each vehicle) could be aligned to a single global dynamic map. Furthermore, the experts suggested that we could integrate Kalman filters [80] and top-k aggregates [47] to provide more powerful aggregates. On the theoretical side, our methods could be extended to capture uncertainty on the level of data items and also of TBox assertions, which would lead to a change in the underlying semantics and the computational properties. Furthermore, our methods could be extended to handle windows in a more flexible manner by flexibly forgetting data items or extending their validity into the future. Finally, one expert suggested that the focus of Scenario 3 could more on motion planning instead of ADAS.

In addition to the expert suggestions, we believe that efforts on following issues would be beneficial: (a) allowing the integration of external (domain-specific) modules such as functions for advanced trajectory prediction, which would be similar to external atoms in ASP [33]; (b) the possibility of analytic queries over longer periods, hence an extension with a transient cache and variable window sizes would be needed; (c) the full integration of OQA with IA relations, which would include the representation of IA networks and the rewriting of subsets of the composition table; and (d) handling more complex queries and rules while still maintaining scalability, which would bring our approach closer to Ticker [18] and Laser [15].

As discussed in the section above, ongoing and future research should be directed to extend the languages, methods, and the platform to fulfill the defined requirements, which will enable us to apply our approach and prototype to more complex scenarios such as advanced traffic monitoring and motion planing.

Footnotes

Acknowledgements

This work has been supported by the Austrian Research Promotion Agency project LocTraffLog (FFG 5886550) and DynaCon (FFG 861263), as well as by the European Commission through IoTCrawler (H2020 contract 779852).