Abstract

DBpedia is a large-scale and multilingual knowledge base generated by extracting structured data from Wikipedia. There have been several attempts to use DBpedia to generate questions for trivia games, but these initiatives have not succeeded to produce large, varied, and entertaining question sets. Moreover, latency is too high for an interactive game if questions are created by submitting live queries to the public DBpedia endpoint. These limitations are addressed in Clover Quiz, a turn-based multiplayer trivia game for Android devices with more than 200K multiple choice questions (in English and Spanish) about different domains generated out of DBpedia. Questions are created off-line through a data extraction pipeline and a versatile template-based mechanism. A back-end server manages the question set and the associated images, while a mobile app has been developed and released in Google Play. The game is available free of charge and has been downloaded by more than 5K users since the game was released in March 2017. Players have answered more than 614K questions and the overall rating of the game is 4.3 out of 5.0. Therefore, Clover Quiz demonstrates the advantages of semantic technologies for collecting data and automating the generation of multiple choice questions in a scalable way.

Introduction

Wikipedia is the most widely used encyclopedia and the result of a truly collaborative content edition process.1

DBpedia is the prototypical cross-domain dataset in the Web of Data [12, ch. 3] and is employed for many purposes such as entity disambiguation in natural language processing [16]. An appealing application case is the generation of questions for trivia games from DBpedia. Some preliminary attempts can be found in the literature [5,14,20,21,29], but these initiatives have fallen short due to simple question generation schemes that are not able to produce varied, large, and entertaining questions. Specifically, supported question types are rather limited, reported sizes of the generated question sets are relative low (in the range of thousands), and no user base seems to exist. Moreover, some of these works create the questions by submitting live queries to the public DBpedia endpoint, hence latency is too high for an interactive trivia game, as reported in [20].

The hypothesis of this work is that a semi-automatic approach can produce varied, numerous, and high-quality questions from DBpedia. In a first stage, a human editor can guide the extraction of data by specifying the classes, literals and relations between classes of interest for a particular domain. In a second stage, the generation of questions is driven by a flexible template authoring process, allowing high control in the selection of the entity sets and ample variety in the formulation of question types. Importantly, the produced questions are production-ready, without requiring any kind of post-processing for readability or presentation purposes. The generated questions can then be hosted in a back-end server that meets the latency requirements of an interactive trivia game. The target case is Clover Quiz, a turn-based multiplayer trivia game for Android devices in which two players compete over a clover-shaped board by answering multiple choice questions from different domains. This paper presents the outcomes of this project, including the mobile app and actual usage information of the players that have downloaded the game through Google Play.

The rest of the paper is organized as follows: Section 2 presents the game concept of Clover Quiz. Section 3 describes the data extraction pipeline, while Section 4 explains the question generation process. The design of the back-end server and the mobile app is addressed in Section 5. Section 6 deals with the actual usage of Clover Quiz, including user feedback and latency measures. Next, Section 7 draws some lessons learned. The paper ends with a discussion and future work lines in Section 8.

Clover Quiz is conceived as a turn-based multiplayer trivia game for Android devices. In an online match, two players compete over a clover-shaped board. Each player has to obtain the eight wedges in the board by answering questions on different domains. The player with the floor can choose any of the remaining wedges and then respond to a question on the corresponding domain. If the answer is correct, the player gets the wedge and can continue playing, but if it is incorrect, the floor goes to the opponent. Once a player obtains the eight wedges, there is a duel in which each player has to answer the same five questions in a row. The match is over if the player with the eight wedges wins the duel. In other case, this player loses all the wedges and the match continues until there is a duel winner with the eight wedges.

The target audience of Clover Quiz corresponds to casual game players with an Android phone, in the age range of 18–54, high school/university level education, and Spanish- or English-speaking. Importantly, target users are not supposed to know anything about the Semantic Web and do not require a background on Computer Science or Information Technology. Since the game is purposed for mobile devices, user typing should be limited as much as possible. For this reason, Clover Quiz employs multiple choice questions with four options; note that this is also the solution adopted by other mobile trivia games like QuizUp4

Clover Quiz includes questions from the following domains: Animals, Arts, Books, Cinema, Geography, Music, and Technology – all of them have a good coverage in DBpedia [17] and are arguably of interest to the general public. About the generation of questions, an important design decision is whether to prepare the questions beforehand or to submit live queries to DBpedia. The latter option was discarded due to the complexity of the question generation process and to the stringent requirements of interactive applications (like Clover Quiz) that cannot be met by the public SPARQL endpoint over the DBpedia dataset – queries to the public DBpedia endpoint can easily take several seconds and periods of unavailability are relatively common, according to the tests carried out in the inception phase of the game. Instead, the question set of Clover Quiz is generated in advance and deployed in a back-end server. This architecture corresponds to the crawling pattern employed in some Semantic Web applications [12, ch. 6].

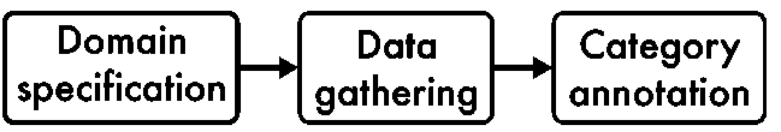

The goal of the extraction phase is to gather the data of interest from a knowledge source, e.g. DBpedia, and produce a consolidated dataset that can be easily exploited to generate multiple choice questions. This is accomplished through a series of steps that are graphically depicted in Fig. 1. The question set will then be produced programmatically through and off-line process; for this reason the proposed workflow is supported with a collection of scripts coded in Javascript, while all generated input and output files are in JSON format [6]. In the first stage, a human editor prepares a

The specification file includes the classes to gather entities in a domain of interest, e.g.

Overview of the data extraction process.

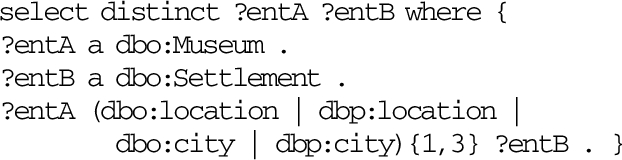

SPARQL query for retrieving the entities of the

A domain specification file also identifies the literals to be extracted for the entities of a target class – like labels, years, or image URLs – by providing the corresponding datatype properties used in DBpedia. In addition, relations between entities of different classes are also defined; simple cases just involve an object property, e.g.

SPARQL query for retrieving the cities where museums are located

JSON object generated in the data gathering stage for a sample entity

In the

Summary of the data extraction process for the different domains

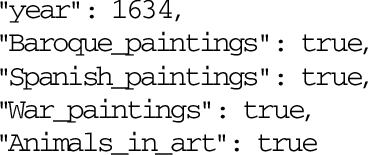

The snippet in Listing 3 includes the URI of the entity, the collected literal values, the

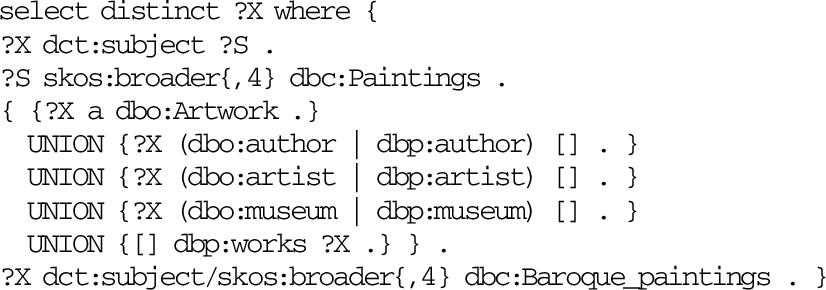

SPARQL query for retrieving

This query is based on the one in Listing 1 (corresponding to the

Annotations added to the JSON object in Listing 3 after the data annotation stage

Table 1 gives some figures about the number of classes specified, the number of entities extracted from DBpedia, and the size of the annotated data files for each domain in Clover Quiz.

A multiple choice question consists of a

The question generator supports different template types in order to allow the creation of varied questions; Table 2 describes the nine template types with illustrating examples. The first seven template types only involve entities from a class:

Supported question templates

Supported question templates

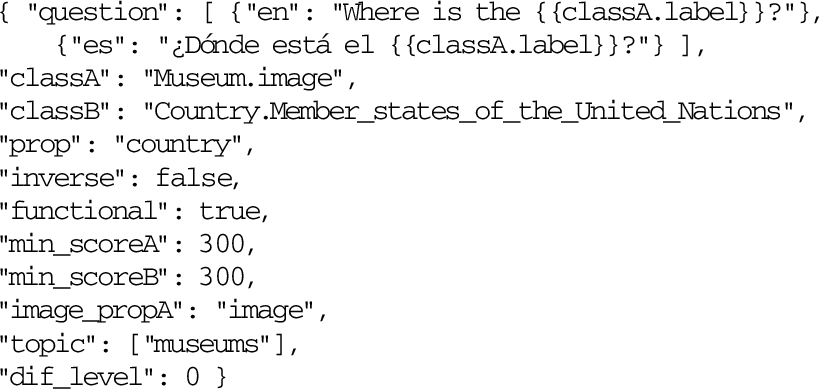

A question template is just a JSON object with a set of key-values, e.g. Listing 6. Each template includes its own multilingual stem template (see the

Example of an

When the template in Listing 6 is evaluated, the question generator first obtains the set of paintings that comply with the requirements, e.g. “The Surrender of Breda”. It will then generate a question for each occurrence by getting the image URL (to support the question) and the label of the painting (this will be the correct answer). Finally, the script will prepare three lists of distractors that correspond to distinct difficulty levels. This is performed by taking a random sample of 50 paintings in the same set, estimating the similarity of each element to the correct answer, discarding the less similar distractors, and finally preparing the three lists. Note that a question is more difficult if the distractors are closer to the correct answer [3], so similarity is computed with a measure based on Jaccard’s coefficient [15] that is defined for every class by providing the array keys, e.g.

Sample questions from the Arts domain obtained with the mobile app of Clover Quiz. The distractors correspond to the “easy list” – this is especially evident in the second example that includes countries quite dissimilar to France, i.e. non-members of the EU, in different continents, non-French speaking, and so on.

Example of a

Sample questions from the Music, Books, Animals and Technology domains obtained with the mobile app of Clover Quiz.

All template types have a similar structure, although double class templates are slightly different. Listing 7 shows the template employed to generate the question in Fig. 2 (right). This template involves two classes (

Summary of the question generation process for the different domains. There are significantly more English questions in Arts and Books because Spanish labels were missing in many DBpedia entities in these domains

System architecture of Clover Quiz.

After creating the questions associated to a template, the script computes an estimator of the questions’ difficulty. It relies on the popularity of the involved entities (see

Table 3 presents some aggregated figures of the question set generated for Clover Quiz. The overall process consisted on the creation of several “meta-templates” for every domain (20 to 50, typically) and then preparing the specific templates, e.g. Listings 6 and 7. The rationale is to produce more cohesive questions related to specific topics (like Romanesque, Gothic, Renaissance, Baroque, etc. in Arts) by partitioning the space in smaller and more coherent sets. The downside is that more templates are needed, although the required effort was kept low due to the massive use of copy&paste from the “meta-templates”.

After generating the question set of Clover Quiz, the next step is the system design. Figure 4 outlines the overall architecture, split into the mobile app and the back-end server. This separation is purposed to keep the mobile app as lightweight as possible, while the server is in charge of delivering the questions and associated images – note that questions in Clover Quiz are supported with more than 37K low-resolution images, totalling 1.12 GB. To simplify the back-end, a key design decision was to embrace the JSON format to avoid data transformations of the question set, already in JSON. Due to this, a MongoDB database is employed – MongoDB is a scalable and efficient document-based NOSQL system that natively uses JSON for storage.8

The role of the application server is to handle question requests without exposing the database server to the mobile app directly. In this way, the security of the database is not compromised and an eventual upgrade or replacement of the database component does not require changes in the mobile app. Since JSON was derived from JavaScript and is commonly employed with this language, a natural decision was to use Node.js for the application server. Node.js is a popular JavavaScript runtime environment for executing server-side code.9

Sample snapshots of the mobile app of Clover Quiz.

Application servers are purposed for handling dynamic content, but they are not very strong for serving static content. Since 67% of the questions in Clover Quiz have an associated image, fast static file serving is an important requirement. This is addressed through the use of Nginx,10

Regarding the mobile app, an Android version of the game described in Section 2 was coded. It has been designed following Android conventions and is structured in three layers: the user interface consists of Android activities and fragments, the domain layer handles user requests and implements the game logic, and the technical services layer addresses data storage, compression, encryption, and network access. The mobile app can be played in phones and tablets and the user interface is built following the Material Design guidelines11

Overview of the questions answered from March 11 to August 1

After selecting a match, a clover-shaped board is displayed with eight wedges corresponding to the different domains – see Fig. 5 (right). The player can push any of the available wedges, e.g.

The game was released for Android devices on March 11, 2017 under the names ‘Clover Quiz’ in English and ‘Trebial’ in Spanish. It is available free of charge through Google Play13

At the time of this writing (August 2017), more than 5K users have downloaded the game. Table 4 shows some statistics of the questions answered during this period. It can look striking that most of the requested questions were in Spanish, but this basically reflects the user base of Don Naipe (note that Clover Quiz has been only promoted with in-house ads). Approximately two thirds of the questions were correctly answered, while each player has taken 122 questions on average, thus indicating a reasonable engagement with the game. Interestingly, the number of problem reports is quite low and concentrated in a small set of questions. A subsequent audit served to spot some problems: a group type template with a wrong option, several animals with misleading images, and one intriguing case in which

Clover Quiz users have also given feedback through Google Play. Specifically, the average rating is 4.3 out of 5.0. The mobile app prompts users to write a review after playing the game for some time, and approximately 1% of them have left a comment. Users’ reviews are generally very supportive: there are some suggestions of new domain areas, e.g. sports, and also a complain about a server failure on April 14, 2017 – there was a system reboot, and the question back-end was not automatically restarted, now it is up again.

The latency of the production back-end server was evaluated in May 2017.

A substantial part of the riches of DBpedia correspond to Wikipedia categories.

With respect to the proposed question generation mechanism, several SPARQL queries are needed in order to build a question. For example, the running example in Fig. 2 (left) requires gathering paintings with animals, painting labels, painting images, inlinks and outlinks. A question of

Overall,

The proposed question difficulty estimator is based on the popularity of the involved entities in a question to assign a within-template difficulty score. This estimation is further controlled through a between-template difficulty assessment defined by a human editor. In addition, distractor similarity is used to provide three lists of distractors with varying closeness to the question key.

https://en.wikipedia.org/wiki/Ecce_Homo_(Martínez_and_ Giménez,_Borja)

A large body of research has addressed the automatic generation of multiple choice questions, especially using ontologies as a knowledge source – a systematic review on this topic can be found in [1]. The main advantages for using ontologies are their ability to generate deep questions, e.g. questions that asks about relations between the different notions of the domain, and their ability to produce good distractors. However, an ontology is not always available for a domain of interest, thus limiting the applicability of this approach. As an alternative, Linked Data can be used as a source of structured knowledge to generate multiple choice questions. The use of Linked Data for question generation is particularly appealing given the amount of RDF data available. In this regard, DBpedia is one of the preferred knowledge sources due to its quality and breadth of topic coverage.

There are several works in the literature that use DBpedia to generate quiz questions, such as [5,14,18,20,21,25,26,29]. Most of them are early demonstrators that are no longer available. Perhaps the main problem of these initiatives is the use of a simplistic question generation process, e.g. [29] and [14] only support one query type. In addition, none of them exploits Wikipedia categories and supporting images are rarely employed. A notable exception is [5] that invests more effort in the creation of question types by defining subsets of DBpedia and then generating questions (even with images). This approach for question generation is closer to the one devised in Clover Quiz, but it does not scale so well: each question type requires the extraction of a DBpedia subset, as well as changes in the quiz generation engine. In contrast, the approach of Clover Quiz is completely declarative and can be easily ported to other languages. As a result, there are more than 200K questions (in English and Spanish) built from 2.9K templates, while the other initiatives report question sets in the range of thousands.

[1 ,7 ,10 ,11] are examples of question generation systems not purposed for quiz games. [7] uses Linked Data as input, while [1,10,11] employ domain ontologies. [7] only supports two types of multiple choice questions, one based on entity descriptions and another built from verbalising a triple pattern. [1] can produce seven types of questions about ontology classes – this limits its applicability with Linked Data in which entities are the most prominent elements. Finally, [11] is an extended version of the system proposed in [10] that provides an extensive set of question templates that can be potentially used with Linked Data.

Controlling the overall difficulty is a very important feature in question generators, but this aspect is not addressed in many of the surveyed works. Interestingly, [11,26,29] also rely on entity popularity for estimating the difficulty of a question as a basis. [11,26] further control difficulty through an automatic mechanism: [26] analyses the coherence of entity pairs to estimate the difficulty of a question, while [11] assigns a triviality score of the predicates involved in a question stem. The between-template difficulty score used in Clover Quiz plays a similar role, though it relies on the assessment of a human editor. With respect to the generation of distractors in multiple choice questions, DBpedia-based question generators typically use random distractors, e.g. [5,7,14,29]. Since distractors have an impact on difficulty [9, ch. 41], [3,10,11,18,26] have proposed different approaches to generate suitable distractors – indeed, this is the only mechanism to control question difficulty in [3,18]. In particular, [3] investigates semantics-based distractor generation mechanisms, proposing several measures based on Jaccard’s coefficient [15] to control the difficulty of questions and running a user study to evaluate its effectiveness. Unfortunately, [3] is limited to ontology-based questions that exploit class subsumption, so it cannot be directly applied to generate questions about entities in DBpedia. Nevertheless, Clover Quiz takes inspiration from this work to generate different lists of distractors for distinct difficulty levels, as discussed in Section 4.

On the effort required to produce the question set in Clover Quiz, the most time-consuming tasks correspond to the authoring of the domain specification files and the question templates. The former requires a close inspection of DBpedia to deal with its messiness, as discussed along Section 7. To reduce human effort, a disambiguation tool such as AIDA [13] could be used to find suitable entity types in the knowledge source for a given domain. With respect to the templates, the generation of varied and high-quality questions relies on a thorough template authoring for the selected domains of interest. The approach is indeed scalable, since Clover Quiz is an individual pet project that has been fully carried out during 10 months on a part-time basis. Note that other proposals sometimes rely on the advances of the related domain of question answering over Linked Data [19]. The key challenge of question answering is the translation of information needs into a form suitable for Semantic Web technologies. Thus, [7,26] employ existing natural language generators to verbalise the set of RDF triples that conform a generated question. Natural language conversion is not required in Clover Quiz since every question template includes its own stem template and support for regular expressions, allowing high-control in the produced stems.

Furthermore, an entirely automated question generation pipeline without any configuration step seems unrealistic. Indeed, fully automated approaches normally assume a post-generation step with a human editor in order to improve the verbalisation of the obtained question set. In this regard, the system proposed in [11] underwent an editing phase to do some minor corrections of the generated questions, while [3,7] report grammar problems with their obtained questions in two user studies. Another challenge of automatic generators is the relevancy of the produced questions. Reported user studies with ontology-based approaches show encouraging results in terms of usefulness [3,10,11]. With respect to Linked Data-based approaches, question usefulness is even more challenging given the vastness of data available, but results are somewhat preliminary: [26] reported a crowdsourcing task to filter out irrelevant questions produced with their system; [7] uses the most frequent properties to generate questions from Linked Data, although the effectiveness of this approach is not evaluated in their reported user study; [29] employs property ranking heuristics to generate questions from DBpedia, reporting inconsistencies in DBpedia data and complaints about questions being too simple or too difficult in a user study.

Moving to system design, performance problems are experienced when submitting live queries to DBpedia, as in the case of [20]. Moreover, [7] reports around four seconds to generate a question, while [11] takes several minutes to generate a question set of 25 questions from a domain ontology. To improve latency, some initiatives take small snapshots of DBpedia and run their own triple stores, e.g. [5] and [21]; [14] uses a question cache; and [29] creates the question set off-line. Clover Quiz also adopts the latter approach, but it goes further by transforming the crawled data into JSON to facilitate the generation of questions, in particular to exploit Wikipedia categories. The back-end server in Clover Quiz is able to cope with the requirements of the mobile app, obtaining an average response time of 0.1 s in a benchmarking experiment presented in Section 6.

With the release of Clover Quiz as an Android app in Google Play, more than 5K users have downloaded the game and answered more than 614K questions. User ratings are high (4.3 out of 5.0) and comments encouraging, thus suggesting that the questions generated from DBpedia are entertaining and that the game mechanics work. Future work includes the development of an iOS version and a real time mode to further improve user engagement. Moreover, Clover Quiz could be extended to improve DBpedia’s content through the game. Beyond Clover Quiz and DBpedia, the proposed data extraction pipeline and question generator can be used with any other semantic dataset – the only requirement is a SPARQL endpoint. As a result, a promising future line is the generation of multiple choice questions from other knowledge bases; this is especially relevant in the e-learning domain, given the importance of multiple choice questions and the advent of Massive Online Open Courses (MOOCs) [8].

Footnotes

Acknowledgements

This work has been partially funded by the Norwegian Research Council through the SIRIUS innovation center (NFR 237898) and BIGMED (IKT 259055) project, as well as by the Spanish State Research Agengy (AEI) and the European Regional Development Fund, under project grants SmartLET (TIN2017-85179-C3-2-R) and RESET (TIN2014-53199-C3-2-R).