Abstract

The Learning Analytics and Knowledge (LAK) Dataset represents an unprecedented corpus which exposes a near complete collection of bibliographic resources for a specific research discipline, namely the connected areas of Learning Analytics and Educational Data Mining. Covering over five years of scientific literature from the most relevant conferences and journals, the dataset provides Linked Data about bibliographic metadata as well as full text of the paper body. The latter was enabled through special licensing agreements with ACM for publications not yet available through open access. The dataset has been designed following established Linked Data pattern, reusing established vocabularies and providing links to established schemas and entity coreferences in related datasets. Given the temporal and topic coverage of the dataset, being a near-complete corpus of research publications of a particular discipline, it facilitates scientometric investigations, for instance, about the evolution of a scientific field over time, or correlations with other disciplines, what is documented through its usage in a wide range of scientific studies and applications.

Introduction

While there exist a wealth of datasets containing bibliographic metadata, such as ACM1

See Official Journal of the European Union, 2014/C 240/01, 57, (2014), http://eur-lex.europa.eu/legal-content/EN/TXT/HTML/?uri=OJ:C:2014:240:FULL&from=EN & European Union: Directive 2013/37/EU in Official Journal of the European Union, 56, 2013/L 175/1 (2013),

Such a lack of access to openly licensed and structured research information hinders researchers from carrying out scientometric investigations or to deeply investigate the evolution of scientific disciplines, topics or researchers over time. In particular, for the investigation of the inherent dynamics and the evolution of an entire discipline over time, no dedicated corpus exists which (a) provides bibliographic metadata and full text in a structured and machine-processable format such as Linked Data and (b) covers the near-complete output of a particular research community over its entire existence.

This paper describes the Learning Analytics and Knowledge (LAK) Dataset4

The dataset provides Linked Data about bibliographic metadata as well as full text for all publications. Publication agreements were reached with ACM for publications not already available as open access. The dataset is published and maintained with support of the LinkedUp project,5

Publishers of bibliographic data and especially scientific bibliographies have been early adopters of Semantic Web technologies for several years, possibly because of the strong relationship between the fields of library management and information management and the strong use case for sharing scientific publications and related data. That led to a wealth of datasets and vocabularies in the area, where some of the most prominent datasets in the Linked Data cloud today are exposed by organisations such as the British Library (see Linked Open BNB10

That also led to the emergence of vocabularies for bibliographic information, where earlier works include the SwetoDblp ontology [1] and more recent efforts include the BibBase ontology [10], linked also to the Bibliographic Ontology BIBO,13

The Semantic Web Dog Food (SWDF)18

While we also offer regularly updated dumps (RDF/XML, N-Triples and R19

LAK Dataset facts table

Data, including metadata and full text, is extracted from papers sourced from all editions of the two main conferences in the LA and EDM fields (ACM Learning Analytics and Knowledge, International Conference on Educational Mining), the two main journals, namely the recently founded Journal of Learning Analytics and the Journal of Educational Data Mining, and the proceedings of the two editions of the LAK Data Challenge held in conjunction with the LAK conferences. Table 3, shows the number of papers included from each source. This collection constitutes a near complete corpus of research works in the areas Learning Analytics and Educational Data Mining. Given the variety of sources and data, the data is split into four subgraphs where different license models apply:

Further details about the publications in each graph are shown in Table 3. Data from graphs (1)–(2) are available under CC-BY licence.20

The knowledge extraction process implemented to transform unstructured publications into structured data is composed of three main steps: (1) transforming PDF to plain textual representation, (2) pre-processing, clean-up and consolidation of the textual information, (3) lifting data into RDF schema (Section 4.1). Given the inherent differences of the structure of papers across the different venues, the extraction had to be tailored to each publication origin. Additional issues arose from papers not complying entirely with the suggested layout, requiring several improvement iterations. Further details are provided in [9]. At this stage the full text has been extracted without further considering its structure, while ongoing work is concerned with further structuring the text body. Literature references are also extracted and made available in order to support scientometrics based on co-citation networks.

Given the nature of the dataset, new publications are added continuously as these become available, i.e. whenever new proceedings or journal issues of the reflected series are published. Optimisation of the processing pipeline throughout previous years facilitates a straight-forward and efficient extraction process for new publications.

The ongoing maintenance of the dataset is carried out as a collaborative activity of all partners including the authors of this paper and their institutions, as well as SoLAR, being one of the central organisations driving the advancement of the LA discipline. Maintenance is not only carried out at the data or instance level, but also with respect to the actual ontology and its alignment with other vocabularies, e.g. by frequently adding new alignments with emerging vocabularies.

Schema, mappings and interlinking

Schema

For each publication the following features are extracted: title, authors, keywords, abstract, text body, references, publication venue (journal/conference proceedings). To ensure wide interoperability of the data, we have adapted Linked Data best practices21

While this URL always refers to the latest version of the schema, current and previous versions are also accessible, for instance, via

The majority of schema elements are based on BIBO, FOAF,24

Schemas and namespaces used in LAK Dataset

The main classes and predicates are listed in Fig. 1, and Tables 4 and 5. By relying entirely on established and frequently used types and properties, we aim for a high reusability of the data.

Academic publications in the dataset

Mappings were evaluated for consistency (using the HermiT reasoner26

Key classes and properties used in the LAK Dataset (conference proceedings only).

The following Table 4 provides a general overview of the number of represented entities per type in the LAK dataset.

Entity population in the LAK Dataset

Table 5 summarizes the most frequently populated properties.

While bibliographic metadata is widespread in the LOD graph, our interlinking efforts have particularly focused on co-reference resolution across entities such as authors, publications, and organisations. Given that LAK is considered a sub-discipline of Computer Science (CS), we have particularly considered the datasets DBLP and Semantic Web Dog Food. While DBLP allows us to link authors to their corresponding representation in a more exhaustive bibliographic CS knowledge base, the Semantic Web Dogfood has been particularly useful to relate equivalent organisations, given its strong overlap with the LAK Dataset with respect to authors’ affiliations. All considered datasets complement each other with respect to the schema, i.e. the expressed properties and conceptual model, as well as its population, i.e. the amount of distinct entities actually represented within each dataset. While the LAK Dataset has a high depth with respect to the represented properties and features, even including references and textual body of publications in contrast to most bibliographic databases, it has a fairly narrow scope by focusing entirely on specific CS subjects (Learning Analytics and Educational Data Mining). Coreference resolution of entities, for instance authors, in other more broad bibliographic knowledge bases provides a more complete view on the work of individual authors or organisations and the CS community as a whole. Similarly, the LAK Dataset complements existing corpora by (a) enriching the limited metadata with additional properties and (b) containing additional publications not reflected in DBLP or the Semantic Web Dogfood, creating a more comprehensive knowledge graph of Computer Science literature as a whole. For instance, in DBLP and Semantic Web Dogfood, LAK publications are not exhaustively represented, references and full text are missing in both cases and, in the case of DBLP, affiliations are not reflected as explicit entities.

Most frequently populated properties in the LAK Dataset

Most frequently populated properties in the LAK Dataset

While overlap among authors in LAK and Semantic Web Dogfood has been less prominent, the majority of authors could be resolved using DBLP. Such links enable a broader understanding of the general scientific output of LAK researchers. For establishing coreferences, literals (foaf:name, dc:title) of entities in all three datasets have been matched. To improve recall and cater for different representations, some preprocessing was applied to address issues with character codes and distinct naming conventions.

Additional outlinks were created to DBpedia as reference vocabulary. To allow a more structured retrieval and clustering of publications according to their topic-wise similarity, we have linked keywords, manually provided by paper authors, to their corresponding entities in DBpedia, thereby using DBpedia as reference vocabulary for paper topic annotations. Keywords, i.e. terms, were disambiguated through state of the art NER (Name Entity Recognition) methods (DBpedia Spotlight), allowing to link for instance keywords such as “educational gaming” to corresponding DBpedia entities, such as

The following Fig. 2 depicts the links of resolved or enriched LAK entities.

A high resolution version of this figure is available at:

Links in the LAK Dataset.28

With respect to inlinks, the dataset is referenced by the LinkedUp catalog29

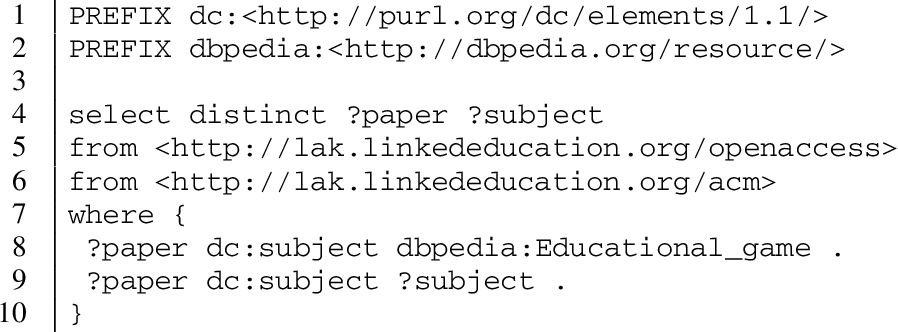

Some example queries31

Additional queries available at:

Retrieving papers covering related topics (sharing same DBpedia entities).

The following example shows a federated query executed across the LAK dataset and the DBLP dataset. In this query, the information about a specific paper of the LAK dataset has been completed with additional data (DOI, reference to bibsonomy) included in DBLP.

Federated query retrieving bibliographic data related to one paper from DBLP and LAK-Dataset.

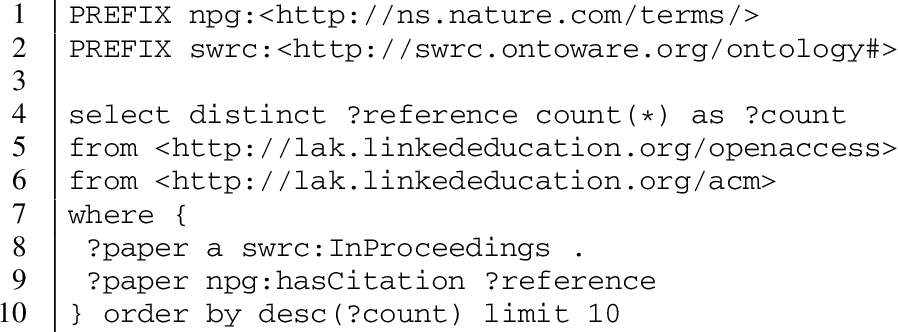

Listing 3 shows a query to retrieve influential publications in the LA field by selecting the most cited papers.

Retrieving influential publications by means of the most cited papers.

The LAK Dataset has received considerable attention and support from organisations such as SoLAR, which also advertises the dataset for its own purposes.32

Known publications listed at

The dataset also forms the basis of the LAK Data Challenge, organised by the authors and a team of researchers affiliated with SoLAR, LinkedUp34

The challenge is revolving around the overall question on what insights can be gained from analytics on the LAK dataset about the evolution Learning Analytics as a whole or individual topics, researchers or organisation as well as their correlation with other fields. Given the narrow scope of the data, the variety of the short-listed submissions (so far 13 in total) has been very wide, where Fig. 3 gives an overview of the involved author origins.

LAK Data Challenge submissions – authors per country.

Applications are further described in the proceedings of the 2013 and 2014 LAK Data Challenge editions [3,4] and are available online.37

Analysis & assessment in terms of topics, people, citations or connections with other fields.

Applications to explore, navigate and visualize the dataset (and/or its correlation with other datasets).

Usage of the dataset in recommender systems.

While all submissions are notable and in many cases, combine features from several categories, we would like to emphasize particularly works which have received recognition beyond the challenge, such as “Cite4Me” [7], or near complete scientometric environments such as DEKDIV [8] (depicted in Fig. 4).

DEKDIV active LA researchers exploration.

The latter combines a range of features, such as trending topic analysis, co-citation and collaboration analysis with recommendation approaches, for instance to suggest adequate reviewers and experts, where Fig. 4 shows the most frequent authors with regards to a specific set of topics.

Next to these applications, the dataset and some of its applications have been endorsed and supported by SoLAR and ACM, where current discussions are geared towards embedding some of the described applications into their more general libraries and platforms. In addition, as joint activity of the authors and SoLAR, current work aims at expanding the dataset with actual learning analytics research data, i.e. data usually used in the captured publications. The joint vision is to provide a near-complete corpus which provides not just the actual scientific publications in structured formats, but also to a larger extent, their used raw research datasets. This is meant to further facilitate LA & EDM research and open access to research publications and data in general.

In this paper, we have presented (a) the LAK Dataset, as a particular resource which enables the exemplary investigation and analysis of the evolution of scientific disciplines and the validation of scientometric methods and tools, and (b) a vocabulary, collection of mappings and linking practices for adoption in similar efforts, towards a wider movement engaging in the publication of open and machine-processable scholarly resources.

While, according to the 5-star classification39

Additional insights were gained from the vocabulary definition process. Given the specific scope of our dataset, covering bibliographic metadata and full text, it has been necessary to combine elements from different, partially overlapping vocabularies. We relied on established vocabularies to represent the different involved notions. Due to cross-vocabulary statements, implicit type and predicate mappings emerged which were explicitly represented through dedicated mapping statements. Next to these, additional mappings were introduced to ensure wide interoperability of the data. Given the complex relationships emerging from such vocabulary usage, assessing the compliance of new introduced cross-vocabulary mappings is crucial to eliminate any conflicts. In particular the evolution of external vocabularies might pose issues, where continuous monitoring is required to ensure compliance at all times. To this end, the encapsulation of all schema-level statements in our datasets is meant to serve as a starting point for similar efforts, for instance, for exposing bibliographic data in other disciplines.

While the LAK Dataset has a fairly well-defined and somewhat narrow scope, covering only literature in a very specific subdiscipline – i.e. LA and EDM – analysis and correlation with bibliographic information in other sources already now enables interesting investigations and applications [3,4]. Given that the actual text body of publications contains substantial information but is yet still missing from the majority bibliographic Linked Data, we would like to encourage work on similar efforts, i.e. the creation of bibliographic datasets containing both metadata and the actual content. In this context, our work provides a set of practices for related efforts in other scientific areas. This would allow a more direct processing and analysis of scientific works across disciplines. Furthermore, applying such approaches to a wider area could contribute to resolving the gap between unstructured and hard-to process publication formats such as traditional PDFs and structured Linked Data, a topic widely discussed not only in the Semantic Web community but also supported by corresponding European directives.3