Abstract

PURPOSE:

This study introduces a new scale for the assessment of head control called the Head Control Scale (HCS). The purpose of this study was to establish interrater reliability of the HCS and to determine its usefulness in a clinical setting.

METHODS:

The HCS assesses head control in four positions (prone, supine, pull to sit, and supported sitting) on a 0–4 rating scale. The authors used both a focus group and pilot testing to refine the scale to its final version, which was then used to assess interrater reliability. Twenty-six therapists used the HCS to evaluate head control of five subjects of varying ages and abilities who were videotaped spending 30–40 seconds in each position. Participants also completed a post-rating survey.

RESULTS:

Fleiss’s weighted kappa coefficient is excellent for the prone (0.82), pull to sit (0.83), and sitting (0.88) positions as well as for the scale overall (kappa

CONCLUSIONS:

The HCS has high interrater reliability and users report it to be a needed tool, applicable to clinical practice, and easy to use.

IMPLICATIONS:

The results of this study indicate that the HCS has great potential for clinical use.

Keywords

Introduction

The ability to control the head is a vital component of motor development and is one of the first skills an infant attains. A young infant’s inability to control the head is thought to result from a lack of muscle strength combined with an inability to organize muscle activity [1]. Head control begins to emerge early; infants as young as one month of age may begin to demonstrate postural control responses in the neck musculature [2]. Postural control typically develops in a cephalocaudal direction, so head control is a precursor to trunk control; as a result, delays and deficits in the development of head control can interfere with subsequent motor milestones. Additionally, the development of head control is an indicator of a maturing neurological system and is required for the proper integration of vision and righting reflexes [1].

Difficulty obtaining and maintaining head control is a common finding in children with conditions such as cerebral palsy (CP) and may be one of the first signs that a child is not developing typically [3]. There are several reasons why head control may be targeted in therapeutic interventions. First, head control is a developmental milestone on which later movement depends; it also contributes greatly to visual development, body awareness, and social interaction. Second, head control, even in isolation, is a critical skill for using head array switches for power mobility and eye gaze communication systems, as well as for achieving proper alignment in equipment such as standing frames and adaptive seating. Evidence suggests that active strategies to promote motor development can be effective [4]. However, little is known about how therapists assess head control when evaluating a pediatric patient or when monitoring progress to determine the effects of intervention. Anecdotal evidence suggests that assessment is largely subjective, relying on portions of standardized assessments or on descriptive language to document observed head control.

The current literature describes a variety of ways in which head control is assessed. These include simple “yes” or “no” indicators of whether head control is present [5], the number of times and length of time the head is held in midline [6, 7], inclinometers [8], motion analysis via computer software [9, 10, 11, 12, 13, 14, 15], and assessment tools such as the Sitting Assessment Scale [16] and the Segmental Assessment of Trunk Control [17]. These methods have limitations such as expense, the need for special training, or evaluation of head control in only one position.

Another common way of assessing head control is with motor assessment tools such as the Gross Motor Function Measure (GMFM) [16, 18], the Test of Infant Motor Performance (TIMP) [12, 19], and the Bayley Scales of Infant and Toddler Development (Bayley) [19, 20]. The GMFM and the Bayley are reliable and valid assessment tools [21, 22] when used according to their standardization protocols. However, items related to head control make up such a small proportion of each test that improvements in head control could be difficult to detect.

The TIMP is a valid and reliable assessment of functional motor skills in newborns and infants under four months of age [23]. Of its 42 items, 20 are related to head movement, and some of these directly assess the infant’s ability to maintain head control in different positions, such as supported sitting and pull to sit [24]. The TIMP is a well-designed tool that is effective for the early detection of conditions such as cerebral palsy [25]; however, it requires special training, which costs time and money, and it is applicable only to infants under four months of age.

To the authors’ knowledge, there is no validated, reliable, widely implemented instrument for objectively assessing head control in children with significant motor delays that is practical, accessible, and easy for clinicians to use. Therefore, the purpose of this study was to develop such a scale and determine its interrater reliability. The work was carried out in three phases.

Materials and methods

Phase one

The focus of the initial phase of this project was to develop a scale for the objective assessment of head control. The Head Control Scale (HCS) (Fig. 1) in its current form was based on the Clinical Rating Scale for Head Control (CRSHC), developed by Chavan, an occupational therapist [26]. Chavan’s scale was developed with input from a pediatric physician and two occupational therapists with more than 10 years of experience. It uses a 5-point ordinal scale for two positions (prone and supported sitting) and a 4-point ordinal scale for the third position (supine) [26]. The CRSHC was used, with its author’s permission, as the initial structure for the new scale.

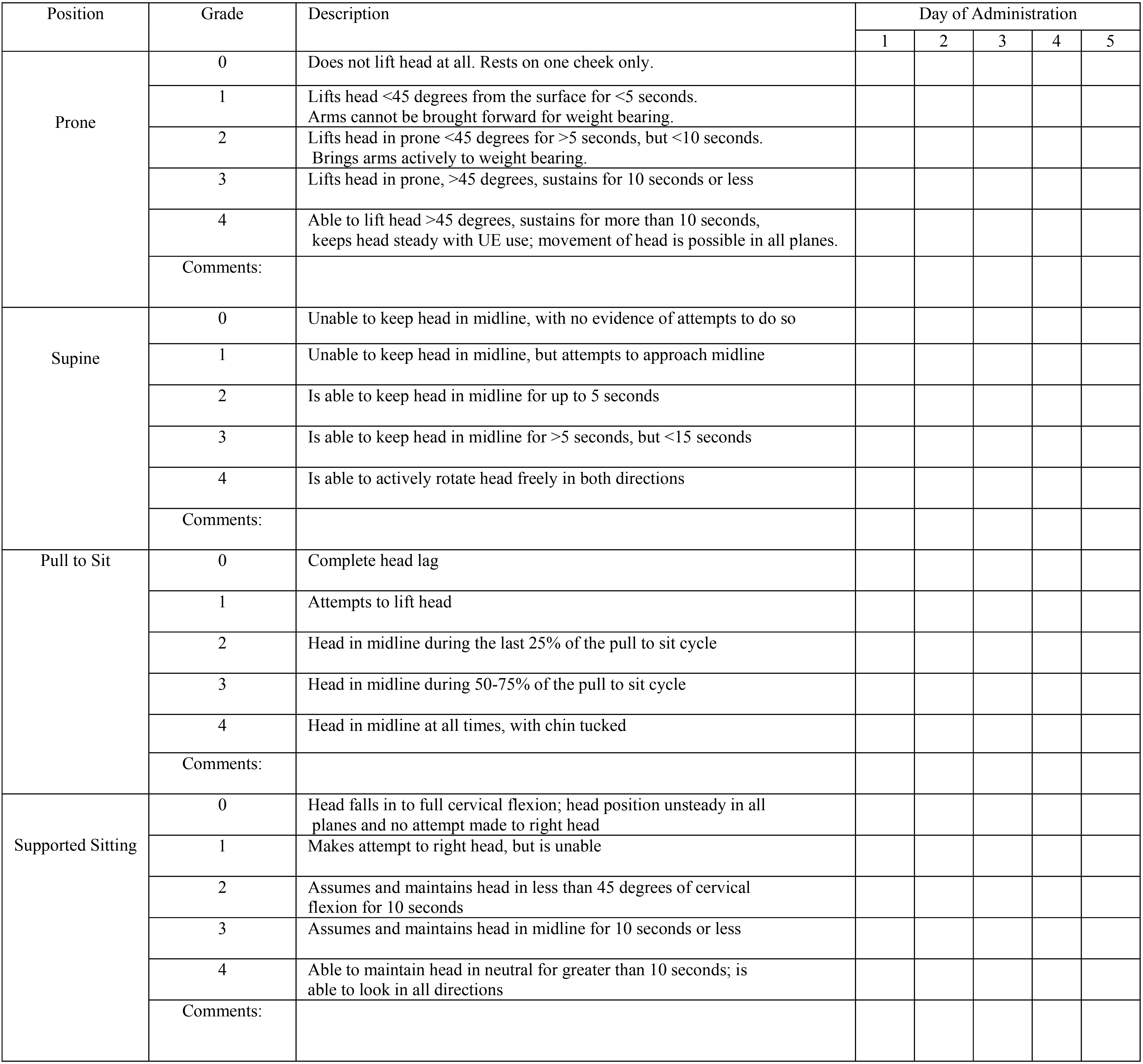

Fig. 1. The Head Control Scale. Instructions: the left column indicates the starting position; items may be elicited and/or observed, with or without the use of toys; make note of parent report, but do not score accordingly; use the comments section to provide any additional information (e.g., sitting on mat or bench, how much assistance was provided, any compensations noted).

The primary researchers for the current study are a physical therapist with over 13 years of experience and an occupational therapist with 20 years of experience, both in pediatric settings. The two authors used the original CRSHC, along with a review of head control elements in other standardized assessments, literature and textbooks on the development of postural control, and their own clinical experience, to develop a new version, renamed the Head Control Scale (HCS). In the HCS, the descriptors for each rating were clarified and simplified, a section dedicated to head position during pull to sit was added, and all four sections were rated on a common 0–4 scale.

Next, a group of clinicians was recruited to review and discuss the HCS in a process similar to the Nominal Group Technique (NGT), a five-step method designed to aid group decision-making [27]. The NGT was used because it is time- and cost-effective, yields both qualitative and quantitative data, and has been shown to be useful in rehabilitation research [27, 28] as well as in the development of assessment tools [29]. The group consisted of 10 physical therapists, eight occupational therapists, and one speech therapist representing a variety of clinical settings (outpatient, acute, early intervention, and school-based practice) and experience levels (from one to 39 years of practice).

After a brief presentation by the primary researchers (NGT Step 1) [27], the group members were given the scale to review (NGT Step 2) [27], after which they shared their ideas for improving the HCS (NGT Step 3) [27]. Group discussion followed to help clarify thoughts and ideas (NGT Step 4) [27]. The full NGT includes a final step in which the group votes [27]; this step was omitted because the primary researchers decided how best to use the feedback and discussion to modify the HCS. Changes included adding brief instructions to the HCS form, adding a comment box for each section, and making minor changes to the wording of each rating’s description.

The Northern Arizona University Institutional Review Board reviewed the proposal and deemed the research project exempt. The second phase of the project was designed to assess the agreement of HCS scores among multiple raters when a single subject was evaluated from a video. Informed written consent was obtained from the parent of the child in the video and from the recruited raters. The child in the video is a three-year-old male with spastic quadriplegic CP, Gross Motor Function Classification System (GMFCS) Level V. The GMFCS is a standardized system for classifying levels of CP; levels range from the least severely impaired (I) to the most severely impaired (V) [30]. This child was purposefully selected to represent severe functional limitations, because this is the population with whom the HCS will most likely be used and for whom head control is most challenging to describe. The subject was recorded spending 30–40 seconds in each position of the HCS (prone, supine, pull to sit, and supported sitting). One researcher (PT) facilitated the movements (pull to sit, supported sitting) while the other researcher (OT) helped motivate the subject with auditory and visually appealing toys.

A convenience sample of therapists (PTs, OTs, and SLPs) was recruited through flyers sent to local clinicians. Video viewing and rating were done at a time and place of convenience for the raters and often occurred in groups; the video was shown on a large television screen or computer, but all ratings were completed independently. No formal training was provided, though brief instructions were given to participants prior to video viewing and rating. A total of 20 raters viewed the video and completed the HCS.

Table 1. Phase two results

1 subject rated by 20 participants.

A high percentage of agreement among raters can indicate good interrater reliability [31]. As reported in the Results section below (Table 1), the high rate of agreement among the raters in Phase 2 indicated to the researchers that the HCS was reliable enough to proceed with Phase 3 of the study.
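The paper does not spell out how “average percent agreement” was computed. A minimal Python sketch, assuming it means the percentage of rater pairs who gave one subject the same section score (raters who skipped a section are simply left out), is shown below; the function name and the example scores are hypothetical and are not study data.

```python
from collections import Counter
from typing import Sequence


def pairwise_percent_agreement(scores: Sequence[int]) -> float:
    """Percentage of rater pairs that gave one subject the same score.

    Assumption: "average percent agreement" is taken to mean agreement
    averaged over all pairs of raters for a single subject and section.
    """
    n = len(scores)
    if n < 2:
        raise ValueError("need at least two ratings")
    counts = Counter(scores)
    # Ordered pairs (rater a, rater b) with a != b that chose the same score.
    agreeing_pairs = sum(c * (c - 1) for c in counts.values())
    return 100.0 * agreeing_pairs / (n * (n - 1))


# Hypothetical example: 19 raters score a section 3 and one rater scores it 2.
print(pairwise_percent_agreement([3] * 19 + [2]))  # 90.0
```

Because this measure ignores how often raters would agree purely by chance, it can overstate reliability, which is why a chance-corrected statistic is needed once several subjects are rated.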

In addition to rating the subject, each participant was asked to complete a survey, the purpose of which was to collect demographic information and elicit feedback from participants. The survey consisted of multiple choice questions, statements rated on a Likert scale, and a comment box for free text (Appendix A). Feedback from the survey was used to make additional changes to the HCS, which included moving the instructions to the top of the page and editing the video for clarity.

Although the results of Phase 2 indicated a high percentage of agreement, this measure alone is of limited usefulness: Phase 2 was based on a single subject (video), and percent agreement does not account for agreement due to chance, which can lead to an overestimation of reliability [31]. Therefore, Phase 3 was conducted, in which 26 participants used the HCS to rate five different videotaped subjects; the subjects are described in Table 2. Video 1 was used in both Phases 2 and 3; all other videos were used only in Phase 3. The videotaped subjects were chosen to span the full range of head control the HCS covers, from none to full control. Although three of the videos showed typically developing children, these children were chosen for their ages; at 10 days, 6 weeks, and 16 months, they represented a wide range of head control ability. Each subject was recorded spending time in each of the four positions. Videos varied slightly in length, from 1 minute 39 seconds to 2 minutes 47 seconds, because of differences in each subject’s performance; subjects who were more mobile were less likely to stay long in certain positions, such as prone or supine. The number of videos was limited by the resources required to produce them.

Table 2. Description of subjects rated in phases two and three (videos)

Table 3. Demographics of Phase Three Raters (

Table 4. Phase three results

A convenience sample of raters was again recruited, this time by emails to local clinic owners. None of the raters from Phase 2 participated in Phase 3. Each participant filled out a brief demographic and post-rating opinion survey; demographic results from that survey are given in Table 3. Data collection occurred on-site at the participants’ clinics. No formal training was provided, though brief instructions were given, after which raters viewed the videos on a computer or television screen and rated them simultaneously but independently. All participants had the same amount of time to view each video.

Average percent agreement across the 20 raters of the video used in Phase 2 was calculated for each HCS section (Prone, Supine, Pull to Sit, and Supported Sitting) and overall (the total score across the four sections). For the Phase 3 rater data, in addition to average percent agreement, Fleiss’s weighted kappa coefficient [32] was estimated for each HCS section score and overall, to assess evidence of rater agreement beyond what can be attributed to chance alone. All analyses were carried out in the
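To show what “agreement beyond chance” means computationally, below is a Python sketch of one common weighted generalization of Fleiss’s kappa for many raters. It compares observed weighted agreement between rater pairs with the weighted agreement expected from the overall category proportions. The paper does not report which weights or software were used, so the linear weights, the function names, and the input layout here are all assumptions; with identity weights the function reduces to ordinary (unweighted) Fleiss’s kappa.

```python
from typing import List, Sequence


def linear_weights(q: int) -> List[List[float]]:
    """Assumed linear agreement weights for q ordered categories:
    w[k][l] = 1 - |k - l| / (q - 1), so adjacent scores earn partial credit."""
    return [[1.0 - abs(k - l) / (q - 1) for l in range(q)] for k in range(q)]


def category_counts(scores_by_subject: Sequence[Sequence[int]], q: int) -> List[List[int]]:
    """Turn each subject's list of rater scores (0..q-1) into per-category counts."""
    table = []
    for scores in scores_by_subject:
        row = [0] * q
        for s in scores:
            row[s] += 1
        table.append(row)
    return table


def weighted_fleiss_kappa(counts: Sequence[Sequence[int]],
                          weights: Sequence[Sequence[float]]) -> float:
    """Weighted generalization of Fleiss's kappa (sketch).

    counts[i][k] is the number of raters who put subject i in category k;
    every subject needs at least two ratings, and weights[k][k] must be 1.
    """
    n_subjects = len(counts)
    q = len(weights)
    pi = [0.0] * q   # mean share of ratings per category (chance model)
    p_obs = 0.0      # mean weighted agreement over pairs of raters
    for row in counts:
        r_i = sum(row)
        if r_i < 2:
            raise ValueError("each subject needs at least two ratings")
        agree = 0.0
        for k in range(q):
            pi[k] += row[k] / r_i / n_subjects
            # Weighted number of ratings agreeing with category k; subtracting 1
            # drops each rater's pairing with their own rating (w[k][k] == 1).
            r_star = sum(weights[k][l] * row[l] for l in range(q))
            agree += row[k] * (r_star - 1.0)
        p_obs += agree / (r_i * (r_i - 1)) / n_subjects
    p_exp = sum(weights[k][l] * pi[k] * pi[l] for k in range(q) for l in range(q))
    if 1.0 - p_exp < 1e-12:
        raise ValueError("kappa is undefined when all ratings fall in one category")
    return (p_obs - p_exp) / (1.0 - p_exp)
```

For one HCS section (scores 0–4), a call such as `weighted_fleiss_kappa(category_counts(section_scores, 5), linear_weights(5))` would give the estimate, where `section_scores` is a hypothetical list holding each subject’s ratings; the overall 0–16 score would use 17 categories. This sketch does not compute confidence intervals for kappa.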

Results

Phase two

Twenty raters were each asked to rate the single-subject video on all four sections of the HCS in Phase 2, but some raters chose not to complete all four sections; at least 18 raters scored each section. The average percent agreement across raters for each section of the HCS is reported in Table 1. Average agreement exceeded 89% for each section and was 98.1% overall. Because only one subject was rated in Phase 2, it is not possible to calculate kappa statistics for these data.

Phase three

Twenty-six study participants each rated the five subjects on the four sections of the HCS. For each HCS section and overall, Table 4 gives the median of the twenty-six participants’ scores for each subject and a summary of the agreement in scoring the five subjects across participants. For each HCS section, median scores across subjects range from zero to four. For each HCS section score and the overall score, the average percent agreement across the raters in Phase 3 was at least 90%.

Table 5. Post-rating opinions – frequencies

Fleiss’s weighted kappa coefficient falls between

Landis and Koch [35] provide guidelines for interpreting the value of the kappa statistic. With the exception of HCS Supine, whose kappa of 0.68 falls in the “substantial” agreement range (0.61–0.80), all of the remaining HCS sections (and the overall score) have kappa coefficients that would be categorized as “almost perfect” agreement (0.81–1.00).
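The Landis and Koch bands can be expressed as a small lookup (poor below 0; slight 0.00–0.20; fair 0.21–0.40; moderate 0.41–0.60; substantial 0.61–0.80; almost perfect 0.81–1.00). A minimal Python sketch follows; the function name is hypothetical, and placing a value that falls between published limits (e.g., 0.805) in the band whose upper limit it does not exceed is our assumption.

```python
def landis_koch_label(kappa: float) -> str:
    """Return the Landis and Koch agreement band for a kappa value.

    Assumption: a value between published band limits (e.g. 0.805) is placed
    in the band whose upper limit it does not exceed.
    """
    if kappa > 1.0:
        raise ValueError("kappa cannot exceed 1")
    if kappa < 0.0:
        return "poor"
    bands = [(0.20, "slight"), (0.40, "fair"), (0.60, "moderate"), (0.80, "substantial")]
    for upper, label in bands:
        if kappa <= upper:
            return label
    return "almost perfect"  # 0.81-1.00


# Section kappas reported in this study: supine, prone, supported sitting.
for k in (0.68, 0.82, 0.88):
    print(k, landis_koch_label(k))
# prints: 0.68 substantial / 0.82 almost perfect / 0.88 almost perfect (one per line)
```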

The 95% confidence intervals for kappa reported in Table 4 show that, for each HCS section, the plausible values of kappa in this study range from “moderate” or “substantial” agreement at the lower bound to “almost perfect” agreement at the upper bound. The exception is the Supine score, for which the lower end of the confidence interval is just below 0.40, in the “fair” range.

Results concerning the opinion questions in the post-rating survey are given in Table 5. Nearly all Phase 3 participants (24 out of 26, or 92%) answered either “Agree” or “Strongly Agree” to the statement “I feel there is a need for this scale in some practice settings.” For each of the two statements “I feel that I could use this scale in my clinical practice” and “I felt that the scale was easy to use,” a slightly lower percentage of participants (22 out of 26, or 85%) answered “Agree” or “Strongly Agree.” For each of these last two statements, one rater (out of the 26) disagreed. Rater 7, a speech therapist, did not feel the scale was applicable to her clinical practice. Rater 13, an OT student, reported in the comment section having difficulty distinguishing midline and flexion postures.

Discussion

The purpose of this study was to develop a practical, accessible scale for the objective measurement of head control and to determine its interrater reliability. The scale was developed in three phases to ensure a structured, methodical process, and it was revised along the way based on participant input and interim results so that the final version would be as accurate as possible.

When compared with the clinical rating scale developed by Chavan [26], the HCS showed higher interrater reliability. However, this comparison should be made with caution, because the methodology of the Chavan study differed: three raters used the scale to rate 28 children with known impairments in head control, and interrater reliability ranged from 0.16 to 0.51 (prone), 0.43 to 0.61 (supine), and 0.58 to 0.67 (supported sitting). The clinical rating scale did not have a pull-to-sit section, so no comparison can be made for that portion of the HCS.

Content validity of the HCS was established by using the literature, expert input, the focus group, and pilot testing of the scale (described as Phases 1 and 2 above) to inform the scale’s development.

The lack of available assessments for measuring head control leaves health care professionals with limited options for baseline measurement and for documenting the outcomes of therapeutic interventions. Given the importance of head control development discussed previously, objective, evidence-based tools are needed to document progress and the outcomes of interventions for children with atypical development. Current standardized assessment tools are often less useful for children with severe motor limitations or highly discrepant gross motor development and are not sensitive enough to detect small changes. Additionally, researchers conducting studies on head control have no uniform, widely accepted means of assessing it objectively. The HCS has the potential to fill this need; future studies establishing the HCS’s ability to detect change and its usefulness in research can help determine this more fully.

The authors designed the HCS with this population in mind to allow the objective assessment of head control; this should improve documentation and consistency and reduce the educated guesswork that has traditionally gone into assessing head control. In addition to showing “substantial” to “almost perfect” interrater reliability in our findings, the HCS appears to have high clinical utility: it was used reliably without formal training and is brief enough to be used regularly in practice. Completing the scale for all five videos took less than 10 minutes, and the form was designed as a single page that can be reused for repeated assessments (Fig. 1).

This study has several limitations. First, the overall HCS score is on a 0–16 scale, derived by summing the four HCS section scores, each rated on an ordinal 0–4 scale. An overall score of 0 indicates a lack of head control, and a total score of 16 indicates fully developed, anti-gravity head control. Because each HCS section is scored on an ordinal scale, adding the four section scores to produce an overall score is valid only under the assumption that consecutive points on each section’s scale represent equal differences in the underlying physical condition being measured. Even so, our analysis shows that each HCS section individually demonstrates strong reliability across the subjects rated.
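The overall-score arithmetic is simple, but writing it out makes the equal-interval assumption explicit. A minimal Python sketch follows; the section keys and function name are hypothetical (the HCS form does not define identifiers), and the code only encodes the summation of four 0–4 section scores into a 0–16 total.

```python
from typing import Mapping

# Hypothetical section keys; the HCS form itself does not define identifiers.
HCS_SECTIONS = ("prone", "supine", "pull_to_sit", "supported_sitting")


def hcs_total(section_scores: Mapping[str, int]) -> int:
    """Sum the four HCS section scores (each 0-4) into an overall 0-16 score.

    Summing treats every one-point step as an equal amount of change, which is
    the equal-interval assumption an ordinal scale does not guarantee.
    """
    total = 0
    for section in HCS_SECTIONS:
        score = section_scores[section]  # raises KeyError if a section is missing
        if not isinstance(score, int) or not 0 <= score <= 4:
            raise ValueError(f"{section} score must be an integer from 0 to 4, got {score!r}")
        total += score
    return total


print(hcs_total({"prone": 3, "supine": 2, "pull_to_sit": 1, "supported_sitting": 4}))  # 10
```

Note that very different section profiles (e.g., 4, 4, 0, 0 and 2, 2, 2, 2) produce the same total of 8, which is one reason section scores are worth reporting alongside the overall score.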

The raters who participated in this study were a convenience sample of therapists from local clinics, not a random sample from a larger population. To the extent that these raters are not representative of all therapists who might use the HCS, the reliability results presented here may differ from those that would be obtained with a random sample of therapists. Nevertheless, the kappa statistic provides a valid summary measure of the strength of agreement among the raters included in the study.

Videos were used so that every participant had exactly the same experience rating each subject, without the effects of fatigue or day-to-day variation in performance that might be expected if the subjects were rated in real time. However, the time required to record and produce each video limited the number available to participants. As a result, an additional limitation is that participants rated only five subjects (videos), which may not represent the full range of head control conditions in the population to which the HCS could be applied.

An additional limitation is that disciplines and settings were not evenly represented: OTs were overrepresented relative to PTs and SLPs, and 88.5% of participants worked in the outpatient pediatric setting (Table 3). These imbalances resulted from the recruitment method; specific disciplines were not targeted, and no attempt was made to balance the number of participants across disciplines and settings. Future studies could investigate the use of the HCS within specific disciplines and settings.

The HCS could be a beneficial tool for monitoring the progress of patients with head control limitations and tracking change over time; it was designed with this intent. However, its ability to detect change was not examined in this study; it will be addressed in future studies, along with further evaluation of reliability through a test-retest study. Future studies will include a larger sample of children whose head control abilities represent the full spectrum of the HCS. The minimal detectable change (MDC) will also need to be determined to distinguish true change from measurement error or from variability in performance unrelated to true change, such as that caused by fatigue. Future studies should also examine the reliability of the HCS when used by individuals not trained as therapists; the HCS was designed to be used with minimal training, but its reliability for this use needs to be investigated.
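For reference, one widely used way to estimate the MDC at the 95% confidence level from test-retest data is shown below, where SD is the standard deviation of HCS scores and r is a test-retest reliability coefficient such as an intraclass correlation. This is a standard formulation offered only as an illustration; the authors have not specified which method future studies will use.

```latex
\mathrm{SEM} = SD\,\sqrt{1 - r}, \qquad
\mathrm{MDC}_{95} = 1.96 \times \sqrt{2} \times \mathrm{SEM}
```

Under this formulation, a change in a child's HCS score smaller than MDC95 could not be distinguished from measurement error at that confidence level.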

Conclusions

The Head Control Scale is a reliable, useful tool that can be immediately put into practice for the objective assessment of head control. It fills a gap in the assessment tools currently available to therapists and has potential for a variety of purposes.

Footnotes

Acknowledgments

The authors wish to thank Ashley Sinnappan, Occupational Therapy Student, for her assistance with data collection and various other tasks that helped make this project possible.

Conflict of interest

The authors do not have any conflicts of interest to report.

Appendix A: Participant survey

After completing the Head Control Scale, please answer the following questions.

Occupation (please check the appropriate box)

Occupational therapist

Physical therapist

Occupational therapy assistant

Physical therapy assistant

OT student

PT student

Speech language pathologist

Speech language assistant

SLP student

Years of experience in profession (please check the appropriate box)

Under 3

3–5

6–10

10–20

21+

Practice setting (please estimate years practiced in each setting; leave blank any settings not practiced)

Acute care adult

Acute care pediatrics

Rehabilitation facility-adult

Rehabilitation facility-pediatric

Outpatient-adult

Outpatient-pediatrics

Early intervention

School based practice

Home health adult

DDD/home health pediatrics

SNF

Other

I feel there is a need for this scale in some practice settings.

Strongly agree / Agree / Neutral / Disagree / Strongly disagree

I feel that I could use this scale in my clinical practice.

Strongly agree / Agree / Neutral / Disagree / Strongly disagree

I felt that the scale was easy to use.

Strongly agree / Agree / Neutral / Disagree / Strongly disagree

Please use the space below for any additional comments.