Abstract

Particle Swarm Optimization algorithm (PSO) has been widely utilized for addressing optimization problems due to its straightforward implementation and efficiency in tackling various test functions and engineering optimization problems. Nevertheless, PSO encounters issues like premature convergence and a lack of diversity, particularly when confronted with complex high-dimensional optimization tasks. In this study, we propose an enhanced version of the Island Model Particle Swarm Optimization (IMPSO), where island models are integrated into the PSO algorithm based on several migration strategies. The first contribution consists in applying a new selection and replacement strategies based on tabu search technique, while the second contribution consists in proposing a dynamic migration rate relying on the Biogeography-Based Optimization technique. To assess and validate the effectiveness of the proposed method, several unconstrained benchmark functions are applied. The obtained results confirm that the approach yield better performance than the old version of IMPSO for solving NP-hard optimization problems. Compared to the performance of other well-known evolutionary algorithms, the proposed approach is more efficient and effective.

Keywords

Introduction

Several evolutionary algorithms (EAs) are based on a population of candidates which are simulated a natural phenomena such as evolution and biology. They have attracted the research community thanks to their simplicity of mathematical modelling. From the most studied algorithms, the genetic algorithms (GA) are presented and are relied on mechanisms of selection, crossover and mutation to search for potential solutions [8]. Moreover, swarm intelligence algorithms, based on a group of agents in interaction, are able to optimize a problem through collaborative research. Evolutionary algorithms derive from the same source of inspiration applying different mechanisms to generate a new population. The difference among them can establish by adaptation to the treated optimization problem (continuous, discrete), likewise EA do not utilize the same concepts to evolve. Swarm intelligence algorithms can be distinguished by the movement of its agents in the search space according to the type of inspiration system (terrestrial, aquatic, micro-organism) [23]. In this context, the particle swarm optimization method (PSO) is characterized by a global and self-organized collective behavior. Firstly, the efficiency of PSO compared to other evolutionary algorithms lies essentially in the stability and adaptability of the swarm which is based on the particle displacements during the search process [7]. For example, the population generation in the GA can provide fluctualisations, during the selection of new descendants, caused by the elimination of certain individuals. However, the population of the artificial bee colony algorithm (ABC) are presented by three families [16]: workers, spectator bees and scout bees, which the interaction among them depend on a setting of parameters quite tricky [15]. Further, the population generation of the cuckoo search algorithm (CS) is based on reproducing new agents in terms of the current state of existing agents and Levy flight mechanism and also on the selection between these agents in order to survive [31, 12]. Its difficulty remains in the choice of the Levy flight strategy [11]. By imitating the social interaction behaviours of bird flocking and fish schooling, PSO was proposed [18], it is based on the interaction between particles to find the best solution. Because of its easy implementation and variety of applications in optimization problems [13], such as continuous, discrete and engineering problems [13], PSO has attracted the attention of the research community [6]. However, PSO has the ability to quickly converge, which can lead to stagnation at local optima. Besides, it does not explore the whole search space, especially in the case of solving optimization problems that have large dimensionality [10, 32, 30]. To improve this algorithm, many hybrid techniques and models, such as the island model, were introduced.

The main contributions of this study can be summarized as follows:

The improved IMPSO approach integrates IM techniques. The migration process is triggered after certain number of iterations. Different types of communication topologies among the sub-swarms with various numbers of islands are employed to explore the search space. To enhance island model strategies, a dynamic migration rate is applied based on the migration strategy derived from the Biogeography-Based Optimization algorithm. An innovative selection and replacement technique is adopted. It utilizes a tabu list to store the emigrant particles. Particles with a history of improvements are prioritized for selection to migrate to neighboring islands, while particles with a less favorable history are more likely to be replaced in the migration process.

This paper is divided into three sections:

The first section is dedicated to the background of the used methods, the particle swarm optimization, the island model, the tabu research and the Biogeography-Based Optimization. On the other, the second section focuses on the hybridization between the island model and some evolutionary algorithms such as genetic programming and cuckoo search. The third section defines our contributions to improve IMPSO. In the proposed approach, the BBO algorithm is used to make the migration rate work dynamically. Then, other selection and replacement strategies based on the tabu search are presented. After that, the different steps of the introduced approach escorted by its pseudo-code and its model are described. The fourth section is concerned our approach is validated using the CEC05 benchmark as continuous optimization problems. Subsequently, the obtained results of proposed approach are presented and are compared with other evolutionary algorithms.

Mono-objective optimization

The optimization problem can be formulated by an objective function to be optimized and a set of equality and inequality constraints to be met simultaneously:

Minimize

Where

PSO algorithm

The PSO is based on a population of candidate solutions called a swarm including candidate solutions or particles. In PSO, every particle, having a position in the search space, is a potential solution to the considered optimization problem. This space includes the set of all possible solutions to the optimization problem.

Each particle has a position and a velocity. The position

The particles interact and learn from each other, following the same simple rules, to find the best solution for the optimization problem. In addition to position and velocity, every particle has a memory that saves its best position. Thus, the movement of each member of the swarm is influenced by its personal experience and by the experience of its neighbours. In fact, the PSO algorithm records the common best experience among all the members of the swarm. This experience is called the global best.

In each iteration of PSO, the position and the velocity of each particle are updated according to this simple mechanism:

The particle moves towards the new position. It uses two components: the velocity and the previous position. The new velocity is created according to the previous velocity, personal best, and global best.

The mathematical model is described for the velocity and postion updating as follows:

For each iteration, the position of the particle is updated according to its previous position and current velocity. The velocity of each particle is also changed according to its personal information which is the best position found during its search and the best position found by the whole swarm. Furthermore,

The first studies based on the island model apply the genetic algorithms [27]. It has been proved that the strategies of island models can enhance remarkably the GA equilibrium between exploration and exploitation in the search space. Nowadays, the IM successfully used in other evolutionary algorithms such as PSO and cuckoo search algorithms [5].

The IM divides the population into subpopulations called islands; each of which finds, in the first step, works to find the best solution among its group of solutions. In the next step, it compares its result with those provided by other islands and replaces its least optimal solution by the best one obtained by its neighbours. Indeed, the IM has specific parameters used to select the candidate solutions in an island. Then, it applies a defined strategy (depending on the topology) to send solutions between two or more islands. However, the most strategy of IM is how the islands cooperate to find the optimum solution. Additionally, in the IM, migration, which is the exchange of solutions between the islands, helps the island get new information from its neighbours, compare it with its data and, finally, update its value if needed.

Characteristics of IM The island model strategy employs specific parameters: the number of islands, migration topology (the structure of interaction between the islands), migratory frequency (the number of migration occurrences), migratory rate (the ratio of migration between the islands), the type of migration (synchronous or asynchronous), the policy of migration (defines which individuals will be replaced from an island and changed during migration) and migratory flow ( the trajectory that emigrants follow). Migration topologies The migration topology establishes the communication links between the islands. It influences the final result of the algorithm. It can be static or dynamic [21].

The tabu search is an optimization method based on the history of search [26]. In fact, it has a memory called a tabu list where the last found solutions to which it refused to move are collected. The role of this memory is to allow choosing the best non-tabu neighbour. In the introduced approach, the tabu search is used to save the already sent solutions in order to avoid forwarding them again. Indeed, it depends on the tabu list length because, if the tabu list becomes full in the next iteration, the algorithm will revoke the tabu status from the first saved element on the list (i.e., remove it from the tabu list) and the new element will be saved in the last tabu position.

Biogeography based optimization

Biogeography is a science inspired from nature. It appeared since 1960 to analyse the geographical distribution of biological organisms. Besides, biogeography applications can be utilized to solve the optimization problems. Precisely, the BBO is a method inspired from the mathematics of biogeography [28]. In fact, the mathematical biogeography models explain the migration of species from one island to another, the development of new species and the disappearance of other ones. In the BBO, an island is any habitat separated geographically from the other similar regions.

In the biogeography, the fitness of each island is determined by their high suitability index (HIS), a measure of the quality of a candidate solution. Each island is represented by Suitability Index Variables (SIV). The best solution for the optimization problem is an island with a low number of species, which corresponds to an island with a large HSI.

The number of species existing on an island depends on the balance between the immigration rate of the new species and that of species already established on the island. In BBO, each habitat has its immigration rate

The maximum immigration rate I which is a constant value, The island size S, The swarm size SMAX is a user-defined parameter.

The emigration rate

While the performance of PSO has seen significant enhancements in recent years, it still possesses certain limitations. Like other Evolutionary Algorithms (EAs), this algorithm suffers from premature convergence because of the lack of diversity. To enhance its search performance, several PSO variants have been introduced. A dynamic neighbourhood-based switching PSO was developed, using a new velocity updating scheme relying on the adjustment of pbest and gbest in terms of a distance-based dynamic neighbourhood, in order to use all swarm evolution information [32]. To resolve the premature convergence of PSO algorithm, Tolabi et al. integrated different strategies including the division of the search space to find the promote zone, and the use of a probabilistic function to manipulate the inadequate particles [6]. PSO with aging leader and challengers (ALC-PSO) was developed in [9]. In ALC-PSO, the swarm’s leader possesses a lifespan that can be tailored by adjusting its leading power, with stronger leading power resulting in a longer leader lifespan. Meanwhile, other particles in the swarm, known as challengers, have the opportunity to assume leadership once the current leader has aged. If the leader maintains a high leading power, it continues to attract other particles; otherwise, new particles are allowed to compete for leadership. A modified PSO approach is develepped using adaptative strategies [20]. In the MPSO algorithm, a non-linear inertia weight based on chaos theory is introduced to effectively harmonize global exploration and local exploitation within PSO. Also stochastic and mainstream learning strategies are adopted to mitigate premature convergence.

The hybridization between island model and the evolutionary algorithms

Improvement of the island genetic programming

Genetic programming (GP) is considered as one of the most successful evolutionary computation algorithms [19]. Its initialized variables are represented under the form of a tree. In fact, it uses the same techniques as the genetic algorithm (crossover, mutation and selection). It has been used in several domains such as robotic, electrical engineering [14] and other image processing algorithms (e.g., image filtration) [17]. Nevertheless, GP has some weaknesses in terms of premature convergence to the local minimum. Therefore, to improve it, island model genetic programming based on frequent trees IMGP was proposed [24]. In this algorithm, the initialized possible solutions (or populations) were divided into sub-populations (or islands); each of which used the GP to calculate the fitness of its population. Then, it applied the migration strategy to exchange the information (random topology has shown the best migration strategy performance [24]). Moreover, this algorithm can be also enhanced by controlling the frequent tree and the local search [25].

Improvement of the island cuckoo search

CS is a metaheuristic algorithm that simulates how the cuckoo birds deal with their eggs by leaving them in other birds’ nests to grow their chance of survival. In short, there are two kinds of birds (cuckoo and host). The host birds either figure out the different eggs. At this point, the host bird must choose between throwing the egg away or leaving the nest and building a new one. Moreover, CS simulates the levy flight which is a random method that mimics the behaviours of some birds. It is used to select the arbitrary values [3]. For this reason, Island-based Cuckoo Search with Highly Disruptive Polynomial Mutation [5] was proposed to improve the CS algorithm by dividing the CS population into sub-populations and by replacing the levy flight method with another strategy called a polynomial mutation. Moreover, this hybridization provided better results, compared to the CS algorithm.

Improvement of the island particle swarm optimization

IMPSO is an algorithm proposed by [4]. It is based on the hybridization between the PSO algorithm and the island model strategy. The approach starts by dividing the whole swarm into sub-swarms and the IM’s parameters, such as the number of islands (number of sub-swarms), the island size and the arbitrary position and velocity of each particle, are initialized. In fact, the IMPSO calculates the fitness value of each particle. Then, it extracts the best position in all islands. Indeed, at each iteration, there is a new position computed for each particle. Subsequently, the algorithm compares this position with the personal best position. If the new calculated position (called

Other integrated strategies of IM are presented as below:

Migration rate: It represents the rate of the emigrant particles. Selection strategy: It is the method used to select the emigrant particles. There are many ways to select those particles. The most popular strategy considers the best particles of the current island (according to their fitness) and sends them to the neighbouring islands following the migration topology. Replacement strategy: After the selection step and the migration of the best particles toward the neighbouring islands, a replacement strategy should be applied to substitute some particles in the current island by the emigrant particles. As instance of these strategies, we can mention the replacement of the worst particles by the emigrant particles.

As stated previously, the island model particle swarm optimization algorithm (IMPSO) is a hybrid method combining the PSO and IM. This hybridization was established to improve the performance of the PSO by avoiding some of its weaknesses such as the premature convergence, which can lead to stagnation in local optima, and the lack of exploration in the search space, especially in the case of solving large-scale optimization problems. The IMPSO starts by dividing the population into sub-populations (islands) including a set of particles and having a position (a possible solution) in the search space and a memory to save its best-found position. Later, each island works alone to generate new solutions. In fact, the global algorithm has a variable called Gbest belonging to the whole algorithm to save the global best position. At the end of the search process, the algorithm changes the value of Gbest according to the best solution of the islands. In the migration step, every island communicates with its neighbours following a migration topology (chain, ring or torus), to improve its group by replacing its worst particles by emigrant particles collected from the neighbouring islands. This process depends on two major issues. The first one is the selection strategy applied to select the best current island solutions and send them to its neighbours. The second issue is the replacement of the worst solutions with the best sent ones. The migration policy uses another parameter, called rate, used to define the rate of the emigrant particles. The application of the proposed approach can be divided into two steps:

Establishing a dynamically migration rate by using the immigration strategy of the Biogeography Based Optimization (BBO).

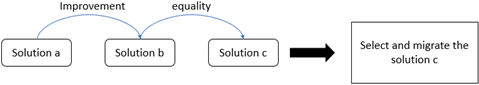

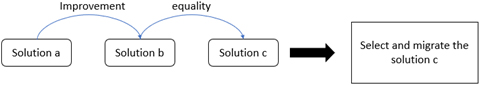

The first priority: Improvement-improvement selection.

The second priority: Improvement-equality selection.

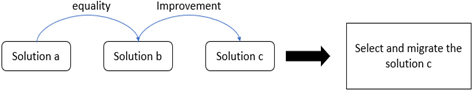

The third priority: Equality-improvement selection.

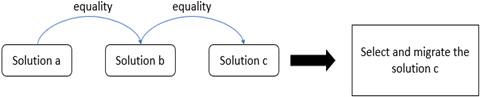

The fourth priority: Equality-equality selection.

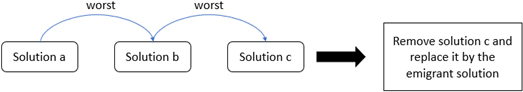

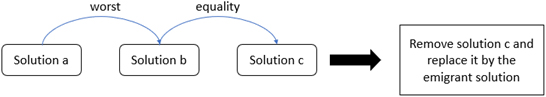

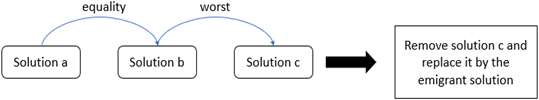

The first priority: Worst-worst replacement. Applying a new selection and replacement strategies, depending on the history search of the particles: particles having an improvement history are more likely to be selected to emigrate to neighbours and particles having a bad history are more likely to be replaced during the migration process.

The Biogeography Based Optimization BBO, the tabu search algorithm and how their principles are integrated and presented in IMPSO approach. For the selection strategy, four priorities are proposed for choosing the immigrant solution (Figs 1–4). For the replacement strategy as well, three priorities are adapted (Figs 5–7).

The second priority: Worst-equality replacement.

The third priority: Equality-worst replacement.

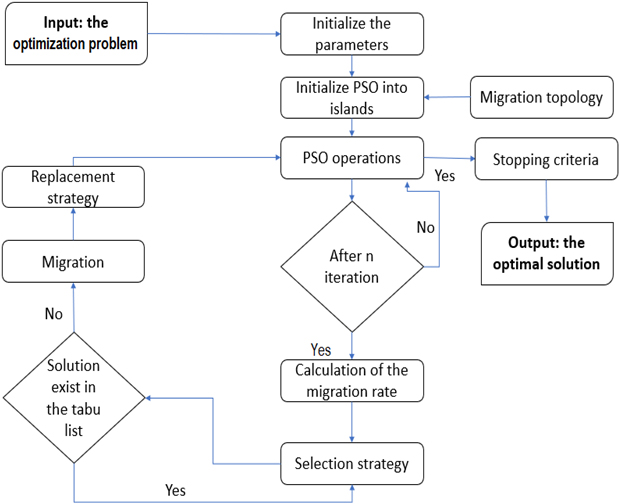

Improved IMPSO flowchart.

The Algorithm 1 and Fig. 8 illustrate the new version of IMPSO starting by initializing, first, the objective function and the decision variables (the problem to solve) and, then, the parameters of the IMPSO algorithm (swarm size, number of iterations, number of islands and migration topology).

Input: The optimization problem Initialize the IMPSO parameters (the number of islands, the number of iterations, the migration strategy (topology), the tabu list size).

The benchmark functions

To validate the proposed approach, 25 test functions, representing the collection of benchmark functions of IEEE-CEC2005 [29] and having different properties (from F1 to F25),were chosen. These benchmark functions are separable and the rotated ones contain a large number of local minima. They can be divided into four groups defined below:

The first five functions (from F1 to F5) represent the unimodal and shifted functions. The next seven functions (from F6 to F12) represent the basic multimodal and shifted functions. The next two functions ( F13 and F14) represent the expanded multimodal functions. The next eleven functions (from F15 to F25) represent the hybrid composition functions.

The CEC competition has a set of priorities and conditions that should be respected. Among these properties, the number of runs must be 25; it means each result is a sum of 25 executions divided by 25, which provides an average of 25 runs. where the average error rate

IMPSO the initial version versus the approach

In this part, we compare the obtained results with those given by the initial version of the IMPSO in order to evaluate the performance of the algorithm. Tables 1–3 show the comparison of the average error rate among the new and old version of IMPSO using the chain, ring and torus topology. Eval refers to the evaluation of the introduced approach compared to the original IMPSO; it contains (

Average error rate obtained by IMPSO using chain topology

Average error rate obtained by IMPSO using chain topology

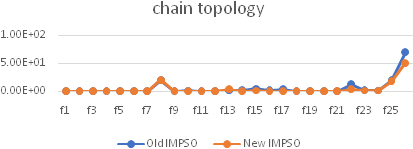

Convergence rate obtained by IMPSO algorithms using chain topology.

In the chain topology, 21 out of 25 functions showed a remarkable positive improvement in the first demonstration, against14 out of 25 positive improvements, in the second demonstration, and 16 from 25 positive improvements in the third demonstration. It was also obvious that the difference between the two approaches was too small in the negative improvements.

Figure 9 illustrates the difference between the initial and the new versions of IMPSO in terms of convergence rate. Each one represents the best-found result (best average error rate) in the three demonstrations based on the chain topology. The sum of the old error rate

Average error rate obtained by IMPSO using ring topology

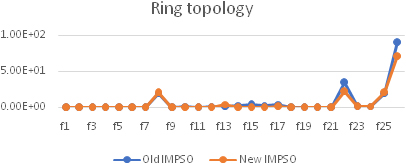

In the ring topology, 17 functions out of 25 demonstrated considerable positive improvement in the first demonstration, against 16 out of 25 positive improvements, in the second demonstration, and 16 out of 25 positive improvements in the third demonstration. It is worth to note that the difference between the two approaches was too small in the negative improvements.

Convergence rate obtained by IMPSO algorithms ring topology.

Figure 10 illustrates the difference between the initial version of IMPSO and the new one. Each result represents the best-found result (best average error rate) among the three demonstrations in the ring topology. From the sum of the old error rate

Comparison of the average error rate between new and old IMPSO using the torus topology

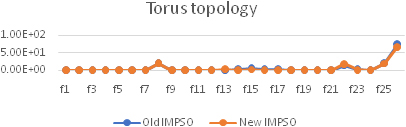

In the torus topology, 19 out of 25 functions exhibited a positive improvement in the first demonstration, against 19 out of10 positive improvements, in the second demonstration, and 13 out of 25 positive improvements in the third demonstration. Besides, we notice that the difference between the two approaches was too small in the negative improvements.

Convergence rate obtained by IMPSO algorithms torus topology.

Figure 11 illustrates the difference between the initial version of IMPSO and the new one in terms of the convergence rate. It represents the best-found result (best average error rate) among the three demonstrations in the torus topology. The sum of the old error rate

From the above-presented findings, we may deduce that our approach gave better results than the initial IMPSO because, in most cases, it provided more positive improvement in terms of the best average error rate Indeed, in the negative improvement, the difference between the two approaches was too small. It is also clear that, in the three topologies, the developed approach did not provide acceptable result in function f8, which is the same problem found in the initial version of IMPSO. Besides, in the function f15, an interesting improvement was observed, especially in the first demonstration with the chain topology, and the sum of the best average error rate in the three topologies provided by the new version was less than that given by the old version.

The new IMPSO algorithm has demonstrated its effectiveness by achieving the best convergence rates when utilizing various migration topologies (chain, ring, and torus). In fact, new IMPSO attains the lowest average error rates for the majority of multimodal optimization problems. The diverse migration topologies employed facilitate significant movement of solution migrants between different islands, promoting thorough exploration of the search space.

Comparison of the average error rate between new and old IMPSO using the torus topology

Comparison of the average error rate between new and old IMPSO using the torus topology

To compare the proposed approach performance, four evolutionary algorithms from the state of the art PSO, GA, ABC and CS were adopted. The EAs were applied to solve continuous optimization problems using the set benchmark functions CEC’05 (F1 to F25). The stopping criterion for the PSO algorithm is fixed at 500 iterations while for the GA, ABC and CS algorithms, it is fixed at 20000 evaluations of the objective function. Table 4 presents the average error resulting from 25 test functions obtained by comparative algorithms. The best values are written in bold after 25 executions of each algorithm.

Obviously, the ABC algorithm obtained the best average error rate values for the unimodal functions F1 and F2. While, for the other three functions, the new version of IMPSO outperformed PSO’11 by two out of three functions. For the two groups of multimodal and extended test functions, new IMPSO, PSO’11 and GA are considered the most efficient for reaching the global optimum. Except that, CS algorithm achieved a good result for the function F7. On the group of hybrid composite functions, the new IMPSO had better performance than ABC and CS algorithms.

For the 25 continuous optimization functions, new IMPSO and PSO’11 achieve good results on 15 benchmark functions, compared to GA, ABC and CS algorithms, especially on benchmarks with difficult geometric characteristics such as multimodality, non- separability and discontinuity. Therefore, the proposed approach exhibited powerful ability in solving NP-hard optimization problems. It is also clear that the different proposed island model strategies are feasible and effective in improving the PSO ability to balance diversity and convergence.

While new IMPSO has demonstrated outstanding performance for solving continuous optimization problems, it lacks strong theoretical support for its convergence and stability. Similarly, the results of the proposed approach on functions F8, F22, and F25 are not ideal, revealing a performance gap in MPSO. Our future work will be dedicated to further enhance the performance of IMPSO.

This paper presented an adaptive multi-strategy PSO algorithm used to solve continuous optimization problems. The improved island model techniques were embedded into the PSO algorithm in order to combine the convergence rate of PSO with the enhanced exploration performed by the IM parameters. The improved IMPSO integrates the different island model parameters: migration topology, selection strategy replacement strategy, migration frequency and migration rate. The BBO algorithm was introduced to make the migration rate more dynamic. New selection and replacement strategies based on the tabu search were also introduced. The IM consists in launching several sub-populations by applying PSO algorithm and the islands which evolve independently but interact at certain number of iterations. Subsequently, the proposed approach was validated employing different test functions CEC’05. Afterwards, the obtained results are illustrated and compared with those provided by the initial version of IMPSO and other well-known metaheuristic algorithms. This comparative study demonstrated that the introduced approach showed a remarkable improvement in most benchmark functions. For future work, we will apply the developed approaches to classify the massive large-scale, spatial-temporal dependent and uncertain data. In this area, image classification has many problems such as feature selection and parameter optimization. We intend also to propose and examine other island model techniques such as the reinforcement learning and to apply it for solving large-scale optimization problems.