Abstract

Supervised and unsupervised machine learning methods are widely used in data mining and big data analytics. The assessment of coronavirus disease using COVID-19 health data has recently emerged as a potential application area for these methods. This study identifies significant trends in a range of supervised and unsupervised machine learning procedures, in particular K-Means clustering, and examines their function and use in disease assessment. We propose structural risk minimization, under which several issues affect classification efficiency: changes in the training data as characteristics of the input space, the natural environment, and the structure of the classifier and the learning process. Addressing these three issues gives a broader perspective on the coronavirus trajectory-cluster data prediction experiment, helps control linear classification capability, and provides indications for each individual. K-Means clustering is an effective way to uncover the inherent structure of coronavirus data. A hyperplane is used to separate unknown variables in the database for the disease detection process. The proposed MapReduce programming model for K-Means maps the data with the help of the hyperplane, using distance-based nearest-neighbor classification to group patient records into subgroups. Linear regression and logistic regression on coronavirus data provide estimates and support the tracing of disease records.

Keywords

Introduction

In modern life, people suffer from a wide variety of diseases, for which they routinely seek medical care. Medical professionals today rely on an assortment of clinical tests to diagnose and treat diseases. Clinical pathology is an important part of the study of the causes of disease and a major area of modern medicine and diagnosis. There are different kinds of pathology, including general medical pathology, anatomical pathology, dermatopathology, cytopathology, forensic pathology, and neuropathology. Because pathology is an important part of the medical field, it will continue to grow in the near future. With new diseases emerging, innovative improvements are needed to diagnose, treat, and classify COVID-19. Coronavirus disease is an infectious disease caused by a recently discovered coronavirus. Most people infected with the virus experience mild to moderate respiratory illness and recover without requiring special treatment. In this context, pathologists look to genetics-based laboratory testing and diagnosis. Humanity is facing an epidemic, and methods such as statistics-based machine learning can help address it. This approach can be used to store a database of the people affected at each hospital and to estimate the number of people affected. It is therefore useful for preliminary investigation in hospitals, and it can inform health care organizations about the affected areas. Infectious diseases such as coronavirus disease are among the major diseases causing problems in society. These infections affect economic conditions, public health, and mortality today.

In this study, an extensive search was carried out to identify studies that apply more than one supervised machine learning method to disease prediction. Machine learning applied to the prediction of epidemic data offers statistical inference that is useful to society. The coronavirus is not a living organism but genetic material (RNA) encased in a protective layer of phospholipid (fat). When it is absorbed by cells of the ocular, nasal, or buccal mucosa, it takes over their genetic machinery and turns them into cells that multiply the virus. Since the virus is not a living organism, it is not killed but degrades on its own. The degradation time depends on humidity, temperature, and the type of material on which it lies. The coronavirus is very fragile: the only thing that protects it is the thin outer layer of fat. A good remedy is therefore any soap or detergent, because the foam breaks up the fat, which is why hands should be rubbed for at least 20 seconds to make plenty of foam. Once the fat layer is dissolved, the virus particle falls apart on its own. Heat melts the fat. That is why it is advisable to use water above 25

COVID-19 patient data can be analyzed, extracted, interpreted, and adapted for prediction. Data mining is the process of analyzing large volumes of data stored in various databases, such as data warehouses, to discover patterns that are understandable, previously unknown, valid, and useful. In data mining, classification and clustering methods are used to extract hidden patterns from very large databases. The benefits of data mining include faster retrieval of data or information, retrieval of knowledge from several databases, detection of hidden and previously unknown patterns, reduced complexity, and time savings. Data mining techniques collect relevant information from structured patient data, which then helps to achieve specific benefits. The purpose of a data mining effort is usually to create a descriptive or a predictive model. A descriptive model presents the main features of the data set in summarized form. The distinguishing feature of a predictive model is that it allows the unknown value of a specific target variable to be estimated. Both predictive and descriptive goals can be achieved using a variety of data mining methods. Data mining and machine learning offer broadly applicable tools: they can be applied to high-dimensional inputs such as numeric values, text, and images. Clustering is a form of unsupervised learning and includes classic techniques such as K-Means. Unsupervised learning can extract important features from input data without additional data or guidance. These features can then be provided as input to subsequent supervised learning, which allows more effective learning for intricate tasks than training on raw data. A typical example is the extraction of features from X-ray images.

These are important capabilities for scaling machine learning, because such features would otherwise have to be handcrafted by human domain experts in image processing, who are a scarce, slow, and very expensive resource, which often limits what machine learning can achieve. One of the most straightforward methods for feature extraction is to use an autoencoder. An autoencoder is a simple encoder-decoder architecture in which the encoder encodes the input into a compressed representation. The decoder then attempts to reconstruct the original input from the compressed representation. In this chapter, supervised and unsupervised machine learning procedures are treated as widely used methods in the field of data mining. Disease assessment using COVID-19 health data has recently emerged as a potential application area for these methods. This study identifies important trends in a variety of supervised machine learning procedures and their function and use in disease assessment.

Demographics of COVID-19 using Naive Bayes

In the COVID-19 trial, various teams of computational investigators applied the Naïve Bayes classification method to a single set of patient data gathered from hundreds of patients with severe coronavirus disease. The trial is run on a platform for crowdsourced studies that focus on emerging computational tools for solving biomedical problems. The competition is large and long-standing. Severe coronavirus disease presented a worthy challenge because there is no single genetic cause of the disease, which makes it hard to select treatments for patients suffering from severe symptoms such as difficulty breathing, persistent pain or pressure in the chest, bluish lips or face, and sudden confusion. Each team is presented with training data from patients that includes demographic information, such as age and gender, as well as more complex data describing signaling protein pathways believed to play a role in the disease. Assumed a demographic patients information record

Now, the record
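As an illustration of how Naïve Bayes treats a demographic record, the following minimal Python sketch classifies a patient from an age group and a gender. The records, the feature values, and the `severe`/`mild` labels are invented for illustration and are not taken from the trial data; the use of Laplace (add-one) smoothing is likewise an assumption.

```python
from collections import Counter, defaultdict

# Hypothetical toy records: (age_group, gender) -> outcome label.
TRAIN = [
    (("60+", "M"), "severe"),
    (("60+", "F"), "severe"),
    (("60+", "M"), "severe"),
    (("18-39", "F"), "mild"),
    (("18-39", "M"), "mild"),
    (("40-59", "F"), "mild"),
    (("40-59", "M"), "severe"),
    (("18-39", "F"), "mild"),
]

def train_naive_bayes(records):
    """Count classes and per-class feature values."""
    class_counts = Counter(label for _, label in records)
    feature_counts = defaultdict(Counter)  # (feature index, label) -> value counts
    values = defaultdict(set)              # feature index -> distinct values seen
    for features, label in records:
        for i, v in enumerate(features):
            feature_counts[(i, label)][v] += 1
            values[i].add(v)
    return class_counts, feature_counts, values

def predict(model, features):
    """Return the normalized posterior probability of each class."""
    class_counts, feature_counts, values = model
    total = sum(class_counts.values())
    scores = {}
    for label, n in class_counts.items():
        score = n / total  # prior P(class)
        for i, v in enumerate(features):
            # Laplace-smoothed P(feature value | class), assuming independence.
            score *= (feature_counts[(i, label)][v] + 1) / (n + len(values[i]))
        scores[label] = score
    norm = sum(scores.values())
    return {label: s / norm for label, s in scores.items()}

model = train_naive_bayes(TRAIN)
posterior = predict(model, ("60+", "F"))
```

Here `posterior` maps each label to its estimated probability for a hypothetical female patient aged 60 or over; the class with the largest value is the predicted one.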

Analysis of trajectory of COVID-19 data clustering network

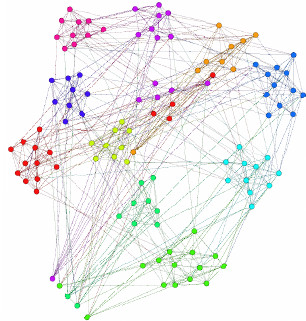

COVID-19 patients trajectory clustering network.

Clustering is an effective way to uncover the inherent structure and hidden patterns in COVID-19 data. With the development of GPS devices, a very large number of features is recorded from moving sources. This method is relevant for concentrating on the areas affected by the disease.

With this technique, we first identify the moving objects. The similarities among these objects are determined from their trajectories. Finally, the result obtained from the trajectories is checked for correctness. Patients with coronavirus infection are recognized by these means, and a cluster is formed for easy identification. As the network diagram above shows, the trajectory clustering network groups districts of Andhra Pradesh, such as West Godavari, East Godavari, and Krishna, one by one.

K-Means clustering is a simple and easily implemented clustering approach. How many clusters (or

Initialize the cluster centroids.

Repeat until convergence:

For every

For each
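The K-Means loop outlined above can be sketched in Python as follows. This is a minimal illustration, not the chapter's implementation: the sample points are invented, squared Euclidean distance is assumed, and the first `k` points are used as initial centroids purely to keep the example deterministic.

```python
def squared_distance(p, q):
    return sum((a - b) ** 2 for a, b in zip(p, q))

def kmeans(points, k, max_iterations=100):
    # Initialization (assumption): take the first k points as centroids.
    centroids = list(points[:k])
    assignment = None
    for _ in range(max_iterations):
        # Assignment step: attach every point to its nearest centroid.
        new_assignment = [
            min(range(k), key=lambda c: squared_distance(p, centroids[c]))
            for p in points
        ]
        if new_assignment == assignment:
            break  # converged: no point changed cluster
        assignment = new_assignment
        # Update step: move every centroid to the mean of its members.
        for c in range(k):
            members = [p for p, a in zip(points, assignment) if a == c]
            if members:
                centroids[c] = tuple(sum(x) / len(members) for x in zip(*members))
    return centroids, assignment

# Hypothetical 2-D feature vectors for six patient records.
points = [(1.0, 1.0), (8.0, 8.0), (1.2, 0.8), (8.2, 7.9), (0.9, 1.1), (7.8, 8.1)]
centroids, assignment = kmeans(points, k=2)
```

In practice the initial centroids are usually chosen at random or with a seeding scheme such as k-means++, and the result can depend on that choice.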

Let us select the cluster family of classifiers

Hyperplane

The objective of the hyperplane (Fredrick Jury, 2002) is to separate unknown variables in the COVID-19 database for the disease detection process. Each cluster of input patient data is taken from the input devices. The COVID-19 patient input is then used for classification, which measures the features of each patient stored in the local database. Database

Association of COVID-19 cluster data using MapReduce based hyperplane

COVID-19 dataset for India state wise

A software utility works across the network of trajectories in parallel to handle large volumes of COVID-19 data and process them using the MapReduce procedure. Hyperplane-based MapReduce is a programming framework that allows distributed and parallel processing of large data sets in a distributed environment. The first step in COVID-19 data processing using MapReduce is the Mapper class. Here, the RecordReader processes each input COVID-19 patient record and generates the corresponding key-value pair. The Mapper saves this intermediate patient data to the local repository. The input split is the logical representation of the COVID-19 data. It represents a chunk of work that is handled by a single map task in the hyperplane-based MapReduce program. The RecordReader interacts with the COVID-19 patient data input split and converts the obtained data into key-value pairs. The intermediate output generated by the mapper is fed to the reducer, which processes it and produces the final disease output, which is then saved in the hyperplane. The main component of a hyperplane-based MapReduce job is the Driver class. It is responsible for setting up a MapReduce job to run in Hadoop. In it, we specify the names of the Mapper and Reducer classes along with their data types and their respective job names.
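The map, shuffle, and reduce phases described above can be imitated in a few lines of single-process Python. This sketch only mimics the data flow and does not use Hadoop; the record layout (`patient_id,state,status`), the sample records, and the function names are assumptions made for illustration.

```python
from collections import defaultdict

# Hypothetical input records, one line per patient: "patient_id,state,status".
RECORDS = [
    "p1,Andhra Pradesh,confirmed",
    "p2,Andhra Pradesh,recovered",
    "p3,Kerala,confirmed",
    "p4,Andhra Pradesh,confirmed",
    "p5,Kerala,deceased",
]

def mapper(line):
    """Turn one input record into a ((state, status), 1) key-value pair."""
    _, state, status = line.split(",")
    yield (state, status), 1

def shuffle(pairs):
    """Group intermediate values by key, as the framework does between phases."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped

def reducer(key, values):
    """Sum the counts collected for one key."""
    return key, sum(values)

def run_job(records):
    """Play the role of the driver: wire mapper, shuffle, and reducer together."""
    intermediate = (pair for line in records for pair in mapper(line))
    return dict(reducer(k, v) for k, v in shuffle(intermediate).items())

counts = run_job(RECORDS)
```

On a real cluster the mapper and reducer would run as separate tasks on different nodes, and the driver would submit the job rather than call the functions directly.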

This section describes an approach to well-known business problems with the help of Libra, which can be used to perform machine learning operations on the Hadoop platform to overcome some memory limitations. Two important machine learning techniques are considered: linear regression and logistic regression. Linear regression is one of the most important machine learning techniques for determining the relationship between target variables and explanatory variables. We use it to estimate target variables in numerical form. To understand the two types of regression, we first need to define target variables and explanatory variables. Target variables: the variables in the problem whose values are to be estimated are called "target variables." Explanatory variables: variables that help to estimate the values of the target variables are called "explanatory variables."

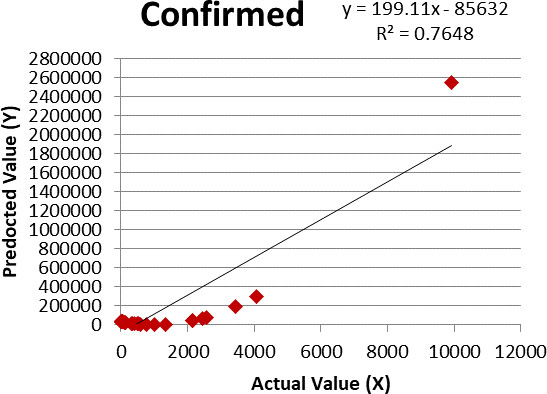

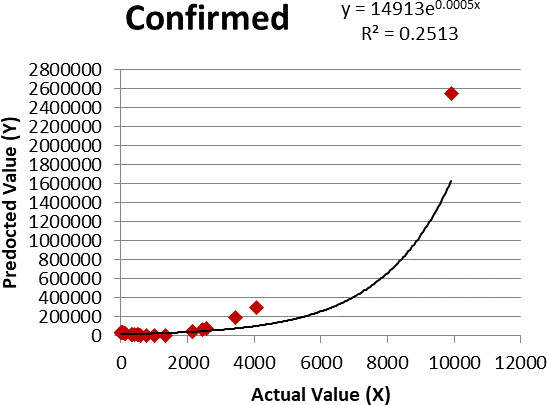

Linear regression for confirmed cases.

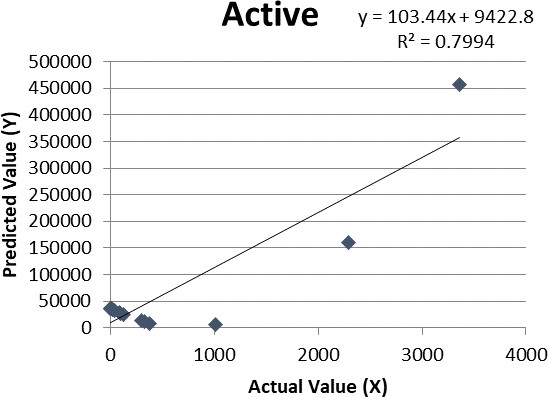

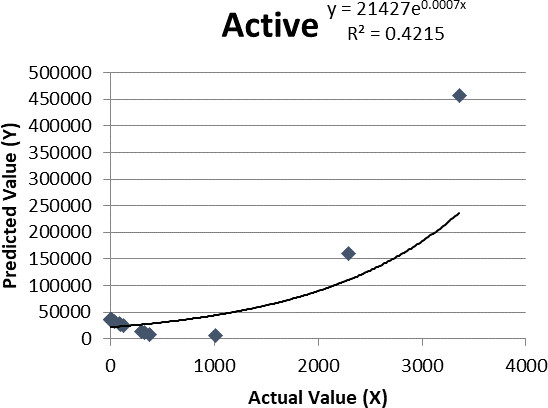

Linear regression for active cases.

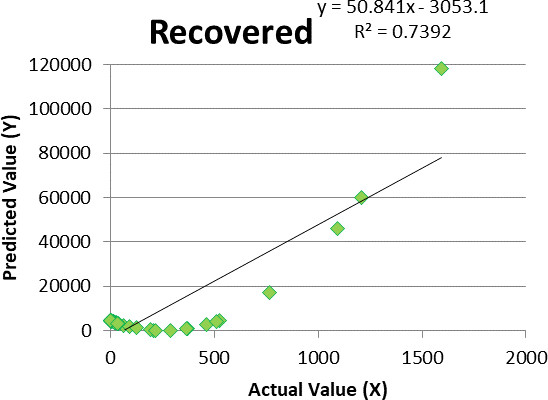

Further formulas are needed to calculate the slope and the intercept of the regression line. The slope of the regression line is given below:

The intercept point of regression is given by:
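For a simple linear regression $y = a + b\,x$ fitted by least squares to $n$ observations $(x_i, y_i)$, the standard expressions for the slope $b$ and the intercept $a$ are as follows. The symbols $a$, $b$, $\bar{x}$, and $\bar{y}$ are assumptions chosen here for illustration, since the chapter's own notation is not shown.

$$
b = \frac{\sum_{i=1}^{n} (x_i - \bar{x})(y_i - \bar{y})}{\sum_{i=1}^{n} (x_i - \bar{x})^2},
\qquad
a = \bar{y} - b\,\bar{x}
$$

Here $\bar{x}$ and $\bar{y}$ denote the sample means of the explanatory and target variables.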

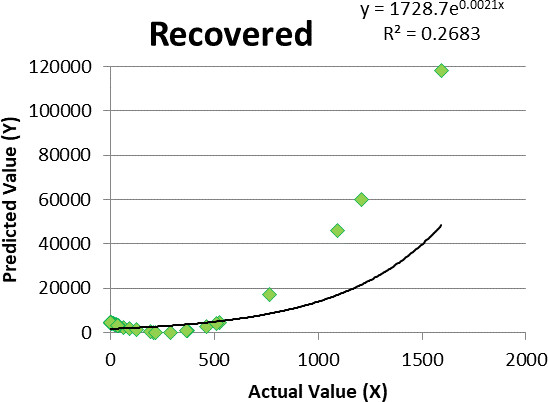

Linear regression for recovered cases.

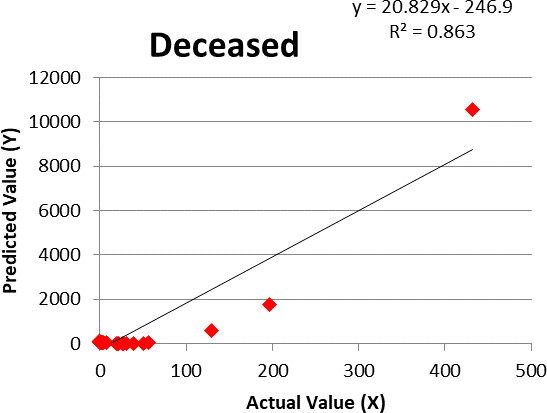

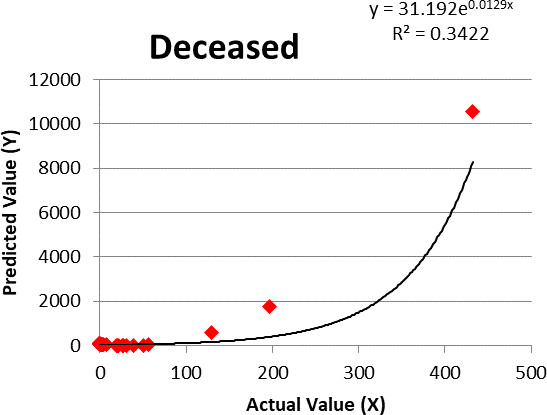

Linear regression for deceased cases.

Here,

These are the values obtained by taking

Static approaches result values

# The MapReduce job can produce XT*Y Mapreduce ( Input
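One common way to fit a linear regression in a MapReduce style is to let each map task compute partial sums of $X^{T}X$ and $X^{T}Y$ for its chunk of rows, add the partial results in the reduce step, and then solve the small resulting system. The Python sketch below illustrates that idea for one explanatory variable plus an intercept; it is an assumption about the intended computation, not the chapter's code, and the data and function names are invented.

```python
def map_chunk(chunk):
    """Partial X^T X (2x2) and X^T y (length 2) for rows (x, y), with X row = [1, x]."""
    xtx = [[0.0, 0.0], [0.0, 0.0]]
    xty = [0.0, 0.0]
    for x, y in chunk:
        row = (1.0, x)
        for i in range(2):
            xty[i] += row[i] * y
            for j in range(2):
                xtx[i][j] += row[i] * row[j]
    return xtx, xty

def reduce_partials(partials):
    """Add up the partial matrices and vectors produced by the map tasks."""
    xtx = [[0.0, 0.0], [0.0, 0.0]]
    xty = [0.0, 0.0]
    for p_xtx, p_xty in partials:
        for i in range(2):
            xty[i] += p_xty[i]
            for j in range(2):
                xtx[i][j] += p_xtx[i][j]
    return xtx, xty

def solve_2x2(a, b):
    """Solve a @ [intercept, slope] = b with Cramer's rule."""
    det = a[0][0] * a[1][1] - a[0][1] * a[1][0]
    intercept = (b[0] * a[1][1] - a[0][1] * b[1]) / det
    slope = (a[0][0] * b[1] - a[1][0] * b[0]) / det
    return intercept, slope

# Hypothetical data: day index versus case count, generated as y = 10 + 5x.
data = [(float(d), 10.0 + 5.0 * d) for d in range(8)]
chunks = [data[:3], data[3:6], data[6:]]  # each chunk stands for one input split
xtx, xty = reduce_partials(map_chunk(c) for c in chunks)
intercept, slope = solve_2x2(xtx, xty)
```

Because the partial sums are simply added, the chunks can be processed in any order and on any number of nodes without changing the fitted coefficients.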

Logistic regression for confirmed cases.

Logistic regression for active cases.

Logistic regression for recovered cases.

Logistic regression for deceased cases.

The probability formula is as follows:

Logit(
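For reference, the standard definition of the logit and the probability it implies can be written as follows. The symbols $p$ (the probability of the positive outcome), $x_1, \dots, x_k$ (the explanatory variables), and $\beta_0, \dots, \beta_k$ (the coefficients) are assumptions introduced here for illustration.

$$
\operatorname{logit}(p) = \ln\frac{p}{1 - p} = \beta_0 + \beta_1 x_1 + \dots + \beta_k x_k,
\qquad
p = \frac{1}{1 + e^{-(\beta_0 + \beta_1 x_1 + \dots + \beta_k x_k)}}
$$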

The algorithm takes four main parameters, namely input, iterations, dims, and alpha, as follows:

Input: This is an input dataset. Iterations: This is the fixed number of iterations for calculating the gradient. Dims: This is the dimension of input variables. Alpha: This is the learning rate.

Let us see how to develop the logistic regression function.

# MapReduce job – Here MapReduce function executing for logistic regression Logistic.regression
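A minimal single-machine sketch of such a function in Python, using batch gradient descent, might look as follows. The parameter names follow the four parameters listed above; the function names, the toy data, and the choice of averaging the gradient over all rows are assumptions for illustration.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def logistic_regression(input_rows, iterations, dims, alpha):
    """Fit weights by gradient descent.

    input_rows: list of (features, label); features has `dims` numbers, label is 0 or 1.
    iterations: fixed number of gradient steps.
    alpha:      learning rate.
    """
    weights = [0.0] * (dims + 1)  # weights[0] is the intercept
    n = len(input_rows)
    for _ in range(iterations):
        gradient = [0.0] * (dims + 1)
        # This per-row sum is the part a map task would compute for its split.
        for features, label in input_rows:
            x = [1.0] + list(features)
            error = sigmoid(sum(w * xi for w, xi in zip(weights, x))) - label
            for j in range(dims + 1):
                gradient[j] += error * x[j]
        # The reduce step would add the partial gradients before this update.
        weights = [w - alpha * g / n for w, g in zip(weights, gradient)]
    return weights

def predict_probability(weights, features):
    x = [1.0] + list(features)
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)))

# Hypothetical toy data: one scaled feature, label 1 marks the positive outcome.
rows = [((0.1,), 0), ((0.2,), 0), ((0.3,), 0), ((0.7,), 1), ((0.8,), 1), ((0.9,), 1)]
weights = logistic_regression(rows, iterations=5000, dims=1, alpha=0.5)
```

After training, `predict_probability(weights, (0.9,))` gives the estimated probability of the positive outcome for a new record with feature value 0.9.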

The COVID-19 application uses linear regression and logistic regression to assess coronavirus data and to trace coronavirus disease records from various sources and campaigns. Consider a statistical technique for implementing a regression model on the provided dataset. Assume that a given number of statistical units is available.

Its formula is as follows:

Here,

Conclusion

This paper applied structural risk minimization to data for linear classification and then formed trajectory clusters. The data prediction trial applies to each individual and is supported by data search, outlier detection, and sample rearrangement. We have included it here to assess data search, outlier detection, and pattern detection. Finally, three issues have been addressed to develop a broader view of the coronavirus trajectory data prediction trial, to control linear classification efficiency, and to provide indications for each individual.