Abstract

Human motion analysis has been a common thread across modern and early medicine. While medicine evolves, analysis of movement disorders is mostly based on clinical presentation and trained observers making subjective assessments using clinical rating scales. Currently, the field of computer vision has seen exponential growth and successful medical applications. While this has been the case, neurology, for the most part, has not embraced digital movement analysis. There are many reasons for this including: the limited size of labeled datasets, accuracy and nontransparent nature of neural networks, and potential legal and ethical concerns. We hypothesize that a number of opportunities are made available by advancements in computer vision that will enable digitization of human form, movements, and will represent them synthetically in 3D. Representing human movements within synthetic body models will potentially pave the way towards objective standardized digital movement disorder diagnosis and building sharable open-source datasets from such processed videos. We provide a hypothesis of this emerging field and describe how clinicians and computer scientists can navigate this new space. Such digital movement capturing methods will be important for both machine learning-based diagnosis and computer vision-aided clinical assessment. It would also supplement face-to-face clinical visits and be used for longitudinal monitoring and remote diagnosis.

Keywords

INTRODUCTION

The field of computer vision has advanced considerably and approaches that recognize faces, handwriting, and digitize human form are now routine [1–4]. Yet, despite these tools becoming relatively commonplace, we are still reluctant to embrace them during the clinical assessment of movement disorders. There are many potential reasons for the slow adoption of computer vision and machine learning in movement disorders assessment. First, the size of training, validation, and testing datasets for movement disorders is limited. Due to privacy issues, individual patient data will require extensive data usage agreements before sharing within aggregate datasets [5]. Consequently, it requires either building an accurate diagnosis system from datasets with only a few examples or producing a representation of the patient’s data without privacy concerns. Second, there is concern about the robustness of clinical diagnosis systems. For example, medical machine learning systems can be misled by small perturbations like rotation or some invisible additive noise for humans [6]. A well-known example is that a panda will be recognized as a gibbon with high confidence after adding noise not perceptible to humans [7]. For Parkinson’s disease (PD), automated diagnosis based on detecting dysphonia dropped to 37% from 93% after similar in-perceptible perturbation [8]. Third, there are potential legal and ethical concerns about the black-box nature of machine learning and end-to-end solutions that bypass clinicians [9].

The objectives of our hypothesis are to present the emerging field of computer vision and provide cases for future adoption in clinical assessment of movement disorders. Using a synthetic patient interface where we represent human movements within a framework of 3D realistic human mesh-volumetric body models [10, 11], we hope that the size of available training, validation, and testing datasets can be increased (by sharing) and this will enable standardized digital movement disorder diagnosis. Importantly, capture of human 3D pose within an articulated 3D body model provides both path to visualization and quantification since body keypoints coordinates are available for further analysis and automated clinical severity scoring.

Digital capture of human movements

The most instinctual usage of video recording is to capture movements. In clinical diagnosis, it would be as a permanent record of a motor assessment in response to the commands of a clinical expert. While there are methods to directly measure parkinsonian movements using accelerometers and other sensors that hold considerable promise [12, 13], the procedure of data capture is complex and requires specific devices with often limited bandwidth. The raw signals (such as angle, velocity, and acceleration) do not directly lead to a diagnosis of movement disorders and are hard to visualize and directly interpret by clinicians [14]. Therefore, approaches that align with current observer-based clinical motor assessment such as video recordings and motion capture are potentially more instinctive for clinicians than sensor-based methods. While such videos would be a necessary adjunct to the clinical record, standardization of parameters such as lighting, camera angle, field of view, and concern around privacy also may limit utility. Notwithstanding the drawbacks of video recording, advances in computer vision provide the means of capturing human form within a digital environment [15–17]. Here, previous research has established that biological motion and pose (disposition in space) can be represented using coordinates based on body keypoints (limbs, digits, etc.) [18]. There is also the possibility to represent these poses within the context of skeletal or volumetric body models [10, 19]. We suggest that human body synthetic models may provide a more generic solution enabling anonymization and standardization across centers necessary for future use of digitized movements as a clinical biomarker.

What’s important for the diagnosis of movement disorders?

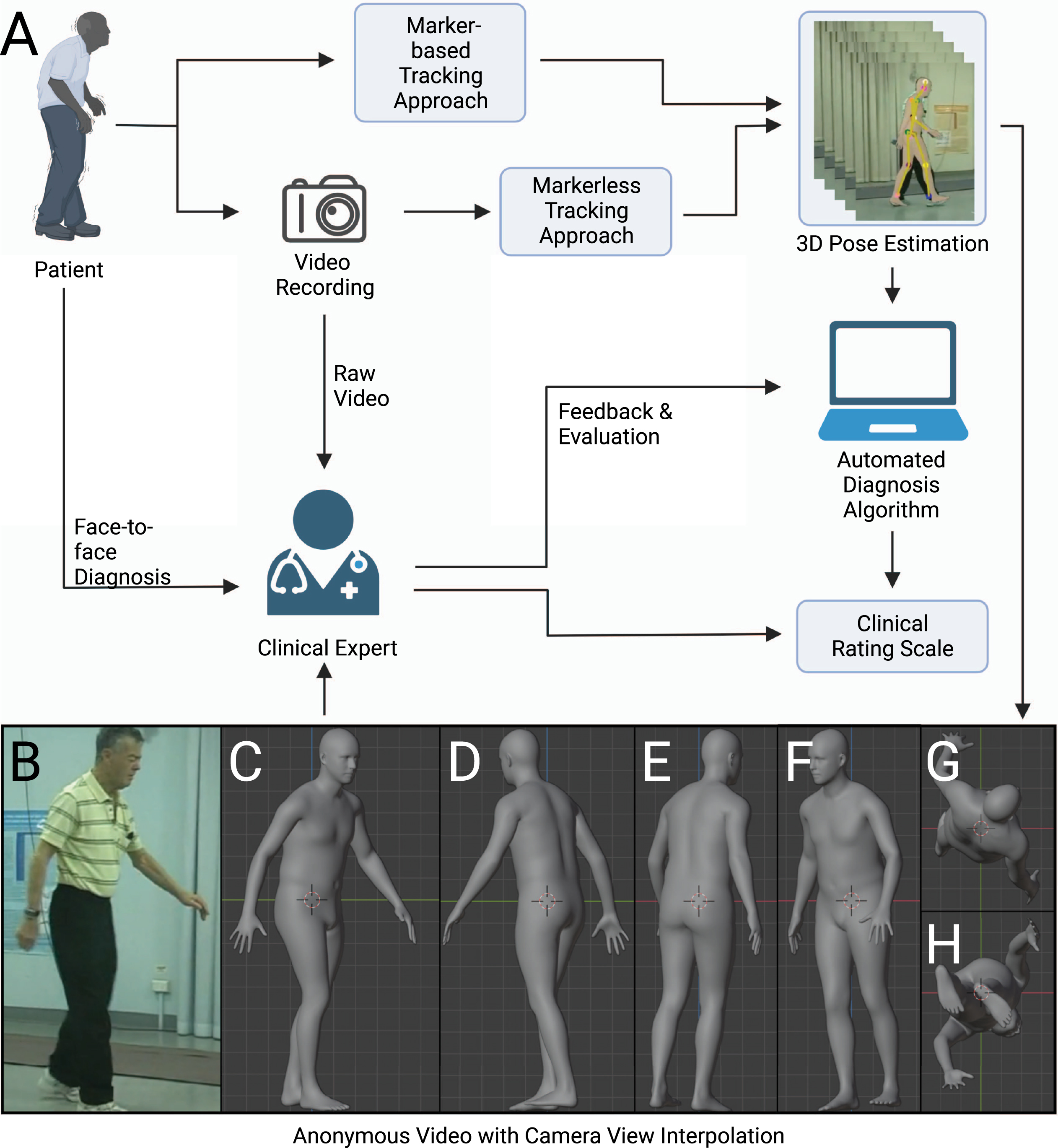

The diagnosis of movement disorders such as PD is rooted in patient and family history, symptom onset, and physical exam features [20]. Particular importance is placed on qualitative features of resting tremor, bradykinesia, rigidity, and gait disturbance as these symptoms can be followed to evaluate disease progression and treatment response using scoring by human observers. Typically, clinical movement disorder rating scales have clinical severity scores for both whole body posture and walking movements, but also more fine features such as movements of hands and fingers (tapping). To illustrate these points, we examine the Movement Disorder Society Unified Parkinson’s Disease Rating Scale (MDS-UPDRS) [21]. Computer vision and augmentation (interpolated 3D views) through the use of synthetic human form could be particularly useful for motor examination (part III) of the MDS-UPDRS (Fig. 1). Initial scales (MDS-UPDRS Sections 3.1-3.2) involve speech and facial expressions. Facial expressions are also potentially well suited to automatic analysis using higher resolution models [22, 23] or specific face models [3]. Some aspects of motor scales, such as Motor Examination in MDS-UPDRS Section 3.3, require assessment of rigidity using a clinical observer to manipulate limbs and the neck. Since they require observer action and patient feedback, it is unlikely that these components of motor scales could be fully automated. Computer vision could potentially contribute to most of the other aspects: on the finest level, cameras optimized to report finger tapping would be necessary for Motor Examination in MDS-UPDRS Section 3.4 and lower resolution full body capture for Motor Examination in MDS-UPDRS Sections 3.5 and 3.6 that involve aspects of locomotion and transitioning from seated to standing posture. Re-creation of complete clinical rating scales from a single camera view is not possible as it will require subjects to perform tasks not suited to computer vision-based analysis or will involve different levels of resolution. Conceivably, a subset of movement-related phenomena that are well suited to computer vision could be used to predict the overall MDS-UPDRS score [24] or treatment response [25] providing some form of clinical guidance but still requiring interactive tests in other domains.

Scheme for clinical assessment with any movement-related clinical rating scale. The movement-related clinical rating scale has a pivotal role in the diagnosis of neurological diseases such as Parkinson’s disease. There are several possible ways to conduct an assessment of clinical rating scale: face-to-face assessment, remote assessment based on raw video recording, remote assessment based on anonymous videos, and automated assessment with algorithms. We argue that the anonymous video is of benefit to privacy protection, assessment, and algorithm design.

Video monitoring of Parkinson’s disease to refine clinical diagnosis

There are many challenges in idiopathic parkinsonism, such as differential diagnosis from Parkinson-plus syndromes [26, 27]. While most physicians may readily notice tremor, bradykinesia, and postural instability, there is currently no test that can effectively confirm the diagnosis of idiopathic PD. At initial assessment, the main focus is put on confirming cardinal signs in PD [20]. However, the clinical course of the illness may reveal it is not PD, requiring that the clinical presentation be periodically reviewed to confirm the accuracy of the diagnosis [20, 28]. When PD diagnoses are checked by autopsy, on average, movement disorders experts are found to be 80% accurate at initial assessment and 84% accurate after they have refined their diagnoses at follow-up examinations [29]. Capture of human 3D pose within an articulated 3D body model can facilitate the remote diagnosis and longitudinal monitoring of motor function to promote diagnostic accuracy.

Towards the diagnosis of movement disorders based on video recording

A typical diagnosis procedure of movement disorders based on video recording could involve the following steps: capturing human motion from videos, analyzing motion trajectories with diagnosis algorithms or experts, and evaluating the result in light of the patient’s clinical history and presentation (Fig. 1). Here we review the steps that form the basis for diagnosis within any movement-related clinical rating scale. We also introduce the use of synthetic human form that enables the expert to be remote or diagnose at a later time, as the anonymized data can be stored and sent without risks to privacy [30].

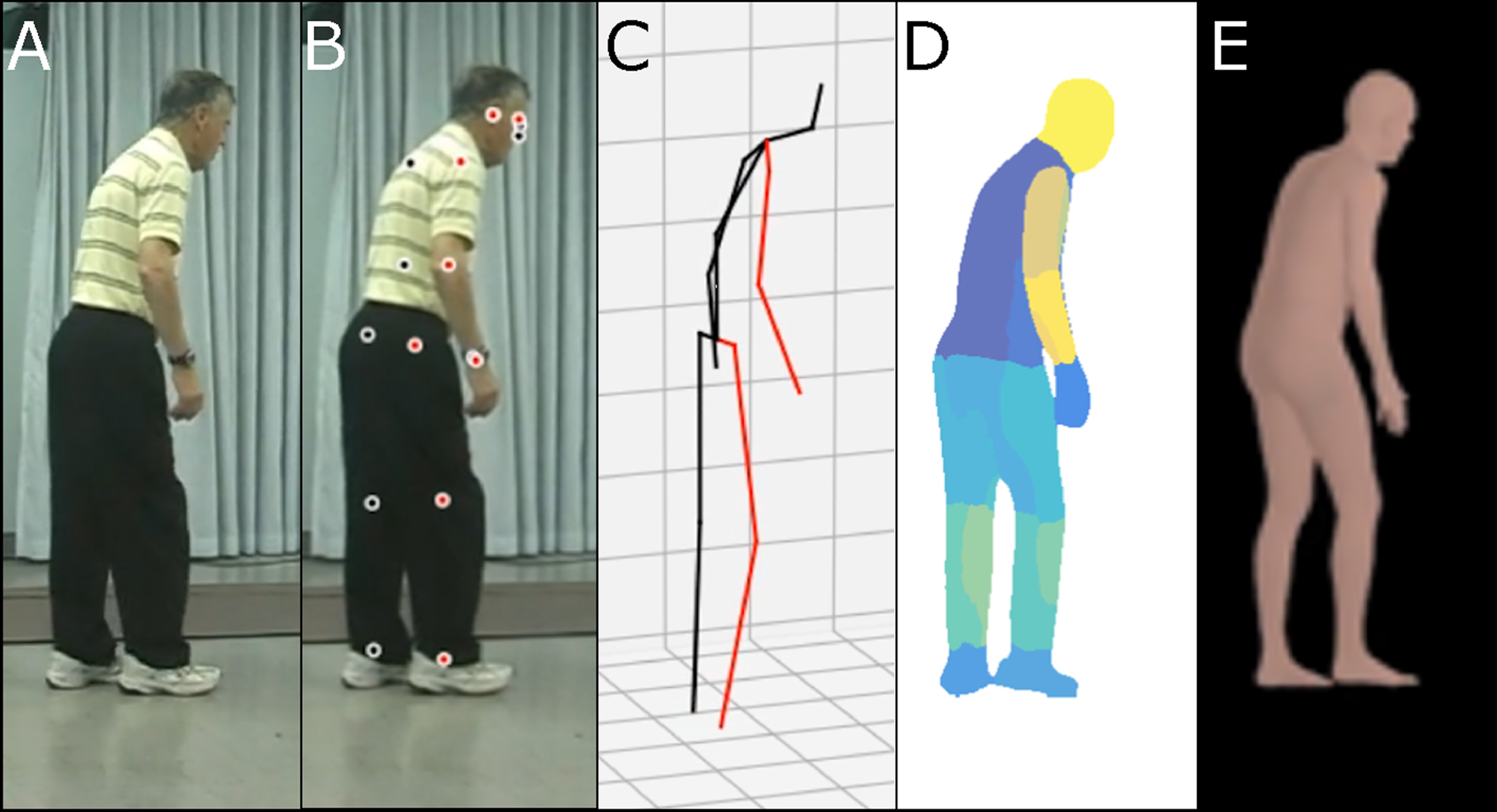

A variety of methods have been proposed to capture human pose and motion necessary for diagnosis of movement disorders. In this hypothesis, the term ‘human pose estimator’ will be used in its broadest sense to refer to all computer vision-based algorithms to capture human pose and motion. A human pose estimator can be categorized into marker-based and markerless approaches. A marker-based approach requires subjects to wear fiducial markers on a specialized bodysuit or attached to their clothes [31, 32]. Disadvantages of this approach include demands for markers themselves, additional complexity, as well as concerns around sterilization and use across subjects. The markerless approach involves using a pre-trained deep neural network (see Supplementary Material for a glossary) to automatically identify joints and other keypoints of subjects [33, 34]. Although it can be slightly less accurate than the marker-based approach, advantages of markerless approaches include generalizability to different clothing and background and ease of use. A markerless approach can be classified based on how it virtually represents the human body: including a skeletal model with keypoints [2], a 2D human body model [35], or a 3D human body model (volumetric model) [1, 37] (Fig. 2). Human synthetic body models are potentially adaptable to any population including elderly patients with movement disorders. One of the most widely used models, SMPL, was learned from the CAESAR dataset. The CAESAR dataset consists of a total of 3800 3D body scans collected from American and European civilians whose age ranges from 18 to 65 [38]. The latest upgraded version of the SMPL model, STAR, is able to account for differences in body mass index by learning from both CAESAR and SizeUSA datasets [11]. The SizeUSA dataset was collected from 2845 male and 6434 females with ages varying between 18 to 66 + providing diversity that may better match clinical populations [39]. The output of a human pose estimator is movement data (a series of vectors) that can be overlaid on the original raw video (see Supplementary Video 1). The movement data can then be analyzed to examine aspects of motor behaviors. Reducing video data to a series of vectors greatly simplifies the complexity and the computational cost of analysis.

Illustration of synthetic human body modeling methods. A) Raw video image from open-source examples of Parkinson’s disease characterization through postural assessment. This video was obtained with permission from publisher Wiley [57]. B) Keypoints overlaid on video images. C) Skeletal representation. D) Body-parts-based representation. E) 3D volumetric representation.

We have focused on models necessary for automated diagnosis. It is also necessary to emphasize the importance of training, validation, and testing dataset scale and quality. Potentially, the “no free lunch” theorem holds for any algorithm [40], where any improvement in performance on one task must be paid for by lesser performance on other tasks. In contrast, the scale of datasets is a universal driving factor of performance, and it has some unique features [41]. First, the scale of data is often more important than the quality of the data. Second, there is a positive logarithmic relationship between the performance of computer vision algorithms and the scale of datasets. In summary, the scale of the training dataset guarantees the lower bound of the algorithm’s performance. On the other hand, garbage in, garbage out: the quality of the dataset limits the upper bound of the algorithm’s performance. A positive correlation was found between the resolution of video recording and performance of pose estimation for normal human subjects [42]. Motion blur and occlusion can also result in false pose detection and should be avoided or compensated for with both normal and PD datasets [43]. In general, the quality of any training, validation, and testing dataset can be assessed from the following dimensions: accuracy, completeness, redundancy, reliability, consistency, usefulness, and confidentiality [44]. In the context of PD datasets, these criteria could refer to the accuracy of 3D pose, completeness of patient information, reliability of MDS-UPDRS score, consistency in data format, etc.

In human pose estimation, large-scale training, validation, and testing datasets use marker-based tracking and triangulation from multiple cameras to place subjects within a 3D coordinate system and are typically captured in a specialized studio. The most widely used datasets for 3D human pose estimation model training, validation, and testing are: HumanEva, Human3.6M, MPI-INF-3DHP, TotalCapture, 3DPW, and AMASS [31, 45–50]. These datasets include images, videos, and annotations. Annotations can include: 2D poses, positions of keypoints in 3D space, types of activities, and 3D body scans corresponding to images (Table 1). To date, one of the major obstacles of applying any computer vision approach to movement disorders related data is access to training, validation and testing datasets that reflect both the scale and diversity of expected clinical populations.

Summary of datasets on 3D human pose estimation

Comparing the performance of human pose estimators

Neural network pose estimators are trained, validated, and tested on a large example set collected in a controlled studio. To be generalizable, they need to be adapted to apply to human motion data, collected from any scene, performed by different objects. Domain adaptation can broadly be defined as the ability of a model trained on one dataset to still perform well when employed on different but relevant datasets. Existing research has recognized the critical role of domain adaptation and investigated the performance of 3D human pose estimators in the context of wild settings (non-studio or controlled environment) [51, 52], but in the absence of motor impairments.

In most recent studies, deep neural networks have dominated the field of human pose estimation [34]. Those algorithms can be coarsely classified according to their input and output. The explicit structure of deep neural networks can be designed to work with different inputs and outputs. Generally, the input of a deep neural network can be one single frame (frame based) or a sequence of successive frames (video based). The output can be keypoints that depict joints of the human body (skeletal), parameters that control an existing 3D model of the human body (volumetric model based) or pointcloud which directly represent the human body in 3D space (pre-built modelfree).

To compare the different algorithms that could be applied to video of PD patients, we choose densepose3D (pre-built model free), VIBE (video based), and PyMAF (frame based) as these represent the most accurate tools for volumetric based methods of human pose estimation to date [1, 37]. As a comparison, we report accuracy with VideoPose3D, a well-used skeletal method (keypoints model) that can serve as a benchmark measurement [2]. We list the performance and features of different 3D human pose estimators [1, 37] on large scale human 3D pose datasets of healthy people [45] in Table 2. The performance was measured using mean per joint position error (MPJPE) in mm on Human3.6M datasets (see Supplementary Material for a glossary). Human3.6M datasets consist of 3.6 million different human poses taken during 15 daily activities like walking, sitting, eating, etc. [45]. The poses were collected with a motion capture system and its corresponding image recorded from 4 camera views. The motions in Human3.6M datasets were performed by 11 healthy professional actors, 5 female and 6 male, whose BMI ranges from 17 to 29. Poses from 2 male and 2 female motions were kept as testing datasets. A limitation is that demographic information like age and ethnicity was not reported. It is significant that most reported error measurements are relatively small compared to expected changes during movements such as walking or stooped posture that characterize motor impairments, with the exception of tremor and bradykinesia (see below). Nonetheless, it is conceivable that error values reported for commonly used algorithms may not necessarily be generalizable to all subjects, in particular those affected by movement disorders such as PD. Therefore, we also evaluated the performance of different pose estimators [1, 53–56] on available open-source movement disorders videos [57] collected under “wild settings” that could represent video captured without specialized equipment within a clinical environment (Table 3, see Supplementary Video 1). The open-source movement disorders videos from a male subject late 70 s with advanced PD with sporadic responses to oral levodopa. The videos were taken in the “off” state. In the absence of these motion-capture studios and 3D ground truth (see Supplementary Material for a glossary), we projected the information from 3D back to 2D and examined its alignment relative to 2D images. We adapted two metrics in 2D human pose estimation to assess the reprojection accuracy, the percentage of correct keypoints (PCK) and the area under the curve (AUC) [58, 59]. The accuracy of different human 3D pose estimators (assessed using reprojection) was up to 90% when compared to the human ground truth or a computer vision-based algorithm DeepLabCut (DLC) [53] and, as expected, was lower for all methods when expressed as the more stringent AUC (Table 3). It will be important to estimate how well the volumetric models trained on large groups of normal individuals transfer to PD patients; so far, our results with 2D video to 3D volumetric models (Table 3) indicate that this will work. These results must be interpreted with caution because the pose data is likely limited to only gait and gross body posture analysis. It has previously been observed that rating tremor and bradykinesia with video recording can be difficult for even well-trained human raters [60, 61]. In contrast, gait and postural stability analysis based on video recording has strong agreement between human raters [60, 61]. This difficulty may be due to technology limitations in video recording using webcam or cell phone cameras: such as camera motion blur, single view angle, resolution, etc. However, a full discussion of camera related issues is beyond the scope of this study.

Performance of 3D human pose estimators for normal human subjects based on published values

Calculated reprojection accuracy of different human pose estimators

Challenges and opportunities with digital movement capture and analysis.

There are also multiple challenges associated with video analysis. Although all three model types (skeletal models, 2D human body models, and volumetric models) (Fig. 2C-E) satisfy ethical concerns around patient anonymity, only volumetric models are particularly suited for qualitative clinical diagnosis by expert observers because they have the appearance of human subjects. However, volumetric models could suffer from inherent biases and assumptions about shape, which are built into the model. In terms of computational load, the skeletal model requires less processing and would be the most efficient, but lacks human appearance. Overall, by having data within the context of 3D models, observers have additional flexibility with respect to viewing angle for potentially more accurate scoring, or the ability to match view angles from different studies or sites. It is noteworthy that artifacts could be introduced due to camera occlusion and other factors. Furthermore, tremors and small repetitive body movements associated with PD would be within the error of most human pose estimators using the most advanced commercial video capture systems [25]. Alternatively, depth-sensing motion ability (like Azure Kinect, Intel RealSense, etc.) [62, 63] or motion sensing devices such as accelerometers [12, 13] might be better suited for this use case and used as an adjuvant to video scoring. As human movement recording equipment and pose estimation algorithms advance, we anticipate these techniques can be used to collect visualizable, de-identified biomarkers of human movement disorders. First, digitally captured movement disorder data allows anonymization of datasets which will be a vehicle for data sharing and future collaboration by placing the data within a common synthetic format. It can relieve the problem of insufficient data during training of neural networks. Second, it provides a vehicle for re-evaluation by multiple clinical raters. Previous studies have shown interrater variability within the MDS-UPDRS: human raters often disagree on the exact severity of a single patient [24, 64]. With digitally captured data and the use of volumetric models, it will be possible to potentially elicit second opinions on ambiguous findings from re-analysis by human raters. Third, collection of raw video data and then conversion to a synthetic format may help remove bias clinicians could have if they were trained on a patient population which was not necessarily representative of all current patients. For example, synthetic models can help remove the influence of factors due to the clothing or lighting associated with particular patient image acquisition. Conversion of movements to a standard body plan may also reduce ambiguities around a person of extreme variation within body mass index (BMI), although synthetic models also exist that can represent BMI. Fourth, video capture and the use of robust synthetic methods for anonymization could potentially provide the opportunity to capture variation in movement chronically within the patient homes. These assessments combined with robust algorithms to differentiate a normal from disease-based movements could be useful in detection of early pre-manifest events which would be otherwise rare to observe within a short typical clinical assessment.

There are more challenges in the later stages of PD, as most PD patients eventually need levodopa and later develop levodopa-induced fluctuations and dyskinesias [28, 65]. In this case, the aim is to reduce PD symptoms while controlling fluctuations in the effect of the medication [28]. Instead of periodically following up in the clinic, a better solution may be longitudinal monitoring of motor fluctuations and dyskinesias in patient-own environments. However, some limitations are expected in patient-own environments during longitudinal monitoring. First, the major cause of occlusion will be furniture instead of clinicians and other patients. Second, more variability in illumination is caused by daylight instead of institutional lighting. Third, the recording devices in patient-own environments are consumer-grade devices like webcams, cellphones, and surveillance cameras. The resolution and sampling rate may result in inability to capture high frequency movement like tremors. Similarly, long shutter speeds can lead to motion blur. To relieve those problems to a certain degree, multiple cameras can be employed for better results. We suggest that patients and providers could fix with tripods and standardized pipelines for video capture aided by known fiducial objects. In the absence of calibration, advanced machine learning approaches could approximate spatial calibration and perspective from known structures such as walls and the orientation of the patient within the assumed vertical axis.

Going from movement data to diagnosis and treatment

Once human poses and movements (pose sequences) are obtained, algorithms can further align findings to clinical rating scales and alert the clinician to pay attention to the abnormal movements presented in the video. To date, several studies have had some success for aligning movement data with clinical rating scales [66–68]. To address rater variability, one can employ focal neural networks and introduce a regularization (see Supplementary Material for a glossary) term such as a rater confusion estimation which encodes the rating habits and patterns of different raters [24]. Using this approach, an automated video-based PD diagnosis algorithm reached an accuracy of 72% for consistency with the majority rater vote and an accuracy of 84% for consistency with at least one of all raters when predicting MDS-UPDRS scores for gait and finger tapping. Although recent work suggests markerless video approaches have had some success for PD [24, 69], overall accuracy could be improved when compared to sensor-based approaches [12, 70]. We should emphasize that there are minimal potential barriers for application as most models are pre-trained requiring little user input.

Accurate diagnosis of PD requires assessment of both dynamic properties of human pose such as gait analysis, but also static properties like postural deformities and dystonia. For example, in the early stages of PD, rigidity is often asymmetrical and tends to affect the neck and shoulder muscles prior to the muscles of the extremities [71]. The continuous contraction of muscles may cause typical posture of the body [20, 72]. We assert that static postural changes would be ideally quantified using automated methods that could potentially be less subject to limitations around bandwidth associated with motion capture. Previous work in pose estimation was able to identify differences within subjects based on individual static images [73, 74]. The patient may also manifest mask-like facial expression (hypomimia, a reduced degree of facial expression) [20]. The facial expression is not easy to track by keypoints-based methods (that mark discrete body parts), but recent expressive body recovery methods, such as PIXIE [75], show promising results that can reconstruct an expressive body with detailed face shape and hand articulation from a single image.

Current automated systems assist diagnosis instead of bypassing clinicians and efforts to improve their interpretability have been minimal. Intuition, pattern recognition, and clinical training are an important part of any movement disorders diagnosis. We emphasize that the use of synthetic data does not exclude the use of supervised machine learning approaches that have been trained based on labels derived from the intuition of clinical experts. As digital movement capture methods become more routine, more data will be gathered within common formats. By combining these evolving datasets with existing everyday human activities datasets like MPII and Human3.6M [45, 76], it makes measuring the deviation from normal movements plausible. It is conceivable that this could be used as a screening tool to assist clinician diagnosis by highlighting suspicious movements, and examining at-risk individuals leading to earlier symptom identification, and potential lifestyle or treatment options being implemented proactively. It also benefits the development of a human interpretable algorithm in quantitative diagnosis. Although machine learning models have been successfully applied in medical imaging, these methods often fail to convince users because of a lack of transparency, interpretability, and visualization. Human interpretability (see Supplementary Material for a glossary) is fundamental to developing a reliable and understandable diagnosis algorithm for clinical use since the user might be misled by incorrect prediction of a black box system [77, 78]. Interpretability can be enhanced when the algorithm can weight model features based on known human body parts [79]. With a transparent and interpretable algorithm, users can decide whether the prediction for a body part of interest is reasonable or not. However, human interpretability is hard to quantify. We suggest the volumetric representation could be used as a visualization tool during the collection of human feedback, such as anonymized evaluation by expert raters.

As the disease advances, deep brain stimulation (DBS) has been used to reduce motor symptoms in severe cases where drugs are ineffective and cause side effects [28, 80]. In this case, the video-based 3D body model approach can be used to create body maps of motor changes for a patient and can serve as a visualization tool for physicians and open the opportunity for remote modulation of DBS parameters.

Conclusion and future directions

We anticipate that the use of synthetic human form may aid the uptake of movement data as an open-source biomarker. Synthetic human form also provides an ideal vehicle for the generation of augmented training data for use within next-generation computer vision algorithms [81–83]. Animal studies have already taken advantage of a synthetic mouse model [84] to generate training data for pose estimation. In other work, a synthetic full body drosophila model [85] permits virtual force measurements. We anticipate that human 3D body models will be both tools for addressing relevant physiological questions concerning human form and providing specific forms of training, validation and testing datasets that lead to improved quantification of both normal function and disease.

Footnotes

ACKNOWLEDGMENTS

This work was supported by Canadian Institutes of Health Research (CIHR) Foundation Grant FDN-143209 and project grant PJT-180631 to T.H.M. THM was also supported by the Brain Canada Neurophotonics Platform, a Heart and Stroke Foundation of Canada grant in aid, and a Leducq Foundation grant and a National Science and Engineering Research Council of Canada Discovery grant 2022-03723. HR was also supported by a National Science and Engineering Research Council of Canada Discovery grant. This work was supported by resources made available through the Dynamic Brain Circuits cluster and the NeuroImaging and NeuroComputation Centre at the UBC Djavad Mowafaghian Centre for Brain Health (RRID SCR_019086) and made use of the DataBinge forum. Dongsheng Xiao was supported by Michael Smith Foundation for Health Research. Hao Hu was supported by the Canadian Open Neuroscience Platform. We thank Nicole Cheung for collating open-source patient videos and Pankaj Gupta for assistance with segmentation. We thank Jeff LeDue for initial discussions on this concept and Lynn Raymond and Daniel Ramandi for critically reading the manuscript. ![]() was created with BioRender.com.

was created with BioRender.com.

CONFLICT OF INTEREST

The authors have no conflict of interest to report.