Abstract

A key aim of the HighNESS project for the European Spallation Source is to enable cutting-edge particle physics experiments. This volume presents a conceptual design report for the NNBAR experiment. NNBAR would exploit a new cold lower moderator to make the first search in over thirty years for free neutrons converting to anti-neutrons. The observation of such a baryon-number-violating signature would be of fundamental significance and tackle open questions in modern physics, including the origin of the matter-antimatter asymmetry. This report shows the design of the beamline, supermirror focusing system, magnetic and radiation shielding, and anti-neutron detector necessary for the experiment. A range of simulation programs are employed to quantify the performance of the experiment and show how background can be suppressed. For a search with full background suppression, a sensitivity improvement of three orders of magnitude is expected, as compared with the previous search. Civil engineering studies for the NNBAR beamline are also shown, as is a costing model for the experiment.

List of acronyms

See Table 1.


The European Spallation Source (ESS) [102,147] will be the world’s brightest neutron source. To exploit the unique potential of the ESS for fundamental physics, the HIBEAM/NNBAR collaboration is planning a two-stage program of high precision searches for neutron conversions in channels that violate baryon number (

The HIBEAM/NNBAR program fits well within the future experimental physics landscape. The construction of the ESS makes possible an ultra-high sensitivity probe of baryon number violation (BNV) with free neutrons that is not available at other laboratories. Furthermore, tests of the symmetry protecting baryon number and searches for dark sector candidates are topical and of long term importance. They are described as essential activities in the 2020 Update to the European Particle Physics Strategy [192].

An observation of BNV via neutron oscillations would be a discovery of fundamental significance [148]. It would address a number of key, open questions in modern physics including the origin of the observed matter-antimatter asymmetry (baryogenesis) [18,36,38], dark matter [55,56], and non-zero neutrino masses [141,169,171]. It would also falsify the Standard Model (SM) of particle physics [135]. Neutron oscillations occur routinely in proposed extensions to the SM [45,73,103,145].

A key Figure of Merit (FOM) for a free

Here, N is the number of free neutrons arriving at a thin annihilation target after a propagation time t. The FOM is proportional to the probability of a signal event being produced. The FOM is valid for neutrons travelling in a low magnetic field. The opposite sign of the magnetic dipole moments of the neutron and antineutron implies that the Earth’s magnetic field can break the degeneracy between these particles and thereby suppress a transition.
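As a rough illustration of how a figure of merit of this kind can be evaluated from simulated neutrons, the sketch below assumes the commonly quoted form FOM = Σ w·t², where w is the statistical weight of a neutron reaching the target and t its propagation time. Both this functional form and the function name `figure_of_merit` are assumptions made for illustration; they are not taken from the collaboration's software.

```python
from typing import Iterable, Tuple


def figure_of_merit(neutrons: Iterable[Tuple[float, float]]) -> float:
    """Return the sum of weight * time**2 over neutrons reaching the target.

    Each entry is (weight, propagation_time_s). The quadratic dependence on
    time is the assumed form of the FOM, not a quantity read from the report.
    """
    return sum(w * t * t for w, t in neutrons)


if __name__ == "__main__":
    # Hypothetical sample of three weighted neutron tracks.
    sample = [(1.0, 0.20), (2.0, 0.25), (0.5, 0.30)]
    print("FOM (arbitrary units):", figure_of_merit(sample))
```

Under this assumed form, doubling the propagation time of every neutron quadruples the FOM, whereas doubling the intensity only doubles it, which is why a long flight path is so valuable.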

The transformation of a neutron to antineutron during transit would be observed with a detector that measures the products of the antineutron annihilation in the target. Together with determinations of the efficiency of observing an antineutron annihilation and background suppression, the FOM is a key indicator of the discovery potential.

As can be seen by the form of Equation (1), a high-sensitivity search requires a large intensity and long neutron propagation time. This has driven the design of the experiment. As described in Volume I of the HighNESS Conceptual Design Report [161], the provision of a bespoke liquid deuterium (

This paper is organised as follows. Section 3 provides an executive summary of the work and design choices. In Section 4 the scientific motivation of the project is described. Section 5 summarises previous searches. Simulation software used in this work is described in Section 6. In place of dedicated sections describing the ESS and the

Executive summary of the experiment and design choices

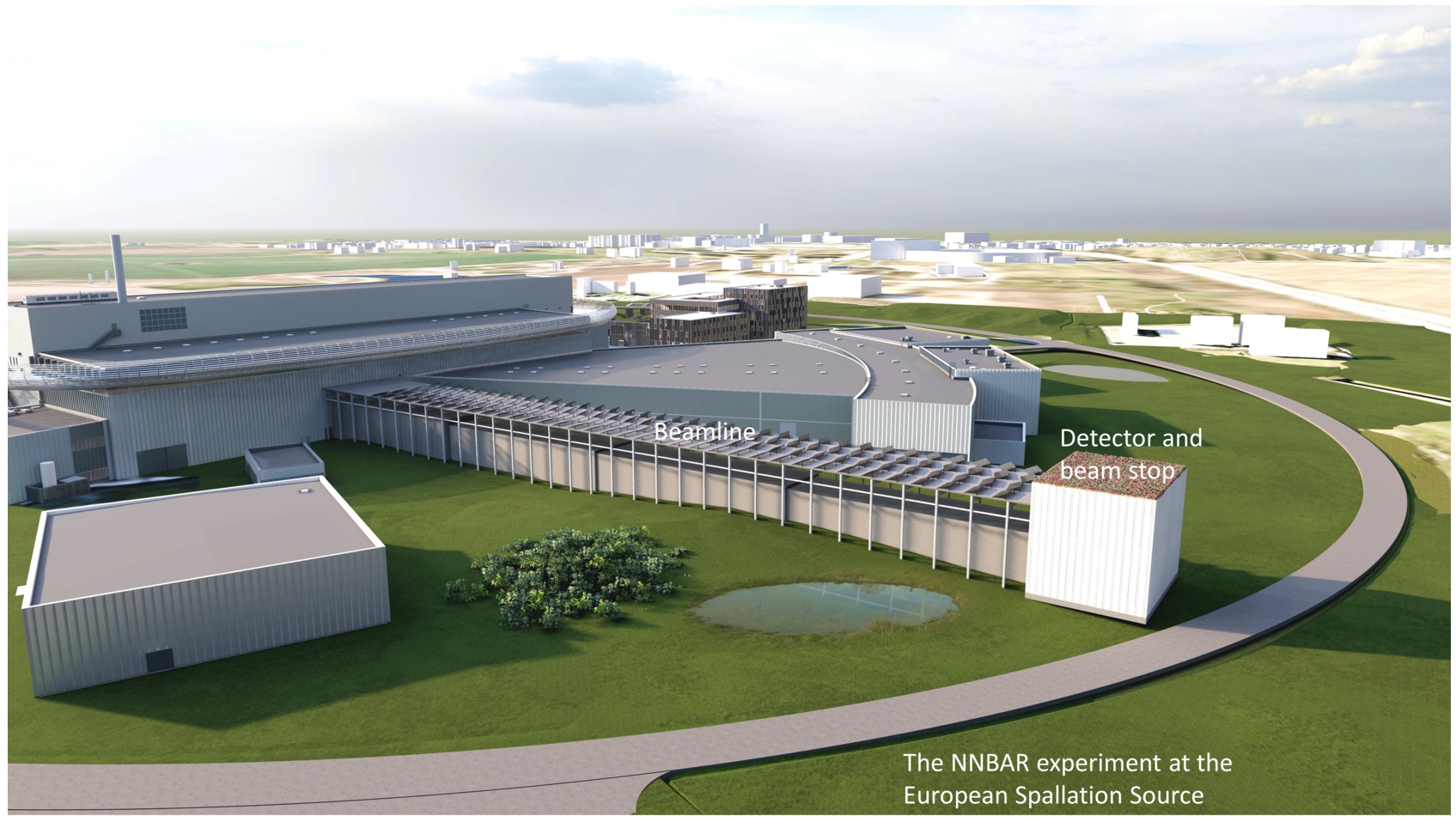

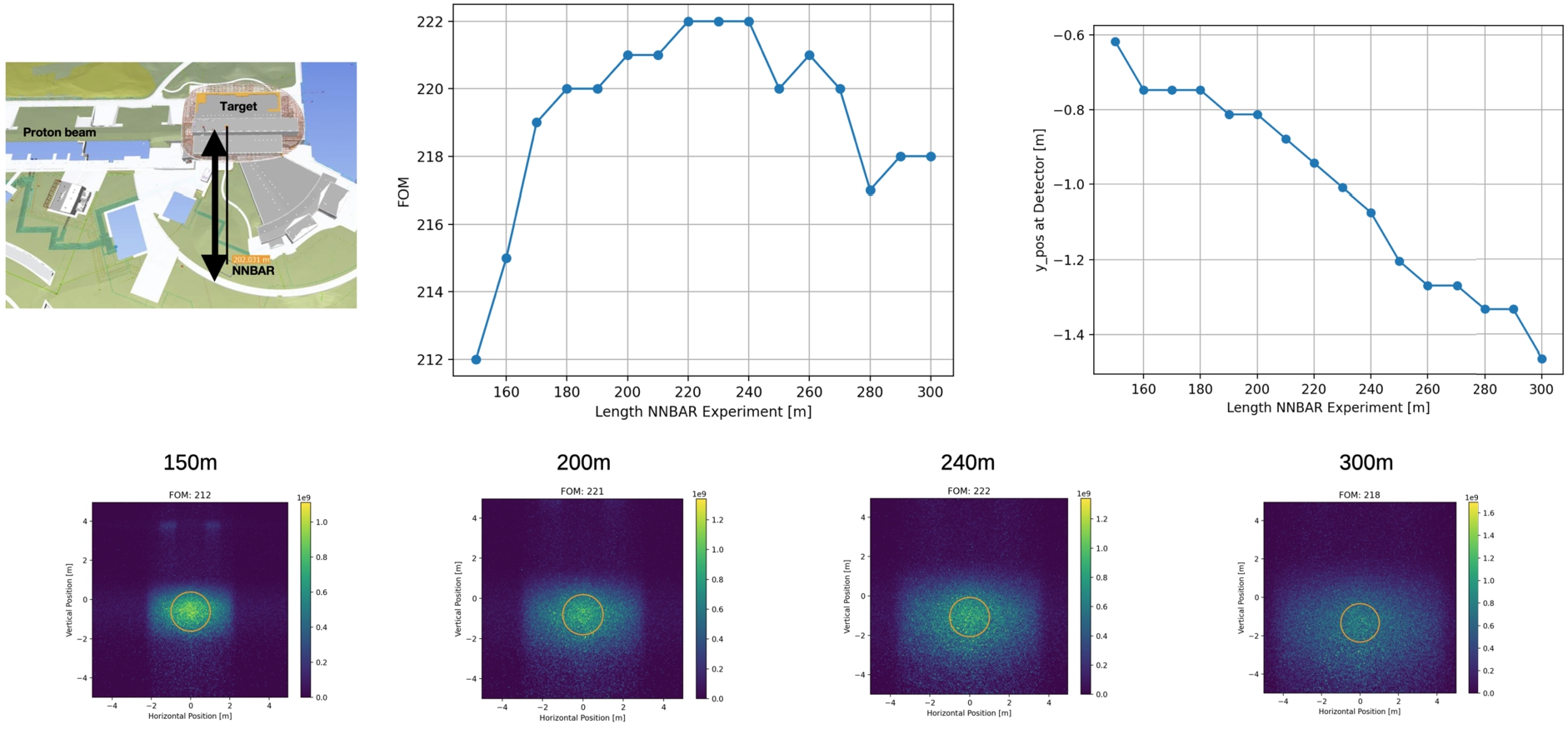

The strategy of the NNBAR experiment, represented pictorially in Fig. 1, is driven by the need to maximise the FOM, which is defined in Equation (1) and which represents the discovery potential. The last search was conducted at the ILL [44]. The design aim for NNBAR is to achieve a FOM a factor of

Overview of the search for

Figure 1 provides an overview of the experiment, which is also described below, together with, where appropriate, a short discussion on design choices.

Neutrons are passed into a beamline via the LBP. The

A neutron mirror configuration is then used to focus the neutrons towards a distant carbon target. Various nested mirror systems were investigated using the neutron ray-tracing simulation, McStas [196]. Realistic physical constraints from the LBP and beam pipe shape were considered. The most promising optics option is a planar nested mirror system followed by a section of beampipe with a square cross section, which can deliver a FOM of 300 ILL units per year at 2 MW.

The neutrons move out through the shielded bunker region into the NNBAR tunnel. Magnetic shielding surrounds the neutron path from the LBP up to the detector region. The ‘field-free clock’ of a neutron starts ticking when the particle enters the magnetic shielding region and is reset should the neutron be scattered e.g. in the optics system. The magnetically shielded region corresponds to a distance of around 200 m. A larger distance can potentially provide a higher sensitivity. However, physical limitations (the presence of a road, as seen in the cover picture of this volume) prevent a longer beamline.
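The ‘field-free clock’ described above can be sketched in a few lines. The example assumes that each simulated neutron carries the time at which it entered the shielded region, the time at which it reached the target, and a list of scattering times; the function name and this data layout are hypothetical.

```python
from typing import Sequence


def field_free_time(entry_time_s: float,
                    arrival_time_s: float,
                    scatter_times_s: Sequence[float]) -> float:
    """Return the quasi-free flight time of one neutron.

    The clock starts when the neutron enters the magnetic shield and is
    reset by every scatter that happens between entry and arrival.
    """
    start = entry_time_s
    for ts in scatter_times_s:
        # Only scatters inside the shielded flight path reset the clock.
        if entry_time_s <= ts <= arrival_time_s:
            start = max(start, ts)
    return arrival_time_s - start


if __name__ == "__main__":
    # Hypothetical neutron: enters at t = 0 s, reflects once at 0.05 s,
    # and reaches the target at 0.25 s.
    print(field_free_time(0.0, 0.25, [0.05]))
```

Only the time elapsed since the last reset contributes to the conversion probability, so a reflection early in the beamline costs far less than one close to the target.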

A two-layer octagonal mumetal magnetic shield with additional active compensation was designed with the finite element method code, COMSOL. This is largely a scaled-up version of a proven concept used for an atomic fountain [197]. With the magnetic shield, the field can be kept to values below 5–10 nT. For such a low field, the neutrons are quasi-free and optimising the experiment to maximise the FOM is thus appropriate.

Biological shielding of the full beamline to match ESS safety standards was calculated using the MCNP radiation transport code [109], together with the CombLayer toolkit [30] for building geometric models. This was also an important study in showing that the performance of other beamlines would not be affected by radiation from NNBAR.

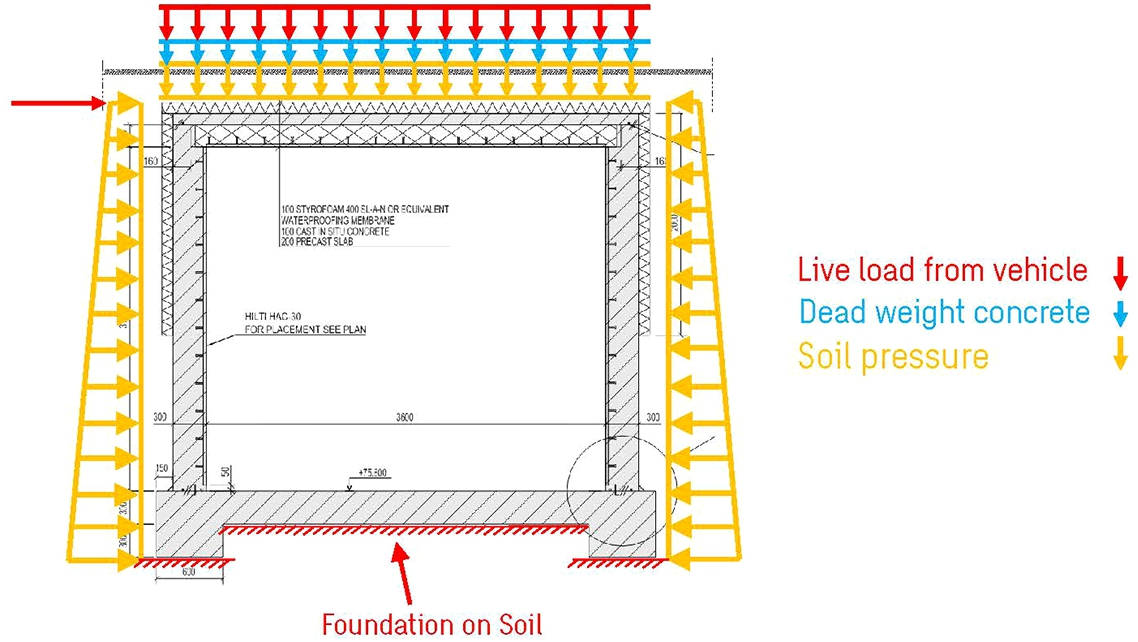

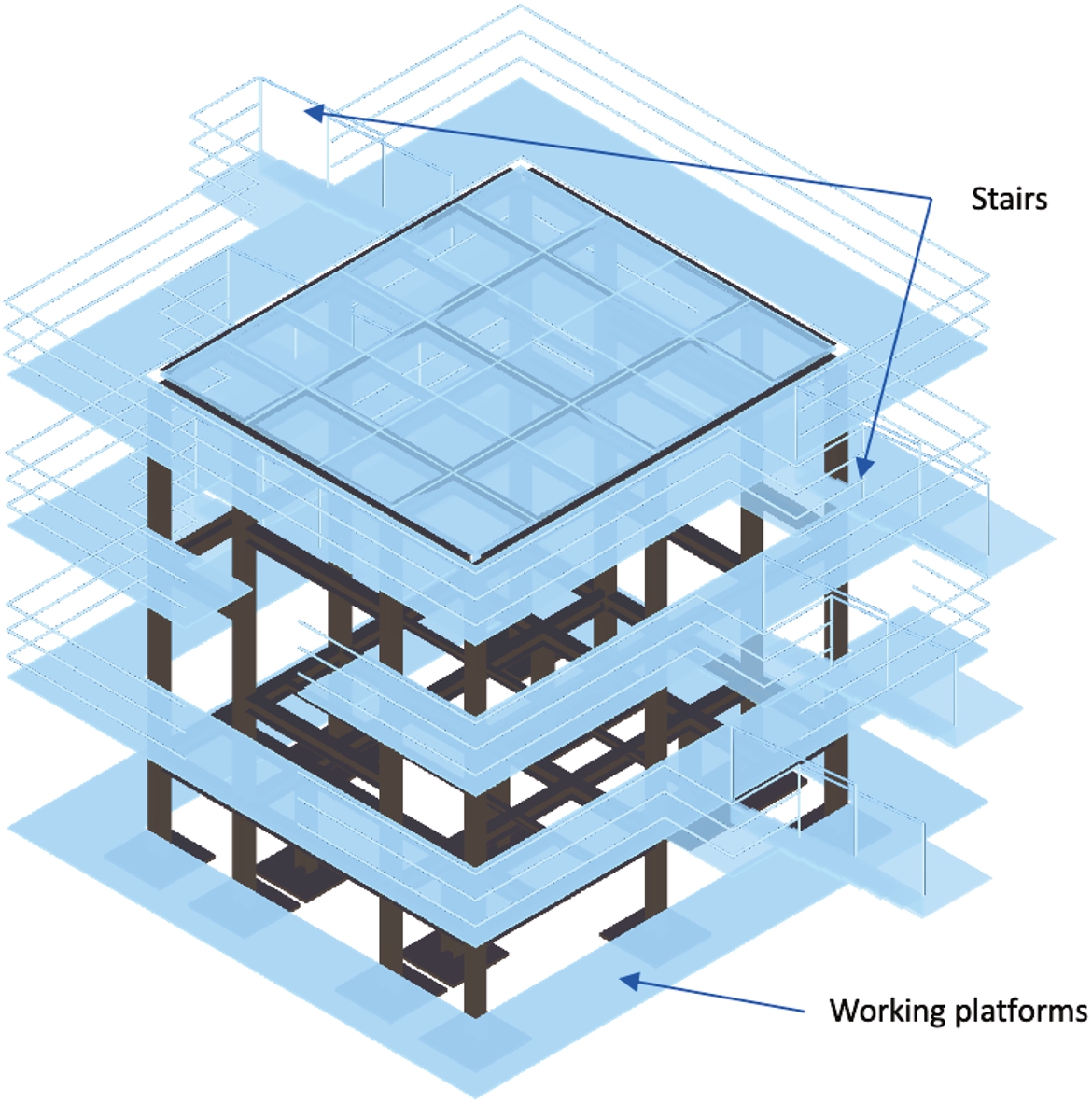

Beyond radiation emission, the NNBAR beamline must fit within the engineering and physical constraints of the ESS infrastructure. A civil engineering study took place to quantify topics as diverse as floor loads and interference with the ESS instrument suite.

An annihilation target sits near the end of the beamline. This is foreseen to be made of carbon and with a thickness of 100 μm. The target is a circular disk of radius 1 m. Varying this size changes both the FOM and, as is shown in this paper, the experiment cost. With a 1 m radius, the goal of a factor

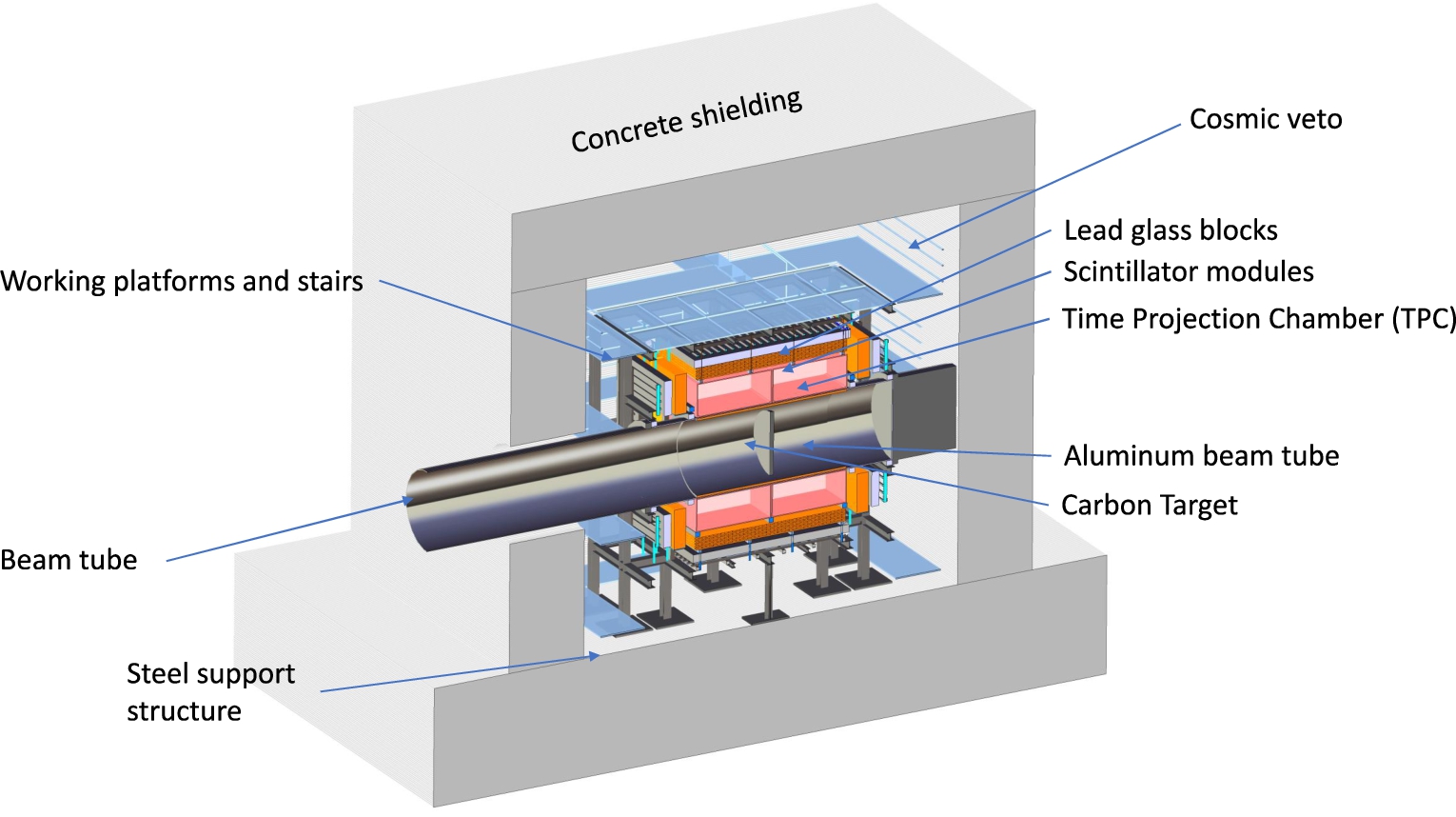

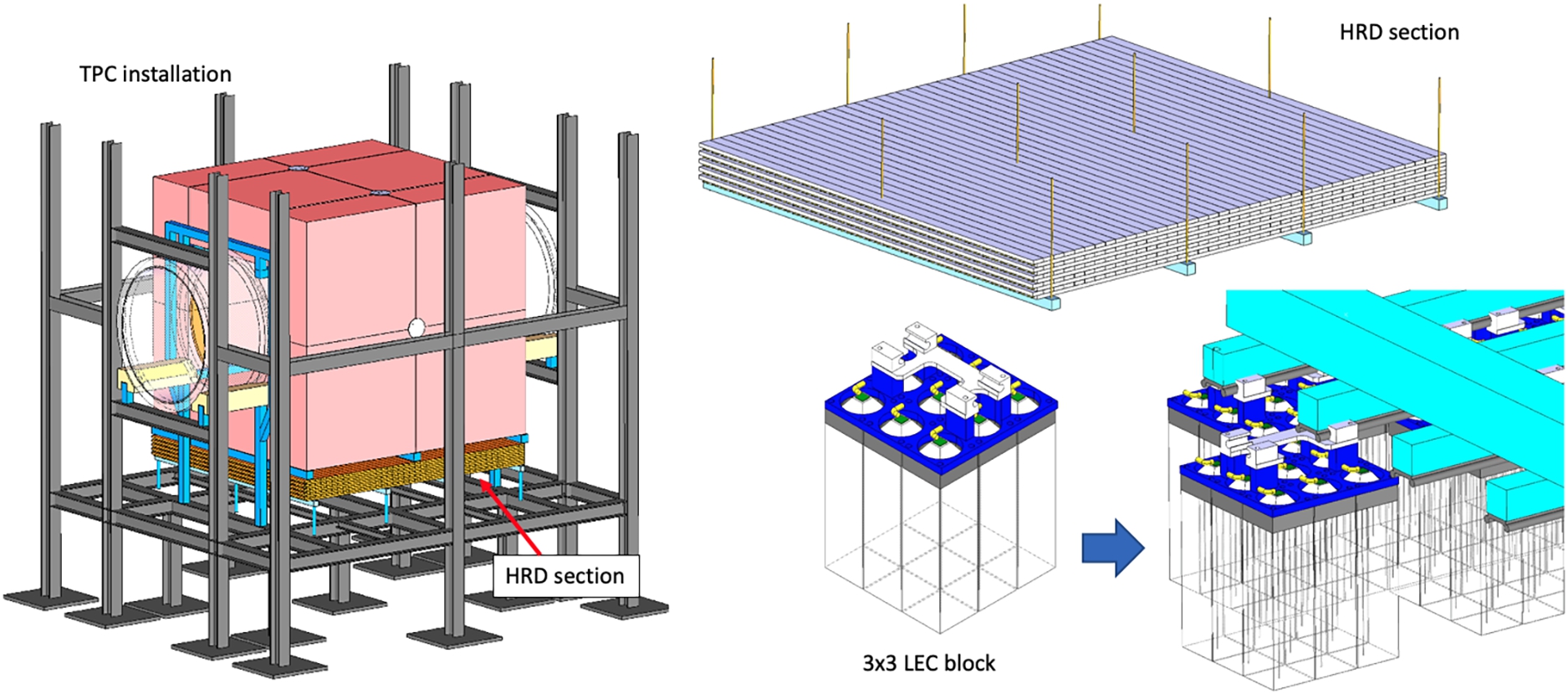

The annihilation detector sits in the shielded detector cave and surrounds the carbon target. The detector comprises time projection chamber (TPC) tracking, a scintillator and lead-glass calorimeter, and an active (scintillator) and passive (steel) cosmic veto. The detector measures the products from an antineutron-nucleon annihilation. The signature is a multi-pion state of invariant mass up to 1.9 GeV. TPC tracking was chosen as it provides 3-dimensional tracking, has good particle identification through continuous energy loss

The bulk of neutrons would pass through the carbon target and eventually hit a beam stop made of neutron absorbers and moderators: B4C, polyethylene, steel and concrete. As for the carbon target, background from the beam stop forms a small part of the low energy particle flux into the detector.

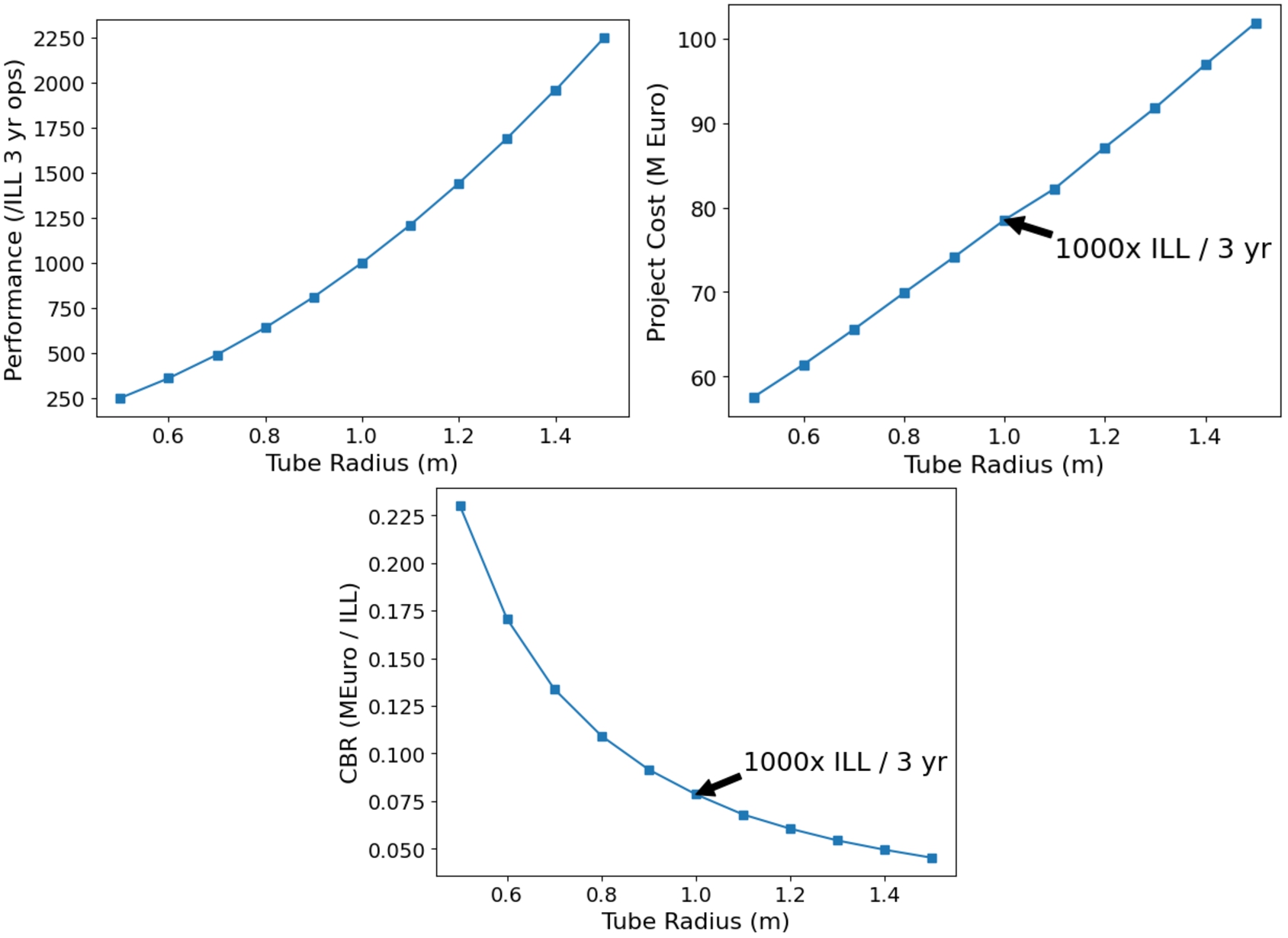

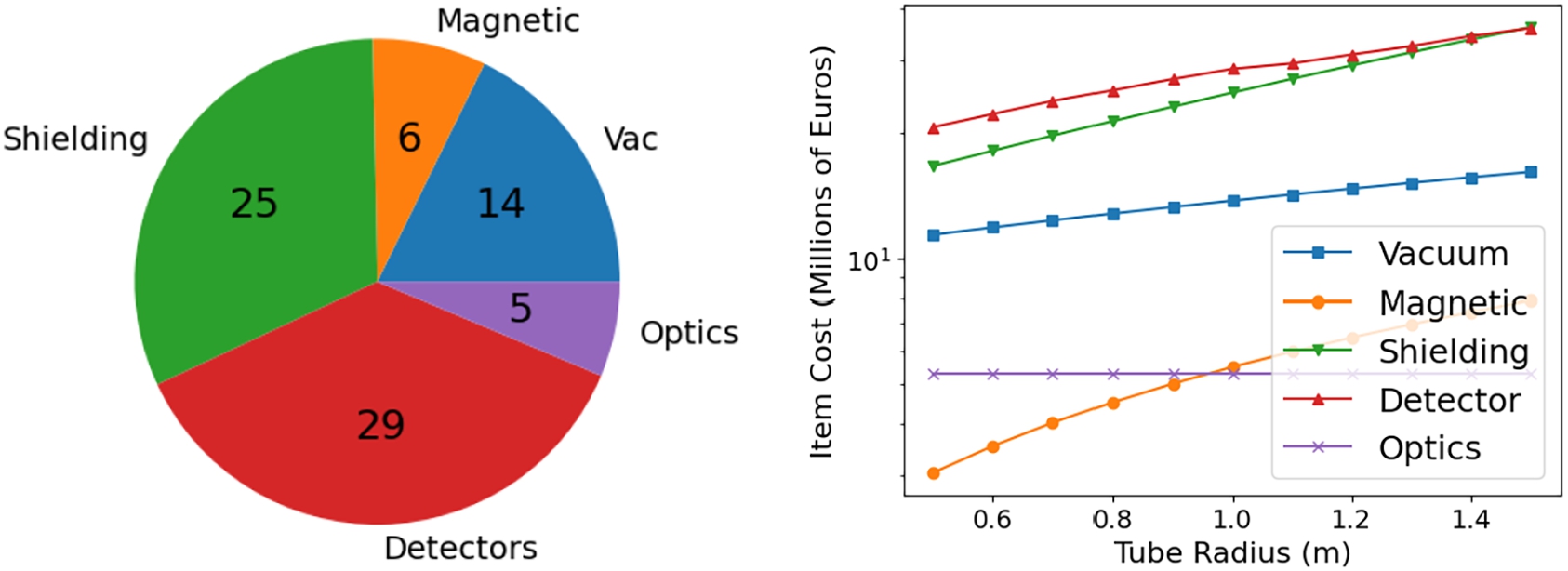

Considering the relative efficiencies of the ILL and NNBAR detectors and a three-year running time for NNBAR, a performance of around 1100 times the capability of the ILL experiment can potentially be achieved should the ESS operate with an accelerator power of 2 MW. This is more than doubled if the power is 5 MW. Furthermore, a parametric costing model was used which shows how the performance can be enhanced or reduced depending on the beam pipe size.

A summary of design parameters is given in Table 2.

NNBAR design parameters

This paper also points towards future work. In particular, experiments on detector prototypes and a smaller-scale NNBAR, such as HIBEAM, can be used to test and optimise simulations. A dedicated study on detector granularity and beamline optimisation in the detector area can quantify and suppress the influence of low energy beam-related particles. A further civil engineering study is required to guarantee that the NNBAR beamline does not interfere with neighboring beamlines or other ESS facilities.

This section describes the motivation for searching for

Neutron–antineutron oscillations as a key observable of baryon number violation

It can be strongly argued that baryon number is not expected to be conserved. Baryon number violation is one of the so-called Sakharov conditions [159], necessary for a theory of baryogenesis to explain the origin of the observed matter-antimatter asymmetry. Furthermore, like lepton number (

It should also be noted that, notwithstanding the conservation of

It should also be noted that the existence of sphaleron processes at the electroweak scale implies that a baryon asymmetry produced at a higher scale by, e.g. a (

Given the above arguments, it is no surprise that the phenomenon of

The first phenomenological model, made within a unification paradigm, was introduced by Mohapatra and Marshak in 1980 [140].

A theory-agnostic and exploratory approach to BNV searches also highlights the need to search for

The

In an effective field theory approach, the

Calculations for

Neutron–antineutron oscillations and post-sphaleron baryogenesis

As the

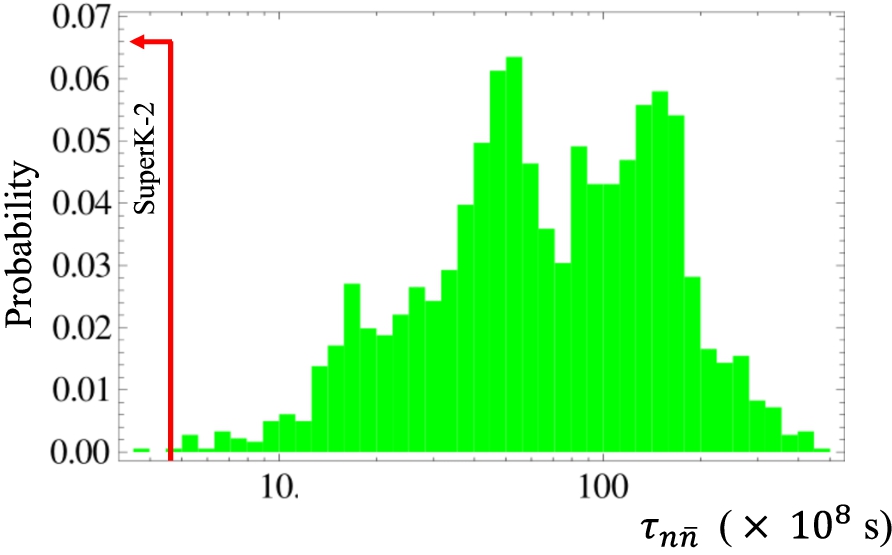

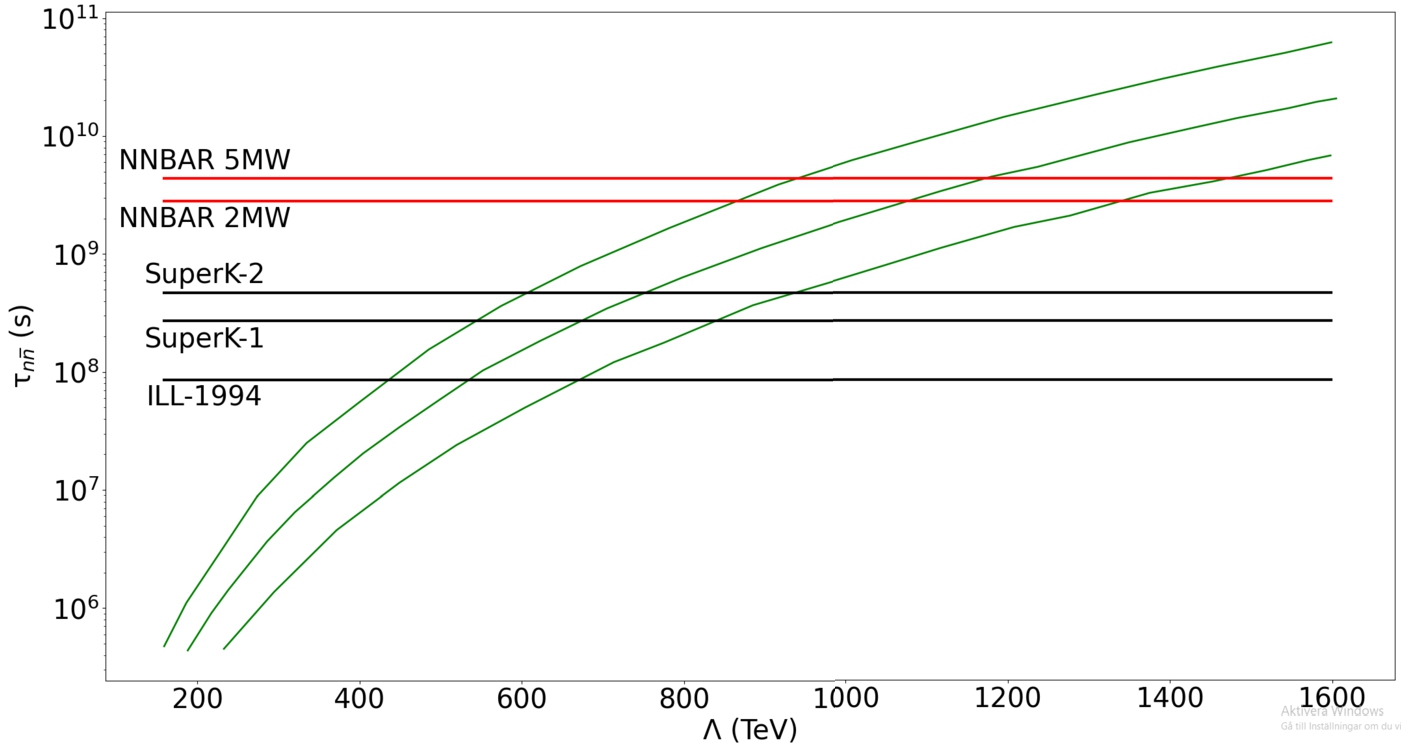

Figure 2 shows the scalar mass scale as a function of the

Another open question which

Other scenarios of neutron–antineutron oscillations

A range of extensions of the SM, beyond those described in the aforementioned sections, predict observable

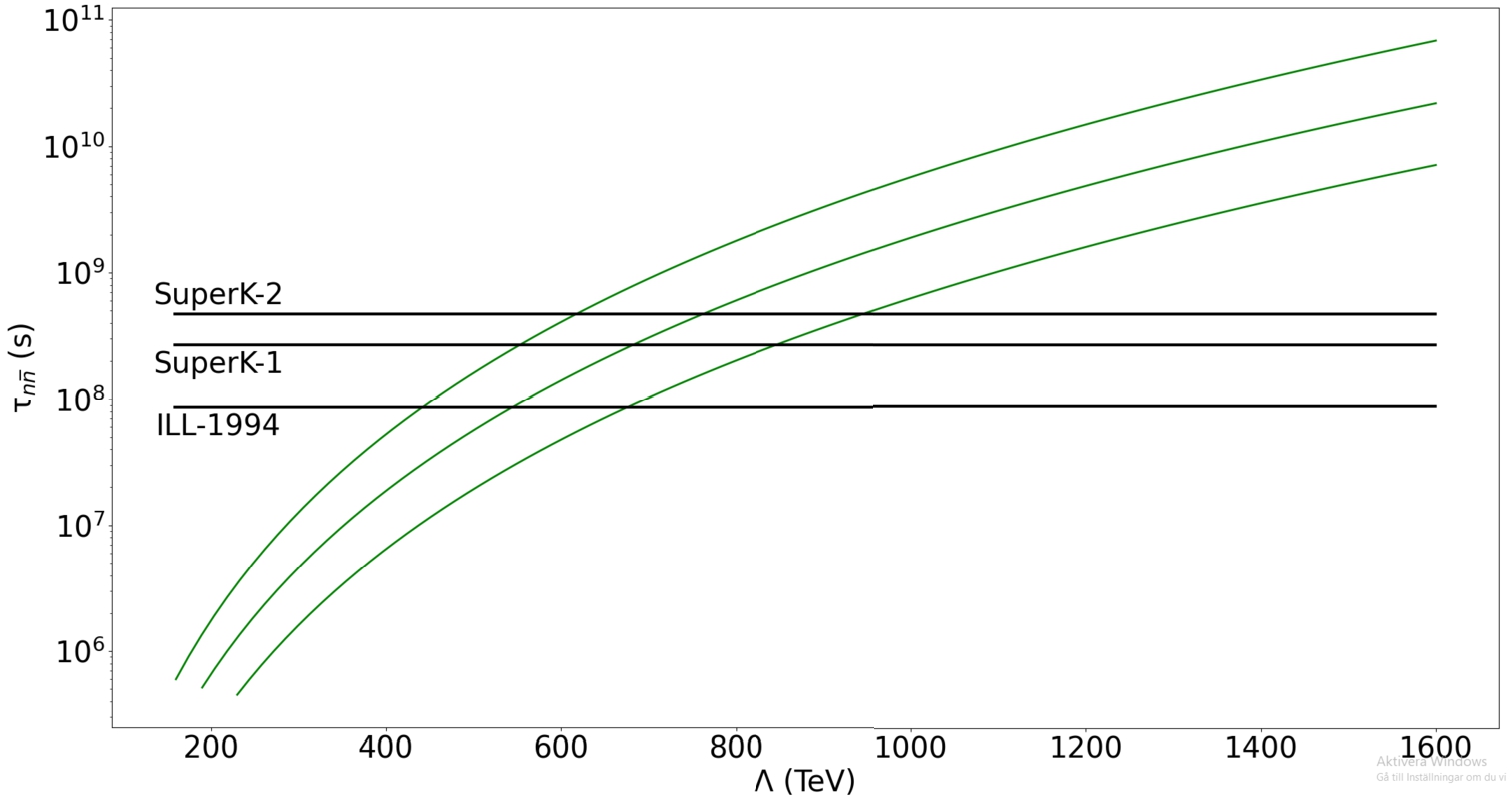

Relationship between the oscillation time and the mass scale for new physics in a R-parity violating supersymmetry scenario. The calculations are presented using the best estimate for the matrix element together with two predictions corresponding to shifting the matrix element according to uncertainties thus forming an uncertainty envelope around the central estimate. The limits on oscillation time from searches at the ILL (ILL-1994) [44] and Super-Kamiokande (SuperK-1 [4] and SuperK-2 [6]) are shown. Figure adapted from reference [74].

Neutron oscillations also feature in extra-dimensional models. One such type of model [103,145] gives an example of how proton decay can be strongly suppressed but

Extensions of the SM with scalar fields give rise to

The Schrödinger equation for the evolution of an initial beam of slow-moving neutrons which can convert to antineutrons after a time t is shown in Equation (2).

The term

Here

It can thus be seen that the conversion rate becomes suppressed when the neutron and antineutron are no longer degenerate in energy. For neutrons bound in nuclei, values of

With magnetic shielding such that the average magnetic field is less than around 5–10 nT for an experiment with cold neutrons, the so-called quasi-free regime (

From this equation it becomes clear that the FOM defined in Equation (1) approximates the number of antineutrons arriving at the annihilation detector region at a given experiment for a specific value of oscillation time. The FOM is thus a proxy for an experiment’s discovery potential.
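The quasi-free condition can be checked numerically. The sketch below assumes the standard two-level result in which a residual field B multiplies the free conversion probability (t/τ)² by a factor (sin x / x)², with x = μ_n·B·t/ħ. The function name is hypothetical, and the flight time of 0.25 s is an illustrative value (of the order of 200 m travelled at roughly 800 m/s), not a number taken from this report.

```python
import math

MU_N = 9.6623651e-27    # J/T, magnitude of the neutron magnetic moment (CODATA)
HBAR = 1.054571817e-34  # J s, reduced Planck constant


def quasi_free_factor(b_tesla: float, t_s: float) -> float:
    """Return the factor (sin x / x)**2 with x = mu_n * B * t / hbar.

    A value close to 1 means the neutron behaves as quasi-free; a value
    much smaller than 1 means the field suppresses the conversion.
    """
    x = MU_N * b_tesla * t_s / HBAR
    if x == 0.0:
        return 1.0
    return (math.sin(x) / x) ** 2


if __name__ == "__main__":
    t = 0.25  # s, hypothetical flight time
    for label, b in [("5 nT", 5e-9), ("10 nT", 10e-9), ("50 uT", 50e-6)]:
        print(f"B = {label}: factor = {quasi_free_factor(b, t):.3g}")
```

With these assumptions the factor stays within a few percent of unity for fields of 5–10 nT, while an unshielded field of tens of microtesla (comparable to the Earth’s field) suppresses the conversion by many orders of magnitude.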

Neutrons bound in nuclei belong to the region:

Unlike Equation (4), Equation (5) retains the

Antineutron-nucleon annihilation

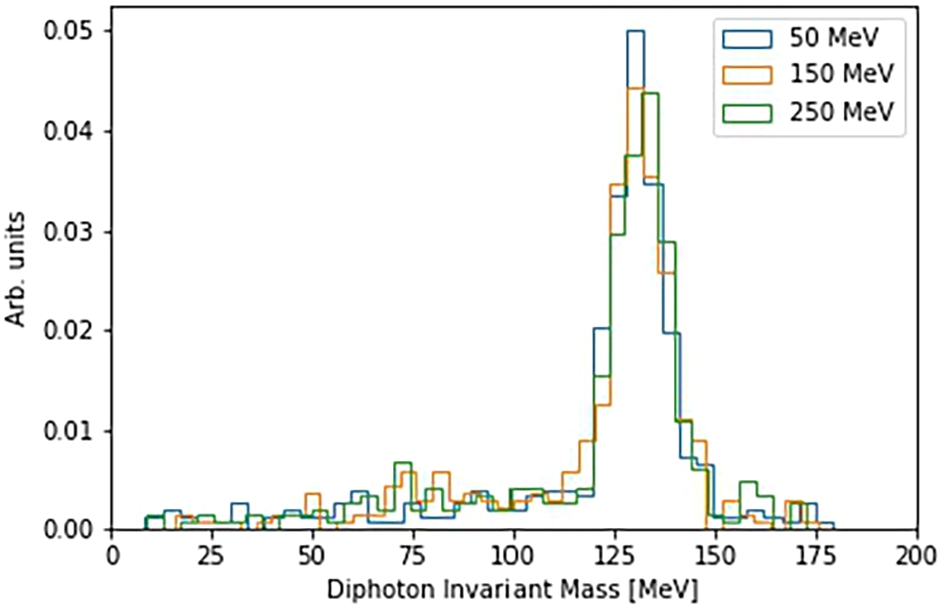

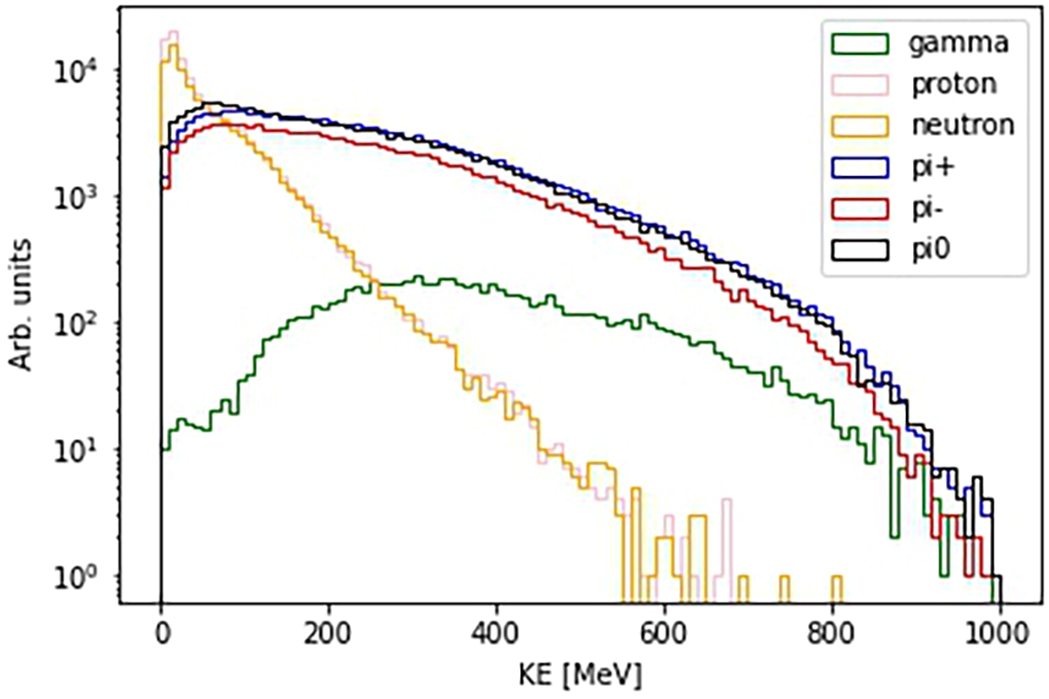

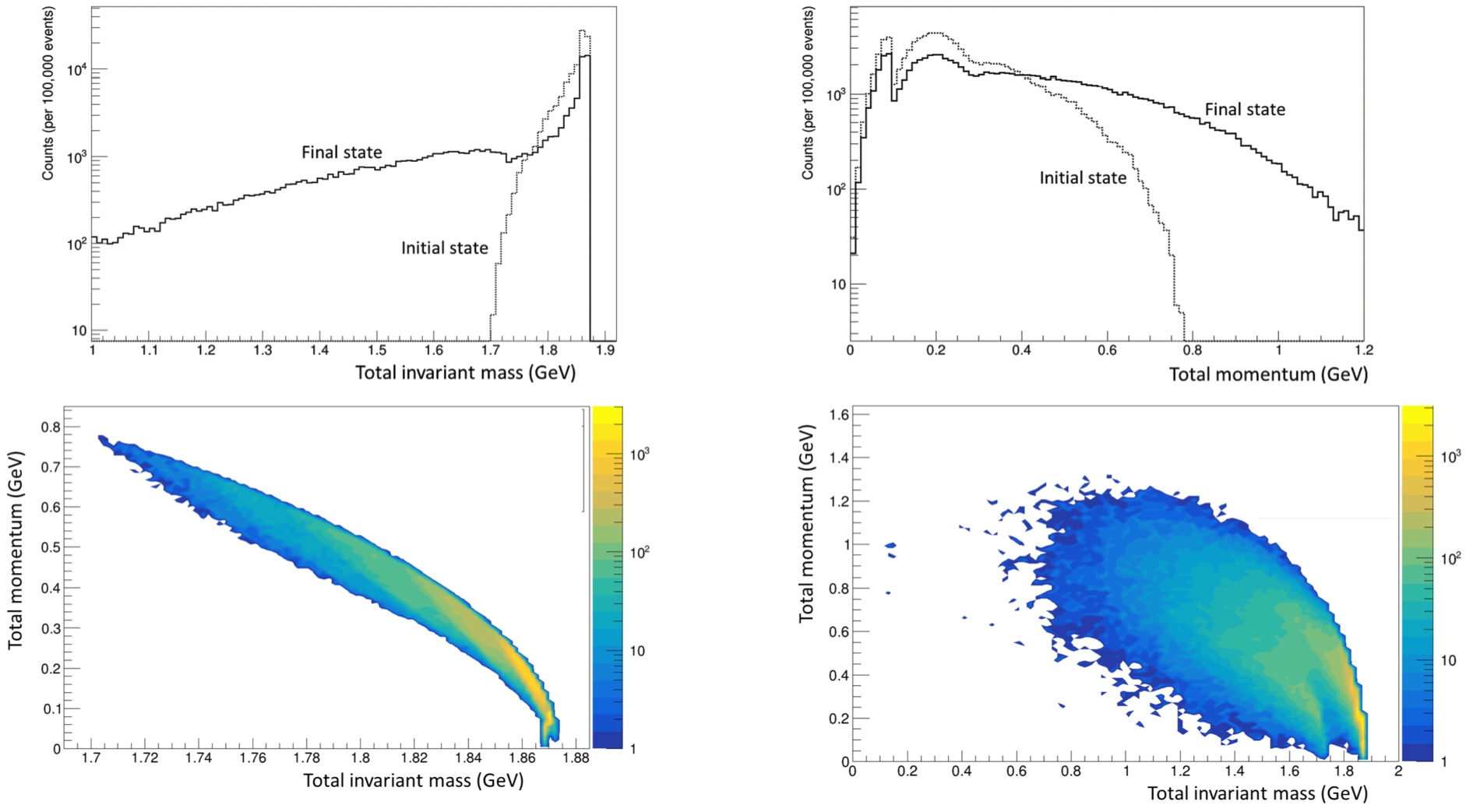

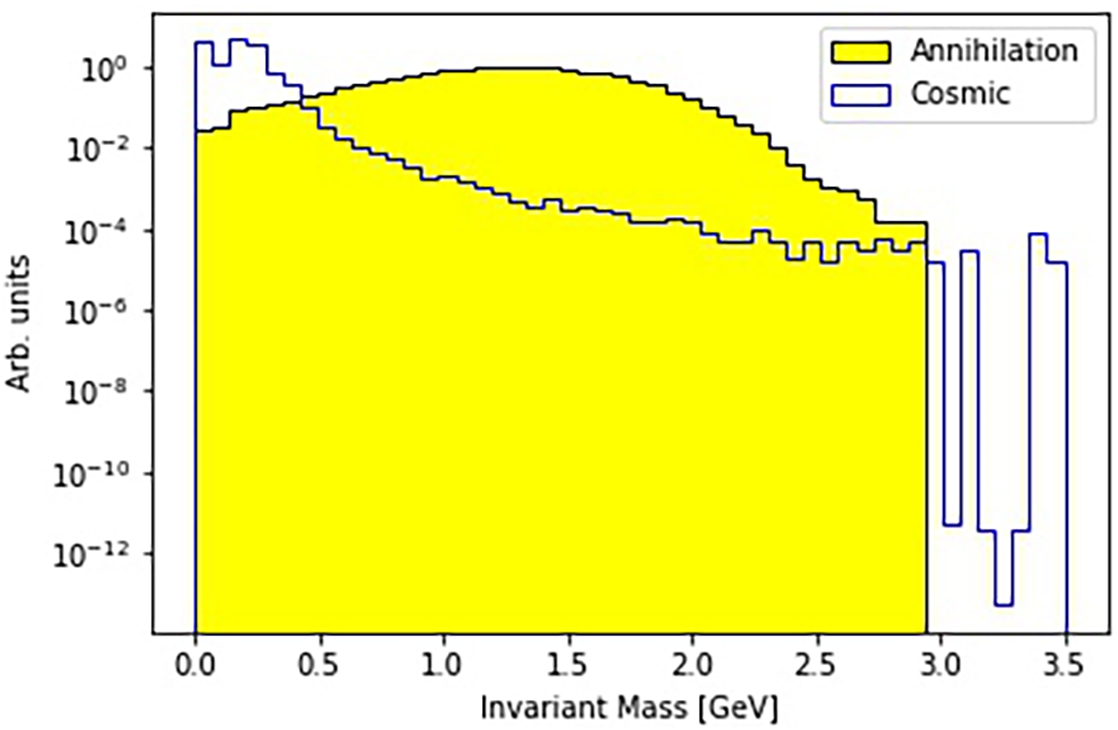

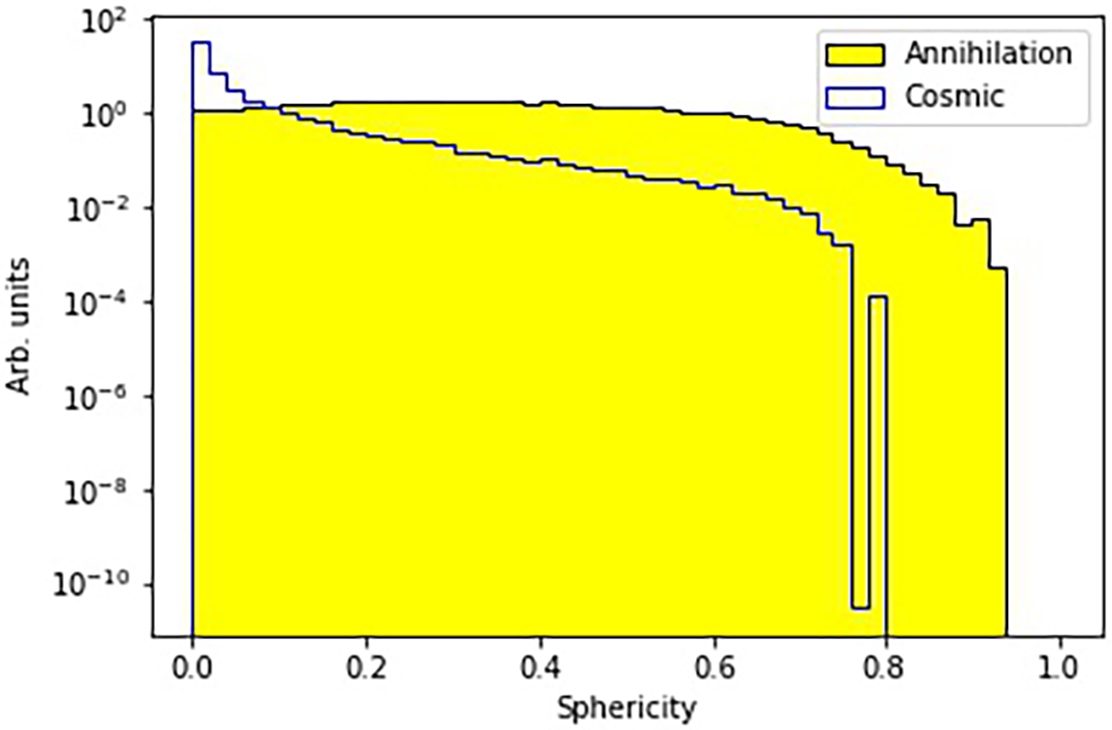

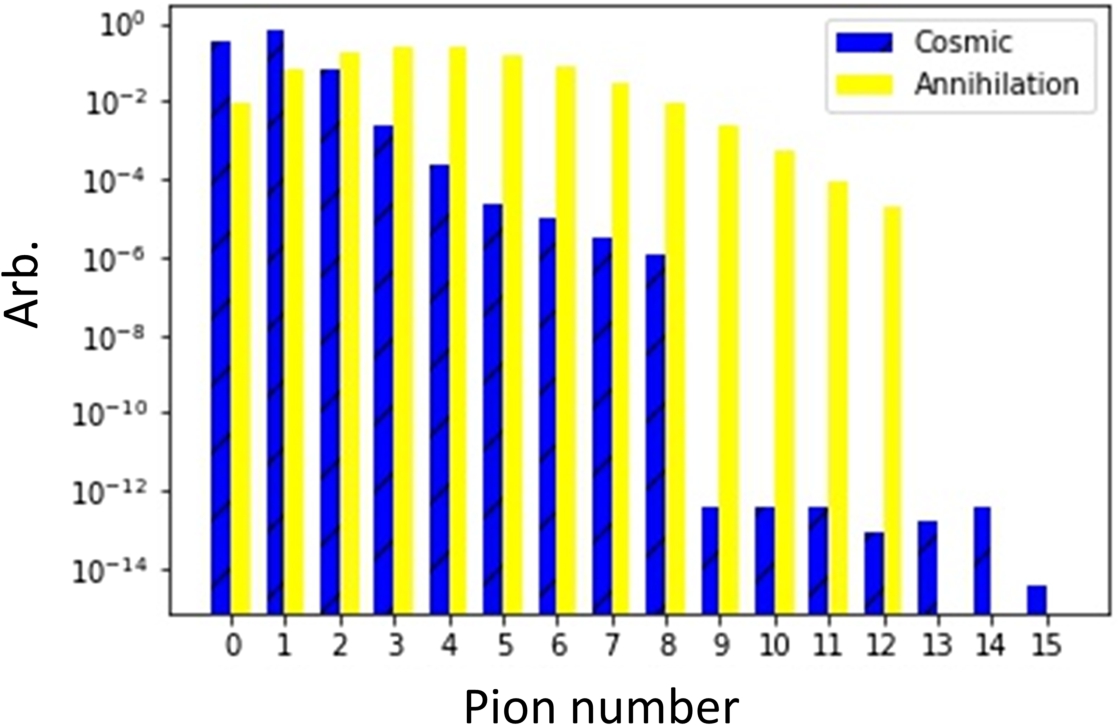

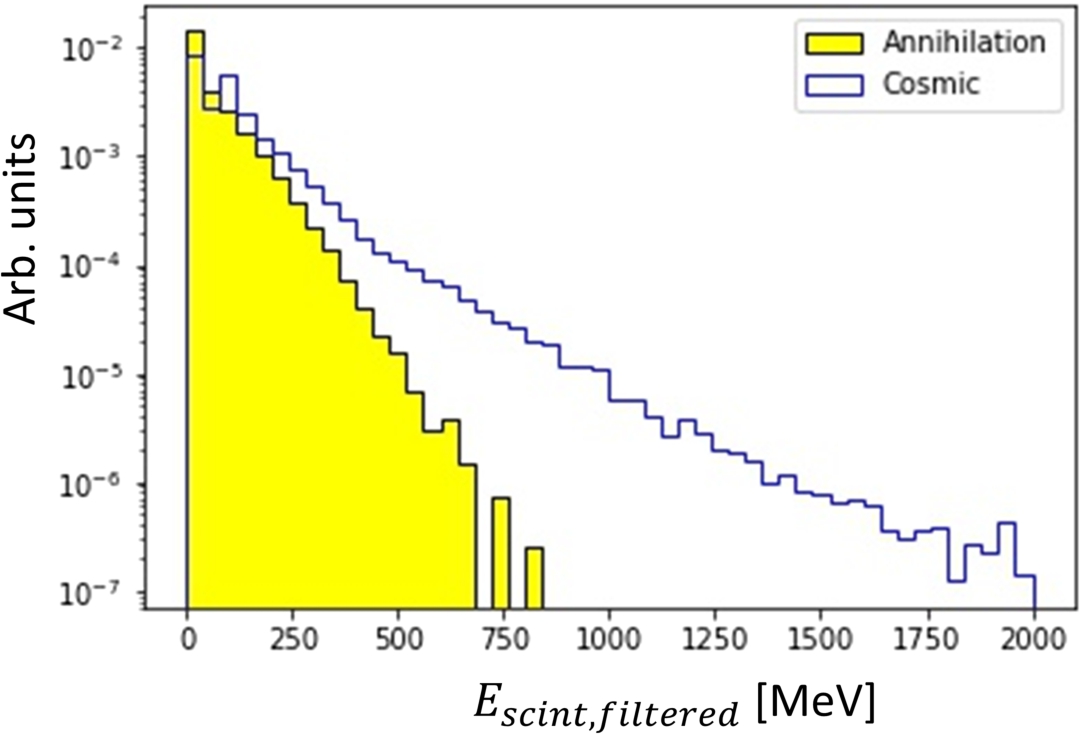

The composition and properties of the visible final state produced following an extranuclear

A model of

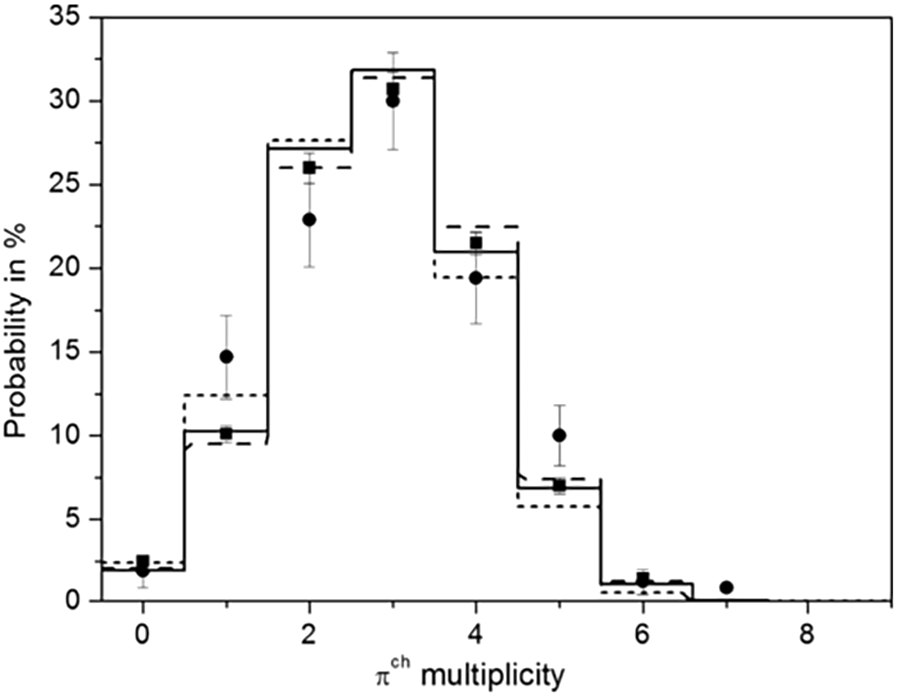

Table 3 shows the simulated and measured particle multiplicities (M) and the total energy of final state particles, produced after

Average particle multiplicities (M) compared between data and simulation. From reference [15]


This section describes ways in which

Cosmological and astrophysical implications of neutron oscillations

There is limited sensitivity from cosmological and astrophysical measurements to the free

Searches with free neutrons in a beam

Neutrons are cooled in a moderator and then passed to a system of neutron optics. The neutron beam is focused across a propagation region. Neutron focusing is important as interactions between the propagating neutron and the guide wall can ‘reset the oscillation clock’.

Interesting work is ongoing investigating whether materials can be deployed along the guide wall such that the relative phase shifts of the neutron and antineutron wave functions can be small upon reflection [110,144,150]. This has the potential to allow the reduction of the size of a future

Free neutron searches using beams have been made at the Pavia Triga Mark II reactor [67,68] and at the ILL [44,95]. The final ILL search [44] gave a limit on the free neutron oscillation time of

Searches have also been made with bound neutrons at large mass detectors. Such searches look for a signature of pions and photons arising from a

Limits on conversion times for neutrons bound in nuclei (

Super-Kamiokande has provided the current most competitive inferred limit on the free neutron conversion time. This is based on a putative

Searches with ultracold neutrons

In addition to the above searches, it has been proposed to use ultracold neutrons (UCNs) in a material trap [119,125,127]. This offers the possibility of potentially cheaper experiments than those using beam neutrons, as long propagation distances are not needed. However, this approach has its own drawbacks as, for a large volume tank, the UCN free flight time is around 1 s. A possible search at the WWR-M reactor has been considered [96,97,172]. The sensitivity would be lower by more than an order of magnitude than for NNBAR.

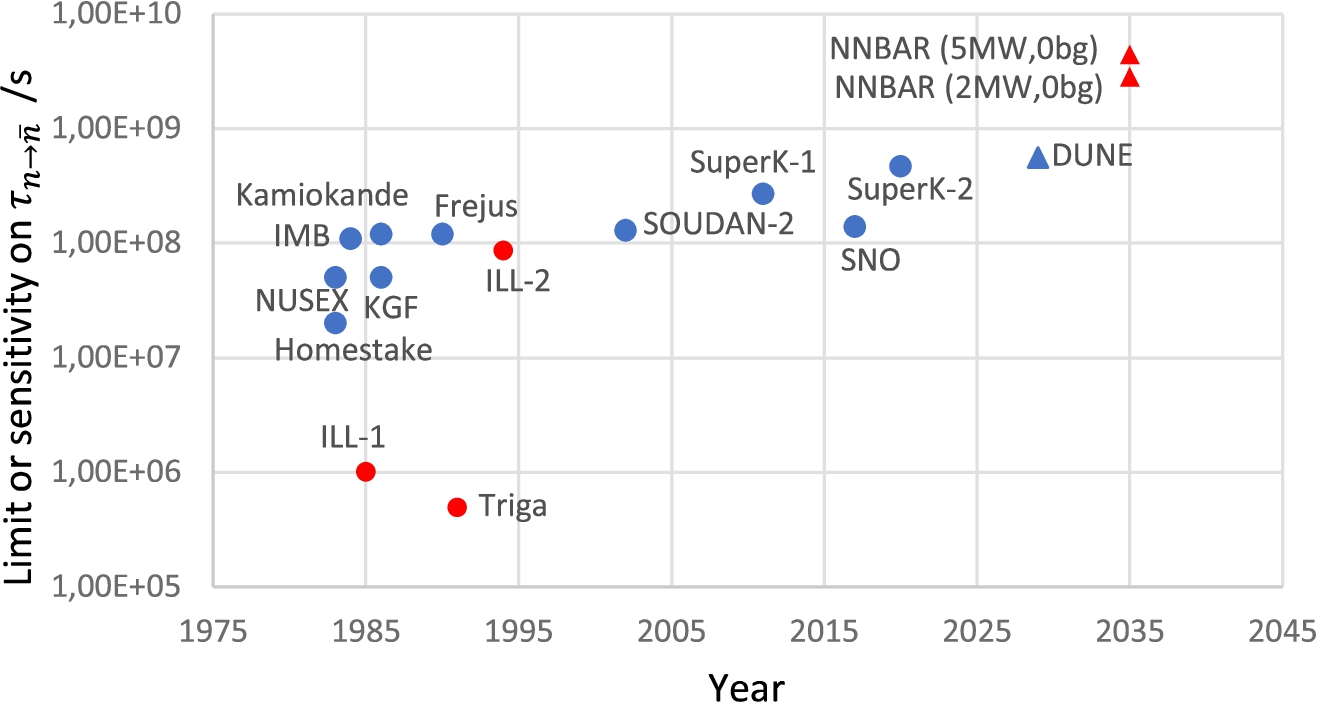

Comparison of limits and sensitivities

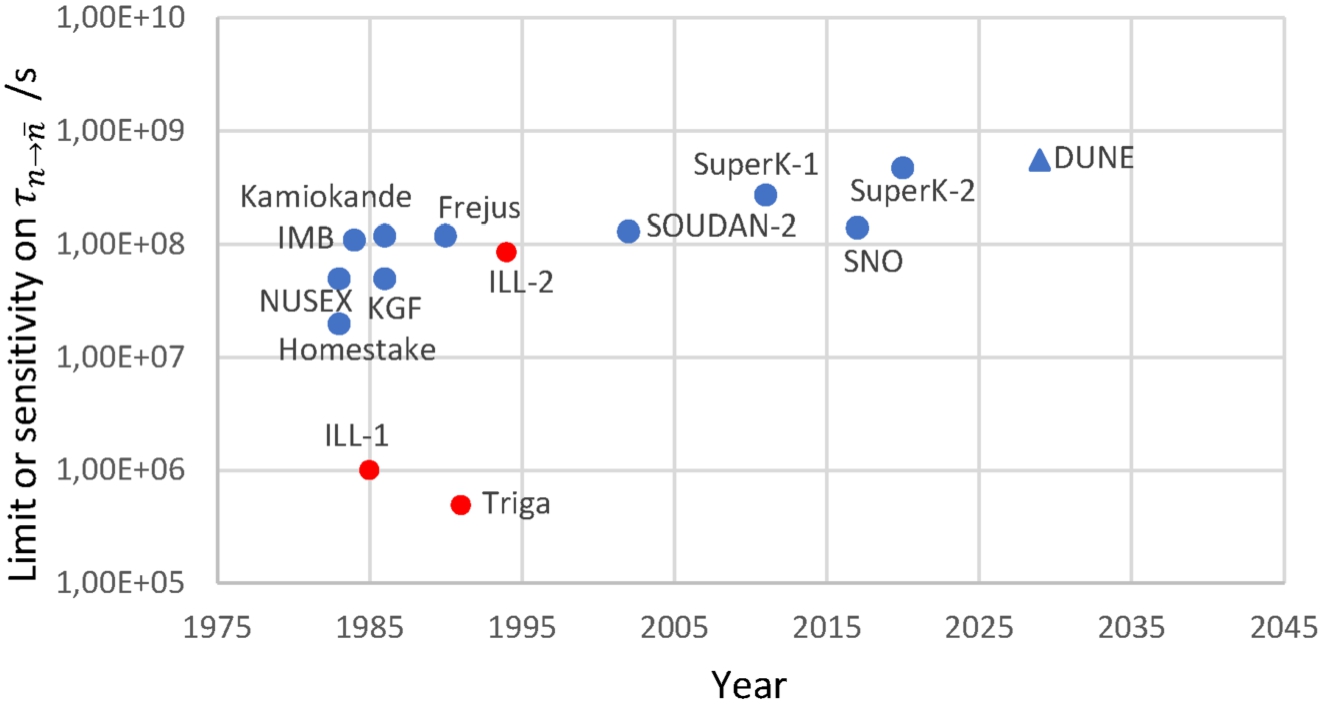

Figure 4 shows limits on conversion time which have so far been achieved with bound and free neutron searches. A new generation of large mass detectors at DUNE [11,12], Hyper-Kamiokande [3,5] and JUNO [14,88] has the potential to substantially extend sensitivities. The projected sensitivity for the future DUNE experiment [12] is shown. The sensitivities of the future Hyper-Kamiokande and JUNO experiments are not yet published.

Limits from searches with bound neutrons (blue circles) and free neutrons (red circles) and the sensitivity of the future DUNE (blue triangle) experiment.

It should be emphasised that both types of searches (free and bound) are needed. Any comparison between limits from free and bound neutron searches relies on model assumptions, such as

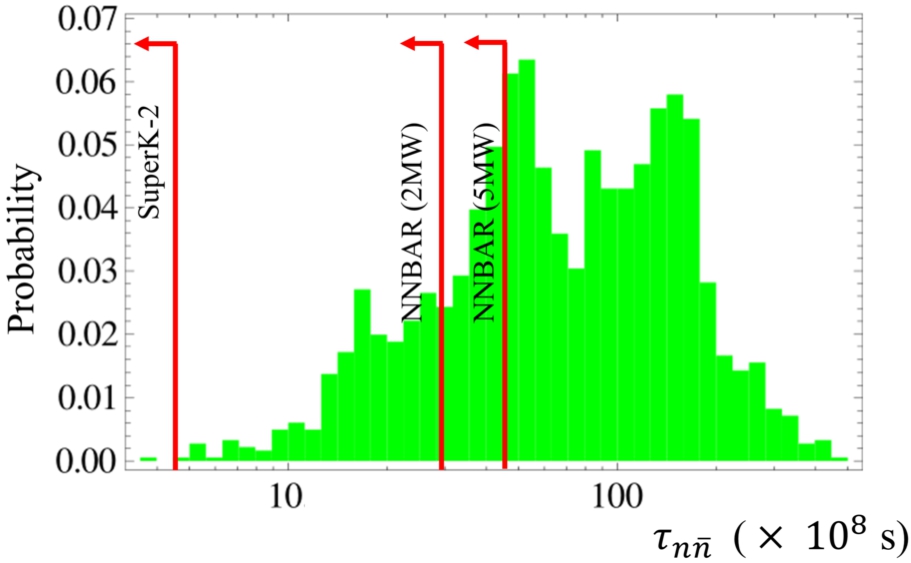

In order to calculate the sensitivity of the

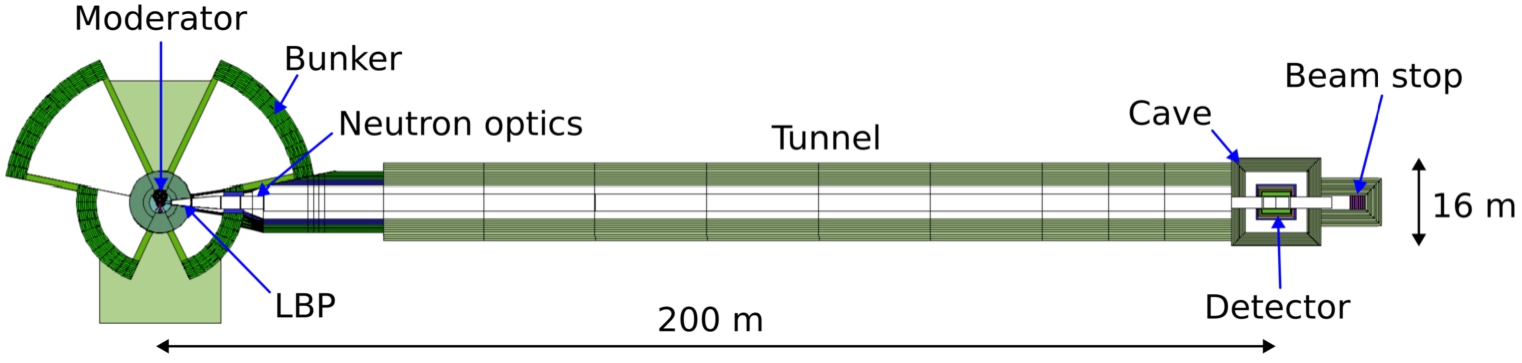

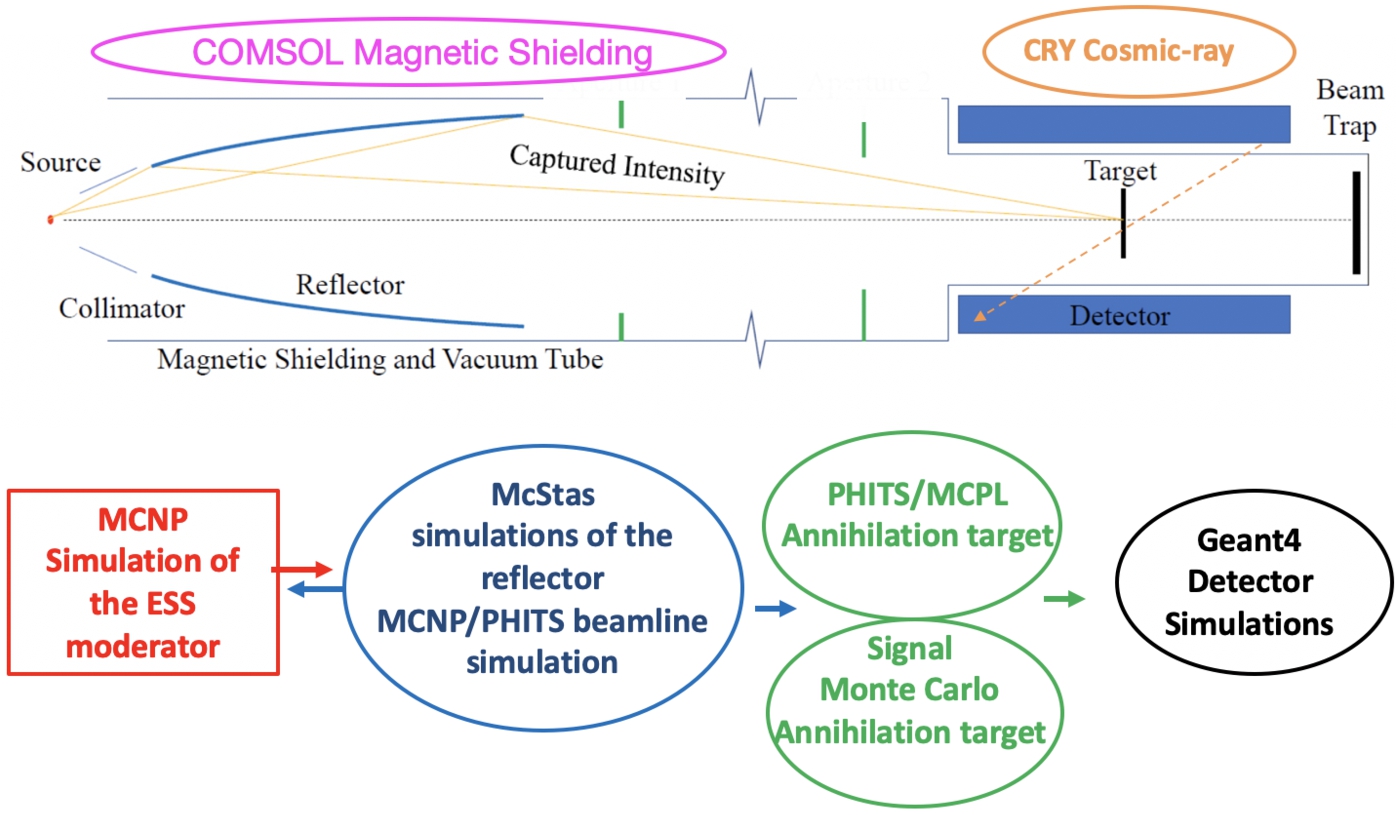

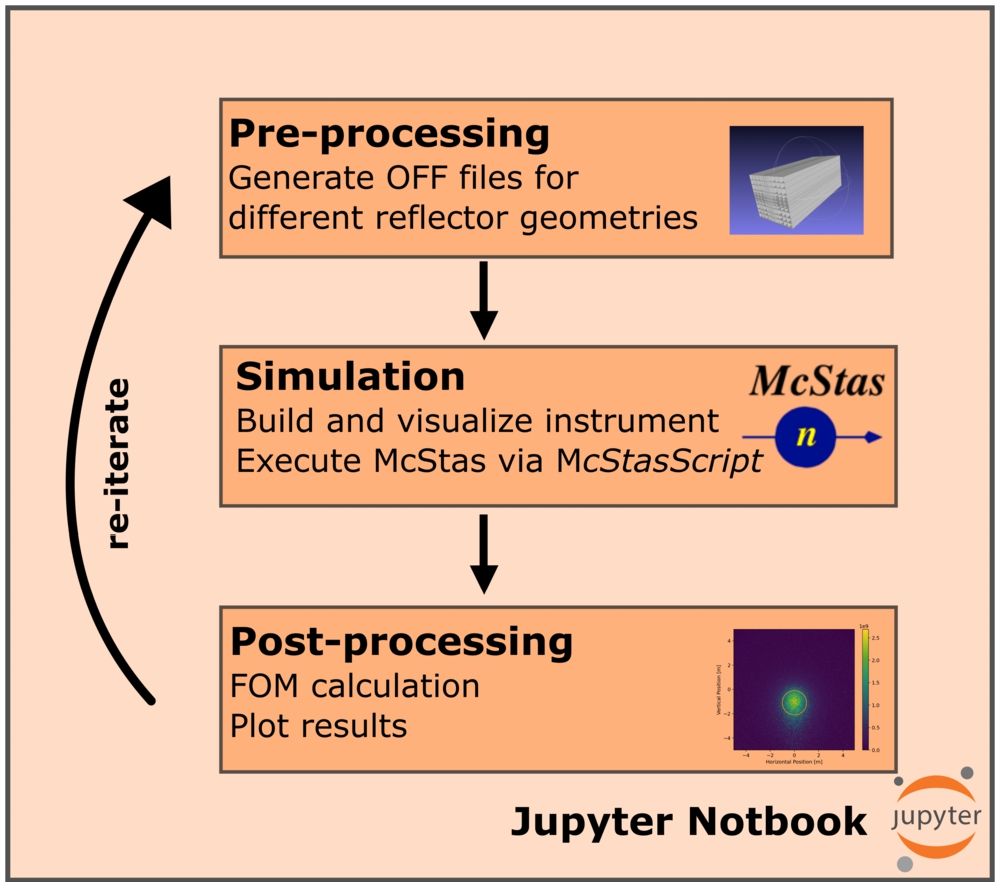

Above: an illustrative overview of the essential components required for the NNBAR experiment. Below: a simulation flowchart outlining the simulation and optimization of the NNBAR experiment. MCNP simulations of the ESS lower moderator are used as starting point. The focusing of cold neutrons is studied using McStas while the background stemming from faster neutrons is investigated using MCNP. Finally, GEANT4 is used for the detector simulation. The MCPL format is used to exchange data between the different simulations. Figure from reference [47].

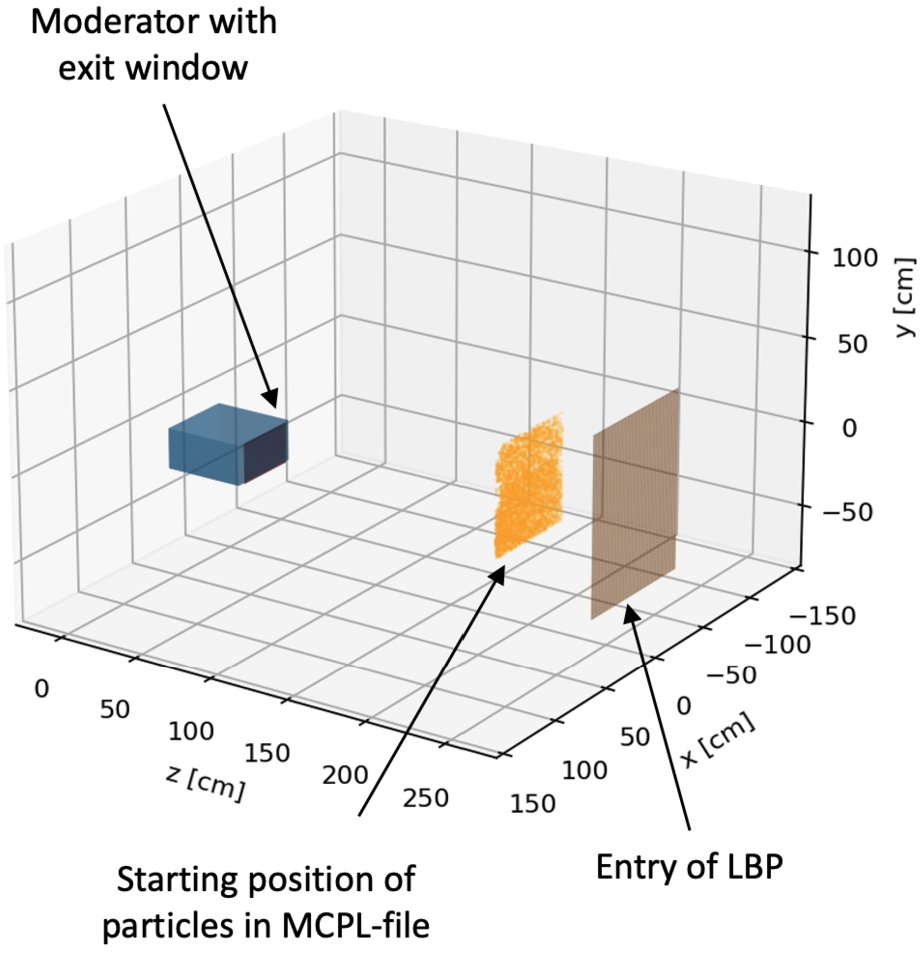

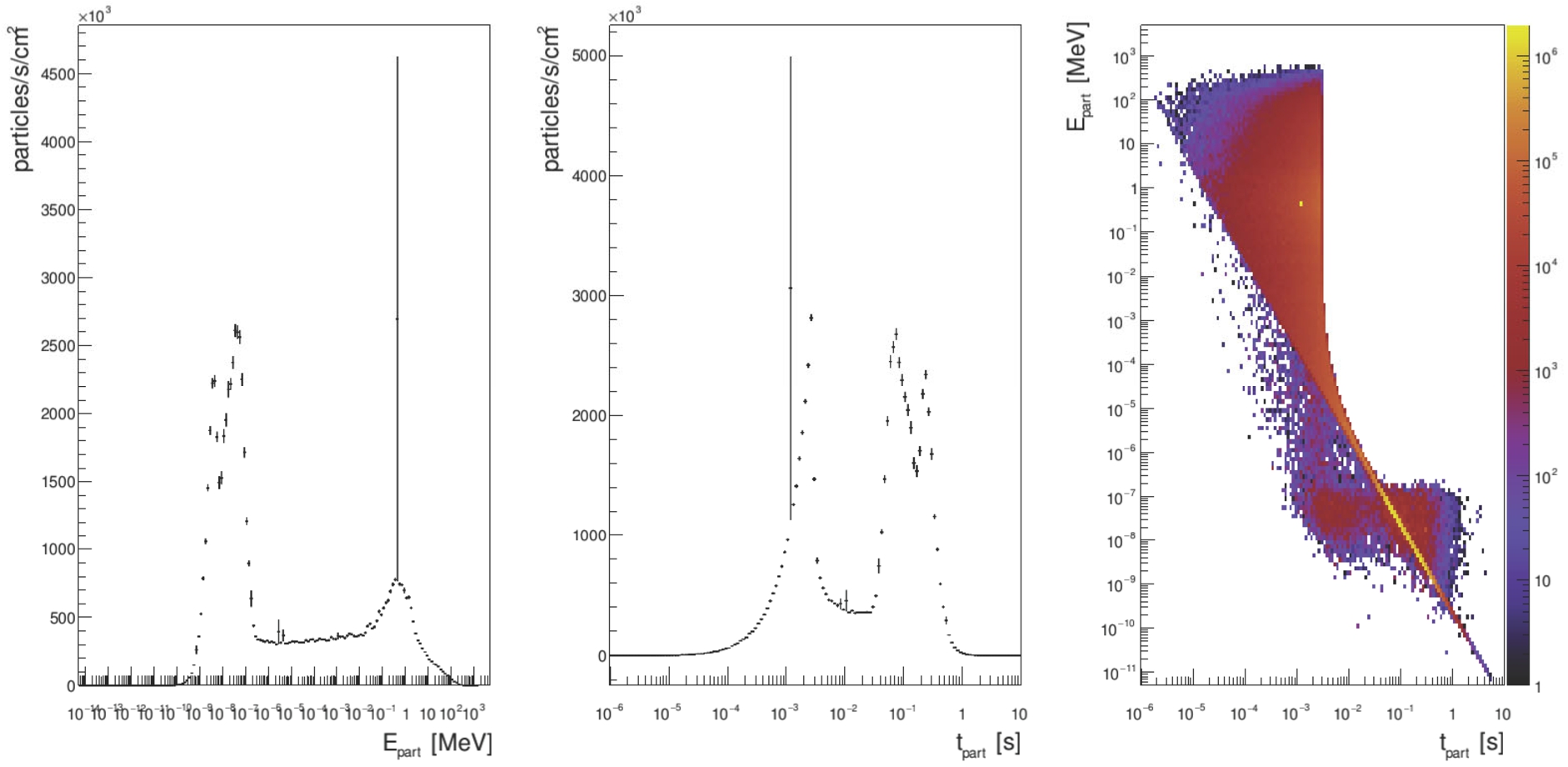

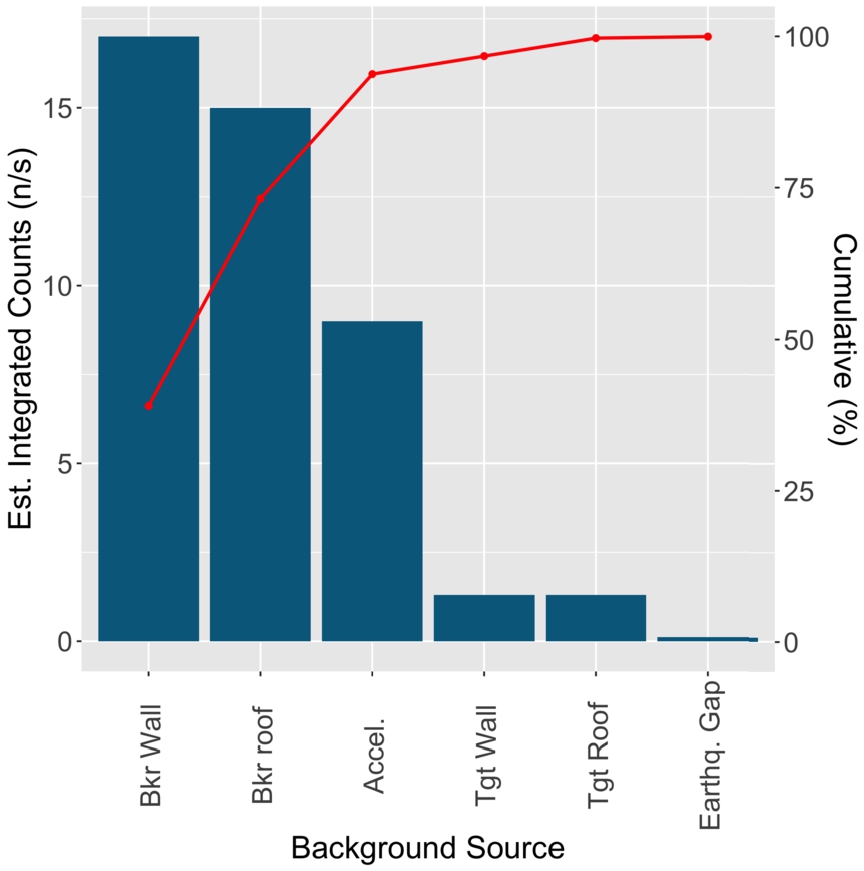

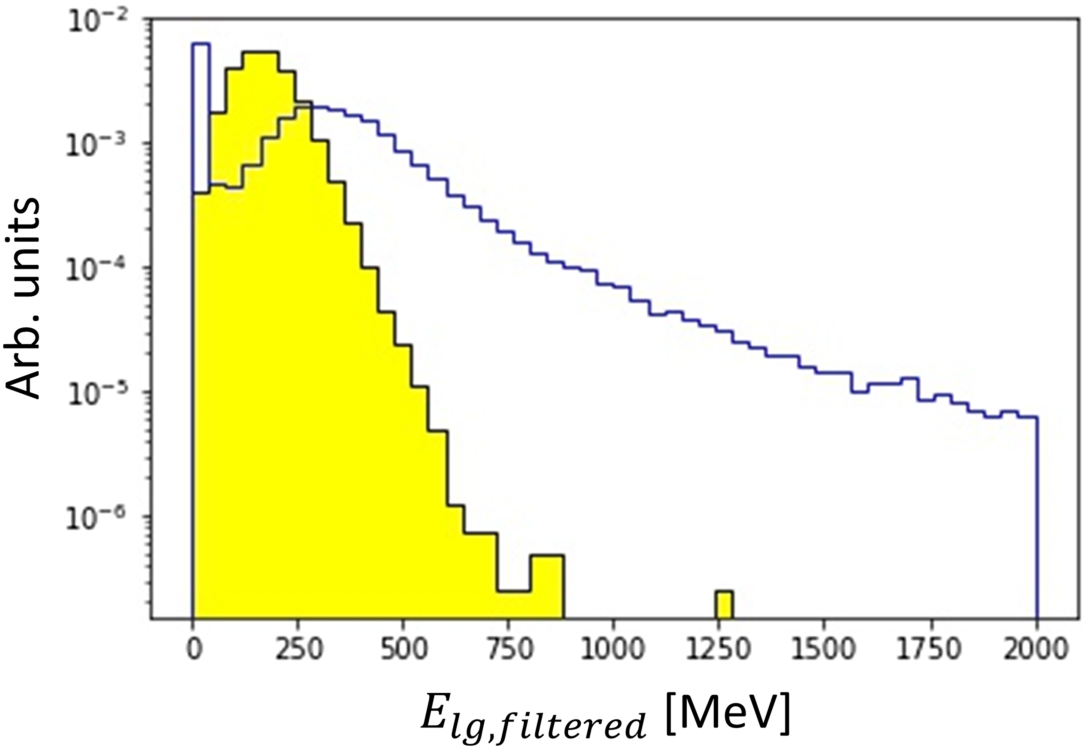

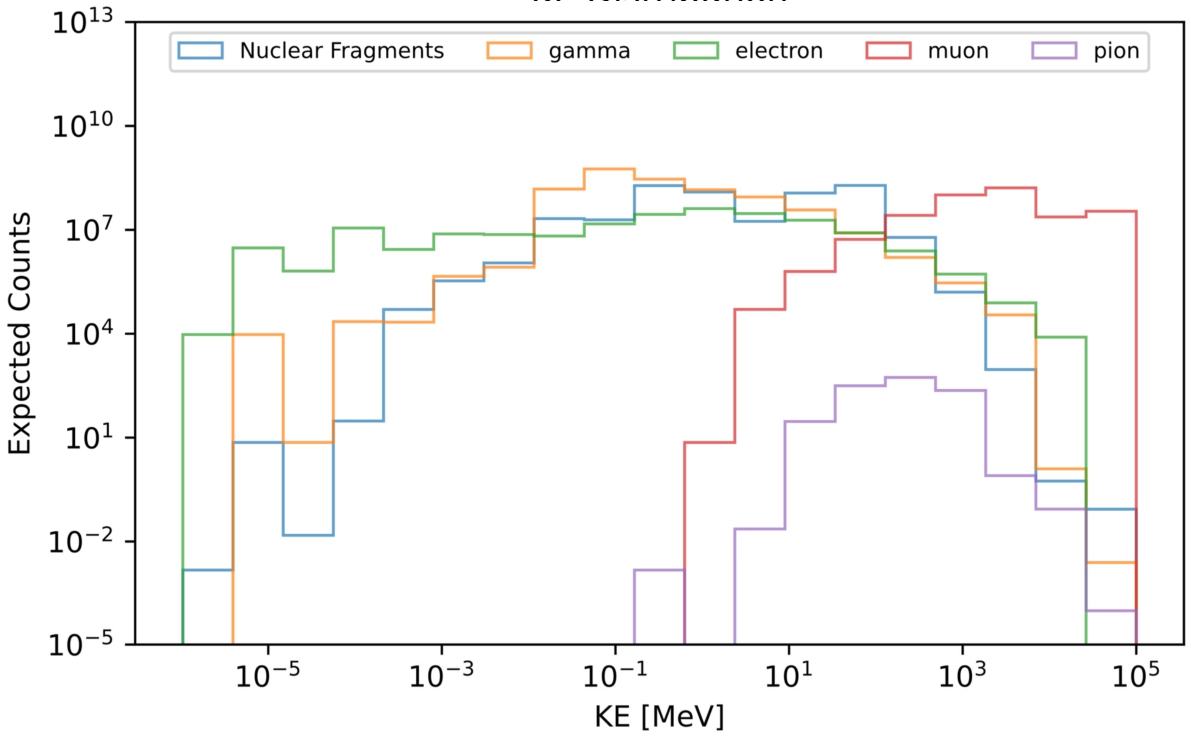

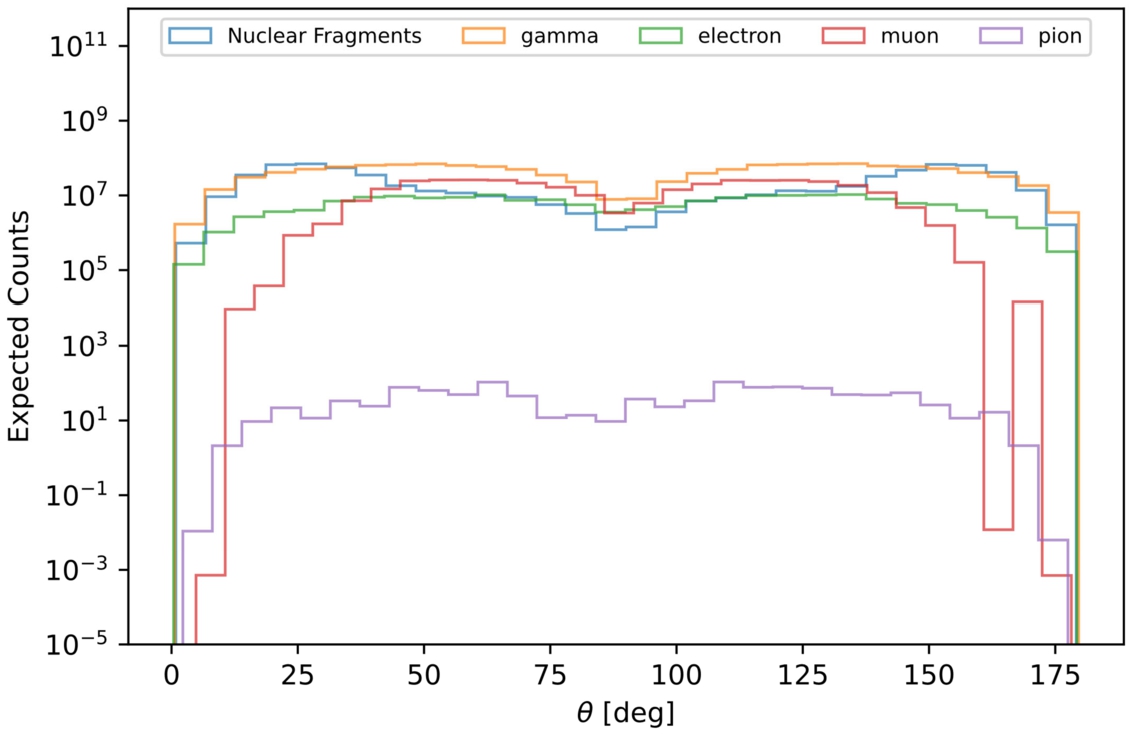

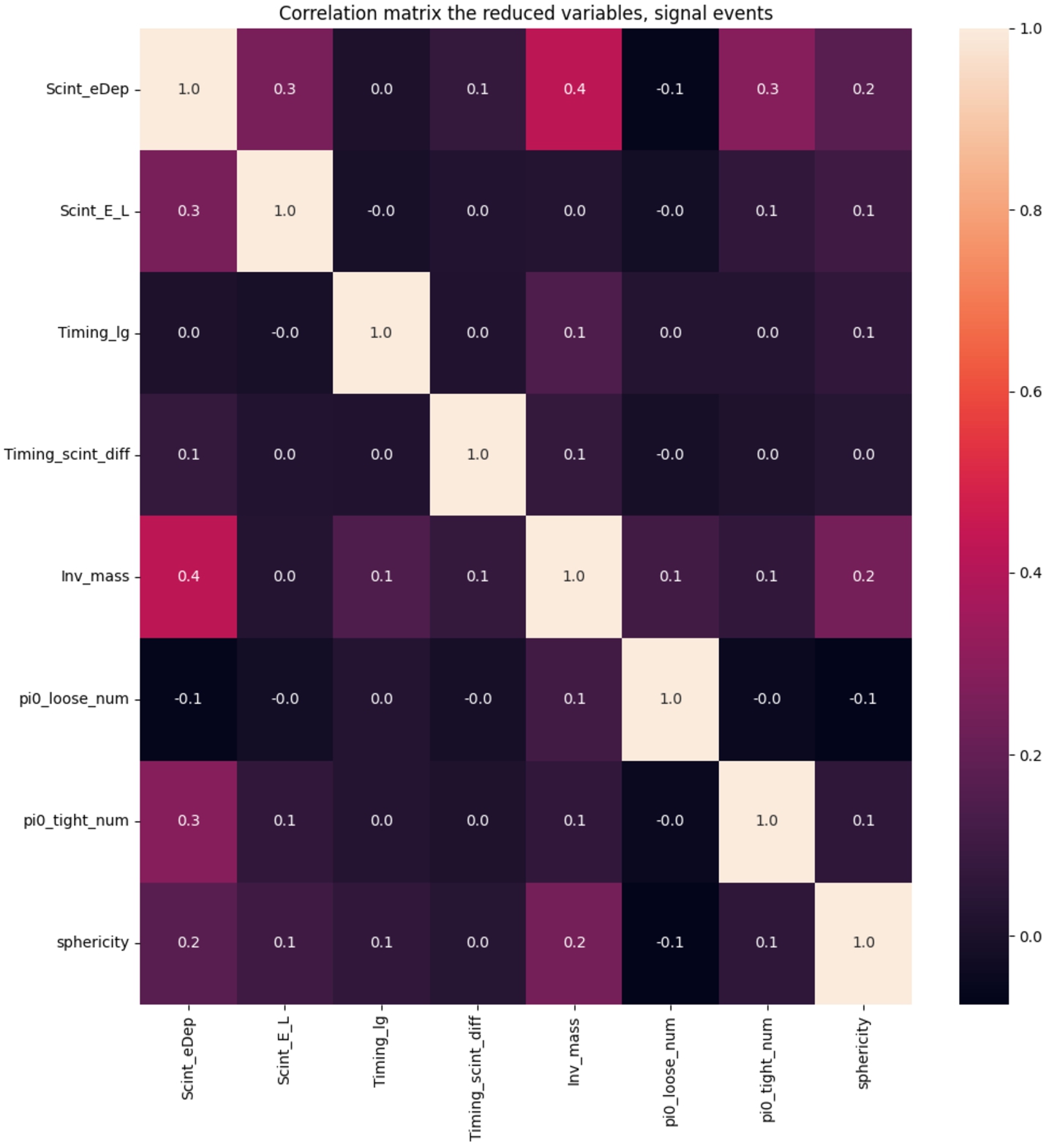

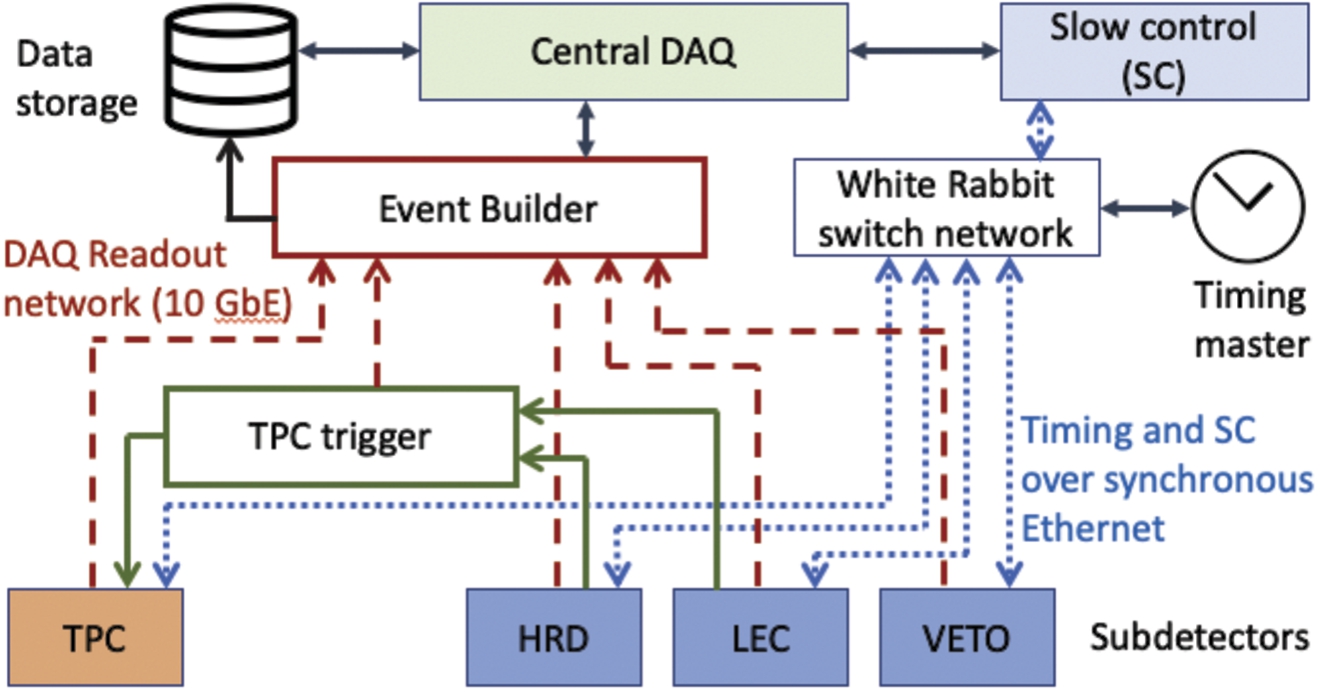

An overview of the simulation chain used to construct a comprehensive model of the NNBAR experiment is given in Fig. 5. This simulation chain consists of multiple components, each dedicated to specific aspects of the experiment. It effectively tracks the path of neutrons as they are focused and propagate towards the annihilation detector. The first step is the simulation of the neutron source as described in Section 2 in Reference [161]. Following this, several different simulations are necessary depending on the particle energy. The reflection and focusing of cold neutrons is modelled using McStas [196]. The low magnetic field region is designed and optimized using the finite-element simulation tool COMSOL [81,82]. The propagation of fast neutrons is simulated using MCNP [108] or PHITS [166] in order to determine the required beamline shielding as well as their contribution to the experiment’s radiation background. The MCPL format [128] is used to exchange particle trajectories between the different tools.

The annihilation detector, which observes the multi-pion final state of the annihilation of antineutrons on the carbon foil target, must also be modeled. This simulation relies on GEANT4 [20], a standard simulation toolkit widely employed at particle physics experiments for detector simulations. The annihilation events are generated using a simulation tool that assumes an initial antineutron interacting with a carbon nucleus [48,50,106]. The detector modeling also requires the simulation of backgrounds, such as fast spallation products interacting with the foil, and external backgrounds, such as those arising from cosmic rays. The cosmic ray particles are generated using the Cosmic-ray Shower Library (CRY) [113].

In addition to the GEANT4 full simulation used for the analyses, parameterizations of the detector subsystems were also developed and employed in some cases to provide a fast estimate of the detector response and to cross-check the results obtained from the full simulation.

Details of some of the infrastructure necessary for NNBAR can be found in the first volume of the HighNESS CDR [161]. Section 1 of that document gives an overview of the ESS. The design of a

The NNBAR beamline

Following their production in the moderator, the neutrons undergo extraction via the LBP, as detailed in Section 1 of Reference [161]. These neutrons are then directed and focused toward the annihilation target, located at a distance of 200 m from the neutron source.

The LBP holds a central and indispensable role in the NNBAR experiment. Without this infrastructure, the experiment’s sensitivity objectives could not be reached. The LBP is strategically designed to occupy the equivalent space of three standard ESS beam ports. The physical dimensions of the LBP undergo a gradual increase in size as it extends from a modest area of

The LBP not only serves to transport cold neutrons for use in the NNBAR experiment through the target monolith but also leads to a substantial increase in radiation outside of it. Consequently, the NNBAR beamline requires additional shielding to handle the increased dose rate compared to a standard ESS beamline. To determine the necessary amount of shielding, simulations of the entire NNBAR beamline, including the experimental cave and the beam stop, were conducted using the radiation transport code MCNP [108]. A detailed model of the NNBAR beamline was constructed for these simulations using CombLayer [30]. The CombLayer software package allows the construction of geometry models in C

The complete simulation of a beamline, particularly one as large as NNBAR, is computationally expensive. To make these calculations feasible, the simulation was split into separate simulations of different sections of the beamline. For each of these sections, inputs were generated using the duct source method [87], a standard variance reduction method for long beamlines. However, the unique characteristics of the LBP necessitated the development of a dedicated duct source method. Unlike most regular beamlines at ESS and other neutron facilities, where the neutron distribution can be reasonably assumed to be uniform over the area of their beam port, the large size of the LBP precludes such an assumption. Consequently, when generating the simulation input, it was essential to account for the correlations between neutron position, direction, and kinetic energy.

The coordinates of particles coming from the moderator were sampled to reproduce the distribution at 2 m from the source. The particles were then assigned momenta following the duct source method and kinetic energies following a uniform distribution. Finally, each of these tracks was assigned a particle weight based on the probability density function calculated from their position, momentum and energy in order to reproduce the original distribution [116]. All the beamline calculations presented in this section were made using this methodology.
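The weighting step described above is a form of importance sampling: tracks are drawn from a convenient distribution and then weighted by the ratio of the target density to the sampling density. The sketch below illustrates the idea in one dimension only (kinetic energy drawn uniformly), whereas the method in the text also accounts for position and direction. The toy spectrum `toy_pdf` and all function names are hypothetical.

```python
import random
from typing import Callable, List, Tuple


def sample_weighted_energies(pdf: Callable[[float], float],
                             e_min: float, e_max: float,
                             n: int, seed: int = 0) -> List[Tuple[float, float]]:
    """Draw n energies uniformly and weight each by pdf(E) / q(E)."""
    rng = random.Random(seed)
    q = 1.0 / (e_max - e_min)  # density of the uniform sampling distribution
    tracks = []
    for _ in range(n):
        e = rng.uniform(e_min, e_max)
        tracks.append((e, pdf(e) / q))
    return tracks


def weighted_mean(tracks: List[Tuple[float, float]]) -> float:
    """Weighted mean energy, which estimates the mean of the target pdf."""
    total_w = sum(w for _, w in tracks)
    return sum(e * w for e, w in tracks) / total_w


def toy_pdf(e: float) -> float:
    """Hypothetical normalised spectrum on [0, 1]: p(E) = 2 (1 - E)."""
    return 2.0 * (1.0 - e)


if __name__ == "__main__":
    tracks = sample_weighted_energies(toy_pdf, 0.0, 1.0, 20000)
    print("weighted mean energy:", weighted_mean(tracks))
    print("exact mean energy:   ", 1.0 / 3.0)
```

Sampling uniformly in energy places as many tracks in sparsely populated parts of the spectrum as in densely populated ones, and the weights restore the original distribution when tallies are accumulated.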

Only the radiation doses caused by neutrons were calculated for the results presented in this section. Doses from other particles, e.g. gamma-rays, will be evaluated in the future. However, neutrons are expected to be the dominating source of radiation from the LBP and the NNBAR beamline, so the shielding solutions presented here should still be sufficient when taking other types of radiation into account.

NNBAR beamline simulation in the bunker area

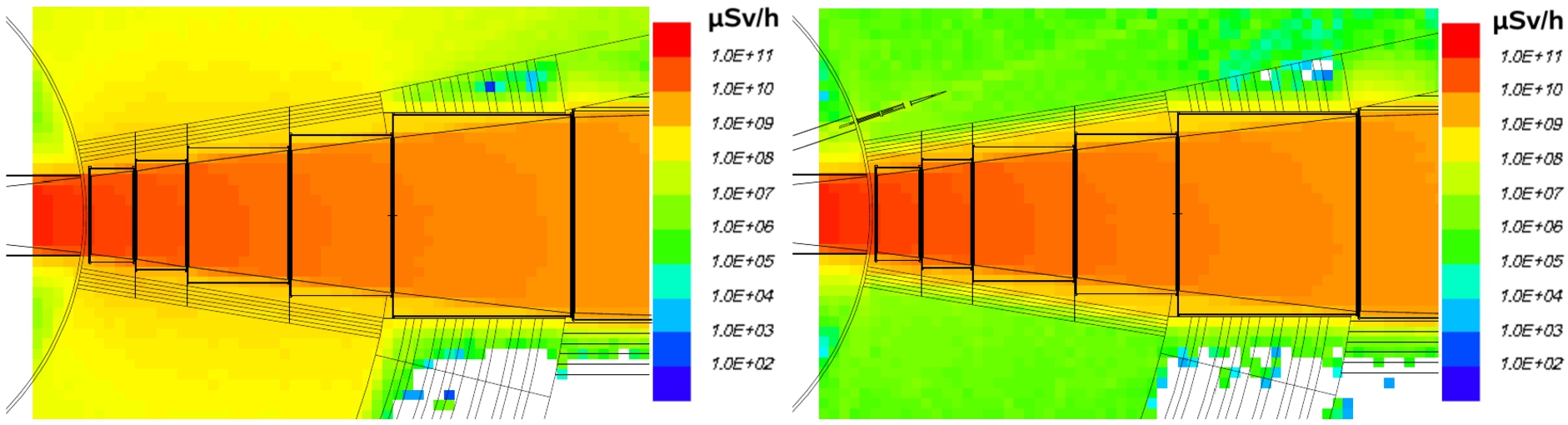

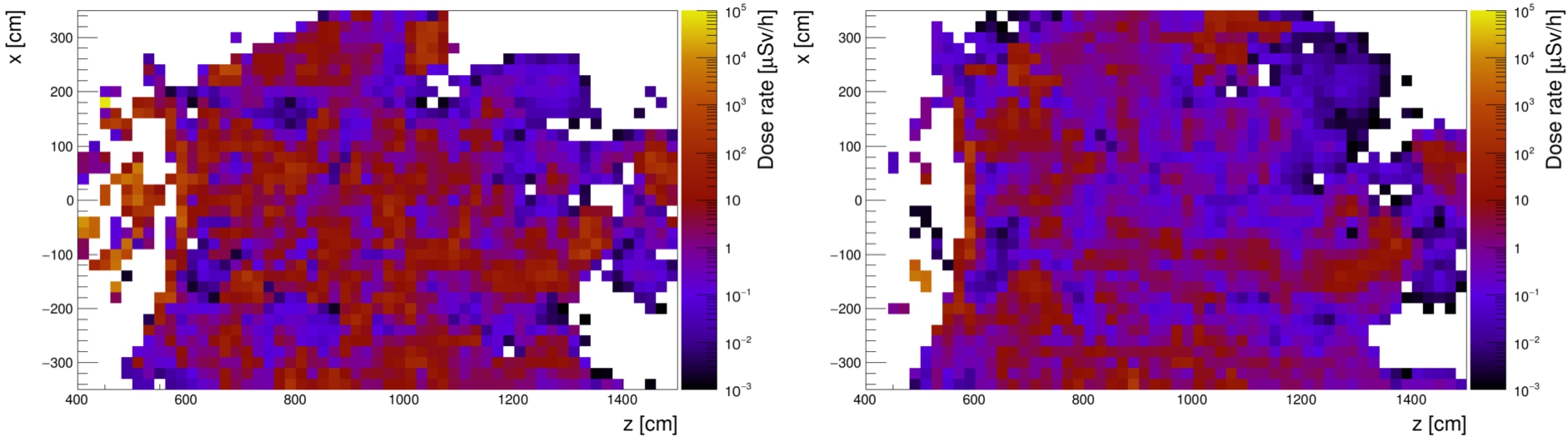

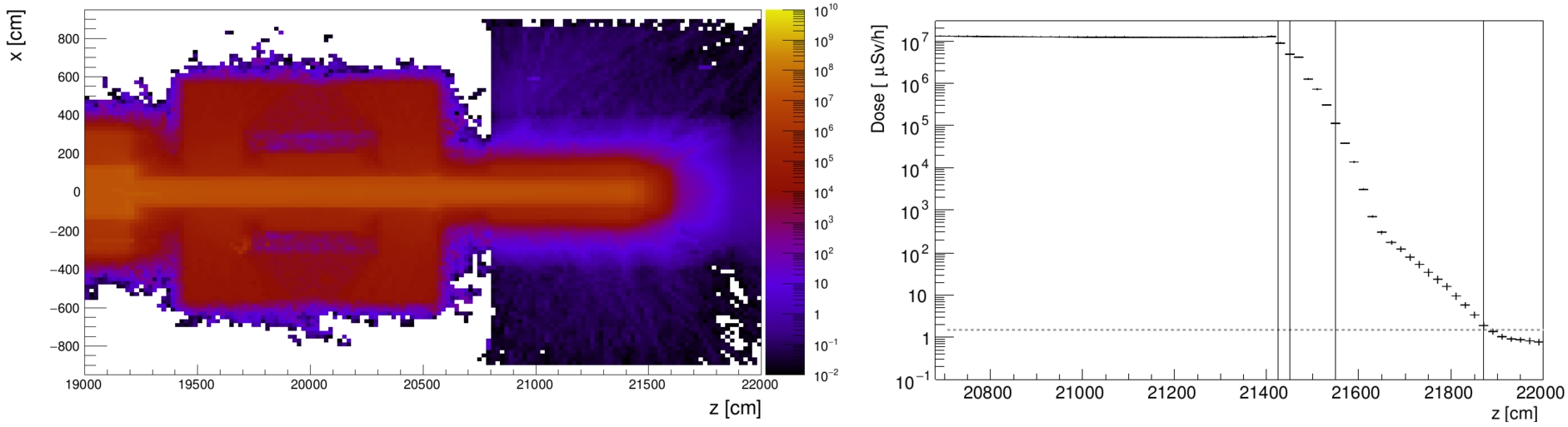

Radiation dose map of the inside of the bunker close to the LBP. Left: radiation dose map without additional shielding. Right: dose map with 40 cm thick walls of heavy concrete around the NNBAR beamline.

The ESS bunker wall was designed with the objective of reducing the radiation dose outside it to a level below the threshold defined with respect to the supervised area at the ESS [189]. This threshold is set at

The current design of the bunker wall achieves this target value for the parallel use of 22 standard ESS beamlines at 5 MW without the need for shielding inside the bunker. However, in the case of the NNBAR experiment, due to the size of the LBP, shielding inside the bunker will be necessary. This is illustrated in the left panel of Fig. 6 which shows a neutron dose map of the bunker interior. The resulting dose in the vicinity of the beamline exceeds 100 Sv/h, several orders of magnitude higher than is expected from regular ESS beamlines.

The right panel shows the situation when the beamline is surrounded by 40 cm thick walls of heavy concrete. The dose outside the shielding is reduced by two orders of magnitude and is similar to or somewhat lower than that of a standard ESS beamline. In Fig. 6 above the LBP, the first part of the guide for the HEIMDAL beamline is depicted. As can be seen, the additional shielding effectively reduces the background dose generated by the LBP to a level below that produced by HEIMDAL. Thus, it is evident that the dose has been reduced to a sufficient extent, allowing the bunker walls to shield it below the specified limit.

Dose map of the top of the existing ESS bunker roof when running the LBP.

The requirements for the bunker roof differ from those for the wall. Since personnel will not be working regularly on the roof, the dose limit criteria established by the ESS are slightly higher than those for the bunker wall. The limit is set at 25 μSv/h though, after applying a safety margin, this reduces to 12.5 μSv/h. A further factor taken into account for the design of the bunker roof is the dose in other areas, such as the ESS main office building, due to skyshine. The limit for the dose received due to skyshine is 100 μSv/y [200]. Once again, the design of the bunker roof was selected to meet these limits when operating with the complete set of regular ESS beamlines. However, in the case of NNBAR, it will be necessary to add extra shielding in the area near the LBP. Figure 7 illustrates the situation without any additional shielding. The average dose on top of the roof directly above the LBP is calculated to be 3.3(4) mSv/h. A conservative empirically based estimate of the resulting skyshine can be made using Equation (7).

Dose map of the top of the existing ESS bunker roof with additional shielding of the LBP. Left panel: additional shielding of 70 cm of steel inside the bunker. Right panel: additional shielding inside the bunker (70 cm of steel) and on top of the roof (40 cm of heavy concrete).

The current study only investigated cases in which the existing roof itself is not modified. This leaves two options to add shielding to the bunker roof. The shielding can be added inside the bunker or on top of the existing roof. The size of the NNBAR beamline leaves space to add 70 cm of shielding material between the vacuum pipe and the bunker ceiling. In the current simulation, steel was chosen as the material for this additional interior shielding. The left panel of Fig. 8 shows the dose map on top of the roof with this addition. The average dose is reduced to 16(2) μSv/h and the skyshine, calculated using Equation (7), to 8.5(9) μSv/y. This is at the edge of the dose limit criteria.

The right panel of Fig. 8 shows the dose map when also adding 40 cm of heavy concrete on top of the current bunker roof. The average dose is 5.0(7) μSv/h and the skyshine 2.6(4) μSv/y. While more detailed studies are required, this seems sufficient to fulfill the ESS dose limits. Further studies need to be conducted to fully assess how to integrate this additional shielding into the bunker structure. Additionally, a discussion of the possible inclusion of shutters in this area should be undertaken. This is discussed in Section 19.1.
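The comparison of the quoted roof doses with the ESS criteria can be laid out as a short check. The sketch below uses only the central values quoted in this section and ignores their uncertainties. The safety factor of two is an assumption inferred from the reduction of the limit from 25 μSv/h to 12.5 μSv/h; the function names and category labels are hypothetical.

```python
ROOF_LIMIT_USV_H = 25.0        # roof dose limit quoted in the text
SAFETY_FACTOR = 2.0            # assumption: inferred from 25 -> 12.5 uSv/h
SKYSHINE_LIMIT_USV_Y = 100.0   # skyshine limit quoted in the text


def roof_status(dose_usv_h: float) -> str:
    """Classify an average roof dose against the limit and design target."""
    target = ROOF_LIMIT_USV_H / SAFETY_FACTOR
    if dose_usv_h <= target:
        return "meets design target"
    if dose_usv_h <= ROOF_LIMIT_USV_H:
        return "below limit, above design target"
    return "exceeds limit"


def skyshine_ok(dose_usv_y: float) -> bool:
    """True if the annual skyshine dose is within the quoted limit."""
    return dose_usv_y <= SKYSHINE_LIMIT_USV_Y


# (average roof dose in uSv/h, skyshine in uSv/y or None if not quoted)
CASES = {
    "no extra shielding": (3300.0, None),
    "70 cm steel inside bunker": (16.0, 8.5),
    "steel plus 40 cm heavy concrete on roof": (5.0, 2.6),
}

if __name__ == "__main__":
    for name, (roof, sky) in CASES.items():
        sky_text = "n/a" if sky is None else str(skyshine_ok(sky))
        print(f"{name}: roof {roof_status(roof)}, skyshine ok: {sky_text}")
```

With these inputs, the interior steel alone leaves the average roof dose between the 12.5 μSv/h target and the 25 μSv/h limit, consistent with the description of that option as being at the edge of the criteria, while the combined option falls below the target.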

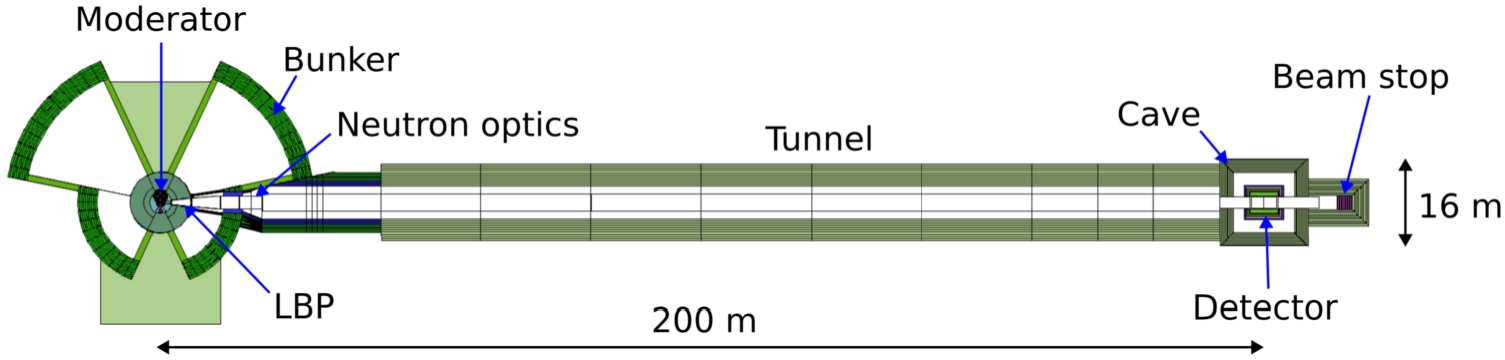

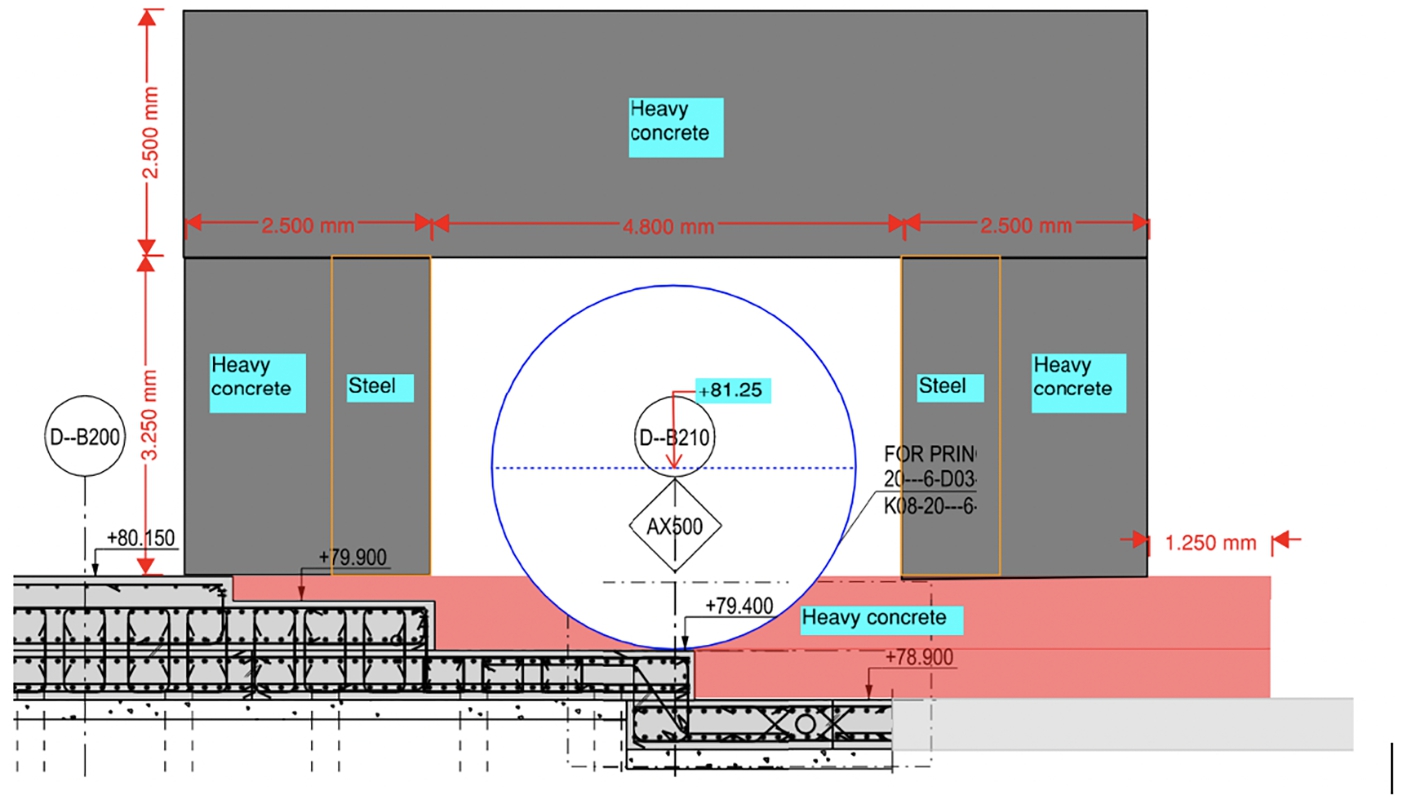

Detailed view of the CombLayer model of the NNBAR experiment for the region from the bunker wall until the exit of the experimental hall.

The construction of the NNBAR experiment implies the installation of a feed-through in the bunker wall, as well as the creation of an opening large enough to accommodate the NNBAR vacuum pipe and the magnetic shielding structure (see Section 11). Biological shielding must be sufficient such that the specified dose limits for the supervised area are not exceeded. The vacuum pipe has a rectangular cross section of 2.4 m × 2 m up to 18 m from the moderator. After this, the pipe has a circular cross section with a radius of 1.5 m. It is worth pointing out that existing instruments and infrastructure within the ESS instrument hall impose constraints on the dimensions of the shielding.

The model of the tunnel used in the simulations is shown in Fig. 1. Figure 9 provides a more detailed view of the region extending from 10 to 50 m from the moderator. The initial 25 m, starting from the bunker, remain within the confines of the D03 experimental hall (as shown in Fig. 7 of Section 1 of Reference [161]). Within this zone, the NNBAR tunnel’s wall configuration comprises 1 m of steel backed by 1.5 m of heavy concrete. This design was chosen to minimize interference with existing structures and adjacent experiments, notably the LOKI experiment located to the south of the NNBAR experiment. After leaving the bunker, the tunnel widens, providing both room for the magnetic shielding of the beamline and facilitating access to the tunnel for tasks such as vacuum maintenance.

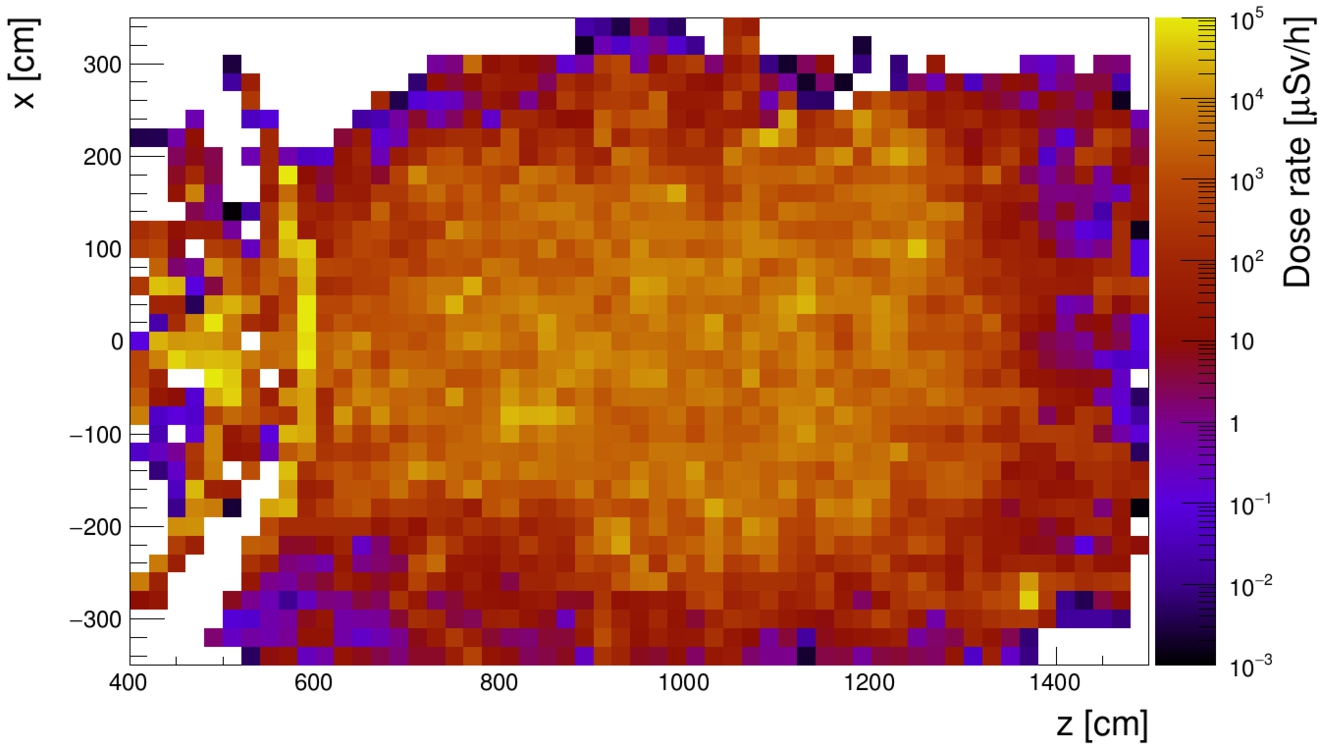

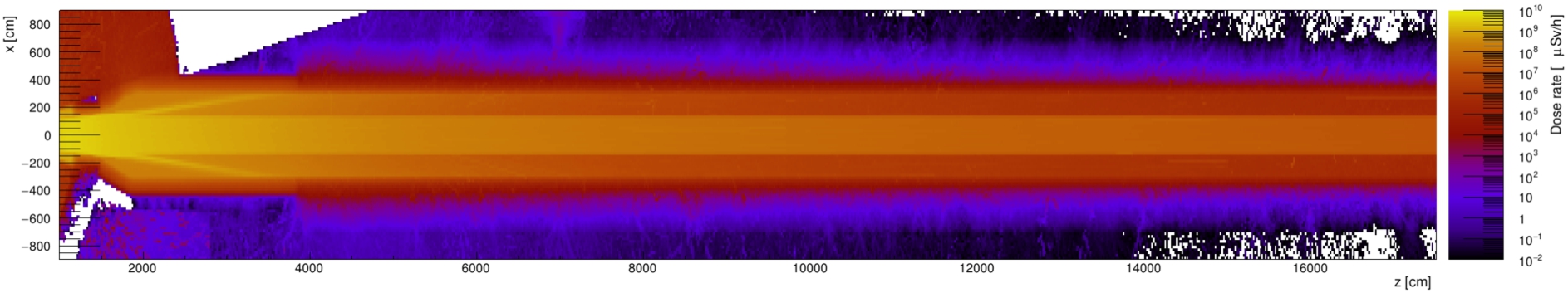

Dose map of the NNBAR tunnel.

Beyond the D03 experimental hall, there are fewer space constraints, allowing for the use of regular concrete as the wall material. In the simulations, a uniform wall thickness of 4 m was initially employed for the entire tunnel. However, the simulation results indicate that this thickness is not required along the entire length.

Figure 10 illustrates the dose map of the NNBAR beamline from the bunker wall up to 175 m away from the source. The dose rate remains below the limit of

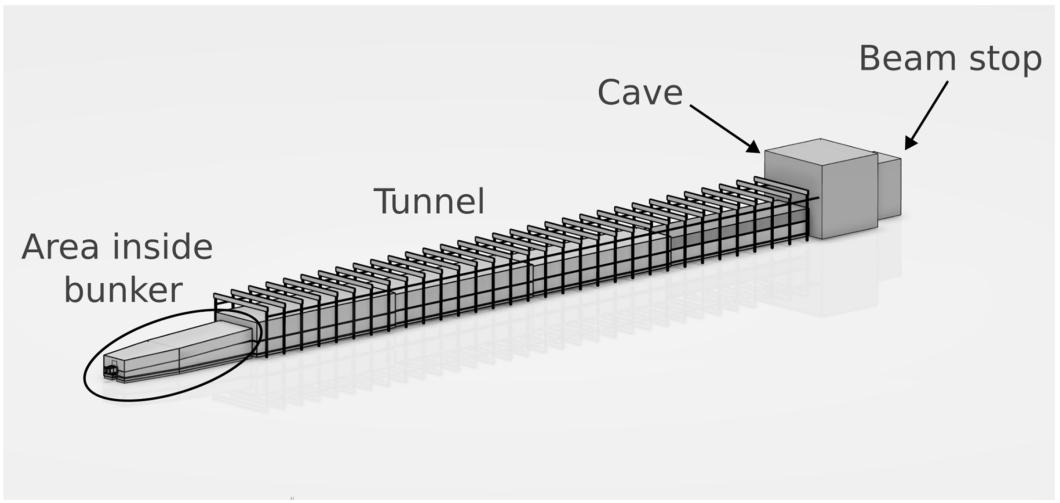

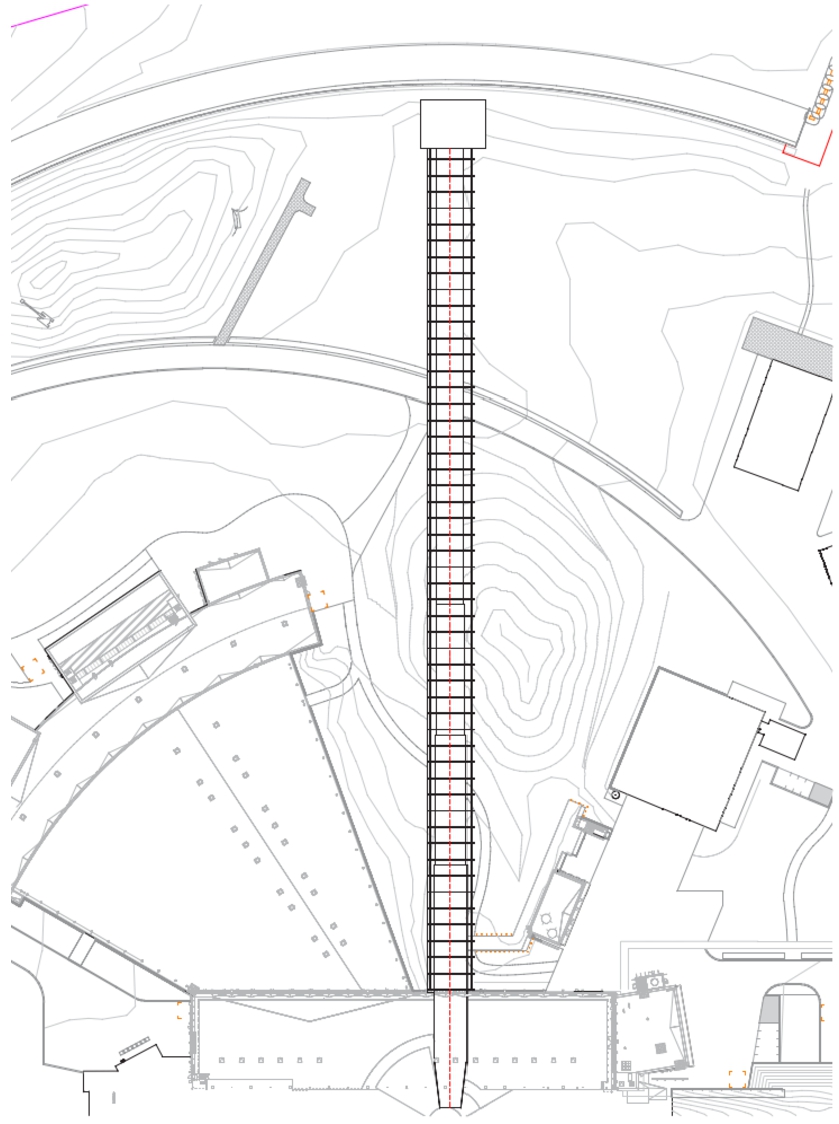

The shielding dimensions presented in this section have formed the basis of a CAD model of the NNBAR beamline. The model includes the area inside the experimental hall, the tunnel, experimental cave and beam stop. An overview of the model is shown in Fig. 11.

CAD model of the NNBAR beamline.

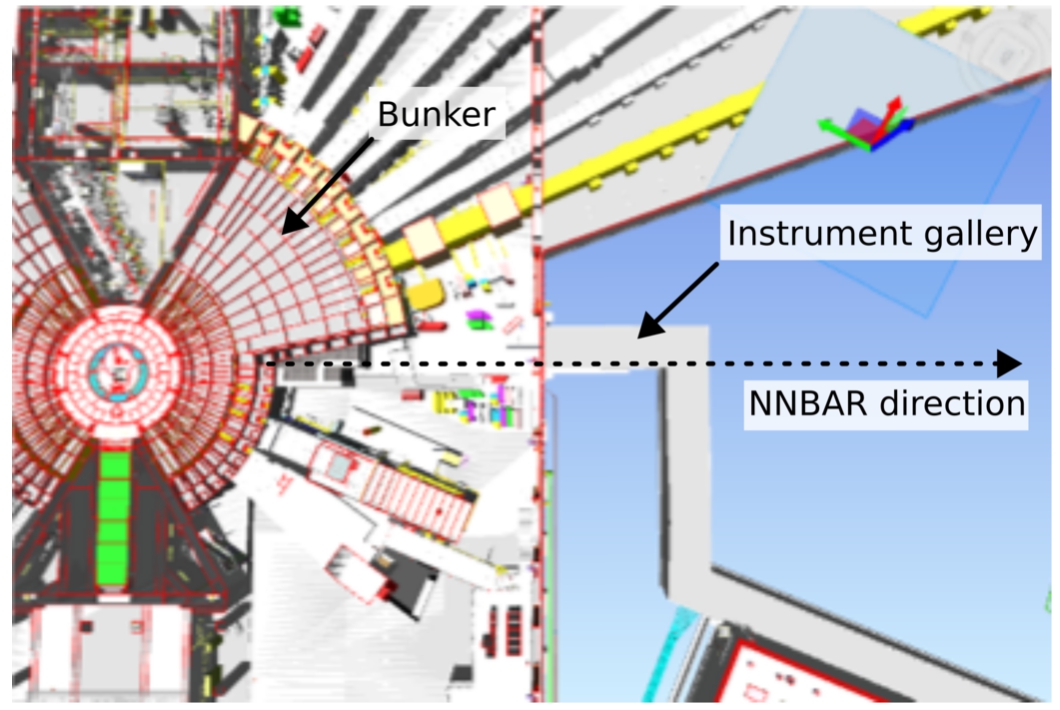

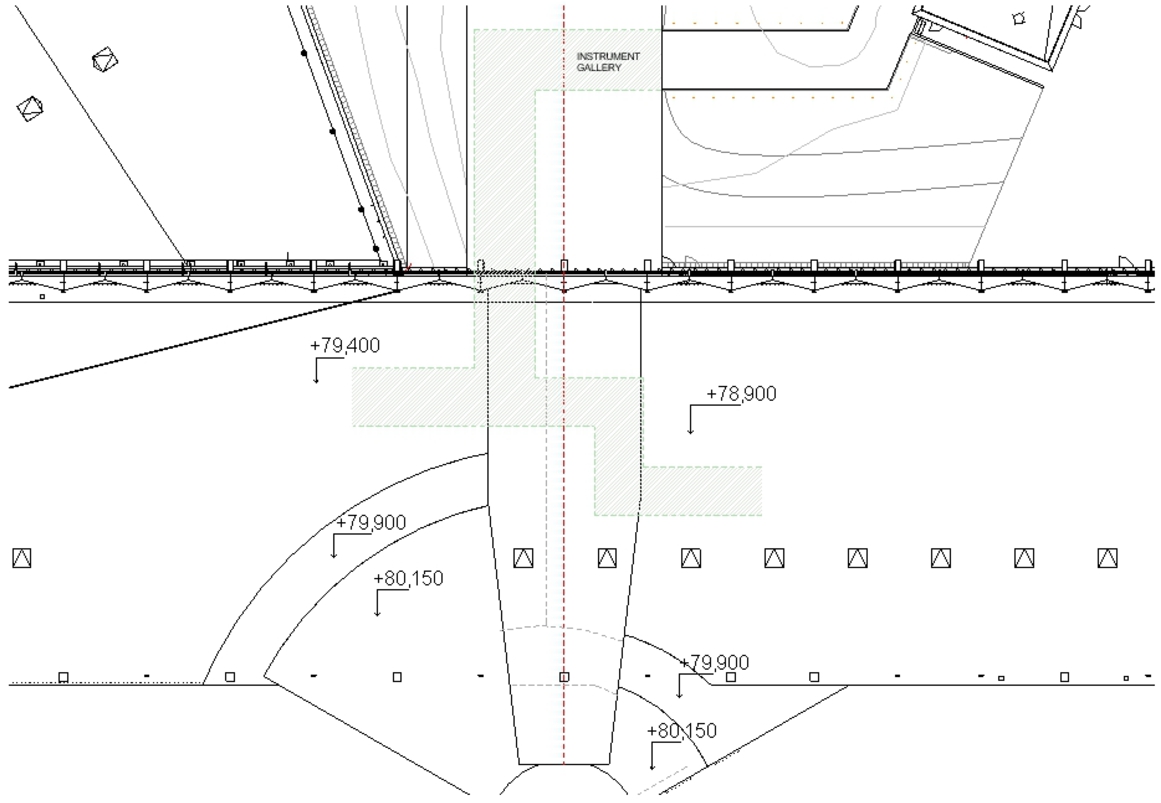

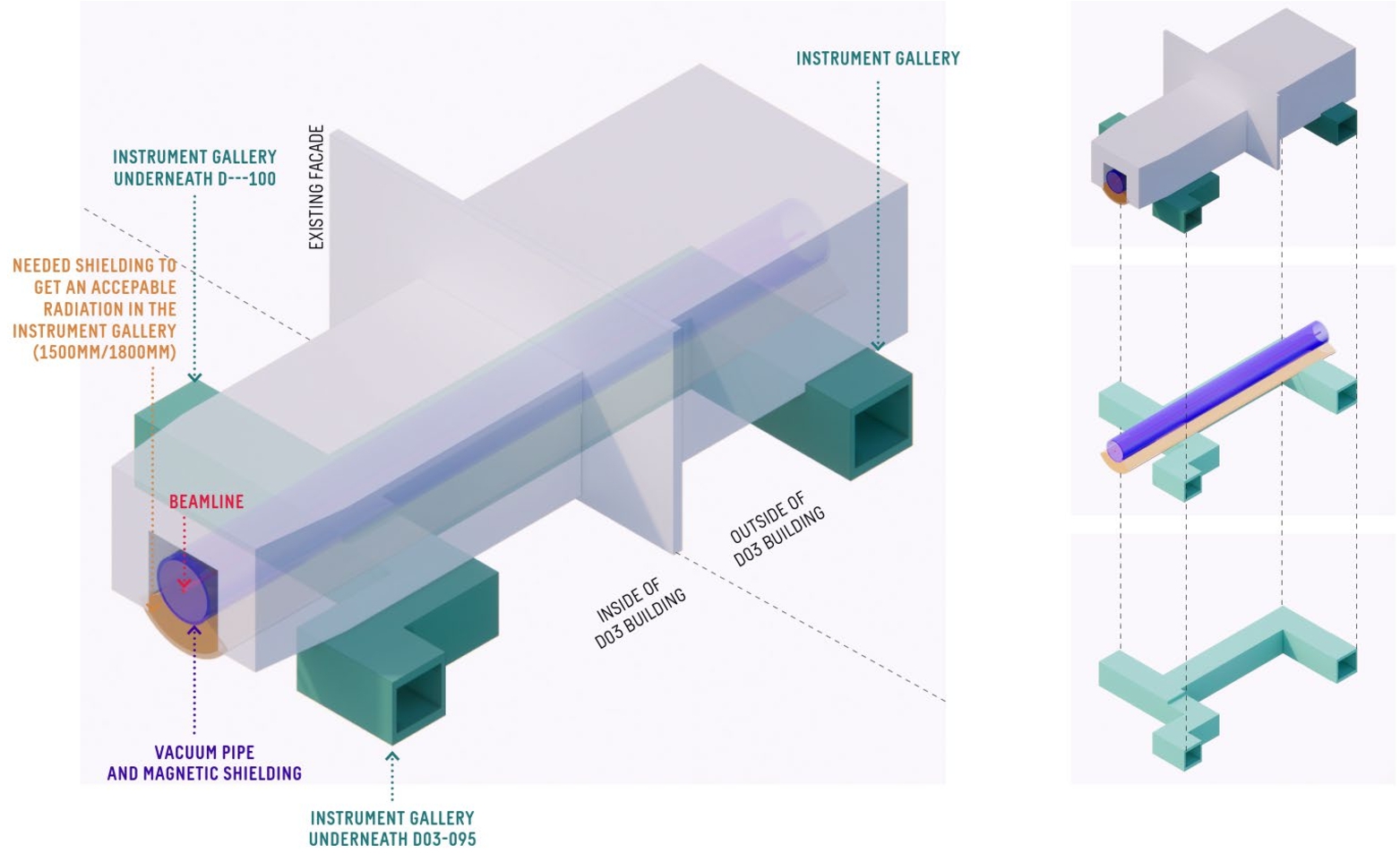

A particular challenge in designing the shielding for the NNBAR tunnel is the presence of the already existing instrument gallery located beneath the D03 building, as shown in Figs 12 and 13. The instrument gallery serves the purpose of housing supporting equipment such as ventilation systems and instrument-related pipes. Notably, the gallery intersects with the planned tunnel approximately 30 m from the moderator, takes a turn, and then runs parallel to the tunnel for approximately 25 m. The gallery has a roof made of 55 cm of regular concrete, which will require replacement and reinforcement. Additionally, to ensure adequate shielding, extra measures will be necessary in front of the gallery. This is discussed further in Section 19.1.

The instrument gallery located at the exit of the D03 building.

The interface between the NNBAR beamline and the ESS instrument gallery (circled in red).

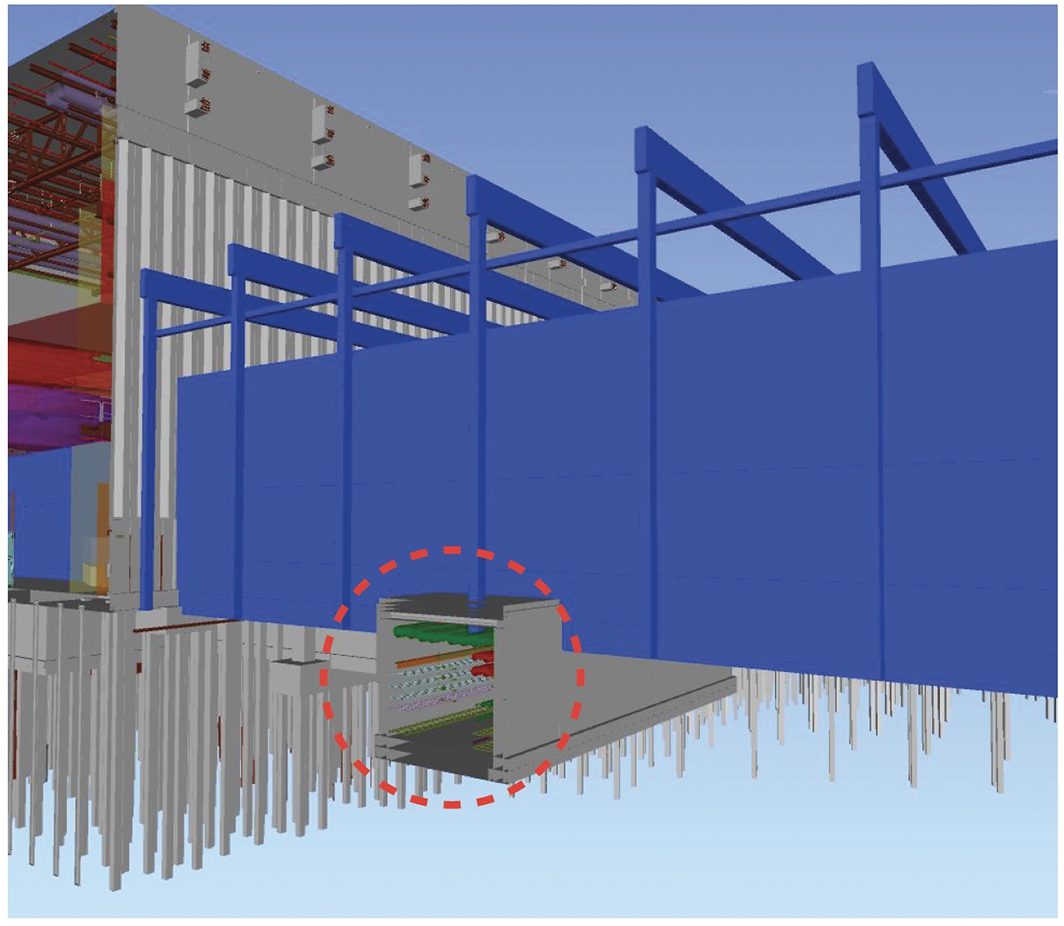

Dose maps for the NNBAR beamline and D03 instrument gallery for different shielding options. The investigated geometry is shown in the top row, the middle row shows the respective dose map, and the bottom row gives a projection of the dose map directly below the ceiling of the gallery for the respective geometry.

Different options for the gallery shielding are summarized in Fig. 14. In the left panels, while the gallery roof has been replaced, the dimension of the gallery has been kept the same and only the space available between the gallery and the NNBAR beamline has been used for additional shielding. In total, 105 cm of shielding have been placed above the gallery comprising 60 cm steel and 45 cm of heavy concrete. This is not sufficient to create a safe working environment, with the dose just below the ceiling of the gallery exceeding

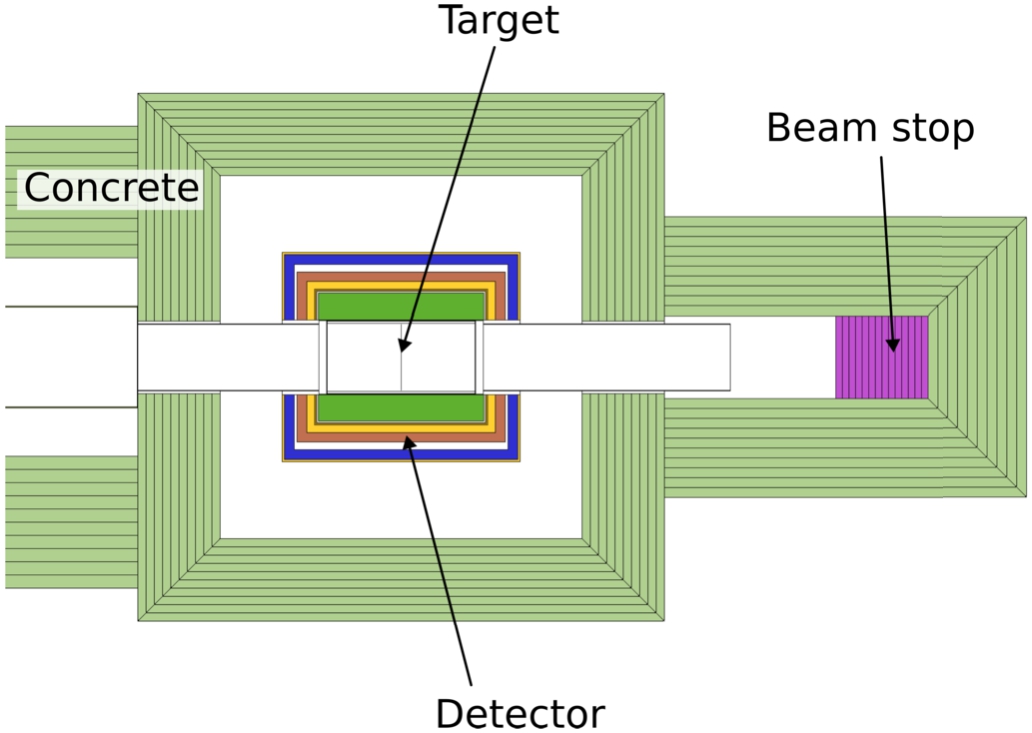

Model of the NNBAR cave and beam stop.

At the end of the NNBAR beamline, the annihilation target and the annihilation detector (as described in Section 13) will be positioned. This equipment will be housed in an experimental hall referred to as the ‘NNBAR cave’ (see Fig. 15). The hall will accommodate not only the detector but also auxiliary electronics and support structures. The NNBAR cave will receive the full NNBAR beam and thus necessitates robust shielding and a beam stop to safely terminate the beam.

In the current model, the front wall of the NNBAR cave is located at a distance of 192 m from the moderator. At this point, the beamline’s radius reduces from 1.5 m to 1.0 m. Importantly, the thickness determined for the far end of the tunnel (250 cm concrete or 175 cm heavy concrete) will also meet the ESS dose requirements for a supervised area. The beam stop will be positioned approximately 10 to 15 m from the annihilation target and is responsible for absorbing the entire neutron beam that travels down the NNBAR beamline. Therefore, it must have a diameter at least as large as that of the beamline itself. Various material combinations and thicknesses have been explored for the beam stop.

Left: dose map of the NNBAR cave and beam stop. Right: projection of the dose map at the center of the beam stop.

In Fig. 16, a dose map is presented for a beam stop configuration comprising a thin top layer (0.5 cm) of B4C, supported by 25 cm of polyethylene, 100 cm of steel, and 320 cm of concrete. The projection illustrates that this configuration is effective in reducing the dose to below

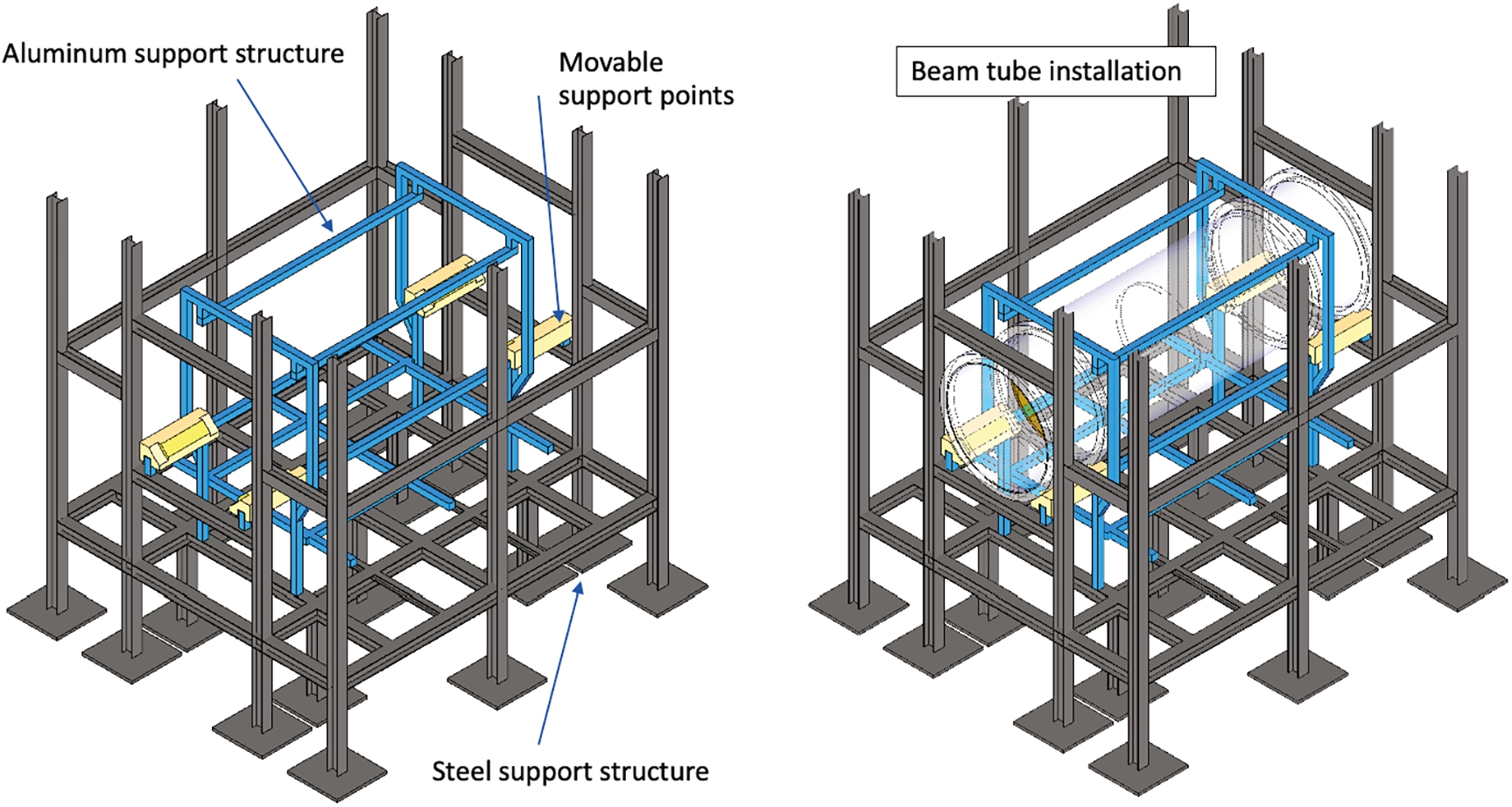

The construction of the NNBAR beamline will require a redesign of some parts of the existing facility that may interfere with the proposed beamline. In order to understand the extent of potential interference between NNBAR and ESS infrastructure and evaluate associated construction work, a civil engineering project was conducted as part of the HighNESS project. This project was a collaborative effort involving the NNBAR team, the ESS Facility Management division (FM), and the SWECO company [193]. It aimed to determine the required length of the NNBAR beamline, assess its structural requirements, and analyze factors such as width, height, and force loads related to shielding and surrounding structures.

Modifications to the bunker area and the D03 instrument hall

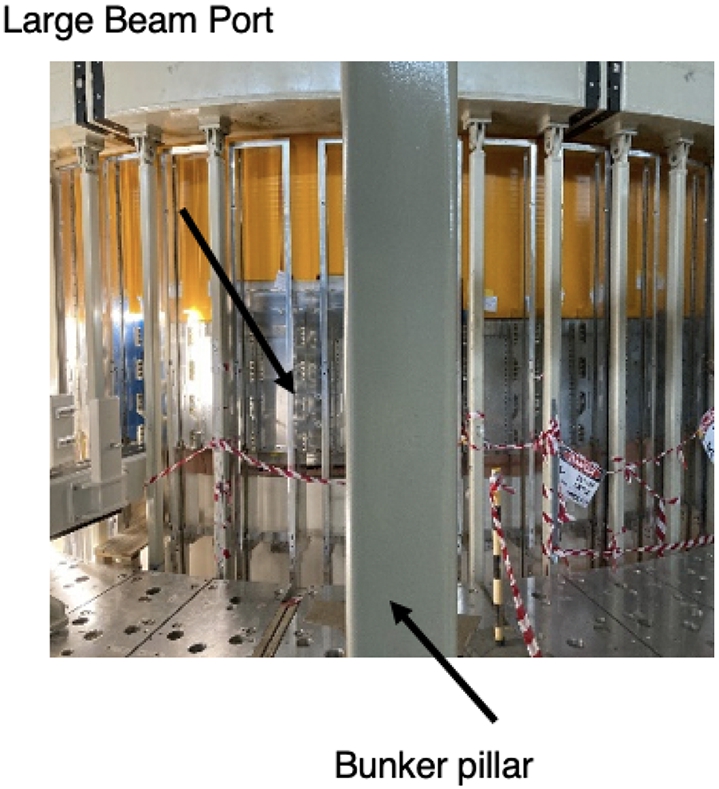

The first 15 m of the NNBAR beamline will be placed inside the neutron bunker (see Section 1 of Reference [161]) in the position currently occupied by the ESS Test Beamline. This beamline will be used in the early days of the ESS project to characterize the neutron source but could later be moved to a different location to allow the construction of the NNBAR experiment. The significantly larger size of the NNBAR beamline will introduce interference with the existing steel structure supporting the bunker roof as shown in Fig. 17.

Interference between the bunker steel structure and the NNBAR beamline.

This problem may find a solution as the steel structure is planned to undergo significant modifications. These modifications are necessary due to the additional shielding requirements for the bunker roof, driven by the radiation contribution from the NNBAR beamline (see Section 8.1). Additionally, modifications to the north sector bunker wall (see Section 1 of Reference [161] for an explanation of the ESS sectors) will be required to accommodate a sufficiently large feed-through.

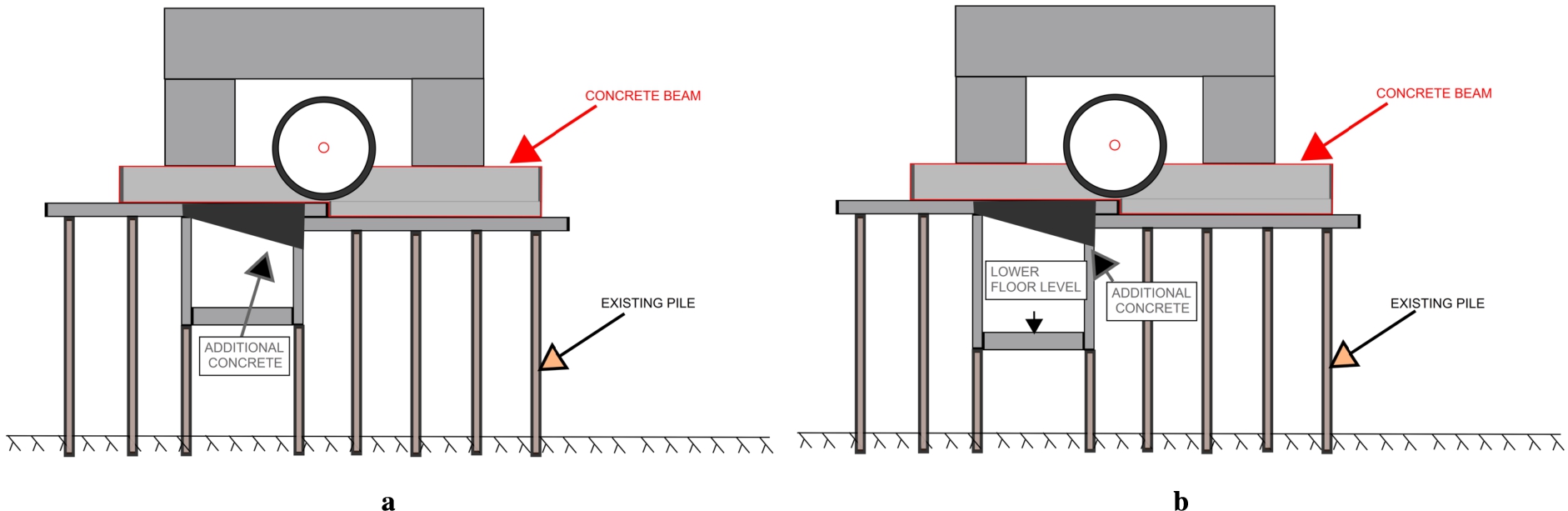

Beyond the bunker, the subsequent 21 meters of the beamline will be situated within the experimental hall of the D03 building. The existing concrete slab of the building has a load capacity of 14 t/m² (10 t/m² close to the facade), which is inadequate to support the heavy beamline shielding discussed in Section 8.2. Consequently, reinforcement of the floor will be necessary. This entails the addition of an extra distribution slab, measuring 750 mm in thickness towards the west sector and 1250 mm for the north sector section outside the bunker area, as shown in Fig. 18.

Proposed floor reinforcement solution for the NNBAR beamline for the D03 area.

Furthermore, the length of the beamline makes it necessary to cross the already existing D03 building facade. Given the substantial height and width of the NNBAR beamline and its shielding, it is likely that modifications to the anti-seismic steel structure frame and a section of the building foundation will be necessary. This is because one of the building pillars is situated in the path of the new beamline. However, it should be pointed out that the structure has been designed to accommodate the accidental removal of any of these individual columns. This was achieved by designing the truss structure to span a double bay, allowing for the accidental removal of one of the columns in the experimental hall. This design ensures that the structure is robust enough to withstand unforeseen events, such as the loss of a column due to incidents like a vehicle collision or a swinging crane load. Furthermore, this design approach offers a degree of future flexibility, as it allows for the intentional removal of one of the columns during structural alterations, as would be the case with the NNBAR beamline. In such a scenario, it will be necessary to reinforce the columns, crane beam, brackets supporting the crane beam, and the walkway support beams on adjacent columns (typically two or four). An independent analysis should assess the feasibility of this undertaking.

Interference between the NNBAR beamline and the ESS circular road for a beamline of length 300 m.

The proposed NNBAR beamline has a total length of 200 m, with approximately 160 m extending outside the D03 building before reaching the NNBAR cave (see Fig. 12 of the HighNESS CDR Volume I). While the optics studies in Section 10.9 determined that the 200 m design provides the best trade-off for experiment sensitivity, this section examines the implications of a longer beamline from a civil engineering perspective. As depicted in Fig. 19, an extension of the beamline beyond 200 m would imply intersecting with the circular road encircling the ESS area, necessitating a rerouting of the road. When considering the circular road as a boundary, a detailed analysis of the area near the NNBAR beamline confirmed that there are no conflicts between the beamline foundation and local utilities or subservices. However, a more thorough analysis is required to assess whether local modifications to the foundation are needed to support the loads of the shielding and the instrument cave. Furthermore, an investigation into the necessity of downward shielding to ensure the safety of subservice contents is pending. Nonetheless, given that the subservices are situated at a substantially lower level than the instrument gallery, as discussed in Section 9.3, it is very likely that this is not a significant concern.

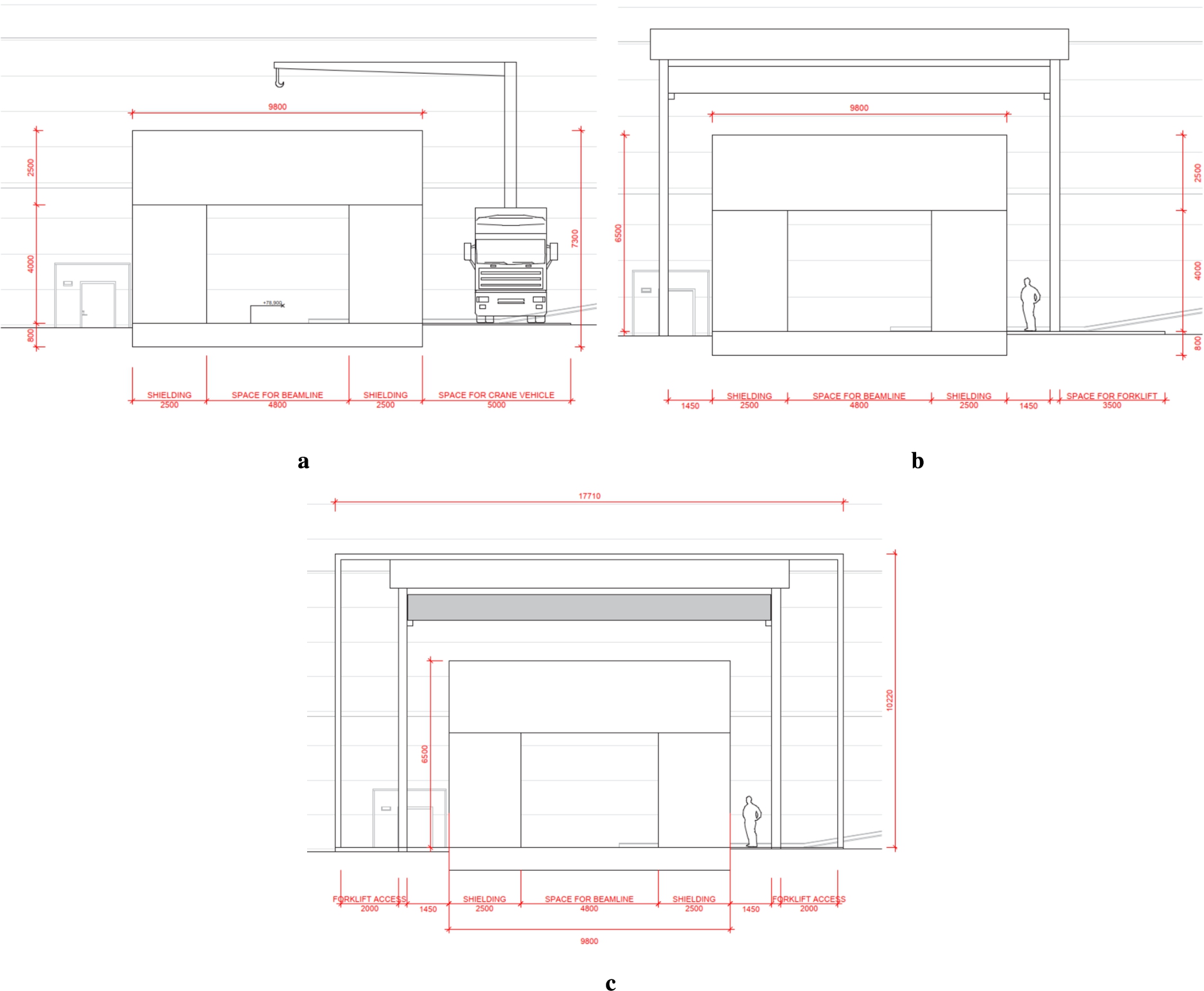

Different options for the NNBAR tunnel structure. A: shielding without weather protection and a crane vehicle. B: shielding and traverse-crane with roof. C: shielding, traverse-crane and full weather protection.

As presented in Section 8, the necessary shielding thickness outside the D03 hall depends on the chosen material and decreases over the length of the beamline. For regular concrete the required thickness ranges from 400 cm to 250 cm, while for heavy concrete it ranges from 250 cm to 175 cm. The use of heavy concrete is associated with a higher cost of material. A further study of the structure surrounding the shielding resulted in three possible options, as illustrated in Fig. 20 using the dimensions for heavy concrete. Figure 20a depicts the simplest option, which consists of a shielding layer without any additional weather protection and an autonomous crane vehicle for moving shielding blocks. Further investigation is needed to determine whether it is feasible to leave the structure unprotected, as exposure to water and wind could lead to damage. This option would require the construction of a service road along the entire length of the beamline. Nevertheless, it is likely the most cost-effective option in terms of construction. The second option is shown in Fig. 20b, consisting of shielding and a traverse-crane going along the entire structure, with a simple roof structure as weather protection. This option would also require a service road, but not of the same magnitude as for the first option, as it would not need to be adapted for such heavy vehicles.

Lastly, Fig. 20c shows the configuration featuring shielding and a traverse-crane, all enclosed within full weather protection, effectively creating an annex building connected to the D03 instrument hall. To accommodate incoming truck or forklift vehicles, the internal communication pathways must be sufficiently wide. This solution offers the advantage of simplified communication between D03 and the NNBAR experimental cave through internal walkways. However, this construction imposes different requirements on installations, such as ventilation, electrical systems, and plumbing.

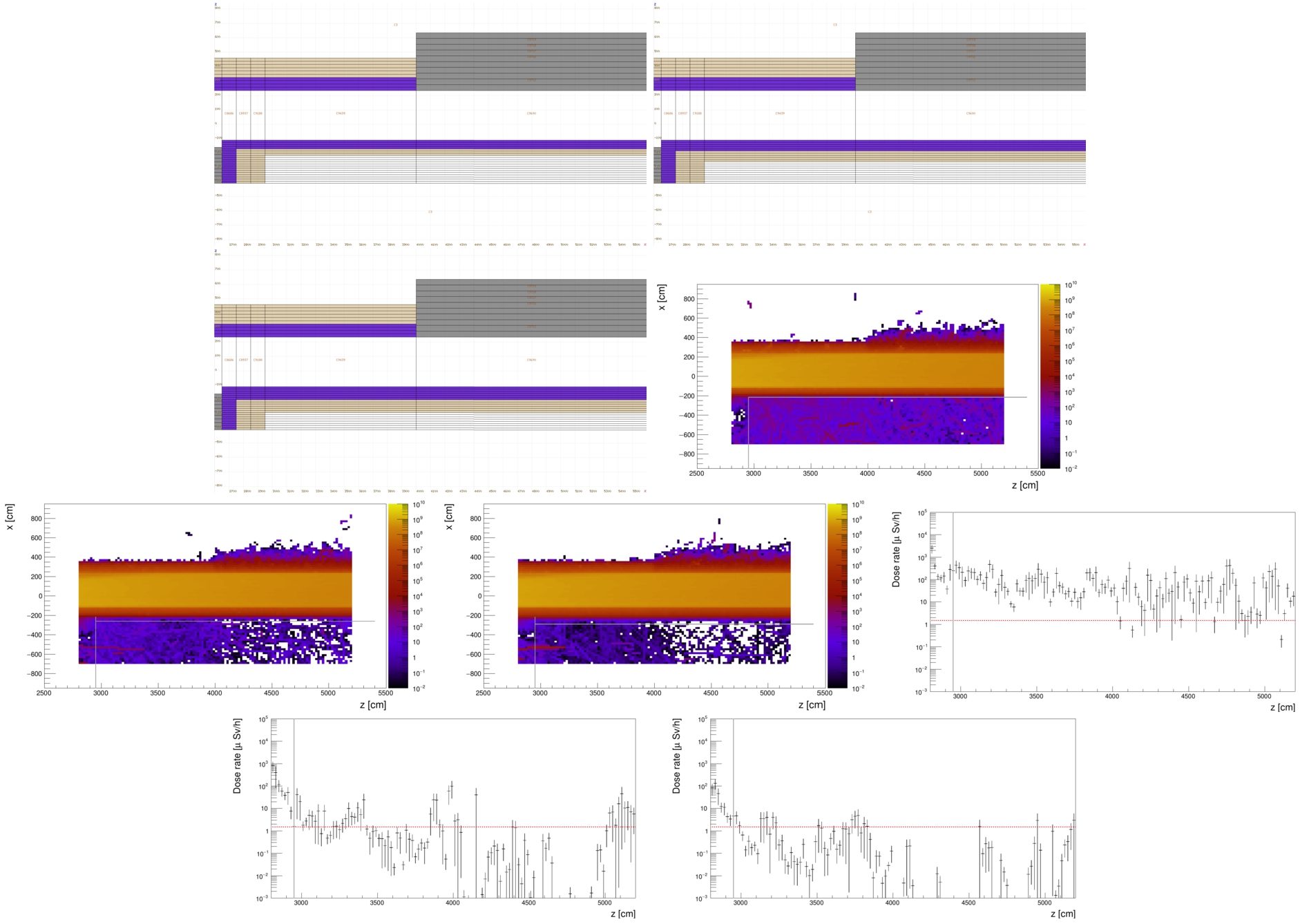

Position of the instrument gallery in relation to the beamline. The instrument gallery is shown in green and the beamline in red.

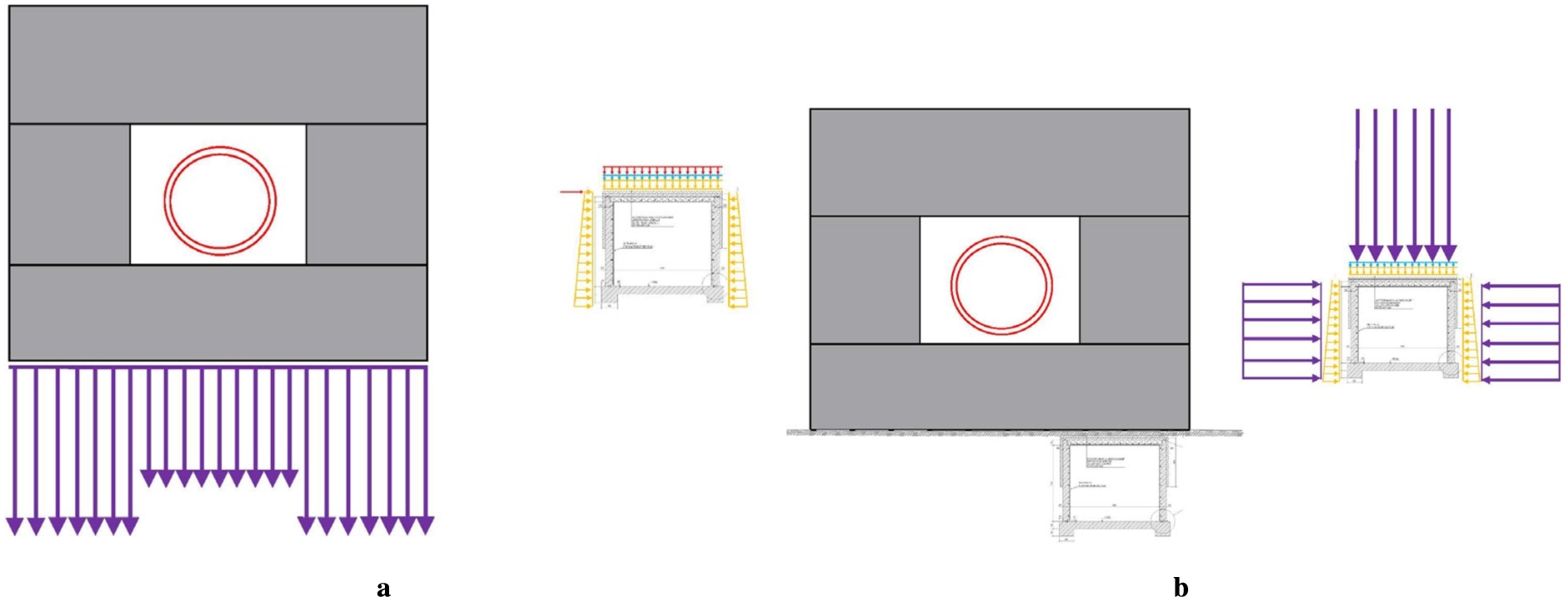

As discussed in Section 8.3 and illustrated in Fig. 13, the position of the instrument gallery intersects with the NNBAR beamline, resulting in overlapping structures at multiple points (see Fig. 21). This situation presents a complex engineering challenge, as it requires both shielding the instrument gallery from the radiation emitted by the beamline and managing the load transferred from the beamline to the gallery.

Position of the instrument gallery in relation to the beamline. The instrument gallery is shown in green and the beamline in red.

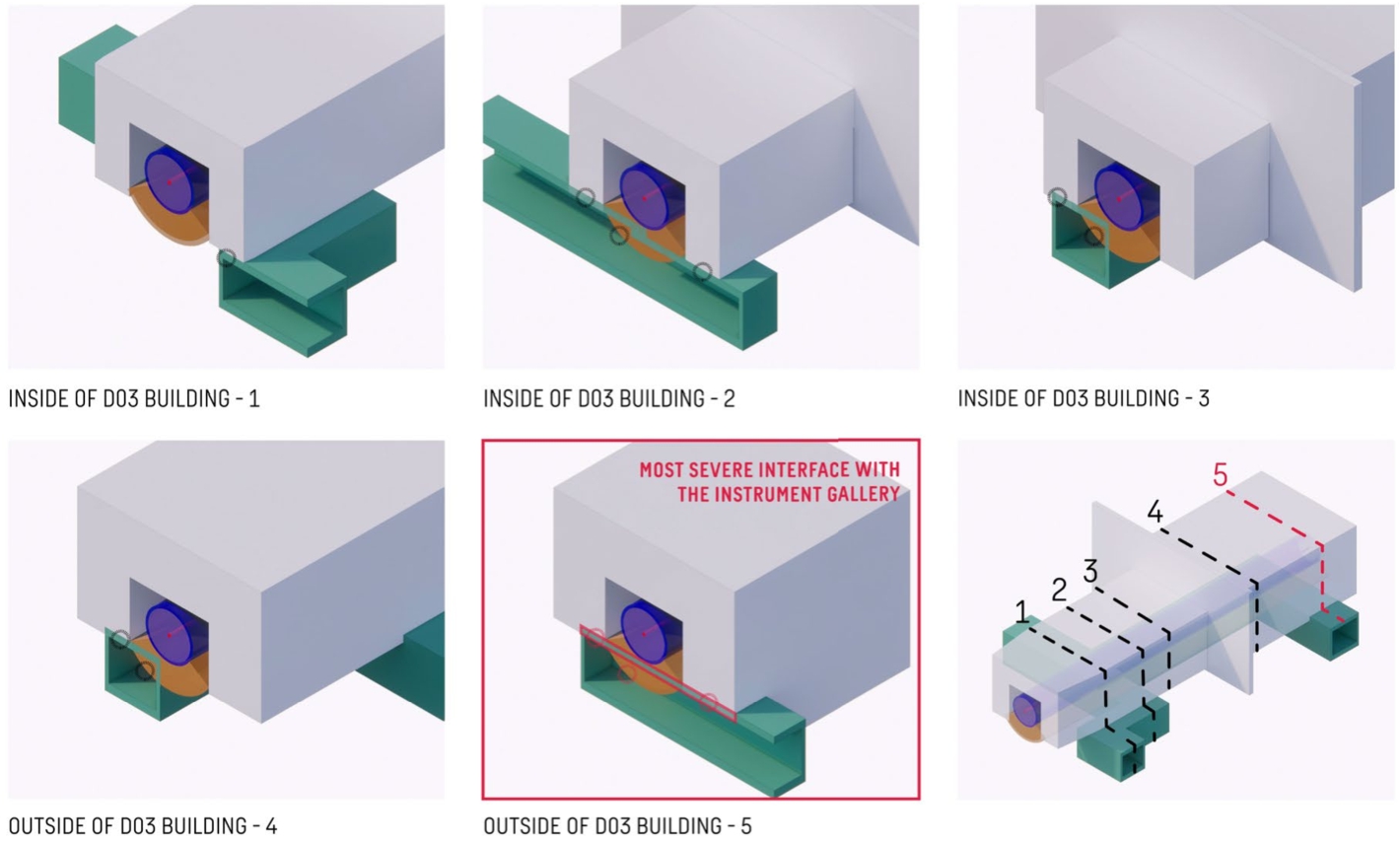

Interfaces between the instrument gallery and the NNBAR beamline. Conflicts are highlighted with circles.

As was shown in Section 8.3, between 150 cm and 180 cm of shielding material (half steel, half heavy concrete) is required to reduce the radiation from the NNBAR beamline to an acceptable level in the instrument gallery. Figure 22 shows a 3D sketch of the beamline (purple) and the gallery (green). The orange volume shows the space needed for the shielding. The pictures on the right side of Fig. 22 show the disassembled beamline, to give an impression of the relation between the instrument gallery and the beamline. It is clear that there is not enough space in between for the shielding. This is also illustrated in Fig. 23, where the interface between the gallery and the beamline is shown in five different positions. Circles highlight the conflicts between the two. This leaves two options: raising the beamline, or adding shielding inside the instrument gallery, which would require the rerouting of existing pipes.

Figure 23 highlights not only direct conflicts between beamline and gallery but also issues due to the load caused by the shielding. The study of how much load the instrument gallery can manage was conducted in parallel.

The current loads on the instrument gallery, which were the base for its design.

A: estimated loads from the NNBAR shielding. B: loads from the shielding in relation to the instrument gallery design loads.

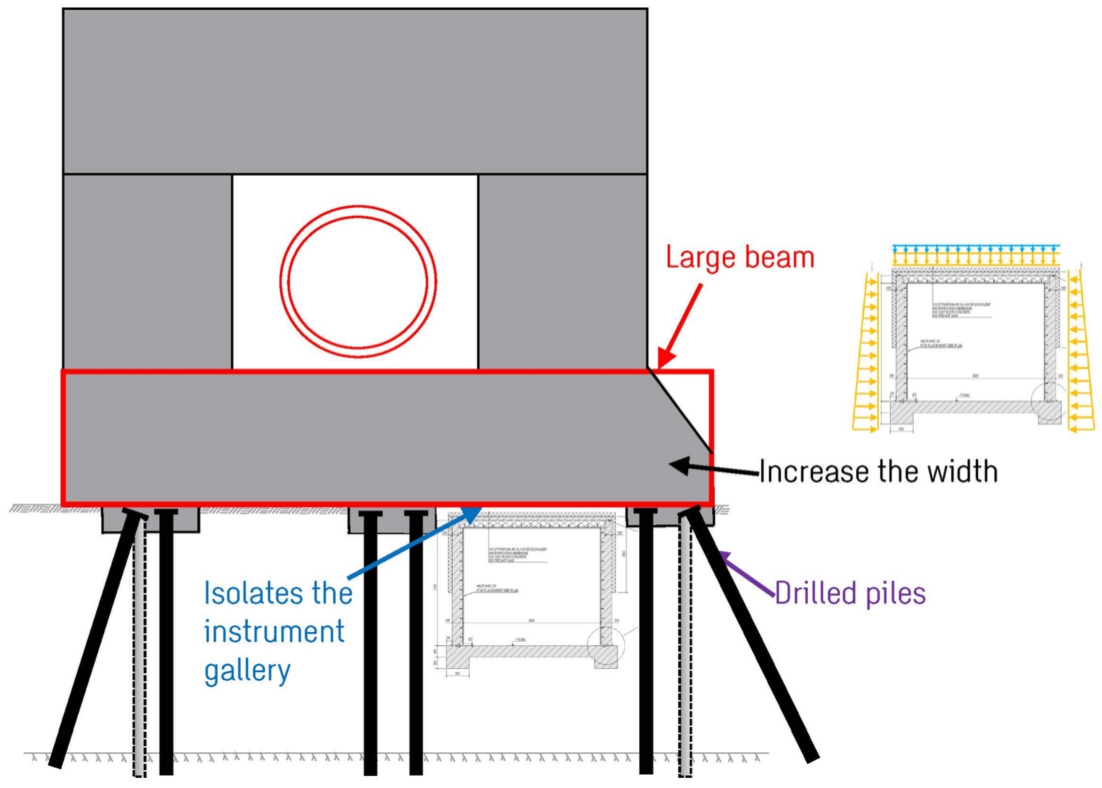

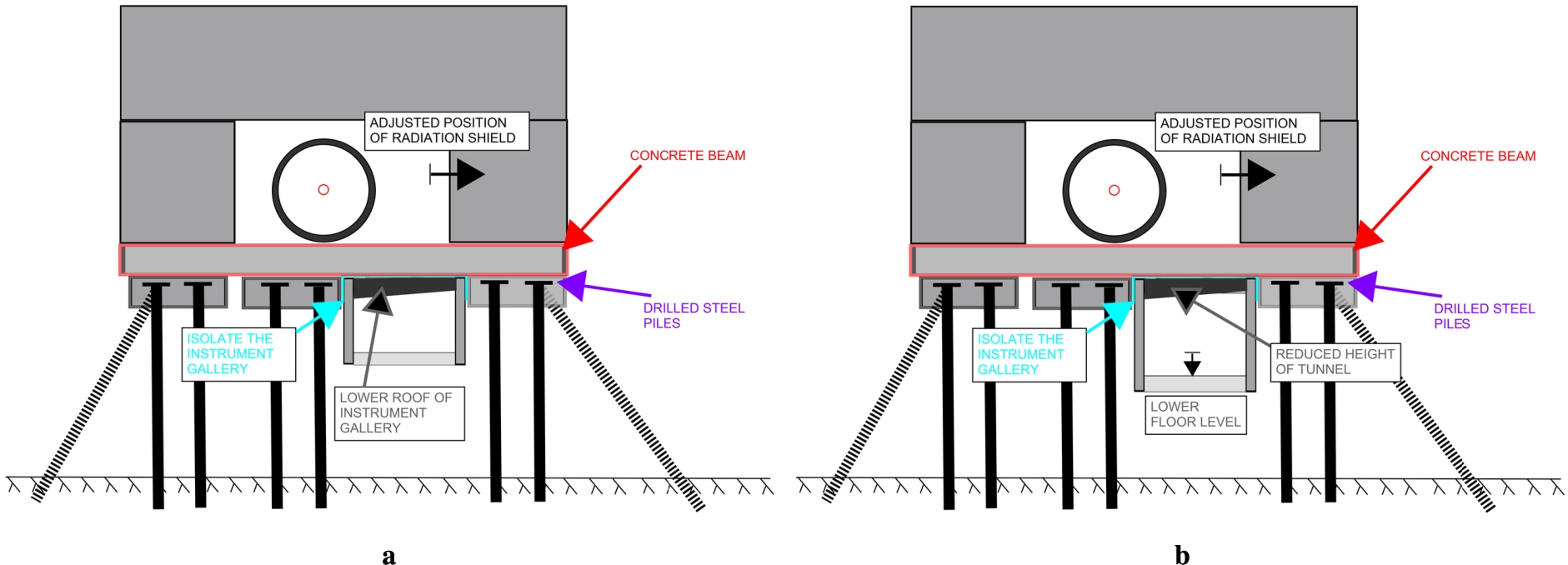

When the instrument gallery was initially designed, it was based on expected loads at that time, as shown in Fig. 24. Comparing this to the load produced by the NNBAR shielding in Fig. 25, it becomes evident that the additional load exceeds the gallery’s capacity. Consequently, the new construction around the beamline must be designed to support this additional load. The principle for solving the problem is illustrated in Fig. 26. A substantial beam, supported by drilled steel piles, can effectively transfer the loads around the instrument gallery to the bedrock, isolating the gallery from the new loads.

Sketch of the proposed solution for removing the load from the ceiling of the instrument gallery.

Two possible solutions for the interface between beamline and instrument gallery outside the D03 hall. A: only the ceiling of the gallery is lowered. B: both ceiling and floor of the gallery are lowered.

Two possible solutions for the interface between beamline and instrument gallery inside the D03 hall. A: only the ceiling of the gallery is lowered. B: both ceiling and floor of the gallery are lowered.

Additionally, the beam serves as shielding for the instrument gallery. Figure 27 shows a more concrete example for how to implement this in the case of position 4 from Fig. 23, situated outside the D03 hall. The same principle is shown in Fig. 28 for inside the experimental hall (position 3 in Fig. 23). In each figure, two possible solutions are presented, both featuring a concrete beam positioned between the gallery and the beamline, supported by drilled steel piles. Lowering the gallery ceiling is necessary to implement these solutions, and additional shielding material is placed inside the instrument gallery in both cases. In the simpler approach, apart from lowering the ceiling (Figs 27a and 28a), the instrument gallery floor remains unchanged. However, it might be necessary to retain the current size of the gallery and to also lower the gallery’s floor. Figures 27b and 28b therefore show a scenario in which the floor of the gallery is also lowered.

The NNBAR reflector system plays a pivotal role in achieving the goal of a performance increase of three orders of magnitude compared to the previous experiment. The primary requirement for the reflector is to efficiently collect the highest fraction of neutrons emanating from the LBP and direct them through the magnetically shielded region toward the annihilation target situated around 200 meters away. Given its size and stringent requirements, this optical system is likely to be one of the most intricate ever developed in the field of neutron research. The design and optimization of this system are detailed in the following section.

The optimization of the focusing reflector

In the optics simulations in this paper, calculations of the FOM are made using the exact uninterrupted flight time

To facilitate comparison with the previous ILL experiment [43], the FOM is normalized following the procedure described in Reference [15]. The ILL experiment ran for one year. As a result, the FOM is quantified in terms of ILL units per year. A value of

The sensitivity

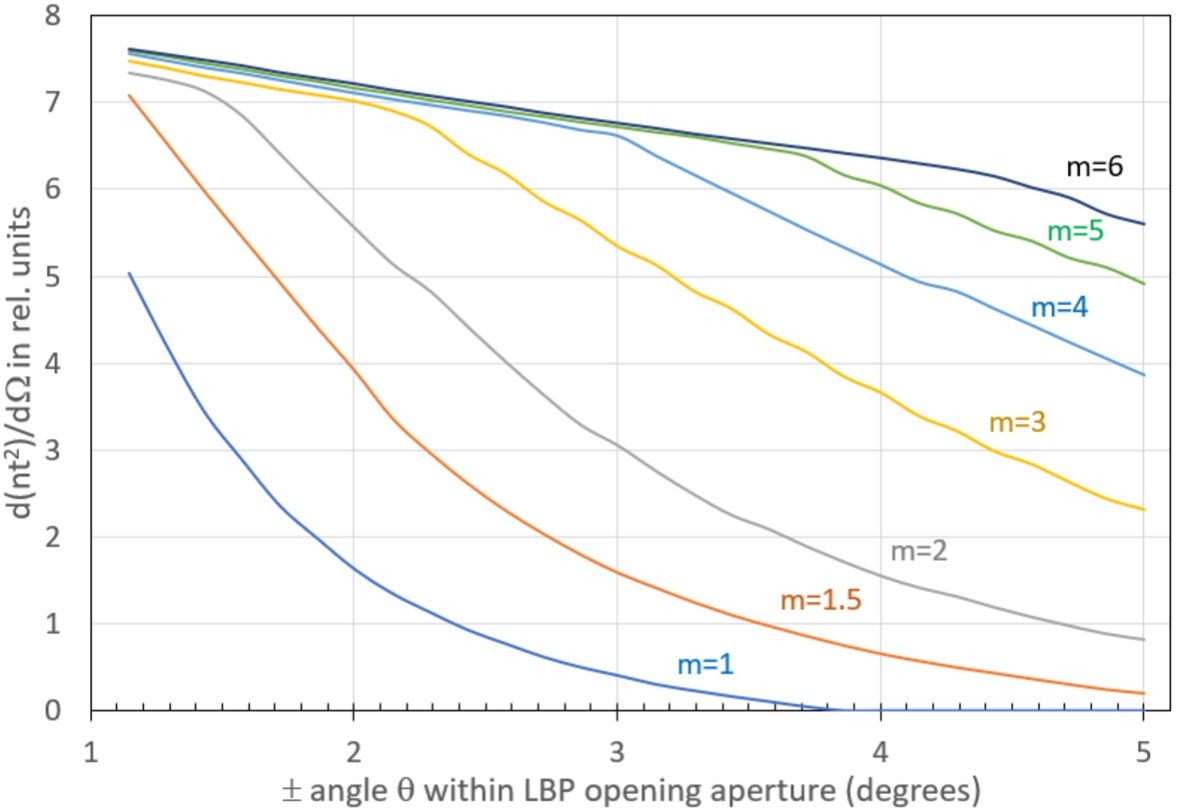

With the above discussion of sensitivity in mind, the concept of the elliptical focusing reflector [123] can be used. Lambertian brightness emission from a cold neutron moderator surface can be intercepted by a large open aperture and super-mirror reflector elements installed within this aperture. This directs neutrons to the annihilation target by a single reflection. An important performance parameter of super-mirror reflectors is the m-value [79]. Given an aperture opening of

Gains in FOM that can be achieved by increasing the m-value of a nested mirror reflector that covers an opening of

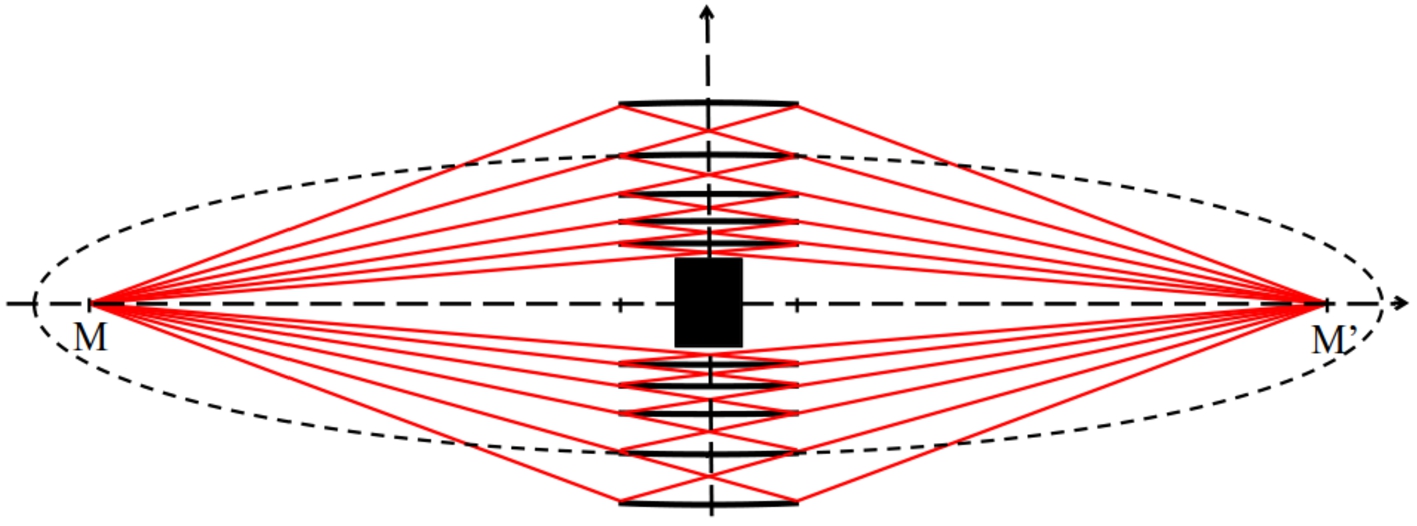

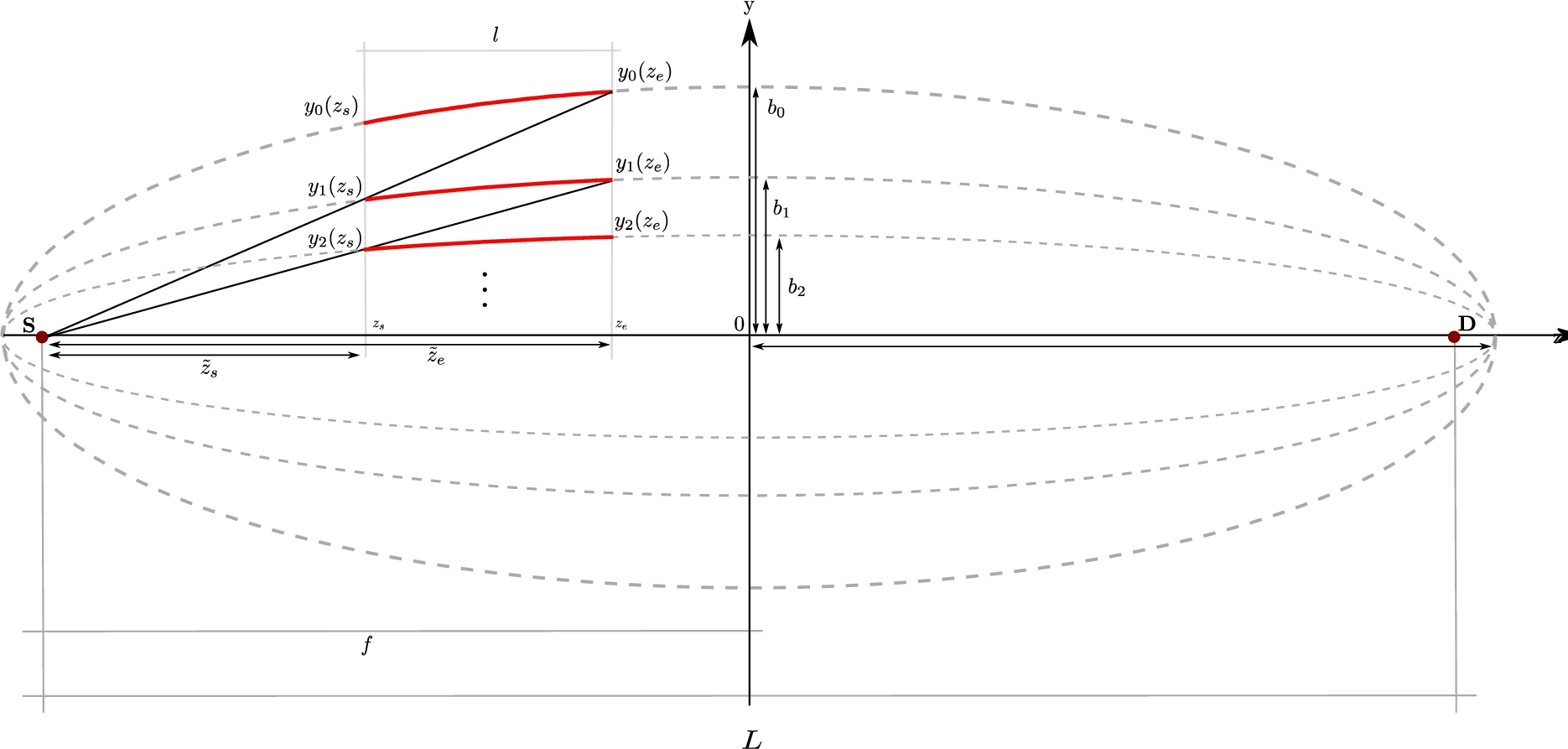

A possible architecture for transporting neutrons that diverge from a source to a detector is an elliptical guide. The surfaces of such a device have the shape of an ellipse whose focal points coincide with the centre of the source and the centre of the detector, respectively [201,202]. An ellipse has the optical property that a ray emanating from one of its focal points is reflected directly to the other one. Since this property does not hold for rays that start away from the focal points, the ellipse is a non-imaging device. Nested layers of several guides can be combined into a spatially compact optical component. If the outer layer of such a nested elliptical guide is given, the inner layers can be designed recursively such that the layers do not shadow one another. The construction principle is shown in Fig. 30. The finite thickness of the optical layers is currently not taken into account.
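The focal property of the ellipse can be checked numerically. The following minimal sketch traces a ray from one focus, reflects it specularly off the elliptical surface, and propagates it to the plane of the second focus. The function names are illustrative only, gravity is neglected, and the numerical values (200 m between the foci, semi-minor axis of 2 m) are taken from the baseline design described later in this section.

```python
import math

def ellipse_axes(L, b):
    """Semi-major axis a and half focal distance c for foci at z=0 and z=L."""
    c = L / 2.0
    a = math.hypot(c, b)  # a^2 = b^2 + c^2
    return a, c

def surface_height(z, L, b):
    """Height y of the elliptical surface at axial position z."""
    a, c = ellipse_axes(L, b)
    return b * math.sqrt(1.0 - ((z - c) / a) ** 2)

def height_at_second_focus_plane(z, L, b):
    """Trace a ray from the focus (0, 0), reflect it at axial position z,
    and return its height when it crosses the plane z = L."""
    a, c = ellipse_axes(L, b)
    y = surface_height(z, L, b)
    # Unit direction of the incoming ray
    norm = math.hypot(z, y)
    dz, dy = z / norm, y / norm
    # Surface normal from the gradient of (z-c)^2/a^2 + y^2/b^2
    nz, ny = (z - c) / a**2, y / b**2
    n = math.hypot(nz, ny)
    nz, ny = nz / n, ny / n
    # Specular reflection: r = d - 2 (d.n) n
    dot = dz * nz + dy * ny
    rz, ry = dz - 2.0 * dot * nz, dy - 2.0 * dot * ny
    # Propagate in a straight line to z = L
    return y + (L - z) / rz * ry

for z in (10.0, 30.0, 50.0):
    print(z, height_at_second_focus_plane(z, 200.0, 2.0))
```

For every reflection point the returned height is zero to within rounding error, i.e. the ray arrives at the second focus.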

Schematic of a nested elliptical guide. M and

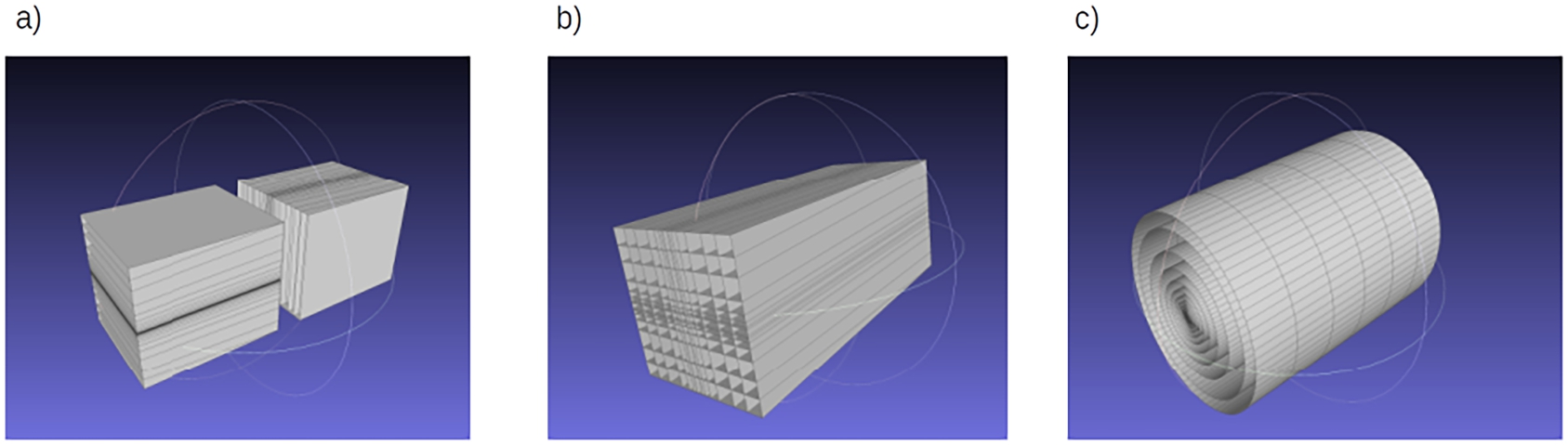

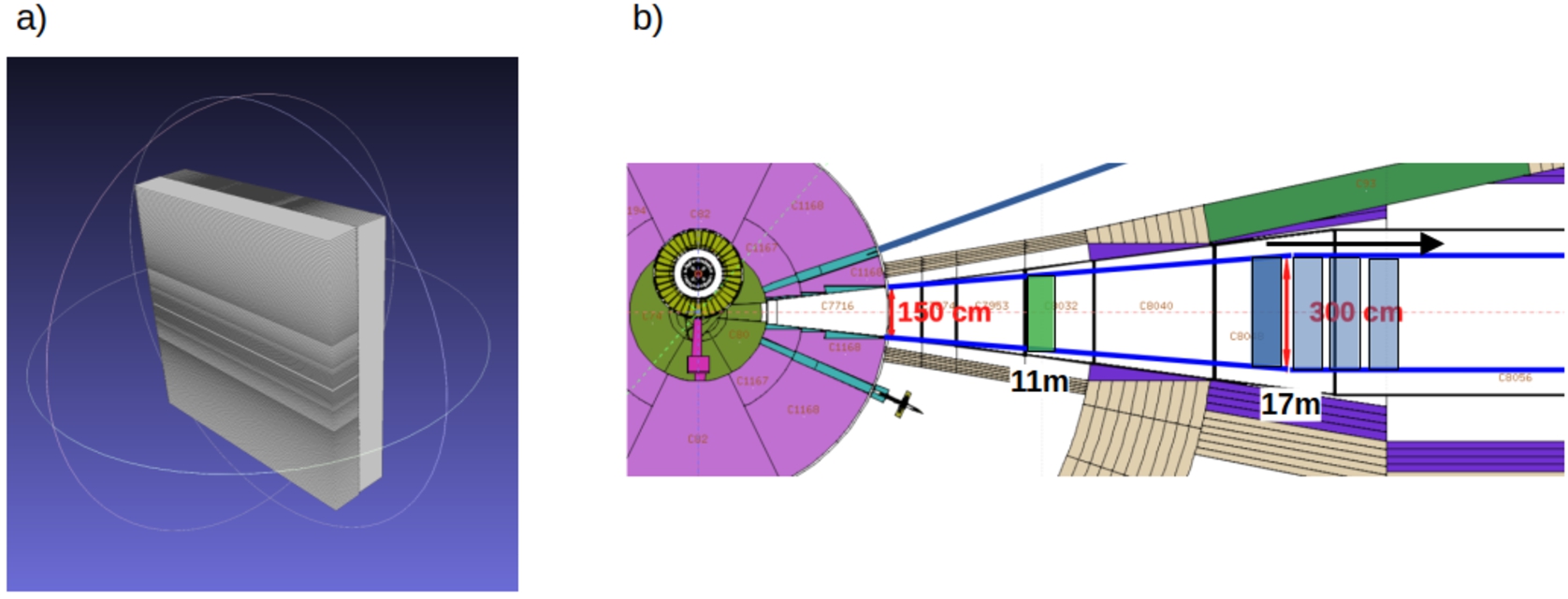

Types of nested optical components: a) mono planar b) double planar c) cylindrical.

Different nested layouts of the reflector that are symmetrical around the z-axis are possible. These are (a) a mono planar, (b) a double planar, and (c) a cylindrical system. In Fig. 31 three dimensional diagrams of the different types are shown. In a double mono planar reflector, neutrons would have to be reflected twice in order to be directed to the center of the detector. For the cylindrical symmetrical case, only one reflection is needed. The mono planar reflector comprises two separated devices that are rotated by ninety degrees with respect to each other such that one component acts as a horizontal and the other as a vertical reflector. From an engineering perspective this configuration seems to be particularly promising.

A difficulty of the nested reflector design is the thickness of the glass substrate which is used for the construction of the stable high-quality industrial super-mirrors. Recent developments of self-sustaining substrateless super-mirrors [174] offer an elegant possible solution to this problem.

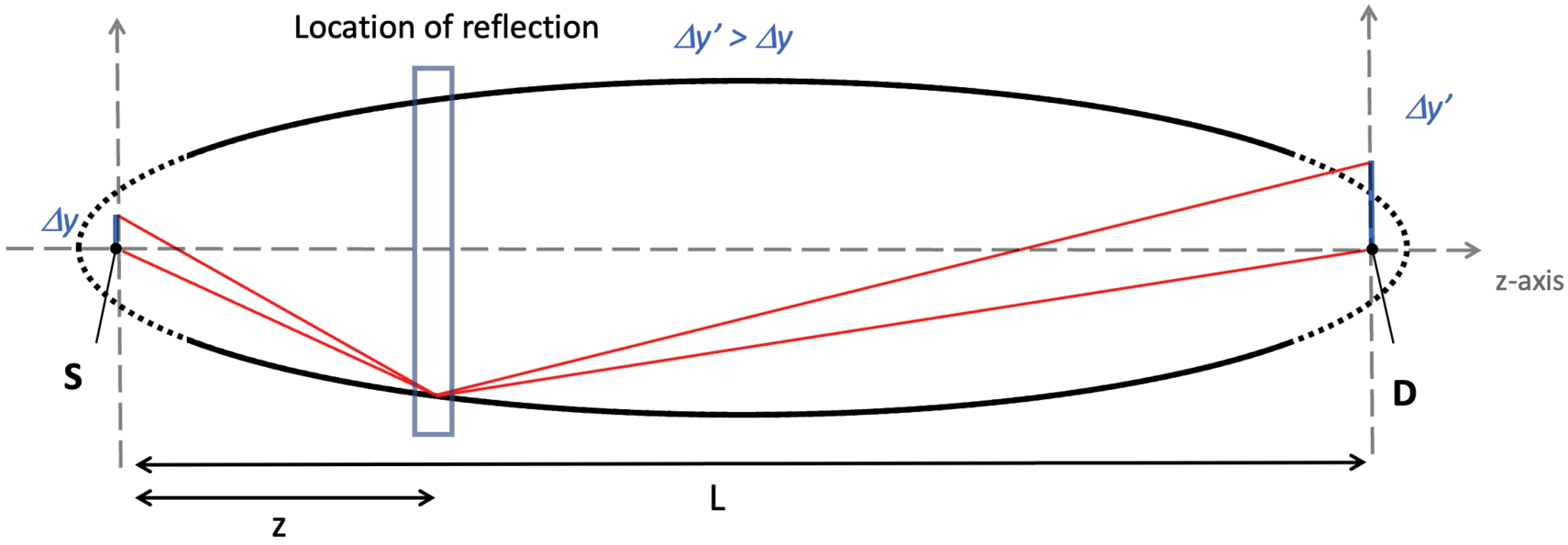

For any portion of a perfect (without accounting for gravity effects) rotational ellipsoid surface, a point-like emission source in one focal point is projected exactly to the image point in the other focal point. However, as is the case for any realistic optical system, if the source point is displaced from the ellipsoid focus laterally to the optical z-axis then the image point will similarly be laterally displaced with a magnification factor. Figure 32 summarises this situation. The magnification M for an off-axis point of height

Off-axis magnification of an elliptical reflector. The origin is at the source S in the left focal point, L is the distance between the focal points and to the detector D, and z is the coordinate of the reflection along the z-axis. The off-axis height at the source is given by

Since the size of the source plays an important role in the capability of the reflector to transport neutrons from it to the detector, the properties and parameters of the focusing reflector are simultaneously optimized with the design of the cold moderator in an iterative process.

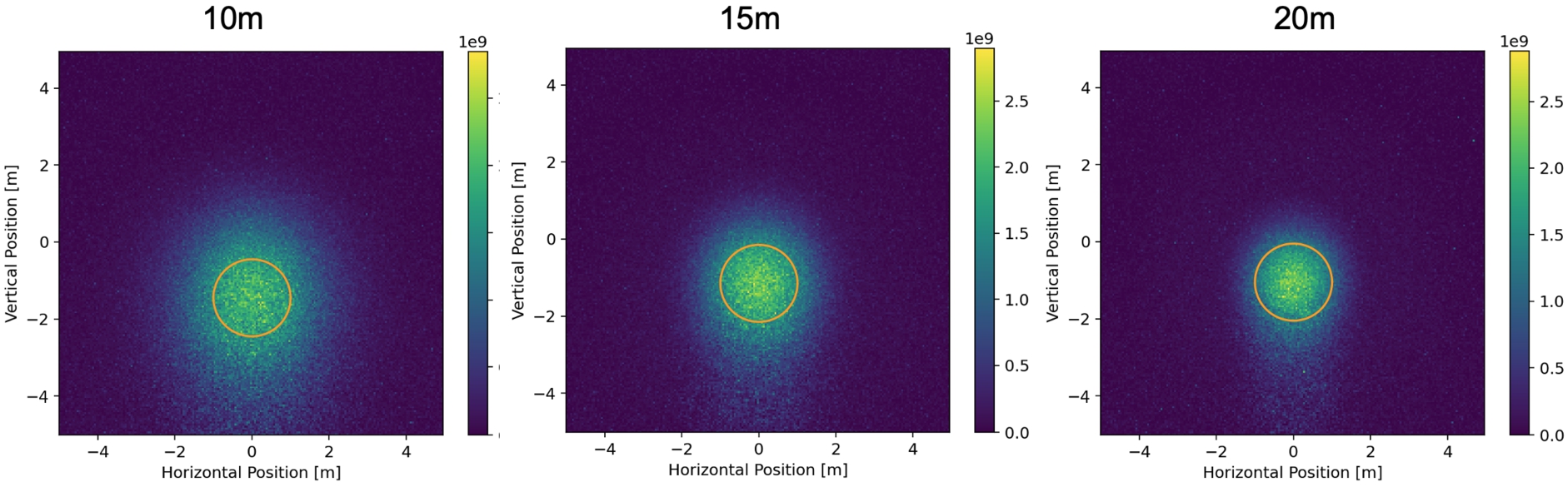

The impact of magnification is illustrated using a 4-level nested elliptical reflector with a length of 10 m, positioned at various starting locations. The representation shows the neutrons arriving at a distance of 200 meters, weighted by the square of their uninterrupted flight time. The orange circle designates the estimated location of the actual antineutron detector, with a radius of 1 meter. The degree of magnification diminishes as the reflector’s starting position moves farther from the source. However, the FOM is greatest for the 15-meter plot. This variation in FOM reflects a trade-off between achieving effective focusing and covering a broader solid angle.
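The dependence of the magnification on the reflection position can be estimated with a simple small-angle argument. The sketch below assumes that a source point displaced by h from the focus is imaged with a lateral displacement of roughly h (L − z)/z, where z is the axial position of the reflection and L the distance between the foci. This estimate is an assumption made for illustration and is not taken from the report's own expression; the function names are hypothetical.

```python
def magnification(z_reflect, L=200.0):
    """Small-angle estimate of the off-axis magnification for a reflection
    at axial position z_reflect (metres from the source)."""
    return (L - z_reflect) / z_reflect

def image_displacement(source_offset, z_reflect, L=200.0):
    """Lateral displacement at the detector plane for a source point that is
    offset by source_offset (metres) from the focus."""
    return source_offset * magnification(z_reflect, L)

# Half-width of a 40 cm wide moderator, reflected at different positions
for z in (10.0, 15.0, 18.0, 30.0):
    print(f"z = {z:5.1f} m  M = {magnification(z):5.2f}  "
          f"image offset = {image_displacement(0.20, z):4.2f} m")
```

Under this estimate, reflections close to the source spread the image well beyond a detector radius of 1 m, which is consistent with the trade-off between solid-angle coverage and focusing described above.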

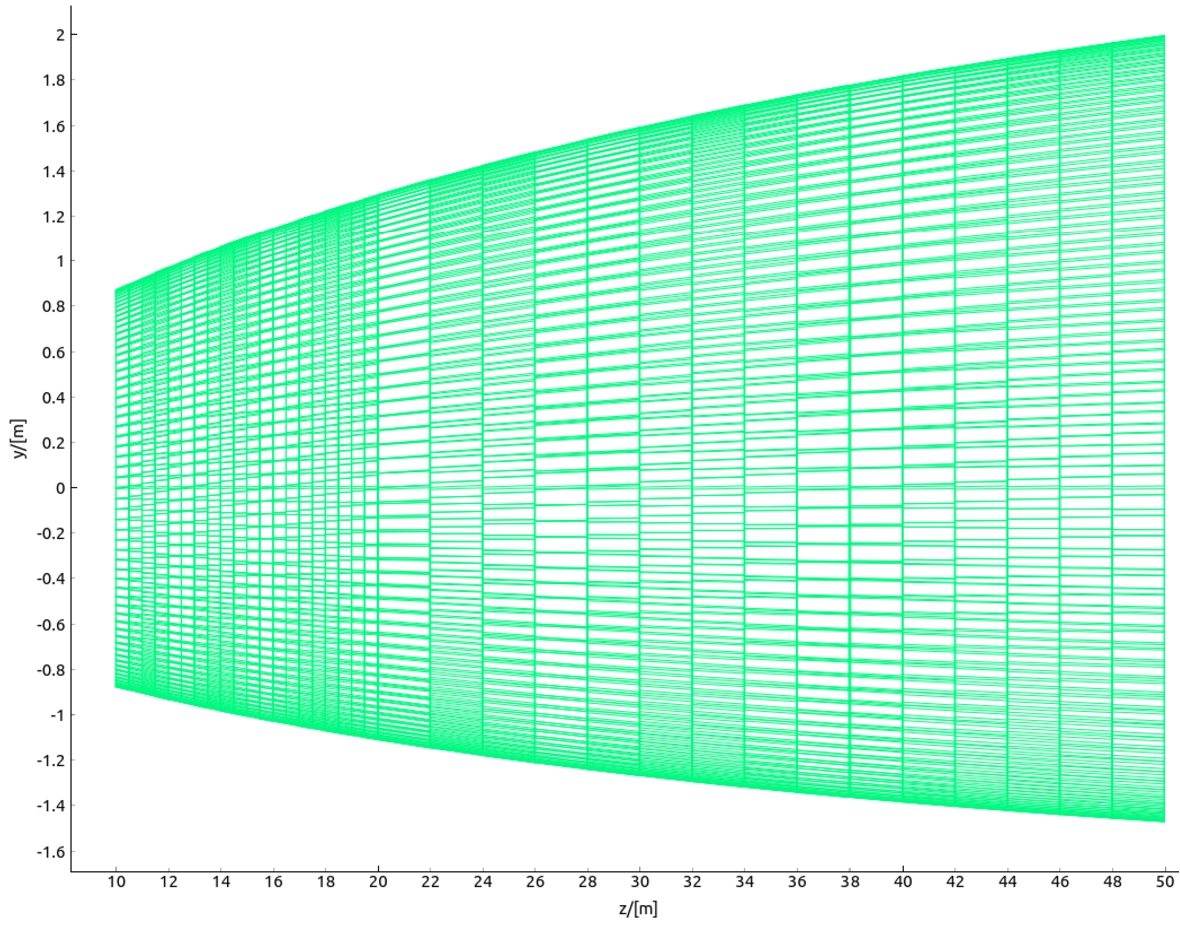

The principal setup for the reflector that is under study has been already shown in Fig. 30. The optic is supposed to start at a minimum distance of 10 m from the moderator center and the detector is placed 200 m away from the center of the moderator. The flight time is measured from the point in time of the last interaction (reflection) with the optic. The transversal dimension of the reflector is bounded by a maximum assumed tube width and height of 3 m. Gravity is turned on for all simulations.
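As an illustration of how a figure of merit of this kind can be accumulated from ray-tracing output, the sketch below sums the statistical weight of each neutron multiplied by the square of its flight time, counting only neutrons that land inside a detector of 1 m radius. The record layout `(x, y, t, weight)` and the function name are assumptions made for this example, and the result is not normalized to ILL units.

```python
import math

def fom_unnormalized(rays, detector_radius=1.0):
    """Sum weight * t^2 over rays hitting the detector disc.

    rays: iterable of (x, y, t, weight), with x and y the transverse position
    at the detector plane in metres, t the uninterrupted flight time in
    seconds (measured from the last reflection), and weight the
    Monte Carlo weight of the ray.
    """
    total = 0.0
    for x, y, t, weight in rays:
        if math.hypot(x, y) <= detector_radius:
            total += weight * t * t
    return total

# Three hypothetical rays; the last one misses the detector
rays = [
    (0.0, 0.0, 0.2, 1.0),
    (0.5, 0.5, 0.1, 2.0),
    (1.5, 0.0, 0.3, 5.0),
]
print(fom_unnormalized(rays))
```

Because the contribution of each neutron scales with the square of its flight time, slow neutrons that still reach the detector dominate the sum.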

Overview of the simulation strategy.

To facilitate comparisons between various geometries and the precise positioning of the reflector system, neutron ray-tracing simulations are conducted using McStas [196]. In McStas, the instrument components are defined using a high-level language, which is subsequently compiled into C code to execute the Monte Carlo simulations.

An extension called McStasScript 3

Source term calculation with MCPL. The particles are emitted from the moderator window, but already forward propagated to a distance of 2 m, just before the entry of the LBP.

The geometry of the optics is described in the plain-text Object File Format (OFF file). This file is automatically generated from a set of input parameters using Python functions. The OFF file is subsequently employed as input for the McStas component called

By conducting simulations with different designs, utilizing various geometries, and adjusting various reflector parameters (e.g., starting position, length, etc.), a substantial number of optical configurations can be explored to identify optimal parameters. Figure 34 illustrates the general sequence of a typical simulation cycle.

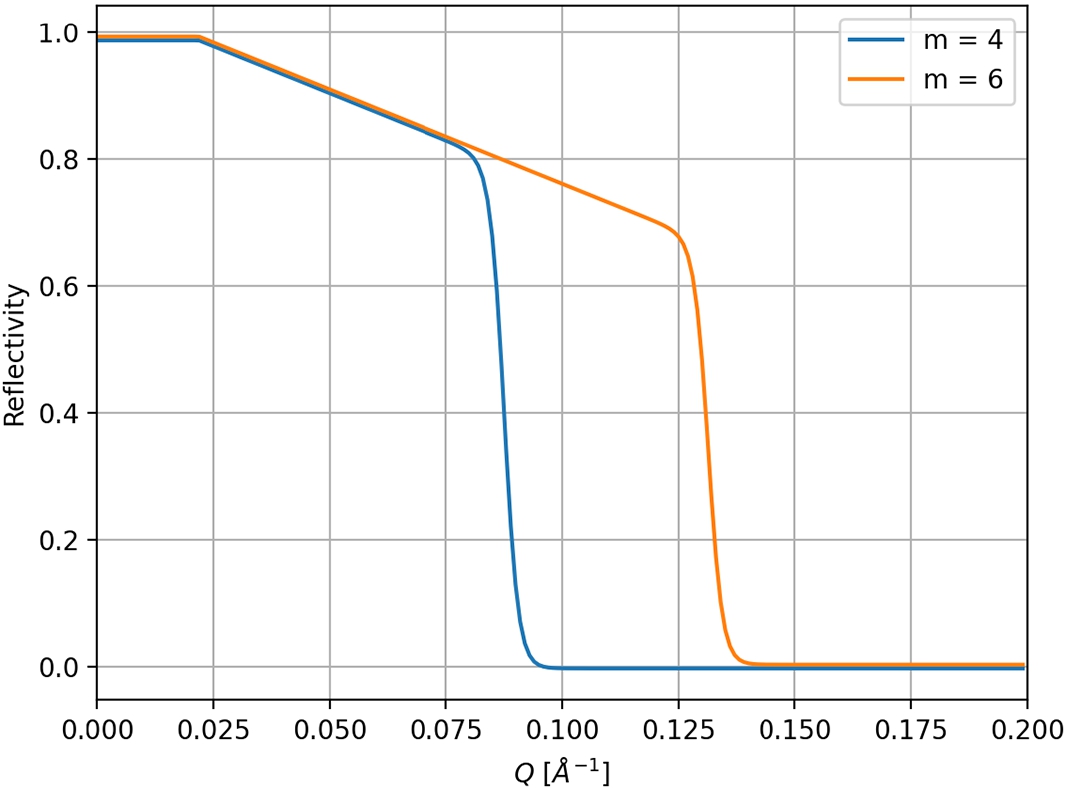

The reflectivity R of the supermirror is calculated in McStas by applying an empirical formula (Equation (10)) derived from experimental data [195].

Here

Two typical reflectivity curves for a supermirror derived from equation (10).
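An empirical supermirror reflectivity model of this kind can be sketched in a few lines of Python. The functional form and the default values of R0, Qc, alpha and W used below are those commonly found in McStas guide components; they are assumed here for illustration, and the parameters used for the NNBAR simulations may differ.

```python
import math

def supermirror_reflectivity(q, m, r0=0.99, qc=0.0219, alpha=6.07, w=0.003):
    """Empirical supermirror reflectivity as a function of momentum transfer.

    q     : momentum transfer in 1/Angstrom
    m     : m-value of the supermirror coating
    r0    : low-angle reflectivity (assumed default)
    qc    : critical momentum transfer of nickel in 1/Angstrom (assumed default)
    alpha : slope of the reflectivity in Angstrom (assumed default)
    w     : width of the supermirror cut-off in 1/Angstrom (assumed default)
    """
    if q <= qc:
        return r0
    r = 0.5 * r0 * (1.0 - math.tanh((q - m * qc) / w)) * (1.0 - alpha * (q - qc))
    return max(r, 0.0)

for m in (3, 6):
    curve = [supermirror_reflectivity(0.01 * i, m) for i in range(0, 16)]
    print(m, [round(r, 3) for r in curve])
```

The reflectivity stays at R0 up to the critical momentum transfer, decreases linearly up to the cut-off near m·Qc, and then drops quickly to zero.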

If the outer layer of a nested elliptical guide is given, the inner layers can be constructed in a recursive manner. A sketch of the construction is shown in Fig. 37 (the y and z axes have been chosen to comply with the coordinate system used in McStas). The source S and the detector D are located at the ellipses’ foci. Here,

Schematic diagram showing how the inner nested layers are constructed from the outer ones.

In these expressions,

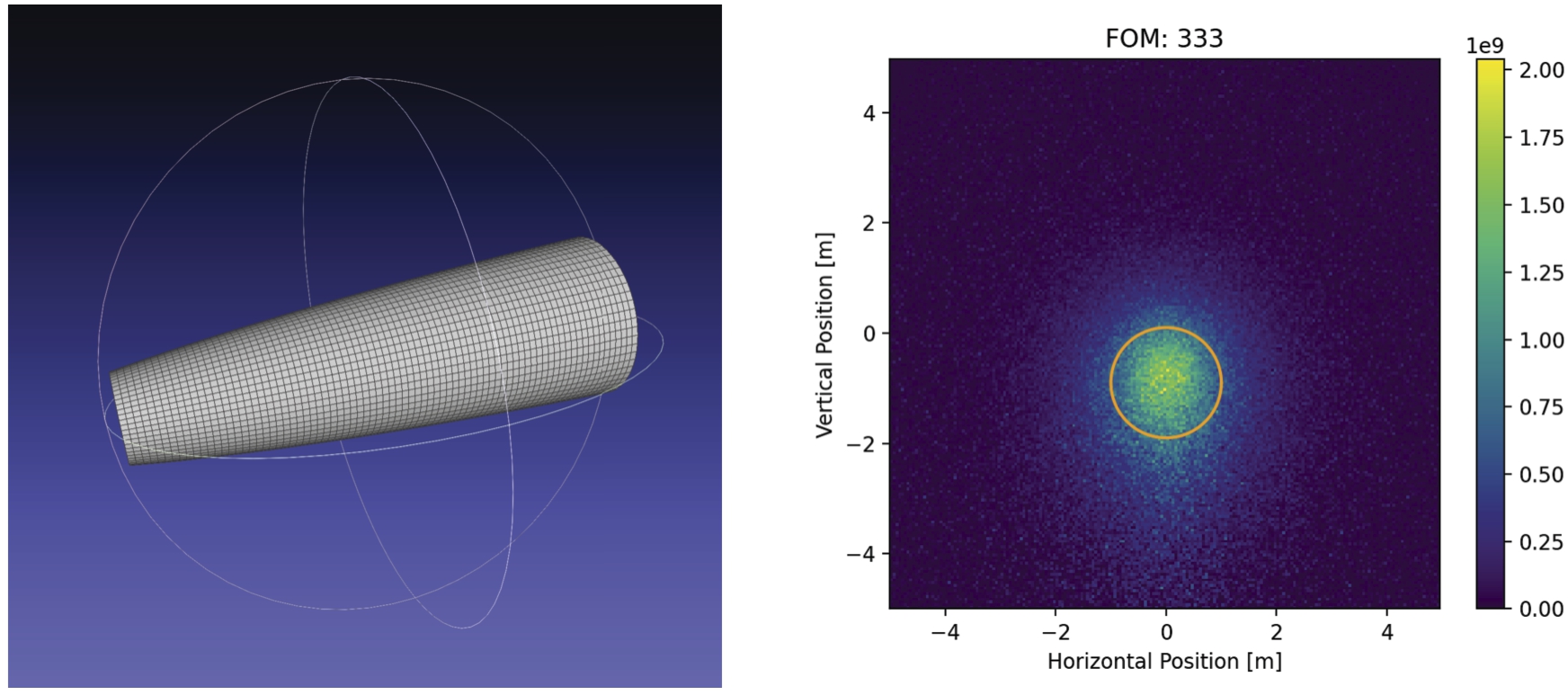
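One way to realize such a recursive construction is sketched below. It relies on a simple line-of-sight rule that is assumed here for illustration and is not necessarily the rule used in the report: the entrance edge of each inner layer is placed on the straight line from the source to the exit edge of the next outer layer, so that the inner layer collects the rays that just miss the outer one. All layers share the same foci. The function names are hypothetical and the mirror thickness is neglected.

```python
import math

def surface_height(z, b, L):
    """Height of an ellipse with foci at z=0 and z=L and semi-minor axis b."""
    c = 0.5 * L
    a = math.hypot(c, b)
    return b * math.sqrt(1.0 - ((z - c) / a) ** 2)

def semi_minor_through_point(z, y, L):
    """Semi-minor axis of the ellipse with foci (0,0), (L,0) through (z, y)."""
    a = 0.5 * (math.hypot(z, y) + math.hypot(L - z, y))  # sum of focal distances = 2a
    c = 0.5 * L
    return math.sqrt(a * a - c * c)

def nested_layers(b_outer, z_start, z_end, L, n_layers):
    """Return the semi-minor axes of n_layers nested ellipses, outermost first."""
    layers = [b_outer]
    for _ in range(n_layers - 1):
        y_exit = surface_height(z_end, layers[-1], L)
        # Entrance edge of the next layer lies on the line from the source
        # (at the origin) to the exit edge of the current layer.
        y_entry = y_exit * z_start / z_end
        layers.append(semi_minor_through_point(z_start, y_entry, L))
    return layers

# Four levels, 10 m long, starting 10 m from the source, 200 m between foci
for b in nested_layers(2.0, 10.0, 20.0, 200.0, 4):
    print(f"b = {b:.4f} m, entrance height = {surface_height(10.0, b, 200.0):.4f} m")
```

Each additional layer sits closer to the axis, and the solid angle it adds becomes smaller, which gives an intuitive picture of why the gain per added level eventually saturates.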

As a starting point for the simulations, a “baseline” design was defined for comparison purposes. This baseline design consists of a cylindrically shaped elliptical reflector with a single layer. The key parameters of this design are a distance of 200 m between the two foci and a semi-minor axis b of 2 m. The center of the source (moderator) is located in one focus, while the center of the detector is located in the other focal point. The reflector covers the part of the ellipse that starts at 10 m from the source and ends at a distance of 50 m, and is therefore 40 m long. For this standard baseline configuration, various aspects of the NNBAR experiment have been studied, including different sizes of the cold source moderator (see Section 2 of Reference [161]), the neutron emission spectra, and the beamline design. This baseline design has also been used in previous publications for the optimization of parameters and for the comparison of several NNBAR configurations [15,100,148].

A McStas simulation performed with this reflector with the horizontal axis at the center of LBP gave a

(Left) 3D visualization of the 40 m long baseline reflector (axes are not to scale). (Right) result of a McStas simulation with the baseline reflector for an accelerator power of 2 MW. The orange circle marks the detector area of 1 m radius. The FOM is 333.

Given that the center of the lower moderator is approximately 20 cm below the axis of the LBP (as illustrated in Fig. 3 of HighNESS Conceptual Design Report Volume I), a symmetrically placed reflector relative to the moderator may not effectively utilize the entire aperture provided by the LBP.

To address this issue, the concept of a “differential reflector” was proposed [100]. The reflector is positioned exactly in the middle of the LBP but has a distorted ellipsoid shape (see Fig. 39). The constituent panels satisfy a coupled differential equation, such that each panel behaves locally like an elliptical mirror and the panels together form a continuous surface. This reflector simultaneously focuses the neutron beam and bends it by a few degrees in the vertical direction. This allows the FOM to be preserved with the horizontal beam axis between the centers of the cold source and the annihilation detector.

Depiction of the differential reflector.

McStas simulations performed show the comparability of this layout to the baseline design. With the “differential reflector” a

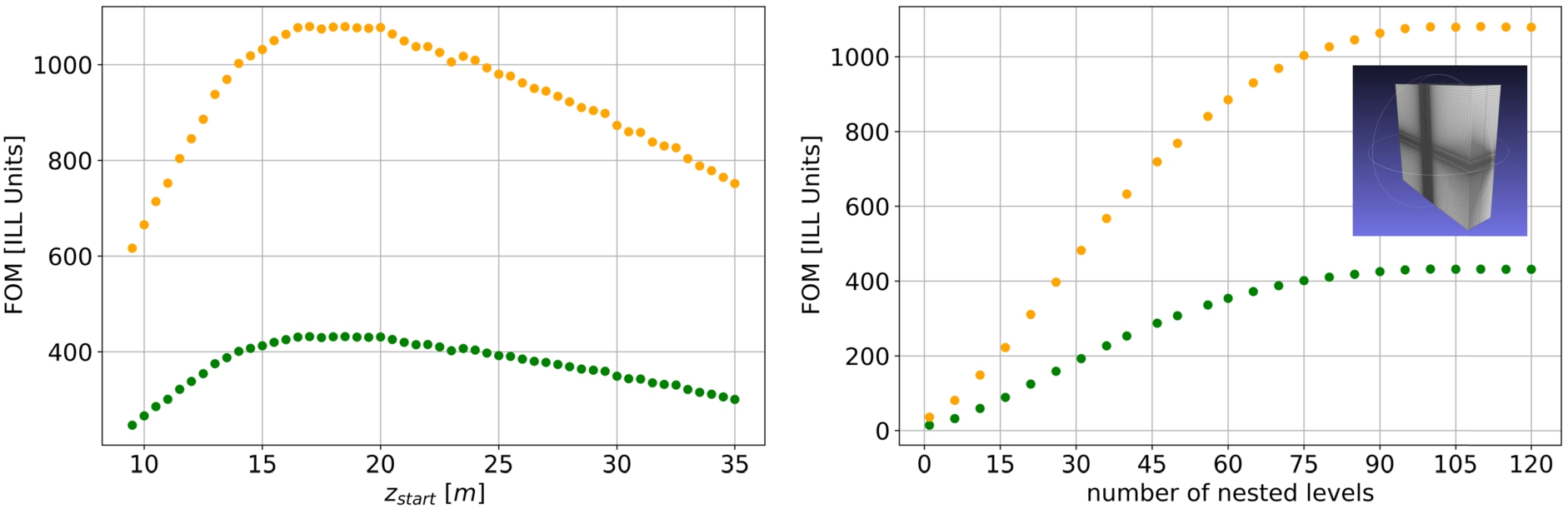

Result of simulations for a nested double planar reflector of length 10 m (see inlay) for 2 MW (green) and 5 MW (orange) accelerator powers of the ESS. (Left) variation of the starting point of the optic. (Right) effect of increasing the number of nested levels.

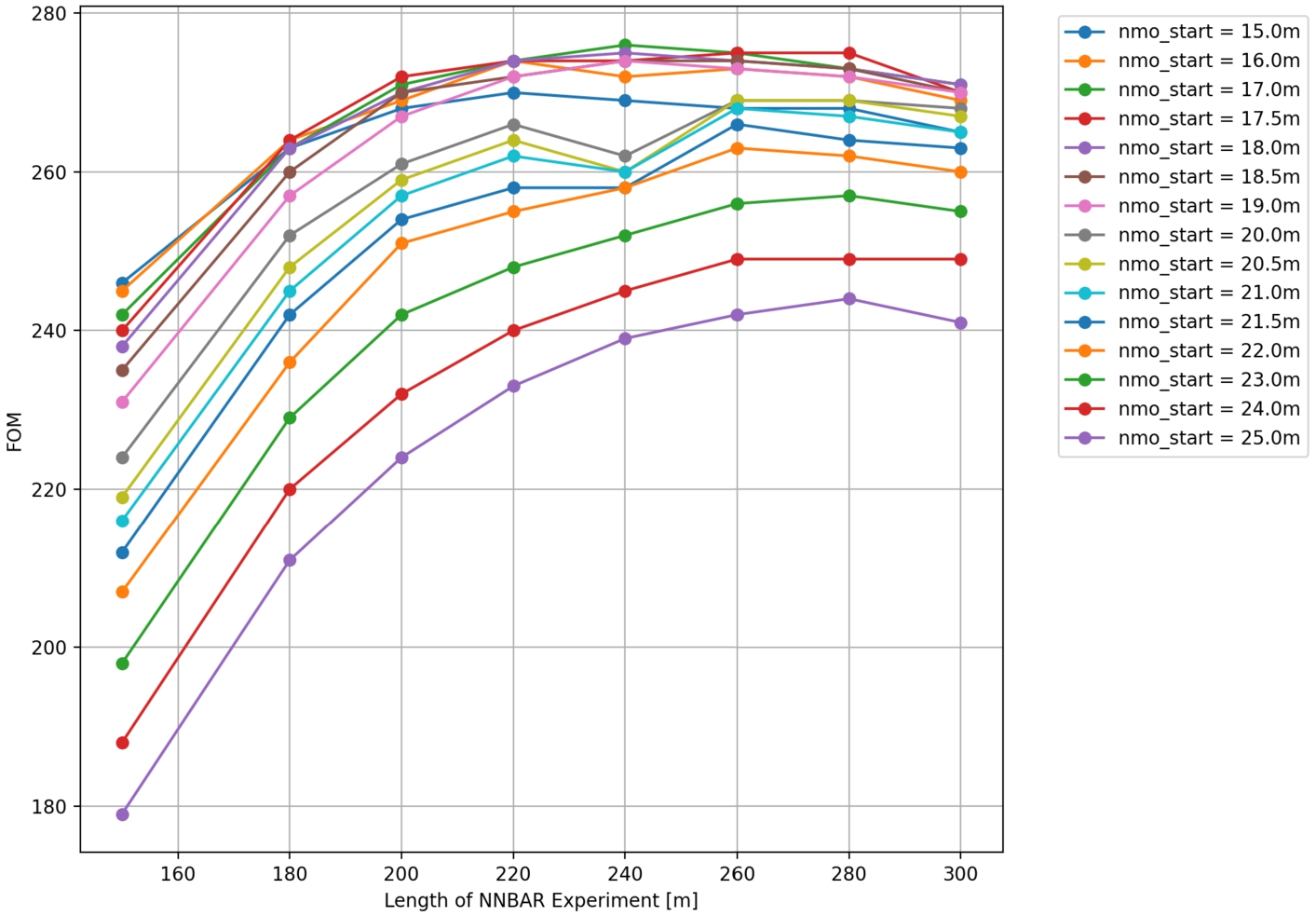

The optimal parameters for various nested mirror optics geometries are determined through extensive simulations, and the resulting figures of merit (FOMs) are compared. Two such parameter scans are depicted in Fig. 40 for a double planar nested reflector with a length of 1 m. The scan for the

Regarding the number of nested levels, there appears to be a point of saturation where adding more levels does not lead to a significant further increase in the FOM. This suggests that a balance must be struck between the complexity and cost of the optics system and the achievable sensitivity.

For the simulations of the mono-planar components, the arrangement with mirrors in a horizontal layout was consistently placed in front of the one with mirrors in a vertical layout. This choice was made with magnification in mind. Since the moderator has dimensions of approximately 40 cm in width and 24 cm in height, it is advantageous to have the vertical component with less magnification positioned farther away from the moderator. As the components become shorter, this effect becomes less pronounced.

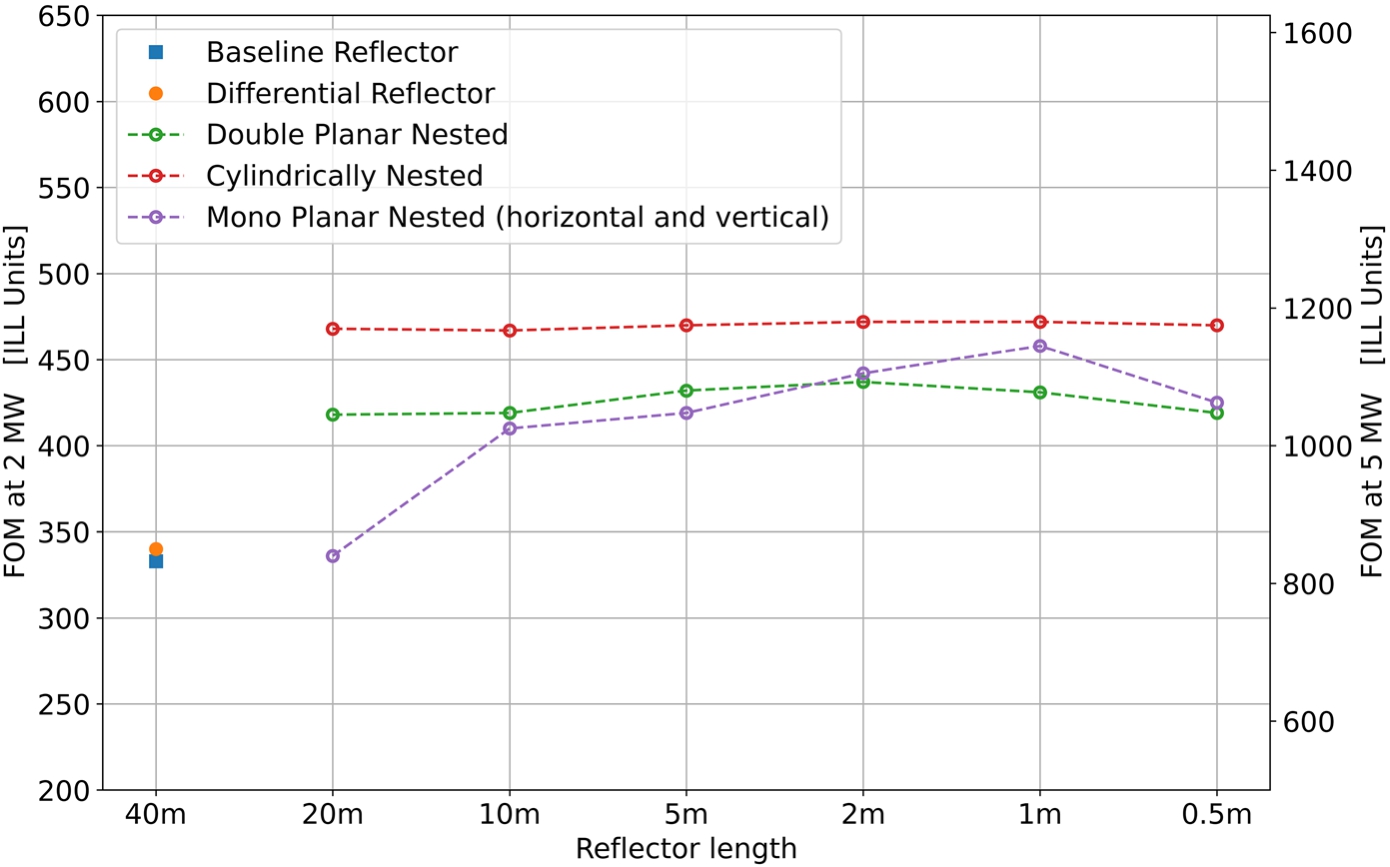

In Fig. 41, the results of the simulations for the various reflector geometries are summarized. The nested components consistently achieve significantly higher FOMs compared to the baseline or the differential reflector. Notably, the cylindrical components slightly outperform the planar ones. This advantage arises because the former (latter) require only one (more than one) reflection to reach the target. In general, the nested components offer gains of at least 20% over the baseline reflector in terms of FOM.

Collected FOMs for different reflector geometries for accelerator powers of 2 MW and 5 MW.

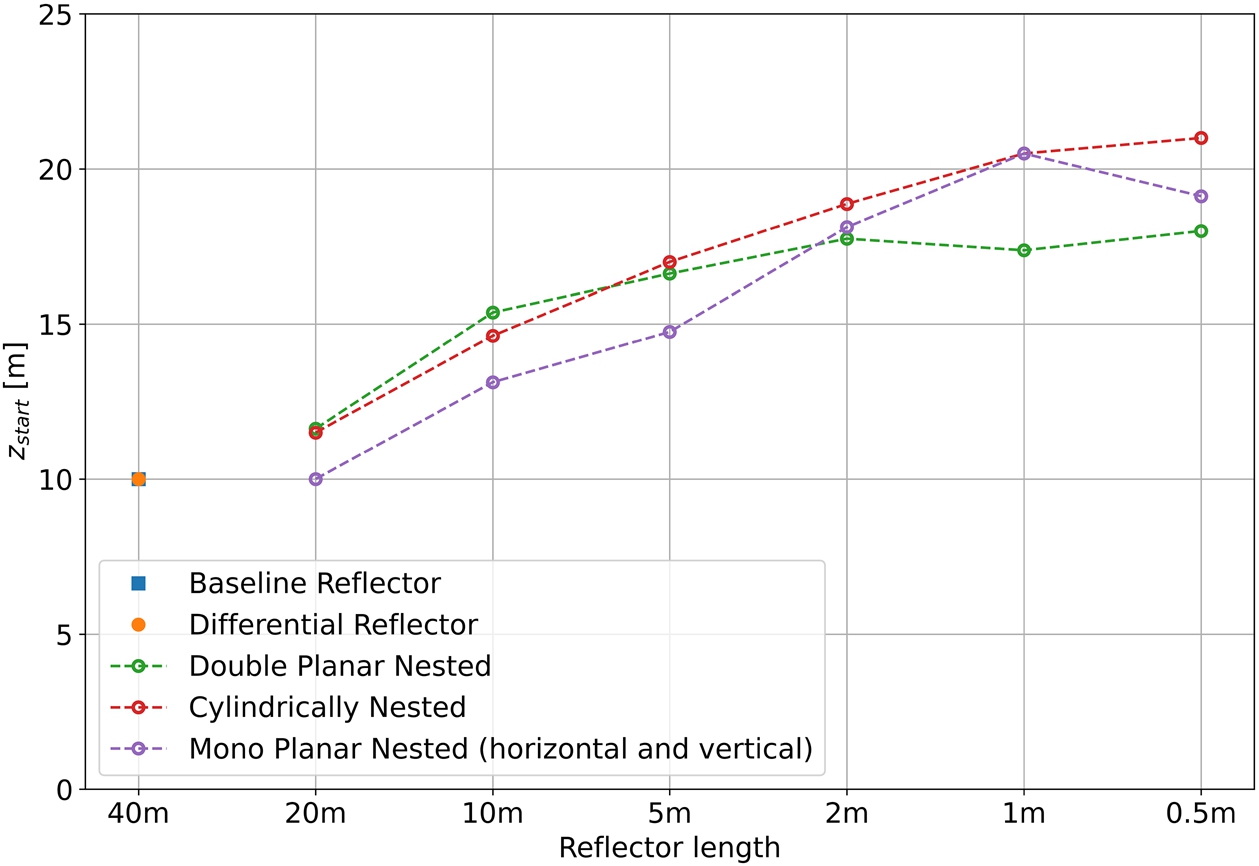

The results regarding the optimal starting locations, denoted as

Starting position

The studies conducted in the previous sections did not consider a detailed beamline layout, apart from the limitations imposed by the LBP. To assess the potential impact of further constraints related to the presence of neighboring instruments (e.g., the LOKI instrument located in close proximity to the NNBAR beamline) and the size of the vacuum tube, additional simulations were performed with the following modifications:

A first rectangular aperture after the LBP at 7.9 m, of size 1.8 m × 1.63 m, vertically centered at 0.84 m from the top.

A second rectangular aperture after the LBP at 10 m, of size 2.2 m × 2 m, vertically centered at 1 m from the top.

A circular aperture of radius 1.5 m at 17 m (or directly after the reflector for larger starting positions), centered symmetrically.

With this set of apertures, the available paths for the neutrons are limited to areas that align with the model used for the beamline shielding calculations (as described in Section 8).

As a reflector for this study, the mono-planar type with a length of 0.5 m has been chosen (shown in Fig. 43a). The reason for this choice is that the construction of this reflector poses fewer engineering challenges compared to the other geometries.

Two possible scenarios for the locations of the reflector that are shown in Fig. 43 have been studied:

Inside the bunker at 11 m.

Outside the bunker starting from 15 m.

Left side: depiction of the reflector used for the simulations. Two mono-planar (MP) nested mirror assemblies, each of 0.5 m length. Right side: sketch of the placement of the reflectors in the physical model of the experiment; inside bunker position at 11 m (green); outside bunker (starting at 15 m) (blue).

For both scenarios, the length of the experiment was also varied to study the impact of longer NNBAR baselines on the FOM. The results in the following sections are presented for a 2 MW accelerator power, but they can be easily scaled to a 5 MW power scenario.

The results of the simulations for longer baselines with the reflector at a distance of 11 m are shown in Fig. 44. For a detector 200 m away from the moderator, a FOM of 221 is achieved. Increasing the experiment’s baseline length further only leads to marginal gains. This is primarily due to the high magnification factor at this position, which becomes even more significant as the baseline is increased. As a result, the image at the detector becomes smeared out, as depicted for several lengths in Fig. 44.

Results of simulations for a reflector placed at 11 m. The plot on the right shows the shift of the center of intensity on the detector due to gravity for longer baselines. At the bottom, images for selected lengths of the experiment are shown. The blurring is due to the increasing magnification.

Results of simulations for a reflector placed beyond 15 m as a function of the length of the experiment.

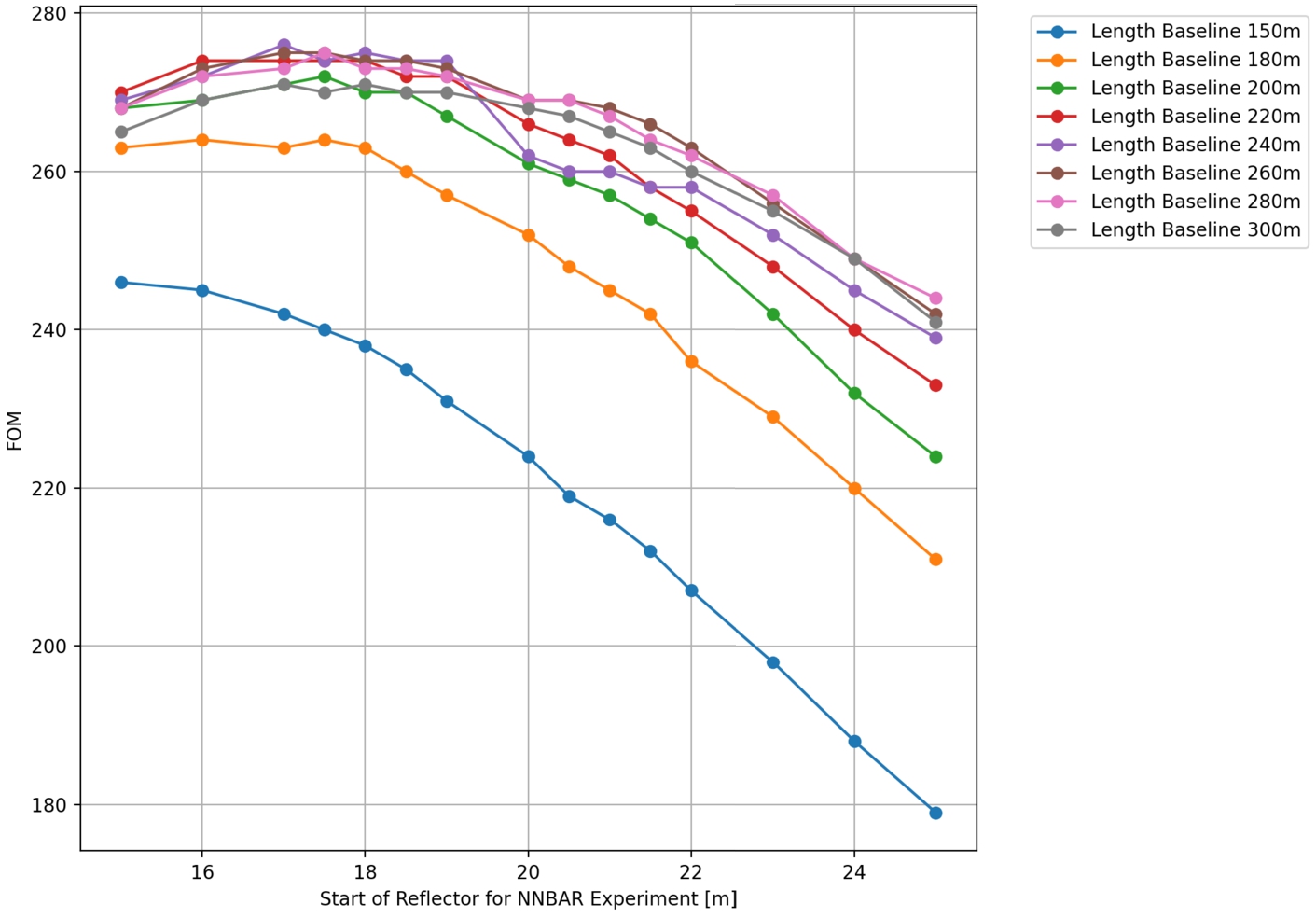

In this scenario, both the baseline of the NNBAR experiment and the starting position of the reflector have been varied. The results are visualized in Fig. 45 and Fig. 46.

Figure 45 shows that the FOM remains relatively constant at lengths of about 200 m. The optimum starting location for the optics outside the bunker scenario is found to be at about 18 m, with a FOM ranging between 270 and 275.

These results suggest that, within the considered parameter space, a baseline beamline length of approximately 200 m and a starting location of around 18 m outside the bunker could provide an optimal configuration for the NNBAR experiment.

Results of simulations for a reflector placed beyond 15 m as a function of the starting position. Alternative visualization of the same data as in Fig. 45.

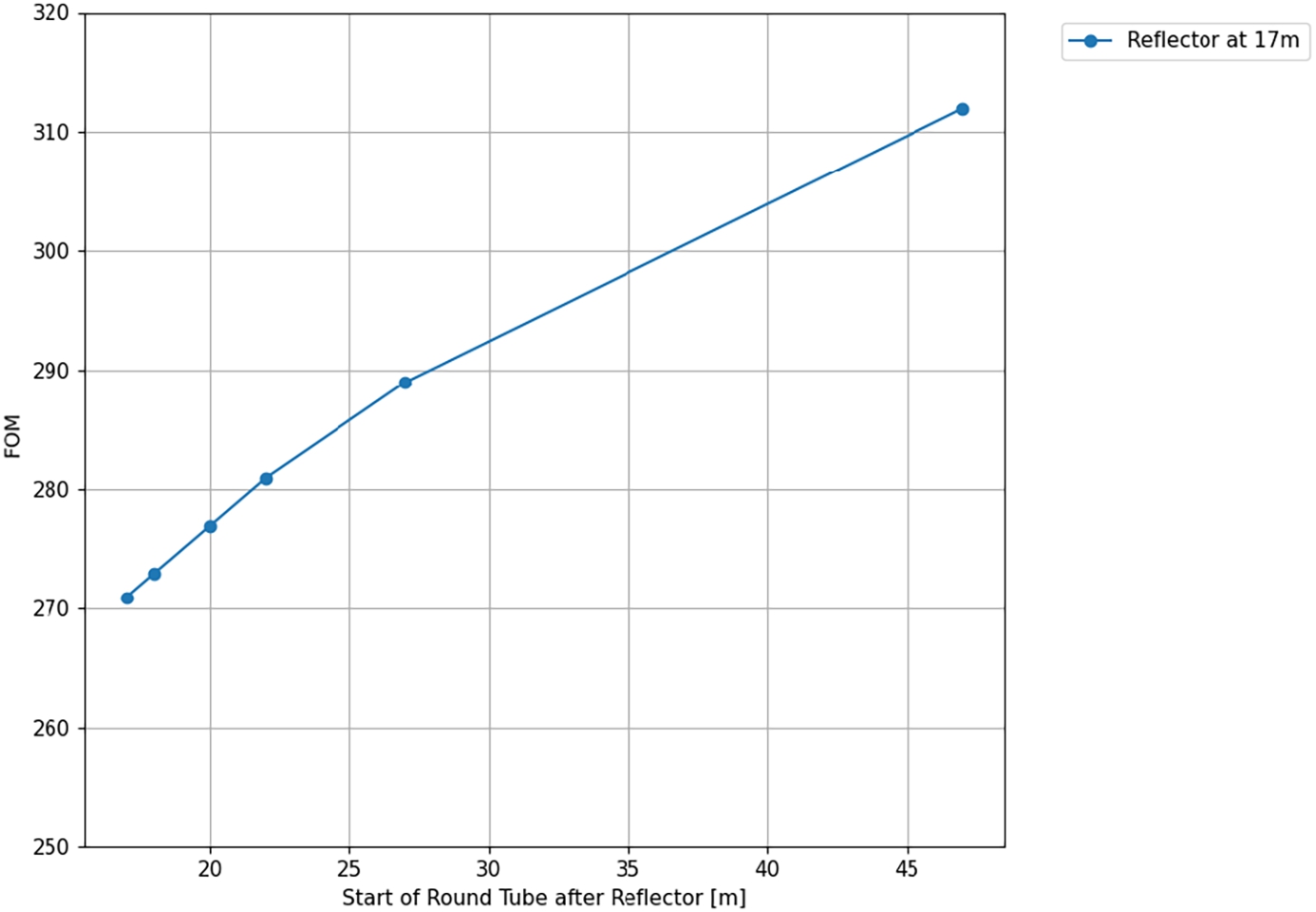

The results indicate that using a square-shaped tube section after the optic, which transitions to a circular cross-section after a certain distance, can improve the performance of the NNBAR experiment. Figure 47 shows the results for a reflector placed at 17 m with different lengths of the square-shaped section.

For instance, a length of 20 m for the square-shaped section would result in a FOM of 300. This suggests that modifying the beamline geometry in this manner can lead to a substantial improvement in experimental sensitivity. This configuration is used for the estimates of the full sensitivity of the NNBAR experiment that are given in Section 17.

Results of simulations when adding a square-shaped tube section after a reflector placed at 17 m. The FOM increases with the length of the section.

Magnetic fields must be sufficiently small so as not to inhibit the transition between a neutron and an antineutron owing to a lack of degeneracy between the particles caused by their opposite magnetic moments. It is necessary to achieve the quasi-free condition such that the probability of a transition becomes proportional to the square of the propagation time. Maintaining a magnetic field of less than approximately 5–10 nT satisfies this condition [84,148].

Neutrons travelling 200 m inside a shielded environment will nevertheless be subject to a time-varying field in the rest frame of the particle. This results in gradients and spatial and temporal distortions in the field. It was shown in Reference [84] that such effects do not significantly suppress the neutron–antineutron transition. The most important quantity is the magnitude of the average field. The goal for NNBAR is therefore to permit neutron propagation with an average field experienced along each neutron trajectory of less than around 5–10 nT. An additional constraint is that, in order to avoid interactions, the neutrons move in a vacuum. A shielding system, described below, has been designed [39], which gives a typical field of around 5 nT.
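The size of the effect can be estimated with the standard two-level description of the neutron–antineutron system in a uniform field. Because the two states have opposite magnetic moments, the conversion probability relative to the field-free case is approximately (sin x / x)², with x = μ_n B t / ħ. The sketch below evaluates this factor. The flight time of 0.2 s corresponds to 200 m at an assumed velocity of 1000 m/s, which is an illustrative value and not a number taken from this report; the function name is hypothetical.

```python
import math

MU_N_EV_PER_T = 6.0307740e-8   # magnitude of the neutron magnetic moment, eV/T
HBAR_EV_S = 6.582119569e-16    # reduced Planck constant, eV s

def quasi_free_factor(b_tesla, t_seconds):
    """Ratio of the conversion probability in a uniform field B to the
    field-free value, (sin x / x)^2 with x = mu_n * B * t / hbar."""
    x = MU_N_EV_PER_T * b_tesla * t_seconds / HBAR_EV_S
    if x == 0.0:
        return 1.0
    return (math.sin(x) / x) ** 2

t_flight = 0.2  # seconds; assumed: 200 m at 1000 m/s
for b_nt in (5.0, 10.0, 100.0, 1000.0):
    print(f"B = {b_nt:7.1f} nT  factor = {quasi_free_factor(b_nt * 1e-9, t_flight):.4f}")
```

Under these assumptions the suppression at 5–10 nT is at the percent level, whereas fields of order 1 μT would suppress the transition almost completely.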

Magnetic shielding concept

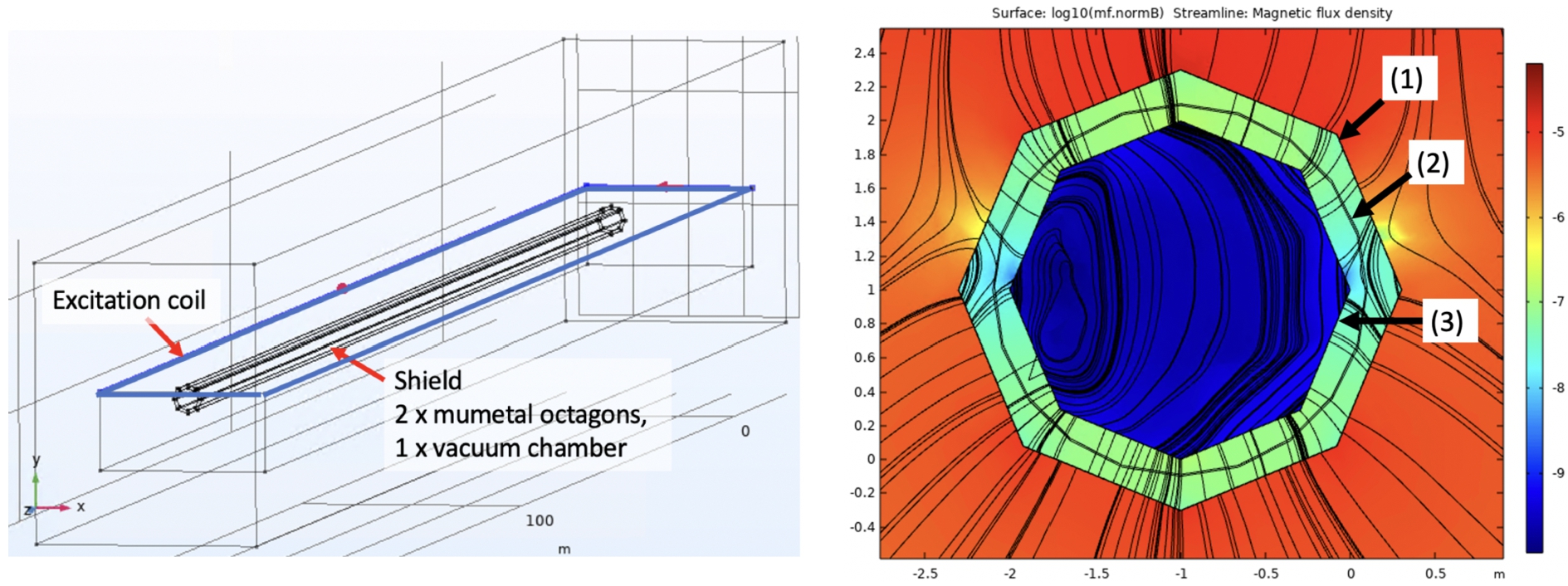

The shield concept is illustrated in Fig. 48 and is simulated with COMSOL [81,82]. The model comprises a two-layer octagonal mumetal shield combined with a stainless steel vacuum chamber, which also aids with the shielding. In addition, an external excitation coil is arranged around the shield to monitor the performance.

Mumetal shielding gives a static lowering of the magnetic field in addition to damping changes in the external magnetic field of up to about 10 Hz. The shielding of higher frequencies is given by the combination of the conductivity of the vacuum chamber and the mumetal.
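The contribution of a conducting wall to the shielding of alternating fields can be gauged from the electromagnetic skin depth, δ = sqrt(ρ / (π f μ0 μr)). The sketch below evaluates it for a non-magnetic stainless steel. The resistivity of 7.2 × 10⁻⁷ Ω m and μr = 1 are typical textbook values assumed for illustration; they are not taken from this report.

```python
import math

MU_0 = 4.0e-7 * math.pi  # vacuum permeability, T m / A

def skin_depth(freq_hz, resistivity_ohm_m=7.2e-7, mu_r=1.0):
    """Skin depth in metres of a conductor at frequency freq_hz."""
    return math.sqrt(resistivity_ohm_m / (math.pi * freq_hz * MU_0 * mu_r))

for f in (1.0, 10.0, 100.0):
    print(f"f = {f:6.1f} Hz  skin depth = {skin_depth(f) * 100:.1f} cm")
```

With these assumed values the skin depth is several centimetres even at 100 Hz, so a chamber wall provides meaningful eddy-current damping only towards higher frequencies, while the mumetal dominates at low frequencies.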

Left: the 200 m long and 2 m inside diameter arrangement. This consists of a pair of octagons made from mumetal, with a 200 m length and a stainless-steel vacuum chamber separating them. Right: a shield cross section. A logarithmic scale magnetic field magnitude is shown. (1) outer mumetal shield; (2) vacuum chamber; (3) inner mumetal shield.

The design of the mumetal shield is based on a proven small-scale design of the magnetic shield which is used for an atomic fountain [197]. This comprises mumetal sheets, deployed in an octagonal shape and which are clamped together. Overlaps of 50 mm width of the mumetal sheets are foreseen to ensure proper magnetic flux connection while being a reasonable compromise with magnetic equilibration. Using this approach, the independent assembly and detachment of the shield parts can be done when needed. The vacuum chamber can be arranged in between the mumetal layers or inside the inner mumetal layer.

For a low magnetic field, magnetic equilibration of the mumetal is required [27] through a set of coils. These surround each shield layer independently as a toroidal coil. The shield diameter is determined by the volume accessible to the neutrons together with a distance of around 20 cm to the shield walls, where the fields after equilibration are too high for the experiment. Furthermore, the gap between the mumetal shells is estimated from simulations and set to a minimum of 20 cm.

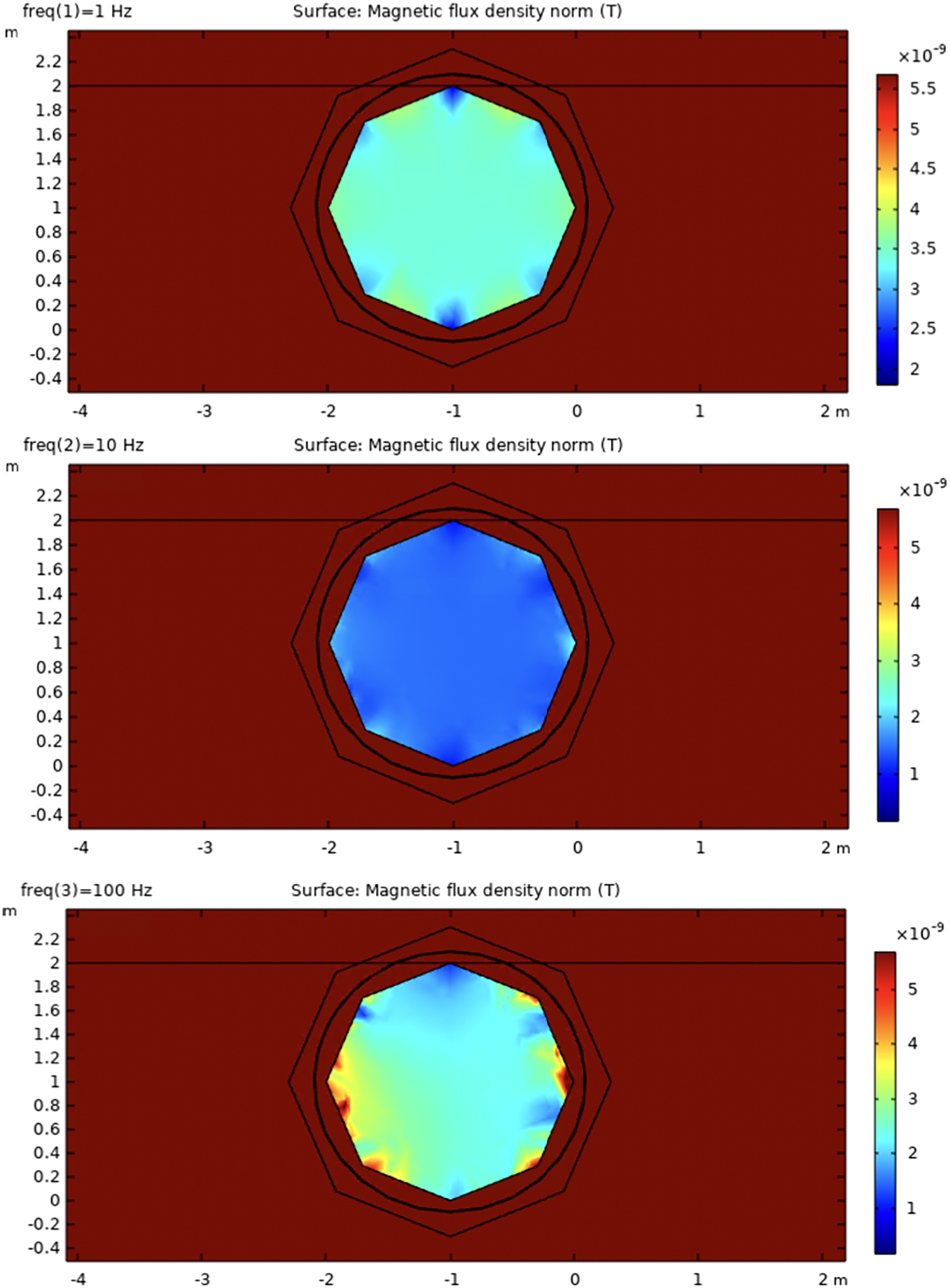

Figure 48 shows a schematic diagram of the shield modelled in the simulation. For the optimization of parameters, the shield was deployed inside a static background field which is normal to the shield axis. The influence of various static fields from other ESS instrument magnets at LoKi, ESTIA, SKADI, DREAM, HEIMDAL and T-REX [94] was also considered. Representative tests of steel rebar from magnetic concrete and structures were additionally made. A large external coil is deployed surrounding the shield. This produces a magnetic field mostly perpendicular to the shield axis. The coil is placed closer to one open end of the shield than to the other in order to also study the effect of fields entering the shield longitudinally. Estimates of shielding efficiency were then made for frequencies of 1, 10 and 100 Hz, which included permeability and currents. A quantitative estimate of the DC field reduction is not possible for fields of very low magnitude via a magnetostatic approach. However, the effect of magnetic equilibration is both well studied and tested and, in specific and simple scenarios, can be calculated [182,183]. It can thus be scaled from a magnetostatic simulation based on a typical mumetal anhysteretic curve. Figure 49 shows the fields inside the shield arising from excitations of frequencies in the range of 1 to 100 Hz, together with an external excitation which has an identical amplitude.

Field inside the shield (cross section in middle region), for 1, 10 and 100 Hz external excitation.

From the ratio of outside and inside magnitude, the shielding factor

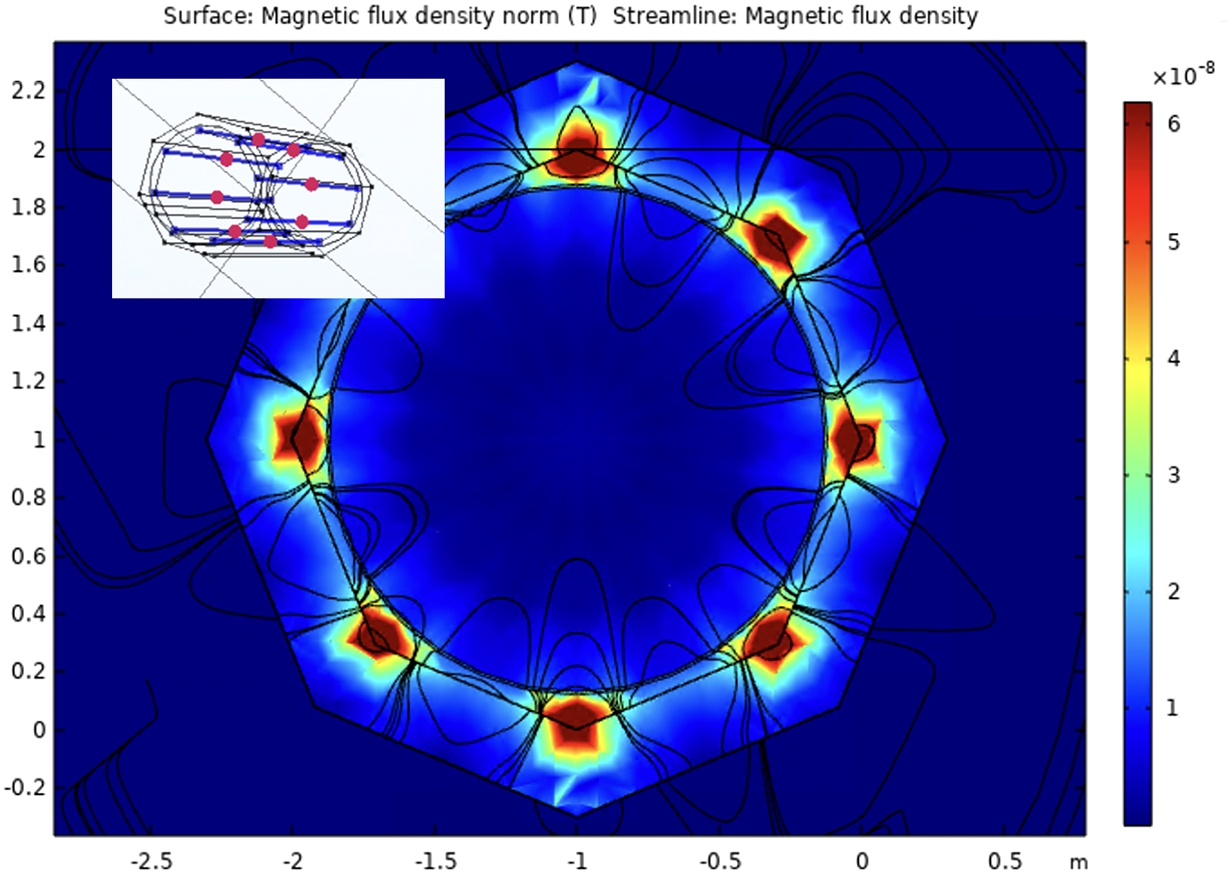

Although the equilibration efficiency is not simulated quantitatively but scaled from experimental findings, the field distribution inside the shield after equilibration is modeled, as seen in Fig. 50.

The expected field pattern from the magnetic equilibration process.

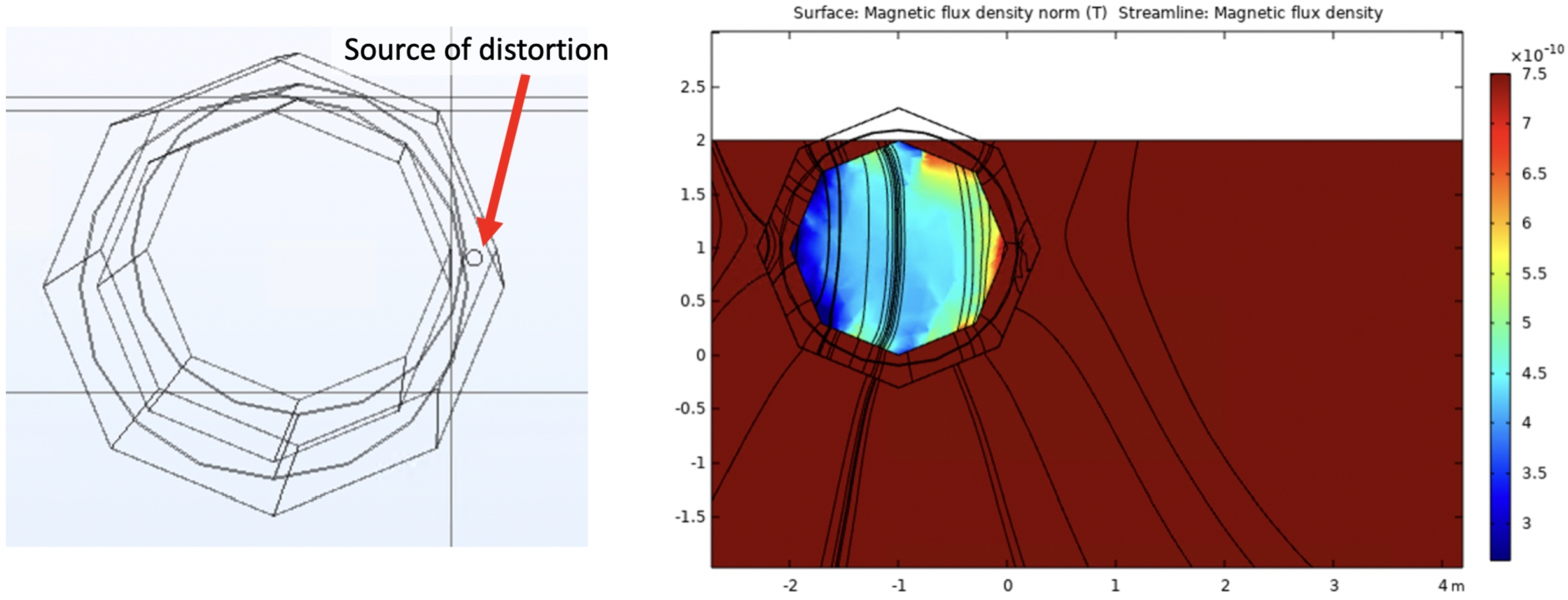

Magnetic equilibration can be realized, as studied in the simulation, using 8 turns for each octagon, with 80 ampere-turns. An additional point to consider is the effect of local magnetic distortions, which can originate from, e.g., the vacuum chamber or an outside source. The modelling of these is shown in Fig. 51. A typical scenario could be a magnetic weld in the vacuum chamber, which can produce a field of >1000 nT extending over a distance of a few centimeters.

An example of a magnetic distortion arising due to a magnetic weld. The impact on the inside field after equilibration is shown.

A Monte Carlo study which tracked particles inside the shield using simulated residual field maps has been carried out. This showed that the efficiency loss due to the presence of the small but finite magnetic field is around several per cent.

As previously explained, it is necessary to transport neutrons within a magnetically shielded and low-vacuum environment. For quasi-free neutrons, the vacuum pressure should be maintained below

The ESS monolith was designed to be able to operate with He atmosphere (100 kPa) or at a low pressure

The vacuum vessel will house the neutron optics (Section 10.1) in a vacuum environment and will provide support for the external magnetic shield (Section 11) along the entire length of the beamline. During the optimization of the NNBAR optics design, the following approach was adopted for the vacuum pipe. Initially, a rectangular vacuum pipe is used from the LBP up to the location of the optics, which lies around 17 meters after the moderator as discussed in Section 10.9. Following this, the design transitions to a cylindrical vacuum pipe with a 3 m diameter, extending up to the detector area, where it will then reduce to a 2 m diameter.

Mechanical pump units (dry roughing and turbo-molecular) are currently planned for pump-down, with combination pumps (sputter ion pump and non-evaporable getter) as the permanent pumping solution. This choice minimises vibration on the optics system and keeps the need for physical access low during periods of operation.

The NNBAR detector

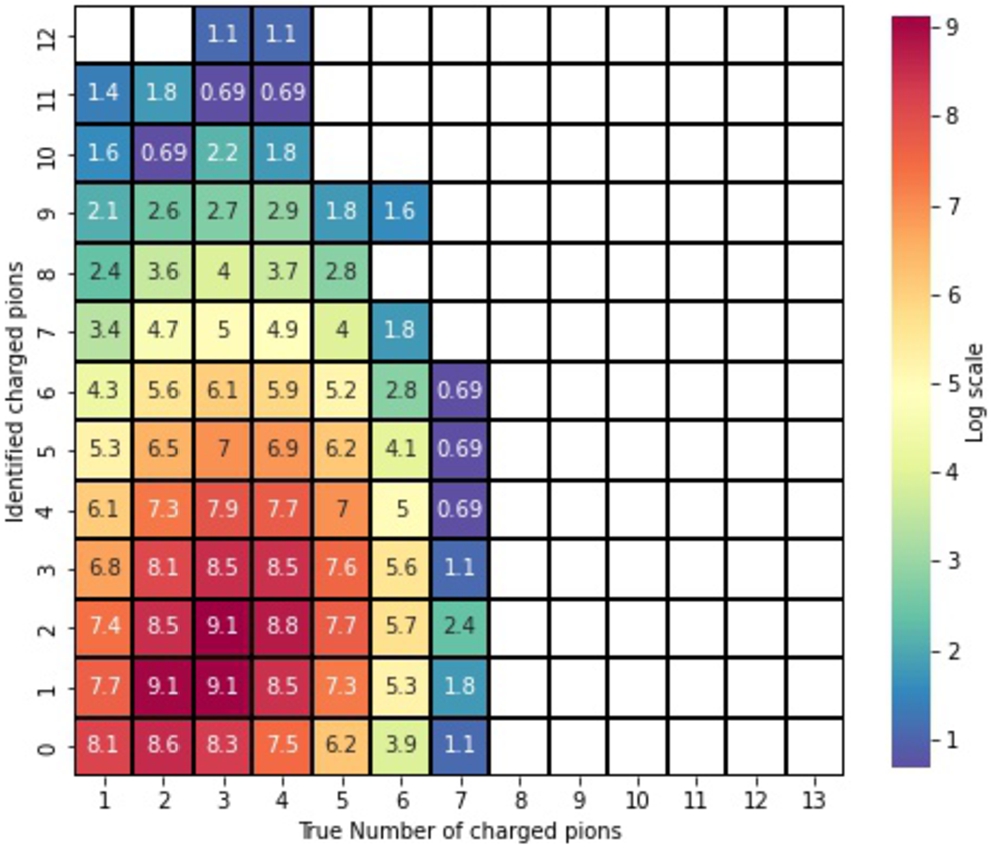

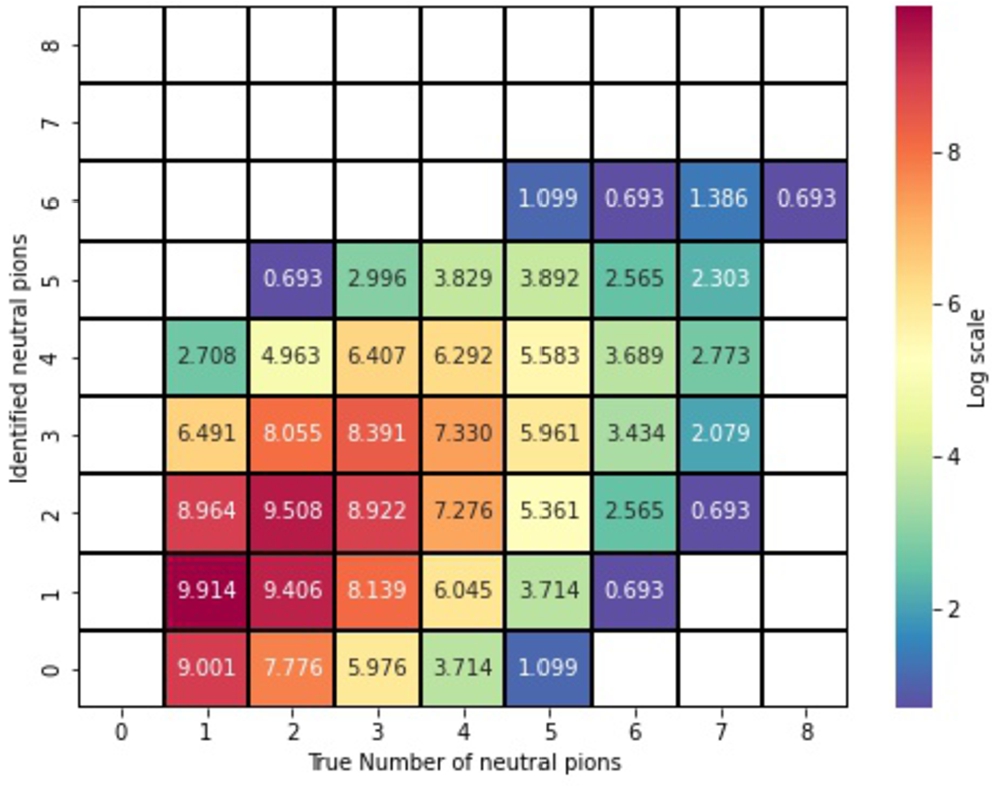

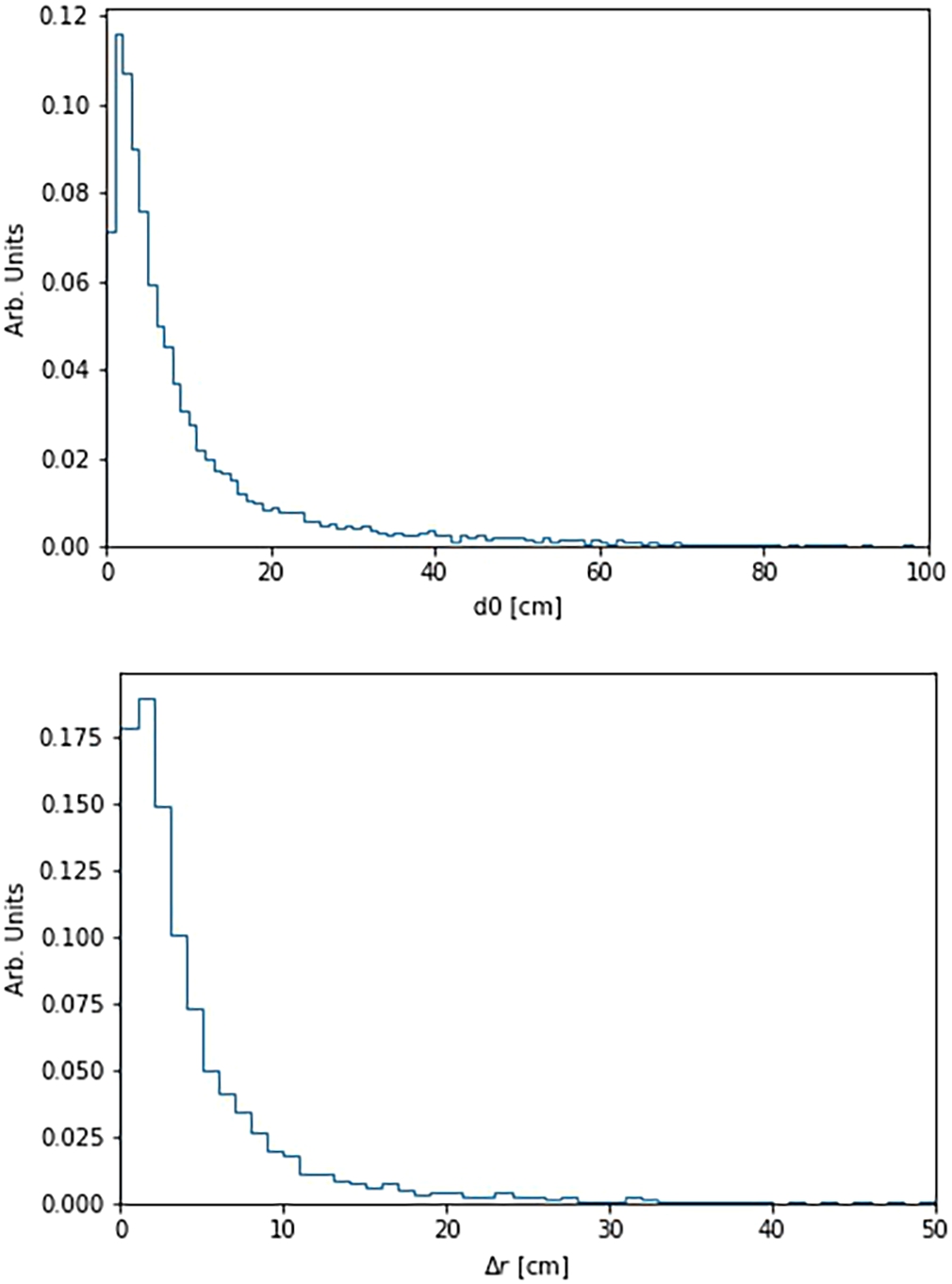

The ultimate goal of the NNBAR detector is the reconstruction of an annihilation event and the rejection of background. The signature is an isotropically produced multi-pion final state (between 2 and 8 pions, with an average of ∼5) with a centre-of-mass energy of up to around 1.9 GeV. The constraints in designing such a detector are as follows.

All primary particles hitting the detector should be identified and the directions and energies of annihilation and nuclear products must be measured. Given the constraint of the field-free propagation region, no magnetic field is used in the tracking system. Due to the rare nature of the process being measured, a statistical correction cannot be used, i.e. the determination of an inferred signal contribution from a large number of selected events comprising signal and background processes. As a consequence, combinatorial mistakes must be suppressed. Since the annihilation occurs in a nucleus and not in free space, the rejection power of some observables such as invariant mass and total energy will be degraded, as discussed in Section 14.3. This implies that some of the overall demands on the calorimeter energy reconstruction for the signal final-state particles can be relaxed. It is also important that all particles from background processes are identified as such and that these should not degrade the signal, e.g. due to background events piling up in time with a signal event. Finally, it is important that technological and geometrical choices are made such that the final detector is affordable. These points represent an ideal case. In practice, however, compromises between the different choices will be needed. In order to identify an annihilation event, a combination of different types of evidence from the detector is needed.

The first type of evidence to guide the detector design is topological. A common vertex of origin in the carbon foil from several charged particles is helpful.

An identified charged pion pointing to the foil and an identified

Figure 52 shows the charged-pion multiplicity from antiproton annihilation in 12C. Antineutron annihilation in 12C would give states in the same proportions as for antiproton annihilation. A simulation is also shown of antineutron-nucleon annihilation in carbon, which is used in this work and which agrees well with the data. Predictions are also shown for antineutron-nucleon annihilation in argon, which show minimal differences with expectations for 12C. In 10% of the cases there is only one outgoing charged pion, whereas in 88% of the cases there are two or more charged outgoing pions. The charged pions are the cornerstones of the annihilation topology, since these can be tracked and be used to reconstruct the event. Beyond the charged pions, charged nuclear fragments can also be produced and tracked. For the events that have only one charged pion, a

The probability (%) of the formation of a specific multiplicity of charged pions in antinucleon-nuclei annihilation. The solid histogram shows data from

Another set of evidence comes from conservation of energy and momentum, which is intrinsically connected to particle identification (PID). Since a significant fraction of the available energy (∼30%) corresponds to the rest masses of the pions, accurate PID and thus measurements of the multiplicities of different types of particles are themselves indirect energy measurements. For a final state with four pions, nearly 600 MeV of the total energy is accounted for in this way. In principle, the momenta and energies of all annihilation and nuclear products should be measured in order to reconstruct event kinematics and exploit event-level characteristics of the signal event, such as the expected isotropy of produced particles.
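The rest-mass bookkeeping behind these numbers can be checked with a few lines. The sketch below sums pion rest masses for a given multiplicity and compares the result with the available energy. The value of 1900 MeV is taken from the "up to around 1.9 GeV" quoted for the signature and is used here only as an illustrative reference; the function name is hypothetical.

```python
M_PI_CHARGED_MEV = 139.57   # charged pion rest mass, MeV
M_PI_NEUTRAL_MEV = 134.98   # neutral pion rest mass, MeV
E_AVAILABLE_MEV = 1900.0    # illustrative reference: upper value quoted for the signature


def pion_rest_energy(n_charged, n_neutral):
    """Total rest-mass energy (MeV) carried by the identified pions."""
    return n_charged * M_PI_CHARGED_MEV + n_neutral * M_PI_NEUTRAL_MEV


for n_charged, n_neutral in ((4, 0), (2, 2), (3, 2)):
    rest = pion_rest_energy(n_charged, n_neutral)
    fraction = rest / E_AVAILABLE_MEV
    print(f"{n_charged} charged + {n_neutral} neutral: "
          f"{rest:6.1f} MeV rest mass, {100 * fraction:4.1f}% of available energy")
```

With four pions the rest masses alone account for roughly 550 to 560 MeV, in line with the figures given above, so counting and identifying the pions already fixes a sizeable part of the energy balance.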

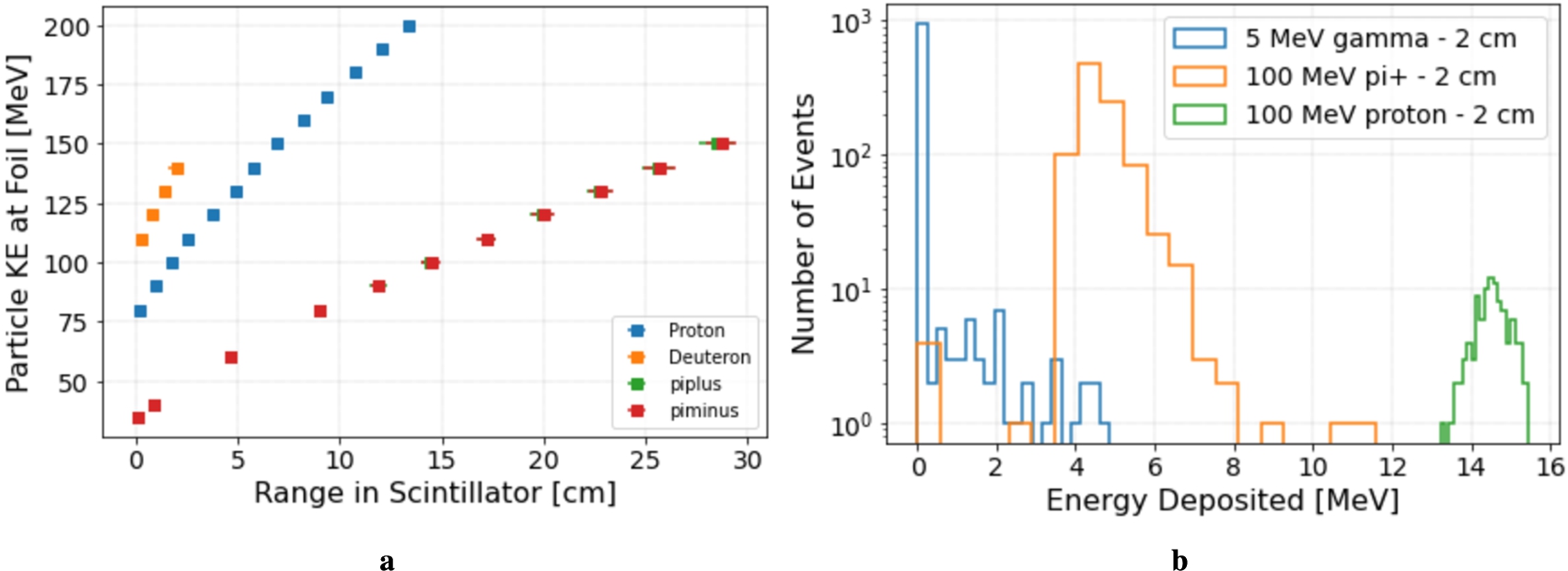

A number of technologies were considered. Out of the gaseous trackers, a TPC was chosen. A TPC is ideal for the purpose of pattern recognition in three dimensions with minimal combinatorial ambiguities and has a number of other desirable properties such as providing high precision specific energy loss,

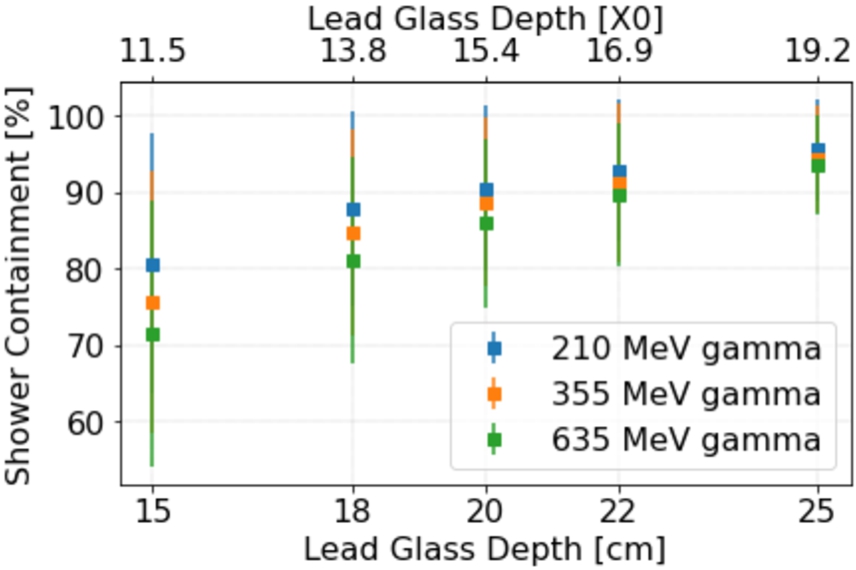

For calorimetry, a scintillator-based hadronic range detector (HRD) and a lead glass electromagnetic calorimeter (LEC) are the chosen technologies for photon and pion energy measurements. A sampling calorimeter was considered [39] for electromagnetic energy reconstruction. But it was found to have lower

The lower energy charged hadrons (p and π) can be stopped gently by ionization energy loss, in which case the measured energy deposit is a correct measurement of the kinetic energy. Higher energies require a substantial amount of material to stop the particle by ionization energy loss. The probability is then high that a nuclear reaction will occur before the charged hadron has fully depleted its kinetic energy, and the energy deposit is then only a very crude measurement of the kinetic energy. There is no way to avoid this limitation unless a magnet is introduced. For charged hadrons the energy resolution is poor at high energies and here it will be even worse for hadron calorimetry [198]. Neutral pions decay in almost all cases to 2 photons. A

Figure 53 shows a cut-away of the detector, encased with concrete. This was produced as part of the mechanical construction study (Section 16).

Cut-away of the NNBAR annihilation detector.

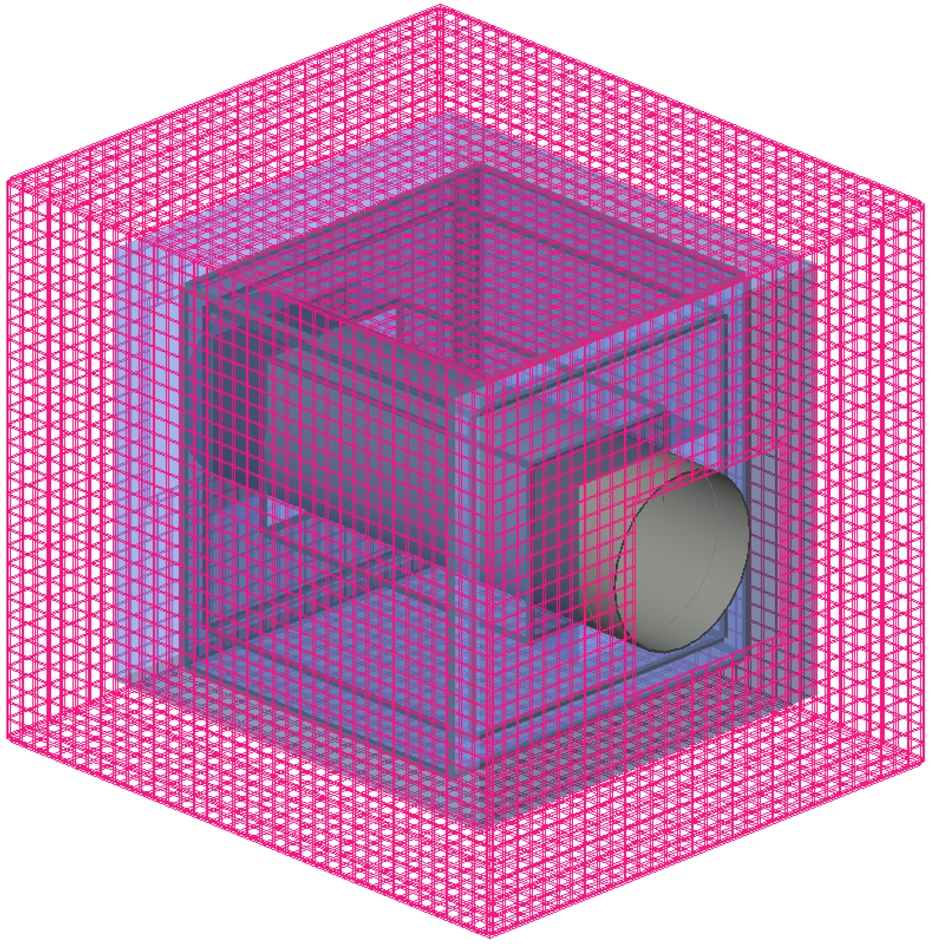

A GEANT4 model was developed to describe this detector. The model includes all major material sources (beam pipe, TPC, scintillator staves) but omits smaller items such as electronics.

A dedicated

A microscopic simulation of tracking in the TPC is a formidable and CPU-expensive task. This is not done here. At this point it is most reliable to draw on experience from TPCs in operation, mainly the ALICE TPC and its upgrade [10,17,157], which is the most modern large-scale installation, with decades of prior R&D by many experts. In particular the most performance-driving parameter, the drift length, ∼2.5 m at NNBAR, is the same as for the ALICE TPC. The NNBAR TPC, with its shorter track length, may require careful optimization of the drift conditions near the inner and outer edges of the drift field. The ALICE TPC has been operated at low magnetic field, which also makes it comparable to the zero-field case here. The ALICE TPC with 2.5 m drift length and with no magnetic field has track residuals (normally quoted as the single point resolution) of about 0.5 mm for tracks parallel to the readout plane. This is moderately better at short drift and worse at long drift. With a tilt angle, the residuals deteriorate to the mm scale. The single point resolution is, however, not the parameter of interest in a TPC where many points per track are measured. What matters is the track location and direction obtained by a straight line fit to many coordinates. Even so, the inherent resolution of the TPC is not the limiting factor for the pointing towards the vertex. The limitation is, instead, that the measured track direction outside the vacuum chamber is not the same as inside, due to multiple scattering in the chamber tube wall (see Section 14.2).
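The relative size of the two effects can be estimated with the Highland parametrization of multiple Coulomb scattering, as given in the Particle Data Group review. The sketch below is an order-of-magnitude illustration and not the study of Section 14.2. It assumes a charged pion crossing the 2 cm aluminum beam-tube wall at normal incidence and projects the scattering angle over a 1 m lever arm, corresponding to the nominal tube radius. The momenta are arbitrary illustrative values and the function name is hypothetical.

```python
import math

M_PION_MEV = 139.57       # charged pion mass, MeV/c^2
X0_ALUMINUM_CM = 8.897    # radiation length of aluminum, cm


def highland_theta0(p_mev, thickness_cm, x0_cm=X0_ALUMINUM_CM,
                    mass_mev=M_PION_MEV, z=1):
    """RMS projected multiple-scattering angle (rad), Highland/PDG parametrization."""
    energy = math.hypot(p_mev, mass_mev)      # total energy from momentum and mass
    beta = p_mev / energy
    t = thickness_cm / x0_cm                  # thickness in radiation lengths
    log_term = 1.0 + 0.038 * math.log(t * z ** 2 / beta ** 2)
    return (13.6 / (beta * p_mev)) * z * math.sqrt(t) * log_term


WALL_THICKNESS_CM = 2.0   # beam-tube wall thickness
LEVER_ARM_CM = 100.0      # nominal inner radius of the beam tube
SINGLE_POINT_CM = 0.05    # ~0.5 mm TPC single point resolution

for p in (150.0, 300.0, 600.0):               # illustrative pion momenta, MeV/c
    theta0 = highland_theta0(p, WALL_THICKNESS_CM)
    smear = theta0 * LEVER_ARM_CM
    print(f"p = {p:5.0f} MeV/c: theta0 = {1e3 * theta0:5.1f} mrad, "
          f"pointing smear ~ {smear:4.1f} cm (single point: {SINGLE_POINT_CM} cm)")
```

Under these assumptions the scattering-induced smearing at the foil is at the centimeter scale, well above the sub-millimeter single point resolution, which is consistent with the statement that the wall, not the TPC, limits the vertex pointing.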

Neutrons arriving at the experimental hall enter an evacuated aluminum beam tube with 1 m nominal inner radius and 2 cm wall thickness, and encounter a thin (

TPC

Outside the beam tube is the TPC system, which provides three-dimensional charged particle tracking for event vertex identification and track matching, as well as particle identification capabilities through measurements of the specific energy loss

The TPC is nominally constructed from eight rectangular chambers each 70 cm deep, 200 cm long and 280 cm wide and contains an assumed 80:20 Ar/CO2 mixture.

The TPC chamber walls are constructed from fiberglass. Charges are collected at the anode wall by gas electron multiplier (GEM) foils read out by 32-channel mixed-signal SAMPA ASICs originally developed for the ALICE TPC and muon chamber upgrades at the LHC. The SAMPA chips provide a full on-detector readout chain that includes amplification/shaping, digitization, a DSP layer, and readout over multiple parallel 4.8 Gb/s serial links.

The full TPC system contains 256000 GEM channels that can be continuously read out with sample rates as high as 10 MHz, corresponding to more than 5300 4.8 Gb/s serial links.
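The link count follows from a simple bandwidth estimate. The sketch below assumes 10-bit samples and neglects protocol overhead, zero suppression and compression. The 10-bit sample width is an assumption introduced here and is not stated in the text; the channel count, sampling rate and link speed are the values quoted above. The function name is hypothetical.

```python
import math

N_CHANNELS = 256_000              # GEM readout channels
SAMPLE_RATE_HZ = 10_000_000       # maximum continuous sampling rate, 10 MHz
BITS_PER_SAMPLE = 10              # assumed ADC sample width (not stated in the text)
LINK_RATE_BPS = 4_800_000_000     # 4.8 Gb/s serial link


def links_needed(n_channels, sample_rate_hz, bits_per_sample, link_rate_bps):
    """Return (raw data rate in bit/s, number of links needed to carry it)."""
    total_bps = n_channels * sample_rate_hz * bits_per_sample
    return total_bps, math.ceil(total_bps / link_rate_bps)


total_bps, n_links = links_needed(N_CHANNELS, SAMPLE_RATE_HZ,
                                  BITS_PER_SAMPLE, LINK_RATE_BPS)
print(f"raw data rate: {total_bps / 1e12:.1f} Tb/s, links required: {n_links}")
```

With these inputs the raw rate is 25.6 Tb/s, which requires just over 5300 links and so reproduces the quoted figure.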

HRD

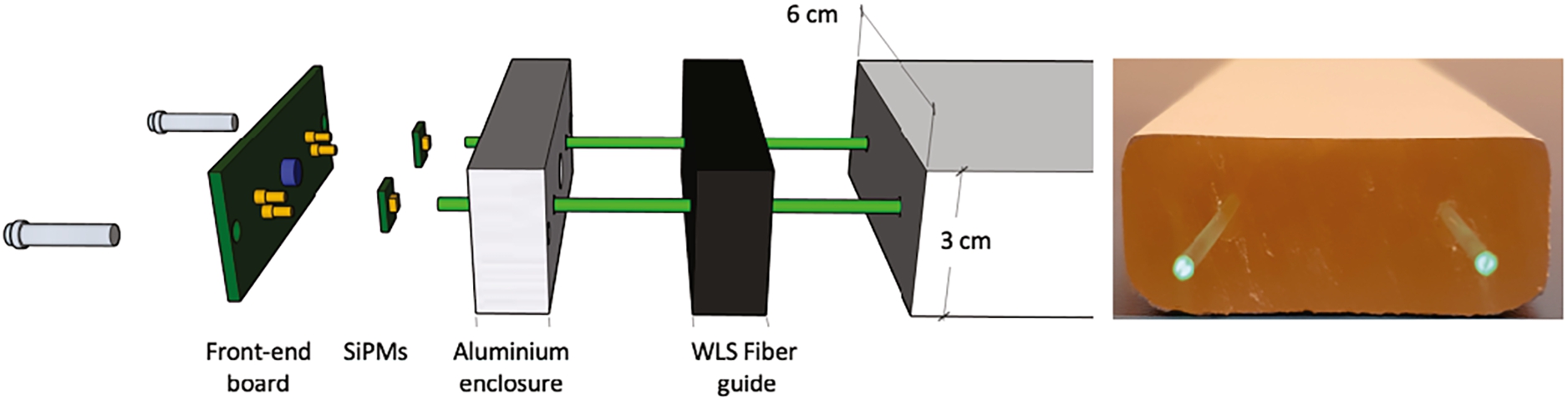

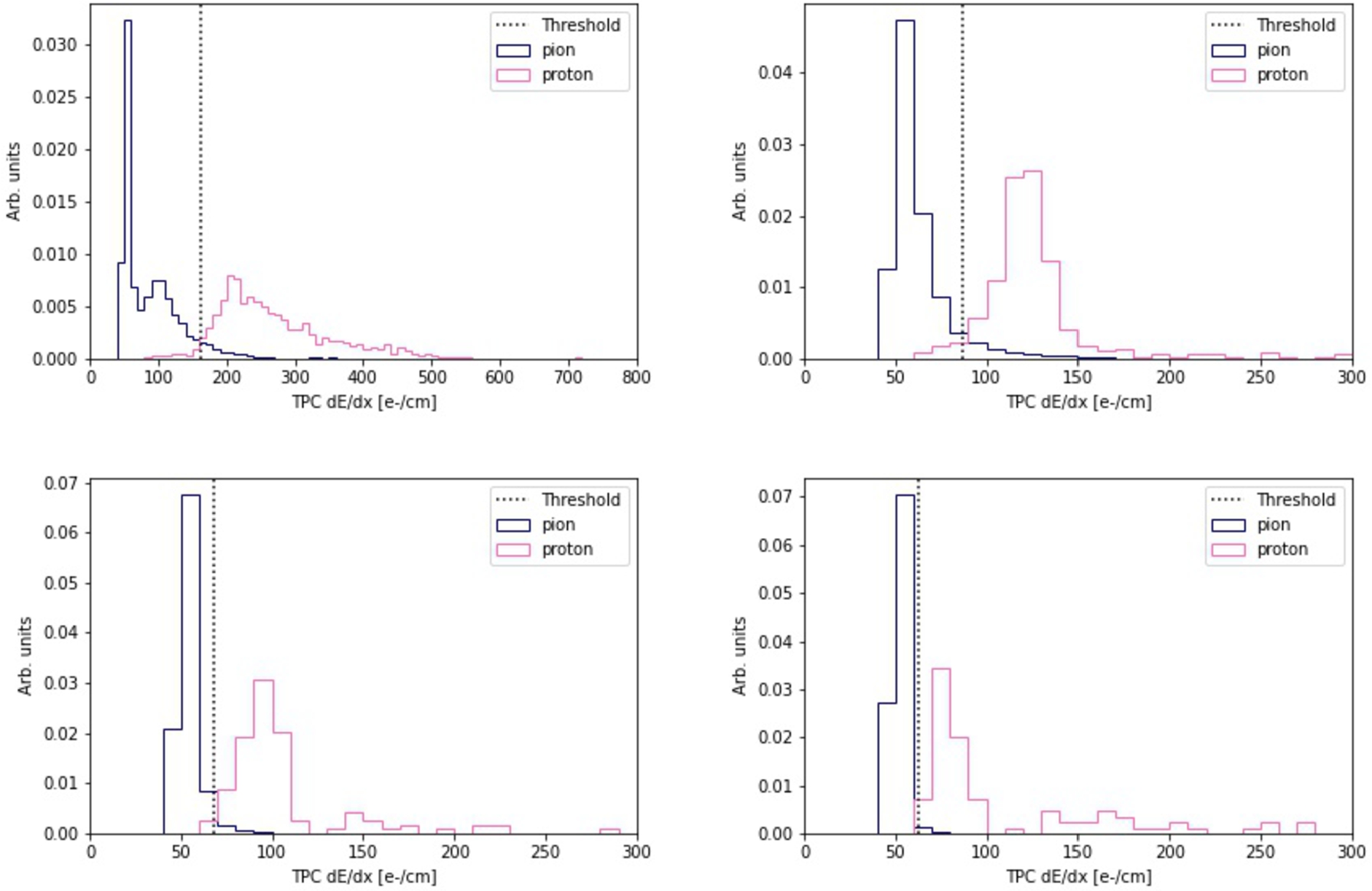

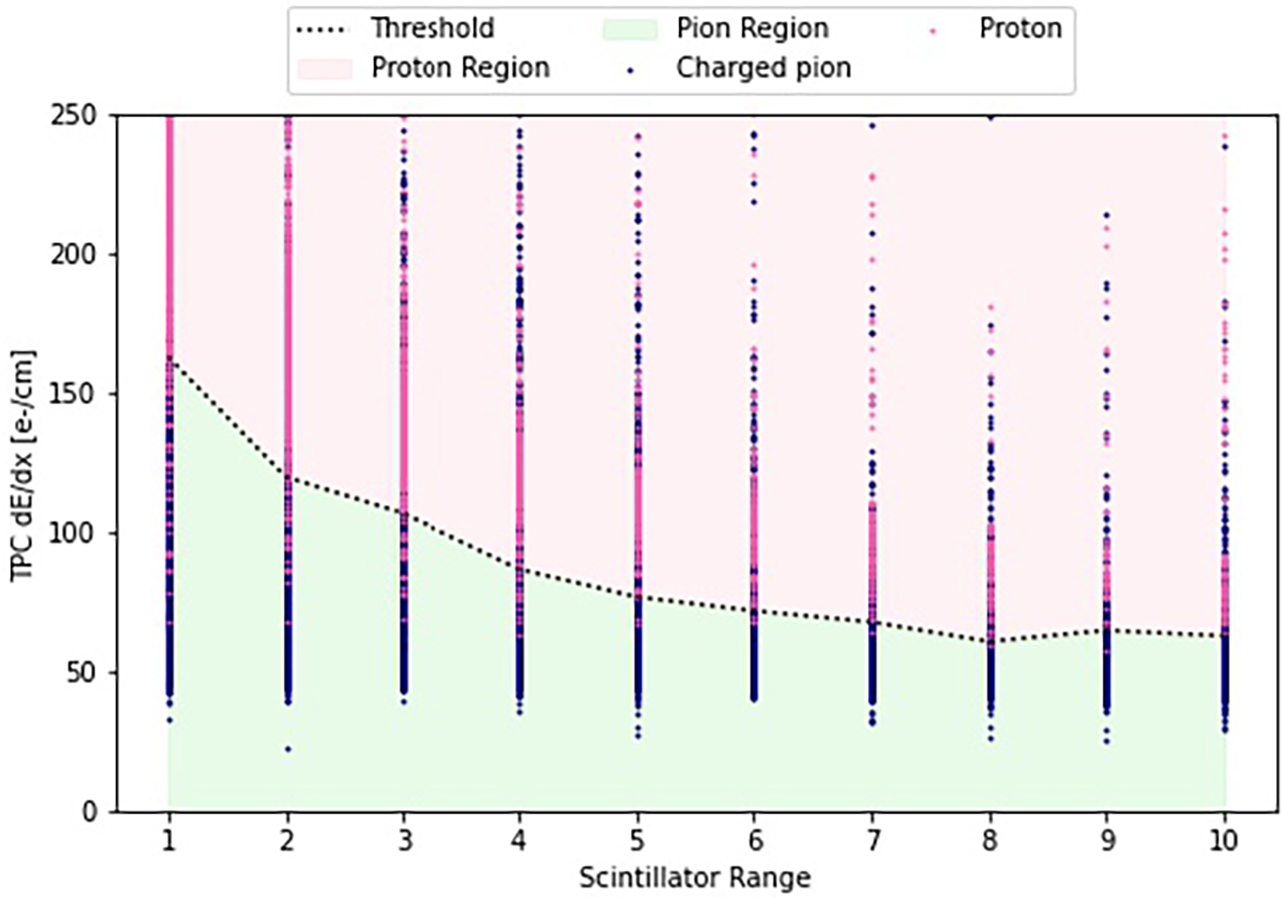

The HRD is a multi-layer calorimeter with plastic scintillator staves that function both as an active material and absorber. It is designed to identify and measure minimum-ionizing energy deposits from low-energy charged pions traversing the scintillator, and is capable of distinguishing between proton and pion tracks by their specific energy loss

The HRD has a nominal depth of 30 cm and is built from 10 layers of long scintillating staves, each with a thickness of 3 cm.

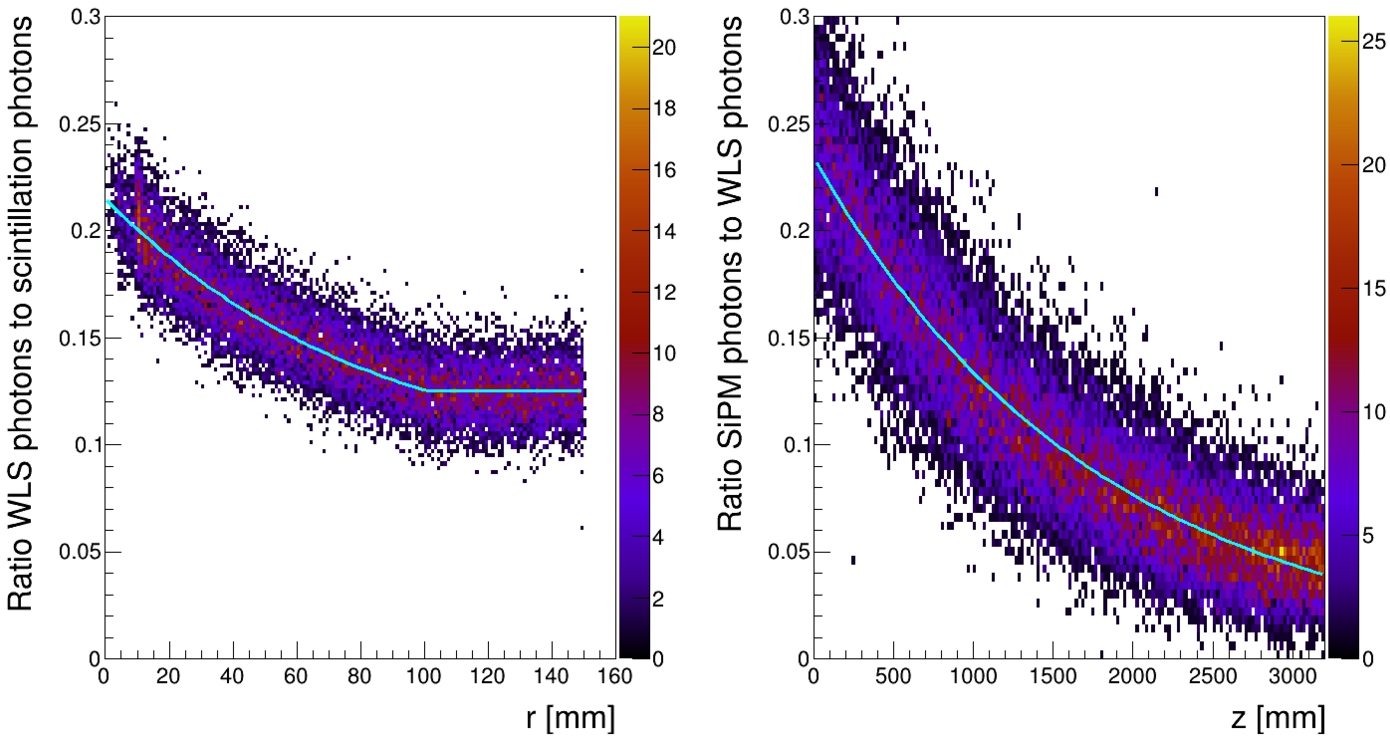

The full HRD stack corresponds to around 0.6 interaction lengths and around 0.5 radiation lengths. The staves in each HRD layer run perpendicular to those in neighboring layers, producing crossing points for reconstructing minimum ionizing tracks of particles passing through multiple layers. Two embedded wavelength shifting (WLS) fibers running along the length of each stave collect and transport scintillation light to silicon photomultipliers (SiPMs) at the ends, and the staves are coated with white reflective paint for optimal light collection in the WLS fibers.

The expected timing resolution of the SiPM stave readout is around 1 ns [42], and the majority of staves have SiPMs at both ends, allowing position and timing of hits to be estimated from the light propagation timing differences. An exception is at the “end cap” regions where the stave geometry around the beam tube makes this difficult; in the baseline design these shorter staves are therefore read out at only the stave ends furthest from the beam.
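The double-ended readout can be illustrated with a minimal sketch of how a hit position along a stave follows from the two arrival times. The effective light propagation speed in the WLS fiber (16 cm/ns) and the stave length (200 cm) are assumed values chosen for illustration and are not taken from the design. The function names are hypothetical.

```python
import math

V_EFF_CM_PER_NS = 16.0   # assumed effective light speed in the WLS fiber
STAVE_LENGTH_CM = 200.0  # assumed stave length
SIGMA_T_NS = 1.0         # per-end timing resolution of around 1 ns


def hit_position_cm(t_a_ns, t_b_ns, v_eff=V_EFF_CM_PER_NS):
    """Position along the stave relative to its centre (positive towards end B).

    End A sits at -L/2 and end B at +L/2, so light from a hit at x arrives at
    t_a = t0 + (x + L/2)/v and t_b = t0 + (L/2 - x)/v, giving x = v*(t_a - t_b)/2.
    """
    return 0.5 * v_eff * (t_a_ns - t_b_ns)


def hit_time_ns(t_a_ns, t_b_ns, length_cm=STAVE_LENGTH_CM, v_eff=V_EFF_CM_PER_NS):
    """Hit time t0, independent of the position along the stave."""
    return 0.5 * (t_a_ns + t_b_ns) - 0.5 * length_cm / v_eff


def position_resolution_cm(sigma_t_ns=SIGMA_T_NS, v_eff=V_EFF_CM_PER_NS):
    """Position resolution for independent timing errors at the two ends."""
    return v_eff * sigma_t_ns / math.sqrt(2.0)


# Example: a hit 30 cm from the centre towards end B at t0 = 5 ns.
x_true, t0_true = 30.0, 5.0
t_a = t0_true + (x_true + STAVE_LENGTH_CM / 2) / V_EFF_CM_PER_NS
t_b = t0_true + (STAVE_LENGTH_CM / 2 - x_true) / V_EFF_CM_PER_NS
print(hit_position_cm(t_a, t_b), hit_time_ns(t_a, t_b), position_resolution_cm())
```

For the assumed propagation speed, a timing resolution of about 1 ns per end corresponds to a position resolution along the stave of roughly 10 cm. The crossing of perpendicular staves in neighboring layers provides the finer localization.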

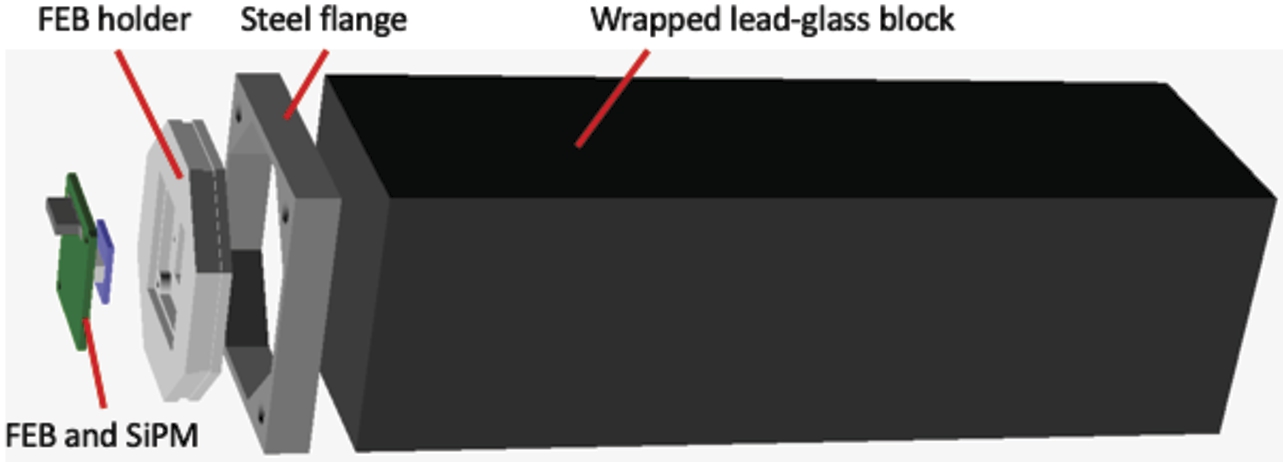

Assembly of two SiPM diode arrays and analog front-end electronics at the end of a scintillator stave (left); end view of a stave with two wavelength shifting fibers installed (right). The picture is of a prototype stave (reference [92]). The HRD staves in the design presented here are of different dimensions (see Table 5).

An illustration of the SiPM and front-end electronics board (FEB) assembly at the end of each HRD stave is shown in Fig. 54, alongside a photograph of a prototype stave. The WLS fiber ends are aligned by a plastic guide glued to the end of the stave, where they are then cut and polished. A milled aluminum enclosure holding the two SiPMs is mounted over the fiber guide. The enclosure also hosts the FEB, with analog amplification, shaping and readout circuitry for the SiPMs, calibration LEDs, and low- and high-voltage power distribution.

This HRD design includes more than 3000 scintillating staves with a combined length of more than 9 km. The staves are read out by approximately 4500 front-end boards and 9000 SiPMs.

The above gives the broad principle of the HRD baseline design. A more segmented HRD with shorter staves of length 40 cm, aimed at mitigating pile-up and light attenuation in the WLS fiber readout, is possible. This is used here in simulations of signal and background discrimination. The final design depends on accurate and reliable estimates of pile-up.

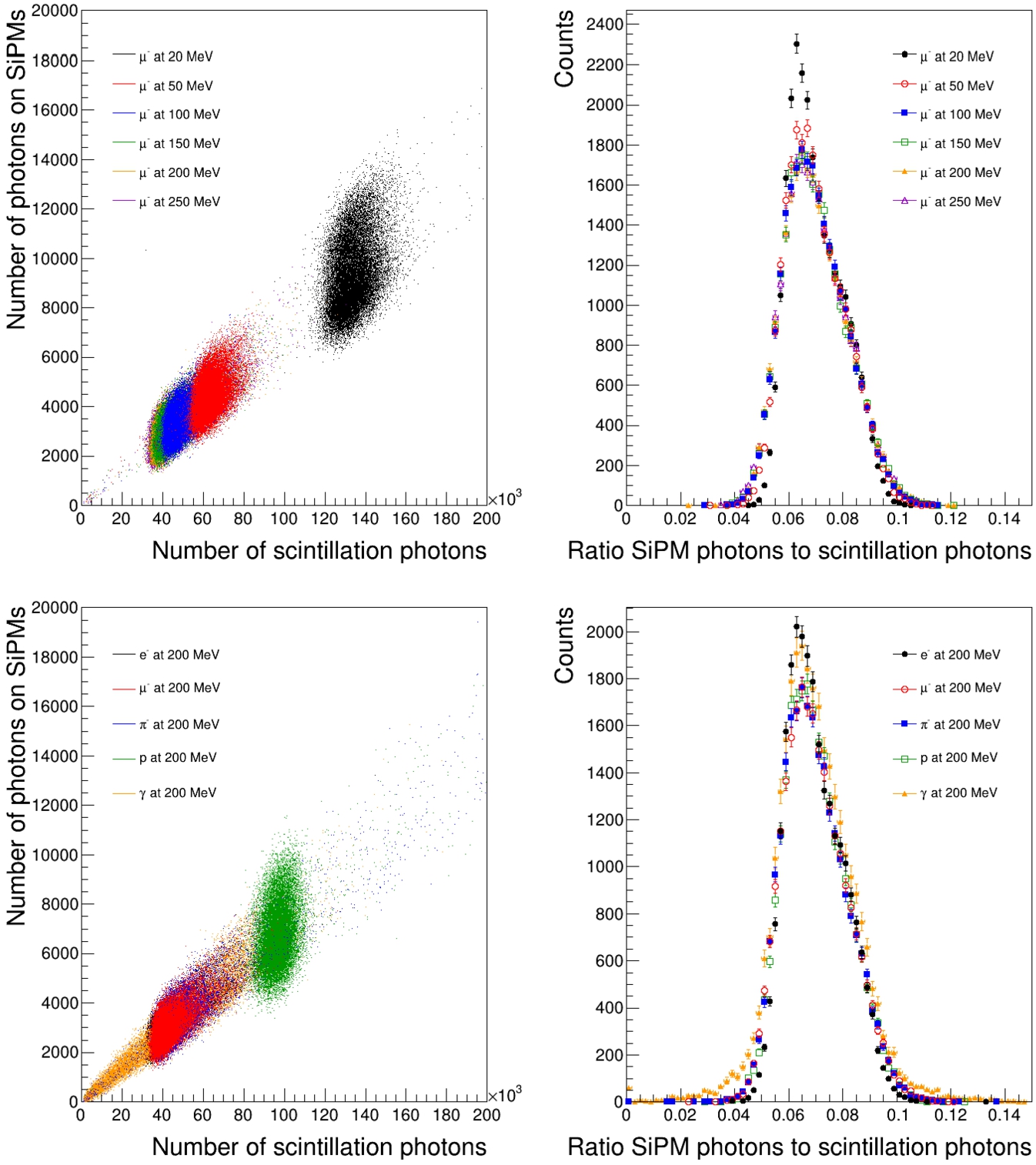

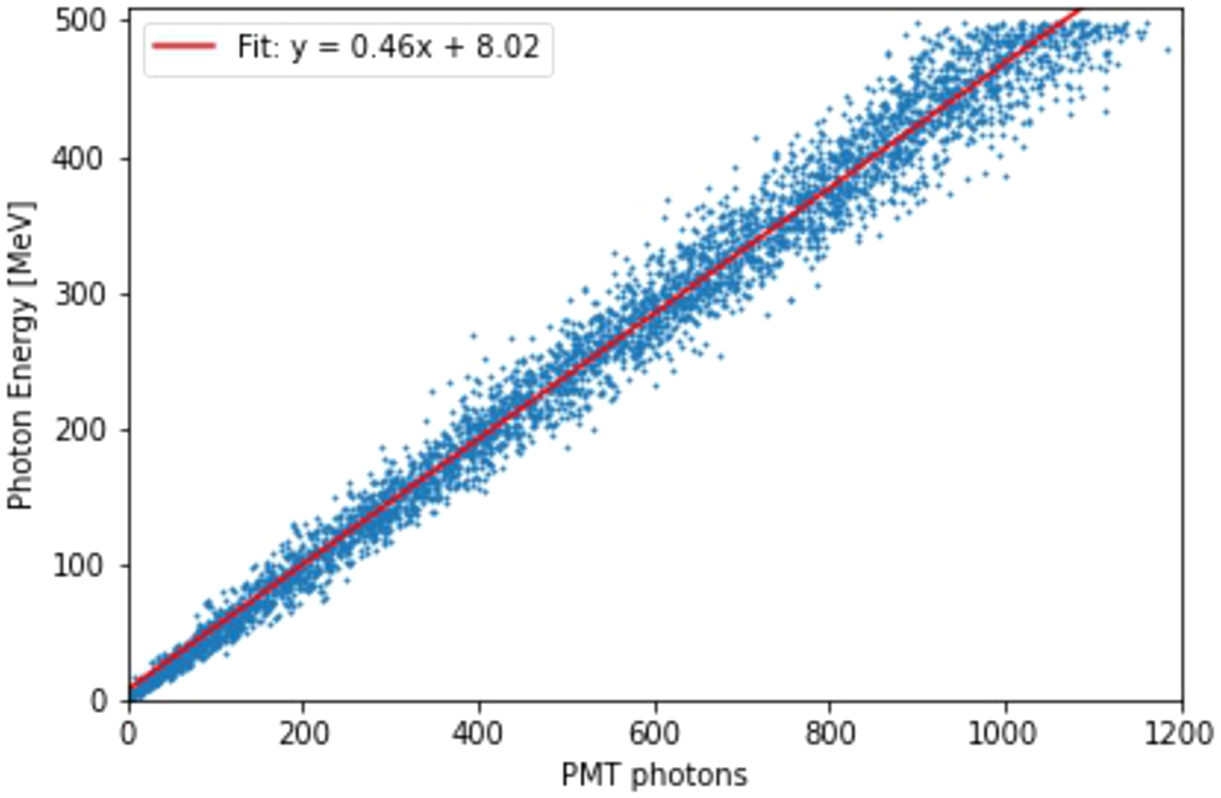

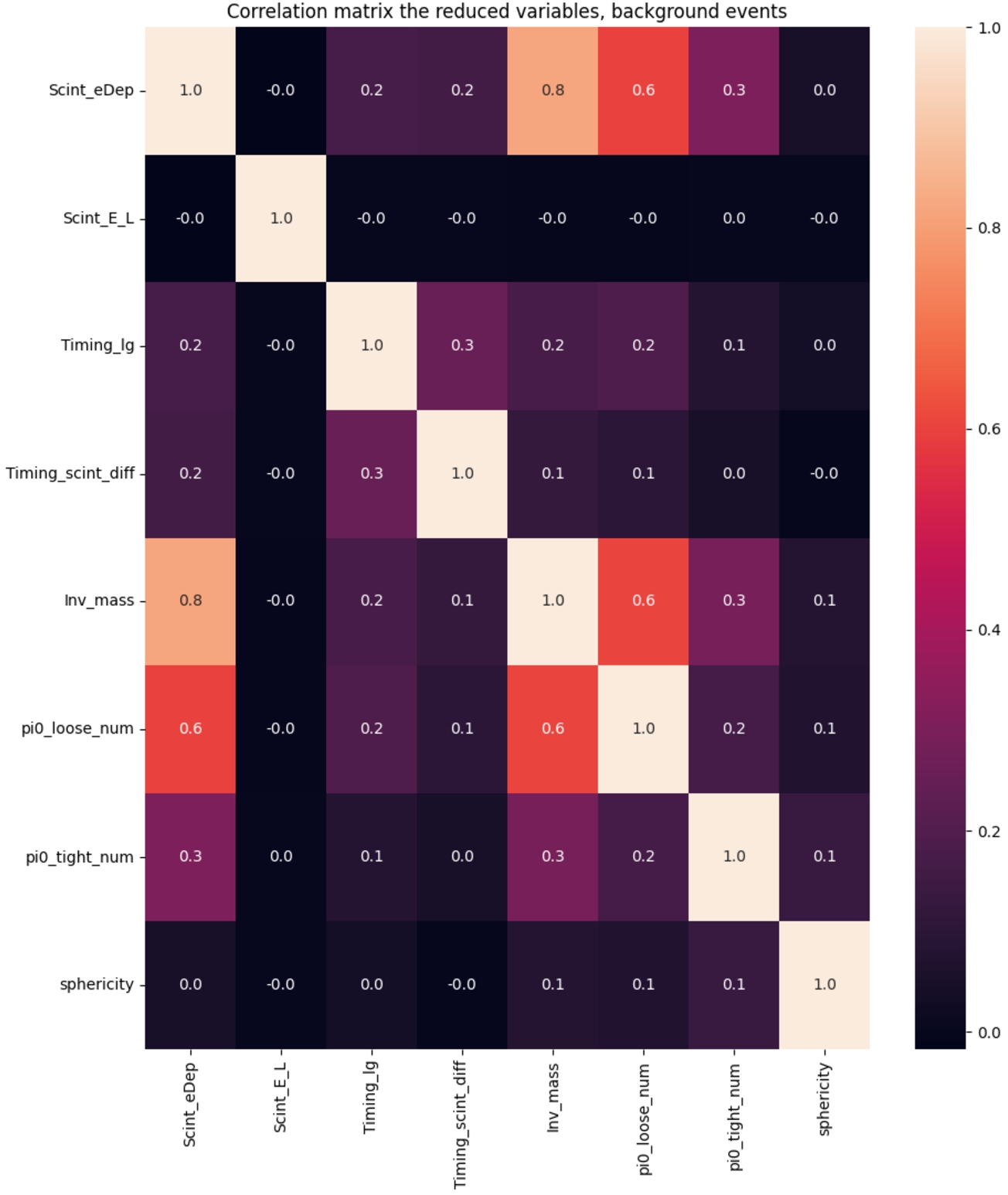

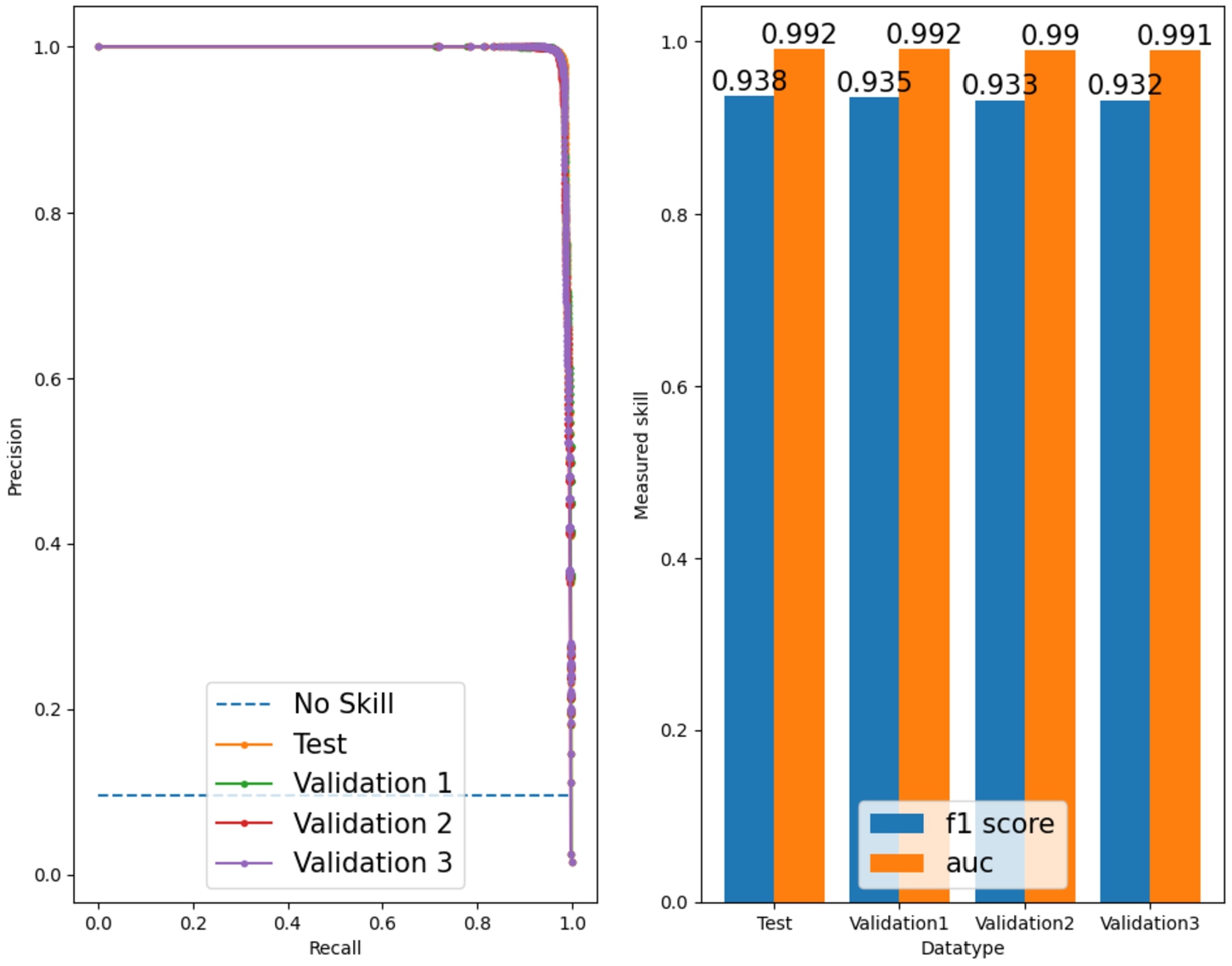

GEANT4 simulations have been used to study the expected energy loss for various thicknesses of scintillators.