Abstract

Mantid and Scipp, data-reduction software used at neutron-scattering facilities around the world, ignore correlations when propagating uncertainties in arithmetic operations. Normalization terms applied during data-reduction frequently have a lower dimensionality than the quantities being normalized. We show how the lower dimensionality introduces correlations, which the software does not take into account in subsequent data-reduction steps such as histogramming, summation, or fitting. As a consequence, any uncertainties in the normalization terms are strongly suppressed and thus effectively ignored. This can lead to erroneous attribution of significance to deviations that are actually pure noise, or to overestimation of significance in final data-reduction results that are used for further data analysis. We analyze this flaw for a number of different cases as they occur in practice. For the two concrete experiments covered by these case studies, the underestimation turns out to be of negligible size. There is, however, no reason to assume that this generalizes to other measurements at the same or at different neutron-scattering beamlines. We describe and implement a potential solution that yields not only corrected error estimates but also the full variance-covariance matrix of the reduced result with minor additional computational cost.

Keywords

Introduction

The number of neutron counts measured in a detector element during a neutron-scattering experiment follows a Poisson distribution. In practice this is typically handled with a Gaussian approximation, setting the standard-deviation

Disclaimer: The authors of the present paper are the principal authors of Scipp and have previously contributed to Mantid for several years.

One caveat in both Mantid and Scipp is that correlations are not taken into account in the propagation of uncertainties. This is generally not considered a problem since the raw data are assumed to be uncorrelated. However, as we will show, ubiquitous operations in neutron-scattering data-reduction introduce such correlations in intermediate computation steps but subsequently fail to take these into account. This leads to a systematic underestimation of uncertainties in final results such as

The normalization term could be, e.g., a neutron monitor spectrum or a reduced vanadium spectrum.

The remainder of this work is organized as follows. We describe the problem in Section 2. In Section 3 we analyze a couple of concrete examples, in particular for small-angle neutron scattering (SANS) and powder diffraction. Furthermore, we derive full expressions for the variance-covariance matrices of the reduced data, which turn out to be simple and computationally tractable. We confirm the correctness of the improved estimates for the uncertainties using a bootstrapping [9] approach. The results are discussed in Section 4 and we draw conclusions in Section 5.

For neutron-scattering data-reduction the most relevant operations that require handling of uncertainties are addition, multiplication, and division. Normalization operations can be implemented as division or as multiplication with the inverse. The Taylor series expansion (truncated after first order) for uncertainty propagation [3] gives
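For reference, the textbook first-order expressions for two operands $a$ and $b$ with covariance $\operatorname{cov}(a, b)$ can be sketched as below. The symbols are chosen for this sketch and need not match the notation used elsewhere in this work. Setting $\operatorname{cov}(a, b) = 0$ recovers the special case of uncorrelated operands.

```latex
\begin{align}
  \operatorname{var}(a + b) &= \operatorname{var}(a) + \operatorname{var}(b)
      + 2 \operatorname{cov}(a, b), \\
  \operatorname{var}(a b) &\approx b^2 \operatorname{var}(a) + a^2 \operatorname{var}(b)
      + 2 a b \operatorname{cov}(a, b), \\
  \operatorname{var}\!\left(\frac{a}{b}\right) &\approx \frac{\operatorname{var}(a)}{b^2}
      + \frac{a^2 \operatorname{var}(b)}{b^4}
      - \frac{2 a \operatorname{cov}(a, b)}{b^3}.
\end{align}
```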

Whether this assumption is always correct is not the topic of this manuscript.

The most straightforward example of the incorrect treatment of correlations is the computation of a histogram of event data after normalization. As described in [19], CS event-mode normalization first histograms the normalization term. Then, for every event of the data to normalize, the corresponding bin in the normalization histogram is identified and the event weight is scaled with the inverse of the value of that bin. We consider a single bin with n events, their weights

Here we chose to compute the same result in two steps using correlations of summands since it aids in highlighting the difference to the CS approach. A direct and likely simpler way to compute this is using

Multiplication

Concretely, for the example of histogramming event data, all neutrons typically have the same initial weight

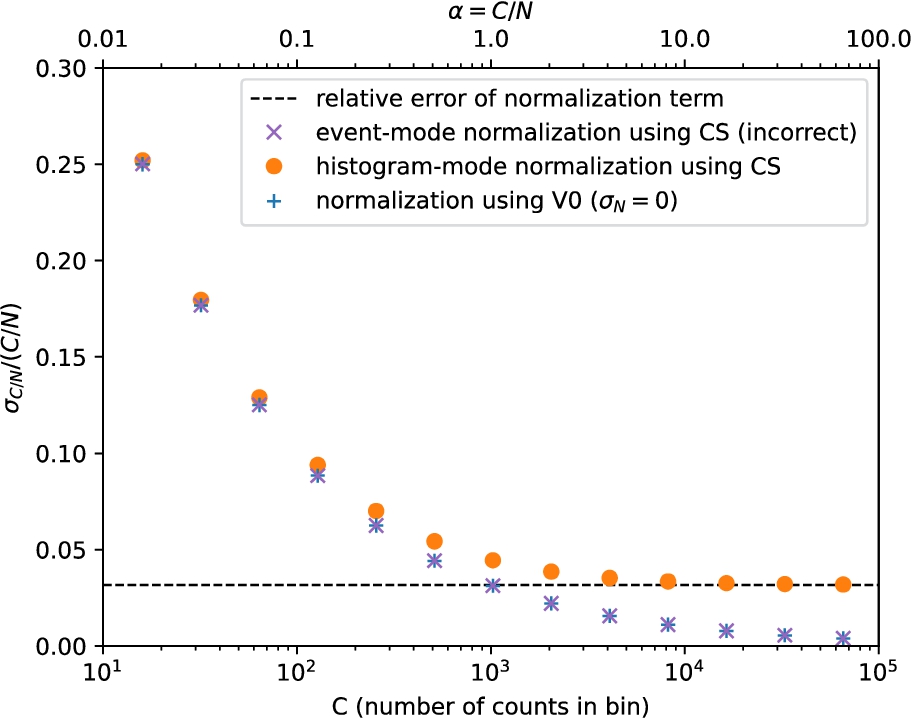

To illustrate this effect, we show in Fig. 1 how the relative uncertainty for a histogram of normalized event data scales with the number of events. Both the CS implementation (×) and the V0 approximation (+) of event-mode normalization lead to a relative error of the histogrammed result that goes to 0 with increasing event count. Importantly, CS and V0 yield near-identical results for all values of C. In contrast, normalization after histogramming (∙) or correct handling of correlations yields a relative error limited by the relative error of the normalization factor.
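The scaling described above can be reproduced with a few lines of NumPy. The following is a minimal sketch under stated assumptions, not the implementation used by Mantid or Scipp: every event has unit weight and unit variance, the normalization term is a single value `b` with variance `var_b`, and "V0" is read as setting the variance of the normalization term to zero. The function name `relative_errors` and all variable names are hypothetical.

```python
import numpy as np

def relative_errors(n_events, b, var_b):
    """Relative standard deviation of sum(w_i) / b for n unit-weight events."""
    n = np.asarray(n_events, dtype=float)
    # Divide event by event, then sum the per-event variances as if the
    # normalized events were independent (no covariance terms).
    per_event_var = 1.0 / b**2 + var_b / b**4
    uncorrelated = np.sqrt(n * per_event_var) / (n / b)
    # Variance of the normalization term dropped entirely.
    no_norm_var = np.sqrt(n / b**2) / (n / b)
    # Histogram first (value n, variance n), then divide once by b.
    after_hist = np.sqrt(n / b**2 + n**2 * var_b / b**4) / (n / b)
    return uncorrelated, no_norm_var, after_hist

n = np.array([1e1, 1e3, 1e5, 1e7])
uncorrelated, no_norm_var, after_hist = relative_errors(n, b=1000.0, var_b=1000.0)
for row in zip(n, uncorrelated, no_norm_var, after_hist):
    print("n=%.0e  uncorrelated=%.5f  no_norm_var=%.5f  after_hist=%.5f" % row)
```

In this toy setting the first two estimates both fall off as one over the square root of the event count, whereas the third saturates at the relative error of `b`.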

Relative error

In summary, the CS approach is thus either wasteful (since it is equivalent to V0 with additional compute overhead), or incorrect. In the next section we analyze more complex cases, namely normalization in SANS and powder diffraction workflows. These involve a transformation of coordinates6

In Mantid such coordinate transformations are referred to as unit conversion.

SANS

In a time-of-flight SANS experiment, the differential scattering cross section

The direct-beam function makes it possible to cross-normalize the incident spectrum to that of the empty beam (without sample) seen on the main detector.

In the SANS case the CS calculation of the normalization term itself (rather than the normalization of the detector counts) in Eq. (17) suffers from the issue described in Section 2. In contrast to the example from Fig. 1, the problem here is not caused by event-mode normalization, but by a different type of broadcast operation. Since the λ-dependent factors in the denominator do not depend on i and j, and the solid angle

Here we assumed quasi-elastic scattering. A correction factor for inelastic-scattering [14] would depend on i, j, and λ.

Note that there are a number of other contributions to the uncertainties with similar issues, which we ignored in this analysis. Our goal here is to provide an example for illustration of the problems in CS, not a complete analysis of the entire SANS data reduction. See the discussion in Section 4 for more background.

To illustrate the issue we study the data-reduction workflow for an experiment on non-ionic surfactants that was carried out at the Sans2d instrument [22] at the ISIS facility at the STFC Rutherford Appleton Laboratory.9

The data has been published in [17], where all the details on the experimental setup can be found.

The monitors from the direct run,

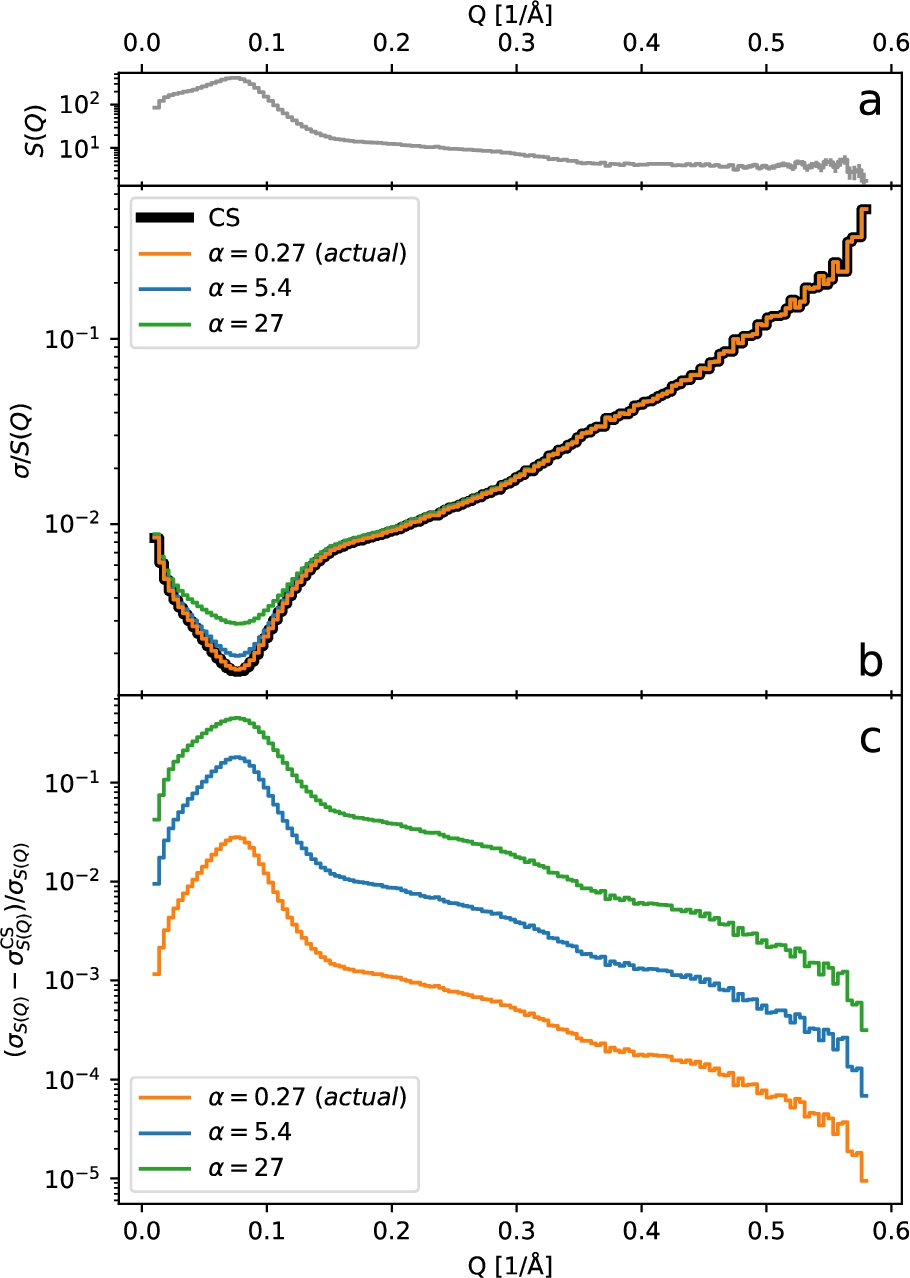

(a) The scattering differential cross-section

Comparison of the relative standard deviation for

As a verification of these results, we independently computed the uncertainties by using a bootstrap [9] method – a sampling technique that can be used to estimate the distribution of a statistic.11

For related methods such as Markov-chain Monte-Carlo uncertainty estimates see, e.g., Ref. [2] and [18].
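A bootstrap estimate for count data can be sketched as follows. This is an illustrative toy, not the code used for the results in this work: it assumes a parametric variant in which every input is redrawn from a Poisson distribution with mean equal to the measured count, and it uses a hypothetical reduction (`reduce`) that divides all pixels by one shared monitor value and sums them. All numbers are made up.

```python
import numpy as np

rng = np.random.default_rng(seed=1234)

# Hypothetical toy data: 100 pixels with 50 counts each, one monitor value of 400.
detector = np.full(100, 50.0)
monitor = 400.0

def reduce(det, mon):
    """Toy reduction: normalize every pixel by the same monitor value, then sum."""
    return np.sum(det / mon, axis=-1)

# Parametric bootstrap: redraw all inputs from Poisson distributions whose means
# are the measured counts, and repeat the full reduction for every replica.
n_replicas = 20_000
det_samples = rng.poisson(detector, size=(n_replicas, detector.size))
mon_samples = rng.poisson(monitor, size=n_replicas)
replicas = reduce(det_samples, mon_samples[:, None])
rel_bootstrap = replicas.std(ddof=1) / replicas.mean()

# First-order analytic estimates for comparison (Poisson: variance == counts).
total = detector.sum()
rel_correlated = np.sqrt(1.0 / total + 1.0 / monitor)
rel_uncorrelated = np.sqrt(1.0 / total + 1.0 / (monitor * detector.size))

print(rel_bootstrap, rel_correlated, rel_uncorrelated)
```

Because the whole reduction is repeated per replica, the bootstrap picks up the correlation introduced by the shared monitor value without any explicit covariance bookkeeping. In this toy it should therefore land near `rel_correlated` rather than `rel_uncorrelated`.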

Including background subtraction in our bootstrap calculations, i.e., computing

The correct (first order) expression for the uncertainties for this reduction workflow can be derived in a straightforward manner. We adopt a simpler and more generic notation equivalent to Eq. (17) as follows. We define

We use bins of constant width to simplify the notation, but the following results are equally valid for other choices of bin widths.

Note that in software histogramming is not actually implemented via a matrix multiplication as this would be highly inefficient.
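The equivalence between direct histogramming and the matrix formulation can be checked on a small example. The sketch below uses hypothetical random event data and an explicit binning matrix `H`; it is meant only to illustrate the notation, since a dense `H` scales with the number of bins times the number of events.

```python
import numpy as np

rng = np.random.default_rng(0)
n_events, n_bins = 1000, 5
x = rng.uniform(0.0, 1.0, n_events)      # event coordinates
w = rng.uniform(0.5, 1.5, n_events)      # event weights
var_w = np.ones(n_events)                # hypothetical per-event variances
edges = np.linspace(0.0, 1.0, n_bins + 1)

# Direct histogramming: work and memory proportional to the number of events.
hist, _ = np.histogram(x, bins=edges, weights=w)

# Same result via an explicit (n_bins x n_events) binning matrix H.
bin_index = np.clip(np.digitize(x, edges) - 1, 0, n_bins - 1)
H = np.zeros((n_bins, n_events))
H[bin_index, np.arange(n_events)] = 1.0
hist_matrix = H @ w

# The matrix form makes linear propagation explicit: cov_out = H diag(var_w) H^T.
# For independent events the result is diagonal.
cov_hist = (H * var_w) @ H.T
```

The matrix picture is convenient for derivations because any linear step propagates a covariance matrix as `J @ cov @ J.T`. An implementation would evaluate the resulting sums with ordinary histogramming instead.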

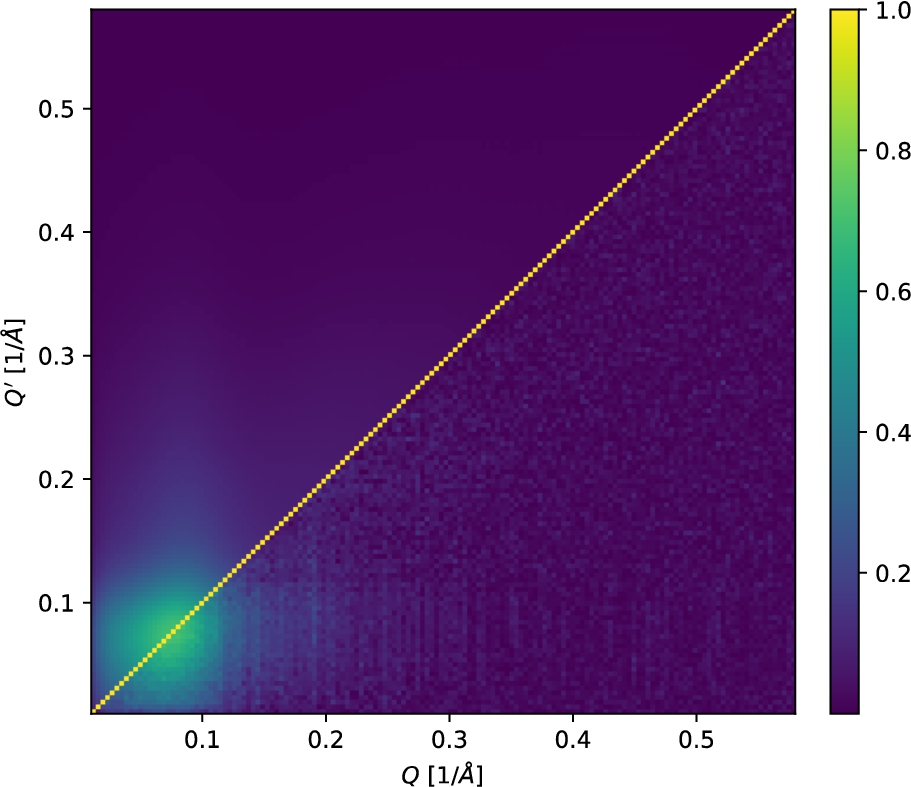

The diagonal component of this result is included in Fig. 2. In addition, we show the resulting Pearson correlation matrix

For example,

scipy.optimize.curve_fit

accepts the covariance matrix as input via the
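To illustrate why a full covariance matrix matters for a subsequent fit, the following minimal sketch implements generalized least squares for a linear model in plain NumPy. It is not tied to any particular fitting package. The data are hypothetical: a straight line with independent noise plus a fully correlated scale error that mimics a shared normalization term. The helper `gls_fit` is an assumption of this sketch.

```python
import numpy as np

def gls_fit(design, y, cov):
    """Generalized least squares: minimize (y - X p)^T C^{-1} (y - X p)."""
    cov_inv_design = np.linalg.solve(cov, design)   # C^{-1} X
    cov_inv_y = np.linalg.solve(cov, y)             # C^{-1} y
    normal = design.T @ cov_inv_design              # X^T C^{-1} X
    params = np.linalg.solve(normal, design.T @ cov_inv_y)
    param_cov = np.linalg.inv(normal)               # covariance of the parameters
    return params, param_cov

# Hypothetical data: y = 2 + 3 x, independent variance 0.01 per point, and a
# 5 % scale uncertainty shared by all points.
x = np.linspace(0.0, 1.0, 20)
y = 2.0 + 3.0 * x
scale_rel = 0.05
cov = np.diag(np.full(x.size, 0.01)) + scale_rel**2 * np.outer(y, y)
design = np.column_stack([np.ones_like(x), x])

p_full, pcov_full = gls_fit(design, y, cov)
p_diag, pcov_diag = gls_fit(design, y, np.diag(np.diag(cov)))

print("intercept variance, full covariance:", pcov_full[0, 0])
print("intercept variance, diagonal only:  ", pcov_diag[0, 0])
```

Both fits return the same parameters for these noise-free values. The parameter uncertainties differ, however: keeping only the diagonal treats the shared scale error as if it averaged out over the points.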

Correlation matrices for the time-of-flight SANS-reduction workflow using

We consider a simplified model of a time-of-flight powder-diffraction reduction workflow based on the workflow used at ISIS [15]. Our model workflow consists of the following steps: (1) compute wavelength coordinates for detector data and monitor data, (2) compute wavelength histograms, (3) normalize the detector data by the monitor, (4) compute d-spacing coordinate for detector data, and (5) compute d-spacing histogram, combining data from all (or a user-defined subset of) detector pixels. This yields the normalized d-spacing spectrum

The sample is NaCaAlF measured in Wish run number 52889 and the vanadium was measured in run number 52920.

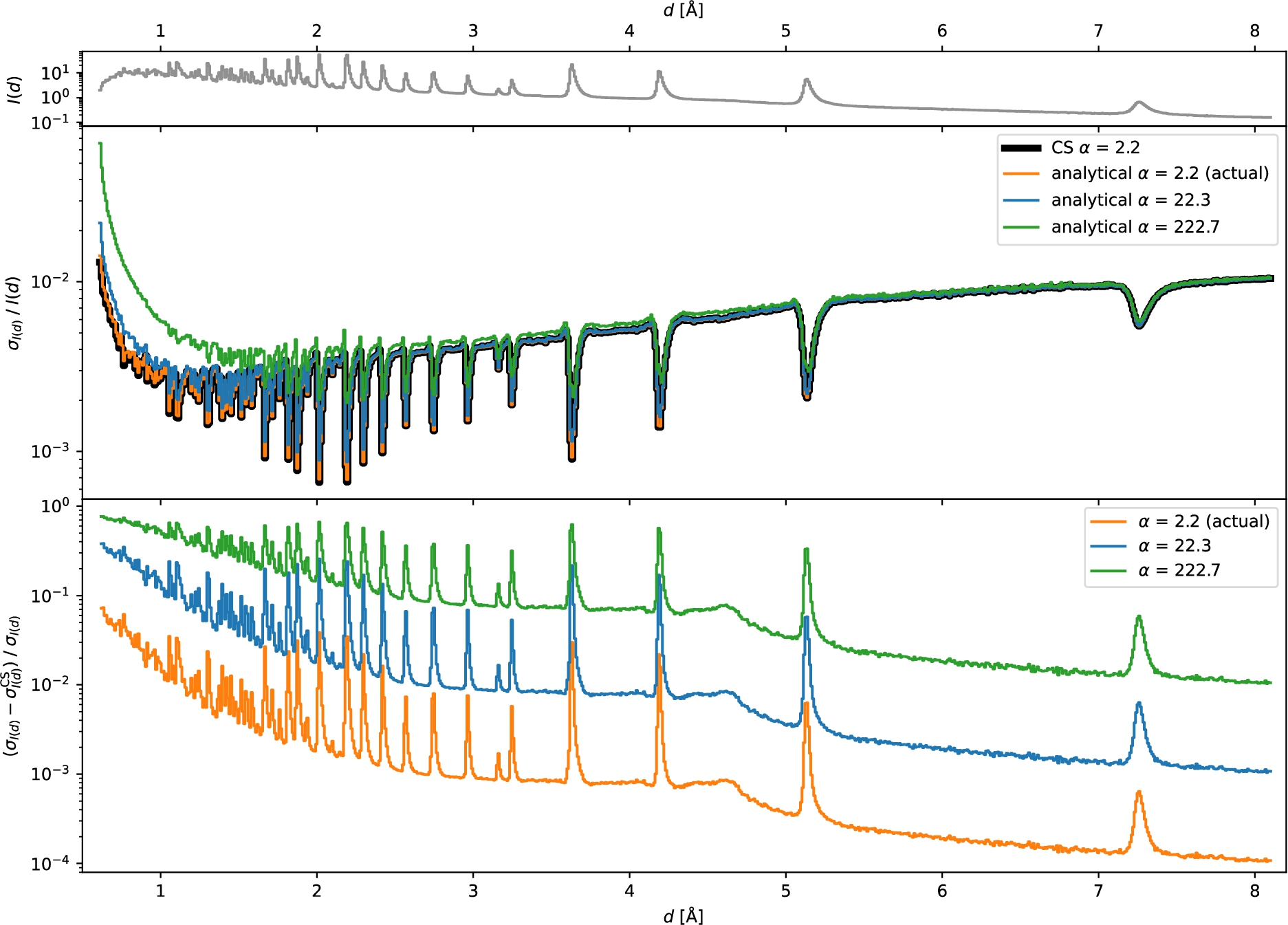

In Fig. 5 we compare the uncertainties computed by CS and by an improved analytical approach which will be described below. Source code for reproducing these results can be found in the supplementary material [13]. As described in Section 3.1, we study the influence of the ratio of total detector to monitor counts, α (defined equivalently to Eq. (19)), influenced, e.g., by the sample volume. For

Top:

Comparison of the relative standard deviation for

For the simplified model workflow we can derive the correct (first order) expression for the uncertainties in a straightforward manner. This also provides the covariances or correlations between different d-spacing values. We adopt the following notation. Let

We use bins of constant width for simplifying the notation, but the following results are also valid for other choices of bin widths.

For histogrammed data the expression may need to be adapted depending on the rebinning approach that is being used.
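The structure of such a variance-covariance matrix can be illustrated with a toy version of the model workflow. The sketch below is an assumption-laden illustration rather than the expression derived in this work. It uses made-up Poisson counts and geometry, takes the variance of each raw count to be the count itself, assigns each (pixel, wavelength-bin) element wholly to one d-spacing bin, and propagates to first order. The names `a`, `m`, `A`, and `intensity` are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
n_pix, n_lam, n_d = 6, 8, 5

# Hypothetical inputs: detector counts a[i, l] and monitor counts m[l].
a = rng.poisson(20.0, size=(n_pix, n_lam)).astype(float)
m = rng.poisson(500.0, size=n_lam).astype(float)
var_m = m                                    # Poisson: variance == counts

# Toy geometry: d = lambda / (2 sin(theta_i)); each (i, l) falls into one d bin k.
lam = np.linspace(1.0, 4.0, n_lam)
theta = np.linspace(0.4, 1.2, n_pix)
d = lam[None, :] / (2.0 * np.sin(theta)[:, None])
edges = np.linspace(d.min(), d.max(), n_d + 1)
k = np.clip(np.digitize(d, edges) - 1, 0, n_d - 1)
l_idx = np.broadcast_to(np.arange(n_lam), k.shape)

# A[k, l]: detector counts from wavelength bin l that end up in d bin k.
A = np.zeros((n_d, n_lam))
np.add.at(A, (k, l_idx), a)
intensity = A @ (1.0 / m)                    # normalized d-spacing spectrum

# First-order covariance: a diagonal detector part plus a monitor part that
# couples all d bins sharing a wavelength bin.
cov = np.diag(A @ (1.0 / m**2)) + (A * (var_m / m**4)) @ A.T

# Element-by-element propagation without covariances, for comparison.
A_sq = np.zeros((n_d, n_lam))
np.add.at(A_sq, (k, l_idx), a**2)
var_naive = A @ (1.0 / m**2) + A_sq @ (var_m / m**4)

print(np.sqrt(np.diag(cov)) / np.sqrt(var_naive))
```

The monitor contribution enters through squared sums of counts in `cov` but through sums of squared counts in `var_naive`, so in this toy the element-wise estimate can never exceed the diagonal of `cov`. Only the detector counts summed per d-spacing and wavelength bin are needed, not per-element variances.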

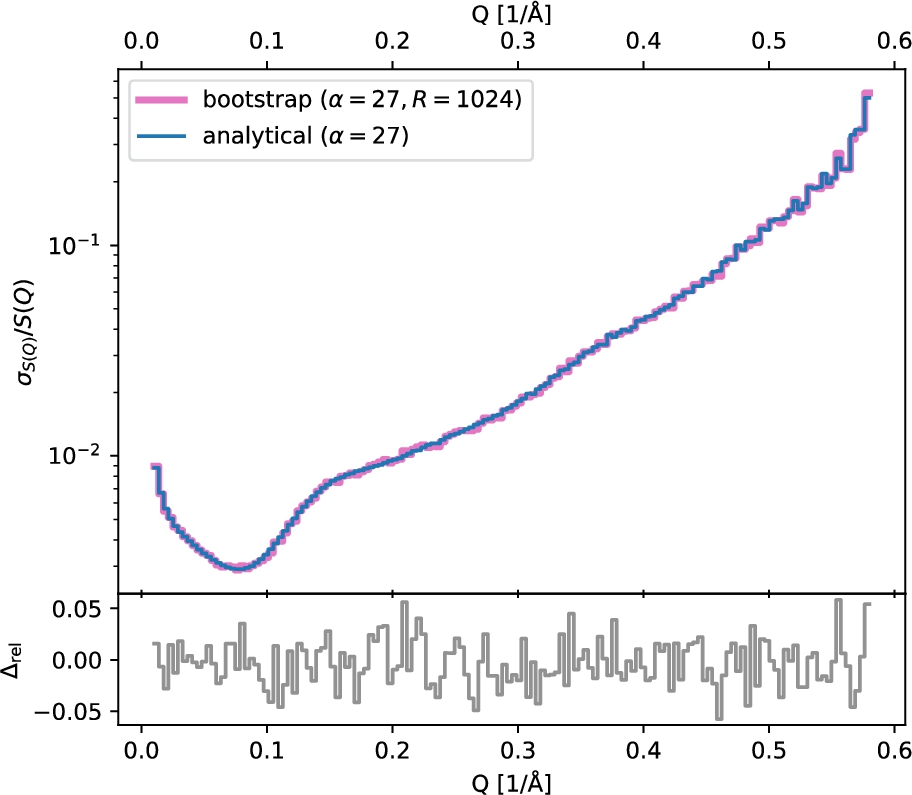

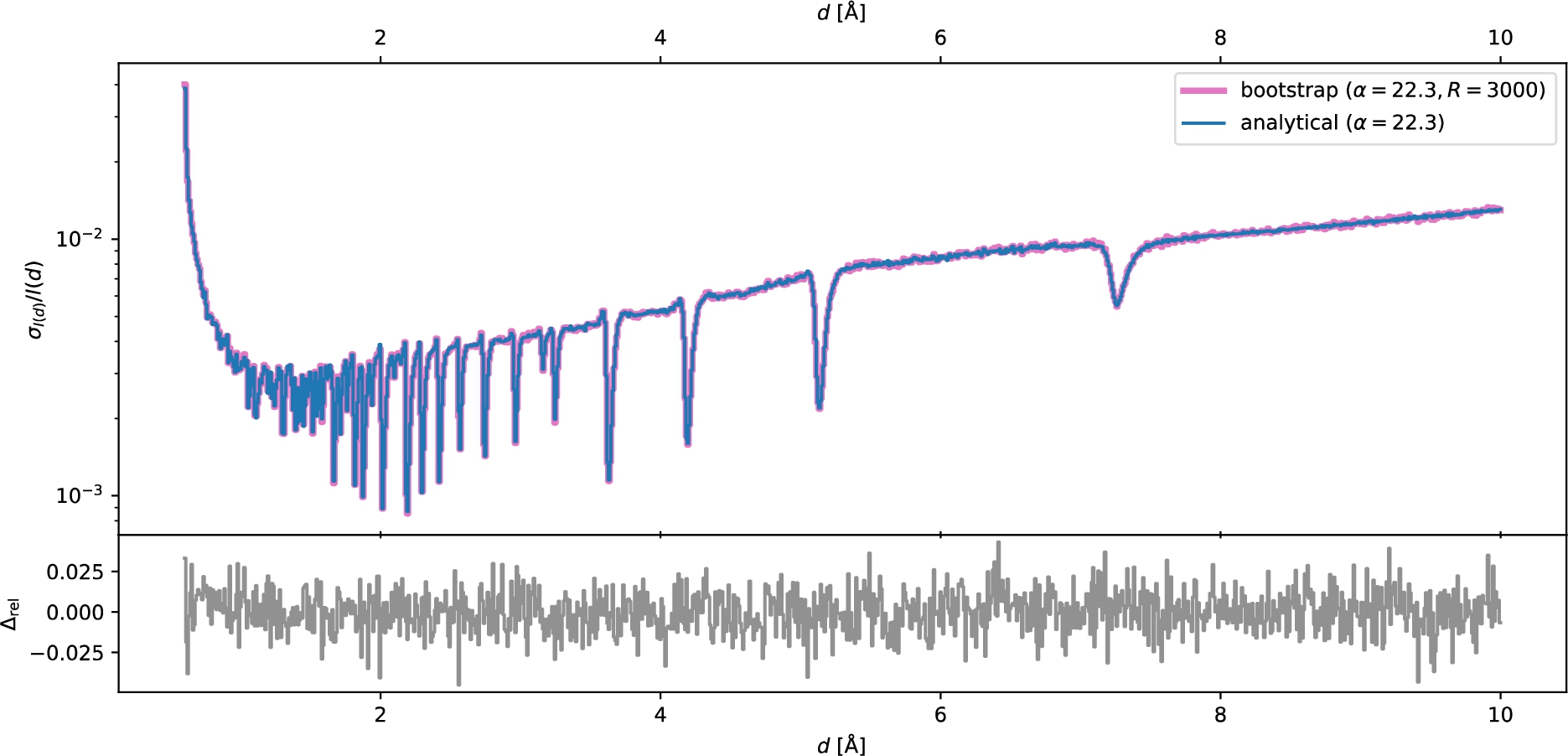

In Fig. 6 we compare the relative errors computed using the analytical approach and bootstrap (see Appendix A, in particular Fig. 9 which shows the same result for

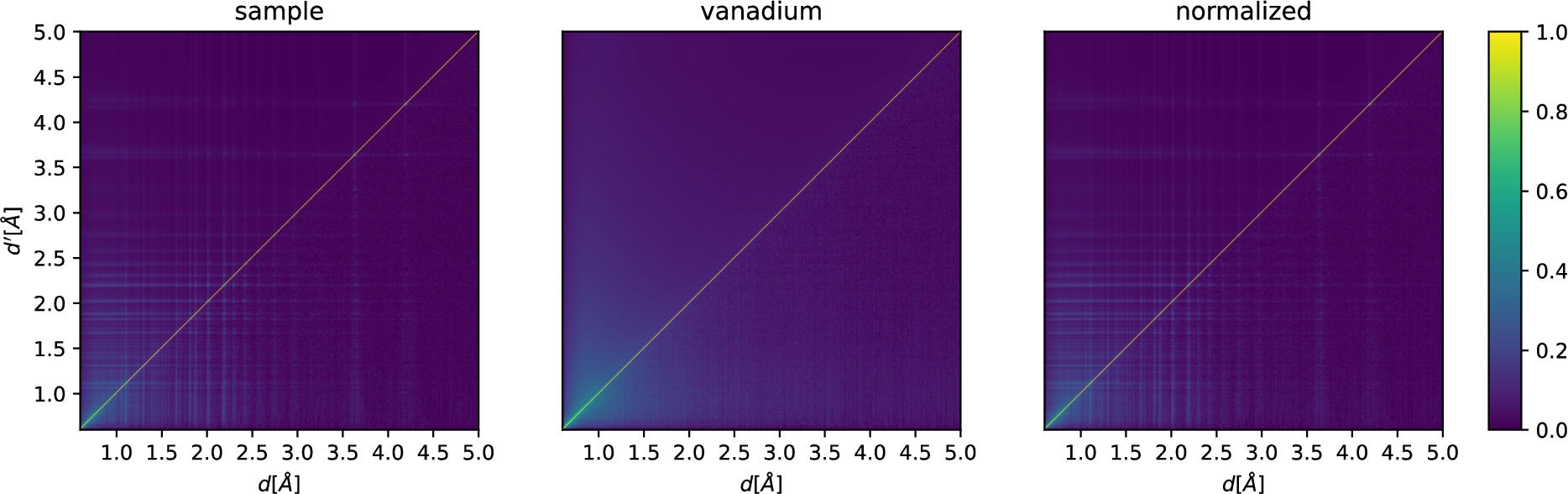

Correlation matrices for a powder-reduction workflow. We show the result for the sample S (left,

For scattering off a vanadium sample, we define

The effect of smoothing

Smoothing is routinely used to reduce the effect of noise in normalization terms such as a neutron monitor spectrum or a reduced vanadium spectrum. This reduces the size of the variances of the normalization term,19

Note that, e.g., Mantid’s
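How a linear smoothing step trades variance for correlation can be seen in a small sketch. It assumes a plain moving average written as a matrix `S`, which is chosen here for simplicity and is not necessarily the smoothing applied in practice, and it uses hypothetical, uncorrelated input variances.

```python
import numpy as np

n_bins, width = 50, 5
var = np.full(n_bins, 100.0)     # hypothetical variances of the unsmoothed term

# Moving average as a matrix: row i averages the bins in a window around i.
S = np.zeros((n_bins, n_bins))
for i in range(n_bins):
    lo = max(0, i - width // 2)
    hi = min(n_bins, i + width // 2 + 1)
    S[i, lo:hi] = 1.0 / (hi - lo)

# Linear propagation of the (diagonal) input covariance through the smoothing.
cov_smooth = (S * var) @ S.T
sigma = np.sqrt(np.diag(cov_smooth))
corr = cov_smooth / np.outer(sigma, sigma)

print("variance before/after:", var[25], cov_smooth[25, 25])
print("correlation with neighbours:", corr[25, 26:31])
```

In the interior the variance drops by the window width (here from 100 to 20), while bins whose windows overlap become correlated, with neighbouring bins at a coefficient of 0.8. A propagation scheme that keeps only the diagonal sees the reduced variances but not these correlations.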

Current software: Scope and impact of incorrect uncertainty handling

We have described and demonstrated a systematic problem with the treatment of the statistical uncertainties of normalization terms in Mantid and Scipp. This constitutes the most important result of this work.

Affected software includes Mantid (all versions at the time of writing, at least up to Mantid v6.5) and Scipp (all versions up to Scipp v22.11, inclusively) but the issue may also be present in other similar data-reduction software. Mantid is used at the ISIS Neutron and Muon Source at the STFC Rutherford Appleton Laboratory (

To our knowledge little or no impact of the issue described in this manuscript has been reported in practice. There are at least two likely explanations. Firstly, variations of Eq. (13) may hold in many cases. That is, in practice the normalization terms often have very good statistics – as we have seen for the concrete SANS and powder diffraction examples in Section 3.1 and Section 3.2, respectively. This can be both due to practicality (it is relatively simple to measure many vanadium counts, or have a monitor with a high count rate) and due to experience (instrument scientists know how long to measure normalization terms for good results). Secondly, coordinate transformations, as well as preprocessing steps of the normalization terms such as smoothing, lead to correlations that spread over a wider range. These correlations might affect mostly non-local aspects, which could be harder to discern when, e.g., fitting a physical model to the reduced result.20

We do not have enough background knowledge about concrete subsequent data analysis methods to tell when and where such non-local properties might be irrelevant.

In our opinion, however, the above cannot justify dismissing the issue. The current state-of-the-art software gives a false sense of security (of having handled uncertainties correctly) to the scientist. While we managed to compute corrected analytical results for variances and covariances in the model workflows used in this manuscript, these cannot be directly substituted for the operation-by-operation error-propagation mechanism in CS implementations. At the very least our software must thus avoid giving a false sense of security and should therefore reject normalization terms that have uncertainties. This will force the scientist to consider whether the normalization uncertainties fulfill Eq. (13). Based on this they can then either (A) confidently drop this term or (B) increase the statistics by measuring the normalization terms better.

A complete analysis for any particular technique such as SANS or powder-diffraction is beyond the scope of this manuscript. The workflows discussed in Section 3 are therefore not necessarily capturing all potential sources of uncertainties, but are meant to illustrate the key aspects. To give an idea of what a more complete analysis might involve, consider the SANS case. Our analysis in Section 3.1 covered the effects of the variances of the monitors. There are however a number of other contributions: (1) Before computation of wavelengths and Q, the beam center is determined. The uncertainty on the beam center leads to a correlation of all computed wavelength and Q values. (2) For the computation of the transmission fraction, each monitor is background-subtracted: The monitor background is computed as the mean over a user-defined wavelength range and then subtracted from the monitor. This broadcasts the mean value to all monitor bins, leading to correlations. (3) The direct beam function D itself comes with uncertainties which were computed using CS and may thus be underestimated in themselves.

Intriguingly, the SANS data reduction implemented in Scipp prior to this work (and used for this analysis) actually ignored the uncertainties of D. This provides a worrisome glimpse of the potentially cascading negative effects of the CS implementation: An interpolation is required to map D to the wavelength-binning of the monitors, and it was unclear how uncertainties should be handled during this interpolation. During development of the workflow it was determined empirically that setting D’s uncertainties to zero had no influence on the uncertainties of the final result. Looking back, we can now tell that this empirical evidence was based on the incorrect handling of uncertainties – since the broadcast of the pixel-independent D to all pixels suppresses its contribution to the final uncertainties. Thus the decision to ignore them needs to be revisited. Preliminary results indicate that correct handling of the uncertainties of D may noticeably impact the final uncertainties.

Aside from highlighting the systematic problem, we have succeeded in Section 3 in deriving expressions for variance-covariance matrices of the data-reduction results. As we will show in the discussion in Section 4.3, there is likely no major difference in terms of computational cost compared to the flawed approach currently implemented in Mantid and Scipp, even though it provides access to not just the variances but also the covariances of the final result. We conjecture that our approach may be feasible also for complete and realistic data-reduction workflows. We plan to investigate this in more detail in future work, in particular to extend this to other neutron-scattering techniques and to include corrections such as absorption corrections and background subtractions.

A fundamental difference – and possibly the main challenge – is that unlike in the CS implementation, handling and computation of variances is difficult to automate. While Python packages such as JAX [4] and Uncertainties [16] implement automatic differentiation approaches, it is unlikely that this is directly applicable for the components of a neutron-scattering data-reduction workflow.21

Even if automatic differentiation could handle operations such as histogramming, the Uncertainties Python package stores arrays with uncertainties as arrays of values with uncertainties. The very high memory and compute overhead makes this unsuitable for our needs.

On the positive side, our new approach can produce data-reduction results including covariances. This may be beneficial for subsequent analysis and model fitting procedures. Furthermore, Scipp has proven flexible enough to allow for computation of the expressions for the variance-covariance matrix with very concise code and adequate performance. This further increases our confidence that the described approach may be a viable solution for handling data from day-to-day instrument operations.

In Sections 3.1 and 3.2 we described an improved analytical approach for computing uncertainties and correlations for the result of the data-reduction. The computation of the variance-covariance matrices turns out to be not significantly more expensive than the computation of the cross sections. Despite yielding the full correlation matrix the overall cost is thus comparable to that of the CS implementation. This holds true even for event-mode data-reduction. To understand this, one important aspect to realize is that – in contrast to CS – it is no longer necessary to carry the uncertainties of the detector counts in intermediate steps, since for the raw input data the variances are equal to the measured counts. A full analysis of the performance characteristics is beyond the scope of this paper. We briefly touch on the main aspects and proceed to report concrete timings for an example below.

Firstly, considering the normalization operation we observe the following. In addition to the normalized counts,

Timings measured for NumPy [10] backed by a multi-threaded linear-algebra library.

We illustrate this on the example of the Wish powder-diffraction data-reduction. The input data has a file size of

Timings for the relevant part of the Wish histogram-mode data-reduction.

In this paper we have shown a systematic problem with the treatment of the statistical uncertainties of normalization terms in Mantid and Scipp. We have shared our findings with the Mantid development team. In the Scipp project, with the release of Scipp v23.01, we have disabled propagation of uncertainties if one of the operands is broadcast in an operation. Support for event-mode normalization operations with normalization terms that have variances is also disabled. In both cases an exception is raised instead, i.e., the operation will fail, forcing the user to address the problem correctly. We had initially considered simply displaying a warning to the user when an operation introduces correlations. However, experience shows that warnings are almost always ignored and we no longer consider such an approach a solution.
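The idea of failing instead of silently propagating can be sketched in plain NumPy. The following is a hypothetical illustration of such a guard, not Scipp's implementation or API: the function `divide` and the exception type `BroadcastVariancesError` are invented for this example, and values and variances are passed as separate arrays.

```python
import numpy as np

class BroadcastVariancesError(ValueError):
    """Hypothetical error: an operand carrying variances would be broadcast."""

def divide(a, var_a, b, var_b=None):
    """Return (a / b, variance), refusing to broadcast operands with variances."""
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    out_shape = np.broadcast(a, b).shape
    for name, values, variances in (("a", a, var_a), ("b", b, var_b)):
        if variances is not None and values.shape != out_shape:
            raise BroadcastVariancesError(
                f"operand '{name}' has variances and shape {values.shape} but would "
                f"be broadcast to {out_shape}; the result would be correlated"
            )
    variance = np.zeros(out_shape)
    if var_a is not None:
        variance = variance + np.asarray(var_a, dtype=float) / b**2
    if var_b is not None:
        variance = variance + a**2 * np.asarray(var_b, dtype=float) / b**4
    return a / b, variance

counts = np.array([1.0, 2.0, 3.0])
# Allowed: the scalar normalization term carries no variances.
value, variance = divide(counts, counts, 2.0)
# Rejected: the scalar normalization term has a variance and would be broadcast.
try:
    divide(counts, counts, 2.0, var_b=0.1)
except BroadcastVariancesError as err:
    print("rejected:", err)
```

Raising an exception forces the caller to decide explicitly whether to drop the variances of the normalization term or to handle the correlations by other means.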

Aside from highlighting the systematic problem, we have succeeded in deriving expressions for variance-covariance matrices of the data-reduction results. Computationally the new approach would be feasible, but whether it is applicable and useful in practice remains to be investigated.


Acknowledgements

We kindly thank the authors of Ref. [17] for allowing us to use their data for the SANS example. We thank Pascal Manuel for sharing Wish data, used in our powder-diffraction example. We thank Evan Berkowitz and Thomas Luu for valuable discussions about bootstrap and correlations. We thank our coworkers Celine Durniak, Torben Nielsen, and Wojciech Potrzebowski for helpful discussions and comments that helped to improve the clarity of the manuscript.


Bootstrap

This section gives a brief overview of bootstrapping as used in this work. For a more complete explanation we refer to Ref. [9]. Given a measured distribution of random variables

In this work,

We assume all

This assumption also underlies the regular propagation of uncertainties.

As an example, Figs 8 and 9 show the relative standard deviation of

Figures for correlation matrices

For completeness, we show in this section the figures of the correlation matrices from the SANS and powder diffraction workflows using a logarithmic color scale.