Abstract

Information amount has been shown to be one of the most efficient methods for measuring uncertainty. However, there has been little research on outlier detection using information amount. To fill this void, this paper provides a new unsupervised outlier detection method based on the amount of information. First, the information amount in a given information system is determined, which offers a thorough estimate of the uncertainty of this information system. Then, the relative information amount and the relative cardinality are proposed. Following that, the degree of outlierness and weight function are shown. Furthermore, the information amount-based outlier factor is constructed, which determines whether an object is an outlier by its rank. Finally, a new unsupervised outlier detection method called the information amount-based outlier factor (IAOF) is developed. To validate the effectiveness and advantages of IAOF, it is compared to five existing outlier identification methods. The experimental results on real-world data sets show that this method is capable of addressing the problem of outlier detection in categorical information systems.

Introduction

Background and related work

Data mining aims to select the best information from massive amounts of data. Most information conforms to certain rules, but there are also small quantities of information that runs counter to the rules for various reasons, and these are called outliers.Until now, there has not been a uniform and rigorous definition of outliers, and the description commonly cited by scholars was proposed by Hawkins in the 1980s, namely, “outliers are different data points in a data set”. We can understand that outliers are significantly different from the rest of the data set. Outliers are also commonly referred to as outliers, blemishes, outliers, inconsistencies, deviations, and novelties [13]. Edgeworth was the first to perform outlier detection by analyzing and mining outlier points using mathematical statistics. Since then, many scholars have applied new theories and technologies to outlier detection and optimized and improved them constantly, making outlier detection an important branch of data mining on a par with predictive modeling, cluster analysis, and association analysis and playing a pivotal role in many fields such as public health and medical treatment [25], loan approval [31], weather forecasting [4], education management [26], and power operation [19, 38]. The name, definition, and criterion of outliers are different due to different application fields or purposes.

There are various ways to categorize outlier detection methods. Outlier detection methods can be classified into three categories according to whether labels are involved in the process of recognition: supervised, supervised nested, and unsupervised supervised. It can be divided into four categories based on different method principles as well: probability distribution-based methods, classification-based methods, clustering-based methods, and proximity-based methods.

Probability distribution-based methods utilize a standard distribution to fit the data set and identify outliers based on the probability distribution. For example, Yamanishi et al. [33] used a Gaussian mixture model to represent normal behavior and scored each case according to the variation of the model, and a high score indicates a high probability of being an outlier. Later, this research result was used in conjunction with a supervised learning method to obtain another probability distribution outlier detection model.Shin [30] employed Markov chains to probabilistically model abnormal events in networked systems.While classification-based methods do not need to know the sample distribution and do not need to label the sample, They have strong learning inference ability, good generalization, and are theoretically more suitable for outlier detection of high-dimensional data. Such as neural network-based methods, Bayesian network-based methods, support vector machine-based methods, and rule-based methods.For clustering-based methods such as DBSCAN [11] and DPC [27], no strict rules about data types, no priori knowledge, and class labels are needed. They mainly focus on finding clusters and regard objects far from the cluster centers as outliers.Proximity-based methods have similar advantages to clustering-based methods and identify outliers mainly based on the distance or density of objects. The method commonly used for local proximity is the LOF method [3], and then a series of methods such as LDOF and LoOP have been proposed based on LOF [6, 18].

With the rapid development and gradual improvement of methods related to rough set theory (RST), these methods are widely used in the fields of knowledge acquisition, machine learning, pattern recognition, attribute approximation, and decision analysis. RST has also been successfully used for outlier detection.Shaari [29] used the new concept of nonapproximation to detect outlier patterns based on RST.Jiang et al. [16] proposed the IEOF method for a categorical information system based on information entropy, one of the metric tools of uncertainty.Chen et al. [5] introduced a neighborhood model to combine the coarse-grained technique with an outlier detection technique. Sangeetha et al. [28] considered the weighted density values of attributes and objects in outlier detection for an intuitionistic fuzzy information system. Domingueset et al. [8] gave a comparative evaluation of outlier detection methods.Yuan et al. [34] proposed the NIEOD method by building a neighborhood information system using heterogeneous distances and adaptive radius and then realizing the information metrics by using the neighborhood information entropy. Degirmenci et al. [10] put forward efficient density and cluster-based incremental outlier detection in data streams. Yuan et al. [35–37] proposed methods based on fuzzy information entropy, fuzzy roughness granularity, and weighted fuzzy roughness density, respectively. Jin et al. [15] introduced intrusion detection on the internet of vehicles via combining log-ratio oversampling, outlier detection, and metric learning. Kandanaarachchi et al. [17] brought up unsupervised anomaly detection ensembles using item response theory. Meira et al. [22] came up with fast anomaly detection with locality-sensitive hashing and hyperparameter autotuning. Wang et al. [32] provided outlier detection based on a weighted neighborhood information network for mixed-valued data sets.

Motivation and inspiration

The existing detection methods apply to numerical data or mixed data, and only a few methods can deal with data using categorical attributes, but categorical attributes account for the majority of data in real life and applications. In addition, data processing methods for casting numerical data into categorical data tend to be more complex and sometimes lead to suboptimal results, while categorical data can be converted into numerical data more easily. Therefore, outlier detection for categorical data is still a topic that needs to be continually explored and developed.

The information amount is a basic metric for the uncertainty of an information system. However, the study for outlier detection based on information amount has not yet been reported.

Based on the research motivations stated above, we propose a new unsupervised method for spotting outliers that takes advantage of the information amount in rough set theory. Our method’s main idea is as follows. If we have an information system and a set of indiscernibility relations on domain

The main contributions are summarized as follows:

(1) The information amount and relative information amount for categorical data are proposed, which provide a comprehensive measure for the uncertainty of categorical data. The degree of outlierness and weight function are presented to find outlier factors.

(2) An outlier detection method based on information amount is presented. Instead of labeling data and determining the neighborhood radius to identify outlier samples, this method measures the difference in the information amount after abandoning a certain object. Compared with neighborhood-based and clustering-based methods, it does not need to consider finding out the optimal parameter values, nor does it need to calculate the distance between two objects.

(3) The information amount-based outlier factor method is given. The experimental results show that the proposed method has better validity and adaptability for categorical data.

Organization

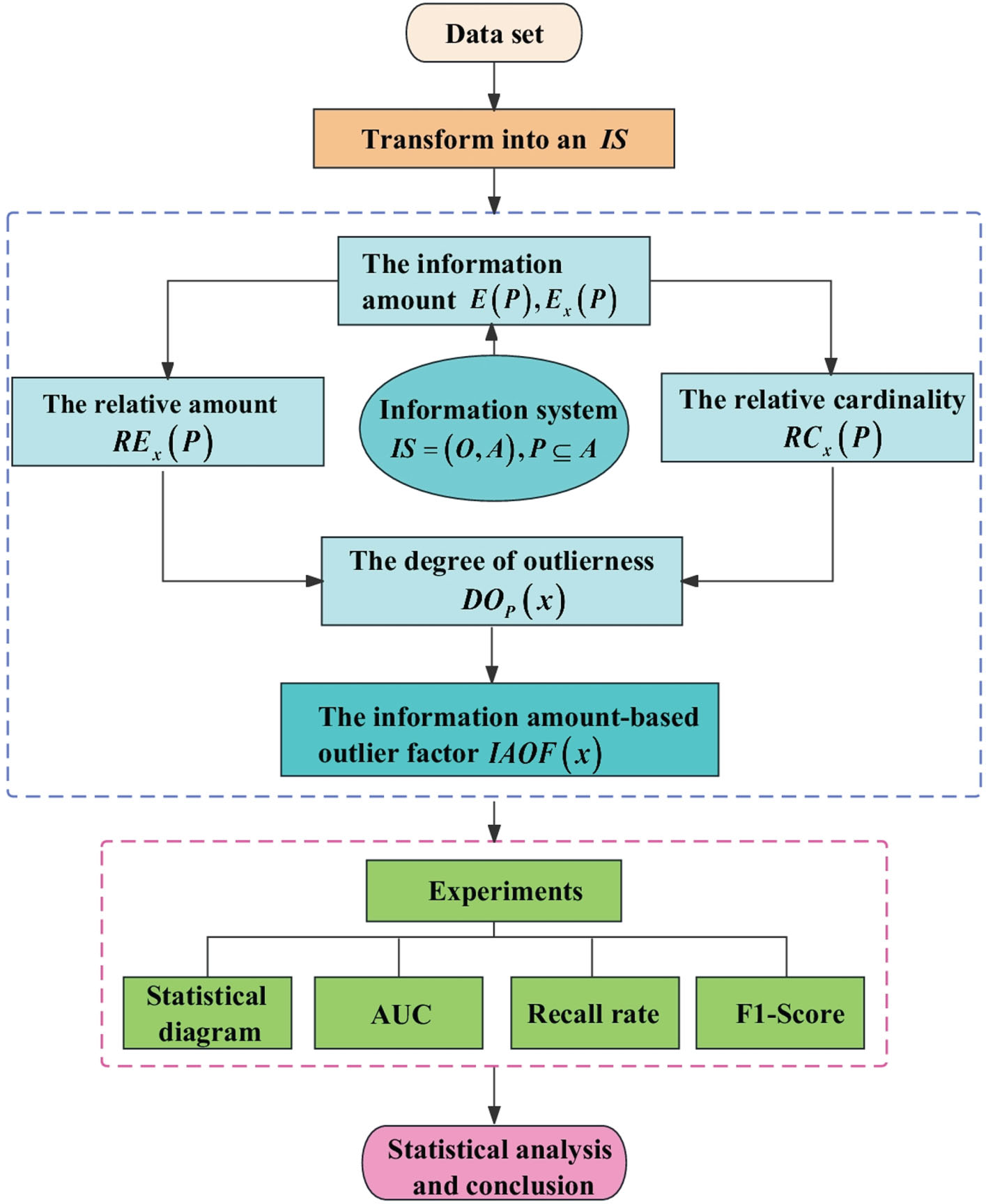

The remainder of this essay is structured as follows: Section 2 briefly reviews information systems and information amount. Section 3 introduces the necessary definition and concept of the outlier detection method based on information amount, and Section 4 describes the particular method of the proposed detection method and the spatial and temporal complexity of the method. The experimental findings are presented in Section 5, along with a discussion of the findings following statistical analysis. Section 6 of this work contains its conclusion. Fig. 1 depicts the research framework of this paper.

The research framework of this paper.

The section recalls information systems and the concept of information amount. In this paper,

If the attribute in (

In a given CIS (

Obviously,

Then [

Suppose that

This implies that ∀

Thus ∀

Hence

In this section, we present a formally and strictly defined method for detecting outliers in a CIS based on information amount.

Let (

Naturally, from Proposition 2.4, the information amount of

It’s easy to derive 0 ≤

Especially,

In particular,

From the form of the formula, relative cardinality denotes the difference between the cardinality of [

Suppose that (

Denote

Denote

Then

In this article, the set of all μ-outliers in a CIS is denoted as

Initial CIS for Example 1

The equivalence class of each object

For {

For {

For {

(1) According to Proposition 2.4 mentioned above, the information amount for each singleton attribute subset of

(2) A descending sequence of attribute subsets can be constructed as

For

For

For

Analogously, the information amount for each attribute subset in the sequence becomes

And the information amount for each attribute subset in the sequence after removing an equivalence class is

(3) Based on Definition 3.1 and the above results, we next determine the relative information amount of different subsystems.

Similarly, we can obtain

(4) From Definition 3.2, the relative cardinality

(5) Correspondingly, from Definition 3.3, the degree of outlierness of

Meanwhile, the degree of outlierness of different objects with respect to different subsets of attributes is

(6) In addition, according to Definition 3.4, the weights of different objects with respect to different subsets of attributes can be calculated:

Besides,

(7) As a result, the information amount-based outlier factor of

Similarly, we compute each object’s information amount-based outlier factor:

Finally, the IAOF for each instance is compared against the judgment threshold λ = 0.72:

As a result,

Section 3 describes an outlier detection method based on the information amount, including theoretical concepts, specific calculation procedures, and an illustrative example. This section also presents the corresponding algorithm and examines its complexity.

Algorithm 1 depicts the process of calculating the IAOF for a CIS (

1:

2:

3:

Calculate

4: Calculate

6:

7: Determine

8: Construct

9:

10: Calculate

11: Calculate

12:

13:

14:

15: Calculate

16: Calculate

17: Calculate

18: Calculate

19: Calculate ω

20:

21: Calculate

22: If

23:

24:

25:

26: return

Experiments and comparative analyses

In this section, data sets collected from the real world are chosen for experiments to assess the behavior of IAOF. Due to the limited number of categorical data sets provided by the UCI (University of California Irvine) machine learning repository and the problems of a large number of missing values or a high percentage of outliers in some data sets, we selected several data sets from the KEEL (Knowledge Extraction based on Evolutionary Learning) data set repository [2]. The KEEL is available under the GNU General Public License version 3, which can be used for a variety of data discovery applications. It provides a simple user interface that uses data flow to design experiments with different data sets and computational intelligence methods. Almost all of the imbalanced data sets in the KEEL are from UCI. After preprocessing, the original UCI data sets are divided into five folds employing stratified cross-validation. In this way, a sufficient quantity of minority objects in the test partitions is disposed of, and the test partition objects are more representative of the underlying knowledge. Besides, the imbalance rate of the data set ranges from 1.5% to less than 10%, which is extremely suitable for imbalanced data classification as well as outlier detection.

Experimental settings

Data descriptions

In the experiment, we selected three datasets from the UCI repository, namely the Hayes-Roth dataset (referred to as Hayes), the Congressional Voting Records dataset (referred to as Voting), and the Mammographic Mass dataset (referred to as Mamm). Additionally, four datasets were chosen from the KEEL repository, including the MONK-2 dataset (referred to as Monk), the Australian Credit Approval dataset (referred to as Aust), the Pima Indians Diabetes dataset (referred to as Pima), the Breast Cancer Wisconsin (Diagnostic) dataset (referred to as Cancer), and the Solar Flare-2 dataset (referred to as Flare). The datasets in question comprise categorical attributes, including both nominal and ordinal attributes, as well as numerical attributes, encompassing interval and ratio attributes. Moreover, the data sets exhibit a few instances of missing data. In the context of semantically relevant datasets, classes that are rare and deviate from the typical case are defined as outlier classes. Alternatively, if there is no clear deviation, the outlier class is determined based on the class with the fewest number of objects [7, 20].

Consequently, three main steps are involved in data preprocessing: The first step is imputing the missing values. Because of the relatively low percentage of missing values (the data sets Mamm and Voting suffer from 3.41% and 5.63% missing values, respectively), missing values for numerical attributes are filled with the mean, while missing values for categorical attributes are filled with the mode, which is also known as the maximum probability value method. The second step is to cut the numerical attribute data into three bins using the equal-range discretization method to obtain categorical data. The third step is to generate outliers using the downsampling method proposed in [7]. Outlier detection usually involves the random downsampling of a specific class to generate outliers while retaining all instances of the remaining classes to generate inliers. Random downsampling usually results in a dramatic change in the data set. Therefore, to mitigate the effect of randomization after downsampling, we repeat downsampling 10 times for the data sets with more than 10% outliers, use IAOF to detect outliers for each of these 10 variants, and finally select the variant with average detection performance for the comparison experiment. Table 3 shows the detailed information and data preprocessing of each data set.

Detailed information and data preprocessing for the data sets

Detailed information and data preprocessing for the data sets

To evaluate our method’s performance quantitatively and comprehensively, we compare it to five other popular outlier detection methods: the k-nearest neighbor method (KNN) [24], the isolation forest method (IForest) [21], the cluster-based local outlier factor method (CBLOF) [14], the information entropy-based method (IEOF) [16], and the neighborhood-based outlier detection method (NED) [5].

KNN is one of the simplest methods in data mining. This algorithm is used to classifying rare events. To apply KNN for outlier detection, all features of the training data must be numeric, as it is based on the distance between two objects. Therefore, in the case of categorical features present in the training data, it is necessary to encode the categorical features with dummy variables to convert them into numerical features. Throughout this experiment, the Euclidean distance is used as the distance matrix after data transformation, and cross-validation and grid search are used to select the optimal value for hyperparameter

KNN, CBLOF, IEOF, and NED are proximity-base methods, while IForest is a classification-based method. All in all, the five outlier detection methods mentioned above apply to categorical data indirectly. For the outlier detection experiments, Table 4 gives the optimum parameter settings for both KNN and CBLOF.

Optimal parameter for KNN and CBLOF on different data sets

Optimal parameter for KNN and CBLOF on different data sets

In the binary classification of machine learning, there is a difference between the predicted outcomes and the actual classification, so some classification evaluation metrics such as precision, recall, receiver operating characteristic (ROC) curve, area under curve (AUC), and

Precision is the probability that samples predicted to be outliers are outliers. Recall denotes the possibility of outliers being indicated to be outliers. It requires a threshold

The comparative experiments in this section are conducted on a computer with the Intel (R) core (TM) i5-8250U processor platform, 1.80 GHz frequency, and 8 GB of memory. The operating system is Windows 11. The experiments are performed in Python 3.8.

In this subsection, outlier detection is carried out for IAOF and the other five existing methods on the eight data sets mentioned above. Scatter diagrams, broken line graphs, and tables are presented as results to reflect the performance of different methods.

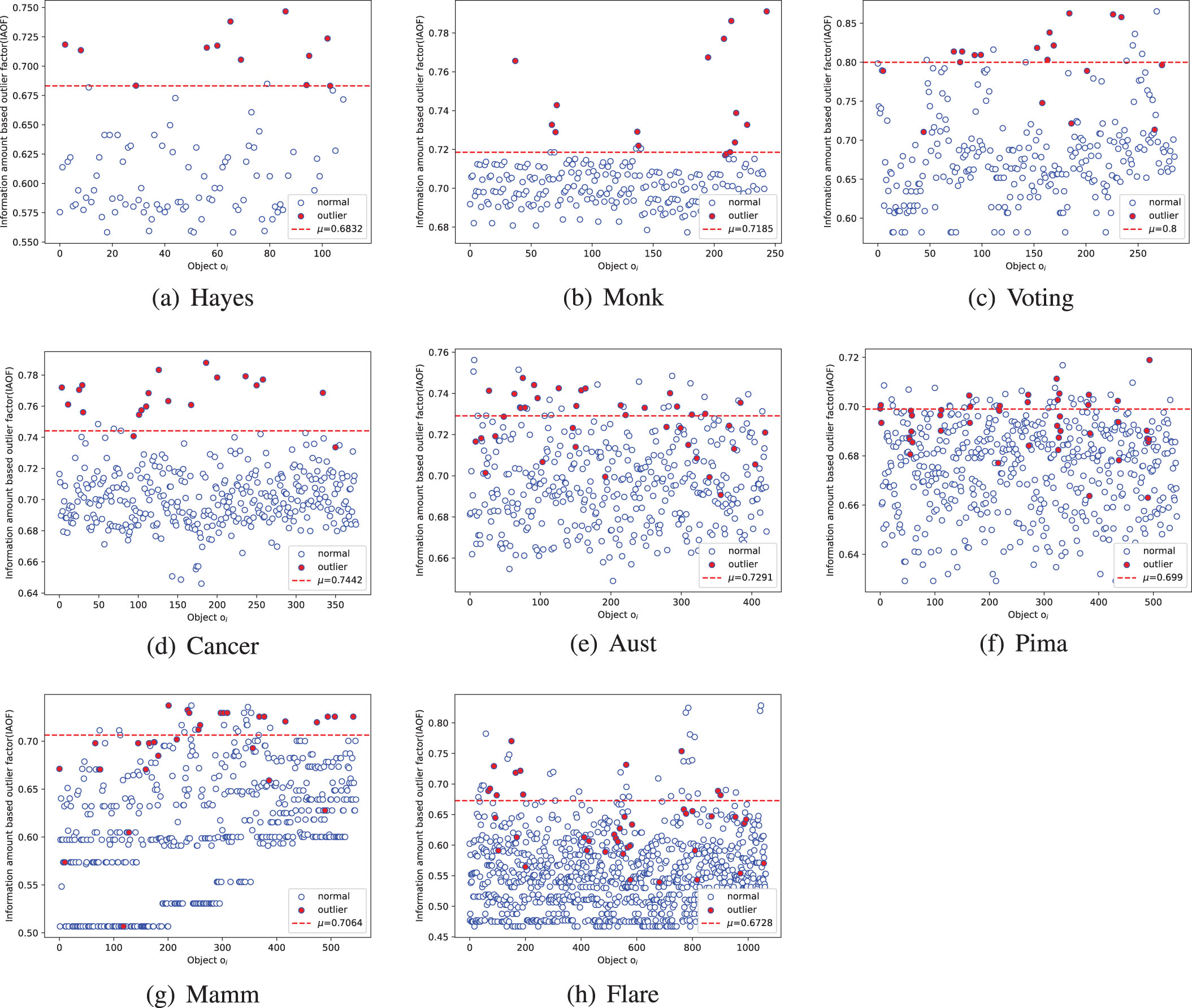

First, we calculate the outlier factors of each object in every data set and the judgment threshold μ by the process of IOAF. In turn, scatter diagrams are generated by taking an object’s serial number as the horizontal coordinate and the outlier factor as the vertical coordinate. In the diagrams, blue circles represent the true inliers, red-filled circles represent the true outliers, and the red dotted line stands for the judgment threshold μ. According to the IAOF, objects above the red dotted line are considered outliers, and objects below the red dotted line are considered inliers. It can be seen from Figs. 2(a)-(h) that IAOF detects well, where the method recognizes almost all true outliers in the Hayes, Monk, Voting, and Cancer data sets and about half of the true outliers in the Aust, Pima, and Mamm data sets. Although IAOF only recognizes a small part of the outliers in the Flare data set, it can be seen from Fig. 2(h) that almost all of the outliers in the data set were distributed in the middle and top of the scatter diagram, indicating that the outliers calculated by IAOF have relatively large outlier factors, which indirectly demonstrates the effectiveness of the method in the data set.

Outlier factor scatter diagrams of the data sets.

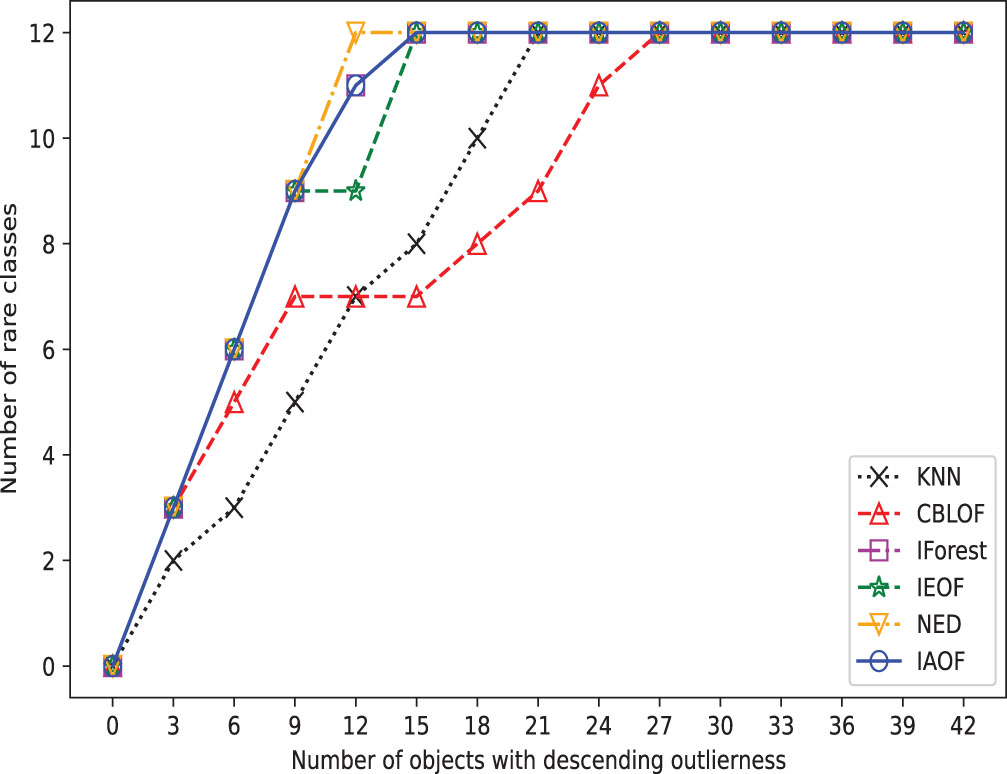

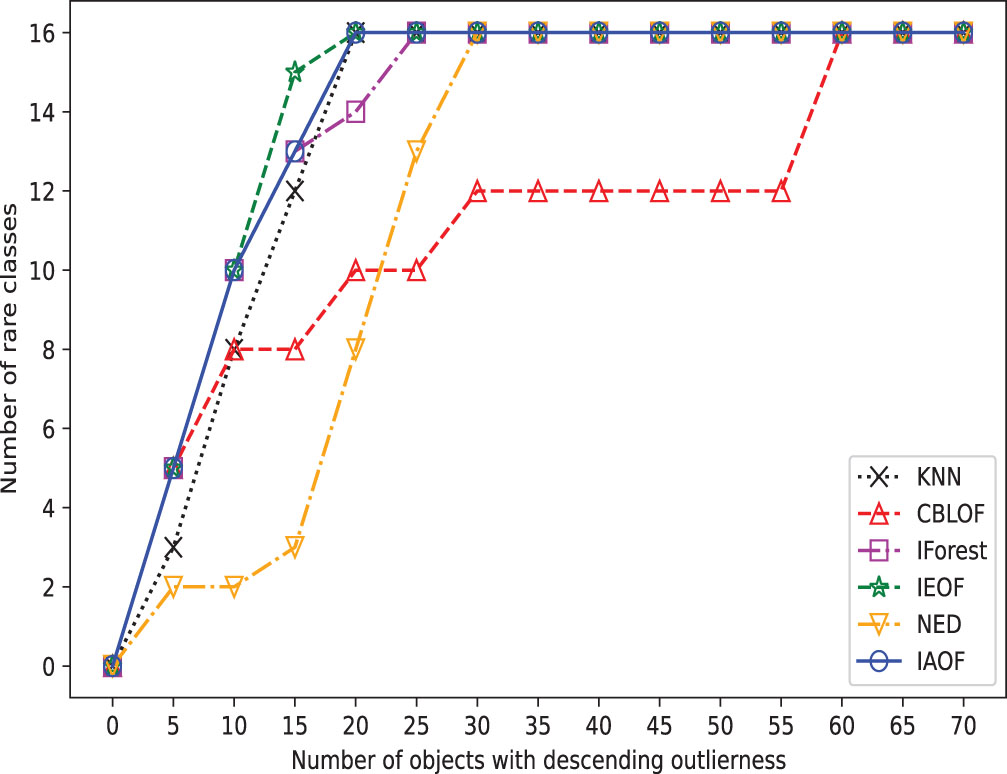

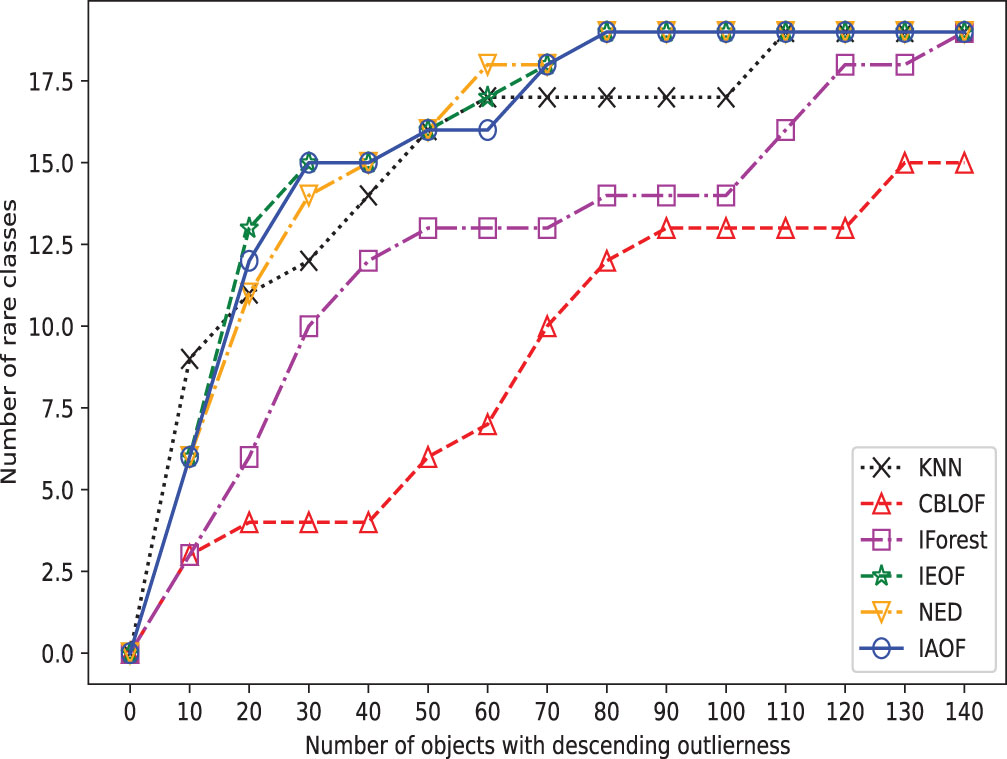

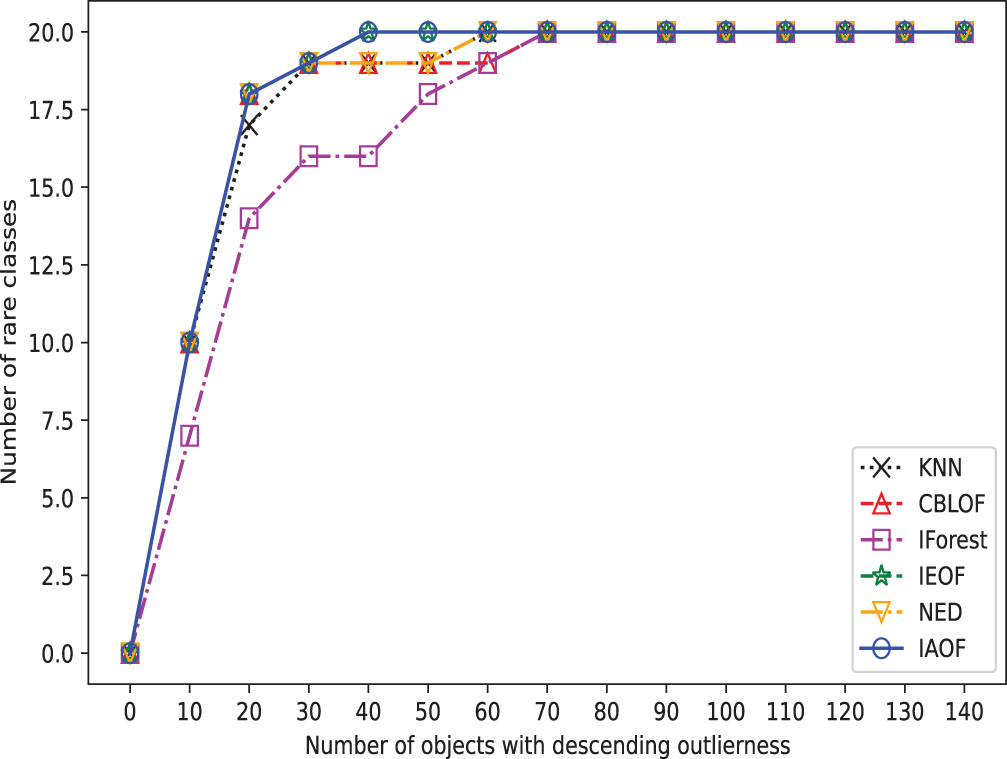

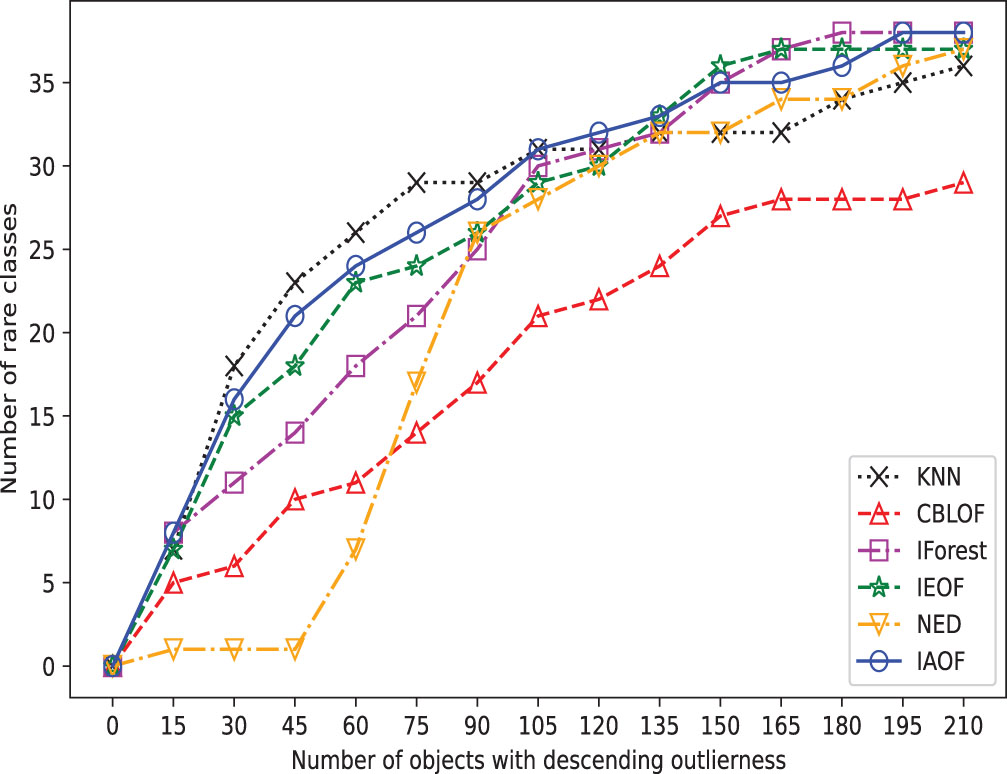

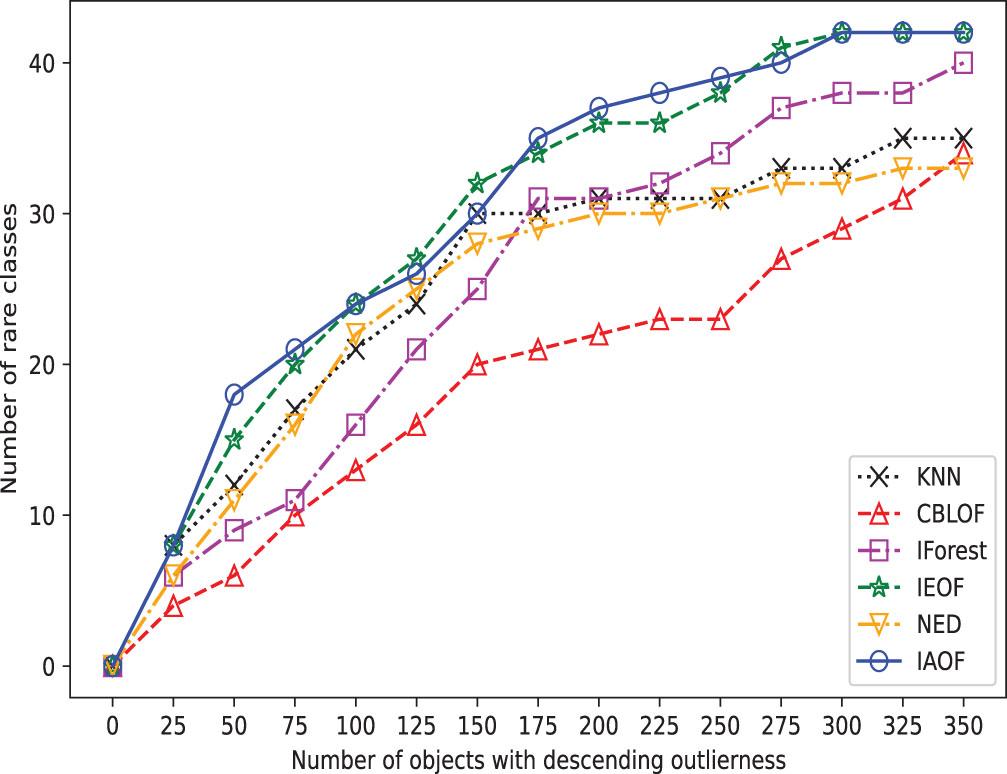

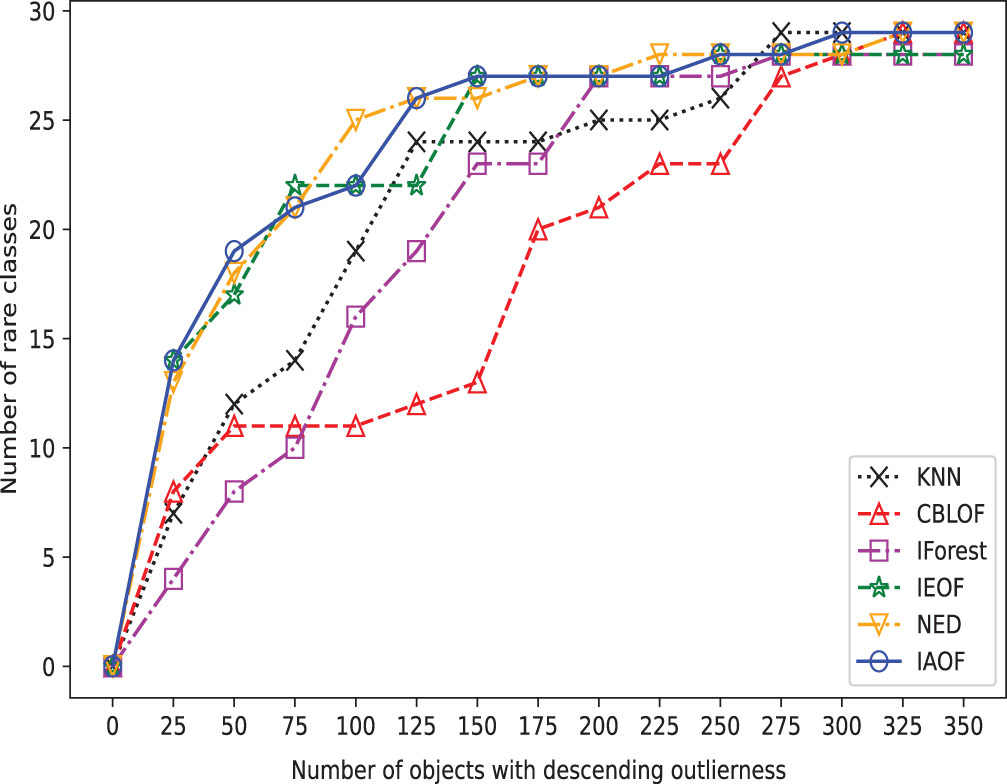

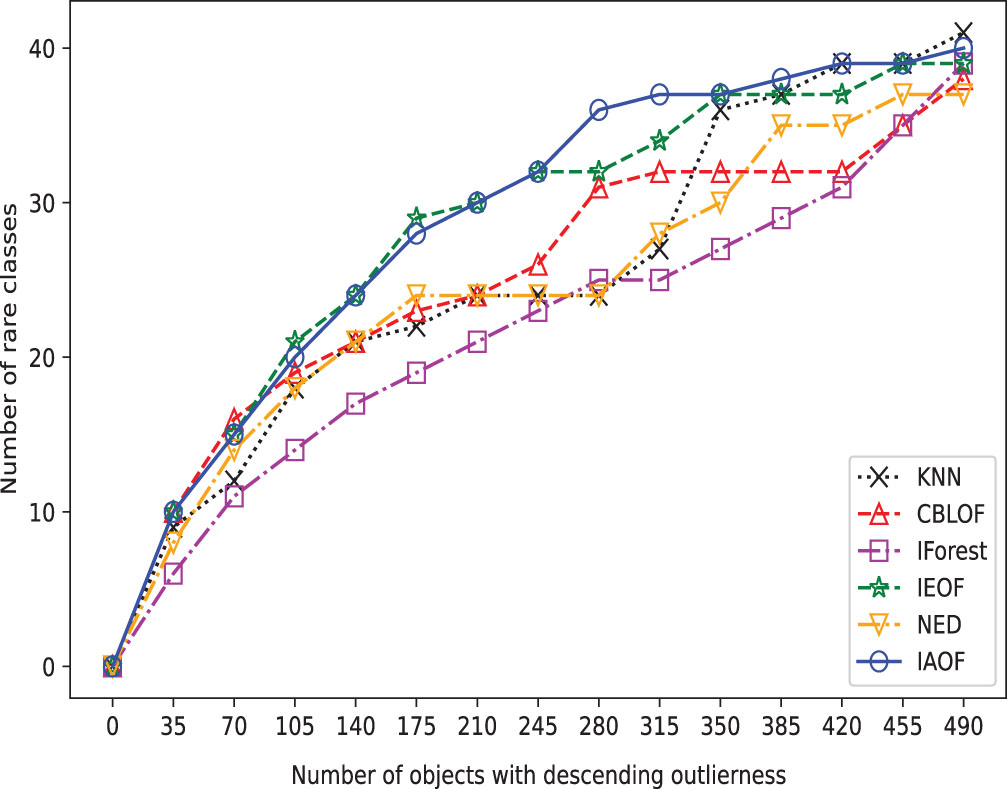

Furthermore, we perform outlier detection on every data set using the six methods. In real-world scenarios, identifying outliers in a dataset is generally difficult due to a lack of prior knowledge about the precise occurrences that differ greatly from the norm. These methods put all objects in descending or ascending order based on their determined “outlier factors,” and then consider the objects at the top of the sequence to be outliers. In detail, as shown in Figs. 3–10, if a detection method deems the objects at the top of the sequence as outliers and the number of objects in this part is “number of objects", then the proportion of objects in this part of the data set is “Top ratio”. In fact, only some of these objects are true outliers, and the number of real outliers is written as “Number of true outliers”, and the proportion of the currently successfully identified outliers to all true outliers in the data set is expressed as “outlier coverage”. In other words, a larger value of “Number of true outliers (outlier coverage)” indicates that the detection method is capable of detecting outliers more effectively. The maximum number of outliers identified successfully (outlier coverage) on each line is typed in bold characters in the table. According to Figs. 3–10, IAOF performs significantly better than KNN, CBLOF, IForest, and NED in terms of overall performance, and IAOF has a similar detection efficiency to IEOF. However, when the number of objects equals 15, 30, 45, 60, and 75 on the Aust data set, the outlier coverage of IEOF is slightly smaller than that of IAOF.

The broken line chart of outliers detected on Hayes.

The broken line chart of outliers detected on Monk.

The broken line chart of outliers detected on Voting.

The broken line chart of outliers detected on Cancer.

The broken line chart of outliers detected on Aust.

The broken line chart of outliers detected on Pima.

The broken line chart of outliers detected on Mamm.

The broken line chart of outliers detected on Flare.

In order to provide a concise overview of the detection effects of all methods, we generated broken line graphs using the experimental data. These graphs are depicted in Figs. 3–10. In most cases, except for Figs. 6 and 7, the broken line representing IAOF consistently surpasses the line representing KNN in terms of detection efficiency. This suggests that IAOF generally offers a superior degree of detection performance compared to KNN. Similarly, it can be observed from Figs. 3–10 that the performance of IAOF in detecting outliers surpasses that of CBLOF, IForest, and NED. The broken lines representing IAOF in Figs. 3, 4, and 6 almost overlap with those representing IEOF, while in Figs. 7, 8, and 9, the broken lines of IAOF are positioned above those representing IEOF. Consequently, it can be inferred that IAOF demonstrates slightly superior performance compared to IEOF.

Confusion matrix for outlier detection

Experimental results for different detection methods

In the preceding subsection, we examined the performance of IAOF and the other five methods in detecting outliers based on experimental results. The goal of this subsection is to compare the efficiency of IAOF and the other five methods using the classification evaluation indices stated above. The following is a summary of the key analysis:

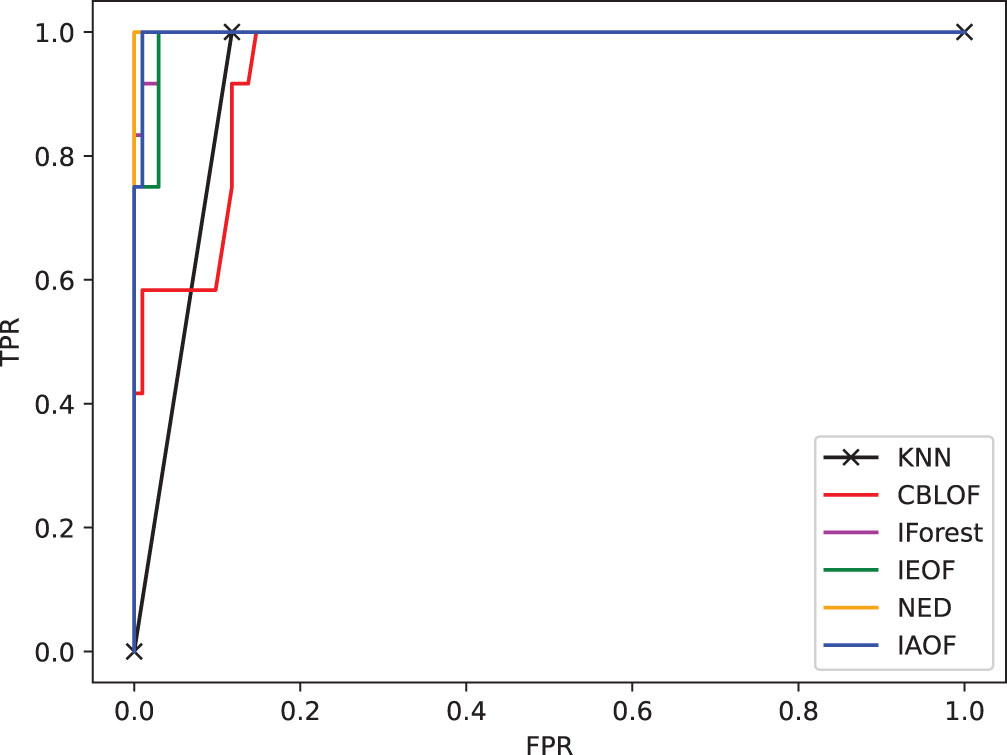

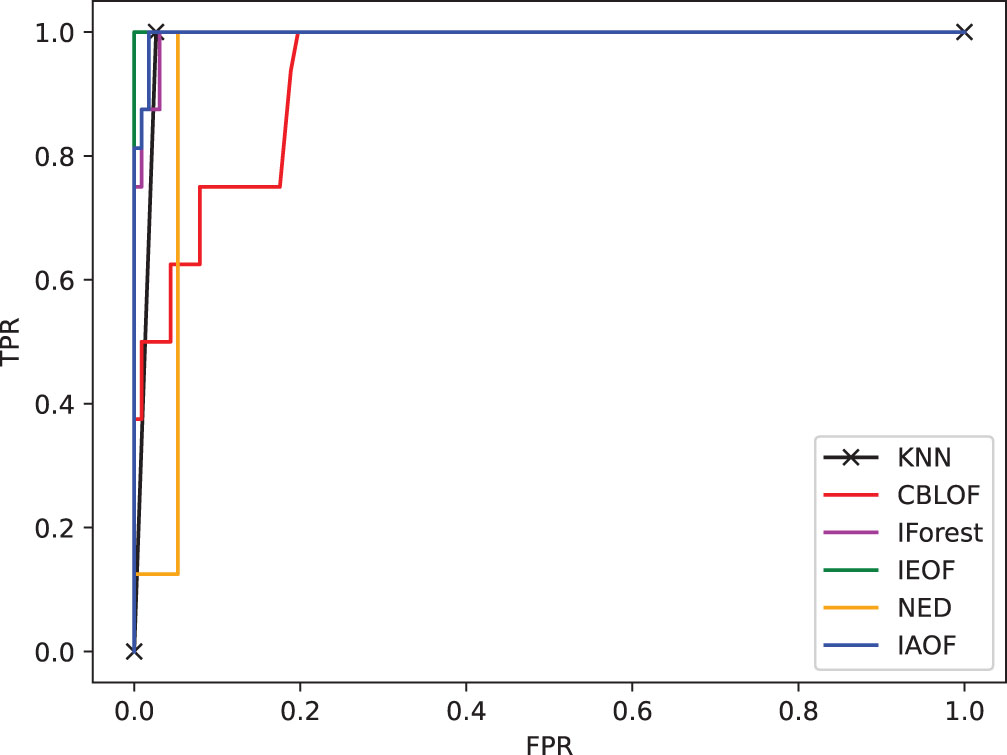

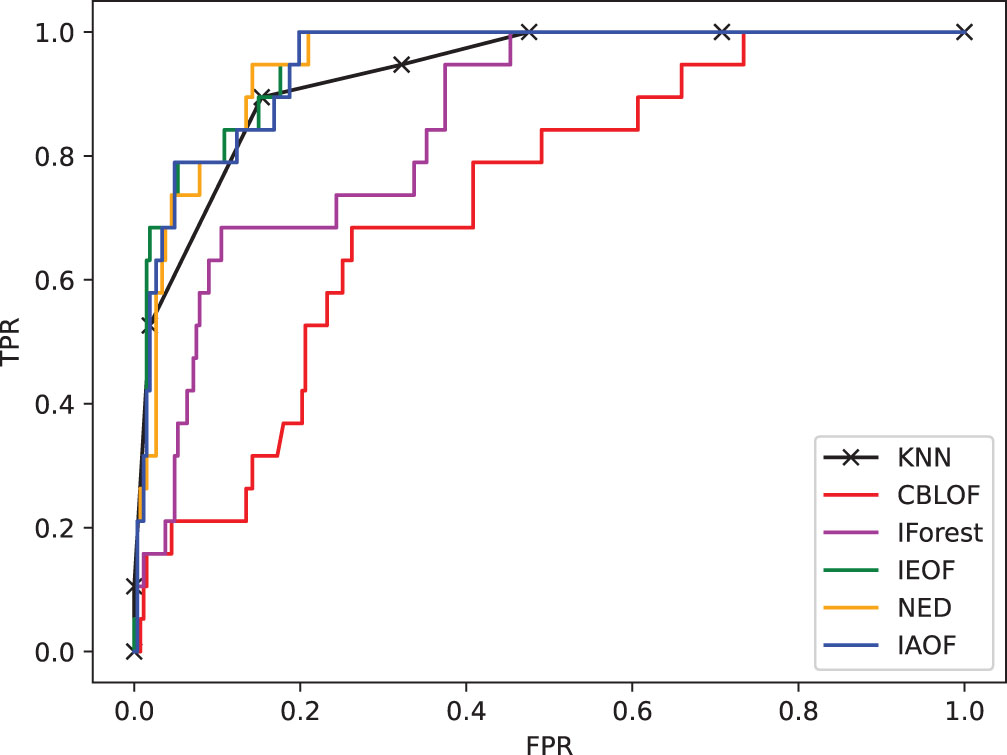

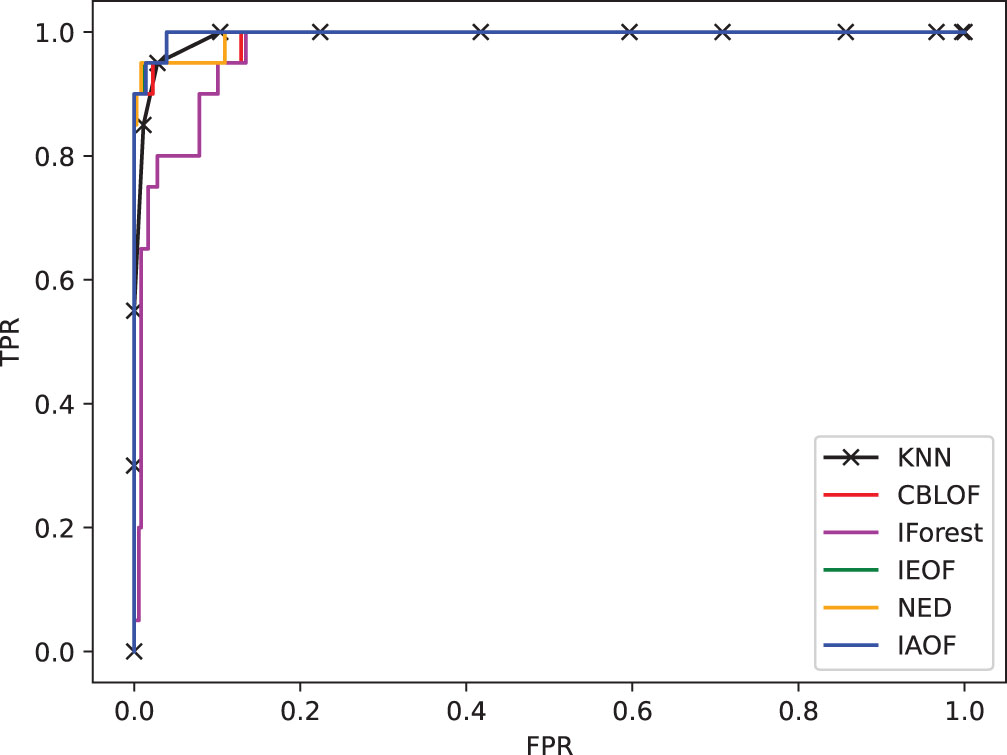

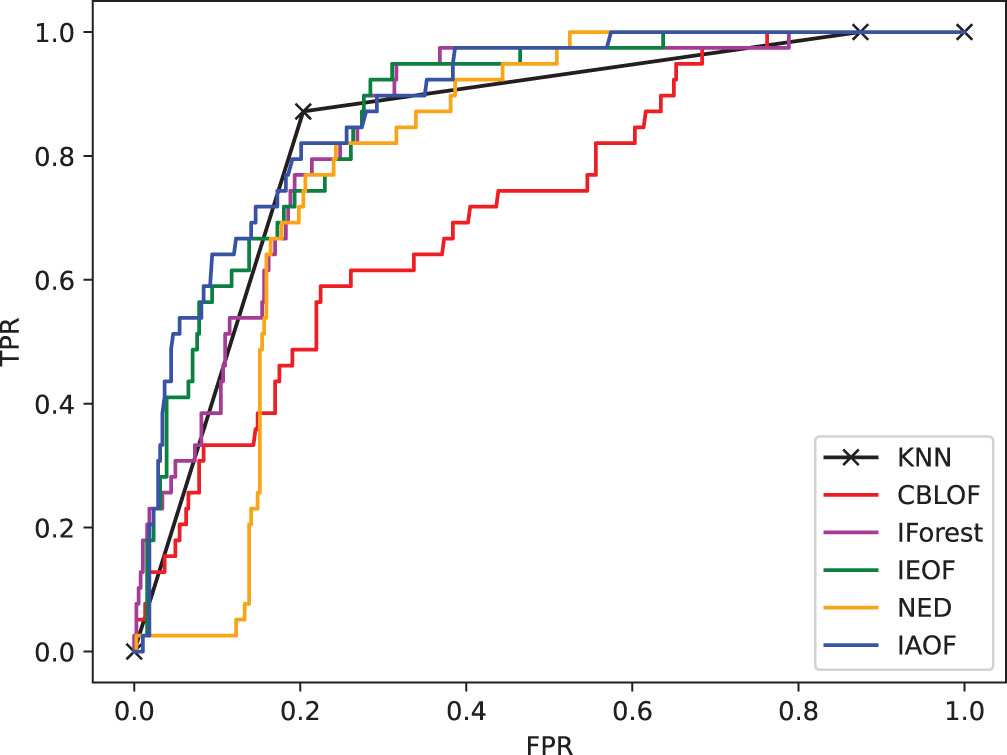

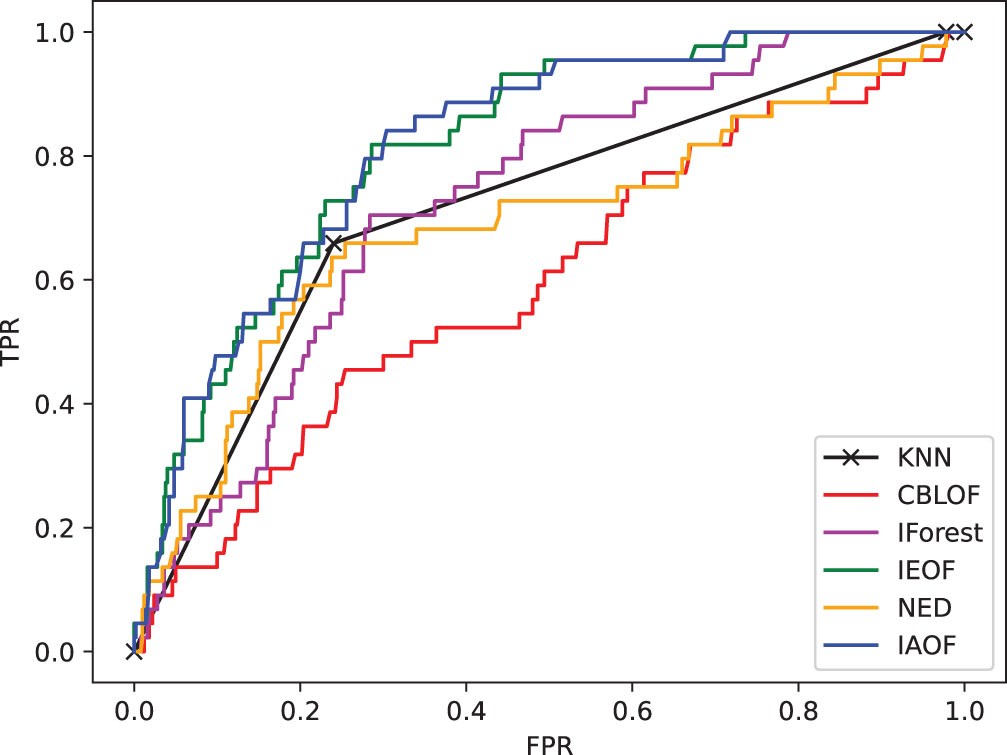

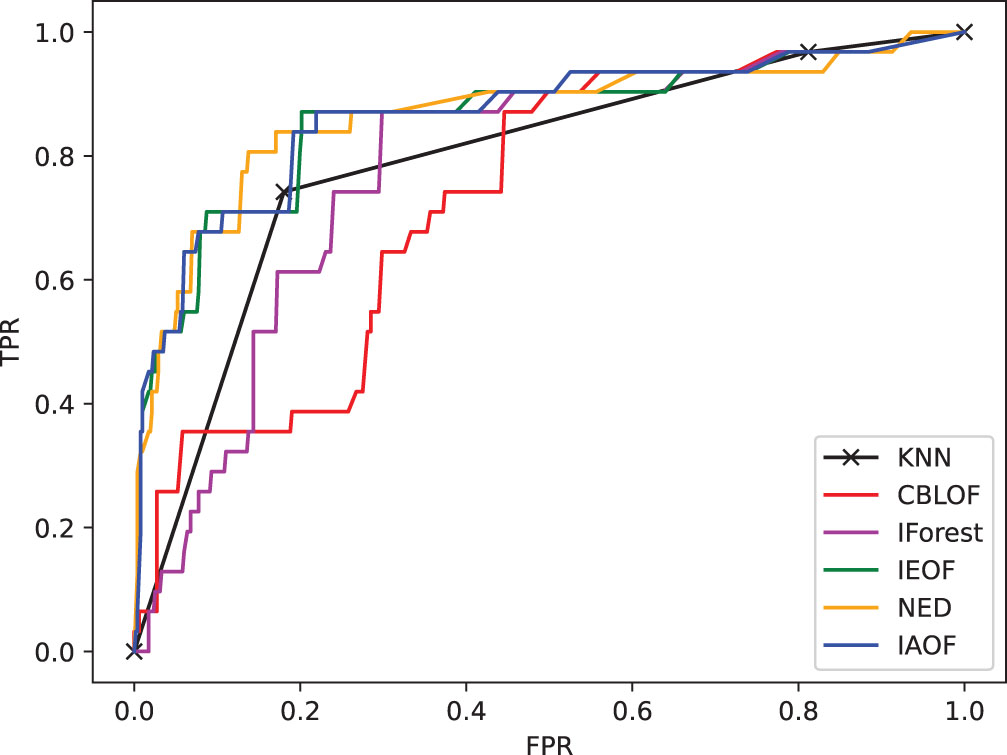

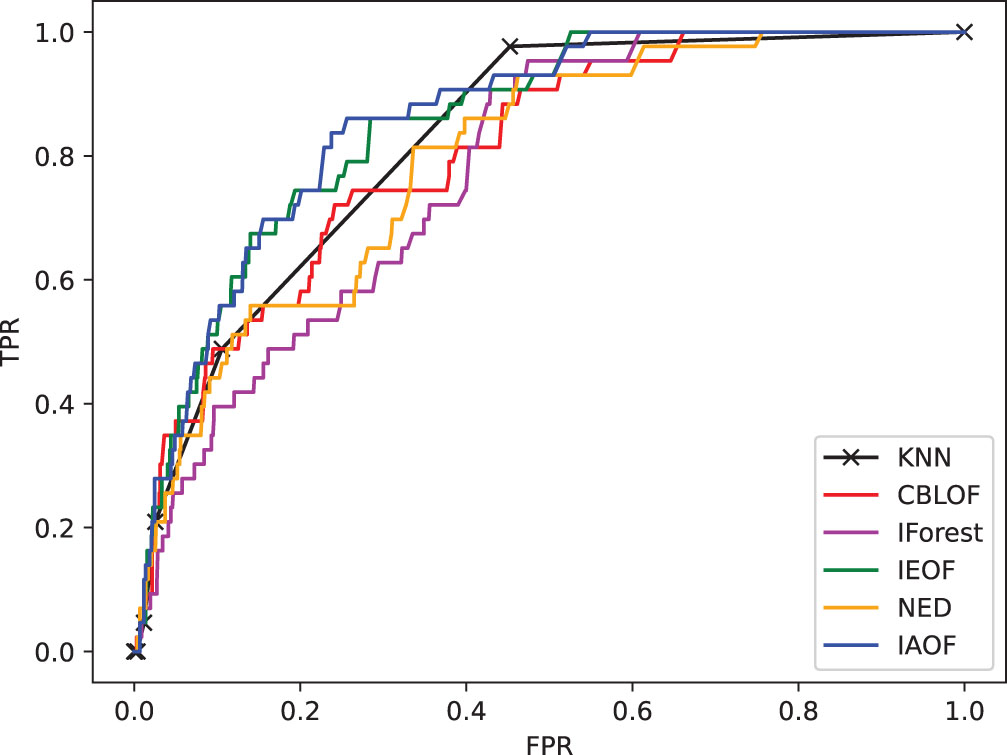

(1) From Figs. 11–18, we can also see the effectiveness of the IAOF algorithm more graphically. Figs. 15, 16, and 18 show the Aust, Pima, and Flare datasets. The IAOF algorithm is closest to the upper left corner of the first quadrant with the largest area under the curve for these datasets. On the other datasets, from Figs. 11–14 and 17, it can be observed that the IAOF is also very close to the upper-left corner of the first quadrant as compared to most of the methods. By calculating the area under each ROC curve, we get Table 7. From Table 7, we can see that the IAOF algorithm is ranked second in terms of area under its ROC curve on the Hayes, Monk, Voting, Cancer, and Mamm datasets.

The ROC curve on dataset Hayes.

The ROC curve on dataset Monk.

The ROC curve on dataset Voting.

The ROC curve on dataset Cancer.

The ROC curve on dataset Aust.

The ROC curve on dataset Pima.

The ROC curve on dataset Mamm.

The ROC curve on dataset Flare.

AUC results

(2) Table 9 sketches the variation of

Comparison results with precision, recall, and

In general, IAOF has superior detection performance compared to other methods when applied to the eight data sets. Consequently, IAOF can be efficiently employed for the purpose of detecting outliers in categorical information systems.

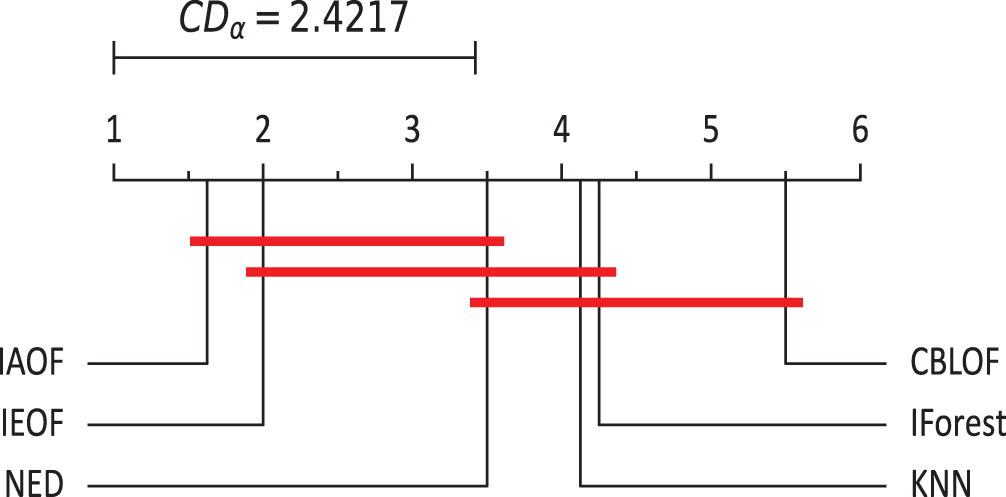

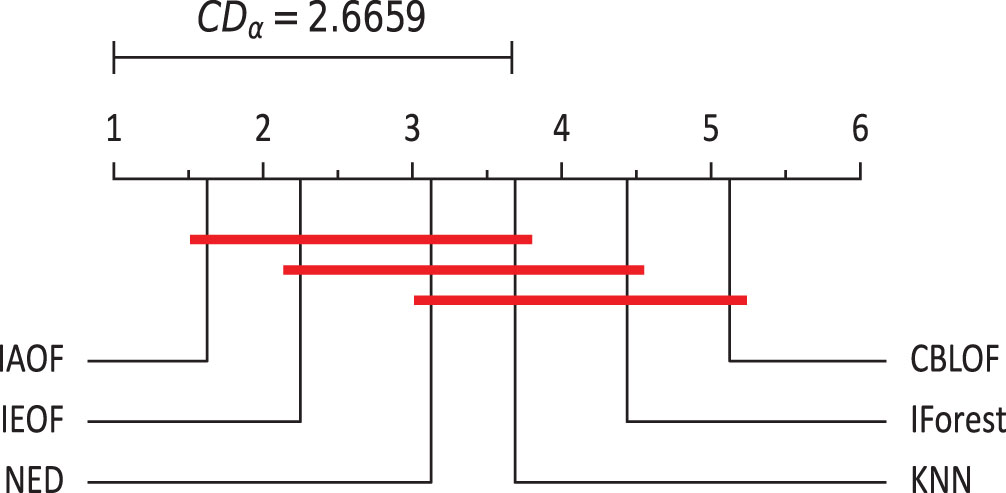

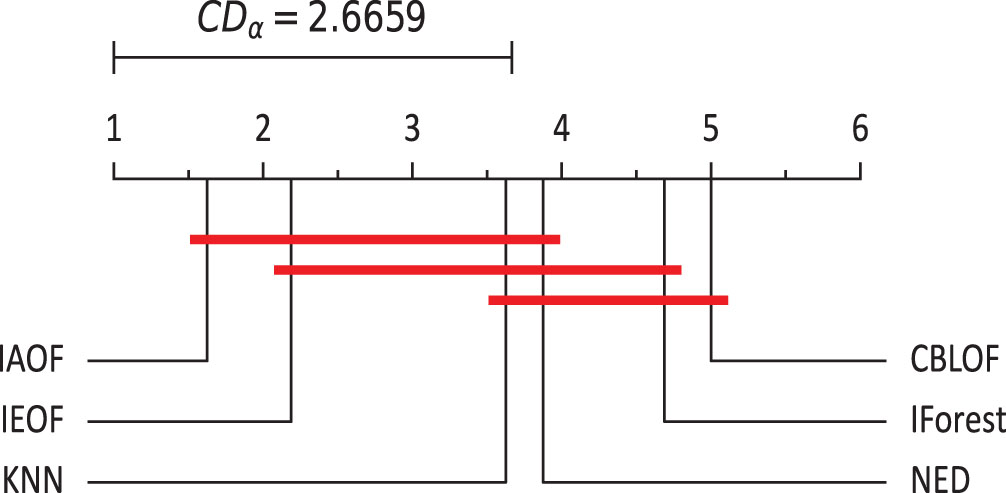

Since the three sets of indicator values (AUC,

The null hypothesis of the Friedman test, which is dependent on the Fisher test, is that there is barely any difference in rank across various algorithms. Let

If the statistic

By conducting tests on the index values of this experiment, one can derive the following conclusions based on Figs. 19–21.

The Nemenyi test on AUC (α = 0.1).

The Nemenyi test on

The Nemenyi test on

(1) IAOF has the greatest average rank in AUC values, indicating that it has the best outlier identification performance. Furthermore, when the significance level is taken at 10%, there are significant variations in the detection performance of IAOF and CBLOF, IAOF and KNN, and IAOF and IForest, whereas the other groups of methods have no significant differences.

(2) IAOF has the highest average rank in the values of

(3) IAOF has the greatest average rank in terms of the

This paper develops a novel and efficient IAOF method for identifying outliers, which is based on a thorough and rigorous theoretical framework and includes a real-world illustration of the various steps involved in outlier detection. The method finds outliers by using the information amount to calculate the degree of outlierness of an object, which is one of the uncertainty measures in rough set theory. There is no related research on the issue of outlier detection. IAOF can be used to identify outliers in both categorical and discretized numerical information systems. Using a collection of eight UCI standard datasets, we ran tests to evaluate and compare various existing outlier detection methods. The experiment results show that the suggested method is adaptable, works well, and could be useful in real life because it finds patterns more accurately in categorical datasets than other methods tested in the same conditions. IAOF expands the utilization of the information amount in measures of uncertainty and enriches the techniques for identifying outliers. Nevertheless, the method’s time cost and space complexity do not possess substantial advantages.

In the future, we will consider adopting other efficient attribute approximation methods to lower the time and space complexity of the process of IAOF in order to deal with outlier detection problems for high-dimensional data sets. What is more, applying the IAOF technique to outlier identification in other types of information systems, such as categorical information systems with missing values, numerical information systems with missing values, set-value information systems, and so on, is a future research area.

Footnotes

Acknowledgments

The authors would like to thank the editors and the anonymous reviewers for their valuable comments and suggestions, which have helped immensely in improving the quality of the paper. This work was supported by the project of Improving the Basic Scientific Research Ability of Young and Middle-aged Teachers in Guangxi Universities (2020KY14013) and the project of Natural Science Foundation of Guangxi (2020GXNSFAA159155, 2020GXNSFAA159061).