Abstract

The wilting of leaves caused by disease poses risks to both harvest yield and the environment. Timely detection of disease signs on leaves is therefore crucial to enable farmers to prevent outbreaks and safeguard their crops. However, manually inspecting all diseased leaves at scale demands substantial time and human effort. In this study, we propose an effective method for automated leaf disease detection that uses images captured with mobile phones. The proposed technique combines four models (an ensemble) with distinct features: (1) a ResNeXt50 model with high-quality image processing, (2) a ViT model with low-quality image processing, (3) an EfficientNet B5 model that combines self-learning with noisy input, and (4) a MobileNet V3 model with image segmentation. Experimental results demonstrate that the proposed method outperforms several state-of-the-art methods on our TLU-Leaf dataset, with an F1-score of 90%, and on the Cassava Leaf Disease dataset, with an F1-score of 87%.

Introduction

Countries with monsoon climates have a diversity of organisms and fertile soils, which are advantageous for agricultural development. However, the monsoon climate is also ideal for the growth of fungi, bacteria, and viruses, which cause various plant diseases every year, such as leaf rollers and yellow leaves. These diseases can spread quickly and, if not predicted and controlled, affect large areas of crops. As a result, farmers can lose a large share of their agricultural products. Furthermore, some pests also pose a threat to the environment. Classification models, data processing pipelines, and machine learning models [1] are needed to predict the presence of plant diseases in advance.

Therefore, it is necessary to detect leaf diseases and prevent unwanted consequences. In addition, appropriate measures to detect pests [25] and diseases can help to map the locations of crops affected by pests and diseases in mountainous areas to provide timely information to local authorities and farmers.

Detecting foliar diseases poses a significant challenge due to the intricate nature of visual imagery [3]. This complexity has prompted a heightened demand for intricate and specialized analysis of foliar indicators. Our objective is to leverage a diverse set of data features for pest detection, given their effectiveness, precision, accessibility, and cost-efficiency. Particularly in expansive fields characterized by dense crop populations, employing close-range equipment offers heightened accuracy advantages. However, this approach is resource-intensive in terms of labor, expenses, and installation time. Conversely, a composite feature approach can be readily deployed and operated across extensive regions. Furthermore, outcomes obtained through multi-model utilization of images show promising potential. In this study, we propose a strategy founded on a multi-model framework and an intermediate anomaly extraction module for analyzing images of infected trees. The proposed approach boasts a lightweight nature, rendering it suitable for implementation on low-cost embedded boards.

Our contributions are threefold and are summarized as follows:

The remainder of this paper is structured as follows. Section 2 discusses relevant previous studies. Section 3 presents our method. The experimental evaluation is shown in Section 4. Finally, some concluding remarks and a brief discussion are provided in Section 5.

Related works

The method proposed in this article spans five research areas: deep learning (DL) models with high-quality images, DL with low-quality images, DL with self-supervised learning incorporating noisy inputs, DL with image segmentation, and, finally, frameworks that fuse deep learning models to address all four aspects of the task.

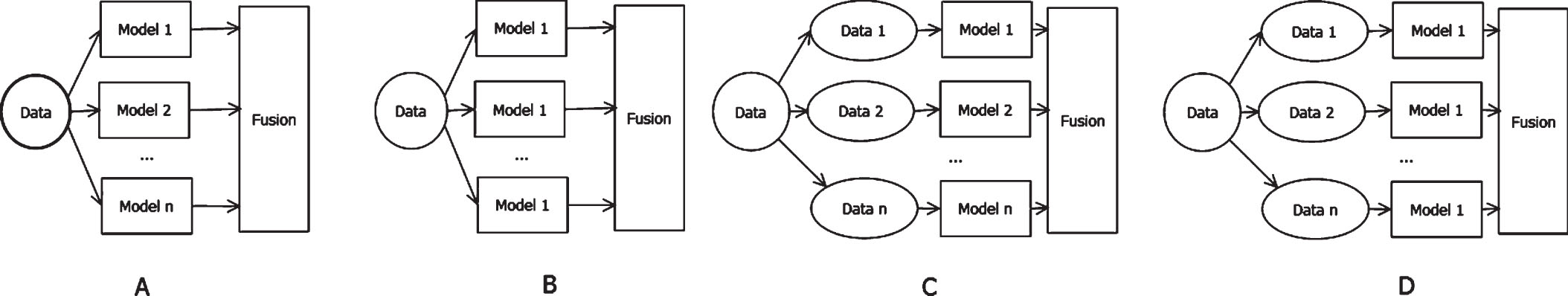

Four cases of ensemble deep learning.

MnLeaf framework

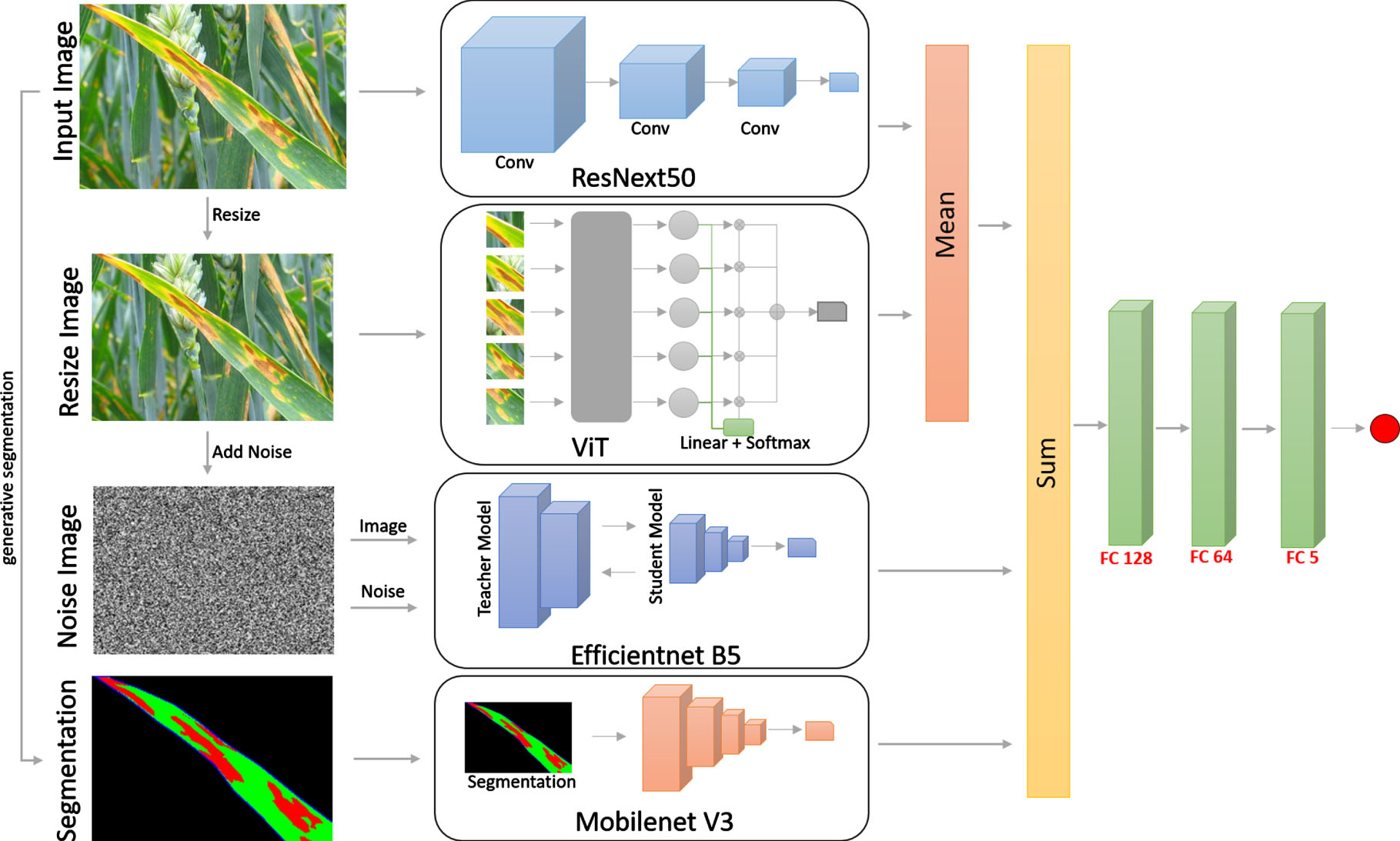

In this study, we present a multiple-model neural network for classifying various leaf diseases, named the MnLeaf framework. The proposed methodology integrates four distinct neural network architectures. Specifically, we employ the ResNeXt50 architecture for precise leaf disease detection on high-quality images. Where image quality is lower, we deploy the Vision Transformer (ViT) architecture to ensure accurate disease classification. To address the challenges posed by noisy images, we introduce the EfficientNetB5 architecture, which serves as both a teacher and a student model. Additionally, we use the MobileNetV3 architecture to extract pertinent segmentation features, as depicted in Figure 2. Together, these components advance leaf disease classification using recent neural network architectures and carefully curated datasets.

Overall architecture of the proposed method.

The proposed multi-channel deep learning model combines ResNeXt50, EfficientNet B5, and MobileNet V3 for feature extraction with ViT layers for sequence prediction, arranged in four fully connected channels as illustrated in Figure 2. Each channel (or head) consists of a stack of Conv1D layers followed by max-pooling layers. The Conv1D layers, configured with 128 filters, directly map and abstract the inputs to extract features. Feature extraction is achieved through convolutional operators applied to kernels, and feature maps are computed as described in Table 3. To enable feature extraction at different resolutions, we employ two layers, ResNeXt50 and ViT, for the Conv1D layers of the corresponding channels. The output of each Conv1D layer is fed into a max-pooling layer, which reduces the size of the learned features by summarizing them into separate elements without compromising accuracy. The EfficientNet B5 layer has an output configuration of 128 units for the Conv1D and max-pooling layers. Its advantage is that it is well suited to learning the noise in the input image, which improves image classification, helps minimize overfitting, and enhances model accuracy. Finally, the MobileNet V3 layer learns segmentation features for identifying the objects to be classified, in this case leaves and leaf diseases. The outputs of the four channels are flattened and concatenated, then passed through three fully connected layers that turn the features into interpretable representations of the different classes. The final output of the model comes from a dense layer with a softmax activation function, which computes the probability distribution over the predicted classes.
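The fusion step described in this paragraph (flatten, concatenate, three fully connected layers, softmax output) can be illustrated with a minimal pure-Python sketch. The feature size per channel, the layer widths, and the random weights are assumptions chosen only for illustration; they are not the configuration used in the paper, and the four backbones are replaced here by random feature vectors.

```python
import math
import random

def dense(x, weights, biases, relu=True):
    """Fully connected layer: y = W x + b, optionally followed by ReLU."""
    out = []
    for row, b in zip(weights, biases):
        s = sum(w * v for w, v in zip(row, x)) + b
        out.append(max(0.0, s) if relu else s)
    return out

def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def init_layer(n_in, n_out, rng):
    """Hypothetical random initialisation, for illustration only."""
    w = [[rng.uniform(-0.1, 0.1) for _ in range(n_in)] for _ in range(n_out)]
    return w, [0.0] * n_out

def fuse(channel_features, layers):
    """Concatenate channel outputs, apply the hidden layers, then softmax."""
    x = [v for feats in channel_features for v in feats]
    for w, b in layers[:-1]:
        x = dense(x, w, b)
    w, b = layers[-1]
    return softmax(dense(x, w, b, relu=False))

rng = random.Random(0)
# Four channels, each assumed to yield an 8-dimensional flattened feature vector.
channels = [[rng.random() for _ in range(8)] for _ in range(4)]
# Three fully connected layers (32->16->16->8) and a 5-class output layer.
sizes = [32, 16, 16, 8, 5]
layers = [init_layer(a, b, rng) for a, b in zip(sizes, sizes[1:])]
probs = fuse(channels, layers)
print(probs)
```

The output is a probability vector over five classes, matching the five disease labels in the datasets used later in the paper.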

The following subsections describe in detail the features used and the architecture of the four networks.

To streamline and enhance the quality of input records, we performed three preprocessing steps (shown in Figure 2): data dimension reduction, adding noise to the data, and generating segmentation images. First, we applied several preprocessing techniques to reduce the data dimension. For instance, we used PyTorch functions to reduce the image size from 512×512 to 224×224 while retaining essential features. Additionally, we used downsampling techniques in convolutional neural networks (CNNs) to further reduce the data dimension.

After reducing the data dimension, we added image noise using the GradInversion [63] technique. GradInversion uses ResNet-50 as a backbone to introduce random noise and generate a natural-looking image, reconstructing the input from gradients as part of an optimization process. Adding noise yields two images: (i) the original image; and (ii) an image with noise.

Finally, we carried out the generation of segmentation images to isolate individual objects, with a focus on objects affected by diseases, such as plant leaves. We employed a segmentation technique [64] developed by Facebook’s research team. This technique effectively separates all objects within the image, after which we processed the data to select only the diseased plant leaves and classify them accordingly. The primary objective of our segmentation approach is to generate binary masks for each leaf of the plant, for each type of disease on the leaves, and associate it with a specific plant based on real agricultural field images. Therefore, we perform simultaneous segmentation of individual leaf samples and plants. This enables us to determine the shape and size of each leaf, as well as the condition of disease on the leaves, which is highly suitable for conducting morphological analysis.

ResNeXt50

The conventional approach to improving model accuracy is to deepen or widen the network architecture. However, as the number of hyperparameters (such as channels and filter sizes) increases, network design becomes harder and computational costs escalate. Building on ResNet, ResNeXt integrates the block-stacking strategy of ResNet with the grouped convolutions of the Inception structure. This combination raises the accuracy of the recognition model without increasing its complexity: experimental results demonstrate that ResNeXt achieves superior classification performance with a simpler topology and no additional parameters. Table 1 presents the detailed architecture of the proposed network.

Detailed ResNeXt50 network to produce the first modality

The proposed architecture in Table 2, referred to as ViT, is specifically devised for the purpose of classifying objects with correlations between similar classes and dissimilar classes. While global classification can be achieved through relatively simple global cues, achieving finer classification necessitates highly localized discriminative regions. In this study, to capture these subtle distinguishing features, prototypes have been applied to condensed image patches. This approach aims to differentiate between categories at a more detailed level, allowing for more precise classification by focusing on localized features.

Detailed ViT network to produce the second modality

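The scaled dot-product attention serves as a pivotal element within the Multi-Head Self Attention (MHSA) layer [51] of the Transformer architecture. In the MHSA, a set of queries $Q$, keys $K$, and values $V$ is computed from the input, and attention is evaluated as

$$\mathrm{Attention}(Q, K, V) = \mathrm{softmax}\left(\frac{QK^{T}}{\sqrt{d_k}}\right)V.$$

To mitigate the issue of exceedingly small gradients and enhance training stability, every element within the $QK^{T}$ matrix is multiplied by the constant factor $1/\sqrt{d_k}$, where $d_k$ is the dimension of the keys.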
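As a concrete illustration of scaled dot-product attention, the following minimal sketch computes softmax(QK^T / sqrt(d_k)) V on plain Python lists. It shows a single attention head only; the function name and the toy matrices are illustrative and not taken from the paper's implementation.

```python
import math

def softmax(row):
    """Numerically stable softmax over one row of scores."""
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]

def scaled_dot_product_attention(Q, K, V):
    """Q: n x d_k, K: m x d_k, V: m x d_v (lists of lists). Returns n x d_v."""
    d_k = len(K[0])
    scale = 1.0 / math.sqrt(d_k)  # constant factor applied to every QK^T entry
    output = []
    for q in Q:
        # One row of the scaled score matrix QK^T / sqrt(d_k).
        scores = [scale * sum(qi * ki for qi, ki in zip(q, k)) for k in K]
        weights = softmax(scores)
        # Weighted sum of the value vectors.
        output.append([sum(w * v[j] for w, v in zip(weights, V))
                       for j in range(len(V[0]))])
    return output

Q = [[1.0, 0.0], [0.0, 1.0]]
K = [[1.0, 0.0], [0.0, 1.0]]
V = [[1.0, 2.0], [3.0, 4.0]]
attended = scaled_dot_product_attention(Q, K, V)
print(attended)
```

Each output row is a convex combination of the rows of V, weighted towards the value whose key best matches the query.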

We combined the proportional (compound) scaling method to optimize parameter efficiency and training speed for EfficientNetB5 models. In addition, we integrated Noisy Student training, a semi-supervised learning approach that builds on self-training and knowledge distillation, as presented in Fig. 2. A teacher model is first trained on labeled images and used to produce pseudo labels for unlabeled images. A student model is then trained on a blend of labeled and pseudo-labeled images. Notably, the student models are of equal or larger size than the teacher, and controlled noise is applied to the student during learning, which further improves performance.
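The teacher-student loop can be sketched on toy one-dimensional data. This is only an illustration of the control flow: a threshold classifier stands in for EfficientNetB5, Gaussian input noise stands in for whatever noise the actual training uses, and the data, `noise_std`, and `rounds` values are hypothetical.

```python
import random

def fit_threshold(xs, ys):
    """Toy classifier: threshold halfway between the two class means."""
    mean0 = sum(x for x, y in zip(xs, ys) if y == 0) / ys.count(0)
    mean1 = sum(x for x, y in zip(xs, ys) if y == 1) / ys.count(1)
    return (mean0 + mean1) / 2.0

def predict(threshold, xs):
    """Assign class 1 to inputs above the threshold, class 0 otherwise."""
    return [1 if x > threshold else 0 for x in xs]

def noisy_student(labeled_x, labeled_y, unlabeled_x,
                  noise_std=0.1, rounds=2, seed=0):
    rng = random.Random(seed)
    # 1. Train the teacher on labeled data only.
    teacher = fit_threshold(labeled_x, labeled_y)
    for _ in range(rounds):
        # 2. The teacher produces pseudo labels for the unlabeled data.
        pseudo_y = predict(teacher, unlabeled_x)
        # 3. Train the student on labeled + pseudo-labeled data,
        #    with noise injected into the student's inputs.
        xs = [x + rng.gauss(0.0, noise_std) for x in labeled_x + unlabeled_x]
        ys = labeled_y + pseudo_y
        student = fit_threshold(xs, ys)
        # 4. The student becomes the teacher for the next round.
        teacher = student
    return teacher

labeled_x = [0.0, 0.2, 1.0, 1.2]
labeled_y = [0, 0, 1, 1]
unlabeled_x = [0.1, 0.3, 0.9, 1.1, -0.1, 1.3]
final_threshold = noisy_student(labeled_x, labeled_y, unlabeled_x)
print(final_threshold, predict(final_threshold, [0.0, 1.0]))
```

In the real setting the student is a network of equal or larger capacity than the teacher, and each round involves full training rather than a closed-form fit.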

MobilenetV3

In this section, we use MobileNetV3 as the backbone network for the mobile semantic segmentation task, together with the specialized segmentation head known as R-ASPP, initially proposed in [xxx]. R-ASPP is a streamlined adaptation of the Atrous Spatial Pyramid Pooling module [xxx] that keeps only two branches: a 1 × 1 convolution and a global-average pooling operation. To obtain richer features, we apply atrous convolution within the final block of MobileNetV3. Additionally, we add a skip connection from low-level features to incorporate finer-grained details.

Fusion

Given an input image, after passing through four models, we obtain a predictive label that amalgamates distinctive feature characteristics from each deep learning model. Tailoring the fusion process according to the method we propose is expected to enhance the accuracy in classifying diseased tree leaves. We have synthesized these processes into an algorithm outlined in Table 3.

Fusion model of four features

Finally, our model gains significant performance improvements over the previous version at almost no computational cost. In detail, the new non-linear activation called h-swish fixes a weakness of swish by replacing the computationally expensive sigmoid with a piecewise linear analogue based on ReLU6:

$$\text{h-swish}(x) = x \cdot \frac{\mathrm{ReLU6}(x + 3)}{6}.$$
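For reference, h-swish is defined in the MobileNetV3 literature as x · ReLU6(x + 3) / 6. The short sketch below implements it next to the sigmoid-based swish so the two can be compared numerically; the function names are illustrative.

```python
import math

def relu6(x):
    """ReLU clipped at 6."""
    return min(max(x, 0.0), 6.0)

def h_swish(x):
    """Piecewise linear approximation of swish: x * ReLU6(x + 3) / 6."""
    return x * relu6(x + 3.0) / 6.0

def swish(x):
    """Original swish: x * sigmoid(x)."""
    return x / (1.0 + math.exp(-x))

for value in (-4.0, -1.0, 0.0, 1.0, 4.0):
    print(value, round(h_swish(value), 4), round(swish(value), 4))
```

h-swish is exactly zero for inputs at or below -3 and equals the identity for inputs at or above 3, which is what makes it cheap to evaluate on mobile hardware.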

Dataset

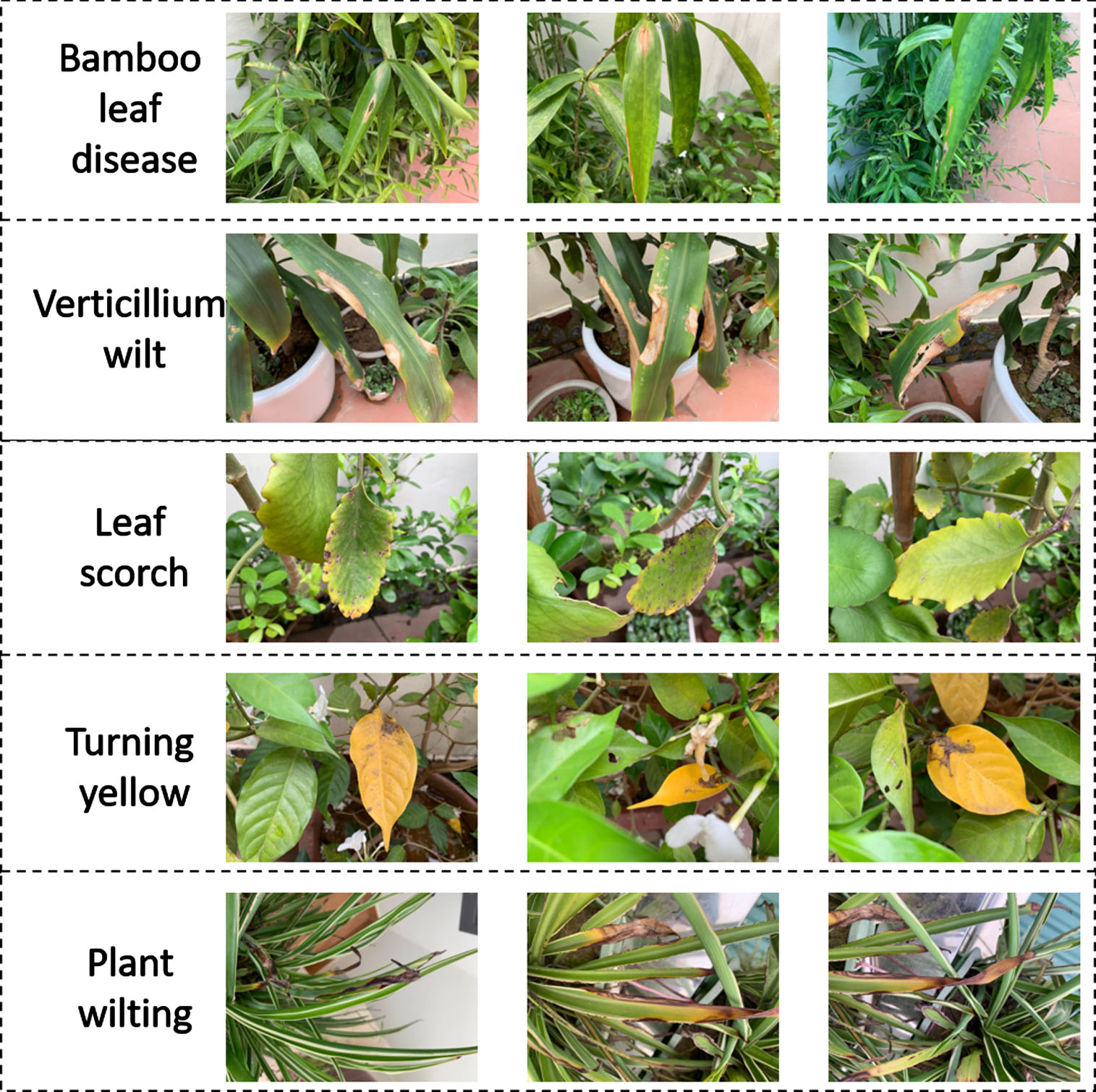

The model is trained and evaluated on two datasets: the Cassava Leaf Disease Classification dataset, comprising 15,000 images, and the TLU Leaf Disease dataset (shown in Fig. 3), comprising 2,500 images. The TLU Leaf Disease dataset has 5 labels (bamboo leaf disease, verticillium wilt, leaf scorch, turning yellow, plant wilting), each containing 500 images. In Figure 3, each row shows 3 sample images of the same label. Both datasets have five distinct labels corresponding to different diseases.

Sample images from our self-collected dataset (TLU-Leaf).

The experimental outcomes were evaluated utilizing metrics including accuracy, F1 score, precision, recall, Kappa, and the confusion matrix. These metrics can be computed as follows:

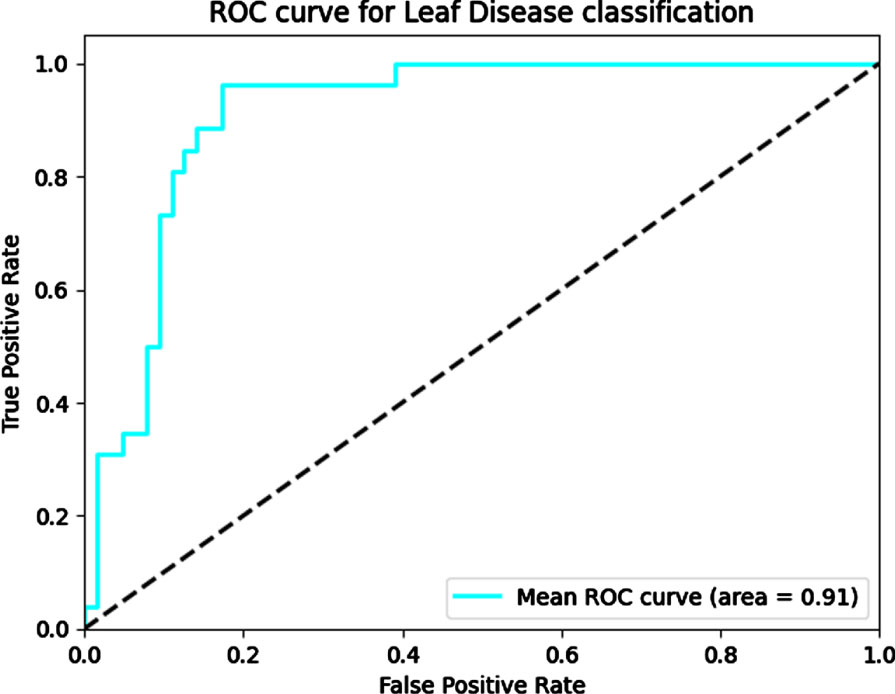
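As an illustration of how these metrics follow from a confusion matrix, the sketch below computes accuracy, macro-averaged precision, recall, and F1, and Cohen's Kappa using their standard definitions. Whether the paper reports macro, micro, or weighted averages in each table is not stated here, so the macro averaging is an assumption, and the example matrix is made up.

```python
def classification_metrics(cm):
    """cm[i][j] = number of samples with true class i predicted as class j."""
    n = len(cm)
    total = sum(sum(row) for row in cm)
    accuracy = sum(cm[i][i] for i in range(n)) / total

    precisions, recalls, f1s = [], [], []
    for c in range(n):
        tp = cm[c][c]
        predicted = sum(cm[r][c] for r in range(n))  # column sum
        actual = sum(cm[c])                          # row sum
        p = tp / predicted if predicted else 0.0
        r = tp / actual if actual else 0.0
        precisions.append(p)
        recalls.append(r)
        f1s.append(2 * p * r / (p + r) if (p + r) else 0.0)

    # Cohen's Kappa: agreement corrected for chance agreement.
    expected = sum(sum(cm[c]) * sum(cm[r][c] for r in range(n))
                   for c in range(n)) / (total * total)
    kappa = (accuracy - expected) / (1.0 - expected) if expected < 1.0 else 0.0

    return {
        "accuracy": accuracy,
        "precision": sum(precisions) / n,
        "recall": sum(recalls) / n,
        "f1": sum(f1s) / n,
        "kappa": kappa,
    }

# Hypothetical two-class confusion matrix, for illustration only.
metrics = classification_metrics([[8, 2], [1, 9]])
print(metrics)
```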

We also computed the Area Under the Receiver Operating Characteristic Curve (AUC) as a metric for assessing diagnostic accuracy.
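One common way to compute AUC is as the probability that a randomly chosen positive sample receives a higher score than a randomly chosen negative one. The sketch below implements this for the binary case and averages it one-vs-rest for the multi-class case; the averaging scheme is an assumption, since the paper does not specify how the mean ROC curves were obtained.

```python
def roc_auc(labels, scores):
    """Binary AUC via pairwise comparison; ties count as half a win."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative sample")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def macro_ovr_auc(y_true, prob_rows):
    """One-vs-rest AUC averaged over classes (assumed averaging scheme)."""
    n_classes = len(prob_rows[0])
    aucs = []
    for c in range(n_classes):
        labels = [1 if y == c else 0 for y in y_true]
        scores = [row[c] for row in prob_rows]
        aucs.append(roc_auc(labels, scores))
    return sum(aucs) / n_classes

binary_auc = roc_auc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8])
print(binary_auc)
```

The pairwise form is quadratic in the number of samples; it is shown for clarity rather than efficiency.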

We train our models in a synchronous configuration on four Tesla M10 GPUs. We use the PyTorch framework alongside standard TensorFlow, with a momentum of 0.9. The initial learning rate is 0.01 and the batch size is 32 (8 images per GPU). A learning rate decay rate of 0.001 is applied every three epochs. For regularization, we use a dropout rate of 0.8 and an L2 weight decay of 1e-5. Our image preprocessing follows the approach used by the backbone framework. Additionally, we apply exponential moving averaging with a decay factor of 0.9999. All convolutional layers in our architecture include batch-normalization layers, with an average decay rate of 0.99.
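The exponential moving average of the weights can be sketched as follows. The update rule is the standard one; how it is wired into the actual training loop is not described in the paper, so the helper name and the toy parameter list are illustrative.

```python
def ema_update(shadow, params, decay=0.9999):
    """Return the updated moving average of the parameters."""
    return [decay * s + (1.0 - decay) * p for s, p in zip(shadow, params)]

# Toy example: the averaged weights drift slowly towards the current weights.
shadow = [0.0, 0.0]
current = [1.0, -2.0]
for _ in range(1000):
    shadow = ema_update(shadow, current, decay=0.99)
print(shadow)
```

With a decay of 0.9999, the average effectively spans roughly the last ten thousand updates, which smooths out noise in the final weights.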

Experiment setup

We design our extensive empirical study to answer the following key research questions (RQs): RQ1: How much does MnLeaf improve deep learning performance compared to classical deep learning methods? RQ2: How does each scenario in MnLeaf contribute to correct deep learning? RQ3: How do the key parameters affect the performance of MnLeaf?

In RQ2, we carried out a total of six distinct scenarios, which are outlined in Table 4. For RQ1, we showcase the experiments conducted on the three foundational network baselines. Furthermore, for both RQ3 and RQ4, we employed the two synthetic datasets to assess the performance of the models in the presence of noise. The results will be averaged over experimental runs on two datasets.

Six scenarios with different networks

Comparison With Four Baselines (RQ1)

We compared the results of our proposed method with recently published approaches on the Cassava Leaf Disease dataset. Table 5 reports the comparison in terms of AUC, Kappa, accuracy, F1 Score, recall (sensitivity), and precision. Our method surpasses all of these previous works in accuracy, F1 Score, recall, and precision. This suggests that using a greater number of features leads to higher accuracy in detecting diseased cassava leaves. However, because the characteristics of the various types of diseased cassava leaves are quite similar, the accuracy is still not very high (below 90%).

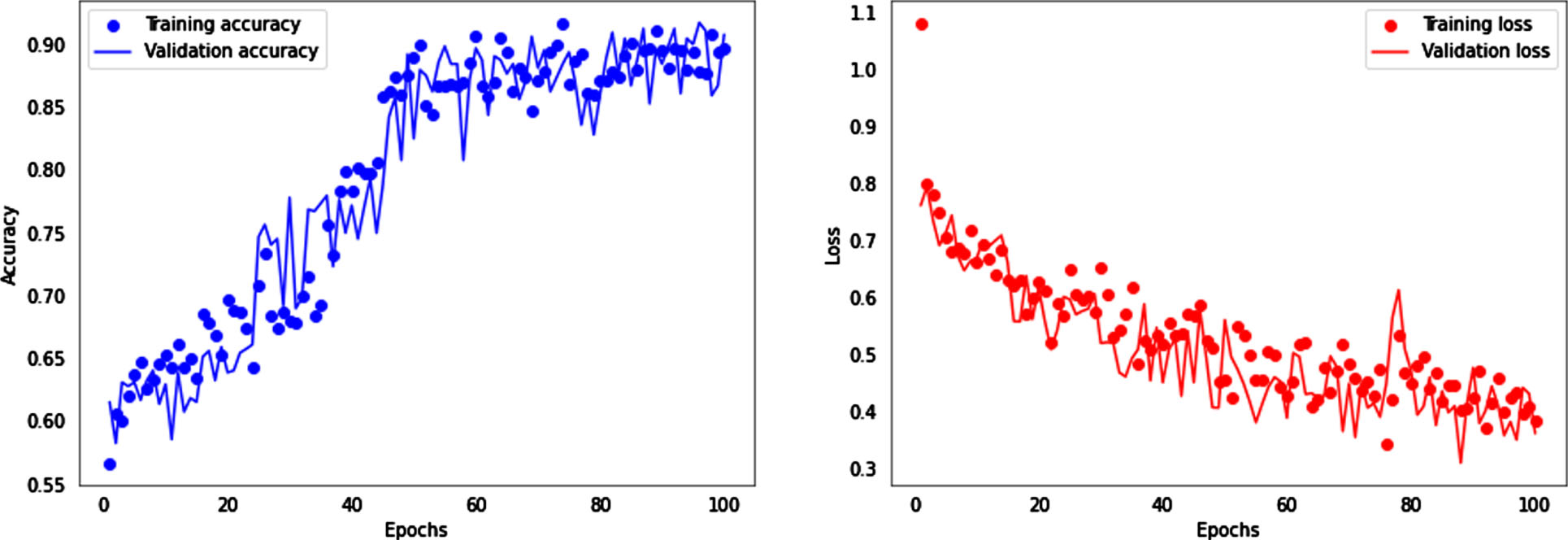

Training and loss progress with TLU-Leaf dataset.

Performance comparison with Cassava Leaf Disease dataset

We conducted model comparisons by ranking the overall accuracy results, as presented in Table 6. The results are reported in percentages. For each model, the authors ran experiments on various datasets, such as the PlantVillage Dataset and Paddy Leaf Dataset, among others. We observe that our proposed MnLeaf model achieves relatively high overall accuracy. It outperforms models like DenseNet 121 [53], CNN-based architecture (modified LeNet) [58], Modified U-net segmentation [59], ResNet152, and InceptionV3 [60] on the PlantVillage dataset. Additionally, MnLeaf’s performance surpasses that of the baseline EfficientNet models based on their respective rankings. In general, our deep learning model achieves a reasonably high level of accuracy, but it does have a weakness in terms of model size, which is quite large (156M params).

Performance comparison with other studies

The outcomes of the six scenarios on the TLU-Leaf dataset are presented in Table 7. The table covers four models capable of detecting leaf diseases, each using its input data and the corresponding network. The sixth experiment performs best, achieving an accuracy of 90% and an F1 Score of 90%. This performance can be attributed to the combination of diverse methods and a well-suited neural network. Because the networks effectively synthesize multiple input features to learn the required characteristics, the AUC, Kappa, precision, recall, and F1 Score all reach about 90% or more, differing only marginally from the accuracy. Given that our data still contain a considerable amount of noise, such as camera shake and blur caused by different devices, an AUC of 91% on the TLU-Leaf dataset is relatively good. Our future efforts will therefore focus on improving data quality and enhancing the recognition capabilities of our models for various types of diseased leaves.

Scenario results with TLU-Leaf Dataset

Because early stopping was used, training halted after 48 epochs. Figure 4 shows the training progression over time, with accuracy and loss trends for the sixth scenario on the TLU-Leaf dataset. Both training and validation accuracies trend upward, while training and validation losses decrease as the number of training iterations increases. The close proximity of the curves suggests the absence of overfitting.

The mean ROC curves for the sixth model are depicted in Figure 5. The figure illustrates that our proposed multiple-model architecture has attained a substantial AUC of 91%, signifying strong performance in discriminating between negative and positive samples.

Mean ROC curves for the classifiers on the test set.

Table 8 presents a detailed disease classification across various leaf conditions in the sixth scenario. The macro-averaged precision, recall, and F1 Score reach 81%, 79%, and 79% respectively, while the micro average and weighted average stand at approximately 91%. Notably, we observed 91% accuracy with our model without excessive tuning. Support is 166 for each of the five disease classes (bamboo leaf disease, verticillium wilt, leaf scorch, turning yellow, plant wilting) and 830 for the accuracy, micro-average, and weighted-average rows.

Leaf Disease classification with TLU-Leaf dataset

We have presented a multiple-model neural architecture for the detection of leaf diseases. The MnLeaf framework detects diseased leaves by combining four main models: ResNeXt50, ViT, EfficientNetB5, and MobileNetV3. Starting from the initial high-quality images, we derive lower-resolution images, noisy images, and segmented images. These four types of images are used to train the four models mentioned above, respectively. The accuracy of the proposed architecture is 99.62% when using the PlantVillage dataset for training and testing, which outperforms other studies using only one model (Table 6). To evaluate the effectiveness of the proposed architecture, we collected a dataset of 2,500 images captured with a phone. Tests with several modern models, including ResNeXt50, ViT, EfficientNetB5, MobileNetV3, and multi-network combinations, show that our proposed method reaches a 90% F1-score, better than the other methods. These results show the promise of the proposed method.

The limitations of current methods for plant disease detection can be summarized as the following challenges: (1) large model size; (2) limited data availability; (3) use of real-world images; (4) more accurate disease classification; and (5) disease stage identification. In ongoing work, we focus on detecting diseases at different positions on plants and at various disease stages. The developed model can be integrated into a widely deployable IoT-based system, enabling early remote detection of leaf diseases.

Acknowledgement

This work was supported by Thuyloi University, Vietnam.