Abstract

Agriculture underpins the growth of humanity and is widely regarded as one of the most significant sectors of civilization. Plants are a primary source of energy for humans and also provide nutrients and medicines. Any damage or disease caused by pathogens such as viruses and bacteria during cultivation reduces productivity, so preventing such diseases and taking the required precautions is crucial. Accurately identifying these diseases is a crucial first step for both businesses and farmers. In this research, we implemented six Convolutional Neural Networks (CNNs) that accept plant leaf images as input, together with the Enhanced Symbiotic Organism Search (ESOS) optimization algorithm. We compare the models extensively on accuracy, precision, recall, and F1-score. Deep learning in general, and CNNs in particular, continue to advance in image recognition and classification, and the literature contains a variety of CNN designs. The dataset size, the number of classes, the model’s weights, hyperparameters, and optimizers are a few examples of the variables that affect a CNN model’s performance. Because of their benefits, transfer learning and fine-tuning of pre-trained models are now very popular. This study examines six popular CNN models: DenseNet, MobileNet, EfficientNet, VGG19, ResNet, and Inception. DenseNet achieves the highest accuracy, 98%, among the models compared.

Keywords

Introduction

Agriculture has contributed significantly to the growth of India’s economy, and both the economy and the population rely heavily on it. Farmers choose a crop based on the soil type, the local weather, and the crop’s economic value. Faced with population growth, climate change, and political uncertainty, the agricultural sector has begun looking for novel ways to increase food production. This motivates researchers to seek new, highly productive methods that are precise and accurate. Precision agriculture technologies let farmers collect data and information to decide how to maximize agricultural output. Abnormal leaf growth and color are two common indications that plants are suffering from distortion, developmental problems, withering, or damage. Parasites and viruses can severely damage crops and spread disease among plants, and they can also be harmful to human health. Crops require meticulous inspection and appropriate management in order to guard against significant losses [2]. Plant infections can affect the leaves, stems, and fruits, among other parts of the plant. Compared with flowers and fruits, leaves offer a notable advantage because they are present throughout all seasons.

Climate change has considerably increased the likelihood of plant diseases. Plant diseases significantly affect crop development, production, and quality, so a technique for diagnosing and controlling them at an early stage is needed. An early disease forecasting system must take into account crop phenology, meteorological factors, and the timing of a disease’s attack. The date of the pest observation (BioFix) and the most recent meteorological data for a field are used to estimate pest attacks under field conditions. The main goals of a forecast system are to minimize the use of control methods and fungicides and to minimize crop loss. Creating a forecasting model requires monitoring field micrometeorological parameters and pest/disease data simultaneously. Plant diseases are identified and classified from the symptoms and signs that the pathogens produce. In many cases, detecting diseases on the basis of symptoms alone is extremely challenging. As a result, identification via digital images has been advancing. However, multispectral and hyperspectral photos are more expensive for farmers and offer less information.

Literature survey

Prior studies have compared the capabilities of deep learning models (DenseNet, MobileNet, EfficientNet, VGG19, ResNet, and Inception) to detect tomato plant diseases. The disease classification accuracy obtained experimentally with these deep learning approaches is generally high. Table 1 summarizes various deep learning models with their pros and cons.

Literature review of various approaches with their pros and cons


A publicly accessible dataset called PlantVillage was used to train all of the deep learning models. It includes 10,050 images of healthy and diseased leaves belonging to ten different classes (Fig. 1 displays several plant diseases). To avoid overfitting, the dataset was divided into three groups: training, validation, and testing datasets.

Sample images of diseased leaves.
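The three-way split described above can be sketched as follows. This is a minimal illustration only: the 70/15/15 proportions, the fixed seed, and the function name `split_dataset` are assumptions, since the split ratios are not stated in the text.

```python
import random


def split_dataset(items, train_pct=70, val_pct=15, seed=42):
    """Shuffle items and split them into train/validation/test lists.

    The percentages are hypothetical; the remainder goes to the test set.
    """
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    # Integer arithmetic avoids floating-point rounding surprises.
    n_train = len(shuffled) * train_pct // 100
    n_val = len(shuffled) * val_pct // 100
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]
    return train, val, test


# Image indices stand in for the 10,050 leaf images.
train, val, test = split_dataset(range(10050))
```

Keeping the test portion untouched until the final evaluation is what makes the reported accuracy a fair estimate of performance on unseen leaves.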

The symbiotic link between organisms in nature served as the inspiration for the metaheuristic optimization algorithm known as Enhanced Symbiotic Organism Search (ESOS). To solve challenging optimization problems, it imitates the interactions and dynamics seen in ecological systems. Three different types of organisms (host organisms, symbionts, and free-living organisms) are used to model the optimization process in SOS. Each organism in the search space represents a potential solution to the optimization problem.

The optimization process of SOS consists of the following steps:

Initialization: Produce a starting population of symbionts and hosts. Assign each organism a fitness score by evaluating the objective function.

Symbiosis Phase: Symbionts and host organisms engage in symbiotic relationships. They communicate and adjust based on their individual fitness levels. By collaborating and exchanging knowledge, this stage seeks to improve the quality of solutions.

Reproduction Phase: Select the organisms that performed well in the symbiosis phase. Reproduce, mutate, or recombine them to produce new host organisms and symbionts.

Free-living Phase: Permit some organisms to exist independently of symbiotic relationships. This stage encourages exploration of the search space and broadens the range of potential solutions.

Evaluation of Fitness: Assess the fitness of the organisms created during the reproduction phase, and update their fitness values based on the objective function.

Termination Criteria: Choose a condition for termination, such as reaching a particular solution quality or a set number of iterations.

The system seeks to discover optimal or nearly optimal solutions for the given optimization issue through the interactions and evolution of organisms in ESOS. It makes use of the cooperative, competitive, and adaptive processes found in natural ecosystems to effectively comb the search space and converge on promising answers.

Pseudocode for ESOS algorithm
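The phases described above can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the implementation used in this study: the concrete update rules (moving toward the current best relative to a partner's midpoint, Gaussian mutation, random re-seeding), the function name `esos_sketch`, and all parameter values are hypothetical choices made only to obtain a runnable example.

```python
import random


def esos_sketch(objective, bounds, pop_size=20, iterations=100, seed=0):
    """Minimize `objective` over box `bounds`; returns (position, fitness)."""
    rng = random.Random(seed)

    def clip(x):
        return [min(max(v, lo), hi) for v, (lo, hi) in zip(x, bounds)]

    def random_organism():
        return [rng.uniform(lo, hi) for lo, hi in bounds]

    # Initialization: random population, each organism scored by the objective.
    population = [random_organism() for _ in range(pop_size)]
    fitness = [objective(x) for x in population]

    for _ in range(iterations):  # Termination: fixed number of iterations.
        best = population[min(range(pop_size), key=fitness.__getitem__)]

        # Symbiosis phase (assumed rule): organism i interacts with a random
        # partner j and moves toward the best; kept only if fitness improves.
        for i in range(pop_size):
            j = rng.choice([k for k in range(pop_size) if k != i])
            mutual = [(a + b) / 2 for a, b in zip(population[i], population[j])]
            cand = clip([a + rng.random() * (g - m)
                         for a, g, m in zip(population[i], best, mutual)])
            f = objective(cand)
            if f < fitness[i]:
                population[i], fitness[i] = cand, f

        # Reproduction phase (assumed rule): the worse half is replaced by
        # mutated copies of the better half, then re-evaluated.
        order = sorted(range(pop_size), key=fitness.__getitem__)
        half = pop_size // 2
        worse = order[pop_size - half:]
        for parent, child in zip(order[:half], worse):
            mutated = clip([v + rng.gauss(0.0, 0.1 * (hi - lo))
                            for v, (lo, hi) in zip(population[parent], bounds)])
            population[child], fitness[child] = mutated, objective(mutated)

        # Free-living phase (assumed rule): one organism from the worse half
        # is re-seeded at random to keep exploring the search space.
        explorer = rng.choice(worse)
        population[explorer] = random_organism()
        fitness[explorer] = objective(population[explorer])

    best_idx = min(range(pop_size), key=fitness.__getitem__)
    return population[best_idx], fitness[best_idx]


# Toy objective: the sphere function, whose minimum is 0 at the origin.
best_x, best_f = esos_sketch(lambda x: sum(v * v for v in x), [(-5.0, 5.0)] * 2)
```

For hyperparameter tuning, the objective would instead map a vector of hyperparameters (for example, learning rate and dropout rate) to a validation loss; that mapping is outside the scope of this sketch.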

Deep learning architectures

Combining a deep learning architecture with the ESOS algorithm offers a viable way to improve the optimization procedure during training. ESOS is an evolutionary optimization technique that draws inspiration from natural processes. Its purpose here is to optimize the hyperparameters or parameters of a deep learning model.

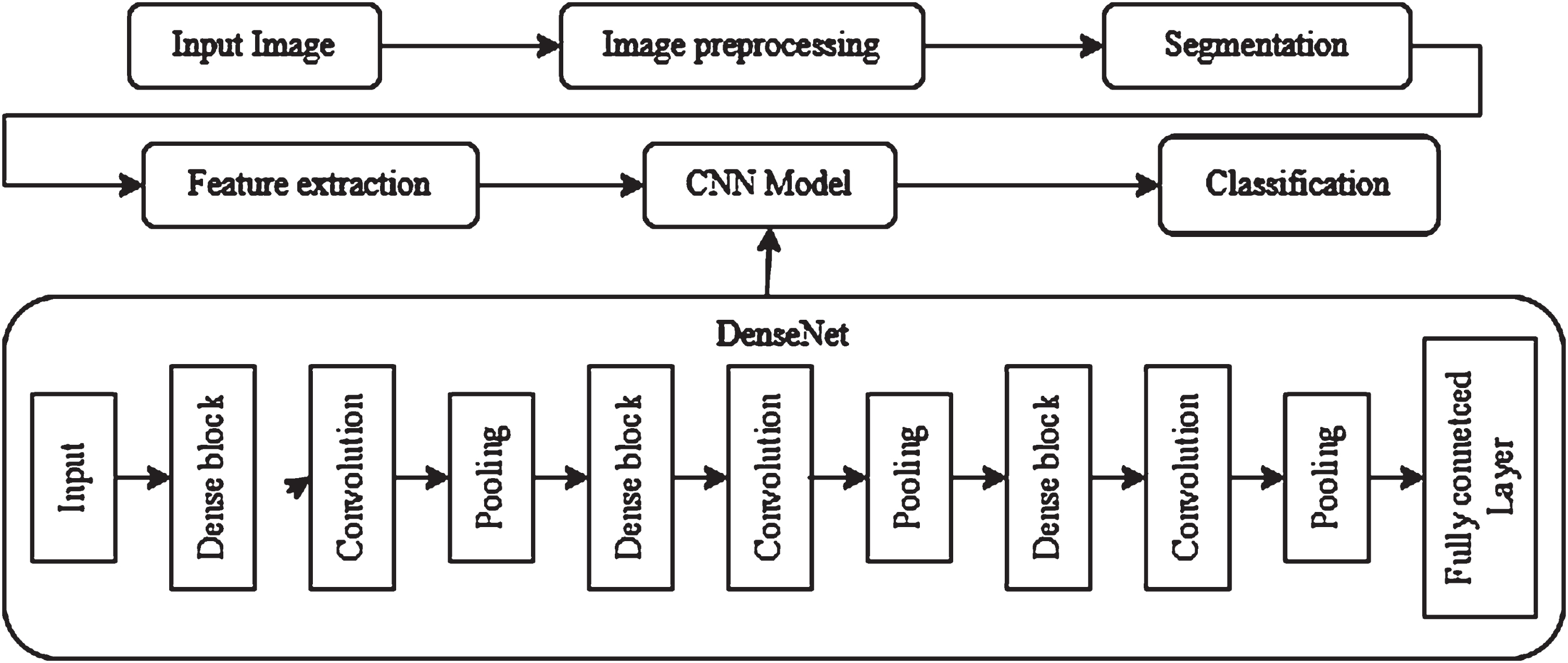

DenseNet

A DenseNet is a type of convolutional neural network that takes advantage of dense connections between layers through Dense Blocks, which connect all layers (with matching feature-map sizes) directly with one another [11]. To preserve the feed-forward nature of the network, each layer receives additional inputs from all preceding layers and passes its own feature maps on to all subsequent layers [12]. The feature maps are combined by concatenation, so each layer receives the “collective knowledge” of all layers before it. Figure 1a illustrates the basic block diagram of the DenseNet architecture.

a). Block diagram of DenseNet architecture.
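The concatenation pattern of a dense block can be illustrated with a toy sketch. The function `dense_block`, the flat-list representation of feature maps, and the averaging stand-in for a real convolutional layer are all assumptions made for illustration; only the connectivity pattern reflects the description above.

```python
def dense_block(x, num_layers, growth_rate):
    """Toy dense block operating on a flat list of feature values.

    Returns the concatenated output and the input width seen by each layer.
    """
    features = list(x)
    input_widths = []
    for _ in range(num_layers):
        # Each layer sees everything produced so far (input + earlier layers).
        input_widths.append(len(features))
        # Stand-in for a real layer: emit `growth_rate` new features.
        mean = sum(features) / len(features)
        new_features = [mean] * growth_rate
        # Concatenate rather than add, so earlier features are preserved.
        features = features + new_features
    return features, input_widths


out, widths = dense_block([1.0, 2.0, 3.0, 4.0], num_layers=3, growth_rate=2)
# Input width grows by `growth_rate` per layer: 4, 6, 8; output width is 10.
```

The steady growth in input width shows why each layer only needs to add a small number of new feature maps: earlier features remain directly accessible.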

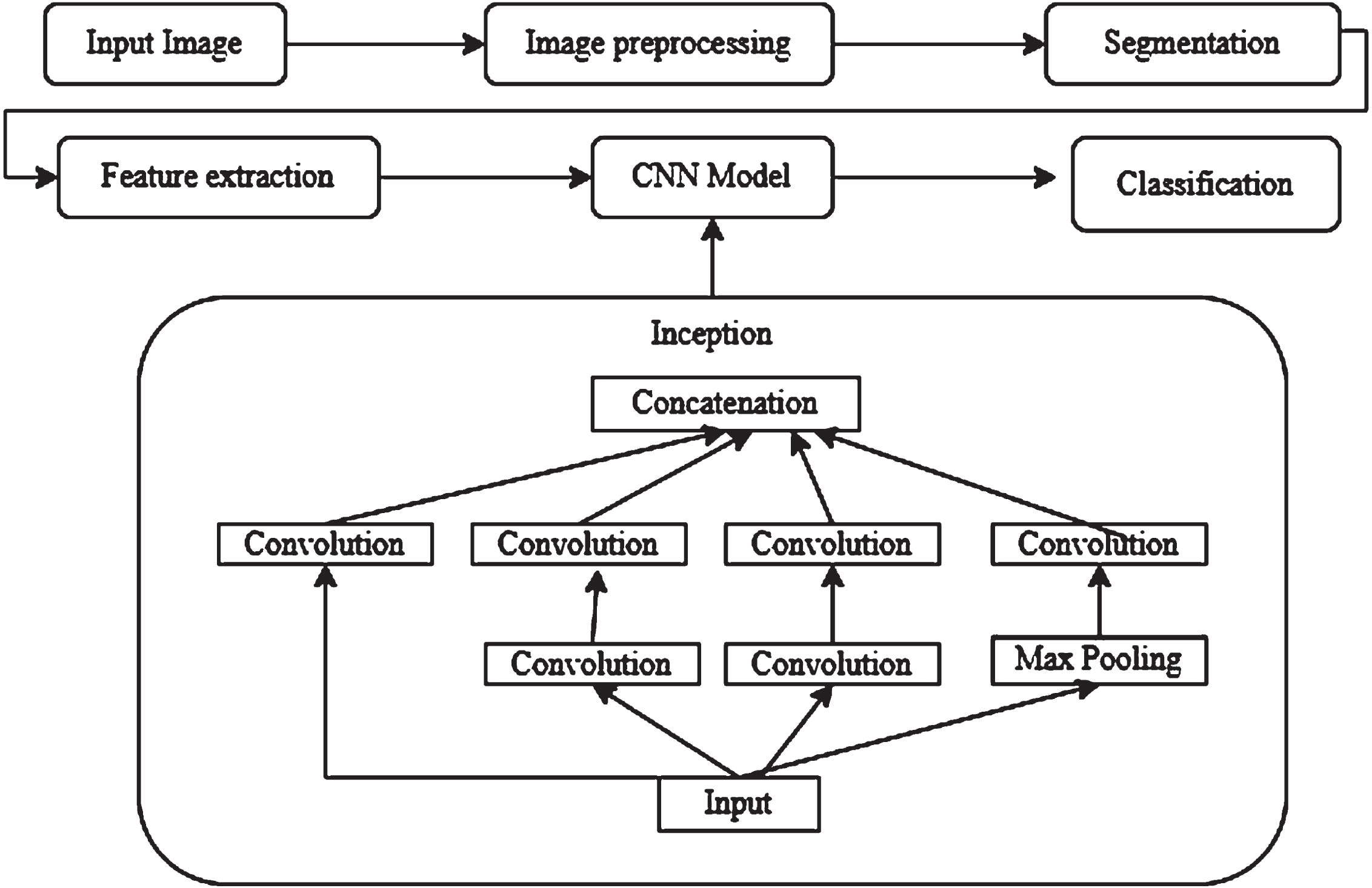

Inception

Inception is a 48-layer deep CNN (DCNN) that extends GoogLeNet. It builds feature maps by applying convolution filters to the input image through a combination of symmetric and asymmetric blocks. Average pooling computes the mean of each patch of a feature map, while max pooling keeps the largest value in each patch; pooling helps reduce the number of parameters that must be learned [13] and lowers processing costs. Concatenation layers merge inputs of matching size and are usually followed by dropout, which improves accuracy and reduces overfitting. Figure 1b illustrates the basic block diagram of the Inception architecture.

b). Block diagram of Inception architecture.
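The difference between average pooling and max pooling can be shown on a small example. The helper `pool2d` and the 4×4 input are hypothetical and serve only to illustrate the two operations on non-overlapping 2×2 patches.

```python
def pool2d(image, size, reduce):
    """Apply `reduce` to each non-overlapping size x size patch of a 2D list."""
    rows, cols = len(image), len(image[0])
    return [
        [
            reduce([image[r + dr][c + dc]
                    for dr in range(size) for dc in range(size)])
            for c in range(0, cols - size + 1, size)
        ]
        for r in range(0, rows - size + 1, size)
    ]


def mean(values):
    return sum(values) / len(values)


image = [
    [1, 3, 2, 0],
    [5, 7, 4, 6],
    [9, 8, 1, 2],
    [0, 4, 3, 5],
]
max_pooled = pool2d(image, 2, max)   # [[7, 6], [9, 5]]
avg_pooled = pool2d(image, 2, mean)  # [[4.0, 3.0], [5.25, 2.75]]
```

Both operations shrink the 4×4 map to 2×2, which is how pooling cuts the amount of computation in later layers.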

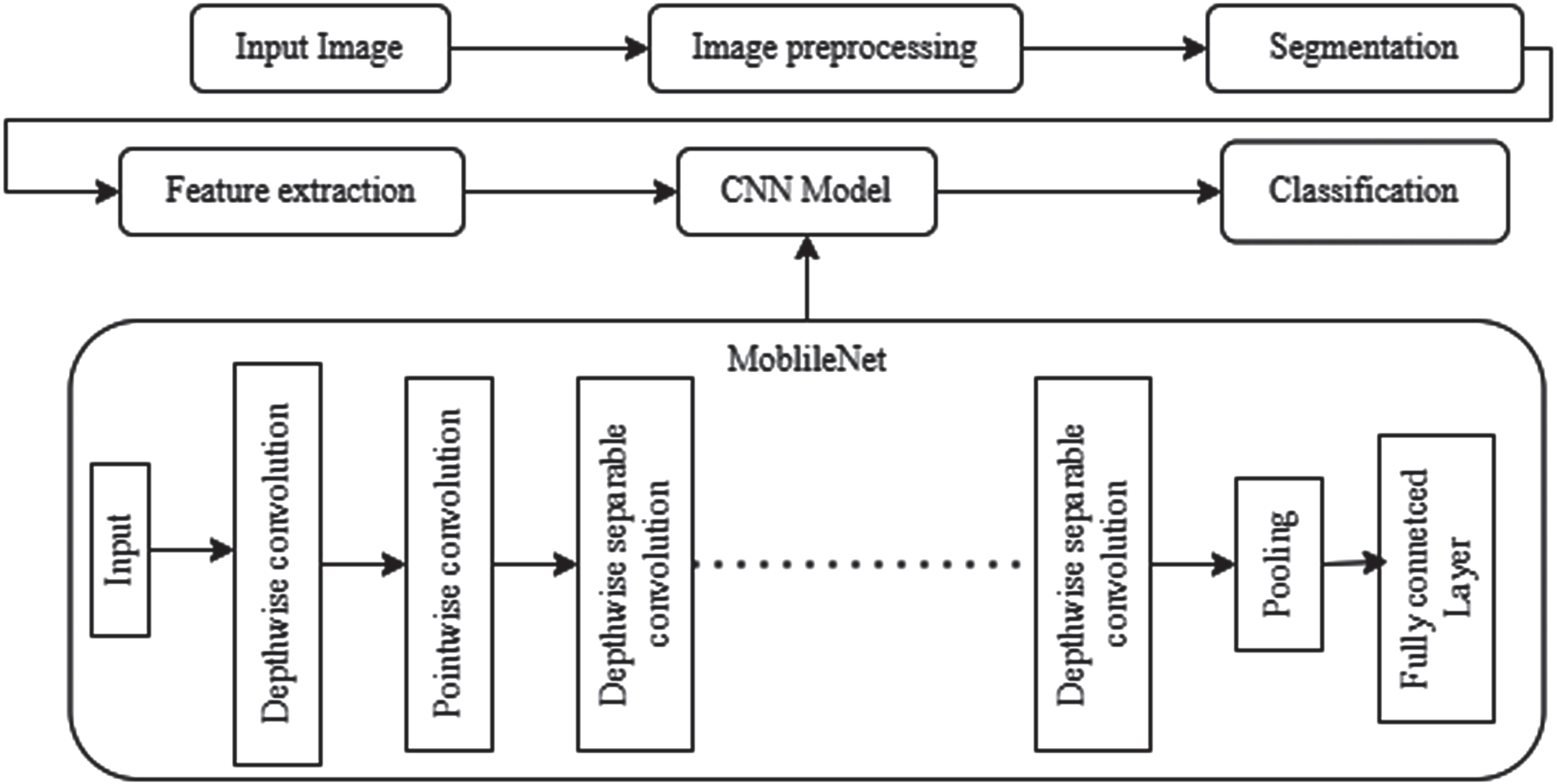

MobileNet

MobileNet is a class of convolutional neural networks that Google open-sourced and that can be used to train very fast and compact classifiers. It was TensorFlow’s first mobile computer vision model. MobileNet uses depthwise separable convolutions, which dramatically reduce the number of parameters compared with networks of the same depth that use standard convolutions [14]. The result is a lightweight deep neural network. Figure 1c illustrates the basic block diagram of the MobileNet architecture.

c). Block diagram of MobileNet architecture.
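The parameter saving from depthwise separable convolutions can be checked with simple arithmetic. The sketch below counts weights only (biases are ignored), and the layer sizes used in the example (3×3 kernel, 32 input channels, 64 output channels) are illustrative assumptions rather than values taken from this study.

```python
def standard_conv_params(kernel, in_ch, out_ch):
    """Weights in a standard convolution: one k x k x in_ch filter per output."""
    return kernel * kernel * in_ch * out_ch


def separable_conv_params(kernel, in_ch, out_ch):
    """Weights in a depthwise separable convolution."""
    depthwise = kernel * kernel * in_ch  # one k x k filter per input channel
    pointwise = in_ch * out_ch           # 1 x 1 convolution mixing channels
    return depthwise + pointwise


standard = standard_conv_params(3, 32, 64)    # 18432
separable = separable_conv_params(3, 32, 64)  # 2336
ratio = separable / standard                  # about 0.127
```

For this hypothetical layer the separable version needs roughly one eighth of the weights, which matches the general ratio 1/out_ch + 1/kernel².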

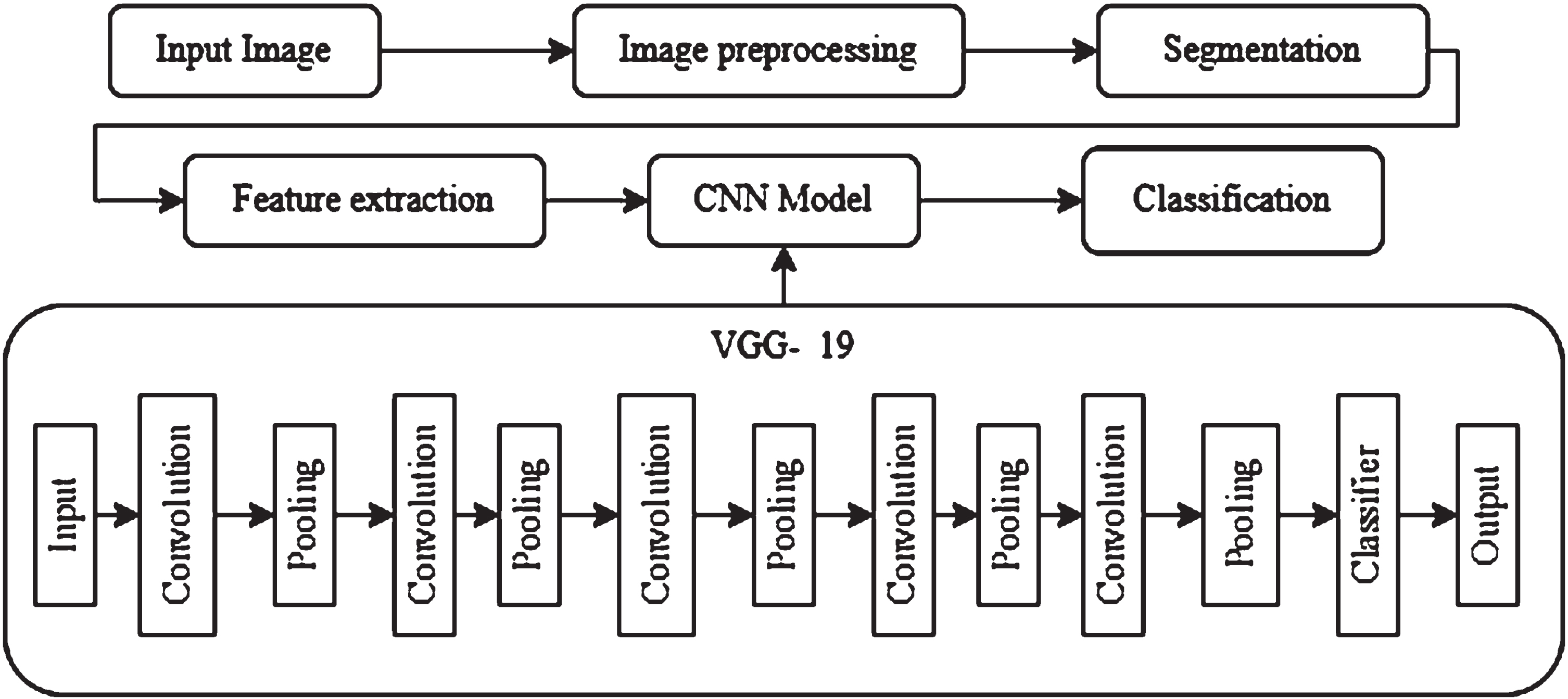

VGG19

During training, the network iterates through all of the training examples using forward and backward propagation, so that the neurons with the greatest predictive power contribute most to the final prediction. In forward propagation, the loss and cost functions are calculated by comparing the predicted target with the actual target for each labeled image [15]. In backward propagation, gradient descent updates each neuron’s weights and bias, giving greater weight to the neurons with the highest predictive potential until the best combination of activations is found. Figure 1d illustrates the basic block diagram of the VGG19 architecture.

d). Block diagram of VGG19 architecture.
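The forward and backward passes described above can be illustrated on a deliberately tiny model. The sketch below fits a single weight and bias with plain gradient descent on a mean squared error loss; the model, the data, the learning rate, and the function name `train_linear` are assumptions chosen for illustration and are far simpler than VGG19 itself.

```python
def train_linear(xs, ys, lr=0.1, epochs=500):
    """Fit y = w * x + b by gradient descent; returns (w, b, loss_history)."""
    w, b = 0.0, 0.0
    n = len(xs)
    history = []
    for _ in range(epochs):
        # Forward propagation: predictions and mean squared error loss.
        preds = [w * x + b for x in xs]
        loss = sum((p - y) ** 2 for p, y in zip(preds, ys)) / n
        history.append(loss)
        # Backward propagation: gradients of the loss w.r.t. w and b.
        grad_w = sum(2 * (p - y) * x for p, y, x in zip(preds, ys, xs)) / n
        grad_b = sum(2 * (p - y) for p, y in zip(preds, ys)) / n
        # Gradient descent update of the weight and the bias.
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b, history


# Toy data generated from y = 2x + 1.
w, b, history = train_linear([0.0, 1.0, 2.0, 3.0], [1.0, 3.0, 5.0, 7.0])
```

A deep network repeats the same loop with many more parameters, using the chain rule to obtain each gradient.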

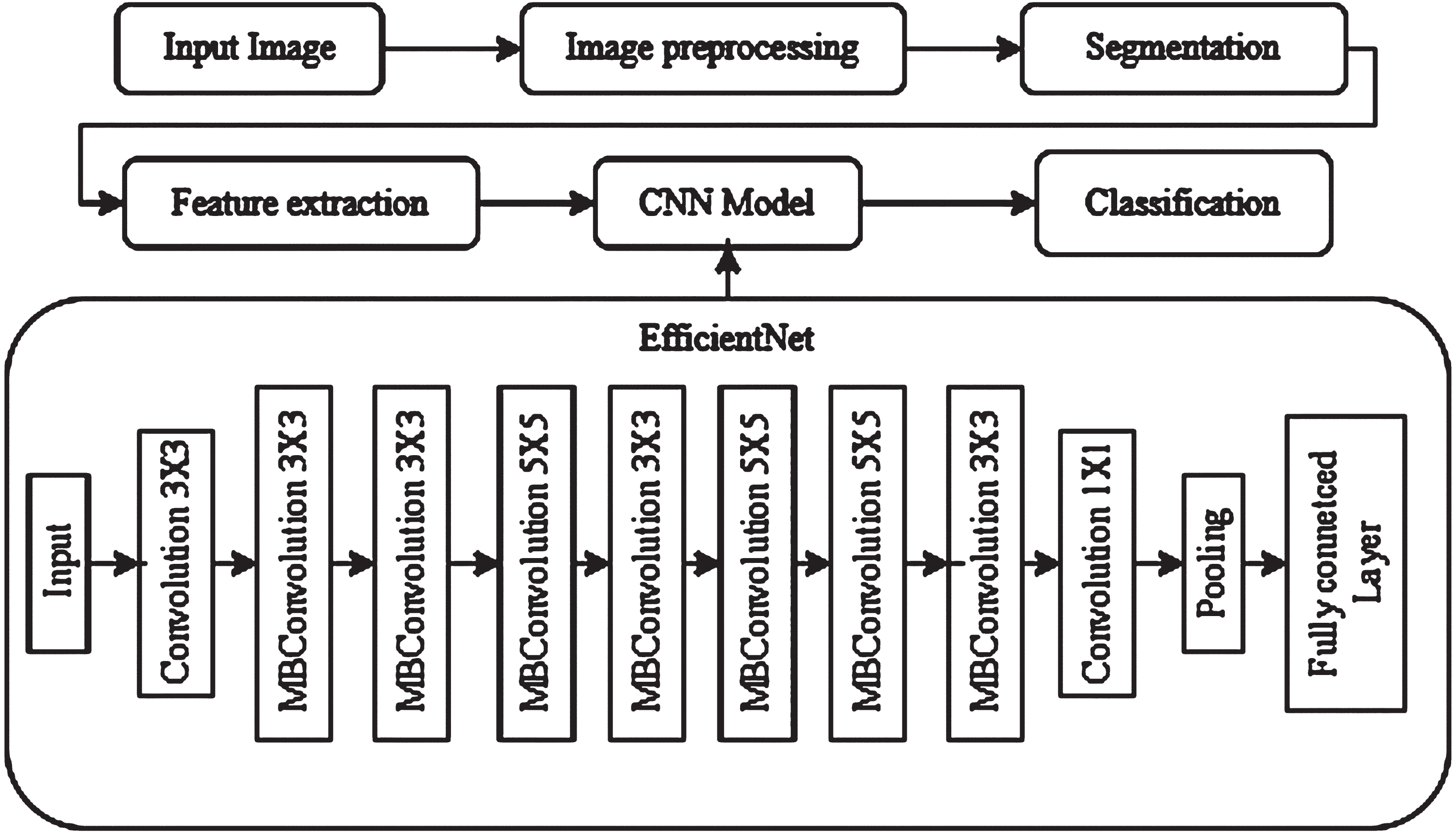

EfficientNet

The design principles of EfficientNet centre on striking a balance between model accuracy, computational efficiency, and model complexity. It addresses the problem of building models that are highly accurate while remaining computationally economical, which is essential for real-world applications where computational resources are frequently constrained. EfficientNet’s architecture is built on the idea of compound scaling, which scales the network’s depth, width, and resolution together. Compared with earlier models that were scaled individually in depth or width, EfficientNet delivers improved performance by keeping all three scaling factors in balance. One of its distinguishing characteristics is the use of a compound scaling formula to determine the scaling factors for depth, width, and resolution [16]. This formula is intended to give the model a favorable accuracy-to-efficiency ratio. EfficientNet thus improves accuracy while retaining computational economy as the network is scaled up. Figure 1e illustrates the basic block diagram of the EfficientNet architecture.

e). Block diagram of EfficientNet architecture.
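Compound scaling can be sketched numerically. The base coefficients below (alpha = 1.2, beta = 1.1, gamma = 1.15) are the values reported in the original EfficientNet publication, not values measured in this study, and the function name `compound_scale` is hypothetical.

```python
def compound_scale(phi, alpha=1.2, beta=1.1, gamma=1.15):
    """Return (depth, width, resolution) multipliers for compound coefficient phi."""
    return alpha ** phi, beta ** phi, gamma ** phi


# The coefficients are chosen so that alpha * beta^2 * gamma^2 is close to 2,
# meaning each unit increase of phi roughly doubles the computational cost.
flops_factor = 1.2 * 1.1 ** 2 * 1.15 ** 2

depth, width, resolution = compound_scale(phi=2)
```

A single coefficient `phi` therefore controls all three dimensions at once, instead of tuning depth, width, and resolution separately.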

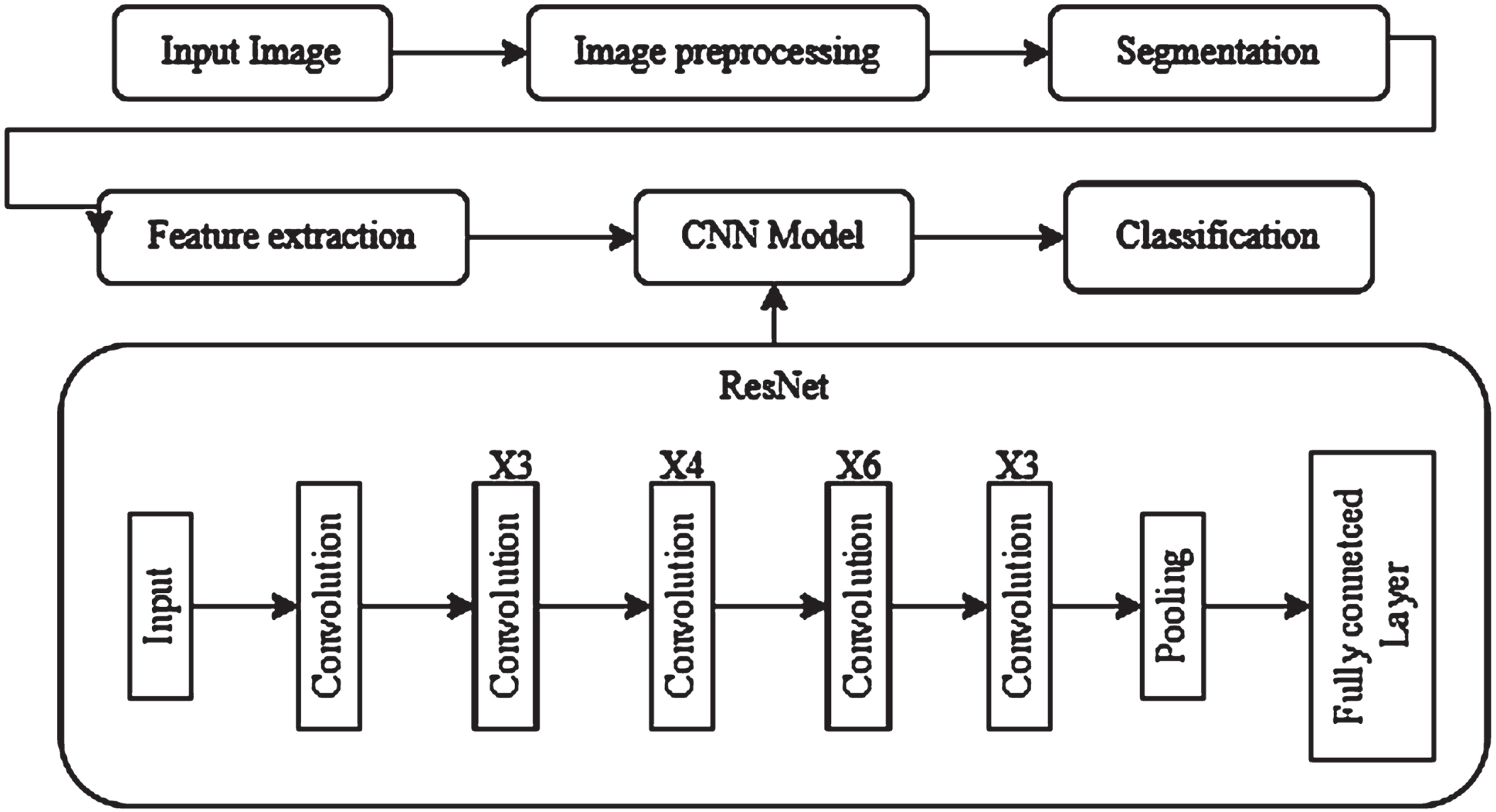

ResNet

ResNet’s main innovation is its solution to the vanishing gradient problem in deep neural networks. As a network gets deeper, gradients may shrink, making it harder for the network to learn and propagate relevant information. ResNet addresses this by introducing residual connections, also known as skip connections, which let the network bypass some layers and pass data directly from earlier layers to later ones. The residual block is the fundamental unit of a ResNet: it consists of two or more convolutional layers followed by a skip connection that adds the block’s input to its output [17]. ResNet architectures come in a variety of depths, such as ResNet-18, ResNet-34, ResNet-50, ResNet-101, and ResNet-152, where the number indicates the total number of layers in the network. On a variety of image classification tasks, deeper ResNet models have demonstrated enhanced performance, frequently outperforming shallower architectures. Beyond image classification, ResNet architectures have also been used for object detection, semantic segmentation, and image synthesis [18]. Its adaptability and efficacy make ResNet a popular choice for researchers and practitioners in computer vision. Figure 1f illustrates the basic block diagram of the ResNet architecture.

f). Block diagram of ResNet architecture.
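The skip connection can be sketched in a few lines. The function `residual_block` and the two toy transforms are hypothetical stand-ins for the convolutional layers of a real residual block; the point is only that the block outputs the transformed features plus the unchanged input.

```python
def residual_block(x, transform):
    """Return transform(x) + x element-wise (the skip connection)."""
    return [xi + fi for xi, fi in zip(x, transform(x))]


def zero_transform(x):
    # A layer stack that has learned nothing contributes zeros.
    return [0.0] * len(x)


def halve(x):
    # Stand-in for a learned transformation.
    return [0.5 * v for v in x]


identity_out = residual_block([1.0, 2.0], zero_transform)  # [1.0, 2.0]
residual_out = residual_block([1.0, 2.0], halve)           # [1.5, 3.0]
```

Because the block falls back to the identity when its layers output zeros, stacking more blocks does not have to degrade the signal, which is what eases the training of very deep networks.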

In this section, we evaluate each model using accuracy, precision, recall, and F1-score [19]. A comprehensive breakdown of each model’s output parameters can be found in Table 2.

Comprehensive breakdown of model output parameters


The highlighted values in Table 2 show the best model results. DenseNet performed best, producing 98% accuracy. This model was configured with the Adam optimizer, and training took 156 seconds. The other models (MobileNet, EfficientNet, ResNet, Inception, and VGG19) reached accuracies of 89% or higher. Of the six trained models, VGG19 had the lowest accuracy. The Inception model took the longest to train (214 seconds) but did not achieve the desired results.

Accuracy is used to gauge performance; it is calculated as the ratio of the model’s correct predictions to the total number of predictions. The average accuracy of each model on the enhanced images is shown in Table 2. According to Table 2, the testing accuracies of DenseNet, MobileNet, EfficientNet, VGG19, ResNet, and Inception are 98%, 93%, 95%, 89%, 95%, and 96%, respectively.

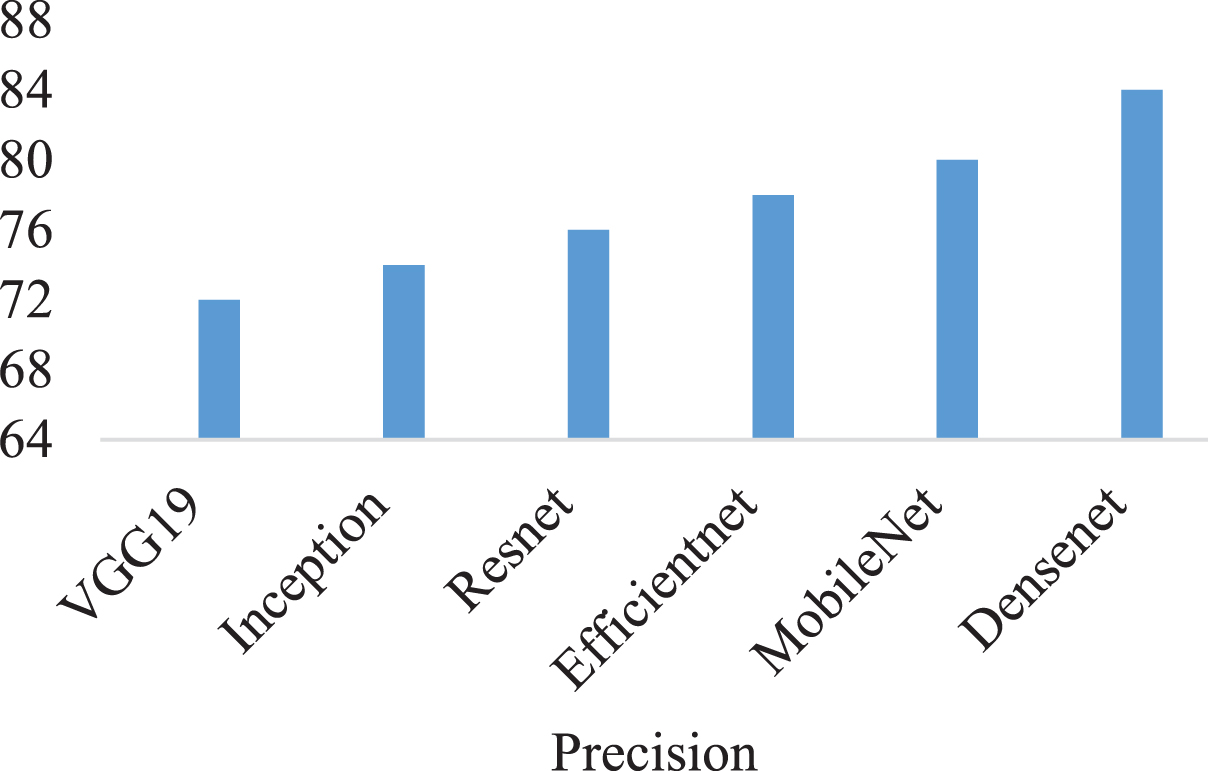

Furthermore, the recall, precision, and F1 score of each model are assessed. Precision is defined as the ratio of the model’s true positive (TP) predictions to all of its positive predictions (TP+FP). Precision is calculated as follows:

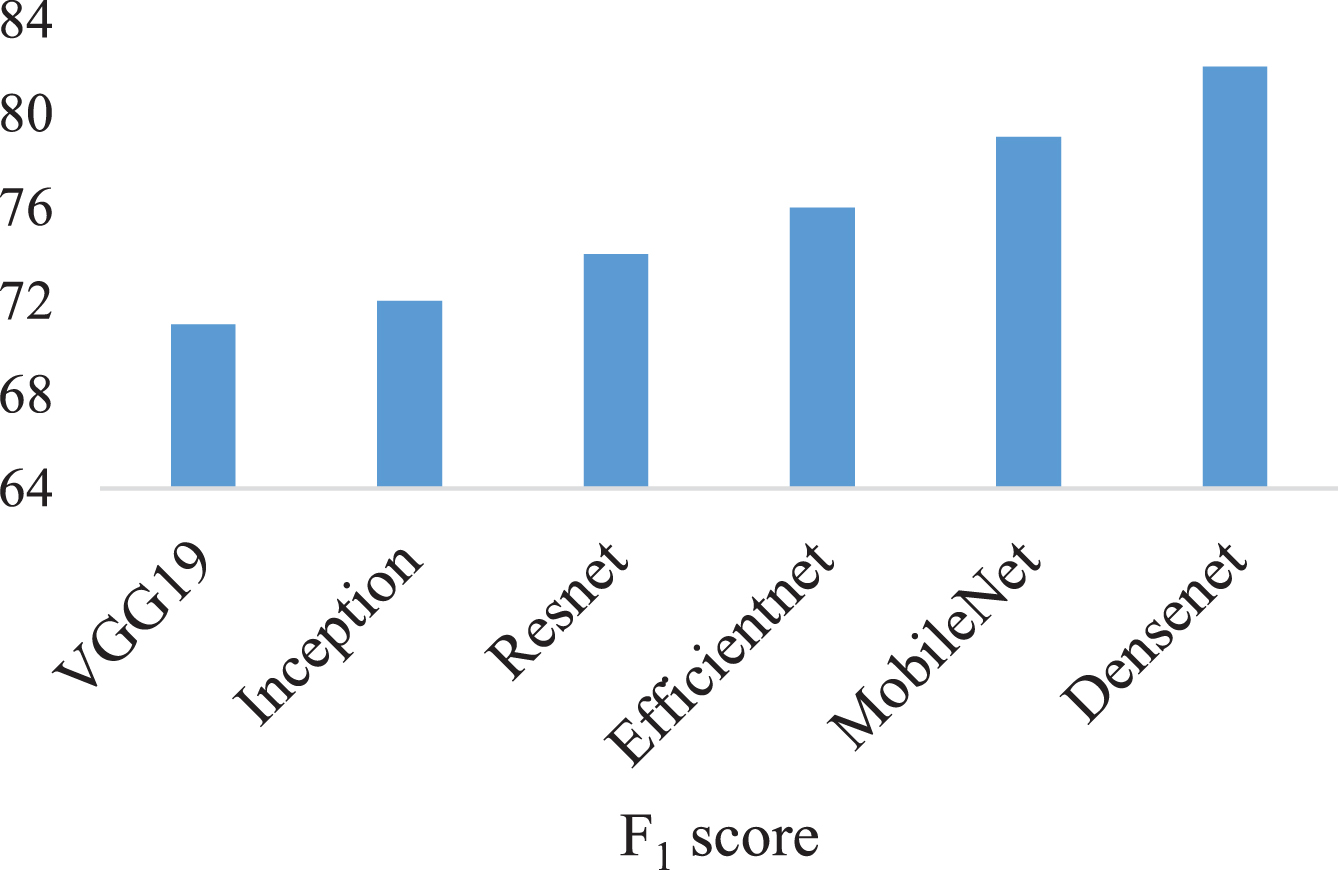

Figure 2 shows the precision of the various models. The precision values were 84 for DenseNet, 80 for MobileNet, 78 for EfficientNet, 72 for VGG19, 76 for ResNet, and 74 for Inception. DenseNet provided higher precision than the other models. Recall is defined as the ratio of true positives (TP) to all actual positive samples (TP+FN). Recall can be calculated using the formula below:

Comparing Precision Metrics across CNN Models.

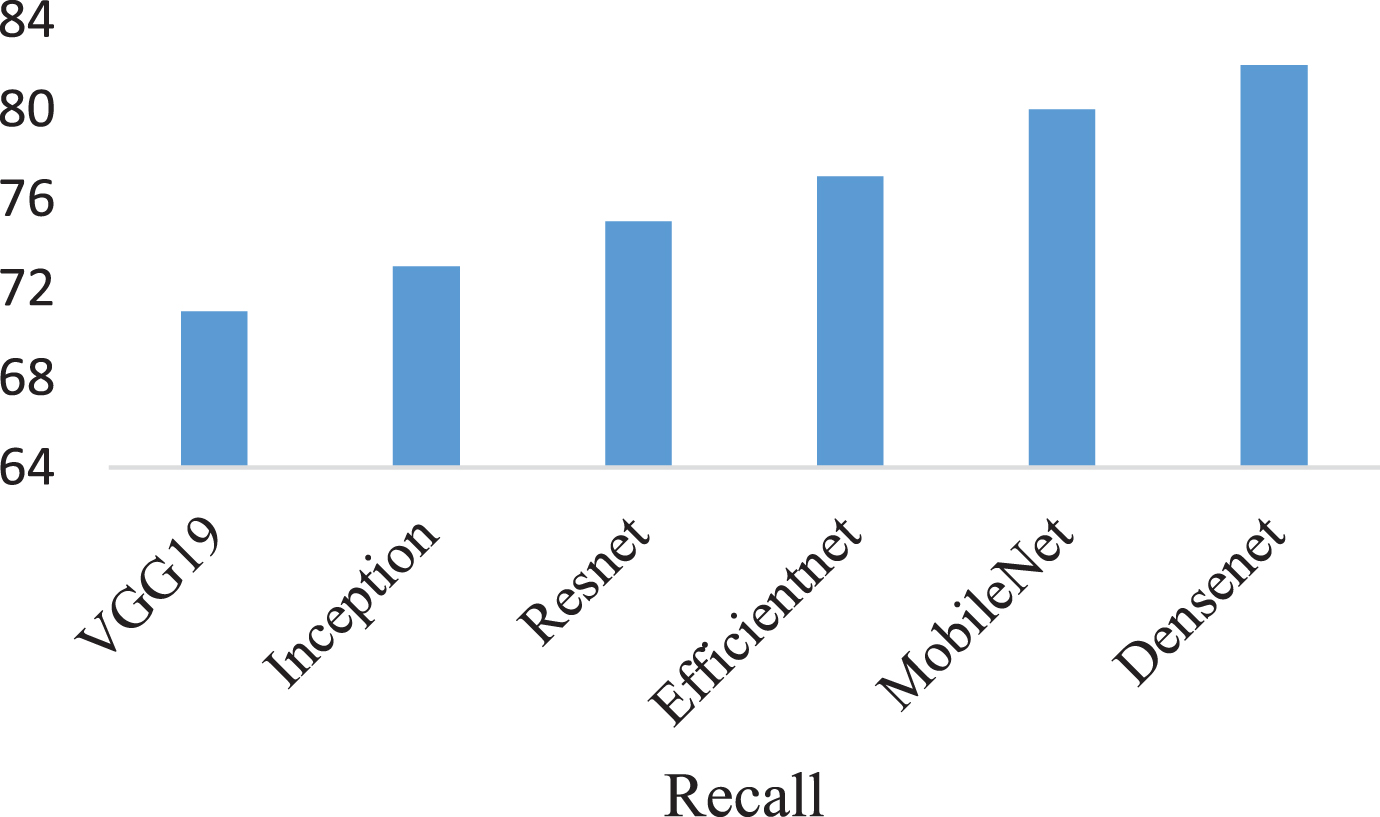

The recall percentages for the various models are shown in Fig. 3. The recall values were 82 for DenseNet, 80 for MobileNet, 77 for EfficientNet, 71 for VGG19, 75 for ResNet, and 73 for Inception. From the recall values, we can infer that DenseNet outperforms the other deployed models. Another popular performance indicator for deep learning algorithms is the F1 score, which expresses the balance between recall and precision. It is calculated as the harmonic mean of recall and precision:
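Written in terms of the counts defined in the text (TP, FP, FN), the standard definitions of the three metrics are as follows; the notation is a reconstruction from those definitions rather than a copy of the original typeset formulas.

$$
\text{Precision} = \frac{TP}{TP + FP}, \qquad
\text{Recall} = \frac{TP}{TP + FN}, \qquad
F_1 = 2 \cdot \frac{\text{Precision} \cdot \text{Recall}}{\text{Precision} + \text{Recall}} = \frac{2\,TP}{2\,TP + FP + FN}
$$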

Recall Evaluation across Different CNN Architectures.

The F1 scores for the models are shown in Fig. 4. The F1 values attained were 82 for DenseNet, 79 for MobileNet, 76 for EfficientNet, 71 for VGG19, 74 for ResNet, and 72 for Inception. We can infer from Fig. 4 that DenseNet performs better than the other models.

F1 score Performance Assessment of CNN Models.
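The four evaluation metrics used in this section can be computed from confusion-matrix counts as in the sketch below. The function name `classification_metrics` and the example counts are hypothetical and are not results from this study; for a ten-class problem, such counts would typically be computed per class and then averaged.

```python
def classification_metrics(tp, fp, fn, tn):
    """Compute accuracy, precision, recall, and F1 from binary counts."""
    accuracy = (tp + tn) / (tp + fp + fn + tn)
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    # F1 is the harmonic mean of precision and recall.
    f1 = 2 * precision * recall / (precision + recall)
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}


# Hypothetical counts for one disease class.
metrics = classification_metrics(tp=40, fp=10, fn=20, tn=30)
```

With these counts, precision (0.8) exceeds recall (about 0.67), and the F1 score (about 0.73) falls between the two, closer to the lower value.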

In this section, we assess the results of our proposed model against some of the existing deep learning models, such as DenseNet, MobileNet, EfficientNet, VGG19, ResNet, and Inception. Table 2 compares the number of parameters and the classification accuracy of the deep learning models. When we compared our suggested CNN model with DenseNet to the existing deep learning models, we found that it was more accurate while using fewer parameters [20]. The suggested CNN with DenseNet reaches an accuracy of 98% with relatively few parameters, while MobileNet, EfficientNet, VGG19, ResNet, and Inception reach 93%, 95%, 89%, 95%, and 96%, respectively, with more parameters.

Comparison of six CNN models with and without ESOS algorithm


We examine how the ESOS algorithm affects CNN models for plant leaf disease detection. ESOS optimizes CNN model hyperparameters to increase performance. To evaluate the ESOS algorithm, we train six CNN models. Our investigations show that the models behave differently: ESOS-trained CNN models outperform those without hyperparameter optimization, detecting plant leaf diseases with greater accuracy, precision, recall, and F1-score. This improvement suggests that ESOS could be used for hyperparameter optimization in deep learning-based plant disease detection applications. This study recommends integrating ESOS to maximize CNN model performance, advance precision agriculture, and promote sustainable crop management. Table 3 compares the six CNN models with and without ESOS algorithm integration.

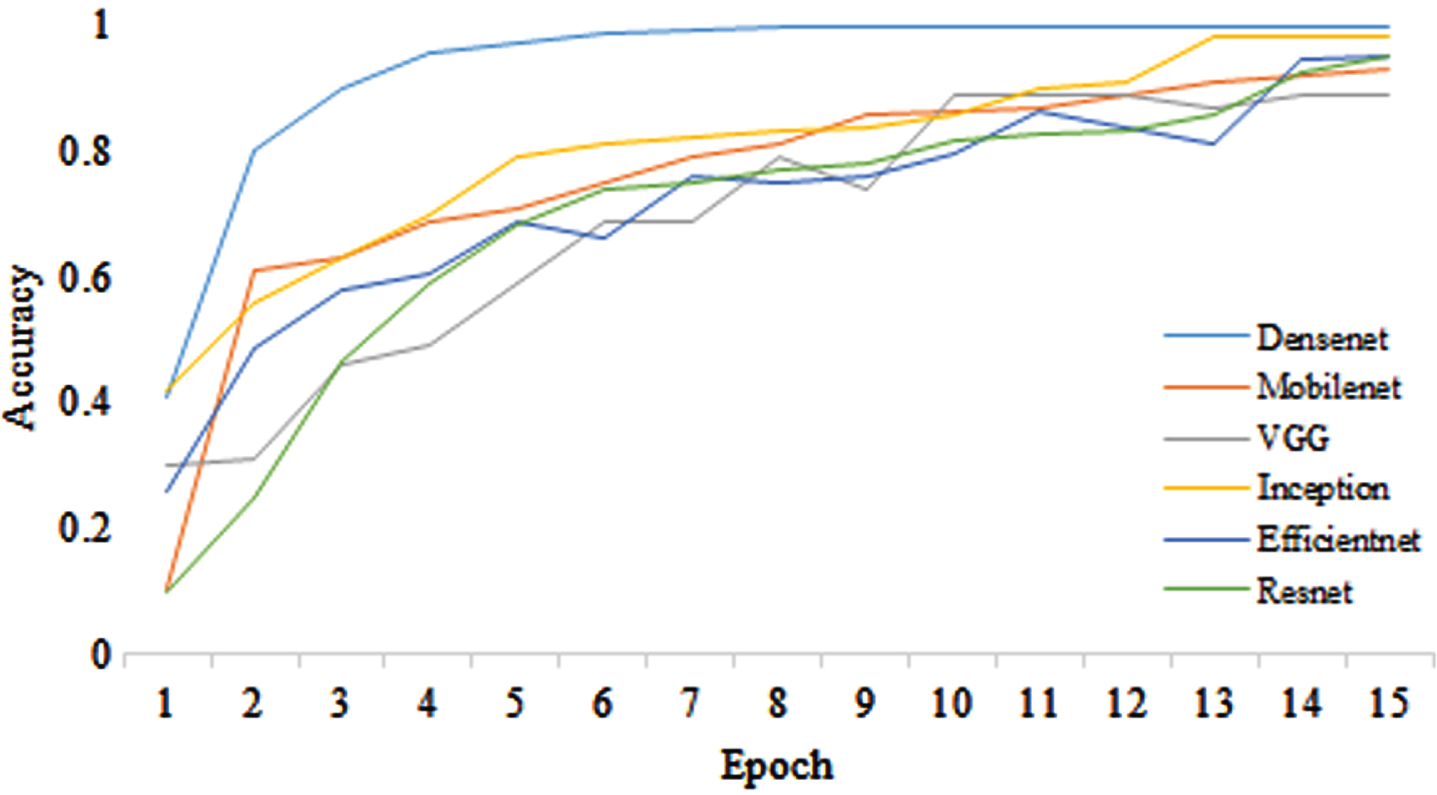

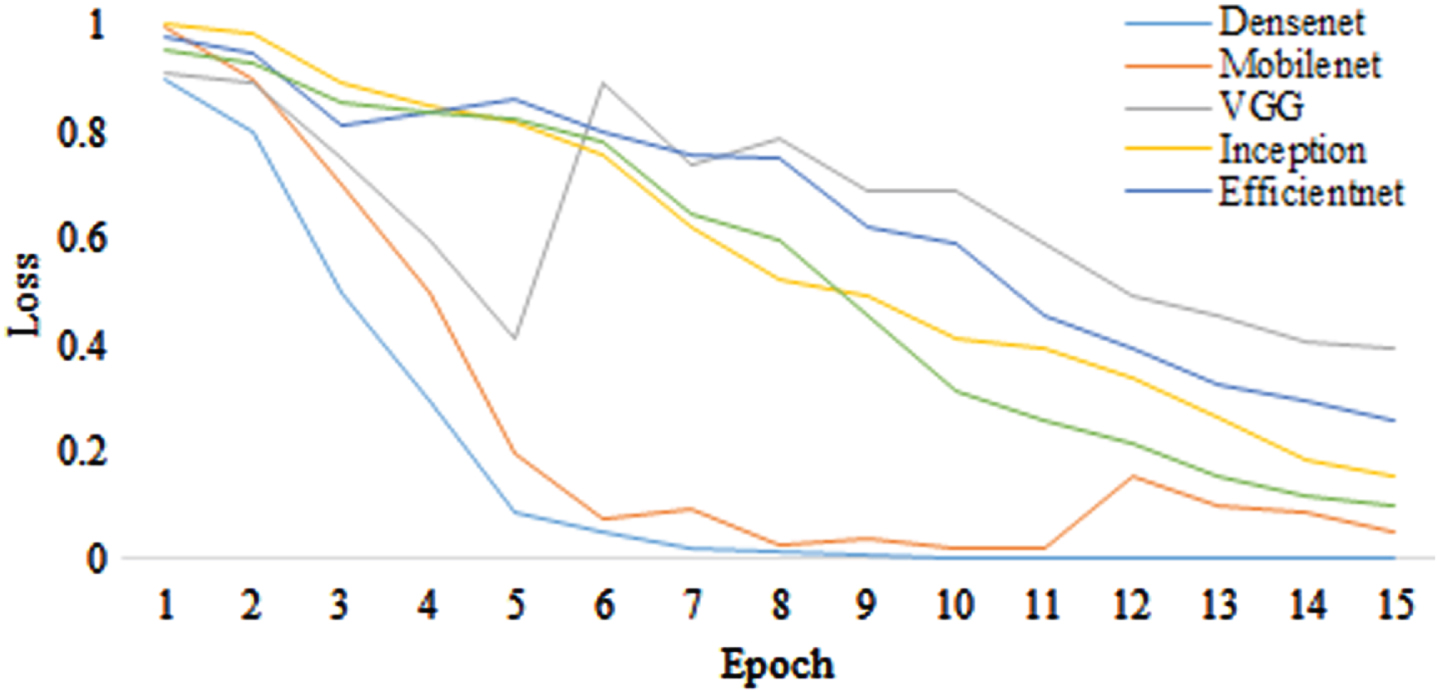

The accuracy curves are presented in the following part as a function of epochs. DenseNet, MobileNet, EfficientNet, VGG19, ResNet, and Inception were developed and trained using the following settings:

Metric: Accuracy and loss

No. of epochs: 15

Training & Validation: 10 batches

Number of images overall in the training dataset: 10050 for each class

Number of images overall in the validation dataset: 1050 for each class

Figures 5 and 6 depict the training accuracy and loss curves, respectively, as a function of the number of epochs for DenseNet, MobileNet, EfficientNet, VGG19, ResNet, and Inception. The figures show that although EfficientNet takes longer than the other models to approach the ideal value, it stabilizes after 5 to 7 epochs. According to Fig. 5, DenseNet achieves considerably higher training accuracy than the other models on the provided dataset. With more than 15 epochs, DenseNet might have reached even higher accuracy.

Comparison of training accuracy.

Comparison of training loss.

In this article, we present an ensemble CNN model based on deep features. Six pre-trained deep networks are evaluated for disease detection: DenseNet, MobileNet, EfficientNet, VGG19, ResNet, and Inception.

In this research study, we used transfer learning to fine-tune pre-trained CNN models. The outcomes of the plant leaf disease identification clearly demonstrate the efficacy of the ESOS algorithm. To ascertain how transfer learning affected system performance, we assessed the accuracy of the fine-tuned DenseNet, MobileNet, EfficientNet, VGG19, ResNet, and Inception models. The outcomes of the fine-tuned models were then combined with the output of the ESOS algorithm; that is, the deep features of the pre-trained CNN networks were passed to the ESOS algorithm. Furthermore, Adam (adaptive moment estimation) was used as the optimization method for the deep networks. Training ended after 15 epochs. Table 2 contains the accuracy scores obtained in this experimental study. Table 3 shows that, among the deep networks based on transfer learning, the DenseNet architecture had the highest accuracy (98%), while the VGG19 architecture performed the worst (89%).

In this research, we proposed identifying plant diseases using deep CNN-based models. We assessed the effectiveness of deep learning-based feature extraction for detecting plant diseases using a range of models. The results show that deep learning algorithms are more effective than manual feature extraction techniques. The main advantage of the suggested approach is faster computation on the same hardware platform, because it achieves higher performance with fewer parameters. Deep learning models are helpful for identifying diseases in the field of agriculture. CNN models are simplest to adapt through transfer learning and fine-tuning when the new task closely resembles the original one. The optimization approach is one of the factors that influences how well a model performs after fine-tuning. In this study, we examined the performance of six fine-tuned CNN models (MobileNet, DenseNet, EfficientNet, VGG19, ResNet, and Inception) trained with Adam and the ESOS algorithm. Training accuracy curves are used to compare the models. DenseNet combined with the ESOS optimization algorithm, with an accuracy of 98%, precision of 84%, recall of 82%, and F1-score of 82%, performs better than the other CNN models when the number of epochs is set to 15. In future research, we plan to develop models that integrate a number of machine learning classifiers with deep learning networks. Additionally, feature reduction methods will be investigated in order to extract the most useful features from the learned deep representations.