Abstract

In fuzzy clustering algorithms, the possibilistic fuzzy clustering algorithm has been widely used in many fields. However, the traditional Euclidean distance cannot measure the similarity between samples well in high-dimensional data. Moreover, if there is an overlap between clusters or a strong correlation between features, clustering accuracy will be easily affected. To overcome the above problems, a collaborative possibilistic fuzzy clustering algorithm based on information bottleneck is proposed in this paper. This algorithm retains the advantages of the original algorithm, on the one hand, using mutual information loss as the similarity measure instead of Euclidean distance, which is conducive to reducing subjective errors caused by arbitrary choices of similarity measures and improving the clustering accuracy; on the other hand, the collaborative idea is introduced into the possibilistic fuzzy clustering based on information bottleneck, which can form an accurate and complete representation of the data organization structure based on make full use of the correlation between different feature subsets for collaborative clustering. To examine the clustering performance of this algorithm, five algorithms were selected for comparison experiments on several datasets. Experimental results show that the proposed algorithm outperforms the comparison algorithms in terms of clustering accuracy and collaborative validity.

Keywords

Introduction

Clustering is a typical unsupervised learning technique. If objects in the same clusters are more similar, and ones in different clusters are more dissimilar, the final clustering performance will be better. At present, clustering has been widely used in many fields [1–7] such as data mining, pattern recognition, image segmentation, fuzzy network, bioinformatics, etc. In order to make clustering widely available in more fields, it can be applied to large-scale group decision-making [8, 9]. Existing clustering algorithms mainly include hard clustering [10, 11] and fuzzy clustering [12–14]. The former has only two membership degrees, 0 and 1, that is, each data object is strictly divided into a certain cluster; The membership of the latter can have any values within the interval [0,1], that is, a data object can be classified into multiple clusters with different membership. The representative algorithm of fuzzy clustering is the Fuzzy C-Means (FCM) [15] algorithm, which is famous for its simplicity and rapidity but criticized for its sensitivity to the initial cluster centers and noise data. In order to improve the robustness of FCM, the possibilistic c-means (PCM) clustering algorithm introduced in [16] relaxes the requirement for fuzzy membership normalization, thus obtaining a possibilistic partition, thereby reducing the impact of noise data. However, PCM relies on initialization conditions that may produce clustering overlap. To overcome this shortcoming, membership and typicality are introduced in [17], and constraints the sum of typical values from all data points to one cluster is 1 (

The possibilistic fuzzy c-means (PFCM) algorithm [18] based on the FPCM algorithm, efficiently solved the problem that FPCM is prone to produce small typical values when facing large-scale datasets by relaxing the row sum constraints on typical values, and ensuring the generation of better cluster centers. The PFCM algorithm adds weight coefficients

A wide range of similarity measures enrich our choices, but at the same time increase the subjectivity in the selection process. To avoid this subjective error, clustering algorithms based on information bottlenecks have attracted much attention. The information bottleneck theory approach, based on the joint probability distribution between data points and features, uses the information loss generated in the process of sample merging as a measurement standard to perform clustering, and achieves a good clustering effect. At present, many clustering algorithms based on information bottlenecks have been proposed [23–25] and have solved some problems very well. In [23], the bottleneck of bicorrelation multivariate information is introduced into multi-view clustering (MVC), thus solving the problem that MVC only learns the correlation relationship between features or clusters, and solving the problem of unsatisfactory clustering performance. In [24], to cope with a large amount of unlabeled and heterogeneous data, shared view knowledge is transferred to multiple tasks enabling automatic classification of human behavior in unlabeled cross-perspective video collections, which can improve the performance of each task. In [25], used interactive information bottlenecks to deal with high-dimensional co-occurrence data clustering problems, and proposed an interactive information bottleneck for high-dimensional co-occurrence data clustering.

Traditional clustering algorithms assume that different features are independent of each other, thus ignoring the correlation between features, which easily affects clustering accuracy. Collaborative clustering utilizes the collaborative relationship between different feature subsets for clustering, which is conducive to forming a more complete and accurate description of the data organization structure. According to the correlation between different feature subsets, the collaborative fuzzy clustering (CFC) algorithm [26] was proposed, which firstly performs clustering based on independent subsets of the data, and then collaboratively generates the final result by exchanging information on the local partition matrix. The study [27] introduced preprocessing method into collaborative fuzzy clustering, and proposed a collaborative fuzzy clustering data analysis method based on a preprocessing-induced partition matrix. The CFC algorithm has been widely used in many fields [28–30], and has been applied to brain MRI images intensity non-uniformity correction, super-pixel satellite influence on surface coverage, and overseas oil and gas exploration, respectively, and achieved relatively better clustering results.

In order to make full use of the relationship between different feature subsets and further improve clustering accuracy, this paper proposed a novel algorithm named collaborative possibilistic fuzzy clustering based on information bottleneck (ib-CPFCM). This algorithm uses the information bottleneck theory to measure the “distance” between the data points and the cluster centers. This theory takes the mutual information loss generated during merging clusters as the similarity measure, and therefore is conducive to improving the clustering accuracy. Besides the theory, the correlation between different feature subsets is used to perform collaborative clustering, and the corresponding fuzzy membership matrix and typical value matrix are obtained, which is easy to form a more complete description of the dataset.

The rest of this paper is organized as follows: Section 2 briefly introduces the PFCM algorithm, information bottleneck theory, and collaborative clustering algorithm. Section 3 introduces the proposed ib-CPFCM algorithm in detail. Section 4 presents the experimental preparation and experimental results. The final section summarizes this paper and proposes further research directions.

Related works

In this section, we briefly review the PFCM algorithm, information bottleneck theory, and CFC algorithm. Before that, the variables defined in this paper and their mathematical descriptions are given as Table 1.

Variables used in this paper

Variables used in this paper

The PFCM algorithm not only improves FCM in terms of robustness but also overcomes the clustering coincidence problem of the PCM. Furthermore, by relaxing the row sum constraints on typical values, the problem of generating small typical values with an increasing dataset is solved. The objective function of the PFCM algorithm is designed as follows:

In Eq. (1),

where

Information bottleneck applies the knowledge of information theory to the clustering process, and the desired clustering is the process of minimizing information loss in the cluster aggregation process. To minimize the mutual information loss in the entire clustering process, the greedy aggregation method is usually adopted, and when merging two clusters, the choice results in the smallest mutual information loss in each step.

Many existing pieces of literature have shown [33–37] that, the clustering performance of clustering algorithms using information bottleneck as a similarity measure is obviously better than traditional clustering algorithms, and it can better indicate the correlation between data points and features.

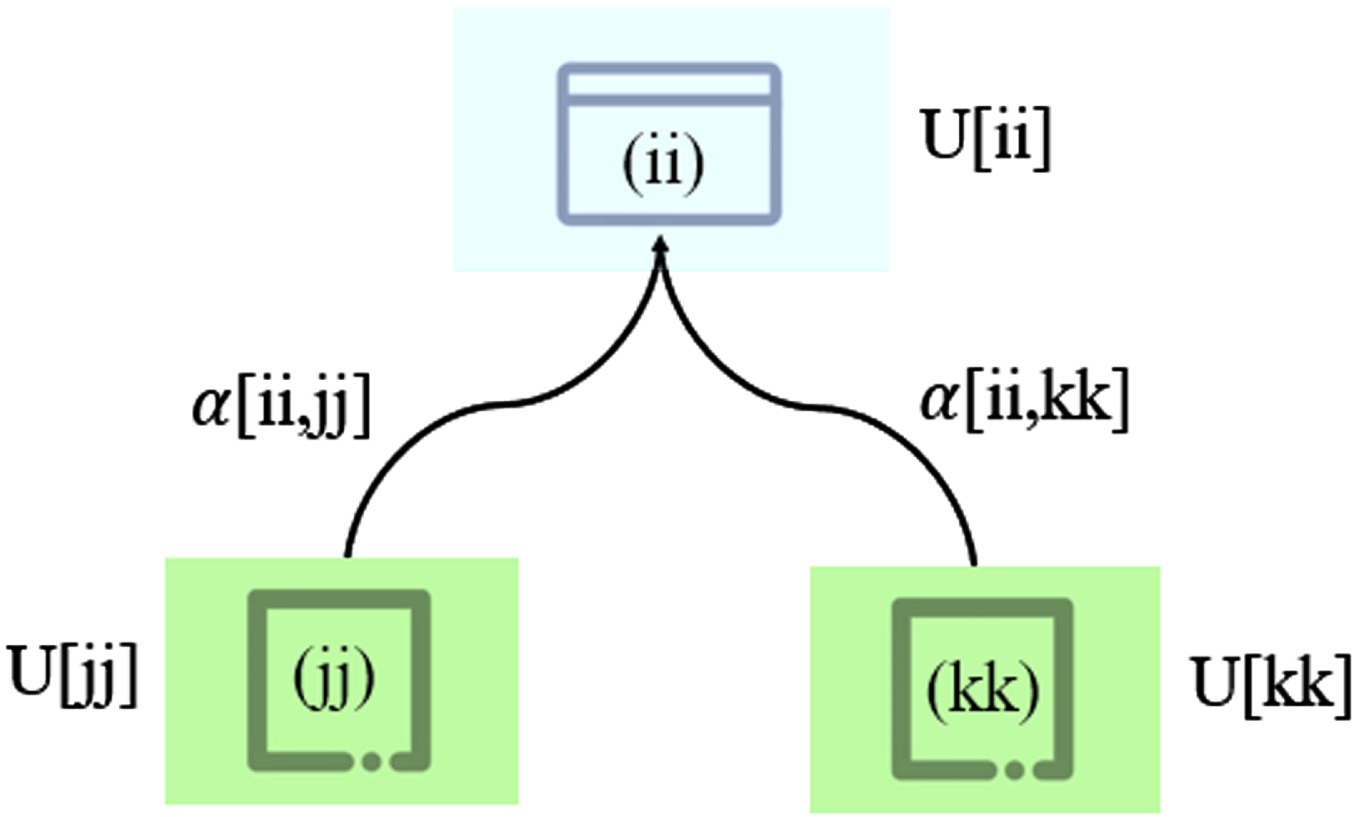

The CFC algorithm can utilize the collaboration relationship between different feature subsets for clustering. In this algorithm, the collaboration between feature subsets is established through the connection matrix, as shown in Fig. 1. Given an unlabeled dataset

Collaboration in clustering is represented by interaction matrix between subsets.

where

where

In this paper, based on collaborative clustering and information bottleneck theory, a collaborative possibilistic fuzzy clustering algorithm based on information bottleneck (ib-CPFCM) is proposed. The clustering performance of ib-CPFCM is outstanding because the algorithm uses the mutual information loss generated during merging clusters as a similarity measure, which improves the clustering accuracy in high-dimensional data. ib-CPFCM simultaneously performs collaborative processing of multiple subsets of relevant features, which helps to form an accurate and complete representation of the data organization structure. Given an unlabeled dataset

where the first part is the objective function of the possibilistic fuzzy clustering algorithm based on information bottleneck, and the second part is the collaborative relationship among feature subsets.

where

where

The clustering objective of the ib-CPFCM algorithm is to minimize the objective function under the premise of satisfying the constraint conditions. Whereby the Lagrange multiplier method can be used to construct the Lagrange equation as follows:

Eq. (16) calculates the first order partial derivative of each variable and makes it equal to 0, so that:

where

where

To simplify Eq. (20),

Using Eqs. (20), (25), (26), (27), and (28), the cluster center

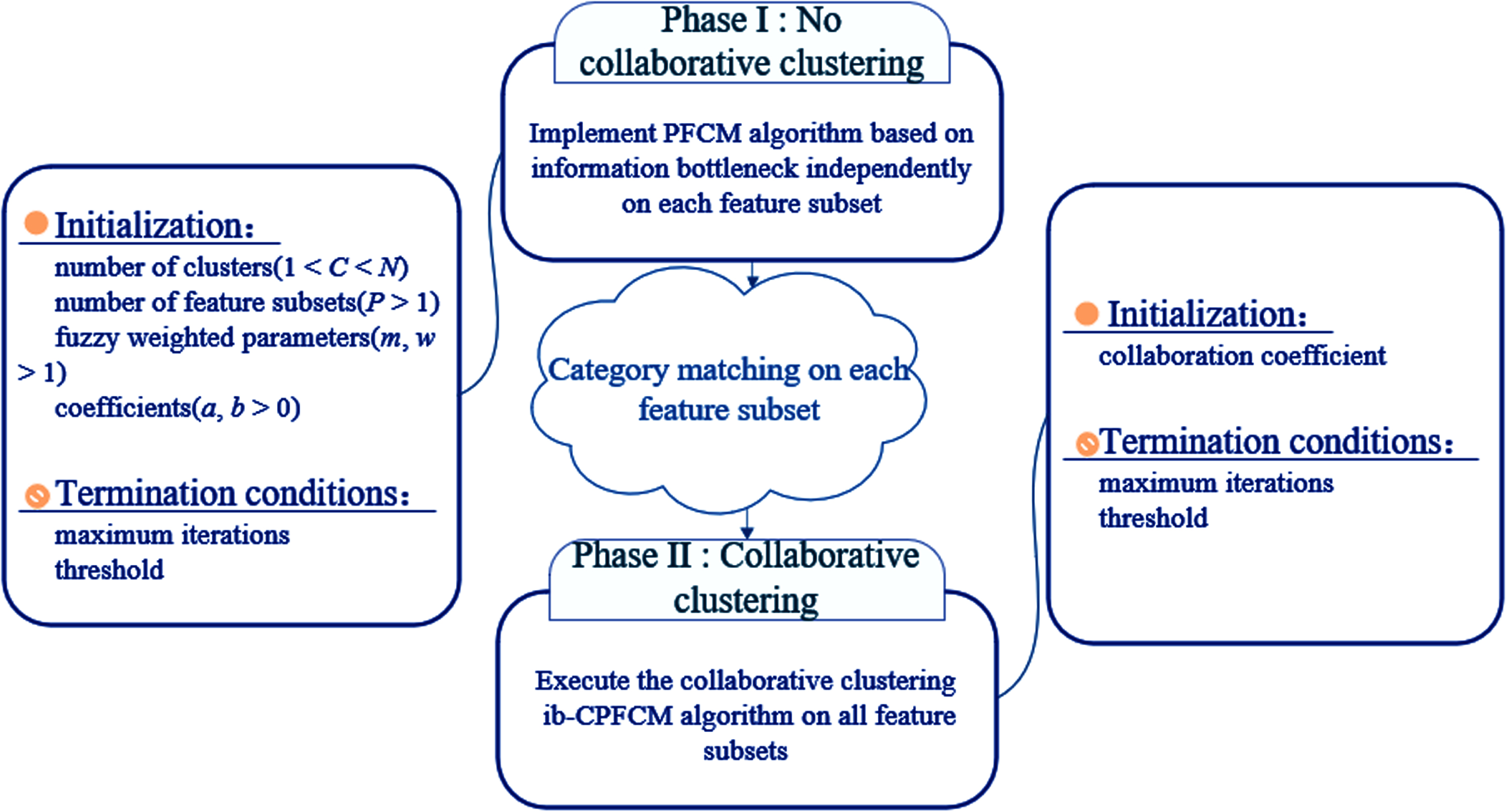

The clustering process of this algorithm mainly includes two stages (as shown in Fig. 2):

(1) Possibilistic fuzzy clustering based on information bottleneck

Two stages of ib-CPFCM clustering algorithm.

PFCM algorithm based on information bottleneck clusters each feature subset, requiring the same number of data points for each feature subset, and the feature attribute dimensions can be different.

(2) collaborative clustering algorithm

Set the collaboration coefficient α[

It should be noted that because each feature subset is an independent cluster, the corresponding cluster in

After the above theoretical analysis, algorithm 1 is the entire process of implementing the ib-CPFCM algorithm:

In order to evaluate the clustering effectiveness of the ib-CPFCM algorithm, five algorithms were selected for comparison experiments on nine datasets, and their clustering results were compared based on four evaluation indexes.

Experimental preparation

Nine datasets were selected from the UCI machine learning dataset (http://archive.ics.uci.edu/ml/datasets.php) for the comparison experiments, and the specific information of each dataset is shown in Table 2.

Features of the dataset

Features of the dataset

Horizontal distribution of datasets

There were five comparison algorithms in our experiments, namely FCM, PFCM, WPFCM, PFGG, and CFC. The experimental process simulated the clustering situation under different data conditions. The value of parameters

Table 3 shows three different horizontal distributions of each dataset to test the clustering effect of the algorithm under different horizontal distributions. The dataset on each horizontal distribution is divided into two feature subsets according to the features. The square bracket part represents the data site, and the numbers in the square bracket represent the feature value of the data point. For example, {[0, 1, 2] , [1, 2, 3]} represents that there are two feature subsets in the horizontal distribution, the first of which consists of attributes 0, 1, and 2, and the second of which includes attributes 1, 2, and 3. Table 4 shows the optimal value of each algorithm’s parameter in each dataset, and its value range is (1,5].

Optimal parameters of each algorithm on each dataset

To quantify the clustering results and facilitate comparative analysis, four evaluation indexes were selected in our experiments, namely Accuracy (ACC), F-measure, Adjusted Rand Index (ARI), and Partition Coefficient (PC). The first two indexes were used to evaluate the accuracy of clustering algorithms, and the latter two ones were used to evaluate the effectiveness of collaborative clustering algorithms. These indexes are defined as follows:

(1) Accuracy

(2) F-measure

(3) Adjusted Rand Index

(4) Partition Coefficient

Tables 5 to 8 show the ACC, F-measure, ARI, and PC indicators corresponding to the clustering results of each algorithm on the nine UCI datasets. The clustering results in terms of accuracy on the nine UCI datasets are shown in Table 5. It can be seen from this table that in each horizontal distribution, the clustering accuracy of our ib-CPFCM algorithm is better than that of the FCM, PFCM, WPFCM, PFGG, and CFC. For example, it will increase by 5.06% under the second horizontal distribution of the Wine dataset, 5.29% higher in the first horizontal distribution of the Sonar dataset, it is 10.38%, and 10.66% improvement under the first and third horizontal distributions of the Dermatology dataset, respectively, in the third horizontal distribution of the Knowledge dataset it is increased by 11.42%, and 5.41% under the second horizontal distribution of the Lymphography dataset, the improved range of clustering accuracy on other datasets are distributed in the interval [0.67%, 3.97%]. From the above analysis, we can see that the ib-CPFCM algorithm has significant improvement in the clustering accuracy in most of the datasets with different horizontal distributions, which is a good indication of the ib-CPFCM algorithm has good clustering performance. In turn, it highlights the use of information bottleneck theory to measure the “distance” between data points and cluster centers, which is beneficial to improving clustering accuracy.

Clustering accuracy on UCI dataset (ACC) (%)

Clustering accuracy on UCI dataset (ACC) (%)

As can be seen from Table 6, ib-CPFCM and all comparison algorithms can get better F-measure, and the clustering results of ib-CPFCM are not worse than any comparison algorithm. For the UCI dataset, the F-measure value of ib-CPFCM is only lower than PFGG on the Tae dataset but better than other comparison algorithms. Under the second horizontal distribution of the Iris dataset, ib-CPFCM slightly outperforms all comparison algorithms. For the Wine dataset, ib-CPFCM achieved the best F-measure. For the remaining UCI datasets, ib-CPFCM obtained better results than all comparison algorithms, especially on the Wine, Dermatology, and Lymphography datasets, with F-measure values 6% –7% higher than the comparison algorithms. In summary, ib-CPFCM has strong robustness.

F-measure on UCI dataset (%)

Table 7 shows the ARI values of all clustering algorithms. It can be seen that on the Iris, Wine, Wdbc, Sonar, Tae, Knowledge, and Lymphography datasets, the ARI values are improved by 5.23%, 1.66%, 5.23%; 3.91%, 10.57%, 8.12%; 7.82%, 4.22%, 4.21%; 7.15%, 1.12%, 0.39%; 1.04%, 0.58, 0.93%; 6.03%, 3.44%, 10.24%; 3.6%, 9.29%, 3.97%, respectively, under the first, second, and third horizontal distributions. On the Ecoli and Dermatology datasets, the values are improved by 1.48%, 8.28%, and 3.41%, 9.24% under the first and third horizontal distributions, respectively, while in the second horizontal distribution, the former is 0.57% lower than the PFGG algorithm and the latter is 1.92% lower than the CFC algorithm. Therefore, we can conclude that the ib-CPFCM algorithm is higher than the comparison algorithms on other datasets, except that ARI is slightly lower than PFGG and CFC algorithms in the second horizontal distribution of Ecoli and Dermatology datasets. It can also be concluded that the ARI values of the ib-CPFCM algorithm are different under different horizontal distributions of the same dataset. From the above analysis, we can also know that the ARI of the Dermatology dataset is significantly improved under the first and third horizontal distributions, but decreased in the second horizontal distribution, which indicates that the number of feature subsets and different feature combinations in each feature subset in this algorithm will affect the final clustering results.

Adjusted rand index on UCI dataset (ARI) (%)

It can be seen from Table 8 that all algorithms can obtain better PC values, and the PC values of the ib-CPFCM algorithm on the nine UCI datasets are outperforms those of the comparison algorithms. For example, its PC values are 0.898, 0.801, and 0.888 under the three horizontal distributions in Iris; 0.835, 0.681, and 0.791 under the three horizontal distributions in Tae; 0.568, 0.668, and 0.6778 in the three horizontal distributions in Ecoli. It can be seen that the clustering results of ib-CPFCM in different feature subsets of the same collaboration coefficient α[ii, kk] are different. The PC values achieved the best results under the first horizontal distribution of the Lymphography dataset, which was 30.45% higher than PFGG. In the Knowledge dataset, the PC value improved slightly. For the Dermatology dataset, 13.79%, 14.74%, and 14.16% were improved over the comparison algorithm under the three-level distributions, respectively. The results show that it is easy to form a more complete description of the data by using the correlation between different feature subsets for collaborative clustering, resulting in better clustering results. Through the analysis of the above four evaluation indicators, we can see that the ib-CPFCM algorithm proposed in this paper has better clustering performance and better clustering quality than the comparison algorithms.

Partition coefficient on UCI dataset (PC) (%)

In this paper, we introduce the idea of collaborative clustering and the theory of information bottleneck into possibilistic fuzzy clustering, and propose a collaborative possibilistic fuzzy clustering algorithm, named ib-CPFCM. The work of this algorithm mainly includes the following two innovations. (1) This algorithm uses information bottleneck theory as a similarity measure to calculate the distance between cluster centers and data points; (2) it makes full use of the relationship between feature subsets through the idea of collaboration. In order to evaluate the clustering performance of this algorithm, comparative experiments were conducted on 9 UCI datasets with other 5 clustering algorithms. Experimental results show that the proposed ib-CPFCM algorithm is superior to the comparison algorithms in terms of clustering accuracy and collaborative effectiveness. Since the collaboration coefficient α[

Data availability

The data that support the findings of this study are available from the corresponding author upon reasonable request.

Footnotes

Acknowledgments

The authors would like to acknowledge the support of National Science Fund subsidized project under Grant no. 62273290.