Abstract

Social distancing is considered one of the most effective techniques for preventing the spread of Covid19. To date, no proper system is available to monitor whether individuals follow social distancing protocols in public places. This research proposes a hybrid deep learning-based model that predicts, through video object detection, whether individuals maintain social distancing in public places. A customized deep learning model is implemented using Detectron2 and Intersection over Union (IOU) to monitor the process. The base model is RCNN and the optimization algorithm is Stochastic Gradient Descent. The model has been tested on real-time images of people gathered in textile shops to demonstrate its real-time applicability. The performance evaluation reveals a precision of 97.9% and an mAP value of 84.46, which indicates that the developed model is effective in monitoring adherence to social distancing.

Keywords

Introduction

The concept of ‘social distancing’ has gained great importance in the present day. Ever since the outbreak of the Covid19 pandemic, social distancing has been considered one of the most vital measures to break the chain of the spread of the virus. With the first and second waves of Covid19 affecting nearly 180 countries, governments and the health fraternity have realized that social distancing is the only way to prevent the spread of the disease and to break the chain of infections until a majority of the population is vaccinated. In the field of artificial intelligence, researchers are proposing solutions that could be implemented to ensure that Covid19 protocols are strictly followed. However, there are cases in countries like India, France, Russia, and Italy where either the population is dense or people do not adopt preventive measures like social distancing in crowded places [1]. Social distancing is a preventive practice that provides protection from transmission of the Corona (Covid19) virus. It has been identified that the spread of the virus can be avoided when a minimum distance of 6 feet is maintained between two or more people [18]. Social distancing need not be only a preventive technique; it can also be a practice adhered to in order to reduce the physical contact that transmits disease from a virus-affected person to a healthy one [9]. Deep learning, as in many other fields, has been found to create a significant impact in addressing this problem [4].
Manually monitoring, managing and maintaining distances between people in crowded environments such as colleges, schools, shopping marts and malls, universities, airports, hospitals and healthcare centres, parks and restaurants would be impractical; adopting machine learning, AI and deep learning techniques for automatic social-distancing detection is therefore essential. Object detection with RCNN in machine learning-based models has been adopted by researchers since it offers faster detection. Faster RCNN is found to be more advantageous: it converges faster and provides higher performance [3], even in low-light environments.

In America, Europe and South-East Asia, many fatalities were recorded in 2020 and 2021 owing to poor social distancing and inadequate measures against Covid19, most of them because the threshold in social distancing measures was violated [10]. Adherence to social distancing has been addressed by machine learning researchers in the past through techniques such as face recognition, object (human) identification, people monitoring and image processing [29, 32].

Deep learning and machine learning-based Artificial Intelligence (AI) models perform well on criteria such as time consumption, cost reduction and reduction of human intervention. Machine learning-based solutions help in achieving results with greater accuracy and minimal loss and error, in addition to reducing the prediction time from several years to a few days [16]. R-CNN (region-based CNN) and Faster R-CNN are mainly applied for accurate and faster predictions. To measure the predicted outcomes, researchers generally utilize evaluation techniques such as regressors (Random-Forest-Regressor, Linear Regressor), IOU (intersection-over-union) and more. For object detection, the IOU approach is commonly adopted to evaluate performance.

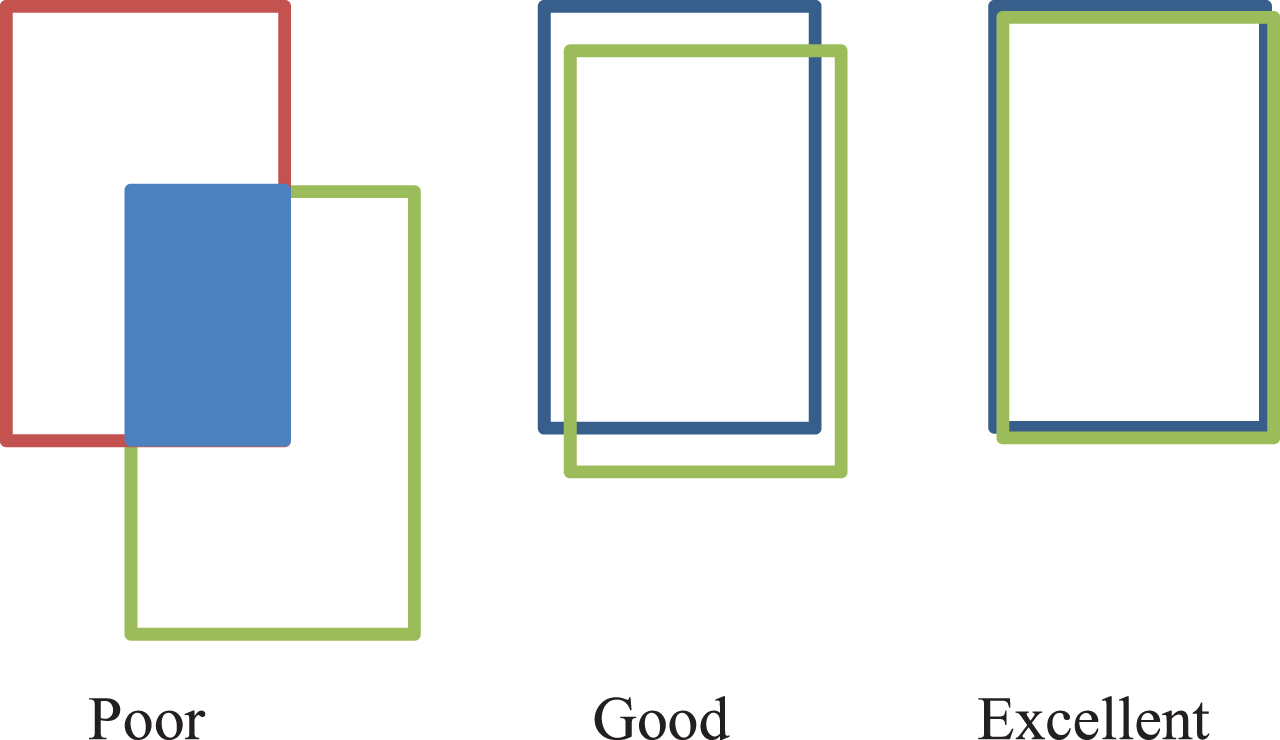

RCNN is mainly used in object detection models to detect objects in an image or video and to bound the class targeted by the researchers. The bounding box separates the required class from the other classes and objects in an image. Thus, to validate the bounding box, investigators can code them with colors (for instance

This research has developed a customized deep learning RCNN-based architecture. A hybrid approach combining Detectron2 and the Intersection over Union method is applied to predict whether social distance is maintained by individuals, especially in crowded and public places.

Related works and research background

Social distancing after the Covid19 outbreak has been studied vigorously, and many researchers have developed prediction models to maintain and monitor it. Authors [31] focused on predicting social distancing after the Covid19 outbreak in India with a DNN architecture. [19] utilized TWILIO and the authors [31] utilized YOLOv3 as their respective base architectures for addressing the same problem. These studies utilized Faster R-CNN and found the architecture more flexible and faster than other existing approaches. Their conclusions revealed that efficient and effective object detection is attained with YOLOv3 combined with the Faster R-CNN model. Authors [14], however, developed a YOLOv4-based ML model and tested it on the COCO dataset. Their model architecture was also based on a DNN (deep neural network), and was found to render 99.8% accuracy at a speed of 24.1 frames per second (fps) when tested in real time. Authors [26] developed an AI-based social distancing model that measured the distances between two or more people against social-distancing norms. The authors adopted YOLOv2 with Fast R-CNN for detecting objects, where the objects considered were humans, through the person class. The accuracy was 95.6%, the precision was 95% and the recall rate was 96%.

Authors [22] carried out an extensive analysis of existing social distancing reviews, examining the different metrics used to evaluate prediction models that monitor social distancing via computer vision. They concluded that mAP is effective in evaluating the prediction models. The study also examined the performance of security-threat identification and facial expression-based models that adopted deep learning and computer vision, using real-time datasets with video streams. The authors found YOLO to be effective in detection compared with other AI-based models. The study further indicates that adopting two-stage object detectors is efficient, effective and accurate.

The study by [6] investigated social distancing after Covid19 by developing a vision and critical-density based detection system. The model was developed around two major criteria. The first was to identify social-distancing violations via real-time vision-based monitoring and to communicate them through a deep learning model. The second was to offer precautionary measures as audio-visual cues through the model, so as to minimize the violation threshold to 0.0 without manual supervision by reducing threats and increasing social distancing. The study adopted YOLOv4 and Faster R-CNN, where the highest mean average precision (mAP), 95.36%, was achieved with the BB-bottom method. Similarly, an accuracy of 92.80% and a recall score of 95.94% were achieved.

The studies [10, 11] focused on deep learning architectures that use social distancing as the basis for their evaluation, with people monitoring and management after Covid19 as a secondary focus. The authors [10] utilized YOLOv3 for identifying humans and Faster R-CNN as the social-distancing algorithm. The model achieved 92% tracking accuracy without transfer learning and 98% with transfer learning; similarly, a tracking accuracy of 95% was reported. The study insisted that social-distancing detection through YOLO with the tracking transfer-learning technique is more effective than other deep learning models. Authors [17] proposed monitoring Covid19 social distancing through person detection and tracking with fine-tuned YOLOv3 and Deep SORT techniques. The authors developed the deep learning model for task automation, to supervise social distances between people, using surveillance videos as datasets. The model used YOLOv3 for object detection, to segregate foreground people from the background; Deep SORT was used to track the identified people with assigned identities and bounding boxes. Deep SORT tracking together with YOLOv3 showed good outcomes, with balanced scores of fps (frames per second) and mAP (mean Average Precision), for supervising real-time social distancing among people.

Similarly, a study by [3] proposed an advanced social-distance monitoring model to overcome a drawback of existing models, in which low-light surroundings are the major hindrance to identifying people as the foreground. The need to identify and monitor people in crowded and low-light environments, particularly during Covid19 and other pandemics where social distancing is a must, has led researchers to develop new models and improve existing ones. The disease caused by the SARS-CoV-2 virus (Covid19) has become a worldwide crisis, with its deadly spread all over the globe. Because of isolation and social distancing, people tend to walk out of their homes with their families during the night to breathe some fresh air. In such circumstances, it is essential to take efficient steps to supervise safety distancing in order to avoid positive cases and to manage the death toll.

Thus, from the existing studies it is observed that investigators have mainly adopted YOLO for object detection, with RCNN and Faster RCNN in their architecture. Though YOLO detects objects meaningfully, it lags behind in detection speed, which affects the tracking process. According to [24], the Detectron2 model is more effective and rapid than YOLO in tracking. Thus, in this research, a deep learning-based method is proposed using RCNN with Detectron2 for measuring social distances among individuals and for real-time detection of the object class (i.e. the person class) with a single motionless ToF (time of flight) camera.

Table 1 below shows the reviews of social distance prediction during Covid19:

Reviews of social distance prediction during covid-19

The reviews make it evident that YOLO with CNN, R-CNN and Faster R-CNN has been the main choice in recent years for social-distancing based object detection models. However, the reviews also show that models using different neural networks, algorithms and evaluation metrics are less common.

The present study has developed a novel approach to detect whether social distancing is maintained by individuals in public places. The approach combines the “Detectron2” and “IOU” techniques in an R-CNN based customized machine learning model for detecting social distancing between people, especially in crowded areas. This research has developed and tested a customized model for social distancing prediction through video object detection using the COCO dataset.

In this research, the author has developed a Detectron2 model with IOU metrics for predicting social distancing between individuals in crowded public areas, which has not yet been attempted by other authors. This paves the way for research to adopt new evaluation techniques and prediction models rather than adopting existing models and comparing them with other similar models.

The proposed system is a social distancing technique for analyzing objects that uses deep learning and computer vision, implemented in Python, to detect the intervals (distances) among people for maintaining safety and monitoring crowds. RCNN was used to develop the model along with deep learning algorithms, computer vision and CNNs (convolutional neural networks). To detect objects (people) in video, an object detection method based on the ‘Detectron’ algorithm is applied. From the COCO dataset and the obtained results, only the “People” class was filtered, disregarding the other classes. To distinguish people from other classes in the video or frame, bounding boxes are drawn in different colors. Finally, the distances between the identified people are measured from the obtained outcomes.

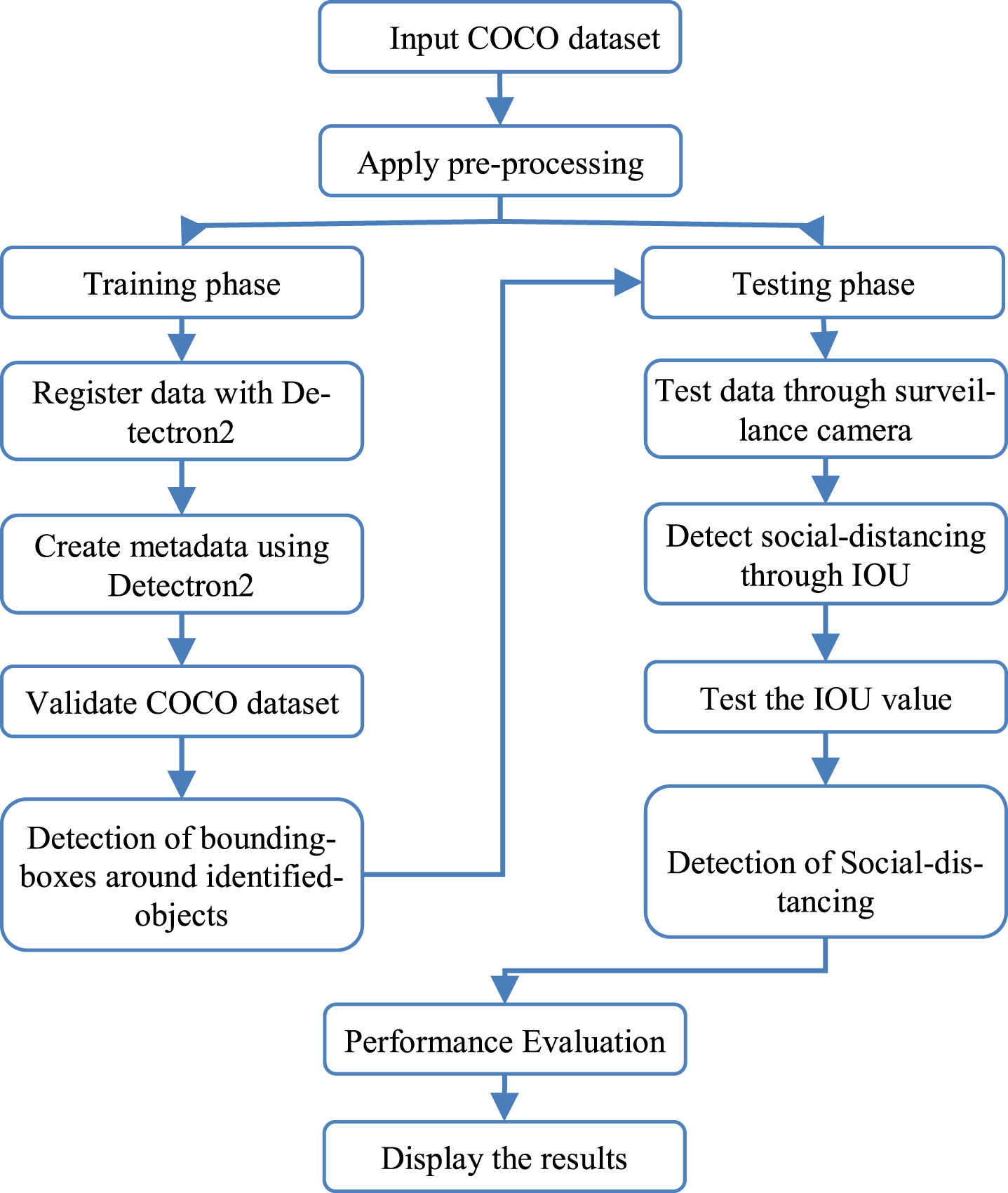

Flow-chart of proposed research.

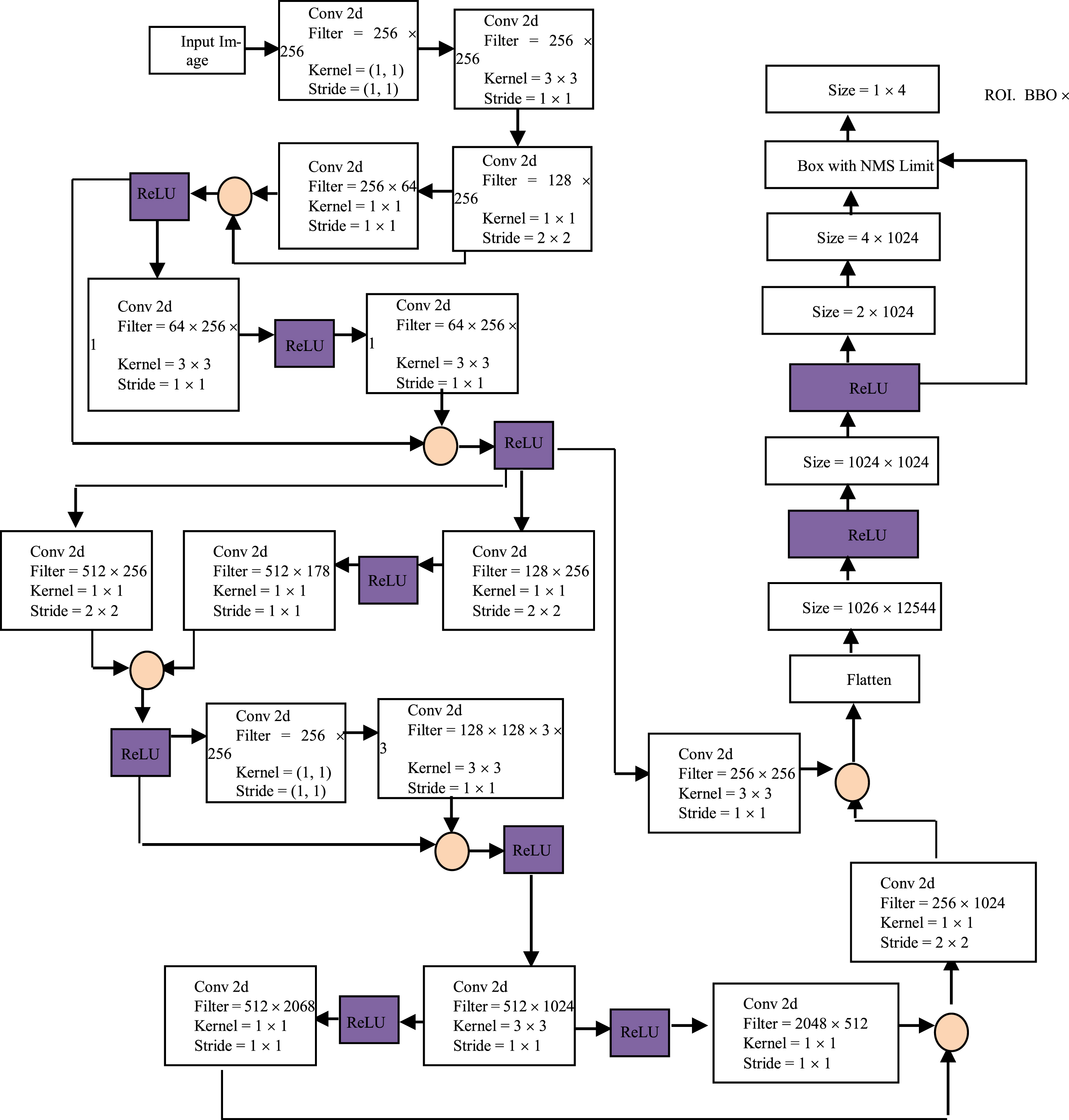

The architecture (refer to Fig. 2) is developed with Faster R-CNN. Faster R-CNN is adopted as the backbone of the developed model for its accuracy, precision and speed. The developed model architecture includes 5 convolutional layers with a configuration of 128, 256, 512 and 1024. The research includes deep learning with 8 layers of ReLU activation function. A 3×3 convolution kernel size has been adopted.

Architecture of the customized detectron2 with RCNN model.

The image is fed as input to the first layer of the proposed Detectron model with an image size of 224x224x3. The middle layers of the model include 2D convolution, batch normalization, ReLU and max-pooling. The convolutional layer maps the image features, with the filter set to 5x5; the filter defines the width and height of the image regions. Batch normalization is included as a middle layer to regularize the model and mitigate overfitting. Next, the ReLU activation function is used in the neural network to introduce non-linearity. Finally, max-pooling is included to down-sample the images in each pooling region.

The stride-size is set at 2x2. Detectron2 ResNet50 is utilized for object detection in the model with similar layers.
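As a rough illustration of how these settings interact, the sketch below applies the standard output-size formula for a convolution or pooling layer, floor((W - K + 2P) / S) + 1, to the stated 224-pixel input, 5x5 filter and stride of 2. The zero padding, and the assumption that the stride applies to this particular layer, are not given in the text and are chosen here only for illustration; the function name is hypothetical.

```python
def conv_output_size(input_size: int, kernel: int, stride: int, padding: int = 0) -> int:
    """Spatial output size of a convolution or pooling layer along one axis."""
    return (input_size - kernel + 2 * padding) // stride + 1


# 224-pixel input, 5x5 filter, stride 2; zero padding is an assumption.
side = conv_output_size(224, kernel=5, stride=2, padding=0)
print(side)  # 110
```

Under a different padding choice the resulting feature-map size would change accordingly.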

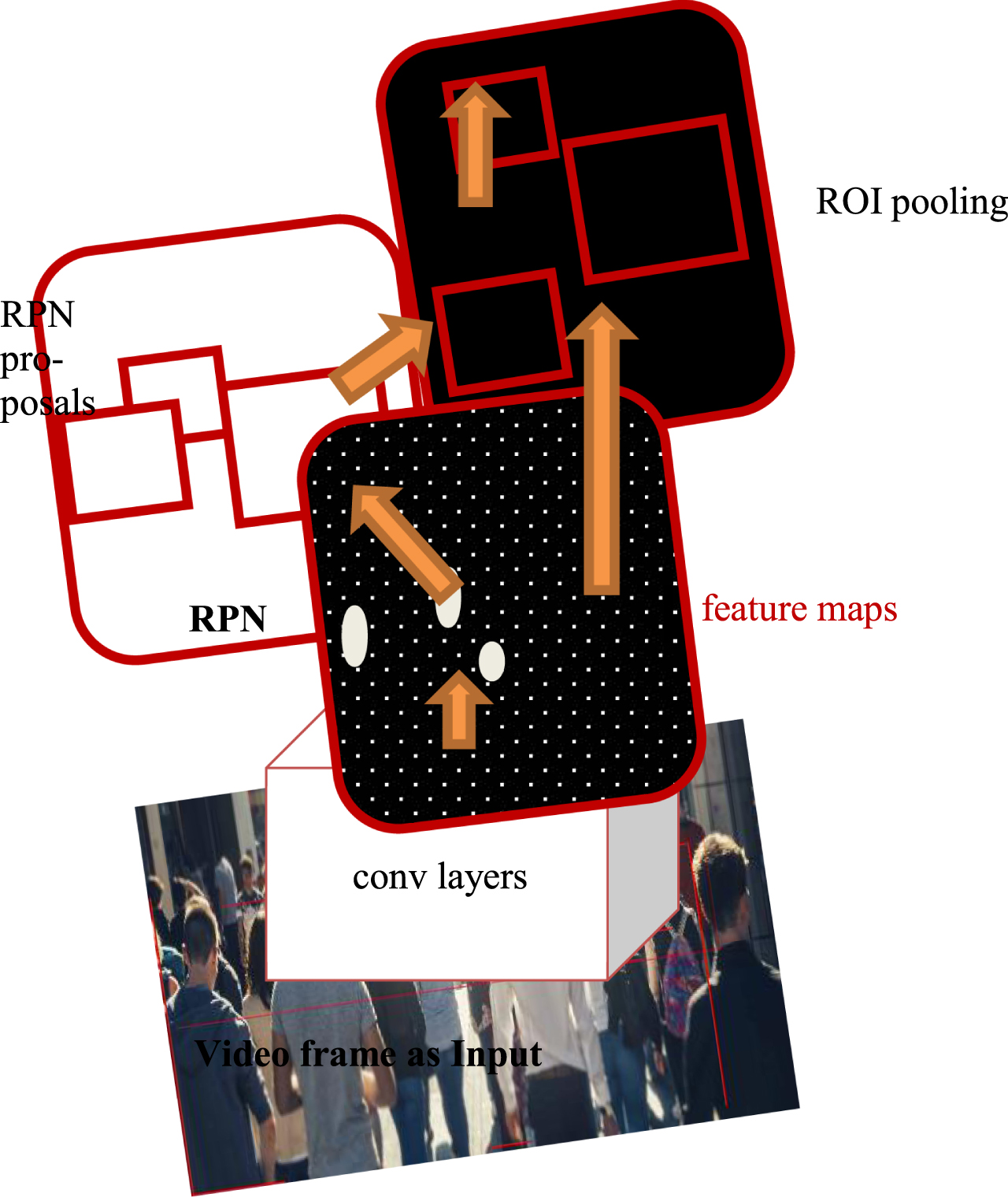

Based on the architecture, the study adopts the following schematic representation (refer to Fig. 3). The image is passed through the convolutional layers (backbone network), which transform it into feature maps. On these feature maps, the RPN (Region Proposal Network) identifies the regions that contain objects, known as ‘proposals’. The identified proposals are then examined for feature extraction. If no proposals are found, the ROI stage extracts features directly from the RPN output and passes them on to the classifier in the object detection network layer. Thus, Faster R-CNN is developed in this research for object detection along with Detectron.

RCNN schematic diagram.

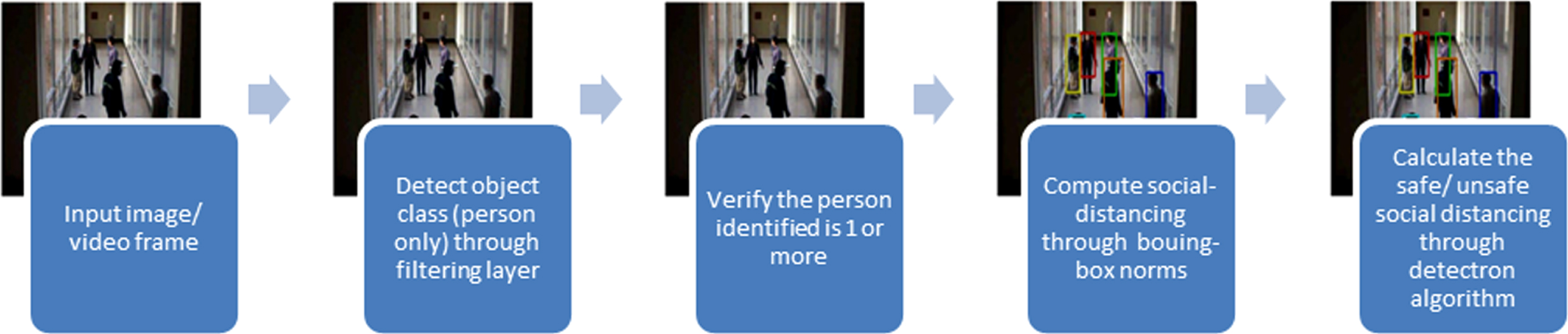

Thus, both social-distancing estimation and detection of people (person class) are performed in this research on video frames treated as images. The steps involved are represented in the following illustration (refer to Fig. 4).

Classification and social-distancing of person-class.

Thus IOU is estimated through bounding-box intersection (refer to Fig. 5) as:

IOU estimation in object detection.

Generally, the more the bounding boxes overlap, the better the score, and vice versa. Hence, in this research, a threshold of 0.75 is used for the person-class bounding box in the IOU metric evaluation.
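The thresholding described here can be sketched as follows. This is a minimal illustration, not the evaluation code used in the study: boxes are assumed to be (x1, y1, x2, y2) tuples, the helper names and sample boxes are hypothetical, and a prediction is counted as correct when its IOU with a not-yet-matched ground-truth box reaches 0.75.

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2), assumed format


def iou(a: Box, b: Box) -> float:
    """Intersection area divided by union area of two boxes."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def match_at_threshold(preds: List[Box], truths: List[Box], thr: float = 0.75):
    """Greedily match predictions to ground truth; return (TP, FP, FN)."""
    unmatched = list(truths)
    tp = 0
    for p in preds:
        # Best remaining ground-truth box for this prediction, if any.
        best = max(unmatched, key=lambda t: iou(p, t), default=None)
        if best is not None and iou(p, best) >= thr:
            unmatched.remove(best)
            tp += 1
    return tp, len(preds) - tp, len(unmatched)


# Hypothetical boxes: one good detection, one spurious, one missed person.
truths = [(0, 0, 10, 10), (20, 20, 30, 30)]
preds = [(0, 0, 10, 9), (50, 50, 60, 60)]
tp, fp, fn = match_at_threshold(preds, truths)
precision = tp / (tp + fp)
recall = tp / (tp + fn)
```

Precision and recall then follow from the counts as TP / (TP + FP) and TP / (TP + FN).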

The research measures social distancing between people, as the “person class”, through an object detection method based on deep learning. To this end, the developed model adopts a neural network (RCNN) and deep learning, with Python as the programming language. Initially the datasets are acquired and used for training and testing, and the model is evaluated for efficiency through the IOU evaluation method. ‘Detectron2’ is utilized for detecting objects of the ‘person class’.

The data were obtained from the COCO dataset. The dataset has 95 categories of classes (refer to Table 2), from which researchers select only the classes required for object detection.

Classes in COCO dataset

Here only ‘person’, a class from the Humans category, is selected, and the other 9 categories are ignored for the prediction model. The data is obtained from an open source through “Kaggle” [5].

Input: COCO 2017 dataset

Output: Bounding boxes around objects detected

1. Filter the COCO dataset and annotations specific to the person category only.
2. Register the data using the DataCatalog feature of Detectron2.
3. Create Metadata using the MetadataCatalog feature of Detectron2, which is a key-value mapping that contains information contained in the entire dataset.
4. Train the model on the COCO dataset.
5. Use the model on the validation COCO dataset and evaluate mAP scores.
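The filtering step of this procedure can be sketched in plain Python. The snippet assumes the standard COCO annotation layout (top-level `images`, `annotations` and `categories` lists); the function name and the tiny in-memory example are hypothetical, and registering the result with Detectron2 is not shown.

```python
def filter_person_annotations(coco: dict, class_name: str = "person") -> dict:
    """Return a COCO-style dict restricted to a single category."""
    # Look up the category id by name instead of hard-coding it.
    cat_ids = {c["id"] for c in coco["categories"] if c["name"] == class_name}
    anns = [a for a in coco["annotations"] if a["category_id"] in cat_ids]
    # Keep only images that still have at least one annotation.
    image_ids = {a["image_id"] for a in anns}
    return {
        "images": [im for im in coco["images"] if im["id"] in image_ids],
        "annotations": anns,
        "categories": [c for c in coco["categories"] if c["id"] in cat_ids],
    }


# Tiny hypothetical example in COCO layout (bbox is [x, y, width, height]).
example = {
    "images": [{"id": 1, "file_name": "a.jpg"}, {"id": 2, "file_name": "b.jpg"}],
    "annotations": [
        {"id": 10, "image_id": 1, "category_id": 1, "bbox": [5, 5, 40, 90]},
        {"id": 11, "image_id": 2, "category_id": 18, "bbox": [0, 0, 30, 20]},
    ],
    "categories": [{"id": 1, "name": "person"}, {"id": 18, "name": "dog"}],
}
person_only = filter_person_annotations(example)
```

In practice the dictionary would be loaded from the COCO 2017 annotation file with `json.load` before filtering.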

Following the training phase, the research uses the SGD algorithm for gradient descent in the developed model.

Generally, GD variants can be categorized as SGD (stochastic gradient descent), BGD (batch gradient descent) and MBGD (mini-batch gradient descent). Compared with the other gradient descent variants (Eq. 1 and 2), SGD (Eq. 3) is found effective as the optimizer for object detection. BGD, also known among researchers as ‘Vanilla GD’, is formulated as:
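The standard textbook form of the batch update is sketched below; the symbols are assumed notation, with θ for the model parameters, η for the learning rate and J(θ) for the cost function computed over the whole training set.

$$
\theta \leftarrow \theta - \eta \, \nabla_{\theta} J(\theta)
$$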

This formula computes the gradient of the cost function over the entire training set, so the parameters are updated only once per pass over the data, which makes it slow in operation.

The MBGD is formulated as:
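A sketch of the standard mini-batch update, using assumed notation: θ for the model parameters, η for the learning rate, and the cost J evaluated on a mini-batch of n training pairs starting at index i.

$$
\theta \leftarrow \theta - \eta \, \nabla_{\theta} J\big(\theta;\, x^{(i:i+n)},\, y^{(i:i+n)}\big)
$$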

Though MBGD has stable convergence, it works only on smaller batches (50 in size) for evaluation, and thus SGD is mostly preferred by researchers. The SGD formula utilized here is:
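The usual per-sample form of this update, written with assumed symbols (θ for the parameters, η for the learning rate, and J evaluated on a single training pair), is:

$$
\theta \leftarrow \theta - \eta \, \nabla_{\theta} J\big(\theta;\, x^{(i)},\, y^{(i)}\big)
$$

The short sketch below applies this rule to a toy one-parameter least-squares problem. The data, learning rate and function name are hypothetical and unrelated to the detection model; the point is only that the parameter is updated after every individual sample.

```python
import random


def sgd_fit_slope(data, lr=0.01, epochs=50, seed=0):
    """Fit y = w * x with one parameter update per training sample."""
    rng = random.Random(seed)
    data = list(data)  # copy so the caller's list is not shuffled
    w = 0.0
    for _ in range(epochs):
        rng.shuffle(data)  # visit the samples in random order each epoch
        for x, y in data:
            grad = 2.0 * (w * x - y) * x  # d/dw of the squared error (w*x - y)^2
            w -= lr * grad                # SGD step on a single sample
    return w


# Toy data generated from y = 3x (hypothetical).
samples = [(x, 3.0 * x) for x in (1.0, 2.0, 3.0, 4.0)]
w = sgd_fit_slope(samples)
```

On this noise-free data the estimate approaches the true slope of 3 within a few dozen epochs.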

Input: Images from a surveillance camera

Output: Detecting social distancing amongst people

1. Test the above model on the test dataset.
2. Using the Intersection Over Union (IOU) method, detect the social distancing between people using the overlapping (intersection) area.
3. If the value of IOU is non-zero, then it can be said that people are not at proper social distancing from each other.
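A minimal sketch of the overlap test in this procedure is given below. It assumes person boxes in (x1, y1, x2, y2) form and flags every pair whose boxes share a non-zero intersection area, which is equivalent to a non-zero IOU; the function names and sample boxes are hypothetical.

```python
from itertools import combinations


def intersection_area(a, b):
    """Overlap area of two (x1, y1, x2, y2) boxes; 0 if they do not overlap."""
    w = min(a[2], b[2]) - max(a[0], b[0])
    h = min(a[3], b[3]) - max(a[1], b[1])
    return w * h if w > 0 and h > 0 else 0


def find_violations(boxes):
    """Return index pairs (i, j) of person boxes with non-zero IOU."""
    return [
        (i, j)
        for (i, a), (j, b) in combinations(enumerate(boxes), 2)
        if intersection_area(a, b) > 0
    ]


# Hypothetical detections: the first two people overlap, the third stands apart.
people = [(0, 0, 10, 20), (8, 0, 18, 20), (40, 0, 50, 20)]
violations = find_violations(people)
violators = {i for pair in violations for i in pair}
```

Boxes whose index appears in `violators` could then be drawn in one color and the remaining boxes in another.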

Loss Function and other formulae used:

a) ReLU: The ReLU (Rectified Linear Unit) performs better than Tanh or Sigmoid as an activation function. ReLU is used here since it mitigates the vanishing-gradient issue. It is also computationally less expensive than Tanh and Sigmoid, which is why researchers widely adopt the ReLU activation function; it provides simpler and faster mathematical operations.

However, it has certain drawbacks: it should not be used outside the hidden layers of the network model; gradients can be fragile during training, producing dead neurons; activations can blow up; and ReLU may stop responding to error variations when the gradient is 0 (Equation 4).

The ReLU activation is computed as:
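In its standard form, with x denoting the input to a neuron (the symbol is assumed here), ReLU passes positive inputs unchanged and maps all other inputs to zero:

$$
f(x) = \max(0, x)
$$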

b) R-CNN (Region-based Convolutional Neural Network): The research makes use of bounding-box regression to improve localization performance. The ground-truth bounding box is predicted, in terms of its size and location, through the formula:

where the first two equations (5a and b) specify the scale-invariant translation of the centre of P (a and b), and the latter two equations (5c and d) specify the log-space transformation of the height (f) and width (v).
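For reference, the standard R-CNN bounding-box regression is sketched below in its usual notation, which may differ from the symbols used in this paper: P = (P_x, P_y, P_w, P_h) gives the proposal's centre coordinates, width and height, the d terms are the learned transformations, and Ĝ is the predicted box. How these map onto a, b, f and v is an assumption.

$$
\begin{aligned}
\hat{G}_x &= P_w \, d_x(P) + P_x \\
\hat{G}_y &= P_h \, d_y(P) + P_y \\
\hat{G}_w &= P_w \exp\big(d_w(P)\big) \\
\hat{G}_h &= P_h \exp\big(d_h(P)\big)
\end{aligned}
$$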

c) IOU (Intersection over Union): The IOU is measured by dividing the area of overlap (the intersection of the bounding boxes) by the area of union (the combined area of the bounding boxes). The IOU is estimated through [34]:
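Written out for two bounding boxes, denoted here A and B (the symbols are assumed), this definition reads:

$$
\mathrm{IOU}(A, B) = \frac{|A \cap B|}{|A \cup B|}
$$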

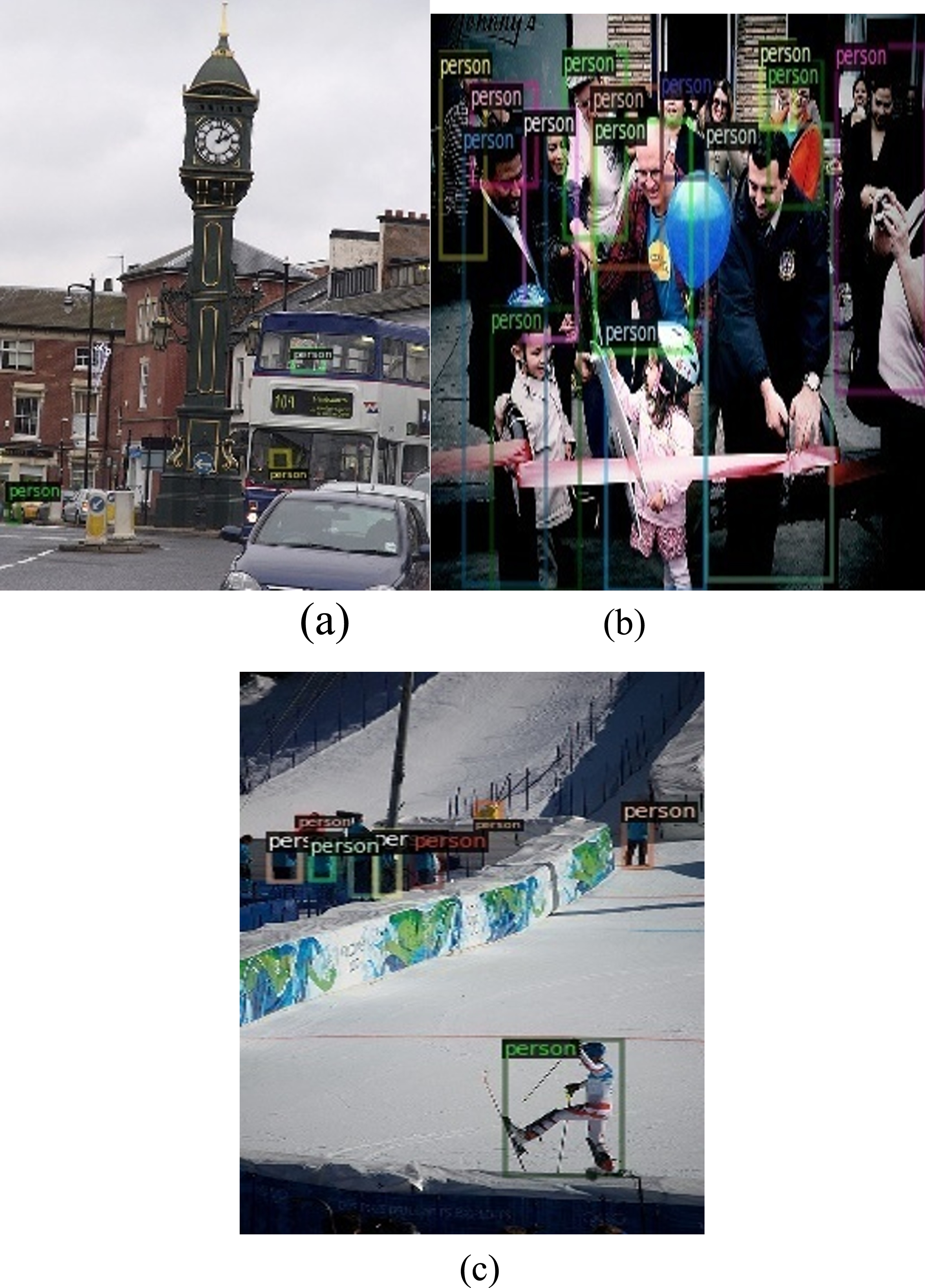

Input dataset selection.

a)

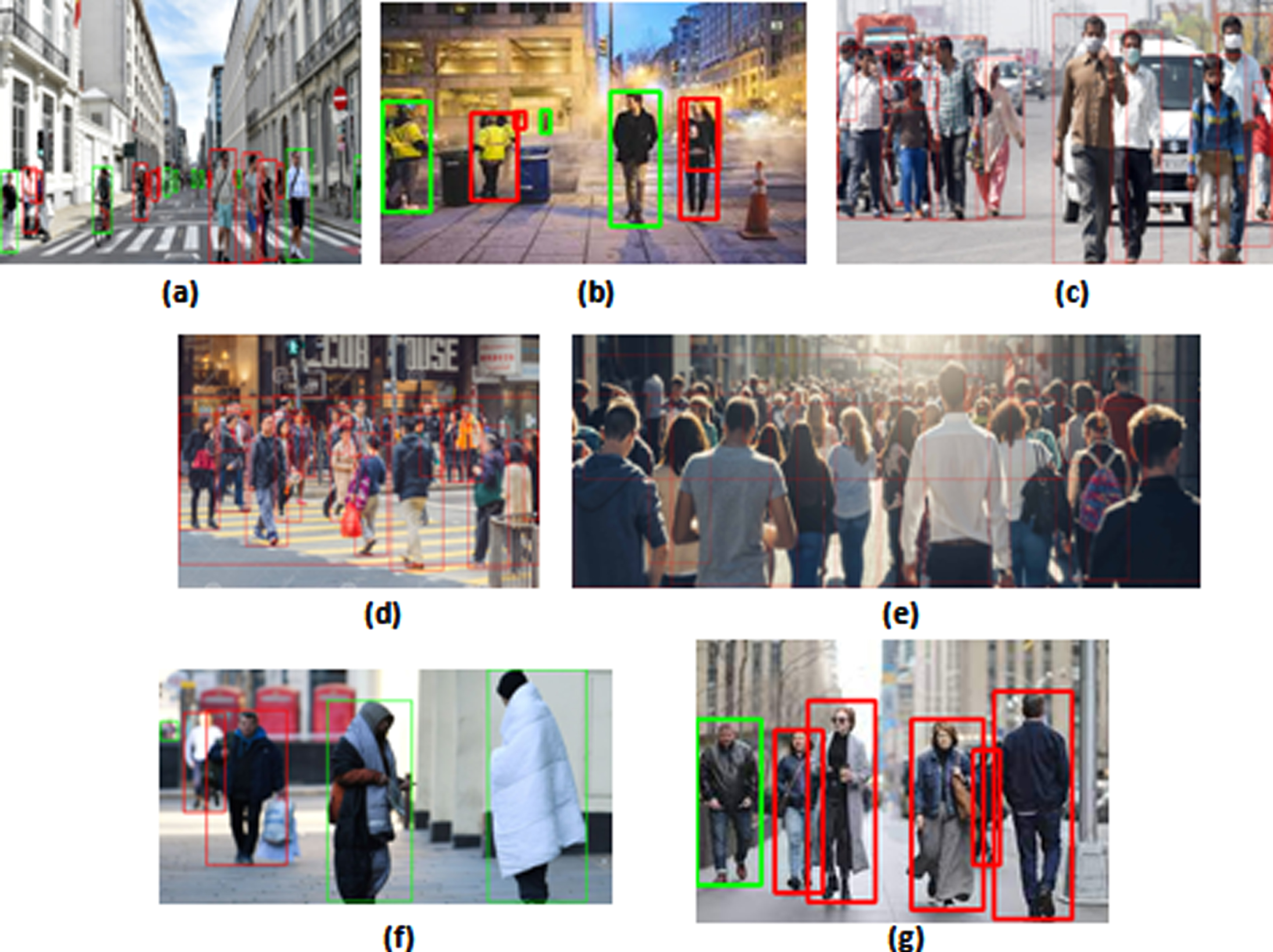

Individual as person-class identification.

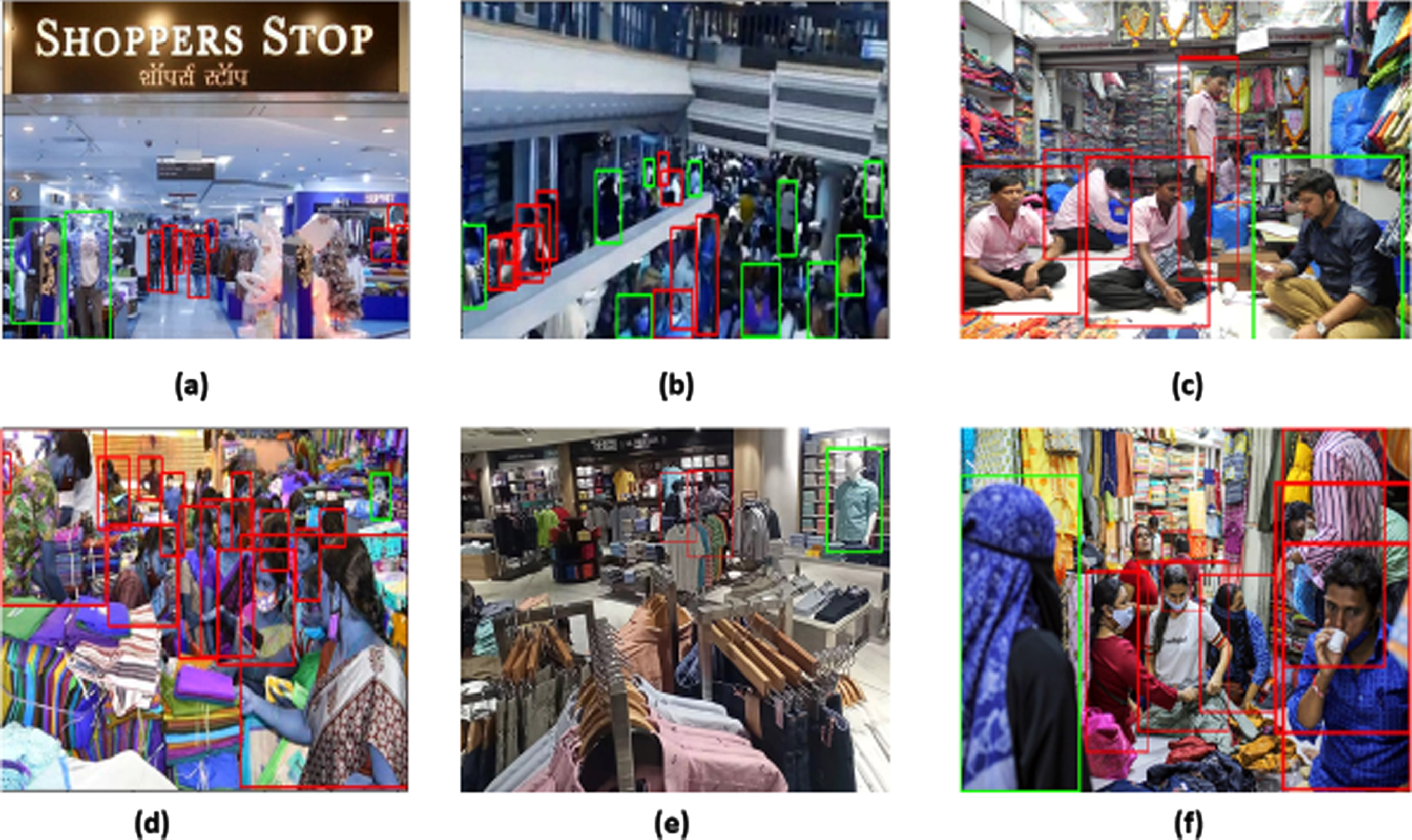

b) Classification of Group of People versus Objects: Fig. 8a represents the ground truth of the identified persons in the image, while Fig. 8b represents the prediction made by the model, identifying the person class only.

Group as person-class identification.

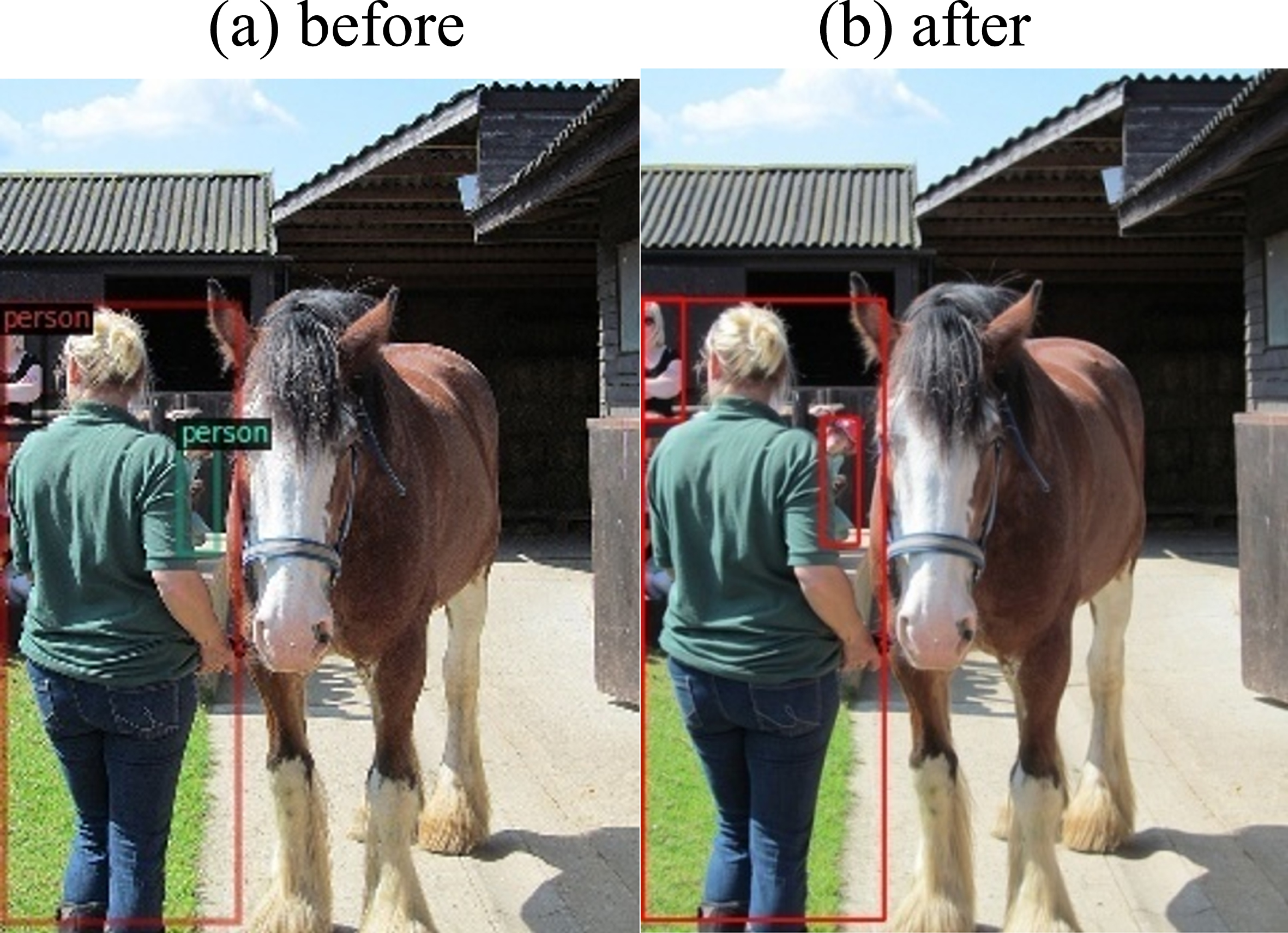

c) Classification of People and Animals: Fig. 9a represents the ground truth of the identified persons in the picture, while Fig. 9b represents the prediction made by the model, identifying the person class only.

Identified person-class social distancing.

d) Classification of people from other categories:

Prediction and social-distancing by developed model.

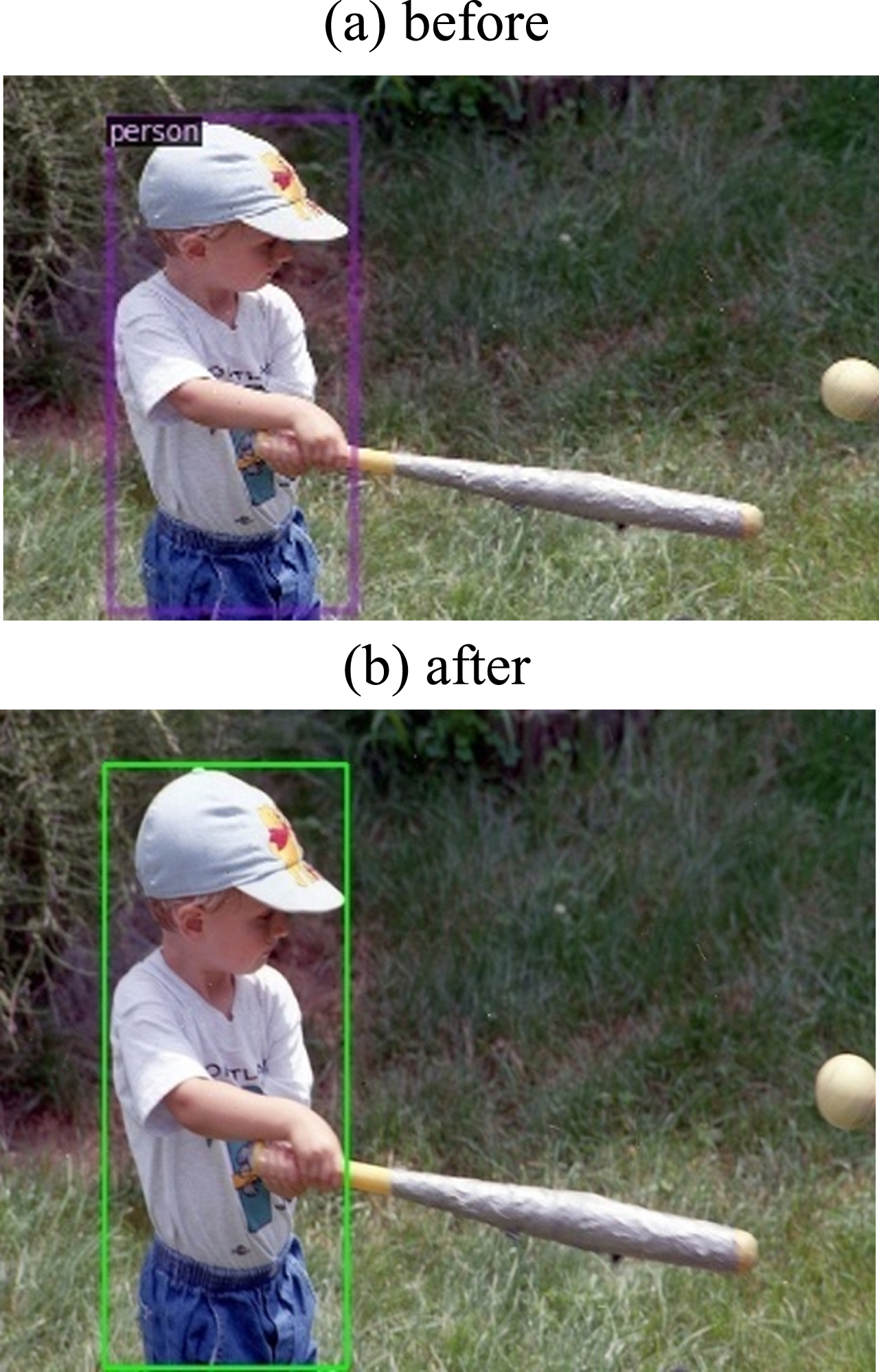

Since there is just one person in the image (refer to Fig. 11-before), the model identified the person with a green bounding box (refer to Fig. 11-after), indicating that the social-distancing norms are satisfied by the identified ‘person’.

Identification of individuals (with person class).

Identification of people in crowded areas.

Measuring the social-distancing through the developed model.

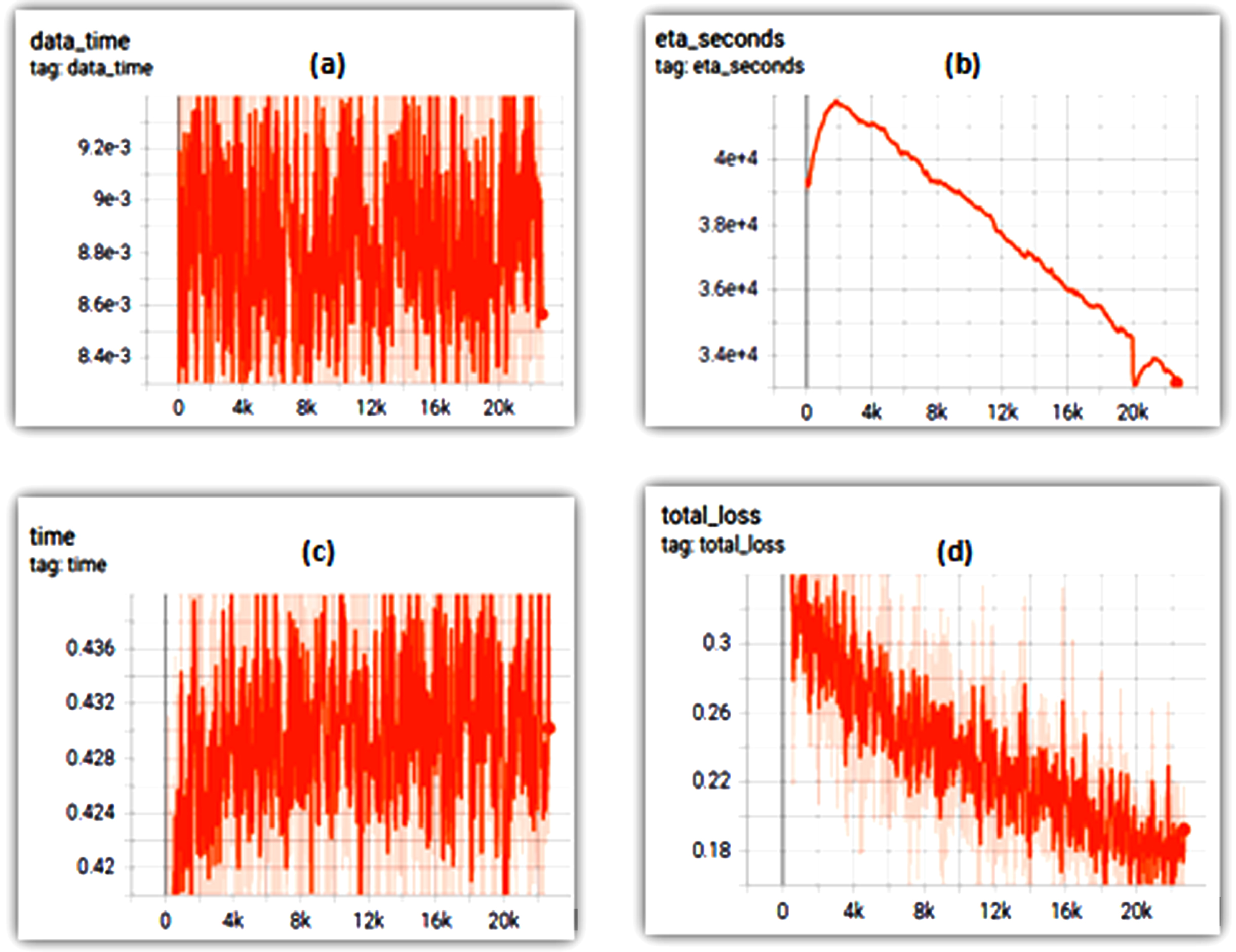

f) Loss function: Based on the estimated versus obtained outcomes, the loss-function metrics of the developed model (time, data time, total loss and ETA seconds) were evaluated in Python and are represented graphically in Fig. 14a–d:

(a) Time, (b) Data time, (c) ETA seconds and (d) Total loss.

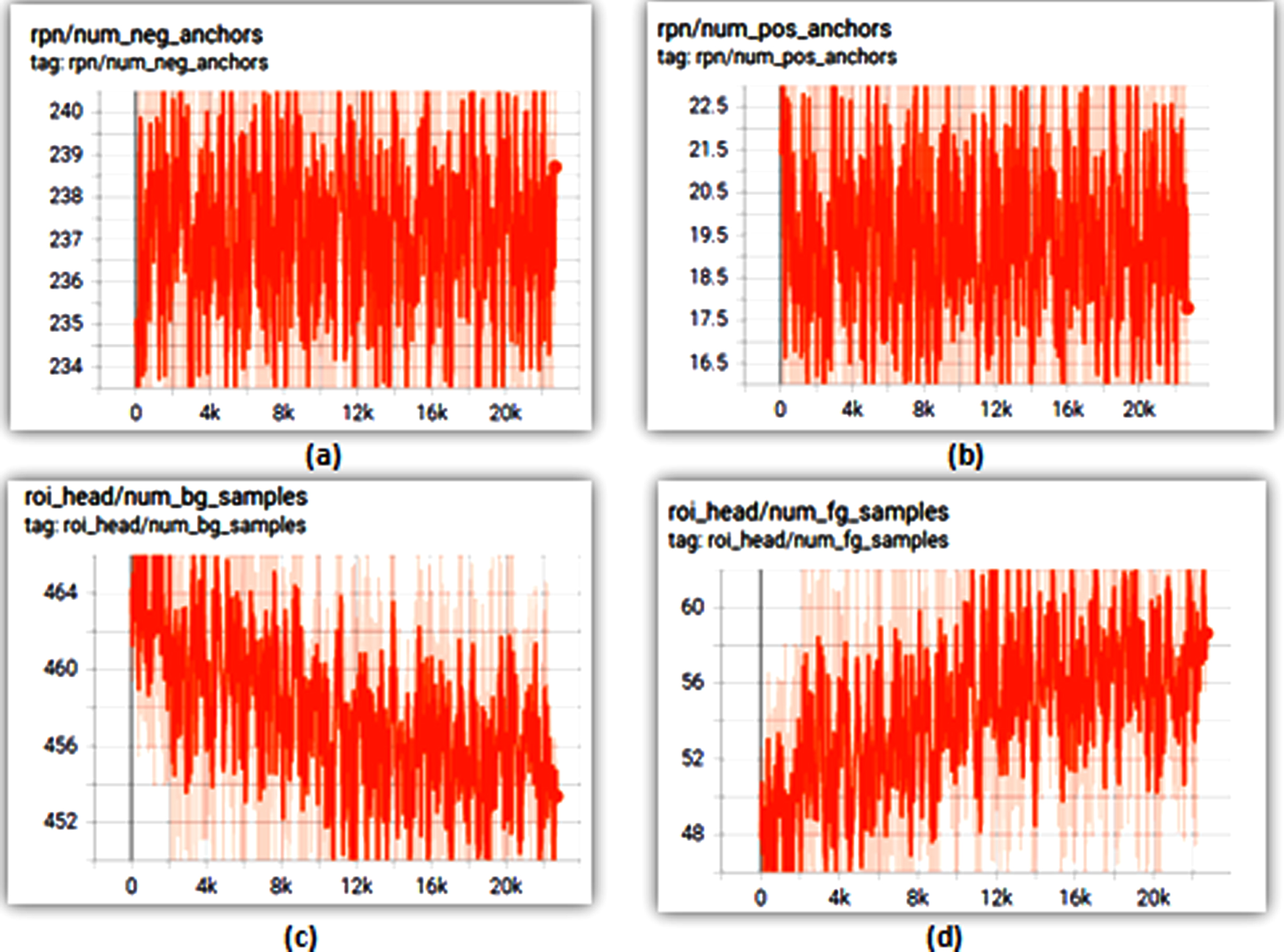

Fig. 15a and b denote the negative and positive anchor values, whereas Fig. 15c and d represent the background and foreground samples of the input images used for training the model.

RPN number (a) negative anchors and (b) positive anchors; ROI-head number (c) BG-samples and (d) FG samples.

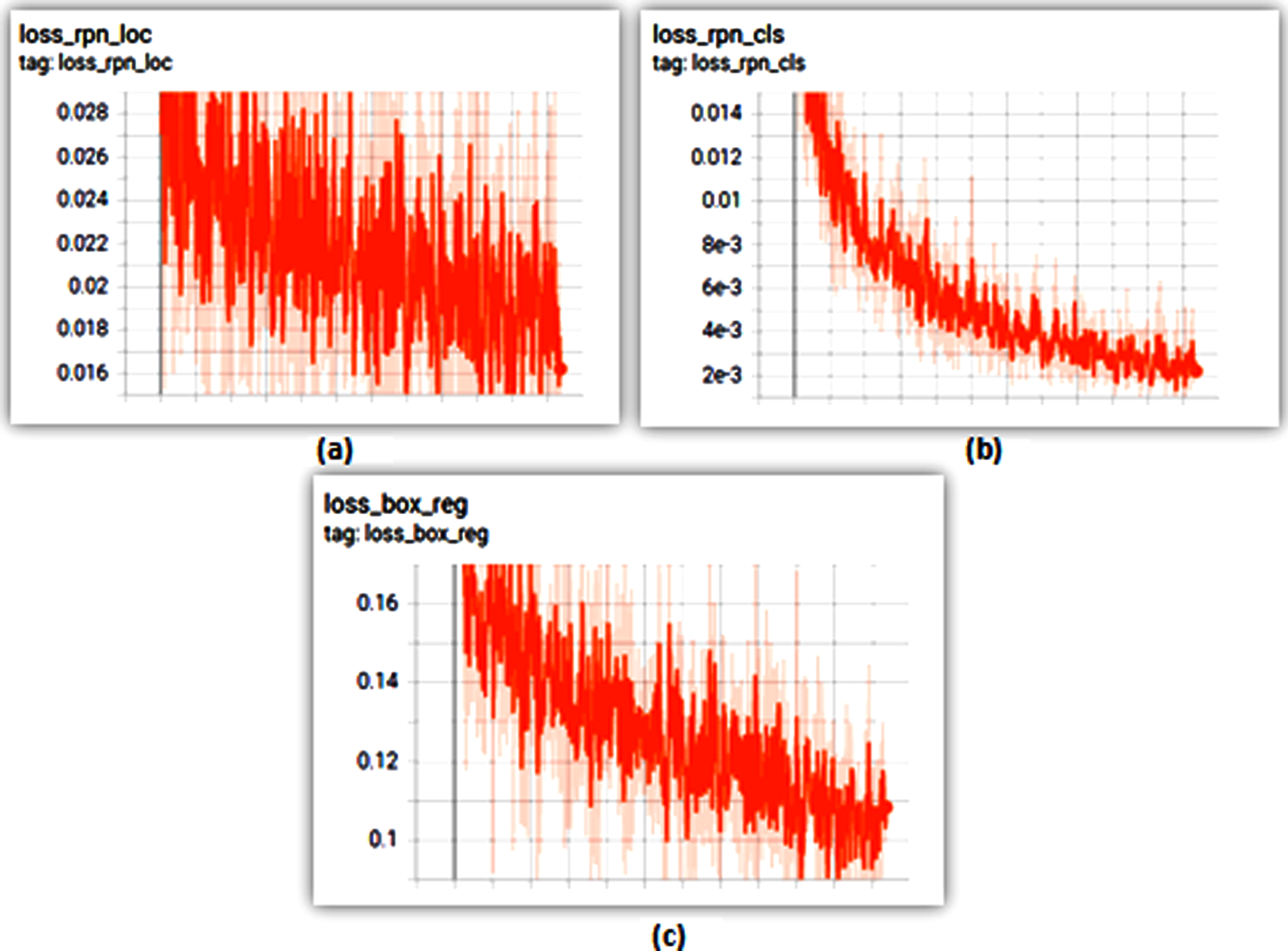

Fig. 16a–c represent the RPN losses: localization (loss-rpn-localization), class, and bounding-box regression. The results indicate that the regions overlap; thus NMS (non-maximum suppression) is used to minimize the number of proposals. The total loss of the developed model is minimized to 0.1.
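Greedy non-maximum suppression can be sketched as follows. This is a generic illustration rather than the routine used inside Detectron2: the boxes are assumed to be (x1, y1, x2, y2) tuples with one confidence score each, and the 0.5 overlap threshold, function names and sample proposals are hypothetical choices.

```python
def iou(a, b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def nms(boxes, scores, iou_thr=0.5):
    """Keep the highest-scoring boxes, dropping any that overlap a kept box."""
    # Process proposals from the most to the least confident.
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    for i in order:
        if all(iou(boxes[i], boxes[k]) <= iou_thr for k in keep):
            keep.append(i)
    return keep


# Two heavily overlapping proposals and one separate proposal (hypothetical).
proposals = [(0, 0, 10, 10), (1, 1, 11, 11), (30, 30, 40, 40)]
scores = [0.9, 0.8, 0.7]
kept = nms(proposals, scores)
```

Here the second proposal overlaps the first with an IOU of about 0.68, so only the first and third indices are retained.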

Loss RPN (a) LOC, (b) Class and (c) Box regression.

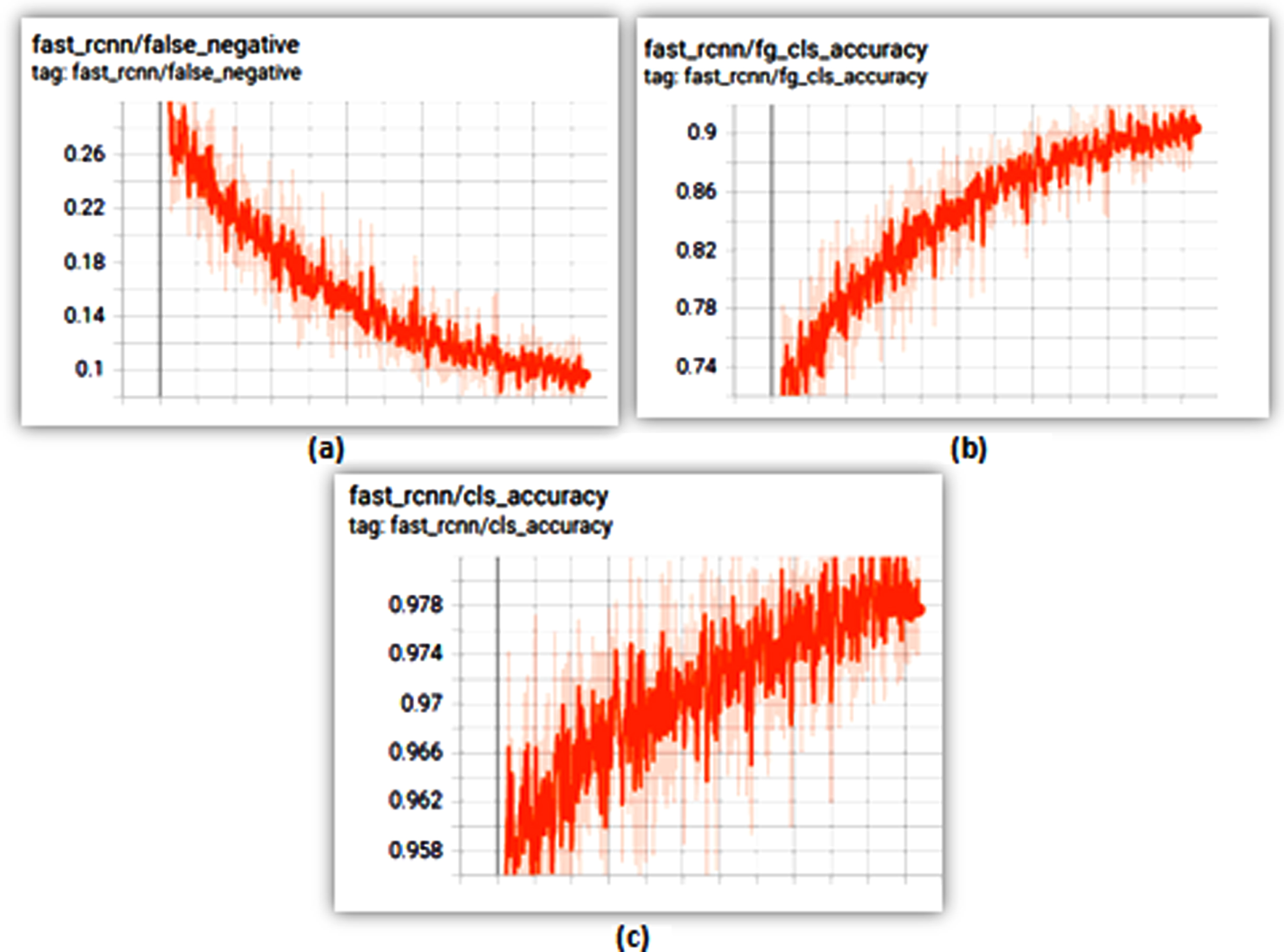

The outcomes in Fig. 17a–c show that the Faster R-CNN accuracy is 98%, the foreground accuracy is 10% and the false-negative rate is 0.1%, indicating that the model is accurate and precise in detecting objects and measuring social distancing. The obtained results were higher than the estimated outcome of 75% (the threshold value), indicating that the performance was effective.

Faster RCNN (a) False negative, (b) FG class accuracy and c) Class accuracy.

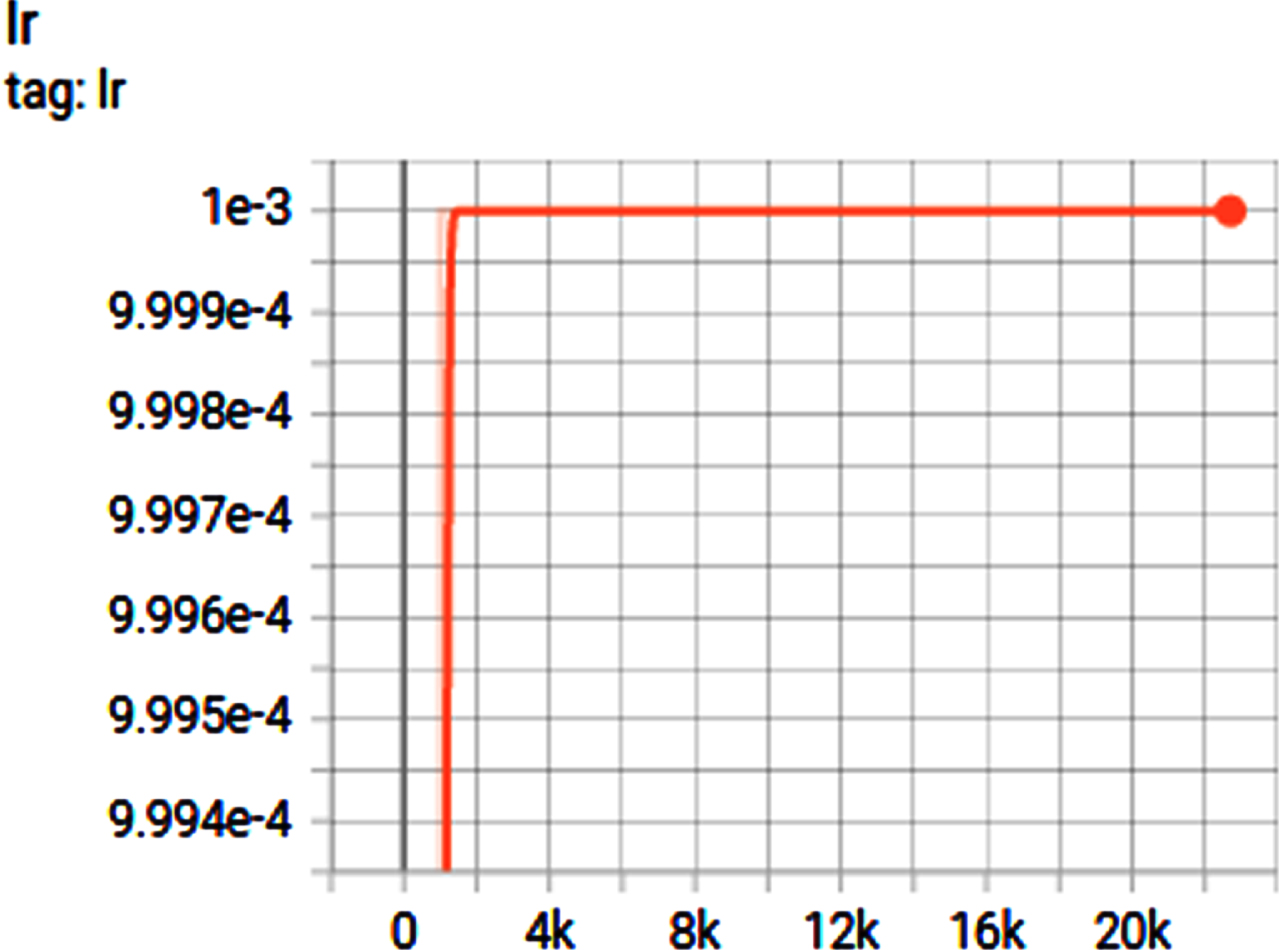

g) Scheduling LR: The learning rate (as shown in Fig. 18) is set at 4k intervals, and the developed model attained a successful learning rate at 1000k. It remained the same until 23k iterations, indicating that there was no sudden drop in the learning rate; rather, the LR steadily decreased and remained constant from 1000k-70k iterations.

Learning rate.

Outcome of the trained model

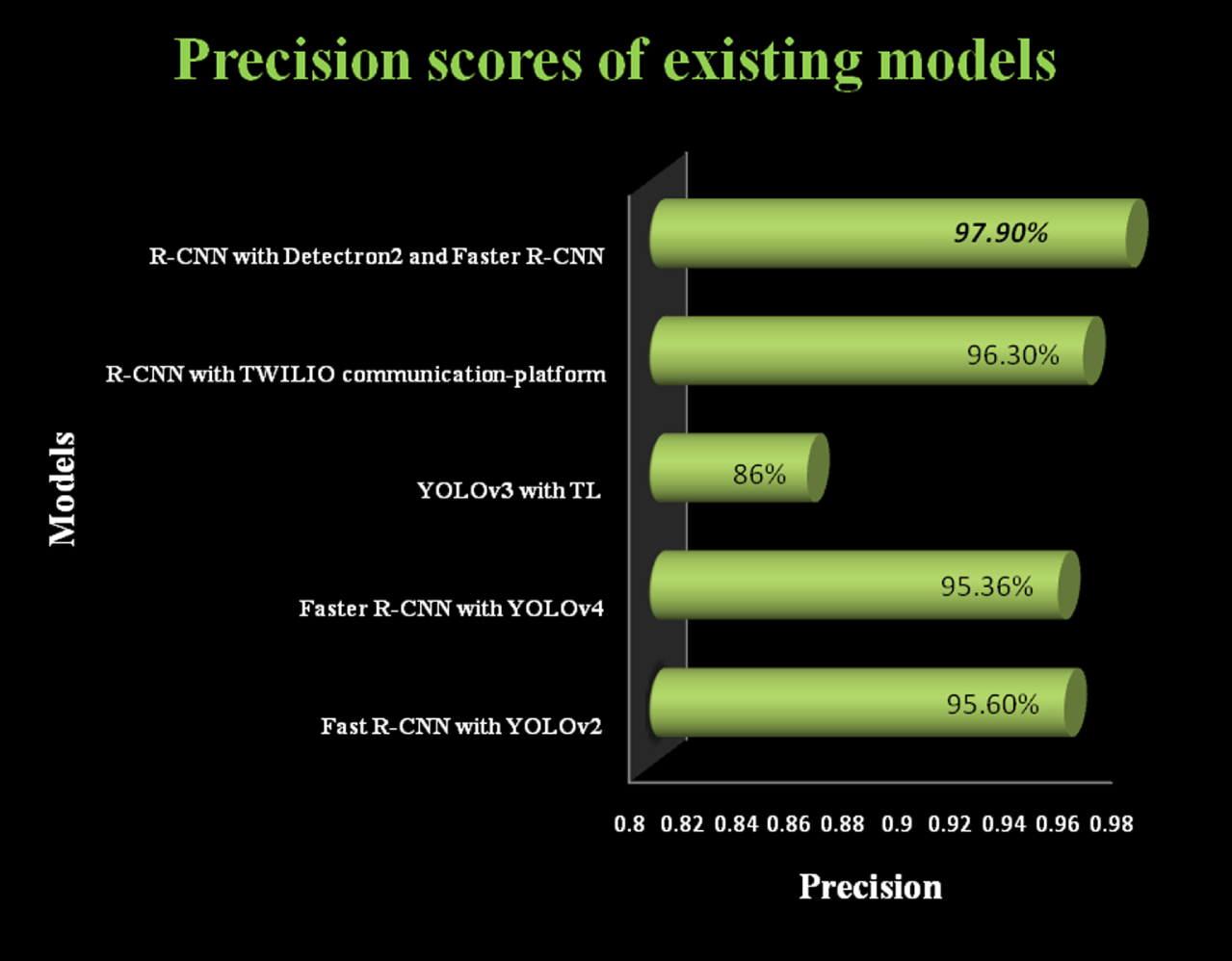

h) Performance metrics: Human detection in the developed model achieved a recall rate of 87% and a precision of 97.9%, which is above the average of 75%, indicating that the model is successful, with effective precision, a minimal total loss of 0.1 and an mAP of 84.5%; existing models (refer to Table 4) lack precision in human detection for measuring social-distancing threshold violations (refer to Fig. 19).

Comparative analysis of models and architecture

Source: Author.

Performance evaluation and comparison with existing approaches.

- R-CNN with TWILIO communication-platform architecture attained 96.30%.
- Fast R-CNN with YOLOv2 architecture attained 95.60%.
- Faster R-CNN with YOLOv4 architecture attained 95.36%.
- TL with YOLOv3 architecture attained 86.0%.

Thus, the researcher examined and evaluated the datasets with the developed Detectron2 model, and it is concluded that the developed model is successful and a good fit for object detection-based analysis models and for violation threshold-based applications in object detection and monitoring. For evaluating the social-distance criterion in particular, the model is reliable, accurate and precise, and has a fine recall score (87%) with a better mAP (84.5%) that exceeds the average score of existing models.