Abstract

Web applications play a vital role in the modern digital world. Their pervasiveness is underpinned by numerous technological advances, which can often lead to misconfigurations and thereby open the way for a variety of attack vectors. With the rapid development of E-commerce, big data, cloud computing, and other technologies, more enterprise services are moving to the internet and have increasingly become key targets of network attacks. Appropriate remedies are therefore essential to maintain security in the digital world. This paper aims to identify the vulnerabilities that need to be addressed to ensure web security. We identify and compare the static, dynamic, and hybrid tools that can counter the prevalent attacks perpetrated through the identified vulnerabilities. Additionally, we review the applications of AI in intrusion detection and pinpoint the research gaps. Finally, we cross-compare the various security models and highlight relevant future research directions.

Introduction

Web applications are becoming ubiquitous in modern digital world and play a vital role in wide-spread use of E-commerce [169]. The recent COVID pandemic has further highlighted their importance where government, semi-government, and private establishments aim to provide seamless online services to the customers. However, their prevalent usage makes them an attractive target for the malicious attackers who normally aim to leverage the underlying vulnerabilities and misconfiguration in these applications [86]. According to a recent security review by the application defense center, more than 85 percent of web applications are vulnerable to cyber-attacks [6,26,67,119]. In view of this, we begin by presenting a discussion on top ten vulnerabilities as identified by the Open Web Application Security Project (OWASP). Subsequently, we highlight main tools for scanning the web vulnerabilities i.e., OWASP, Web Application Protection (WAP), and (Re-Inforce PHP Security) RIPS [163].

OWASP serves as a core foundation for handling security issues and is therefore widely used in web security. The authors utilize free, open-source tools for scanning for web vulnerabilities: OWASP, WAP, and RIPS [163]. In addition to these tools, we identify various other approaches that can improve scanning accuracy. These include static and dynamic code analysis [103,142], Machine Learning (ML) based approaches, and many penetration testing tools [179]. We also compare static and dynamic analysis techniques, which are fast and promising for detecting vulnerabilities and can work effectively with penetration testing tools. Using a variety of testing methodologies is critical for uncovering software faults early in the development process and for preventing unauthorized access. Test automation for web applications has been conducted by introducing planning models for attacks and applying them to online application testing [20]. People must understand how to protect their websites from attacks, as well as the credentials they use on several websites. The top ten methods that every website developer and owner should use to protect their websites include updates, passwords, and many other techniques that secure a website against different types of attacks [107]. E-commerce security is another essential aspect of web application security. This article also discusses E-commerce security because of the growing number of attacks and security issues. The worldwide popularity of websites has led to an increase in electronic business and online transactions.

This paper addresses wide-ranging vulnerabilities, web security, and their impact on E-commerce. It also provides a survey of vulnerabilities, a comparison of tools, an overview of threats, and several ways to secure the web and avoid vulnerabilities. In recent decades, ML and Deep Learning (DL) have shown significant progress in web security. Therefore, we discuss several approaches based on DL and ML. Further, we discuss several open problems that can be addressed to obtain more secure and vulnerability-free systems.

The rest of the paper is organized as follows: Section 2 describes the basic theory behind advanced web security and vulnerabilities. Section 3 covers the types of web security vulnerabilities. Sections 4, 5, and 6 cover attack impact, comparison of tools, and security threats, respectively. Section 7 thoroughly studies E-commerce security with respect to its purpose, issues, tools, and secure techniques. Section 8 discusses several ways to secure the web and state-of-the-art methods based on ML and DL. Sections 9 and 10 discuss the overall issues and conclude the paper.

Theory of web security and vulnerabilities

Network Security Situation Awareness (NSSA) refers to acquiring and understanding network security elements, assessing the current network security situation, and predicting the future network security development trend [24]. In recent years, the Internet has developed rapidly, and more and more new concepts have been put forward, including “Internet of Things (IoT)”, “big data”, “cloud computing” etc. Many researchers have strengthened cybersecurity research and vigorously made several developments [69,78,91,93], including critical national information infrastructure network, spatial information infrastructure, military information infrastructure, smart city, Internet of Things, autonomous driving, etc. In the mid-1990s, attacks on web applications began nearly immediately after the inception of the worldwide web. Web application issues have become the most common factor of enterprise security breaks [75]. The web application is an essential feature because of its versatility in providing social services. The web application has rapidly grown into one of the most widely used technologies [169]. Web applications have become a popular target for internet attackers because of their growing popularity and complexity. Even with the assistance of security experts, it is still challenging to manage due to the complexity of penetration testing and code review procedures. It requires many testing methods in code review and penetration testing, and most websites are hacked daily [8].

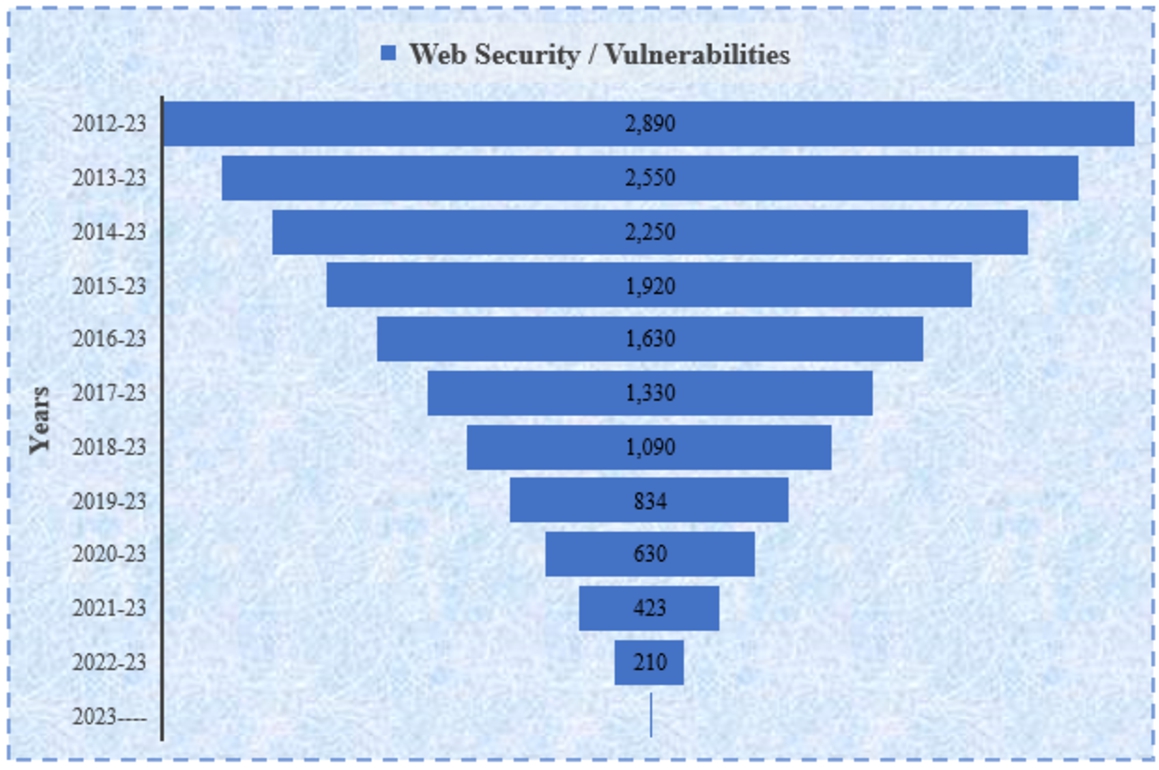

Users’ accounts and other information are now susceptible to fraud and many other risks as more customer data is transferred online through digital purchases or remittance activities [132]. Web services that handle online payments are more likely to be attacked than other websites, and the consequences are more severe if data is lost or altered. The fear of having credit card numbers or other sensitive information stolen is a primary factor that makes websites less attractive [150]. Consumers are also concerned about web security attacks, which has led to a lack of trust in the industry. The number of articles published since 2012 with web security or vulnerabilities in the title, according to Google Scholar metrics, is shown in Fig. 1. Given the importance of the topic in the current era of multimedia, it needs to be explored further to provide valuable solutions for the current limitations.

Published articles since 2012 with the title web security / vulnerabilities.

As a consequence, numerous business owners, as well as internet users, are unenthusiastic about adopting this new technology. The commercial sector is paying close attention to privacy and security because they can determine whether a business succeeds or not [162]. According to the Web Application Security Consortium (WASC) report, nearly 49% of websites have threats and risks with a high severity level, and 13% of websites can be compromised automatically because of security issues [23].

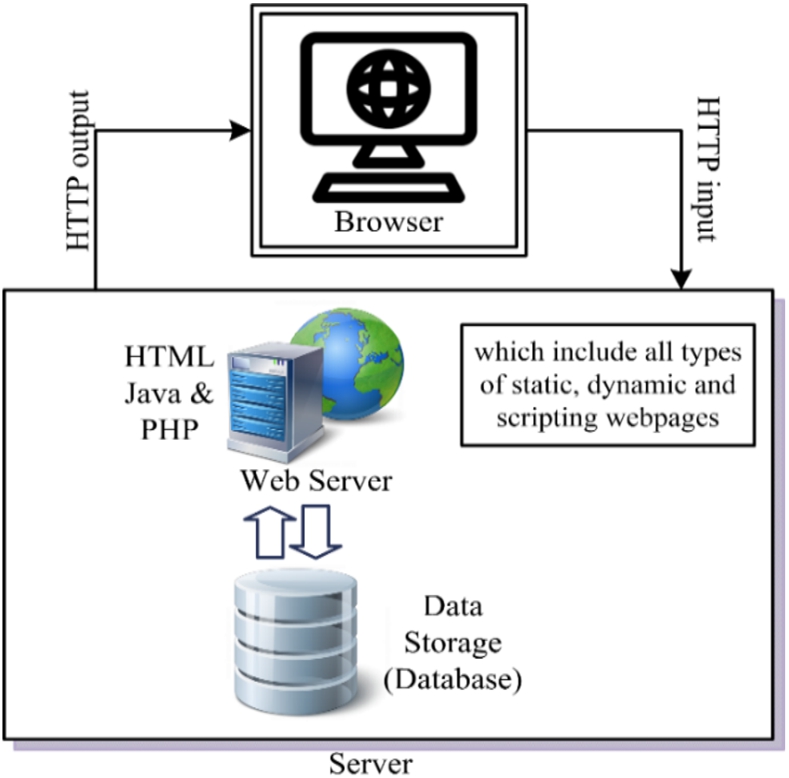

Figure 2 illustrates the basic logic of a website: an internet application with a client interface and a server end hosted on a web server, accessible via a Uniform Resource Locator (URL). The URL gives the web server a human-readable name. TCP is the transport protocol over which the browser (client) and the server communicate, HTTP is the application protocol that carries requests and responses, HTML is the hypertext mark-up language, and CSS is the style-sheet format. This is the basic architecture of data flow in a web application. The user clicks or enters a URL, and the client sends an HTTP request to the server. The server processes the request and returns the result as an HTML page (i.e., a web page), which the client’s browser displays [10].
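The request step of this flow can be sketched in a few lines of Python. This is a minimal illustration, not part of the surveyed work: the function name `build_get_request` and the example URL are assumptions, and only the text of the request is built, without opening a network connection.

```python
from urllib.parse import urlsplit


def build_get_request(url: str) -> str:
    """Build the text of a minimal HTTP/1.1 GET request for `url` (illustrative)."""
    parts = urlsplit(url)
    path = parts.path or "/"
    if parts.query:
        path += "?" + parts.query
    # The Host header names the web server; the request text itself
    # would be sent to that server over a TCP connection.
    return (
        f"GET {path} HTTP/1.1\r\n"
        f"Host: {parts.netloc}\r\n"
        "Accept: text/html\r\n"
        "Connection: close\r\n"
        "\r\n"
    )


# Hypothetical URL used only for illustration.
request_text = build_get_request("http://www.example.com/shop/item?id=42")
print(request_text)
```

The server would answer such a request with a status line, response headers, and the HTML body that the browser renders.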

Architecture of the website.

Web security and cyber security are closely related but distinct fields. Both are concerned with protecting computer systems and networks from unauthorized access, but they have different focuses and approaches [42,137]. Web security is specifically focused on protecting web applications and the data that they handle. This includes preventing SQL injection attacks, cross-site scripting (XSS) attacks, cross-site request forgery (CSRF) attacks, and other types of attacks that target web applications. Web security also includes protecting against malware that is distributed through the web and safeguarding against phishing and other types of social engineering attacks.

Cyber security, on the other hand, is a broader field that encompasses web security as well as other types of security concerns. It involves protecting all types of computer systems and networks from unauthorized access, including not only web applications but also desktop software, mobile devices, and IoT devices [80]. Cyber security also includes protecting against advanced persistent threats (APTs), nation-state cyber-attacks, and other types of sophisticated cyber-attacks [9]. One key difference between web security and cyber security is that web security is mainly focused on protecting the client-side of the application while cyber security is focused on protecting the whole infrastructure. Another key difference is that web security is mainly focused on protecting against known vulnerabilities and attacks, while cyber security is also concerned with identifying and mitigating unknown threats. Cyber security also includes incident response and disaster recovery planning, as well as compliance with regulations such as HIPAA, PCI-DSS and others.

Web security and cyber security also use different tools and techniques. Web security tools include web application firewalls (WAFs), intrusion detection and prevention systems (IDPSs), and web vulnerability scanners. Cyber security tools include firewalls, intrusion detection and prevention systems (IDPSs), antivirus software, and network security monitoring (NSM) tools.

Artificial intelligence and web security

Artificial intelligence (AI) plays a critical role in web security, helping to protect against a wide range of cyber threats [106,128]. One of the key ways in which AI is used in web security is through the development of sophisticated algorithms that can detect and respond to suspicious activity on a network [71]. These algorithms are able to analyze large amounts of data in real-time, looking for patterns that indicate a potential threat. They can also learn from past incidents, becoming more effective over time at identifying new and emerging threats. One example of how AI is used in web security is through the development of intrusion detection systems (IDS) [180]. These systems use machine learning algorithms to analyze network traffic and identify suspicious activity, such as attempts to exploit vulnerabilities or access sensitive data. They can also be configured to respond to threats in real-time, blocking malicious traffic or isolating affected systems to prevent further damage.

Another way in which AI is used in web security is through the development of advanced antivirus software [109]. These programs use machine learning algorithms to identify malware and other malicious software based on their characteristics and behavior. They can also be configured to update their databases with new malware signatures in real-time, ensuring that they are always able to detect the latest threats. AI is also used in web security through the development of behavior-based detection systems. These systems analyze the behavior of users and devices on a network, looking for patterns that indicate suspicious activity. For example, a system might flag an account that has been accessed from multiple locations in a short period of time, indicating a potential account takeover.
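The account-takeover example can be made concrete with a small sketch. It is a simple rule-based stand-in for the learned models discussed here, not an ML method; the function name, the 30-minute window, and the threshold of two locations are all assumptions chosen for illustration.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def flag_suspicious_accounts(events, window=timedelta(minutes=30), max_locations=2):
    """Return accounts seen in more than `max_locations` distinct locations
    within any time span of length `window`.

    `events` is an iterable of (account, timestamp, location) tuples.
    """
    by_account = defaultdict(list)
    for account, timestamp, location in events:
        by_account[account].append((timestamp, location))

    flagged = set()
    for account, logins in by_account.items():
        logins.sort()
        start = 0
        for end in range(len(logins)):
            # Advance the left edge until the span fits inside the window.
            while logins[end][0] - logins[start][0] > window:
                start += 1
            locations = {loc for _, loc in logins[start:end + 1]}
            if len(locations) > max_locations:
                flagged.add(account)
                break
    return flagged


t0 = datetime(2023, 1, 1, 12, 0)
events = [
    ("alice", t0, "Berlin"),
    ("alice", t0 + timedelta(minutes=5), "Lagos"),
    ("alice", t0 + timedelta(minutes=10), "Lima"),
    ("bob", t0, "Paris"),
    ("bob", t0 + timedelta(hours=2), "Rome"),
    ("bob", t0 + timedelta(hours=4), "Oslo"),
]
suspicious = flag_suspicious_accounts(events)
```

A learned detector would replace the fixed threshold with a model of each user's normal behavior, but the input data and the flagging decision have the same shape.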

In addition to these specific applications, AI is also used more broadly in web security through the development of automated incident response systems. These systems use machine learning algorithms to analyze large amounts of data from a variety of sources, such as network logs and security cameras, to identify potential threats. They can also be configured to respond to incidents in real-time, triggering security measures such as isolating affected systems or escalating the incident to human security teams for further investigation. AI is also used in web security to identify and protect against Advanced Persistent Threats (APT) [9]. These are cyber-attacks that are typically carried out by nation-states or other highly-skilled adversaries. They are typically characterized by prolonged and stealthy access to a target network, during which the attacker seeks to gain access to sensitive data or disrupt operations. AI-based security solutions can help detect APT by analyzing network traffic and identifying patterns of behavior that indicate an APT is in progress. They can also be configured to respond to APT in real-time, taking actions such as isolating affected systems or escalating the incident to human security teams for further investigation. Another way AI is used in web security is through the development of threat intelligence platforms. These platforms use machine learning algorithms to analyze data from a variety of sources, such as social media, dark web forums, and other open-source intelligence. They help to identify potential threats and provide actionable intelligence that can be used to protect against them.

Types of security vulnerabilities

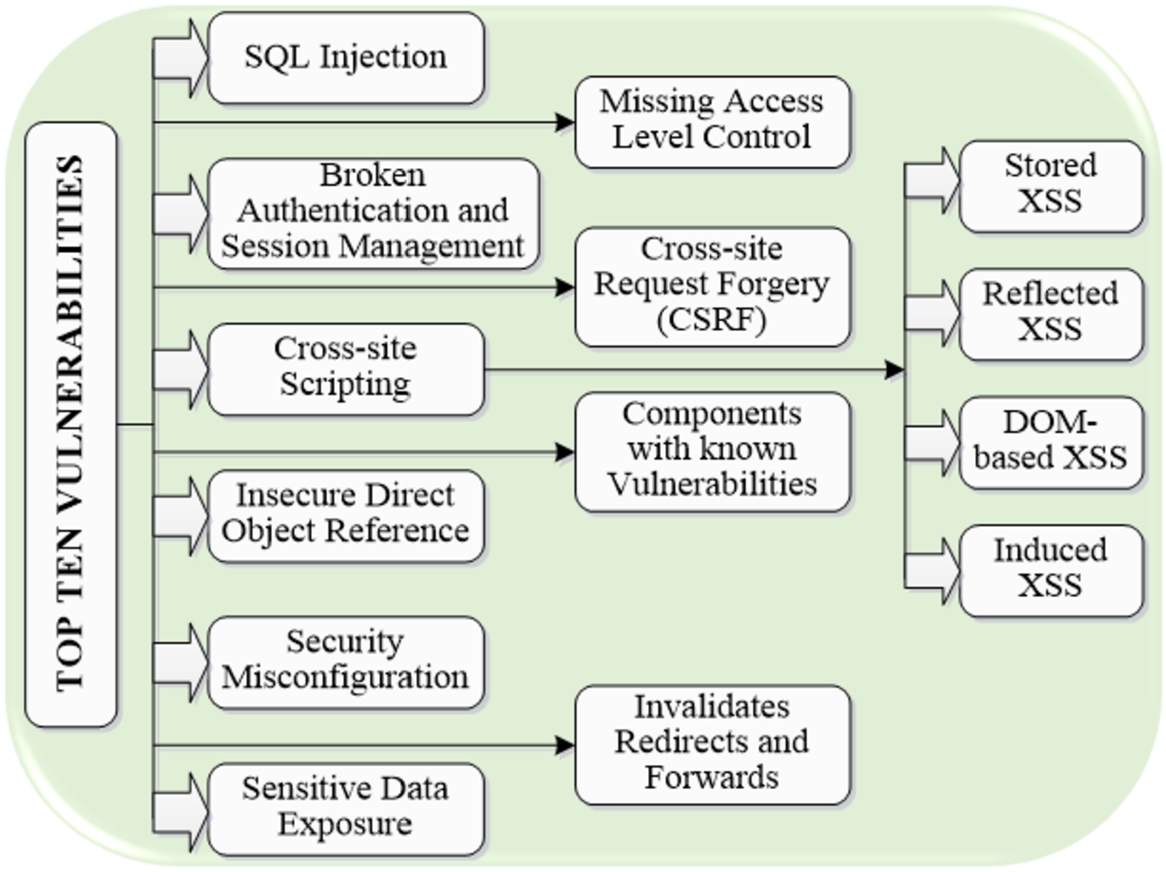

A vulnerability is defined as a set of circumstances that leads, or may lead, to an implicit or explicit failure of an information system’s confidentiality, integrity, or availability [75]. According to one report, nearly 9/10 of vulnerabilities are found during the development phase because no attention is paid to known vulnerabilities in other information systems [102]. According to the same report, the ten most common vulnerabilities are responsible for 3/4 of violations in today’s software applications. The OWASP Top Ten project emphasizes identifying the most severe threats for a wide audience. In other words, if developers are aware of these ten vulnerabilities, a large number of them can be avoided. These flaws can be complicated and appear under a variety of circumstances. Using a web application firewall may minimize the effect of some attacks, but it does not address the underlying vulnerabilities [44]. We highlight a few common attacks on web applications, such as Cross-Site Scripting (XSS), browser attacks, and cookie-session hijacking [76]. SQL injection and XSS are the security issues most commonly encountered in web applications [19,35,99,122]. We discuss the OWASP top ten vulnerabilities, which are shown in Fig. 3.

Top ten vulnerabilities.

SQL injection is a code injection technique that allows attackers to obtain critical data from the web server’s database. The attack succeeds because user inputs are not properly validated before being placed into SQL queries. An attacker can easily manipulate query results by embedding SQL keywords in user inputs [154]. SQL injection usually happens when user input is passed to the interpreter as part of a command. The attacker forces the interpreter to run the manipulated queries to acquire access to a user’s sensitive information without the user’s awareness [126]. SQL injection can cause authentication bypass, data loss, denial of access, destruction of the entire database, or host takeover.
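The authentication bypass described above, and the standard defense of parameterized queries, can be sketched with Python's built-in `sqlite3` module. The table layout, function names, and payload are assumptions made for illustration, and the password is stored in plain text only to keep the sketch short.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (name TEXT, password TEXT)")
# Plain-text password only to keep the illustration short.
conn.execute("INSERT INTO users VALUES ('alice', 's3cret')")


def login_vulnerable(name: str, password: str) -> bool:
    # UNSAFE: user input is concatenated into the SQL text, so quotes in the
    # input can change the structure of the query.
    query = (
        "SELECT COUNT(*) FROM users "
        f"WHERE name = '{name}' AND password = '{password}'"
    )
    return conn.execute(query).fetchone()[0] > 0


def login_parameterized(name: str, password: str) -> bool:
    # Safer: placeholders pass the input as data, never as SQL syntax.
    query = "SELECT COUNT(*) FROM users WHERE name = ? AND password = ?"
    return conn.execute(query, (name, password)).fetchone()[0] > 0


payload = "' OR '1'='1"
bypassed = login_vulnerable("alice", payload)      # injection succeeds
rejected = login_parameterized("alice", payload)   # injection fails
```

With the payload, the vulnerable query ends in `OR '1'='1'`, which is true for every row, so the login check passes without the real password; the parameterized version compares the payload as an ordinary string.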

Broken authentication and session management

Another widespread shortcoming on the OWASP list is broken authentication and session management, which arises from flaws in how web applications implement authentication and session handling. This vulnerability allows attackers to steal keys, passwords, credit card numbers, and session IDs. It can be caused by misconfiguration, such as storing passwords in plain text or protecting users’ credentials with weak encryption. According to OWASP, these attacks can also be caused by ineffective password management, logout mechanisms, forgotten-password functions, and other similar features [155,157]. Developers are the ones who must implement strong authentication and session management controls [55].
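Two of the weaknesses named above, plain-text password storage and guessable session IDs, can be avoided with the Python standard library alone. The following is a minimal sketch under stated assumptions: the function names are hypothetical, and the iteration count is an assumed work factor that a real deployment would tune.

```python
import hashlib
import hmac
import secrets

ITERATIONS = 200_000  # assumed work factor, not a recommendation


def hash_password(password: str) -> tuple[bytes, bytes]:
    """Return (salt, digest) so the plain-text password is never stored."""
    salt = secrets.token_bytes(16)
    digest = hashlib.pbkdf2_hmac("sha256", password.encode(), salt, ITERATIONS)
    return salt, digest


def verify_password(password: str, salt: bytes, expected: bytes) -> bool:
    digest = hashlib.pbkdf2_hmac("sha256", password.encode(), salt, ITERATIONS)
    # Constant-time comparison avoids leaking how many bytes matched.
    return hmac.compare_digest(digest, expected)


def new_session_id() -> str:
    """Generate an unpredictable session identifier."""
    return secrets.token_urlsafe(32)


salt, stored = hash_password("correct horse")
```

Only the salt and digest are stored; a stolen database then reveals neither the password nor a value that can be replayed as a login.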

Cross-site scripting

The XSS is considered one of the critical threats to the security of web applications. XSS attacks are web application attacks caused by improper validation and sanitation of inputs provided by users. Such an attack allows the attackers to run malicious scripts on the browser to steal cookies and hijack user sessions [43,45]. Session hijacking, sensitive information disclosure, perimeter defense bypassing, and site trusting are possible outcomes of XSS. These scripts can change the content of a page by rewriting the HTML.
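Since XSS stems from improper validation and sanitization of user input, one common countermeasure is to escape user-supplied text before placing it into HTML. The sketch below uses Python's standard `html.escape`; the function name `render_comment` and the sample input are assumptions for illustration.

```python
import html


def render_comment(user_input: str) -> str:
    """Embed user-supplied text in a page fragment with HTML escaping."""
    # html.escape replaces &, <, > and quote characters with entities,
    # so the browser displays the text instead of executing it.
    return f"<p>{html.escape(user_input)}</p>"


rendered = render_comment('<script>alert("x")</script>')
print(rendered)
# <p>&lt;script&gt;alert(&quot;x&quot;)&lt;/script&gt;</p>
```

Escaping of this kind covers text placed in HTML element content; input placed inside scripts, URLs, or style sheets needs context-specific encoding.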

The following are the steps that hackers take in this attack [144]:

Identify vulnerable websites and issue the necessary cookies.

Create malicious code and test it to ensure it works as expected.

Create URLs that carry the malicious code; attackers can also embed the code in web pages and e-mails.

Encourage users to run the malicious code, which will result in the account being hijacked or collecting sensitive data.

XSS attacks are classified into four types:

Stored (persistent)

Reflected (non-persistent)

Induced XSS

DOM-based XSS

The first two are still the most popular XSS attacks, while the remaining two are less well-known.

Stored XSS

This vulnerability is activated when the injected malicious code is stored indefinitely on the victim’s servers. First, the attacker looks for a weakness in the software system through which to inject malicious code. Then the attacker captures the user’s personal information, which may lead to serious damage [94]. The attack is triggered when a client retrieves the stored information through the web application, giving the attacker access to it. According to [166], stored XSS is more dangerous than the other types.

Reflected XSS

Reflected XSS attacks differ from stored XSS attacks. They target web application features that echo back data provided by the client, such as forms. Because the injected code is not stored on the server, the attacker crafts a link containing malicious script code and leads the victim to believe that the link is legitimate [167]. When the user visits the web page by clicking on the provided link, the injected script is reflected back in the server’s response and executed in the user’s browser.

DOM-based XSS

This attack is launched on the client side, similar to reflected XSS. A key difference is that the attack code is not embedded in the HTML content sent back by the server. As a result, server-side detection mechanisms that inspect items such as session cookies or form fields fail. The vulnerability may be activated when active content (e.g., a JavaScript function) is altered by a crafted request, letting an attacker control a DOM element [94,134,166,167].

Induced XSS

In induced XSS, the web server has a vulnerability known as HTTP response splitting [134]. An attacker can use this vulnerability to modify the web page by injecting content into the server’s response headers. This can be accomplished by finding an unvalidated request parameter that is copied into the HTTP response headers [73]. XSS is a commonly exploited security weakness in modern websites [141]. Stored and reflected vulnerabilities can be found in both server-side and client-side code, whereas DOM-based vulnerabilities are only detected on the client side [94]. Detecting XSS vulnerabilities has received a lot of attention. However, researchers are still trying to develop a reliable and appropriate method for analyzing source code and detecting XSS vulnerabilities in web applications [143,161].
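HTTP response splitting relies on carriage-return and line-feed characters reaching a response header. A minimal defensive sketch is shown below; the function names and the redirect scenario are assumptions for illustration, and many web frameworks already perform an equivalent check.

```python
def safe_header_value(value: str) -> str:
    """Reject header values that could start a new header line or a new response."""
    if "\r" in value or "\n" in value:
        raise ValueError("header value contains CR or LF")
    return value


def build_redirect_headers(location: str) -> str:
    # The user-influenced value is validated before it is written to a header.
    return (
        "HTTP/1.1 302 Found\r\n"
        f"Location: {safe_header_value(location)}\r\n"
        "\r\n"
    )


# A request parameter carrying an injected header (hypothetical attack input).
attack = "/home\r\nSet-Cookie: sid=attacker"
try:
    build_redirect_headers(attack)
    blocked = False
except ValueError:
    blocked = True
```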

Insecure direct object references

This vulnerability generally arises when references to internal implementation objects, such as database keys and files, are exposed to users. In the absence of a protection mechanism, an attacker can manipulate these references to access data illicitly [44]. For example, consider the case where a repository or login information file that should only be accessible by network administrators is made available to other users on the network. In the absence of an access control check, unauthorized access to these resources can often be obtained by manipulating URL parameters [65].
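The missing access control check can be illustrated with a small sketch in which an object is returned only if it belongs to the requesting user. The `Invoice` type, the in-memory `INVOICES` store, and the function name are hypothetical and stand in for a real database lookup keyed by a URL parameter.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Invoice:
    invoice_id: int
    owner: str
    total: float


# Hypothetical data store keyed by the ID that appears in the URL.
INVOICES = {
    1: Invoice(1, "alice", 120.0),
    2: Invoice(2, "bob", 75.5),
}


class AccessDenied(Exception):
    pass


def get_invoice(current_user: str, invoice_id: int) -> Invoice:
    """Look up an invoice and enforce ownership before returning it."""
    invoice = INVOICES.get(invoice_id)
    # Missing and foreign invoices get the same answer, so IDs cannot be probed.
    if invoice is None or invoice.owner != current_user:
        raise AccessDenied(f"invoice {invoice_id} is not available")
    return invoice


own = get_invoice("alice", 1)
try:
    get_invoice("alice", 2)  # alice changes the ID in the URL to bob's invoice
    leaked = True
except AccessDenied:
    leaked = False
```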

Security misconfiguration

A well-defined security configuration is required for web applications and their corresponding web servers. In most cases, the default security configurations are insecure; examples include default passwords, default accounts, and enabled directory listing. Furthermore, security mechanisms should be kept up to date. According to OWASP, security misconfigurations can enable external and internal attacks, resulting in unauthorized access or system compromise [10,36].

Sensitive data exposure

The passwords and cookies are exchanged between the browser and the server, requiring extra security because they are sensitive to the user [87]. Sometimes sensitive information is left unprotected on the web applications; attackers can easily steal or change this information and utilize it to gain access or conduct unauthorized transactions. The use of encryption schemes that are not secure can also expose sensitive information. An attacker can also use sensitive information to manipulate a web application or discover other accessible vulnerabilities [44,48].

Missing access level control

It mostly occurs when users can access restricted resources or private data without being properly authenticated or authorized. If a web application cannot control access to its resources, attackers can easily use restricted resources and can also modify data on the server, which can seriously damage data integrity. Security controls should be in place to ensure that a user is authenticated and has the appropriate access rights, especially for web applications with multiple users with different roles [1,23].

Cross-site request forgery (CSRF)

CSRF is an attack that deceives a victim into performing malicious actions on a website where he/she is currently a legitimate, authenticated user. In contrast to XSS, CSRF takes advantage of a site’s trust in the user’s browser. Because this trust exists, the website is compelled to carry out the forged requests. Malicious users create fake HTTP requests and trick victims into transmitting them using various techniques [44,98].
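A widely used countermeasure is to require a secret token, bound to the user's session, in every state-changing request, so that a forged request from another site cannot supply it. The sketch below derives the token with an HMAC; the key handling and function names are assumptions for illustration rather than a method taken from the cited works.

```python
import hashlib
import hmac
import secrets

# Server-side secret; in this sketch it is generated at start-up (assumption).
SERVER_KEY = secrets.token_bytes(32)


def issue_csrf_token(session_id: str) -> str:
    """Derive a token bound to the session; embedded in forms served to the user."""
    return hmac.new(SERVER_KEY, session_id.encode(), hashlib.sha256).hexdigest()


def is_valid_csrf_token(session_id: str, submitted: str) -> bool:
    """Accept a request only if it carries the token issued for this session."""
    expected = issue_csrf_token(session_id)
    return hmac.compare_digest(expected.encode(), submitted.encode())


session = "session-abc"            # hypothetical session identifier
token = issue_csrf_token(session)  # placed in a hidden form field
```

The browser attaches cookies to a forged request automatically, but the attacker's page cannot read the token, so the forged request fails validation.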

Components with known vulnerabilities

Web applications use a variety of components, such as modules, libraries, and a wide range of supporting frameworks, which often run with full rights. If an attacker successfully exploits a vulnerable component, sensitive data can be lost as a result of the attack. Integrating components with known vulnerabilities into a web application may enable a broader range of attacks by weakening the web application’s defensive measures [44].

Unvalidated redirects and forwards

Attackers can use this weakness to redirect victims to malicious websites because user-supplied data is usually not properly validated. Forwards can also be used to gain access to restricted pages. Due to insufficient validation mechanisms, attackers can redirect web requests to phishing or other malicious websites. This may affect data confidentiality [10,44,102].
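Validating the redirect target against an allow-list is one way to close this gap. The following is a minimal sketch; the host name in `ALLOWED_HOSTS` and the function name are hypothetical, and a real application would adapt the rules to its own URL scheme.

```python
from urllib.parse import urlsplit

ALLOWED_HOSTS = {"shop.example.com"}  # hypothetical trusted host


def safe_redirect_target(target: str, default: str = "/") -> str:
    """Return `target` only if it is a local path or an allow-listed HTTPS URL."""
    try:
        parts = urlsplit(target)
    except ValueError:
        return default
    if not parts.scheme and not parts.netloc:
        # Local path: exactly one leading slash and no backslashes, which
        # some browsers treat like forward slashes.
        if target.startswith("/") and not target.startswith("//") and "\\" not in target:
            return target
        return default
    if parts.scheme == "https" and parts.hostname in ALLOWED_HOSTS:
        return target
    return default
```

For example, `safe_redirect_target("/cart")` keeps the local path, while `safe_redirect_target("https://evil.example/login")` falls back to the default page.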

Attack impacts (low, medium, high)

The impact of a threat is determined by the methods, targets, mediums, and magnitude of the attack. The attack impact must therefore be assessed to identify the risk level of the threats discussed in this paper, which makes it relatively simple to prioritize protective mechanisms against the risk factors. Based on this information, we categorize attack impact into three risk levels: high, medium, and low. We classify each threat’s consequences using the OWASP risk rating methodology. This approach considers two kinds of impact: business and technical. Technical impact can be measured using information security properties such as confidentiality, integrity, and availability. Business impact, on the other hand, varies with the situation of each organization. Because of this limitation, we evaluate only the technical consequences of malicious activities. The percentage of attacks per risk level relates to Transport Layer Security (TLS) and websites. The study in [169] identifies twenty-six different cyber-attacks, of which ten are high-risk, eleven are medium-risk, and fifteen are low-risk.
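The technical part of the OWASP risk rating methodology can be sketched as a small calculation: factors are scored from 0 to 9, averaged, mapped to LOW (below 3), MEDIUM (3 to below 6), or HIGH (6 to 9), and then combined in a severity matrix. The sketch below is a simplification under stated assumptions: it averages only confidentiality, integrity, and availability for impact, whereas OWASP's technical impact also includes loss of accountability, and the sample scores are invented for illustration.

```python
from statistics import mean


def level(score: float) -> str:
    """Map a 0-9 score to the LOW / MEDIUM / HIGH bands."""
    if score < 3:
        return "LOW"
    if score < 6:
        return "MEDIUM"
    return "HIGH"


# Overall severity indexed by (impact level, likelihood level).
SEVERITY = {
    ("LOW", "LOW"): "Note",
    ("LOW", "MEDIUM"): "Low",
    ("LOW", "HIGH"): "Medium",
    ("MEDIUM", "LOW"): "Low",
    ("MEDIUM", "MEDIUM"): "Medium",
    ("MEDIUM", "HIGH"): "High",
    ("HIGH", "LOW"): "Medium",
    ("HIGH", "MEDIUM"): "High",
    ("HIGH", "HIGH"): "Critical",
}


def technical_risk(likelihood_factors, confidentiality, integrity, availability):
    """Combine averaged likelihood and technical impact into a severity label."""
    likelihood = mean(likelihood_factors)
    impact = mean([confidentiality, integrity, availability])
    return SEVERITY[(level(impact), level(likelihood))]


# Invented scores: likelihood averages 4.5 (MEDIUM), impact averages 7 (HIGH).
rating = technical_risk([5, 4, 6, 3], confidentiality=9, integrity=7, availability=5)
print(rating)  # High
```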

High level

This level relates to attacks that cause a significant loss of confidentiality, integrity, or availability. TLS attacks are the major source of concern in terms of confidentiality, while session management is the primary concern for authentication failures. On this basis, attacks on web applications at this level can be divided into two types: Man-In-the-Middle (MITM) and Browser Cache Poisoning (BCP). MITM and BCP are closely related techniques that increase the risk of client-server authentication breakdown. Using these attacks, the attacker can completely impersonate an entity or alter the source’s legitimacy, which eventually results in a loss of security and privacy. In terms of integrity, MITM and BCP violate both information and connection integrity: an attacker can modify any message without leaving any trace on the client or server side.

Medium level

Another class of security weaknesses belongs to the medium level. A developer’s decision to avoid robust encryption mechanisms puts the user at risk. Partially enforced encryption is generally combined with a session mechanism, so a potential attacker would still struggle to defeat such a mixed mechanism. This level also includes poor cookie implementation, which results in cookie misconfiguration. Even if an attacker successfully bypasses the Secure and HttpOnly flag mechanisms, stealing the cookie is difficult. Such weaknesses also put users’ Personally Identifiable Information (PII), such as e-mail information, at risk by exposing browsing history. As a result, the confidentiality of the data is adversely affected.
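The cookie flags mentioned above can be set explicitly when a session cookie is issued. The sketch below uses Python's standard `http.cookies` module; the cookie name `session`, the function name, and the addition of the `SameSite` attribute are assumptions for illustration.

```python
from http.cookies import SimpleCookie


def session_cookie_header(session_id: str) -> str:
    """Build the value of a Set-Cookie header with hardened attributes."""
    cookie = SimpleCookie()
    cookie["session"] = session_id
    cookie["session"]["secure"] = True        # sent over HTTPS only
    cookie["session"]["httponly"] = True      # hidden from page scripts
    cookie["session"]["samesite"] = "Strict"  # not sent on cross-site requests
    cookie["session"]["path"] = "/"
    return cookie["session"].OutputString()


header_value = session_cookie_header("abc123")
print("Set-Cookie:", header_value)
```

Omitting `Secure` or `HttpOnly` is the kind of cookie misconfiguration this level describes: the cookie may then travel over plain HTTP or be read by an injected script.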

Low level

This category includes attacks that directly affect data usage, cause data loss, or modify the user’s data as a result of weak authentication or poorly configured security headers such as HTTP Strict Transport Security (HSTS), Content Security Policy (CSP), and HTTP Public Key Pinning (HPKP). Poor implementation of HSTS and CSP has little impact on confidentiality, apart from the MITM case already discussed under the high-level category. If HPKP is not configured correctly, an attacker can attack the system’s authenticity or integrity.
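A simple check for the presence of these headers in a response can be sketched as follows. The header values shown are common illustrative settings, not recommendations from the surveyed work, and the function name is hypothetical; HPKP is left out of the check because major browsers have since removed support for it.

```python
# Illustrative header values (assumptions); tune them per application.
RECOMMENDED_HEADERS = {
    "Strict-Transport-Security": "max-age=31536000; includeSubDomains",
    "Content-Security-Policy": "default-src 'self'",
}


def missing_security_headers(response_headers: dict) -> list[str]:
    """Return the recommended headers that a response does not set."""
    # Header names are case-insensitive, so compare in lower case.
    present = {name.lower() for name in response_headers}
    return [name for name in RECOMMENDED_HEADERS if name.lower() not in present]


response = {
    "Content-Type": "text/html",
    "strict-transport-security": "max-age=31536000",
}
missing = missing_security_headers(response)
print(missing)  # ['Content-Security-Policy']
```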

Comparison between static, dynamic, and hybrid tools

Dynamic analysis cannot achieve full code coverage, which lowers its accuracy, whereas static analysis can. Several studies have tried combining these two methodologies to reduce their drawbacks and enhance their benefits [183]. Static analysis is a set of approaches for predicting dynamic behavior before execution. Its critical advantages are that it does not require the application to be deployed or executed and that, in principle, it does not produce false negatives. Dynamic analysis, on the other hand, runs a set of tests during code execution to detect vulnerabilities and prevent attacks. False positives are reduced because the analysis is performed on “live” websites. However, it is vulnerable to false negatives [30].
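The trade-off between false positives and false negatives can be quantified when the true vulnerabilities of a test application are known. The sketch below computes precision and recall for a scanner report; the finding identifiers and the two sample reports are invented for illustration and do not come from any measured tool.

```python
def scanner_metrics(reported: set, actual: set) -> dict:
    """Compare a scanner's findings with the known vulnerabilities."""
    true_pos = len(reported & actual)
    false_pos = len(reported - actual)   # reported but not real
    false_neg = len(actual - reported)   # real but missed
    precision = true_pos / (true_pos + false_pos) if reported else 0.0
    recall = true_pos / (true_pos + false_neg) if actual else 0.0
    return {
        "false_positives": false_pos,
        "false_negatives": false_neg,
        "precision": precision,
        "recall": recall,
    }


# Invented ground truth and reports for a deliberately vulnerable application.
actual = {"sqli:login.php:12", "xss:search.php:40", "xss:profile.php:7"}
static_report = actual | {"sqli:report.php:3"}   # finds all, plus a false alarm
dynamic_report = {"sqli:login.php:12"}           # no false alarm, misses two

static_result = scanner_metrics(static_report, actual)
dynamic_result = scanner_metrics(dynamic_report, actual)
```

In this invented example the static report has full recall but lower precision, and the dynamic report has full precision but lower recall, mirroring the tendencies described above.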

These tools can be compared by installing deliberately vulnerable applications written in various programming languages, in which SQL injection, directory traversal, XSS, and SQL command injection are possible. The vulnerable applications were analyzed using dynamic tools such as AppSpider and Burp Suite Pro on a Windows workstation. The applications ran on a Windows operating system with Java (i.e., Tomcat) and on a Linux server with a PHP interpreter. After the scanning process completes, the result is a report with all details, such as where each vulnerability was discovered and the time taken to scan the program. The scanners are then compared based on these reports.

In the static approach, the static scanner can be run directly on a local machine or Linux system, and the vulnerable application is scanned within a manually recorded time frame. After the analysis completes, the scanner provides a detailed report of the results, and the outcomes are explored based on this information. Some static scanners, such as SonarCloud, can be accessed via the internet; after scanning, the scanner generates a report with the complete required information. FindBugs is an IDE plug-in that inspects vulnerable applications and raises a notification if the code includes a vulnerability; the plug-in’s completion time is recorded along with the vulnerabilities it discovers. The hybrid method combines dynamic and static approaches to address the vulnerabilities mentioned above. However, hybrid tools also inherit the drawbacks of both approaches and are inefficient in practice [174]. The significant increase in vulnerabilities shows that present code evaluation and software quality assurance approaches must be enhanced in capability and reliability [164].

Security threats

Web services introduced new forms of threats or attacks. Security risks can be divided into the following few categories.

Denial of Services

Unauthorized Access

Identity Spoofing

Security (Theft and Fraud)

Denial of services

Website technologies are well recognized for detecting standard denial of service activities. A denial of service (DoS) attack prevents a system or infrastructure from working correctly. It is usually performed by sending a large number of requests to the application. If the application receives more requests than it can manage, it will hang, and users will be unable to use the service [16]. A distributed denial of service (DDoS) attack is one in which multiple computers are employed to cause the denial of service [155]. DoS attacks are classified into spamming and viruses [116].
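One basic mitigation against request floods is to limit how many requests each client may send within a time window. The following sliding-window limiter is a minimal sketch; the class name, the limits, and the use of an explicit `now` argument (to keep the example deterministic) are assumptions, and per-client limiting alone does not stop a distributed attack from many sources.

```python
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Allow at most `max_requests` per client within `window_seconds`."""

    def __init__(self, max_requests: int, window_seconds: float):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._hits = defaultdict(deque)  # client -> timestamps of accepted requests

    def allow(self, client: str, now: float) -> bool:
        hits = self._hits[client]
        # Forget requests that have left the window.
        while hits and now - hits[0] >= self.window_seconds:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False  # over the limit: reject instead of overloading the app
        hits.append(now)
        return True


limiter = SlidingWindowLimiter(max_requests=3, window_seconds=10)
decisions = [limiter.allow("10.0.0.1", t) for t in (0.0, 1.0, 2.0, 3.0)]
later = limiter.allow("10.0.0.1", 10.0)
```

In a server, `now` would come from a monotonic clock such as `time.monotonic()`, and the client key would typically be an IP address or an API credential.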

Unauthorized access

It refers to unauthorized access to systems, applications, or data. We must ensure that no information is accessible to unauthorized users when designing and implementing a web application. We should use strong authentication and authorization to authenticate and authorize the service customers. We can avoid this by preventing sensitive information from passing through SOAP headers and using strong encryption techniques to encrypt the communication channel [150].

Data alteration and message replay

Identity spoofing

Spoofing is the technique of deceiving someone by pretending to be another party. It is the most popular method of attack on a system that uses user credentials. Identity spoofing is the illegal usage of a user’s credentials via web services.

Security (theft and fraud)

We already discussed data theft under unauthorized access. Fraud occurs when someone uses stolen or modified data with wrongful intent. Theft also covers software, through unauthorized copying from a company’s server, and hardware, such as laptop theft.

E-commerce security

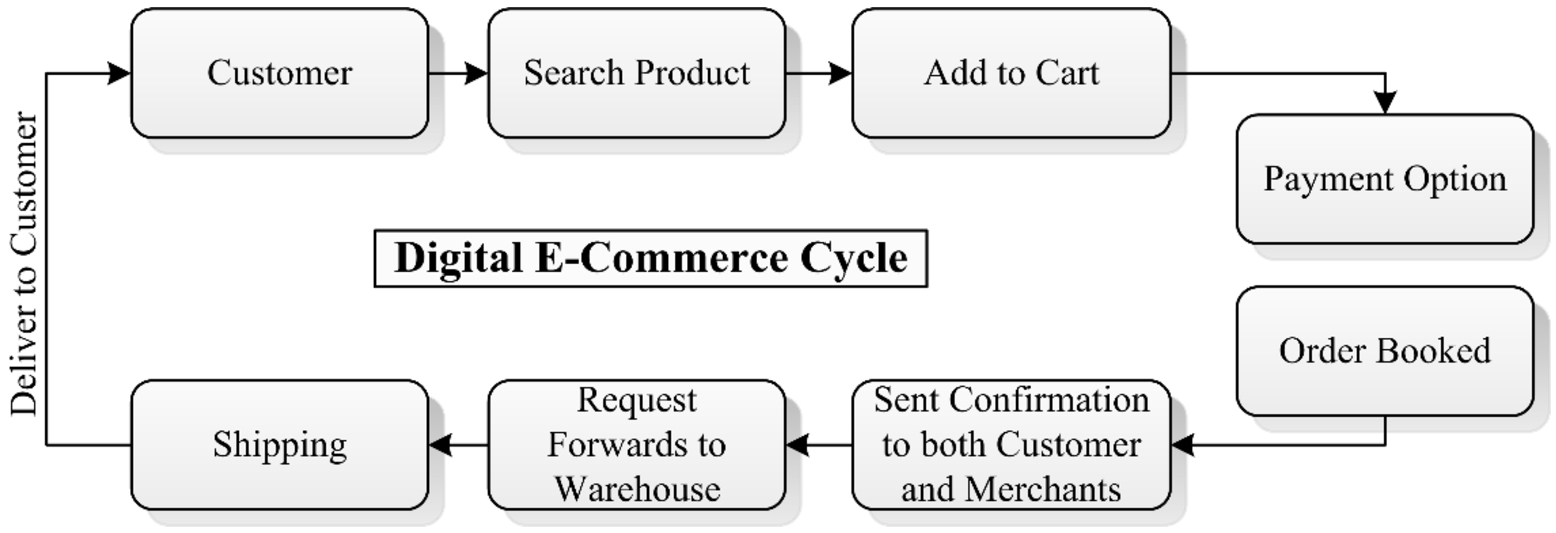

Digital E-commerce cycle

A high level of security is required for shopping and E-commerce websites. Many products, from clothing to automobiles, are now ordered online because it is a convenient and straightforward way to purchase goods. Popular websites include Amazon, eBay, Best Buy, and many others [13]. The Internet has suffered various network attacks that threaten everyone’s network information security. If such attacks are not taken seriously, they may bring severe economic losses and even affect the network security of a whole country. For example, Ctrip, a well-known tourism website in China, suffered an unknown network attack in 2015, which led to direct downtime of the company’s servers for up to 12 hours. Likewise, the United States encountered the most significant DDoS attack in history, which affected Google, Facebook, Twitter, and other well-known enterprises and directly led to the “disconnection” of half of the country. In 2019, the Amazon AWS DNS service (i.e., Route 53) was hit by a distributed denial of service (DDoS) attack. The attacker sent a large amount of useless data to the server, resulting in slow responses and even server downtime. Similarly, by sending a large amount of meaningless data, an attacker can exploit the TSIG configuration and cause a BIND domain name resolution server to crash.

Many companies are taking advantage of E-commerce opportunities. The main attractions for businesses are high efficiency, low cost, and greater profitability. Still, security issues in E-commerce are a significant cause of loss and make websites less attractive [22]. In E-commerce security, several key aspects need to be analyzed, such as computer security, data security, and network security. Because of the unique structure of E-commerce, even the most visible security components can affect the end-user through everyday payment interactions with a business [116]. Data loss is a crucial concern because E-commerce websites deal with online shopping and payments by debit card, credit card, and PayPal, which increases website risk. Web mining technologies can also be used to improve the security of E-commerce websites. The relationship between web data extraction, privacy, and E-commerce is observed using online user behavior. E-commerce websites are secured using a variety of web mining techniques and security algorithms [3,90,96,118].

A risk assessment model has been designed using the Fuzzy Inference System (FIS). The model generates risk assessment results based on four risk factors: vulnerability, threat, possibility, and influence, and its feasibility has been verified [5]. Hu et al. established a quantitative security risk assessment model that predicts the threat to network security based on a dynamic Bayesian attack graph and then performs a quantitative assessment of security risk based on the threat prediction [54]. An evaluation index system was proposed for enterprise cloud accounting risk evaluation, which divides the risk evaluation into internal and external service factors, subdivided into a standard layer and an index layer [32]. Han et al. addressed the security problems in the cloud IoT with a three-layer index system based on Software Defined Network (SDN). The assessment is divided into the non-overlapping perception layer, SDN layer, and cloud application layer according to the cloud IoT architecture [47]. Some researchers have also established evaluation index systems from the perspective of specific network attacks. To quantify the attack effect of an Advanced Persistent Threat (APT) and to make APTs easier to visualize, the authors built an index system based on the persistence, concealment, diffusion, and intractability characteristics of APTs [85]. The entire digital E-commerce cycle is shown in Fig. 4.

E-commerce cycle.

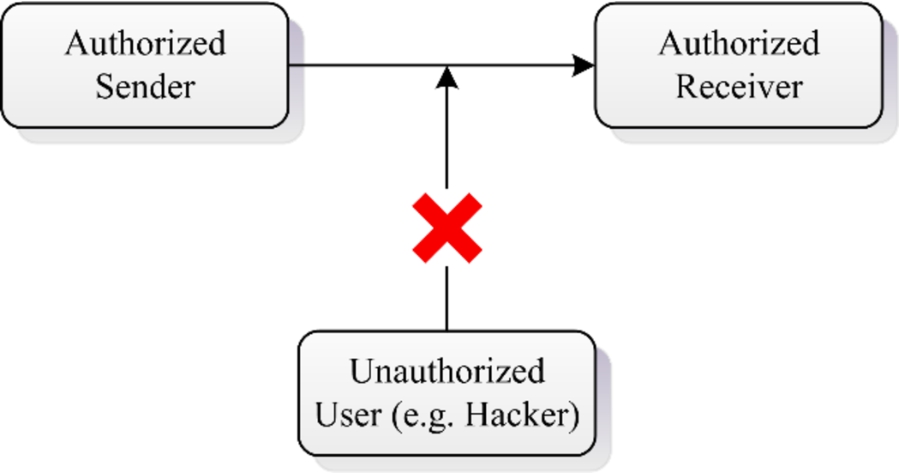

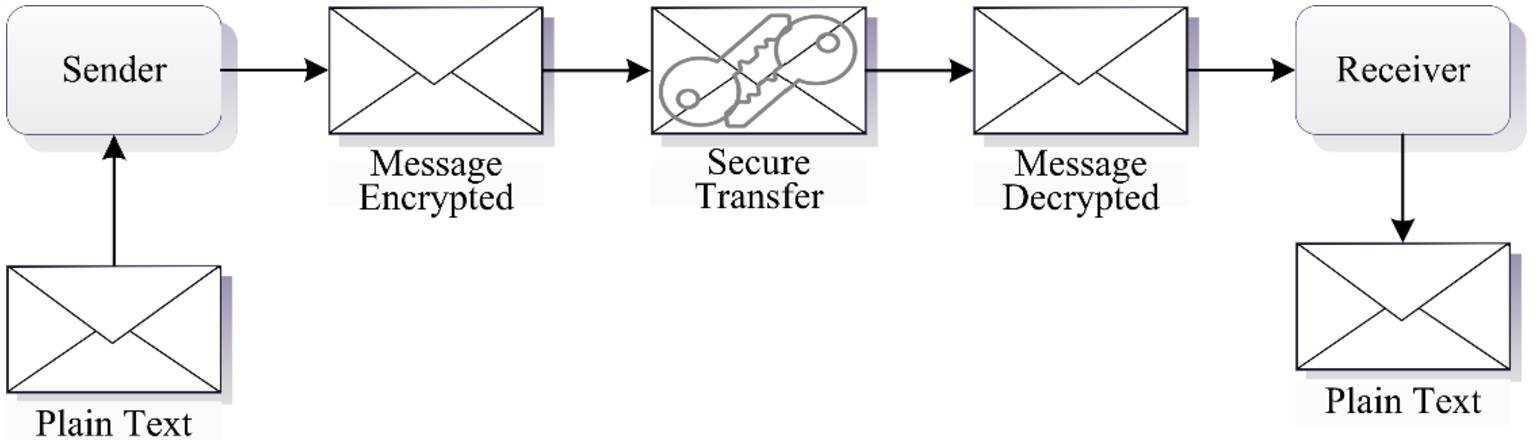

Data confidentiality

It involves securing valuable data from unauthorized access by a third party, as shown in Fig. 5. Basically, it provides encryption/decryption [13].

Confidentiality.

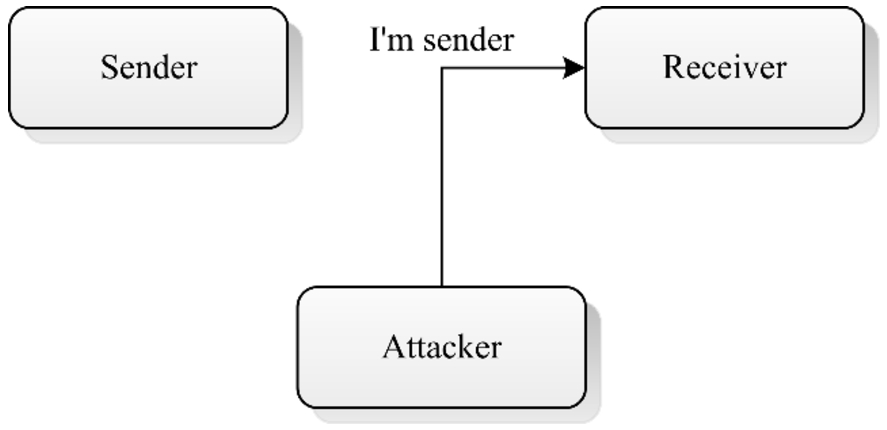

Digital signatures are used to authenticate the identity of the person who wants to use the service; basic authentication is shown in Fig. 6. In other words, authentication means verifying the sender’s identity. A message authentication code (MAC) is one of the most common mechanisms used to authenticate a message [72].
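As a minimal sketch of message authentication with a MAC, the following Python example uses the standard `hmac` and `hashlib` modules. The key, the message format, and the function names `make_tag` and `verify_tag` are illustrative assumptions and are not taken from [72].

```python
import hashlib
import hmac


def make_tag(key: bytes, message: bytes) -> str:
    """Return a hex-encoded HMAC-SHA256 tag for the message."""
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def verify_tag(key: bytes, message: bytes, tag: str) -> bool:
    """Recompute the tag and compare it in constant time."""
    return hmac.compare_digest(make_tag(key, message), tag)


# Hypothetical shared secret, assumed to be known only to sender and receiver.
key = b"shared-secret-key"
order = b"order=42;amount=19.99"
tag = make_tag(key, order)

print(verify_tag(key, order, tag))                     # genuine message
print(verify_tag(key, b"order=42;amount=0.01", tag))   # altered message
```

The receiver accepts a message only when the recomputed tag matches, so a party without the key cannot forge or alter a message undetected.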

Authentication.

Authorization controls which features a user is allowed to access. Valid credentials are required [123].

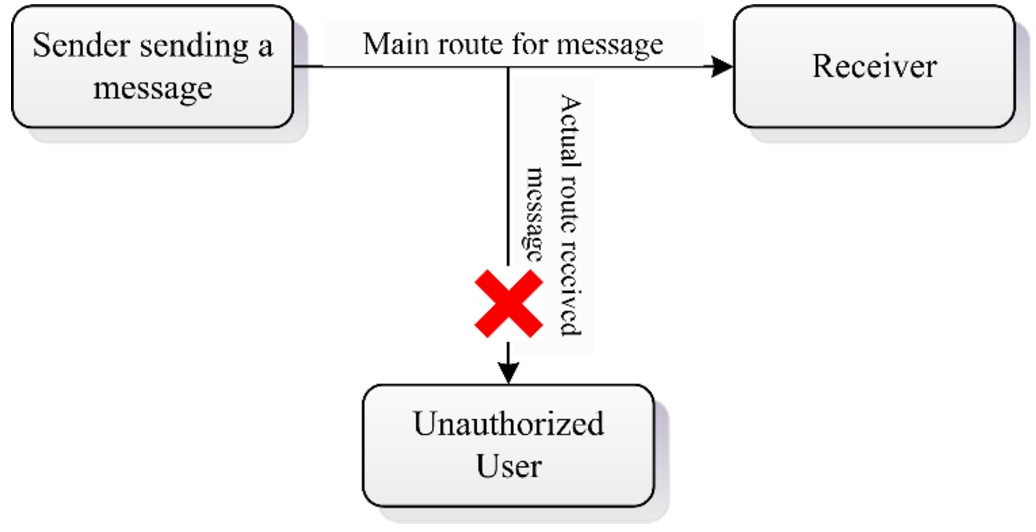

Data integrity

Data integrity ensures that the data has not been altered. This is achieved through the use of a message digest, or hashing. Integrity is a method of maintaining the data’s trustworthiness, consistency, and accuracy throughout its life cycle, as shown in Fig. 7. It also refers to the process of protecting data from unauthorized modification. In a network, this can be done by implementing a hashing algorithm such as the Secure Hash Algorithm (SHA) [34].
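The integrity check described above can be sketched with SHA-256 from Python’s standard `hashlib` module. The sample record and the helper names `digest` and `is_unmodified` are assumptions made for illustration only.

```python
import hashlib


def digest(data: bytes) -> str:
    """Return the hex-encoded SHA-256 message digest of the data."""
    return hashlib.sha256(data).hexdigest()


def is_unmodified(data: bytes, expected_digest: str) -> bool:
    """Data is considered intact if its digest equals the stored one."""
    return digest(data) == expected_digest


# Hypothetical record; the digest is stored or sent alongside the data.
original = b"invoice #1001: total 250.00"
stored_digest = digest(original)

print(is_unmodified(original, stored_digest))
print(is_unmodified(b"invoice #1001: total 950.00", stored_digest))
```

A plain digest only detects accidental or unauthenticated changes; an attacker who can replace both the data and the digest is not stopped, which is why a keyed MAC or a digital signature is used when the channel is untrusted.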

Integrity.

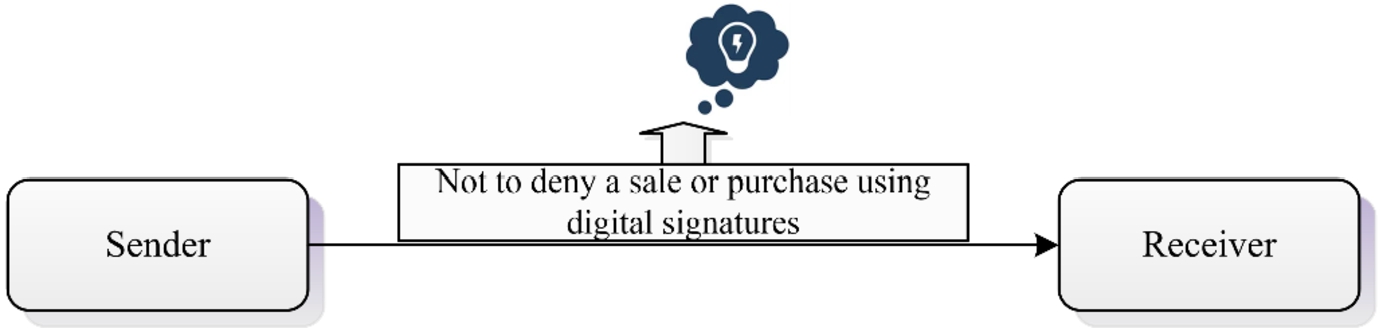

Non-repudiation ensures that a party cannot deny a sale or purchase that was made using a digital signature, as shown in Fig. 8. Alternatively, it defines a service that can prove the data’s integrity and origin [177].

Non-repudiation.

Plaintext/Cleartext: a message that is readable by humans.

Ciphertext: an unreadable message that is produced by encryption and recovered by decryption.

The whole encryption-decryption process is shown in Fig. 9. A cipher is a cryptographic process built on a mathematical operation. Most attacks aim to locate the keys, and a successful attack can be catastrophic.

Encryption and decryption process.

The security of E-commerce assets against illegal entry, usage, variation, or damage is known as E-commerce security. While security features cannot guarantee a secure system, these are necessary for its progress. The given security protocols should be considered for robust E-commerce security:

E-commerce security tools

A computer becomes vulnerable to cyber-attack when it is connected to a network. A firewall protects the computer by restricting all types of traffic that the computer initiates or that is directed at it [152]. Some essential security tools are listed below:

Public key infrastructure

Encryption software: encryption is the transformation of essential data from any form into a form that can be read only with the help of a decoding key

Digital certificates (certificates of authenticity)

Digital signatures (signatures in digital form)

Biometrics: eye scanner, fingerprints, etc.

Passwords

Locks and bars: network operations center

Secure protocols (protocols for providing security)

Data mining techniques to secure E-commerce website

We can secure an E-commerce website using web mining techniques and can evaluate the relationship between web mining and security based on user behavior. Web mining is a branch of data mining that automatically searches and collects information across web documents. It supports news services, E-commerce data, financial management, and other services. Web mining frameworks can be used to evaluate E-commerce websites. In general, web mining can be classified as follows:

Web Structure Mining

Web Content Mining

Page rank and trust rank algorithms are the most popular techniques in web mining.

Web structure mining

At this stage, we need to examine a website using both algorithms. A page’s rank is determined by its link structure rather than its content. The trust rank is an algorithm to evaluate website quality. The outcome is a quality-based score that demonstrates the website’s level of trustworthiness. The first step is to collect information from websites and store it in a web storage system [96].

Search engines use the page rank algorithm. A website’s page rank can be determined by parsing web pages for links, computing the page rank iteratively, and sorting the documents by rank in the search engine.
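The iterative computation can be sketched as follows. This is a simplified power-iteration version of page rank over a small hypothetical link graph; the damping factor of 0.85, the fixed iteration count, and the page names are assumptions and do not reproduce any particular search engine.

```python
def page_rank(links, damping=0.85, iterations=50):
    """Compute page rank by power iteration.

    links maps each page to the list of pages it links to.
    """
    pages = set(links)
    for targets in links.values():
        pages.update(targets)
    pages = sorted(pages)
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}

    for _ in range(iterations):
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # A page shares its rank equally among its outgoing links.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # A page without outgoing links spreads its rank evenly.
                share = damping * rank[page] / n
                for target in pages:
                    new_rank[target] += share
        rank = new_rank
    return rank


# Hypothetical link structure of a small shop website.
toy_web = {
    "home": ["shop", "about"],
    "shop": ["home", "cart"],
    "about": ["home"],
    "cart": ["shop"],
}
ranks = page_rank(toy_web)
for page in sorted(ranks, key=ranks.get, reverse=True):
    print(page, round(ranks[page], 3))
```

The ranks depend only on the link structure, not on page content, which matches the description of page rank given earlier in this section.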

The trust rank algorithm is a method for determining the quality of websites by generating a measurement for page quality based on the linking structure. The trust rank algorithm procedure is given below:

The selection of trustworthy web pages is the starting point.

Trust is transferred by linking to other pages.

Trust spreads in the same way that page rank does.

A negative measure propagates in reverse, indicating flawed pages.

The ranking algorithm can consider both measures.
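A minimal sketch of the forward trust propagation is given below. It treats trust rank as a page rank variant whose random jumps return only to a hand-picked seed set; the reverse propagation of the negative measure is not implemented. The graph, the seed page, and the parameter values are hypothetical.

```python
def trust_rank(links, trusted_seeds, damping=0.85, iterations=50):
    """Propagate trust from a seed set along outgoing links."""
    pages = set(links) | set(trusted_seeds)
    for targets in links.values():
        pages.update(targets)

    # All initial trust sits on the manually selected seed pages.
    seed_weight = {
        page: (1.0 / len(trusted_seeds) if page in trusted_seeds else 0.0)
        for page in pages
    }
    trust = dict(seed_weight)

    for _ in range(iterations):
        new_trust = {page: (1.0 - damping) * seed_weight[page] for page in pages}
        for page, targets in links.items():
            if targets:
                # Trust is split among the pages that this page links to.
                share = damping * trust[page] / len(targets)
                for target in targets:
                    new_trust[target] += share
        trust = new_trust
    return trust


# Hypothetical web graph: "spam" and "spam2" only link to each other.
web = {
    "bank": ["news"],
    "news": ["blog"],
    "blog": [],
    "spam": ["spam2"],
    "spam2": ["spam"],
}
trust = trust_rank(web, trusted_seeds={"bank"})
print(trust)
```

Pages that no trusted page reaches, directly or indirectly, keep a trust score of zero, while trust decays with each additional link away from the seed.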

Web content mining

Web content mining is the discovery of new information from the content of a website. Users obtain information about any topic of their choice. Web content mining techniques can be classified into hierarchical clustering and K-means clustering.

Hierarchical clustering is a bottom-up clustering method in which clusters have many sub-clusters. It begins with each object in its own cluster. In each subsequent iteration, it merges the closest pair of clusters according to some chosen features, until all objects are collected in one cluster. It can generate an ordering of the objects that is useful for displaying data. In addition, smaller clusters are formed along the way, which may aid the discovery process.
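The bottom-up merging can be illustrated with a short sketch. It assumes single-linkage distance (the distance between the two closest members of two clusters) and two-dimensional points; both choices, as well as the function name `agglomerate`, are assumptions made for the example.

```python
import math


def agglomerate(points, target_clusters=1):
    """Merge the closest pair of clusters until target_clusters remain."""
    clusters = [[point] for point in points]  # every object starts alone
    merges = []
    while len(clusters) > target_clusters:
        best = None
        for i in range(len(clusters)):
            for j in range(i + 1, len(clusters)):
                # Single linkage: distance between the closest members.
                d = min(math.dist(a, b) for a in clusters[i] for b in clusters[j])
                if best is None or d < best[0]:
                    best = (d, i, j)
        d, i, j = best
        merges.append((clusters[i], clusters[j], d))
        merged = clusters[i] + clusters[j]
        clusters = [c for idx, c in enumerate(clusters) if idx not in (i, j)]
        clusters.append(merged)
    return clusters, merges


# Hypothetical two-dimensional feature vectors of four web documents.
documents = [(0, 0), (0, 1), (5, 5), (5, 6)]
clusters, merges = agglomerate(documents, target_clusters=2)
print(clusters)
```

The recorded `merges` list gives the order in which clusters were joined, which is the information a dendrogram would display.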

K-means is a clustering technique that divides n observations into k clusters, with each observation belonging to the cluster with the closest mean. When dealing with many variables, K-means may scale better than hierarchical clustering (i.e., when K is small). K-means clustering may also generate tighter clusters than hierarchical clustering if the clusters are globular.
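A minimal K-means sketch is shown below. For reproducibility it uses the first k points as initial centroids, which is an assumption made for the example; practical implementations usually choose the initial centroids more carefully. The sample points are hypothetical.

```python
import math


def k_means(points, k, iterations=20):
    """Cluster points into k groups by alternating assignment and update."""
    centroids = [points[i] for i in range(k)]  # simple deterministic start
    assignment = [0] * len(points)
    for _ in range(iterations):
        # Assignment step: each point joins the cluster with the closest mean.
        assignment = [
            min(range(k), key=lambda c: math.dist(p, centroids[c]))
            for p in points
        ]
        # Update step: each centroid moves to the mean of its members.
        for c in range(k):
            members = [p for p, a in zip(points, assignment) if a == c]
            if members:
                centroids[c] = tuple(sum(axis) / len(members) for axis in zip(*members))
    return centroids, assignment


# Hypothetical two-dimensional features of six web pages.
pages = [(1.0, 1.0), (9.0, 9.0), (1.2, 0.8), (0.8, 1.1), (8.8, 9.2), (9.1, 8.7)]
centroids, assignment = k_means(pages, k=2)
print(assignment)
print(centroids)
```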

Ways to secure web and avoid vulnerabilities

How to improve the website?

Web applications make things easy for companies, and any company can quickly create an online presence. Their flexible structure and control units, together with enhanced versions of website software, make it easy to run a website without training or expertise. This suits businesses and many other purposes, but there are also drawbacks. Many website owners do not know how to secure their websites from cyber-attacks or why web security matters. Here we highlight a few points that all website owners and developers should know to secure their websites from attack [107,111].

Update

Every day, many websites are hacked because of software vulnerabilities and weak security mechanisms. It is imperative to keep your website up to date. Attackers use advanced techniques, and their automated systems continuously scan websites looking for an opportunity to break in. Updating once a week or once a month is not adequate, because such systems detect vulnerabilities quickly and accurately, and one of them may find a vulnerability before the owner can fix it. The exception is a website that has a website firewall installed, such as Cloud Proxy, and kept up to date with the latest version.

Passwords

Working on client sites commonly requires logging in to those sites with the clients’ admin credentials. We are concerned here with the vulnerabilities of their root passwords. Using ubiquitous names like

COMPLEX: Random username and password must be created.

LENGTH: The length of a password must be at least 12 characters.

UNIQUE: Passwords should not be reused; use a different password for each account.
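The three rules can be sketched in Python with the standard `secrets` module. The character set, the requirement of at least three character classes, and the helper names are assumptions for illustration; the 12-character minimum follows the LENGTH rule above.

```python
import secrets
import string

ALPHABET = string.ascii_letters + string.digits + string.punctuation
MIN_LENGTH = 12  # from the LENGTH rule


def generate_password(length: int = 16) -> str:
    """Create a random password with a cryptographically secure generator."""
    if length < MIN_LENGTH:
        raise ValueError("password must be at least 12 characters long")
    return "".join(secrets.choice(ALPHABET) for _ in range(length))


def meets_policy(password: str, passwords_in_use: set) -> bool:
    """Check the COMPLEX, LENGTH, and UNIQUE rules for one candidate."""
    long_enough = len(password) >= MIN_LENGTH
    classes = [
        any(ch.islower() for ch in password),
        any(ch.isupper() for ch in password),
        any(ch.isdigit() for ch in password),
        any(ch in string.punctuation for ch in password),
    ]
    complex_enough = sum(classes) >= 3  # assumed threshold
    unique = password not in passwords_in_use
    return long_enough and complex_enough and unique


# passwords_in_use stands for the passwords a user already has elsewhere,
# e.g. inside a local password manager (hypothetical).
print(meets_policy("Tr1cky-Horse-Battery", set()))
print(meets_policy("password", set()))
```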

One site = one container

An important point to understand is that hosting multiple websites on a single server is, unfortunately, one of the worst and most dangerous security practices. Hosting multiple websites on a single server increases the security threats. We therefore prefer to use one server for only one website, which may reduce security threats.

Sensible user access

This rule applies only to websites that have multiple users. Every user should have the permissions required to complete their tasks. If someone needs temporary access for a specific task, grant it, but revoke the access once the task is completed. This keeps user access sensible.

Change CMS settings

While today’s applications are simple to use, their default settings can pose a threat to end-users. Most attacks on websites are automated, using bots or other automated systems, and many of them rely on default settings. To avoid most attacks, change the default settings when installing your preferred software.

Extension selection

Nowadays, one of the critical aspects of web applications is their extensibility. Most people are unaware that the same extensibility is also their main weakness. How do you know which extension to install when several provide similar functionality? One factor we consider when choosing an extension is its update history, in particular when it was last updated. Prefer an extension that is up to date, because this indicates that any security issue or bug found in it is fixed promptly.

Backups

Every website requires backups, but storing a backup on the web server creates a severe security risk. Such backups contain unpatched software versions and are publicly accessible, which makes them an easy target for an attacker.

Server configuration files

We should spend some time getting to know the web server configuration files. Apache web servers use the “apache2.conf/httpd.conf” file, the Nginx server uses “nginx.conf”, and the Microsoft IIS server uses “web.config”. These files, which are located in the root web directory, are very powerful. They let you define server rules and enhance web security. Here are a few guidelines:

Avoid directory browsing

Avoid image hotlinking

Protect sensitive files

Install SSL

We reviewed many articles which claim that using SSL solves all security issues. This is wrong, because SSL by itself does not protect a website from malicious attacks. SSL provides strong encryption between the server and the browser. The main purpose of this encryption is to prevent the traffic from being intercepted, so that the server and the browser can communicate securely. SSL is significant for the security of many websites, in particular online buying and selling websites that handle online payments and sensitive information.
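On the client side, the encrypted channel can be sketched with Python’s standard `ssl` module. The function name `make_client_context` and the choice of TLS 1.2 as the minimum version are assumptions; the example only builds the configuration and does not open a connection.

```python
import ssl


def make_client_context() -> ssl.SSLContext:
    """Build a client-side context that verifies the server certificate."""
    context = ssl.create_default_context()
    # Refuse protocol versions older than TLS 1.2 (assumed policy).
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


context = make_client_context()
print(context.verify_mode == ssl.CERT_REQUIRED)  # certificate is checked
print(context.check_hostname)                    # host name must match
```

Such a context protects the traffic between browser and server only; it does not address flaws such as injection or XSS in the application itself.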

Avoid vulnerabilities

Protection against injection attack

Many methods are available to detect SQL injection because it is one of the most commonly used attacks [60]. In [110], the researchers proposed a Web Application Firewall (WAF) based on an Artificial Neural Network (ANN) as a method to block the majority of SQL attacks. The method has two stages: training and working. In the training stage, the ANN is fed a collection of regular and malicious data. In the working stage, the trained ANN is integrated into the WAF to identify attacks. The authors of [95] described a scheme based on semantic comparison. The syntax tree of a query obtained during training is compared with the syntax tree of the query at run time; the query is considered malicious if the two trees are not equal and genuine if they match. In [46], the researcher introduced another tool, WASP, which is used to prevent SQL attacks. It is based on two concepts: positive tainting and syntax-aware evaluation. Positive tainting identifies and tracks trusted data, rather than untrusted data as in negative tainting. As a result, its errors tend to be false positives rather than false negatives.
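The idea of comparing query structures can be illustrated with a simplified sketch. Instead of full syntax trees, it compares token sequences in which literals are replaced by a placeholder, so it is only a rough approximation and not the method of [95]. The table name, the query template, and the helper names are hypothetical, and the string concatenation in `build_query` is deliberately unsafe for demonstration.

```python
import re

_TOKEN = re.compile(r"""
      '(?:[^']|'')*'            # single-quoted string literal
    | \d+(?:\.\d+)?             # numeric literal
    | --[^\n]*                  # line comment
    | [A-Za-z_][A-Za-z_0-9]*    # keyword or identifier
    | \S                        # any other non-space character
""", re.VERBOSE)


def structure(query: str) -> list:
    """Return the token sequence of a query with literals abstracted away."""
    shape = []
    for token in _TOKEN.findall(query):
        is_string = len(token) >= 2 and token[0] == "'" and token[-1] == "'"
        if is_string or token[0].isdigit():
            shape.append("?")
        else:
            shape.append(token.upper())
    return shape


def build_query(name: str) -> str:
    # Unsafe concatenation, used here only to show what the check detects.
    return "SELECT id FROM users WHERE name = '" + name + "'"


# Structure learned from a benign input during the "training" phase.
EXPECTED = structure(build_query("benign"))


def looks_injected(name: str) -> bool:
    """Flag the input if it changes the structure of the query."""
    return structure(build_query(name)) != EXPECTED


print(looks_injected("alice"))
print(looks_injected("' OR '1'='1"))
```

In practice, parameterized queries avoid the problem at its source; the comparison above corresponds to the detection approach surveyed in this section.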

Protection against broken authentication and session management

Binding a session to the client’s IP address is a standard method of preventing session hijacking. In more detail, the web server associates the user’s session data with the IP address of that user and then ignores requests for the session that come from other IP addresses. However, this only works if each user has a unique, static IP address. Moreover, most networks use Network Address Translation (NAT), which lets multiple clients on a network share the same IP address, making the technique less effective [17]. Tracking the browser fingerprint is another way of preventing session hijacking. The user’s browser fingerprint is made up of several characteristics, and any change in it could indicate that an attacker has stolen the session [115]. Macaroons, which target cloud services, are cookies whose access rights can be restricted. Macaroons use a shared secret and a chain of messages to create a chain of nested Hash-based Message Authentication Codes (HMACs) [31].
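The chain of nested HMACs used by Macaroons can be sketched as follows. This is a simplified illustration, not the format or API of [31]: the token is a plain dictionary, and the caveat syntax and function names are assumptions.

```python
import hashlib
import hmac


def _mac(key: bytes, message: bytes) -> bytes:
    return hmac.new(key, message, hashlib.sha256).digest()


def mint(root_key: bytes, identifier: bytes) -> dict:
    """Create a token whose signature is HMAC(root_key, identifier)."""
    return {"id": identifier, "caveats": [], "sig": _mac(root_key, identifier)}


def add_caveat(token: dict, caveat: bytes) -> dict:
    """Restrict a token: the old signature becomes the key of the next HMAC."""
    return {
        "id": token["id"],
        "caveats": token["caveats"] + [caveat],
        "sig": _mac(token["sig"], caveat),
    }


def verify(root_key: bytes, token: dict, is_satisfied) -> bool:
    """Rebuild the HMAC chain and check every caveat against the request."""
    sig = _mac(root_key, token["id"])
    for caveat in token["caveats"]:
        if not is_satisfied(caveat):
            return False
        sig = _mac(sig, caveat)
    return hmac.compare_digest(sig, token["sig"])


root_key = b"server-side-secret"            # hypothetical shared secret
token = mint(root_key, b"session-1")
restricted = add_caveat(token, b"ip = 10.0.0.5")

print(verify(root_key, restricted, lambda c: c == b"ip = 10.0.0.5"))
```

Anyone holding a token can add a caveat, but removing one would require inverting the HMAC, so a stolen restricted token cannot be widened again.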

Protection against XSS attacks

User-input validation on the server side is the first step against XSS. Validation can be carried out using either blacklisting or whitelisting techniques, and malicious user input can be rejected when it is detected [17]. However, it is difficult to provide complete security for complex websites using input handling methods alone. In [171], the researcher also suggested a WA proxy method for identifying and restricting XSS attacks. The recommended framework provides a reverse proxy that intercepts the returned HTML and uses a modified web browser to locate malicious scripts.
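Whitelist validation can be sketched with Python’s standard library, here combined with output escaping as a second, complementary measure that is not taken from the cited works. The permitted username pattern and the function names are assumptions for illustration.

```python
import html
import re

# Whitelist: only letters, digits, and underscore, 3 to 20 characters.
_USERNAME = re.compile(r"[A-Za-z0-9_]{3,20}")


def is_valid_username(value: str) -> bool:
    """Accept input only if it matches the whitelist pattern completely."""
    return _USERNAME.fullmatch(value) is not None


def render_comment(text: str) -> str:
    """Escape HTML metacharacters before placing text into a page."""
    return "<p>" + html.escape(text) + "</p>"


print(is_valid_username("alice_01"))
print(is_valid_username("<script>"))
print(render_comment("<script>alert(1)</script>"))
```

Whitelisting is usually preferable to blacklisting because it does not depend on enumerating every malicious pattern in advance.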

Protection against insecure direct object references

The access control mechanism is mainly used to secure resources and the use of internal WA operations. In Role-Based Access Control (RBAC) [37], for example, permissions to operate on objects are assigned to roles rather than directly to users. A user gains these permissions by activating a specific role during a session. Because of separation-of-duty rules, users cannot take more than one role simultaneously. For example, RBAC is used by Cisco ACE WAF to define the administration roles of the WAF itself. In [121], the researchers describe a secure cookie-based implementation of an access control system with different roles. The role data of the user is stored in a pair of secure cookies and sent to the relevant servers, and Pretty Good Privacy (PGP) is used to verify these cookies. In [11], the authors proposed an access control method for open web services and applications. Their work builds on the Extensible Access Control Markup Language (XACML), an access control policy language.
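A minimal RBAC sketch with one active role per session is given below. The roles, permissions, and user names are hypothetical and do not reflect any product mentioned in the text.

```python
# Permissions are attached to roles, never directly to users.
ROLE_PERMISSIONS = {
    "admin": {"order:read", "order:write", "user:manage"},
    "clerk": {"order:read", "order:write"},
    "auditor": {"order:read"},
}

# Roles that each user is allowed to activate.
USER_ROLES = {
    "dana": {"admin", "auditor"},
    "eli": {"clerk"},
}


class Session:
    """A user session in which at most one role is active at a time."""

    def __init__(self, user: str):
        self.user = user
        self.active_role = None

    def activate(self, role: str) -> None:
        if role not in USER_ROLES.get(self.user, set()):
            raise PermissionError(f"{self.user} may not act as {role}")
        # Activating a role replaces the previous one.
        self.active_role = role

    def can(self, permission: str) -> bool:
        return permission in ROLE_PERMISSIONS.get(self.active_role, set())


session = Session("dana")
session.activate("auditor")
print(session.can("order:read"), session.can("order:write"))
```

Because every object access goes through `can`, a request that references another user’s object directly is rejected unless the active role carries the required permission.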

Protection against sensitive data exposure

Data breach: This flaw occurs when the developer leaves weak points in the code; it can be addressed only by paying close attention to what is left in the code and by handling errors safely.

Database theft: Cryptography, in combination with a good database access security policy, is a critical solution for overcoming this attack. In [33], the researchers suggested database-driven security protocols to protect against this attack.

Protection against CSRF

Some server-side security measures are available to mitigate CSRF attacks [2,33]. In [66], the researchers presented NoForge, a server-side proxy that can be integrated into a system to detect and prevent CSRF attacks while remaining transparent to the running applications. Its primary function is to protect PHP applications from CSRF attacks. The characteristics of server-side safety measures have also been discussed for user protection, and a server-side plug-in was created to protect users from the attacks [181].
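A common server-side measure is a per-session anti-CSRF token that must accompany every state-changing request. The sketch below derives the token from the session identifier with an HMAC; it illustrates the general idea only and is not the implementation of the tools cited above. The key handling and function names are assumptions.

```python
import hashlib
import hmac
import secrets

# Secret known only to the server; generated at start-up in this sketch.
SERVER_KEY = secrets.token_bytes(32)


def issue_token(session_id: str) -> str:
    """Derive the anti-CSRF token embedded in the forms of one session."""
    return hmac.new(SERVER_KEY, session_id.encode(), hashlib.sha256).hexdigest()


def is_valid(session_id: str, submitted_token: str) -> bool:
    """Accept a request only if its token matches the session's token."""
    expected = issue_token(session_id).encode()
    return hmac.compare_digest(expected, submitted_token.encode())


form_token = issue_token("sess-abc")
print(is_valid("sess-abc", form_token))   # legitimate form submission
print(is_valid("sess-abc", ""))           # forged request without token
```

A forged cross-site request carries the victim’s cookies but not the token, so the server can reject it.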

Protection against unvalidated redirects and forwards

The authors classified phishing defenses into the following types: blacklist-based, visual similarity-based, machine learning-based, and heuristic-based [139]. The blacklist-based technique maintains a repository that is regularly updated with discovered phishing URLs. The most notable works in this category are the Google Safe Browsing API; PhishNet [127], which identifies malicious URLs based on previously identified malicious URLs; and the Automated Individual White List (AIWL) [21], which maintains a record of secure Login User Interfaces (LUIs). Unfortunately, this record suffers from unreliable LUI predictions. In general, blacklist-based approaches are reported to have high True-Positive (TP) rates and low False-Positive (FP) rates [18,130].

Machine learning and deep learning for web security

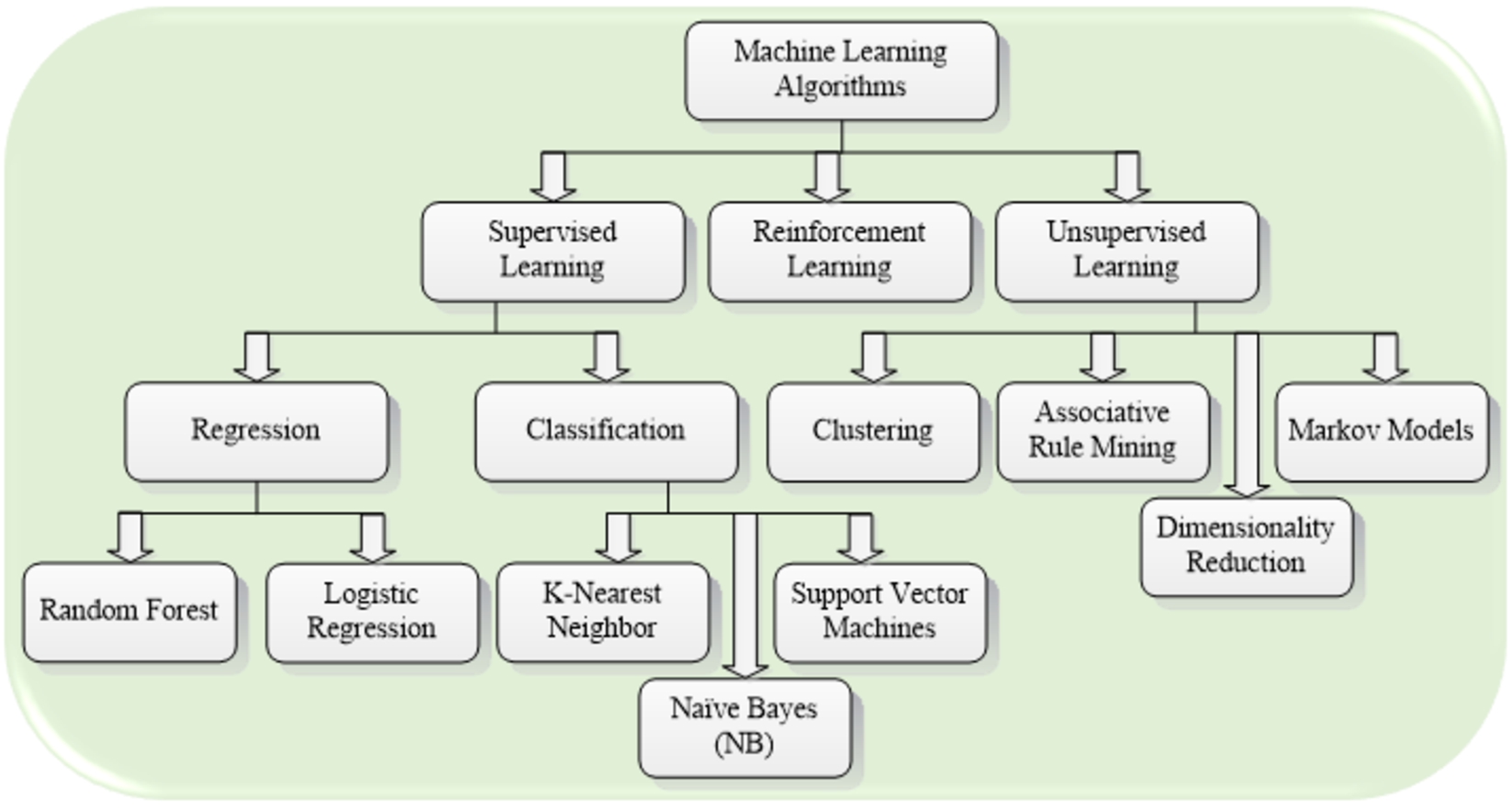

Machine learning algorithms

Machine learning (ML) offers an alternative method for discovering vulnerabilities and is possibly quicker at finding them [29,39]. ML algorithms can learn abstract and latent risky coding structures, possibly improving overall generalization. The ML algorithms currently in use mainly operate on source code, which is more human-readable. Researchers have used imports (e.g., header files), software complexity metrics, function calls [114], and code changes as indicators for discovering potentially vulnerable code snippets or files [147,148]. Additionally, information gathered from version control systems, such as development activities [104] and code commits [125], has been used to predict vulnerabilities. Because many malware variants share the same behavior patterns [113], anti-malware organizations have addressed the limitations of the available methods by creating more advanced methods based on data mining and ML techniques [151]. These techniques utilize various forms of feature extraction (i.e., data representation) to create intelligent malware tracking systems. They often use an SVM-based classifier [133], a Naive Bayes classifier [68], or a combination of classifiers (Naive Bayes, decision trees, and SVM) [74]. Recently, many different ML techniques have been used to identify malicious URLs. In [92], the researchers identified malicious URL attributes using a statistical methodology, derived a few more features for ML, and compared different models with their classification model. Based on heuristic and feature-based methods, a semi-supervised method for URL training, known as the multi-classification model, was proposed [176]. This method achieves more accurate classification results. The classification of the state-of-the-art ML algorithms is shown in Fig. 10.
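Feature extraction for malicious URL detection can be sketched as follows. The chosen lexical features are common illustrative examples and are assumptions; they are not the feature sets of [92] or [176]. The resulting dictionary could be passed to any of the classifiers mentioned above.

```python
import re
from urllib.parse import urlsplit

_IPV4 = re.compile(r"\d{1,3}(?:\.\d{1,3}){3}")


def url_features(url: str) -> dict:
    """Turn a URL into a small set of lexical features for a classifier."""
    parts = urlsplit(url)
    host = parts.hostname or ""
    return {
        "length": len(url),
        "num_dots": host.count("."),
        "num_digits": sum(ch.isdigit() for ch in url),
        "has_ip_host": _IPV4.fullmatch(host) is not None,
        "has_at_symbol": "@" in url,
        "uses_https": parts.scheme == "https",
    }


suspicious = url_features("http://192.168.0.1/login.php?id=7")
ordinary = url_features("https://www.example.com/")
print(suspicious)
print(ordinary)
```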

Classification of machine learning algorithms.

Entropy clustering and other technologies have been utilized to predict and effectively detect DDoS attacks, moving the system from passive defense to active defense [117]. Jason used a variety of network detection technologies and proposed a single-factor evaluation framework [145]. Xiaoling et al. proposed a hierarchical multi-domain network security situational awareness method based on a graph database; it establishes a hierarchical model and divides the network into different domains, which allows awareness data to be collected and processed more effectively [158]. With the advance of cloud computing, game theory was integrated into network security situational awareness (NSSA), and the network situation value was measured through game-theoretic utility [182]. Xiaowu et al. proposed a network security model based on NSSA to break through the communication barriers between different networks [88]. Z Ying et al. integrated the advantages of rough set theory and ML in data processing and feature selection into situation assessment, proposed a new network assessment model, and verified the feasibility and effectiveness of the model through experiments [188]. A network security prediction model was proposed that uses the Covariance Matrix Adaptation Evolution Strategy (CMA-ES) to optimize the hyperparameters of an SVM and then preprocesses the accumulated data to strengthen the prediction ability of the model [64]. Jinsoo et al. used a Bayesian network to measure network security situational awareness and established the corresponding network security model [146]. Rajesh et al. proposed a security situational awareness system based on an attack measurement method [120]. A situation element extraction model was designed based on projection pursuit, using Particle Swarm Optimization (PSO) to minimize the projection index; the experimental results show that the model has advantages in accuracy and convergence speed [168]. A situation element extraction mechanism has also been developed based on Logistic Regression (LR) and an improved particle swarm optimization model. The experimental results show that the element extraction model constructed by this mechanism has a stronger generalization ability [79].
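The role of entropy in DDoS detection can be illustrated with a short sketch. It computes the Shannon entropy of the source addresses in a traffic window and flags a window whose entropy deviates strongly from a baseline. The baseline, the tolerance, and the addresses are assumed values, and the clustering step of [117] is not reproduced.

```python
import math
from collections import Counter


def shannon_entropy(values) -> float:
    """Shannon entropy (in bits) of the empirical distribution of values."""
    counts = Counter(values)
    total = len(values)
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


def is_anomalous(window, baseline: float, tolerance: float = 1.0) -> bool:
    """Flag a traffic window whose entropy is far from the baseline."""
    return abs(shannon_entropy(window) - baseline) > tolerance


# Hypothetical source addresses seen in two observation windows.
normal_window = ["10.0.0.1", "10.0.0.2", "10.0.0.3", "10.0.0.4"]
flood_window = ["10.0.0.9"] * 16

baseline = shannon_entropy(normal_window)
print(baseline)
print(is_anomalous(normal_window, baseline))
print(is_anomalous(flood_window, baseline))
```

A flood from a single source drives the entropy towards zero, whereas an attack with many spoofed sources drives it above the baseline; both cases show up as a deviation.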

Many research efforts have been put into improving the automated vulnerability detection process. However, human intelligence still plays a vital role in providing information and developing the methods used to detect vulnerabilities [164]. Many tools, whether static or automated detection tools, have been developed from the experience of experts and mirror how security experts approach vulnerability detection. For example, static code analysis tools are based on extracted rule templates and best software design practices; if any predefined rule is broken, the system raises an alert. In addition, hand-designed features are obtained from code analysis, such as the Abstract Syntax Tree (AST) and Control Flow Graph (CFG). ML-based detection methods depend significantly on carefully engineered feature sets that can be extracted from code analysis (e.g., CFGs).

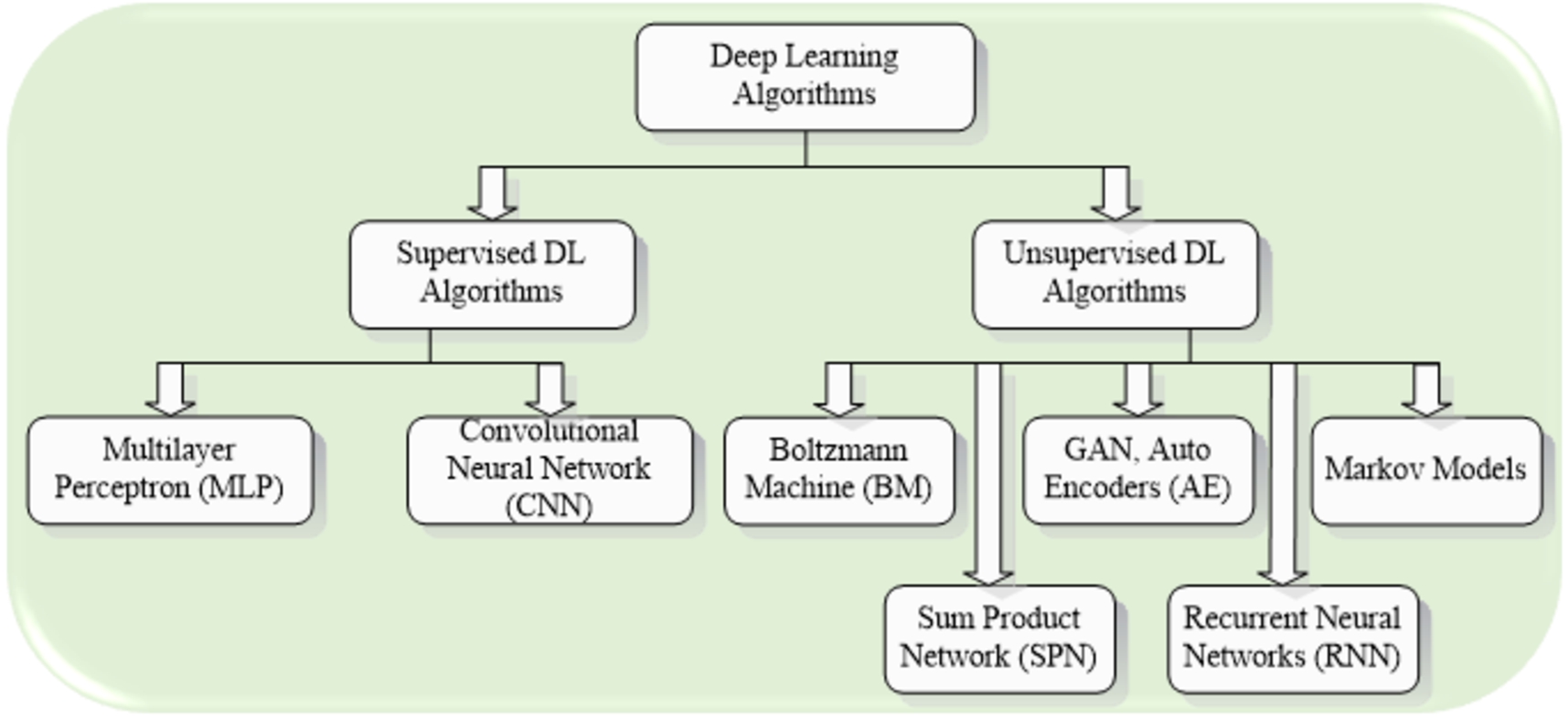

Deep learning (DL) algorithms are based on Deep Neural Networks (DNN), which are large neural networks organized in many layers and capable of automated feature representation. Traditional ML algorithms, in contrast, require features to be identified based on human experience and deep domain knowledge, a process that can be time-consuming and error-prone [81,170]. DL techniques are therefore increasingly used for finding vulnerabilities. On the one hand, the layered structure of neural network models facilitates learning conceptual and highly nonlinear structures, capturing the intrinsic structure of complex data. On the other hand, neural networks allow features to be extracted automatically from different layers, possibly with a higher level of generalization [84], relieving experts of the time-consuming and potentially error-prone process of feature engineering. Furthermore, DL algorithms can detect unique information that a human expert would never notice [140]. As a result, the feature search space has grown, and researchers are motivated to use neural networks to learn vulnerable code patterns that indicate application weaknesses. When DNNs are used to identify vulnerabilities, the models can capture and exploit code concepts. DL algorithms can thus go one step further in narrowing the gap than traditional ML-based approaches that rely solely on feature extraction. This is done by learning deep structures and high-level representations of web application code that expose code concepts [7]. Supervised learning performs classification and regression for several web security tasks [105,108], and unsupervised learning handles association rule mining and clustering problems [51,53,56,58,59,61–63], while reinforcement learning handles exploration and exploitation problems [27]. Several unsupervised algorithms have shown remarkable performance, such as Generative Adversarial Networks (GAN) [40], Auto Encoders (AE) [50,100], Recurrent Neural Networks (RNN) [14], Boltzmann Machine (BM) [135], Deep Belief Networks (DBN) [15], and various variants of these state-of-the-art models. Hybrid DL algorithms have also shown their importance in web security [57,70,89,101,108,160]. The classification of state-of-the-art DL algorithms is shown in Fig. 11. CNN algorithms for text have also been adopted in the field of network security. As a special language parsed by the server, a URL is similar to traditional text in composition, structure, and characteristics: it is data with semantic relations. It is therefore suitable to treat a URL as natural language in the feature representation, and the TextCNN model has been applied to web threat classification and detection [178].

Classification of deep learning algorithms.

The RNN model is designed for processing sequential input (e.g., text), unlike feed-forward networks such as FCN or CNN. It is called a recurrent (cyclic) neural network because the current output of a sequence depends on the previous output. Specifically, the input of an RNN is organized as a time sequence. The key feature of the RNN is memory, which lets it remember input values from earlier time steps. In web security detection, the data characteristics of a URL can be transformed into a time-ordered sequence of vectors after normalization, and the memory of the RNN can then be used to extract features and achieve better detection results. As a result, many studies have used RNN variants to obtain a conceptual understanding of security breaches. The bidirectional form of RNNs is proficient in capturing a sequence’s dependencies. However, in complex cases, it is difficult to get relevant results only by analyzing recent time-series data, and a longer memory is required to trace the source. Long Short-Term Memory (LSTM) addresses this by analyzing time-series data over a longer time range. Many researchers have therefore used bidirectional LSTM (i.e., Bi-LSTM) [52] and Gated Recurrent Unit (GRU) [25] structures to learn contextual code dependencies. These dependencies are essential to understanding several types of threats (e.g., buffer overrun vulnerabilities), in which multiple code lines that are either consecutive or intermittent form a vulnerable context. DL has been used to detect attacks in recent years, outperforming typical detection methods. In [14], the researcher investigated many URL identification models and found that neural networks do not require manual features and that their detection results are much better than those of other approaches [184,186]; see also URL embedding [138].
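The transformation of a URL into a sequence suitable for an RNN, LSTM, or TextCNN input layer can be sketched as below. The character vocabulary, the reserved indices for padding and unknown characters, and the maximum length are assumptions; an embedding layer in a DL framework would then map each index to a vector.

```python
import string

PAD, UNK = 0, 1  # reserved indices for padding and unknown characters
VOCAB = {ch: i + 2 for i, ch in enumerate(string.printable)}


def encode_url(url: str, max_len: int = 32) -> list:
    """Map a URL to a fixed-length sequence of character indices."""
    ids = [VOCAB.get(ch, UNK) for ch in url[:max_len]]  # truncate long URLs
    return ids + [PAD] * (max_len - len(ids))           # pad short URLs


sequence = encode_url("http://example.com/?q=1", max_len=32)
print(sequence)
```

Fixing the sequence length lets URLs be batched together, and the order of the indices preserves the character sequence that the recurrent model relies on.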

A security feature extraction model based on an improved discrete wolf pack algorithm (IDWPA) was proposed to handle the large-scale data and changeable intrusion behaviors of the cloud computing environment; it minimizes the redundancy of extracted features and the complexity of the problem [131]. The experimental simulation shows that the algorithm significantly improves data feature extraction and classification accuracy. Tian et al. used a Deep Belief Network (DBN) to extract network intrusion features, effectively improving the ability to resist Internet attacks [159]. Preethi et al. proposed a DL model based on Sparse Autoencoder (SAE) [129], in which the new feature representation can be reconstructed effectively to reduce the error rate of intrusion prediction and improve the prediction rate. In [175], the authors proposed a situation assessment method based on adversarial DL and directly established the autoencoder deep neural network model (AEDNN) based on DAE and DNN.

To advance network security intrusion detection, the authors of [12,124] used DL methods to detect intrusion data more comprehensively and introduced the latest work on network anomaly detection using DL. An intrusion detection model that fuses a DBN with Decision Tree (DT)-based extreme gradient boosting was proposed, which provides a new method for intrusion detection to process unbalanced data and improves the detection performance against rare attacks [136]. Furthermore, a Wi-Fi network intrusion detection model based on DBN was proposed, which improves the accuracy of intrusion detection and has better performance [165]. Liang et al. proposed a combined intrusion detection method based on a convolutional neural network and an extreme learning machine, which can effectively improve the accuracy of intrusion detection and has good generalization ability and real-time performance [82]. Similarly, an intrusion detection method based on a Deep Convolution Neural Network (DCNN) was proposed, and experiments showed that it reduces the training time and false positive rate and improves the detection accuracy and real-time processing performance of the intrusion detection system [149]. Zhang et al. proposed an improved LeNet-5 and LSTM neural network structure for network intrusion detection [185]. An anomaly detection method based on improved CNN and LSTM neural networks was also developed, which effectively improves the detection rate and reduces the false alarm rate of network traffic detection [187].

The fundamental problems in applying DL techniques to network security include algorithm performance issues such as interpretability, traceability, adaptability, and self-learning. The false positive rate and the handling of unbalanced datasets also need to be improved [4,172]. The latest DL research centered on network anomaly detection deserves further investigation, including the Deep Belief Network (DBN), Deep Neural Network (DNN), Recurrent Neural Network (RNN), and related ML techniques. The application of DL algorithms at different network layers should also be studied as a way to enhance other network functions, such as network security and data sensing and compression [77,97]. Given the current state of the field and its open problems and deficiencies, in-depth research is needed on the following aspects:

The definition, extraction, analysis, and automation of network security features are the premise for the further development of network security. Feature engineering involves many methods, so combinations of feature extraction and feature selection can be adopted for different data to improve the efficiency and effectiveness of processing large volumes of security data.

Continuing to study the application of DL in network security is valuable and has broad prospects. The defense mechanisms of most of the network security industry rely heavily on the traditional rule-matching mode to generate alarms. However, attackers have made rapid progress at the tool and code level, and attack techniques keep evolving with the continuous advancement of networks and technology. If rule-based intrusion detection continues to be used unchanged, it not only depends on the experience of security experts but also suffers a severe time lag. Many of the alarms raised today may already be outdated, which causes losses to enterprises, and more severe security incidents can affect the national economy and people's livelihood. Therefore, only an up-to-date detection model can truly confront the increasingly complex network environment and better protect the security of IT infrastructure and the rights and interests of Internet users.

The unification and standardization of network security measurement indicators need to advance further. No consensus on these indicators has been reached so far, mainly because they differ across application scenarios. Moreover, the indicators of network security protection ability are complex and hard to describe, which hinders research on network security assessment and prediction. Establishing a unified and standardized network security indicator system would make it easier to depict network security objectively, comprehensively, and accurately, and would facilitate the reuse and integration of network security evaluation and prediction models.

Network security situation assessment can be oriented to large-scale network security data, focusing on the efficiency of element extraction; parallelization can be added to improve evaluation efficiency. User behavior detection and evaluation methods can be verified on public datasets, and evaluation indices can be established to verify the algorithms on user behavior data from real-time network environments.

The network structure is complex and the perceived network security data is ever-changing, so the efficiency and accuracy of network security prediction in dynamic environments should be improved. In addition, in different application scenarios network security prediction will encounter unknown new features, and the security situation may deviate from the expected trend. The corresponding prediction model should therefore be adjusted dynamically or combined with more intelligent DL methods, which can become a focus of further research.

The network security awareness model needs a higher degree of automation and intelligence. Network security risk assessment also needs further study to improve the active defense of the model. Finally, the network security model must be verified further in different simulated and real-time environments, so that its further expansion has practical application value and significance.

Discussion and limitations

Web applications are now dominant because they are used routinely in many sectors, and attacks on them are rising along with that demand. In this paper, we highlight the critical features of web security and discuss the top 10 web application vulnerabilities, which can lead to massive damage. According to most studies, SQL injection and XSS are the most commonly used attacks, and both are used widely across different platforms. SQL injection is a remotely executed technique that allows attackers to obtain critical data from a web server's database. XSS attacks are likewise considered a major security threat to web applications. Typically, XSS is caused by improper validation of user input. Such attacks allow malicious scripts to run in the browser to steal cookies, hijack sessions, and so on.

The Pikachu platform is also an open-source, freely accessible platform for building web vulnerability environments. Compared with DVWA, the Pikachu platform takes the latest OWASP Top 10 as its core and constantly updates its vulnerability modules. It now includes common SQL injection, code injection, XML external entity injection, sensitive information disclosure, XSS (cross-site scripting), file inclusion, file upload, PHP deserialization, and other web vulnerability scenarios. Each type of vulnerability in the Pikachu platform is further divided into subclasses according to the situation. For example, SQL injection is divided into numeric injection, character (string) injection, search injection, and HTTP header injection. The significant types of SQL injection vulnerabilities are additionally distinguished as Boolean-based, time-based, and wide-byte injection. The code structure of the Pikachu platform is clear, and its content is easy to read. After the virtual machine is built, students can modify the platform's source code according to the needs of the experiment, for example by adding filter functions to increase the difficulty of the experiment.
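
The character (string) injection case described above can be sketched as follows. This is a minimal illustration using Python's standard `sqlite3` module with an in-memory database; the table layout, the function names, and the payload are assumptions made for the example and are not taken from the Pikachu platform. It contrasts a query built by string concatenation with a parameterized query.

```python
import sqlite3


def build_demo_db():
    """Create an in-memory database with two hypothetical users."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT, secret TEXT)"
    )
    conn.executemany(
        "INSERT INTO users (name, secret) VALUES (?, ?)",
        [("alice", "a-secret"), ("bob", "b-secret")],
    )
    return conn


def lookup_vulnerable(conn, name):
    # UNSAFE: user input is concatenated into the SQL text, so a quote
    # in the input can change the structure of the query.
    query = "SELECT name, secret FROM users WHERE name = '" + name + "'"
    return conn.execute(query).fetchall()


def lookup_safe(conn, name):
    # Parameterized query: the input is bound as data, never parsed as SQL.
    query = "SELECT name, secret FROM users WHERE name = ?"
    return conn.execute(query, (name,)).fetchall()


conn = build_demo_db()
payload = "x' OR '1'='1"  # Boolean tautology injected through a string field

leaked = lookup_vulnerable(conn, payload)   # condition is always true
blocked = lookup_safe(conn, payload)        # payload treated as a literal name

print(len(leaked), "rows leaked;", len(blocked), "rows from the safe query")
```

In the concatenated version the tautology makes the `WHERE` clause true for every row, whereas the parameterized version simply finds no user with that literal name.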

Furthermore, we discuss how to protect users from these top vulnerabilities and evaluate different protection techniques. SQL injection is the primary attack. Several families of solutions have been proposed to mitigate it, based on grammar, machine learning, entropy, and tainting. The grammar-based technique is very effective, but it is time-consuming and error-prone because a model must be created for each request. These approaches are also unsuitable for detecting attacks on stored procedures and subqueries in the DBMS. Sometimes a web page's computational complexity and loading time affect the website's security and leave it vulnerable to attackers. These issues have been considered many times, and evolving strategies continue to improve e-business [38,49,153].

Pros and cons of different network security models

As network technology progresses, more and more types of network attacks are emerging, their scale is growing, and the way they are carried out has changed. Current network attacks build on increasingly advanced approaches, and each attack provides information for the next one. For example, an attacker who wants to compromise a system host will first scan its ports to find a host or port that may have vulnerabilities and then exploit those vulnerabilities until the host is compromised. Correlation analysis models are divided into several types, with different forms of expression and effects. Furthermore, several well-known network security mechanisms have been developed in recent decades, such as Zero Trust, Network Segmentation, Hyperscale, Intrusion Prevention Systems, Email Security, and Remote Access. The significant pros and cons of some of these methods are given in Table 1.

Additionally, the high time complexity of these techniques makes it impractical to detect attacks in real time. Some common examples of web security limitations are shown in Fig. 12. Entropy-based approaches are currently unstable because they rely on probabilistic models. Taint-based approaches are slow because they must track every attribute on the website. ML techniques are also ill-suited to this situation because they require a lengthy training period and can produce many false positives and false negatives. The second serious flaw concerns authentication and session management. To combat potential session vulnerabilities, the value of the validated cookie can be updated whenever a user's access level changes. Website developers can further strengthen the authentication system with time-signature or photo-based verification methods. A common protection against session attacks is to restrict JavaScript's access to session cookies. Maintaining a list of security initiatives is another good proposal. Numerous defensive solutions are used to counter XSS attacks, and existing strategies depend primarily on sanitizing user input. Some methods use the probability distribution of tokens to process web pages. Others rely on modifying the page code, by creating a shadow site, inserting a script ID, or injecting boundaries [18,130]. Misuse of objects and functions on the web can be prevented by controlling and managing roles and by requiring permission to handle them. The web developer must ensure a secure website configuration during implementation and testing, so that the attacker's knowledge of a component's default values is reduced and its security is enhanced. A manual scan of the server is most beneficial at this stage.
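
Two of the defenses mentioned above, sanitizing user input before it is rendered and restricting JavaScript's access to session cookies, can be sketched with Python's standard library. The function names `render_comment` and `session_cookie_header`, the cookie name, and the sample values are assumptions for illustration only; a real application would normally rely on its web framework's templating and session handling.

```python
import html
from http.cookies import SimpleCookie


def render_comment(user_input):
    """Escape HTML metacharacters so input is shown as text, not executed."""
    return "<p>" + html.escape(user_input, quote=True) + "</p>"


def session_cookie_header(session_id):
    """Build a Set-Cookie value that scripts in the page cannot read."""
    cookie = SimpleCookie()
    cookie["session"] = session_id
    cookie["session"]["httponly"] = True  # hidden from document.cookie
    cookie["session"]["secure"] = True    # only sent over HTTPS
    cookie["session"]["path"] = "/"
    return cookie["session"].OutputString()


rendered = render_comment("<script>alert(1)</script>")
header = session_cookie_header("abc123")

print(rendered)
print("Set-Cookie:", header)
```

Escaping turns the injected tag into inert text, and the `HttpOnly` attribute means that even a script that does run in the page cannot read the session cookie.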

Examples of web security limitations.

Regarding the sixth flaw, a secure connection must be used whenever personal information is exchanged between the user and the web server. Administrators must choose robust policies for data sent to the server and strong encryption for users' personal information stored in the database. CAPTCHA is one of the best ways to avoid CSRF attacks, i.e., the eighth flaw, and strong CAPTCHA models are needed to keep it from being bypassed. Many other works have been proposed to deal with this type of threat; they harden the client side with additional procedures and add a few scripts to the web server. For the ninth vulnerability, developers should take extra precautions when working with external components on a website, particularly open-source frameworks and libraries, because these can introduce weaknesses. Developers can reduce the risk by building their own interface around these components. The last category in the list of top vulnerabilities is typically exploited through phishing, in which attackers lure victims with fake URLs, and it can be countered by validating the URLs to which users are sent.
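
As an illustration of the server-side scripts mentioned above, the following sketch shows a per-session anti-CSRF token (the synchronizer token pattern). Note that this is a different, widely used technique from the CAPTCHA approach named in the text, and it is not drawn from the cited works. The `session` dictionary stands in for a real server-side session store, and the function names are hypothetical.

```python
import hmac
import secrets


def issue_csrf_token(session):
    """Generate an unpredictable token and remember it in the session."""
    token = secrets.token_urlsafe(32)
    session["csrf_token"] = token
    return token  # to be embedded in a hidden form field


def is_valid_csrf(session, submitted):
    """Accept a state-changing request only if the token matches."""
    expected = session.get("csrf_token")
    if not expected or not submitted:
        return False
    # Constant-time comparison avoids leaking the token through timing.
    return hmac.compare_digest(expected.encode(), submitted.encode())


session = {}  # stand-in for a server-side session store
token = issue_csrf_token(session)

print(is_valid_csrf(session, token))      # legitimate form submission
print(is_valid_csrf(session, "forged"))   # cross-site request without the token
```

A forged cross-site request cannot read the token from the victim's page, so it fails the check even though the browser attaches the session cookie automatically.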

Web security and vulnerabilities are vital concerns, especially in an environment where everything is handled online. This paper has pointed out the challenges of web security. First, we discussed the essential features of web security, such as vulnerability types, attack impacts, a comparison of different tools, security threats, and their importance for E-commerce security. Then, we described several ways to secure the web and avoid vulnerabilities, and discussed a wide range of privacy and security concerns related to web applications and E-commerce. Privacy and security are vital issues that must be addressed to design a trustworthy and reliable environment. Lastly, we described the role of ML and DL in web security. This paper will assist future web security endeavors and emerging secure e-business.

At present, the machine learning boom has swept the world, and more and more algorithms and models are being applied in different fields, including web security. In intrusion detection, however, several classifiers do not perform ideally and exhibit problems such as weak generalization ability and low classification accuracy. These problems are tied to factors that can be considered in future work, such as the setting of model parameters, a lack of sample diversity in the training dataset, and excessive noise in the training data.

Acknowledgements

This paper and the research behind it would not have been possible without the exceptional support of all coauthors.

Conflict of interest

The authors have no conflicts of interest to declare.

Ethical approval and consent to participate

All participants provided ethical approval and informed consent to participate in the study.