Abstract

With escalating demands for high-definition video, cache collaboration allows neighbor nodes to share locally stored content in order to reduce download traffic. High energy consumption associated with content delivery remains a concern for Content Distribution Networks (CDNs). Therefore, this paper proposes cluster-based collaborative caching in a core network employing IP over WDM. The aim is to allow sets of core caches to fully share content while minimizing power. A Mixed Integer Linear Programming (MILP) model is used to form energy-efficient cache clusters. The energy consumption of the network is evaluated under different cluster sizes to find the optimum size that minimizes energy. To evaluate the influence of content popularity distribution, a heavy-tailed Zipf distribution and an Equal popularity distribution are evaluated. In addition, the work investigates the influence of downlink traffic behavior and power consumption parameters on optimum cluster sizes. Attained results reveal that maximum savings in energy consumption introduced by cluster-based collaborative caching are up to 34.3% and 21.3% under the Zipf and Equal distributions, respectively. Cache collaboration is not recommended when all core nodes contain fully replicated content servers. Results also show that power consumption parameters do not influence cluster formation. It is recommended to keep cache collaboration in the core network simple, so as to reduce intra-cluster communication.

Introduction

Today, Internet video traffic is responsible for 82% of total global Internet traffic [7]. The huge growth rate of Internet traffic coupled with the escalating share of demanding video traffic raises global concerns with respect to Internet energy consumption. Storage and transport are considered major energy consumers in Internet video services. Caching the most popular content towards the edge of the network is an effective technique that reduces network traffic and offloads servers. Content distribution among caches remains an open area of research. The amount and popularity of content stored in caches governs the reduction in traffic due to caching [18]. Caching can be implemented such that requests can be served from a hierarchy or cluster of caches [17,26]. The challenge is how to organize caching topologies for maximum benefit.

Cache collaboration is a technique that allows two or more caches to share content. This implies that requests for content made for a certain cache can be retrieved from a collaborative cache. The advantage of cache collaboration is extending cached content beyond the capacity of a single cache. However, the downside is the additional overhead caused by signaling in order to locate the cache with requested content.

This paper investigates the power consumption associated with cache collaboration in a core network. Network nodes are arranged into clusters to fully distribute cache contents among cluster nodes. The objective is to evaluate the power consumption of the network under different cluster and cache sizes. In addition, the paper studies the tradeoff between having large caches that are restricted to a limited number of nodes and deploying smaller caches while allowing wider cache collaboration. Wider cache collaboration implies smaller local caches, which reduces the power consumption of storage. Nevertheless, retrieving content from neighbor caches consumes power on transport. A Mixed Integer Linear Programming (MILP) model is developed to select the optimum nodes to form clusters while guaranteeing the minimum power consumption. The goal is to minimize cache sizes to reduce the power consumed on storage without increasing the total network power consumption due to downloads from remote caches. The significance of this work arises from the fact that storage consumes up to 27% of total network energy [28]. Previous studies evaluated network performance while assuming cluster formation based on geographical locations [3,16]. Forming clusters with respect to geographical locations alone minimizes power consumption only when equal traffic demand between equally distributed nodes is assumed. In previous work, the distances between network nodes are neglected and the main goal is to reduce overall network traffic. However, a traffic demand passing through a certain link does not necessarily consume the same power as the same amount of traffic passing through a different link. The reason is that the power consumption of routing traffic through a link depends on the length of the link and on the other demands routed over the same link.
In this paper, the power minimization problem is tackled through optimizing cache collaboration taking into account distances between nodes. Cache collaboration for video services is evaluated where asymmetric regular traffic demands between nodes are assumed. The traffic demand for video content from a node having a server to another node is related to the regular traffic demand between the nodes. As a result, video traffic demand generated by nodes is unequal. In addition, the paper investigates the influence of content popularity distribution on the energy efficiency of collaborative caching. This is achieved by applying two distributions: a heavy-tailed Zipf distribution and an Equal distribution. The number of servers from which content is downloaded influences energy consumption, since additional links are required to transport content from servers to nodes. The influence of the number of origin servers is evaluated to demonstrate its effect on optimum cluster sizes and energy consumption. Moreover, the paper illustrates the impact of cache sizes on cache collaboration. The cache size governs its hit ratio, influencing the amount of download traffic and power consumed on storage. 50 GB and 100 GB caches are assumed under each popularity distribution, and energy consumption results are calculated and demonstrated. Finally, the impact of power consumption parameters on optimum cache sizes and energy efficiency is revealed by assuming different values for the power consumption of router ports, the major power consumers in IP. The contributions of this paper can be summarized as follows: (1) forming energy-based clusters in the core network that allow collaboration among cluster caches; (2) evaluating the energy efficiency of collaborative caching in the core network under different cluster sizes; (3) investigating the influence of content popularity distribution on cache hit ratios and resultant optimum cluster sizes; (4) studying the energy consumption of content delivery with respect to traffic behavior, where a varying number of content servers is assumed; and (5) investigating the influence of power consumption parameters on energy efficiency by reducing the power consumption of transport. Results demonstrate optimized cluster formation for different cluster and cache sizes for the utilized content popularity distributions and input parameters. Maximum savings in energy consumption introduced by cluster-based collaborative caching are up to 34.3% under the Zipf distribution when the network has 7 origin servers.

The remainder of this paper is organized as follows. Section 2 reviews related work and explains cache collaboration in video services. In Section 3, the proposed power-minimized MILP model for cache cluster formation is presented. In addition, the artificial nodes technique that is utilized in the model for cluster formation is explained. Section 4 presents the optimized cluster formation for various cluster sizes. It further reveals the impact of content popularity distribution, download traffic behavior and power consumption parameter values on optimum cluster sizes and energy consumption. Section 5 concludes the paper.

Cache collaboration

This section presents an overview of previous studies on cache collaboration including location-aware caching, collaborative caching in fog and edge networks and cluster-based caching. It also explains the collaborative caching architecture.

Related works

Hierarchical and location-aware caching

A collaborative content caching scheme for radio access networks is proposed in [23]. Storage space is divided into two parts: the first part caches the most popular local content while the second part collaboratively caches the most popular zone-wide content. Results show that the proposed scheme reduces average content delivery latency compared to other caching schemes and achieves server load balancing. The work in [20] investigates the content routing and caching optimization problem for interconnected regional and local Internet Service Providers (ISPs). The objective is to minimize routing cost by placing the most popular content in Set-Top Boxes (STB) while populating servers with least popular content. Attained results demonstrate that cache collaboration among ISPs guarantees investment profit in contrast to individual service provision.

In [9], a cooperative cache management algorithm is developed to minimize bandwidth cost and maximize traffic served from caches. The work classifies cache collaboration into intra-level, where content is only retrieved from leaf nodes and not from the parent node, and inter-level cache collaboration, where content is only fetched from the parent node. Results show that the proposed algorithm is guaranteed to perform within a constant factor from the globally optimal performance with better worst-case scenarios than previous work. The authors of [14] propose a content routing scheme, SCAN, for content-aware networks. The proposed mechanism locates multiple nearby copies of requested content for efficient delivery. They demonstrate that SCAN delivers content faster than other schemes and reduces network traffic. The content caching scheme presented in [4], WAVE, adjusts the number of file chunks to be cached with respect to the popularity of the file. Each file request results in exponentially increasing the number of chunks to cache at each node on the path between the origin server and the end host. The proposed scheme reduces the average hop count of content delivery and achieves higher hit ratios compared to other schemes.

Collaborative caching in fog and edge networks

The authors of [24] develop an online algorithm to minimize data caching and migration costs for edge computing. The performance of the algorithm is compared with: Delay-Oriented, Online-Optimal, Revenue-Oriented and Coverage-Oriented approaches. The proposed algorithm outperformed other approaches in terms of system cost and Quality-of-Service (QoS) penalties. In [27], a learning-based cooperative edge caching approach is proposed to minimize content delivery latency. A model that predicts the popularity of future contents is first developed. Then, a dynamic programming caching strategy optimizes content placement. Compared to leading caching schemes, the proposed strategy is found to achieve improved performance in terms of cache hit rate and average content delivery latency.

The work in [2] reduces the energy consumption of a cache-enabled fog network by optimally associating IoT devices to fog nodes. Results proved that utilizing more fog nodes improves energy efficiency for various network sizes with minimum influence on computational power and delay. Paper [15] proposed caching in the fog network with respect to edge network connectivity capacity to reduce delay. Attained results showed that when the connectivity capacity of the edge network is high, delay is minimized by maximizing the hit ratio of the fog network. In contrast, storing more content in the cloud data center is more efficient when the connectivity capacity of the edge network is low.

The authors of [13] introduce IS-Fog, an Information Centric Networking Social-Aware Fog that allows caching and computing collaboration between end devices by forming Fogs. Simulation results show that IS-Fog results in a higher cache hit ratio and offload benefit compared to other schemes. In [17], Mixed Integer Linear Programming (MILP) models are utilized to optimize the amount of data stored at each level of a Cloud-Fog-Mist network. The goal is to minimize energy consumption while not exceeding acceptable delay. Attained results revealed that when data processing requirements are low, the most energy-efficient solution is to store data at the Mist while the Cloud remains the most efficient layer to store delay-sensitive data.

Cluster-based caching

Paper [26] reduces content transmission delay in user-centric mobile networks. It proposes a Greedy Algorithm that finds the optimal content placement and cluster size considering network topology, traffic distribution and content popularity. The algorithm successfully reduces average file transmission delay by up to 45% compared to non-collaborative solutions. The work in [16] investigates cooperative caching in content delivery networks. It evaluates the influence of request rates and the size of locally stored objects on the local cache hit rate and the cluster hit rate. The results reveal that devoting a small portion of storage area for local popular contents ensures high local and cluster cache hit rates.

A two-layer cache clustering strategy in Named Data Networking (NDN) is proposed in [25] considering location and content popularity. The proposed strategy reduces average hop count compared to other caching strategies by implementing caching decision policy in cluster heads. The work in [1] also involves NDN and proposes a Popularity and Freshness Caching (PF-ClusterCache) approach. The proposed approach allows sharing storage capacity of different nodes to improve performance. Simulation results showed that the proposed approach outperforms benchmark caching schemes in terms of server access and content retrieval time.

Collaborative caching architecture

Caches are utilized in video services to store the most popular content at the edge of the network. A request for a video item is served from the local cache if it is available at the cache. Otherwise, the content is retrieved from the origin server. In Content Distribution Networks (CDNs), multimedia caching is employed to reduce access latency and decrease congestion around the origin server. When the network consists of more than one caching level and a requested object is not found in the local cache, the request is forwarded to parent caches on the path to the origin server, and the closest cache having the requested object delivers the content [27].

In cluster-based collaborative caching, node caches are virtually grouped to form a cluster, and a cluster head or representative cache is selected for each cluster, either randomly or based on node distances. The role of the representative cache is to maintain a directory of the contents of cluster caches [3]. When a request is not served from the local cache, it is forwarded to the representative cache rather than broadcast to all cluster nodes. This way, the cluster avoids being flooded with requests. The representative cache identifies the cluster cache having the requested object and forwards the request to that node. When requested content is not found in cluster caches, it is downloaded directly from the origin server.
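The lookup order described above (local cache, then the representative's directory, then the origin server) can be sketched as follows. This is a minimal illustration only: the class names, the `serve` method and the `"origin-server"` label are hypothetical and do not come from the paper.

```python
from dataclasses import dataclass, field


@dataclass
class Cache:
    """A node cache holding a set of video identifiers."""
    node: str
    videos: set = field(default_factory=set)


class Cluster:
    """Cluster whose representative cache keeps a directory of member contents."""

    def __init__(self, caches, representative):
        self.caches = {c.node: c for c in caches}
        self.representative = representative  # node name of the cluster head
        # Directory kept by the representative: video id -> node caching it.
        self.directory = {}
        for cache in caches:
            for video in cache.videos:
                self.directory.setdefault(video, cache.node)

    def serve(self, requesting_node, video):
        """Return (source, signaling path) for a request."""
        # Step 1: local cache hit.
        if video in self.caches[requesting_node].videos:
            return "local", [requesting_node]
        # Step 2: ask the representative, which forwards to the holder.
        holder = self.directory.get(video)
        if holder is not None:
            return "cluster", [requesting_node, self.representative, holder]
        # Step 3: miss in the whole cluster, so go to the origin server.
        return "origin", [requesting_node, "origin-server"]


cluster = Cluster(
    [Cache("A", {"v1"}), Cache("B", {"v2"}), Cache("C", {"v3"})],
    representative="B",
)
print(cluster.serve("A", "v3"))
```

Because only the representative is consulted on a local miss, a request generates at most one directory query instead of one query per cluster member.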

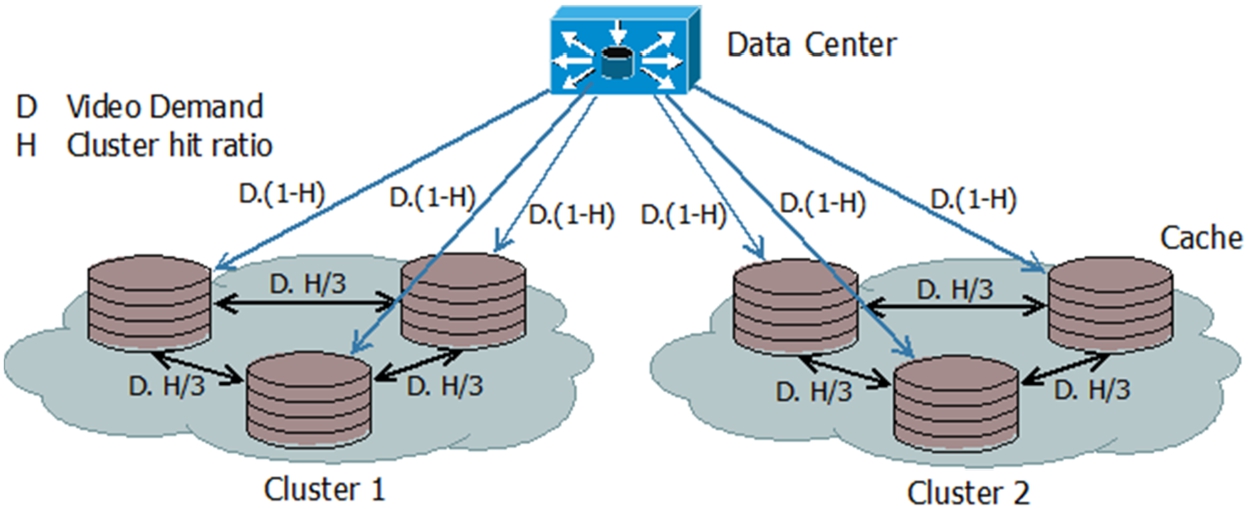

Cluster-Based Cache Collaboration Example using Clusters of 3 Nodes. Each Link is Labeled with the amount of Video Demand it Carries Due to Cache Collaboration.

In the core network, nodes are connected following a topology and are not necessarily arranged in similar clusters. Servers containing the full library of videos are located at various distances from clusters and there is no content replication between them. Figure 1 shows a network having clusters of variable sizes and topologies and shows traffic flow among collaborating caches. A cache locally serves H of requested traffic, where H is the cache hit ratio, and therefore

In this section, the methodology of the proposed MILP model is described. Then the model is introduced and explained. The section also illustrates how virtual nodes are utilized to form clusters.

The methodology

The aim of this study is to investigate how cache collaboration influences power consumption. The objective is to determine whether retrieving content from a cluster of nodes reduces network power consumption and to examine the effect of the cluster size.

The core network employs Internet Protocol over Wavelength Division Multiplexing (IP over WDM) [8] and is similar to the IP over WDM network in [22]. The network contains one or more video servers storing the entire library of video files. A cache of a limited capacity is deployed at each node in the network populated by some of the video server’s contents, commonly the most popular content [18,19]. The proposed MILP model groups nodes into clusters of a predefined size such that content cached at cluster nodes is shared among the cluster. The cluster formation is carried out to minimize power consumption. To achieve power minimization, cache collaboration must be performed such that the additional wavelengths allocated to deliver content from neighbor nodes are minimized. Wavelength sharing features provided by WDM are the main reason for the variance between clustering based on power minimization and clustering based on geographical location.
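To illustrate why wavelength sharing can make power-based clustering differ from purely geographical clustering, the sketch below counts the wavelengths a link needs with and without sharing. The 40 Gb/s wavelength capacity and the demand values are assumptions chosen for illustration; the capacity used in the evaluation is among the Table 4 inputs.

```python
import math

WAVELENGTH_CAPACITY_GBPS = 40  # assumed value for illustration only


def wavelengths_without_sharing(demands_gbps, capacity=WAVELENGTH_CAPACITY_GBPS):
    """Each demand is carried on its own dedicated wavelengths."""
    return sum(math.ceil(d / capacity) for d in demands_gbps)


def wavelengths_with_sharing(demands_gbps, capacity=WAVELENGTH_CAPACITY_GBPS):
    """Demands routed over the same link share wavelengths."""
    return math.ceil(sum(demands_gbps) / capacity)


link_demands = [25, 30, 50]  # hypothetical demands (Gb/s) on one link
print(wavelengths_without_sharing(link_demands))  # 4
print(wavelengths_with_sharing(link_demands))     # 3
```

Adding collaboration traffic to a link that already has spare capacity on a lit wavelength may therefore cost no extra wavelength, whereas the same traffic on a shorter but fully loaded link may require one.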

The MILP model

The MILP model defines sets, parameters and variables declared in Table 1, Table 2 and Table 3, respectively.

The objective function

The goal of the proposed model is to minimize network daily power consumption. The power consumption of the network consists of the power consumption of router ports, optical switches, transponders, amplifiers, multiplexers, demultiplexers, and caches. Objective function (1) minimizes the power consumption of occupied network components at each time of the day.
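The structure of such an objective can be sketched as a sum of per-component power over the hours of the day. This is not the paper's objective function (1): all per-unit power values and names below are hypothetical placeholders, and the real parameters are those listed in Table 4.

```python
# Hypothetical per-unit power values in watts (placeholders, not Table 4 values).
UNIT_POWER_W = {
    "router_port": 1000.0,
    "transponder": 70.0,
    "optical_switch": 85.0,
    "amplifier": 8.0,
    "multiplexer": 16.0,
    "demultiplexer": 16.0,
}
CACHE_POWER_W_PER_GB = 0.5  # hypothetical storage power per gigabyte


def network_power(component_counts, cache_sizes_gb):
    """Power (W) at one time point: occupied components plus cache storage."""
    transport = sum(UNIT_POWER_W[name] * n for name, n in component_counts.items())
    storage = CACHE_POWER_W_PER_GB * sum(cache_sizes_gb)
    return transport + storage


def daily_energy_wh(counts_per_hour, cache_sizes_gb):
    """Energy (Wh) over the supplied hours, one component count map per hour."""
    return sum(network_power(c, cache_sizes_gb) for c in counts_per_hour)


counts = {"router_port": 2, "transponder": 2, "amplifier": 5}
print(network_power(counts, cache_sizes_gb=[100, 100]))  # 2280.0
```

In the MILP the component counts are decision variables driven by routing and clustering, so shrinking caches lowers the storage term while extra intra-cluster traffic may raise the transport term.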

Model sets

Model parameters

Model variables

Constraints (2) and (3) are the physical link capacity constraints. Constraint (4) is the capacity constraint of the optical layer. Constraints (5) and (6) are the flow conservation constraints in the optical and IP layer, respectively. Constraints (7) and (8) are the flow conservation constraints for upload and download traffic terminating and originating at nodes equipped with a video server, respectively. Constraint (9) is the flow conservation constraint for video traffic from a collaborative cache. Constraint (10) calculates the total downlink traffic from collaborative caches. Constraint (11) guarantees mutual cache collaboration. Constraint (12) limits downlink traffic from a cluster cache to its maximum. Constraints (13) and (14) maintain a transitive relationship between cluster nodes to prohibit cluster intersections.
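The clustering rules (mutual collaboration, transitivity, fixed cluster size) can be checked on a candidate 0/1 collaboration matrix as sketched below. The matrix name `delta` and the inequality `delta[i][j] + delta[j][k] - 1 <= delta[i][k]` are one common way to write transitivity linearly; they are assumptions for illustration and not necessarily the form of constraints (11), (13) and (14) in the model.

```python
from itertools import permutations


def is_valid_clustering(delta, cluster_size):
    """Check a 0/1 collaboration matrix against three clustering rules.

    delta[i][j] == 1 means node i retrieves content from node j's cache.
    """
    nodes = range(len(delta))
    # Mutual collaboration: i uses j's cache exactly when j uses i's cache.
    if any(delta[i][j] != delta[j][i] for i in nodes for j in nodes):
        return False
    # Transitivity in linear form: delta_ij + delta_jk - 1 <= delta_ik.
    for i, j, k in permutations(nodes, 3):
        if delta[i][j] + delta[j][k] - 1 > delta[i][k]:
            return False
    # Fixed size: every node has exactly cluster_size - 1 partners.
    return all(
        sum(delta[i][j] for j in nodes if j != i) == cluster_size - 1
        for i in nodes
    )


# Two disjoint clusters {0, 1} and {2, 3}.
disjoint = [[0, 1, 0, 0], [1, 0, 0, 0], [0, 0, 0, 1], [0, 0, 1, 0]]
# Node 1 collaborates with both 0 and 2, but 0 and 2 do not collaborate.
overlapping = [[0, 1, 0, 0], [1, 0, 1, 0], [0, 1, 0, 0], [0, 0, 0, 0]]
print(is_valid_clustering(disjoint, 2), is_valid_clustering(overlapping, 2))
```

A symmetric and transitive relation partitions the nodes, which is why these rules rule out a node belonging to two clusters at once.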

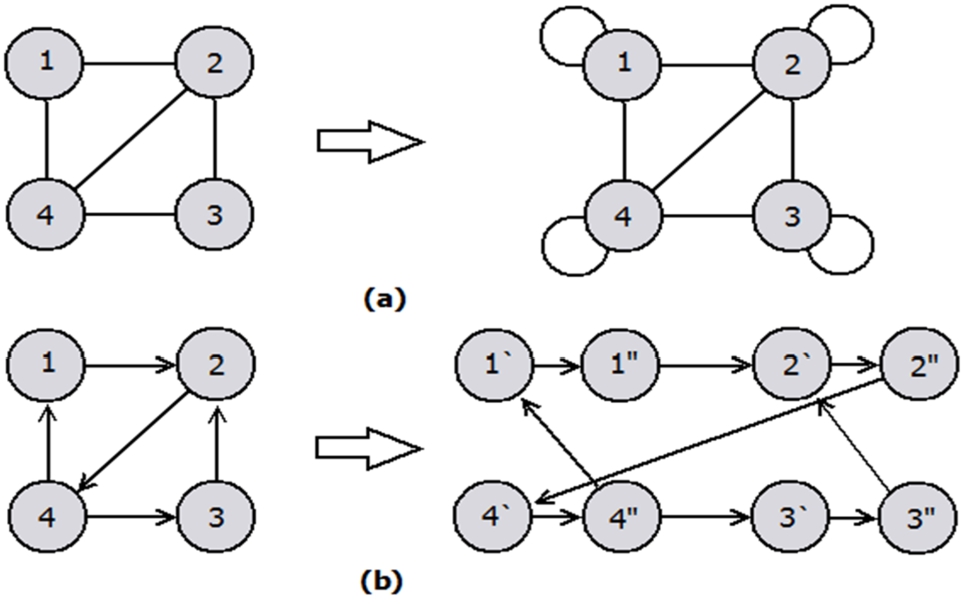

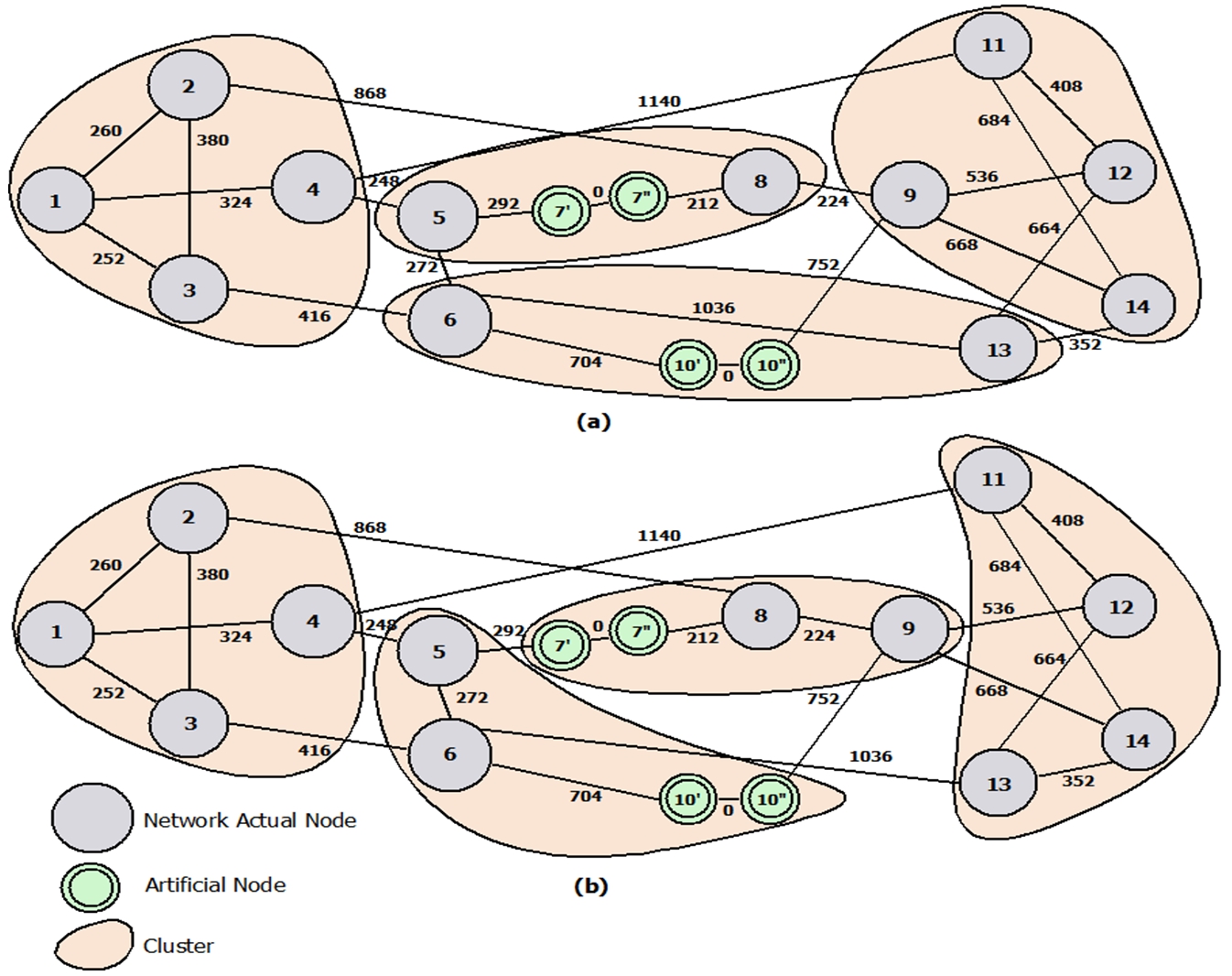

Network Topology Transformation using Artificial Nodes: (a) Undirected Links (b) Directed Links.

The network topology, node distances, cache and cluster sizes, link capacities and traffic demand are supplied as inputs to the MILP model. The model uses simplex iterations to find the optimum cluster formation that minimizes energy while fulfilling all constraints. To maintain the linearity of the model, no cluster overlapping is allowed.

Artificial node insertion is a technique that allows a cost to be associated with the load passing through a certain node. With undirected links, this is done by adding an extra link to the node. With directed links, a cost is added to the nodal traffic by replacing the node with two virtual copies and inserting an internal link between the two artificial nodes. The technique is useful for modeling various problems, including node failure, where eliminating the link between the artificial nodes denotes the failure. It can also be used to enforce a maximum load on a node by imposing this maximum on the internal link [21]. Figure 2 illustrates how the initial network topology is transformed using artificial node insertion for undirected and directed links. In the proposed model, artificial nodes are utilized to bring the original network topology to a desired total number of nodes. The model employs clusters of fixed sizes for cache collaboration, and in order to solve the model for various cluster sizes (3, 4, 5 and 8 nodes), the intended cluster size has to divide the total number of nodes. For example, in a topology of 14 nodes, when forming 2 clusters, each cluster will have 7 nodes. However, when forming 3 clusters, it is not possible to form clusters of equal sizes. Therefore, an artificial node is inserted in the original topology, resulting in a topology of 15 nodes that allows the formation of 3 clusters of 5 nodes.
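The directed-link variant of this transformation can be sketched on a small link map. The function names, the prime-based naming of the copies and the three-node example are hypothetical; how the paper attaches the original links to the two copies in the NSFNET case is not specified in the text, so the in/out split below is the textbook form only.

```python
def split_node(links, node):
    """Replace `node` by two artificial copies joined by a 0 km internal link.

    links maps directed (source, target) pairs to fiber length in km.
    """
    node_in, node_out = f"{node}'", f"{node}''"
    new_links = {}
    for (src, dst), length in links.items():
        # Traffic entering the node now terminates at the first copy;
        # traffic leaving it now starts from the second copy.
        src = node_out if src == node else src
        dst = node_in if dst == node else dst
        new_links[(src, dst)] = length
    # All traffic through the node crosses this link, so a cost or a
    # capacity limit placed on it applies to the node as a whole.
    new_links[(node_in, node_out)] = 0
    return new_links


def nodes_of(links):
    """Set of node names appearing in the link map."""
    return {n for pair in links for n in pair}


links = {("A", "B"): 100, ("B", "C"): 200, ("C", "A"): 300}
split = split_node(links, "B")
print(len(nodes_of(links)), len(nodes_of(split)))  # 3 4
```

Each split raises the node count by one, which is how a 14-node topology can be brought to 15 or 16 nodes.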

Results

One goal of this evaluation is to investigate whether storing less content at the nodes while distributing content amongst cluster nodes decreases the overall network power consumption. Reducing the amount of stored videos in local caches consequently reduces the power consumption of storage. However, streaming more content from neighbor caches increases the power consumption of transport. The balance between these two opposing effects determines the optimum cluster size that minimizes power consumption.

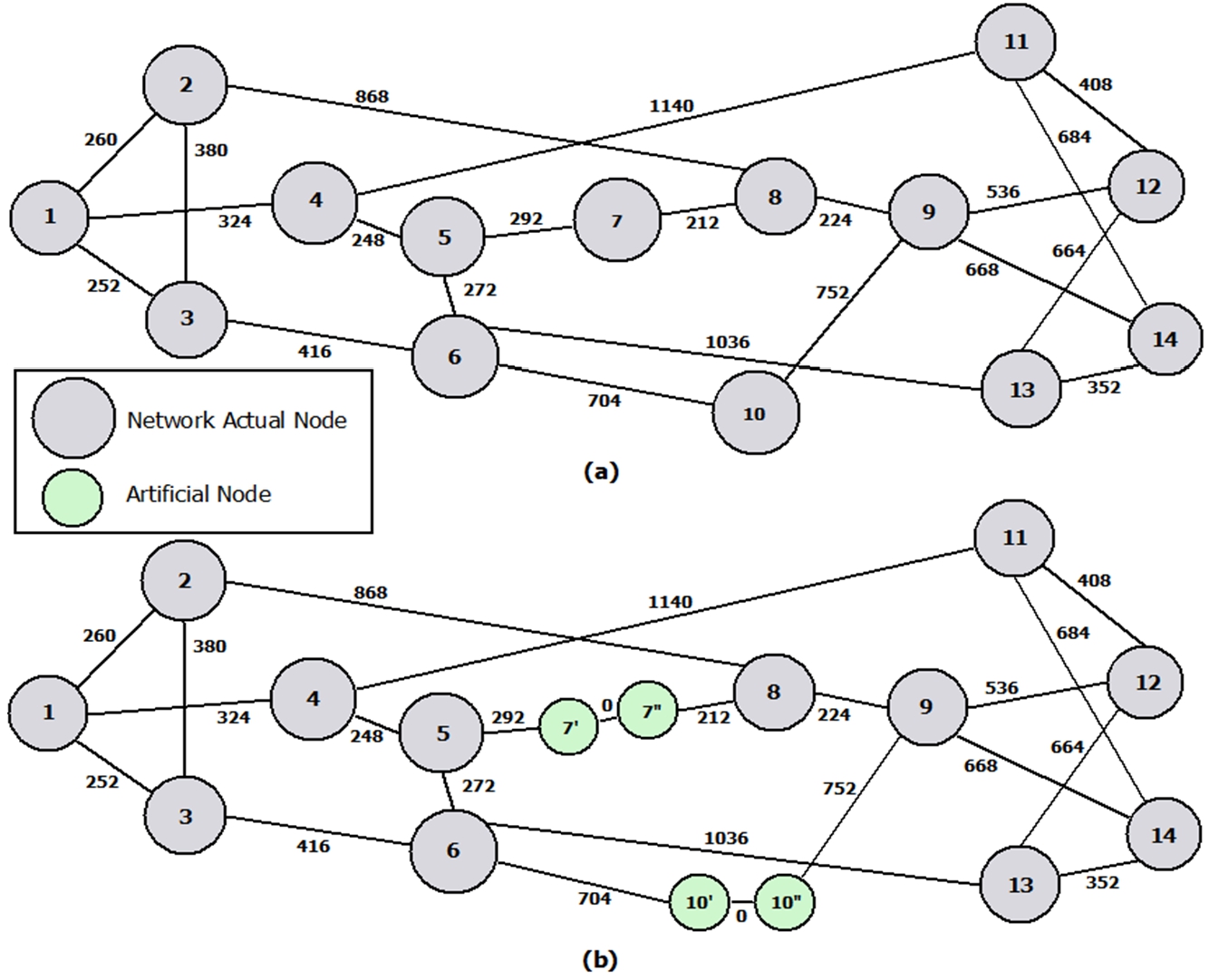

The model is tested using the NSFNET topology of 14 nodes and 21 links. Figure 3 (a) shows the network topology and the fiber length between connected nodes. The energy consumption of delivering requested videos from servers to network core nodes is calculated. A number of scenarios are evaluated having different values for video popularity distribution, number of origin servers in the network and assumed values for influential power consumption parameters.

The NSFNET Topology with Physical Fiber Lengths (km): (a) The Original Topology (b) The Modified Topology using Artificial Nodes to Replace Nodes 7 and 10.

Regular and video traffic

The evaluation assumes regular traffic between each node and other (cluster and non-cluster) nodes. The average demand between node pairs ranges from 20 Gb/s to 120 Gb/s and the peak occurs at 23:00. The traffic demand between each node pair is randomly generated with a uniform distribution [27]. The download demand from a server to a node is assumed to be 10 times the regular traffic between the two nodes. This value can be increased to reflect growth in network video traffic.
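The traffic assumptions can be sketched as below. This is a simplified illustration: it draws one uniform value per ordered node pair and ignores the time-of-day profile, and the fixed seed, function names and the choice of node 1 as the server are assumptions. The 20 to 120 Gb/s range and the factor of 10 come from the text.

```python
import random

VIDEO_TO_REGULAR_RATIO = 10  # download demand is 10x regular traffic (from the text)


def regular_demand(nodes, low=20.0, high=120.0, seed=0):
    """Uniform random demand (Gb/s) for every ordered node pair."""
    rng = random.Random(seed)  # fixed seed is an assumption, for repeatability
    return {
        (s, d): rng.uniform(low, high)
        for s in nodes for d in nodes if s != d
    }


def video_demand(regular, server_nodes, ratio=VIDEO_TO_REGULAR_RATIO):
    """Download demand from each server node to every other node."""
    return {
        (s, d): ratio * gbps
        for (s, d), gbps in regular.items() if s in server_nodes
    }


nodes = list(range(1, 15))                       # 14 NSFNET nodes
regular = regular_demand(nodes)
video = video_demand(regular, server_nodes={1})  # hypothetical server at node 1
print(len(regular), len(video))                  # 182 13
```

Because the regular demand is asymmetric and random, the derived video demand differs from node to node, which is what makes power-based clustering depart from distance-based clustering.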

Cluster formation rules

To maintain the linearity of the model, clusters are assumed to have equal sizes. The formation of 2 clusters of 7 nodes and 7 clusters of 2 nodes is possible under the original NSFNET topology. By employing artificial nodes, node 10 in the original topology is replaced with its two artificial copies 10′ and 10″ to obtain a topology of 15 nodes. The transformed topology supports the formation of 3 clusters of 5 nodes and 5 clusters of 3 nodes. Node 7 is further replaced with its artificial copies 7′ and 7″, resulting in a topology of 16 nodes. This topology is utilized to form 2 clusters of 8, 4 clusters of 4 and 8 clusters of 2 nodes. The model selects the optimum nodes to assign to each cluster such that the overall network power consumption is minimized. The power consumption of storage is considered in addition to the power consumption of streaming video content from cluster caches as well as from the server. The transformed NSFNET topology using 2 artificial nodes is shown in Fig. 3 (b).
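The equal-size rule reduces to simple divisibility arithmetic, sketched here with hypothetical helper names. The sketch reproduces the node counts quoted in the text (14, 15 and 16 nodes).

```python
def equal_cluster_options(total_nodes):
    """All (number_of_clusters, cluster_size) pairs with at least 2 of each."""
    return [
        (total_nodes // size, size)
        for size in range(2, total_nodes)
        if total_nodes % size == 0
    ]


def artificial_nodes_needed(actual_nodes, cluster_size):
    """Fewest extra nodes so that cluster_size divides the node count."""
    return (-actual_nodes) % cluster_size


print(equal_cluster_options(14))  # [(7, 2), (2, 7)]
print(equal_cluster_options(15))  # [(5, 3), (3, 5)]
print(equal_cluster_options(16))  # [(8, 2), (4, 4), (2, 8)]
print([artificial_nodes_needed(14, s) for s in (3, 4, 5, 8)])  # [1, 2, 1, 2]
```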

Power consumption parameters

The input values for power consumption parameters and other parameters such as the distance between two EDFAs and the wavelength capacity are shown in Table 4. These values are derived from [5,6,10–12,19,22].

Input data for the model

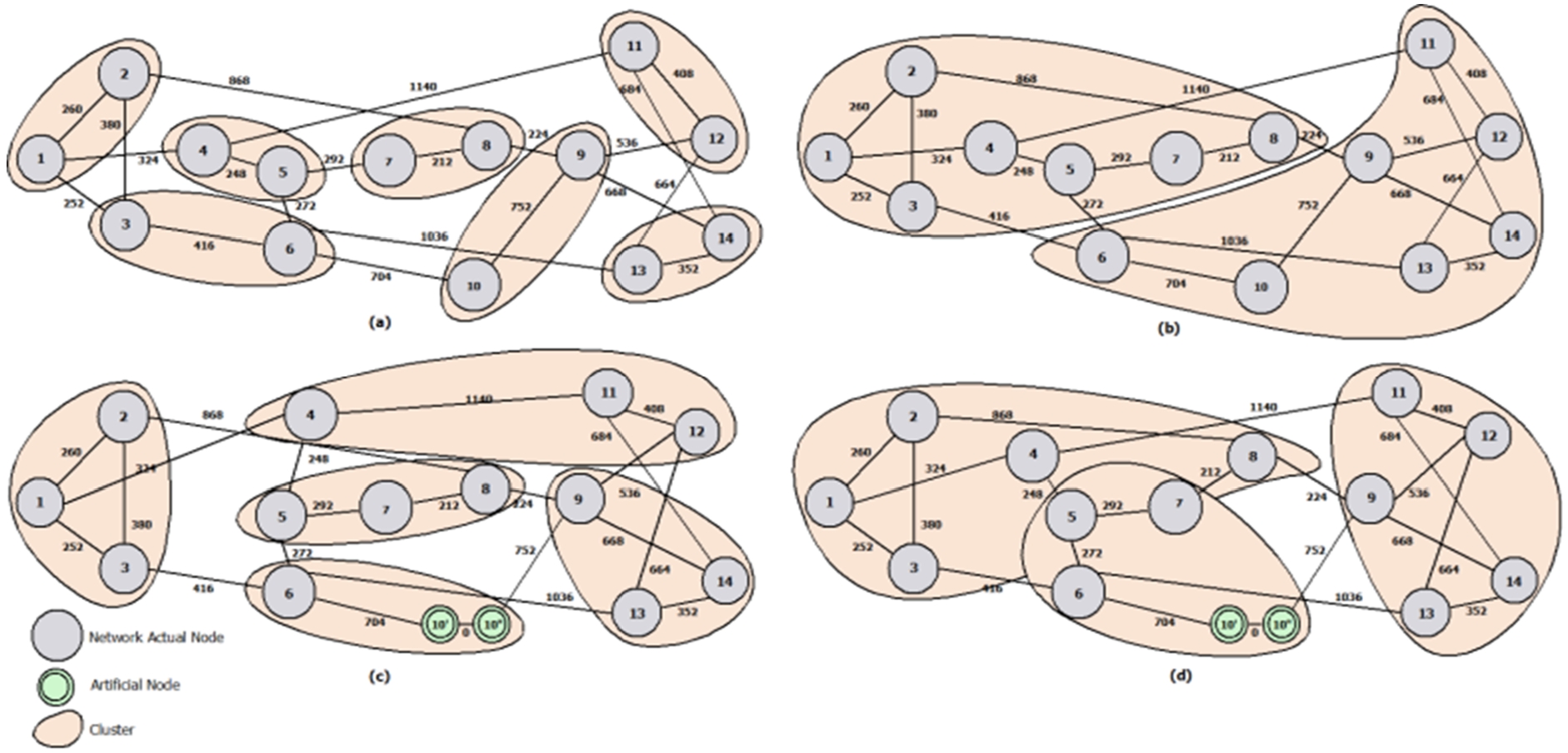

Cluster Formation: Cluster Sizes of: (a) 2 Nodes, (b) 7 Nodes, (c) 3 Nodes using Artificial Nodes, and (d) 5 Nodes using Artificial Nodes.

The attained results demonstrate how network nodes are selected to form clusters of various sizes to minimize power consumption. Each node in a cluster retrieves video content from caches of nodes that belong to the same cluster. Figure 4 (a) and (b) show the formation of 7 clusters of 2 and 2 clusters of 7 nodes, respectively. Given that cluster sizes are equal, the original NSFNET topology of 14 nodes is used to obtain the clusters. For cluster sizes of 3 and 5 nodes, the network topology is required to have 15 nodes. Using artificial nodes, node 10 is replaced with its artificial copies 10′ and 10″, and the resulting topology has 15 nodes. Figure 4 (c) and (d) show the formed clusters of 3 and 5 nodes, respectively. Observing Fig. 4 (c), the optimum solution that guarantees minimum power consumption is to form clusters of 3 nodes with the exception of nodes 6 and 10, which form a cluster of 2 (actual) nodes on their own. Similar observations can be obtained from Fig. 4 (d), where 2 clusters of 5 and a third cluster of 4 (actual) nodes are arranged.

To form cluster sizes of 4 nodes, the artificial nodes technique is further utilized by replacing node 7 with its artificial copies 7′ and 7″, and the resulting NSFNET topology is of 16 nodes. Figure 5 (a) shows the optimized cluster formation of 4 clusters of 4 nodes. Note that this configuration implies that the minimum power consumption is achieved by 2 clusters of 4 nodes and 2 clusters of 3 (actual) nodes. The NSFNET topology of 16 nodes is employed to attain 2 clusters of 8 nodes and 8 clusters of 2 nodes, and the resulting cluster formation is similar to that in Fig. 4 (a) and (b). It is observed that the model always arranges both artificial copies of a node in the same cluster. This is plausible due to the fact that the distance between a node and its artificial copy is 0 kilometers, resulting in transmission power consumption between the two nodes being the minimum.

Cluster Formation using Artificial Nodes under Cluster Sizes of 4 Nodes: (a) The Optimum Formation Over 24 hours (b) The Optimum Formation at 18:00.

It is worth mentioning that the illustrated clusters are the optimum over a 24-hour period with respect to overall network traffic. Nevertheless, at a given time of day, and with respect to network traffic at that time, an alternative cluster arrangement might be the most power efficient. Fig. 5 (b) shows that the optimum cluster arrangement at 18:00 differs from the overall optimum formation shown in Fig. 5 (a).

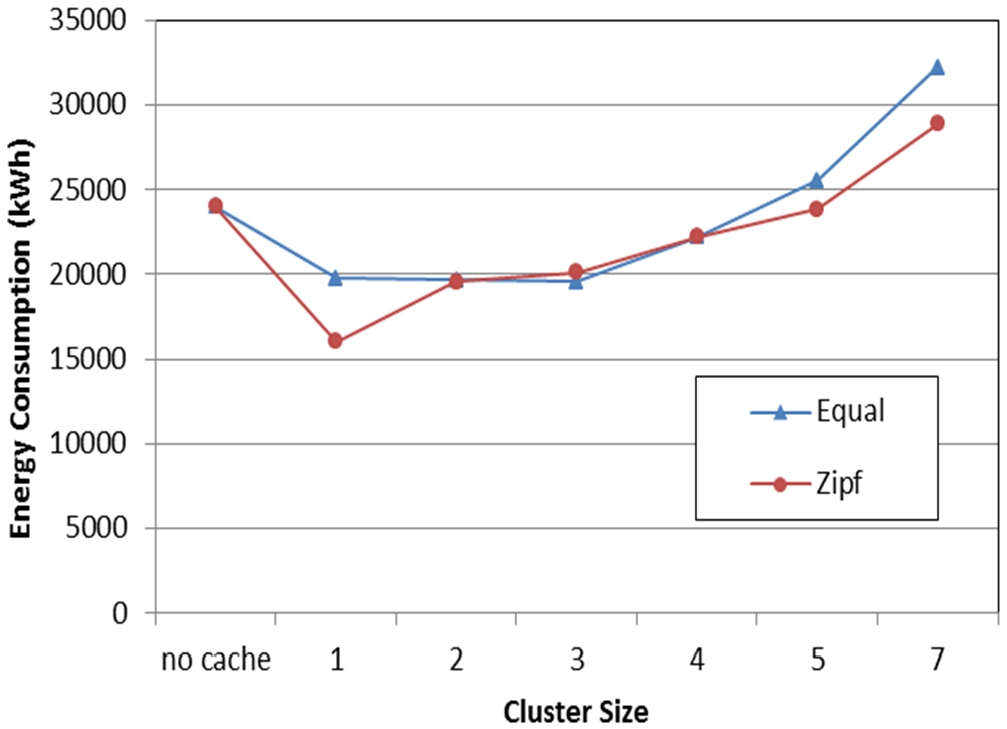

The popularity distribution of video content determines the optimum number of nodes each cluster should have to minimize network power consumption. This is due to the fact that content popularity distribution controls the relationship between the cache size and its hit ratio. Consequently, different popularity distributions influence the amount of traffic passing through the network. This results in different power savings on storage and transport achieved under each popularity distribution. In this evaluation, two content popularity distributions are assumed. The first is a heavy-tailed Zipf distribution having a few popular videos at the top of the distribution and a large number of less popular videos. It assumes 10000 videos, where the popularity of the most popular video is 0.068. The second is a distribution where all videos are equally popular. This Equal distribution assumes 2000 videos, where the popularity of each video is 0.0005.
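The two popularity models and their effect on the hit ratio of a single cache can be sketched as follows. The Zipf exponent is not stated in the text, so the value 1.0 used here is an assumption and will not reproduce the 0.068 top popularity quoted above; the library sizes are those given in the text.

```python
def zipf_popularity(num_videos, exponent=1.0):
    """Popularity of ranks 1..num_videos under a Zipf law, normalised to sum to 1."""
    weights = [rank ** -exponent for rank in range(1, num_videos + 1)]
    total = sum(weights)
    return [w / total for w in weights]


def equal_popularity(num_videos):
    """Every video is requested with the same probability."""
    return [1.0 / num_videos] * num_videos


def hit_ratio(popularity, cached_videos):
    """Hit ratio of a cache storing the `cached_videos` most popular items."""
    return sum(popularity[:cached_videos])


zipf = zipf_popularity(10_000)   # exponent 1.0 is an assumption
equal = equal_popularity(2_000)
print(equal[0])                  # 0.0005, matching the text
print(hit_ratio(zipf, 100) > hit_ratio(equal, 100))  # True
```

Under the Equal distribution the hit ratio grows linearly with the number of cached videos, whereas under Zipf most of the achievable hit ratio is already captured by the first few items.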

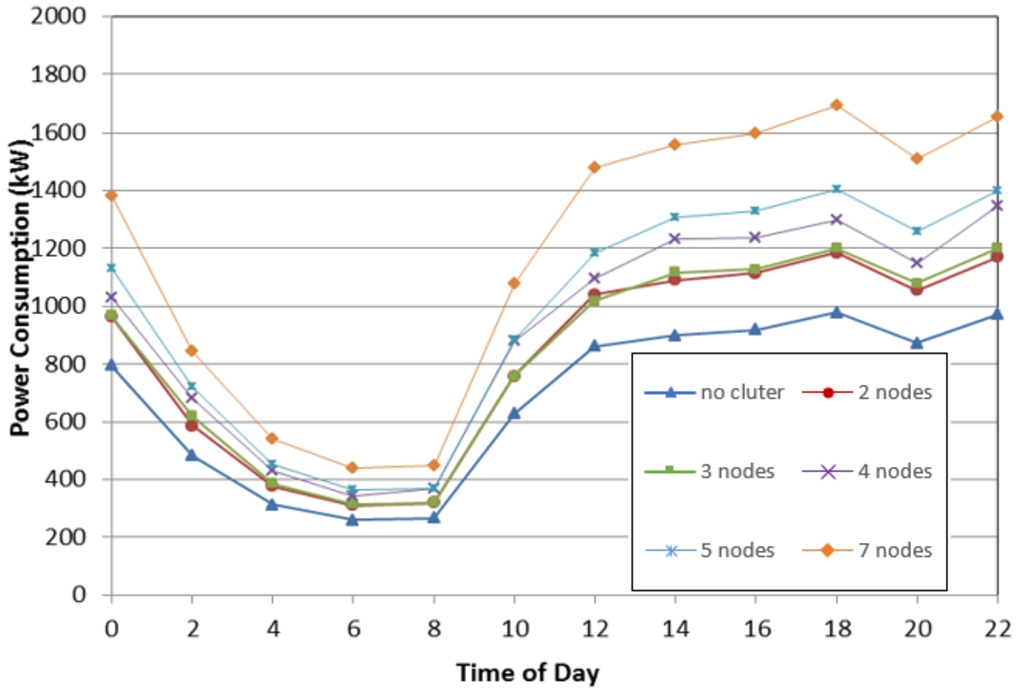

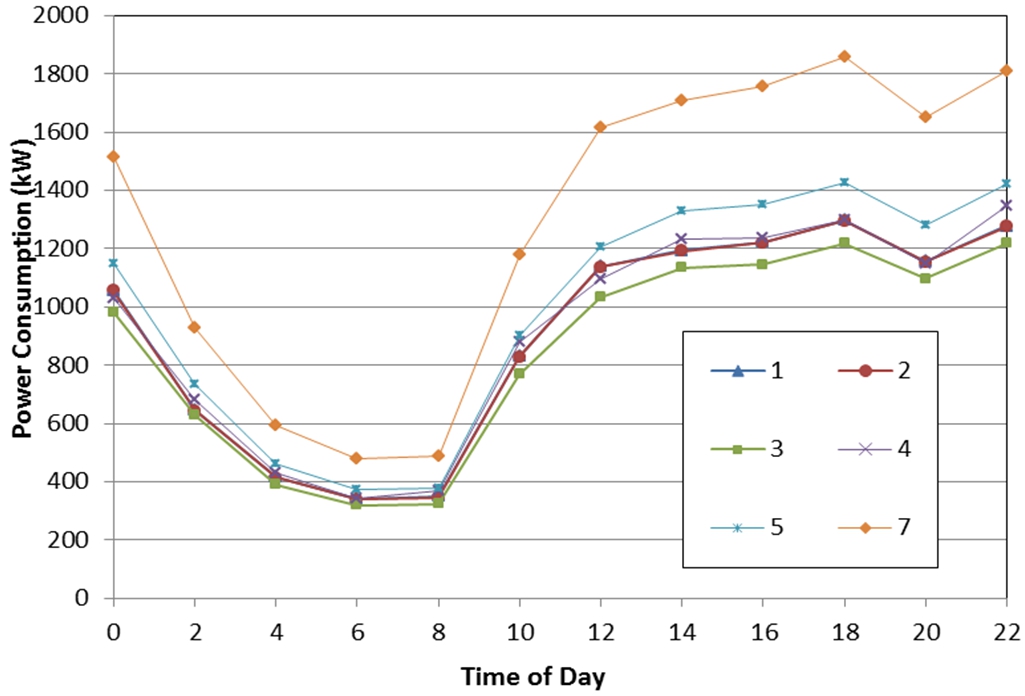

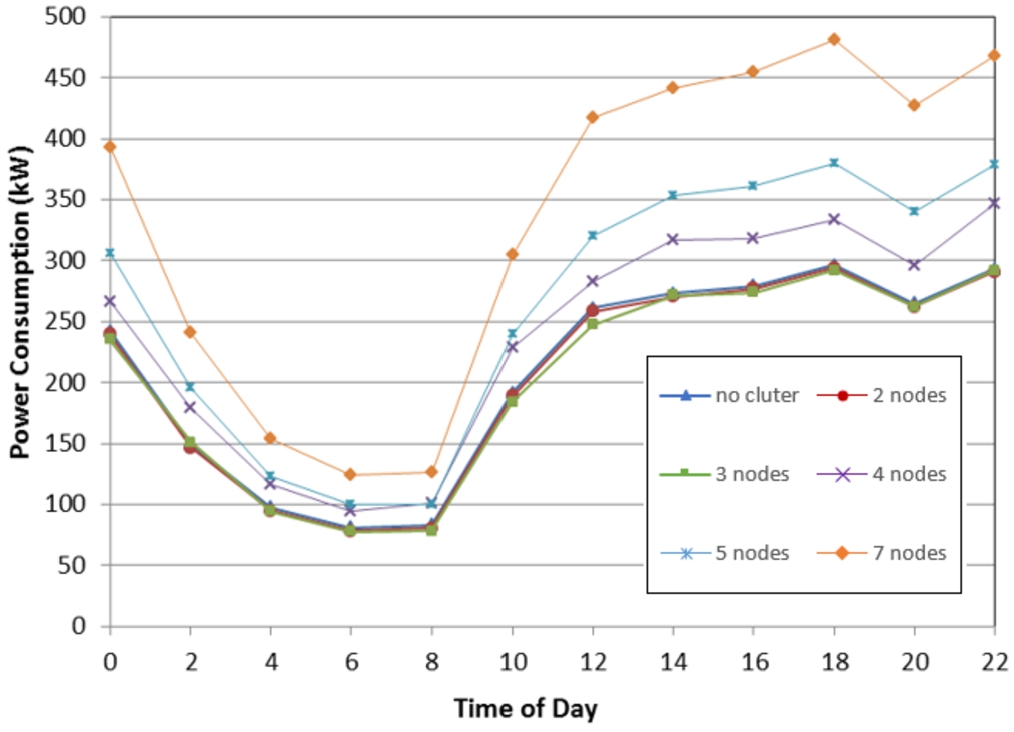

With no cache collaboration, each node retrieves videos from the cache deployed at the node. Videos that are not found in the cache are downloaded from the origin server. A Zipf distribution, in which a few videos attract most requests, achieves a higher cache hit ratio than an Equal distribution. Moreover, under the Zipf distribution the influence of cache collaboration on the cache hit ratio is negligible and does not compensate for the increase in energy consumed by intra-cluster communication. Thus, as seen in Fig. 6, clustering under the Zipf distribution increases energy consumption, and the least energy is consumed when no cache collaboration is allowed.

The power consumption of the network under Zipf distribution and different cluster sizes.

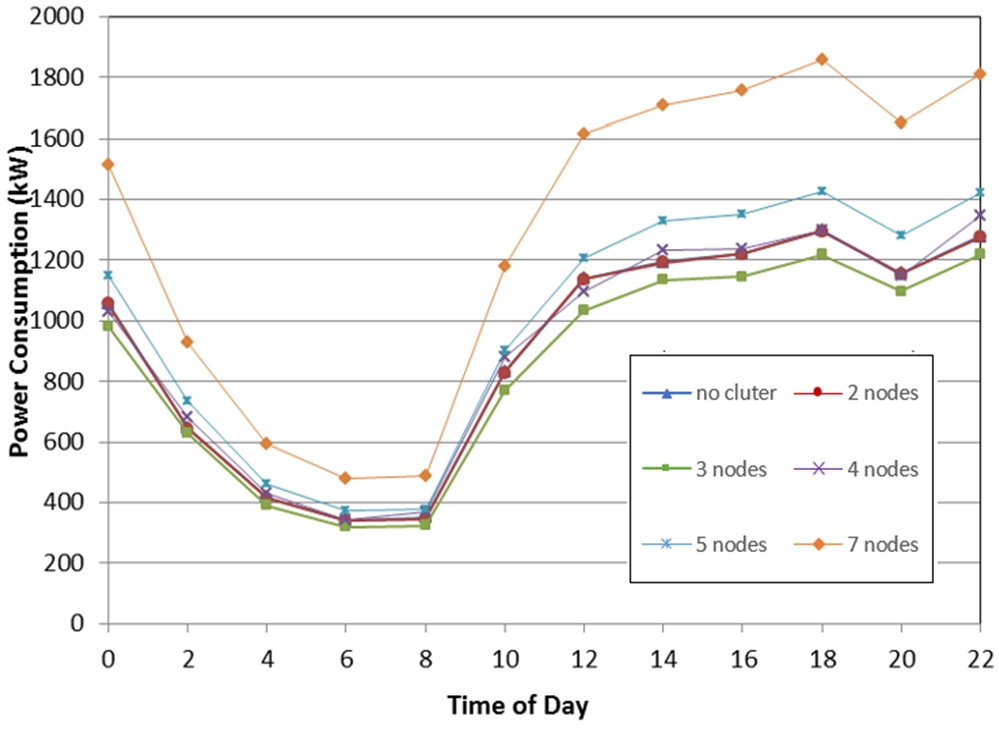

The power consumption of the network under Equal distribution and different cluster sizes.

The energy consumption of the network under Zipf and Equal distributions and various cluster sizes.

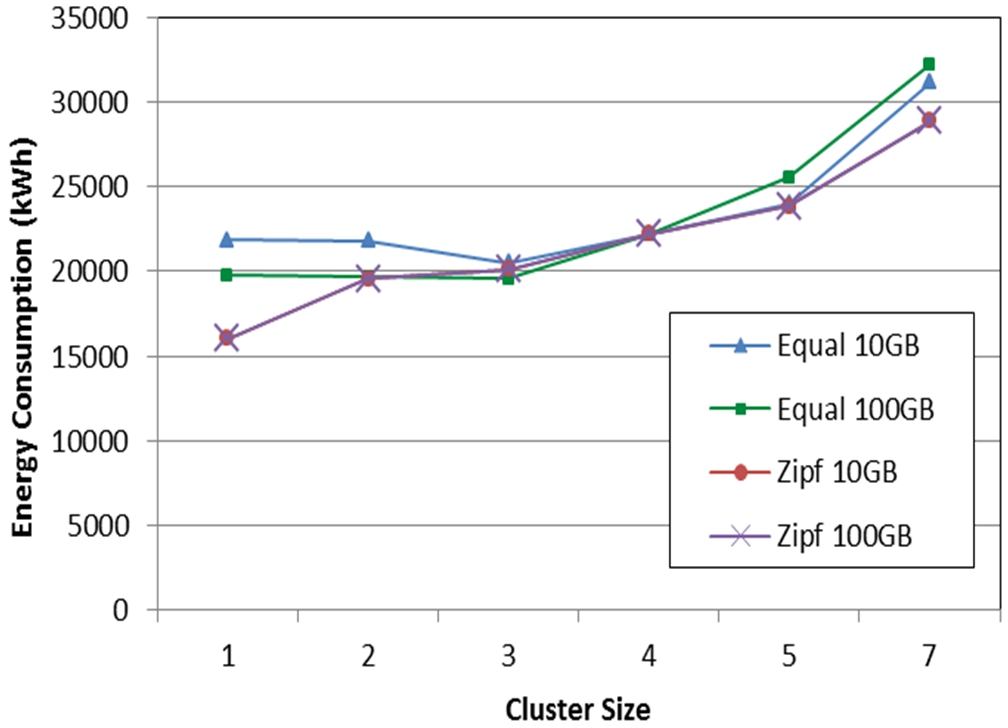

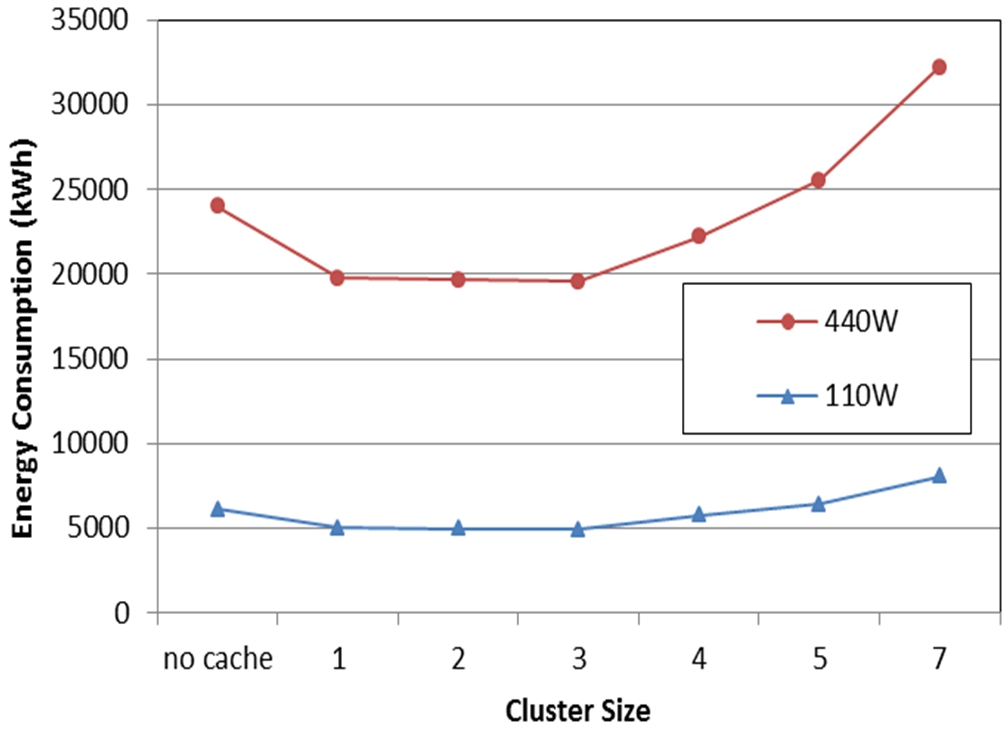

On the other hand, under the Equal distribution, content of equal popularity is stored in each cache in the network. Collaboration among a number of caches leads to a significant increase in cache hit ratio. Higher cache hit ratios imply less traffic being requested from remote servers, but also more intra-cluster communication. Savings in energy consumption due to increased hit ratios are weighed against the increase in energy consumption due to delivering video from neighbor nodes. The net effect of this tradeoff decides the optimum cluster size. Figure 7 shows the power consumption of the network under the Equal distribution for different cluster sizes, while Fig. 8 demonstrates the network energy consumption under the two popularity distributions. Observing Fig. 8, when no cache collaboration is allowed, more energy is consumed under the Equal distribution, because its cache hit ratios are smaller than under the Zipf distribution and more traffic therefore travels the long paths from servers to nodes. As cluster sizes increase, the resultant cluster hit ratio under the Equal distribution (0.75 for 3-node clusters) exceeds that of the Zipf distribution (0.54 for 3-node clusters), reducing the consequent energy consumption. The optimum cluster sizes depicted in Fig. 8 are 1 and 3 under the Zipf and Equal distributions, respectively. With larger clusters, cache hit ratios reach 1 under the Equal distribution. However, intra-cluster communication increases significantly, which consequently increases energy consumption. Maximum savings in energy consumption introduced by cluster-based collaborative caching under the Zipf and Equal popularity distributions are 34.3% and 21.3%, respectively.
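The growth of the cluster hit ratio with cluster size can be reproduced with a small sketch, assuming equally sized videos and cluster caches that hold disjoint content. The per-cache capacity of 500 videos is hypothetical, chosen so that three Equal-distribution caches reach the 0.75 quoted above; the Zipf exponent of 1.0 is likewise an assumption, so the Zipf values printed here will not match the paper's 0.54.

```python
def zipf_popularity(num_videos, exponent):
    """Zipf popularity for ranks 1..num_videos, normalised to sum to 1."""
    weights = [rank ** -exponent for rank in range(1, num_videos + 1)]
    total = sum(weights)
    return [w / total for w in weights]


def cluster_hit_ratio(popularity, videos_per_cache, cluster_size):
    """Hit ratio when cluster caches jointly hold the most popular distinct videos."""
    shared = min(len(popularity), videos_per_cache * cluster_size)
    ranked = sorted(popularity, reverse=True)
    return sum(ranked[:shared])


VIDEOS_PER_CACHE = 500                        # hypothetical capacity
equal = [1.0 / 2_000] * 2_000                 # 2000 equally popular videos (from the text)
zipf = zipf_popularity(10_000, exponent=1.0)  # exponent is an assumption

for size in (1, 2, 3, 4):
    print(size,
          round(cluster_hit_ratio(equal, VIDEOS_PER_CACHE, size), 3),
          round(cluster_hit_ratio(zipf, VIDEOS_PER_CACHE, size), 3))
```

Under these assumptions the Equal-distribution hit ratio rises linearly (0.25, 0.5, 0.75, 1.0), while each additional Zipf cache adds only less popular videos and so contributes a smaller gain.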

In order to evaluate the influence of the size of deployed caches, the energy consumption of video delivery is evaluated using 10 GB and 100 GB caches under the two utilized popularity distributions. Under the Zipf distribution, increasing the cache size does not significantly improve the hit ratio, since the most popular videos are stored in caches first and the videos that populate the extended part of the cache are less popular. Therefore, as can be seen in Fig. 9, the energy consumption of the network is similar for both cache sizes. The additional energy consumed in storage is negligible, and cache collaboration is still not recommended. Maximum energy savings under the Zipf distribution due to caching are 32.2% and 34.3% when 10 GB and 100 GB caches are deployed, respectively.

Doubling the cache size under the Equal distribution doubles its hit ratio. This reduces the amount of downlink traffic, which in turn reduces energy consumption. Under each cluster size, the energy consumption is lower when 100 GB caches are deployed. In terms of the optimum cluster size, Fig. 9 shows that the optimum cluster size remains 3. Maximum energy savings of 17.2% and 21.3% are achieved when deploying 10 GB and 100 GB caches, respectively.

The energy consumption of the network under Zipf and Equal distributions employing 10 GB and 100 GB caches.

The power consumption of the network under Equal distribution with 1 server.

The power consumption of the network under Equal distribution with 14 servers.

The energy consumption of the network under Equal distribution with 1, 7 and 14 servers.

It is apparent from attained results that the size of deployed caches influences power consumption by varying the cache hit ratio which directly impacts the amount of download traffic. Increasing cache sizes reduces network energy consumption. Nevertheless, varying the size of deployed caches has no influence on the optimum cluster size.

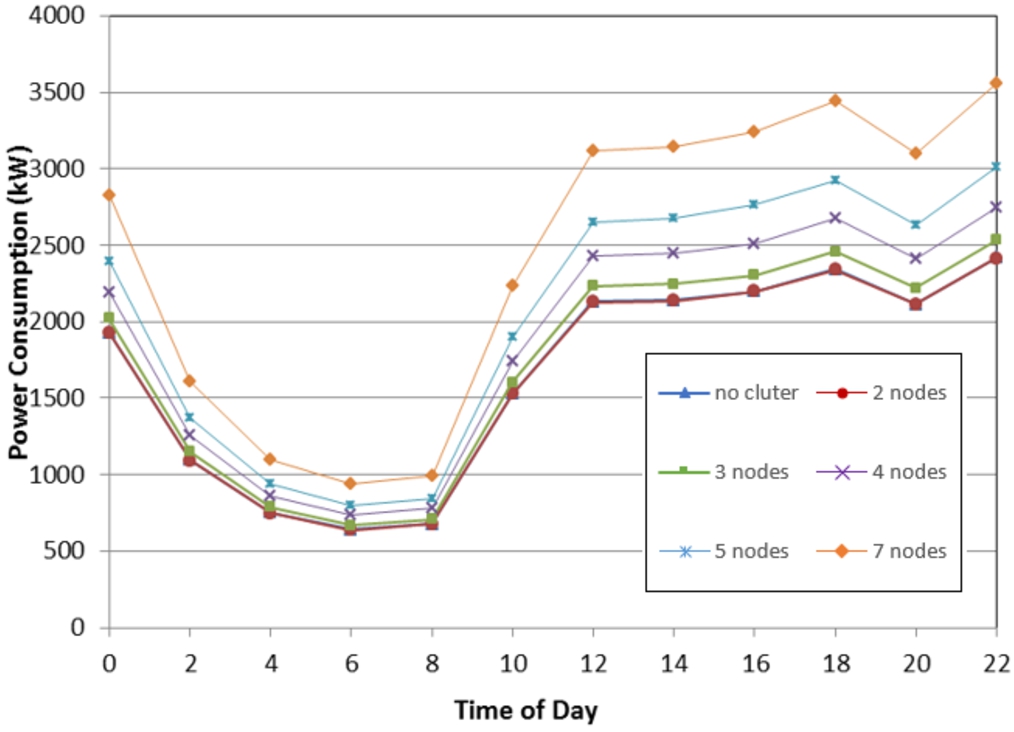

Video files located in servers are downloaded to all requesting nodes in the network. To investigate the influence of downlink traffic behavior on the energy efficiency of cache clustering, three scenarios are evaluated in which videos are located in: 1) 1 server, so that data has a single source; 2) 7 servers; and 3) 14 servers, which implies that every node requests videos from all nodes, as the network topology contains 14 nodes. The goal is to investigate whether cache collaboration saves energy when the number of traffic sources increases.

The results are illustrated in Figs. 10 through 12 and compared to those in Fig. 7. Figure 10 and Fig. 11 show the power consumption of the network under an Equal popularity distribution where video traffic is downloaded from 1 and 14 servers, respectively. Figure 12 shows the network energy consumption with 1, 7 and 14 servers. When videos originate from a single server, a single path is required to download videos from the server to the node. Clustering increases the number of links connecting cluster nodes, which increases energy consumption. As a result, the optimum cluster size with a single server decreases to 2.

When the number of servers is 7, forming small clusters (2 and 3 nodes) reduces energy consumption by maintaining a limited number of neighbor nodes while limiting the need to download videos from the many scattered servers. The optimum cluster size is 3, as shown in Fig. 7. As the number of origin servers increases, data is downloaded from all of these servers, so the number of links established between servers and nodes increases as well. These links are used to download the part of the traffic demand that is not served by caches. It is therefore beneficial to use the capacity of these links to retrieve the most requested data instead of establishing additional communication with cluster nodes, which also consumes energy in transport. The optimum cluster size with 14 servers is 2. These results show that the most energy-efficient cluster sizes remain small regardless of the number of content origins. Maximum energy savings under 1, 7 and 14 servers are 11.6%, 21.3% and 9.8%, respectively.
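The way an optimum cluster size and its maximum saving are read from an energy curve can be sketched as below. The sketch assumes that savings are measured against a reference energy, such as that of the network without caching; this baseline definition is an assumption. The energy values in the usage example are made-up placeholders and are not results from this work.

```python
def optimum_cluster(energy_by_size: dict, baseline_energy: float) -> tuple:
    """Return (optimum cluster size, saving in percent versus the baseline).

    energy_by_size maps a cluster size to the network energy at that size.
    baseline_energy is the reference energy (assumed: network without caching).
    """
    best_size = min(energy_by_size, key=energy_by_size.get)
    saving = 100.0 * (baseline_energy - energy_by_size[best_size]) / baseline_energy
    return best_size, saving


if __name__ == "__main__":
    # Placeholder energies in arbitrary units, for illustration only.
    example = {1: 90.0, 2: 82.0, 3: 80.0, 4: 85.0}
    print(optimum_cluster(example, baseline_energy=100.0))
```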

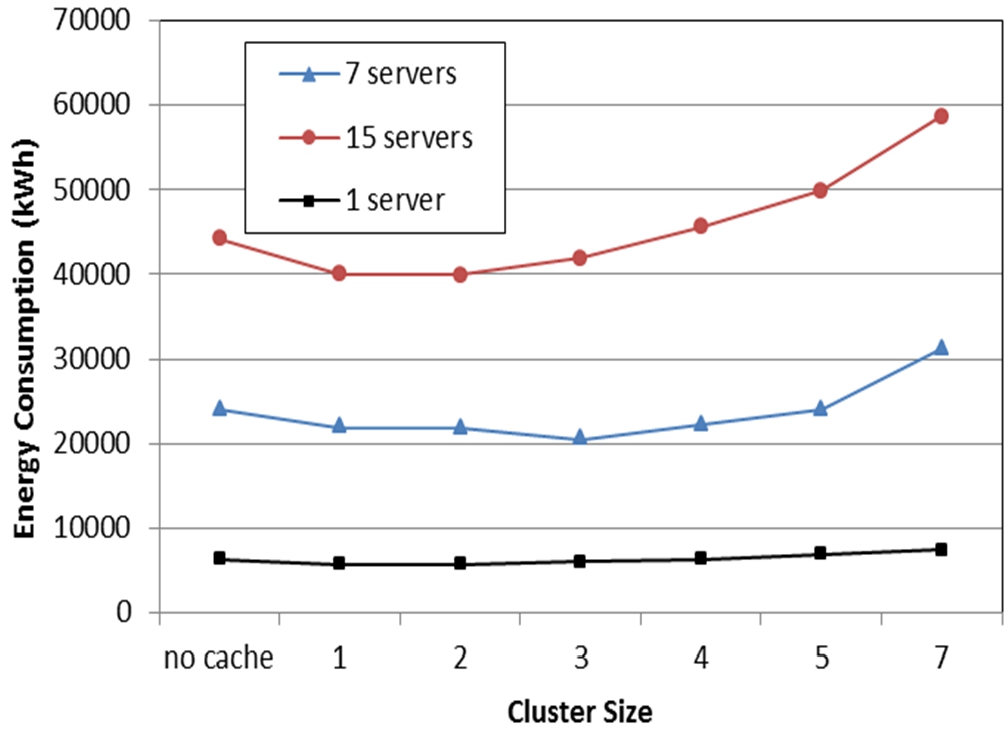

The influence of power consumption parameters

Fig. 13. The power consumption of the network under the Equal distribution when the power consumption of router ports is 110 W.

Fig. 14. The energy consumption of the network under the Equal distribution when the power consumption of router ports is 110 W and 440 W.

The network component that consumes the most energy in the IP over WDM network is the router port, which consumes 440 W as listed in Table 4. An important direction in hardware technology improvement is to produce power-efficient equipment. The power consumption of a CRS-1 16-Slot port has dropped from 1000 W [28] to 440 W [16] within a few years. Here, the model is evaluated assuming an optimistic power consumption value for router ports of 110 W, which is 25% of the current value. Figure 13 shows the power consumption of delivering videos from 7 servers under an Equal distribution when router ports consume 110 W. Figure 14 contrasts the energy consumption of this network with the energy consumption when router ports consume 440 W. The energy consumption of the network is significantly lower when router ports consume 25% of the current power, while the energy consumption curves follow the same trend. The optimum cluster size in this scenario is also 3, indicating that the change in the dominant power consumption parameter does not influence the optimum cluster size. Maximum savings in energy consumption achieved by cache collaboration are 21.3% and 21.8% when router ports consume 440 W and 110 W, respectively. These results show that the network energy consumption is significantly reduced when power-efficient network components are utilized. Nonetheless, the relative savings in energy consumption introduced by cache collaboration are barely influenced by power consumption parameters. Table 5 summarizes optimum cluster sizes and energy consumption savings under the Zipf and Equal distributions for all evaluated scenarios.
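One way to read this result is through a deliberately simplified power model, sketched below, in which total power is a router-port term plus a remainder for all other components. This model, the function names and the port counts are illustrative assumptions and do not reproduce the MILP formulation. If router ports were the only consumers, the relative saving of clustering would not depend on the per-port power at all.

```python
def network_power(router_ports: int, port_power_w: float, other_power_w: float = 0.0) -> float:
    """Simplified total power: router ports plus a lumped remainder (assumed model)."""
    return router_ports * port_power_w + other_power_w


def clustering_savings(port_power_w: float, baseline_ports: int, cluster_ports: int,
                       baseline_other_w: float = 0.0, cluster_other_w: float = 0.0) -> float:
    """Percentage of power saved by clustering relative to the baseline."""
    baseline = network_power(baseline_ports, port_power_w, baseline_other_w)
    clustered = network_power(cluster_ports, port_power_w, cluster_other_w)
    return 100.0 * (baseline - clustered) / baseline


if __name__ == "__main__":
    # Hypothetical port counts: 1000 ports without clustering, 800 with it.
    for port_power in (440.0, 110.0):
        print(port_power, clustering_savings(port_power, 1000, 800))
```

With a nonzero remainder the two savings differ slightly, which is in line with the small gap between 21.3% and 21.8% reported above.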

Table 5. Optimum cluster size and maximum energy savings of clustering for different parameters

This paper proposed energy-efficient cluster-based caching in an IP over WDM core network. A MILP model minimizes power consumption by finding the best cluster sizes. The paper also investigated the influence of content popularity distribution, sizes of deployed caches, the number of content sources and power consumption parameters on energy consumption. Results revealed that for each cluster size, there exists an optimum cluster arrangement that minimizes energy consumption. This arrangement depends on physical distances and the amount of download traffic. Cache collaboration is more suitable when objects have similar popularity, such as under an Equal distribution. In contrast, it is more energy efficient to minimize cache collaboration when content popularity follows a heavy-tailed distribution such as a Zipf distribution. Sizes of deployed caches and power consumption parameters influence the energy efficiency of the network; however, they have no influence on optimum cluster sizes. The number of servers from which content is downloaded has a slight influence on the optimum cluster size. A network with a single server, or one where servers are located in all nodes, should utilize smaller clusters than a network with an intermediate number of servers. It is concluded that even though cache collaboration in the core network reduces energy consumption under different scenarios, it is recommended to maintain small clusters so that intra-cluster communication is minimized. For future work, we plan to investigate the influence of the average hop count of the network topology on the energy efficiency of collaborative caching in core networks.

Conflict of interest

The author has no conflict of interest to report.