Abstract

The number of published findings in biomedicine increases continually. At the same time, the specifics of the domain’s terminology complicate the retrieval of relevant publications. In this study, we investigate the influence of term variability and ambiguity on a paper’s likelihood of being retrieved. We obtained statistics that demonstrate the significance of the issue and its challenges, and we present the sci.AI platform, which addresses it by enabling precise term labeling.

Key objectives of the study and its significance

Over the last two decades, life sciences articles have become substantially more complex, reflecting technological evolution (particularly OMICs experimentation), increasing cooperation between multiple institutions, and more advanced mathematics and statistics applied to the data. In many publications, plain unstructured text is supported by algorithms, code, and multiple files of processed and raw datasets with annotated metadata and graphs. With such enhancements in place, experimental articles themselves might become a driving force of Literature Based Discovery (LBD) [10]. Recently, the whole field of “meta-analysis” has arisen to describe “dry lab” studies on the normalization, unification, and analysis of many similar datasets derived from different labs and projects. However, a number of experimental papers are missed because they cannot be retrieved from the body of literature by keyword search. Scientists are keenly interested in higher discoverability of their published research and in references to their findings, as the citation index becomes an increasingly prevalent metric in the evaluation of their work. The issue can be addressed with proper semantic labeling of the texts as the very first step in the global analysis of research reports.

The state-of-the-art section reflects the ways published papers are algorithmically processed in text mining applications. Such computations rely on preexisting, statistically supported information, while text mining of the scientific literature targets novel findings. This leads to Information Retrieval (IR) and then Information Extraction (IE) underperformance when applied to scientific literature.

The objective of the paper is to consider just one of many issues in biomedical text processing: false term recognition caused by the ambiguity of concept names and by multiple spelling variants of terms. This leads to at least two undesirable effects:

Lower recall rate when search engines and aggregators retrieve articles, so the target audience does not receive a full set of relevant papers.

Retrieval of a paper that is irrelevant to the sought-for concept. For example, a reader can query ‘cat’ with the animal in mind but receive texts about the ‘CAT’ gene.
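Both effects can be reproduced with a naive keyword matcher. The sketch below is purely illustrative: the three toy documents and the `keyword_search` helper are assumptions made for this example and do not describe any real search engine or the sci.AI implementation.

```python
import re

# Toy corpus: hypothetical abstracts, not real papers.
DOCS = {
    "doc1": "Domestic cat behaviour was observed in shelters.",
    "doc2": "Expression of the CAT gene protects cells from oxidative stress.",
    "doc3": "Feline immunodeficiency virus infects Felis catus.",
}


def keyword_search(query, docs):
    """Return ids of documents that contain the query as a whole word.

    Matching is case-insensitive, as in many plain keyword searches.
    """
    pattern = re.compile(r"\b" + re.escape(query) + r"\b", re.IGNORECASE)
    return sorted(doc_id for doc_id, text in docs.items() if pattern.search(text))


hits = keyword_search("cat", DOCS)
print(hits)
```

In this toy setting the query returns `doc2`, which is about the gene (a precision loss), and misses `doc3`, which is about the animal but uses a different name (a recall loss).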

We address: A global need of initial transformation of the plain text to a machine-readable format; and uncertainty issue mentioned above;

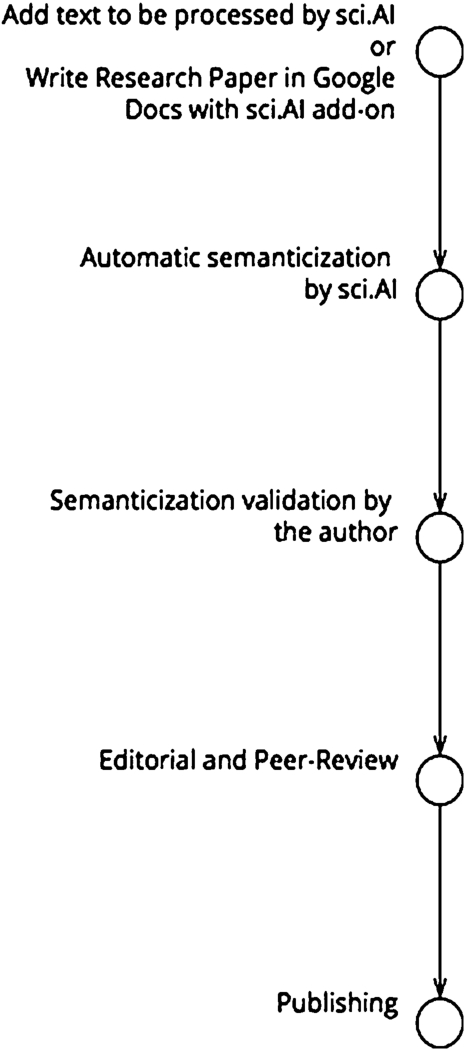

by releasing the sci.AI system. This system combines automatic metatagging and manual validation of the results by the author or reader and supports generating semantic structures during writing and editorial processing. Human validation eliminates almost any possibility of term misinterpretation in the following IR and IE tasks, because authors can be expected to have a comprehensive understanding of the concepts being mentioned in their papers and can supervise the machine’s results.

Computational Linguistics is one of the most dynamic fields with innovations being released almost monthly. Unfortunately, there is no solid state-of-the-art solution for biomedical text labeling yet that unites all the latest advances in each subfield into a single package.

The first subfield is metatagging standards and paradigms. Semantic Web objects, concepts, knowledge association, and data representation utilize schema.org vocabularies and W3C RDF/XML [11]. A current limitation is the lack of a similar single schema for the life sciences. Related earlier initiatives are W3C Scholarly HTML [13] and JATS4R [8]. Still, neither schema provides a standard namespace for labeling biomedical concepts.

The second subfield is term labeling, or Named Entity Recognition.

Just as in many other areas, deep learning and neural network (NN) methods are increasingly popular for extracting information from professional texts [1,20]. NN algorithms are rather generic and can be applied to text analysis in an unsupervised fashion (i.e., to a variety of texts without generating rules in advance). However, their precision and recall depend heavily on statistical data, so they cannot be considered a stable solution for concepts and challenges that have appeared only recently. Still, NNs demonstrate the highest recognition rates [6,12] among automatic methods.

Then there are methods that increase precision by reducing concept ambiguity, connecting the same concepts across various ontologies.

UMLS (Unified Medical Language System), in combination with MetaMap, provides graph-like links between objects from various ontologies and is a widely accepted solution for the Word Sense Disambiguation (WSD) task in the biomedical domain. Essentially, UMLS represents a metaontology of biomedical terms and concepts. UMLS is extensive, well supported by NIH, and in constant development. Future considerations include possibly connecting this data to the sci.AI application. Currently, there are several limitations:

Lack of details for specialised ontologies, such as Uniprot and ChEBI.

Focus on the indexing task for the NCBI. This leads to the same drop in precision and recall of post-publication text processing.

It is not a simple plug-and-play solution for the publishing industry [2].

SciGraph by the Neo4j [15] framework allows objects to be interconnected and can be used as a technical basis for future metaontologies.

Resolving term ambiguity and variability represents a significant challenge in text processing. Here, we investigated how these factors affect a paper’s influence. Such causality is assumed on the grounds that findings described in a paper can be reused and cited only if readers discover the paper first. To model a paper’s influence potential mathematically, we defined Paper’s Influence as a function of a variable we called Discoverability.

The paper’s potential for influence greatly depends on how accurately search engines and aggregators solve the IR task. For further explanation, we will continue with our query scenario from above. A reader is discovering the paper about animal ‘cat’ after querying string

If we assume that a reader will read the paper if the search engine returned it in response to the query

As long as such cases could be found across biomedical terminology, when concept can have several synonyms (variable terms) or single term can refer to several concepts (ambiguous terms), probabilistic precision and recall can be calculated based on the numbers of possible outcomes when querying

We can estimate chances of such event using a basic definition of the probability as the ratio of the number of favorable outcomes to the total number of possible outcomes. Term’s ambiguity and variability define those numbers of possible outcomes. Finally, when we know precision and recall of the paper’s retrieving while searching for the concept

How many papers out of all existing literature about concept

How many papers out of retrieved and containing terms from

This means that precision for the specific concept does not depend on the number of variants, as long as we assume that all variants are describing the same concept in the event.

There is term

Did we receive all papers containing term

What is the overall probability of retrieving a relevant paper for the concept

This means a probability of two independent events:

If operating only with the number of variants per concept, then prior probability of variants occurrence can be approximated as uniformly distributed, as long as actual frequency of terms occurrence in the papers will be retrieved in the next steps. This means that, in the first approximation,

As long as the
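As a rough numerical illustration of the reasoning above, the sketch below applies the basic definition of probability (favorable outcomes over possible outcomes) with a uniform prior over spelling variants and over the concepts that share a term. The function names and the specific formulas are assumptions made for this example; they are one simple reading of the model and are not the exact expressions used in the study.

```python
from fractions import Fraction


def prior_recall(variants_searched, variants_total):
    """Chance that a paper about a concept is retrieved.

    Assumes every spelling variant of the concept is equally likely to be
    the one used in the paper (uniform prior).
    """
    if not 0 < variants_searched <= variants_total:
        raise ValueError("variants_searched must be in 1..variants_total")
    return Fraction(variants_searched, variants_total)


def prior_precision(concepts_sharing_term):
    """Chance that a retrieved paper is about the intended concept.

    Assumes each concept sharing the queried term is equally likely.
    It does not depend on the number of spelling variants.
    """
    if concepts_sharing_term < 1:
        raise ValueError("at least one concept must carry the term")
    return Fraction(1, concepts_sharing_term)


def discoverability(recall, precision):
    """Joint probability of the two events, treated as independent."""
    return recall * precision


# Hypothetical concept: 4 spelling variants, 1 of them queried,
# and the queried term is shared by 2 concepts.
r = prior_recall(1, 4)
p = prior_precision(2)
d = discoverability(r, p)
print(r, p, d)
```

Under these assumptions, querying one of four variants of a term shared by two concepts gives a recall of 1/4, a precision of 1/2, and a joint probability of 1/8.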

In order to estimate the influence of existing term uncertainty on the discoverability of papers, we searched for:

homographs across Uniprot, ICD-10, ChEBI, MeSH, Drugbank, and Gene Ontology databases;

possible spelling variants for the same objects;

term variants actually used in 26,782,464 PubMed, 26,404 Bioline, and 5,426 eLife papers.

MeSH Categories G–Z were not analysed because they contain generic objects, such as countries’ names, which are out of scope of sci.AI semanticization for now.

Our research is ongoing and the latest results can be found on the sci.AI webpage [14].

Variability in the ontologies and influence on paper’s recall

Ambiguity in the ontologies and influence on paper’s precision

We not only considered the synonyms that exist in the ontologies but also created a rule-based term variant generator (TVG) to cover cases in which the same object, Uniprot [P01375], might be written as “TNF alpha”, “TNFa”, or “TNF

orthographic;

abbreviations and acronyms;

inflectional variations;

morphological variations;
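To make the idea of rule-based variant generation concrete, here is a minimal sketch that covers only two of the categories listed above (orthographic variation and abbreviation) for two-part terms such as “TNF alpha”. The `GREEK` table and the `generate_variants` function are hypothetical and are not the TVG used in the study.

```python
# Hypothetical lookup of spelled-out Greek letters; deliberately incomplete.
GREEK = {"alpha": "α", "beta": "β", "gamma": "γ"}


def generate_variants(term):
    """Generate simple spelling variants of a two-part term.

    Rules applied (illustrative only):
      - abbreviation: a spelled-out Greek letter may be replaced by the
        Greek character or by its first Latin letter;
      - orthographic: the two parts may be joined by a space, a hyphen,
        or nothing.
    Terms that do not have exactly two parts are returned unchanged.
    """
    parts = term.split()
    if len(parts) != 2:
        return {term}
    head, tail = parts
    tails = {tail}
    if tail.lower() in GREEK:
        tails.add(GREEK[tail.lower()])  # alpha -> α
        tails.add(tail[0].lower())      # alpha -> a
    return {head + sep + t for t in tails for sep in (" ", "-", "")}


variants = generate_variants("TNF alpha")
print(sorted(variants))
```

Even these two rules turn one synonym into nine candidate strings, which illustrates how quickly the search space grows once inflectional and morphological rules are added.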

Table 1 shows the average number of original synonyms per term and how many variants were generated. We then searched for them in the papers. Concept detection increases 2.03 to 3 times when searching for all variants.

Table 2 shows how many objects have terms with identical spellings, i.e., ambiguous terms. Higher overlap within the same ontology than across other ontologies makes algorithmic recognition an even more challenging task, because algorithms have to distinguish objects within the same class.
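The kind of count reported for ambiguous terms can be sketched as a grouping of ontology entries by spelling. Everything in the example below is hypothetical: the ontology names and object IDs are placeholders, and treating spellings as identical regardless of letter case is an assumption of this sketch.

```python
from collections import defaultdict
from itertools import combinations

# Hypothetical (ontology, object id, term) records; ids are placeholders.
RECORDS = [
    ("OntologyA", "A:001", "CAT"),
    ("OntologyA", "A:002", "cat"),
    ("OntologyB", "B:101", "Cat"),
    ("OntologyB", "B:102", "insulin"),
]


def find_homographs(records):
    """Map each case-folded spelling to the objects that carry it,
    keeping only spellings shared by more than one object."""
    by_spelling = defaultdict(set)
    for ontology, object_id, term in records:
        by_spelling[term.lower()].add((ontology, object_id))
    return {s: objs for s, objs in by_spelling.items() if len(objs) > 1}


def overlap_counts(objects):
    """Count object pairs that collide within one ontology vs. across ontologies."""
    within = across = 0
    for (onto_a, _), (onto_b, _) in combinations(sorted(objects), 2):
        if onto_a == onto_b:
            within += 1
        else:
            across += 1
    return within, across


homographs = find_homographs(RECORDS)
within, across = overlap_counts(homographs["cat"])
print(homographs, within, across)
```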

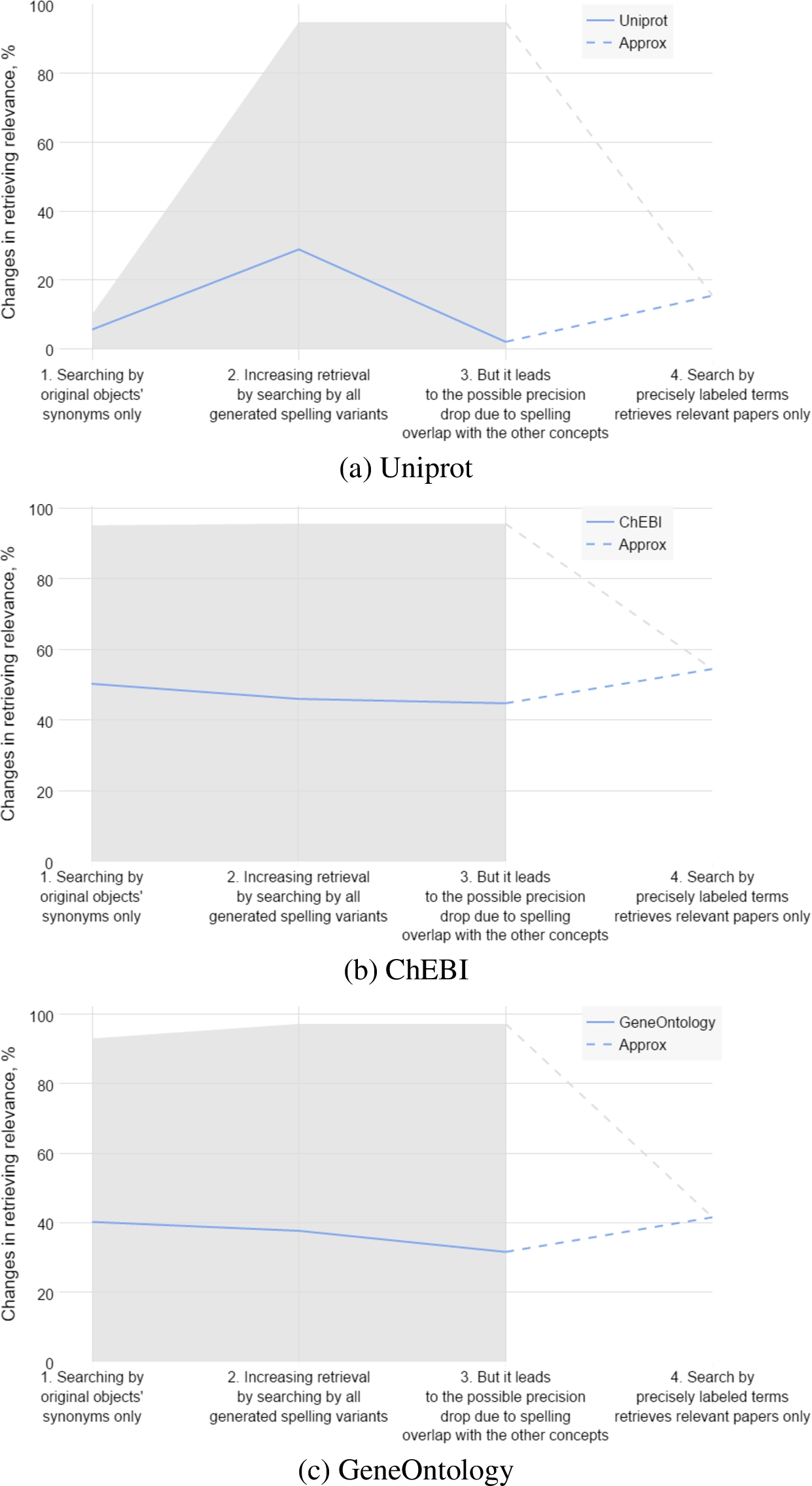

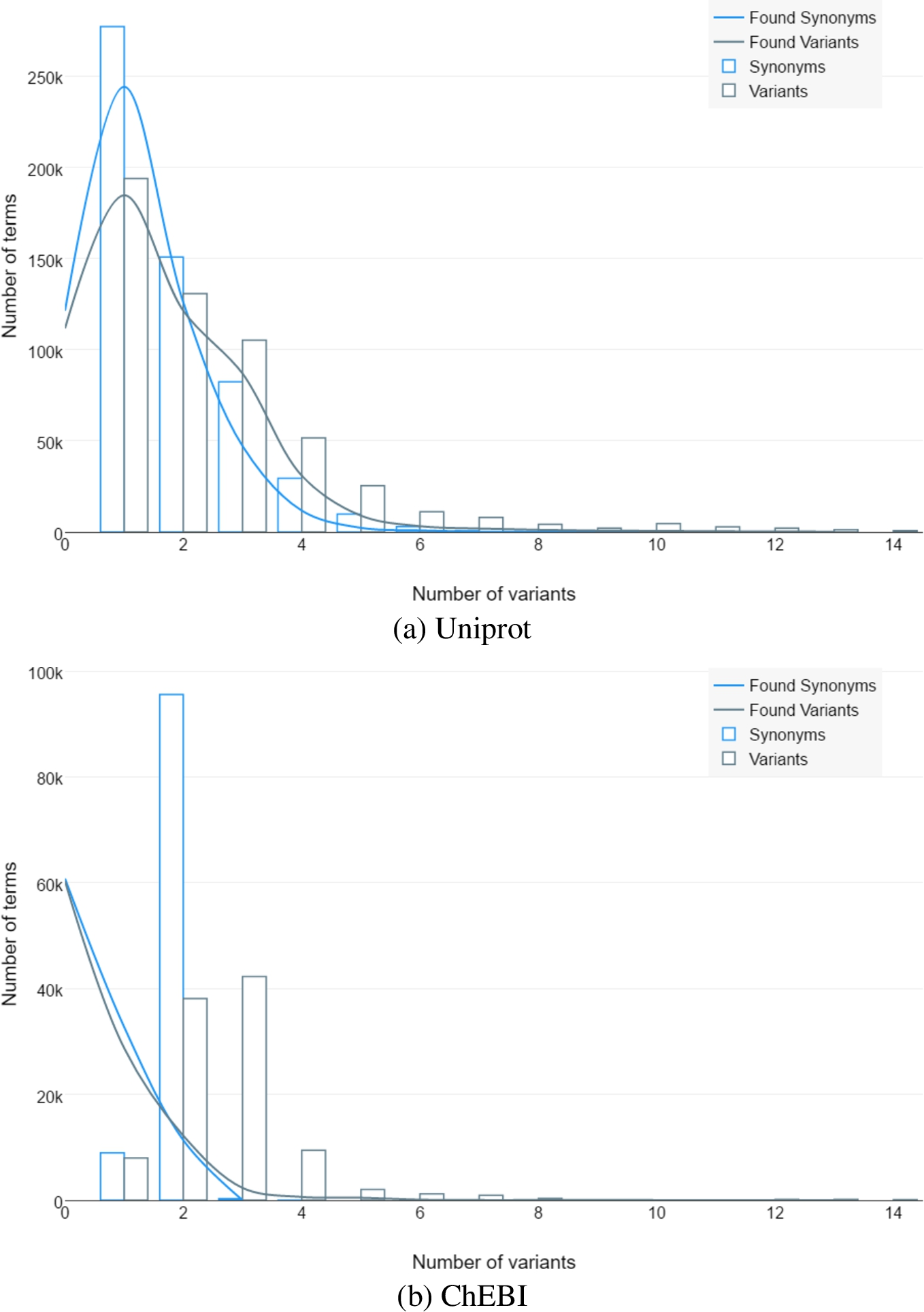

Figure 1 shows the overall influence of terminology variability and ambiguity on a paper’s discoverability.

Influence of the terminology variability and ambiguity on a paper retrieval when text contains proteins, chemical elements, genes, drugs, diseases.

When searching by original synonyms only, the average likelihood of finding papers is lower than when searching by all possible variants. Retrieving a larger number of papers comes at the cost of their relevance: increasing the number of variants lowers the probabilistic precision. Relevance can be guaranteed only if terms are labeled and the search is performed by exact ID instead of by string. As long as the current literature is not labeled, exact recall and precision cannot be calculated. We used relative changes instead to visualize the scale of the issue across most of the available literature.

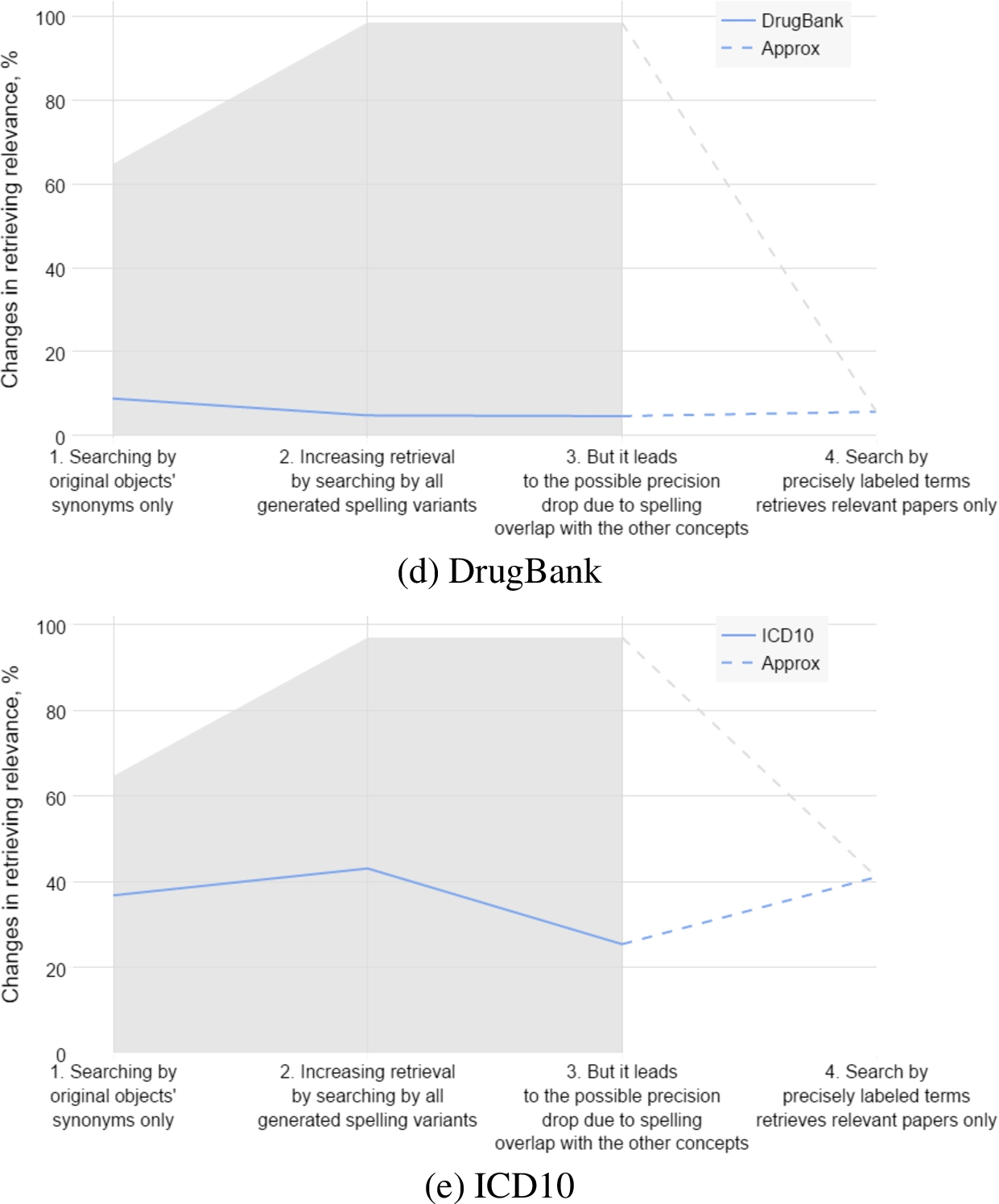

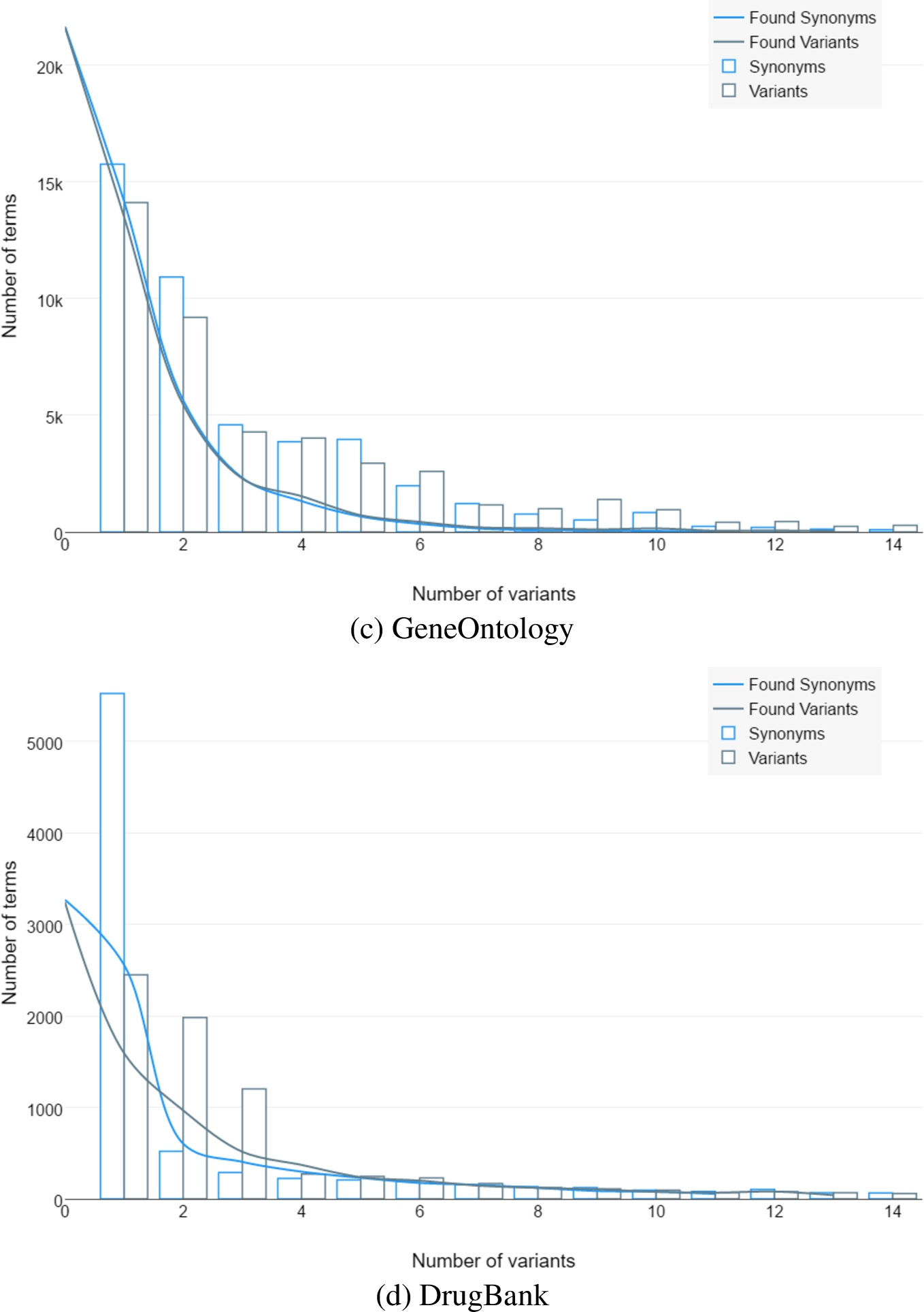

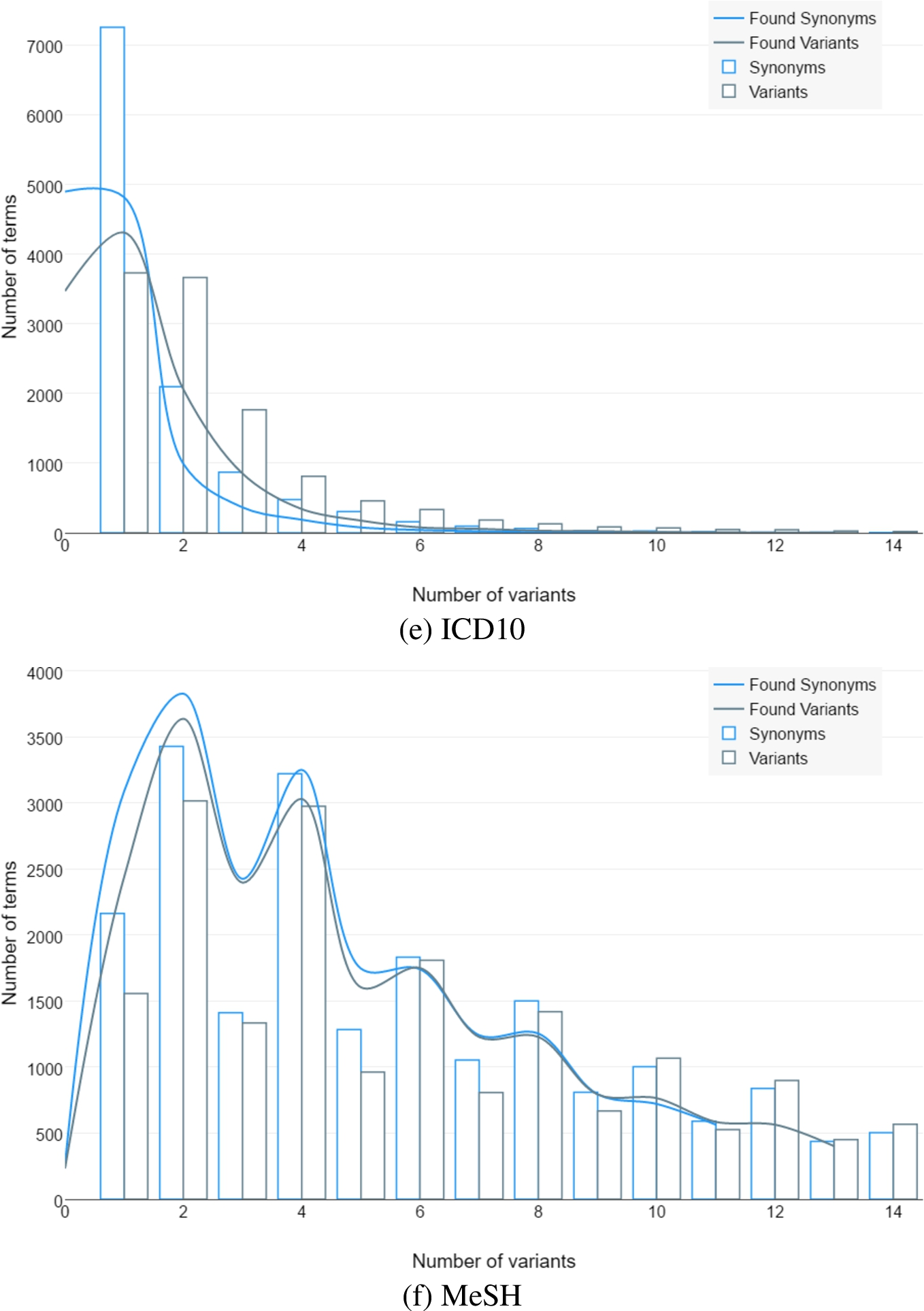

Figure 2 shows the distribution of the variability across ontologies.

Number of objects that have specific amounts of original synonyms, generated variants and variants found in the papers.

The figure compares the original synonyms in the ontologies, the generated variants, and how many of them were found in the papers. It shows that there is a significant chance of finding a spelling of a term that is never mentioned in the ontology, which reduces the recall of relevant papers.

We initially intended to use the MSH WSD Data Set [19] for ambiguity testing, but it turned out to contain generic words only. We therefore performed a wide generic search across all ontologies and variants to obtain low-level detail.

There is also a case of “artificial” ambiguity in ontologies. It is caused by the intersection of alternative names and term descriptions in an attempt to extend the set of variants and increase recall. A “carbon monoxide” example is provided in Table 3.

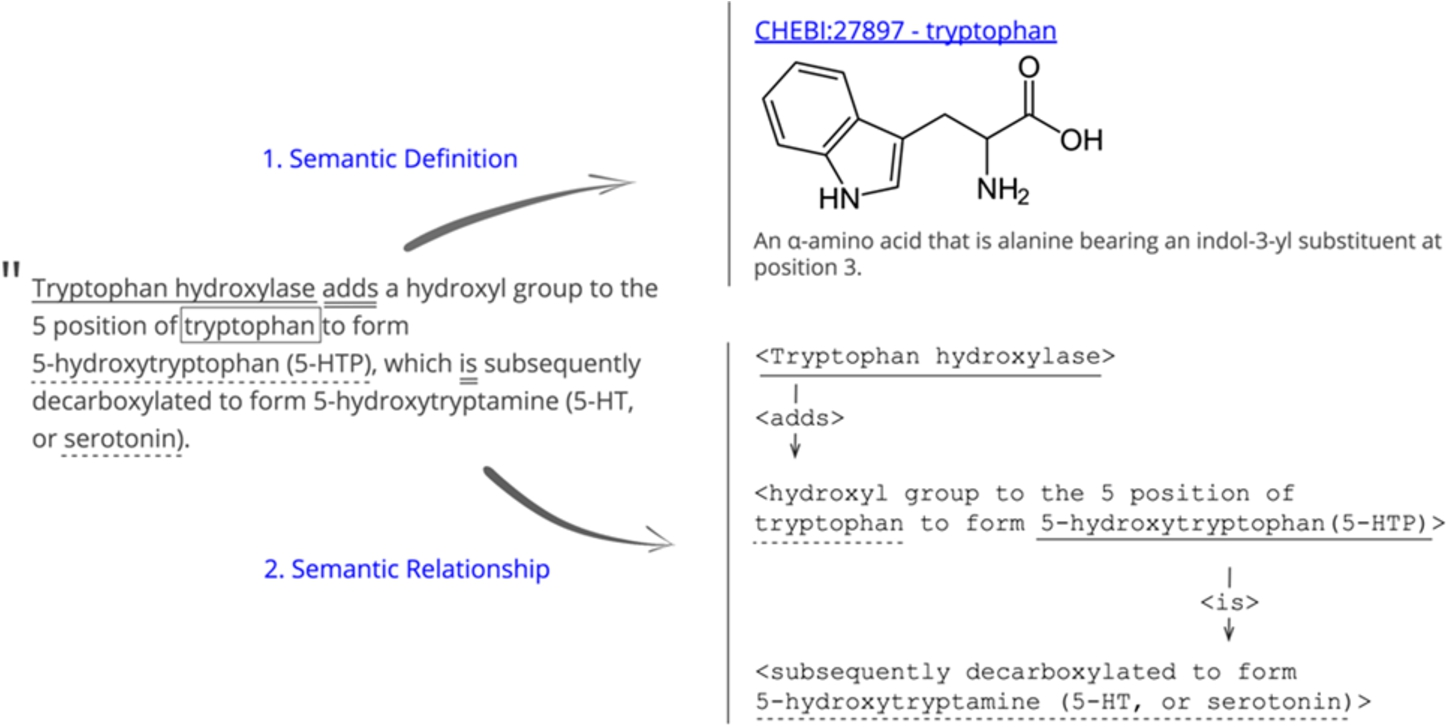

This makes human validation necessary when identifying whether entries refer to the same concept. sci.AI provides such functionality: a user can validate several IDs from various ontologies for the same term and identify relationships (Fig. 3).

The formalization and statistics above show that uncertainty is not an exception but a basic feature of biomedical text mining. This uncertainty might lead to significant deviations when academic papers are interpreted with unsupervised methods only. It might be acceptable for mining fiction, where the major task is context and sentiment analysis, which acts as a smoothing function. However, such uncertainty might contradict the goals of mining STEM research communication, where we are looking for anomalous or novel discoveries, exact object interactions, verification of facts, and relations between statements in various texts. This is why accepting uncertainty might have significant negative consequences for LBD.

In order to address this issue, we implemented the sci.AI platform, which provides supervised labeling functionality on top of a text mining framework. After initial automatic term recognition, whether its precision is 70% or 99%, users can make final verifications to raise recognition precision to 100%. From the perspective of search and text mining algorithms, this means removing any uncertainty, which, in turn, leads to exact extraction of papers in an SQL-like querying manner. Thus, assuming that the author always labels terms correctly, maximum precision and recall will be achieved.
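A small sketch of what SQL-like retrieval over labeled text could look like is given below, using Python’s built-in `sqlite3` module. The `labels` table, its columns, and the concept identifiers are hypothetical and chosen only for illustration; they do not describe sci.AI’s storage format.

```python
import sqlite3

# In-memory database with a hypothetical table of validated labels:
# one row per (paper, surface term, concept id).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE labels (paper TEXT, term TEXT, concept_id TEXT)")
conn.executemany(
    "INSERT INTO labels VALUES (?, ?, ?)",
    [
        ("paper-1", "cat", "ANIMAL:cat"),
        ("paper-2", "CAT", "GENE:cat"),
        ("paper-3", "Felis catus", "ANIMAL:cat"),
    ],
)


def papers_for_concept(connection, concept_id):
    """Return the papers labeled with exactly this concept id."""
    rows = connection.execute(
        "SELECT DISTINCT paper FROM labels WHERE concept_id = ? ORDER BY paper",
        (concept_id,),
    )
    return [row[0] for row in rows]


animal_papers = papers_for_concept(conn, "ANIMAL:cat")
print(animal_papers)
```

Because the query matches an identifier rather than a string, the paper about the gene is excluded and the paper that uses a different spelling is included, regardless of how the term was written.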

Human-made corrections will be used as training data for subsequent processing of texts. Such learning from human feedback provides a steady path to gradual improvement of text mining quality.

The current version of sci.AI allows users to upload a text and then performs the Named Entity Recognition (NER) task automatically. The author or annotator can validate the labeling results via the interface and export the final structured text to an XML file (Fig. 4).
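The export step can be pictured with a short sketch built on Python’s standard `xml.etree.ElementTree` module. The `sentence` and `term` element names and the `ids` attribute are hypothetical; they are neither JATS nor the actual sci.AI output schema. The Uniprot identifier is the one mentioned earlier for “TNF alpha”.

```python
import xml.etree.ElementTree as ET


def annotate(text, term, ontology_ids):
    """Wrap the first occurrence of `term` in a <term> element.

    The element carries a space-separated list of ontology ids, so one
    term can be linked to several objects at once.
    """
    start = text.find(term)
    if start < 0:
        raise ValueError(f"term {term!r} not found in text")
    sentence = ET.Element("sentence")
    sentence.text = text[:start]
    tag = ET.SubElement(sentence, "term", {"ids": " ".join(ontology_ids)})
    tag.text = term
    tag.tail = text[start + len(term):]
    return ET.tostring(sentence, encoding="unicode")


xml_out = annotate(
    "TNF alpha levels were elevated.",
    "TNF alpha",
    ["UniProt:P01375"],
)
print(xml_out)
```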

The development roadmap includes the release of the following features:

Machine-learning-based analysis to present the most likely variants first. This feature will be based on (a) logs of term validation events and (b) statistical co-occurrences of terms in all available texts. We expect that it will provide an approximately 90% recognition rate, as reported by NN researchers [1,20]. The introduction of NN prioritisation is expected to reduce the cases that require the author’s intervention to a reasonable minimum. Internal time tracking by our team members suggests that validating 10 pages takes approximately 30 min.

The system can be embedded into the publishing process directly. Both authors and editors can create semanticized versions during submission or even publish a new version of the digital paper. Key features of the current production version of the sci.AI publishing engine are as follows:

Automated metatagging of biomedical concepts (named-entity recognition and context-dependent semanticization of terms). Current tags contain links to the related objects in the ontology.

User-friendly web preprint for tags editing and recognition supervising.

Library for easy integration into the existing publishing process.

Graph based connections between objects in existing ontologies.

Generating JATS, RDF/XML and RDFa files.

Concepts interactions labeling, for example, protein-protein.

Detailed biomedical metadata generation.

sci.AI allows labeling a term with several term-2-ontologyId relationships. For example, ‘

We also expect a possible positive effect on future papers: authors might converge on a single spelling variant of a concept, for example only “ClNa” and not “salt” or “NaCl”.

As of the middle of 2017, sci.AI is a production-ready semanticization and metadata generation tool for academic publishing. The roadmap is for it to become a full-fledged artificial intelligence (AI) platform applied to the life sciences for hypothesis generation and machine reasoning. Wide adoption of this application will extend the publisher’s role even further into the delivery of research results to the intended target audience.

Example of the objects with the same spelling

This paper is the first in our series of studies on precise semantic labelling of life sciences texts. Our goal was to focus on the dependency of a paper’s influence on two fundamental factors: ambiguity and variability of terms. In order to avoid excessive complication, we made several assumptions which may bias the results. These simplifications will be addressed in follow-up studies:

Prior precision estimation is calculated with assumption that probability of retrieving a paper with concept

Labeling term with several objects. Paper semanticization in sci.AI.

Prior recall estimation is calculated with assumption that probability of retrieving a paper with concept

Simplified dependency of recall from ambiguity and precision from variability.

The categories of term variability and the implementation of the term variant generator deserve a full, comprehensive description in follow-up research.

We intended to show the fundamental specifics of biomedical language that make it challenging to achieve 100% recognition of terms with unsupervised methods only. Still, there are various NLP approaches, including disambiguation based on metaontologies like UMLS and statistical methods, that significantly improve term recognition. Those methods are being integrated by the sci.AI development team, and the performance of each of them will be evaluated in a separate paper.

We assumed that there is the same number of concepts and objects within a single ontology.

The “human factor” was removed from consideration by assuming that the author can always correctly label every biomedical concept in their own manuscript. By “precise labeling” we mean “labeling verified by the actual text’s author”.

There are several studies in which researchers propose models of a paper’s future success, for example [9]. Future analysis might take ambiguity and variability into consideration as variables in the prediction models.

Part-of-speech tagging might improve the precision of variant validation. This functionality exists in sci.AI but was not applied when calculating the statistics.

We assume that all possible spelling variants were generated. Further validation is required.

Footnotes

Examples of the objects’ synonyms and generated variants

Variants that were found in actual papers are marked with * and the DOI of one of the retrieved papers.