Abstract

INTRODUCTION:

Ultrasound, in conjunction with mammography, plays a vital role in the early detection and diagnosis of breast cancer. However, speckle noise affects medical ultrasound images and degrades visual radiological interpretation. Speckle carries information about the interaction of the ultrasound pulse with the tissue microstructure, but it generally makes it difficult to distinguish malignant from benign regions. The application of deep learning to image denoising has gained increasing attention in recent years.

OBJECTIVES:

The main objective of this work is to reduce speckle noise while preserving features and details in breast ultrasound images using GAN models.

METHODS:

We propose two GAN models (Conditional GAN and Wasserstein GAN) for despeckling images from three public breast ultrasound databases: BUSI, Dataset A, and UDIAT (Dataset B). The Conditional GAN model was trained using the U-Net architecture, and the WGAN model was trained using the ResNet architecture. Image quality for both models was measured by Peak Signal-to-Noise Ratio (PSNR) and Structural Similarity Index (SSIM), with standard values of 35–40 dB and 0.90–0.95, respectively.

RESULTS:

The experimental analysis clearly shows that the Conditional GAN model achieves better breast ultrasound despeckling performance over the datasets in terms of PSNR

CONCLUSIONS:

The observed performance differences between CGAN and WGAN will help to better implement new tasks in a computer-aided detection/diagnosis (CAD) system. In future work, these data can be used as CAD input training for image classification, reducing overfitting and improving the performance and accuracy of deep convolutional algorithms.

Introduction

Medical image analysis plays an important role in breast cancer screening and in the feature extraction, segmentation, and classification of breast lesions. There are several breast cancer detection methods, such as Positron Emission Tomography (PET) [1], Computed Tomography (CT) [2] and Magnetic Resonance Imaging (MRI) [3], which are usually used when women are at high risk of breast cancer. Other techniques, such as X-ray mammography [4] and ultrasound (US) [5], are more commonly used in screening programs, according to the American Cancer Society.

Among these modalities, US is used as a complementary imaging modality for further evaluation of lesions first detected by mammography, owing to its non-invasive nature, low cost, safety, portability, and lack of ionizing radiation. However, one of its main shortcomings is the poor quality of US images, which are corrupted by random noise added during acquisition [6, 7]; the resulting low contrast and varying brightness levels, together with noise and artifacts, can affect the radiologist’s opinion and diagnosis. US images have a granular appearance called speckle noise, which degrades visual assessment [8], making it difficult for humans to distinguish normal from pathological tissue in diagnostic examinations.

Image denoising techniques, typically low-dose, address this problem [9]. The primary purpose of denoising is to recover as much image detail as possible by removing excess noise [10], while preserving the features needed for the diagnosis and classification of benign, premalignant, and malignant abnormalities (microcalcifications, masses, nodules, tumors, cysts, fibroadenoma, adenosis, and lesions) that may be difficult to identify at first sight or at an early stage.

Thus, denoising medical images is essential before training a classifier based on deep-learning models. Recently, several US denoising techniques based on deep learning have been widely used, such as Convolutional Neural Networks (CNN) [11, 12, 13, 14], Generative Adversarial Networks (GANs) [15, 16, 17], and Autoencoders (AEs) [18, 19], which can recover a noise-free version of the original data with better robustness and precision [20]. Deep learning methods have obtained better results in medical imaging than previous methods such as Wavelet, Wiener, and Gaussian filters [21], Multi-Layer Perceptron [22], Dictionary Learning [23], Least Squares, Bilateral Filter, Non-Local Means [24], and variational approaches [6, 25], because these filters have limitations such as over-smoothing, higher computational cost, and an inability to fully preserve information such as image edges and textures [25].

Related work

Many denoising techniques have been proposed in the literature to reduce speckle noise [26, 27, 28, 29], which can be categorized into three main types: (1) spatial domain (Median filter, Mean filter, Adaptive Mean Filter, Frost, Total variation filter, Anisotropic Diffusion, Nonlocal means filter, Linear Minimum Mean Squared Error (LMMSE)); (2) transform domain (Wiener filter, Low pass filter, Discrete wavelet transform); and (3) deep learning-based techniques such as Convolutional Neural Networks (CNN), Generative Adversarial Networks (GAN), and Variational Autoencoders (VAEs).

The Spatial and Transform domain methods are computationally simple and fast but sometimes blur the image, and there can be a loss of resolution and low accuracy. Spatial domain filters also have size limitations and window shape problems [28].

However, deep learning-based models can provide better results than these traditional methods, because deep models give better visual quality by extracting various features of an image. As an example, Li et al. proposed TP-Net [30] for 3D shape classification and segmentation tasks on a wide range of common datasets; its main contribution is a dilated convolution strategy tailored to the irregular and non-uniform structure of 3D mesh data.

Several Generative models (GANs, VAEs) have been successfully used for medical image denoising and data augmentation to improve robustness and prevent overfitting in deep CNN image classification algorithms. Some relevant works are discussed in this section.

Wu et al. [31] implemented a perceptual metrics-guided GAN (PIGGAN) framework to intrinsically optimize generation processing; their experiments show that PIGGAN can produce photo-realistic results and quantitatively outperforms state-of-the-art (SOTA) methods. Pang et al. [32] implemented the TripleGAN model to augment breast US images. These synthetic images were used to classify breast masses with a CNN model, achieving a classification accuracy of 90.41%, a sensitivity of 87.94% and a specificity of 85.86%. Al-Dhabyani et al. [33] first applied GAN-based data augmentation to breast US images and then two deep learning classification approaches: (i) a CNN (AlexNet) and (ii) transfer learning (TL; VGG16, ResNet, Inception, and NASNet), achieving accuracies of 73%, 84%, 82%, 89% and 91% on the BUSI dataset and 75%, 80%, 77%, 86% and 90% on Dataset B (UDIAT), respectively.

Jain et al. [34] found that CNNs provided comparable and, in some cases, superior performance to Wavelet and Markov Random Field methods. Thus, the ResNet approach proposed by MRDG et al. [11] was used to improve mammography image quality, with a peak signal-to-noise ratio (PSNR) of 36.18 and a structural similarity index (SSIM) of 0.841. Feng et al. [13] implemented a hybrid neural network for US denoising based on the Gaussian noise distribution and the VGGNet model to extract the structural boundary information; the results show a (PSNR

Denoising autoencoders based on convolutional layers also perform well owing to their ability to extract strong spatial correlations [35]. Kaji et al. [9] present an overview describing encoder-decoder networks (pix2pix) and CycleGAN for image noise reduction.

Chen et al. [12] proposed an autoencoder and a residual encoder–decoder CNN for low-dose computed tomography (CT) imaging, achieving good performance (PSNR of 39.19, SSIM of 0.93 and Root Mean Square Deviation (RMSD) of 0.0097) compared with other methods in terms of noise suppression, structure preservation, and lesion detection.

However, GANs are considered more stable than autoencoders. GANs are typically used for images or visual data and work better for image processing tasks such as anomaly detection; in contrast, VAEs are used for predictive maintenance or security analysis applications [35]. For this reason, several GANs have recently been used for data augmentation [36, 37, 38, 39, 40], image super-resolution [21], image translation [9], and noise reduction in the medical field [41, 42].

Zhou et al. [37] proposed a GAN

The most recent deep GAN models used for image denoising are the Conditional GAN [43] and the Wasserstein GAN [44], which have shown better performance than conventional denoising algorithms [45, 46]. Kim et al. [43] implemented a CGAN network as a medical image denoising algorithm, improving the SSIM metric by factors of 1.5 and 2.5 over conventional methods (Nonlocal Means and Total Variation, respectively) and demonstrating superiority in quantitative evaluation. Vimala et al. [47] proposed a noise removal method for breast US images based on a Hybrid Deep Learning Technique, in which local speckle noise was removed, reaching signal-to-noise ratios (SNRs) greater than 65 dB, PSNR values greater than 70 dB, and edge preservation index values greater than the experimental threshold of 0.48. Zou et al. [37] proposed a network model based on the Wasserstein GAN for image denoising, which improved the noise removal effect.

Based on the work discussed above, our proposal integrates concepts from breast cancer research and ultrasound image denoising in a comparative study to evaluate the effect of image pre-processing on breast image quality. Improving image quality clarifies patterns, allowing the deep learning model to identify and classify features within the image more accurately. In this study, we explore a novel approach by combining fine-tuning techniques GANs

Denoising of medical images has been used to improve the performance of CNN segmentation and classification algorithms [48, 50]. Several CNN methods for general image denoising have been studied, such as ADNet, NERNet, SAnet, CDNet, and DRCNN [51], but in this research, as a technical novelty, we combine Conditional GAN

Consequently, this study aims: (i) to implement two types of GANs

Materials and methods

Databases collection

Three publicly available breast US databases were used in this study: (i) The Breast Ultrasound Images Dataset (BUSI,

A total of 1060 US images were used to train the GAN models; see Table 1.

Breast ultrasound public databases

Workflow of GANs

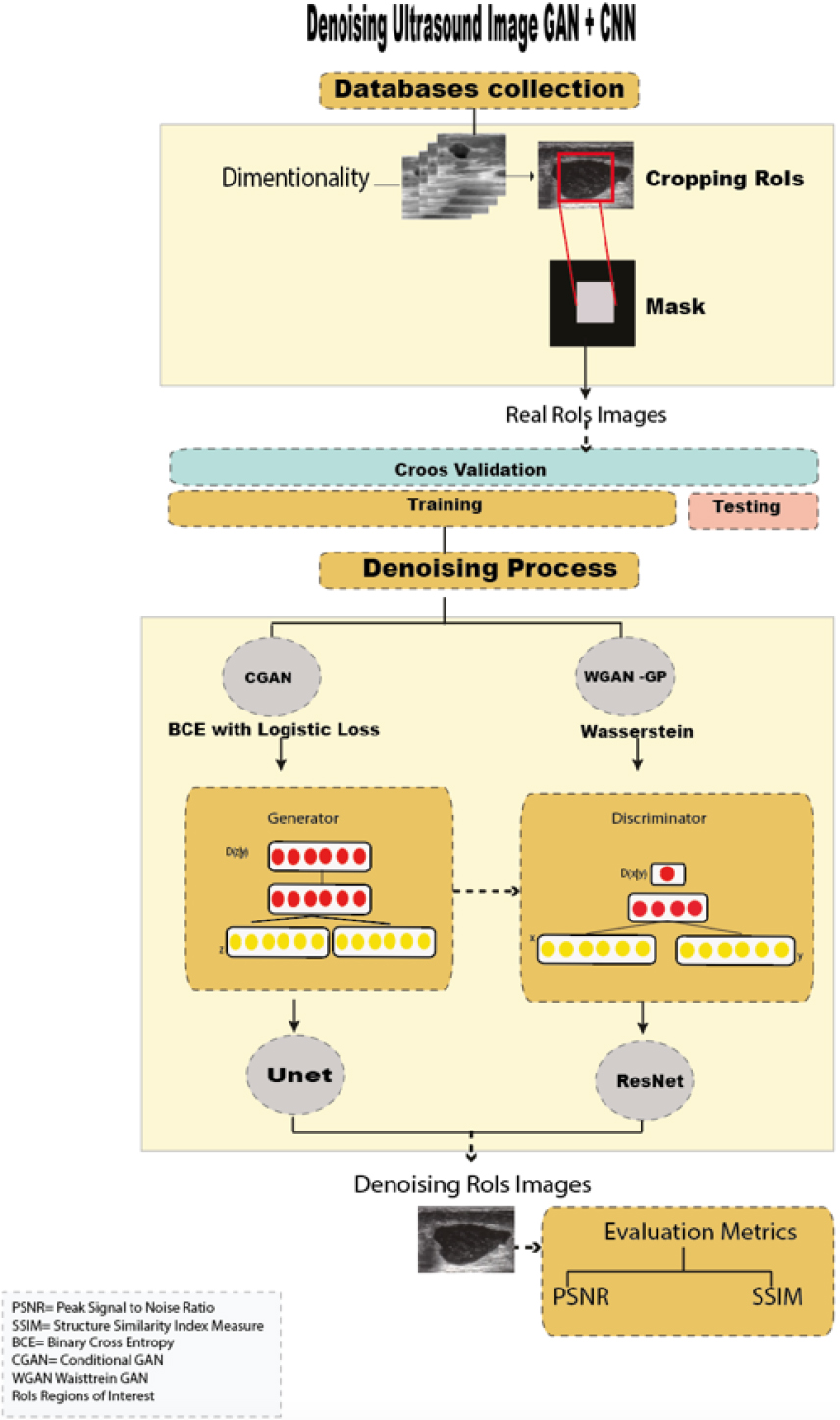

Figure 1 shows the workflow used in denoising breast ultrasound images, which is divided into the following steps: i) Acquisition of public ultrasound databases, ii) Dimensionality and cropping of regions of interest (RoIs), iii) Image denoising using two GANs

The torchvision (PyTorch) library was used to perform transformations (preserving all features and structure of the images) and to standardize the images to a single dimension (256

According to Wu et al. [36], synthesizing a lesion into RoIs (regions of interest) gives advantages to the generative model, as it generates more realistic lesions, improving subsequent classification performance over traditional augmentation techniques. Thus, automatic RoI extraction was performed on all US images.

Then, using a cross-validation technique, the dataset was randomly divided (with the scikit-learn library) into a training set (80%, 851 images) and a testing set (20%, 209 images) for training the GAN models (with the TensorFlow and Keras libraries).
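The random partition described above can be illustrated with a minimal sketch. It uses only the Python standard library rather than the scikit-learn routine used in the study, and the function name `holdout_split`, the seed, and the file names are hypothetical; only the image counts (1060, 851, 209) come from the text.

```python
import random


def holdout_split(items, n_test, seed=0):
    """Shuffle a copy of `items` and return (train, test) with `n_test` test items."""
    if not 0 < n_test < len(items):
        raise ValueError("n_test must be between 1 and len(items) - 1")
    shuffled = list(items)
    # A dedicated Random instance keeps the split reproducible for a given seed.
    random.Random(seed).shuffle(shuffled)
    return shuffled[n_test:], shuffled[:n_test]


# Hypothetical file names standing in for the 1060 RoI images.
image_ids = [f"roi_{i:04d}.png" for i in range(1060)]
train_ids, test_ids = holdout_split(image_ids, n_test=209, seed=42)
```

Passing the test-set size explicitly reproduces the reported 851/209 partition; a fraction-based split would round the counts differently.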

Generative adversarial network

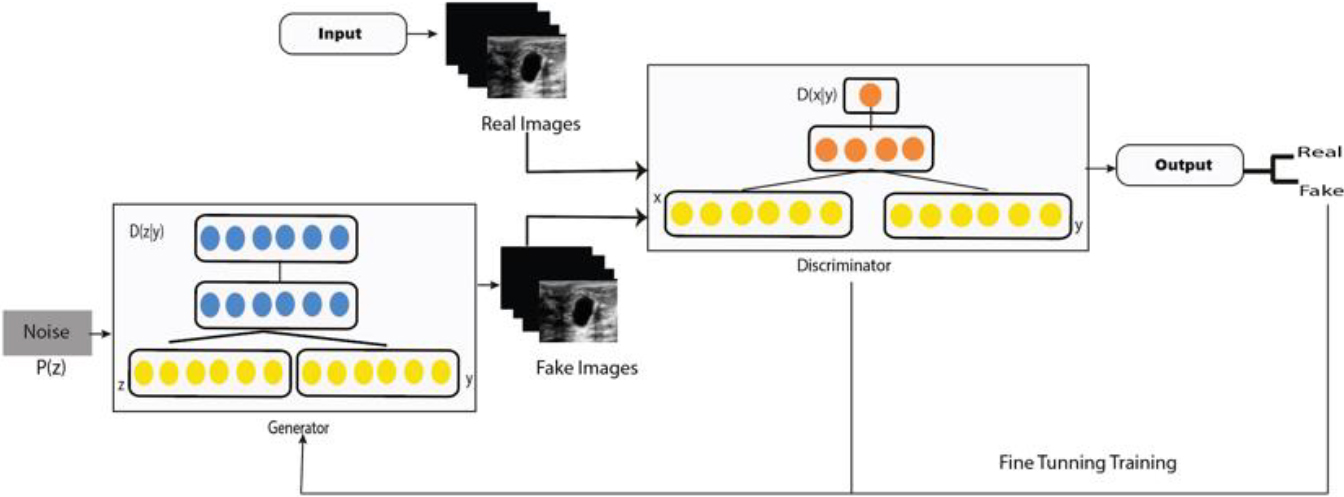

The GAN architecture is represented by a generative (

Given random noise vector

In this work, we used two GANs for ultrasound denoising: (i) Conditional GAN and (ii) WGAN, both of which have been widely used in medical image reconstruction, denoising and data augmentation [56]. In particular, the CGAN model has been proposed as a new framework that can largely mitigate bias and discrimination in machine learning systems while enhancing their prediction accuracy [57].

CGAN was introduced by Douzas et al. [58], as an extension of GAN with conditional information in

In this work, the generator and discriminator architectures were adapted from [60, 61]. A manual exploration of different configurations in the general hyperparameters was performed to optimize the denoising of breast US images, before selecting and implementing our CGAN model. The selected hyperparameters are: Number of epochs

CGAN model.

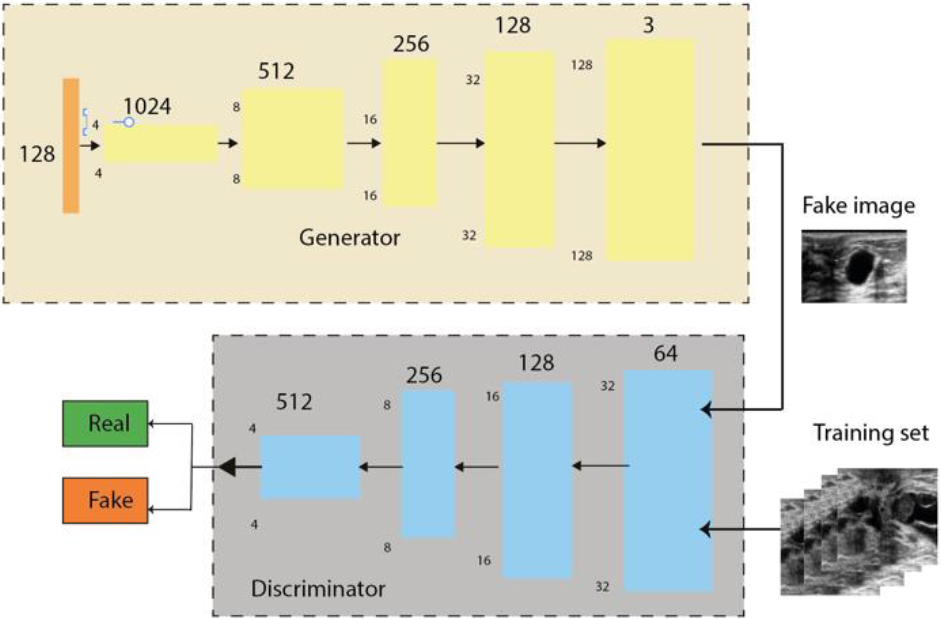

The denoiser discriminator network is based on a Markovian random field (PatchGAN). This consists of an input convolutional layer and 24 convolutional layers followed by batch normalization and a ReLU function (Fig. 2). The output consists of successive convolutional layers 256, 128, 64 and 1. This means that as the input image passes through each of the convolution blocks, the spatial dimension is reduced by a factor of two.
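The halving of the spatial dimension at each convolution block can be checked with the usual convolution output-size formula. The sketch below is illustrative only: the kernel size, stride and padding (4, 2, 1) are assumptions borrowed from common PatchGAN implementations, not values confirmed by this study, and the 256-pixel input side and the helper names are likewise assumed.

```python
def conv_out_size(size, kernel=4, stride=2, padding=1):
    """Output side length of a square convolution: floor((n + 2p - k) / s) + 1."""
    return (size + 2 * padding - kernel) // stride + 1


def downsample_trace(size, n_blocks, **conv_kwargs):
    """Side length after each of `n_blocks` successive convolution blocks."""
    sizes = [size]
    for _ in range(n_blocks):
        sizes.append(conv_out_size(sizes[-1], **conv_kwargs))
    return sizes


# Assumed 256-pixel input side; each stride-2 block halves it.
trace = downsample_trace(256, n_blocks=3)
```

Under these assumptions the side length goes from 256 to 128, 64 and 32 over three blocks.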

WGAN, introduced by Arjovsky et al. [62], uses the Wasserstein distance instead of the JS (Jensen-Shannon) or KL (Kullback-Leibler) divergence to evaluate the discrepancy between the distributions of noisy and denoised images. It provides a better approximation of the distribution of the observed training data.
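For reference, the Wasserstein-1 (Earth-Mover) distance is commonly written in the two equivalent forms below. This is the textbook definition given as a reading aid; the symbols $P_r$ (reference distribution), $P_g$ (generated distribution), $\gamma$ and $f$ are assumptions of this sketch and may not match the notation of the authors' own equation.

```latex
% Primal form: infimum over all joint distributions with marginals P_r and P_g
W(P_r, P_g) = \inf_{\gamma \in \Pi(P_r, P_g)} \mathbb{E}_{(x, y) \sim \gamma}\left[ \lVert x - y \rVert \right]

% Kantorovich-Rubinstein dual form: supremum over 1-Lipschitz functions f
W(P_r, P_g) = \sup_{\lVert f \rVert_L \le 1} \mathbb{E}_{x \sim P_r}\left[ f(x) \right] - \mathbb{E}_{x \sim P_g}\left[ f(x) \right]
```

In practice the critic network plays the role of $f$ in the dual form, which is why it must be constrained to be approximately 1-Lipschitz.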

The Wasserstein (W) model is defined as Eq. (3):

Where

The denoising generator is based on the ResNet model [63]. The generator contains 54 layers: the input layer, 8 sequential blocks of 3 layers each (convolutional layer, normalisation layer and LeakyReLU layer), 7 residual sequences of 4 layers each (transposed convolutional layer, normalisation layer, dropout layer and LeakyReLU layer) and a final transposed convolutional layer (Fig. 3, Appendix S.3 and S.4).
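The stated total of 54 layers follows directly from the block counts given above, as this short arithmetic check shows (the dictionary keys are descriptive labels chosen for this sketch):

```python
# Layer counts as described in the text for the WGAN generator.
generator_layers = {
    "input layer": 1,
    "sequential blocks (8 x 3 layers)": 8 * 3,
    "residual sequences (7 x 4 layers)": 7 * 4,
    "final transposed convolution": 1,
}
total_layers = sum(generator_layers.values())
```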

The denoising discriminator uses the PatchGAN model combined with the ResNet architecture (convolutional layer, normalization layer and LeakyReLU layer), where the layers were connected directly in a single sequence instead of linking several sequences.

The training phase was carried out in the Google Colab GPU PRO environment, using the TensorFlow and Sklearn libraries for image pre-processing, and PyTorch (CUDA 10.2 graphics cores) to obtain more computational resources and minimise the algorithm execution time. The TensorFlow and Keras libraries were used to train the GAN models.

In addition, most filter techniques use various evaluation metrics such as Mean Square Error (MSE), Root-Mean-Square Error (RMSE), Signal-to-Noise Ratio (SNR), Peak Signal-to-Noise Ratio (PSNR) and Structural Similarity Index (SSIM) to assess image quality.

For quantitative comparison, PSNR and SSIM [64, 65] were used to measure image restoration quality; both are widely used in biomedical applications, especially in mammography, US diagnosis and cancer detection.

The PSNR is the metric used to measure the quality of the denoising image when it is corrupted due to noise and blur. A higher value of PSNR indicates a higher quality rate. The standard value of PSNR is 35 to 40 dB (Table 2). The PSNR is calculated by Eq. (4), where is the variance of noise evaluated over the RoI image and is the variance of the filtered image.
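As an illustration, PSNR can be computed with the widely used definition $10 \log_{10}(\mathrm{MAX}^2 / \mathrm{MSE})$. This is a minimal sketch of the standard formula, which may differ in form from the variance-based expression of Eq. (4); the function names, the 8-bit peak value of 255 and the toy images are assumptions.

```python
import math


def mse(reference, test):
    """Mean squared error between two equally sized 2D images (lists of rows)."""
    if len(reference) != len(test):
        raise ValueError("images must have the same number of rows")
    total, count = 0.0, 0
    for ref_row, test_row in zip(reference, test):
        if len(ref_row) != len(test_row):
            raise ValueError("rows must have the same length")
        for r, t in zip(ref_row, test_row):
            total += (r - t) ** 2
            count += 1
    if count == 0:
        raise ValueError("images must not be empty")
    return total / count


def psnr(reference, test, max_value=255.0):
    """Peak signal-to-noise ratio in dB; infinite for identical images."""
    err = mse(reference, test)
    if err == 0:
        return math.inf
    return 10.0 * math.log10(max_value ** 2 / err)


# Toy 2x2 images: every pixel differs by 10 grey levels, so MSE = 100.
reference = [[100, 100], [100, 100]]
denoised = [[110, 110], [110, 110]]
score = psnr(reference, denoised)
```

For real images, an established implementation from an image-processing library would normally be preferred over this hand-written version.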

SSIM is a perception-based model that considers the image degradation as perceived change in contrast and structural information. Thus, we can apply this value to assess the quality of any images [66], which lies from 0 to 1 (Table 2).
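The structure of the SSIM index can be illustrated with a simplified, single-window version. Note the assumptions: the standard SSIM averages this quantity over local sliding windows, whereas the sketch below evaluates it once over the whole image; the constants `k1 = 0.01` and `k2 = 0.03` are the customary defaults, and the function name `global_ssim` is hypothetical.

```python
def _flatten(image):
    """Flatten a 2D image (list of rows) into a list of floats."""
    return [float(p) for row in image for p in row]


def global_ssim(x, y, max_value=255.0, k1=0.01, k2=0.03):
    """Single-window SSIM computed over the whole image (a simplification)."""
    a, b = _flatten(x), _flatten(y)
    if not a or len(a) != len(b):
        raise ValueError("images must be non-empty and of equal size")
    n = len(a)
    mu_a, mu_b = sum(a) / n, sum(b) / n
    var_a = sum((p - mu_a) ** 2 for p in a) / n
    var_b = sum((q - mu_b) ** 2 for q in b) / n
    cov = sum((p - mu_a) * (q - mu_b) for p, q in zip(a, b)) / n
    # Stabilising constants that avoid division by values close to zero.
    c1, c2 = (k1 * max_value) ** 2, (k2 * max_value) ** 2
    numerator = (2 * mu_a * mu_b + c1) * (2 * cov + c2)
    denominator = (mu_a ** 2 + mu_b ** 2 + c1) * (var_a + var_b + c2)
    return numerator / denominator


# Toy images: an image compared with itself and with its intensity inverse.
image = [[10, 200], [60, 120]]
inverted = [[255 - p for p in row] for row in image]
same_score = global_ssim(image, image)
inverted_score = global_ssim(image, inverted)
```

An image compared with itself scores 1, while structurally dissimilar images score lower.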

PSNR and SSIM range values

WGAN model. Adapted from Hao, Zhuangzhuang et al. (2022).

SSIM index is computed using the correlation coefficient, see Eq. (5).

Where,

Summary of the CGAN and WGAN average comparison results (PSNR and SSIM)

This section presents the most relevant numerical experiments obtained with the speckle-removal GAN algorithms. First, to improve algorithm performance, the RoI images were used to train the GAN models; in total, we denoised 1060 malignant and benign RoIs. The image quality of the generated data was evaluated with the PSNR and SSIM metrics, expressed as average values. The most relevant scores are displayed in Table 3; these indicate that the Conditional GAN model showed a significant improvement over the WGAN model.

Visual comparison between original ultrasound RoI images and denoising images generated by Conditional GAN and WGAN

Although they are visually very similar according to Table 4, the quality values obtained define that the CGAN network achieves a higher mean value in PSNR

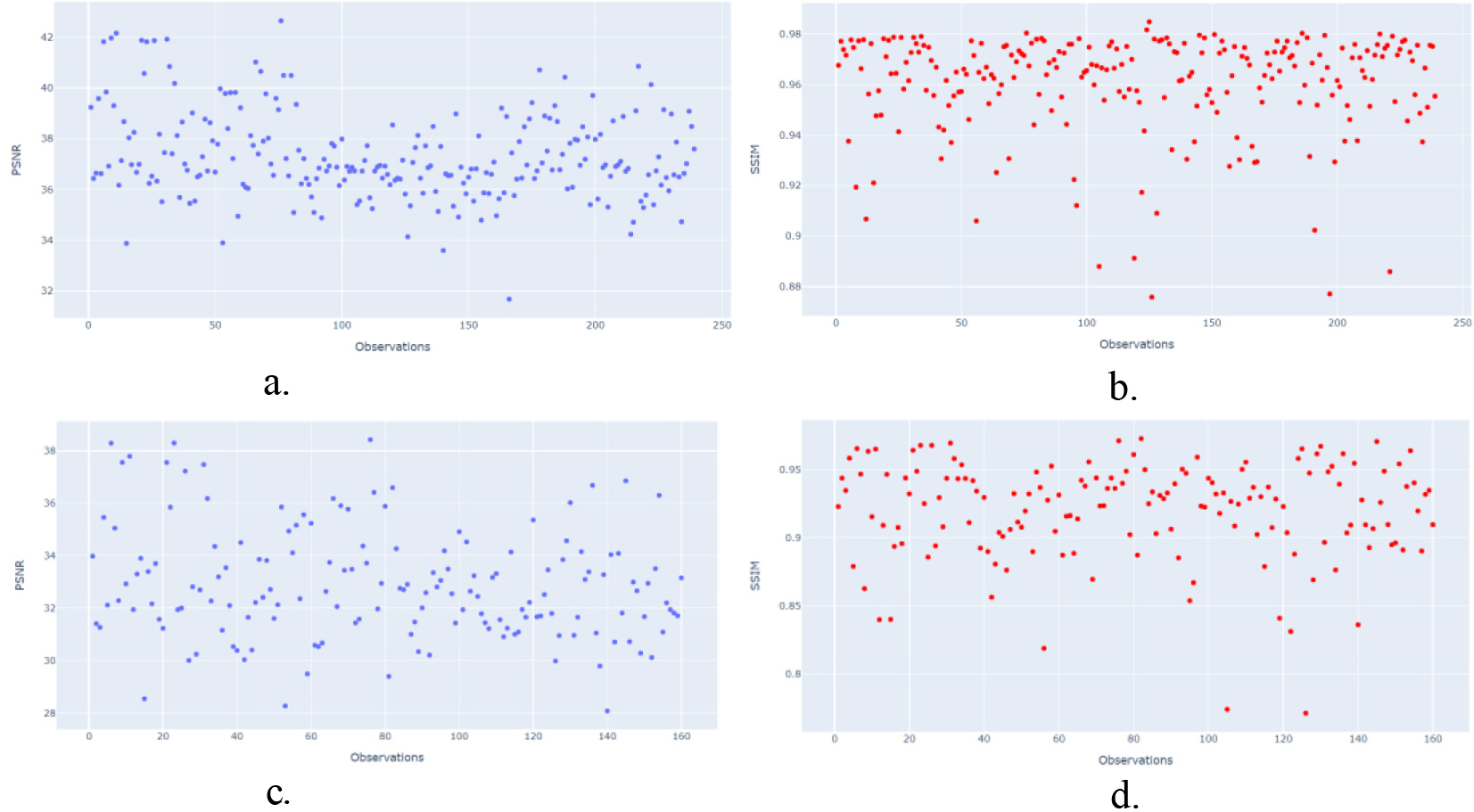

To confirm these results, the test dataset (209 US images) was used to evaluate the data dispersion of the CGAN and WGAN algorithms using the PSNR and SSIM metrics.

Dispersion report for PSNR/SSIM metrics. a). CGAN network with PSNR metric. b). CGAN network with SSIM metric. c). WGAN network with PSNR metric. d). WGAN network with SSIM metric.

Figure 4a–4d show the statistical results obtained using R software, where a and b show the dispersion data obtained by CGAN. The blue points represent the PSNR metric, which ranges from 30 to 40 dB, and the red points represent the SSIM metric, which ranges from 0 to 1.

Figures 4a and 4b indicate better image quality for the CGAN network, that is, better luminance, contrast and structural information in the reconstructed images (PSNR 36–42 dB, SSIM 0.85 to 0.98) than for the WGAN network (PSNR 36–48 dB, SSIM 0.85 to 0.95; Figs. 4c and 4d).
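A dispersion summary of this kind can be sketched in a few lines. The study used R for this analysis; the Python sketch below is only an illustration, and the PSNR values in it are made up for demonstration and are not results from this work.

```python
import statistics


def summarize(scores):
    """Mean, sample standard deviation, minimum and maximum of per-image scores."""
    return {
        "mean": statistics.fmean(scores),
        "stdev": statistics.stdev(scores),
        "min": min(scores),
        "max": max(scores),
    }


# Made-up per-image PSNR values (dB), for illustration only.
example_psnr = [36.0, 38.5, 40.0, 41.5, 42.0]
summary = summarize(example_psnr)
```

Applying the same function to the per-image SSIM scores of each network would give the corresponding dispersion figures.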

Ultrasound is a complementary technique to mammography and is used for breast cancer detection due to its sensitivity. However, speckle noise in US is an interference pattern that causes low contrast resolution [33], which makes it difficult for specialists to identify abnormalities in the breast. In this paper, we trained a pair of GANs combined with CNN architectures for US image denoising, and then evaluated the quality of the denoised images using PSNR and SSIM metrics.

The denoised images produced by the Conditional GAN achieved a higher average PSNR (41.03 dB) and SSIM (0.97) than those produced by the WGAN (average PSNR 35.47 dB, SSIM 0.93). Thus, according to the values given in Table 4, the CGAN yields higher image quality [63] and denoises ultrasound images more successfully than the WGAN. This can be attributed to the fact that the CGAN uses the U-Net architecture as the generator model and Binary Cross Entropy (BCE) as the loss function (in addition to the L1 loss) [67, 68] to generate realistic images and provide greater robustness to the model. The U-Net is an encoder-decoder network that reconstructs the despeckled image by extracting features from the noisy image, effectively enhancing image features and suppressing some speckle noise during the encoding phase [69].
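The combination of an adversarial BCE term with an L1 reconstruction term can be sketched as follows. This is a framework-free illustration, not the authors' training code: the function names are hypothetical, images are represented as flat lists of pixel values, and the weight `l1_weight = 100.0` is the value used in the original pix2pix formulation, assumed here because the study's own weighting is not stated.

```python
import math


def bce(predictions, targets, eps=1e-7):
    """Mean binary cross entropy between probabilities and 0/1 targets."""
    total = 0.0
    for p, t in zip(predictions, targets):
        p = min(max(p, eps), 1.0 - eps)  # clamp to avoid log(0)
        total += -(t * math.log(p) + (1.0 - t) * math.log(1.0 - p))
    return total / len(predictions)


def l1(generated, target):
    """Mean absolute error between generated and target pixel values."""
    return sum(abs(g - t) for g, t in zip(generated, target)) / len(generated)


def generator_loss(disc_on_fake, generated, target, l1_weight=100.0):
    """Adversarial BCE (generator wants the discriminator to output 1) plus weighted L1."""
    adversarial = bce(disc_on_fake, [1.0] * len(disc_on_fake))
    return adversarial + l1_weight * l1(generated, target)


# Toy values: discriminator outputs on two patches and two-pixel images.
loss = generator_loss([0.5, 0.5], generated=[0.2, 0.4], target=[0.0, 0.4])
```

The L1 term pulls the output towards the reference image pixel by pixel, while the adversarial term encourages outputs that the discriminator accepts as real.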

In contrast, WGAN uses the Wasserstein distance as the loss function and the ResNet architecture as the generator model, with weight clipping to enforce a 1-Lipschitz constraint. Although this network sometimes avoids the mode collapse problem, resulting in more stable training and less sensitivity to hyperparameter settings (because it is trained on an image distribution loss rather than an image pixel loss) [69], in this work the results generated by WGAN are not statistically significantly better than those generated by CGAN. Gulrajani et al. [70] proposed a WGAN with gradient penalty (GP) to replace the clipping and to enforce Lipschitz continuity, which performs better and trains more stably than WGAN with almost no hyperparameter setting
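The critic objective and the clipping step of the original WGAN [62] can be sketched as follows. This is a framework-free illustration with hypothetical function names; the clipping threshold of 0.01 is the default reported in the original WGAN paper and is assumed here, since the value used in this study is not stated.

```python
def critic_loss(scores_real, scores_fake):
    """WGAN critic loss: mean score on generated samples minus mean score on real ones."""
    mean_fake = sum(scores_fake) / len(scores_fake)
    mean_real = sum(scores_real) / len(scores_real)
    return mean_fake - mean_real


def clip_weights(weights, clip_value=0.01):
    """Clamp every weight to [-clip_value, clip_value] after a critic update."""
    return [max(-clip_value, min(clip_value, w)) for w in weights]


# Toy values: critic scores for two real and two generated images, and three weights.
loss = critic_loss(scores_real=[1.0, 3.0], scores_fake=[0.0, 1.0])
clipped = clip_weights([-0.5, 0.003, 0.2])
```

Clipping is a crude way of bounding the critic's slope, which is the limitation that the gradient-penalty variant was designed to address.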

Comparison of the accuracy of our denoising method with others GAN and CNN denoising methods

The performance differences observed between the CGAN and the WGAN will also help to better implement new tasks in a computer-aided system for the detection/diagnosis of benign or malignant breast lesions. Pre-processing steps such as denoising, super-resolution, or data augmentation based on deep learning algorithms help to improve clinically relevant performance and accuracy in detection, diagnosis, segmentation, or image classification using CNN algorithms.

The main advantages of GAN algorithms are the quality of the new images produced and the ability to generalize beyond the boundaries of the original dataset to produce new patterns.

Consequently, many researchers have proposed deep residual network structures based on GANs for image denoising.

Zhang et al. [71] used a U-Net-based GAN architecture for ultrasound image denoising, with residual dense connectivity and weighted joint loss (GAN-RW), to overcome the limitations of traditional denoising algorithms. The results demonstrated that the noise level (PSNR

GAN-based combination methods have been applied to different tasks and have achieved better results. For example, [72] proposed a conditional GAN using a WGAN objective as the loss function for medical image denoising; the PSNR/SSIM values (29.4/0.88) demonstrated good results with respect to other state-of-the-art methods, preserving the structure and details of the images.

Cantero J. [73] investigated two GANs (DCGAN and WGAN-GP) for the generation of synthetic PET (positron emission tomography) breast images. The visual results show that these two architectures can generate sinogram images that confound human evaluators. According to [74], the lower the amount of noise present in the real images, the faster the DCGAN network learns to generate high-fidelity images, but the results obtained by WGAN-GP are not significantly better than those produced by DCGAN. In conclusion, joint training of denoising and image classification significantly improves classification performance. A comparison of the accuracy of our work with more recent methods is shown in Table 5.

Finally, this study has some limitations, particularly the lack of private clinical data, because only public breast ultrasound databases were used. Hyperparameter selection in GAN training is very complex because the models are sensitive to small changes, which leads to challenges typical of generative networks (mode collapse, convergence, Nash equilibrium, and gradient problems). To minimize these problems during training, it is essential to manually tune some hyperparameters (optimization functions, loss functions, number of epochs, layers, iterations), or even to implement new alternatives based on deep convolutional networks to better train the generator and the discriminator.

The research is reproducible, replicable and generalizable, and all code, data and materials have been deposited in the Mendeley repository [75], where the information can be accessed and used by others.

In conclusion, in this work CGAN proved to be a useful tool that produces better-quality results than the WGAN model for denoising breast ultrasound images. This was established by comparing the mean statistical values (PSNR and SSIM) of the GAN models. The higher robustness demonstrated by CGAN is attributed to the fact that its generator uses the U-Net encoder-decoder architecture with a BCE loss function, which removes speckle noise more effectively than the ResNet architecture used in WGAN. The proposed CGAN technique is particularly useful for small datasets with low variance. These networks are widely used for image generation or data augmentation, but their application in US image denoising is still limited. In future work, other advanced deep learning methods for denoising, such as convolutional neural networks and autoencoders, will be used, and additional breast imaging modalities such as PET, thermal, CT and MRI will be considered to improve the performance of breast lesion classification algorithms.

Author contributions

Conceptualization Y.J.-G. and V.L.; methodology Y.J.-G.; formal analysis, Y.J.-G., M.J.R.-Á, and V.L.; investigation Y.J.-G and O.V; resources, D.C, Y.S, L.E, A.S, C.S; writing original draft preparation Y.J.-G, O.V; writing manuscript and editing, Y.J.-G., M.J.R.-Á, and V.L.; visualization, Y.J.-G.; supervision, M.J.R.-Á and V.L.; project administration, M.J.R.-Á and V.L.; funding acquisition, M.J. All authors have read and agreed to the published version of the manuscript.

Data availability statement

The data that support the findings of this study are openly available in the Mendeley repository (https://data.mendeley.com/drafts/g3cmj46xyx) [75].

Supplementary data

The supplementary files are available to download from http://dx.doi.org/10.3233/IDA-230631.

Acknowledgments

This project has been co-financed by the Spanish Government Grant Deepbreast PID2019-107790RB-C22 funded by MCIN/AEI/10.13039/501100011033.

Conflict of interest

The authors declare no conflict of interest.