Abstract

Graph neural networks (GNNs) have been applied to various graph domains. However, GNNs based on the message passing scheme, which iteratively aggregates information from neighboring nodes, have difficulty learning to represent larger subgraph structures because of the nature of the scheme. We investigate the prediction performance of GNNs when the number of message passing iteration increases to capture larger subgraph structures on classification and regression tasks using various real-world graph datasets. Our empirical results show that the averaged features over nodes obtained by the message passing scheme in GNNs are likely to converge to a certain value, which significantly deteriorates the resulting prediction performance. This is in contrast to the state-of-the-art Weisfeiler–Lehman graph kernel, which has been used actively in machine learning for graphs, as it can comparably learn the large subgraph structures and its performance does not usually drop significantly drop from the first couple of rounds of iterations. Moreover, we report that when we apply node features obtained via GNNs to SVMs, the performance of the Weisfeiler-Lehman kernel can be superior to that of the graph convolutional model, which is a typically employed approach in GNNs.

Introduction

Graph-structured data are ubiquitous in various domains from social networks to system engineering and bio- and chemo-informatics. Most of the recent machine learning methods for graphs are essentially based on the

In machine learning on graphs,

GNNs have emerged as a way of learning representation on graphs. The message passing scheme is commonly used in most of proposed GNNs [2, 4, 10, 11]. For example, graph convolutional network (GCN) is known to be a simple GNN model for semi-supervised learning [10], which is similar to the WL scheme. Graph isomorphism network (GIN) is more similar to the WL graph kernel, which have been theoretically and empirically shown to be as powerful as the WL scheme with respect to their discriminability and representation power [2]. In addition, to learn better representation of node features and overcome the limitation of graph convolutions, attention-based graph neural models have been studied [3, 12]. These graph neural network models are helpful to solve graph prediction tasks as well as node or link prediction.

In GNNs, the problem of over-smoothing, where the similarity between nodes becomes closer and closer when more and more message passing is performed, is one of common issues. The over-smoothing deteriorates the quality of graph representation, particularly when multiple convolutional layers are stacked, because of the low information-to-noise ratio of the message received by the nodes, which is partially determined by the graph topology [13]. As a result, the performance of a trained model such as the accuracy of classification worsens when over-smoothing occurs. This means that the message passing scheme may inherently have a limitation in terms of capturing large subgraph structures as it requires many message passing iterations. Thus, if such large subgraphs are relevant in prediction, this would be a serious problem for GNNs.

In this paper, to investigate the advantage and the limitation of the message passing scheme in GNNs and graph kernels, we focus on the influence of the over-smoothing problem. More precisely, we empirically examine how features of graph substructures obtained by the WL (or message passing) scheme affect the predictive performance as the number of convolutional layers increases. First, we compare the WL sub-tree graph kernels with GNNs, including GCNs and GINs on classification and regression real-world graph datasets collected from a variety of domains. In GNNs, for certain types of datasets, we show that the large number of message passing iterations deteriorates the predictive performance due to the over-smoothing phenomenon. Second, we argue that the averaged feature vectors over nodes learned from graph convolutional layers, which are expected to represent the overall graph characteristics, are not stable as the number of message passing iterations increases. Finally, we evaluate graph features on multi-layer perceptrons (MLPs) and support vector machines (SVMs) in the task of classification and regression. We also show that SVMs with features obtained from GCNs can be used as a way of measuring how strong the contribution of node features is, where the node features are concatenated at each message passing iteration.

Our contributions are summarized as follows:

The prediction performance of the WL kernel outperforms the most fundamental GNNs, GCNs and GINs, if the number of message passing iteration increases. The WL kernel does not deteriorate significantly for many message passing iterations in most of datasets, while GCNs and GINs do deteriorate due to their large number of parameters and ill-trained previous node features. Transition of node features tend to be small, which leads to difficulty of determining which feature is informative for prediction, and the features converge to indistinguishable values when the training fails.

Notation

A

For two graphs

The vertex histogram kernel

One of the simplest graph kernels is the vertex histogram kernel, which counts node label matching between a pair of graphs. Let

The Weisfeiler–Lehman (WL) sub-tree kernel is based on the Weisfeiler–Lehman test of isomorphism. The key idea of the kernel is the “WL scheme”, which is re-labeling of node labels by gathering neighboring node labels. Then each new label is a good proxy of an indicator of subgraph structure. This re-labeling step is repeated until every pair of graphs have different node labels or reaching at a certain step

In the WL kernels, these subgraph structures obtained from the WL scheme are mapped into the Hilbert space. In other words, the obtained node label sets are used to compute the similarity between graphs. The WL kernel is also based on the vertex histogram, but it extends the original vertex histogram through iterations of node re-labeling. The final kernel matrix after

where

where

The graphlet kernel is calculated by the frequency of occurrence of matched subgraphs, called graphlets [14]. Graphs are decomposed into graphlets. Let

Graph convolutional neural networks are first introduced for semi-supervised learning of classifying nodes in a graph. In graph convolutional networks, node labels are treated as a feature matrix

where

For regression and classification settings, the output of node features at the final layer is reduced by the pooling operation, such as taking the mean and the sum over vertices, which is equivalent to the readout operation that can obtain the representation of graph features. The readout graph feature is passed into MLP layers and trained through back propagation.

In computing for classification and regression, the reduce function that returns the final output value

where READOUT

The graph isomorphism network (GIN) is a model that is known to be as powerful as the WL test [2]. To update the representation of each node feature vector

where

The READOUT function aggregates node features from the final iteration to obtain graph features of

where

Our focus in this paper is not on developing state-of-the-art GNNs, but on examining the effectiveness of GNNs with respect to treating large substructures of graphs. Therefore, we fix every weight to be the identity matrix and

Note that the obtained features can be combined with not only MLPs but also any machine learning models. In SVMs, the graph feature of

Since the dimensionality of concatenated features of graphs becomes larger and larger if more and more iterations are performed, we introduce the norm to normalize each feature matrix as follows:

We call these features pre-fixed GCN as the weights of GCN is prefixed into unity.

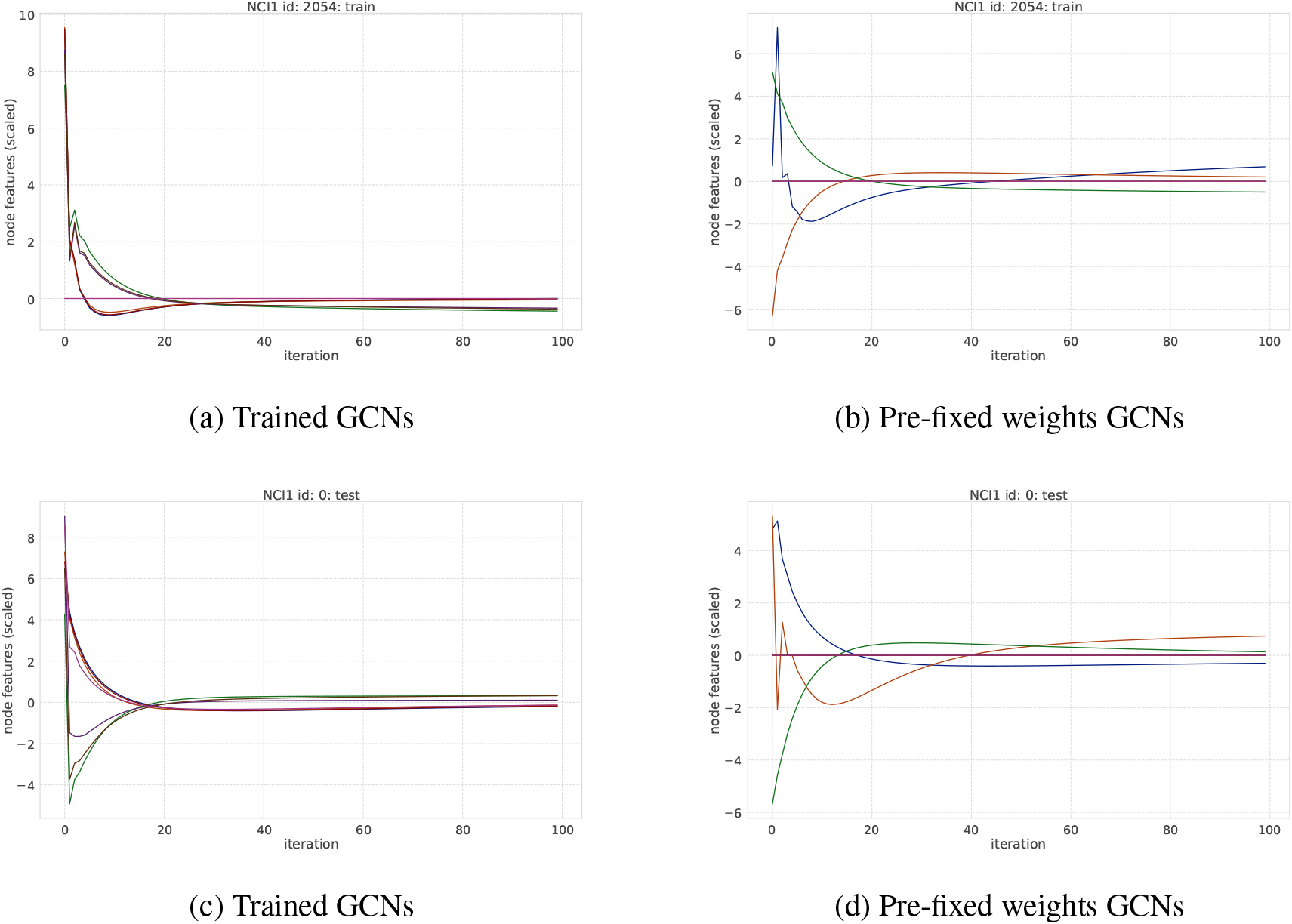

To measure the degree of transition of node features after weight training in graph convolutional layers, we simply introduce the AVERAGE operation of node feature vectors for each convolutional layer. In GCNs, learned node features of

The update of

We expect that embedded node features include information about the neighboring node features.

Experimental setting

Datasets

To investigate how sub-graph structures affect the performance in classification and regression tasks, we collected eight classification datasets and three regression datasets from various domains widely used as benchmarks of graph machine learning. In terms of classification datasets, Kersting et al. [16] listed various benchmark sets for graph kernels. Classification benchmarks include five bio-informatics datasets (MUTAG, PTC, NCI1, PROTEINS, and DD) and two social network datasets (IMDB-BINARY and REDDIT-BINARY). Regression benchmarks are three chemo-informatics datasets: ESOL from MoleculeNet for the prediction of water solubility, FreeSolv from MoleculeNet for prediction of hydration free energy of small molecules in water, and Lipophilicity from MoleculeNet for the prediction of octanol/water distribution coefficient (logD at pH 7.4) [17]. Node labels in graphs are converted into a one-hot matrix for GNNs while graph kernels use node labels directly to compute gram matrices. As for social network graphs, all node feature vectors are the same as each other according to the procedure proposed in literature [2, 18]. Our goal is to investigate whether or not graph machine learning models can appropriately learn information of large subgraph structures when the amount of message passing increases. We do not use edge features and node features obtained from 3D structures such as the distance between nodes for chemo-informatics dataset as they are not relevant to our task. All datasets are available from DGL [19] and DGL-LifeSci [20]. The statistics of datasets are summarized in Table 1.

Statistics of benchmark datasets

Statistics of benchmark datasets

We chose two graph neural networks models that are highly related to the WL scheme: Graph Convolutional Network (GCN) [10] and Graph Isomorphism Network (GIN) [2]. All neural networks are implemented in Deep Graph Library (DGL) [19] and PyTorch [21]. The graph kernel of vertex histograms and the WL sub-tree kernel are implemented in GraKel [18] and GRAPHLETs are obtained using gSpan [22] library1

In classification, datasets are evaluated in stratified 10-fold cross-validation, where each fold preserves the class ratio of samples. In regression, we evaluated the performance as root mean squared errors (RMSE) with a holdout validation set, where a training set is 70 percent in the entire dataset. To perform fair comparison of learned node features, the dimensionality of initial weights in convolutional layers is the same as that of initial node features, which is

Results and discussion

Classification

In classification, we use the Vertex Histogram kernel as a baseline, which computes the similarity (kernel value) between graphs by simply counting the matching node labels; in so doing, it completely ignores the graph topological information. We also use the GRAPHLET kernel, which includes information of subgraphs under the designated number of vertices.

Classification accuracy of 10-folds cross-validation on Bio-informatics datasets

Classification accuracy of 10-folds cross-validation on Bio-informatics datasets

Classification accuracy of 10-folds cross-validation on social network datasets

The WL kernel can consider additional information of subgraph structures through the WL scheme of iteration. The GRAPHLET kernel has a potential to include more information about substructures than the WL kernel according to the setting of maximum number of vertices through enumeration of subgraphs (graphlets). Note that the complexity of enumeration of subgraphs for the GRAPHLET kernel exponentially increases as the number of vertex increases [15], so it is not feasible to set the large number of vertices.

The pre-fixed GCN SVM is the support vector machine with input features computed from GCN layers where the weight is the identity matrix. Such graph features represent the graph structure itself. GCN is related to the WL kernel, while features obtained through graph convolution can include information of subgraph structures as embeddings through training at the final layer. In contrast, GIN can include all features of substructures at each iteration.

In Table 2, the accuracy score of the GRAPHLET kernel, which explicitly considers all the possible subgraphs under the certain number of vertices, is highest on the MUTAG and DD dataset. The accuracy of the WL kernel is also high overall and shows the best score in three datasets (PTC-MR, NCI1, and PROTEINS). The accuracy scores of both GIN and GCN are comparable to those of the WL graph kernel. When we consider downstream classification methods in GCNs, SVM with the features computed from GCNs with pre-fixed weights, which can be viewed as the continuous version of the WL scheme and weights in GCNs are not trained and node features depend on the initial node attributes and its structure (the adjacency matrix) can be effective in certain types of datasets as it shows comparable or better accuracy in, for example, MUTAG and DD than the standard GCN. While our result is different from that in [2] due to hyperparameter settings, but our comparison is fair as hyperparameters are tuned in the same way across comparison partners.

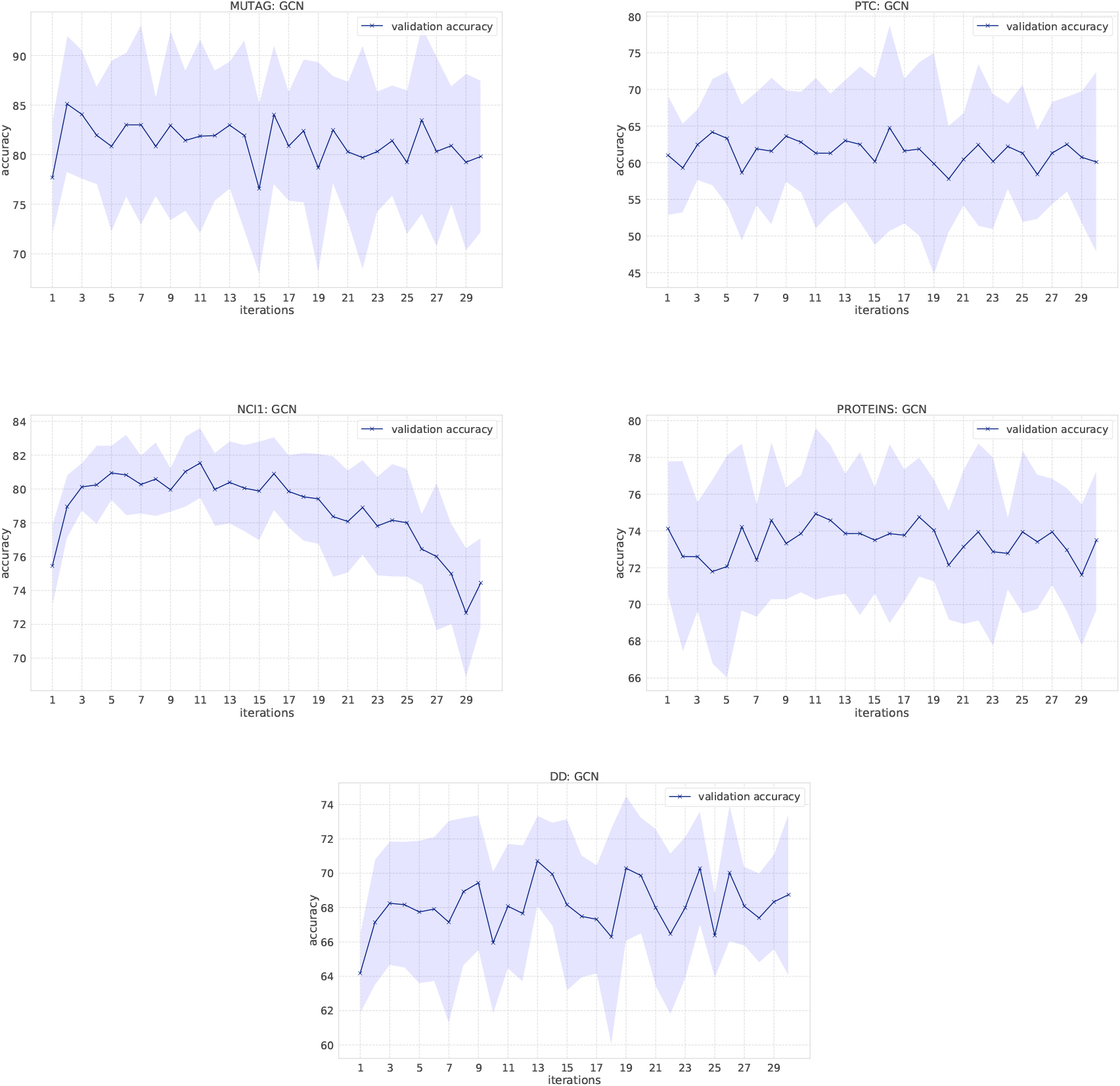

Classification results of GCNs on bio-informatics datasets. X-axis shows the number of convolutional layers (the number of message passing). Blue bold line shows the average of accuracy evaluated in 10-fold cross validation and the filled area shows the standard deviation.

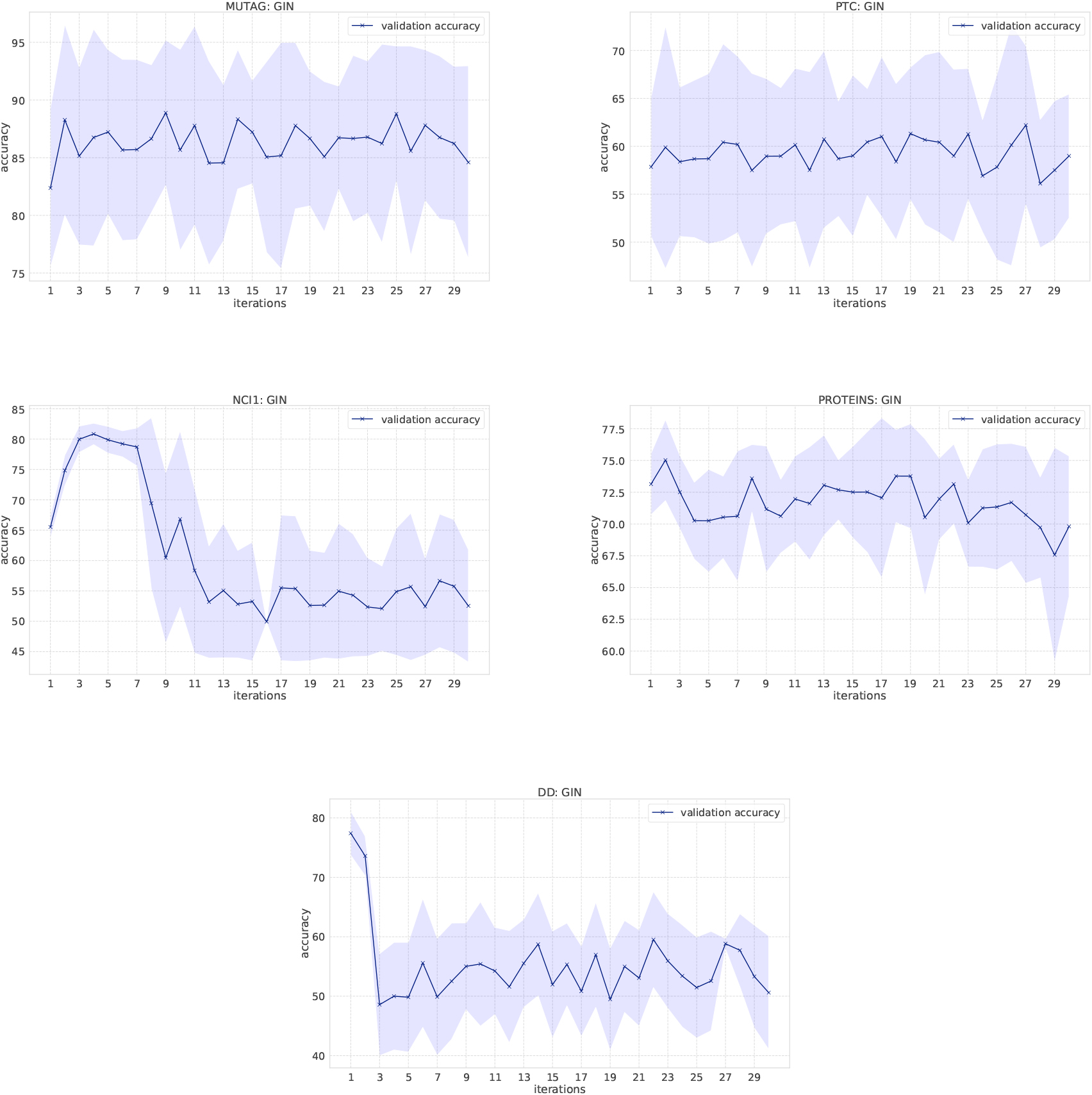

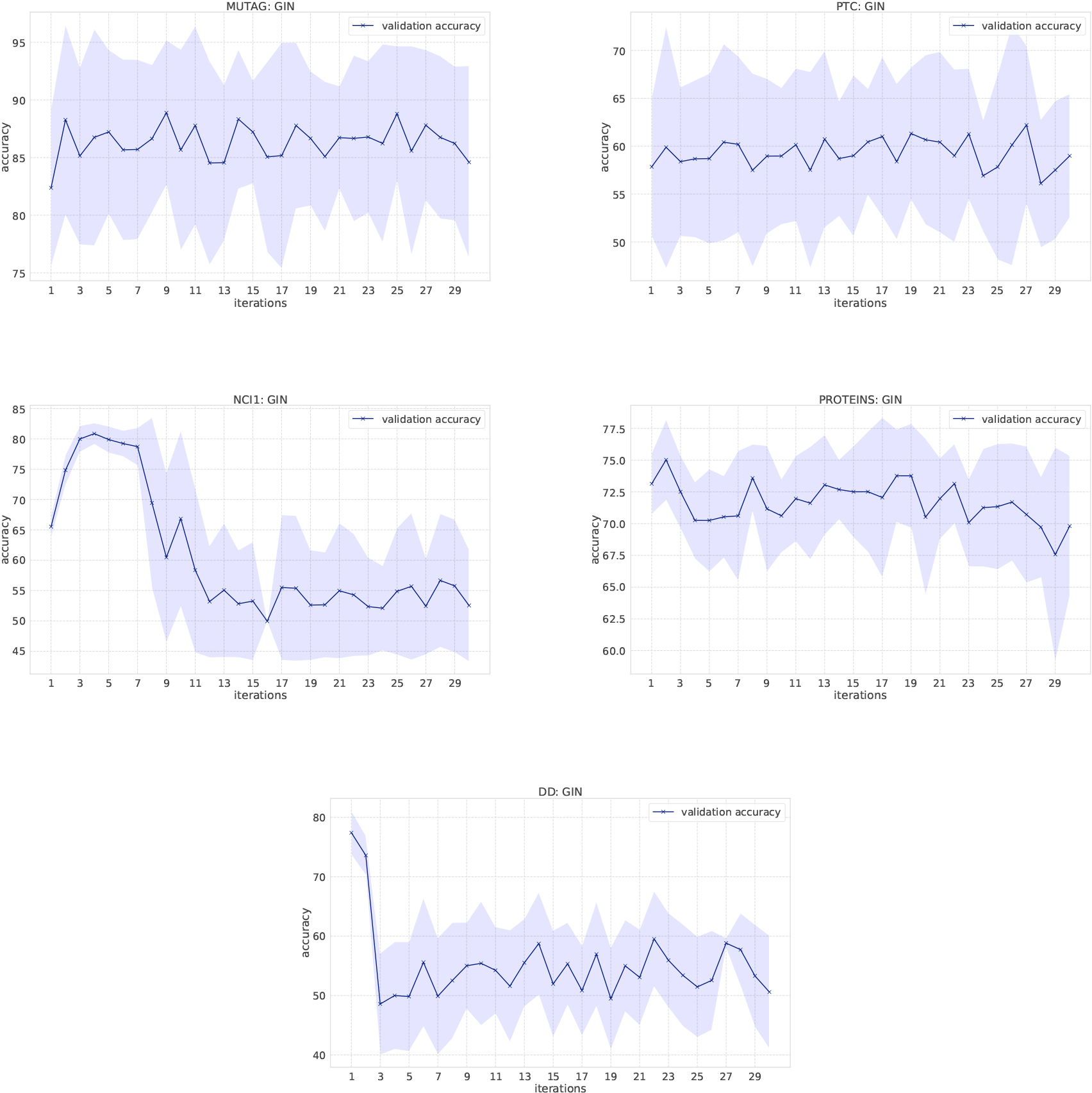

Classification results of GINs on the bio-informatics dataset.

Classification results on social network datasets.

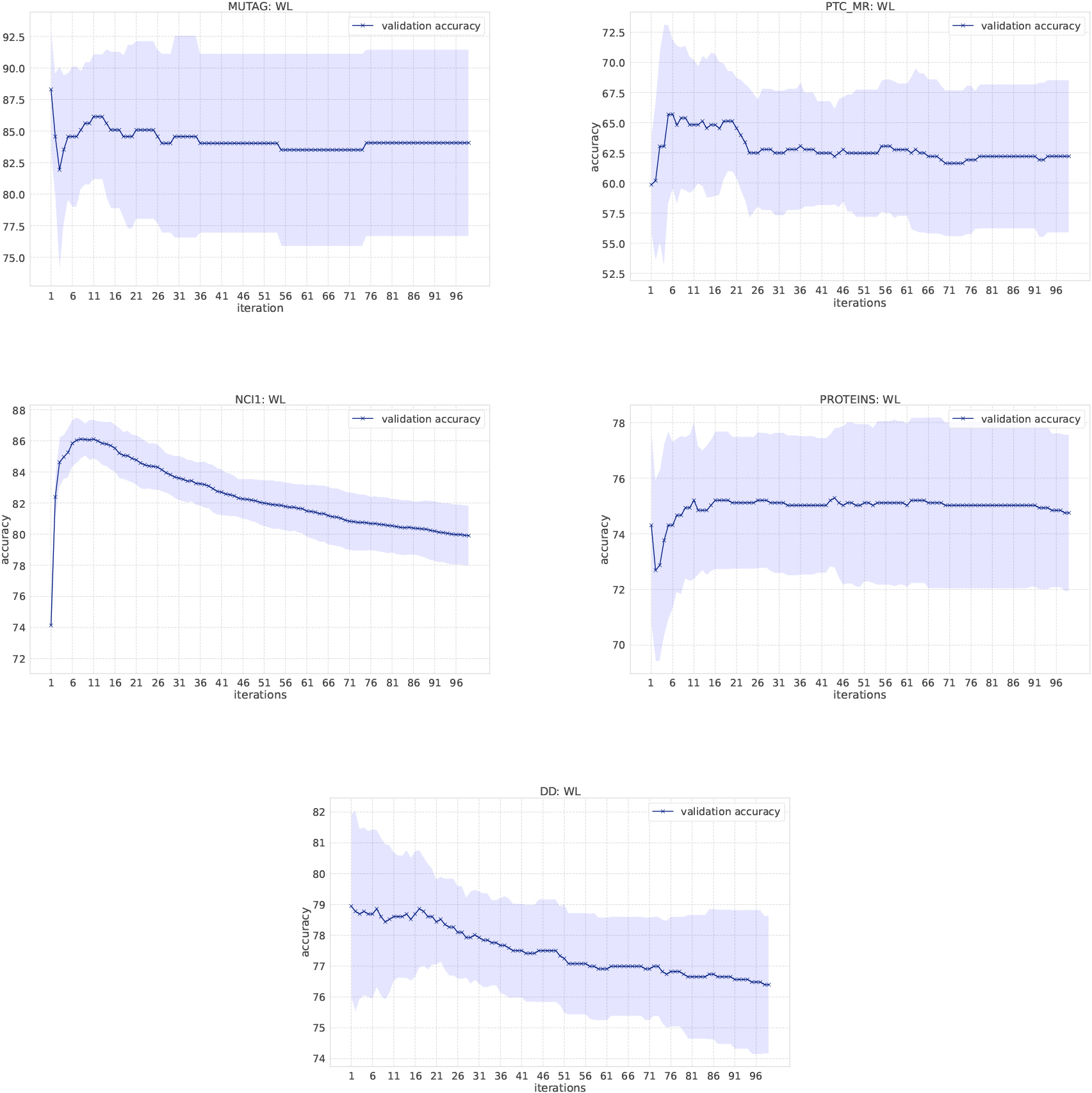

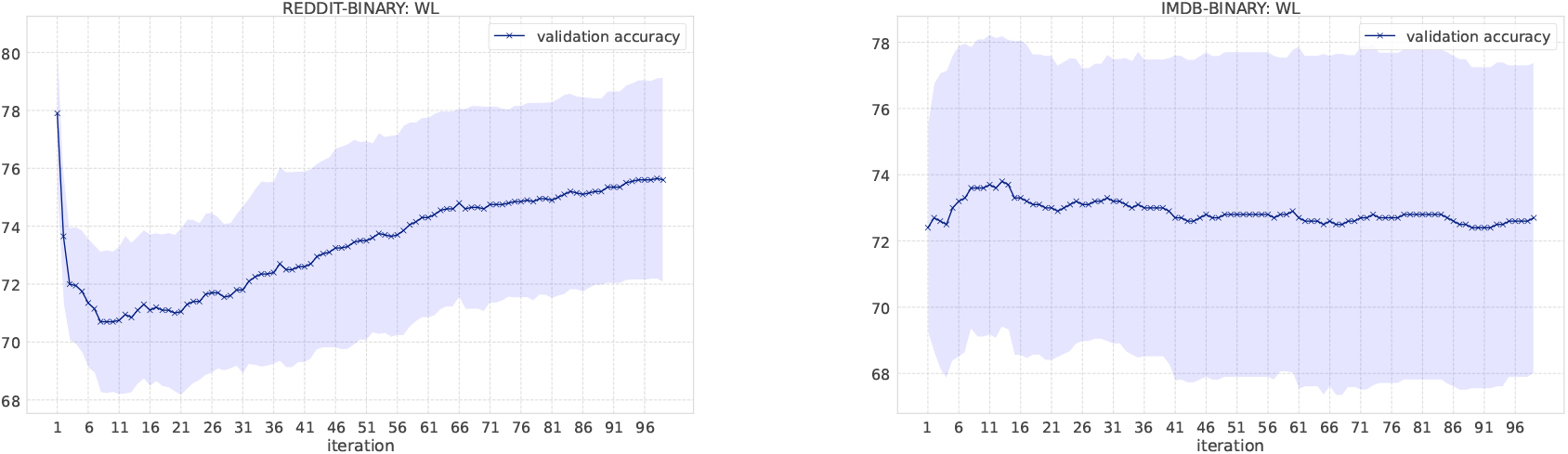

Classification results of the WL kernel on Bio-informatics datasets.

Classification results on social network datasets.

In the WL kernel, it is easy to compute the kernel even if the number of message passing iterations increases. Figure 4 shows the classification accuracy as the number of iterations increases. We have increased the number of iterations in the WL kernel up to 100. In the MUTAG dataset, labels obtained from the WL scheme become unique when iterations reach to 13, which means no more information gains. Small fluctuations of the resulting accuracy comes from the loop structure in graphs. Overall, the accuracy score is not largely affected by the number of iterations in the WL kernel, except for NCI1 and DD. One possible reason for such deteriorating accuracy in NCI1 and DD is that they have more node labels than other datasets and it becomes difficult to discriminate graphs as the number of iteration increases. Various node labels contribute to the occurrence of multiple types of unique subgraphs. Since graph kernels fundamentally measure the similarity between graphs based on subgraph matching, the kernel value gets smaller if there are many unique subgraphs. In the case of NCI1 and DD, when the number of iterations increases, more and more unique subgraphs appear, and the additional contribution to kernel values becomes smaller and smaller, resulting in the difficulty of discrimination. An interesting observation is that the accuracy on the REDDIT-BINARY dataset increases when the number of iterations increases, although the highest accuracy is at the first iteration.

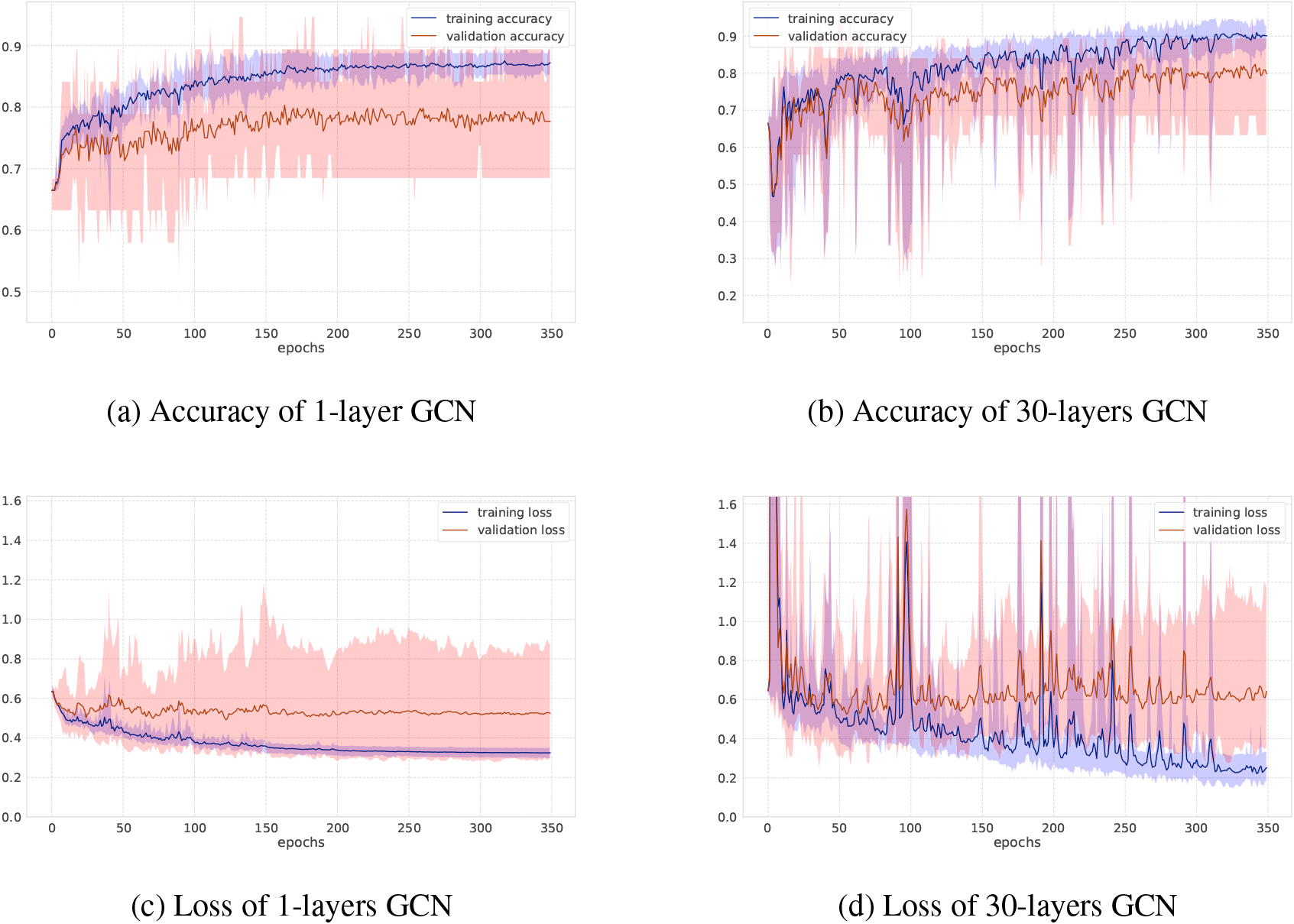

Accuracy and loss on MUTAG dataset.

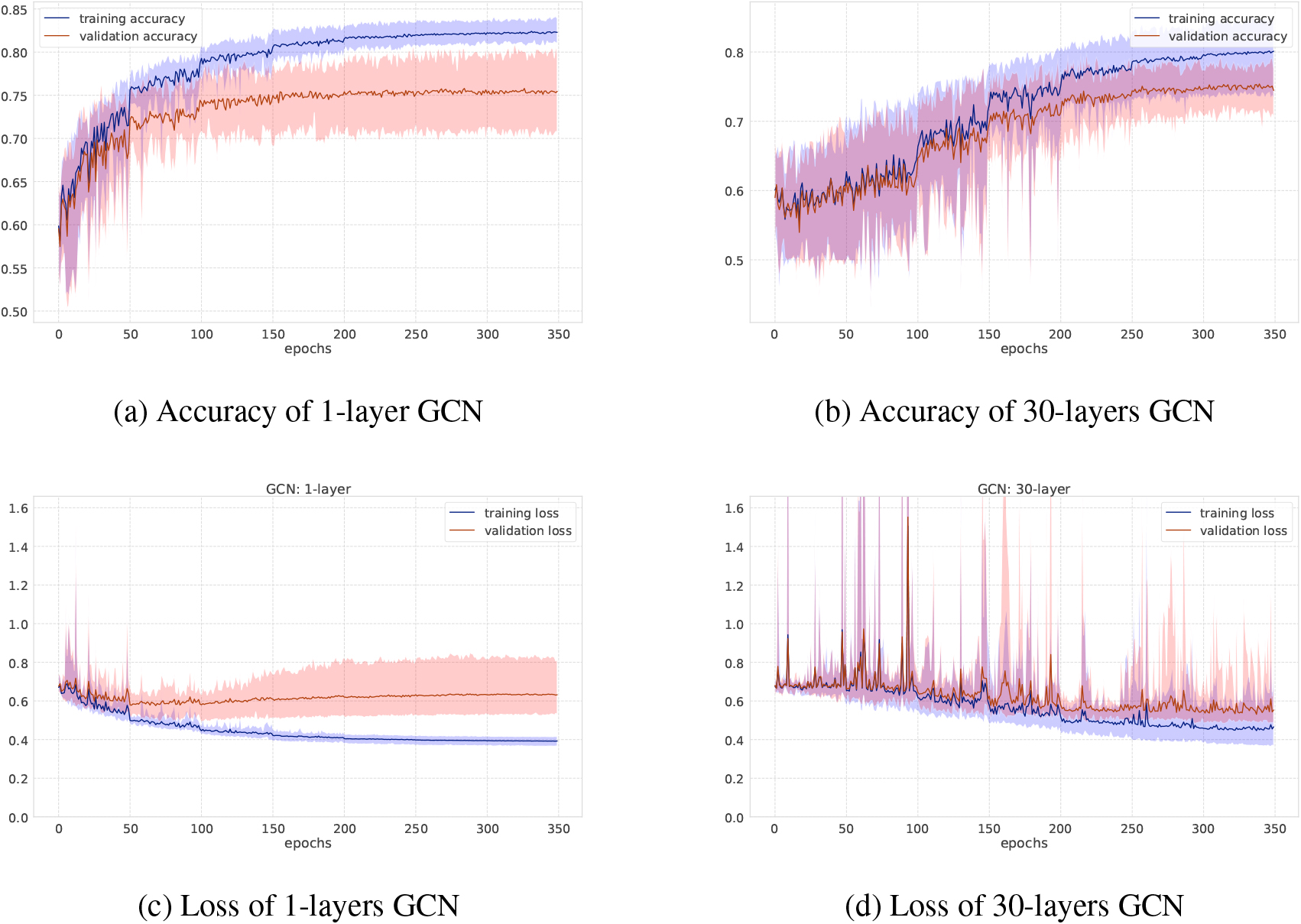

Accuracy and loss on NCI1 dataset.

As for GCNs and GINs, Figs 6 and 7 show the training-validation loss and accuracy via 10-fold cross-validation per epoch on the MUTAG and NCI1 datasets. In each figure, left and right plots represent the training log of GCNs under the setting of the number of iterations 1 or 30, respectively. When the number of graph convolutional layers is one, the loss and accuracy of training-validation sets are rather smooth compared to the case where the number of graph convolutional layers is 30. After training after 350 epochs, the standard deviation of the training-validation loss and accuracy fluctuates greatly, although the final accuracy score is almost the same, at around 70 percent. This shows the difficulty of learning for a larger number of iterations where there are more trainable parameters as well. Figure 3 shows results on the social networks datasets. In GCNs, the accuracy does not change so much compared to GINs. All graphs on social network datasets have the same node labels, and GCNs may be easier to learn representation of node features, while GINs can get the best accuracy at the first or second steps.

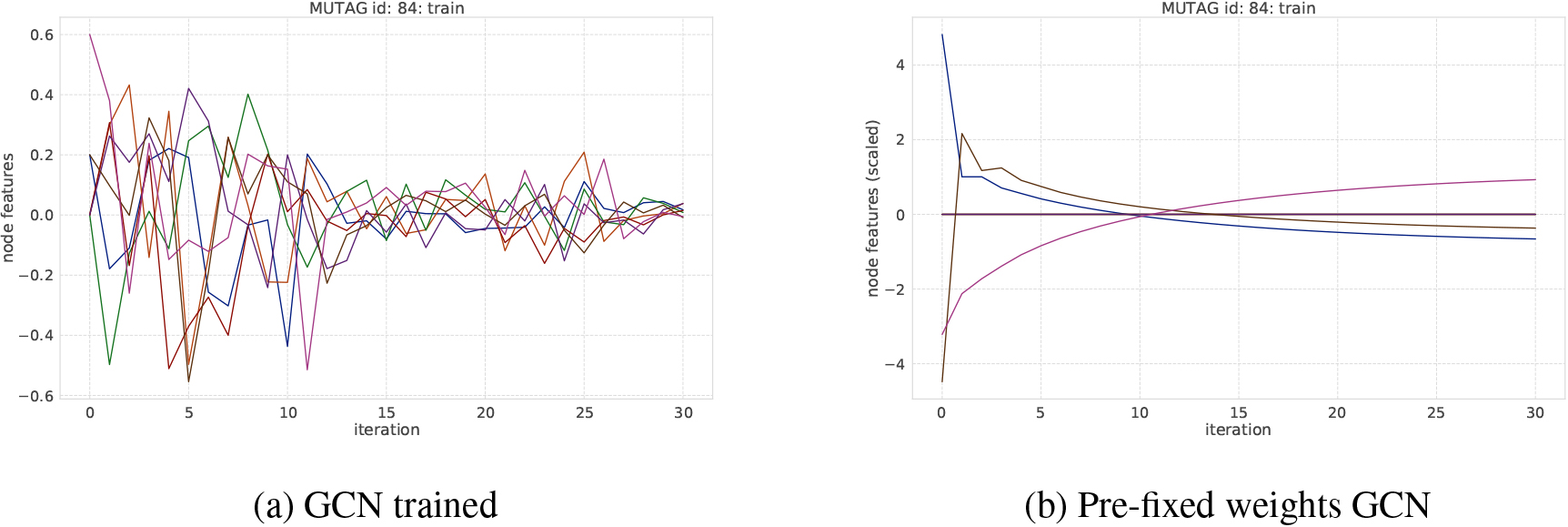

An example of transition of node features on MUTAG dataset. Each curve represents the averaged node feature value in a graph at each iteration step.

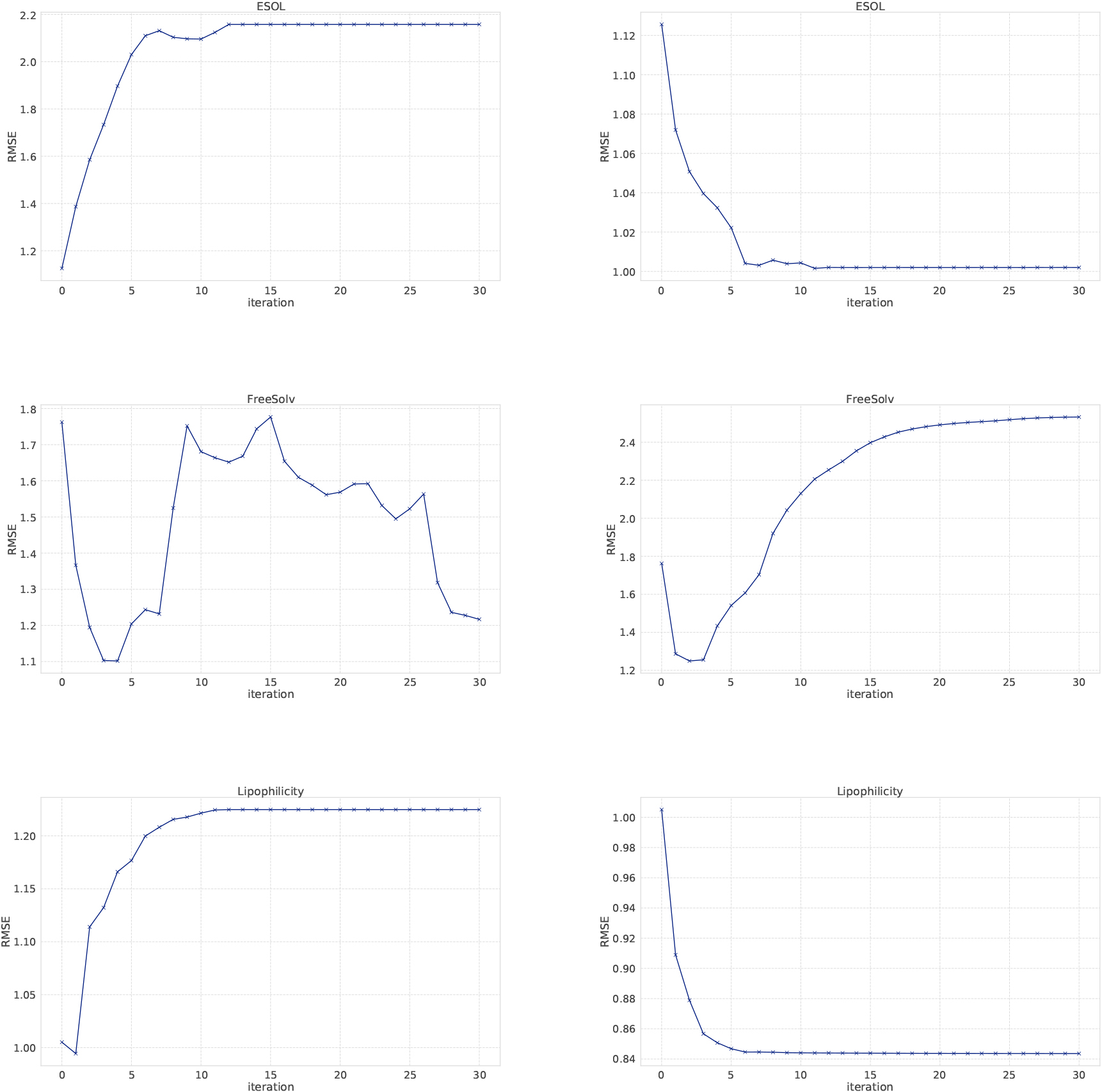

RMSE of holdout validation (training 70% test 30%). “

An example of transition of node features on NCI1 dataset. Each curve represents the averaged node feature value in a graph at each iteration step.

Regression results of SVR using node features learned from GCNs. Left plots show SVR results using features produced from each GCNs layer and right plots show SVR results using concatenating features.

In this paper, we have investigated the effect of iterations of GCNs layers. In the WL kernel (which is the state-of-the-art graph kernel and shares the iteration process with GNNs to incorporate graph topological information), it usually maintains the good performance for large number of iterations in both classification and regression tasks. This may be because the WL kernel implicitly constructs a feature vector and addition of iteration means the concatenation, not the summation, of features. Since the large number of iterations means that the corresponding method tries to capture larger subgraphs, it can become insignificant if only local graph information is relevant. In GNN models such GCNs and GINs, by contrast, the number of iterations is largely affected because there are more and more trainable parameters and it is often difficult to learn them properly. Another reason could be that since node features can include noise, message passing iteration contributes to accumulate noise, resulting in worsening the performance of even the WL kernel. Since the predictive power of GCNs and GINs is comparable to that of the WL graph kernel, GNNs with mini-batch training can be an effective choice on larger dataset, as it is much more efficient than the WL kernel that requires the computation of the full kernel matrix. In our future work, we will investigate other methods such as attention-based graph neural networks.

Footnotes

Acknowledgments

This work was supported by JST, CREST Grant Number JPMJCR22D3, Japan, and JSPS KAKENHI Grant Number JP21H03503.