Abstract

Combinations of Monte-Carlo tree search and Deep Neural Networks, trained through self-play, have produced state-of-the-art results for automated game-playing in many board games. The training and search algorithms are not game-specific, but every individual game that these approaches are applied to still requires domain knowledge for the implementation of the game’s rules and for the construction of the neural network’s architecture – in particular the shapes of its input and output tensors. Ludii is a general game system that already contains over 1,000 different games, and its library can grow rapidly thanks to its powerful and user-friendly game description language. Polygames is a framework with training and search algorithms, which has already produced superhuman players for several board games. This paper describes the implementation of a bridge between Ludii and Polygames, which enables Polygames to train and evaluate models for games that are implemented and run through Ludii. We no longer require any game-specific domain knowledge, and instead leverage our domain knowledge of the Ludii system and its abstract state and move representations to write functions that automatically determine the appropriate shapes for input and output tensors for any game implemented in Ludii. We describe experimental results for short training runs in a wide variety of board games, and discuss several open problems and avenues for future research.

Introduction

Self-play training approaches such as those popularised by AlphaGo Zero (Silver et al., 2017) and AlphaZero (Silver et al., 2018), based on combinations of Monte-Carlo tree search (MCTS) (Kocsis and Szepesvári, 2006; Coulom, 2007; Browne et al., 2012) and Deep Learning (LeCun et al., 2015), have been demonstrated to be fairly generally applicable, and achieved state-of-the-art results in a variety of board games such as Go (Silver et al., 2017), Chess, Shogi (Silver et al., 2018), Hex, and Havannah (Cazenave et al., 2020). These approaches require relatively little domain knowledge, but still require some in the form of:

1. A complete implementation of a forward model for the game, for the implementation of lookahead search as well as automated self-play to generate experience for training.
2. Knowledge of which state features are required or useful to provide as inputs for a neural network.
3. Knowledge of the action space, which is typically used to construct the policy head in such a way that every distinct possible action has a unique logit.

The first requirement, for the implementation of a forward model, is partially addressed by research on using learned simulators for tree search as in MuZero (Schrittwieser et al., 2020), but in practice a simulator is actually still required for the purpose of generating trajectories outside of the tree search. For the board games Go, Chess, and Shogi, MuZero still requires the input and output tensor shapes (for states and actions, respectively) to be manually designed per game. We remark that MuZero was also evaluated on 57 different Atari games in the Arcade Learning Environment (ALE) (Bellemare et al., 2013), and it can use identical tensor shapes across all these Atari games because ALE uses the same observation and action spaces for all games in this framework. The requirement for knowledge of the action space may be avoided by not training a policy at all (Cohen-Solal, 2020), but throughout this paper we assume that it is still desirable to train one.

The challenge posed by General Game Playing (GGP) (Pitrat, 1968) is to build systems that can play a wide variety of games, which makes it difficult to provide the three forms of domain knowledge listed above for every game. A number of systems have been proposed that can interpret and run any arbitrary game as long as it has been described in their respective game description language, such as the original Game Description Language (GDL) (Love et al., 2008) from Stanford, Regular Boardgames (RBG) (Kowalski et al., 2019), and Ludii (Browne et al., 2020; Piette et al., 2020).

In this paper, we describe how we combine the GGP system Ludii and the PyTorch-based (Paszke et al., 2019) state-of-the-art training algorithms in Polygames (Cazenave et al., 2020), with the goal of mitigating all three of the requirements for domain knowledge listed above. Section 2 provides some background information on these training techniques. Section 3 describes existing work and limitations in applying these Deep Learning approaches to general games. Section 4 presents the interface between Ludii and Polygames. Experiments and results are described in Section 5. We discuss some open problems in Section 6, and conclude the paper in Section 7.

Background

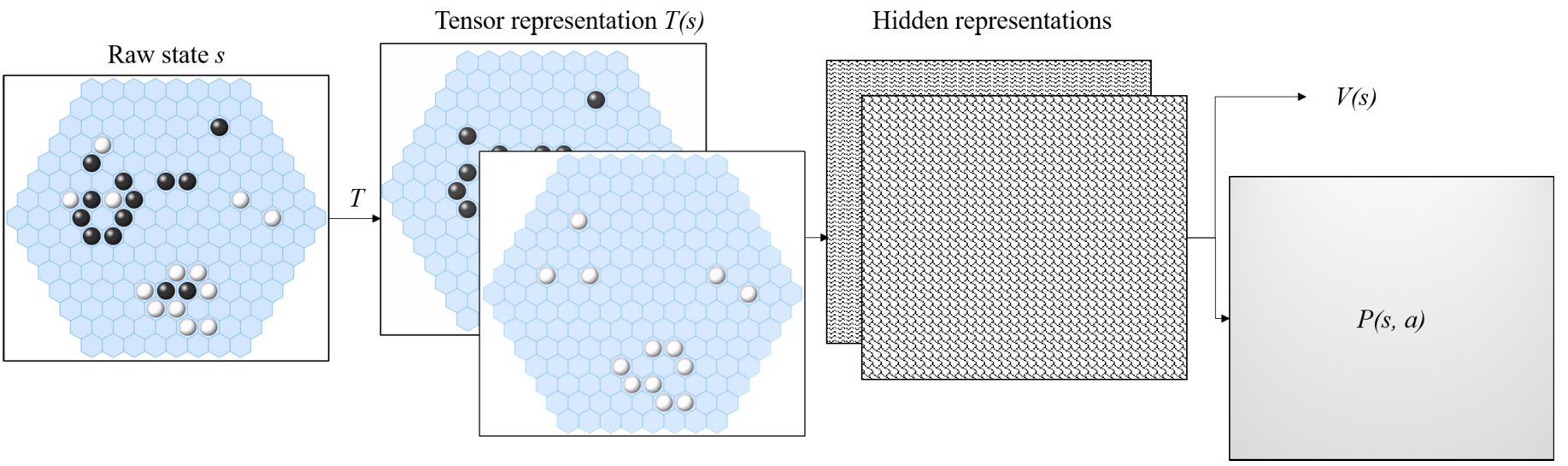

The basic premise behind AlphaZero and similar approaches in frameworks such as Polygames is that Deep Neural Networks (DNNs) take representations

DNNs in general have a fixed architecture, requiring fixed and predetermined shapes for both the input and the output representations. The value output is always simply a scalar,1

Assuming 2-player zero-sum games; see (Petosa and Balch, 2019) for relaxations of this assumption.

Basic architecture of DNNs for game playing. Raw game states s are transformed into a tensor representation

For the policy head, it is customary for neural networks to first output real-valued logits

In addition to such typical architectures, Polygames (Cazenave et al., 2020) includes various different structures, such as:

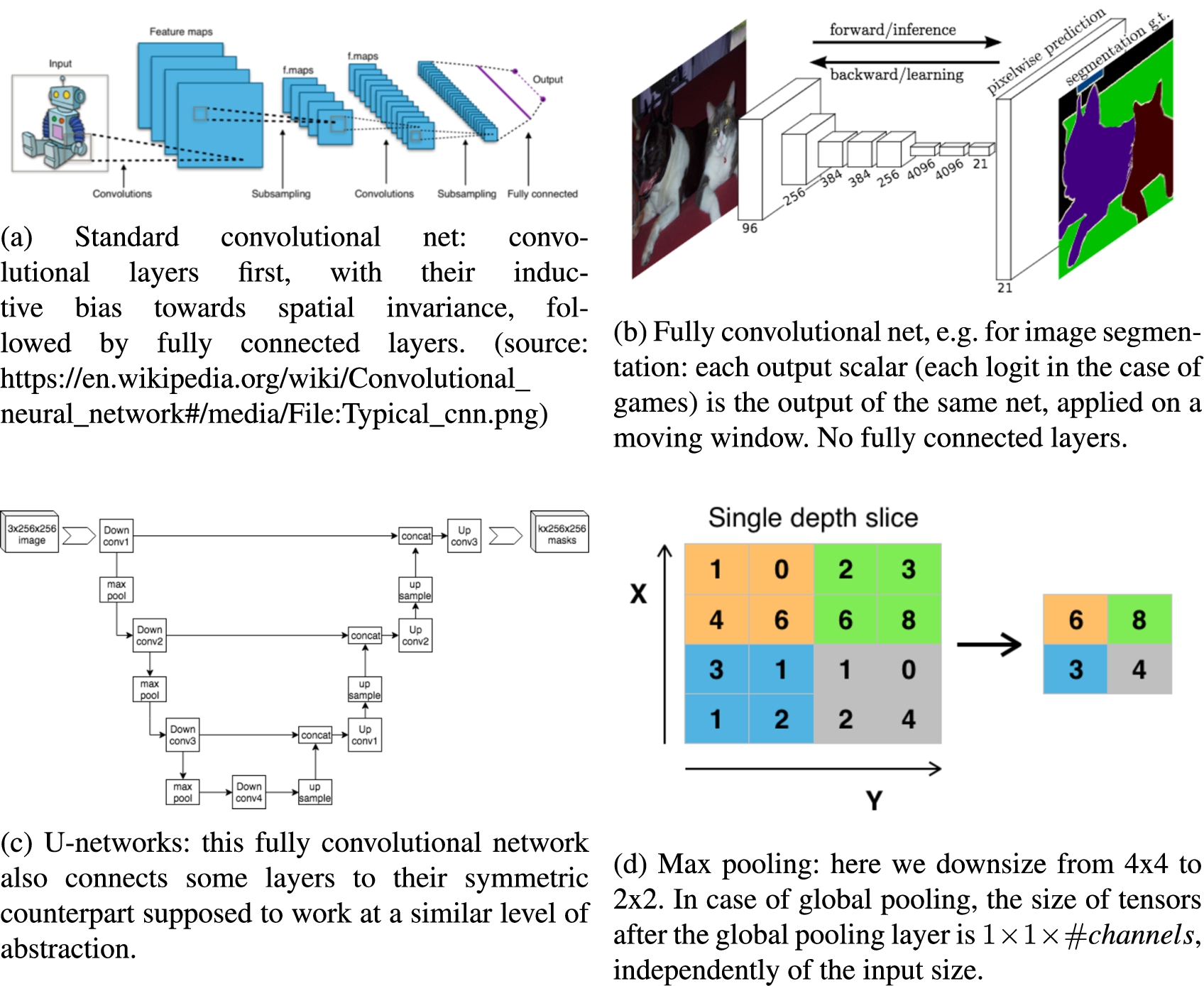

Some of these different structures are depicted and explained in Fig. 2.

Convolutional neural network (a). Fully convolutional counterpart (b, image from (Shelhamer et al., 2017); other images from Wikipedia), typically used in image segmentation: image segmentation is related to policy heads in games in that the output has the same spatial coordinates as the input. U-networks (c): only convolutional layers, and skip connections symmetrically connecting layers. Global pooling (d): here we down-sample to a spatial size of 1x1 in the value head, which makes it boardsize-invariant. Global pooling can use channels for mean, standard deviation, max, etc., so the number of channels is not necessarily preserved. (b+d) or (c+d) allow boardsize-invariant training (Cazenave et al., 2020).
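To make the boardsize-invariance of global pooling concrete, the following is a minimal sketch in plain Python. It is not the Polygames implementation; the function name `global_pool` and the choice of mean, standard deviation, and max as pooled statistics are assumptions made for illustration, following the statistics named in the caption above.

```python
import math

def global_pool(feature_maps):
    """Pool every channel down to a spatial size of 1x1.

    feature_maps: list of channels, each a 2D grid (list of rows) of floats.
    Returns a flat list [mean, std, max] per channel, so its length depends
    only on the number of channels, never on the board size.
    """
    pooled = []
    for channel in feature_maps:
        values = [v for row in channel for v in row]
        mean = sum(values) / len(values)
        variance = sum((v - mean) ** 2 for v in values) / len(values)
        pooled.extend([mean, math.sqrt(variance), max(values)])
    return pooled

# One channel on a 2x2 board and one channel on a 3x3 board.
small = [[[1.0, 3.0], [5.0, 7.0]]]
large = [[[1.0, 3.0, 5.0], [7.0, 1.0, 3.0], [5.0, 7.0, 4.0]]]

print(len(global_pool(small)), len(global_pool(large)))  # 3 3
```

Because both outputs have the same length, the layers that follow the pooling step can be shared across board sizes. Note also that one input channel becomes three output values, which illustrates why the number of channels is not necessarily preserved.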

To some extent, all GGP systems mitigate the requirement for the implementation of complete forward models for every distinct game, in the sense that new games can be added and supported simply by defining them in a game description language. Ludii’s game description language in particular has been designed in such a way that game descriptions for new games are fast and easy to write and understand (Browne et al., 2020; Piette et al., 2020), which has allowed for a significantly larger library of distinct games2

Ludii has over 1,000 distinct built-in games at the time of this writing, with many of them having multiple variants for different board sizes, board shapes, variant rulesets, etc.

Similarly, we may argue that running games through a GGP system removes the requirements for game-specific knowledge about how to shape state inputs and action outputs, but introduces requirements for similar knowledge about the GGP system. Given any arbitrary game defined in a game description language of a GGP system, we require the ability to construct tensor representations of game states, and the ability to map from any index in a policy head to a matching action in any non-terminal game state.

GDL (Love et al., 2008) is a low-level logic-based game description language, where games are described as logic programs consisting of many low-level propositions. Many GDL-based agents convert such a GDL description into a propositional network (Schkufza et al., 2008; Cox et al., 2009; Sironi and Winands, 2017), which can more efficiently process the games than Prolog-based reasoners or other similar techniques. Such propositional networks can be automatically constructed from GDL descriptions, and the structure of such a network remains constant across all game states of the same game. Goldwaser and Thielscher (2020) therefore proposed using the internal state of a game’s propositional network as the input state tensor for a deep neural network. A downside of this approach is that the state input tensor is a flat tensor, and there is no possibility to use inductive biases such as those of CNNs for inputs with spatial semantics. Galvanise Zero (Emslie, 2019) does exploit knowledge of spatial semantics through CNNs, but it only supports a limited selection of GDL-based games because it requires a handwritten Python function to create the mapping from game states to input tensors for every game that it supports. The action space can automatically be inferred from GDL descriptions, which means that these approaches require no extra domain knowledge with respect to the output policy heads.

In the game description language of Ludii (Browne et al., 2020; Piette et al., 2020), common high-level game concepts such as boards, piece types, etc. are all “first-class citizens” of the language, as opposed to GDL where every separate game description file encodes such concepts from scratch in low-level logic. Based on these concepts, Ludii also has an object-oriented game state representation that it uses internally, which remains consistent across all games. This enables us to write a single function that automatically constructs input tensors from Ludii’s internal state representation, using our domain knowledge of Ludii as a whole instead of domain knowledge of every individual game. Unlike in GDL, it is not straightforward (if at all possible) to infer the action space from game description files in Ludii. However, actions in Ludii do have an object-oriented structure, and at least an approximation of the action space can be constructed based on these properties – again, based on domain knowledge of Ludii rather than any individual game. In many games, this is sufficient to distinguish most or all legal actions from each other.

Based on the insights described above, we developed an interface between the Ludii general game system, and the Polygames framework with state-of-the-art AI training code. In Polygames, different games are normally implemented from scratch in C

Constructing the spatial dimensions

CNNs normally operate on grid structures of “pixels”, such that every position can be indexed by a row and column, and every position has a square of up to eight neighbour positions around it. This structure resembles the game boards of games such as Chess, Shogi, and Go most closely. Some other boards, such as the tilings of hexagonal cells used in games like Hex and Havannah, can also be “packed” into such a grid. This approach is used for the game-specific C

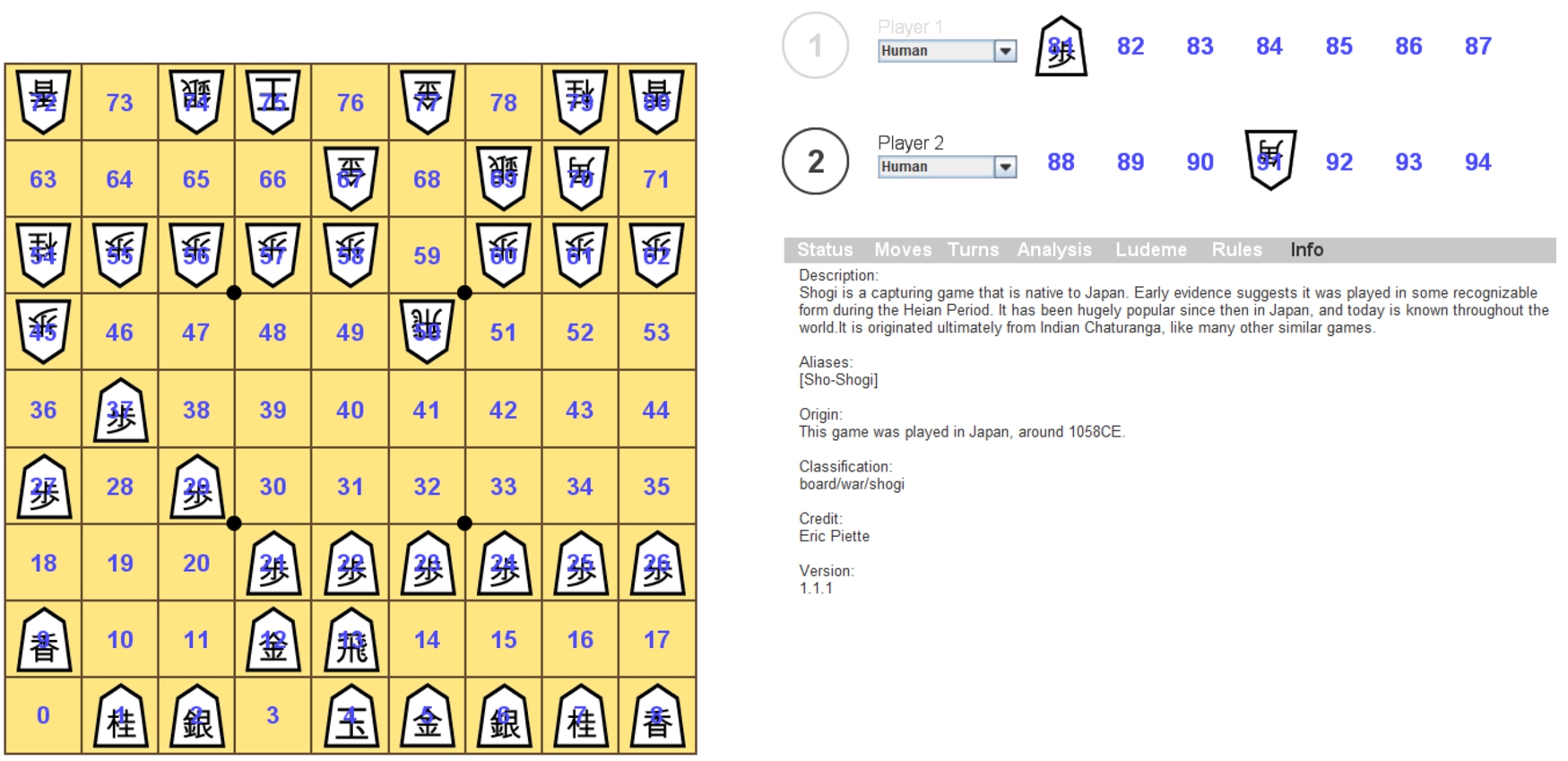

For every game in Ludii, there is at least one (and possibly more than one) container, which specifies a playable “area” with positions that may contain pieces, have corresponding clickable elements in Ludii’s GUI, etc. (Browne et al., 2020; Piette et al., 2020, 2021). The first container typically corresponds to the board that a game is played on, and is often the largest. Any other containers represent auxiliary areas, such as players’ hands to hold captured pieces in Shogi. Even games that are not generally thought of as being played on a board are still modelled in this way in Ludii. For instance, Rock-Paper-Scissors is modelled as a board with two (initially empty) cells, and two hands for the two players, each containing rock, paper, and scissors “pieces” which players can drag onto their designated cells on the board to make their move.

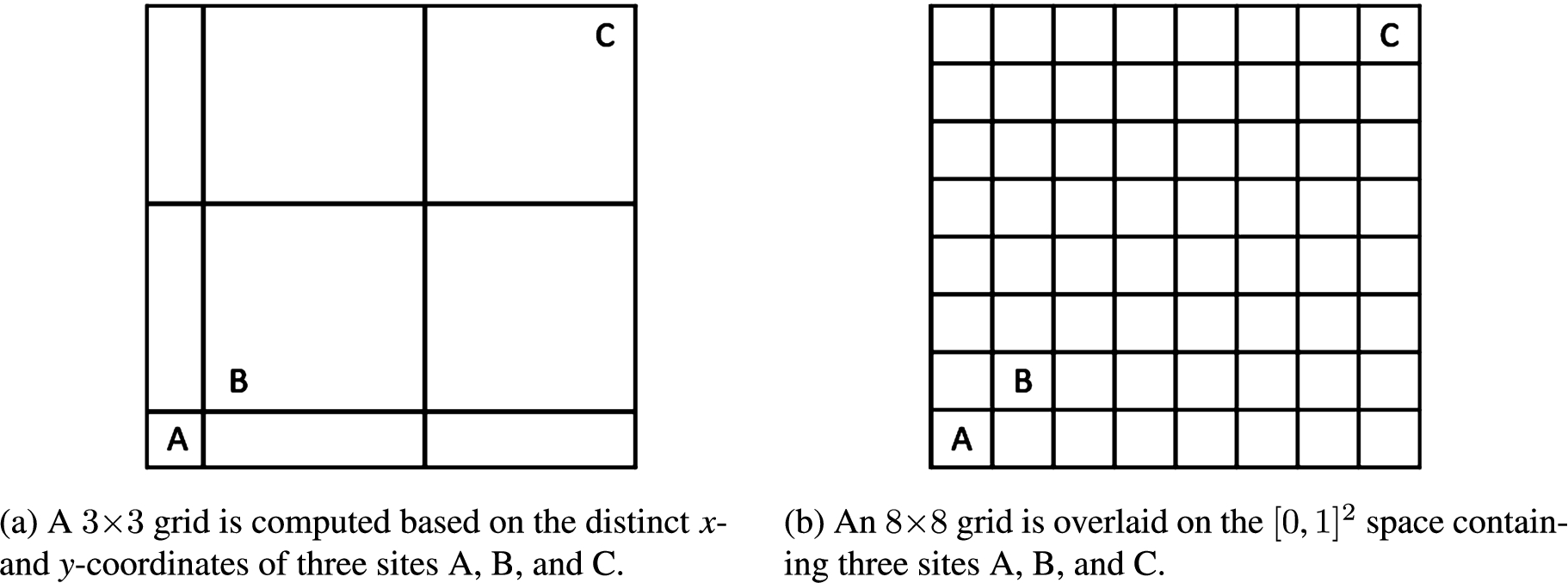

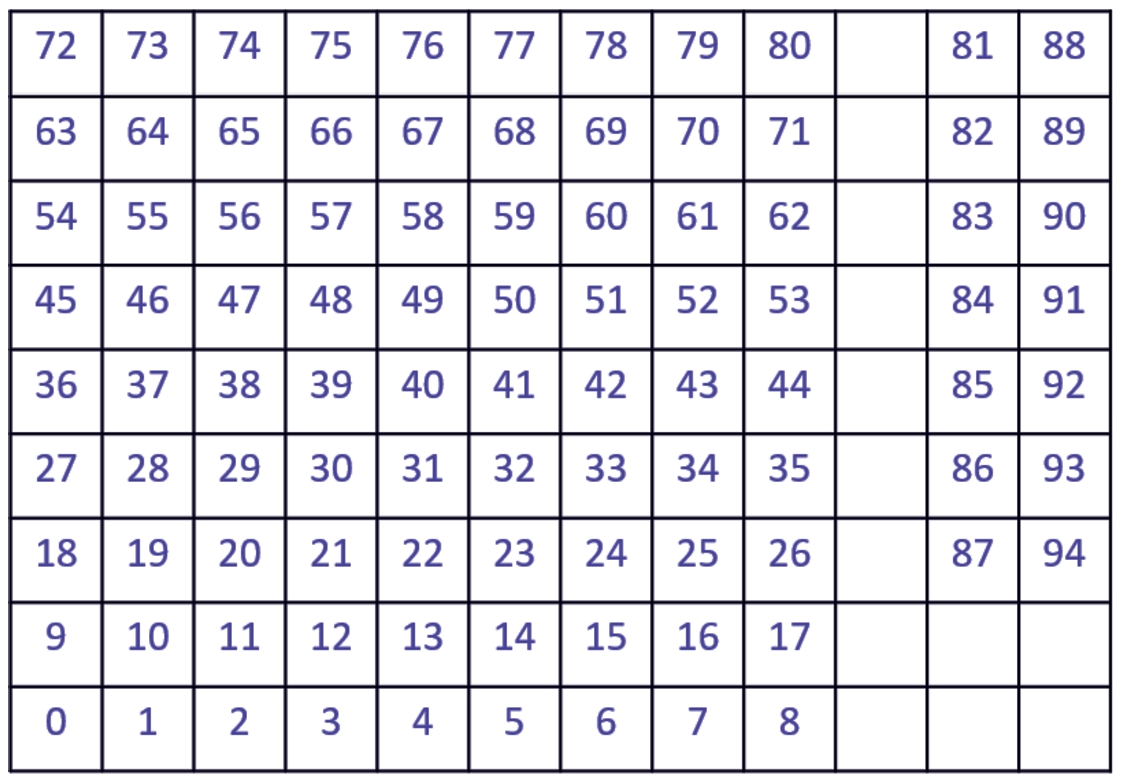

Every site in any such container in Ludii has x and y coordinates in

Two different approaches for computing a grid based on a playable space defined by three sites A, B, and C, each with distinct x- and y-coordinates. The approach we use is depicted in (a). This approach results in smaller, more dense tensors, but information of the relative distances between all sites is not necessarily preserved. The alternative approach, depicted in (b), preserves more of this information, but can result in large and sparse tensors.
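The trade-off between the two approaches in the caption above can be sketched as follows. This is an illustration under assumptions rather than Ludii's actual code: we assume approach (a) assigns rows and columns by ranking the distinct y- and x-coordinates, and approach (b) lays sites out on a uniform grid with a fixed spacing. The function names, the `step` parameter, and the example coordinates are hypothetical.

```python
def dense_grid(sites):
    """Approach (a), assumed: rank distinct coordinates to get rows/columns.

    sites: dict mapping a site name to its (x, y) coordinates.
    Returns (cells, shape) with cells[name] = (row, col).
    """
    xs = sorted({x for x, _ in sites.values()})
    ys = sorted({y for _, y in sites.values()})
    col_of = {x: i for i, x in enumerate(xs)}
    row_of = {y: i for i, y in enumerate(ys)}
    cells = {name: (row_of[y], col_of[x]) for name, (x, y) in sites.items()}
    return cells, (len(ys), len(xs))

def spaced_grid(sites, step):
    """Approach (b), assumed: uniform spacing, which keeps relative distances."""
    min_x = min(x for x, _ in sites.values())
    min_y = min(y for _, y in sites.values())
    cells = {
        name: (round((y - min_y) / step), round((x - min_x) / step))
        for name, (x, y) in sites.items()
    }
    rows = max(r for r, _ in cells.values()) + 1
    cols = max(c for _, c in cells.values()) + 1
    return cells, (rows, cols)

# Three sites with distinct x- and y-coordinates (made-up values).
sites = {"A": (0, 0), "B": (1, 5), "C": (10, 6)}

print(dense_grid(sites)[1])      # (3, 3)
print(spaced_grid(sites, 1)[1])  # (7, 11)
```

In this example the rank-based grid needs 9 entries for three sites, whereas the uniformly spaced grid needs 77, almost all of them empty. Real coordinates are floating-point values, so a practical version would need a tolerance when deciding whether two coordinates are equal.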

In the current version of Ludii, containers other than the first one (corresponding to the “main” board) never have more than one meaningful dimension; they are always a single, contiguous sequence of cells. Each of those containers is concatenated to the grid constructed for the first container, either using one extra column or one extra row per extra container (whichever results in the lowest increase in total size of the tensor). Additionally, one extra dummy row or column is inserted to create a more explicit separation between the main board (for which we expect there to be meaningful spatial semantics) and the other containers (for which there is no expectation that any meaningful spatial semantics exist). For example, Shogi is played on a

Shogi being played in Ludii’s user interface. The game board is on the left-hand side, and each player has a “hand” with seven slots to hold captured pieces on the right-hand side. Figure 5 shows how the numbered positions get mapped to positions in a tensor.

Mapping from positions in Shogi’s three containers to positions in a single tensor. Numbers 0 through 80 correspond to positions on the board, 81 through 87 are positions in the hand of Player 1, and 88 through 94 are positions in the hand of Player 2 (see Fig. 4).
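The concatenation rule described above (one extra row or column per extra container, plus one dummy separator, choosing whichever orientation keeps the tensor smallest) can be sketched as a shape computation. The function below is a simplified reading of that rule, not Ludii's implementation: it picks a single orientation for all extra containers at once, breaks ties in favour of columns, and grows the grid if a container is longer than the board side it is attached to. All of these choices, and the name `packed_shape`, are assumptions.

```python
def packed_shape(board_rows, board_cols, extra_container_sizes):
    """Spatial shape after attaching extra containers to the main board grid.

    extra_container_sizes: number of cells in each container after the first.
    """
    count = len(extra_container_sizes)
    if count == 0:
        return (board_rows, board_cols)
    longest = max(extra_container_sizes)

    # Option 1: one dummy separator column, then one column per container.
    as_columns = (max(board_rows, longest), board_cols + 1 + count)
    # Option 2: one dummy separator row, then one row per container.
    as_rows = (board_rows + 1 + count, max(board_cols, longest))

    def area(shape):
        return shape[0] * shape[1]

    # Keep the orientation with the smaller total size (ties go to columns).
    return as_columns if area(as_columns) <= area(as_rows) else as_rows

# Shogi: 81 board positions (9x9) and two hands with seven slots each.
print(packed_shape(9, 9, [7, 7]))  # (9, 12)
```

For Shogi both orientations have the same total size, so the result here depends only on the tie-breaking assumption. For a non-square board the choice matters: `packed_shape(10, 3, [4])` attaches the container as a row because that yields 48 entries instead of 50.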

Let s denote a raw game state in Ludii’s object-oriented state representation (Piette et al., 2021), for a game

- Binary channels indicating the presence (or absence) of every piece type defined in
- If
- If
- Ludii’s state representation can include an “amount” value per player, primarily intended to represent money for games that involve betting or other similar mechanisms. If
- If
- In some games, every position has a “local state” variable, which is an integer value. Different games can use this in different ways to store (temporary) auxiliary information about positions. For instance, local state values of 1 are used for positions that contain pieces that are still in their initial position, and values of 0 otherwise (this is used for castling). Most games only use low local state values, if any at all. Hence, we use separate binary channels to indicate local state values of 0, 1, 2, 3, 4, and
- If the game uses a swap rule (or “pie rule”), such as Hex, we include a binary channel that is filled with values of 1 if and only if a swap has occurred in s.
- For every distinct container in
- For each of the last two moves m played prior to reaching s, we add one binary channel with only a single value of 1 in the entry corresponding to the “from” position of m (typically the location that a piece moves away from), and a similar channel for the “to” position of m (typically the location that a piece is placed in).

With these channels, we did not yet exhaustively cover all the state variables in Ludii’s game state representation (Piette et al., 2021), but we covered the most commonly-used ones. Whenever new variables are added to Ludii’s game state representation, engineering effort for including these in the tensor representations is only required once for Ludii as a whole – not once per game added to Ludii.
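As an illustration of how such channels can be stacked, the sketch below builds three of the channel groups listed above: piece-presence channels, the swap channel, and the “from”/“to” channels for the last two moves. It is a minimal sketch and not the Ludii or Polygames code; the function name `encode_state`, its arguments, and the ordering of channels are assumptions, and the remaining channel groups are omitted.

```python
def empty_plane(rows, cols):
    return [[0] * cols for _ in range(rows)]

def encode_state(rows, cols, num_piece_types, pieces, swapped, last_moves):
    """Build a list of binary planes for a subset of the state channels.

    pieces: dict mapping (row, col) to a piece type index.
    swapped: True if and only if a swap has occurred.
    last_moves: up to two (from_pos, to_pos) pairs, most recent first;
                each position is a (row, col) tuple or None.
    """
    channels = []

    # One binary channel per piece type.
    for piece_type in range(num_piece_types):
        plane = empty_plane(rows, cols)
        for (r, c), t in pieces.items():
            if t == piece_type:
                plane[r][c] = 1
        channels.append(plane)

    # Swap channel: filled entirely with 1s if a swap has occurred.
    fill = 1 if swapped else 0
    channels.append([[fill] * cols for _ in range(rows)])

    # "From" and "to" channels for each of the last two moves.
    for i in range(2):
        from_plane = empty_plane(rows, cols)
        to_plane = empty_plane(rows, cols)
        if i < len(last_moves):
            from_pos, to_pos = last_moves[i]
            if from_pos is not None:
                from_plane[from_pos[0]][from_pos[1]] = 1
            if to_pos is not None:
                to_plane[to_pos[0]][to_pos[1]] = 1
        channels.extend([from_plane, to_plane])

    return channels

state = encode_state(
    rows=3, cols=3, num_piece_types=2,
    pieces={(0, 0): 0, (1, 1): 1},
    swapped=False,
    last_moves=[((0, 0), (1, 1))],
)
print(len(state))  # 7
```

The channel count here is `num_piece_types + 1 + 4`, which depends only on properties of the game and not on the particular state, so the tensor shape can be fixed before training starts. In this sketch the swap channel is always present; in the scheme described above it is only included for games that use a swap rule.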

Representing Ludii actions as tensors

In contrast to GDL (Love et al., 2008; Emslie, 2019; Goldwaser and Thielscher, 2020), it is not straightforward – if at all possible – to automatically infer the complete action space for any arbitrary game described in Ludii’s game description language. This is because in Ludii’s game description language, the function that generates lists of legal moves is defined as a composite of many simple functions (ludemes), which may be arranged in any arbitrary tree structure. While each of these ludemes in principle has some domain for its possible inputs, and range for its possible outputs, these are not strictly defined in logic-based or other formats that permit automated inference.

Similar to its state representation, Ludii has an object-oriented move3

In this document we use the terms “move” and “action” interchangeably, to refer to complete decisions that players make. Within Ludii, these are referred to only as moves, and actions are smaller parts of moves.

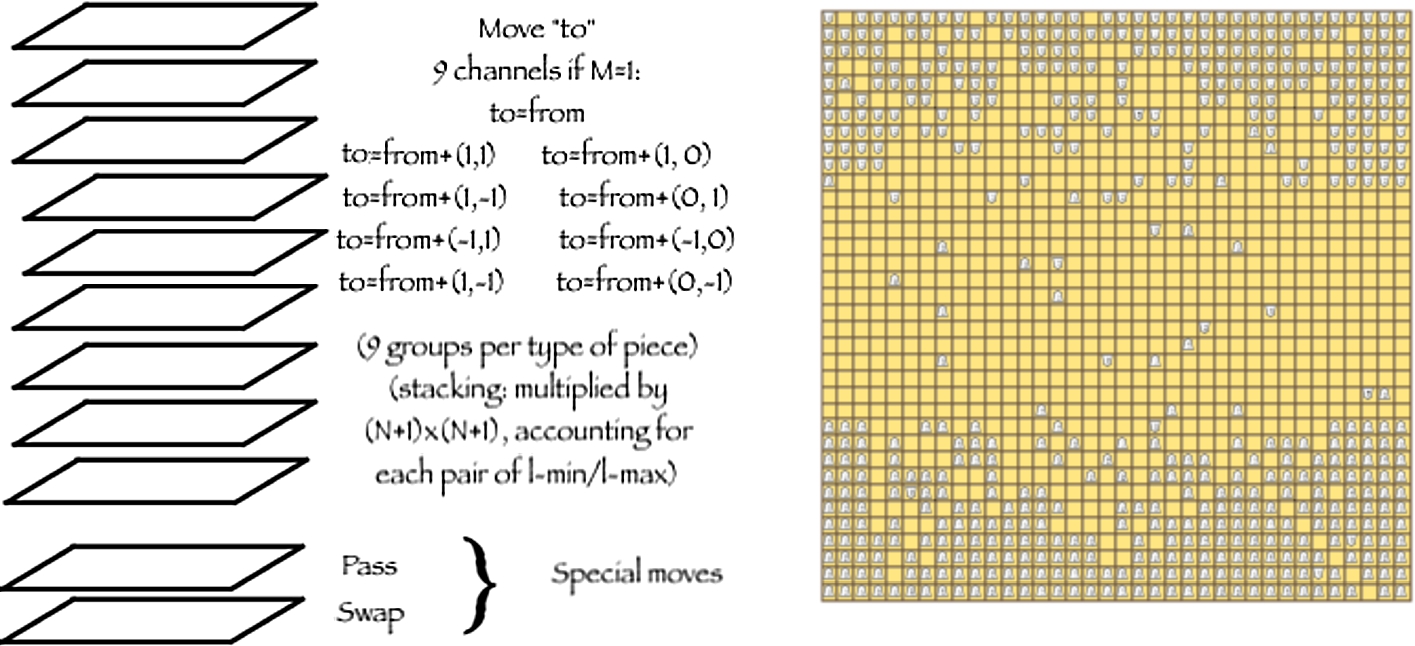

The action space is organised as a stack of 2-dimensional planes, with the spatial dimensions being identical to those of the state tensors (see Section 4.2). Every action will map to exactly one position in this space – i.e., one location in the 2-dimensional area, and one channel.

Pass and swap moves have been identified as special cases that are sufficiently common, important, and semantically different from any other kind of move that they warrant the inclusion of their own dedicated channels.

Many games only involve moves that can be identified by just a single position in the spatial dimensions; these are generally games where players place stones (Go, Hex, Havannah, etc.), but may in theory also be games like Chess if they have been defined in a way such that movements are split up into two separate decisions (picking a source and picking a destination). These games can be automatically discovered in Ludii. For these games, we only add one more channel in addition to the pass and swap move channels, to encode all other moves based on their positions in the spatial dimensions. In Ludii, this position is referred to as the “to” position.
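For games of this kind, the mapping from a move to a position in the action space can be sketched as below. This is an illustrative sketch only: the channel ordering, the choice of spatial position (0, 0) for pass and swap moves, the dictionary-based move format, and the name `move_to_index` are all assumptions, not the actual encoding used by the Ludii-Polygames bridge.

```python
# Assumed channel layout for games where a single "to" position suffices.
PASS_CHANNEL, SWAP_CHANNEL, TO_CHANNEL = 0, 1, 2

def move_to_index(move, rows, cols):
    """Map a move to (channel, row, col) and to a flat logit index.

    move: dict with key "kind" in {"pass", "swap", "place"};
          "place" moves also carry a "to" entry holding (row, col).
    """
    if move["kind"] == "pass":
        channel, (row, col) = PASS_CHANNEL, (0, 0)  # position is an assumption
    elif move["kind"] == "swap":
        channel, (row, col) = SWAP_CHANNEL, (0, 0)  # position is an assumption
    else:
        channel, (row, col) = TO_CHANNEL, move["to"]
    flat = channel * rows * cols + row * cols + col
    return (channel, row, col), flat

# An 11x11 board, as commonly used for Hex.
print(move_to_index({"kind": "place", "to": (2, 3)}, 11, 11))  # ((2, 2, 3), 267)
print(move_to_index({"kind": "swap"}, 11, 11))                 # ((1, 0, 0), 121)
```

With three channels on an 11x11 board, the policy head has 363 logits, of which 121 are used to distinguish placement moves.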

In all other games, moves may have distinct “from” and “to” positions; typical examples are standard implementations of Chess, Amazons, Shogi, etc. For moves that have an invalid “from” position, we assume that it is equal to the “to” position. For games that involve stacking, moves may additionally have

Left: representation of the action space tensor. Right: Taikyoku Shogi (804 pieces of 209 distinct types per player) leads to an unmanageable explosion in state and action spaces.

While we find this approach to be sufficient to distinguish moves from each other in many cases, there are cases where multiple distinct moves that are legal in a single game state will end up being represented by exactly the same logit. When multiple distinct moves are represented by the same logit in a DNN’s output, we say that they are aliased. DNNs cannot distinguish between aliased moves, and hence always provide the same advice (in the form of the prior probabilities
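Aliasing in a given state can be detected by grouping the legal moves by the logit they map to. The sketch below is a generic illustration: the move tuples, the `index_of` function, and the third move component (standing in for any move property that the encoding ignores) are hypothetical and do not correspond to a specific Ludii game.

```python
from collections import defaultdict

def find_aliased(legal_moves, index_of):
    """Group legal moves by logit index and keep groups with more than one move."""
    groups = defaultdict(list)
    for move in legal_moves:
        groups[index_of(move)].append(move)
    return {index: moves for index, moves in groups.items() if len(moves) > 1}

# Hypothetical moves: (from_position, to_position, extra_property).
legal_moves = [
    (12, 20, "option_x"),
    (12, 20, "option_y"),
    (5, 6, None),
]

# Hypothetical encoding that only looks at the "from" and "to" positions.
def index_of(move):
    return (move[0], move[1])

print(find_aliased(legal_moves, index_of))
# {(12, 20): [(12, 20, 'option_x'), (12, 20, 'option_y')]}
```

A check of this kind can be run over states encountered in self-play to estimate how often aliasing occurs in a given game.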

In this section we describe experiments4

The source code of Ludii is available from

We used the same training hyperparameters across all games. Every training run used 20 hours of wall time, with 8 GPUs, 80 CPU cores, and 475GB memory allocated per training job. Every training job used 1 server for model training, and 7 clients for the generation of self-play games. The MCTS agents used 400 MCTS iterations per move in self-play.

The final model checkpoint of every training run is evaluated in a set of 300 evaluation games played against a pure MCTS – a standard UCT agent (Kocsis and Szepesvári, 2006; Browne et al., 2012) without any DNNs. In evaluation games, the MCTS with a trained model used 40 iterations per move, whereas the pure MCTS used 800 iterations per move – where at the end of every iteration, the average outcome of 10 random rollouts is backed up. The final column of Table 1 reports the win percentages of the trained MCTS against the untrained MCTS. The table also provides further details on the number of trainable parameters in each of the DNNs, and for some games summarises unusual properties that these games have which we did not yet observe in much of the existing literature on learning through self-play in games.

In the majority of the evaluated games, the trained MCTS easily outperforms the untrained one, even using 20 times fewer MCTS iterations (or 200 times fewer if the number of random rollouts performed by the untrained MCTS is counted). Note that, in comparison to work that focuses on achieving superhuman playing strength (Silver et al., 2018; Cazenave et al., 2020), we focused on short training runs using fewer resources and smaller networks. Our primary aim is to demonstrate the possibility of training effectively using a single implementation without game-specific domain knowledge.
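The two ratios quoted above follow directly from the evaluation settings; the short computation below spells them out using only numbers stated in this section.

```python
trained_iterations = 40       # MCTS iterations per move, with a trained model
untrained_iterations = 800    # MCTS iterations per move, pure MCTS
rollouts_per_iteration = 10   # random rollouts averaged per pure-MCTS iteration

iteration_ratio = untrained_iterations // trained_iterations
rollout_ratio = untrained_iterations * rollouts_per_iteration // trained_iterations

print(iteration_ratio, rollout_ratio)  # 20 200
```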

Data for a variety of different games, all implemented in Ludii v1.1.6, for which we trained models in Polygames over a duration of 20 hours using 8 GPUs and 80 CPU cores per model. The second column lists some interesting properties for games that we have not yet often seen (if at all) in existing literature using AlphaZero-like training approaches. The third column lists the number of trainable parameters in the model (we used identical Polygames hyperparameters for the DNN architecture across all games, but in Polygames by default the number of channels in hidden convolutional layers scales with the number of input channels). The last column lists the win percentages of MCTS with the trained model using 40 iterations per move, against MCTS without any trained model using 800 iterations per move – where every iteration backs up the average outcome of 10 random rollouts

The two results that stand out most are for Lasca and Fanorona. The win percentage of

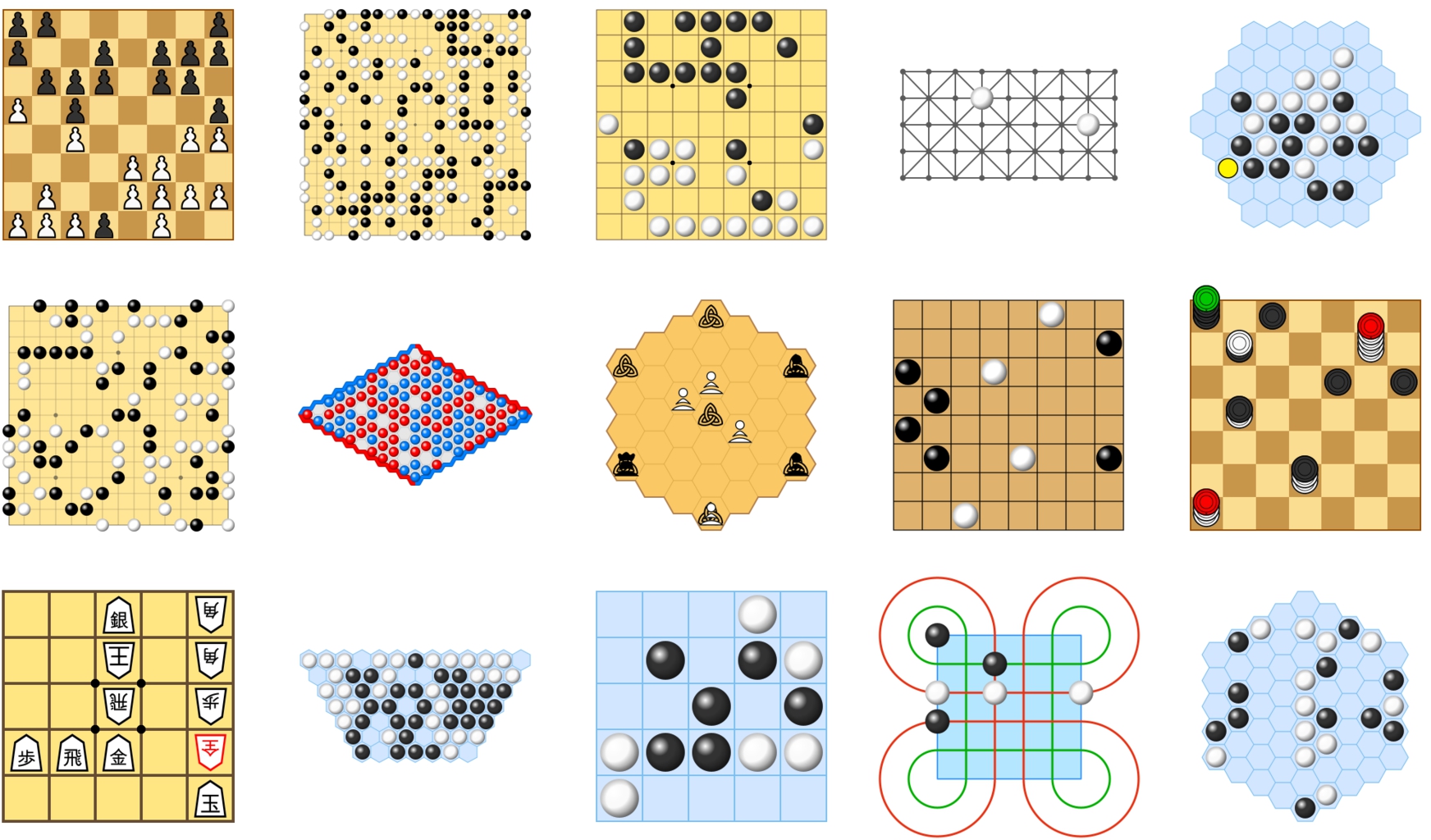

Screenshots of all the Ludii-based games included in our experiments.

Thanks to the large library of games available in Ludii (Browne et al., 2020; Piette et al., 2020), we can get a clear picture of categories of games that are open problems to various extents; some that are simply not supported yet by Polygames (Cazenave et al., 2020) and require more engineering effort, and some that appear to have been neglected across the majority of recent game AI literature. All of these types of games are supported by Ludii:

- Stochastic games: these were not included in this paper because they are temporarily unsupported by the MCTS implementation of Polygames, but were supported in earlier versions of Polygames and will be again in future versions.
- Games with more than 2 players: support for these can be added relatively easily (Petosa and Balch, 2019), but is not yet available in Polygames.
- Imperfect-information games: there has been some recent work towards AlphaZero-like training approaches that support imperfect-information games (Brown et al., 2020), but tractability is still a concern for games with little common knowledge.
- Simultaneous-move games: simultaneous-move games will at least require significant changes in the MCTS component (Browne et al., 2012) as it is typically used in AlphaZero-like training setups.
- Games with excessively large state or move tensors: games such as Taikyoku Shogi (depicted in Fig. 6), with a
- Games played on a mix of cells, edges and/or vertices of graphs: while games like Chess are only played on cells, and games like Go only on vertices, there are also games such as Contagion that are played on a mix of multiple different parts of a graph. It is not clear how to directly support these with the standard CNNs.
- Games without an explicitly defined board: games such as Andantino or Chex are not played in a limited area that is defined upfront, but in a playable area that grows dynamically as play progresses. The standard DNN architectures require these spatial dimensions to be predefined and fixed.
- Games with more than 2 spatial dimensions: games such as Spline have a third spatial dimension, which cannot be handled by the standard 2D convolutional layers. While a straightforward extension to 3D convolutional layers may be sufficient, we are not aware of any existing research towards this for games, and also imagine that a third spatial dimension can rapidly lead to tensors becoming excessively large again for many non-trivial games.

Conclusions

We have described our approach for constructing tensor representations of states and moves for any game implemented in the Ludii general game system, and used this to implement a bridge between Ludii and the Polygames framework. This allows for the state-of-the-art tree search and self-play training techniques implemented in Polygames to be used for training game-playing models in any game described in Ludii’s general game description language. Whereas AlphaZero-like approaches typically require game-specific domain knowledge to define a Deep Neural Network’s architecture and its input and output tensors, we only require such domain knowledge at the level of the general game system as a whole, and can now leverage Ludii’s wide library of games – which can quickly grow thanks to its user-friendly game description language – to facilitate more general game AI research with minimal requirements for game-specific engineering efforts. We have identified a series of “open problems” in the form of classes of games that are already supported by Ludii, but not yet by Polygames. For some of these there is a clear path that merely requires additional engineering effort, but others are likely to require a more significant amount of extra research.

Acknowledgements

This work was partially supported by the European Research Council as part of the Digital Ludeme Project (ERC Consolidator Grant #771292), led by Cameron Browne at Maastricht University’s Department of Data Science and Knowledge Engineering. We thank Éric Piette for his image editing skills, and Matthew Stephenson for his mastery of the English language. We thank the anonymous reviewers for their feedback on this paper.