Abstract

3D shape learning is an important research topic in computer vision, and datasets play a critical role in it. However, most existing 3D datasets use voxels, point clouds, meshes, or B-reps, which are neither parametric nor feature-based. Thus, they cannot support the generation of real-world engineering computer-aided design (CAD) models with complicated shape features. Furthermore, they capture only the resulting 3D geometry, without the human-computer interaction (HCI) history. This work is the first to provide a full parametric and feature-based CAD dataset with a selection mechanism to support HCI in 3D learning. First, unlike existing datasets, which are mainly composed of simple features (typically sketch and extrude), we devise complicated engineering features, such as fillet, chamfer, mirror, pocket, groove, and revolve. Second, instead of a monotonous combination of features, we introduce a selection mechanism that mimics how a human focuses on and selects a particular topological entity. The proposed mechanism establishes the relationships among complicated engineering features, which fully express the design intention and design knowledge of human CAD engineers. Therefore, it can process advanced 3D features for real-world engineering shapes. The experiments show that the proposed dataset outperforms existing CAD datasets in both reconstruction and generation tasks. In quantitative experiments, the proposed dataset yields better prediction accuracy than other parametric datasets. Furthermore, CAD models generated from the proposed dataset comply with the semantics of human CAD engineers and can be edited and redesigned in mainstream industrial CAD software.

Keywords

Introduction

Computer-aided design (CAD) plays an important role in modern engineering and manufacturing [1, 2]. Parametric CAD models are particularly important in the design process. Design semantics and human-computer interaction (HCI) represent the design intent and design knowledge of human CAD engineers. Adding HCI metadata to CAD files creates more possibilities for customized manufacturing [3, 4]. However, existing 3D geometric data do not possess these characteristics.

With the rapid development of deep learning in computer science [5, 6, 7, 8, 9, 10, 11, 12], learning 3D shapes has become an important research area in which 3D datasets play a critical role [13, 14, 15, 16]. Typical 3D datasets, such as voxel-based, point cloud-based, and mesh-based datasets, are commonly used in classification, segmentation, and retrieval tasks [17, 18, 19, 20, 21, 22]. However, these datasets contain only the geometric results of 3D shapes, without HCI processes or parametric features.

Some recent works have begun to treat the CAD modeling process as a sequence of CAD commands, where the CAD datasets typically include simple features and monotonic combinations of them, such as sketch-extrude, to learn 3D modeling [23, 24]. Unfortunately, these works still ignore the HCI processing of modern CAD systems, which inherently adopt a human-centered computing architecture and a parametric feature-based history to express the design intention and design knowledge of human CAD engineers. Thus, the above datasets and learning approaches are still far from real-world engineering applications.

To this end, this paper is the first to provide a full parametric and feature-based CAD dataset (WHUCAD¹ …

¹ We will open-source the entire WHUCAD dataset and code at …

The main contributions of this paper are as follows:

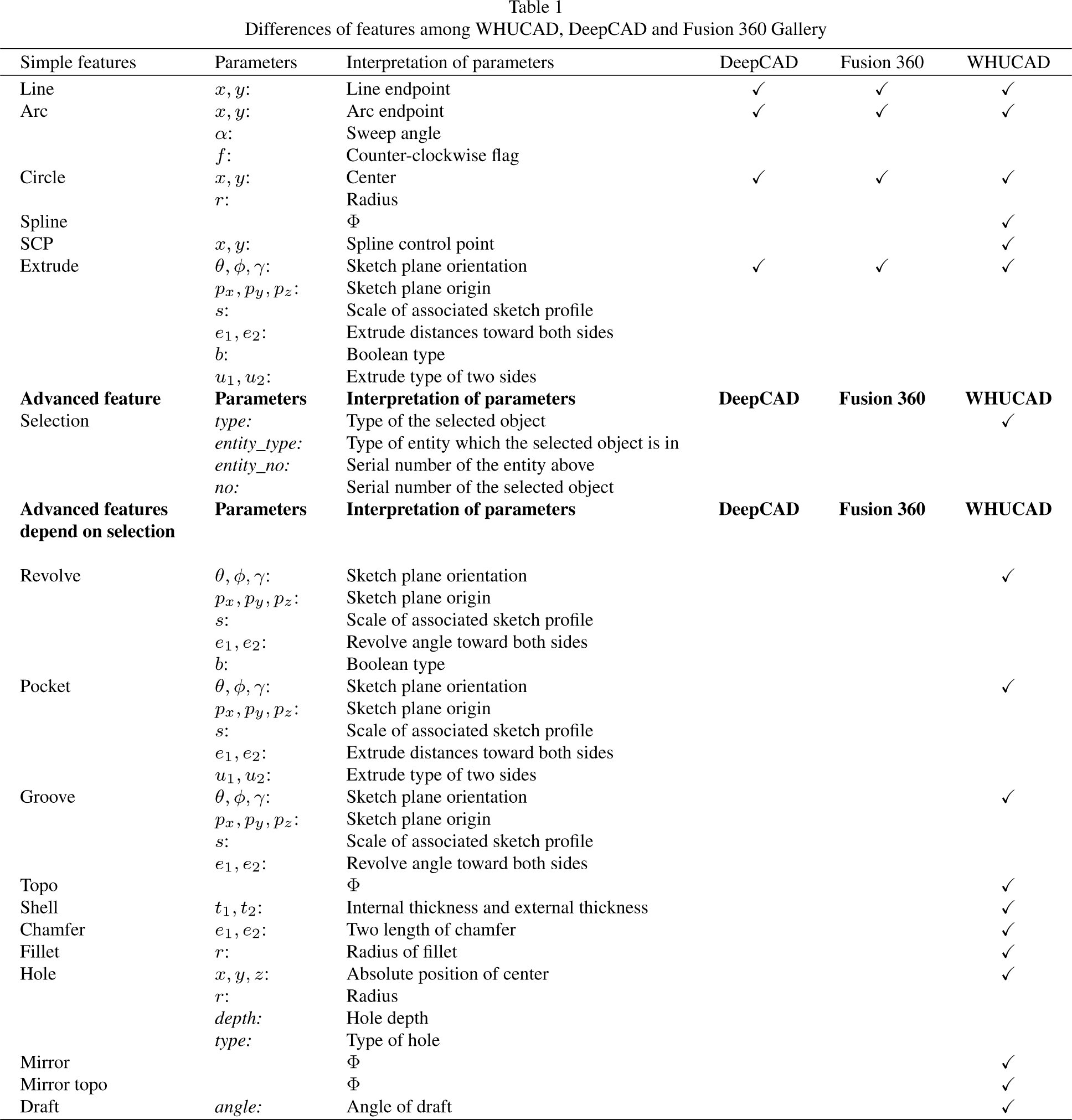

First, unlike the previous DeepCAD dataset, in which the data size is large but the feature types are simple, WHUCAD includes industrial-level features such as extrude, fillet, chamfer, revolve, groove, pocket, shell, mirror, draft, and hole. There are 4 times more feature types in the WHUCAD dataset than in the DeepCAD dataset and the Fusion 360 Gallery dataset, as shown in Table 1. Second, whereas the feature histories in existing datasets are monotonic combinations of simple features, WHUCAD devises a learning-based HCI approach, i.e., a selection mechanism, which enables a deep learning network to focus on a particular topological entity. The proposed HCI mechanism learns how a human engineer establishes the relationships between complicated engineering features and topological entities, and can therefore fully express design intentions and design knowledge during 3D learning, as a human-like AI CAD engineer would. Third, CAD models generated by the proposed network trained on WHUCAD can be easily edited and redesigned by human engineers in mainstream industrial CAD software. Therefore, our dataset and findings are nontrivial and can serve as a cornerstone for various real-world engineering tasks involving parametric and historical feature-based CAD.

This paper is organized as follows. Section 2 introduces related works on 3D datasets. Section 3 describes WHUCAD in detail. Section 4 shows the results of the experiments and comparisons. Section 7 concludes the paper, including suggestions for future work.

Currently, artificial intelligence (AI) and machine learning have a large impact on computer science and HCI [25, 26]. This paper presents a full parametric and feature-based CAD dataset (WHUCAD) to support HCI in 3D learning. Related work is reviewed in the following subsections.

B-rep-based CAD dataset

B-rep (boundary representation) is a mathematical representation of 3D shape. B-rep-based CAD models are geometric models (mathematically defined geometry and topology) that can be further discretized into point clouds and meshes.

The

Many other 3D datasets are similar to ShapeNet [37, 38, 39]. For example,

For another example, the

The above datasets contain the geometric results of CAD models rather than parametric CAD models.

Modern CAD systems adopt human-centered computing architecture and parametric feature-based history to express the design intention and design knowledge of human CAD engineers [54].

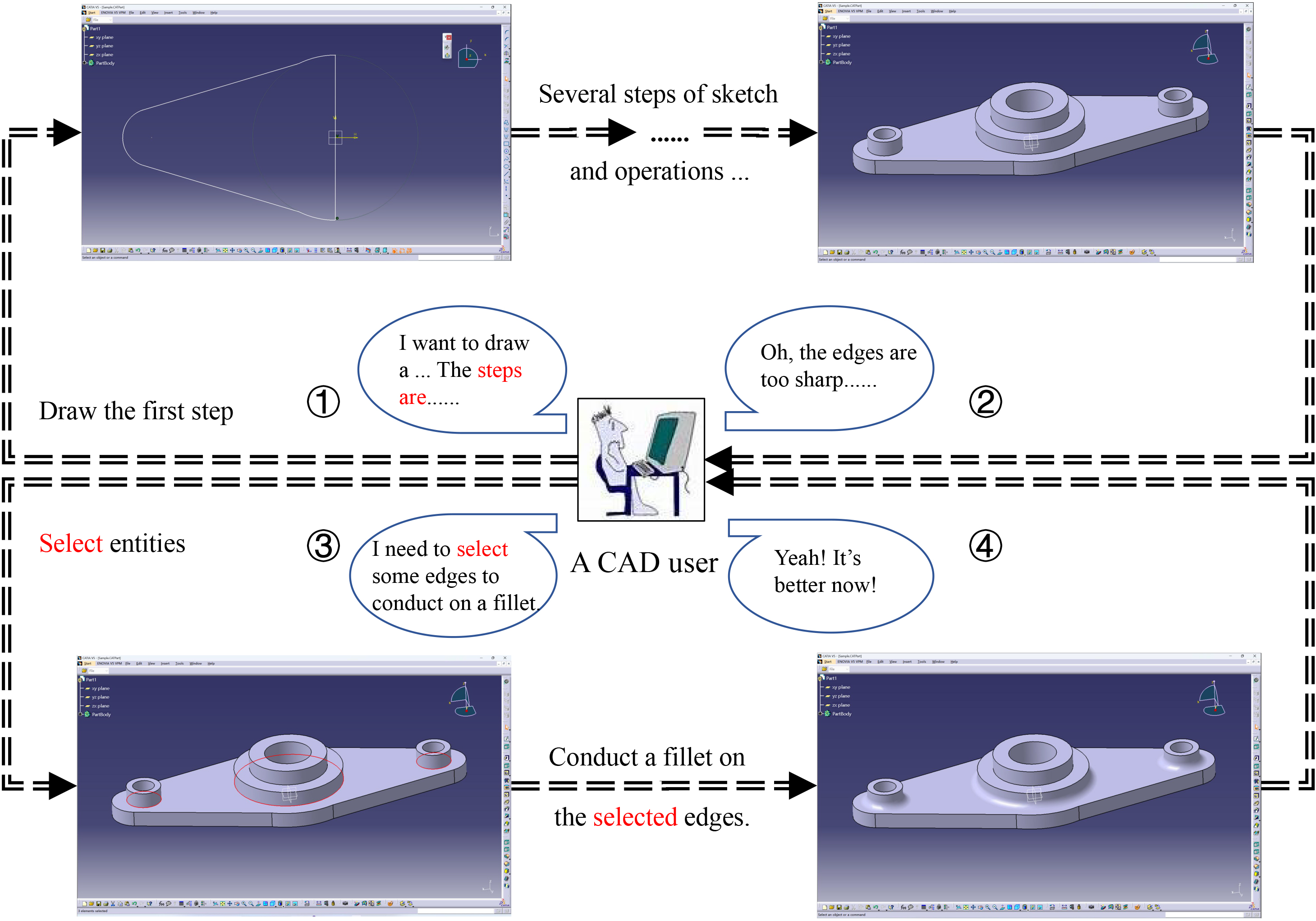

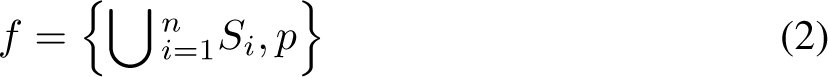

During human-computer interaction, a CAD user builds a CAD model. First, the user conceptualizes the model and outlines the steps required to create it. After building a generally complete model, the user needs to

Overall, modern CAD systems include two types of model representations. The first one is the B-rep, which is the geometric model and can be further discretized into a point cloud and mesh. The second is the parametric and historical feature-based model (modeling history), which is a set of modeling operations (features) that are issued by a human engineer during the modeling process. The parametric models fully represent the design intention and design knowledge of a human engineer.

The B-rep is the final result (similar to a snapshot) of the parametric model. Thus, one parametric CAD model can derive a family of different B-rep models with different parametric values and sizes [55, 56].
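The snapshot relationship between a parametric model and its B-reps can be illustrated with a toy replay loop. This is a hypothetical sketch: `Feature`, `evaluate`, `family`, and the two feature kinds are illustrative names, and the "solid" is reduced to a few dimensions rather than a real B-rep. Replaying the same history with different parameter values yields a family of resulting shapes.

```python
from dataclasses import dataclass, field


@dataclass
class Feature:
    """One step of a toy modeling history (hypothetical, not WHUCAD's format)."""
    kind: str
    params: dict = field(default_factory=dict)


def evaluate(history: list) -> dict:
    """Replay the history and return a summary of the resulting solid."""
    solid = {}
    for feature in history:
        if feature.kind == "sketch_rect":
            solid["width"] = feature.params["w"]
            solid["depth"] = feature.params["d"]
        elif feature.kind == "extrude":
            solid["height"] = feature.params["h"]
        else:
            raise ValueError(f"unsupported feature: {feature.kind}")
    solid["volume"] = solid["width"] * solid["depth"] * solid["height"]
    return solid


def family(heights: list) -> list:
    """One parametric model, many snapshots: only the extrusion height varies."""
    return [
        evaluate([Feature("sketch_rect", {"w": 4, "d": 2}), Feature("extrude", {"h": h})])
        for h in heights
    ]


for snapshot in family([1, 2, 3]):
    print(snapshot)
```

The history is the source of truth; each printed dictionary plays the role of one derived B-rep.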

Therefore, for parametric and historical feature-based CAD systems, the parametric model is the source model, which is created by a human engineer with many analyses and considerable labor, while the B-rep is the resulting model (derived from the parametric model) [57]. When a parametric model is converted into a B-rep model, the

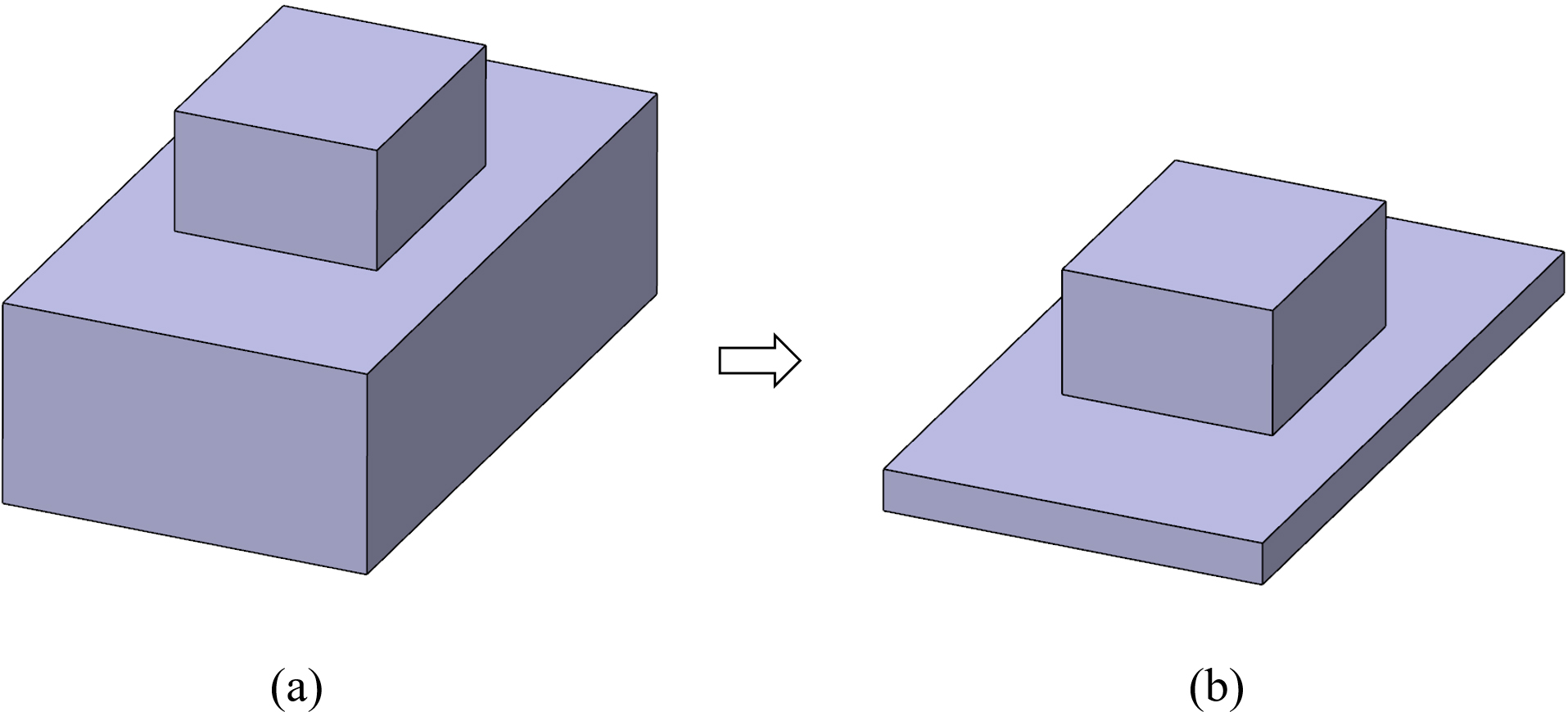

More importantly, as shown in Fig. 1, the parametric and feature-based history contains the HCI process (the selection mechanism). In this way, human engineers can express design intentions and design knowledge with advanced and complicated features.

Some emerging works such as the

The

There are three versions of the

In conclusion, DeepCAD and Fusion 360 Gallery bypass human-computer interactions and therefore do not support advanced features.

To the best of our knowledge, WHUCAD is the first dataset to support human-computer interactions in 3D learning at a high level, similar to mainstream industrial CAD systems; therefore, WHUCAD can efficiently reconstruct and generate CAD models with advanced features for real-world engineering tasks.

Differences of features among WHUCAD, DeepCAD and Fusion 360 Gallery


Unlike traditional 3D datasets consisting of geometric data, the WHUCAD dataset includes complicated advanced features. More importantly, to support HCI in 3D learning, we devise a selection mechanism for the WHUCAD dataset.

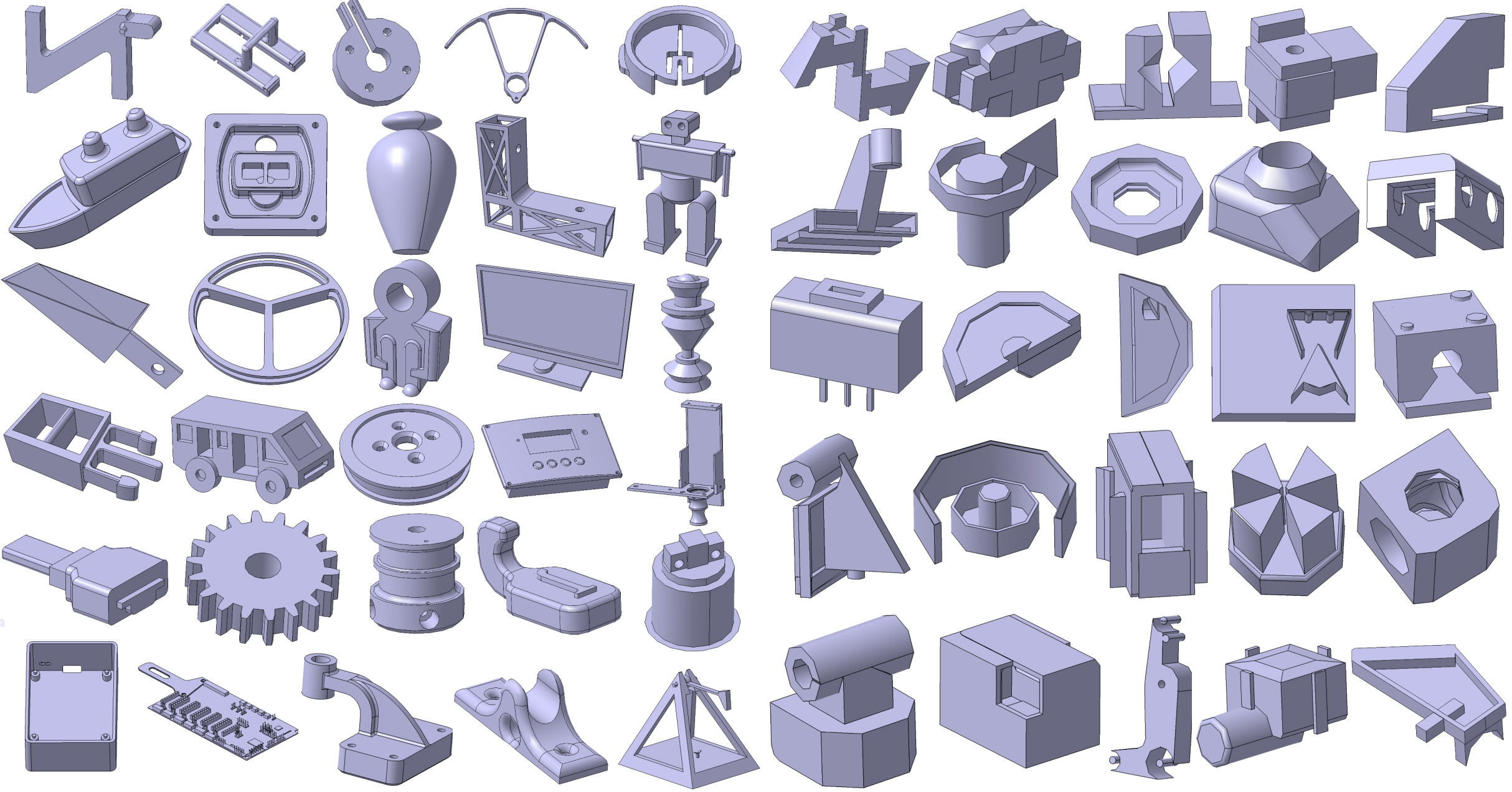

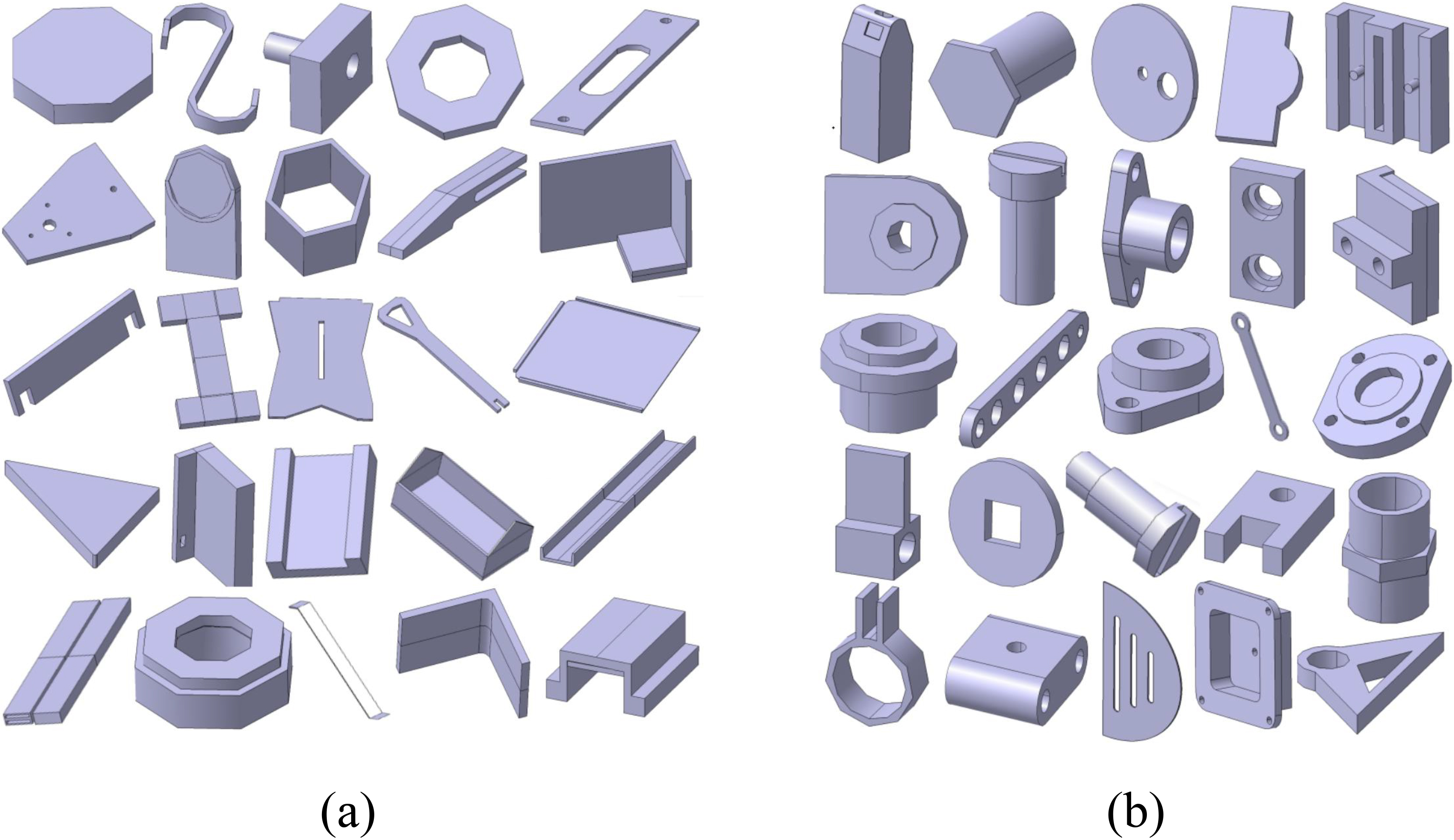

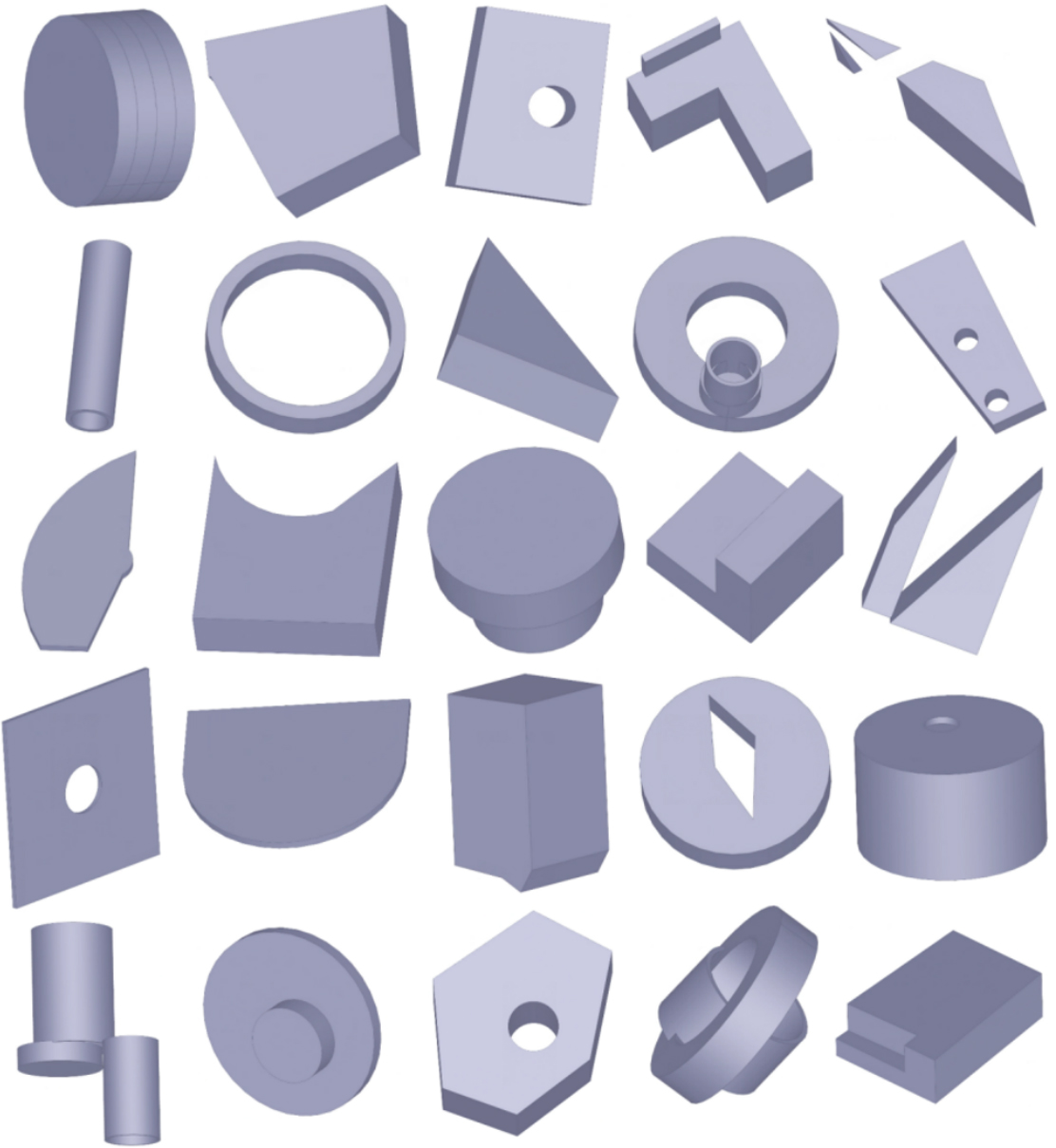

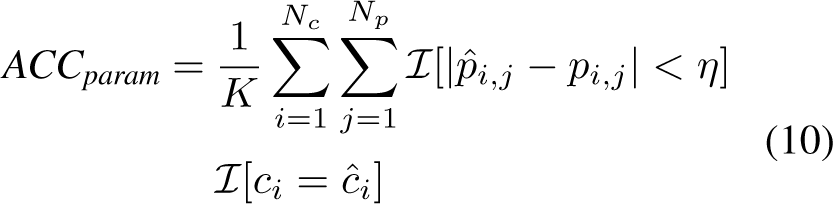

Figure 2 shows examples of final models in WHUCAD, which are qualitatively richer than those of DeepCAD (Fig. 3(a)) and Fusion 360 Gallery (Fig. 3(b)).

A gallery of WHUCAD dataset.

A gallery of (a) DeepCAD and (b) Fusion 360 Gallery.

As shown in Figs 2 and 3, the CAD models in the WHUCAD dataset are noticeably more complicated and have more parametric and feature-based characteristics than those in the DeepCAD and Fusion 360 Gallery datasets. Both the DeepCAD and Fusion 360 Gallery datasets consist of simple features such as sketch and extrude. As a result, they cannot support the reconstruction and generation of CAD models that contain advanced features, whereas the WHUCAD dataset supports the reconstruction and generation of complicated 3D shapes with advanced feature operations such as fillet, chamfer, revolve, pocket, groove, shell, hole, mirror, and draft, and especially with the HCI mechanism (selection feature).

Therefore, the most important value of the WHUCAD dataset lies not in its data size but in its HCI mechanism and feature types, of which there are 4 times more than in the DeepCAD and Fusion 360 Gallery datasets (further discussed in Section 3.3).

In modern industrial CAD/CAE/CAM environments, major 3D shape models can be categorized into discrete representations, B-rep, and parametric representations [68, 69, 70]. Typical discrete representations are meshes, point clouds, and voxels, which can be converted from CAD models for visualization, CAE analysis, and CAM manufacturing. B-rep represents a solid object by its boundary elements, such as faces, edges, and vertices. A parametric model is a set of modeling functions (features) that a human engineer issues during a feature-based modeling history. The

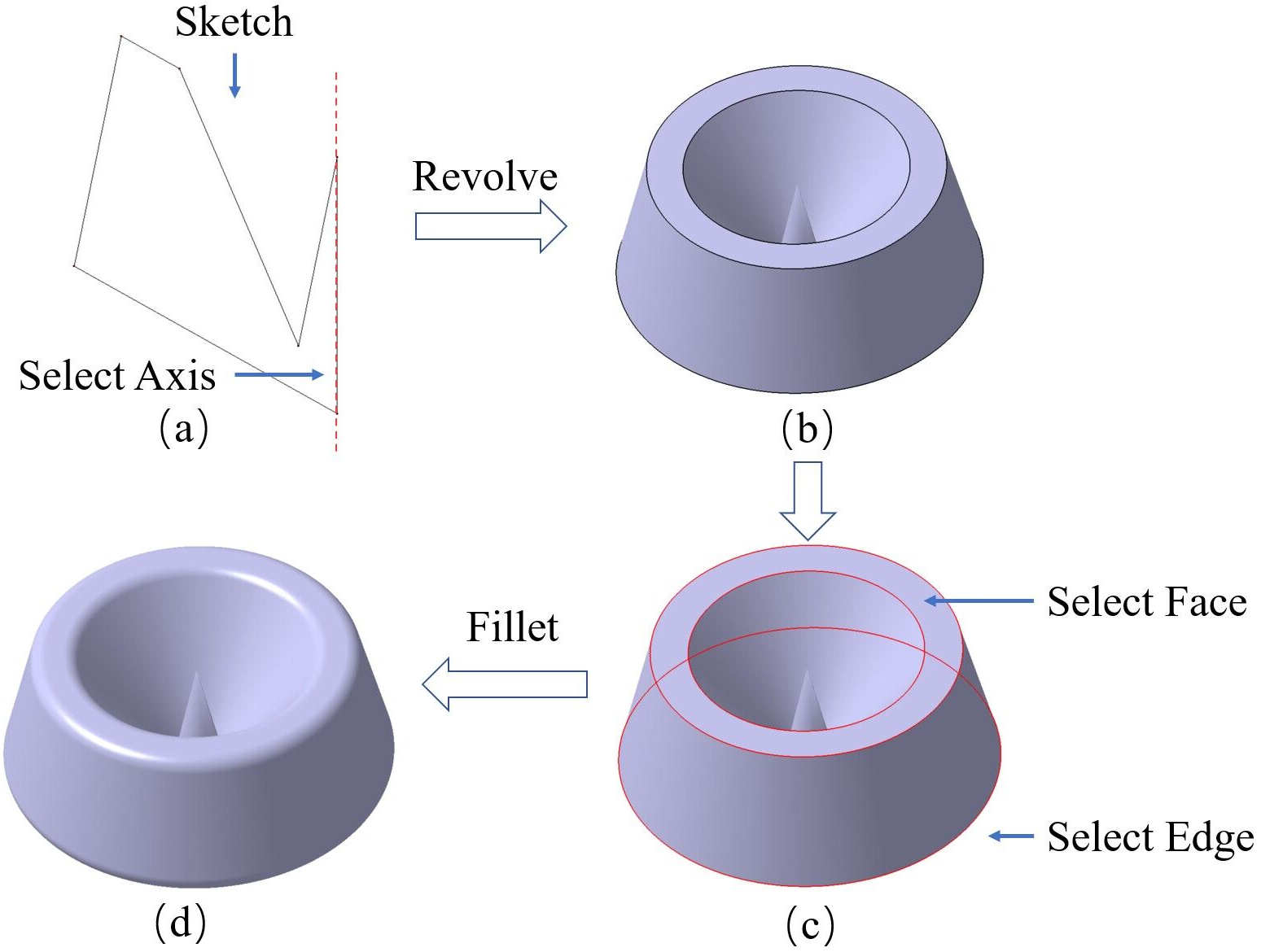

The process of constructing a parametric model is shown in Fig. 4. The fundamental definitions of parametric and historical feature-based CAD models are the following [59, 71].

where

where

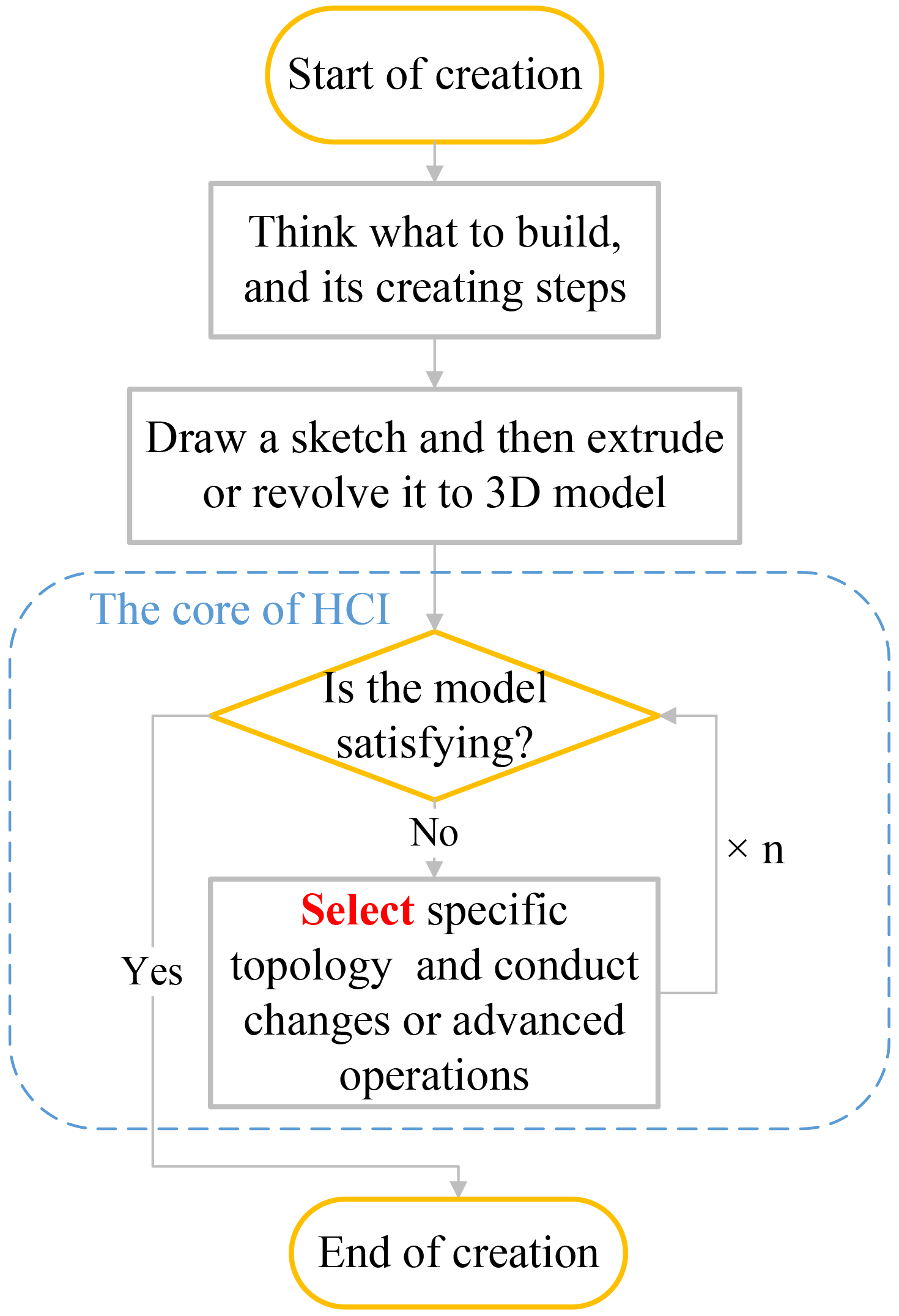

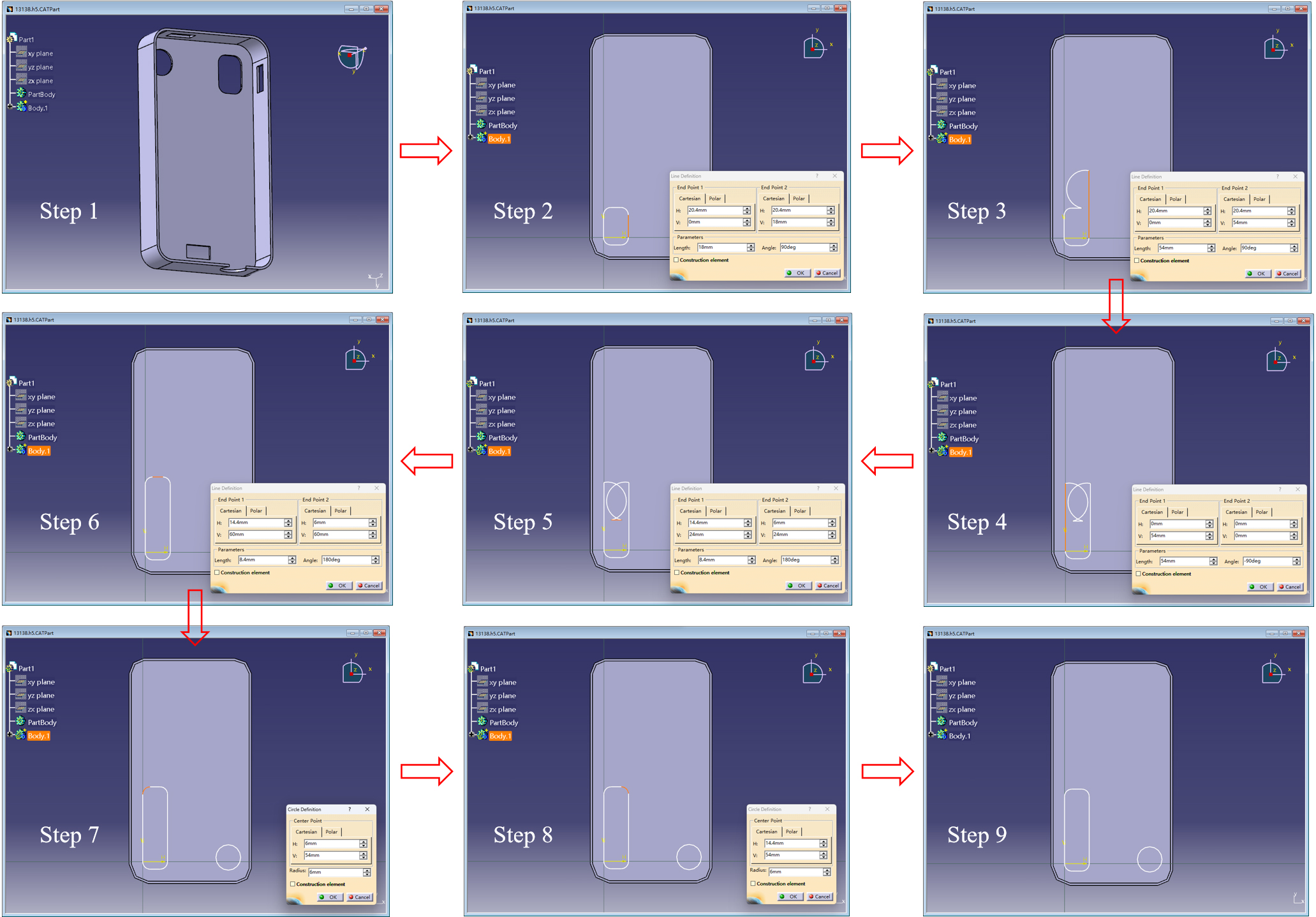

When an engineer observes a 3D shape, he or she can build a parametric CAD model step by step, as shown in Fig. 5.

Then, the … For advanced features, … After selecting the acute edges that he or she observes and focuses on, the human engineer issues a … Finally, a smooth 3D shape with rounded edges is generated for real-world engineering applications, that is, a …

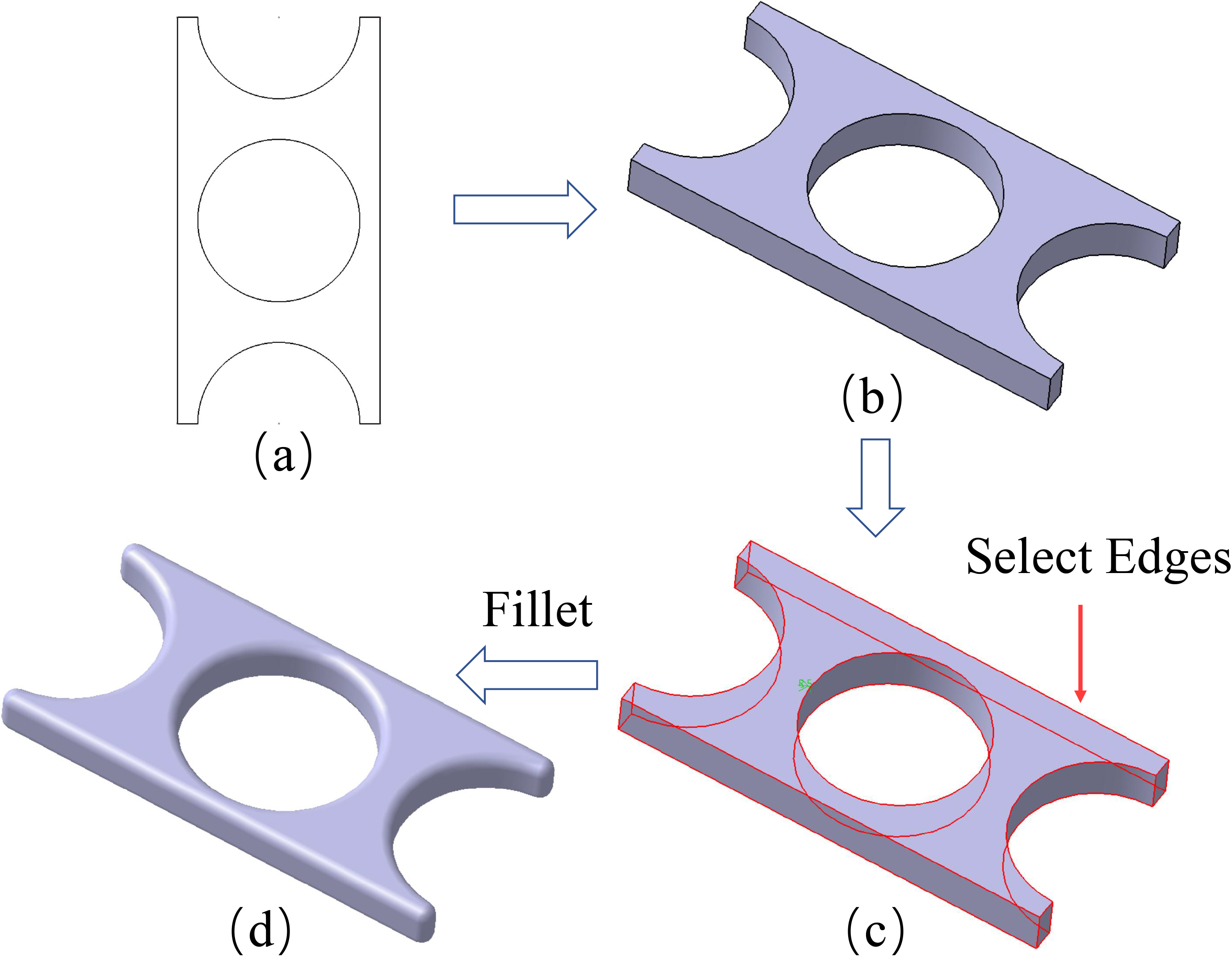

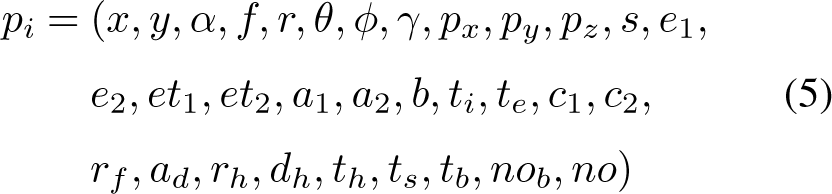

Steps 3 and 4 can be iterated for many other complicated features, such as selection-chamfer, selection-revolve, selection-shell, and selection-mirror. A typical revolve feature is shown in Fig. 6, and a typical mirror feature is shown in Fig. 7.

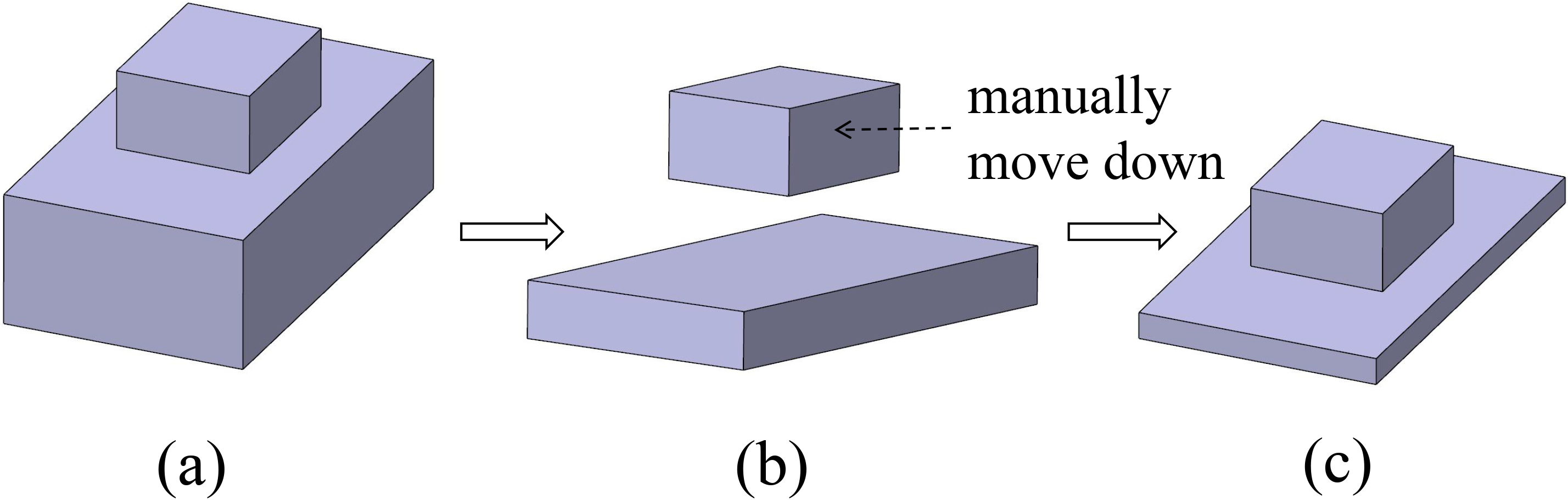

In WHUCAD, 3D CAD data are described as a sequence of features. As a full parametric and feature-based CAD dataset to support HCI, the representation of the feature parameters of a model in the WHUCAD dataset is as follows:

where:

and

The process of building a parametric CAD model.

An example of parametric and feature-based modeling.

An example of the revolve feature.

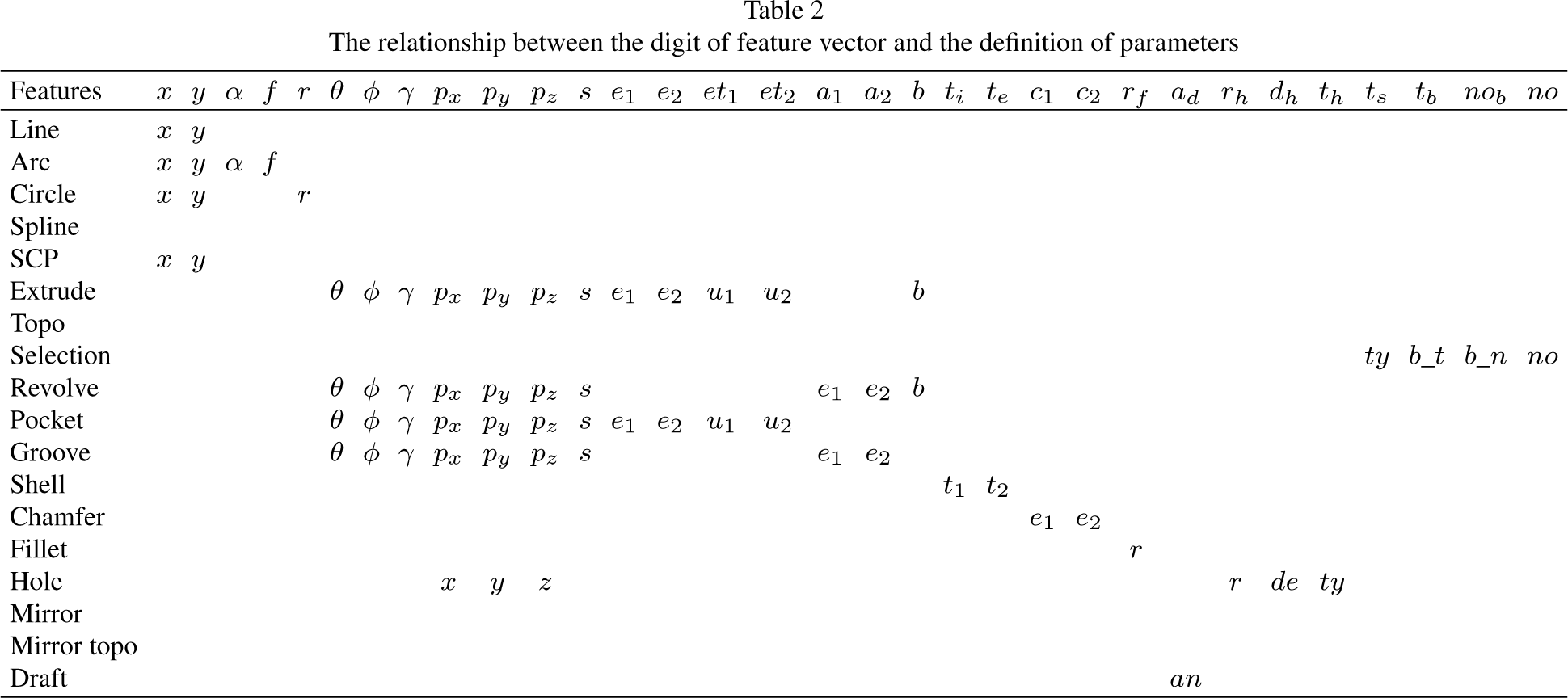

The relationship between the digits of the feature vector and the definitions of parameters

The definition of

which describes the parametric features of the particular

The correspondence between the feature vector and the feature definitions is shown in Table 2. When assigning bits (digits of the feature vector) to the parameters of different features, we avoid reusing bits as much as possible. However, parameters with the same meaning, such as coordinates or angles, may share a small number of bits. In addition, because of the special role of the selection feature, we allocate 4 exclusive bits to it.
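To make the bit-allocation policy concrete, here is a minimal sketch of a fixed-length feature vector. The command list, slot indices, and padding value `-1` are hypothetical stand-ins, not the actual layout of Table 2: angle and radius slots are shared by features whose parameters have the same meaning, while the four selection parameters get exclusive slots.

```python
# Hypothetical layout; the real digit assignment is given in Table 2.
COMMANDS = ["line", "arc", "circle", "extrude", "revolve", "fillet", "chamfer", "select"]
PAD = -1  # marks slots that the current command does not use
LAYOUT = {
    "line": {"x": 1, "y": 2},
    "arc": {"x": 1, "y": 2, "angle": 3},
    "circle": {"x": 1, "y": 2, "radius": 4},
    "extrude": {"extent": 5, "extent_opposite": 6},
    "revolve": {"angle": 3},    # reuses the angle slot (same meaning as in arc)
    "fillet": {"radius": 4},    # reuses the radius slot (same meaning as in circle)
    "chamfer": {"distance": 7},
    "select": {"object_type": 8, "entity_type": 9, "entity_index": 10, "object_index": 11},
}
VEC_LEN = 1 + max(i for slots in LAYOUT.values() for i in slots.values())


def encode(command: str, **params: int) -> list:
    """Pack one feature operation into a row of the feature vector."""
    row = [PAD] * VEC_LEN
    row[0] = COMMANDS.index(command)  # slot 0 holds the command type
    for name, value in params.items():
        row[LAYOUT[command][name]] = value
    return row


def decode(row: list) -> tuple:
    """Recover (command, parameters) from a row."""
    command = COMMANDS[row[0]]
    return command, {name: row[i] for name, i in LAYOUT[command].items()}


def selection_slots_are_exclusive() -> bool:
    """The four selection slots must not be shared with any other feature."""
    selection = set(LAYOUT["select"].values())
    others = {i for cmd, slots in LAYOUT.items() if cmd != "select" for i in slots.values()}
    return selection.isdisjoint(others)


row = encode("extrude", extent=10, extent_opposite=0)
print(row)          # [3, -1, -1, -1, -1, 10, 0, -1, -1, -1, -1, -1]
print(decode(row))  # ('extrude', {'extent': 10, 'extent_opposite': 0})
```

Sharing a slot only between parameters of the same meaning keeps the vector short without making a single digit mean two unrelated things.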

Table 1 describes the simple features, the advanced features (the selection feature and the advanced features that depend on selection), and their corresponding parameters established by the WHUCAD dataset. There are 4 times more feature types in the WHUCAD dataset than in the DeepCAD dataset and the Fusion 360 Gallery dataset. The feature operations and parameters of WHUCAD are as complicated as those in industrial CAD systems. Thus, the most important value of the WHUCAD dataset is not its data size but its rich variety of feature types for supporting HCI.

Furthermore, the proposed WHUCAD dataset is scalable and can be extended as mainstream CAD systems continue to develop.

Selection feature to learn and imitate HCI process under deep learning network

The WHUCAD dataset contains a selection feature that can learn and imitate the HCI process to focus on and select a particular topological entity for the next feature operation. In this way, the design intention and design knowledge of a CAD engineer can be fully learned in a deep learning network.

An example of the mirror feature.

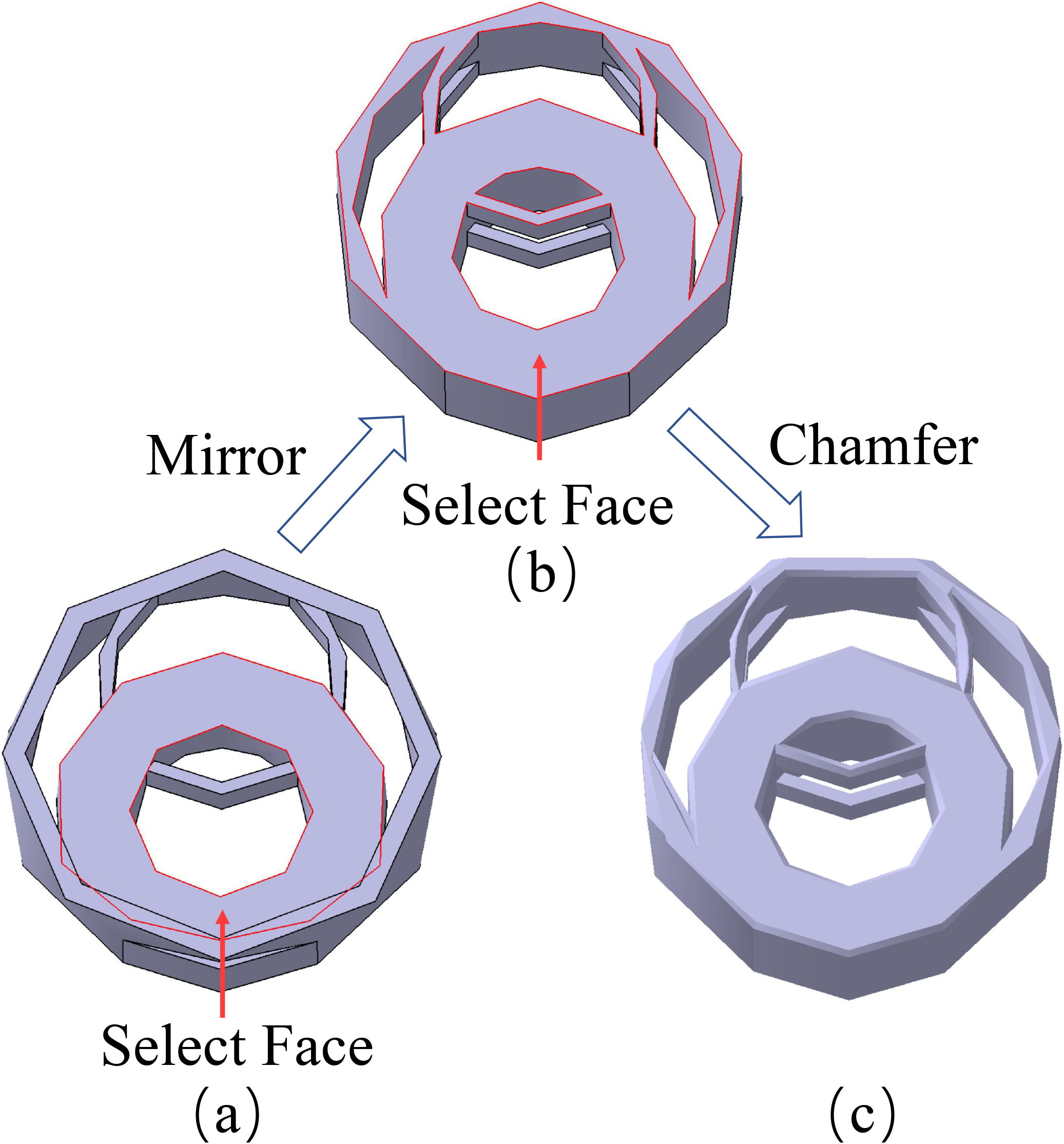

The selection mechanism is important because it establishes a relationship between two topological elements. Existing learning-based 3D modeling tasks, such as reconstruction and generation, can only reach the solid modeling stage, not the parametric modeling stage. For example, as shown in Fig. 8, a small cuboid is created at the center of the top surface of a large cuboid. Because DeepCAD lacks a selection mechanism, once the extrusion parameter of the large cuboid is decreased, the two related entities separate. The CAD designer must then manually move the small cuboid downward and reposition it on the top surface of the large cuboid. In a true parametric modeling process, however, the small cuboid should move along with the top surface as the extrusion parameter of the large cuboid decreases. WHUCAD resolves this situation, as shown in Fig. 9: owing to the naming mechanism, the top cuboid and the bottom cuboid are truly linked through the parametric modeling process.

When modifying parameters in DeepCAD data, because the two extruded sketches record absolute coordinates, reducing one of the extrusion parameters can cause the two bodies to separate.

In WHUCAD, the sketch of the second extrusion is created by selecting the plane of the first extrusion. This selection operation associates the coordinates of the second sketch with those of the previous entity. Therefore, reducing the parameter of the previous extrusion does not separate the two entities. This is what true parametric modeling should achieve, and it aligns with the design intent in most scenarios.
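The difference between Figs. 8 and 9 can be reproduced with a one-dimensional toy model. In this hypothetical sketch (the class and function names are illustrative), the DeepCAD-style build stores the sketch plane of the small cuboid as an absolute height, whereas the selection-style build derives it from the top face of the large cuboid; only the latter keeps the two bodies in contact when the first extrusion is reduced.

```python
from dataclasses import dataclass


@dataclass
class Cuboid:
    base_z: float   # height of the sketch plane the cuboid is extruded from
    height: float   # extrusion parameter

    @property
    def top_z(self) -> float:
        return self.base_z + self.height


def build_absolute(large_height: float) -> tuple:
    """DeepCAD-style: the small cuboid's sketch plane is an absolute value
    recorded when the large cuboid was 10 units tall."""
    large = Cuboid(base_z=0.0, height=large_height)
    small = Cuboid(base_z=10.0, height=2.0)
    return large, small


def build_with_selection(large_height: float) -> tuple:
    """Selection-style: the small cuboid is sketched on the selected top face."""
    large = Cuboid(base_z=0.0, height=large_height)
    small = Cuboid(base_z=large.top_z, height=2.0)
    return large, small


def gap(large: Cuboid, small: Cuboid) -> float:
    """Vertical distance between the two bodies (0 means they touch)."""
    return small.base_z - large.top_z


print(gap(*build_absolute(6.0)))        # 4.0 -> the bodies separate
print(gap(*build_with_selection(6.0)))  # 0.0 -> the small cuboid follows the face
```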

The proposed selection and name mechanism can be fed into a deep learning network to learn how a real CAD engineer selects an entity in CAD software. The selection feature and the name mechanism are not available in any other existing 3D dataset; they are available only in WHUCAD.

Typical examples of selection and the name mechanism in parametric and feature-based modeling history are illustrated in the following.

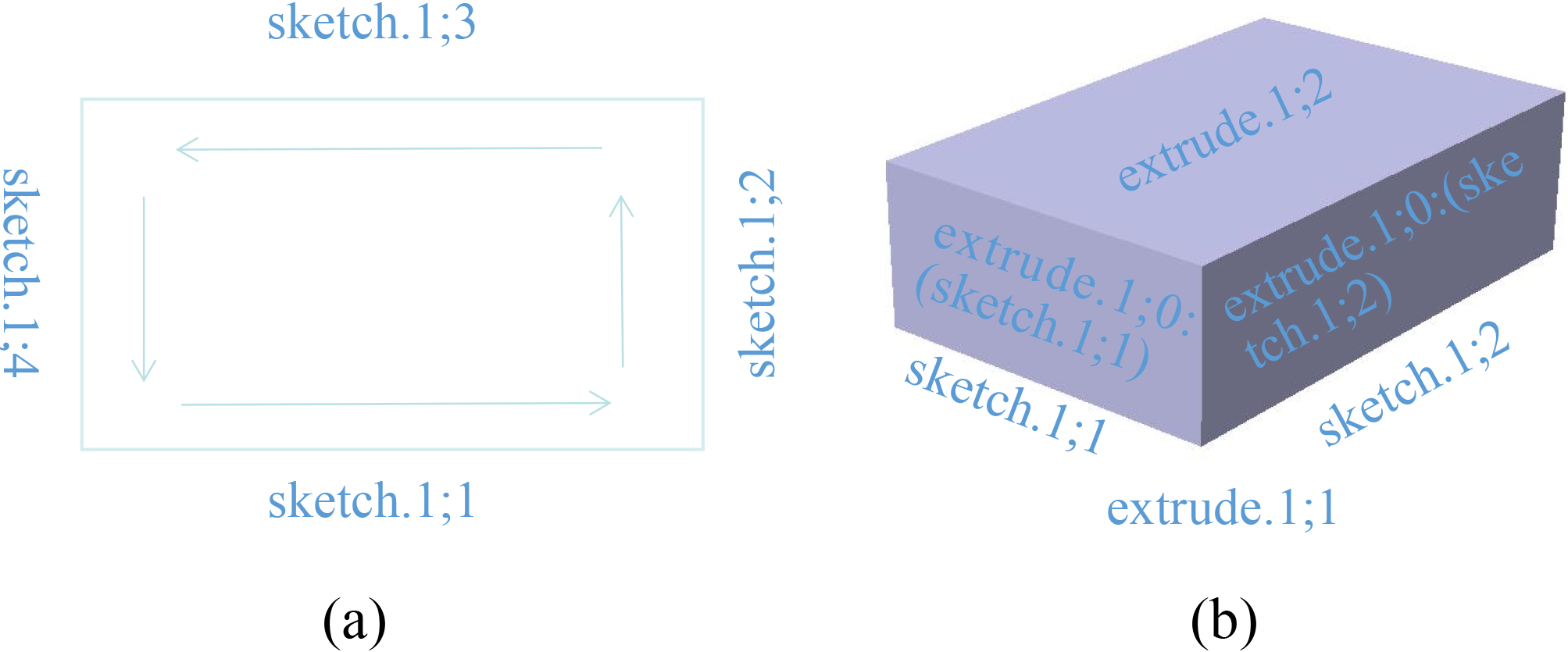

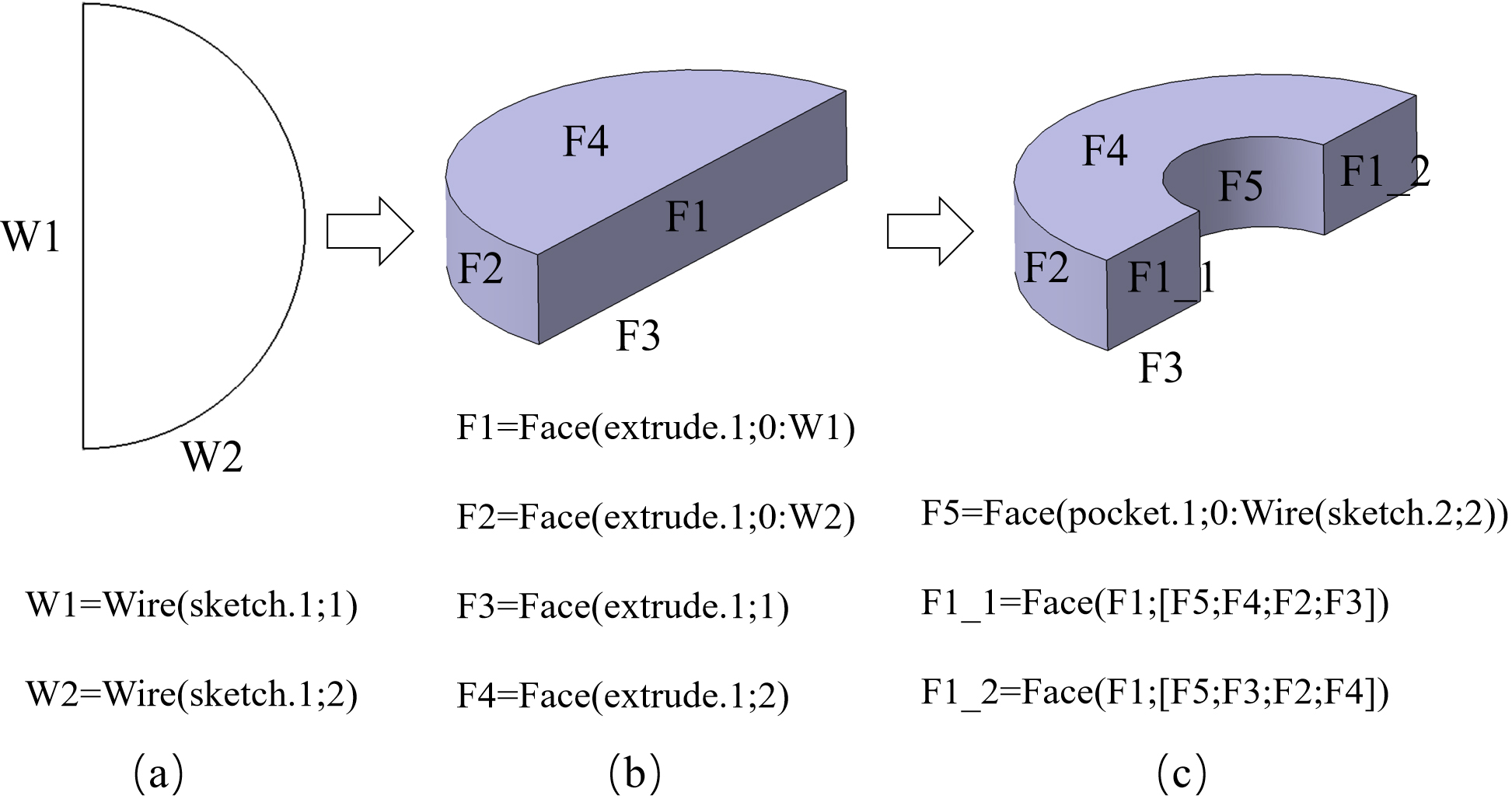

(1) How the selection and name mechanism work when extruding a sketch into a 3D model

As shown in Fig. 10(a), regardless of how a rectangle is sketched, we name every edge in a counterclockwise direction.
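One way to make edge names independent of how a closed profile was drawn is to normalize the vertex order first. The text fixes the direction (counterclockwise) but not which edge receives number 1, so the start rule below (lowest, then leftmost, vertex) is a hypothetical choice, as is the function name `name_profile_edges`. Orientation is detected with the shoelace formula.

```python
from typing import Dict, Sequence, Tuple

Point = Tuple[float, float]


def signed_area(pts: Sequence[Point]) -> float:
    """Shoelace formula: positive for counterclockwise vertex order."""
    n = len(pts)
    return 0.5 * sum(
        pts[i][0] * pts[(i + 1) % n][1] - pts[(i + 1) % n][0] * pts[i][1]
        for i in range(n)
    )


def name_profile_edges(pts: Sequence[Point], sketch_index: int = 1) -> Dict[str, Tuple[Point, Point]]:
    """Name the edges of a closed profile 'sketch.<i>;<k>' in counterclockwise order."""
    pts = list(pts)
    if signed_area(pts) < 0:
        pts.reverse()  # clockwise input: flip to counterclockwise
    # Hypothetical canonical start: the lowest vertex, ties broken by the leftmost.
    start = min(range(len(pts)), key=lambda i: (pts[i][1], pts[i][0]))
    pts = pts[start:] + pts[:start]
    edges = zip(pts, pts[1:] + pts[:1])
    return {f"sketch.{sketch_index};{k}": edge for k, edge in enumerate(edges, start=1)}


ccw = [(0, 0), (4, 0), (4, 2), (0, 2)]
cw = list(reversed(ccw))
print(name_profile_edges(cw)["sketch.1;1"])  # ((0, 0), (4, 0))
```

Whichever vertex the rectangle was started from, and in whichever direction it was drawn, the same geometric edge receives the same name.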

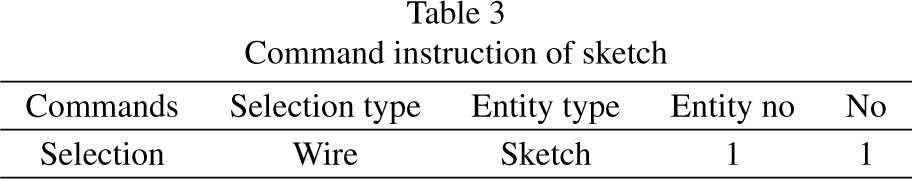

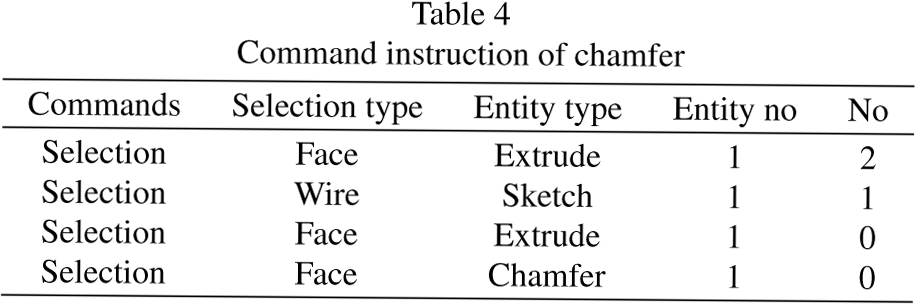

According to the feature definitions in Table 1, the selection feature operation has four parameters, which are the type of object, the type of entity, the serial number of the entity, and the serial number of the selected object, respectively. For the case of Fig. 10(a), the four edges can be named “sketch.1;1”, “sketch.1;2”, “sketch.1;3”, and “sketch.1;4”. For further illustration of “sketch.1;1”, the detailed name structure is shown in Table 3.
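The four selection parameters map naturally onto a small record type. In this sketch the field names and the string rendering are assumptions chosen to reproduce names such as “sketch.1;1”; the authoritative name structure is the one given in Table 3.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Selection:
    """The four parameters of a selection feature (field names are illustrative)."""
    object_type: str    # type of object, e.g. "sketch" or "extrude"
    entity_type: str    # type of entity, e.g. "edge" or "face"
    entity_index: int   # serial number of the entity within the object
    object_index: int   # serial number of the selected object

    def name(self) -> str:
        # Assumed rendering of the textual names used in the text, e.g. "sketch.1;1".
        return f"{self.object_type}.{self.object_index};{self.entity_index}"


edges = [Selection("sketch", "edge", k, 1) for k in range(1, 5)]
print([e.name() for e in edges])
# ['sketch.1;1', 'sketch.1;2', 'sketch.1;3', 'sketch.1;4']
```

Making the record frozen lets selections be hashed and compared, which is convenient when checking that a regenerated model still resolves to the same entity.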

After selecting the sketch, an extrude feature operation is executed to create a number of faces, which construct a 3D box.

As shown in Fig. 10(b), once the box is constructed, these new faces are also named and can be selected for further feature operation.

An example of the selection feature and naming mechanism for selecting edges from the sketch and new faces from extrude.

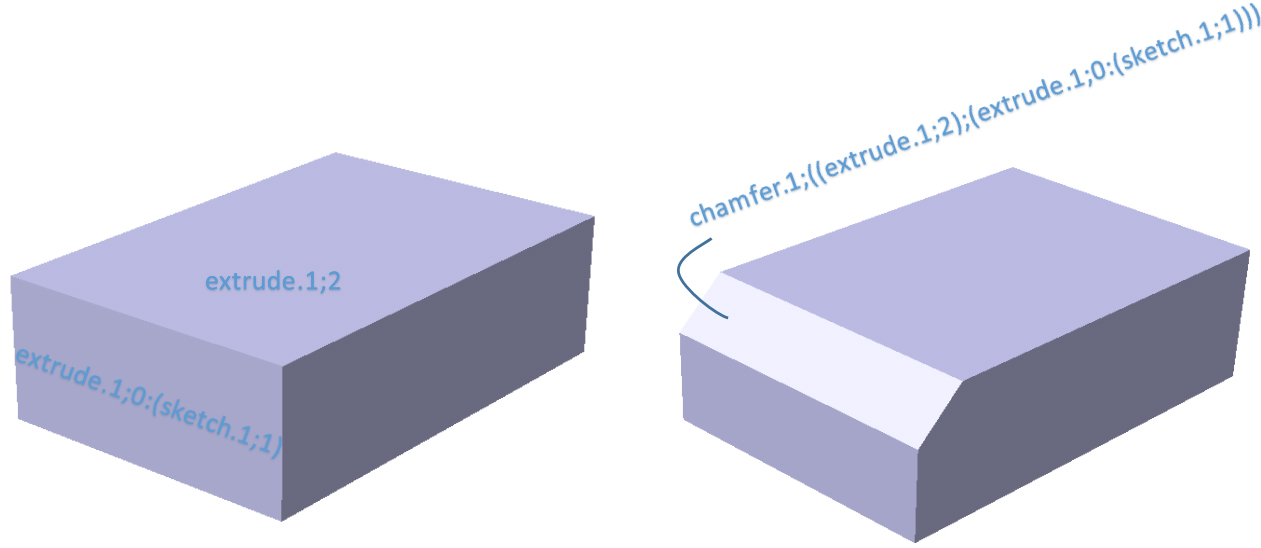

(2) How the selection and name mechanism work when conducting a chamfer operation on an edge of the box.

As shown in Fig. 11, the edge between the faces extrude.1;2 and extrude.1;0:(sketch.1;1) can be named “(extrude.1;2);(extrude.1;0

After a chamfer operation is executed on the edge “(extrude.1;2);(extrude.1;0

An example of the selection feature and naming mechanism for selecting a chamfer.

Command instruction of sketch

Command instruction of chamfer

(3) How the selection and name mechanism work when a face is split by a previous operation.

In Fig. 12, a semicylinder (Fig. 12(b)) is extruded from a closed semicircle (Fig. 12(a)), and then a pocket operation is applied to another closed semicircle with a smaller radius (Fig. 12(c)). Thus, face F1 is divided into two parts, F1_1 and F1_2, both of which derive from the original face F1 and the pocket operation. (Some other methods regard a pocket as equivalent to an extrude operation with Boolean subtraction and therefore abandon pocket as a feature. However, the design intention and design knowledge of pocket features are quite different from those of extrude. Furthermore, if the WHUCAD dataset is applied to classification or segmentation tasks, pocket and extrude carry significantly different meanings.) From the basic cases in the previous part, the names of W1 and W2 are “sketch.1;1” and “sketch.1;2”, respectively. Here we denote W1

How the selection and name mechanism work when a face is split by a previous operation.

Although DeepCAD and Fusion 360 Gallery treat CAD processing as command sequences, they have some critical problems. While DeepCAD claims to contain 178,238 models, only 131,553 models remain after removing redundant models (simply by removing models with identical command sequences). These 46,685 redundant models are not conducive to training and may cause overfitting. In addition, the models in DeepCAD contain only a few simple features and a few steps, so their complexity is generally low. The Fusion 360 Gallery dataset has only 8,625 CAD models, which is insufficient for training a deep learning network well.
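The redundancy check described above (comparing whole command sequences) amounts to exact-duplicate removal with a hashable fingerprint per model; 178,238 − 131,553 = 46,685 is the number of rows such a pass discards. The sketch below assumes each model is a list of integer command rows; the function name is hypothetical.

```python
def deduplicate(models: list) -> list:
    """Keep the first occurrence of each distinct command sequence.

    Each model is a list of command rows (lists of ints); two models are
    redundant when their full command sequences are identical.
    """
    seen = set()
    unique = []
    for sequence in models:
        key = tuple(tuple(row) for row in sequence)  # hashable fingerprint
        if key not in seen:
            seen.add(key)
            unique.append(sequence)
    return unique


models = [
    [[0, 1, 2], [3, 10, 0]],
    [[0, 1, 2], [3, 10, 0]],  # exact duplicate of the first model
    [[0, 1, 2], [3, 12, 0]],
]
print(len(deduplicate(models)))  # 2
```

This only catches byte-identical sequences; near-duplicates that differ by a quantization step would survive, which matches the "simply removing the same models" criterion in the text.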

WHUCAD consists of two subsets. The first subset is derived from DeepCAD. To address the simplicity of DeepCAD, we augment the data by randomly inserting sequences containing advanced features into DeepCAD models. The advantage of this approach is that it yields a large amount of data with advanced features and complex processing steps for learning. We filter out unreasonable augmented data by integrating with industrial CAD software such as CATIA, ensuring that every newly created model with advanced feature types can be visualized and converted to B-rep, mesh, and point cloud data. Furthermore, we check every model to ensure that WHUCAD contains no redundant data. The second subset comes from manual construction. Our team spent more than two years building 4,244 CAD models from the ABC dataset that have rich advanced features and semantic meaning but cannot be parsed into a command sequence by DeepCAD due to the lack of a selection mechanism. All models in the second subset of WHUCAD are recorded as CATIA macros and then parsed into the vector sequence format. These two subsets constitute WHUCAD, and each has its own characteristics. We annotate the two subsets in the final merged WHUCAD dataset and will open-source all code on GitHub.
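The augmentation-plus-filtering loop for the first subset can be sketched as follows. This is an assumption-laden illustration, not the authors' pipeline: the insertion-position rule, the retry budget, and the `augment` signature are hypothetical, and `is_valid` is a placeholder for the CAD-software check (rebuilding the model and exporting it to B-rep, mesh, and point cloud).

```python
import random
from typing import Callable, List, Optional, Sequence

Row = tuple


def augment(
    base: Sequence[Row],
    snippets: Sequence[Sequence[Row]],
    is_valid: Callable[[List[Row]], bool],
    rng: random.Random,
    max_tries: int = 10,
) -> Optional[List[Row]]:
    """Insert a randomly chosen advanced-feature snippet at a random position.

    `is_valid` stands in for the CAD-software check; candidates that fail it
    are discarded, and None is returned if no candidate passes.
    """
    if not base:
        raise ValueError("base sequence must be non-empty")
    for _ in range(max_tries):
        snippet = rng.choice(snippets)
        pos = rng.randint(1, len(base))  # keep at least the first base feature in front
        candidate = list(base[:pos]) + list(snippet) + list(base[pos:])
        if is_valid(candidate):
            return candidate
    return None


base = [("sketch",), ("extrude",)]
snippets = [[("select",), ("fillet",)]]
print(augment(base, snippets, lambda seq: True, random.Random(0)))
```

Passing a seeded `random.Random` keeps the augmentation reproducible, and rejecting rather than repairing invalid candidates mirrors the filter-out step described in the text.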

We provide WHUCAD data in .h5 format. Each file represents a CAD model, and each row in the file represents a feature operation. The meaning of each digit is shown in Table 2. The data in the .h5 files can be fed directly into a deep learning network for training and testing. Furthermore, we provide a parsing tool along with the source code to integrate WHUCAD with major industrial software such as CATIA, allowing researchers to visualize and further edit the models.

We will also issue a license to researchers who require WHUCAD for academic research.

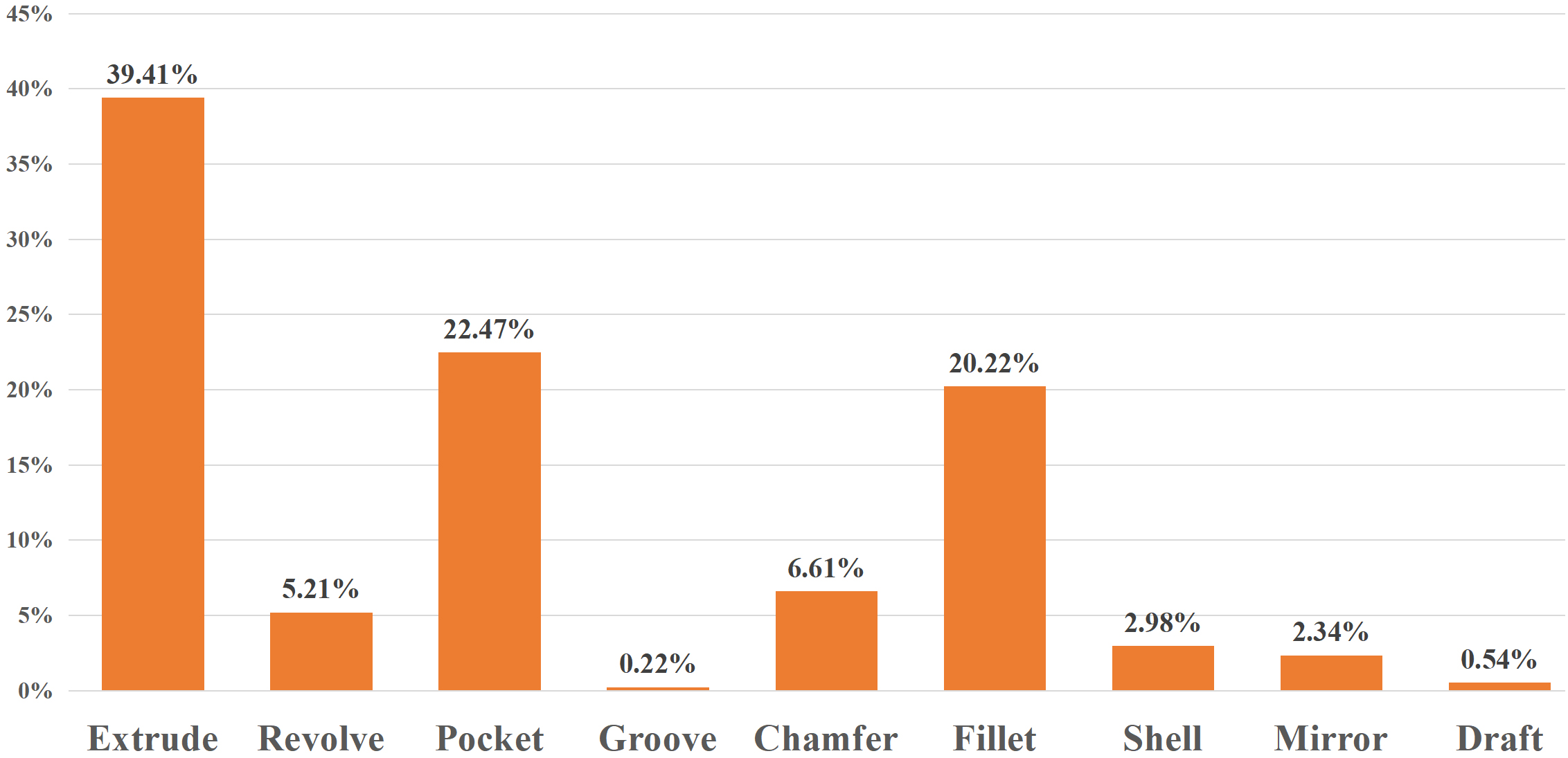

Discussion of feature types, history length and type distribution

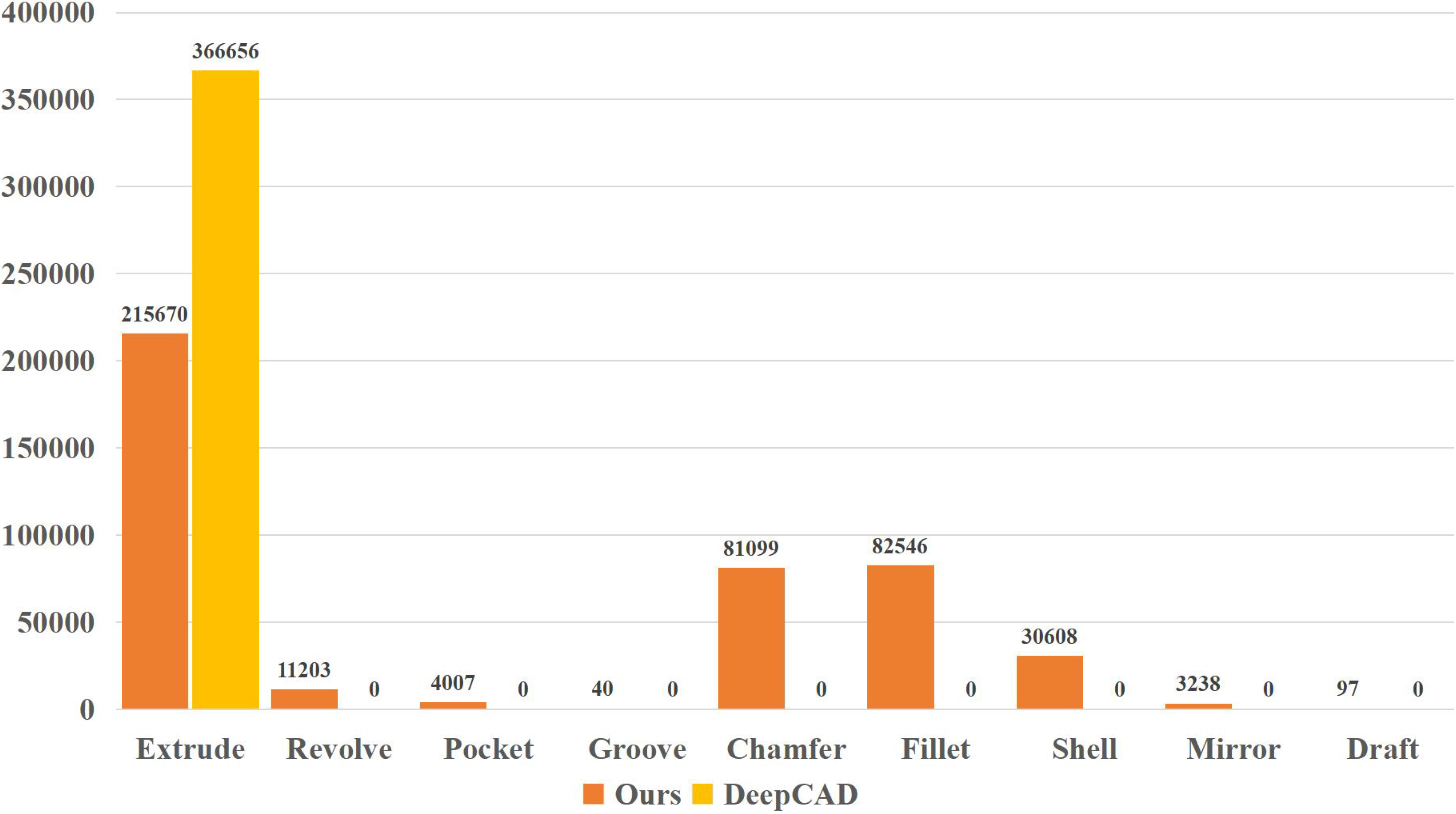

Compared with DeepCAD, WHUCAD is much richer in terms of types of features and has a more reasonable proportional distribution. A distribution of complicated feature types selected from our 146,066 models is shown in Fig. 13.

Distribution of complicated feature types in the manually constructed subset (4,244 models) of WHUCAD.

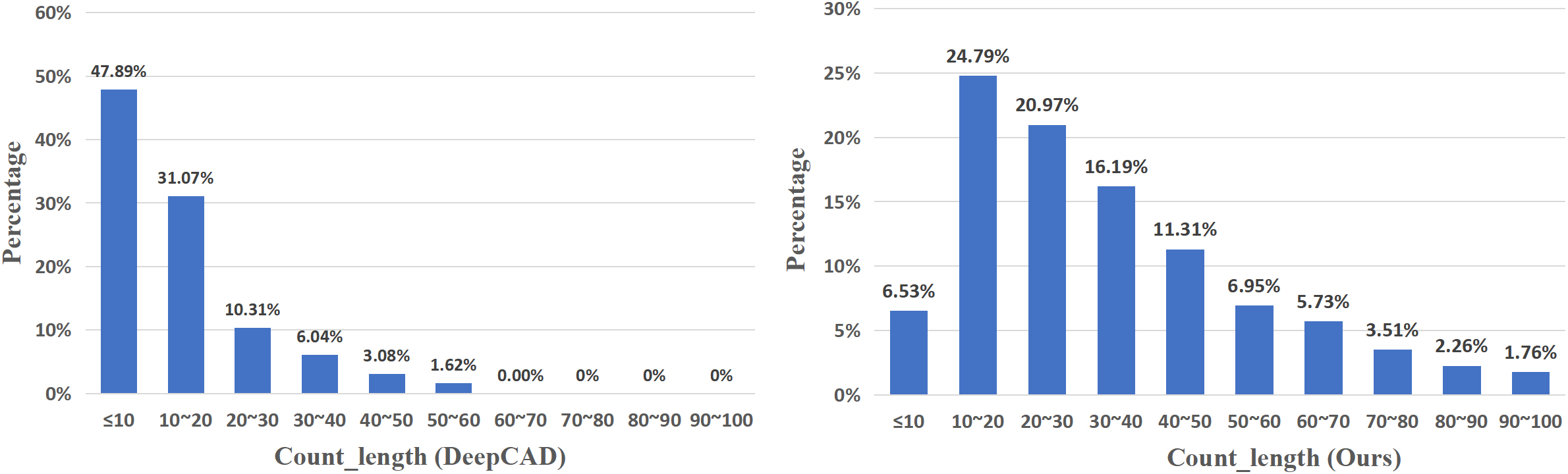

Comparison of the modeling history complexity of individual CAD models (creation steps) between DeepCAD (left) and WHUCAD (right).

The length of the modeling history is an important metric for evaluating the complexity of a CAD model. Figure 14 compares the history lengths of individual CAD models in DeepCAD and WHUCAD; WHUCAD models have longer histories than DeepCAD models. Therefore, WHUCAD models are more complicated and contain more features.

Distribution of complicated features between the WHUCAD and DeepCAD datasets.

In addition, the distribution of feature types in the WHUCAD dataset complies with the thinking and reasoning of human engineers. Extrude is the most frequently used feature, and fillet and chamfer are also features that human engineers use often. Therefore, as shown in Fig. 15, the overall distribution of feature types in WHUCAD is more reasonable than that of DeepCAD.
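Statistics of the kind plotted in Figs 14 and 15 (history length per model and frequency per feature type) reduce to a length list and a counter. The sketch assumes each model is given as its list of feature-type names in modeling order; the function name and the sample models are illustrative only, not WHUCAD data.

```python
from collections import Counter
from statistics import median


def history_statistics(models: list) -> tuple:
    """Return (median history length, feature-type counts) for a set of models.

    Each model is given as the list of its feature-type names in modeling order.
    """
    lengths = [len(model) for model in models]
    type_counts = Counter(feature for model in models for feature in model)
    return median(lengths), type_counts


models = [
    ["sketch", "extrude"],
    ["sketch", "extrude", "select", "fillet"],
    ["sketch", "extrude", "select", "chamfer", "select", "fillet"],
]
median_length, counts = history_statistics(models)
print(median_length)    # 4
print(counts["fillet"])  # 2
```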

To the best of our knowledge, at present, only the DeepCAD and Fusion 360 Gallery reconstruction datasets are historical 3D datasets in which the CAD models are described as a sequence of CAD commands.

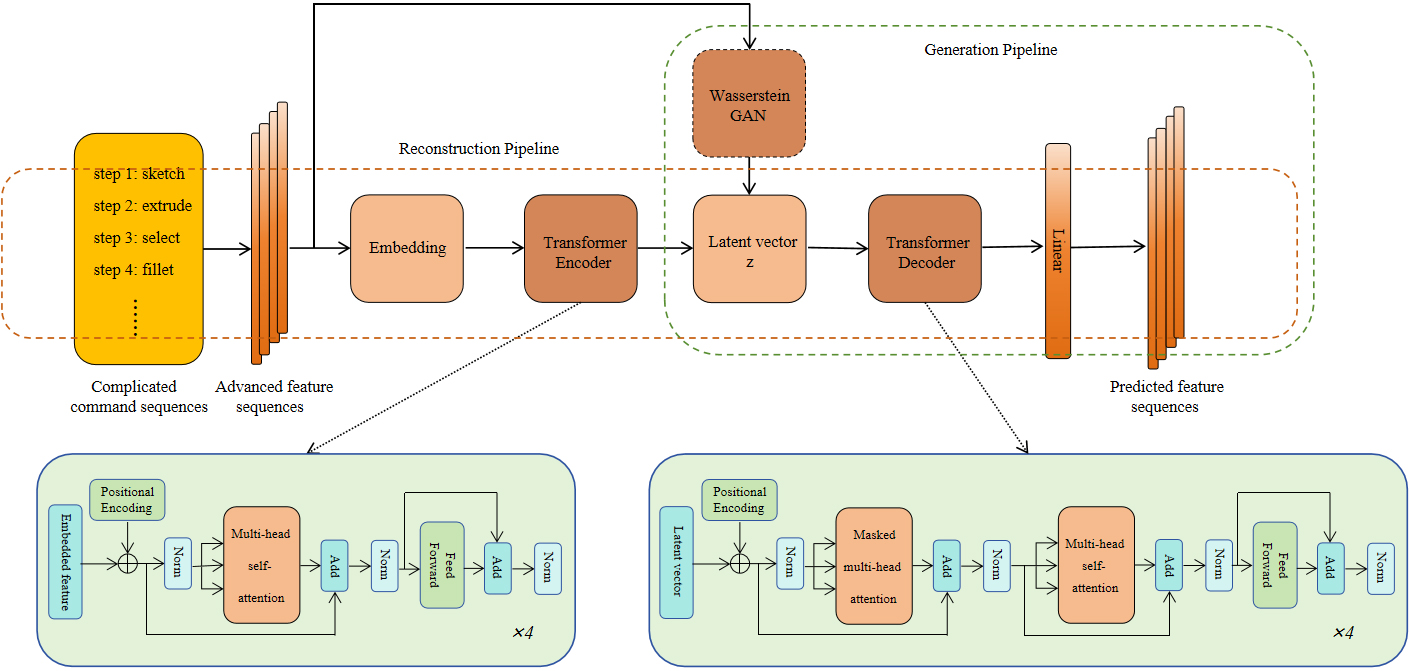

Therefore, we compare the WHUCAD dataset with the DeepCAD and Fusion 360 Gallery datasets in the reconstruction and generation tasks. In Section 4.1, we present quantitative experiments of the reconstruction task. In Section 6.1, we present qualitative experiments of the generation task. The network architecture of the reconstruction and generation tasks is shown in Fig. 16. In Section 6.2, we present industrial experiments on the WHUCAD dataset with the modern interactive CAD software.

The network architecture of the reconstruction and generation experiments.

In the quantitative experiments, we train the original DeepCAD network on the WHUCAD, DeepCAD, and Fusion 360 Gallery reconstruction datasets, and train our proposed network on the WHUCAD dataset, as shown in Fig. 16. The process in the orange dashed box is the reconstruction pipeline. The command sequences are first parsed into advanced feature sequences (the vector format) using a Python parsing script, which will be open-sourced with the training and testing code. The vector sequences are not only passed to the embedding process but also used as input data to the generation pipeline. After the transformer encoder, the latent

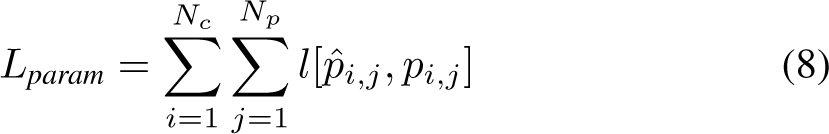

The equation of the loss function between the predicted sequences and ground truth is defined as follows:

where:

and
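The loss equation did not survive extraction. Assuming it follows the DeepCAD formulation that this architecture builds on (an assumption, not confirmed by the text), it would take the form below, where $N_c$ is the maximum number of feature operations, $N_p$ the number of parameter bits per operation, $t_i$ and $p_{i,j}$ the ground-truth command type and quantized parameters, hats denote predictions, $\ell$ is the cross-entropy loss, and $\beta$ weights the parameter term:

$$
\mathcal{L} = \sum_{i=1}^{N_c} \ell\left(\hat{t}_i, t_i\right) + \beta \sum_{i=1}^{N_c} \sum_{j=1}^{N_p} \ell\left(\hat{p}_{i,j}, p_{i,j}\right)
$$

In DeepCAD, parameter bits that the ground-truth command does not use are excluded from the second sum.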

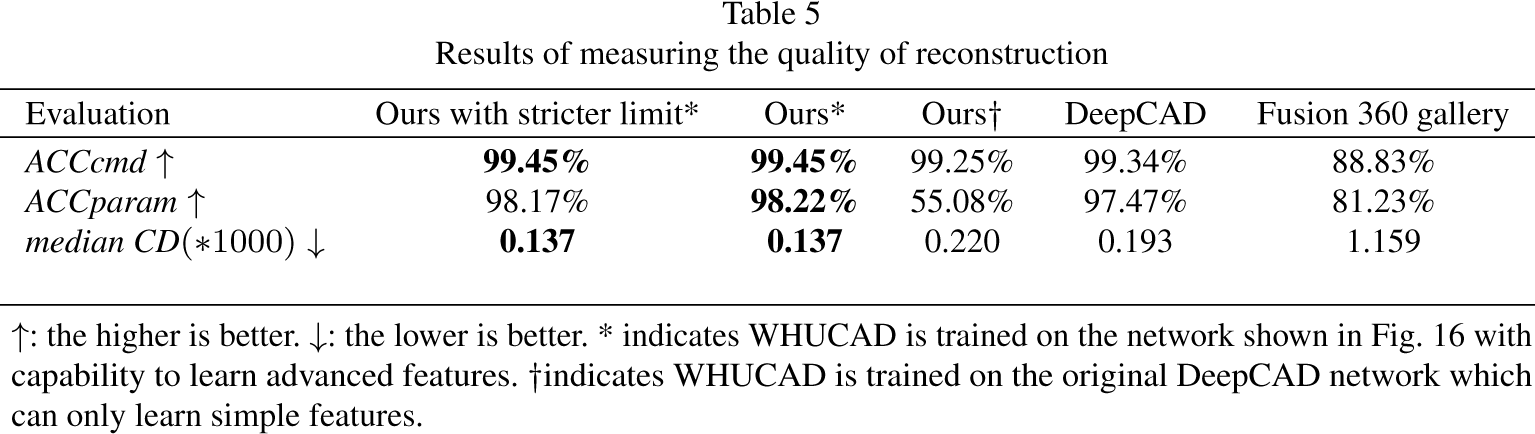

Results of measuring the quality of reconstruction

Generated CAD models from the WHUCAD dataset with feature notations.

Generated CAD models from the DeepCAD dataset.

In the network architecture, the number of heads in multihead attention is set to 8. The dimensions of the embedded feature sequence and latent vector z are 256. The training process adopts the Adam optimizer with a learning rate of 0.001. The dropout rate is set to 0.1.

As in DeepCAD, both WHUCAD and Fusion 360 Gallery are split into training, validation, and test sets at a ratio of 90%/5%/5%. We train on each of the three datasets for 1,000 epochs with a batch size of 512. All experiments are conducted on a PC with an Intel Core i9-12700 CPU and an NVIDIA GeForce RTX 3090 Ti GPU. The quantitative results of the reconstruction experiments are shown in Table 5.
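A 90%/5%/5% split can be made reproducible by shuffling model IDs with a fixed seed. The text does not say how the split is drawn, so the seeded random shuffle and the `split_dataset` helper below are assumptions.

```python
import random


def split_dataset(model_ids, seed: int = 0, ratios=(0.90, 0.05, 0.05)):
    """Shuffle model ids and split them into train/validation/test subsets."""
    if abs(sum(ratios) - 1.0) > 1e-9:
        raise ValueError("ratios must sum to 1")
    ids = list(model_ids)
    random.Random(seed).shuffle(ids)  # fixed seed -> reproducible split
    n_train = int(len(ids) * ratios[0])
    n_val = int(len(ids) * ratios[1])
    return ids[:n_train], ids[n_train:n_train + n_val], ids[n_train + n_val:]


train, val, test = split_dataset(range(1000))
print(len(train), len(val), len(test))  # 900 50 50
```

Rounding down the first two subsets and giving the remainder to the test set guarantees every model lands in exactly one subset.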

ACCcmd and ACCparam measure, respectively, the accuracy of the predicted CAD command (feature operation) type and the accuracy of the command (feature operation) parameters when the command type is correctly predicted.

ACCcmd is defined as:

where

Once the command type is predicted correctly, its parameters are evaluated. The definition of ACCparam is as follows:

where

For a fair comparison, we choose to remain consistent with DeepCAD, which sets
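Because the formulas above were lost in extraction, here is a hedged sketch of the two metrics as the prose describes them: ACCcmd is the fraction of correctly predicted command types, and ACCparam scores parameters only for operations whose command type is correct. The tolerance value DeepCAD uses is elided in the text, so it is left to a hypothetical callable `max_dev(command, parameter)`, written as an inclusive bound so that returning 0 means an exact match. Returning 0 for selection parameters reproduces the “stricter limit” setting.

```python
from typing import Callable, Dict, Sequence


def acc_cmd(pred_cmds: Sequence[str], gt_cmds: Sequence[str]) -> float:
    """Fraction of feature operations whose command type is predicted correctly."""
    if len(pred_cmds) != len(gt_cmds) or not gt_cmds:
        raise ValueError("need two non-empty sequences of equal length")
    return sum(p == g for p, g in zip(pred_cmds, gt_cmds)) / len(gt_cmds)


def acc_param(
    pred_cmds: Sequence[str],
    pred_params: Sequence[Dict[str, int]],
    gt_cmds: Sequence[str],
    gt_params: Sequence[Dict[str, int]],
    max_dev: Callable[[str, str], int],
) -> float:
    """Fraction of parameters within the allowed deviation, counted only for
    operations whose command type is predicted correctly."""
    hits = total = 0
    for pc, pp, gc, gp in zip(pred_cmds, pred_params, gt_cmds, gt_params):
        if pc != gc:
            continue  # wrong command type: its parameters are not scored
        for name, gt_value in gp.items():
            hits += abs(pp[name] - gt_value) <= max_dev(gc, name)
            total += 1
    return hits / total if total else 0.0


gt_cmds = ["extrude", "select", "fillet"]
gt_params = [{"extent": 10}, {"entity_index": 2}, {"radius": 5}]
pred_cmds = ["extrude", "select", "chamfer"]
pred_params = [{"extent": 12}, {"entity_index": 3}, {"distance": 5}]

loose = lambda cmd, name: 2                            # hypothetical tolerance
strict = lambda cmd, name: 0 if cmd == "select" else 2  # exact selection parameters
print(acc_param(pred_cmds, pred_params, gt_cmds, gt_params, loose))   # 1.0
print(acc_param(pred_cmds, pred_params, gt_cmds, gt_params, strict))  # 0.5
```

The mispredicted fillet/chamfer operation lowers ACCcmd but is skipped by ACCparam, which is why both metrics are needed.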

To address DeepCAD's inability to learn advanced features, in the “Ours*” experiment shown in Table 5, we incorporate the ability to process advanced features when converting “complicated command sequences” to “advanced feature sequences”, as described in Section 3. The results show that our network, with its advanced feature learning ability, achieves better results than DeepCAD and Fusion 360 Gallery.

Generated CAD models from the Fusion 360 Gallery dataset.

The process of editing details of generated models from the WHUCAD dataset.

However, to more rigorously verify the effectiveness of feature learning, we consider that a small fluctuation of the parameter in the selection operation may cause the wrong edge or face to be selected. Therefore, for a stricter requirement, we add an extra experiment that does not allow any errors in predicting the parameters of the selection feature. In Table 5, “Ours with stricter limit*” indicates that the predicted parameters in the selection operation do not allow any errors from the ground truth. The results show that even under the requirement of allowing zero errors in the parameters of the selection feature, the results of ACCparam on the WHUCAD dataset trained by our network are still better than those on the DeepCAD and Fusion 360 Gallery datasets.

The prediction rates of ACCcmd and ACCparam are both important: only when both are high can the model's predictions be considered accurate. For ACCcmd, suppose a rectangular sketch is selected and an extrusion is intended. If the “extrude” feature type is mistakenly predicted as “revolve”, the whole model will be wrong, because the parameter bits required by the revolve feature differ significantly from those of the extrude feature (the feature types and their corresponding parameter bits are shown in Table 2). Therefore, ACCcmd is crucial. Conversely, if a topological element should be revolved by a small angle and the revolve type is predicted correctly but the angle is predicted to be very large, the resulting model will also differ significantly. Hence, ACCparam is equally important. Both metrics are indispensable for evaluating whether a model has been constructed accurately.

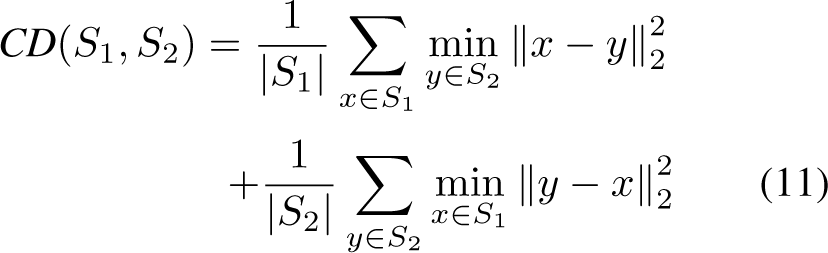

To measure the quality of the recovered 3D geometry from another perspective, we use the chamfer distance (CD), computed by uniformly sampling 8,000 points on the surfaces of the reference shape and the recovered shape. The chamfer distance is defined as follows:
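The CD equation is missing from this extraction, and chamfer-distance conventions vary (squared or unsquared distances, mean or sum normalization). The sketch below assumes the common symmetric form with mean squared nearest-neighbor distances and uses brute force, which is O(|A|·|B|); for 8,000 points per shape, a KD-tree such as SciPy's `cKDTree` would normally replace the inner `min`.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float, float]


def _mean_nearest_sq(src: Sequence[Point], dst: Sequence[Point]) -> float:
    """Mean squared distance from each point in src to its nearest point in dst."""
    return sum(min(math.dist(p, q) ** 2 for q in dst) for p in src) / len(src)


def chamfer_distance(a: Sequence[Point], b: Sequence[Point]) -> float:
    """Symmetric chamfer distance between two point sets (brute force)."""
    if not a or not b:
        raise ValueError("point sets must be non-empty")
    return _mean_nearest_sq(a, b) + _mean_nearest_sq(b, a)


print(chamfer_distance([(0, 0, 0)], [(1, 0, 0)]))  # 2.0
```

Summing both directions penalizes missing geometry (reference points far from the reconstruction) as well as spurious geometry (reconstructed points far from the reference).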

where

When the original DeepCAD network is trained on the WHUCAD dataset (“Ours†”), WHUCAD has a higher median CD, which also indicates that models in WHUCAD are generally more complex and harder to reconstruct than those in DeepCAD. In addition, the experiment with our network (“Ours*”) has a lower median CD than DeepCAD, which demonstrates the advantage of our network.

In conclusion, in Table 5, for quantitative comparisons, experiments “Ours†”, “DeepCAD”, and “Fusion 360 Gallery” indicate that models in WHUCAD are generally more complicated and harder to learn than DeepCAD and Fusion 360 Gallery, which reflects the value of WHUCAD. Advanced features and the key selection mechanism in WHUCAD provide additional possibilities and new benchmarks for parametric and feature-based CAD tasks. Furthermore, comparisons among the experiments “Ours*”, “Ours†”, and “DeepCAD” indicate the effectiveness of our network for advanced feature learning.

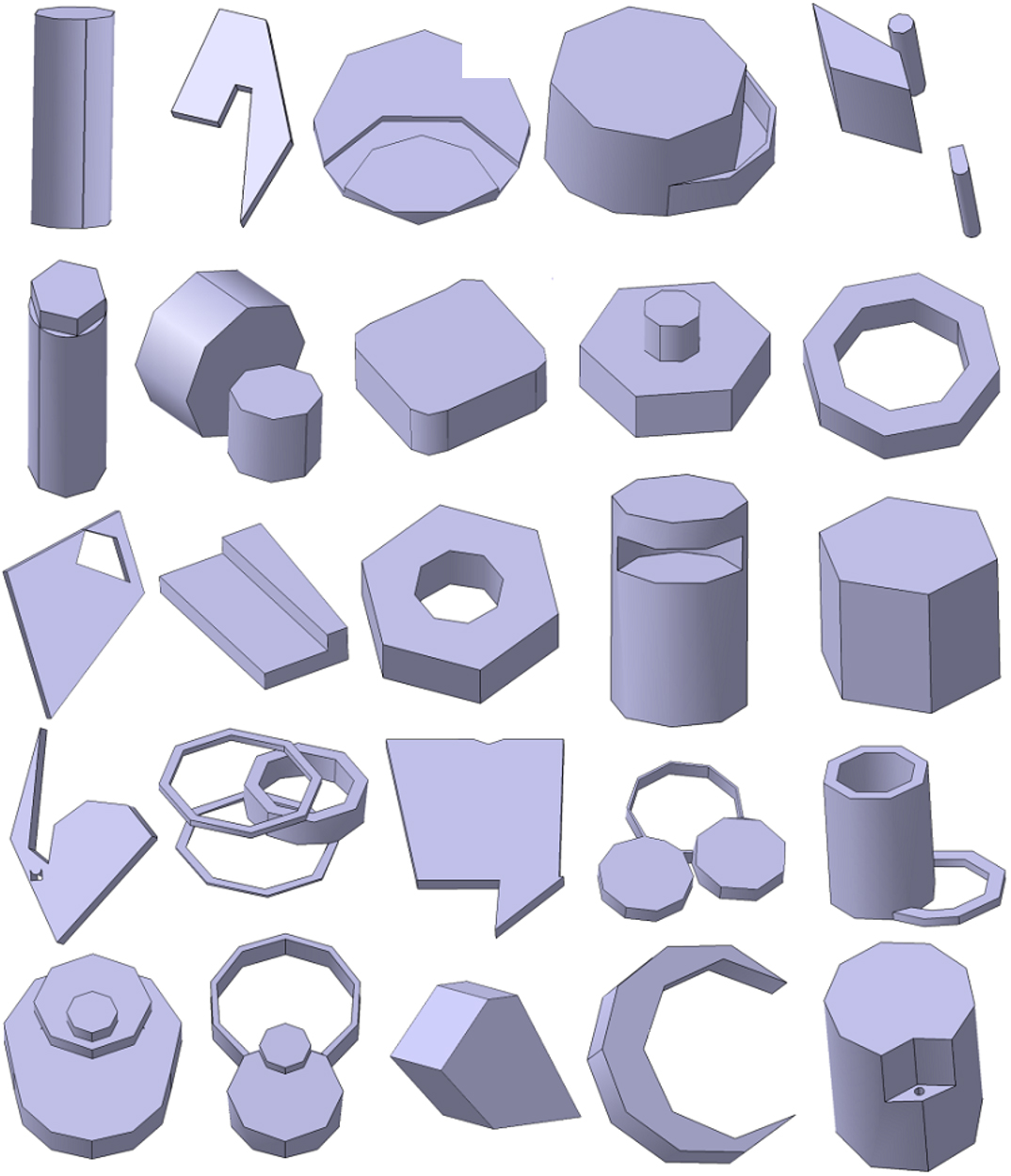

Once the reconstruction pipeline is well trained, the weight parameters in the decoder are fixed. To generate CAD models, advanced feature sequences in vector format are input to the generation pipeline (the process is shown in the green dashed box) for training. Similar to DeepCAD, we use the Wasserstein GAN network [23, 75, 76] to train the input feature sequences. The generator and discriminator of the GAN are both MLP networks with four hidden layers. After sufficient training, random vectors sampled from a multivariate Gaussian distribution are fed into the GAN generator to generate the latent vector

Figure 17 shows examples of CAD models generated from WHUCAD. These examples include real-world engineering features, such as fillet, chamfer, shell, pocket, draft, mirror, revolve, hole, and groove, which are as advanced as those in CAD models created by a real human engineer who interactively works on modern industrial CAD software. For better visualization, notations for advanced features are added to the above examples.

The models generated from the DeepCAD and Fusion 360 Gallery datasets are shown in Figs 18 and 19, respectively.

According to Figs 17 to 19, for qualitative comparisons, it is clear that the 3D shapes generated from WHUCAD are more complicated, more advanced, more vivid, and more detailed than those from the DeepCAD and Fusion 360 Gallery datasets.

Industrial experiments

The WHUCAD dataset is compatible and transferable with modern interactive CAD software.

The CAD models generated from the WHUCAD dataset can be easily imported into industrial software. Then, the input CAD models can be edited and redesigned by a human engineer who works on historical and feature-based CAD software, such as CATIA,3

Figure 20 shows how a CAD model generated from WHUCAD is edited through HCI in an industrial CAD software package.

Step 1: A human engineer imports an initial CAD model (a phone protection case) generated from the WHUCAD dataset into the software.

Step 2: To adjust the size of the camera frame, the human engineer switches the 3D model to the related sketch plane.

Steps 3 to 9: The human engineer selects the edges of the camera frame one by one. After selecting each edge, the human engineer modifies the lengths of the sides and the center coordinates of the fillet operations.

Thus, WHUCAD has real-world engineering capability and can support collaborative product development (CPD) in the modern era of globalization.

In this paper, we provide WHUCAD, a full parametric and historical feature-based CAD dataset with rich advanced features, which enables deep learning networks to learn HCI in 3D CAD learning. In contrast to existing datasets with simple CAD commands (line, arc, circle, and extrude), WHUCAD includes many more advanced feature types (such as selection, revolve, pocket, groove, shell, chamfer, fillet, hole, mirror, and draft). More importantly, the existing CAD datasets and methods only support overall extrusion of sketches and lack HCI characteristics. Therefore, deep learning networks trained on them cannot learn and mimic HCI to select specific topological elements for more advanced features. To compensate for this deficiency, WHUCAD is the first to devise a selection feature and a name mechanism that can be fed into a deep learning network to learn how a human CAD engineer selects an entity in CAD software. Quantitative, qualitative, and industrial experiments verify the superiority of WHUCAD. Furthermore, we are the first to provide a parsing tool with a parametric and feature-based CAD dataset for integrating parametric CAD models with industrial software, which can help human engineers truly edit and redesign historical and parametric models.

To the best of our knowledge, WHUCAD is the first parametric and feature-based CAD dataset to support HCI in 3D learning. In the future, we will continue to study the limitations commonly found in existing CAD models. For example, the feature types have not fully covered all types in the real world, and there is still a lack of parameterized feature-based datasets for classification. We will continue to extend the WHUCAD dataset to support more complicated HCI in 3D learning. Additionally, we will combine WHUCAD with advanced machine learning methods [77, 78, 79, 80, 81, 82, 83, 84, 85, 86, 87] for knowledge-based CAD/CAM engineering applications [88, 89, 59].

Footnotes

Acknowledgments

This work is supported by the National Natural Science Foundation of China under Grant No. 62072348, and the Shenzhen Polytechnic University Research Fund under Grants 6023310030K, 6022312044K, 6023240118K, and 6024310045K. The numerical calculations in this paper were performed on the supercomputing system at the Supercomputing Center of Wuhan University.