Abstract

In this paper, we propose a new method of representing images using highly compressed features for classification and image content retrieval – called

Keywords

Introduction

With virtually unlimited access to information, storing large amounts of data becomes increasingly problematic. For example, images from surveillance cameras must be overwritten due to a lack of adequate storage resources. Image and video datasets used in data science require terabytes of disk capacity. However, such images often contain redundant information that can be removed to achieve significant compression while preserving the most essential content. In many classification tasks, storing the original image datasets on which classifiers are trained is unnecessary, or these datasets can be offloaded to cheaper but slower media [1]. Ultimately, the image representations given at the input are sufficient to obtain the corresponding label at the output. Storing a lower-dimensional, compressed representation of the originals can help reduce the required storage volume and allow a much larger number of examples to be stored, with no apparent impact on classification performance. With regard to sparse image representations, not only storage space but also processing time is essential. An example is content-based image retrieval (CBIR), which we also address in this paper. In this task, a common scenario is that a subset of the most similar representations is selected from many millions of images based on highly compressed representations. Then, the process is repeated on the less compact representations of the subset chosen in the previous iteration, allowing for more accurate matches and significantly reducing processing time. These two limitations, the huge storage usage of present-day datasets and the long processing time, were the primary motivation for the work on the presented approach.

Such representations can be local sparse features that carry information in a very compact form, allowing inference about the image content. They can be used to search for common areas in different images, to determine camera positions, to combine photos into panoramas, or to perform 3D modeling. At the beginning of the 21st century, the scale-invariant feature transform (SIFT), proposed by David Lowe [2], became the most popular method for determining local features. This work generated significant interest in sparse image descriptors and contributed to the development of many other methods. Currently, the field of image analysis is dominated by various types of neural networks [3, 4, 5, 6, 7, 8, 9, 10, 11, 12] and vision transformers [13]. We demonstrate that deep neural networks enable the construction of local semantic descriptors that allow for more efficient image analysis and retrieval than known solutions.

In this paper, we propose a more compact and robust version of the ResFeats features proposed by Mahmood et al. [14, 15]. Our main contributions are as follows. First, we propose a set of dimensionality reduction methods for the original ResFeats, which consist of 3584 floating-point values each in their original form. In this context, we present and analyze (i) various combinations of features from output layers, (ii) principal component analysis (PCA) [16, 17], as well as (iii) feature sampling on combined high- and low-level ResNet-50 features. We also investigate (iv) the impact of a floating-point compression method, called ZFP by its creators [18], on the proposed features. Second, we have extensively tested various setups in classification tasks on six reference datasets and in CBIR. The results show that the most promising setup is the combination of PCA with about 60 components and ZFP, which in some cases allows up to a 220-fold reduction in the memory needed to represent the features without significantly reducing, and sometimes even increasing, the accuracy of classification and CBIR results compared to other published methods.

Related works

The quest for the best-performing features has been the “Holy Grail” of the computer vision community for decades, and the field has experienced several breakthroughs. After a long period in which various edge and corner detectors were used, the real breakthrough came in 2004, when D. Lowe published his seminal paper on SIFT [2]. It gained many citations, as well as improvements and modifications, such as [19, 20], to name a few.

Of particular interest in the context of our work is the method by Ke and Sukthankar [19]. They proposed to employ PCA in the last step of the SIFT algorithm, that is, in building a representation of keypoints based on patches. The resulting PCA-SIFT not only reduced the amount of data but, in many cases, also increased classification accuracy.

The great success of SIFT spawned research in the field of sparse image descriptors and resulted in many improvements and similar methods. Examples include the speeded-up robust features method (SURF) [21], the keypoint detector Features from Accelerated Segment Test (FAST) [22], the Binary Robust Independent Elementary Features descriptor (BRIEF) [23], as well as their follow-up Oriented FAST and Rotated BRIEF (ORB) [24], and DAISY [25, 26], which is very efficient to compute densely. Another group includes the scale and affine invariant features by Mikolajczyk and Schmid [27], as well as histograms of oriented gradients (HOG) [28, 29], to name a few. However, in recent years, deep neural networks have also shown their superiority in the computation of local descriptors and sparse features for tasks such as image classification or content retrieval.

In the work of Dusmanu et al. [30], the D2-Net architecture is proposed, which finds reliable keypoints under challenging imaging conditions. The approach assigns a dual role to a single convolutional neural network (CNN): it is both a feature descriptor and a feature detector. The backbone of D2-Net is a VGG16 network pretrained on ImageNet. The method achieves promising results both on the difficult Aachen Day-Night dataset, with photos of objects taken during day and night, and on the InLoc benchmark dataset, with photos of building interiors. In 2022, a paper was published [31] that addressed two shortcomings of D2-Net: the low positioning accuracy of detected keypoints and the emphasis solely on their repeatability, which could lead to mismatches in areas with the same texture. To increase the invariance and the matching correctness of CNN-based local descriptors, Liang et al. [32] proposed a Multi-Level Feature Aggregation (MLFA) module for efficient information transfer between levels. Each level extracts a feature vector, and the final descriptor concatenates them. In addition, to exploit the spatial structure within a local image fragment, the authors proposed a Spatial Context Pyramid (SCP) module to capture spatial information. The algorithm was implemented based on the Harmonic Densely Connected Network (HarDNet) framework [33]. The results obtained by the authors show that their method compares favorably with state-of-the-art (SOTA) methods. Nevertheless, these methods require significant computational resources and memory for data storage. There are also approaches to representing a point cloud using a single feature vector. In [34], the proposed SO-Net network uses a Self-Organizing Map (SOM) to perform hierarchical feature extraction at individual points and processes them into a feature vector.

A method that applies PCA directly to the last semantic layer of ResNet-50 is investigated in [35]. It shows good classification results with a sparse data representation. However, the size of the features is still a few kilobytes per image. We will refer to this method later in this paper.

A very influential work is that of Mahmood et al. [14]. For global image feature extraction, they propose the features of the last residual layer, called Res5c, of ResNet-50 trained on ImageNet. These

Features extracted from deeper layers perform better than those from shallower layers. However, they are also large, both because of the size of these output layers and because they are stored in a floating-point format that commonly consumes at least 4 bytes per feature. In this paper, we address this issue by proposing new ways to reduce this size, even by two orders of magnitude, with comparable or even better performance than the original ResFeats.

Based on superpixels and feature fusion, the solution proposed in [36] uses a codebook of size 2048 or 1024. On the other hand, Arco et al. proposed a sparse coding approach [37]. The images are first divided into tiles, and after applying PCA to these tiles, a dictionary is created. The original signals are then transformed into a linear combination of dictionary elements. The technique presented in [38], on the other hand, reduces CNN layers through an iterative process of removing neurons from the layer and tuning the network. The method presented in [39] is based on the use of wavelet packet transform (WPT)/Dual-Tree Complex Wavelet Transform (DT-CWT) to transform images from the spatial domain to the wavelet domain. The selected channels are stacked to form a tensor of the

In this paper a method is proposed for global image feature computations of

Proposed method

Based on the assumption that deep features extracted from ResNet-50 contain redundant information, we propose the following method for obtaining highly compressed image representations. First, we combine high-level and low-level features extracted from ResNet-50 residual network blocks (see Fig. 1). Then, we apply dimensionality reduction techniques to these features. Finally, we also check the effect of lossy floating-point ZFP compression on the classification of these compact features. This allows us to store highly compressed datasets that still maintain information sufficient for high accuracy image classification or retrieval.

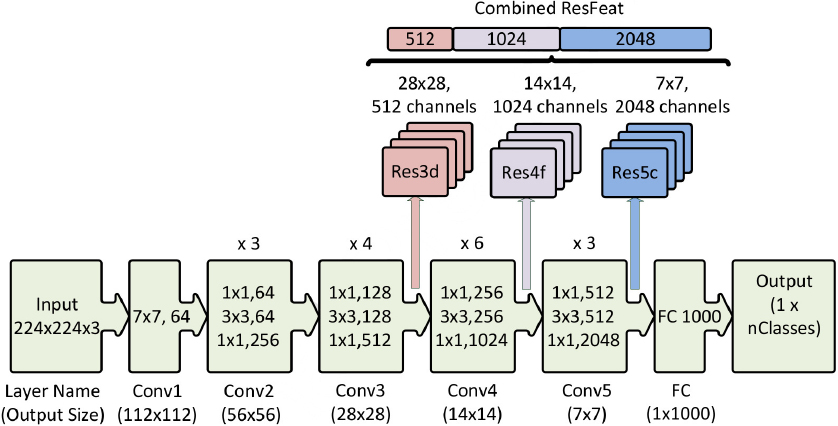

A scheme of the ResNet-50 network architecture and subsequent ResFeats extraction layers. Adapted from [15].

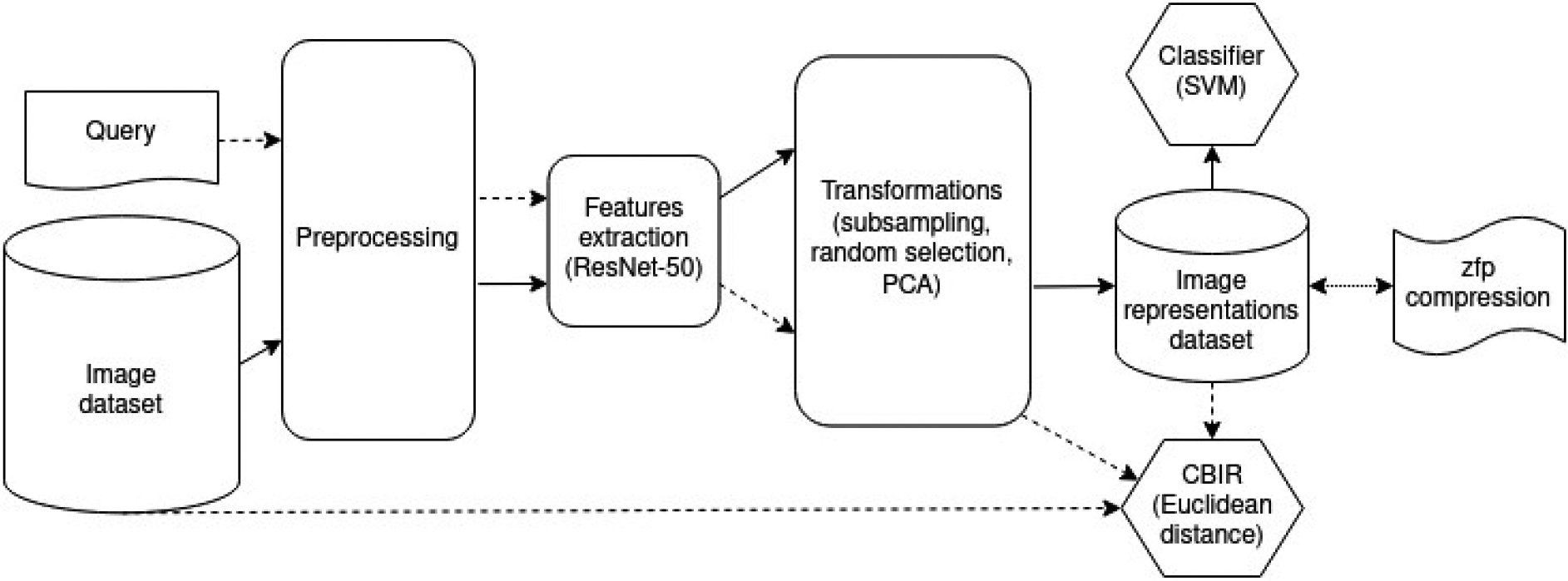

A general picture of the proposed approach. The regular arrows indicate the data flow in the classification task, and the arrows with a dashed line show the data flow in the CBIR task. ZFP compression is performed on a dataset of image representations.

This work combines several concepts, approaches, techniques and components. Specifically, our main contributions are as follows.

- Investigation of various combinations of features from the (2), (3), and (4) layers of ResNet-50 and of feature sampling;
- Application of PCA to various combinations of features from the (2), (3), and (4) layers of ResNet-50, which allows a size reduction of up to two orders of magnitude; we call the new features PCA-ResFeats;
- Investigation of floating-point compression methods applied to PCA-ResFeats for further memory reduction and potential use for computing summaries of datasets. In this respect, we applied and verified (i) 32-bit to 16-bit conversion, as well as (ii) the ZFP method;
- Verification of PCA-ResFeats in the classification tasks of various datasets;
- Verification of PCA-ResFeats in the CBIR problem.

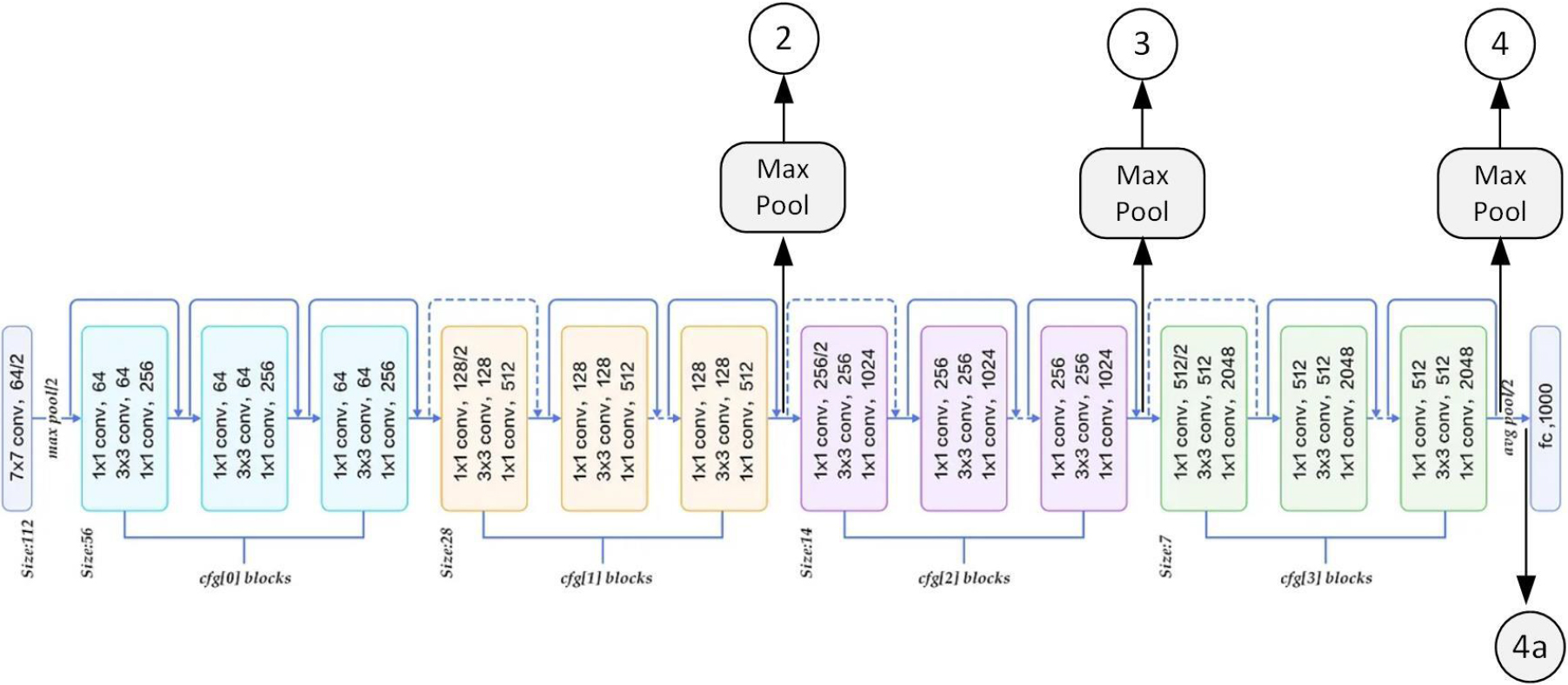

A schematic of the ResNet-50 network architecture and various feature extraction layers used in this paper. (4a) is the ResNet-50 layer after the last average-pool block and before the fully connected one. ResFeats are composed of the (2)

A general picture of the proposed approach is shown in Fig. 2. The regular arrows indicate the data flow in the classification task, and the arrows with a dashed line show the data flow in the CBIR task. ZFP compression is performed on a dataset of image representations and further reduces the necessary storage. Hence, a possible use-case scenario of our method is as follows: a database with a large number of images can be stored on a cheaper but slower medium (such as “old” tape technology, now reconsidered as a remedy for energy conservation [1]), while the much smaller representations compressed by our method can be stored on faster media (such as SSDs). Various classification and/or data retrieval tasks, even ones not known at the time of the off-line PCA-ResFeats computation, can then be performed exclusively on the latter, much more compact representation. If one subsequently wishes to retrieve the original images, these remain available through access to the former, slower database. All of this runs at high speed and offers better data protection, since the original data are not touched during processing.

Another example of using our approach could be searching for particular video content based on a limited number of frames. Namely, instead of searching through the huge database with original videos (such as YouTube), we can just quickly search through the much smaller video representations in the form of our proposed PCA-ResFeats. Then, only the suitable videos in which a match was found can be retrieved from the original repository.

Since our starting setup is identical to the one from [15], we omit the exact description of the method of obtaining ResFeats features here. Nevertheless, the reader interested in the details of ResNet-50 can refer to publication [11], while the description of base ResFeats and the used SVM classifier is in [15, 17].

The following subsections describe the details of the aforementioned feature preprocessing methods.

The outputs of successive residual units of the residual neural network ResNet-50 pretrained on ImageNet, used to extract ResFeats, are stored in the 32-bit floating-point (float32) representation [43]. Converting the data from float32 to float16 should halve the memory required to store image representations. However, this reduction may adversely affect the classification results. The experiments are designed to determine whether the storage savings from this conversion come at the cost of decreased classification accuracy.
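The storage effect of this conversion can be illustrated with a minimal sketch that uses only the Python standard library. The feature values below are made up for illustration and are not taken from the experiments; the helper names are hypothetical.

```python
import struct

def to_float16_bytes(values):
    """Pack a list of floats as IEEE 754 half-precision values (2 bytes each)."""
    return struct.pack(f"<{len(values)}e", *values)

def from_float16_bytes(buf):
    """Unpack half-precision bytes back into Python floats."""
    return list(struct.unpack(f"<{len(buf) // 2}e", buf))

# Illustrative feature values (assumption, not real ResFeats).
features = [0.1, -2.5, 3.14159, 100.0]

as_float32 = struct.pack(f"<{len(features)}f", *features)  # 4 bytes per value
as_float16 = to_float16_bytes(features)                    # 2 bytes per value
restored = from_float16_bytes(as_float16)

# Largest rounding error introduced by the float16 representation.
max_abs_err = max(abs(a - b) for a, b in zip(features, restored))
print(len(as_float32), len(as_float16), max_abs_err)
```

The byte count is halved, while each value is rounded to the nearest representable half-precision number; whether that rounding matters for classification is exactly what the experiments have to establish.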

Selection of a subset of ResFeats coordinates

Mahmood et al. [15] proposed concatenating the outputs of the second (2), third (3), and fourth (4) residual units of the ResNet-50 network after the max-pooling operation, which have lengths of 512, 1024, and 2048, respectively. To learn more about ResFeats, we compared the classification results obtained with several of their variants. Namely, we investigated feature vectors formed by concatenating the outputs of the third (3) and fourth (4), the second (2) and fourth (4), and the second (2) and third (3) residual units. The corresponding tensors, with lengths of 3072, 2560, and 1536, respectively, carry more information than the individual outputs and may contain less noise than the original ResFeats. Using them would reduce the memory needed to store the image representations by about 14%, 29%, and 57%, respectively. Figure 3 shows the simplified ResNet-50 architecture and the extraction locations of ResFeats and their proposed modifications. In addition to the new features from the fusion layers of ResNet-50, the experimental results of selecting a subset of ResFeats vector coordinates by randomizing and sampling each
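The lengths and memory savings quoted above follow directly from the unit output sizes. A short sketch of the arithmetic (the dictionary and function names are hypothetical):

```python
from itertools import combinations

# Output lengths of residual units (2), (3), and (4) after max pooling.
UNIT_LENGTHS = {"2": 512, "3": 1024, "4": 2048}
FULL_LENGTH = sum(UNIT_LENGTHS.values())  # basic ResFeats: 3584 values

def combo_stats(units):
    """Return (vector length, memory saving in % relative to basic ResFeats)."""
    length = sum(UNIT_LENGTHS[u] for u in units)
    saving = 100.0 * (1.0 - length / FULL_LENGTH)
    return length, saving

# All pairwise combinations: 2_3, 2_4, 3_4.
table = {"_".join(c): combo_stats(c) for c in combinations("234", 2)}

for name, (length, saving) in table.items():
    print(f"{name}: length={length}, saving={saving:.0f}%")
```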

Essential information about the datasets used in the experiments, such as the number of classes (classes), the number of samples given in thousands (samples [in K]), the size of the smallest class (min class size), the size of the images (image size), and the difficulty of the dataset (difficulty)


A well-known technique for dimensionality reduction is principal component analysis (PCA) [16, 17, 29]. Since applying this method to SIFT [19] increased its efficiency, and given the suspected correlation of the data in the ResFeats tensors, we propose to employ PCA in this case as well. The study aimed to find the number of components for which the classification results on most of the evaluation datasets would decrease by no more than about five percentage points relative to those obtained with the ResNet-50 output (4a).
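A minimal sketch of this step is given below, assuming NumPy is available. It uses synthetic, strongly correlated vectors in place of real ResFeats, and the helper names `fit_pca` and `apply_pca` are hypothetical; it is not the authors' implementation.

```python
import numpy as np

def fit_pca(X, n_components):
    """Fit PCA via SVD of the centered data; return mean, components, explained variance ratio."""
    mean = X.mean(axis=0)
    # Rows of vt are the principal directions, ordered by decreasing variance.
    _, s, vt = np.linalg.svd(X - mean, full_matrices=False)
    components = vt[:n_components]
    explained = float((s[:n_components] ** 2).sum() / (s ** 2).sum())
    return mean, components, explained

def apply_pca(X, mean, components):
    """Project feature vectors onto the retained principal components."""
    return (X - mean) @ components.T

rng = np.random.default_rng(0)
# Synthetic stand-in for ResFeats (assumption): 200 vectors of length 3584
# generated from 20 latent factors plus a little noise, so they are highly redundant.
latent = rng.normal(size=(200, 20))
mixing = rng.normal(size=(20, 3584))
X = (latent @ mixing + 0.01 * rng.normal(size=(200, 3584))).astype(np.float32)

mean, components, explained = fit_pca(X, n_components=60)
Z = apply_pca(X, mean, components).astype(np.float16)  # 60 float16 values per vector

print(Z.shape, Z.nbytes // len(Z), round(explained, 4))
```

On real features the explained variance for a given number of components depends on the dataset, which is why the number of components has to be chosen experimentally.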

Floating-point compression of PCA-ResFeats

The need to represent features in a floating-point (FP) format is a frequently overlooked property. It inflates the size of the output representation, since the common FP formats occupy either 8 or 4 bytes per value, and consequently the response time suffers as well. Hence, to reduce the memory needed to store image representations, we propose, in addition to PCA, the use of the ZFP compression method, initially presented by Lindstrom et al. for computer graphics applications [45, 18, 46]. It is a lossy method for compressing tensors of FP values, in which one can set the maximum acceptable error between a single FP value in a tensor and its decompressed counterpart. As reported there, ZFP compression significantly reduces the memory required to store floating-point data. Our method applies ZFP to PCA-ResFeats. Comparing the classification results on the decompressed representations with those obtained without compression will determine whether the resulting losses have a significant impact. Another advantage of ZFP is its ability to recover a single value from the compressed representation, i.e., it is not necessary to decompress the entire representation.
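To illustrate what an absolute error tolerance means, the sketch below uses a deliberately simple stand-in, uniform quantization followed by zlib, rather than ZFP itself. ZFP works differently internally (and additionally supports random access), so this only demonstrates the error-bounded idea; the function names and the random data are assumptions.

```python
import random
import struct
import zlib

def compress_with_tolerance(values, tolerance):
    """Quantize to multiples of 2*tolerance (so the error is at most tolerance), then deflate."""
    step = 2.0 * tolerance
    codes = [round(v / step) for v in values]
    raw = struct.pack(f"<{len(codes)}i", *codes)
    return zlib.compress(raw, 9)

def decompress_with_tolerance(blob, tolerance):
    """Invert the quantization; values are recovered up to the tolerance."""
    raw = zlib.decompress(blob)
    codes = struct.unpack(f"<{len(raw) // 4}i", raw)
    step = 2.0 * tolerance
    return [c * step for c in codes]

random.seed(0)
# Made-up data standing in for 100 PCA-ResFeats vectors of 60 values each.
values = [random.gauss(0.0, 10.0) for _ in range(6000)]
tolerance = 0.5

blob = compress_with_tolerance(values, tolerance)
restored = decompress_with_tolerance(blob, tolerance)

max_err = max(abs(a - b) for a, b in zip(values, restored))
float32_size = 4 * len(values)
print(float32_size, len(blob), max_err)
```

A larger tolerance yields coarser codes and a smaller compressed size, which mirrors the trade-off between compression ratio and classification accuracy examined in the experiments.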

Experiments

Datasets

Six datasets were used to assess the quality of the image classification methods. Four of them were used by the authors of [14, 15]: MLC2008, Caltech-101, Caltech-256, and Oxford Flowers. We added the EuroSAT and Dog vs Cat datasets, which were selected for further evaluation because they come from different domains and have different difficulty levels. Table 1 provides a summary of information about the datasets used in the experiments, such as the number of classes, the number of samples given in thousands, the size of the smallest class, the size of the images, and the difficulty of the dataset.

Experimental settings

Some datasets proposed for evaluation are imbalanced, which negatively affects the classification. To perform the classification, we balanced these datasets as follows: we performed 10 draws

Classification

Following the original work on ResFeats [14, 15], we used the same type of classifier, an SVM with a linear kernel, and the same accuracy metric. During the initial research, dozens of experiments were performed with different SVM kernels: linear, radial basis function (RBF), polynomial, and sigmoid. However, none of the kernels performed noticeably better than the others. Therefore, in this work we only present results with a linear kernel.

Results

In the following results, we refer to the

Various combinations of ResFeats

The results of different ResFeats combinations are compared in Table 2. The output of the ResNet-50 network (column

Classification results for various ResFeats combinations on six datasets. (4a) denotes only the last layer of ResNet-50 after the average-pooling (i.e., “standard” ResNet-50 feature extraction); all others are combinations of (2), (3), and (4) layers after the max-pooling; the last combination 2_3_4 denotes the basic ResFeats, described in [15]


The best results were obtained (for half of the datasets) for concatenated outputs of the second and third residual units. Subsequent layers of the residual units convey different information, so their combinations may provide better results, depending on the tested dataset. Results in Table 2 indicate that the first choice can be just the combination (2)

Several thousand experiments have shown that storing data in the

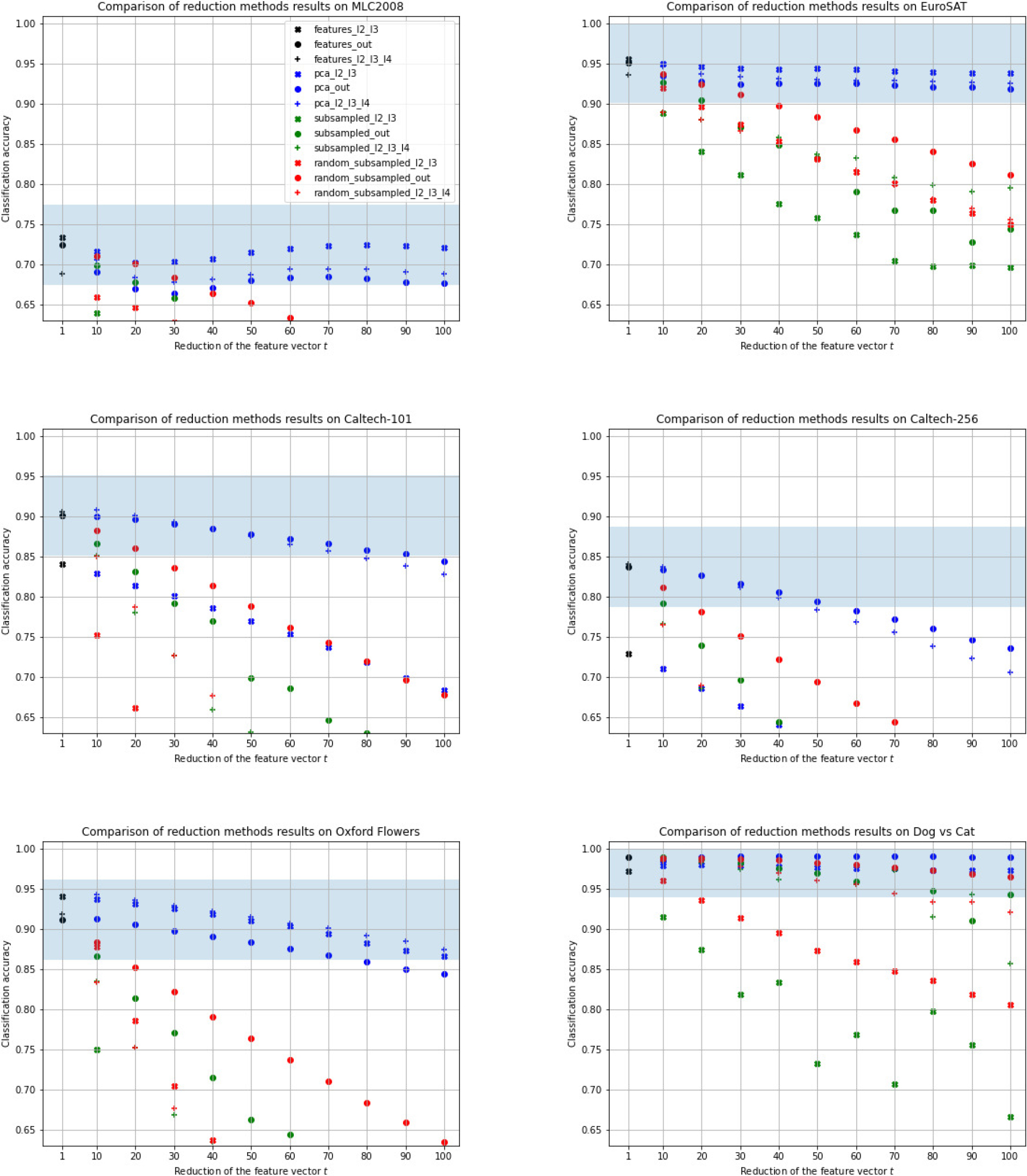

Since it was suspected that ResFeats convey redundant information, appropriate techniques to reduce their size were used. For ease of comparison, let

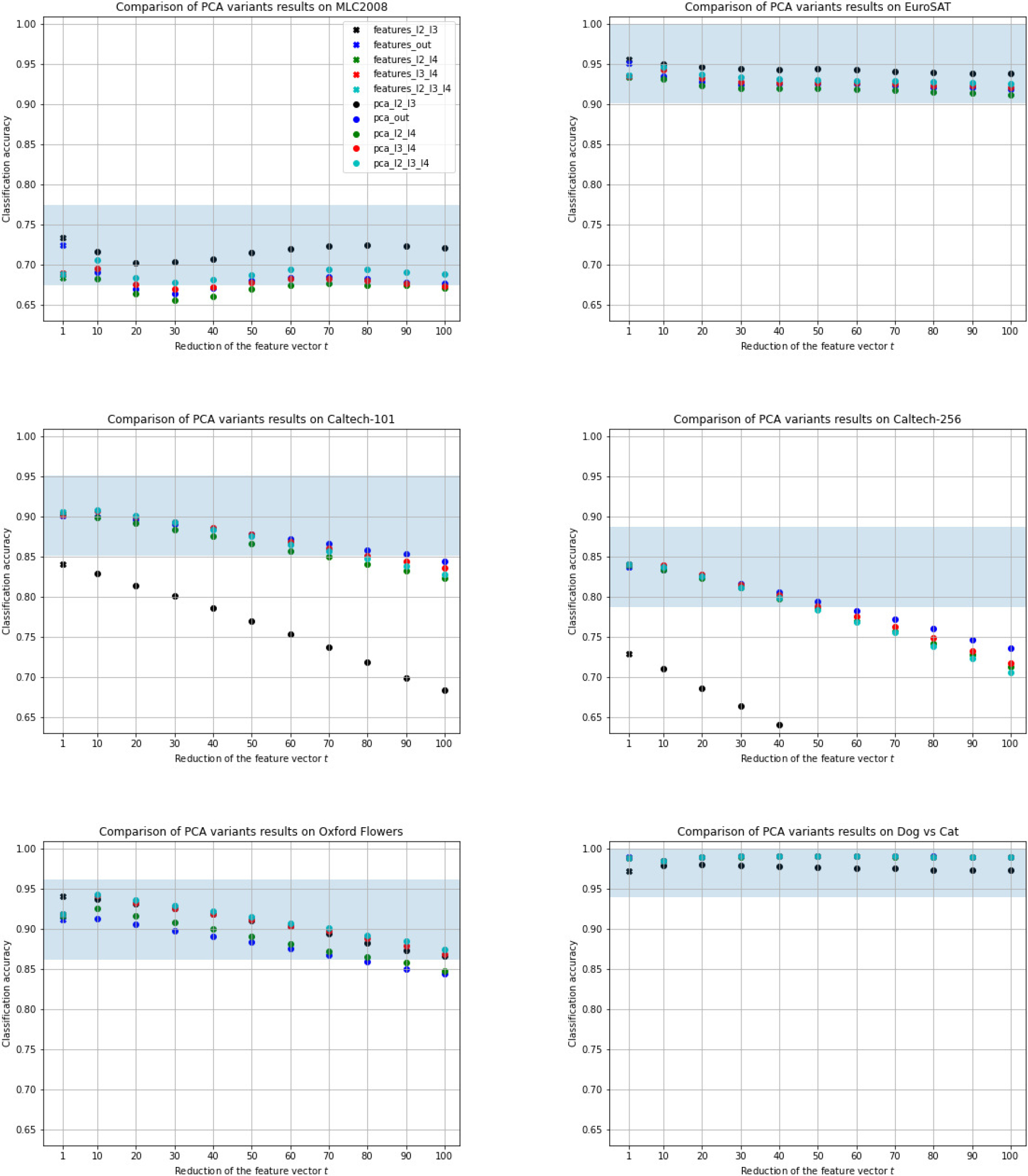

A comparison of classification results on various datasets depending on the dimensionality reduction factor

A comparison of classification results on datasets depending on the dimensionality reduction factor

For all datasets, the best results were obtained by using PCA. Increasing the dimensionality reduction factor lowers the classification results, but the decline is slowest with PCA. Reducing the feature vector 60-fold keeps the drop in accuracy within the previously assumed threshold of five percentage points for almost all datasets (the exception is Caltech-256, where the result is slightly lower). Moreover, for half of the datasets (the simpler ones), even after reducing the feature vector 100 times, the results remain similar to those obtained with the basic ResFeats. On the other hand, choosing a subset of the coordinates of the feature vector gives lower results, while it is usually better to randomize a subset of coordinates than to take every
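The two coordinate-selection strategies can be sketched as follows. The exact sampling scheme used in the experiments is not fully specified in the text, so the evenly spaced variant below is an assumption, as are the function names.

```python
import random

RESFEATS_LENGTH = 3584  # length of the basic ResFeats vector

def random_subset(length, k, seed=0):
    """Draw k distinct coordinate indices uniformly at random (sorted for readability)."""
    rng = random.Random(seed)
    return sorted(rng.sample(range(length), k))

def regular_subset(length, k):
    """Take k indices spread evenly over the vector (assumed regular sampling)."""
    step = length / k
    return [int(i * step) for i in range(k)]

def select(vector, indices):
    """Keep only the chosen coordinates of a feature vector."""
    return [vector[i] for i in indices]

random_idx = random_subset(RESFEATS_LENGTH, 60)
regular_idx = regular_subset(RESFEATS_LENGTH, 60)

# Using the index itself as a dummy feature value makes the selection visible.
dummy_vector = list(range(RESFEATS_LENGTH))
print(select(dummy_vector, regular_idx)[:3], select(dummy_vector, random_idx)[:3])
```

The same index list has to be reused for every image so that the reduced vectors stay comparable.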

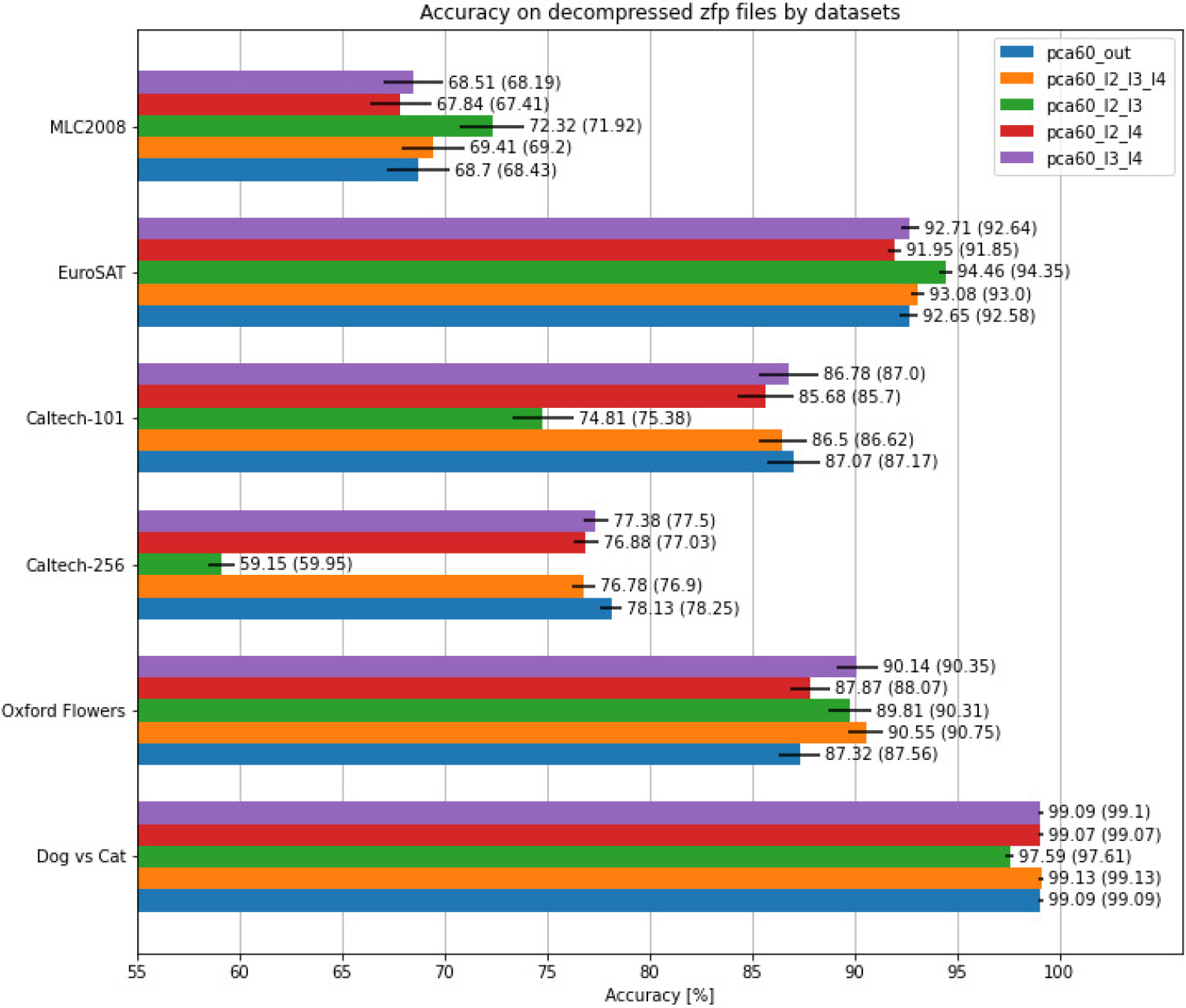

Classification results on the data obtained after decompression of ZFP compressed PCA-ResFeats with 60 components and the tolerance

Compression ratios of ZFP PCA-ResFeats representations, depending on the tolerance

As in the previous experiments, on the MLC2008 and EuroSAT datasets the PCA-ResFeats classification achieved the best results for combinations of outputs from the second (2) and third (3) residual units. Unfortunately, for the two more difficult Caltech datasets, significantly lower results were obtained. The results of the other feature combinations are close to each other. Reducing the feature vector 60-fold compared to the basic ResFeats by means of PCA (to get 60 components that explain most of the variance) results in a deterioration of no more than the assumed five percentage points relative to classification with the (4a) layer (i.e., the ResNet-50 output before the fully connected layer) for all datasets except the most difficult one, Caltech-256, for which we obtained slightly worse results. This means that each image can be represented by 60 float16 values, occupying only 120 bytes, which is about a 120-fold reduction in storage size compared to the basic ResFeats stored as float32. For the simplest dataset, Dog vs Cat, even after reducing the feature vector 100 times, i.e., selecting only the 36 most important components (only 72 bytes per image), the classification results remain at the same level as for the basic ResFeats (i.e., about 99%). Thus, the best-performing number of PCA-ResFeats components has to be chosen experimentally for each dataset.
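The per-image sizes quoted above can be checked with a few lines of arithmetic (the constant and function names are hypothetical):

```python
FLOAT32_BYTES = 4
FLOAT16_BYTES = 2

def bytes_per_image(n_values, bytes_per_value):
    """Storage needed for one feature vector."""
    return n_values * bytes_per_value

resfeats_f32 = bytes_per_image(3584, FLOAT32_BYTES)  # basic ResFeats as float32
pca60_f16 = bytes_per_image(60, FLOAT16_BYTES)       # 60 components as float16
pca36_f16 = bytes_per_image(36, FLOAT16_BYTES)       # 36 components as float16

ratio60 = resfeats_f32 / pca60_f16  # roughly 119.5, i.e. about 120-fold
ratio36 = resfeats_f32 / pca36_f16

print(resfeats_f32, pca60_f16, pca36_f16, round(ratio60, 1), round(ratio36, 1))
```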

To make the storage and transfer of the PCA-ResFeats representation even more compact, we propose to use the ZFP compression method of its floating-point values [45]. Since this is a lossy compression technique, a comparison was made to see how the classification results are affected by the compression ratio, which is controlled by a tolerance parameter. Namely, a tolerance of

Comparison of classification results (with standard deviation bars) before ZFP compression (values in parentheses) and after decompression of ZFP compressed data, with a tolerance

Figure 6 shows a comparison of classification results (including standard deviations) after decompression of ZFP compressed data with a tolerance

PCA-ResFeats can be effectively used not only directly in the classification task but also in the well-known CBIR problem [48, 49]. Experiments were performed on the most challenging Caltech-256 dataset in two versions: balanced minimal (with 80 random images in each class, analogous to the previous classification task) and imbalanced (original). The datasets from both versions were divided into a training set and a test set in a ratio of 6:4 in 10 splits. On the training dataset, the images were preprocessed and passed through a network to determine the float16 basic ResFeats (2)

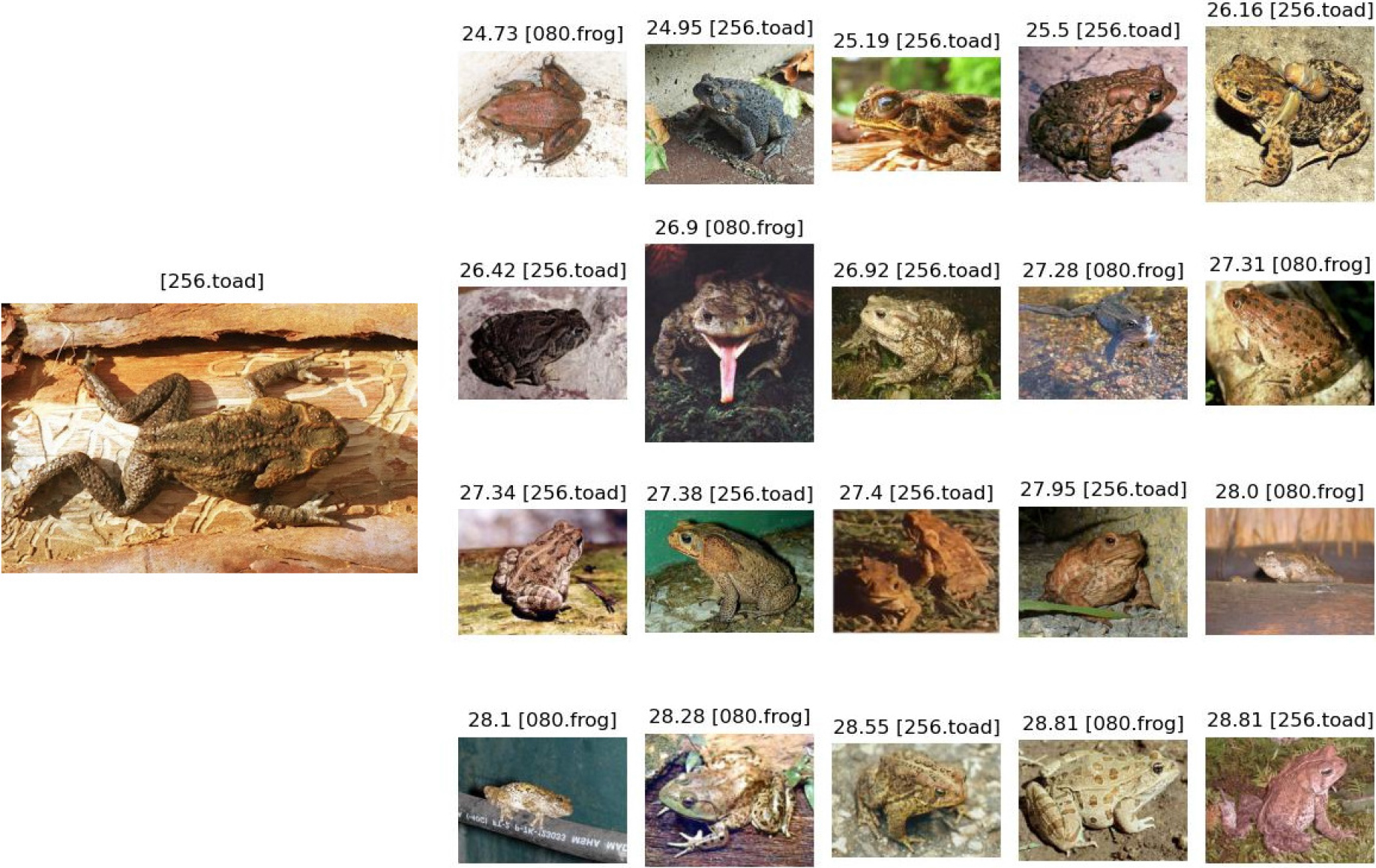

On the left, the query image (256_0081.jpg) from the Caltech-256

For evaluation, the metrics commonly used in the CBIR tasks were used: precision for

where

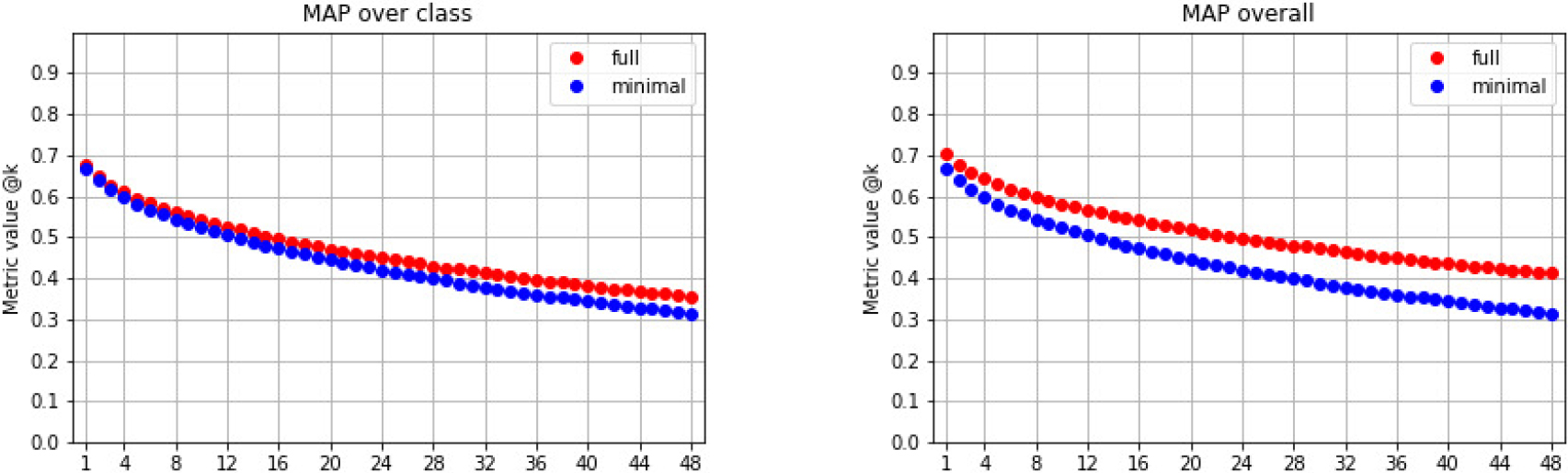

MAP for the full and minimal version of Caltech-256, along with a comparison to SOTA method, for

MAP results comparison on minimal Caltech-256 version

Figure 7 shows an example of a CBIR task. It presents the first 20 images with the smallest distance to the query image on the left. In this interesting case, just 60% of the responses indicate the correct class (i.e., “toad”), while the other returned images, not belonging to the valid class, are also “visually” similar and probably only an expert in the field can better distinguish between “toad” and “frog” classes.
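For a single query such as this one, precision at k and average precision can be computed from a ranked list of relevance flags. The sketch below uses the standard textbook definitions, which may differ in detail from the formulas used in the evaluation; the flag pattern is made up and only reproduces the 60% figure.

```python
def precision_at_k(relevant_flags, k):
    """Fraction of the first k returned images that belong to the query class."""
    return sum(relevant_flags[:k]) / k

def average_precision(relevant_flags):
    """Mean of precision values taken at the rank of every relevant result."""
    hits = 0
    total = 0.0
    for rank, is_relevant in enumerate(relevant_flags, start=1):
        if is_relevant:
            hits += 1
            total += hits / rank
    return total / hits if hits else 0.0

# Hypothetical ranking with 12 correct answers among the first 20 (60%).
flags = [True, True, False, True, True, False, True, False, True, True,
         False, True, False, True, True, False, True, False, True, False]

p20 = precision_at_k(flags, 20)
ap = average_precision(flags)
print(p20, round(ap, 4))
```

Averaging `average_precision` over all queries gives a mean average precision in the usual sense.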

The mAP (based on Eq. (5)) for Caltech-256 reached 46.73% in the full version and 43.02% in the minimal (balanced) version. Figure 8 represents MAP@k from Eqs. (3) and (4), respectively, as a function of the number

Table 6 compares the results of the proposed method based on PCA-ResFeats with other published methods used in the CBIR task for Caltech-256. The results clearly show that the proposed method performs about four percentage points better in the CBIR problem than other published methods, even choosing the least favorable way to compute MAP (43.02% vs. 38.98%). In addition, Caltech-256 contains very similar classes, such as “frog” and “toad”, as shown in Fig. 7.

Based on the results, the proposed PCA-ResFeats with an experimentally determined length of 60 components are particularly interesting. Table 7 shows a cross-sectional summary of the averaged accuracy results obtained for the classification variants considered.

Comparison of classification results with variants of ResFeats on six datasets

Comparison of our results with SOTA methods

The storage (in MB) required to store images vs. PCA-ResFeats in different formats and representations; (

For half of the datasets, the best classification results were obtained for ResFeats extracted from the output of the second (2) and third (3) residual units. In addition, these features have the shortest length (compared to other combinations). However, for the two Caltech datasets they give significantly worse classification results than the other feature combinations. Therefore, for these two complicated datasets, the best trade-off between accuracy and feature size is the output (4a) of ResNet-50 as the image representation, since its classification results are closest to the best ones.

Using PCA, dimensionality can be reduced by up to several orders of magnitude. Our research has shown that selecting only 60 principal components reduces the feature length almost 60-fold while keeping the drop in classification accuracy within 5 pp of the raw representation. The best results were obtained for PCA-ResFeats layers (2)

PCA-ResFeats can be further compressed using the ZFP method to obtain representations that are several times smaller. Table 9 shows how much disk space (in MB) each training dataset (in which all classes have the same number of samples) occupies in the representations considered.

The column (

By storing a dataset as ZFP compressed PCA-ResFeats with

The CBIR task completion time was also measured for the balanced Caltech-256. It took 2593.44 seconds to perform CBIR on features of length 3584 extracted from the second, third, and fourth layers of ResNet-50. The same experiment took 49.14 seconds when it included the PCA calculation and the computation of PCA-ResFeats of size 60 from the basic features, and just 45.55 seconds on cached PCA-ResFeats (without determining them). This corresponds to a more than 50-fold reduction in computation time (about 53-fold and 57-fold, respectively) and shows the advantage of using our proposed features for this task. A possible additional cost is access to the database containing the originals in order to return a user-interpretable image. However, this is still cheaper than computations performed on longer features.

All experiments except CBIR were repeated 100 times (10 random splits for each of the 10 drawn datasets) for each combination of method, its parameters, and the six datasets. This gives a total of more than 222,000 experiments. These have shown that our proposed PCA-ResFeats perform well in image classification while achieving a high compression ratio for the representation of these datasets.

The method also has potential practical applications. A huge image database can be stored hierarchically: the images reside on a large storage device, disk, or tape (which is regaining popularity as a storage medium [1]), combined with a high-speed layer that holds the equivalent image representations (PCA-ResFeats). The experiments have shown that in such a case it is worthwhile to perform CBIR on small but informative representations and then perform another search limited to the images obtained in the previous step. Such a process can be repeated iteratively for representations compressed to varying degrees. With successive iterations, the number of examples returned can also be reduced, further optimizing the process. Using our approach can significantly speed up the entire process.
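The iterative search described above can be sketched as a two-stage, coarse-to-fine ranking. The toy vectors, the Euclidean distance, and the function names are assumptions made for illustration; here the coarse representation is simply the first two coordinates of the fine one.

```python
import math

def top_k(query, database, candidate_ids, k):
    """Return the k candidate ids whose vectors are closest to the query."""
    ranked = sorted(candidate_ids, key=lambda i: math.dist(query, database[i]))
    return ranked[:k]

def coarse_to_fine(query_coarse, query_fine, coarse_db, fine_db, shortlist, k):
    """Stage 1: shortlist on compact vectors. Stage 2: re-rank the shortlist on richer vectors."""
    candidates = top_k(query_coarse, coarse_db, range(len(coarse_db)), shortlist)
    return top_k(query_fine, fine_db, candidates, k)

# Toy database: richer 4-value vectors and their compact 2-value counterparts.
fine_db = [
    [0.0, 0.0, 0.0, 0.0],
    [1.0, 0.0, 0.0, 0.0],
    [0.0, 1.5, 5.0, 5.0],
    [3.0, 3.0, 0.0, 0.0],
    [0.1, 0.1, 0.2, 0.0],
]
coarse_db = [v[:2] for v in fine_db]

query_fine = [0.0, 0.0, 0.2, 0.0]
query_coarse = query_fine[:2]

result = coarse_to_fine(query_coarse, query_fine, coarse_db, fine_db, shortlist=3, k=2)
print(result)
```

Only the shortlisted items are compared on the longer vectors, so the expensive distance computations scale with the shortlist size rather than with the size of the whole database.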

All accuracy values, averaged results with standard deviations, ZFP compression reports and also other CBIR images are available here:

The experiments were conducted on a server with an AMD Ryzen Threadripper 3990X 64-Core Processor. The code was written in Python using the PyTorch library.

Conclusions and future work

In this paper, we propose methods to improve the efficiency of image analysis using PCA-ResFeats, features derived from the ResNet-50 convolutional neural network. This is a significant extension and improvement of the results obtained by the method of Mahmood et al. [15]. The computation of PCA-ResFeats consists of several steps. First, ResFeats, feature vectors consisting of no more than 3584 floating-point values, are extracted as a concatenation of the outputs (after max-pooling) of residual units of ResNet-50 pretrained on ImageNet. We also tested different combinations of the output layers, showing their usefulness. Then, thanks to PCA, we reduce the size of the feature vector to 60 floating-point values. Our experiments indicate that these values can be stored in the float16 format without losing information. The proposed technique gives classification accuracy within 5 pp of that of the much larger basic ResFeats on the benchmark datasets MLC2008, EuroSAT, Caltech-101, Caltech-256, Oxford Flowers, and Dog vs Cat. At the same time, it achieves a significant storage reduction.

The second contribution of this paper is the further compression of PCA-ResFeats with the ZFP method. An additional compression ratio of 3.5 and up to about a 220-fold memory reduction can be achieved compared to storing basic ResFeats.

Our approach has also been tested in the CBIR task. For the difficult Caltech-256 dataset, the proposed PCA-ResFeats with 60 components outperformed other published methods, which is the third contribution of this paper. In conclusion, compared to other studies, the developed method of representing images with the semantic local descriptors PCA-ResFeats, combined with ZFP compression, achieved state-of-the-art results. It can also be successfully used to prepare summaries of datasets.

In the future, we will explore the use of other network architectures, such as densely connected convolutional networks (DenseNet) [53] or MobileNet [54, 55], as well as network ensembles [56], to extract raw features and see if they contain redundant information with little impact on classification. We will also test the application of our approach to medical data [57, 58, 59, 60]. We plan to generalize our approach to 3D representations of medical images [61], such as whole slide imaging (WSI) in histopathology. In this case, our approach can be very effective due to the enormous size of the WSI data. We will also compare the results of PCA-ResFeats extracted from the network pretrained on ImageNet with those extracted from the network after fine-tuning on the domain dataset. In this case, our method can be used in many other fields that use compact image representations, e.g., construction, various production lines, transport, autonomous vehicles, etc. [62, 63, 64, 65, 66]. The next subject of study will be a fusion of the features obtained from different neural networks. Another direction for future research may be image preprocessing. Perhaps the approach presented in [67] would enable the application of our method to thermal images. We can also explore modern image binarization methods [68].

Footnotes

Acknowledgments

This work has been supported by the AGH University of Krakow under subvention funds no. 16.16.230.434.