Abstract

Left bundle branch block is a cardiac conduction disorder that occurs when the electrical impulses that control the heartbeat are blocked or delayed as they travel through the left bundle branch of the cardiac conduction system providing a characteristic electrocardiogram (ECG) pattern. A reduced set of biologically inspired features extracted from ECG data is proposed and used to train a variety of machine learning models for the LBBB classification task. Then, different methods are used to evaluate the importance of the features in the classification process of each model and to further reduce the feature set while maintaining the classification performance. The performances obtained by the models using different metrics improve those obtained by other authors in the literature on the same dataset. Finally, XAI techniques are used to verify that the predictions made by the models are consistent with the existing relationships between the data. This increases the reliability of the models and their usefulness in the diagnostic support process. These explanations can help clinicians to better understand the reasoning behind diagnostic decisions.

Introduction

Automated ECG-based diagnosis is a classical field [1, 2, 3] with recent improved research due to the advances in Artificial Intelligence. Left bundle branch block is a cardiac conduction disorder that occurs when the electrical impulses that control the heartbeat are blocked or delayed as they travel through the left bundle branch of the cardiac conduction system. This results in a characteristic electrocardiogram (ECG) pattern, which progresses from incomplete LBBB (LBBB) to strict LBBB (sLBBB), defined by a prolonged QRS duration, a QS or rS pattern in the QRS complexes at leads V1 and V2 and the presence of mid-QRS notch/slurs in 2 leads within V1, V2, V5, V6, I and aVL [4]. sLBBB is associated with various underlying cardiac conditions, including hypertension, coronary artery disease, cardiomyopathy, and valvular heart disease. The clinical significance of sLBBB lies in its potential to cause cardiac dysfunction and the risk of developing heart failure, arrhythmias, and sudden cardiac death.

Recently, sLBBB has gained much attention since it was associated with clinical outcome of cardiac resynchronization therapy (CRT). CRT is a treatment for individuals with heart failure and conduction abnormalities. It is accomplished by a biventricular pacemaker that delivers electrical impulses to both the left and right ventricles, which helps synchronize their contractions and improve cardiac function. sLBBB was linked to improvements in CRT either in simulations [5] or patient studies [6, 7]. Accompanying this revision, an initiative in 2018 called for algorithms to detect strict LBBB in a full automatic way [8]. In general, automatic diagnosis of LBBB requires the correct detection of the morphological features that separate sLBBB from LBBB, grouping the latter with no LBBB at all. However, some individuals with LBBB may eventually develop sLBBB. This progression can occur due to the worsening of underlying cardiac disease, such as hypertension, coronary artery disease, or cardiomyopathy, or due to the development of scar tissue in the left ventricle. Therefore, it is important to identify and manage any underlying cardiac conditions that may be associated with the ECG changes. This can help prevent complications and improve patient outcomes.

The diagnosis of left bundle branch block can also be made using machine learning (ML) algorithms applied to ECG data. Studies have shown that machine learning algorithms can be highly accurate in diagnosing left bundle branch block from ECG data, with reported acuracies ranging from 70% to 82% [8]. Even though ML models can speed up the LBBB diagnosis, they generally act as black boxes, giving poor clues about the physiopathological processes underlying incomplete or strict LBBB. To overcome this flaw, techniques of Explainable machine learning (XAI) have arisen.

XAI [9, 10, 11, 12] refers to a set of techniques and methods used to make machine learning models and their predictions more transparent and interpretable to human users. The need for XAI arises from the increasing complexity of machine learning models, such as deep neural networks, which can have millions of parameters and layers that are difficult for humans to understand. XAI techniques [12] can be used to extract meaningful information from these models and provide explanations for their behavior. One of the most common XAI techniques is the use of feature importance methods, which identify the features in the input data that are most important for the model’s predictions. This can provide insights into the model’s decision-making process and help identify potential biases or errors in the model. Another widely used feature attribution method is Shapley values[13, 14], which, in addition to global explanations, allow for local explanations, i.e., determining the influence of each feature on a specific prediction. XAI can be useful in diagnosing Left Bundle Branch Block by providing insights into how machine learning algorithms make predictions and identifying the factors that contribute to the prediction.

Although there is a gap between making the correlations learned by a model transparent and establishing a causal relationship of why something happened [15], XAI techniques, used in conjunction with domain experts, can be useful to increase the trustworthiness in the models and identify the features in ECG data that are most important for the prediction of LBBB, providing valuable information to clinicians.

In this paper, a reduced set of biologically inspired features extracted from ECG data is proposed and used to train a variety of machine learning models for the LBBB classification task. Then, different methods are used to evaluate the importance of the features in the classification process of each model and to further reduce the feature set while maintaining the classification performance of the models. The performances obtained by the models using different metrics improve those obtained by other authors in the literature on the same dataset. Finally, XAI techniques are used to verify that the predictions made by the models are consistent with the existing relationships between the data. This increases the reliability of the models and their usefulness in the diagnostic support process.

The rest of this paper is organized as follows: Section 2 and subsections introduce the proposed methodology, the dataset structure, and the feature extraction and preprocessing algorithms. Section 3 contains the results obtained in the classification process by all the models and strategies, including a dedicated Section 3.4 for the explainability results. Section 4 contains a detailed discussion of the results in comparison with other approaches in the bibliography. Finally, Section 5 summarizes the conclusions, main contributions, and future work.

Materials and methods

Methodology

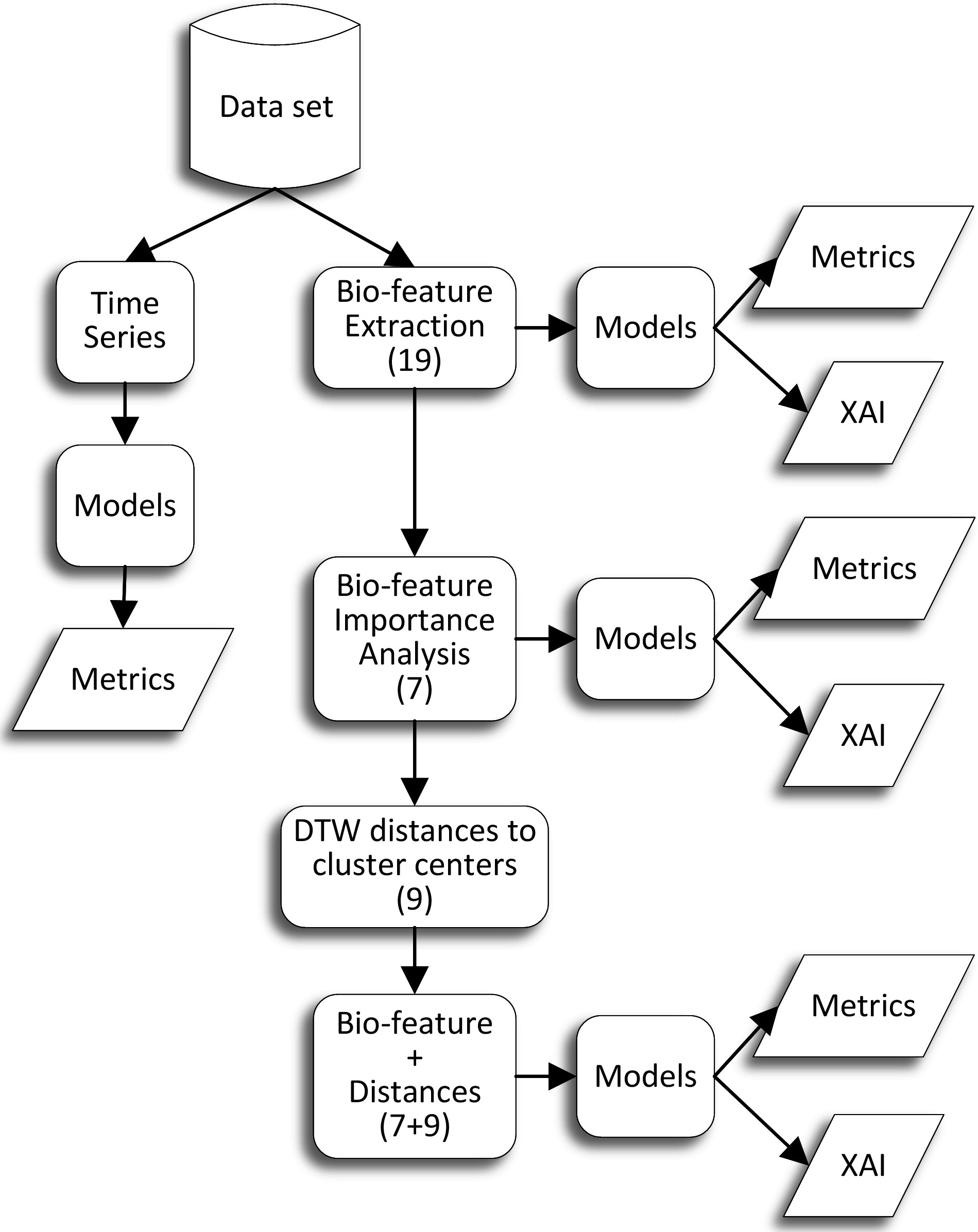

Proposed methodology.

The proposed methodology is summarized in Fig. 1. The ECG dataset used in this work is publicly available at the Telemetric Holter Warehouse project (see Section 2.2 for details). In a first preprocessing step, the ECG data were transformed into vectorcardiographic space time series (Section 2.3). We then used some of the state-of-the-art algorithms specifically designed for time series classification to train machine learning models to detect three classes: NoLBBB, LBBB and sLBBB (Section 3.1). These models were supposed to be the best performing and achieve the highest classification accuracy, since they have all the available information of each data sample (800 timesteps

The drawback of models that process time series directly, or that transform them into hundreds or thousands of statistical features automatically extracted from the time series [16], is that they are very complex, slow to train, and difficult to interpret. This is because the extracted features, if any, generally have no semantic value in the application domain, or even if they do, it is difficult for a human to analyze and understand the relationships between the (typically) hundreds of such variables that the algorithms extract and process.

Therefore, our research was aimed at extracting a reduced set of biologically meaningful features that could encode most of the information contained in the time series. In a first attempt, we computed a set of 19 bio-inspired features (defined in Sections 2.3 and 2.4). We then performed a correlation analysis to determine redundant features and a feature importance analysis using several algorithms to determine the most relevant variables for some classification models (Section 3.2). This resulted in a new reduced dataset of just 7 features for each sample.

Finally, we wanted to test whether the results on the features dataset could be improved using knowledge extracted from the time series. To this end, we used a clustering algorithm on the time series dataset to find the centroids (a kind of representative time series for each class) using the DTW [17] distance. Next, we used again the DTW to compute the distance of each time series to the centroids of each cluster. Finally we created a new dataset with 16 features, the 7-biologically relevant features, plus the 9 distances to the clusters.

We then trained machine learning models on the three bioinspired datasets and compared their performance metrics among themselves and with the time series models. In addition, we compare our results with those of other works in the literature (that deal only with the simpler case of binary classification). Finally, a post hoc explainability analysis was performed using SHAP values (Section 3.4) to determine whether the predictions made by the models were consistent with the existing relationships that could be observed in the data.

Data were obtained from the E-OTH-12-0602-024 database, publicly available at the Telemetric Holter Warehouse project (THEW) [18], as part of the initiative of the International Society for Computerized Electrocardiology (ISCE), in 2018 [8]. Data comprise 602 10-second ECG recordings of heart failure patients included in the MADIT-CRT clinical trial. The 12-lead high-resolution ECGs were recorded before CRT implantation using 24-hours Holter recorders (H12

ECG preprocessing

Transformation matrix for Inverse Dower transformation (IDT).

Transformation matrix for Inverse Dower transformation (IDT).

ECG data were transformed to the vectorcardiographic space (VCG) by means of the inverse Dower matrix as shown in Table 1. This transformation poses the orthogonal leads,

Signals were delineated by means of a wavelet-based algorithm using the WT-delineator library in Python [20]. The delineation algorithm detects the onset and offset of the QRS and T-wave, from which the QRS and T loops were constructed. In most of the cases, a manual correction was needed afterwards due to extremely aberrant ECG morphologies. From the latter ECG waves, a set of 19 features were obtained, as follows.

QRS-T angles were obtained for the planes

with

where

where

The QRS and T areas were calculated by summing up all the squared contributions from the intervening leads. Following, we provide the formula for the QRS area in the

Spatial variance quantifies the maximal Euclidean distance of every lead within an ensemble from the lead ensemble average. In [21] there is a detailed description of the method for the ECG case. In this piece of work, all three VCG leads were utilized for spatial variance computing. Briefly, a 80-ms window centered around the QRS complex in lead

Performance of the time series models

Performance of the time series models

List of 19 scalar features extracted for each record in the ECG dataset (upper), and the 7 most relevant features finally selected (lower)

Correlation analysis between leads also contributes to spatial heterogeneity, restricted to pairs of leads. In preserved conduction patients, the intrinsic deflections of both leads are conserved, then the correlation signal will be centered at zero ms. In the presence of conduction disorders, however, the maximum of the cross-correlation signal will present a latency. The original correlation marker was presented on ECG leads

with

The same computation was accomplished for the pair of leads

On the other hand, the width of the cross-correlation signal (Width_xy, in ms) was measured at 0.7*peak amplitude of the correlation between pair of leads

Classification for time series dataset

In order to have some baseline models to compare, several time series classification models have been developed over leads

For the development of the time series models, we used the sktime[16] framework that has more than two hundred dedicated time series algorithms for classification, regression, clustering and anomaly detection. We selected some of the most promising models to test our dataset such as KNNTSC, a KNN (K-Nearest Neighbors) Time Series Classifier using DTW (Dynamic Time Warping) as the distance metric; CIF and DrCIF, which are two variations of the Canonical Interval Forest classifier; TDE (Temporal Dictionary Ensemble) a model that uses a bag of words representation from the Fourier Transform of the time series; RRotForest (Random Rotation Forest), that builds a forest of trees on random portions of the data transformed in features using PCA (Principal Component Analysis); HIVECOTEV2, a meta ensemble of several classifiers (DrCIF and TDE between others) that work on different domains; and finally, ROCKET (RandOm Convolutional KErnel Transform) and MultiROCKET, that use random convolutional kernels to transform time series data and then trains a linear classifier on the transformed features, which represent nowadays the state of the art in common time series classification benchmarks.

Identification of relevant bio-inspired features

As mentioned before, our main objective was the extraction of relevant features from the ECG records that have a predictive power similar or better than the time-series models but are simultaneously smaller in number, easier to train, and more understandable for the physicians. To this purpose, from the ECG time-series, a total of 19 bio-inspired features were extracted for each record as explained in Section 2.3 and used for the bio-inspired features tabular dataset, thus having a size of [560, 19] ([samples, features]). The list of extracted features is shown in the upper part of Table 3.

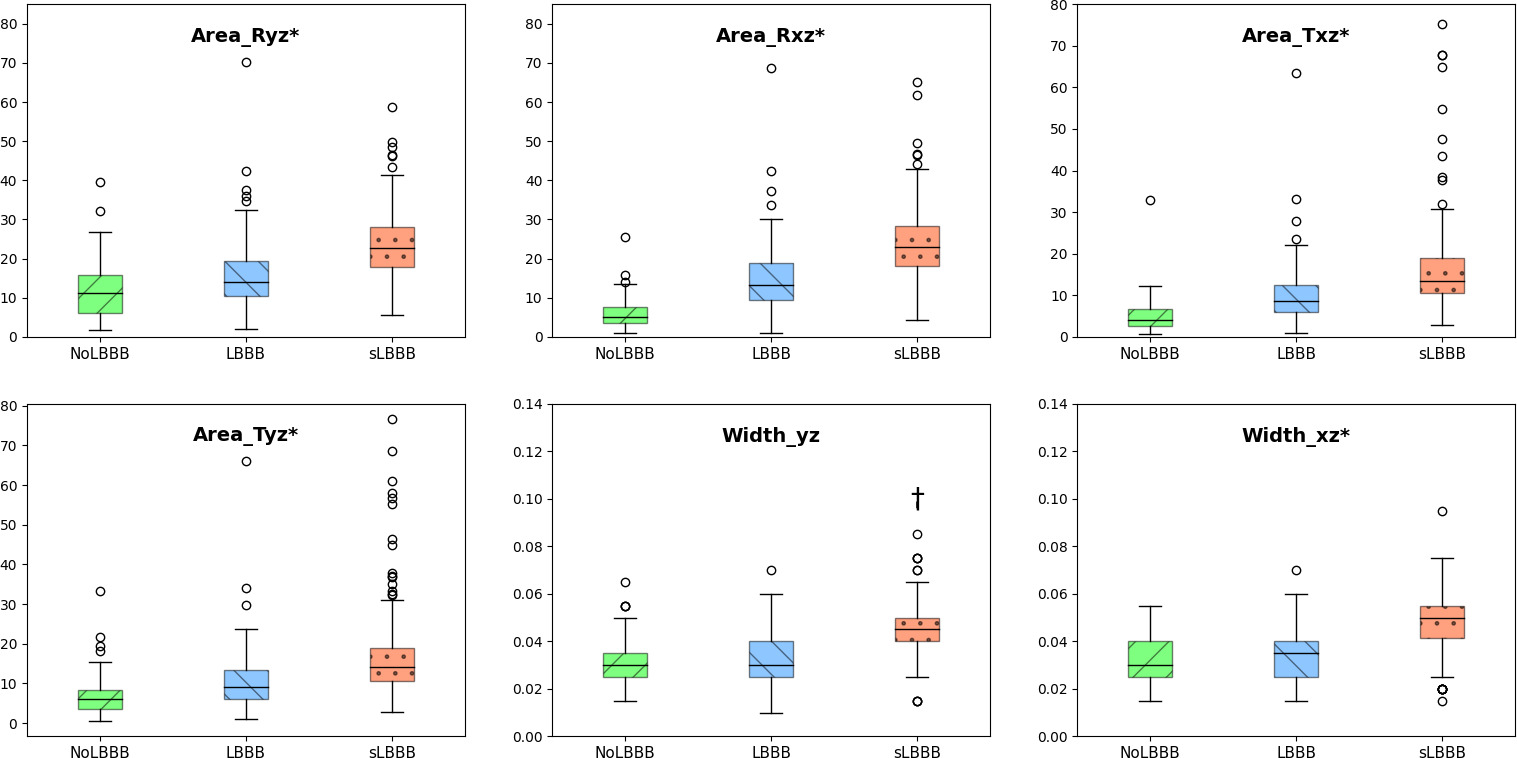

Boxplots for physiological features on classes NoLBBB, LBBB and sLBBB.

Figure 2 shows the boxplots of 6 representative physiological features used in the classification for NoLBBB, LBBB and sLBBB classes. Note that all of the features but Width_yz resulted in significantly different in pairwise comparisons among all three classes by the Bonferroni posthoc test following Analysis of Variance (ANOVA). In particular, areas either in the QRS or T loops significantly increased from NoLBBB to sLBBB in a progressive way (

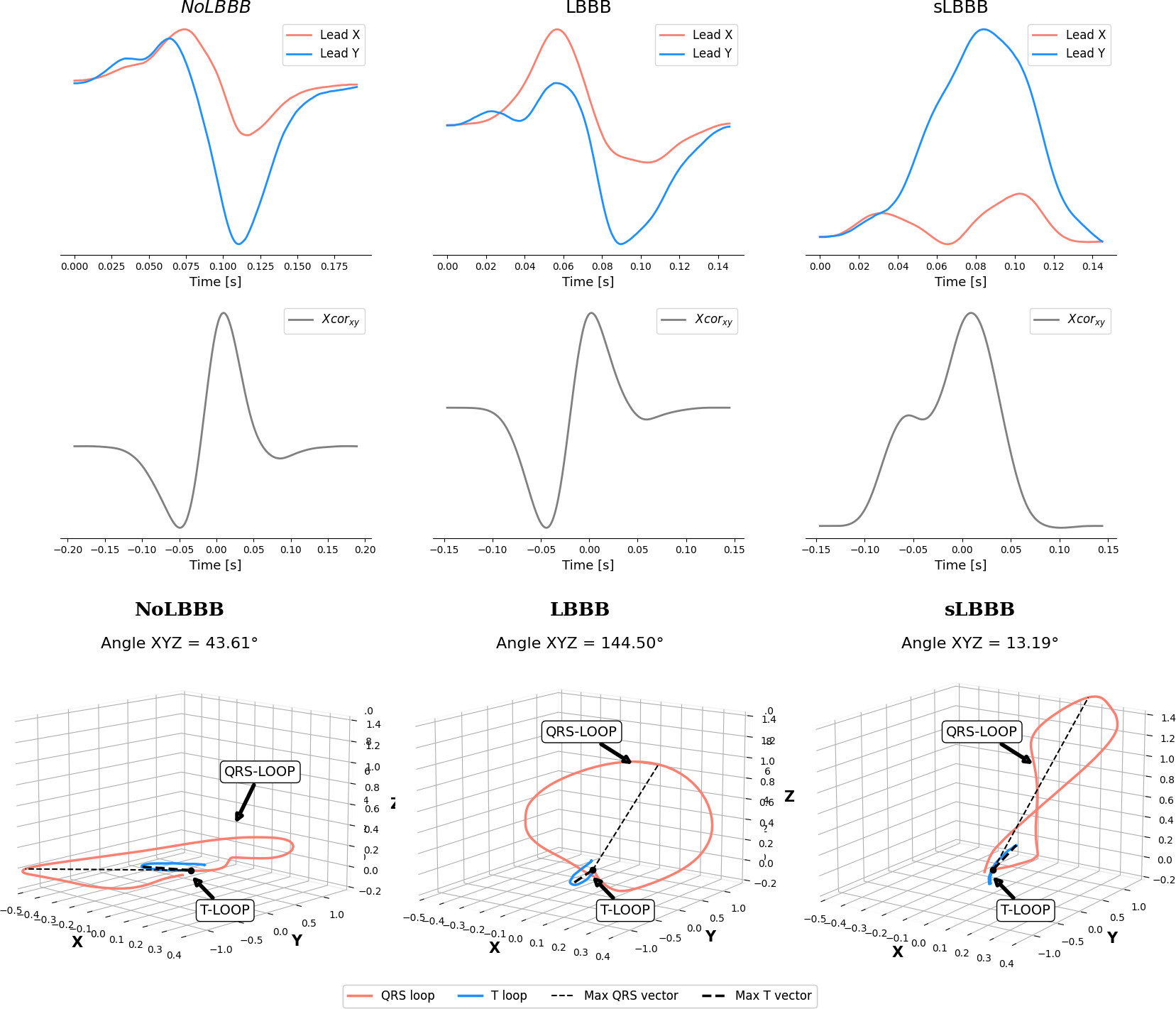

Representative example of physiological features for NoLBBB, LBBB and sLBBB patients.

A direct exploration of the physiological features showed that some of these were highly correlated, therefore a multicollinearity analysis was performed. Multicollinearity can lead to unstable and unreliable estimates of regression coefficients [30] and makes it difficult to distinguish the effects of each independent variable in the target, that is, it can hinder the explainability of the model. We used Pearson’s correlation coefficient and VIF [31] (Variance Inflation Factor) to determine the strength of multicollinearity and performed an iterative feature selection process to select a subset of independent variables that were not highly correlated. This feature selection process was complemented with a feature importance analysis. Feature importance refers to techniques that assign a score to independent features based on how useful they are at predicting a target variable. Therefore, this is a way to understand which features have the most impact on a model’s prediction. There are several methods for calculating feature importance, among others, the following were used in our case. Tree-based algorithms automatically compute importance scores (TIS) during training based on the reduction of the split points, like Gini impurity or entropy, that could be used to determine feature importance [32]. Univariate Feature Selection [33] (UFS) uses statistical test to select the features that are more correlated with the output variable. Recursive feature elimination [34] (RFE) recursively removes input features and fits a model on the remaining ones, finally it uses the model’s accuracy or any provided metric to select the subset of features that better predict the target variable. Permutation Feature Importance (PFI) [35] works by calculating the increment in the model’s prediction error when a feature value is randomly permuted. Finally, SHAP (SHapley Additive exPlanations) [14] is a method that can be used to determine the importance of features in a machine learning algorithm. It is based on the computation of SHapley values, which measure the influence of each feature on the model’s prediction. Therefore, SHAP is also one of the most relevant ML explanation methods.

As those feature importance estimators depend on the model used to predict the target variable, we used 3 of the most widely used algorithms for tabular datasets: Xgboost, Random Forest and SVM, a classical method [36, 37] with recent applications in many different fields [38], to build 3 base-models and evaluated all the mentioned feature importance estimators on them.

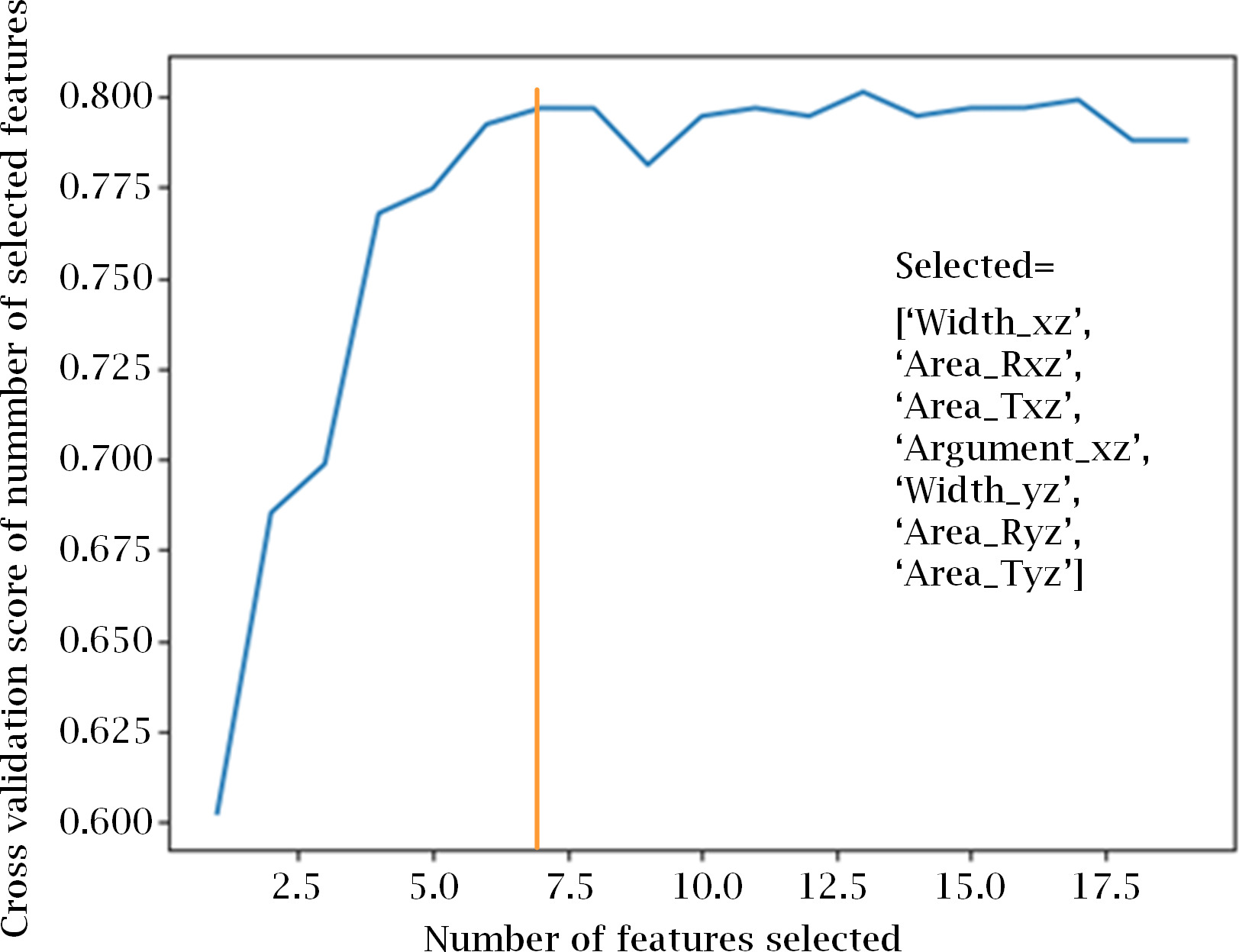

Recursive feature elimination with CV for automatic tuning of the number of features. Model’s accuracy greatly improves from using 1 up to 7 features, were it begins to saturate. Figure shows also the list of the the 7 selected features.

Determining feature importance for the Random Forest model: (a) RF tree split-entropy. (b) Univariate selection using ANOVA F-value. (c) Permutation Feature Importance. (d) SHAP values average impact on model output by class.

From the results of RFE with 5-fold cross-validation (CV) we estimated the optimum number of features as 7, as seen in Fig. 4. To select the most relevant and less correlated features, we took into consideration the 10 most important features provided by each method and model, and selected those that were common to a majority of them.

As an example, Fig. 5 shows the contribution for a Random Forest model. TIS, UFS and SHAP have 6 features in common between the first 10, while RFE has 5, and PFI, 4. The result of this analysis was a subset of relevant features, shown in the bottom part of Table 3, that we used to train our machine learning models on, as explained in the next section.

Finally, we wanted to test if results on the features dataset could be improved using knowledge extracted from the time series. To this point, we used a clustering algorithm on the timeseries dataset to automatically find the centroids of 3 clusters within the data. For time series, each centroid can be seen as representing the “mean observation” within a cluster across all the time steps, an is therefore another series. In multivariate timeseries dataset, the centroids could be used to understand the average behavior of each variable within each cluster.

We used the KNNTSC algorithm available in sktime, a version of the classical KNN for time series data, and fitted the model using both Euclidean an Dynamic Time Warping (DTW) distances. DTW [17, 39] is reportedly a better metric for time series clustering because it gives more robustness to the similarity computation. It replaces the one-to-one point comparison used in Euclidean distance with a many-to-one (and vice versa) comparison. This allows DTW to compare time series of different lengths and be invariant to time shifts, which is important when comparing time series data.

Next, we used again the DTW to compute the distance for each time series to the centroids of every cluster. Therefore, for each record in the dataset consisting in 3 timeseries (X, Y and Z components), we obtained 9 scalars: the DTW distance or each component to each centroid. Finally we created a new dataset with 16 features for each sample, consisting of the 7-biological relevant features, plus the 9 distances to the clusters.

Metrics of the base-models for the 19-features dataset

Metrics of the base-models for the 19-features dataset

Development of models for the 3 bio-features datasets was accomplished in a two stage process. In the first stage, a set of 15 well-known Machine Learning algorithms were trained on the features dataset, using a simple train-test split approach (80%–20% respectively), and mostly default or common-use set of hyperparameters. From the results obtained, shown in Table 4, we selected the 4 better performing algorithms, LDA, ET, RF and Xgboost, for further fine tuning.

Fine tuning was carried out in the second stage by using the following procedure. For each of the 4 algorithms, we defined 500 unique models using different combinations of hyperparameters based on a standard grid search. Each model was in turn trained using a 10-fold cross-validation schema, to ensure the consistence of the performance metrics.

For comparison purposes, we repeated this process for the original 19-features dataset, the 7-most-relevant features dataset and the 7-relevant features plus 9-distances-to-centroids dataset. In the end, 2000 different models were trained for each of the 3 feature-based datasets.

The 500 models of each machine learning algorithm were fitted and ordered by their mean accuracy over the 10 trained folds. We use accuracy in this case to select the best models, as it was the most relevant metric for our classification purposes and classes are not highly unbalanced, but F1-score would also had been a good choice. The scores of the best model for each algorithm on each of the 3 feature-based datasets are shown in Table 5. Precision, Sensitivity and F1-score have been averaged over the 3 classes.

Metrics for the best performing models on the 3 bio-inspired features datasets, for the 3-classes classification task

Feature dependence plots for the ET model on the 7feat+9dist dataset. Each row shows shap values for a given feature (Width_xz, area_Rxz, Area_Txz, Argument_xz), related to each class (left: NoLBBB, center: LBBB, right: sLBBB). Color corresponds to a second feature that has strongest interaction with the feature on the x-axis.

Local explanation for sample 347. Top: 3 force plots (one for each class: 0 for NoLBBB, 1 for LBBB, 2 for sLBBB) of the Shap values for this sample visualize how the 7 bioinspired features contribute to a specific prediction. Each force plot shows the base value for the class and the contributions from each feature to the final prediction. Red arrows indicate positive contributions, while blue ones indicate negative contributions. The length of the arrow indicates the magnitude of the contribution. Bottom: 7 Kernel Density Estimate (KDE) plots visualize the distribution of the 7 bioinspired features, by class. Superimposed, a dotted-red vertical line marks the value of that feature for this sample. The 8

It can be observed that the performance for the 7-feature dataset is only slightly lower than for the 19-feature dataset. This suggests that the previous feature importance analysis was correct and that most of the variance in the dataset can be explained by just the 7 most relevant features. Moreover, the best performance is consistently obtained for the 4 metrics for the 7feat+9dist dataset, with the Extremely Randomized Trees (ET) algorithm getting the highest absolute scores for each metric. This seems to support the idea that the 9 distances help the algorithm to slightly improve its predictive power.

Compared with the time series models, it should be noted that the bio-inspired features dataset achieves a slightly lower accuracy (82.64% vs. 83.75% for the best performing models), albeit still comparable, with the advantages of lower complexity and better explainability. To determine whether this difference is significant, a Kruskal-Wallis [40] hypothesis test was performed on the validation k-folds between the best models (accuracy over 80%) of both types, which resulted in p-values of 0.787, 0.974, 0.377 and 0.985 for the 4 metrics (accuracy, sensitivity, precision and F1-score respectively), all of them above 0.05. Thus, it can be affirmed that there is no significant statistical difference between the two cases, and our biological features are able to condense in a few values most of the information contained in the time series.

One commonly used method to determine feature attribution is Shapley values. Shapley Values rely on examining how each feature influences the predicted value of a model by generating many predictions based on a partial set of the features used by the model and comparing the results of the predicted values. In our case, the Python library SHAP [14, 41, 42] was used for the computation of the Shapley values. As an example, the SHAP values for 4 of the 7 relevant features are shown in rows in Fig. 6. Each column corresponds to the shap values for each of the classes. Within each plot, each point is the shap value corresponding to one of the samples for that feature and class. Positive shap values indicate that the corresponding values of the feature would contribute positively to the classification of the sample as belonging to that class. Negative shap values would indicate that the values of that feature are decreasing the probability of belonging to that class.

Note that there are biological parameters that serve to discriminate one class from the other two, but not between those two (e.g. Width_xz would separate only sLBBB from the other two classes, and Argument_xz, would separate only the NoLBBB from the rest), while other parameters serve to discriminate between the 3 classes, such as Area_Rxz and Area_Txz.

For instance, for Width_xz lower than 0.06 seconds, there are positive SHAP values for NoLBBB and LBBB, while for values greater than 0.06 seconds, positive SHAP values appear for sLBBB, indicating sLBBB. Area_Rxz and Area_Txz are different. If values are lower than 16, points to NoLBBB, between 16 and 20 to LBBB, and greater than 20 to sLBBB. The values for Area_Txz ranging from 0 to 5 account for NoLBBB, from those 5 to 15 indicate LBBB, and those greater than 15 sLBBB. Finally, if Argument_xz is lower than 5 points to NoLBBB, while if greater than 5 LBBB or sLBBB. It is worth mentioning that the yz plane with its features Area_Rxz, Area_Txz and Width_yz have followed a similar but slightly weaker pattern than their counterparts in the

In addition to global explanations, SHAP values can also provide local explanations, i.e., explanations of the model outcome (classification) for a single data sample. An example of this is shown in Fig. 7. The upper part of the figure shows 3 force plots corresponding to the 3 classes presenting the SHAP values for each of the 7 bioinspired features that explain the classification made by the model. This case corresponds to sample 347, labeled as class1 (LBBB), which the model has erroneously classified as class2 (sLBBB).

The force plots show that this sample was classified as class2 because SHAP values (Width_yz

We can try to compare the explanation that SHAP provides about the associations learned by the model with a basic statistical analysis of the data to see if the model is wrong or if the classification is consistent with the relationships that we can see in the data itself. This analysis has been performed in the lower part of Fig. 7, where the probability density functions (KDE) of each of the 7 bioinspired features are plotted for each of the classes.

In the KDE graphs, it can be seen how the values of the same features that contributed positively to belonging to class2 in the force graphs (Width_yz, Area_Rxz, Area_Ryz, Area_Txz and Area_Tyz) for this sample are indeed very typical of class2 , to a much greater extent than for classes 1 and 0. Therefore, these relationships have been correctly learned by the model.

KDE analysis also showed that Width_xz contributes positively to classes 0 and 1, which is also consistent with the model as can be seen from the corresponding force plots. However, the model considered that Argument_xz contributed positively to class0, but negatively, albeit very slightly, to class1, which in this case would be in disagreement with the observed distributions for this parameter.

Discussion

Metrics for the best performing models on the 3 bio-inspired features datasets, for the binary classification task

Metrics for the best performing models on the 3 bio-inspired features datasets, for the binary classification task

To the best of our knowledge, there are no other works in the literature that use this dataset to classify LBBB into 3 classes. Therefore, to evaluate our strategy, we decided to apply the same procedures that produced Table 5 to the simpler case of binary classification, to allow a fair comparison with the literature (results shown in Table 6).

The results show an increase in the performance of all models with respect to the 3-class problem. The majority of them achieving an accuracy above the 85%. The Extremely Randomized Trees (ET) for the 19-feature dataset is the best model, in terms of accuracy (86.47%) and F1-score (87.76%). In this case, however, adding the 6 distances to the clusters provides a negligible improvement over the dataset with only the 7 most relevant features. In any case, its metrics fall below those of the original 19-feature dataset.

With respect to the results of other groups, although it is not possible to establish a direct correspondence since the experiments were performed under different conditions, our models for binary classification improve the results obtained by all participants in the International Society for Computerized Electrocardiology’s initiative for automated LBBB detection in terms of accuracy, sensitivity and precision. According to [8], the best accuracy reported by the 7 participants in the initiative was of 82% (with 69% sensitivity and 87% precision) [43]. In 2020, however, Yang et al. achieved a 88.7% accuracy, with a sensitivity

The good results obtained for the binary classification problem validated the relevance of the extracted bioinspired features for the determination of the sLBBB presented in Section 2.3, which led us to apply the same strategy in the case of 3 classes. As explained in Section 3.3 and resumed in Table 5, for the 3-classes dataset we obtained a 82.63% accuracy and 82.32% F1-score, results similar to what other groups claim for the binary problem. At this point it is also worth mentioning that the ternary classification (NoLBBB, LBBB and sLBBB) presents quite a challenging problem, since differences among groups, in particular between LBBB and sLBBB can be very small. Despite these issues, we insist in a ternary classification, since we believe that early diagnose of mild LBBB may improve the natural evolution to sLBBB. Therefore, we give value to either sLBBB or LBBB diagnosis equally.

Regarding physiological features, we have separated those which contribute to differentiate three classes from those that discern only two. To begin with, notice that the lead

Analyzing the features by means of the feature importance obtained in Figs 5, 3 and dependence plots, as shown in Fig. 6, QRS area in the

The contribution of parameters based on correlation analysis (Width_xz and Argument_xz), however, was useful just for binary classification, with a lower performance at the ternary problem. According to Fig. 6, Argument_xz was able to distinguish between NoLBBB versus both LBBB and sLBBB, but failed in the separation of the latter groups. The oppositte occured with Width_xz, which managed to differentiate sLBBB from the remaining classes, but presented similar SHAP values for NoLBBB and LBBB. This fact is not consistent with [50], where both parameters explained the electrical activation of the free wall of the left ventricle with an Adjusted

In this study, we have explored the potential of explainable artificial intelligence (XAI) in the detection of the three levels of left bundle branch block (LBBB) using physiological parameters. The results show that XAI can increase the performance of LBBB detection, and they can be used to provide also explainable and interpretable insights into the underlying physiological mechanisms.

We have shown that a reduced set of biologically inspired features extracted from ECG data can be used to evaluate the importance of the features in the classification process of each model and to further reduce the feature sets while maintaining the classification performance of the models. The performances obtained by the models using different metrics improve those obtained by other authors in the literature on the same dataset. Finally, XAI techniques have been used to verify that the predictions made by the models are consistent with the existing relationships between the data. This increases the reliability of the models and their usefulness in the diagnostic support process. Our results also highlight the importance of transparency and interpretability in AI-based medical applications, as they can help clinicians better understand the reasoning behind diagnostic decisions and facilitate trust and adoption of AI tools in clinical practice.

In future works we well refine our research with new methods as Neural Dynamic Classification algorithm, Dynamic Ensemble Learning Algorithm, Finite Element Machine for fast learning, and self-supervised learning [51, 52, 53, 54] for improving the results and explanations.Overall, our study shows that XAI has potential to revolutionize the way we diagnose and treat cardiovascular diseases, by providing accurate and interpretable insights into complex physiological mechanisms. Further research is needed to validate our findings in larger and more diverse patient populations, and to explore the potential of XAI in other medical domains.

Footnotes

Acknowledgments

The work reported here has been partially funded by Grant PID2020-115220RB-C22 funded by MCIN/AEI/ 10. 13039/501100011033 and, as appropriate, by “ERDF A way of making Europe”, by the “European Union” or by the “European Union NextGenerationEU/PRTR”. This research has been also funded by a PhD scholarship from the National Council of Science and Technology (CONICET) and by Grant 26-DI-FEIRNNR-2023 from Universidad Nacional de Loja (Ecuador).