Abstract

Thermal images are widely used in applications such as safety, surveillance, and Advanced Driver Assistance Systems (ADAS). However, these images typically have low contrast, a blurred appearance, and low resolution, which makes distant and small objects difficult to detect. To address these issues, this paper explores several preprocessing algorithms that improve the performance of already trained object detection networks. Specifically, mathematical morphology is used to favor the detection of small bright objects, while deblurring and super-resolution techniques are employed to enhance image quality. The Logarithmic Image Processing (LIP) framework is chosen to perform mathematical morphology, as it is consistent with the Human Visual System. The efficacy of the proposed algorithms is evaluated on the FLIR dataset, using a subset focused on images containing distant objects. The mean Average Precision (mAP) score is computed to evaluate the results objectively, and it shows a significant improvement in the detection of small objects in thermal images with CNNs such as YOLOv4 and EfficientDet.

Introduction

The main targeted application (ADAS) must remain reliable whatever the weather and lighting conditions, by day and by night, for tracking pedestrians and vehicles and estimating their trajectories. That is why thermal sensors are generally used, despite their low contrast, their blurred aspect, and their low resolution. Due to such drawbacks, small objects are often indiscernible, limiting the detection distance and thus the ADAS efficiency.

To improve the quality and therefore the relevance of image interpretation, the use of neural networks has gradually replaced the application of image processing tools. Nevertheless, it is legitimate to ask whether an image pre-processing step could improve the performance of such neural networks.

We will see in the next section “Related works” that previous papers, mainly dedicated to visual enhancement, proposed various pre-processing tools to improve the visual quality of thermal images. In the present paper, we focus mainly on algorithms performing contrast enhancement, deblurring and super-resolution.

The originality of our strategy lies first in the choice of a specific framework to perform contrast enhancement: the LIP (Logarithmic Image Processing) framework. This framework presents several advantages. It is based on strong physical properties. Its addition law applied to two images remains within the considered grey scale, so the result is a real image without any truncation, contrary to the classical addition of two grey levels. Finally, it is consistent with human vision, which ensures that preprocessed images remain interpretable by the system as they would be by a human eye.

Another contribution of the present work is to study precisely what each of the three considered approaches (contrast enhancement, deblurring, super-resolution) can bring to the detection of small objects in thermal images. Various combinations of these approaches have been tested to select the most efficient ones.

Concerning the experiments, multiple databases exist for visible images, but only a few exist for thermal images. CNNs are known to perform better with large databases, and in our case thermal images are available in much smaller quantities than visible images. To compare our results with existing ones obtained with CNN object detectors such as YOLOv4 or EfficientDet, we have chosen to work with the classical FLIR dataset. More precisely, we have created a subset of FLIR made up of images containing small objects, namely pedestrians and cars.

Related works

Previous works, mainly dedicated to visual enhancement, proposed various pre-processing tools to improve the visual quality of thermal images, such as histogram equalization [1] or dynamic range expansion [2], with the aim of improving contrast. More recently, methods using Mathematical Morphology, and more precisely the Top-Hat operators [3], have made it possible to detect the bright parts, or hot zones, of a thermal image. However, bright objects such as pedestrians appear with variable size, which makes the choice of the structuring element critical when applying morphological operators. To overcome this, [4] and [5] designed a multi-scale version of the Top-Hat operation, using two structuring elements of the same shape and different sizes. In the same way, [6] designed a ring structuring element to be used in a Top-Hat regularization to help the detection of small targets.

The low resolution of thermal images prevents precise measurement and detection. Jones et al. [7] address this problem in their study of leaf temperature as an indicator of plant water deficit. The studied images are mostly remote sensing images, and the authors focus on the mixed-pixel problem: using another, high-resolution sensor, sub-pixel values can be estimated by identifying the influence of the surrounding pixels. Considering the low resolution of thermal images and our own objective of detecting obstacles early (i.e., when they are distant and therefore small), we find ourselves in a similar situation, where the pixel size is not negligible compared to that of the objects. When the structure of the observed object is known a priori, [8] proposes an algorithm to precisely locate defects causing a difference in thermal conductivity. Convolutional Neural Networks (CNNs) have also been tested for increasing the resolution of thermal images [9], which could improve measurement and detection precision.

Another problem regarding thermal images is the small number of available databases, unlike the case of visible images. This is why some authors predict a thermal image from a visible one. Using a segmentation approach, Lile et al. [10] develop a method that predicts a thermal image from a visible one and then compares it to a real thermal image to detect defects based on the differences found. This situation can be connected to [11], in which a conditional Generative Adversarial Network (c-GAN) is developed to generate synthetic SEM images. First, a Convolutional Neural Network is pre-trained on real images. Then the transfer-learned CNN is trained on synthetic SEM images and validated on real ones. This approach could be of interest for synthesizing thermal images from visible images. We can also refer to [12], in which a pose transfer method is proposed to produce a new image of a target person in a novel pose. Such a result is valuable in several applications. In that paper, it is used for person re-identification. In our case, it could be used to expand the thermal image database.

Thermal images are often blurred, and CNNs can be used to remove the blur or at least reduce it. [13] uses a pre-processing step to sharpen thermal images, assisting in the detection of defects in scenarios where temperature variation within the scene is minimal or extreme. [14] reviews multiple techniques to increase edge information in CNN-based segmentation algorithms. The use of a Multi-level Attention Module (MAM) composed of two sub-modules, the Context Aggregation Module (CAM) and the Correlation Matrix Correction Module (CMCM), makes it possible to enhance the object edge information across the different layers of the neural network. If the neural network is based on an encoder-decoder structure, extracting contours in the third encoder layer and fusing them with the last feature map of the decoder increases the segmentation precision. The authors present a comparative survey of existing methods based on mIoU values. In the same way, Ammari et al. [15] aim at reconstructing small inclusions inside a homogeneous object. They apply a heat flux and locate the inclusions from boundary measurements of the temperature. The model is developed in a rigorous mathematical framework by computing an asymptotic expansion of the boundary perturbations.

In [16] and [17], a CNN object detector based on the Single-Shot Detection (SSD) architecture [18] is used to detect objects in thermal images. A set of pre-processing methods is proposed in [16], such as Random Noise, Training Image Shuffle, Random Crop and Random Brightness Shift, to improve detection accuracy, while [17] applies a Dilation module and a Deconvolution module to enhance the resolution of the feature maps and enlarge the receptive field of the network backbone, which increases the detection performance of their SSD-based detector. Finally, [19] shows that using a multi-scale approach as a data augmentation technique improves the detection performance of a multi-class boosting-based object detector by up to 6%. We can also refer to [20], where the authors present the detection of four types of objects: pedestrian, vehicle, two-wheeler, and cattle.

Finally, Lu et al. [21] deal with intelligent compaction, and more precisely with real-time roller path tracking and mapping in pavement compaction operations. The authors propose a thermal-based method to overcome the problems due to variable weather conditions. To drive the roller optimally, they estimate its motion across successive frames, based on the pavement boundary and the optical flow technique. This paper will be valuable for the continuation of our work, when we analyze video instead of working image by image and our goal becomes estimating the motion intention of the detected pedestrians, bicycles, and cars.

Methods

Recall on the LIP framework

The LIP framework was first introduced by Jourlin et al. [22, 23, 24], in the context of images acquired in transmission (cf. Annex 1). Let

The subtraction

It has been established that the laws

For 8-bits digitized images, M

Let us recall that the laws

To complement these benefits, we know that the consistency of the LIP model with the Human Visual System [25] extends its application field to images acquired in reflection, giving the opportunity to interpret such images as a human eye would do.
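As a reminder of how the LIP laws behave numerically, the following minimal Python sketch implements the addition, subtraction and scalar multiplication in the form usually given in the LIP literature. The function names and the value M = 256 for 8-bit images are assumptions made for this illustration, not notation taken from the present paper.

```python
# Sketch of the standard LIP laws (forms assumed from the LIP literature).
# In the LIP convention the grey scale is inverted: 0 is the brightest level.
M = 256.0  # assumed upper bound of the grey scale for 8-bit images


def lip_add(f, g, m=M):
    """LIP addition: f + g - f*g/m. Stays in [0, m) when f and g are in [0, m)."""
    return f + g - f * g / m


def lip_sub(f, g, m=M):
    """LIP subtraction: m*(f - g)/(m - g). It is a grey level when f >= g."""
    return m * (f - g) / (m - g)


def lip_scalar_mul(lam, f, m=M):
    """LIP scalar multiplication: m - m*(1 - f/m)**lam."""
    return m - m * (1.0 - f / m) ** lam


f, g = 200.0, 180.0
classical_sum = f + g      # 380.0: leaves the 8-bit range and would be truncated
lip_sum = lip_add(f, g)    # remains below M without any truncation
```

With these definitions, `lip_sub(lip_add(f, g), g)` gives back `f`, and `lip_scalar_mul(2, f)` coincides with `lip_add(f, f)`, which illustrates the vector-space behaviour of the laws.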

For the interested reader, Carré et al. [26] have shown that the LIP-addition (resp. subtraction) of a constant to an image (resp. from an image) perfectly simulates a decrease (resp. an increase) of the exposure time or of the source intensity. Concerning the scalar multiplicative law,

Many new tools have been introduced in the LIP framework, especially in link with the concept of contrast and associated metrics. Here we will limit ourselves to the Logarithmic Additive Contrast [24].

Given a grey level function

Due to the scale inversion,

and:

We can observe that Michelson’s approach overestimates the contrast between two dark grey levels compared to two bright ones with the same difference. A similar effect can be seen with the LAC definition. More precisely, an explicit link has been established in [24] between these two contrast concepts.
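The behaviour described above can be checked numerically. The sketch below assumes the usual definitions: the Michelson contrast |f − g| / (f + g) on the classical scale, and the Logarithmic Additive Contrast as the LIP difference between the larger and the smaller grey level on the inverted LIP scale. The function names, the value M = 256 and the sample grey levels are illustrative assumptions.

```python
def michelson(f, g):
    """Michelson contrast on the classical scale (0 = black)."""
    return abs(f - g) / (f + g)


def lac(f, g, m=256.0):
    """Logarithmic Additive Contrast: max LIP-minus min, on the LIP scale (0 = white)."""
    lo, hi = min(f, g), max(f, g)
    return m * (hi - lo) / (m - lo)


# Two pairs with the same classical difference of 20 grey levels.
dark_pair, bright_pair = (20.0, 40.0), (200.0, 220.0)

# Same pairs expressed on the inverted LIP scale (level -> 256 - level).
dark_pair_lip = (256.0 - 40.0, 256.0 - 20.0)      # (216, 236)
bright_pair_lip = (256.0 - 220.0, 256.0 - 200.0)  # (36, 56)

michelson_dark = michelson(*dark_pair)
michelson_bright = michelson(*bright_pair)
lac_dark = lac(*dark_pair_lip)
lac_bright = lac(*bright_pair_lip)
```

Both measures return a larger value for the dark pair than for the bright pair, although the grey-level difference is the same in the two cases.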

Grey level Mathematical Morphology has been introduced and developed by the Fontainebleau School (Ecole des Mines, Paris) [27]. Given a grey level image

Then these two operators are combined to produce the

It is well-known that the difference

For thermal images, these peaks correspond to hot objects (pedestrians, cars…). According to the size of

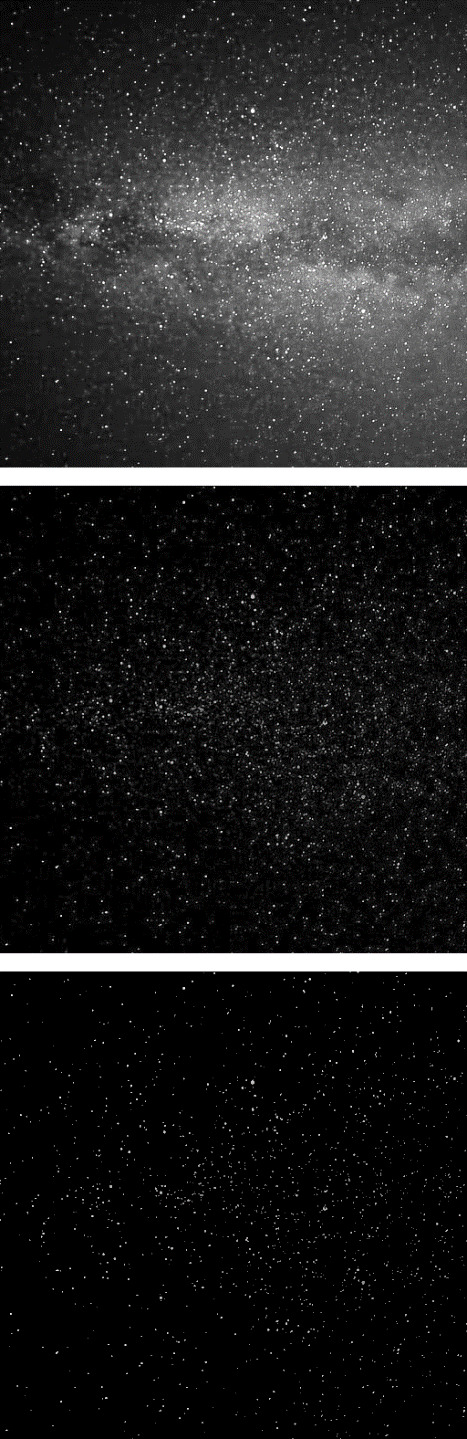

Illustration of the White Top-Hat. Top: stars picture, middle: difference between

When applying such a technique to real images, all the peaks are detected, the significant ones and those due to noise. To overcome this problem, the concept of White Top-Hat

The value of

In [4] and [5], the White Top-Hat transform is used with a rather empirical modification whose goal is to enhance the contrast of hot objects: the image

Top: image

As shown in Fig. 2, the classical addition of

Note that the Logarithmic Additive Contrast permits to quantify the contrast gain generated by this approach: for each region

In the same way, we define the closing

Finally, we can summarize the Mathematical Morphological method by performing together the White and Black Top-Hats, which means that the initial image

Top-Hat transform with different diameters. Top: 3, middle: 7, bottom: 11.

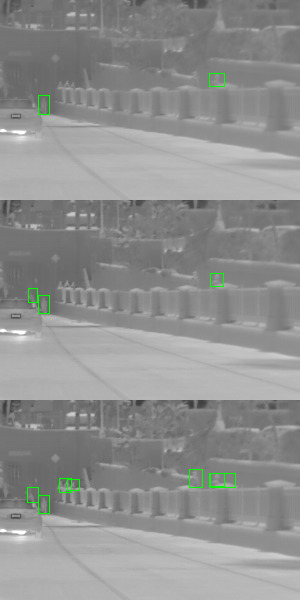

Person detection for different values of diameter. Top: 3, middle: 7, bottom: 11.

Now let us consider the multiscale aspect of the problem. Depending on the structuring element radius used in the Top-Hat operators, different information peaks are extracted. The small objects we want to detect in the background of our thermal images have very different sizes according to the scene and to their position and distance from the sensor, and a given structuring element naturally favors one size of object. Thus, we compute multiple Top-Hat transforms with structuring elements of increasing size, the maximal radius being chosen empirically from detection performance and time constraints. Chaverot et al. [28] proposed a method that consists of running the neural network object detector on each of these Top-Hat transforms. The next step is to concatenate the obtained results. The selection of the best bounding boxes is made with the Non-Maximum Suppression algorithm, NMS [29]. Figure 3 shows the result of the Top-Hat transform for different radii of the structuring element: we can observe that the dark regions, like the car windshield, become progressively darker while the bright ones (pedestrians) become brighter.
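To make the role of the structuring element size concrete, here is a minimal sketch of a classical (non-LIP) White Top-Hat computed at several scales on a one-dimensional grey-level profile, for example one image row. It uses a flat structuring element and plain Python lists; the function names, the sample profile and the chosen diameters are assumptions for illustration, and the detector and NMS stages are not included.

```python
def erode(signal, radius):
    """Flat erosion: minimum over a window of half-width `radius`."""
    return [min(signal[max(0, i - radius): i + radius + 1]) for i in range(len(signal))]


def dilate(signal, radius):
    """Flat dilation: maximum over a window of half-width `radius`."""
    return [max(signal[max(0, i - radius): i + radius + 1]) for i in range(len(signal))]


def white_top_hat(signal, radius):
    """Signal minus its opening: keeps bright peaks narrower than the window."""
    opened = dilate(erode(signal, radius), radius)
    return [s - o for s, o in zip(signal, opened)]


def multiscale_top_hat(signal, diameters=(3, 5)):
    """One Top-Hat result per odd structuring-element diameter."""
    return {d: white_top_hat(signal, d // 2) for d in diameters}


# A 1-pixel-wide hot spot (index 3) and a 3-pixel-wide hot spot (indices 7-9).
profile = [10, 10, 10, 50, 10, 10, 10, 40, 40, 40, 10, 10, 10]
results = multiscale_top_hat(profile)
```

With diameter 3, only the narrow peak is extracted; with diameter 5, the wider peak appears as well. This is why each scale would then be passed to the detector before merging the detections.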

Figure 4 shows the detections obtained for increasing diameters of the Top-Hat structuring element.

As already remarked, thermal images are systematically blurred, in the sense that they look like slightly out-of-focus images. From an optical point of view, it means that a single bright point is replaced by an Airy disk, whose central part can be approximated by a Gaussian.

During the digitizing step, if the Airy disk diameter is larger than the size of a pixel

In the literature [32], a resembling approach, commonly referred as the

Unsharp masking. Top: initial thermal image

An example of such a deblurring is shown in Fig. 5 with

In the case of an already trained network, modern CNN architectures make it possible to increase the input image resolution without retraining. Increasing the network resolution has the advantage of detecting smaller targets, at the cost of a longer processing time. For the same original dataset, this approach requires the input images to be upscaled as well. A bilinear or bicubic interpolation is usually used for this task, but in our situation image Super Resolution (SR) appears particularly interesting.

SR algorithms aim at increasing images resolution while keeping precise and sharpened details in comparison of classical interpolation methods generating blur and approximated information (e.g. bicubic interpolation (BC)). Generally, SR methods provide an increase in resolution by a factor

Recently, many deep learning-based SR methods have been developed and have achieved new state-of-the-art performance. We can refer to the following reviews presenting recent SR works [33, 34, 35]. In our experiments we use the CARN model (CAscading Residual Network) [36], based on a residual network and implementing a cascading mechanism. This network provides satisfactory image quality and is quite efficient in terms of computational complexity thanks to its lightweight design.

In the learning phase, couples of low resolution and high resolution images are required. High resolution images correspond to the original images of the training dataset (with the native resolution). Low resolution images are obtained by reducing the images size by factors

Image quality comparison between super resolution and bicubic interpolation


Super resolution applied on thermal images. Top: bicubic interpolation. Bottom: SR

A comparison between SR and bicubic upscaling is given in Table 1. On some images of the FLIR dataset, the PSNR and SSIM metrics are evaluated between Ground Truth (GT) and upscaled images. GT images correspond to original FLIR images; the upscaled ones are obtained by downscaling the GT and then upscaling the result by SR or bicubic interpolation. In every comparison, Super Resolution obtains the best scores.
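The evaluation protocol described above (downscale the ground truth, upscale it again, then compare with the ground truth) can be sketched as follows. PSNR is computed with its usual definition; the 2×2 block averaging and the nearest-neighbour upscaling are simple stand-ins chosen for this illustration and are neither the CARN network nor bicubic interpolation. The tiny 4×4 image is hypothetical.

```python
import math


def psnr(reference, test, max_level=255.0):
    """Peak signal-to-noise ratio in dB between two images given as nested lists."""
    squared = [(r - t) ** 2
               for row_r, row_t in zip(reference, test)
               for r, t in zip(row_r, row_t)]
    mse = sum(squared) / len(squared)
    return math.inf if mse == 0 else 10.0 * math.log10(max_level ** 2 / mse)


def downscale2(img):
    """Halve the resolution by averaging 2x2 blocks (stand-in downscaler)."""
    return [[(img[y][x] + img[y][x + 1] + img[y + 1][x] + img[y + 1][x + 1]) / 4.0
             for x in range(0, len(img[0]) - 1, 2)]
            for y in range(0, len(img) - 1, 2)]


def upscale2_nearest(img):
    """Double the resolution by pixel replication (stand-in upscaler)."""
    out = []
    for row in img:
        wide = [v for v in row for _ in range(2)]
        out.append(wide)
        out.append(list(wide))
    return out


ground_truth = [[10, 10, 200, 200],
                [10, 10, 200, 200],
                [50, 60, 90, 100],
                [70, 80, 110, 120]]
restored = upscale2_nearest(downscale2(ground_truth))
score_db = psnr(ground_truth, restored)
```

Replacing `upscale2_nearest` by an SR model or a bicubic interpolation, and averaging the score over a set of images, gives the kind of comparison reported in Table 1.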

We have also explored combinations of our previous proposed methods. We focused on combinations of SR with UM (Unsharp Masking) and SR with Multiscale Top-Hat transform. As the SR multiplies the number of pixels in each direction, we adapt the radius of the structuring element used in the Top-Hat transform and the neighboring region size of the Unsharp Masking to consider the novel targeted size. Typically, we double the size for a SR

The combination of UM and the Multiscale Top-Hat transform has also been tested, as the former enhances the information peaks to be detected by the Top-Hat operations. However, this combination yields poorer detection performance than using only one of the previously described methods and will not be discussed further in this paper.

Experiments

Materials

FLIR dataset and validation subset selection

The FLIR ADAS dataset [37] is a dataset of thermal images proposed by the FLIR company, a thermal image sensor manufacturer. This set is constituted of 10228 images with a resolution of 640

The FLIR ADAS dataset provides annotations for four classes: persons, cars, bicycles, and dogs. Table 2 presents the class distribution.

FLIR ADAS classes distribution along train and validation split


Top left: 14 bits RAW image, top right: the proposed normalization, bottom left: the proposed normalization and unsharp masking, bottom right: FLIR enhanced image.

In this paper, due to the strong imbalance between the classes, we decided to focus only on the two following classes: persons and cars.

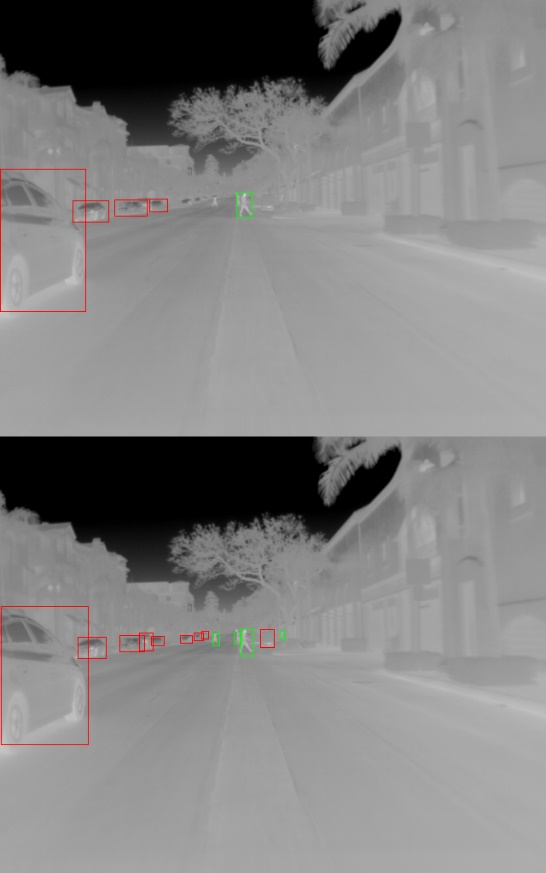

The FLIR ADAS dataset is not perfect: annotations of small objects are often missing, which can be explained by the difficulty of annotating them given the poor quality of thermal images. To obtain accurate detection scores, 693 images from the validation dataset were chosen and reannotated. One hundred of these images were chosen because of their interesting scenes, with vehicles relatively far away and pedestrians crossing the street in the background. The rest of the images were randomly picked. The full list of images and the annotation files are freely available on GitHub [38]. The selected validation images contain 5318 annotated persons and 3206 annotated cars. Figure 8 shows the difference between the original annotation and our novel annotation. Table 3 compares the number of labeled objects before and after our reannotation. The number of small bounding boxes has doubled, while the count of boxes of other sizes has remained relatively unchanged.

Bounding boxes areas distribution along our selection

Comparison between FLIR annotation (top) and the new one (bottom). Red boxes are labeled cars, Green boxes are labeled pedestrians.
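A distribution of bounding boxes by area such as the one in Table 3 can be obtained by binning each box according to its pixel area. The sketch below assumes the MS COCO convention (small below 32² pixels, medium below 96² pixels, large otherwise); whether the paper uses exactly these thresholds is an assumption, and the boxes listed are hypothetical.

```python
from collections import Counter

SMALL_MAX_AREA = 32 ** 2    # assumed MS COCO threshold for small objects
MEDIUM_MAX_AREA = 96 ** 2   # assumed MS COCO threshold for medium objects


def size_category(box):
    """Classify a box (x1, y1, x2, y2) by its area in pixels."""
    x1, y1, x2, y2 = box
    area = (x2 - x1) * (y2 - y1)
    if area < SMALL_MAX_AREA:
        return "small"
    if area < MEDIUM_MAX_AREA:
        return "medium"
    return "large"


# Hypothetical annotations, for illustration only.
boxes = [(0, 0, 20, 30), (5, 5, 25, 40), (0, 0, 50, 60), (0, 0, 100, 120)]
distribution = Counter(size_category(b) for b in boxes)
```

Running the same count on the original and on the new annotation files would give the two columns compared in Table 3.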

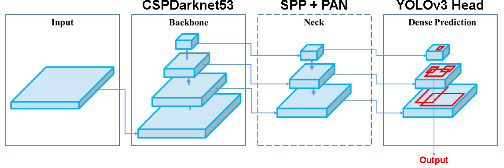

YOLOv4 is an object detector proposed by Bochkovskiy et al. [39] that leverages multiple features to obtain the best trade-off between inference time and detection accuracy. The authors aim to design a detector suitable for production systems and optimizable for parallel computation. This detector is built with CSPDarknet53 [40] as its backbone, owing to its state-of-the-art classification results on MS COCO [41]. CSPDarknet53 is associated with a Spatial Pyramid Pooling module, which increases the separation of significant features without reducing the processing speed. The neck of the detector is the path aggregation network PANet [42], which shortens the information path between the lower layers and the topmost features. Finally, the detector head is the same anchor-based head as in YOLOv3 [43].

YOLOv4 also uses state-of-the-art training strategies and data augmentation methods, increasing detection performance and overall robustness.

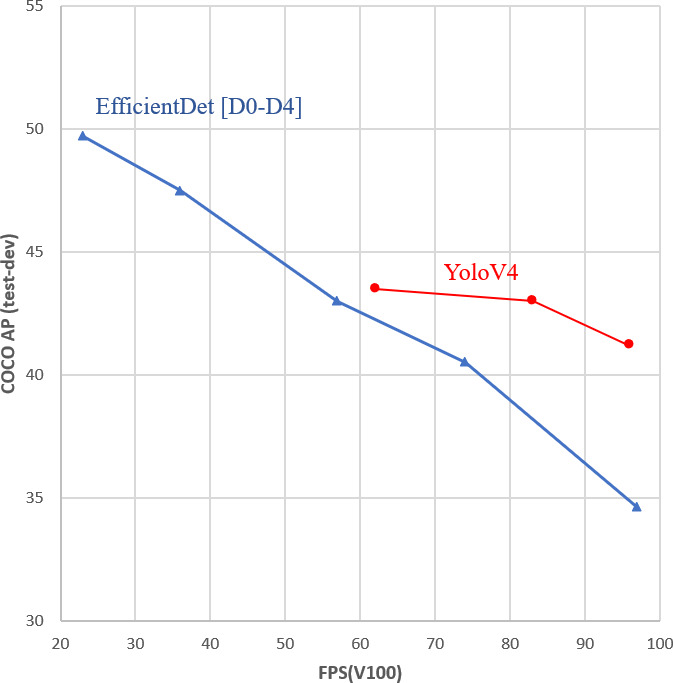

The network architecture is presented in Fig. 9 and a performance comparison chart given in [39] is shown in Fig. 10.

YOLOv4 architecture [39].

Performance comparison chart.

In our experiments, we use a fine-tuned YOLOv4 on the FLIR ADAS dataset [28].

The fine-tuning was made with the following parameters:

Resolution: 640; Optimizer: Stochastic Gradient Descent; Learning Rate: 0.001

The optimizer and learning rate were selected in accordance with the original publication of the network.

The train and validation split provided by FLIR was kept. The mean Average Precision (mAP) score was computed on the validation set at each epoch for a minimum of 60 epochs, and the retained model is the one with the best score over the training. This model achieves state-of-the-art detection performance on the FLIR ADAS dataset.

EfficientDet [44] is a detector using the EfficientNet [45] architecture as its backbone. EfficientNet was designed to improve the efficiency and accuracy of CNNs by using a compound scaling method to scale the network architecture and feature resolution, which makes it possible to balance the trade-off between accuracy and efficiency. EfficientDet uses a weighted Bi-directional Feature Pyramid Network (BiFPN) to fuse features from different scales and a Single Shot Detector (SSD) as its head to predict the bounding boxes and class probabilities of the objects in a scene. As shown in the performance comparison chart depicted in Fig. 10, the deeper EfficientDet variants, such as EfficientDet-D3 and EfficientDet-D4, outperform YOLOv4 on the MS COCO dataset, albeit with a significantly lower inference speed.

We have fine-tuned the EfficientDet-D3 model, pre-trained on the MS COCO dataset. We used the following parameters:

Resolution: 640; Optimizer: AdamW [46]; Learning Rate: 0.0002

As with the YoloV4 fine-tuning, we maintained the optimizer and learning rate used by the original authors, as well as the train validation split of the FLIR dataset. Unlike the previous architecture, our network required training with a square image size. To accommodate this requirement, we employed a resolution of 640

To train the CARN network on thermal images, we have followed the steps described by the authors in the original paper. We use a batch size of 64, a patch size of 64, the ADAM optimizer with a starting learning rate of 0.0001 which is halved every 4

Experimental settings

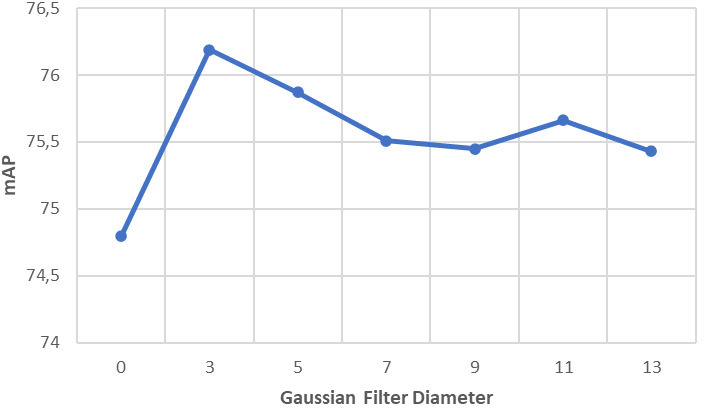

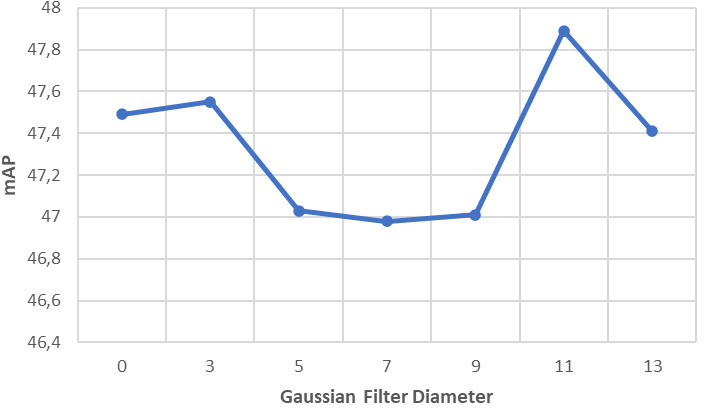

For the YOLOv4-based network, the Unsharp Mask is computed using a Gaussian filter with a standard deviation

These diameters have been set thanks to a sensitivity analysis for the impact of the kernel diameter of the Gaussian filter. This analysis is presented in Figs 11 and 12, showcasing the effects of different diameters on the results.

Sensitivity analysis: influence of the Gaussian filter diameter of the UM method with the YoloV4 fine-tuned model.

Sensitivity analysis: influence of the Gaussian filter diameter of the UM method with the EfficientDet-D3 fine-tuned model.
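To illustrate what the Gaussian filter diameter controls in the sensitivity analysis, here is a minimal sketch of classical unsharp masking on a one-dimensional grey-level profile: the profile is blurred with a Gaussian kernel and the difference between the original and the blurred version is added back. The function names and the values of `diameter`, `sigma` and `amount` are hypothetical defaults for this illustration and are not the settings used in the experiments.

```python
import math


def gaussian_kernel(diameter, sigma):
    """Normalized 1-D Gaussian kernel with an odd number of taps."""
    radius = diameter // 2
    weights = [math.exp(-(k * k) / (2.0 * sigma * sigma))
               for k in range(-radius, radius + 1)]
    total = sum(weights)
    return [w / total for w in weights]


def blur(signal, kernel):
    """Convolve with the kernel, replicating the border samples."""
    radius = len(kernel) // 2
    n = len(signal)
    out = []
    for i in range(n):
        acc = 0.0
        for k, w in enumerate(kernel):
            j = min(max(i + k - radius, 0), n - 1)
            acc += w * signal[j]
        out.append(acc)
    return out


def unsharp_mask(signal, diameter=5, sigma=1.0, amount=1.0, max_level=255.0):
    """Classical unsharp masking: s + amount * (s - blurred), clipped to the range."""
    blurred = blur(signal, gaussian_kernel(diameter, sigma))
    sharpened = [s + amount * (s - b) for s, b in zip(signal, blurred)]
    return [min(max(v, 0.0), max_level) for v in sharpened]


flat = [100.0] * 9
edge = [50.0] * 5 + [150.0] * 5
flat_out = unsharp_mask(flat)
edge_out = unsharp_mask(edge)
```

Uniform regions are left unchanged, while the step edge is steepened by an undershoot on its dark side and an overshoot on its bright side. A larger diameter widens the zone affected around each edge.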

When the Unsharp Mask is applied to the Super-Resolution (SR)

The Multiscale Top-Hat transform employs a circular structuring element with an odd diameter ranging from 3 to 13. When used in combination with the SR

Detection performances of the different methods for the fine-tuned YoloV4

Comparison of AP

For the concatenation and selection of the best bounding boxes in the Multiscale Top-Hat method, a Non-Maximum Suppression (NMS) algorithm [29] is applied with a threshold of 0.45. This threshold value is commonly used when running inference with a detection network and helps eliminate redundant bounding boxes.
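The merging step can be sketched as a greedy NMS applied to the detections concatenated from all scales. The sketch below uses an IoU threshold of 0.45 as stated above; the per-class suppression, the tuple layout `(x1, y1, x2, y2, score, label)` and the sample detections are assumptions made for this illustration.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2, ...)."""
    x1, y1 = max(a[0], b[0]), max(a[1], b[1])
    x2, y2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, x2 - x1) * max(0.0, y2 - y1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def nms(detections, iou_threshold=0.45):
    """Greedy NMS: keep the best-scoring box, drop same-class boxes overlapping it."""
    kept = []
    for det in sorted(detections, key=lambda d: d[4], reverse=True):
        if all(det[5] != k[5] or iou(det, k) <= iou_threshold for k in kept):
            kept.append(det)
    return kept


# Hypothetical detections of the same scene obtained at two Top-Hat scales.
scale_a = [(10, 10, 30, 50, 0.90, "person"), (100, 20, 160, 60, 0.70, "car")]
scale_b = [(11, 10, 31, 50, 0.80, "person")]
merged = nms(scale_a + scale_b)
```

Here the two person boxes overlap with an IoU of about 0.90, so only the higher-scoring one is kept, while the car box is unaffected.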

In our study, we present the results in Tables 4–7, which provide comparisons of Average Precision (AP) and mean Average Precision (mAP). The computation method for AP is defined in the MS COCO Dataset [41]. Specifically, we used the AP

Detection performances of the different methods for the fine-tuned EfficientDet-D3


Comparison of AP

The baseline score is obtained with the fine-tuned model presented in [28] on our validation subset. The four presented methods are: MTH (Multiscale Top-Hat), MTH LIP, UM (Unsharp Masking deblurring), and SR (Super Resolution). For comparison purposes, we have included results obtained with two additional image variations. The first variation uses images enhanced automatically by the FLIR algorithms (FLIR Enhanced). The second variation resizes the images by a factor of two using Bicubic Interpolation (BC).

For each considered detector, the LIP version of the MTH has outperformed the classical one. The UM and the SR

However, it is important to mention that when employing Bicubic interpolation or Super-Resolution methods, there is a decrease in the AP

This discrepancy suggests that, although the BC and SR techniques degrade the detection of larger objects, the overall effect on detection performance, as measured by mAP, remains positive. It highlights the trade-off of these methods between enhancing small-object detection and potentially reducing the detection accuracy of larger objects.

Processing time and frames per second of the fine-tuned models (I9-10850k – RTX3090)

Processing time and frames per second of the different algorithms (I9-10850k – RTX3090)

The SR

The following combinations have been tested: SR

In our experiments, using FLIR images as the training dataset, the scores obtained by EfficientDet-D3 are globally lower than those obtained with YOLOv4, whereas these networks show more similar performance on the COCO dataset. These differences can be explained by the dataset sizes (328,000 images in the COCO dataset vs 10,228 in the FLIR dataset) and by the new augmentation techniques used by YOLOv4 [39]. YOLOv4 appears better suited to small datasets.

The execution times of the fine-tuned models and of the preprocessing algorithms are presented in Tables 8 and 9, respectively. Additionally, the frames per second (FPS) obtained with the full pipeline using YOLOv4 or EfficientDet are also reported. These times were obtained using an Intel Core i9-10850k CPU and an NVIDIA RTX3090 GPU, with Python, PyTorch 1.8.2 and CUDA 10.2. The YOLOv4 architecture is more parallelizable than the EfficientDet one, which explains the different inference times measured. The UM algorithm has a very low execution cost, making it an attractive option. The MTH LIP is significantly slower than the original MTH; however, parallelizing the algorithm and implementing it in CUDA would accelerate it. Although BC2 appears to be very effective, the increase in the inference time of the neural network for a doubled resolution should not be ignored. In the end, the choice of the preprocessing will depend on the initial performance level of the network, the performance requirement, and the available processing time. In our case, network quantization can be a valuable technique to reduce the cost of network inference, as studied by Wu et al. [47]. By applying network quantization, we can decrease storage costs and reduce the computation time associated with network inference.
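The relation between the per-stage times and the frame rate of the full pipeline can be written as a one-line computation. The sketch below assumes that preprocessing and inference run sequentially on each frame; the function name and the timing values are hypothetical and are not the measurements of Tables 8 and 9.

```python
def pipeline_fps(preprocess_ms, inference_ms):
    """Frames per second of a sequential pipeline, from per-frame times in ms."""
    return 1000.0 / (preprocess_ms + inference_ms)


# Hypothetical timings, for illustration only.
fps_without_preprocessing = pipeline_fps(0.0, 20.0)
fps_with_preprocessing = pipeline_fps(5.0, 20.0)
```

With these example values, a 5 ms preprocessing step lowers the rate from 50 to 40 frames per second, which shows why the preprocessing cost must be weighed against the detection gain.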

It should be noted that the training of the EfficientDet detector was not optimized for the best performance. The purpose of this example is to show that our method can be applied to a trained detector and achieve better performance, even when the training is not carried out in the best possible manner.

Considering the scores presented in Tables 4 and 6, we notice that with both networks YoloV4 and EfficientDet-D3 our image pre-processing techniques provide a significant performance gain. With YoloV4, from our baseline of mAP 74.80, applying SR

These results demonstrate the ability of the proposed pre-processing techniques to improve the detection performance of an already trained network. Despite the performance gap between the two detectors, they confirm the effectiveness of our proposed methods and suggest that the approach can generalize to other datasets and tasks.

The objective of the present paper was to overcome the main drawbacks of thermal images and improve the detection of small objects. Three methods were studied, each addressing a specific problem: the Multiscale Top-Hat transform and its LIP version to detect objects far from the sensor and enhance their contrast with the neighboring background, the Unsharp Masking method to deblur thermal images, and the CARN network to enlarge the spatial resolution. The most efficient combinations of these techniques allowed for a significant gain compared to the baseline results. These techniques showed a real impact on performance without having to retrain a detection network, knowing that dataset acquisition and annotation are tedious tasks, especially in the case of thermal images.

For future work, we plan to study more precisely the following elements to open the way to real applications such as ADAS: better transformation of raw images into 8-bit ones and optimization of execution time, estimation of the obstacle trajectory and especially of the pedestrian intention, and optimal linking between the distance detection needed and the speed of the aided car.

Concerning the MTH approach, the multiscale technique could be replaced by an automated adaptation of the structuring element size in relation to local information. Finally, more fundamental subjects appear interesting to explore, particularly the benefit of applying the LIP framework to thermal images, as demonstrated in [28] for the LIP version of the Top-Hat. For example, considering the vector space structure associated with the LIP operations, the concept of grey level interpolation opens the way to defining a LIP Super Resolution algorithm.


Acknowledgments

The present paper is an extended version of a conference paper presented by Chaverot et al. We want to thank Prof Hojjat Adeli, Editor-in-Chief of the journal Integrated Computer-Aided Engineering (ICAE), for giving us the opportunity to submit this novel version to ICAE.


We would also like to express our gratitude to the nine reviewers for their numerous wise remarks and suggestions.

Annex 1: Transmittance law and LIP addition

Such a law addresses images acquired in transmission i.e., when the observed object is located between the source and the sensor. Given an image

The addition of two images

This formula means that the probability, for a particle emitted by the source, to go through the “sum” of the obstacles ![]() ] established the link between the grey level
