Abstract

The primary goal of parallel computing is to execute several program instructions simultaneously. To accomplish this, a program must be divided into independent parts so that each processor can execute its part concurrently with the other processors. This study compares OpenMP (OMP) and MATLAB, two important parallel computing environments, using dense matrix multiplication as the test problem. The results show that OMP outperformed the MATLAB parallel environment by more than 8 times in sequential execution and more than 6 times in parallel execution. It was also observed that OMP on a slower processor performs much better than MATLAB on a faster processor. The present analysis therefore indicates that OMP is a superior environment for parallel computing and should be preferred over parallel MATLAB.

Introduction

Parallel computation can be achieved on a single computer with multiple processors, on many individual computers connected by a network, or on a combination of the two. This study focuses on the OpenMP (OMP) and MATLAB parallel environments and evaluates the performance of both tools with the help of illustrations. OMP stands for Open Multi-Processing. This programming interface offers a scalable and portable approach to shared-memory, thread-based parallel programming. On a supported platform, OMP’s flexibility and user-friendly design make parallelization simple: the source code is extended with directives [1, 2, 3, 4].

Every OMP program starts with a single process, the master thread. The master thread executes sequentially until the first parallel region construct is encountered. OMP executes in parallel using the fork-join model: at a parallel region the master thread forks a team of threads, and at the end of the region the threads join and only the master thread continues. Because parallelization is controlled explicitly by the programmer, the parallelism in the program is clear. The MATLAB software package is used for analytical studies of different engineering problems. Parallel computing in MATLAB splits a problem across multiple cores or multiple computer nodes in a variety of ways. It also supports multithreading natively, which can be broadly compared to the OMP approach to parallelism. The parfor and spmd constructs are used to achieve parallelism in MATLAB [5]. According to academic research at the University of California, Berkeley, USA, 13 problems are significant for applications in science and engineering [6]. These 13 problems include ‘Dense Linear Algebra’, which contains the Matrix Multiplication (MM) method. The MM problem was selected because of its wide applicability in numerical programming and its flexibility in matrix indexing [9]. It is also one of the significant parallel algorithms with respect to data locality, cache coherency, etc. [7, 8]. The problem of computing the product

Related work

Since the introduction of supercomputers with massively parallel architectures in the 1980s, parallel computation has grown in popularity. Many parallel computing systems exist, including parallel MATLAB, OpenMP, CUDA (Compute Unified Device Architecture), MPI (Message Passing Interface), and PVM (Parallel Virtual Machine) [10]. In the last ten years, several studies have evaluated the effectiveness of various algorithms in parallel settings. Dash et al. [11] presented an overview of optimization methods that may be used for the MM algorithm, and [12] assessed its performance in the OMP parallel environment. The performance of MM algorithms in an MPI context was investigated in [13]. Previous research also examined the MM problem to identify the relationship between parallelism and problem size; however, that study was limited to a much smaller sample size [14]. Using the OMP platform, several experiments [15, 16, 17] have been conducted to assess the MM algorithm’s performance on multi-core processors. Efficiency was not assessed in the related experiments [14, 15, 16, 17]. In the current study, efficiency has been computed, and consideration was given to the size of the performance matrix (5000

In computer science, parallelism is the practice of carrying out several activities concurrently within a single program. It can be specified in the source code of a program, or it can be introduced during compilation or execution. By dividing a program into jobs that run in parallel, each performing a separate task or handling a different portion of the work, parallelism can improve efficiency or responsiveness [18]. A recent study proposed a scalable concurrent data structure for shared-memory systems that balances components and optimizes priority queues to ensure efficient execution of operations [19]. Programming languages provide the information needed for static analysis and compilation, enabling efficient computation and safety verification of programs. High-level programming abstractions offer a high-level perspective and enable programmers to describe global data directly. The approaches include annotations, pragmas, parallelization libraries, domain-specific languages, and classical full-fledged languages [20, 21, 22, 23, 24]. The authors of [25] reviewed the literature on General Sparse Matrix-Matrix Multiplication, covering applications, formulations, compression formats, critical issues, strategies, architecture-oriented optimizations, programming models, performance comparisons, algorithm analysis, and areas for further study.

The present work extends our previous work [12], in which we evaluated the speed up and efficiency of an algorithm in an OMP environment and found that parallelism can be achieved only beyond a certain problem size. In the present work, two effective parallel computing tools (OMP and MATLAB) are compared and their performance is evaluated.

Implementation of algorithm

A sequential MM algorithm was implemented in OMP and in MATLAB and executed on the Fedora 17 and Windows operating systems, respectively, each on a different processor. The execution time of the sequential run was measured first, followed by that of the parallel run. For parallel execution, the parallel code was written using OMP and the MATLAB parallel processing toolbox and executed on the dual-core processors with two threads only. The sequential (ST) and parallel (PT) running times of the algorithm on the different processors were recorded, and the performance measures (speed up, efficiency) of the systems were evaluated accordingly.

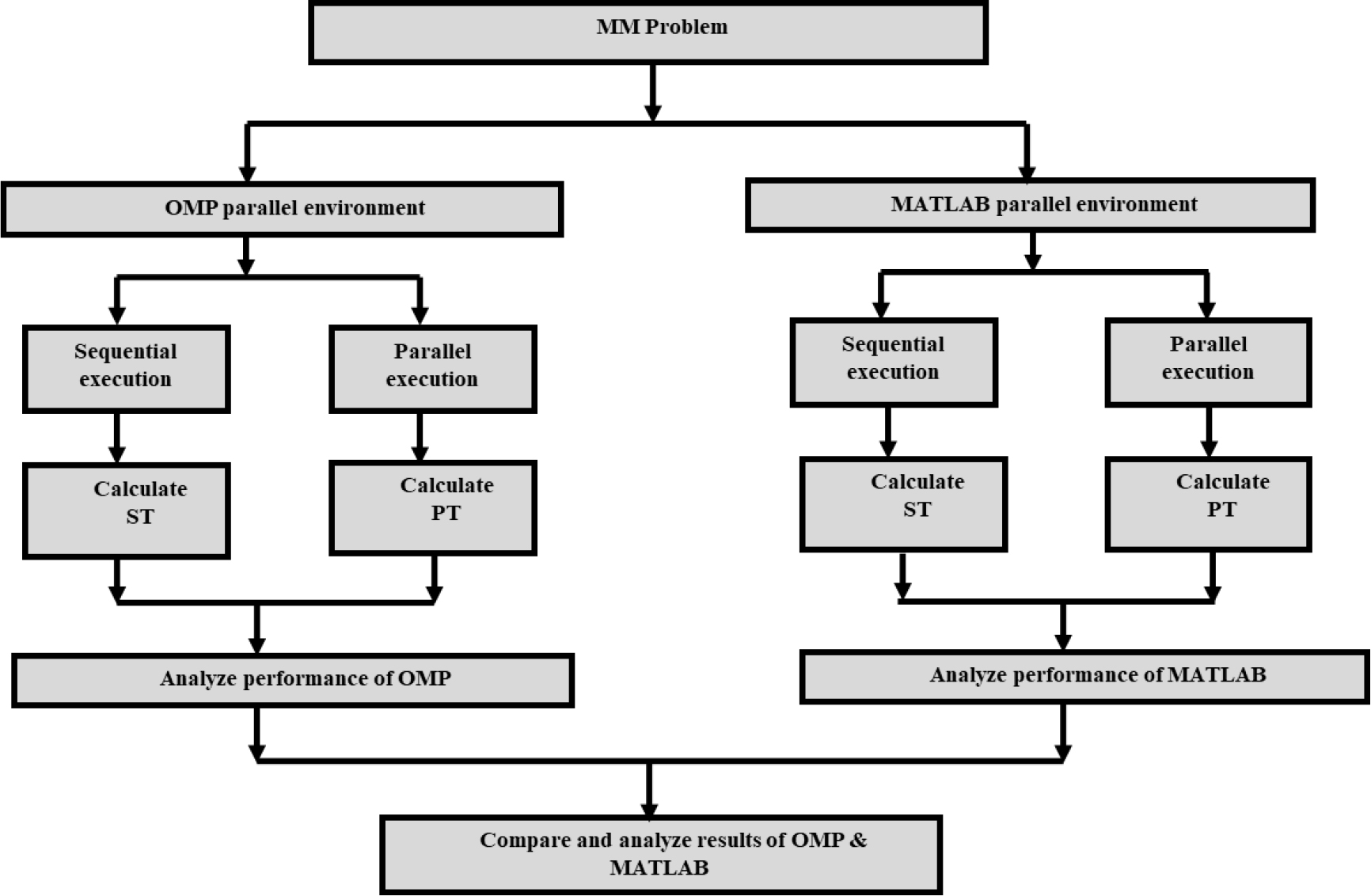

The algorithm was tested by writing the program in both OMP and MATLAB on a multi-core system, and the performance of each was measured by its execution time, as shown in Fig. 1. The outputs from OMP and MATLAB are analyzed and compared to determine which performs better in terms of execution time and performance metrics. This section outlines every step required to build the method in MATLAB and OMP. Table 1 describes the hardware and software, including the multi-core processors, used in this investigation.

Table 1. Multi-core processors with specifications


Fig. 1. Process flow diagram of steps followed.

In OMP, the execution time measured without a pragma directive is called the serial time (ST), and the time measured with the pragma directive is called the parallel time (PT). The sequential execution time of OMP was recorded first, and the parallel execution time was then measured by following the aforementioned steps. In MATLAB, ST is the time measured without opening the matlabpool. Opening the matlabpool starts 2 threads, and the pool can work with up to a maximum of 12 threads. The time taken after opening the pool with 2, 4, 8, or 12 threads is called PT.
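As an illustration of the distinction between ST and PT in OMP, the following minimal C sketch shows the same dense MM kernel without and with a pragma directive. The function names `mm_sequential` and `mm_parallel`, the row-major storage, and the choice of parallelizing the outer loop are assumptions made for this sketch; the code used in the study is not shown in the text. The `num_threads(2)` clause mirrors the two-thread setting used here.

```c
/* Sequential dense MM: c = a * b for n x n matrices stored row-major.
   Timing a call to this function gives the serial time (ST). */
void mm_sequential(int n, const double *a, const double *b, double *c)
{
    for (int i = 0; i < n; i++) {
        for (int j = 0; j < n; j++) {
            double sum = 0.0;
            for (int k = 0; k < n; k++)
                sum += a[i * n + k] * b[k * n + j];
            c[i * n + j] = sum;
        }
    }
}

/* Same kernel with an OpenMP directive on the outer loop.
   Each thread computes a disjoint set of rows of c, so no
   synchronization is needed. Timing this call gives the
   parallel time (PT). Without OpenMP enabled at compile time
   the pragma is ignored and the loop runs sequentially. */
void mm_parallel(int n, const double *a, const double *b, double *c)
{
    #pragma omp parallel for num_threads(2)
    for (int i = 0; i < n; i++) {
        for (int j = 0; j < n; j++) {
            double sum = 0.0;   /* declared inside the loop, so private per thread */
            for (int k = 0; k < n; k++)
                sum += a[i * n + k] * b[k * n + j];
            c[i * n + j] = sum;
        }
    }
}
```

With GCC, OpenMP is enabled with the `-fopenmp` flag. A wall-clock timer such as `omp_get_wtime()` is one option for measuring ST and PT around the two calls.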

Performance measurement is the procedure of gathering, evaluating, and reporting data on a system’s performance and on the technology employed. Performance metrics are used to assess a system’s efficacy, efficiency, timeliness, productivity, safety, and quality. Here, the parallel performance of the OMP and MATLAB platforms was analyzed using two performance measures: speed up and efficiency.

Speedup

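Speed up is the ratio of the execution time of a given program using an algorithm on a machine with a single processor (i.e. the sequential time, ST) to its execution time on a machine with k processors (i.e. the parallel time, PT):

$$S = \frac{ST}{PT}$$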

It may be noted that the term

A further crucial indicator for evaluating performance is the efficiency of the parallel processors, which describes how well the resources are employed. We refer to this as a degree of efficacy. The efficiency of a program running on a parallel computing system with k processors is defined as the ratio of the relative speed up, achieved when the load shifts from a single-processor machine to a k-processor machine, to the number of processors k. A parallel computing machine uses a large number of processors to get the desired output.

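The efficiency of a program on a k-processor machine is therefore

$$E = \frac{S}{k} = \frac{ST}{k \cdot PT}$$

where S is the speed up, ST the sequential time, and PT the parallel time.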

No task dependency is observed in the MM algorithm. Hence thread and kernel instances reduce the execution time when run in parallel. The MM problem was solved using both the OMP and MATLAB parallel programming platforms on dual cores with two threads only. When the number of rows and columns is small, sequential execution gives the best result; as the number of rows and columns increases, the performance of the sequential algorithm degrades, and this is where parallel processing comes into play.
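The two performance measures described in this section can be sketched in C as follows. The function names `speedup` and `efficiency` are hypothetical, and the sketch assumes the standard definitions: speed up as ST divided by PT, and efficiency as speed up divided by the number of processors k.

```c
/* Speed up: sequential time (ST) divided by parallel time (PT).
   Both times must be in the same unit and PT must be positive. */
double speedup(double st, double pt)
{
    return st / pt;
}

/* Efficiency: speed up divided by the number of processors k.
   A value of 1.0 means the k processors are fully utilized. */
double efficiency(double st, double pt, int k)
{
    return speedup(st, pt) / (double)k;
}
```

For the dual-core, two-thread setting used here, k is 2. A parallel run that takes exactly half the sequential time then has a speed up of 2 and an efficiency of 1, while a parallel run that is no faster than the sequential run has a speed up of 1 and an efficiency of 0.5.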

In the simulation, Fedora 17 with an Intel Core2Duo (2.00 GHz) processor and Windows 7 operating system with an Intel Pentium (2.13 GHz) processor have been used. On both computers, different matrix sizes i.e. from 10

Comparative analysis of experimental results

Table 2. Sequential time comparison of OMP & MATLAB


A few recent studies have compared OMP and MATLAB. In a poster presentation at Uppsala University, OMP was demonstrated to be 5 times faster than MATLAB [26]; that work was done on sparse matrices. In the present study, OMP was found to be more than 8 times faster than MATLAB for sequential execution and 6 times faster for parallel execution. In another recent paper [27], OMP was also found to be faster than MATLAB, but in that work ST in MATLAB was always less than PT. In the present work, ST was found to be less than PT only up to a certain matrix size. A larger sample size has also been tested here than in [27].

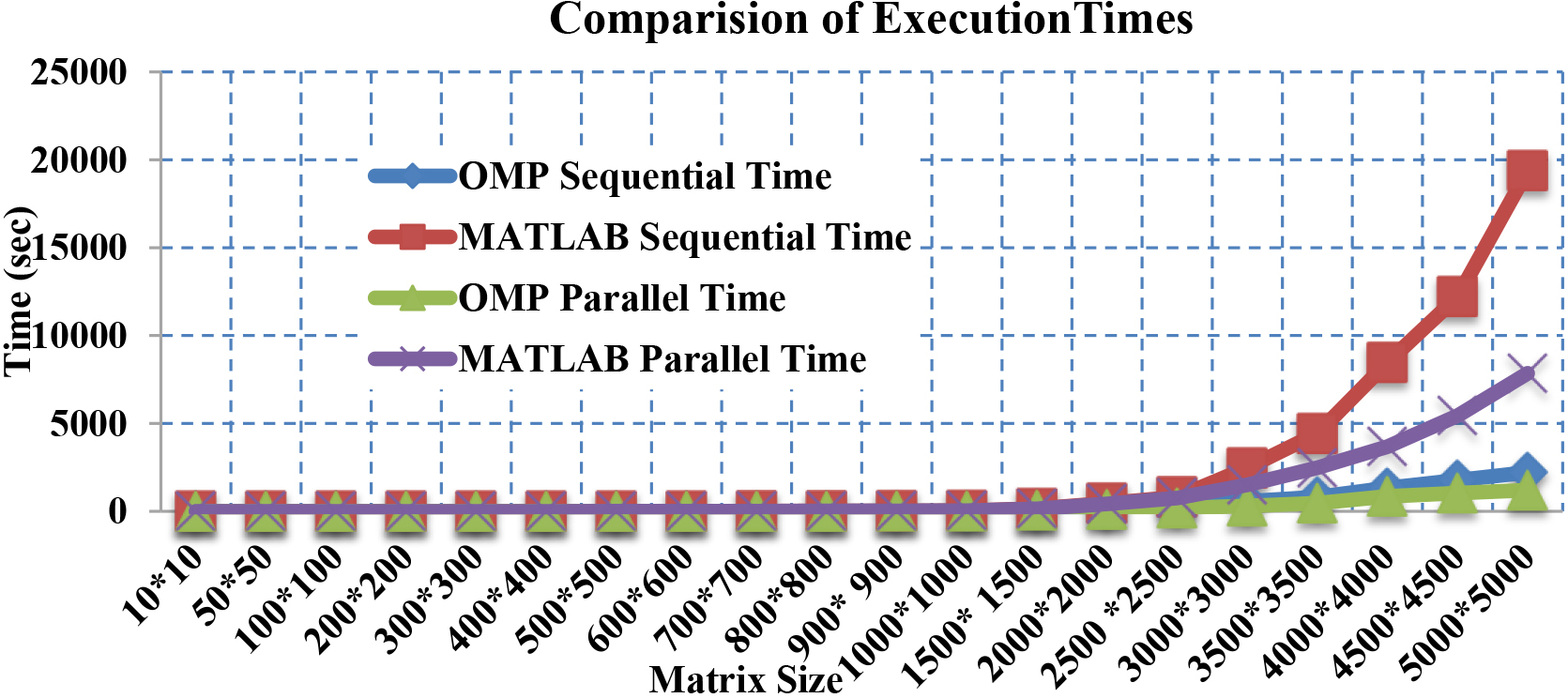

The execution times of both the sequential and the parallel runs were recorded in both the OMP and MATLAB parallel programming environments. These results are shown in Table 2 and Fig. 2. The table and graph make clear that MATLAB takes more time than OMP to execute the program, both sequentially and in parallel. We introduce a new performance parameter called the time ratio, defined as the ratio of the time required by MATLAB to the time required by OMP (MATLAB/OMP). The time ratio indicates how much faster OMP is than MATLAB. For sequential time, OMP is more than 8 times faster than MATLAB. According to the calculated time ratio, OMP is also more than 6 times faster than MATLAB in parallel time.
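Written out, the time ratio defined above takes the following form for the sequential and the parallel runs. The symbols $TR_{\mathrm{seq}}$ and $TR_{\mathrm{par}}$ and the platform subscripts are notation introduced here for illustration and do not appear in the original text.

```latex
\begin{align*}
TR_{\mathrm{seq}} &= \frac{ST_{\mathrm{MATLAB}}}{ST_{\mathrm{OMP}}}, &
TR_{\mathrm{par}} &= \frac{PT_{\mathrm{MATLAB}}}{PT_{\mathrm{OMP}}}
\end{align*}
```

A time ratio greater than 1 means that OMP is faster than MATLAB for that kind of run. The reported findings correspond to $TR_{\mathrm{seq}} > 8$ and $TR_{\mathrm{par}} > 6$.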

Fig. 2. ST & PT comparison of OMP & MATLAB.

Thus, from the observation of the above graph, it can be summed up as:

For OMP:

ST ST

For MATLAB:

ST ST

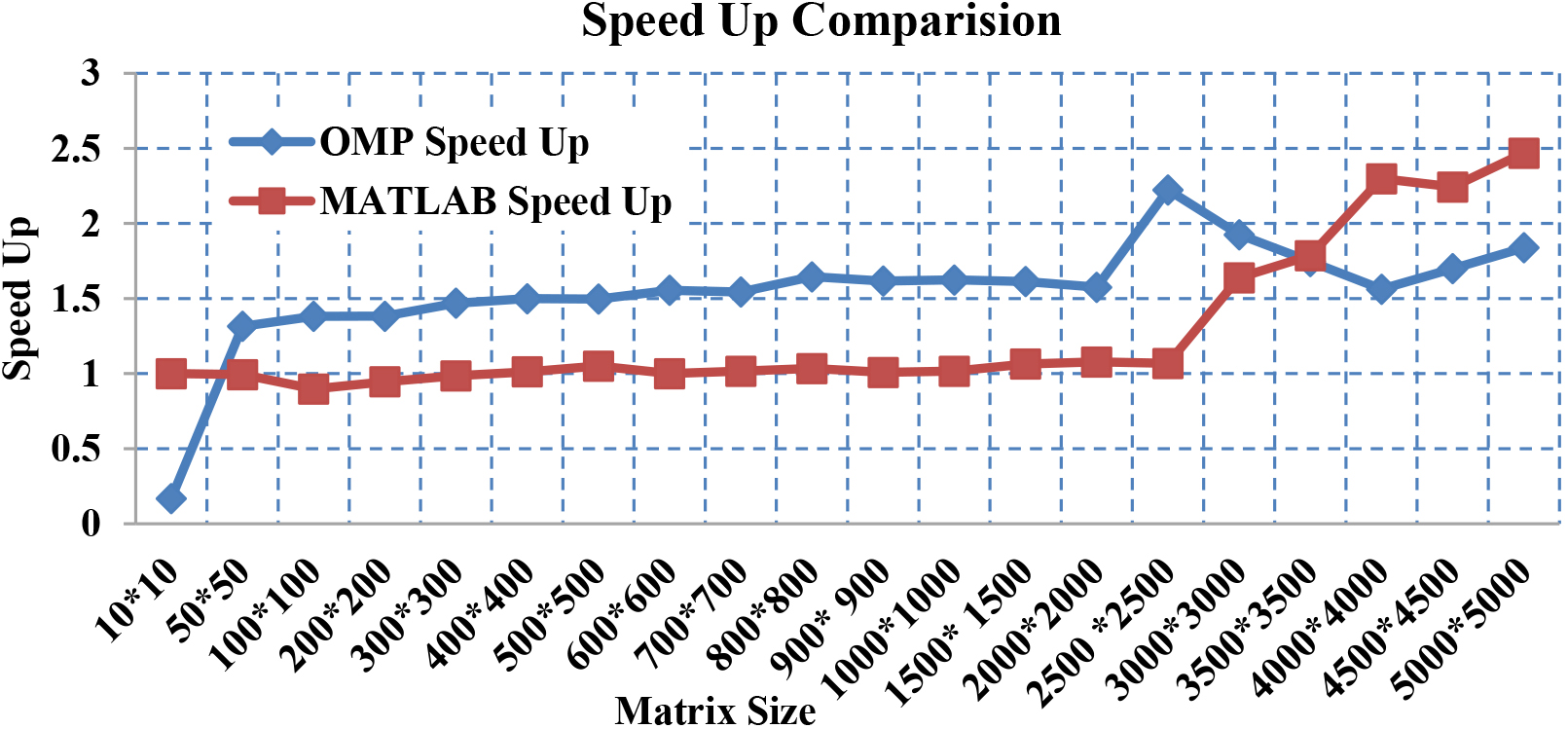

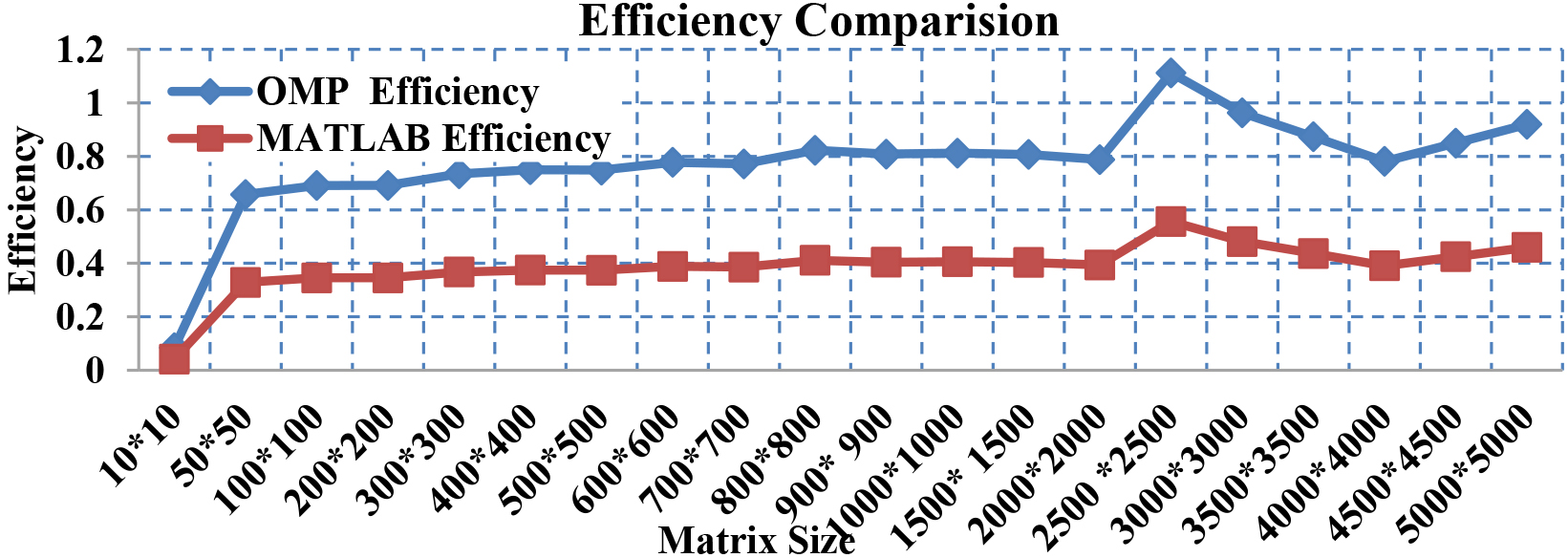

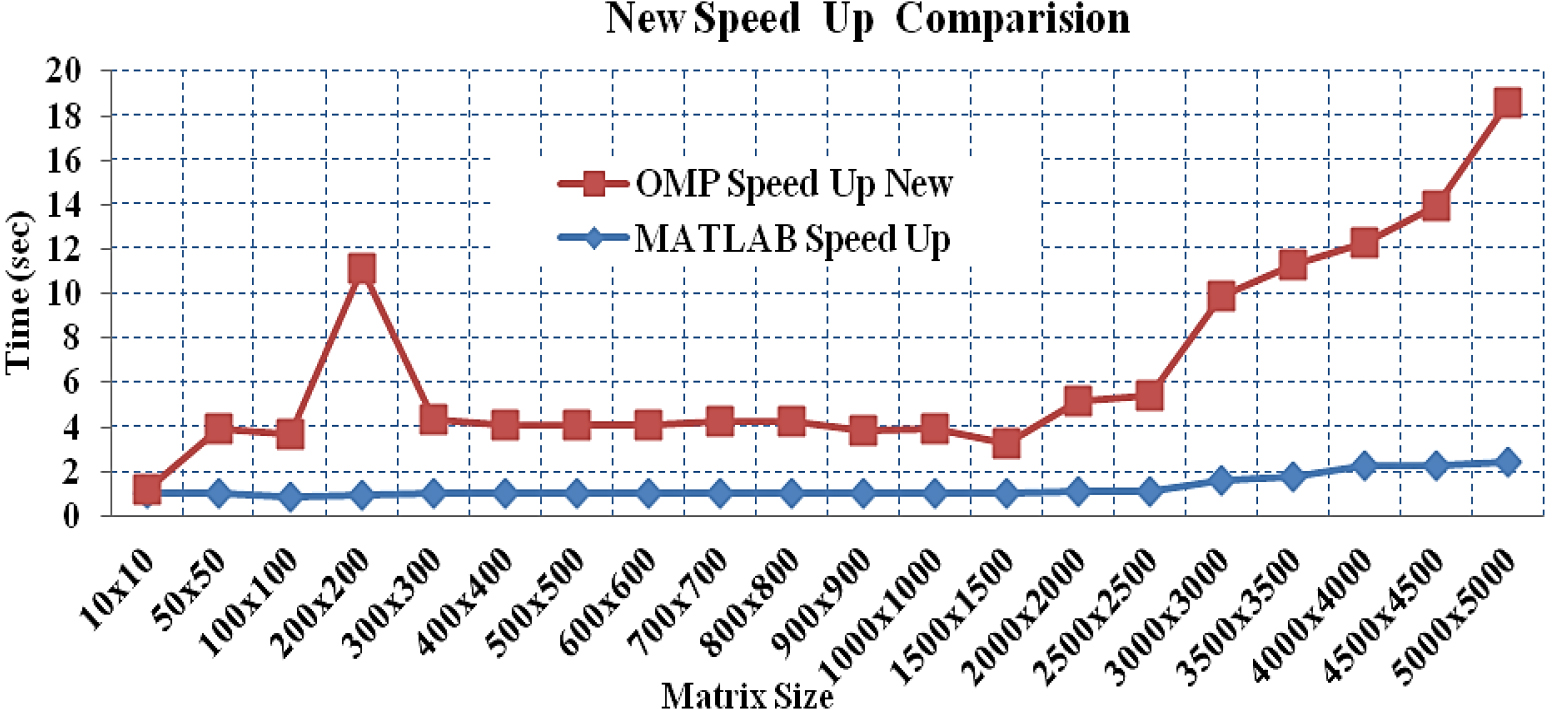

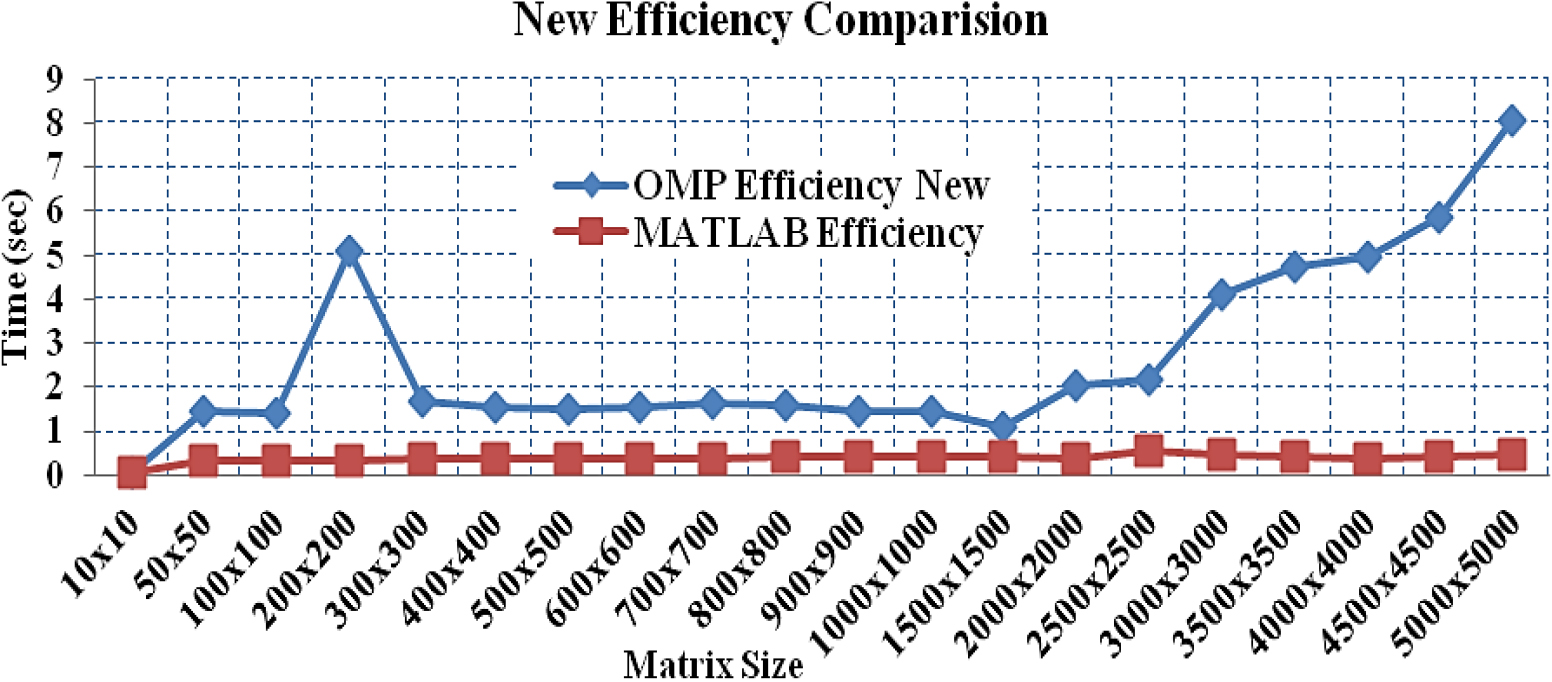

Speed up and efficiency were calculated to evaluate the performance of both the OMP and MATLAB programming platforms. The speed up and efficiency of OMP and MATLAB are compared in Table 3 and Figs 3 and 4.

Table 3. Comparison between OMP and MATLAB speed up & efficiency


Fig. 3. Comparison between OMP and MATLAB speed up.

Fig. 4. Comparison between efficiency of OMP and MATLAB.

The speed-up graph presented in Fig. 3 does not give the correct picture, because the speed-up of each platform was calculated with its own sequential time as the numerator, and MATLAB’s sequential time is much larger than the sequential time of OMP. Consequently, the calculated OMP speed-up need not be better than the calculated MATLAB speed-up, even though OMP is faster in absolute terms. To compare the two platforms on a common base, MATLAB’s sequential time was taken as the numerator for both, and a new speed-up of OMP was calculated as shown in Eqs (3) and (4).

Since the speed-up of OMP was recalculated, its efficiency was also recalculated from the new speed-up, as shown in Eqs (5) and (6).
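Under the description above, the common-base measures can be sketched as follows. The notation (platform subscripts, the superscript "new", and k for the number of processors) is an assumption made here for illustration and may differ from the form of Eqs (3) to (6).

```latex
\begin{align*}
S_{\mathrm{MATLAB}} &= \frac{ST_{\mathrm{MATLAB}}}{PT_{\mathrm{MATLAB}}}, &
S^{\mathrm{new}}_{\mathrm{OMP}} &= \frac{ST_{\mathrm{MATLAB}}}{PT_{\mathrm{OMP}}}, \\
E_{\mathrm{MATLAB}} &= \frac{S_{\mathrm{MATLAB}}}{k}, &
E^{\mathrm{new}}_{\mathrm{OMP}} &= \frac{S^{\mathrm{new}}_{\mathrm{OMP}}}{k}.
\end{align*}
```

In this form the new speed-up of OMP factors as

```latex
\begin{equation*}
S^{\mathrm{new}}_{\mathrm{OMP}}
  = \frac{ST_{\mathrm{MATLAB}}}{ST_{\mathrm{OMP}}} \cdot \frac{ST_{\mathrm{OMP}}}{PT_{\mathrm{OMP}}},
\end{equation*}
```

that is, the sequential time ratio (MATLAB/OMP) multiplied by OMP's own speed-up. Because the common numerator is MATLAB's sequential time rather than the time of a single OMP processor, the new efficiency of OMP is a relative measure and can exceed 1.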

The new speed-up and efficiency of OMP are compared with those of MATLAB in Table 4 and Figs 5 and 6. It was found that OMP has a better and more consistent speed-up and efficiency than MATLAB.

Table 4. Comparison of new speed up & efficiency


In Table 4 it can be seen that OMP Speed Up New is less than 1 for matrix size of 10

Fig. 5. New speed up comparison between OMP and MATLAB.

Fig. 6. New efficiency comparison between OMP and MATLAB.

It is clear from Figs 5 and 6 that the speed up and efficiency of OMP are much better than those of the MATLAB parallel environment.

It was observed that OMP is more than 8 times faster in sequential execution and more than 6 times faster in parallel execution than the MATLAB parallel environment. We also found experimentally that OMP on a slower processor performs much better than MATLAB on a faster processor. In the current experiment, OMP is found to be more suitable than parallel MATLAB because of its faster loading and faster program execution. Another consideration is that OMP is freely available, whereas MATLAB has to be purchased. The OMP platform also offers a rich set of functions, which makes it a viable alternative to MATLAB. Our study therefore concludes that, for research purposes, OMP is a better tool for parallel computing than parallel MATLAB. This study proposed an innovative and efficient method to determine the applicability of the MM algorithm in OMP and parallel MATLAB. Since the dense MM was executed on two different processors, the results are sufficient to substantiate its usability.

In the future, we will carry out a comparative study of the performance of MM algorithms in other parallel environments such as Message Passing Interface (MPI) and Compute Unified Device Architecture (CUDA). We will also assess the speed and performance of MM on processors with more cores, i.e., 8 cores and 16 cores. This type of research can help establish the utility of OMP or parallel MATLAB for a given application program in a heterogeneous environment consisting of multiple GPUs and multi-core processors.