Abstract

Alzheimer’s disease and related dementias (ADRD) represent an increasingly urgent public health concern, with a growing number of baby boomers now at risk. Due to a lack of efficacious therapies among symptomatic older adults, greater emphasis has been placed on preventive measures that can curb or even prevent ADRD development among middle-aged adults. Lifestyle modification using aerobic exercise and dietary modification represents one of the primary treatment modalities used to mitigate ADRD risk, with a growing number of trials demonstrating that exercise and dietary change, individually and together, improve neurocognitive performance among middle-aged and older adults. Despite several optimistic findings, examination of treatment changes across lifestyle interventions reveals a variable pattern of improvements, with large individual differences across trials. The present review attempts to synthesize available literature linking lifestyle modification to neurocognitive changes, outline putative mechanisms of treatment improvement, and discuss discrepant trial findings. In addition, previous mechanistic assumptions linking lifestyle to neurocognition are discussed, with a focus on potential solutions to improve our understanding of individual neurocognitive differences in response to lifestyle modification. Specific recommendations include integration of contemporary causal inference approaches for analyzing parallel mechanistic pathways and treatment-exposure interactions. Methodological recommendations include trial designs based on the multiphase optimization strategy (MOST) that leverage individual differences for improved treatment outcomes.

SCOPE OF AGE-RELATED NEUROCOGNITIVE DECLINE

The number of older (aged > 65) adults in the United States is projected to double by 2030, representing an immense demographic shift that has been referred to as the ‘silver tsunami’ [1]. Along with an increasingly older and complex general population, rates of age-associated neurocognitive impairment, stroke, and Alzheimer’s disease and related dementias (ADRD) are projected to increase in tandem, representing a looming source of public health expenditures [2]. ADRD is among the most burdensome and costly public health concerns in the United States. As treatments for coronary disease and cancer have increased life expectancies among aging adults, the risk of ADRD has continued to rise, with prevalence projected to increase from 26.6 million to over 106 million worldwide by mid-century [3–5]. Moreover, many more individuals will develop cognitive impairment that substantially impairs quality of life without reaching criteria for dementia, including mild cognitive impairment (MCI) and cognitive impairment no dementia (CIND) [52]. Prior estimates have suggested that ADRD may account for up to $215 billion annually in public health expenditures, [6] with an anticipated increase in the coming decades. The public health cost of ADRD is particularly troublesome as the presence of ADRD is not only detrimental to afflicted individuals but often has dramatic ramifications for the quality and productivity of life among caregivers, many of whom have to substantially restrict or discontinue their participation in the workforce in order to provide care [7]. In addition, most older adults reportedly fear the development of ADRD more than cardiovascular disease (CVD) or other common chronic diseases, [8, 9] suggesting that strategies to mitigate ADRD risk have critical importance for both the quality and quantity of life for an exponentially increasing number of ‘baby boomers’ now at risk for ADRD [10–12].

The increasing prevalence of ADRD is particularly concerning as pharmacological treatments to mitigate or prevent ADRD have been largely unsuccessful, with a 99% failure rate among novel pharmacotherapies [13]. Indeed, despite recent trials reporting tentatively positive findings, the consistent lack of efficacy observed from interventions among symptomatic older adults has led several large pharmacological entities to reallocate resources away from therapeutic pursuits due to a perception of futility [14–16]. Accordingly, a burgeoning body of evidence suggests that targeting modifiable risk factors in midlife may hold promise for mitigating or even preventing ADRD in later life [17–23]. For example, the Lancet Commission recently concluded that optimal treatment of modifiable risk factors holds the potential to delay or prevent up to one-third of ADRD cases [2, 24]. However, due to a relative paucity of randomized controlled trials, similar systematic reviews have yielded far less positive conclusions [25]. Beyond the potential merit of lifestyle change for preventing ADRD, optimizing modifiable risk factors is increasingly recognized for its importance in protecting from more normative decline in the context of cognitive aging, which has implications across all older adult populations [1, 26]. 
The present review provides a narrative synthesis of contemporary literature examining lifestyle characteristics and neurocognition, as well as innovative opportunities to improve mechanistic inferences from lifestyle trials attempting to mitigate ADRD risk and improve neurocognition.

LIFESTYLE, NEUROCOGNITION, AND ADRD: IMPORTANCE OF MODIFIABLE RISK FACTORS

A burgeoning body of evidence suggests that targeting modifiable risk factors in midlife may hold promise for mitigating or even preventing ADRD in later life [17–20]. For example, recent consensus statements by the NINDS, NIA, and Alzheimer’s Association have all advocated an increased focus on ADRD prevention, primarily focusing on three overarching risk factors: physical inactivity, ‘Western’ dietary patterns (e.g. high intake of saturated fat and complex carbohydrates, and low intake of fruits and vegetables), and poorly controlled cardiometabolic (CVD) risk factors. In contrast to some ADRD risk factors, such as age and genetic predisposition, these lifestyle-related dimensions all share an important characteristic: they are modifiable. CVD risk factors comprise an array of conditions shown to increase ADRD risk through vascular and/or metabolic pathways, including components of metabolic syndrome (hypertension, obesity, diabetes or insulin resistance, hyperlipidemia, elevated triglycerides), smoking, atrial fibrillation, and left ventricular hypertrophy. In the United States, common CVD risk factors (e.g. hypertension and obesity) affect nearly 2/3 of adults and are independently predictive of cognitive decline and ADRD [10]. Individuals with elevated CVD risk factors in midlife are 2-3 times more likely to develop dementia, [17, 18, 27, 28] leading many investigators to characterize CVD components as primary targets for dementia prevention initiatives [11]. Because AD and dementia are projected to constitute the largest public health burdens in the U.S. within two decades and pharmacological treatments have proven ineffective, the ability to curb cognitive deterioration carries tremendous public health importance [2, 29]. 
Indeed, although estimates related to potential prevention of ADRD are inherently imprecise, reductions in modifiable risk factors by 10–25% could reduce up to half of AD cases worldwide, representing millions of individual cases [30]. An integrated understanding of the effects of diet and exercise on neurocognitive functioning is therefore critical to guide prevention efforts over the coming decades.

BEHAVIOR AND THE BRAIN: CONCEPTUAL FRAMEWORKS LINKING LIFESTYLE AND NEUROBEHAVIORAL FUNCTIONS

Neurobehavioral Considerations

Over the past two decades, an explosion of empirical evidence has been published linking lifestyle behaviors to brain function [19, 32–36]. Indeed, great strides have been made at multiple levels of inference, including randomized trials of clinical populations, [37–41] mechanistic studies within preclinical older adults using neuroimaging modalities, [42–44] and animal studies linking neurobehavioral changes to alterations in mitochondrial structure, [45–47] neurotrophic, [48, 49] and metabolic function [50, 51] to underlying changes of neuropathological pathways [52–54]. While exciting, the abundance of data has made a systematic synthesis of the linkages between diet, exercise, and neurocognition more difficult. One specific difficulty has been delineating overlapping mechanistic pathways by which change in lifestyle may alter the brain and, as a behavioral corollary, neurocognitive function. Moreover, prevailing biomarker models of preclinical ADRD progression increasingly suggest that subtle, systemic ADRD changes occur over decades, with alterations in neurocognitive function only observable in the final stages of the process [16, 55, 56].

Translational studies across animal and human models are potentially complicated by inherent and well-characterized neurobiological differences between species, in which the behavioral markers most sensitive to the effects of aging [57–59] and CVD risk factors [10, 60] in humans are also the most distinct and hardest to measure in animals (e.g. higher-order executive functions). For example, while the most anterior brain regions in humans (e.g. orbitofrontal cortex) and associated projections have widely studied neuropsychiatric relevance, [61–65] these brain regions have less representation in rodents, making translational behavioral inferences more difficult [66]. Therefore, while critically informative for elucidating mechanistic underpinnings, animal studies have necessarily prioritized behavioral markers of learning and memory, [67] which are critically reliant on mesial temporal lobe structures in humans.

In humans, episodic memory performance is closely tied to integrity within mesial temporal lobe structures, providing the earliest and most prototypical impairment observed in preclinical AD, often with associated cortical atrophy and ventricular enlargement [68, 69]. In contrast, the most common age-related neurocognitive changes occur on tests of complex processing speed, [57, 70] which are subserved by a small array of frontal-subcortical circuits (FSCs), some of which are preferentially vulnerable to microvascular and ischemic damage [71]. Underscoring their complexity, the FSCs all have direct and indirect pathways across the prefrontal cortex, corticocortical connections with other circuits, and more distal connections with outside brain regions [72]. While interconnected, FSCs carry various degrees of importance in facilitating distinct components of ‘executive functions’ depending on the specific needs of the task. Individuals with anterior injuries to the superior / medial FSC may experience impairments in task initiation, whereas damage to the dorsolateral prefrontal cortex is more commonly associated with impairments in set-shifting and working memory. FSCs also facilitate a delicate balance of neurotransmitter functions across various ‘reward system’ brain regions in the ventral striatum and caudate, which are particularly important in their sensitivity to various monoamines (e.g. dopamine). This may explain why neurocognitive improvements following exercise appear to preferentially improve executive functions and speed across diverse clinical populations, such as vascular cognitive impairment, [73] Parkinson’s Disease (PD), [74] and Attention Deficit Hyperactivity Disorder (ADHD) [75]. 
While neurocognitive deficits across these disorders are etiologically distinct, they share dysfunction within neurocircuitry in the FSCs, particularly the ventral striatum and associated prefrontal cortex projections, which are critical to facilitating these complex neurocognitive functions.

Conceptual models

Several widely used conceptual models are useful in elucidating the complex associations between lifestyle factors and neurocognition. One widely used conceptual framework is the scaffolding theory of aging and cognition (STAC) and its revision (STAC-R) [76–78]. This framework explicitly grapples with several important elements of the dynamic systems by which peripheral systems, structural markers of neuropathology, and functional neural pathways all act in concert to facilitate neurocognitive function. It is worth remembering that, although an increasing focus has been placed on the identification of preclinical biomarkers in the AD literature, the end goal of treatment remains the same: to preserve function through preserved neurocognition [79–81]. Importantly, many investigators hypothesize that the beneficial effects of lifestyle modification on neuroplasticity occur primarily by strengthening compensatory functions of the brain, with more modest effects occurring through direct enhancement to neuropathological aspects of brain structure (e.g. cortical atrophy and white matter disease) [77]. However, the ability to recruit and retain new neurons is also dependent on the underlying health of the brain and supportive systems, such that there may be a ‘tipping point’ beyond which these compensatory changes are eclipsed by larger neurodegenerative changes.

An important corollary of this theory is that the impact of lifestyle on brain function may act through different mechanisms with a different time course depending on the person’s age, neuropathological burden, and systemic health. For example, optimally managing cerebrovascular risk factors over the course of decades through exercise and diet would likely help sustain brain function by sustaining microvascular function [82]. In contrast, reducing cerebrovascular risk over the course of months among an older individual with significant cortical volume loss may not be helpful and could paradoxically worsen function if compensatory cerebrovascular remodeling has occurred within the neurovascular unit following decades of hypertension [83–85]. In essence, this theory allows for a dynamic, systems-level approach to cognitive functioning that takes into account multiple factors often overlooked in more simplistic models.

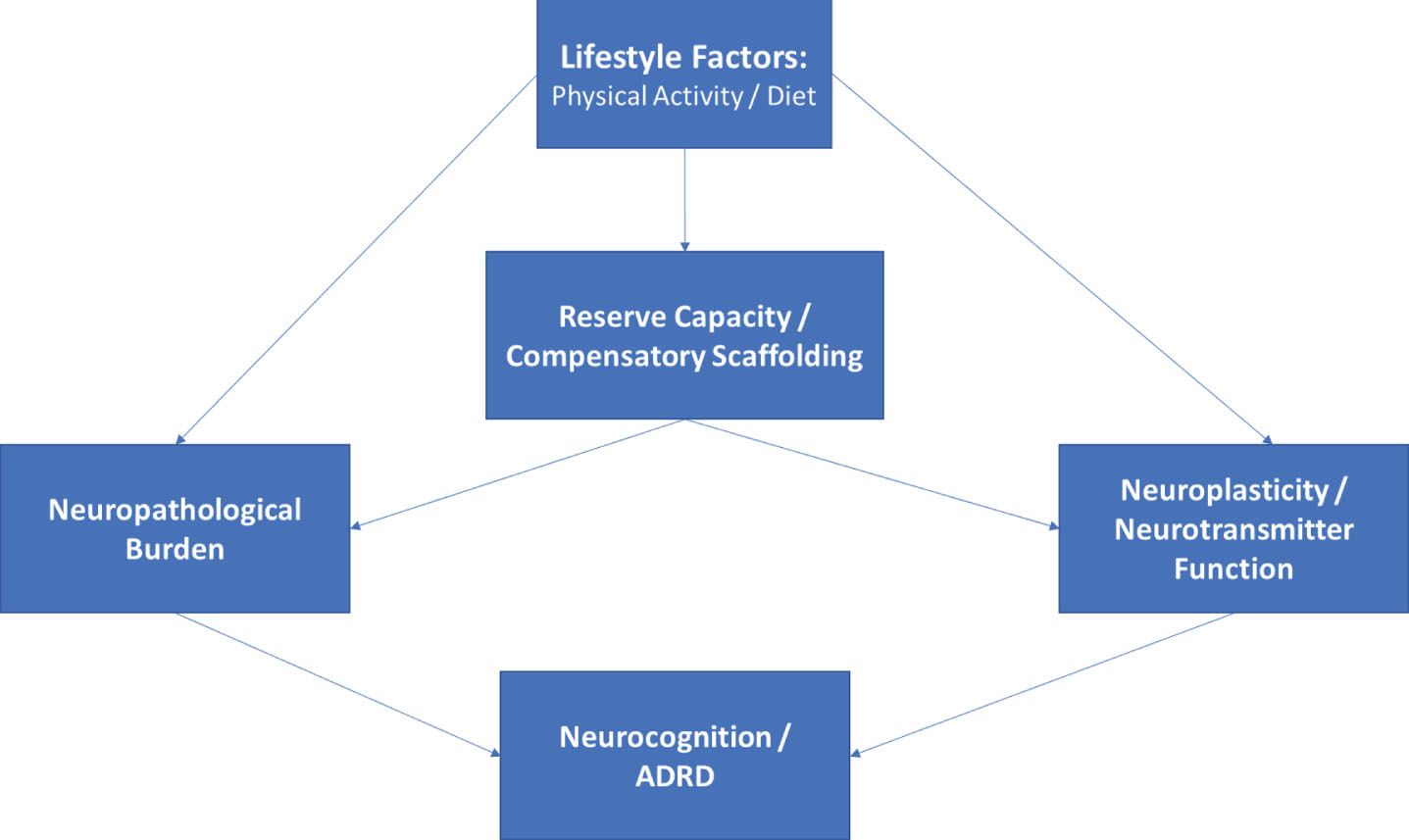

Another important conceptual approach is the potential ability of lifestyle to strengthen neurocognition through its impact on reserve capacity, which has conventionally been conceptualized as cognitive reserve [86] and more recently extended to include metabolic [87–89] and brain reserve [90]. Reserve capacity reflects wide individual differences in the ability to withstand great amounts of neuropathological damage without apparent neurocognitive impairment, which may be reflected across multiple systems that all indirectly impact ultimate neurocognitive function. For example, markers of fluid intelligence, [91] greater efficiency of metabolic ‘cross-talk’ between the peripheral and central nervous systems (CNS), [92] and greater white matter integrity [93] all could be conceptualized as representing reserve capacities, measured across cognitive, metabolic, and structural brain systems. Although there appears to be wide agreement that reserve capacity is a critical component underlying the associations between diet, exercise, and neurocognition, [32, 89, 94, 95] there remains wide disagreement about how these concepts are defined and how they should be measured [80, 81, 96]. Nevertheless, it appears likely that lifestyle has a beneficial effect on the brain through its parallel impacts on brain structure, reserve capacity, and directly on neurotransmitter systems, which indirectly influence each other (Fig. 1).

Related conceptual frameworks with smaller empirical bases in humans include the effects of lifestyle on 1) hormesis and 2) compensatory repair functions within the brain facilitated by preserved lipid metabolism. Hormesis reflects the adaptive response of the brain to intermittent, low-dose environmental exposures (e.g. dietary energy restriction) that enhance systemic functional response capabilities [97, 98]. More recent evidence suggests that alterations in phospholipid metabolism may be particularly important to the progression of subclinical ADRD biomarkers of cortical atrophy [99]. CNS lipids have a critical structural role in supporting neuronal integrity, and it has been hypothesized that intact phospholipid metabolism may serve as a critical compensatory mechanism underlying neuronal repair in preclinical adults. Alterations in phospholipid metabolism are prognostic of incident cognitive decline, conversion from normal cognition to MCI, and conversion from MCI to ADRD [99, 100]. Altered lipid metabolism is also potentially consistent with the increased rates of ADRD among individuals with different apolipoprotein (APOE) genotypes, as the APOE gene was originally discovered because of its lipid metabolizing properties. It is therefore possible that blunted peripheral metabolic function may impair the brain’s ability to offset normative neurodegeneration, with marked dysregulation of phospholipid metabolism occurring in the years immediately preceding clinical neurocognitive impairment [101–105].

Fig. 1. Directed acyclic graph linking lifestyle modification to neurocognitive outcomes. As shown, lifestyle modification likely has beneficial effects on structural markers of neuropathology, reserve capacities, and more directly on neurotransmitter systems, all of which contribute to neurocognitive function as observed through behavioral testing. The associations between markers of reserve capacity and/or scaffolding (i.e. blood brain barrier integrity, cerebrovascular reactivity, neurogenesis) likely impact neurocognition indirectly through their influence on structural markers and by potentiating or blunting neurotransmitter systems.
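
For readers who wish to work with the graph programmatically, the structure described in the caption can be written down as a small adjacency map. The sketch below is an illustration only: the node names are hypothetical labels chosen here, and the edge list is one reading of the caption (in particular, reserve capacity is assumed to act on neurocognition only indirectly, as the caption's final sentence suggests), not a reproduction of the published figure.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Assumed edges, read off the figure caption. Each key points to its direct effects.
EDGES = {
    "lifestyle_modification": {
        "structural_markers", "reserve_capacity", "neurotransmitter_systems",
    },
    "reserve_capacity": {"structural_markers", "neurotransmitter_systems"},
    "structural_markers": {"neurocognition"},
    "neurotransmitter_systems": {"neurocognition"},
    "neurocognition": set(),
}


def predecessors(edges):
    """Invert the parent -> children map into child -> parents."""
    preds = {node: set() for node in edges}
    for parent, children in edges.items():
        for child in children:
            preds[child].add(parent)
    return preds


def causal_order(edges):
    """Return one valid causal ordering; raises graphlib.CycleError if a cycle exists."""
    return list(TopologicalSorter(predecessors(edges)).static_order())


def directed_paths(edges, start, end, prefix=()):
    """Enumerate every directed path from start to end."""
    path = prefix + (start,)
    if start == end:
        return [path]
    paths = []
    for child in sorted(edges[start]):
        paths.extend(directed_paths(edges, child, end, path))
    return paths


if __name__ == "__main__":
    print(causal_order(EDGES))
    for p in directed_paths(EDGES, "lifestyle_modification", "neurocognition"):
        print(" -> ".join(p))
```

Enumerating the paths makes the parallel pathways explicit: under these assumed edges, each route from lifestyle modification to neurocognition is a candidate mechanism that a mediation analysis would need to separate.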

Conceptual frameworks have proven useful in guiding lifestyle-related intervention approaches, but have been used sparingly in generating falsifiable hypotheses. As discussed later in this review, this may owe partly to the inherent ‘systems-level’ nature of hypotheses following from models such as STAC-R, which posit that an individual’s level of neurocognitive function represents the brain’s ability to compensate for underlying disease burden. Implicit within this standard definition of brain reserve / resilience is a multi-level system in which function (neurocognition) is a product of disease exposure (e.g. advancing age, vascular risk), neuropathological burden (e.g. cortical atrophy), and cerebral compensatory factors (e.g. neurogenesis, synaptic plasticity). In other words, exogenous disease exposure causes both structural and functional degradation in the CNS, and neurocognition therefore reflects the degree of successful compensation exerted by an individual to sustain function in the presence of this underlying burden. Neurocognitive performance therefore reflects a gradient: it reflects both the amount of brain function / reserve capacity exerted and the amount of underlying neuropathological / structural burden.

Dynamic conceptual models suggest several important and testable hypotheses. First, the association between markers of exposure and neurocognition should be mediated through their impact on underlying brain structure and compensatory function. For example, the association between advancing age and memory decline would be explained by alterations in structural (e.g. cortical atrophy and microvascular burden) and compensatory / functional (e.g. CNS metabolic function) markers. In other words, an individual with greater vascular risk who (miraculously) does not evidence microvascular ischemic damage or impaired cerebrovascular reserve function would be hypothesized to have better neurocognition compared to an individual with evidence of these CNS sequelae, even if they were comparable in their degree of (peripheral) vascular risk. Similarly, greater white matter damage / leukoencephalopathy caused by factors unrelated to chronic vascular risk factors (e.g. CADASIL or multiple sclerosis) would be hypothesized to impair neurocognition even in the absence of vascular risk, because the primary mechanism of impairment (brain structure) is still impacted. To use a more concrete analogy, knowing that a car cannot get from point A to point B tells you little about whether the car’s impairments are structural (e.g. the frame is compromised) or functional (e.g. flat tires and out of gas). Designing an intervention to make the car function would therefore be highly dependent on the type of impairment: filling up the gas is unlikely to be helpful if your car’s frame is compromised. As a corollary, lifestyle interventions designed to modify exposure variables (e.g. blood pressure) or that select participants based on their level of neurocognition may therefore overlook important individual differences in biomarkers of disease burden that could plausibly impact participants’ ability to improve brain function with treatment.
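
The mediation hypothesis above can be made concrete with a small simulation. The sketch below is illustrative only and is not an analysis from any cited study: it generates synthetic data in which an exposure (e.g. vascular risk) affects an outcome (neurocognition) solely through a mediator (e.g. structural burden), then recovers the paths with the classical product-of-coefficients approach under ordinary least squares. All function names, effect sizes, and the linear, unconfounded data-generating model are assumptions made for the example; contemporary causal mediation methods relax several of these assumptions.

```python
import random


def cov(u, v):
    """Sample covariance of two equal-length sequences."""
    mean_u, mean_v = sum(u) / len(u), sum(v) / len(v)
    return sum((x - mean_u) * (y - mean_v) for x, y in zip(u, v)) / (len(u) - 1)


def mediation_effects(exposure, mediator, outcome):
    """Least-squares path estimates for a single-mediator linear model."""
    var_x, var_m = cov(exposure, exposure), cov(mediator, mediator)
    cov_xm = cov(exposure, mediator)
    cov_xy, cov_my = cov(exposure, outcome), cov(mediator, outcome)
    denom = var_x * var_m - cov_xm ** 2

    a = cov_xm / var_x                                   # exposure -> mediator
    b = (cov_my * var_x - cov_xy * cov_xm) / denom       # mediator -> outcome, adjusted for exposure
    direct = (cov_xy * var_m - cov_my * cov_xm) / denom  # exposure -> outcome, adjusted for mediator
    total = cov_xy / var_x                               # exposure -> outcome, unadjusted
    return {"a": a, "b": b, "indirect": a * b, "direct": direct, "total": total}


def simulate_full_mediation(n=5000, a=0.6, b=-0.5, seed=0):
    """Synthetic data: exposure raises burden, burden lowers cognition, no direct path."""
    rng = random.Random(seed)
    exposure = [rng.gauss(0, 1) for _ in range(n)]
    mediator = [a * x + rng.gauss(0, 1) for x in exposure]
    outcome = [b * m + rng.gauss(0, 1) for m in mediator]
    return exposure, mediator, outcome


if __name__ == "__main__":
    effects = mediation_effects(*simulate_full_mediation())
    for name, value in effects.items():
        print(f"{name:>8}: {value: .3f}")
```

In this setup the estimated direct effect should sit near zero while the indirect effect carries essentially all of the total association, which is the pattern the first hypothesis predicts when structural burden fully explains the exposure and neurocognition link.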

Another important and testable extension of these conceptual models is that structural markers of reserve should function as a constraining factor to any treatment-related improvements in neurocognition. Because structural reserve markers (e.g. atrophy) are less changeable and in some cases represent a marker of optimal functioning (e.g. educational status or premorbid intelligence), they may function to moderate the degree to which improving compensatory factors improves neurocognition. In other words, structural markers may impact the

PHYSICAL ACTIVITY, DIETARY PATTERNS, AND NEUROCOGNITIVE OUTCOMES

Lifestyle factors, such as physical activity and healthier dietary patterns, are increasingly recognized as potential contributors to ADRD risk [18, 107–111]. For example, meta-analytic studies of middle-aged adults have demonstrated that greater engagement in physical activity associates with significant reductions in ADRD risk, with individuals who exercise regularly demonstrating an approximate 40% reduced risk [112]. Prospective studies have also linked various dietary patterns to differential ADRD outcomes [110, 113, 114]. Multiple intervention studies have reported similar findings, with numerous meta-analytic reviews suggesting that individuals randomized to participate in aerobic exercise training experience modest but appreciable increases in cognitive performance [115–118]. These findings are reviewed briefly below.

Physical activity

Physical activity, including leisure time exercise, has gained increasing attention as a possible modifiable factor mitigating the risk of Alzheimer’s disease and cognitive impairment in later life [112, 119, 120]. Numerous epidemiological studies now suggest that greater levels of physical activity confer a lower risk of dementia in later life, with even modest improvements in habitual activity demonstrating significant reductions in long-term risk. For example, in a meta-analysis of 15 prospective studies incorporating nearly 34,000 individuals, higher physical activity levels associated with a 38% lower risk of neurocognitive decline, with even low-to-moderate exercise levels conferring lower risk (35%) [112]. Discrepant findings have also been reported, however, with a recent report from the Whitehall II study failing to find a protective association between physical activity and risk of ADRD nearly 30 years later [121]. These discrepant findings may be influenced, in part, by differing risks between ADRD subtypes, with AD and vascular dementia (VaD) differing to various degrees in their underlying pathophysiologic risk profiles. For example, a more recent meta-analysis of prospective studies demonstrated that physical activity was protective against all-cause dementia (OR = 0.79 [0.69, 0.88]) and cognitive decline (OR = 0.67 [0.55, 0.78]), with the greatest benefits for development of AD (OR = 0.62 [0.49, 0.75]), whereas risk of VaD was not associated with physical activity (OR = 0.92 [0.62, 1.30]) [122]. An interesting but inconsistent finding within published exercise trials is the potentially differential effect based on genetic risk for AD (APOE) [123, 124]. Although results have been mixed, several studies have demonstrated that the beneficial effects of aerobic training may be greater among individuals with greater AD genetic risk, [123] although others have failed to replicate these subgroup differences [125, 126]. 
Taken together, these findings suggest that being physically active in middle age is likely protective against future ADRD, although there is wide individual variation that remains to be understood, as discussed in later sections.
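
As a reading aid for the odds ratios quoted above from [122], the short sketch below converts each OR into a percent change in odds and checks whether the reported interval excludes the null value of 1. The helper names are hypothetical and the intervals are assumed to be 95% confidence intervals, which the text does not state explicitly. Note also that an odds ratio approximates a risk ratio only when the outcome is rare, so these percentages describe odds rather than risk.

```python
# Odds ratios and intervals as quoted in the text from reference [122].
ODDS_RATIOS = {
    "all-cause dementia": (0.79, 0.69, 0.88),
    "cognitive decline": (0.67, 0.55, 0.78),
    "Alzheimer's disease": (0.62, 0.49, 0.75),
    "vascular dementia": (0.92, 0.62, 1.30),
}


def percent_change_in_odds(odds_ratio):
    """Express an odds ratio as a percent change in odds (negative means lower odds)."""
    return (odds_ratio - 1.0) * 100.0


def interval_excludes_null(lower, upper, null=1.0):
    """True when the interval does not contain the null value."""
    return not (lower <= null <= upper)


if __name__ == "__main__":
    for outcome, (estimate, lower, upper) in ODDS_RATIOS.items():
        change = percent_change_in_odds(estimate)
        flag = "excludes 1" if interval_excludes_null(lower, upper) else "includes 1"
        print(f"{outcome:<20} {change:+.0f}% odds, interval {flag}")
```

Read this way, the VaD estimate is the only one of the four whose interval spans 1, which is the basis for the statement that VaD risk was not associated with physical activity.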

Physical activity interventions also have tended to report beneficial effects, [41, 117, 127–129] with some notable exceptions [36]. Results have generally suggested that improvements in neurocognition following aerobic training are strongest in frontal-subcortical functions, including attention and executive functions, although improvements across other domains also have been noted [117, 118]. Similar findings have been reported from resistance training interventions, with participants randomized to exercise demonstrating improvements relative to controls [130] and a tendency for the pattern of improvements to favor frontal-subcortical functions [131, 132]. As discussed in more detail below, the largest source of variation across studies appears not to be whether they can improve neurocognition, but whether the observed improvements can be attributed to changes in fitness or other downstream mechanisms. For example, multiple studies examining the physical activity and neurocognition association have failed to demonstrate dose-response benefits [112, 117, 133–135] or an association between improved aerobic fitness (the putative treatment mediator for aerobic training) and neurocognitive improvement [36, 136]. The comparable benefits observed from resistance training may therefore suggest that downstream changes (e.g. metabolic function or neurotrophins) represent a more plausible mechanism underlying the observed treatment benefits.

Dietary patterns

An extensive body of evidence, including hundreds of trials, has attempted to improve neurocognition through dietary supplementation. As reviewed in detail elsewhere, areas of intervention tended to focus on antioxidants (vitamins A, C, and E), B vitamins and folate, and polyunsaturated fatty acids (e.g. omega-3s). Although the details of this large body of evidence are beyond the scope of the present paper, systematic reviews of supplementation trials have generally produced equivocal findings with one exception: individuals who are deficient in a given nutrient (e.g. vitamin B) benefit from supplementation to normalize their levels. Beyond this, supplementation does not appear to improve neurocognition and may even have adverse effects in some individuals. An emerging body of evidence has shifted focus from specific nutrients to overall dietary patterns, with a focus on the Mediterranean diet (MeDi), the Dietary Approaches to Stop Hypertension (DASH) diet, the Mediterranean-DASH for Neurodegenerative Delay (MIND) diet, and caloric restriction.

Dietary patterns have also gained interest, as a growing number of prospective studies have demonstrated that better adherence to dietary patterns with higher intake of fruits, vegetables, and whole grains, and reduced intake of saturated fat and complex carbohydrates, appears to be associated with reduced ADRD risk [137–141]. For example, greater adherence to the DASH diet, [142–147] MeDi, and MIND [148–150] has been associated with lower ADRD risk. However, there have also been null findings, with recent systematic reviews reporting that evidence linking dietary patterns to ADRD outcomes remains inconclusive [113, 151, 152]. Importantly, although the MeDi and DASH were originally characterized for their beneficial impact on CVD risk factors and CVD outcomes, few studies have provided compelling evidence that the observed associations between dietary patterns and ADRD are mediated through vascular pathways, [148, 153] with multiple, indirect mechanisms connecting dietary behaviors to cognitive outcomes [144, 154].

Mediterranean diet

One of the most widely studied dietary patterns is the MeDi. The MeDi was originally identified for its characteristic pattern among individuals living in European countries surrounding the Mediterranean Sea, who also consistently exhibit a low incidence of cardiovascular disease. Accordingly, the MeDi is typified by greater levels of fish intake, fresh fruit and vegetables, unsaturated fatty acids, and modest but regular consumption of wine [153, 155]. The majority of prospective cohort studies have found a protective association between the MeDi and neurocognitive outcomes [137, 156, 157]. For example, a prior meta-analysis combining results across five studies found that higher adherence to the MeDi (highest tertile) was associated with a 27% reduced risk of MCI and a 36% reduced risk of AD among cognitively normal adults [158]. The majority of existing observational evidence suggests that the beneficial effects of MeDi on brain outcomes are mediated by reduced cerebrovascular risk and incidence of brain infarcts on neuroimaging assessment [159]. As detailed below, the PREDIMED trial [160, 161] is one of the only RCTs available to assess the benefits of the MeDi in an intervention context, though several other trials are currently underway. In addition to available trial data, a recent meta-analysis of prospective cohort studies suggested that the association between MeDi dietary patterns and ADRD exhibits dose-response characteristics, suggesting that increasing levels of adherence may have additionally protective effects [162].

Dietary approaches to stop hypertension (DASH)

Similar to the MeDi, the DASH diet was originally designed for its beneficial effects on blood pressure and associated hypertensive outcomes. The DASH diet emphasizes consumption of fresh fruits and vegetables, whole grains, low-fat dairy products, modest meat consumption, and modest alcohol consumption. In contrast to the MeDi, the DASH places greater emphasis on reducing dietary salt intake and does not incorporate regular alcohol consumption. Greater DASH adherence has also been associated with greater blood pressure reductions [163] and reduced risk of stroke, [164] underscoring its potential benefits to cerebrovascular mechanisms of neurocognitive impairment. Preliminary evidence suggests that individuals who are more adherent to a DASH-style diet have a lower incidence of cognitive decline [144, 146]. For example, DASH diet adherence was also associated with lower rates of cognitive decline among older adults participating in the Memory and Aging Project during a 4-year follow-up [146, 165].

Mediterranean-DASH (MIND) Diet

An emerging dietary pattern of interest is the MIND diet, which combines aspects of the MeDi and DASH diet with an emphasis on dietary components linked to neuroprotection and dementia prevention [166–168]. For example, the MIND diet has a more explicit emphasis on dietary components with polyphenolic effects, such as blueberries, red wine, and dark chocolate [169]. The MIND diet has been associated with a lower incidence of neurocognitive decline [150, 168, 170] and ADRD [169–172]. Indeed, recent evidence suggests that the MIND dietary pattern is associated with more protective effects when compared to either the MeDi or DASH directly [165–167]. Although no clinical trials have focused on modifying dietary patterns using MIND specifically, investigators are increasingly integrating this pattern of dietary intake into preventive efforts aimed at lowering the incidence of ADRD through dietary modification [173].

Caloric restriction / intermittent fasting

Lower caloric intake is historically one of the most widely studied aspects of dietary intake believed to improve brain outcomes. While early studies were almost exclusively in animal models, an accumulating body of data suggests that lower caloric intake may be associated with lower risk of cognitive decline, [174, 175] and that intentional weight loss through caloric restriction may improve neurocognition [176]. For example, both cross-sectional [177] and prospective studies have suggested that caloric intake is associated with ADRD risk, [178] and that this association may vary based on genetic risk for AD [179]. In addition, several RCTs have examined the impact of caloric restriction on neurocognition among humans, with mixed results [178, 180–182]. In one study, a three-month caloric restriction intervention improved memory performance among fifty healthy, elderly adults who were either normal weight or overweight [178]. Following three months of treatment, individuals randomized to the caloric restriction group showed improvements in verbal memory performance. In addition, these improvements were associated with decreased levels of fasting insulin and C-reactive protein and were strongest among participants with the best adherence. Similar findings were noted in recent

In one of the more positive caloric restriction trials, Horie and colleagues demonstrated that a one-year caloric restriction trial in 80 obese adults with MCI improved cognitive function, with improvements observed across neurocognitive domains [186]. Improvements in neurocognition varied somewhat by age and APOE genotype, with participants aged 60–70 demonstrating the largest improvements in memory and verbal fluency, and APOE-4 carriers demonstrating the largest improvements in executive function. Most notably, improvements in neurocognition were associated with improved metabolic function (increased insulin sensitivity and leptin), reduced inflammation (C-reactive protein), and reduced energy intake (carbohydrates and fats). Similarly positive findings were reported from a small trial examining changes in brain structure and function among 37 obese, postmenopausal women randomized to an intensive 12-week low-caloric diet or a control group [187]. In a

Other dietary patterns

Two closely related dietary patterns, with a much smaller literature base, are intermittent fasting and ketogenic dietary patterns. Intermittent fasting has been suggested to have beneficial effects on the brain by improving metabolic function through intentional manipulation of energy balance, as well as reducing inflammation and oxidative stress [188–190]. Intermittent fasting appears to produce comparable weight loss benefits when compared to traditional caloric restriction, [191] reduces cardiovascular risk factors, [192, 193] and has been associated with reduced risk of age-related neurocognitive deficits [194]. An additional benefit is that it may be easier for many individuals to comply with, [195] increasing the likelihood of sustained adherence. Finally, the ketogenic diet (KD) has been examined, originally as a potential non-pharmacological strategy to reduce the frequency and severity of seizure activity in epileptic patients [196]. Through hormetic mechanisms, KD has been postulated to improve central metabolic function, [169, 197, 198] a critical factor in age-dependent neurocognitive decline [197]. Although very few studies have examined KD outside of epileptic samples, [199–201] mechanistic animal studies suggest that KD may improve glucose transport, with differential effects on the prefrontal cortex and hippocampus [202].

Randomized Trials Combining Exercise and Dietary Modification

Although individual lifestyle components appear to confer lower ADRD risk, preliminary evidence suggests additive benefits associated with each component, such that individuals engaging in regular exercise and healthier diets show the lowest risk [203–205]. Data from the WHICAP project demonstrated that participants engaging in physical activity or the MeDi diet had lower risk of Alzheimer’s Disease (AD), and participants engaged in both had the lowest prospective risk of AD [206]. Similar mechanistic findings have been demonstrated in animal models as well, with exercise facilitating the beneficial effects of some dietary components on brain outcomes [98, 207–212]. Preliminary data from multicomponent trials have reported similar findings, with both exercise and diet combining to improve neurocognitive performance [145, 213]. Similarly, in the recently completed Finnish Geriatric Intervention Study to Prevent Cognitive Impairment and Disability (FINGER) trial, [214] individuals randomized to the multicomponent intervention of exercise, diet, cognitive training, and monitoring of CVD risk factors experienced greater cognitive improvements. In contrast, recent data from the LOOK-AHEAD trial suggest that behavioral weight loss does not necessarily improve neurocognition [215–219] and may even have deleterious effects in older individuals, [220] despite improvements in some neuroimaging biomarkers of ADRD [221, 222]. Multicomponent trials are presented in detail below:

The physical activity intervention consisted of both aerobic and resistance training and was modified from the Dose-Responses to Exercise Training (DR’s EXTRA), which used a personalized training target for aerobic exercise of 55–65% of peak oxygen consumption. Aerobic training was gradually titrated to increase duration and intensity over the course of the trial, beginning with 30–45 mins twice per week and incrementally increasing to exercise bouts of 45–60 mins 3–5 times per week [223]. Progressive muscle training was also individually tailored in terms of intensity and titration, but standardized its approach by using exercises for eight main muscle groups (e.g. knee extension and flexion, abdomen and back, rotation, upper back and arm muscles, and leg press for lower extremity muscles), as well as postural balance exercises.

Following two years of treatment, a modified ITT analysis revealed that the intervention group demonstrated improvements in physical activity, dietary composition, and reduced weight. Most importantly, there was a small but significant improvement in a composite marker of neurocognition, as indexed by a comprehensive neuropsychological test battery z-score. Closer examination revealed that the largest improvements were observed in processing speed and executive function, without consistent improvements in memory performance [213]. While statistically significant, it should be noted that the findings were modest as a standardized effect (ES = 0.13). In addition to the primary findings from FINGER, structural MRI markers were obtained at baseline, 1-year, and 2-years after randomization, providing an opportunity to examine intervention effects on established biomarkers of ADRD risk [224]. Surprisingly, no intervention effects were observed on changes in MRI markers. However, integration of MRI and cognitive data revealed that treatment-related improvements in processing speed were more pronounced among individuals with higher baseline cortical thickness and volumetric characteristics of mesial temporal structures with critical importance for AD risk, suggesting that the beneficial effects of treatment were strongest among individuals with less advanced neuropathological changes. These findings may suggest that lifestyle modification improves neurocognition, but must be initiated earlier in the disease process to yield appreciable benefits.

Notably, mechanistic data from FINGER demonstrated similar findings to some other trials below, with cardiorespiratory fitness associating most strongly with observed neurocognitive improvements in processing speed and executive function, but not memory [225]. Improved dietary patterns also predicted improvements in executive function, [226] whereas baseline dietary patterns (not change) were associated with global cognitive improvements over the course of the intervention. Perhaps most notably, changes in neurocognition were not associated with APOE genotype, [126] and greater CVD risk, although associated with volumetric characteristics of ADRD risk, was not associated with beta-amyloid deposition [227].

The lack of a primary finding ignores several interesting nuances within the Pre-DIVA findings, however. First, although the overwhelming number of dementia cases were attributed to AD, intervention effects were much stronger among the limited subset of participants experiencing non-AD causes of dementia (HR = 0.37 [0.18, 0.76]), including VaD (HR = 0.43 [0.17, 1.12]). In addition, the authors conducted several sensitivity analyses in order to determine whether intervention may have conferred benefits among participants who 1) followed the intervention more closely and 2) had a less severe history of CVD or CVD risk at baseline. These analyses, although

The treatment group demonstrated substantial weight loss, much of which was maintained at the time of first neurocognitive assessment [242]. As reported in detail elsewhere, [242] the ILI group experienced an approximate 11% weight loss at year one and maintained approximately 7% weight loss through the 8-year follow-up. In contrast, the DSE group experienced a mean weight loss of around 1% at one year and 3% at the 8-year follow-up. Neurocognition was assessed using a composite score across multiple domains, including global function, verbal fluency, verbal memory, attention, executive function, and processing speed. Examination of treatment group differences, however, revealed that performance was not consistently improved between conditions, without any significant treatment group impact. There was some evidence that the intervention differentially affected overweight compared with obese participants. Among those who were overweight but not obese at enrollment (i.e., 25 kg/m² ≤ BMI < 30 kg/m²), ILI was associated with a mean benefit for composite cognitive function of 0.276 (0.033, 0.520), compared with a deficit of 0.086 (–0.021, 0.194) among those who were obese (nominal

Results of the ILI on neuroimaging markers revealed a different pattern of findings. Among a subset of participants who underwent structural brain imaging or cerebral perfusion scans, ILI generally demonstrated improvements compared to DSE [221, 244]. For example, ILI participants demonstrated a 28% lower white matter hyperintensity volume compared to DSE participants, as well as a 9% lower ventricle volume [221]. Similarly, cerebral blood flow increased among the ILI participants relative to DSE [244]. Most interestingly, these improvements did not associate with changes in neurocognition, and changes in CBF actually demonstrated a divergence in their association with neurocognition between treatment groups, with improved CBF associating positively with neurocognition among ILI participants, but not in DSE participants.

Putative Mechanisms: Opportunities for Optimization

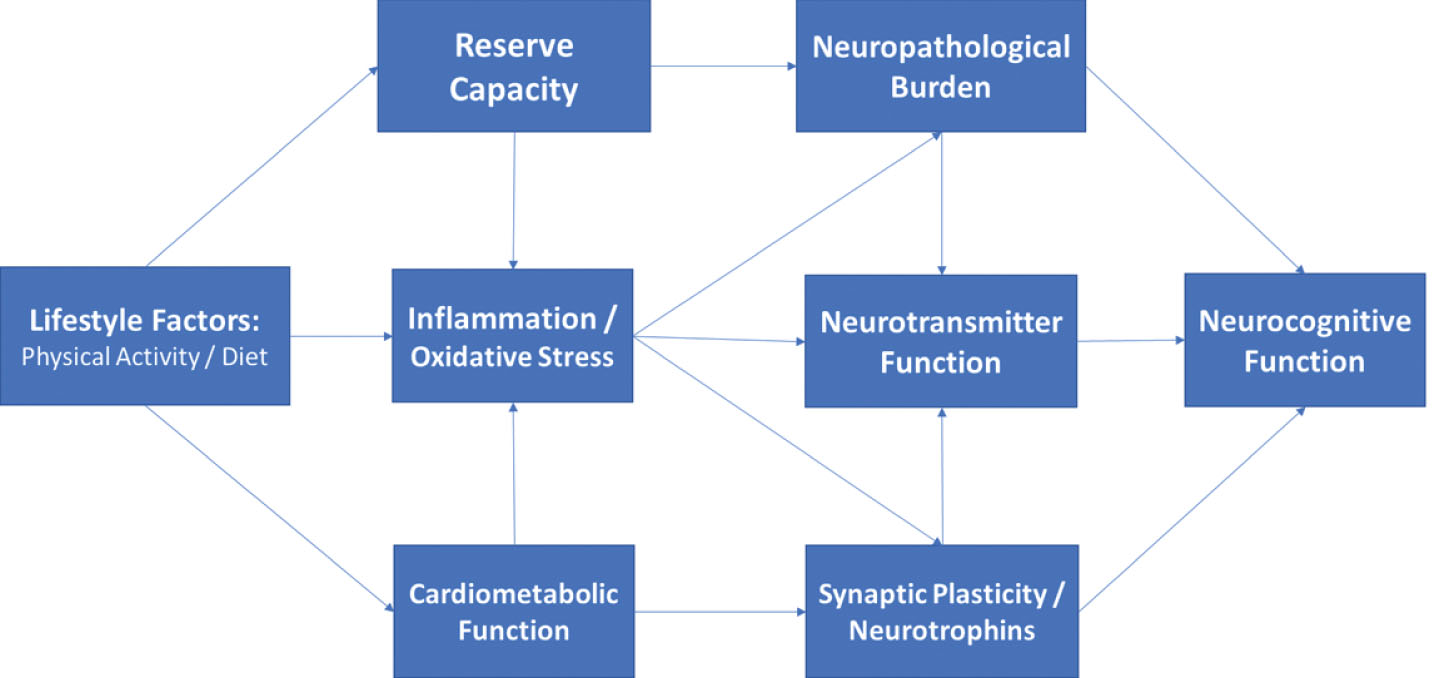

Mechanistic pathways linking physical activity, dietary consumption, and neurocognitive outcomes have been postulated across multiple levels within the peripheral and central nervous system. Despite their heterogeneity, increasing evidence suggests that disparate risk factors may have overlapping effects through ‘convergent pathways’ of risk, including cardiovascular, metabolic, and inflammatory systems. Conceptually, risk factors for neurocognitive impairment can be thought of as exerting their influences on the brain by affecting structural neuropathological features within the brain (e.g. cortical atrophy), compensatory factors buffering the CNS from age-associated injury (e.g. cerebrovascular reserve), and more direct effects on neurochemical messenger systems within the CNS (e.g. monoamines) (Fig. 2). Individual mechanisms are reviewed briefly below, with a focus on identifying convergent pathways of risk.

Directed acyclic graph linking lifestyle to neurocognition among middle-aged adults through indirect, downstream pathways including metabolic function, inflammation, and neurotrophic factors. As shown, these factors likely impact neurocognitive function through their downstream impact on brain structure and reserve capacity, with additional, indirect influences through modulation of neurotransmitter systems (e.g. monoamines).

Neurotrophins / Growth Factors

Growth factors are groups of proteins or neurohormones with effects that serve to facilitate neuronal and cerebrovascular growth, including nerve fibers, dendritic density, and arterioles [246, 247]. The primary growth factors most consistently linked to lifestyle changes are BDNF, [248–250] VEGF, [251, 252] and IGF-1, [251, 253–255] all of which are enhanced by lifestyle modification but act through distinct mechanistic channels to improve neurocognition. In numerous animal and human samples, BDNF has been shown to increase following aerobic exercise training, [256–258] increased BDNF has been shown to associate with neurogenesis in the dentate gyrus and subventricular zone, [259–261] and higher BDNF levels have been shown to associate with small but consistent structural increases in hippocampal volume [260]. In multiple animal studies and an increasing number of human trials, these increases in BDNF associate with improved neurocognition, particularly on tests of learning and memory [260]. In addition to these intermediate training effects, occurring over weeks and months, acute exercise has also been shown to confer transient increases in BDNF measured from peripheral sources for several hours [43, 262]. This may explain the dual effects of BDNF as having both an acute impact, such as observed among children with ADHD, and a chronic training impact in adults, both of which appear strongest on frontal-subcortical tasks.

VEGF has also been associated with lifestyle modification and is most closely tied to vascular and inflammatory functioning [263]. Endothelial cell functioning is one of the earliest, subclinical markers of vascular dysfunction, observable even in samples of obese children [263–265]. VEGF has been shown to play a critical role in angiogenesis within the brain, potentially offsetting normative damage to the small arteries with little redundancy in dorsal aspects of the prefrontal cortex. Moreover, as a marker of endothelial cell function, VEGF holds importance as a systemic, preclinical marker of vascular integrity [266]. In our own data and others, peripheral markers of endothelial function appear to mediate the associations between cerebrovascular risk factors and neurocognitive deficits in middle-aged, preclinical samples [267, 268]. In addition, endothelial cell functioning serves an important role in maintaining blood-brain barrier permeability, [265, 269] which is critical for protecting the CNS from infection.

Insulin-like growth factor (IGF-1) is a neurohormone with both direct and indirect influences on neurocognitive functions, primarily induced through exercise training [270, 271]. IGF-1 appears to have important regulatory effects on both BDNF and VEGF, and may therefore have a critical moderating influence on other growth factor influences within the CNS [251]. IGF-1 also appears to have independent effects on neuronal signaling, neuroprotection, and modulation of neuroinflammation [272]. More generally, IGF-1 has been hypothesized to play a mechanistic role linking peripheral impairments in metabolic function to CNS metabolic perturbations [273, 274]. IGF-1 has also been associated with neurocognition, [275] is responsive to exercise training, [276] and has been suggested to mediate the beneficial effects of exercise on brain outcomes [251].

Neuroinflammation / Oxidative Stress

Neuroinflammation is increasingly recognized as having adverse effects on the brain [277, 278]. Greater levels of circulating neuroinflammatory markers are frequently shown among individuals with ADRD compared to controls, [272, 279, 280] associate with worsening neurocognition in prospective studies, [281, 282] and are upregulated among middle-aged adults with CVD risk factors and obesity [283, 284]. Neuroinflammation likely acts through several indirect pathways to worsen brain outcomes. First, the presence of elevated inflammation appears to exacerbate transient brain insults due to ischemia or hypoxia, [285] causing a prolonged recovery with potentially lingering adverse effects. Second, several lines of evidence suggest that neuropathological changes in the brain, such as beta-amyloid deposition, may independently increase inflammation levels, suggesting that they may reflect a proximal marker of neuropathological burden [279, 286]. And third, higher levels of inflammation appear to impair neurotransmitter functions, particularly in reward system brain regions critical for executive function. Taken together, elevated neuroinflammation appears to have an amplifying effect, interacting with other mechanistic channels to set off a feedback loop.

A closely related mechanism by which dietary modification may improve brain function is oxidative stress [45, 287]. Free radicals and other markers of oxidation increase with aging and are widely associated with brain health and neurocognition, with some investigators postulating that oxidative damage precedes broader neuroinflammatory changes [288]. Multiple nutritional components have been hypothesized to have beneficial effects on the brain by counteracting oxidative damage, particularly antioxidants such as vitamins A, C, and E [144, 289].

Metabolic dysfunction

An extensive literature base demonstrates that greater metabolic dysfunction is associated with increased ADRD risk [290, 291]. Recent consensus statements by the NIA, NINDS, and others have all advocated an increased focus on ADRD prevention, primarily focusing on three overarching risk factors: physical inactivity, ‘Western’ dietary patterns (e.g. high intake of saturated fat and complex carbohydrates, and low intake of fruits and vegetables), and poorly controlled CVD, [109, 292, 293] all of which strongly influence metabolic function. The presence of common CVD risk factors, particularly HTN and obesity, has a robust and adverse impact on neurocognitive outcomes. Obesity is one of the most common risk factors for ADRD, affecting nearly half of adults in the United States, [294, 295] and is associated with neurocognitive impairments, cortical atrophy, [76, 296, 297] and a 2–3 fold increase in long-term ADRD risk [76, 294, 298]. Midlife obesity, for example, has been associated with substantially elevated risk of impaired neurocognitive function and ADRD in later life [76, 299–302] and appears to accelerate the aging process, independent of other CVD risk factors [76]. Similar associations have been observed for HTN, with prospective studies demonstrating that the presence of HTN in midlife is associated with more than double the risk of neurocognitive decline [303–305] and dementia, [305–309] and may have particularly deleterious effects in the presence of obesity [310, 311]. As reviewed in detail elsewhere, impaired peripheral metabolic function likely impairs central metabolic function over time through insulin and leptin resistance, [50, 312] resulting in blunted CNS metabolic responses, energy exchange, and lipid metabolism.

Improving metabolic function has been hypothesized to mediate neurocognitive improvements following lifestyle modification [34, 88]. Although exercise and dietary modification both improve metabolic function, improvements in metabolic function are most closely associated with intentional weight loss, which may explain why extant studies have demonstrated improved neurocognition following variable intervention modalities, including caloric restriction, [178] resistance training, [131] and aerobic paradigms [313]. This could also potentially explain why there appear to be additive effects of both aerobic training and dietary modification on neurocognitive outcomes [145, 236, 314]. Intentional weight loss among obese, middle-aged adults is increasingly recognized as a potential method to reduce ADRD risk, regardless of whether it is achieved through routine physical activity, healthier dietary patterns, or caloric restriction. For example, meta-analytic studies of middle-aged adults have demonstrated a dose-response relationship between greater weight loss and improved neurocognition, with the largest improvements observed in trials using exercise and diet to reduce weight [315]. It has been hypothesized that midlife weight loss may improve neurocognition by augmenting ‘cross talk’ between peripheral and central metabolic function, in which improved peripheral metabolism increases the efficiency and recruitment of central glucose resources and transport across the blood-brain barrier [316]. Obesity is known to impair both glucose and lipid homeostasis, causing central glucotoxicity and impaired CNS insulin signaling, and weight loss may ‘recalibrate’ central homeostatic function by altering CNS metabolic mechanisms [47, 317]. Available evidence therefore indicates that pluripotent interventions “with combinatorial neuroprotective and ‘eumetabolic’ activities” confer the largest neurocognitive benefits [318].

Neurogenesis / Synaptogenesis

Neurogenesis, the growth of new neurons and other neural tissue, represents one potential central pathway through which lifestyle modification improves brain function and potentially offsets normative, age-dependent brain volume loss. Synaptogenesis, the growth of new synaptic connections between neurons, is closely related to the creation of neuronal tissue [319], as immature granule cells are particularly plastic due to their sensitivity to long-term potentiation [67]. Synaptic plasticity is also a plausible downstream mechanism linking neurotrophic and metabolic factors to neurocognition, as the creation of new synaptic connections appears to be dependent both on growth factor expression and adequacy of central metabolic resources [320].

Although neurogenesis and synaptic plasticity are widely acknowledged to be improved through aerobic exercise, [32, 321] aerobic modification in and of itself appears to be a necessary but not sufficient component of neuronal growth. Although new stem cells are created, they take several weeks to integrate into the surrounding neural system and many new stem cells die off before becoming mature. The successful growth of new neurons, much like the growth of plant life in a garden, is ultimately dependent on several critical elements necessary for growth within the brain: energy, nutrients, and a conducive environment. In a simplistic model within the human brain, similar elements appear to be necessary to support neurogenesis: metabolic function, [322–324] neurotrophins, [325] and low levels of neuroinflammation [326, 327].

Several other neuropathological features of adult neurogenesis may explain their importance to the lifestyle and neurocognitive decline association. It is notable that the two primary areas of adult neurogenesis in mammals are in close proximity and have interconnections to neurocircuitry critical for learning/memory and executive functions: the dentate gyrus (proximal to the hippocampal gyrus) [259, 328] and the subventricular zone (proximal to the caudate nucleus) [329–333]. As noted earlier, these are also the two primary brain circuits implicated in AD and vascular cognitive impairment, respectively. The earliest neuropathological changes associated with AD occur in the peri-hippocampal gyrus, whereas the earliest manifestations of subclinical microvascular disease are most commonly observed in periventricular brain regions because of their vulnerability to ischemia. These data could be interpreted as suggesting that the areas most vulnerable to common neuropathological changes are also most amenable to buffering and repair through behavioral modification. In addition to their impact on neurogenesis, impaired metabolic function and elevated inflammation / oxidative stress have been shown to have direct, adverse effects on neurotransmitter systems, which are worsened in the presence of both factors [334]. Higher levels of inflammation appear to disrupt connectivity between the ventral and dorsal striatum and the ventromedial prefrontal cortex (vmPFC) [335, 336]. Both the ventral striatum and vmPFC have significant mesocorticolimbic dopamine innervations [335, 336].

Taken together, there remains a lack of consensus as to whether the effects of physical activity and dietary modification on ADRD risk overlap, are additive, or are synergistic [142, 143, 214, 337–340]. For example, treatment implications would differ if the effects of physical activity and diet were found to act through aerobic fitness and inflammation, respectively, instead of both contributing to improved metabolic function. In contrast, finding synergistic effects might suggest that multicomponent interventions with both exercise and dietary components may be necessary to achieve ADRD risk reduction [129, 341–344]. Nevertheless, the present literature base suggests several important themes that may help to further optimize lifestyle approaches to ADRD prevention. First, lifestyle appears to impact the brain through pluripotent mechanisms, which vary in their importance across chronic and acute intervention settings. Second, many peripheral and indirect pathways connecting lifestyle to the brain have convergent, downstream mechanistic pathways, including central metabolic function, growth factor modulation, neuroinflammation, and neurotransmitter functions. And finally, the effects of lifestyle seem strongest when implemented early, with the greatest potential to prevent ADRD appearing to lie in middle age. Evidence therefore increasingly suggests a ‘critical window’ for intervention among middle-aged individuals with ADRD risk factors, identified before compensatory cerebrovascular remodeling, systemic metabolic dysfunction, [345, 346] or elevated neuroinflammation set in motion a cascade of changes that may require more than lifestyle change to treat [347–350].

Mechanistic assumptions and potential solutions

Despite the overwhelming amount of evidence linking variations in lifestyle to differential brain outcomes from both animal and human literatures, there remains a lack of consensus as to whether lifestyle modification represents a viable treatment approach to mitigate ADRD risk among middle-aged and older adults. One could argue from the present review that exercising regularly and eating a healthier diet can clearly improve brain and neurocognitive function among some individuals. The most pressing questions are therefore less concerned with whether lifestyle modification can improve brain function than with how and for whom. These latter questions can be informed by contemporary analytical and methodological advancements designed to address these specific issues: causal inference analytical paradigms [353, 354] and optimization trial designs [355, 356]. These techniques can readily be applied to several potentially implicit assumptions regarding the causal nature and underlying mechanisms linking exercise, diet, and the brain. Testing these assumptions may provide critical insights to advance ADRD preventive efforts.

Assumption 1: Homogeneous Mechanisms of Cause and Cure

Put simply, the causes and cures of neurocognitive impairment may not be the same, suggesting that different treatment approaches may be needed beyond those specifically targeting the causes of initial neurocognitive change. This may be one reason why simply reducing CVD risk factors does not consistently improve neurocognition: the presence of CVD risk causes a cascade of CNS damage that is only partially reversible once the risk factors are mitigated. The distinction in mechanisms can also be seen by a brief examination of their time-courses, with both acute and chronic effects observed [357]. For example, while the adverse effects of hypertension (HTN) on brain function are widely accepted, [358] the downstream mechanisms by which HTN impairs brain function exert their influence over the course of decades, [53, 359] whereas lifestyle HTN trials demonstrate benefits over the course of several months [145, 236]. Moreover, reductions in white matter hyperintensities may take years to accrue [221] and do not necessarily associate with neurocognitive improvements from available trial data [242].

Assumption 2: Mechanisms are Monolithic

As easily seen in the available animal literature, many of the proposed mechanisms linking lifestyle modification to neurocognition interact with other downstream mechanistic pathways. For example, metabolic function may interact with inflammation to increase ADRD risk [360]. While multiple mediators are widely acknowledged in animal literature, analyses of treatment mechanisms in available lifestyle trials are strikingly simplistic. Contemporary analytical frameworks now incorporate approaches for examining multiple mechanisms and even interactions between mechanisms, which could plausibly be hypothesized to impact brain function [361, 362]. For example, the association between increased BDNF and improvements in learning might be moderated by inflammation levels, increasing learning for those with low inflammation but failing to do so among individuals with high levels. Similarly, it is possible that neurocognitive improvements may be mediated by parallel mechanistic processes, by interacting mechanistic processes, or by different mediators for different individuals. Because lifestyle modification is pluripotent, all of these scenarios are plausible and testable within contemporary analytical paradigms [363, 364]. Moreover, examination of multiple mechanisms could potentially explain the lack of a main effect in some cases, if one mechanistic process has opposing effects through a separate mechanistic pathway, [363] or if the association between the putative mediator and the outcome is confounded by an unmeasured factor. In the HTN example above, the effects of BP reduction on neurocognition could plausibly be confounded by impaired cerebral autoregulation or cerebrovascular reserve capacity, which are blunted by chronic HTN and are not conventionally accounted for or even assessed.
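The point about opposing pathways masking a main effect can be illustrated with a small simulation. The sketch below is not drawn from any trial discussed here: the two mediators (`m1`, `m2`), the path coefficients, and the sample size are hypothetical values chosen only for illustration, and the product-of-coefficients approach shown is one simple way to decompose effects under linear models with no unmeasured confounding.

```python
import numpy as np


def ols(X, y):
    """Least-squares coefficients for y ~ intercept + X (intercept first)."""
    design = np.column_stack([np.ones(len(y)), X])
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    return coef


def parallel_mediation(n=20000, seed=0):
    """Simulate two parallel mediators with opposing effects on the outcome.

    All effect sizes are hypothetical and chosen for illustration only.
    """
    rng = np.random.default_rng(seed)
    treat = rng.integers(0, 2, n).astype(float)  # randomized arm (0 or 1)

    # Treatment shifts both hypothetical mediators upward by the same amount.
    m1 = 0.5 * treat + rng.normal(size=n)
    m2 = 0.5 * treat + rng.normal(size=n)

    # m1 benefits the outcome, m2 harms it equally; no direct treatment effect.
    y = 0.4 * m1 - 0.4 * m2 + rng.normal(size=n)

    a1 = ols(treat, m1)[1]  # treatment -> mediator 1
    a2 = ols(treat, m2)[1]  # treatment -> mediator 2
    _, direct, b1, b2 = ols(np.column_stack([treat, m1, m2]), y)
    total = ols(treat, y)[1]  # unadjusted treatment effect on the outcome

    return {
        "indirect_m1": a1 * b1,
        "indirect_m2": a2 * b2,
        "direct": direct,
        "total": total,
    }


if __name__ == "__main__":
    for name, value in parallel_mediation().items():
        print(f"{name}: {value:.3f}")
```

In this simulated setting the two indirect effects are each clearly non-zero but of opposite sign, so the total treatment effect is close to zero. An analysis that tested only the main effect, or only one mediator, would miss that both pathways were active.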

Although the notion that mechanisms may interact may seem unnecessarily complex, it is consistent with several aspects of the available literature on exercise and neurocognition, which has reproducibly demonstrated that aerobic exercise training improves neurocognition, [117, 118, 365] but has failed to find any association between improved fitness and neurocognitive improvements [133]. Although the magnitude of treatment effect has varied, most meta-analyses of exercise interventions and cognitive function have reported improvements across multiple domains of function [129, 366, 367]. Surprisingly, the associations between putative mechanistic features within these trials and cognitive improvements have been far less consistent, with the majority of evidence from systematic reviews of both older [136] and more contemporary trial data [133] failing to find a dose-response association between improved aerobic fitness and improved cognitive outcomes. Observational data can be interpreted as showing a similar, non-linear effect, such that modest to moderate levels of activity are most critical for improving cognitive outcomes, with diminishing returns beyond this threshold [112, 135]. This could suggest that improvements in neurocognition may parallel improvements in metabolic function, which have been shown to have a threshold effect in response to various training paradigms and may not mirror aerobic improvements [368, 369].

Assumption 3: Uniformity of the Mediator-Outcome Association

Another critically important and often overlooked assumption is that putative mediators of treatment act uniformly across participants. In other words, if one hypothesizes that improved fitness will improve neurocognition, they may also implicitly assume that this association is similar enough across participants to use linear regression-based approaches. Unfortunately, contemporary causal inference approaches have demonstrated this is often not the case for several reasons: 1) the mediating variable is not normally distributed, 2) the mediator interacts with the treatment variable to differentially modify outcomes (treatment-exposure interaction), or 3) the association underlying changes in the mediator and changes in outcome is confounded by a related but unmeasured confounder [354, 370]. One has to look no further than the LOOK-AHEAD trial to find evidence for a treatment-exposure interaction, with the effects of weight loss on neurocognition varying across participants [242], as well as the association between improved CBF and neurocognition showing differential associations across participants [244]. These findings suggest that unmeasured factors likely impacted the mechanistic function of weight loss, perhaps with more metabolically compromised participants experiencing destabilized central metabolism in the presence of weight reduction.
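A treatment-exposure interaction of the kind described above can be sketched with simulated data in which the mediator-outcome slope differs by treatment arm. Everything in this sketch is an assumption made for illustration: the arm labels, the slopes (0.5 and 0.1), the sample size, and the noise level are hypothetical and do not correspond to LOOK-AHEAD or any other trial.

```python
import random
from statistics import fmean


def ols_slope(x, y):
    """Ordinary least-squares slope of y regressed on x."""
    mean_x, mean_y = fmean(x), fmean(y)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    return sxy / sxx


def simulate_arm(n, slope, rng, noise_sd=0.1):
    """Simulate mediator change and outcome change for one trial arm."""
    mediator = [rng.gauss(0.0, 1.0) for _ in range(n)]
    outcome = [slope * m + rng.gauss(0.0, noise_sd) for m in mediator]
    return mediator, outcome


rng = random.Random(0)  # fixed seed so the sketch is reproducible
true_slopes = {"control": 0.5, "intervention": 0.1}  # hypothetical values

arm_data = {arm: simulate_arm(500, slope, rng) for arm, slope in true_slopes.items()}
arm_slopes = {arm: ols_slope(m, y) for arm, (m, y) in arm_data.items()}

# Pooling both arms assumes a single, uniform mediator-outcome association.
pooled_m = [m for mediator, _ in arm_data.values() for m in mediator]
pooled_y = [y for _, outcome in arm_data.values() for y in outcome]
pooled_slope = ols_slope(pooled_m, pooled_y)

for arm, slope in arm_slopes.items():
    print(f"{arm}: mediator-outcome slope = {slope:.2f}")
print(f"pooled: mediator-outcome slope = {pooled_slope:.2f}")
```

The pooled slope falls between the two arm-specific slopes and describes neither arm accurately, which is the practical consequence of assuming uniformity when the mediator interacts with treatment.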

It is likely that treatment-exposure interactions explain some of the disparate findings across trials, as well as the potential presence of a ‘tipping point’ beyond which lifestyle modification does not appear to improve neurocognitive outcomes. Although the precise causes of these large individual differences are unclear, they appear to vary based on two premorbid characteristics that differ widely across patient populations: degree of metabolic impairment and neuropathological burden. Meta-analyses of lifestyle intervention trials have reported age-dependent effects on neurocognition, with larger benefits among middle-aged and older adults free from cognitive impairment, [36, 117, 128, 129, 371] suggesting a metabolic ‘tipping point’ for intervention efficacy [365, 366, 372]. In addition, trials enriched for metabolic disturbances appear to have a greater degree of individual treatment variation, with more metabolically compromised individuals exhibiting lesser treatment improvements, or in some cases showing an adverse impact. Paradoxically, later behavioral intervention among individuals with systemic vascular dysfunction may actually precipitate cognitive decline, [215, 220, 306, 373, 374] despite the beneficial effects of CMRF management at early disease stages [10, 375–377]. This could explain why recent multicomponent trials among participants with impaired metabolic function, including the Diabetes Prevention Program (DPP) [378] and LOOK-AHEAD trials, found that behavioral weight loss does not necessarily improve neurocognition [215–219] and may even have deleterious effects in more metabolically compromised individuals, [220] despite improvements in some neuroimaging biomarkers [221, 222].

Similar individual differences have been noted for CVD risk reduction, particularly for hypertension. The presence of CVD risk factors in midlife is one of the most widely documented and robust risk factors for future development of ADRD [379, 380]. Interestingly, the association between CVRFs and ADRD appears age-dependent, with midlife CVRFs drastically increasing risk, whereas the presence of CVD risk factors in older adults has equivocal or even protective associations with ADRD development [85, 381, 382]. It is possible that these disparate associations result from unmeasured individual differences in metabolic or brain reserve capacities, with chronic exposure to CVD risk compromising the ability of the scaffolding / reserve system to withstand additional age-related insults. Because chronic engagement in healthy lifestyle habits may reduce ADRD through its parallel effects on cognitive and metabolic reserve capacity, [89, 96, 383, 384] collection of more detailed historical information on midlife lifestyle habits may be informative, even among trials in older adults, because of its potential function as an unmeasured moderator of the treatment-exposure association.

Individual differences in reserve capacities may also explain large variations in treatment-related cognitive outcomes from randomized trials of exercise [19, 36, 116, 128, 365, 366], similar to those observed following intentional weight loss [186, 215, 217, 220, 315, 385].

Assumption 4: All Neuropathological Changes are Created Equally

Although a comprehensive discussion of functional neuroanatomy is beyond the scope of the present review, it is worth noting that the maintenance of some higher order neurocognitive functions is preferentially dependent on neurocircuitry in the frontal lobe and FSCs [386, 387]. While these areas generally undergo uniform, age-associated degradation through middle-age, occult microvascular damage and cortical atrophy are endemic among older adults, with substantial variation [388–394]. It is widely documented that strategic infarcts to the caudate nucleus, basal ganglia, and other critical brain circuits can cause precipitous cognitive impairment leading to dementia in a normally functioning adult under some circumstances [376]. Although rare in their pure form, [395] the conceptual relevance of disconnectivity between functionally critical brain circuits and larger regions is well-known and increasingly implicated as important for ADRD risk [396, 397]. In contrast to the known functional neuroanatomical correlates of intact neurocognition, most lifestyle RCTs examining mechanistic data have relied on global markers of brain health (e.g. cortical atrophy, microvascular burden), which may obscure wide variation among older participants. This is particularly important for examinations of lifestyle and neurocognitive dysfunction, which may only become manifest after substantial neuropathological burden has accrued.

The potential importance of a circuit-based appreciation for neurocognitive change is particularly relevant within the aerobic exercise literature, for which treatment improvements are preferentially observed on tests of frontal-subcortical function and more broadly in the ‘executive control’ (ECN) and ‘salience’ networks (SN), as well as their reciprocal associations with the ‘default mode’ network (DMN), all of which work in concert to facilitate complex neurocognitive functions. Within these networks, fronto-subcortical brain regions (e.g. anterior cingulate cortex, ventromedial prefrontal cortex, insular cortex) [398] interact reciprocally with critical ‘hub’ regions in the posterior cingulate cortex involved in the DMN [399]. DMN activity is traditionally anti-correlated with task-based networks, such as the SN [400, 401]. Thus, coordination of the DMN and SN is critical to attentional / executive control and reduction of internal distractors [402, 403]. A circuit- or network-based interpretation of aerobic exercise effects would synthesize mechanistic studies demonstrating that physical activity improves functioning within FSCs across disparate patient populations, including older adults, [117, 404] vCIND, [73, 236] Parkinson’s Disease, [405, 406] bipolar disorder, [407] and ADHD [75].

Areas within the SN and ECN are also critical for executive functions because they have a greater density of ‘reward system’ neurotransmitter receptor sites, particularly for monoamines such as noradrenaline and dopamine. Noradrenergic function, in particular, is increasingly recognized as having an important role in the development of cognitive impairment among older adults and is critical for the maintenance of frontal-lobe functions [408, 409]. Moreover, these findings have recently been extended to healthy aging samples, with multiple investigators proposing that NE activation facilitated by the locus coeruleus may represent the best central nervous system biomarker of cognitive reserve (i.e. LC-reserve hypothesis) [410, 411]. Although no adult trials, to our knowledge, have examined the potentially moderating role of catecholamines, this association has been demonstrated in adolescent samples [412].

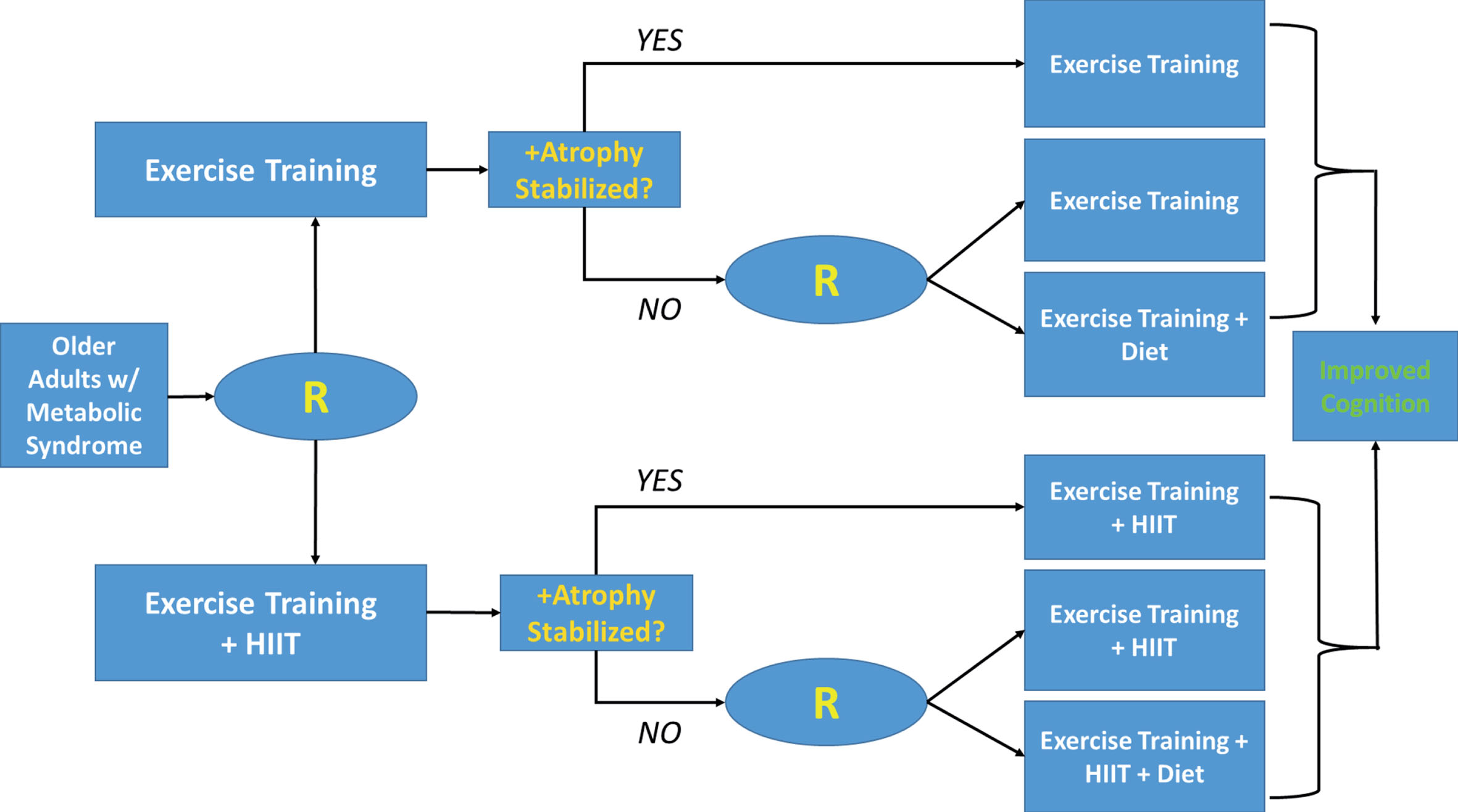

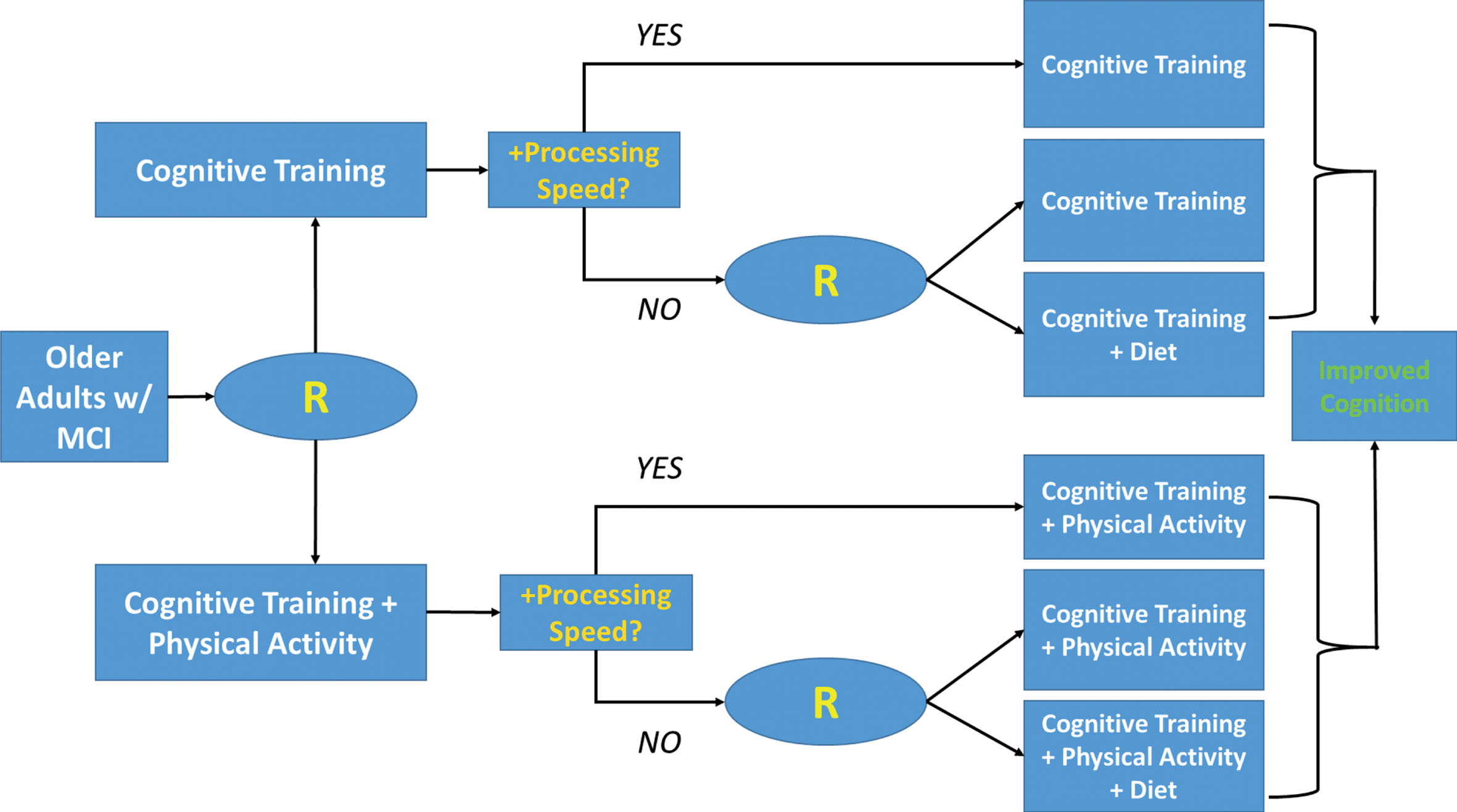

Assumption 5: Large, Randomized Trials are Necessary to Delineate Specific Treatment Mechanisms

Examination of the available literature demonstrates that most of the available trial data on neurocognitive outcomes comes from large, exceedingly costly trials in which multicomponent treatment packages were typically ‘bundled’ into one group. For example, the FINGER trial utilized four different intervention components: diet, exercise, CVD risk management, and cognitive training [214]. Although RCTs will ultimately carry the greatest scientific significance to change clinical practice, an emerging wave of multiphase optimization strategy (MOST) trials has provided critical insight into some recalcitrant public health problems [355]. Using an elaborated factorial design, particularly sequential multiple assignment randomized trials (SMART), these approaches allow investigators to systematically test treatment sequencing, optimize intervention components, and disentangle treatment-exposure interactions using an efficient design with small sample size requirements. SMART designs in particular are ideal for patient populations with large individual differences in treatment response, with a secondary randomization occurring based on initial treatment response. For example, a MOST-based design in which exercise, diet, and cognitive training were examined would be helpful in determining their independent and synergistic effects (Table 1).

Table 1. Example of a factorial design to determine optimal intervention components among exercise, diet, and cognitive training. Within the example below, main effect comparisons for factors would consist of 1) Exercise vs. No-Exercise (conditions 1, 2, 3, 4 vs. 5, 6, 7, 8); 2) Diet vs. No-Diet (conditions 1, 2, 5, 6 vs. 3, 4, 7, 8); and 3) Cognitive training vs. No-cognitive training (conditions 1, 3, 5, 7 vs. 2, 4, 6, 8). Interactions between intervention factors can also be examined.
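The condition numbering described above is consistent with a full 2 × 2 × 2 factorial layout, which can be enumerated with a short sketch. The factor names and the ordering of conditions are assumptions inferred from the main effect comparisons listed in the caption, and the code is illustrative rather than a reproduction of any trial's allocation scheme.

```python
from itertools import product

# Factor order is an assumption chosen to match the condition numbers in the caption.
FACTORS = ("exercise", "diet", "cognitive_training")


def build_conditions():
    """Enumerate all 2^3 conditions; True means the component is delivered."""
    levels = product((True, False), repeat=len(FACTORS))
    return {
        number: dict(zip(FACTORS, combination))
        for number, combination in enumerate(levels, start=1)
    }


def main_effect_contrast(conditions, factor):
    """Return (conditions receiving the factor, conditions not receiving it)."""
    on = [n for n, c in conditions.items() if c[factor]]
    off = [n for n, c in conditions.items() if not c[factor]]
    return on, off


conditions = build_conditions()
for factor in FACTORS:
    on, off = main_effect_contrast(conditions, factor)
    print(f"{factor}: conditions {on} vs. {off}")
```

Because every main effect comparison uses all eight conditions, each factor is evaluated with the full sample, which is the source of the efficiency attributed to factorial designs.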

MOST and SMART-based approaches could be adopted for brain-based mechanisms for which multiple treatment elements must be integrated in order to improve outcomes. SMART design approaches could be used to test, for example, whether training modalities that first target inflammation or metabolism before introducing other treatment elements may have the greatest benefits, if these treatment mechanisms truly do block other downstream brain changes. These kinds of approaches appear particularly relevant to test the effects of cognitive training in conjunction with lifestyle modification, [413] as cognitive engagement is increasingly recognized as a pillar of prevention [173, 414] and may have contributed to the observed success of the FINGER trial [213, 224, 415]. It is possible that discrepant findings between FINGER and other combined interventions, such as the MAX trial, [416] arose because elements of the dietary intervention served to ‘prime’ plasticity in brain areas needed for cognitive improvements by augmenting metabolic or inflammatory pathways. Such sequential approaches have been hypothesized to confer greater cognitive benefits among older adults in cognitive rehabilitation [413].
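The two-stage logic of a SMART design can be sketched as follows: participants are first randomized to an initial component, and those who do not respond are randomized a second time. The component labels, the second-stage options, and the responder rule are all hypothetical placeholders; an actual trial would define response using prespecified clinical criteria.

```python
import random

# Hypothetical treatment options, for illustration only.
FIRST_STAGE = ("exercise", "diet")
SECOND_STAGE_OPTIONS = ("intensify_first_stage", "add_cognitive_training")


def smart_assign(participant_ids, is_responder, seed=0):
    """Assign a two-stage treatment sequence to each participant.

    is_responder is a callable (participant_id, first_stage) -> bool that
    stands in for an interim assessment of initial treatment response.
    """
    rng = random.Random(seed)
    plan = {}
    for pid in participant_ids:
        first = rng.choice(FIRST_STAGE)
        if is_responder(pid, first):
            second = "continue_first_stage"  # responders are not re-randomized
        else:
            second = rng.choice(SECOND_STAGE_OPTIONS)  # secondary randomization
        plan[pid] = (first, second)
    return plan


# Placeholder response rule: even-numbered participants count as responders.
plan = smart_assign(range(1, 9), is_responder=lambda pid, first: pid % 2 == 0)
for pid, (first, second) in plan.items():
    print(f"participant {pid}: {first} -> {second}")
```

Comparing outcomes across the resulting sequences is what allows this type of design to address questions about treatment ordering, such as whether targeting one pathway first improves the response to later components.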

Future directions

As shown by the present review, the impact of lifestyle on the brain, as well as the field of ‘health neuroscience’ more broadly, [417] is a burgeoning area with a great diversity of avenues for future inquiry. This is particularly relevant as intervention strategies are increasingly personalized for improved treatment effect [418]. Several specific hypotheses could be tested as an extension of the associations reviewed above. First, because neuropathological changes in the brain occur over the course of decades and may vary widely between individuals, [55] efforts to assess and better understand the influence of individual differences in chronicity of disease burden and structural brain imaging markers on neurocognitive changes following lifestyle change appear indicated. Future interventions would also be informed by inferences from the NIA’s task force on ‘cognitive reserve’, [86, 94, 96] so that randomized trials can incorporate codified markers of reserve capacity to either examine as modifiers of treatment response or at least as an individual difference variable to control for in their examination of treatment changes [419]. Moreover, future interventions should consider inclusion criteria that partially account for reserve factors, such as the use of demographically-corrected normative data, which provide deviations in neurocognitive performance based on both age and premorbid education level (a conventional marker of cognitive reserve). Indeed, it is notable that trials accounting for individual differences in baseline neurocognitive function, disease burden (e.g. vascular risk), and cognitive reserve capacity (e.g. education level [145, 236] or indirectly through CAIDE [214]) have demonstrated the largest treatment-related improvement in neurocognition.

Second, routine collection, where possible, of markers of ‘downstream mechanistic pathways’ (e.g. metabolic function, inflammation, and brain structure) could help inform mechanistic pathways in future studies, even if other pathways (e.g. neurotrophins and neurotransmitter function) are unmeasured. Such data would allow emerging trials to align with contemporary causal inference analytic paradigms in which ‘blocking’ of various causal pathways could prove highly informative [353, 420]. Moreover, collection of detailed historical data on cumulative exposure to CVD risk factors could provide important information on individual differences in reserve capacity, in order to examine whether this functions as an unmeasured individual difference influencing the observed treatment-exposure interactions.