Abstract

Over the last fifty years, societies across the world have experienced multiple periods of energy insufficiency, the most recent being the 2022 global energy crisis. In addition, the electric power industry has seen a steady increase in electricity consumption since the second industrial revolution because of the widespread use of electrical appliances and devices. Newer devices are equipped with sensors and actuators and can collect large amounts of data that could help in power management. However, current energy management approaches mostly target a limited set of device types in specific domains and are difficult to transfer to other scenarios. They fall short in autonomy, flexibility, and genericity. To address these shortcomings, we present an automated energy management approach for connected environments based on generating power estimation models, formally describing energy-related knowledge, and using reinforcement learning (RL) techniques to carry out energy-efficient actions. The architecture of this approach is based on three main components: power estimation models, a knowledge base, and an intelligence module. Furthermore, we develop algorithms that exploit knowledge from both the power estimator and the ontology to generate the corresponding RL agent and environment. We also present different reward functions based on user preferences and power consumption. We illustrate our proposal in the smart home domain. An implementation of the approach is developed and two validation experiments are conducted, both in the context of smart homes: (a) a living room with a variety of devices and (b) a smart home with a heating system. The results show that our approach performs well, with a short convergence period, a high level of user preference satisfaction, and a significant decrease in energy consumption.

Keywords

Introduction

In the past few years, the number of connected devices, in particular Internet of Things (IoT) and smart home devices, has increased significantly, and this growth is expected to continue [37]. Cisco estimates that 500 billion devices will be connected to the internet by 2030 [12]. As a result, the energy consumption of these devices is growing and may challenge energy production capacity. For instance, in 2019, over 25 000 TWh of final energy consumption was electrical [20]. The increasing energy needed for these devices results in a high carbon footprint and therefore a negative environmental impact. Moreover, the impact of the COVID-19 pandemic and the Russo-Ukrainian war on energy has demonstrated the need for adaptive strategies for efficient and sustainable energy management. In recent years, more sustainable and renewable energy sources, such as wind, solar, and ocean energy, have been adopted. These sources lead to greener energy production; however, energy leakages and losses continue to occur on the demand side.

On the demand side, the industrial and residential sectors consume the majority of final electrical energy [20]. In the residential sector, conventional electrical systems and devices are not very eco-friendly. Some are highly energy-demanding (e.g., water heaters, fridges, and washing machines), while others are smaller devices that do not consume as much (e.g., internet modems, LED lamps, and mobile phones). However, their large number leads to high energy consumption for the environment as a whole. Therefore, optimizing a large number of small devices can have a big impact [53]. As estimated in [18], saving 0.25 W per device for 4 million users yields a worldwide saving of 1.0 MW (i.e., 1.0 MWh of energy per hour), and these numbers increase at larger scales.

The introduction of IoT and smart devices has led to the emergence of the smart home concept. Many IoT devices now offer built-in intelligence that enables them to communicate with each other and connect to the internet. These intelligent devices help automate tasks, reduce costs, and save energy. Many devices integrate sensors that produce data describing the state of a device or the behavior of a person. In addition, many of these devices are equipped with actuators, which allow them to perform tasks that directly affect the environment they belong to. Together, these elements could enable autonomous feedback control loops for power management, easing integration and mutual understanding between devices to achieve higher energy savings.

In smart homes, many heterogeneous devices are connected using different protocols and share all kinds of data. They interact with each other to accomplish specific tasks, and they also interact with humans, which adds an extra level of complexity to the system. Smart home environments are scalable, since the number of devices is limited, even though the devices can be quite heterogeneous (different types, interfaces, vendors, etc.). However, these environments are dynamic, as existing devices can change through software updates, hardware updates, location changes, etc. In this setting, the power consumption of each device needs to be estimated or measured in order to estimate the global energy consumption and implement appropriate power management strategies. Energy is widely recognized as a significant concern in the research community, given the exponential growth of connected devices and appliances. Numerous studies have addressed this issue, exploring greener energy sources, changes in the design phase, task scheduling, machine learning approaches, and recommendations that encourage users to adopt energy-efficient behaviors. However, the existing literature has certain limitations, such as being restricted to specific scenarios or environments, a limited set of devices, or a small number of metrics.

The goal of this study is to reduce energy consumption by collecting data, building knowledge, and executing optimal green actions using ontologies and reinforcement learning techniques while respecting user preferences. To this end, we propose a scalable, flexible, and automated energy management approach for smart homes that is designed to be easily deployed in any smart home. The approach is based on three main components: an automated power estimator, a formal knowledge representation of the environment, and a flexible reinforcement learning energy manager. In this paper, we focus on the knowledge representation and the RL energy manager. On the one hand, the knowledge representation component captures the relationships between metrics from different devices, power consumption, and the actions that can be applied. This model correlates the metrics with the potential actions that can be carried out by any new device introduced into the environment. The use of an ontology ensures that heterogeneous devices can be included and that the approach remains general-purpose. On the other hand, the RL energy manager has the central role of identifying the best energy-efficient action to take and its impact on the entire system while respecting user satisfaction. It is composed of an agent generator, which creates an RL agent based on the knowledge received from the knowledge component, and a decision-making model, which receives the metrics and the power consumption and chooses the best action to save energy.

The main contribution of this study is to ensure the autonomic management of energy consumption in cyber-physical environments. It also provides visibility into energy drains by estimating the power consumption of devices in an automated manner, lays out a way to formally describe energy-related knowledge, and proposes a generic RL agent generator that adapts to different environments.

The remainder of this paper is organized as follows: Section 2 reviews state-of-the-art approaches to energy management in smart environments. Section 3 presents the theoretical development, the architecture, and the different components of the approach. Section 4 presents the case studies, the experimental setup, and the implementation of each component of the system. Section 5 discusses the results and the validity of the approach. Section 6 enumerates the main limitations of our approach. Finally, Section 7 concludes and outlines future directions and perspectives.

Literature review

Energy consumption is a significant concern for the research community; therefore, several approaches have been proposed to reduce energy consumption in smart environments. The following section details previous studies that use different techniques to tackle the power management issue.

As seen in [2], user behavior has a direct impact on energy consumption; therefore, some approaches focus on user behavior and recommendations. These studies encourage users to take actions that reduce energy consumption and recommend such actions to them. They also raise users' awareness of their consumption by showing them visual information and sending them notifications. In [61], the authors focused on the individual level by studying a user's initial choice to use an energy-inefficient application. At the enterprise level, [8] displayed the current consumption and the amount of available renewable energy in a collective open workspace. At a larger scale, [15] designed a community-scale energy feedback system based on building power meters, which increased the visibility of a district's energy consumption. In addition, others used games to show users their energy consumption and motivate them to turn off unused appliances [27,34,40].

Some authors have proposed task scheduling, i.e., changing the execution time of a specific task. Scheduling is usually done to benefit from periods when energy production is greener [64], from more energy-efficient execution time slots [43], or from the absence of users [48,55]. Scheduling can also target the detection of unused devices in order to change their operating mode [5,17,29]. In [17], the authors proposed an approach that minimizes the energy consumed by home appliances in standby mode, where connected outlets cut off the current to a device consuming below a certain threshold. Likewise, [5] proposed energy monitoring and saving functions that automatically reduce standby power by cutting off electricity to unused devices. In [29], the authors proposed an approach that balances the energy efficiency and the reliability of a system under both shutdown and scale-down scheduling techniques. Scheduling has been implemented in different contexts such as data centers [1,42,45], Wireless Sensor Networks (WSN) [9,49], smart homes [2,28], and IoT [44].

Improving system design and using newer technologies can significantly reduce energy consumption. Therefore, some studies aim to raise a device's efficiency while reducing its power consumption by making changes in its design phase, either by adopting newer technologies or by modifying the architecture and design of the system. In [21], design decisions were made after evaluating and redesigning different mechanical principles, regulation algorithms, and the energy consumed in different communication scenarios. In [13], a model-based design methodology for residential micro-grids was proposed, together with a simulation presenting the structural and behavioral parts of a residential micro-grid system. In [54], an energy management framework for autonomous electric vehicles in the smart grid was proposed, in which energy savings were achieved at design time (architecture and communication protocol). In [41], contextual data and machine learning techniques were used in a cloth dyeing factory to identify and predict inefficiencies in order to change the manufacturing process.

Other studies focus on power demand and supply. Power demand changes regularly over time, and so does power supply, especially with renewable energy sources such as photovoltaic cells and wind turbines. Providers need to match their power production to demand to be as efficient as possible [50]. Methods include predicting the power needed at a specific time based on historical records [51], balancing available power supply and demand [56,63], and storing energy when it is available, as is the case for electric vehicles [47,65].

More advanced studies have proposed adaptive approaches based on contextual data. These approaches rely on the ability of a system to change its initial configuration by tuning some parameters, allowing it to adapt to changes that take place at run-time. Some are based on a finite number of cases predefined at design time or on multiple predefined operating modes. In [19], the authors presented an energy-efficient reconfiguration tool built on a Raspberry Pi in an intelligent transportation scenario. It identified energy-consuming concerns at design time, analyzed configurations and variants, created a file containing all the possible configurations and the consumption of each, selected an initial configuration, and reconfigured devices at run-time according to the context. The authors in [26] proposed multiple operating modes for each layer of the system to increase energy efficiency. In this approach, each layer of the system optimizes itself based on predefined modes (e.g., sleep, active, low-frequency, and high-frequency modes). The authors in [3] ensured energy optimization while satisfying user comfort by proposing a smart zoning multiple-mode feedback system in a smart building based on the usage, orientation, and occupancy of each room. Each zone can be in normal utilization, pre-cooling, or power-off mode. Other solutions propose autonomous systems that use machine learning techniques and knowledge bases.

Recently, a growing body of work has focused on using RL for energy management in smart homes and buildings [36,58]. In [30], a machine learning energy manager for hybrid electric vehicles (which have an internal combustion engine and an electric motor) was proposed. It adopted a nested reinforcement learning approach in which an inner loop chose between the electric and fuel engines to minimize fuel usage, while an outer loop modulated battery health degradation. Finally, another promising line of research combines ontologies and machine learning to achieve the context understanding that leads to better power management. Web-of-Objects (WoO) [24] defines a systematic description of smart distributed applications that enables the integration of any connected device. It considers some contextual data (e.g., weather and location); however, power consumption per device is not considered. In [46], the authors proposed an intelligent multi-agent system, called ThinkHome, responsible for executing control strategies that manage the building state. It introduces semantic context to artificial intelligence by using an ontology for knowledge representation. ThinkHome mainly considers user comfort, user behavior, contextual information (e.g., weather), building information (e.g., spaces and walls), and energy information (e.g., environmental impact and energy sources). The main drawback of this approach is its lack of heterogeneity: it focuses on Heating, Ventilation, and Air Conditioning (HVAC) systems without studying other types of devices. In [25], the authors modeled the energy management system as a Markov decision process, describing the states, actions, transitions, and rewards of the environment. Their environment consists of a smart energy building connected to an external grid, a distributed renewable energy source, an energy storage system, and a vehicle-to-grid station. In their study, actions were limited to {Buying, Charging, Discharging, Selling} energy, which makes it a high-level solution with no impact on individual devices. In [31], the authors proposed an RL algorithm that ensures user satisfaction, followed by energy savings and load shifting. It focused on houses equipped with renewable energy resources and on setting a dynamic indoor temperature setpoint. A multi-agent RL approach for home-based demand response was proposed in [57], in which each agent was responsible for one type of device (non-shiftable, power-shiftable, time-shiftable, or electric vehicle). In [32], the authors proposed a home energy optimization strategy based on deep Q-learning (DQN) and double deep Q-learning (DDQN) to schedule home appliance tasks. In [35], the authors proposed a next-hour demand response algorithm for home energy management systems that reduces electricity cost by shifting loads based on historical data. The authors of [60] proposed a deep RL energy management algorithm based on Deep Deterministic Policy Gradient (DDPG) to control an HVAC system. However, an important limitation of this existing research is that the environments are static, making it difficult to train agents in new environments.

Theoretical development

Framework architecture

The approach proposed in this study aims to provide an intelligent power management solution for connected environments based on three components: (1) an automated approach to empirically generate power estimation models (PEMs) for a large set of devices; (2) an extension of the SAREF ontology that serves as a knowledge-based contextual understanding component; and (3) an automated knowledge-based reinforcement learning environment and agent generator that produces a decision-making agent responsible for choosing the optimal actions to execute. It also includes a data repository component formed by a collection of databases containing previously collected metrics, states, applied actions, rewards, power consumption, power estimation models, and potential external data (e.g., outdoor temperature). The proposed architecture satisfies the following criteria: a high autonomy maturity level, flexibility, device heterogeneity, general purpose, and the ability to deal with a large variety of metrics. The architecture provides high flexibility in dynamic environments; it is therefore not domain-specific and can easily be implemented in another domain. Any changes or updates that occur in the environment are taken into account and lead to the recalibration of the estimation models and reinforcement learning agents. It also guarantees a high level of autonomy, where high-level, user-oriented energy policies control the infrastructure operations in an automated manner. Besides this flexibility, the approach can be implemented on any type of device thanks to the knowledge-base component and the automated power modeling technique, which guarantees a high heterogeneity of devices and metrics.

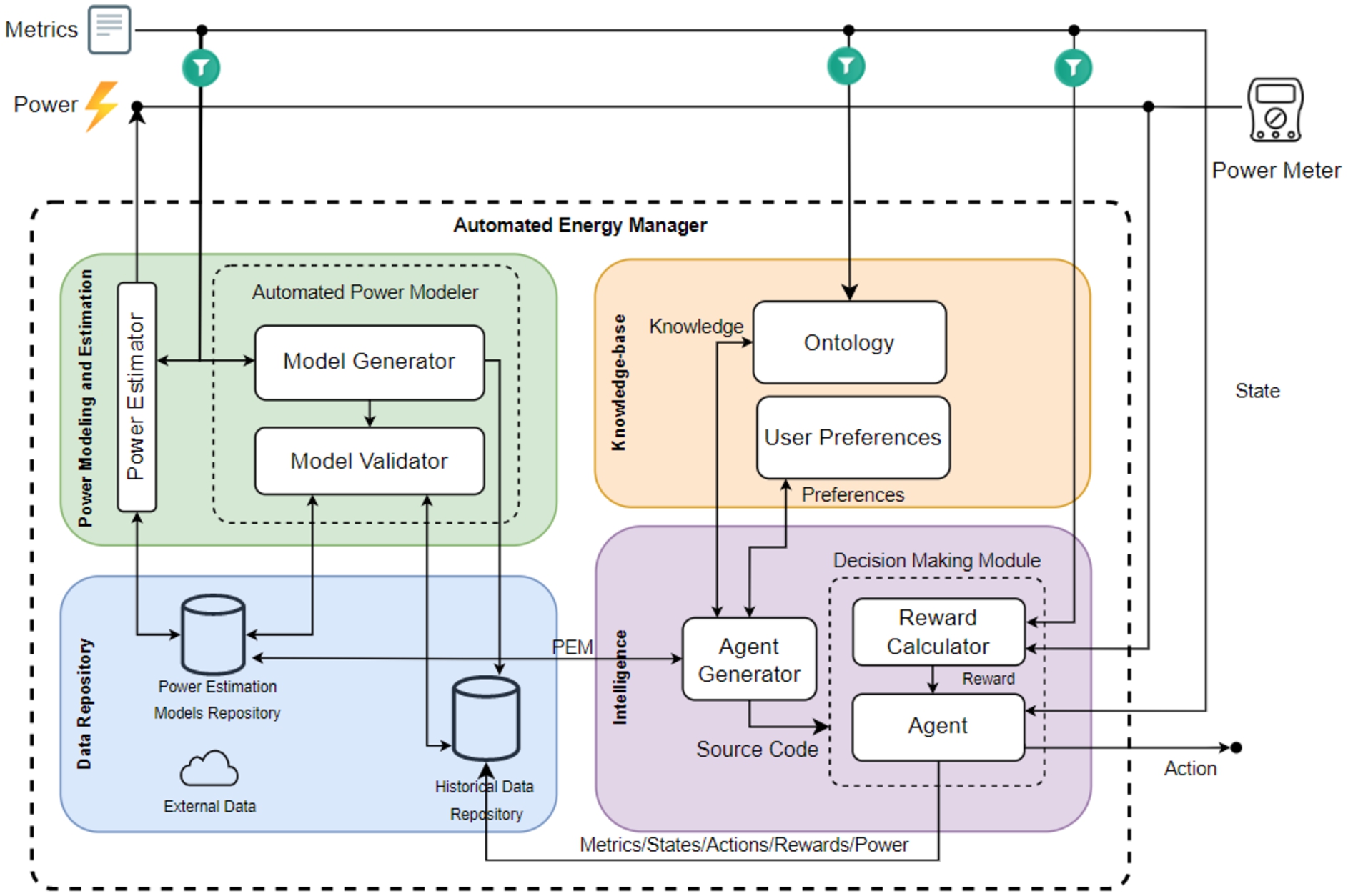

Fig. 1 shows the architecture of the automated intelligent energy management approach, including its distinct but complementary components. It is able to collect metrics, estimate power consumption per device, and represent knowledge present in the environment and the relationship between devices. In addition, it can generate a reinforcement learning environment used for training an agent with the policy of optimizing energy consumption while respecting user preferences.

Automated energy management architecture.

Knowing the real-time power consumption of devices is an essential yet challenging requirement for quantifying and monitoring energy leakage and savings [16]. The automated power estimator used here produces up-to-date and accurate power models, with error rates ranging from 0.33% to 7.81% for linear models and from 0.3% to 3.83% for polynomial models [23]. It aims to empirically generate power models for a potentially unlimited number of devices and configurations. The power estimation process follows a crowd-sourcing architecture: benchmarking components can run on any device and generate empirical data, from which the power model generator component produces an accurate power model for the specific device, or improves the model if one already exists. Generated power estimation models can be shared between different devices in the environment. Monitoring software can connect to this component to query and retrieve the most accurate and up-to-date power model for its devices. Once the model is generated, power consumption can be estimated in real time with negligible overhead. The overall overhead of using the estimator is low: the absolute average difference is 0.084 W (a relative difference of 1.32%) for the RPi Zero W and only 0.109 W (a relative difference of 0.72%) for the RPi 3B+. Over time, the accuracy of the model can be improved as more data are collected and used to generate more accurate regression models. In this paper, we assume that an accurate real-time power estimation model has previously been generated for each device in the environment.

Knowledge-base representation

An ontology is a defined set of concepts and relationships used to represent meaning and shared understanding in a certain domain [39]. Ontologies are considered highly effective for knowledge modeling and information retrieval. They provide a common representation and definition of concepts and of the relationships between them. In addition, ontologies support inference to discover new relationships and knowledge, and they are scalable and reusable. Therefore, an ontology is used to formally represent the environment's knowledge.

SAREF, the Smart Applications REFerence Ontology [6], is a well-known and widely adopted ontology used to represent devices, their properties, and their capabilities in the context of smart homes. It focuses on defining the properties of devices, which can be measurable, controllable, or both. SAREF also defines the type of a device, such as appliance, sensor, actuator, meter, or HVAC. Overall, SAREF provides a well-defined and organized framework that not only helps developers and researchers better understand the properties and capabilities of different types of devices but also ensures a high level of interoperability between devices.

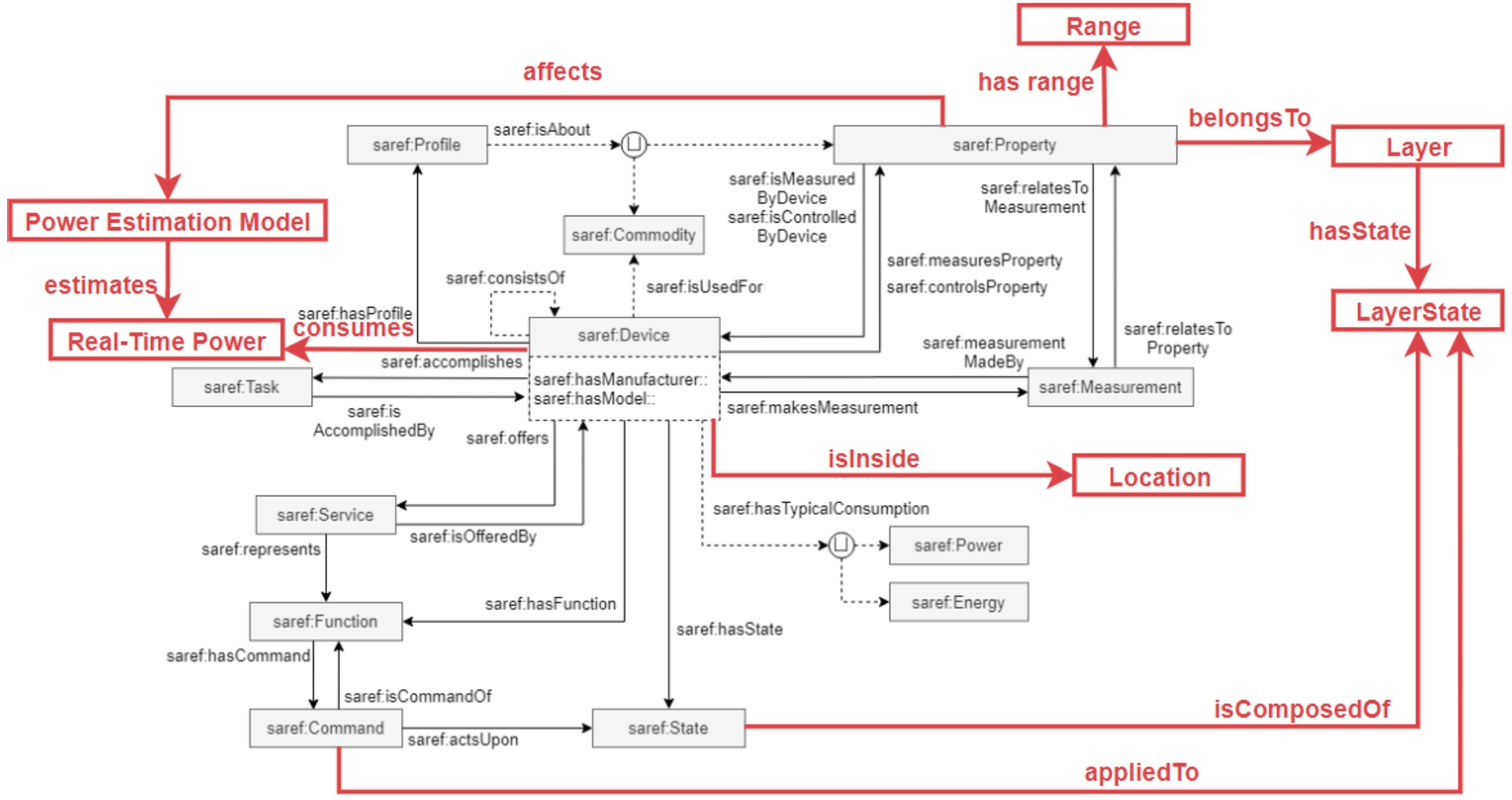

However, existing ontologies do not consider real-time power estimation and measurement. It is therefore necessary to extend one of these ontologies to represent these concepts, as seen in Fig. 2. The way concepts are defined in SAREF provides a compatible and straightforward basis for the knowledge base used in reinforcement learning model generation.

SAREF extension overview (the extension is represented using the red color).

In order to include the real-time power and different layers of a device into the knowledge base, we propose the extension of the SAREF ontology with the following classes, attributes, and relations:

ecps:Location is the geographic space in which a device is deployed. It helps build a contextual understanding of the environment and identify devices that share the same location.

ecps:Layer is the structural level of the system to which a property belongs: application, computational, network, storage, or contextual.

ecps:LayerState is the state in which a specific layer is running. The layer states together make up the state of the device.

ecps:Power Estimation Model is the mathematical model that takes as input the properties of a device and returns the power consumption.

ecps:Real Power is the real-time power consumption of a device. It can be measured directly by a hardware wattmeter or estimated using an estimation model.

ecps:hasRange represents the accepted values of each property. It takes the form of either a list of accepted values or a range with minimum and maximum accepted values. A range specified by a minimum and a maximum can include a step, i.e., the fixed amount by which a property value is increased or decreased.

ecps:deviceId, ecps:deviceName, ecps:propertyId, and ecps:propertyName represent, respectively, the id and name of a device and the id and name of a property. They are used to distinguish devices and properties from one another.
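
The two forms of ecps:hasRange described above can be mirrored in code roughly as follows. This is a hypothetical Python representation: the class name PropertyRange and its fields are our own and are not part of SAREF or of the ecps extension. The LedBrightness and LedColor ranges of the living room case study serve as examples.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class PropertyRange:
    """Hypothetical mirror of ecps:hasRange: either an explicit list of
    accepted values, or a [minimum, maximum] interval with an optional step."""
    values: Optional[Sequence] = None
    minimum: Optional[float] = None
    maximum: Optional[float] = None
    step: Optional[float] = None

    def accepts(self, value) -> bool:
        """True if `value` is an accepted value of the property."""
        if self.values is not None:
            return value in self.values
        return self.minimum <= value <= self.maximum

    def shift(self, value, direction: int):
        """Increase (direction=+1) or decrease (direction=-1) `value` by one
        step, clamped to the range bounds."""
        if self.step is None:
            raise ValueError("this range has no step")
        return min(self.maximum, max(self.minimum, value + direction * self.step))


brightness = PropertyRange(minimum=0, maximum=100, step=1)
color = PropertyRange(values=("red", "blue", "green", "white"))
print(brightness.shift(100, +1))  # 100 (clamped at the maximum)
print(brightness.shift(40, -1))   # 39
print(color.accepts("white"), color.accepts("pink"))  # True False
```

A range with a step maps naturally onto two discrete actions (increment and decrement), whereas a list of values maps onto one action per accepted value.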

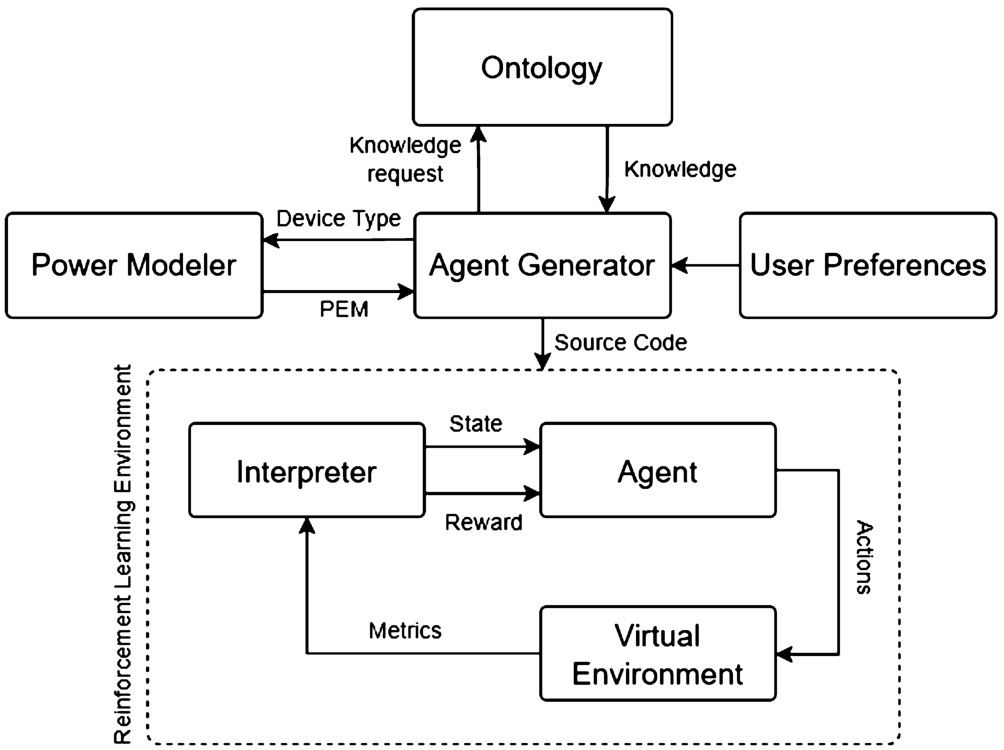

Fig. 3 presents the architecture of the reinforcement learning environment and the agent generation process. The agent generator collects knowledge from the ontology and creates a virtual environment and a reinforcement learning model based on the devices present in the environment and the relations between them. First, the agent generator requests the necessary knowledge from the ontology and the power estimation models repository for each device. Once this information is retrieved, the agent generator creates the source code of the reinforcement learning environment or modifies the existing one. The generated source code includes all the information needed to optimize energy consumption using reinforcement learning techniques, such as states, actions, reward calculation, and power estimation models.

Reinforcement learning environment generation architecture.

Ontology implementation

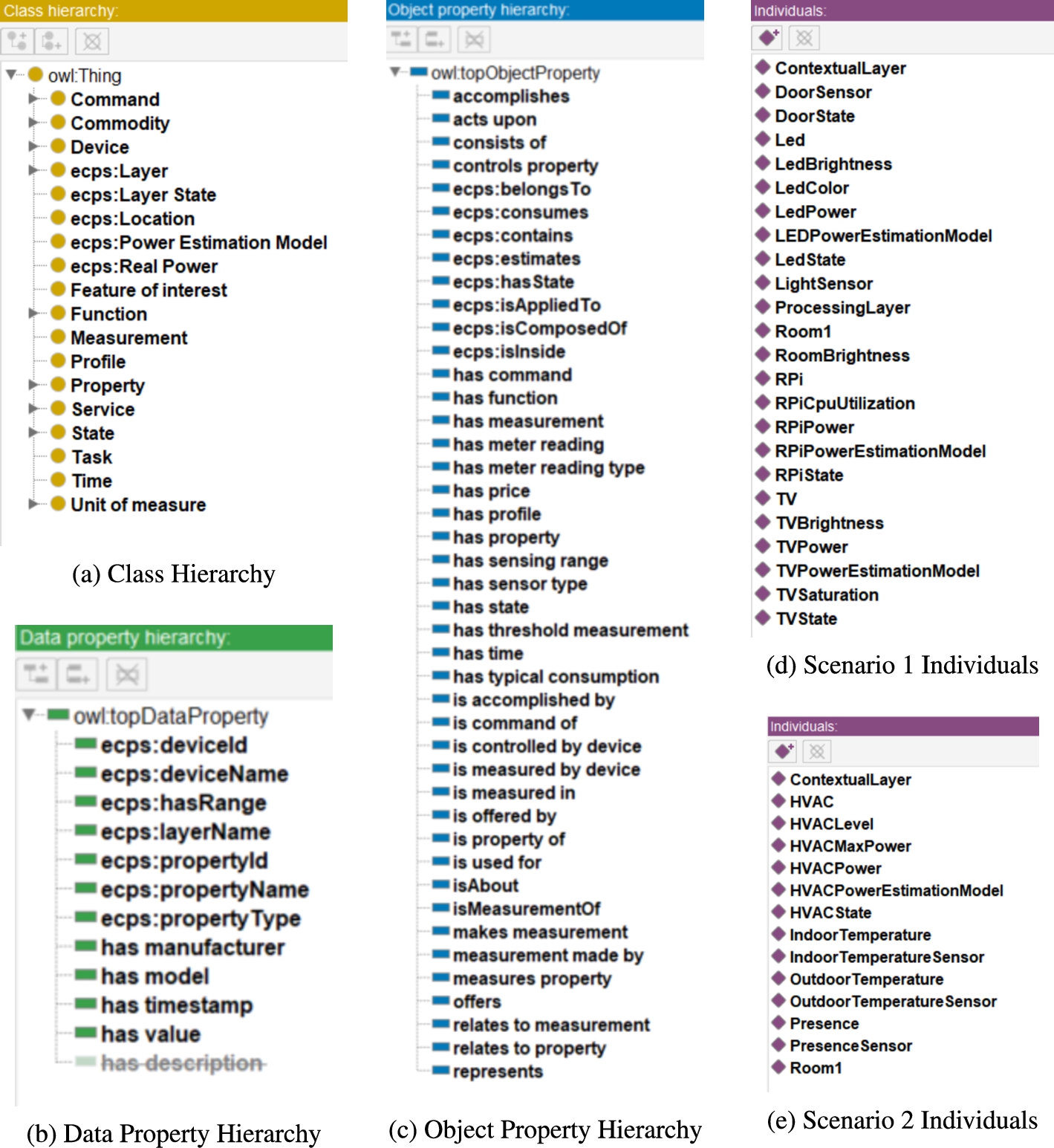

The ontology is defined using the Web Ontology Language (OWL) [4], a knowledge representation language for ontologies, in the Protégé ontology editor and knowledge management system [38]. As seen in Fig. 4, a series of classes, data properties (attributes), and object properties (relations) are defined to make the ontology compatible with the agent generator. Newly introduced concepts have names that start with the prefix ecps.

We also create individuals for each of the case studies. Fig. 4d and Fig. 4e define each device, controllable property, measurable property, and power estimation model present in the living room scenario and the HVAC scenario, respectively. In addition, the relations between these concepts are created to represent the system's knowledge about the environment.

SAREF ontology extension implementation in protégé.

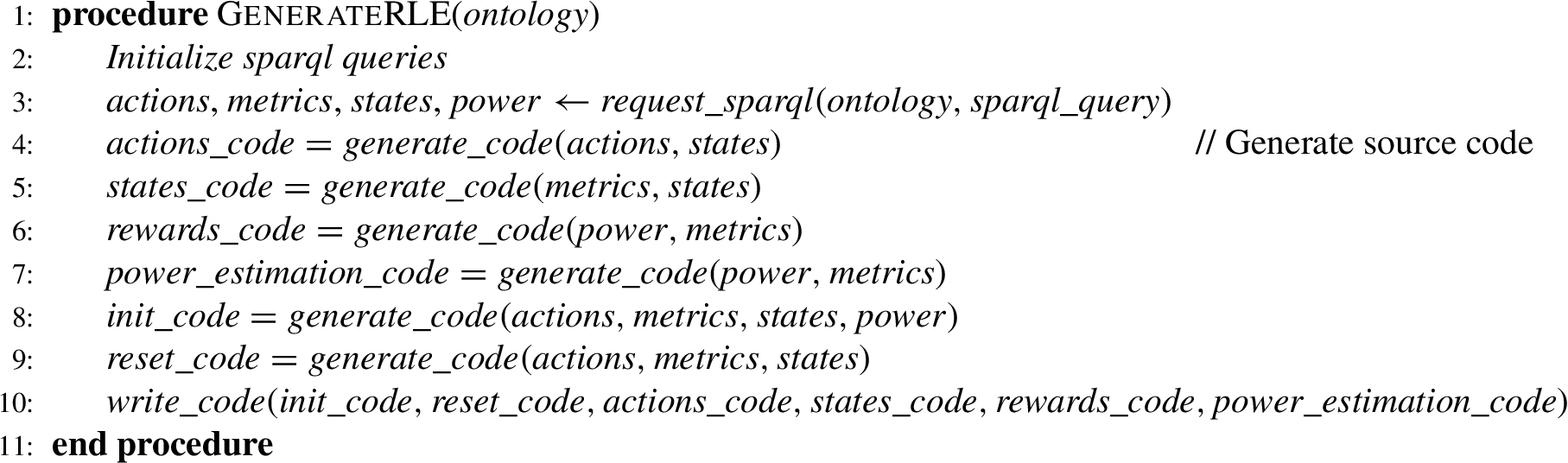

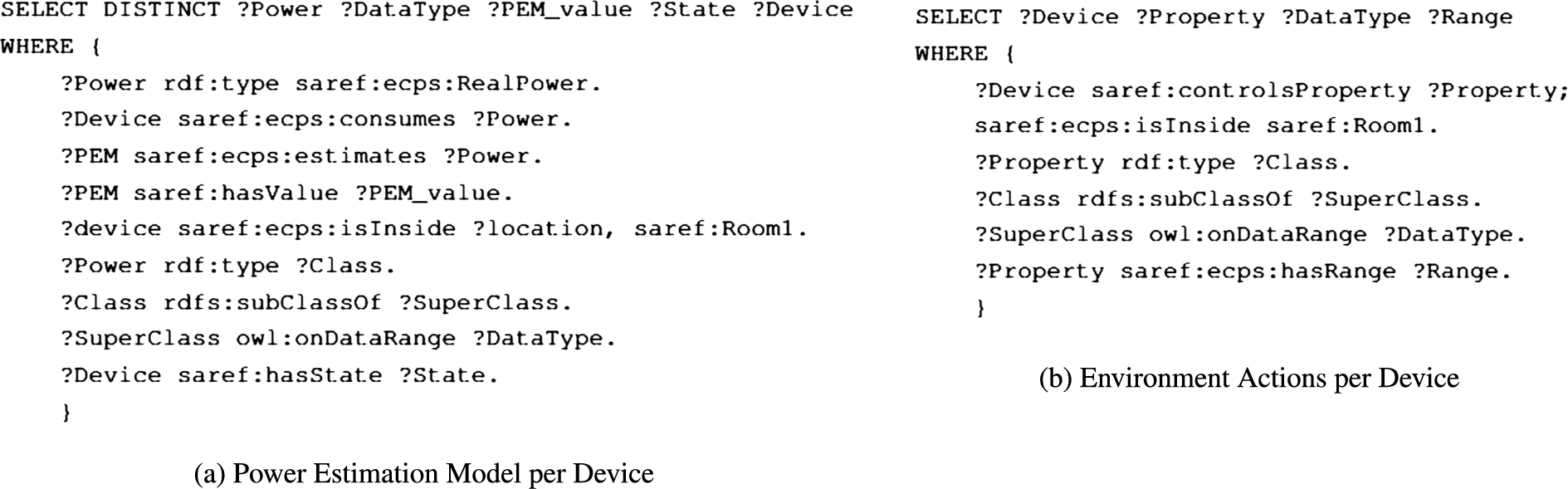

The agent generator is responsible for generating each section of the reinforcement learning agent, based on TensorFlow and Keras-RL, and of an environment built using OpenAI Gym. Algorithm 1 provides the pseudocode of the reinforcement learning environment generation. First, a series of SPARQL queries is executed with the request_sparql function to collect knowledge from the ontology. For the devices at a given location, graphs containing information on their actions, metrics, states, and power estimation models are collected. Fig. 5 shows two examples of the SPARQL queries used to collect power estimation models and the actions each device can accomplish.

Reinforcement learning environment generation algorithm

SPARQL queries examples.
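
The sketch below shows the kind of query string a function such as request_sparql might send to the ontology to collect, for one location, the properties each device controls and their ranges. The SAREF core namespace and saref:controlsProperty come from SAREF; the ecps namespace URI and the ecps:hasLocation and ecps:locationName predicates are hypothetical placeholders, and the actual queries of Fig. 5 may differ. The returned string could be run by any SPARQL engine, for example rdflib's Graph.query.

```python
SAREF_NS = "https://saref.etsi.org/core/"
ECPS_NS = "http://example.org/ecps#"  # hypothetical namespace for the extension


def escape_literal(text: str) -> str:
    """Escape backslashes and double quotes for a SPARQL string literal."""
    return text.replace("\\", "\\\\").replace('"', '\\"')


def controllable_properties_query(location: str) -> str:
    """Build a query listing devices at `location`, the properties they
    control, and the ranges of those properties."""
    loc = escape_literal(location)
    return f"""PREFIX saref: <{SAREF_NS}>
PREFIX ecps: <{ECPS_NS}>
SELECT ?device ?property ?range
WHERE {{
  ?device ecps:hasLocation ?location .
  ?location ecps:locationName "{loc}" .
  ?device saref:controlsProperty ?property .
  ?property ecps:hasRange ?range .
}}"""


print(controllable_properties_query("LivingRoom"))  # "LivingRoom" is an example name
```

Parameterizing the query by location is what lets the same generator build an environment per room without changing its code.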

The collected knowledge is used to create the source code of all the functions that compose the reinforcement learning environment. As seen in Algorithm 1, a function called generate_code generates the source code of each section of the reinforcement learning environment based on the collected ontology knowledge. It generates the source code of six main components: (a) actions, (b) states, (c) rewards, (d) power estimation, (e) initialization, and (f) reset. A template of the environment's source code is created, which includes the necessary libraries and fixed chunks of code. The automated source code generator then writes the majority of the code using the write_code function: it identifies the blank, variable sections of the template that change from one environment to another and writes the correct code in each section. Because it is extensible and driven by the knowledge it requests from the ontology, it can generate the source code for any number of properties and devices.
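
Template-based generation of this kind can be sketched with Python's string.Template: fixed code lives in the template, and a generator fills in a blank section from ontology knowledge. The function below is only loosely modeled on write_code; its signature, the ${action_cases} placeholder, and the convention of one increment and one decrement action per controllable property are assumptions made for illustration.

```python
from string import Template

# Hypothetical template: fixed code plus one variable section, ${action_cases},
# whose content depends on the devices found in the ontology.
ENV_TEMPLATE = Template('''\
def apply_action(state, action):
    """Generated code: return a copy of `state` with `action` applied."""
    state = dict(state)
${action_cases}
    return state
''')


def write_code(controllable):
    """Fill the template with one increment and one decrement branch per property.

    `controllable` maps a property name to its (minimum, maximum, step) range.
    Returns the generated source code and the number of discrete actions.
    """
    lines, index = [], 0
    for name, (low, high, step) in controllable.items():
        for sign in (+1, -1):
            keyword = "if" if index == 0 else "elif"
            lines.append(f"    {keyword} action == {index}:")
            lines.append(
                f"        state[{name!r}] = min({high}, max({low}, "
                f"state[{name!r}] + ({sign * step})))"
            )
            index += 1
    return ENV_TEMPLATE.substitute(action_cases="\n".join(lines)), index


source, n_actions = write_code({"LedBrightness": (0, 100, 1), "TVBrightness": (0, 100, 1)})
namespace = {}
exec(compile(source, "<generated_env>", "exec"), namespace)
apply_action = namespace["apply_action"]
print(n_actions)  # 4
print(apply_action({"LedBrightness": 50, "TVBrightness": 0}, 3))  # {'LedBrightness': 50, 'TVBrightness': 0}
```

Adding a device to the ontology only changes the dictionary passed to the generator; the template itself stays fixed.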

Reinforcement learning environment algorithm

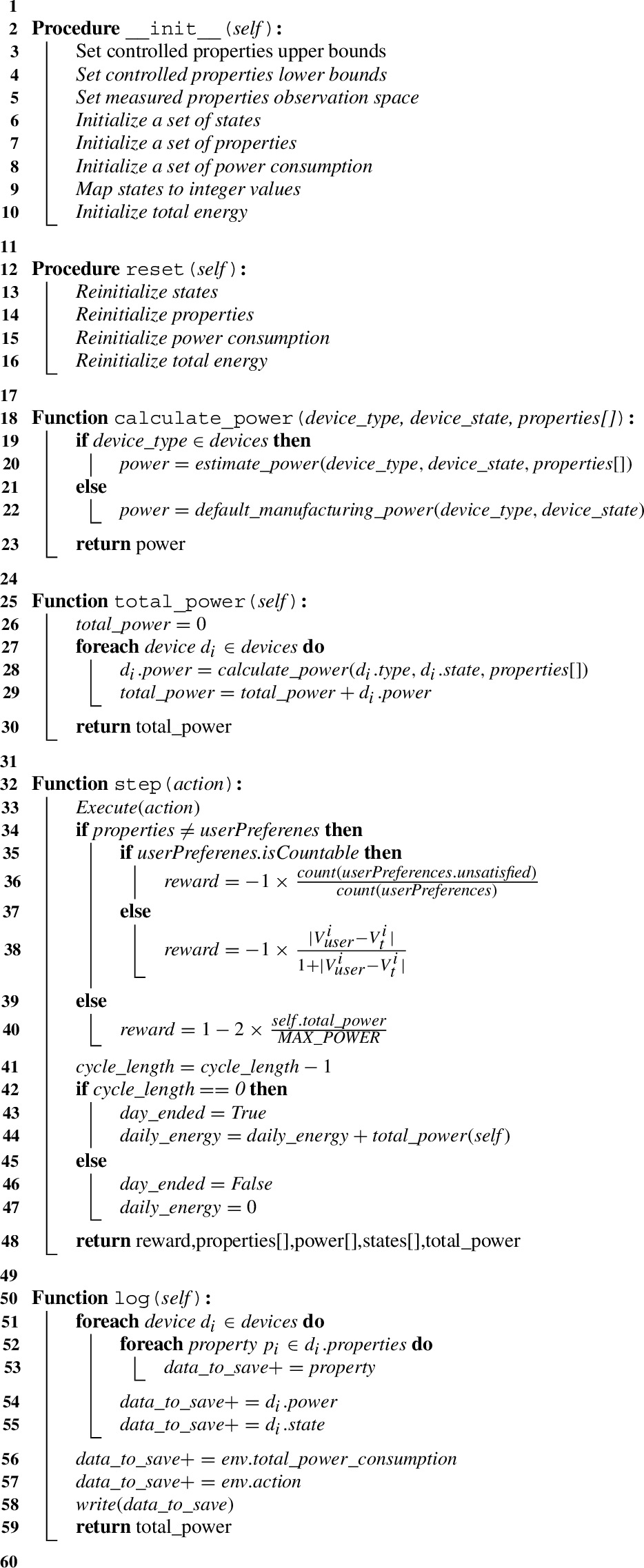

Once the source code is generated, the reinforcement learning environment is ready to be launched. Its structure is presented in Algorithm 2. The following explains the role of each of its functions:

__init__ function defines the observation and action spaces, i.e., the minimum and maximum acceptable values for each observed metric or action, as well as a few other attributes, such as the accepted values of each property and the initial state of each device. This function also initializes the states, properties, and power consumption of each device. It also encodes and maps properties and states from their original format to a numerical or Boolean format accepted by the reinforcement learning algorithm.

step function executes a step in the environment by applying an action and returns the new observation, the reward, the power consumption of each device, and other information. It includes the list of possible actions and the reward calculation based on user preferences and total power consumption. Randomness and external changes affecting the environment (e.g., changes in outdoor temperature) can also be applied in this function.

calculate_power function contains the power estimation models for each device in the environment. It returns the value of the power consumption of a specific device at a particular time.

total_power function returns the total power consumption of all devices in the environment at a particular time.

reset function reinitializes the environment to its initial state without changing the observation and action spaces.

log function records events occurring in the environment into a CSV file, in particular, the value of each property, states of devices, power consumption for each device, total power consumption, executed action, and reward value.
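
A stripped-down environment with the same function layout (__init__, step, calculate_power, total_power, reset, log) can be sketched for a single LED bulb. To stay self-contained, it does not subclass gym.Env and represents the spaces as plain bounds. The linear power coefficients are hypothetical, and the reward is a simple placeholder (minus the normalized total power) rather than the preference-aware reward used in this work.

```python
import csv
import io


class LedEnv:
    """Gym-style environment sketch with one LED bulb whose brightness is controllable."""

    ACTIONS = ("decrement", "keep", "increment")

    def __init__(self, initial_brightness=50, low=0, high=100, step_size=1, log_file=None):
        # Observation and action "spaces" as plain bounds and a discrete action count.
        self.low, self.high, self.step_size = low, high, step_size
        self.n_actions = len(self.ACTIONS)
        self.initial_brightness = initial_brightness
        self.log_file = log_file
        self.reset()

    def calculate_power(self, device):
        """Hypothetical linear PEM: 0.5 W idle plus 0.08 W per brightness percent."""
        if device == "led":
            return 0.5 + 0.08 * self.brightness
        raise KeyError(device)

    def total_power(self):
        return sum(self.calculate_power(device) for device in ("led",))

    def step(self, action):
        # Map actions 0/1/2 to -step/0/+step and clamp to the accepted range.
        delta = (action - 1) * self.step_size
        self.brightness = min(self.high, max(self.low, self.brightness + delta))
        max_power = 0.5 + 0.08 * self.high
        reward = -self.total_power() / max_power  # placeholder reward in [-1, 0)
        self.log(action, reward)
        info = {"power_per_device": {"led": self.calculate_power("led")}}
        return [self.brightness], reward, False, info

    def reset(self):
        self.brightness = self.initial_brightness
        return [self.brightness]

    def log(self, action, reward):
        """Append brightness, total power, action, and reward as one CSV row."""
        if self.log_file is not None:
            csv.writer(self.log_file).writerow(
                [self.brightness, round(self.total_power(), 3), self.ACTIONS[action], round(reward, 3)]
            )


buffer = io.StringIO()
env = LedEnv(log_file=buffer)
observation, reward, done, info = env.step(0)
print(observation, round(info["power_per_device"]["led"], 2))  # [49] 4.42
```

step returns the classic Gym 4-tuple (observation, reward, done, info); placing the per-device power in info is one possible choice, not necessarily the one made in the generated environments.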

The reward is a value bounded between −1 and +1. It is calculated using three equations, (1), (2), and (3). It first checks whether all user preferences are respected, which guarantees that they are satisfied before power consumption is managed. If any preference is not respected, the reward is negative, between −1 and 0. When user preferences are not measurable, the reward is calculated as in Eq. (1): the negative of the number of unrespected preferences divided by the total number of user preferences.

However, if the user preferences are measurable (e.g., temperature), the reward is calculated based on the difference between the property value

Once all basic user preferences are respected, Eq. (3) is used to calculate the reward, ensuring a higher reward for each power-aware action.

This equation is bounded between −1 and 1. Its value is −1 when the current power consumption equals the maximum power consumption and tends to 1 as the power consumption approaches 0. The equation is designed so that the agent receives the highest reward when the energy consumption of the environment is as low as possible while user preferences are respected, and the lowest reward when power consumption is at its highest possible level. As soon as a user preference is no longer respected, the reward is again calculated using Eq. (1) and Eq. (2) instead of Eq. (3). Reaching zero power consumption for the entire environment is difficult because of user preferences, but it remains possible in some extreme cases (e.g., when no occupant is present in a room containing only lamps, all of which should be turned off).
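
The reward logic can be sketched as follows. preference_reward follows the description of Eq. (1). Because the exact form of Eq. (3) is not reproduced here, power_reward assumes a simple linear mapping that only matches the stated boundary behavior (−1 at maximum power, 1 at zero power). The measurable-preference case of Eq. (2) is omitted, and all function names are hypothetical.

```python
def preference_reward(n_unrespected: int, n_preferences: int) -> float:
    """Eq. (1) as described: minus the fraction of unrespected preferences, in [-1, 0]."""
    if n_preferences <= 0:
        raise ValueError("at least one user preference is required")
    return -n_unrespected / n_preferences


def power_reward(power: float, max_power: float) -> float:
    """Assumed linear stand-in for Eq. (3): -1 at max_power, +1 at zero power."""
    return 1.0 - 2.0 * power / max_power


def reward(n_unrespected: int, n_preferences: int, power: float, max_power: float) -> float:
    # User preferences come first; the power-based reward applies only once all are met.
    if n_unrespected > 0:
        return preference_reward(n_unrespected, n_preferences)
    return power_reward(power, max_power)


print(reward(1, 4, power=3.0, max_power=10.0))  # -0.25
print(reward(0, 4, power=3.0, max_power=10.0))  # 0.4
```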

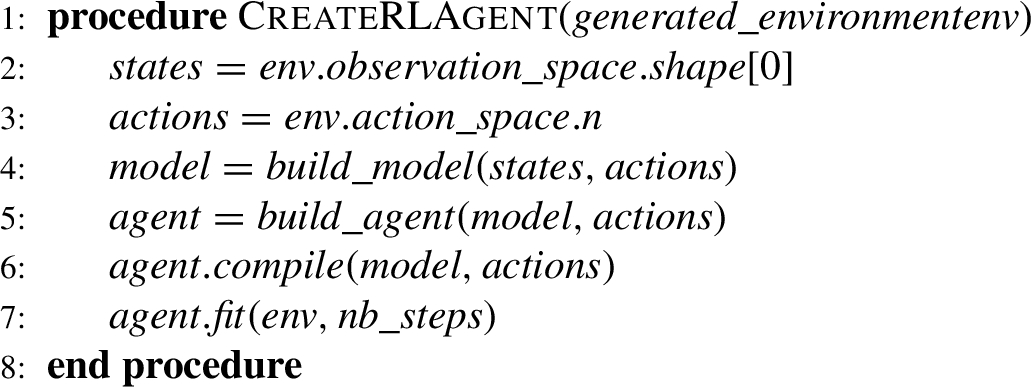

Algorithm 3 shows the model and agent generation pseudocode. First, states and actions are defined based on the previously generated reinforcement learning environment. Then, the model is built using the build_model function. The model is a Deep Q-Network whose input layer takes the set of states and whose output nodes correspond to the possible actions. It has two hidden dense Keras layers with ReLU activations. The build_agent function specifies the policy and builds the agent based on the previously built model. The agent and model are compiled using the compile function and are later used for training and testing. The fit function trains the agent given the environment and the number of training steps.

Deep reinforcement learning model and agent
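To make the network shape used in Algorithm 3 concrete without depending on Keras, the following plain-Python sketch reproduces only the forward pass (state vector in, one Q-value per action out, two ReLU hidden layers) and action selection. It does not train the network (no equivalent of fit). The hidden width of 24, the random initialization, the epsilon-greedy policy, and all function names are assumptions; the paper's build_agent policy is not specified in this section.

```python
import random


def dense(n_in: int, n_out: int, rng: random.Random):
    """Hypothetical dense layer: weight matrix (n_out x n_in) plus bias vector."""
    weights = [[rng.uniform(-0.1, 0.1) for _ in range(n_in)] for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases


def build_model(n_states: int, n_actions: int, hidden: int = 24, seed: int = 0):
    """Input of size n_states, two ReLU hidden layers, one linear output per action."""
    rng = random.Random(seed)
    return [
        dense(n_states, hidden, rng),
        dense(hidden, hidden, rng),
        dense(hidden, n_actions, rng),
    ]


def forward(model, state):
    x = list(state)
    for i, (weights, biases) in enumerate(model):
        x = [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weights, biases)]
        if i < len(model) - 1:  # ReLU on hidden layers only; output stays linear
            x = [max(0.0, v) for v in x]
    return x  # one Q-value estimate per action


def select_action(model, state, epsilon: float, rng: random.Random) -> int:
    """Epsilon-greedy choice over the Q-values returned by the network."""
    q_values = forward(model, state)
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))
    return max(range(len(q_values)), key=q_values.__getitem__)
```

With `epsilon=0.0` the selection is purely greedy, which corresponds to the testing phase; a positive epsilon adds the exploration typically used during training.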

Smart home environments are scalable and contain highly heterogeneous devices with different types, interfaces, vendors, etc. In addition, these environments are dynamic: existing devices can change through software or hardware updates that alter their power consumption, or through location changes that affect the knowledge of the environment. In the following section, we aim to verify that all four components of our approach work cohesively to facilitate energy management while also considering user satisfaction. Our approach was evaluated in two separate case studies, both in the context of a smart home. The first case study (I) was conducted in a living room equipped with a variety of devices, while the second case study (II) involved a smart home with a heating system. These two studies allowed us to analyze a variety of device types, data types, and customizable environments. The main objective of case study I is to reduce energy consumption using data provided by different devices, while the objective of case study II is to show that it is possible to increase user satisfaction and reduce energy consumption at the same time.

Living room case study

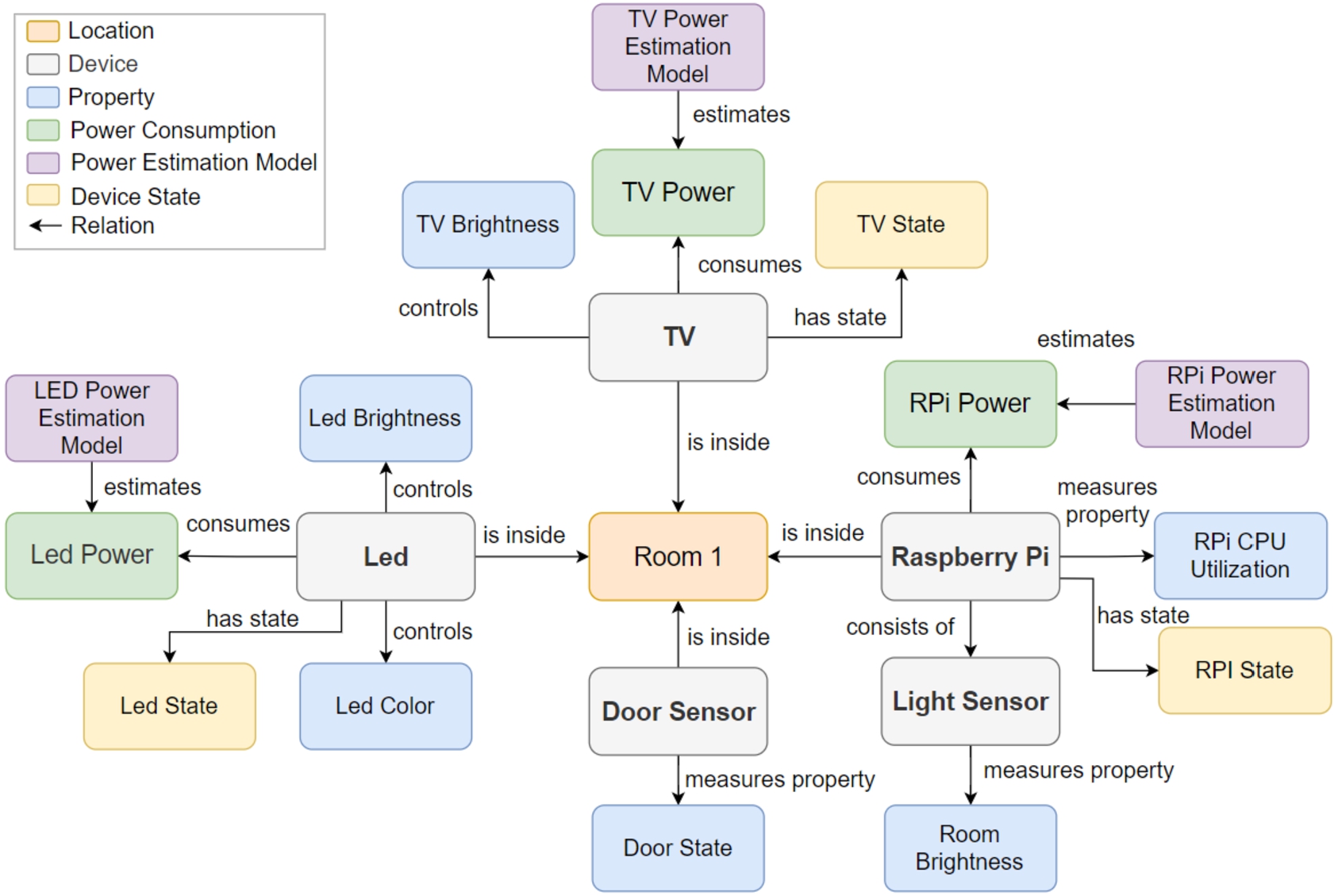

As seen in Fig. 6, the first simulated case study is a living room of a smart home with several devices. An LED bulb has controllable brightness (LedBrightness) and color (LedColor). A TV has controllable brightness (TVBrightness). A Raspberry Pi measures its own CPU utilization and carries a mounted light sensor that measures room brightness. The LED bulb, TV, and Raspberry Pi are considered power consumers, and each has a power estimation model that gives its power consumption in real time. A door sensor detects whether the door is open or closed but cannot change its state. The door sensor is not considered a power consumer because its power consumption is minimal and fixed. LedBrightness ranges from 0 to 100 and can be incremented or decremented by 1% per minute to ensure a smooth light transition that does not disturb the users. LedColor can take one of the following values: red, blue, green, and white. Device states are usually on, off, or sleep; however, they are not limited to these states and can take many other forms. For the living room scenario, user preferences are generated based on [33]. They are manually adjusted to add the randomness that may occur from one day to another, between weeks, and during weekends.

Devices, properties, and relations representation of the living room case study.
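The LED constraints of the living room case study translate into a small, discrete action space. This sketch shows how a one-minute brightness action can be clamped to the 0 to 100 range and how a color change can be checked against the four allowed values. The action names (including the "neutral" no-op) and the helper functions are hypothetical.

```python
LED_COLORS = ("red", "blue", "green", "white")  # allowed LedColor values
BRIGHTNESS_MIN, BRIGHTNESS_MAX = 0, 100          # LedBrightness bounds (%)
BRIGHTNESS_STEP = 1                              # at most 1 % change per one-minute step


def apply_brightness_action(brightness: int, action: str) -> int:
    """Hypothetical LedBrightness transition for 'increase', 'decrease', or 'neutral'."""
    delta = {"increase": BRIGHTNESS_STEP, "decrease": -BRIGHTNESS_STEP, "neutral": 0}[action]
    # Clamp so repeated actions never leave the valid range.
    return max(BRIGHTNESS_MIN, min(BRIGHTNESS_MAX, brightness + delta))


def validate_color(color: str) -> str:
    """Reject LedColor values outside the allowed set."""
    if color not in LED_COLORS:
        raise ValueError(f"unsupported LED color: {color}")
    return color
```

Limiting each step to ±1% means a full dimming from 100 to 0 takes 100 one-minute steps, which is what produces the smooth transition described in the text.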

Another simulated case study is conducted with different devices in a different context: an HVAC system in a smart home. The room where the HVAC system is mounted is equipped with an indoor temperature sensor and a presence sensor. In addition, a temperature sensor is positioned outside the room to capture the outdoor temperature. We model the environment using our previously proposed extension of the SAREF ontology, as in the living room case study. Then, we launch the agent generator to build the environment and the reinforcement learning agent. To simulate indoor temperature dynamics in the environment, the exponential decay model in Eq. (4) is adopted [7].
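Eq. (4) itself is not reproduced in this section. As an illustration only, the sketch below uses a common exponential-decay (Newton cooling) form in which the indoor temperature relaxes towards an equilibrium value with time constant τ. The equilibrium offset when the HVAC is ON (`hvac_gain`), the time constant `tau_h`, and the one-hour step `dt_h` are hypothetical values, not parameters taken from [7].

```python
import math


def next_indoor_temp(t_in: float, t_out: float, hvac_on: bool,
                     dt_h: float = 1.0, tau_h: float = 5.0,
                     hvac_gain: float = 15.0) -> float:
    """One step of a generic exponential-decay model (all parameters hypothetical).

    The indoor temperature decays towards an equilibrium temperature: the outdoor
    temperature when the HVAC is OFF, or outdoor temperature + hvac_gain when ON.
    """
    t_eq = t_out + (hvac_gain if hvac_on else 0.0)
    return t_eq + (t_in - t_eq) * math.exp(-dt_h / tau_h)
```

Calling the function once per hourly step with the current outdoor reading yields an indoor temperature trace comparable in spirit to the OFF and ON curves of Fig. 7.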

Equation (4) has been widely adopted in previous research [10,14,52,59,62]. This experiment uses the outdoor temperature dataset provided by USCRN/USRCRN [11] because of its accuracy, low error rates, and high data quality. The dataset is cleaned and filtered, mainly to remove missing data, which is indicated by the sentinel value -9999.0. The dataset used was collected in Santa Barbara, California, USA, in 2021. User temperature preferences are extracted from the study conducted in [22]. User temperature preferences range between
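Removing the missing-data marker amounts to one check per reading. The sketch below assumes the readings arrive as strings from one column of the temperature files; the function name is hypothetical and file parsing is omitted.

```python
MISSING = -9999.0  # sentinel marking a missing observation in the dataset


def clean_temperatures(raw_values):
    """Parse raw temperature readings and drop those equal to the sentinel."""
    cleaned = []
    for raw in raw_values:
        value = float(raw)
        if value == MISSING:
            continue  # skip missing observation
        cleaned.append(value)
    return cleaned
```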

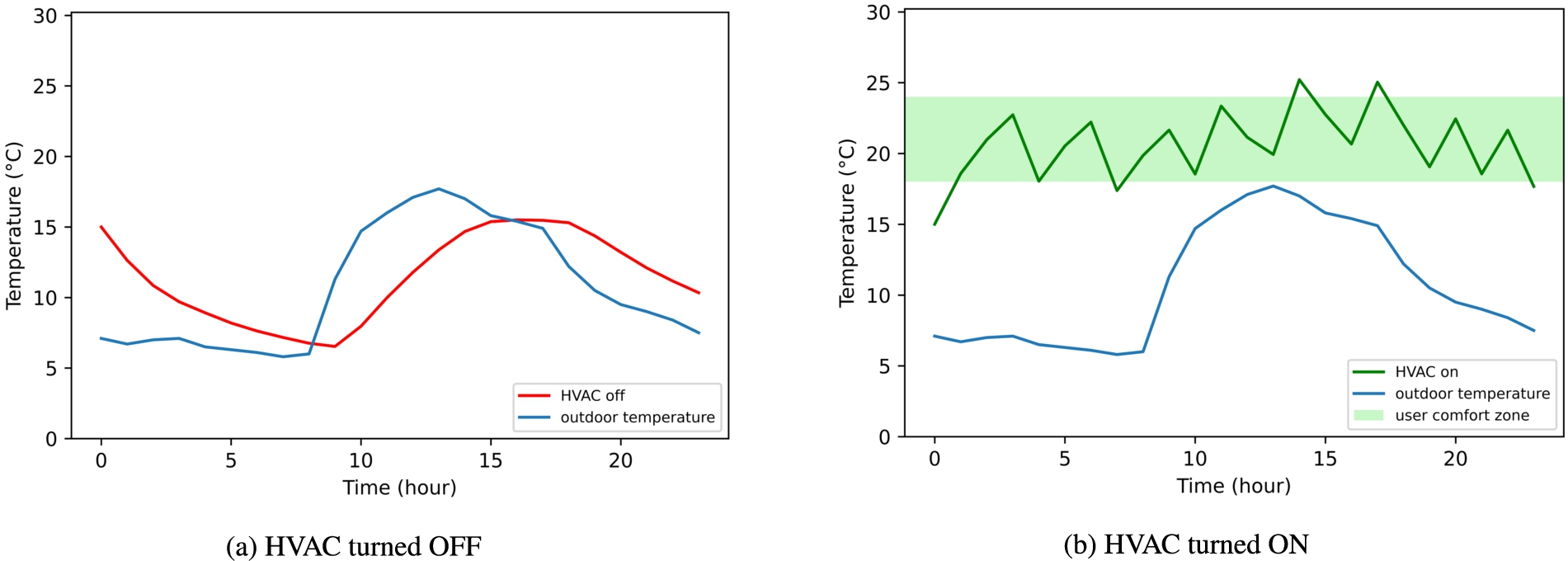

Fig. 7 shows the outdoor temperature on a sample day in January 2021, together with the calculated indoor temperature with the HVAC turned OFF (Fig. 7a) and ON (Fig. 7b). In Fig. 7b, two conditions are added so that the indoor temperature respects user preferences, illustrating a real-life use case.

Outdoor and indoor temperature samples.

A prototype of the proposed power management approach has been developed to reduce power consumption in smart connected environments. In particular, the following two application case studies have been developed, deployed, and tested in a simulated smart home for validation purposes.

Living room case study

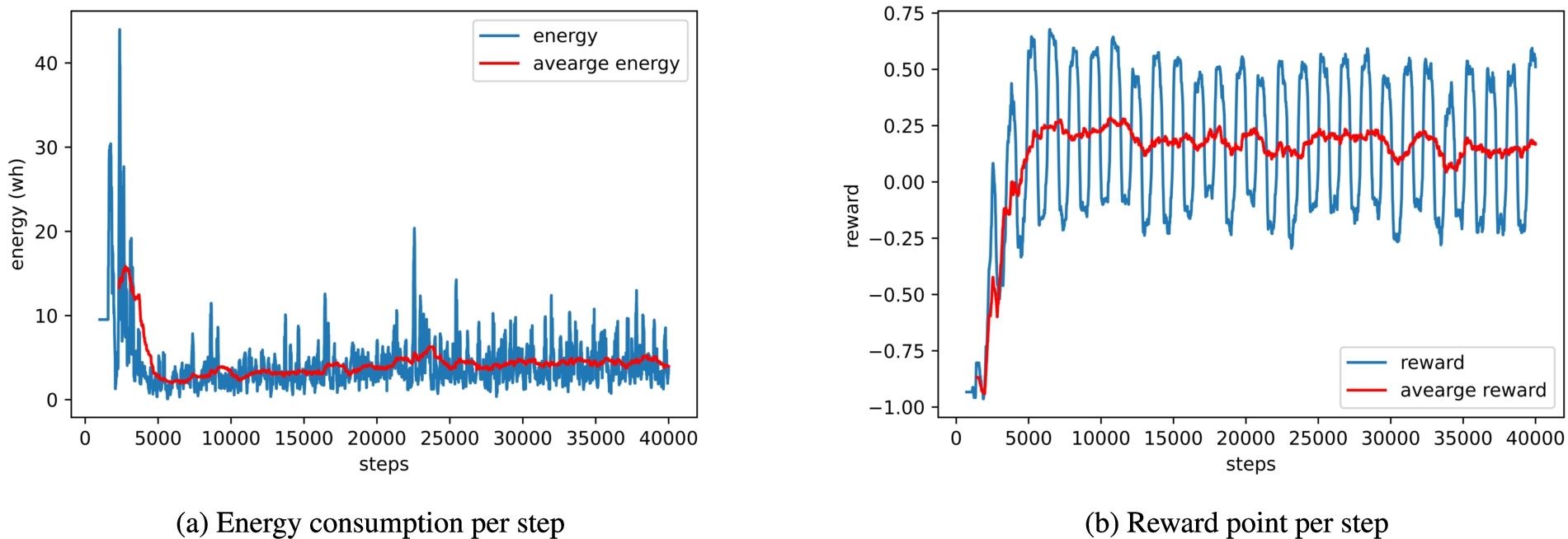

Living room case study experimental results.

A first experiment was conducted for 70 episodes (100k steps). Each episode lasts 24 hours, and one step is executed per minute (1440 steps per episode). Every minute, device metrics and states are monitored, and the agent then chooses an action to execute for each device; it may also execute a neutral action that keeps all devices in their current state. User preferences are defined for 28 days (i.e., LED state, LED brightness, LED color, TV brightness, and TV state). Fig. 8 shows the results of the living room case study. Fig. 8a shows the total energy consumption of the environment per step and its daily average. Fig. 8b shows the total reward points calculated by the agent and their daily average. In this scenario, energy consumption converged within a short period (4 days), and the reward went from negative to positive values, meaning that the user preferences are respected and that power consumption is at its lowest acceptable values. This case study shows that the proposed framework can handle different types of devices, properties, and actions related to these devices. It validates that the approach can be used to represent knowledge, generate reinforcement learning environments, and train agents. In addition, it suggests that energy efficiency and savings can be improved over the long term.

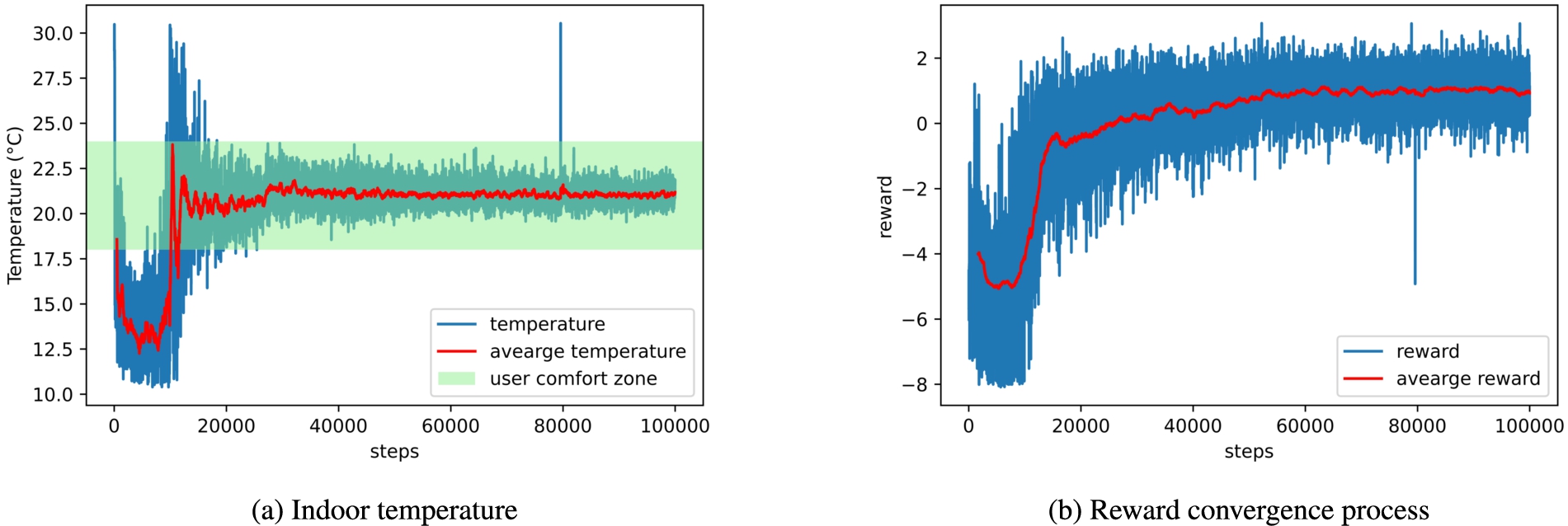

A second experiment was conducted in the HVAC environment for 596 episodes (100k steps). Each episode lasted one week, with one step executed per hour (168 steps per episode). User preferences for the minimum and maximum acceptable temperatures are defined for an entire week. Fig. 9 shows the results of the HVAC case study. As seen in Fig. 9b, the reward per episode increases, reflecting higher user satisfaction and lower power consumption. User preferences differ between day and night; however, for simplicity, Fig. 9b graphs the user comfort zone between the lowest and highest acceptable values. Fig. 9a shows the room temperature per step and the average room temperature per episode. In the first part of the training (before step 20 000), the indoor temperature is below the user preferences; it then converges over time to respect them. This validates the ability of the proposed reward function to lead to a better user experience. Initial room temperature is randomized for each new episode ranging between

HVAC case study experimental results.
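The "100k steps" reported for both experiments follow directly from the episode length and the step interval. This small check, with a hypothetical helper name, makes the arithmetic explicit.

```python
def total_steps(episodes: int, episode_minutes: int, step_minutes: int) -> tuple[int, int]:
    """Return (steps per episode, total training steps)."""
    if episode_minutes % step_minutes:
        raise ValueError("episode length must be a multiple of the step interval")
    steps_per_episode = episode_minutes // step_minutes
    return steps_per_episode, episodes * steps_per_episode


# Living room: 24-hour episodes, one step per minute.
print(total_steps(70, 24 * 60, 1))        # (1440, 100800)
# HVAC: one-week episodes, one step per hour.
print(total_steps(596, 7 * 24 * 60, 60))  # (168, 100128)
```

Both runs therefore come to roughly 100 000 steps, even though the HVAC experiment uses far more episodes.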

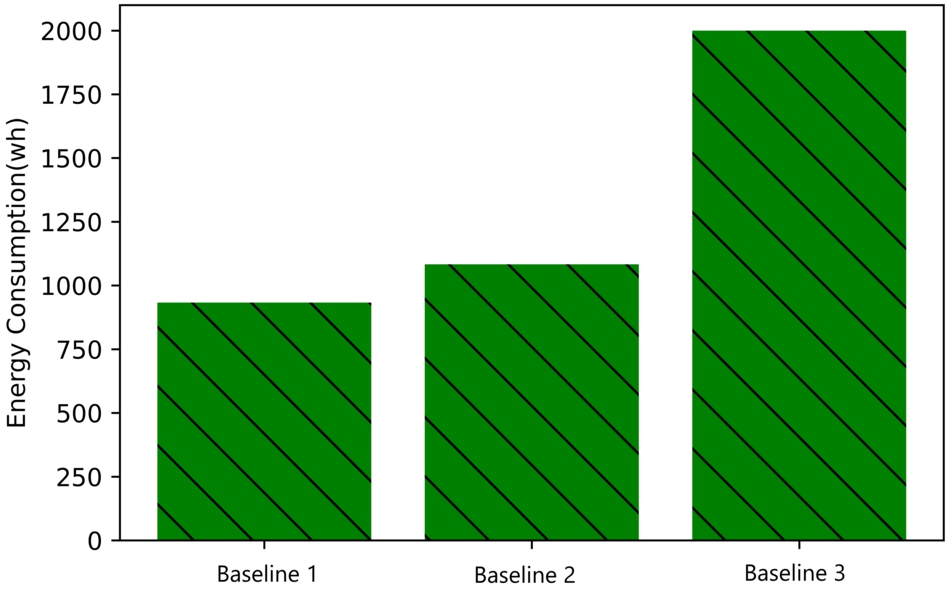

To compare the energy consumption of the proposed approach with other control strategies, we define three baselines:

Baseline 1: Represents the results of the automated energy management approach proposed in this paper, including knowledge, environment, and model generation, and training of the agent. It also considers user preferences and presence.

Baseline 2: Represents an environment where the user-preferred indoor temperature is set to

Baseline 3: Represents an environment with a traditional electric heater that stays ON all the time, without capturing or considering the indoor or outdoor temperature.

Fig. 10 shows the mean energy consumption for each of the previously defined baselines. The proposed method has the lowest energy consumption compared with the two traditional methods: Baseline 1 consumes 932.82 Wh on average, while Baselines 2 and 3 consume 1083.3 Wh and 2000 Wh, respectively. We consider that the model has converged before step 40 000, after which energy consumption is almost stable. Therefore, for Baseline 1, the average energy consumption is calculated using only data from steps beyond 40 000.

Mean value of energy in the HVAC case study.
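The reported means imply the relative savings below. This sketch, with hypothetical helper names, computes a post-convergence mean and the percentage reduction of Baseline 1 against each other baseline, using only the figures given in the text.

```python
def mean_after(values, start_index: int) -> float:
    """Mean of per-step values from start_index onwards (post-convergence window).

    With a 0-indexed list where index i holds step i + 1, start_index=40000
    keeps exactly the steps higher than 40 000.
    """
    window = values[start_index:]
    if not window:
        raise ValueError("no data after start_index")
    return sum(window) / len(window)


def relative_saving(proposed: float, baseline: float) -> float:
    """Fractional reduction of the proposed approach relative to a baseline."""
    return (baseline - proposed) / baseline


baseline_1 = 932.82  # Wh, proposed approach (steps > 40 000)
for name, value in (("Baseline 2", 1083.3), ("Baseline 3", 2000.0)):
    print(f"{name}: {relative_saving(baseline_1, value):.1%} lower")
# Baseline 2: 13.9% lower
# Baseline 3: 53.4% lower
```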

The presented results highlight a significant decrease in power consumption while respecting user preferences in both case studies. In addition, our proposal performs well given the short convergence period (4 days for case study I), the high user satisfaction (the temperature is maintained in the comfort zone for case study II), and the reduction in energy consumption. These results indicate that the reward functions provide well-adapted feedback to the agent, whose two main goals are energy optimization and user comfort.

The implementation in two use cases with completely different devices supports the general-purpose nature of our approach, since code generation is automated and allows each scenario to be adapted to the framework. It demonstrates that customizable environments with various devices can be generated. The approach also proved capable of dealing with different metrics that correspond to different scenarios and CPS layers; we therefore conclude that the approach is extensible at the scale of a smart home.

Although our automated energy management framework has proven to be an efficient way to manage energy consumption, it is important to acknowledge its limitations.

One limitation of our framework is that it relies on a Markov decision process, in which the current states of the devices alone determine the next action, without any knowledge of the past. This enables the system to react efficiently to changes in device states and supports real-time decision-making, but it also prevents the agent from exploiting historical information, such as recurring user habits, unless that information is encoded in the current state.

Another limitation of our energy management framework is that it relies on a single agent to simultaneously optimize two distinct objectives: reducing power consumption and increasing user satisfaction. While our current approach has demonstrated promising results, this design choice may not be ideal for all scenarios. Emerging multi-agent reinforcement learning algorithms offer solutions for complex environments with multiple optimization problems. In these approaches, multiple agents learn to coexist in a shared environment, each motivated by its own reward function and executing actions that align with its objectives, such as energy efficiency, user comfort, or cost reduction. By using multi-agent RL, we could potentially achieve better outcomes and overcome the limitations of our current approach.

Conclusion and future directions

In this paper, we proposed an automated management framework designed to optimize energy usage in smart homes using knowledge representation and reinforcement learning techniques. Our approach allows the representation of knowledge and the generation of virtual learning environments with a focus on energy, in order to select the best actions for reducing energy consumption while respecting user preferences. The framework has been validated through two proof-of-concept case studies in a smart home environment, where comprehensive experiments were conducted to evaluate its effectiveness.

The results of these experiments demonstrate the significant potential of this approach to reduce energy consumption while still meeting user preferences. Moreover, the framework achieved high user satisfaction and reached its objectives, defined by our three reward equations, within a short convergence period. The combination of knowledge representation and reinforcement learning enables the framework to learn from experience and improve over time, making it a sustainable and effective solution for managing energy in smart homes. The proposed framework represents a step forward in smart home technology, enabling more efficient energy consumption while respecting user preferences, and shows potential for widespread adoption.

Although the proposed method has shown promising results in the current study, much research remains to be done to fully evaluate its potential in real-world environments with physical sensors and actuators in real homes, where the behavior of the system can be closely observed and analyzed. In addition, we plan to extend the implementation to cover additional home devices on a larger scale. Such an extension could include multiple rooms in a home, or even multiple homes in a building, and would provide valuable insights into the scalability of the method and its performance in more complex environments. Furthermore, it is important to investigate the impact of such an approach over a longer period of time, to understand how the behavior of the system evolves as users’ needs and habits change. Future research should also look into extreme cases (e.g., the COVID-19 pandemic) to understand how the system can adapt to sudden changes in user behavior and continue to provide good results in unpredictable and rapidly changing circumstances. Finally, future research should investigate multi-agent RL algorithms as solutions for complex environments with more than one optimization problem.


Acknowledgement

The project leading to this publication has received funding from the Excellence Initiative of Université de Pau et des Pays de l’Adour – I-Site E2S UPPA, a French “Investissements d’Avenir” programme.

Conflict of interest

The authors declare that they have no conflict of interest.