Abstract

The International Competition on Computational Models of Argumentation (ICCMA) focuses on reasoning tasks in abstract argumentation frameworks. Submitted solvers are tested on a selected collection of benchmark instances, including artificially generated argumentation frameworks and some frameworks formalizing real-world problems. This paper presents the novelties introduced in the organization of the Third (2019) and Fourth (2021) editions of the competition. In particular, we proposed new tracks to competitors, one dedicated to dynamic solvers (i.e., solvers that incrementally compute solutions of frameworks obtained by incrementally modifying original ones) in ICCMA’19 and one dedicated to approximate algorithms in ICCMA’21. From the analysis of the results, we noticed that i) dynamic recomputation of solutions leads to significant performance improvements, ii) approximation provides much faster results with satisfactory accuracy, and iii) classical solvers improved with respect to previous editions, thus revealing advancement in state of the art.

.Introduction

Computational argumentation is a field of Artificial Intelligence (AI) that provides formalisms for reasoning with conflicting information. It finds applications in many different areas, ranging from healthcare [58] to explainable AI [80]. An

The

With each reiteration of the competition, the organizers added new tracks that followed the latest developments in the field of computational argumentation. ICCMA’17 proposed a special track (called Dung’s Triathlon) in which the solvers were required to deal with three consecutive enumeration problems, where the solution computed in the previous step could be used. The 2023 edition also features three special tracks: an approximate Track, a dynamic Track, and an ABA Track.5 The 2019 competition introduced a new track to evaluate the effectiveness of solvers in recomputing extensions with minor adjustments to a starting AF. In this track, specifically designed for dynamic solvers and approaches [3,13], participants were allowed to use previous results to solve the problem more efficiently in a slightly modified framework rather than starting from scratch with each change. Typically, an AF represents a temporary “screenshot” of a debate, and new arguments and attacks can be added/retracted to account for new knowledge during the evolution of a discussion. If we consider disputes among users of online social networks [49], arguments/attacks are continuously added/retracted by users to express their point of view in response to the last utterance. The ICCMA’19 track challenged solvers to handle a single added or retracted attack per modification. This aimed to encourage the creation of specialized solvers for dynamic scenarios. This approach often leads to better performance. The second novelty in ICCMA’19 concerned the use of

In organizing ICCMA’21, we intended to keep the main novelties brought by ICCMA’19 and also planned the introduction of a new track dedicated to structured argumentation (more precisely, assumption-based argumentation [24,76]). Unfortunately, technical issues with the platform used for running the competition prevented us from using Docker, and a lack of participants caused us to cancel the tracks dedicated to dynamic and structured argumentation. On the contrary, the community showed interest in approximate algorithms, leading us to introduce a track dedicated to solvers using such algorithms. A preliminary description of the ICCMA’21 organization can be found in [54], while a short description of participants and results is available in [55].

The rest of this paper is structured as follows. In Section 2, we describe the necessary background about Abstract Argumentation, related work on dynamic frameworks and motivations for including them in the competition, and finally, Docker containers. Section 3 lists and describes the computational problems we included in ICCMA’19 and ICCMA’21, while Section 4 surveys the input and output format the solvers needed to adhere to (dynamic frameworks extend classical input formats). Section 5 describes the solvers that participated in the competition, how benchmarks were assembled, how we ranked the solvers according to the obtained results, and the adopted reference solver. In each section, we emphasize the differences between ICCMA’19 and ICCMA’21. Section 6 reports a detailed analysis of the results, including a comparison with the best ICCMA’17 solvers and between dynamic and non-dynamic solvers in ICCMA’19, to evaluate their speed-up. A similar comparison is made for ICCMA’19 solvers on ICCMA’21 benchmarks. Section 9 introduces lessons learned from organizing the competition, mainly concerning the virtualization of solvers and output parsing. Finally, Section 10 wraps up the paper with conclusions and final thoughts about future work.

.Background and dynamic frameworks

We divide this section into two parts: fundamental notions about Abstract Argumentation are reported in Section 2.1, while Section 2.2 summarises the literature about dynamic AFs and related approaches.

.Abstract argumentation

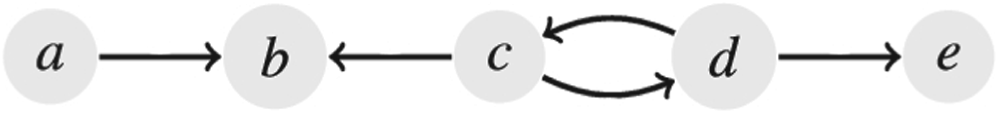

An

An example of an AF represented as a directed graph.

The

For a more detailed view of these semantics, please refer to [11]. Note that grounded and ideal extensions are uniquely determined and always exist [30,31]. Thus, they are also called

We report the definition of eight well-known problems in Abstract Argumentation, where the first six are decision problems:

Credulous acceptance Sceptical acceptance Verification of an extension Existence of an extension Existence of non-empty extension Uniqueness of the solution Enumeration of extensions Counting of extensions

Computational argumentation tools enable formalizing complex problems and understanding real-world situations where conflicting information must be considered to draw non-trivial conclusions. For example, verifying the acceptance of arguments and enumerating extensions is used in applications such as planning [67] and decision support [26]. For an in-depth discussion of the complexity results for the problems mentioned above, we refer to [32] and, in particular, to [51] for enumeration problems and [12,39] for counting problems.

In previous ICCMA editions, all the frameworks in each data set are static since all the AFs are sequentially passed as input to solvers, representing different and independent problem instances. Hence, all tasks are computed from scratch without taking any potentially useful knowledge from previous runs into account. However, AFs can be considered in practical applications as a temporary situation, which evolves when new knowledge becomes available that requires the addition or retraction of arguments and attacks. For example, users of online social networks [48] may engage in disputes and repeatedly add or retract arguments and attacks to express their point of view in response to the last move made by their adversaries in the ongoing digital conversation. Users often disclose as few arguments/attacks as possible in these situations. For this reason, ICCMA’19 also features additional tracks to evaluate solvers on dynamic Dung’s frameworks. The aim is to test those solvers dedicated to efficiently recomputing a solution after a minor change in the original AF. In this case, a problem instance consists of an initial framework (as for classical tracks) and an additional file storing a sequence of additions/deletions of attacks (between already existing arguments) on the initial framework, i.e. a list of modifications. This file has a simple text format, i.e. a sequence of

In [23], the authors investigate the principles in which a grounded extension of a Dung’s AF does not change when the set of arguments/attacks is changed. The authors of [28] study how the extensions can evolve when a new argument is considered. The focus is on adding a single argument interacting with a non-attacked argument. Several properties are defined for the change operations according to how the extensions are modified. For instance, a change operation can be

Modifications of AFs are also studied in the literature as a base to compute

Since in ICCMA’19, the inclusion of a dynamic track was launched for the first time; the changes have been limited to only attacks (that could be added or removed), which is an essential modification one can perform on an AF. Indeed, the dynamic challenge fostered some research, which was one of the motivations for the proposed dynamic challenge. See for instance [1,3,4,62].

.Motivations to approximate algorithms

Generally speaking, approximate algorithms are methods that can compute the solution to a problem faster than what exact algorithms can normally perform, but with a risk of providing an incorrect solution in some cases. This kind of approach had already been studied in the argumentation community before the organization of ICCMA 2021 [52,60,74]. Although not always correct, these algorithms can be highly necessary in situations where exact algorithms (e.g., SAT-based techniques) cannot solve the problem, or at least not fast enough to satisfy the needs of the users (e.g. if the argumentation framework is too large and the user expects a quick answer).

In the first organization of an approximate track at ICCMA, the focus was on the simplest problems related to the acceptability (credulous or skeptical) of arguments from the point of view of the nature of the answer, not from the point of view of complexity (see Section 3.2). However, some interesting ideas could be implemented for future ICCMA competitions, as discussed in Section 9.2.

.The competition tracks and tasks

.Tracks and tasks at ICCMA’19

ICCMA’19 let solvers participate in 7 classical tracks, the same as in ICCMA’17. Each track is named after the name of a semantics (

For single-status semantics (

Complete Semantics (

Preferred Semantics (

Stable Semantics (

Semi-stable Semantics (

Stage Semantics (

Grounded Semantics (only

Ideal Semantics (only

The combination of problems with semantics amounts to 24 tasks overall. In addition, 4 new tracks were dedicated to the solution of problems over dynamic frameworks, this time using the semantics originally proposed in [30]:

Complete Semantics (

Preferred Semantics (

Stable Semantics (

Grounded Semantics (only

In this case, the combination of problems with semantics amounts to a total of 14 tasks. Tasks in dynamic tracks are invoked by appending “

.Tracks and tasks at ICCMA’21

The first difference between ICCMA’19 and ICCMA’21 is the removal of the grounded semantics ( Complete Semantics ( Preferred Semantics ( Stable Semantics ( Semi-stable Semantics ( Stage Semantics ( Ideal Semantics (only

The second track, dedicated to approximate algorithms, has 6 sub-tracks corresponding to the semantics. In this case, the focus is on decision problems. Therefore, the approximate track of ICCMA’21 consists of the following tasks:

Complete Semantics ( Preferred Semantics ( Stable Semantics ( Semi-stable Semantics ( Stage Semantics ( Ideal Semantics (only

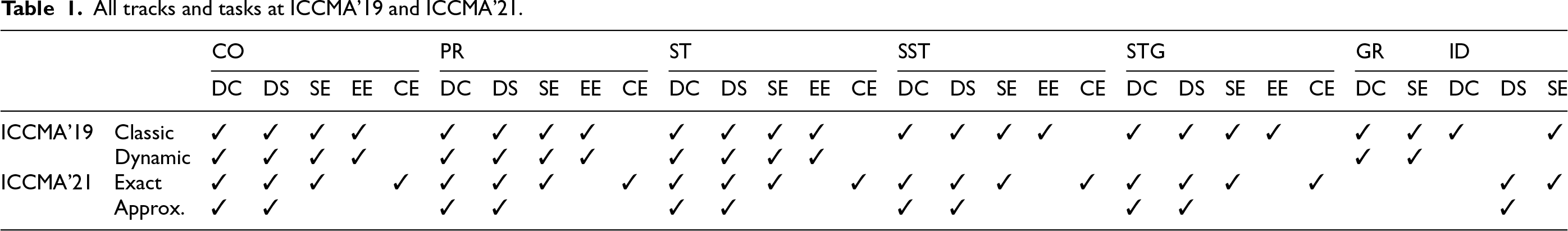

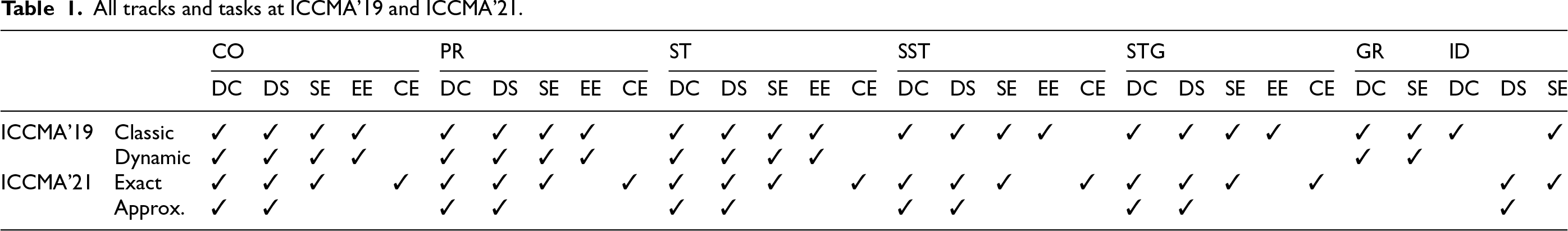

All tracks and tasks at ICCMA’19 and ICCMA’21.

All tracks and tasks at ICCMA’19 and ICCMA’21.

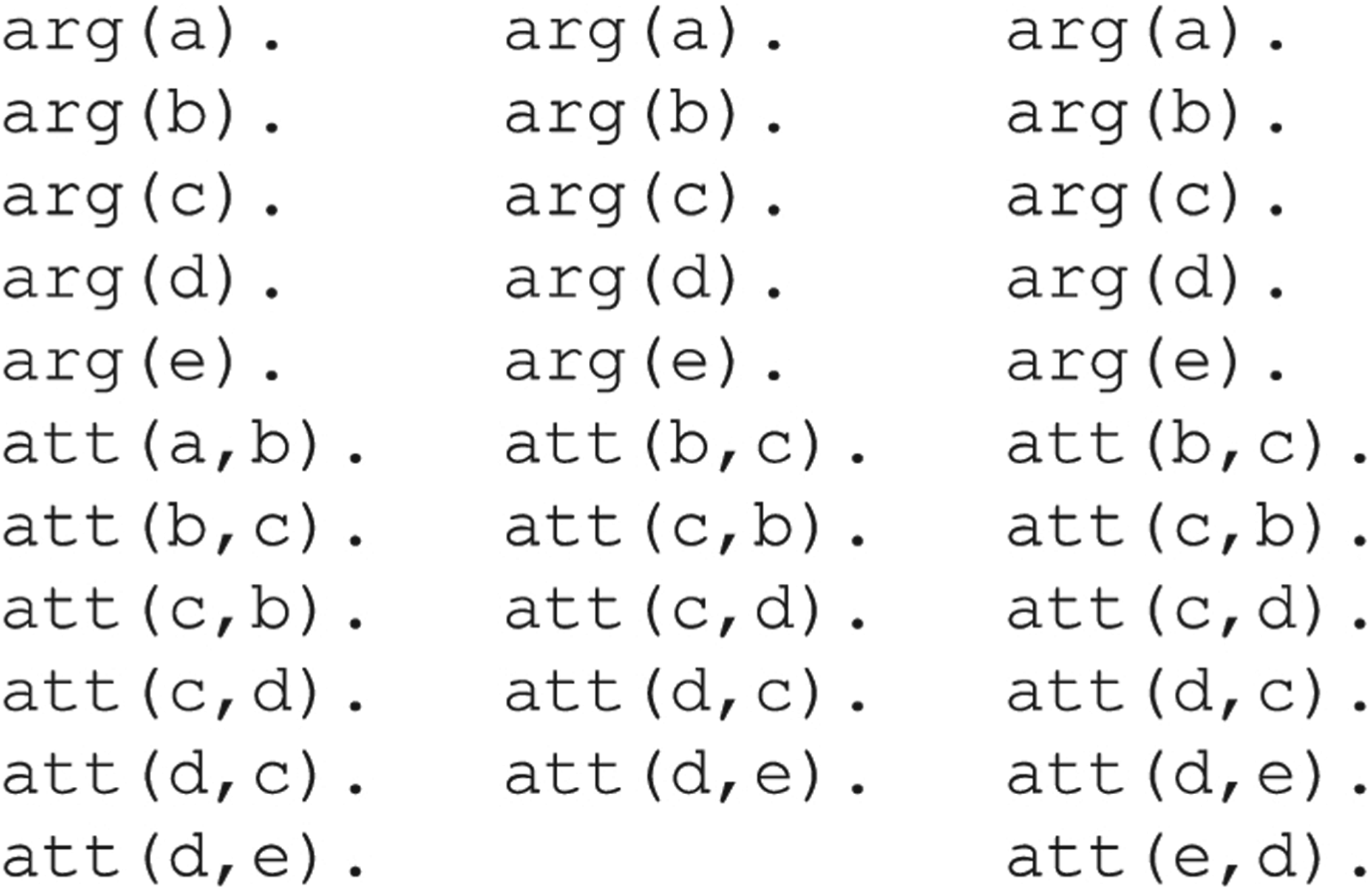

The file formats taken as input by solvers are described below. Benchmarks in ICCMA’19 and ICCMA’21 were available in two different formats (commonly used to represent AFs) to allow participating solvers to choose their preferred format during the competition.7 The required output format is also briefly described. All the solvers needed to adhere to both input and output formats for facilitating the evaluation and comparison of results.

.Input format

Each benchmark instance, that is, each AF, is represented in two different file formats:

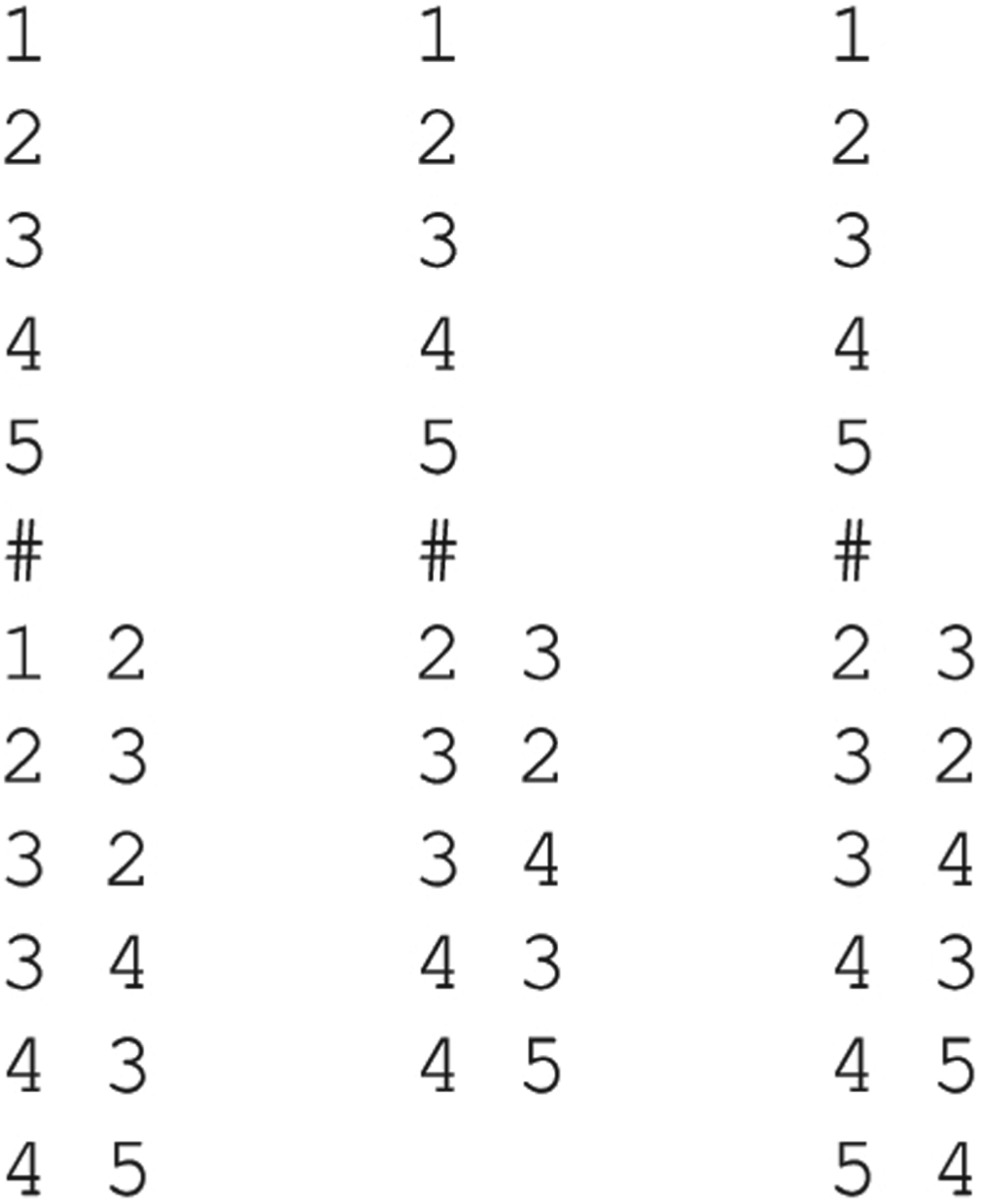

A novel format for dynamic AFs is introduced. For each (dynamic) problem instance, two files are required: the initial framework (either in

The three AFs obtained from the modifications in

A second example shows the same framework in the

The three AFs obtained from the modifications in

To generate the problem instances for the new tracks, a subset of the frameworks used in classical tracks was selected. A sequence of modifications was produced for each of the selected frameworks, where attacks were added only between existing arguments. No new argument was introduced during this process. Further details can be found in Section 5.3. For a modification file with

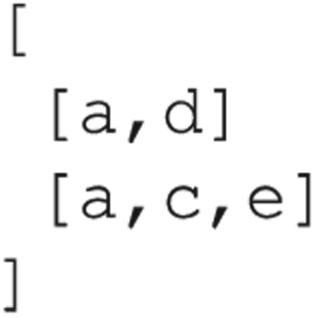

The output format of ICCMA’17 has been retained, except for the

We here show the output format of both

For the dynamic tracks, all answers were output in the form of a list where the first element represents the solution of the required task on the initial framework; each following element in this list is the answer returned for the

This section will cover the solvers and their features, the benchmarks utilized in the competition, and the evaluation process used to rank the solvers.

.Participants at ICCMA’19

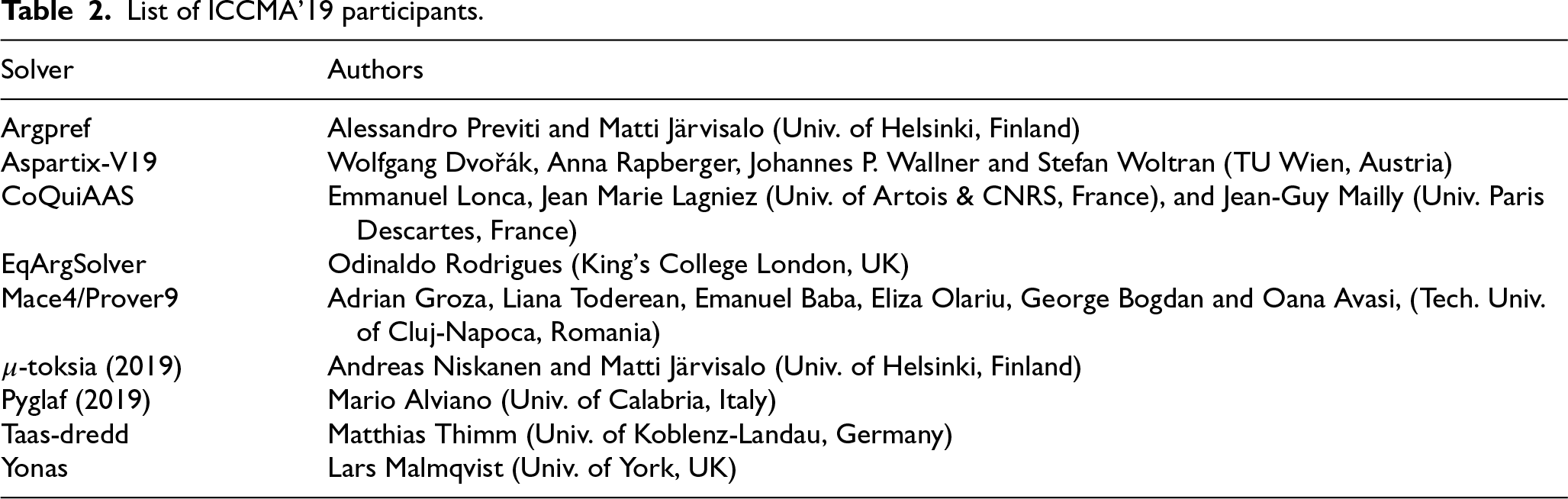

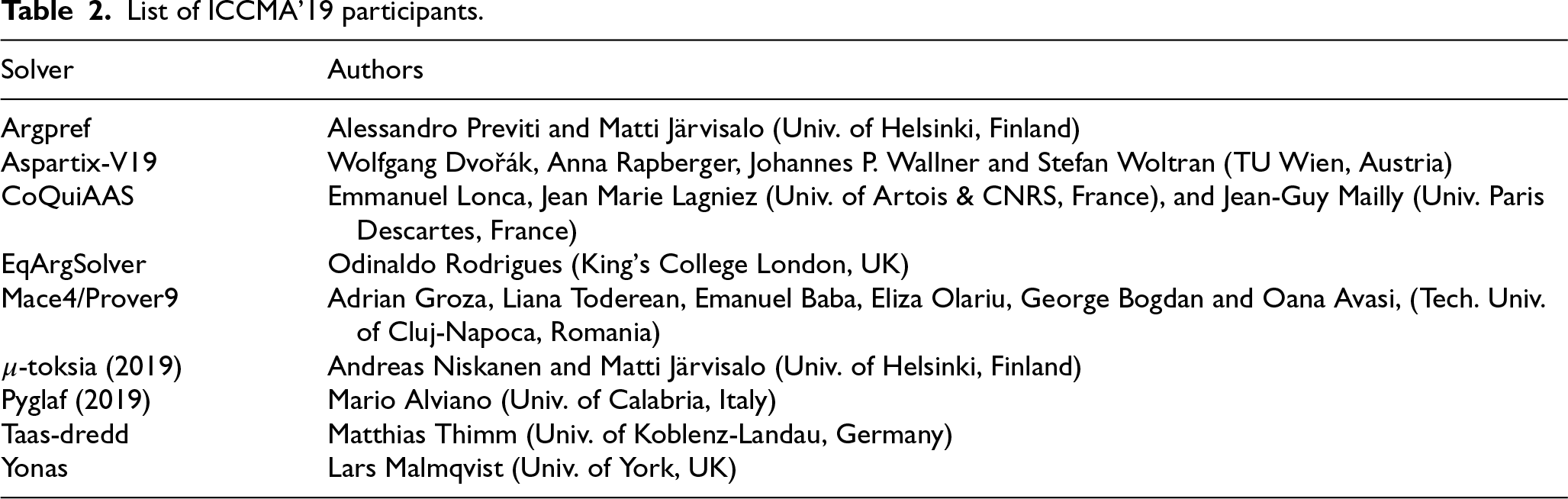

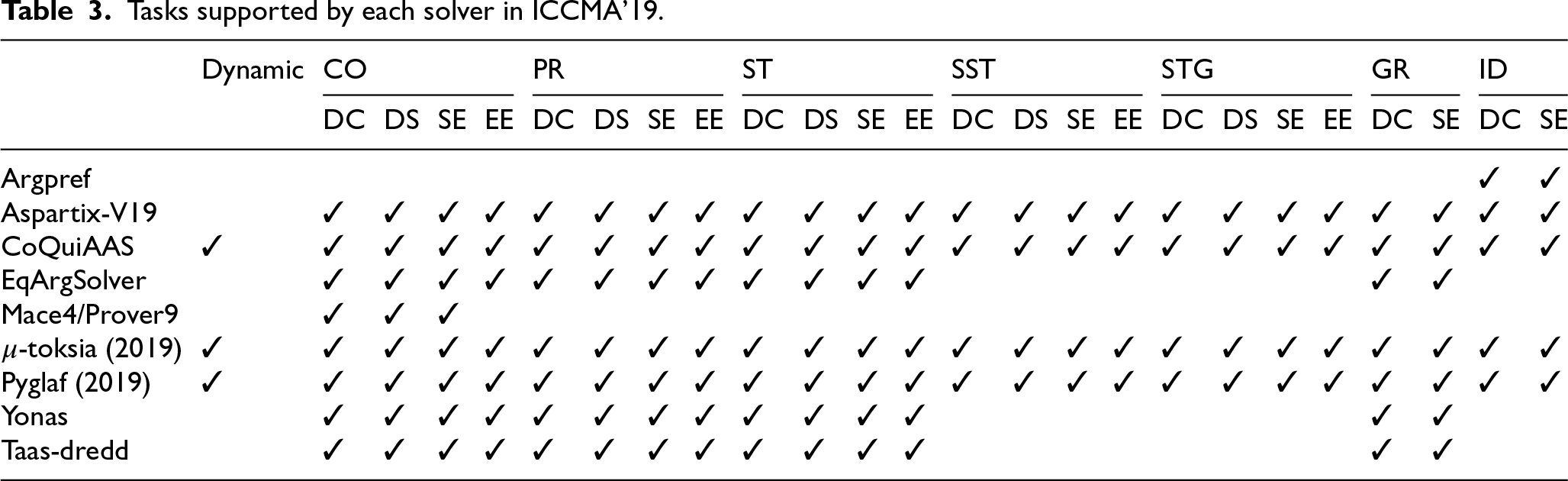

For the third edition, the competition received 9 solver submissions from research groups in Austria, Finland, France, Germany, Italy, Romania, and the UK. Table 2 lists the solvers, related participants, and affiliations. Table 3 shows the tasks all the solvers participated in: 3 solvers were submitted to all the tracks, including dynamic ones. The authors of the solvers used different techniques to implement their applications. In particular, 4 solvers were based on transforming argumentation problems to SAT, 1 on the transformation to ASP, 1 relied on machine learning, and 3 were built on tailor-made algorithms. Following the alphabetical order, we provide a summary of each solver and refer the reader to the official abstract for a more detailed discussion.9

List of ICCMA’19 participants.

List of ICCMA’19 participants.

Tasks supported by each solver in ICCMA’19.

An early version of

The

The

The

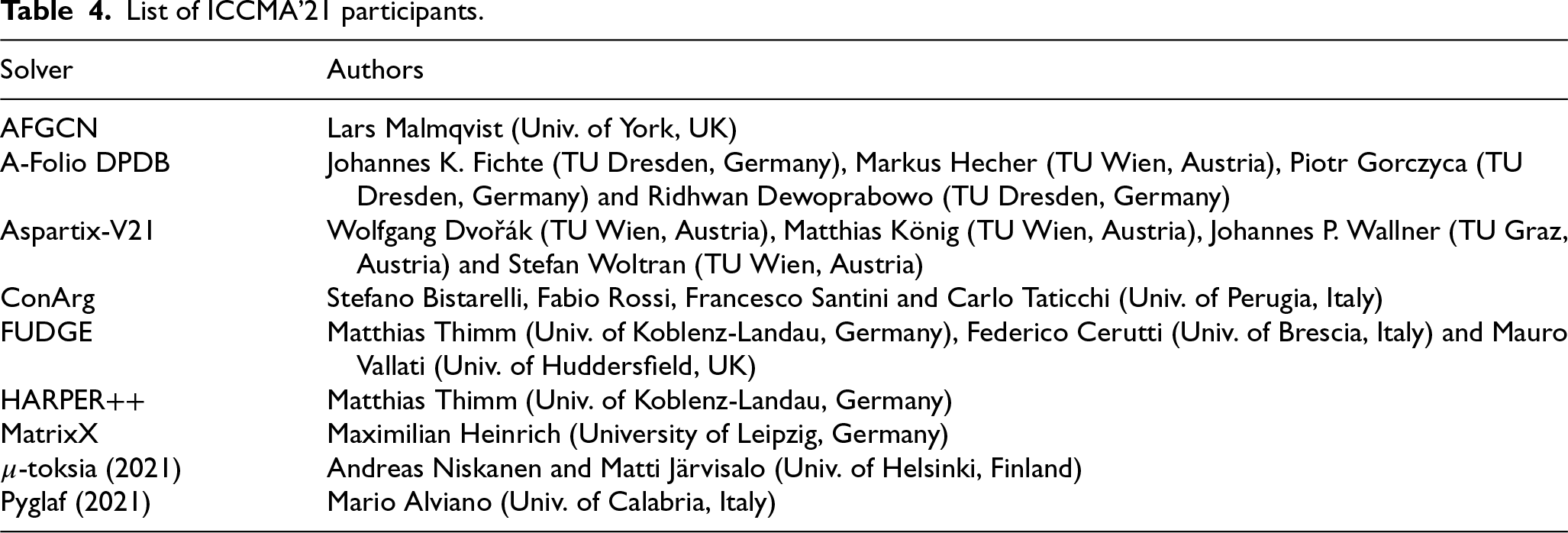

List of ICCMA’21 participants.

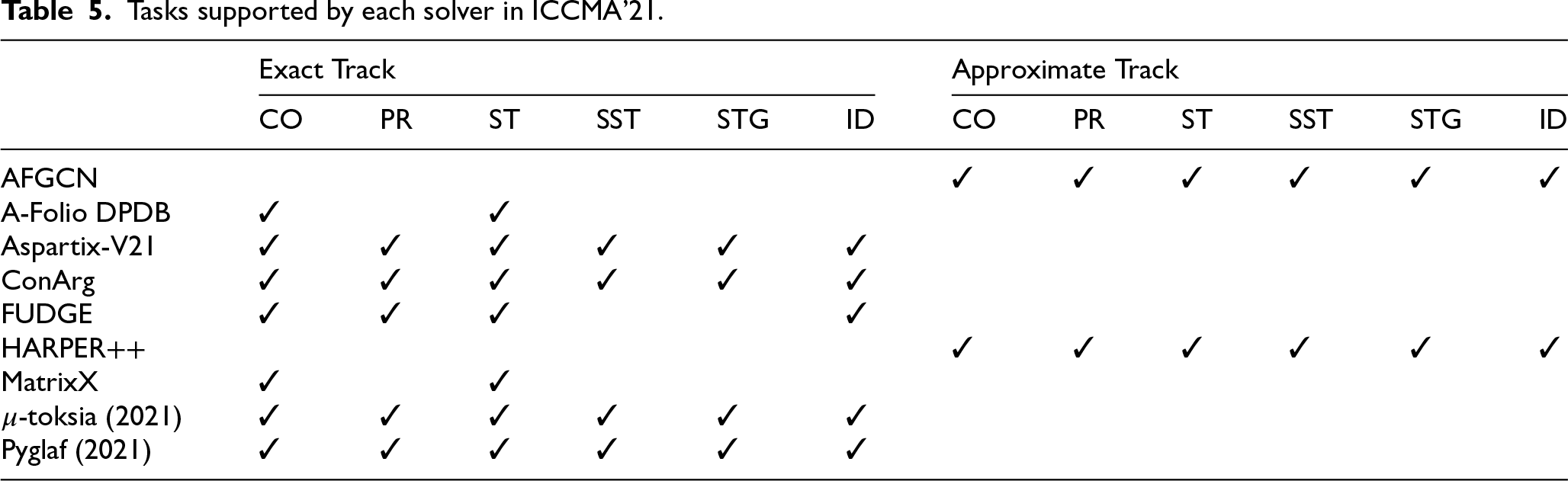

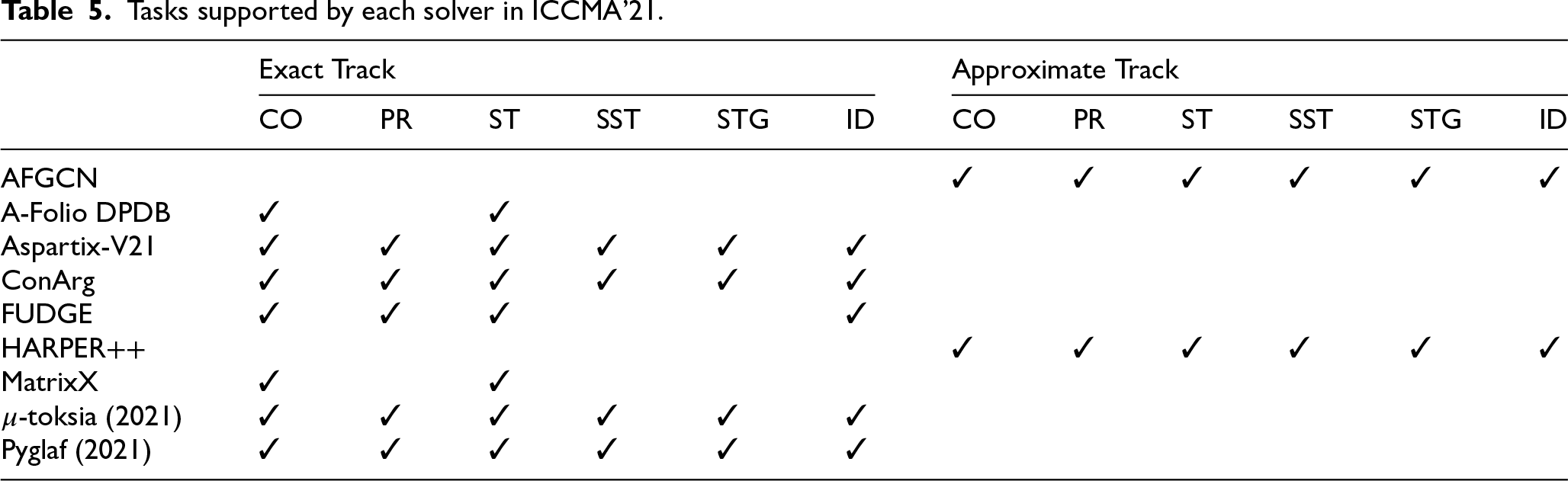

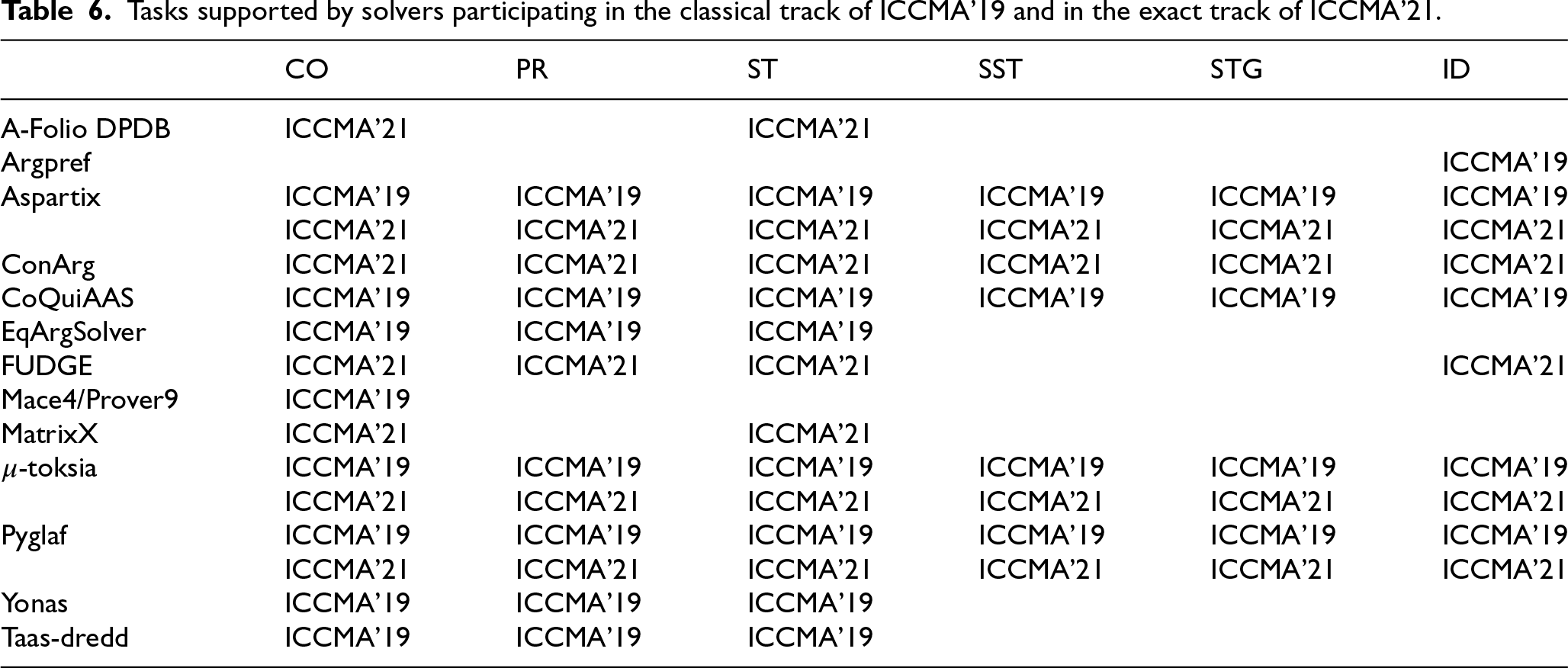

ICCMA’21 received 9 solver submissions as well from research groups in Austria, Finland, Germany, Italy, and the UK. The solvers and their developers are listed in Table 4, while Table 5 shows the solvers’ participation in sub-tracks. The participation of a solver in a sub-track means that it solves all the tasks in the sub-track. We also summarize in Table 6 the tasks supported by all solvers participating in the classical and exact tracks of the ICCMA’19 and ICCMA’21 competitions. Three of the submitted solvers, namely Aspartix,

Tasks supported by each solver in ICCMA’21.

Tasks supported by each solver in ICCMA’21.

Tasks supported by solvers participating in the classical track of ICCMA’19 and in the exact track of ICCMA’21.

Starting from ICCMA’17, the competition welcomes argumentation solvers and the benchmarks on which the evaluations are performed. Six benchmarks have been submitted to ICCMA’17,16 each of which focused on a specific theme, from generating particularly difficult instances to practical scenarios such as traffic networks and planning problems. Opening up to benchmarks in the competition goes toward testing the performance of argumentation solvers on real problems.

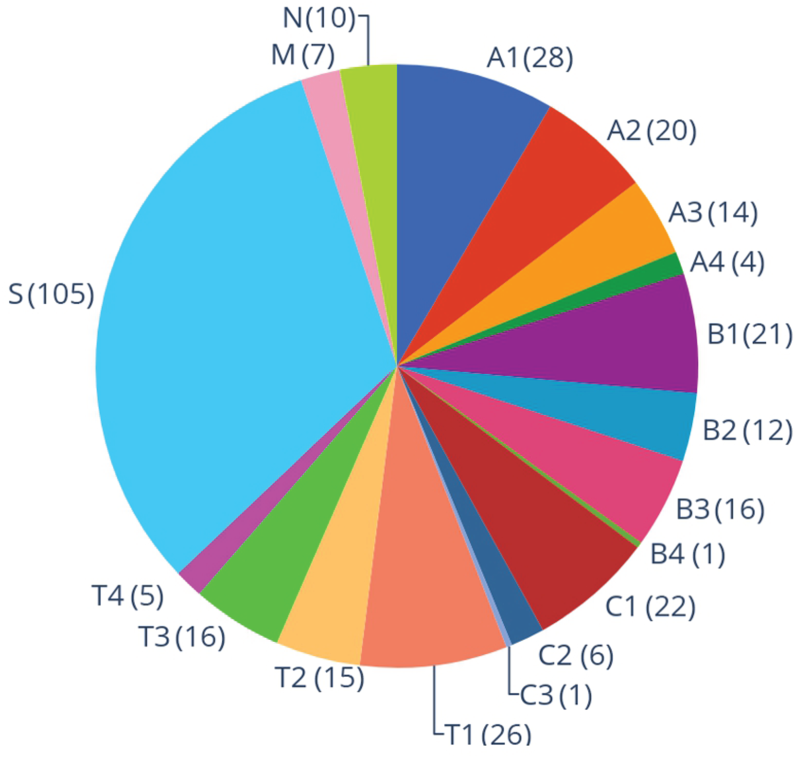

A total of 326 AF instances were selected for ICCMA’19 from the ones that were used in ICCMA’1717 and two new benchmarks submitted to ICCMA’19.18 The selected benchmarks serve two primary purposes. Firstly, we intend to assess the progress of solvers for argumentation problems in comparison to the previous edition of the competition. Thus, we have included instances from ICCMA’17. Secondly, we aim to scrutinize the behavior of different solvers with more practical benchmarks and closer to real-world instances. By doing so, we can identify the most effective solvers to offer solutions to real problems. While testing difficult instances can push solver limits, we aimed to compare solver performance across all collected benchmarks. As pointed out in [69], a good number of instances adopted in ICCMA’17 were too hard for all the solvers submitted in that competition. For example

The instances chosen for the competition, which avoided those that were overly hard, were selected using

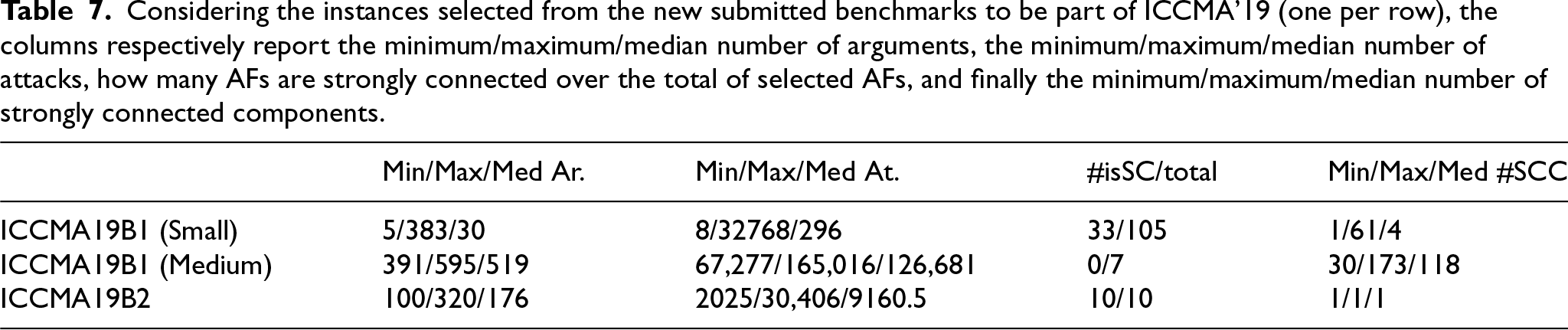

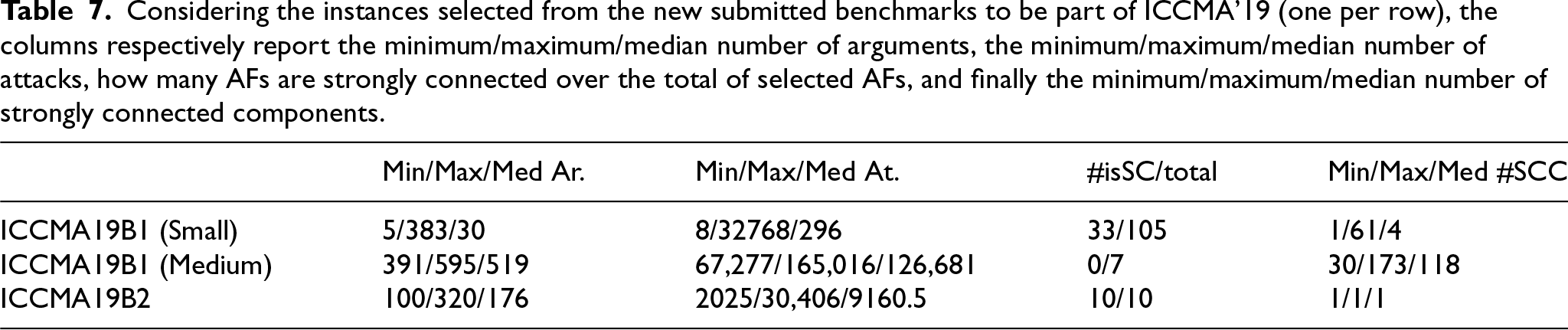

About two third of the instances were selected from ICCMA’17 benchmarks (i.e., 204). The number of selected instances for each class of ICCMA’17 are reported in A; their features are summarised in [42]. The remaining instances (i.e., 122) came from the two new benchmarks that were submitted to ICCMA’19. Only those AFs that ConArg could solve with extended conditions were selected. The first new set of benchmark instances, named ICCMA19B1, was submitted by Bruno Yun and Madalina Croitoru. It is a practically oriented benchmark on logic-based AFs instantiated from inconsistent knowledge bases expressed using Datalog±, a language widely used in the Semantic Web. In the competition, only those instances from the

Considering the instances selected from the new submitted benchmarks to be part of ICCMA’19 (one per row), the columns respectively report the minimum/maximum/median number of arguments, the minimum/maximum/median number of attacks, how many AFs are strongly connected over the total of selected AFs, and finally the minimum/maximum/median number of strongly connected components.

Considering the instances selected from the new submitted benchmarks to be part of ICCMA’19 (one per row), the columns respectively report the minimum/maximum/median number of arguments, the minimum/maximum/median number of attacks, how many AFs are strongly connected over the total of selected AFs, and finally the minimum/maximum/median number of strongly connected components.

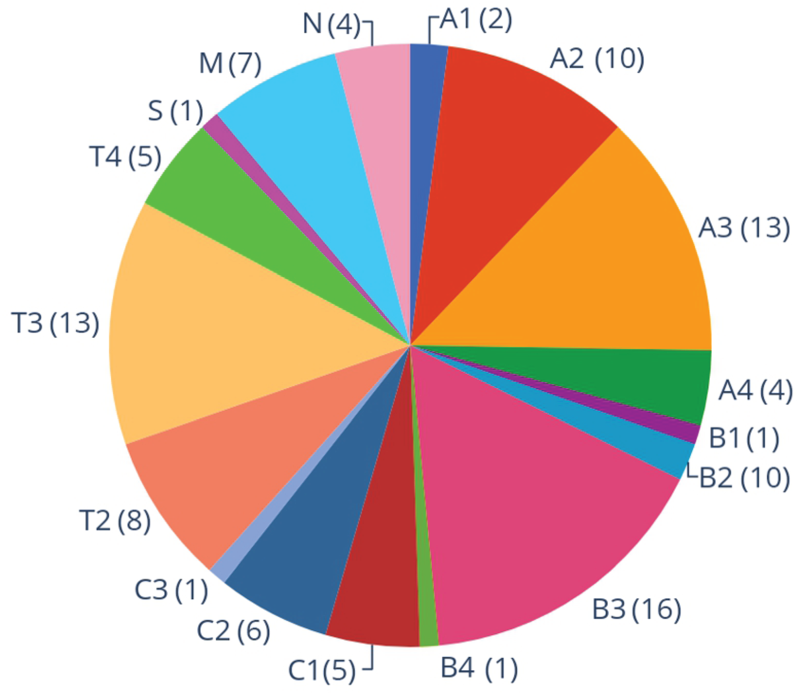

To sum up, Fig. 5 reports the distribution of benchmark instances: labels from A1 until T4 come from ICCMA’17, while the other three labels (S, M, and N) come from the two new benchmarks, described in the following paragraphs.20 A reports the exact number of frameworks selected from each sub-benchmark.

The distribution of AF instances in the ICCMA’19 benchmark, selected from different benchmarks taken from ICCMA’17 (from A1 to T4), and from the two new submissions:

For the benchmark selection of ICCMA’21, a call for benchmarks was launched, which was unfortunately unsuccessful: no set of instances nor any generator was submitted. This leads to two paths: selecting a subset of ICCMA’19 instances and generating new ones. The ICCMA’19 instances were selected to be challenging enough to distinguish efficient solvers while still being solvable by some of them. Building the benchmark for ICCMA’21 in this way also allows for evaluating solver evolution from 2019 to 2021. Then, the hardest instances among the ones used at ICCMA’19 were selected, ranking the instances by two criteria: for a given AF

The distribution of AF instances in the ICCMA’21 benchmark, selected from different benchmarks taken from ICCMA’19. A1 to T4 represent the instances from ICCMA’17,

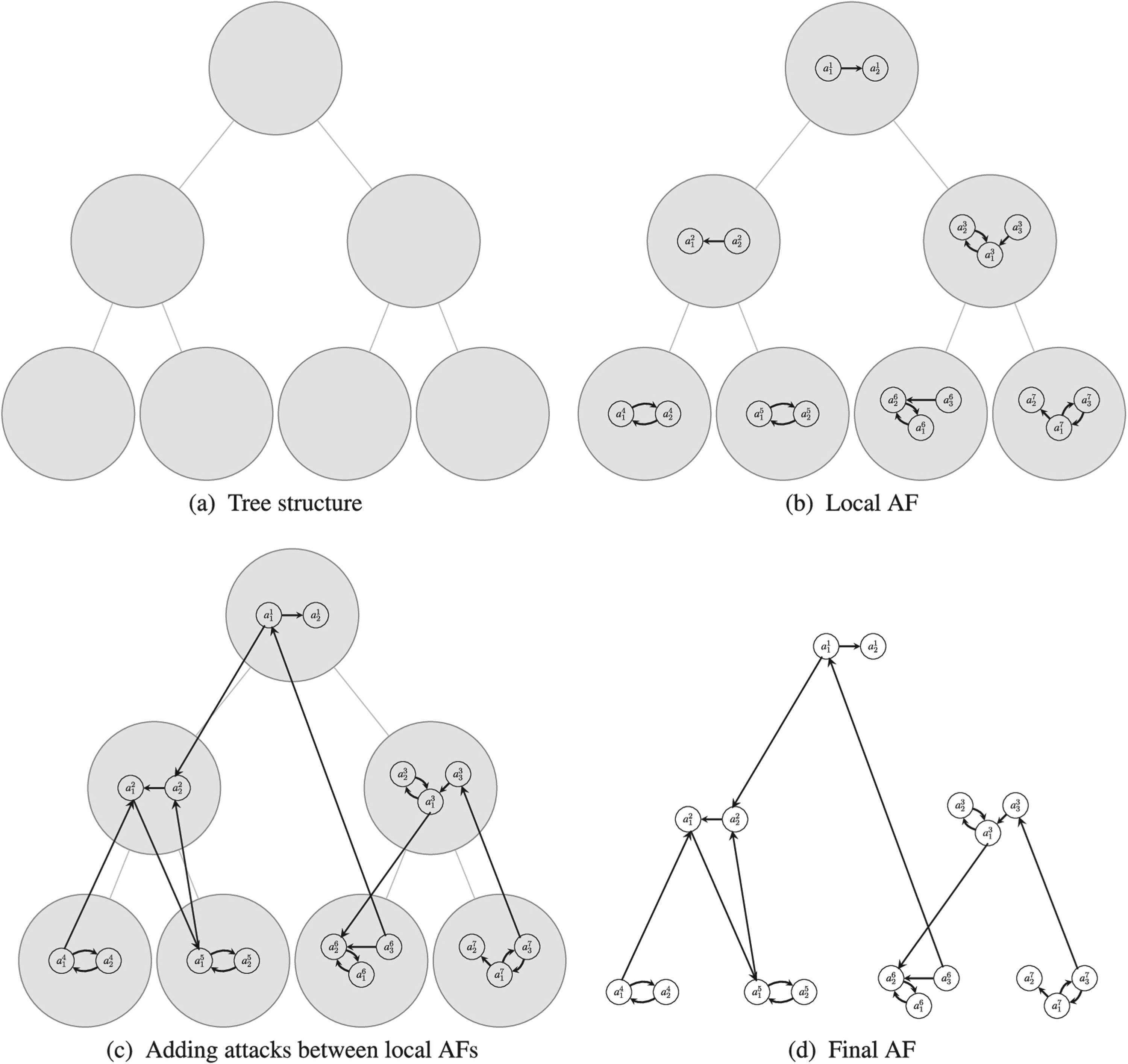

ICCMA’21 also proposed a new approach for generating AFs considering underlying tree structures. More precisely, a tree

AFs generation process.

The number of arguments

For the local AF, two random generators have been considered that are based on the following random graph generators:21

The values

The numbers that are used for generating the local AFs have default values

The details of the distribution of these instances are available online in a CSV file where the first column indicates the type of the local AFs (E for Erdös-Rényi, B for Barabasi-Albert, and BE for the random choice of one of them), the second and third columns give the tree depth and tree-width, and the last column gives the number of instances.22 With all the combinations of parameters, we have 180 types of instances: 60 for each local AF type (E, B, BE). These 60 types of instances correspond to the combination of

The motivation for developing this benchmark generation model was twofold: first of all, it seemed intuitive that (some) large debates could be split into smaller, loosely connected debates. This is the case, for instance, in presidential elections, where arguments about (e.g.) the economy are strongly connected, arguments about environmental issues are strongly connected, but arguments about the economy are (comparatively) only loosely connected to the ones about the environment. This is based solely on intuition, as there is no formal evidence to support it for the moment. The second motivation was that community-based graphs could offer interesting instances that can be solved by “clever” algorithms that take into account the properties of the graph while being hard for purely SAT-based approaches.23 The challenges posed by community-based instances have already been observed in other domains (e.g. SAT, [61]), as well as the ability of the proposed model to provide challenging instances (see [56]).

Each solver was given 4 GB of RAM to compute the results of tasks in both the classical and dynamic tracks. The timeout to compute an answer for the dynamic track was 5 minutes for each framework/change: half of the time in the classical track for a single instance, that is 10 minutes. Time and memory limits mimic previous competitions (also to have some comparison). All runs were done on Intel Sandy Bridge CPUs with 16 cores clocked at 2.6 GHz and 64 GB of RAM, using up to all 16 cores for different runs.

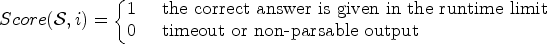

The solvers were given all instances in the tracks they participated in to solve. For each instance, a solver got

For the dynamic tracks, a result was considered correct and complete if, for

The ranking of solvers for a track was based on the sum of scores over all tasks of a considered track. Ties were broken by the solver’s total time to return the correct results. Note that to ensure that each task had the same impact on the evaluation of a track, all tasks for one semantics had the same number of instances.

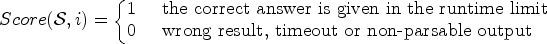

.Scores and ranking at ICCMA’21

For the 2021 edition, the competition has been run on a computer cluster where each machine has an Intel Xeon E5-2637 v4 CPU and 128GB of RAM. The runtime limit for each instance is 600 seconds for the “exact” track and 60 seconds for the “approximate” track. The memory limit is the machine’s memory, i.e. 128GB. Each sub-track has one ranking, i.e., six rankings for the “exact” track and six rankings for the “approximate” track. To be ranked, a solver must participate in the full sub-track (without obligation to participate in all the (sub)tracks). The scoring system is slightly different between both tracks.

For the “exact” track, any wrong result on an instance

On the contrary, wrong results do not lead to an exclusion in the “approximate” track:

Then, the score of the solver

When two solvers have the same score for a given sub-track, the cumulated runtime over the instances correctly solved is used as a tie-break rule (the fastest is the best).

.Reference solvers

In the ICCMA argumentation competitions, only a reference solver is usually used to check the results correctness. For instance, solutions for all instances in ICCMA’15 were computed using Tweety [71,75], a collection of libraries for logical aspects of artificial intelligence and knowledge representation, while Aspartix-D was employed as the reference solver in ICCMA’17 [37,42]. No proof can be given that ConArg has no errors, but

In [29], the authors classify the ConArg approach among “reduction-based implementations”, where first the problem is reduced to the target formalism (in this case, constraints), then a solver for that formalism is executed and, finally, the obtained output is interpreted as solutions of the original problem. The search phase takes advantage of classical techniques in Constraint-programming, such as local consistency, different heuristics for trying to assign values to variables, and complete search-tree with branch-and-bound. Models in Gecode are implemented using

To prevent any issues caused by instances that the reference solver could not solve, the organizers of the ICCMA’19 competition aimed to avoid their usage. The authors of [69] proved that nearly

Concerning the ICCMA’21 competition, a tool named RUBENS,26 developed by some authors of this paper was used to perform initial checks on the submitted solvers. RUBENS is a library designed to generate test cases automatically using translation rules. It comes with some pre-built test generators and an interface allowing users to create new ones with minimal effort. The RUBENS checker then executes the software to be tested on the generated test cases, and if the software produces an unexpected result, an error message is thrown. Using this tool allowed us to discover some issues in two solvers, providing the authors with few instances and allowing them to correct their solvers before the competition. A reference solver was not taken into consideration for the exact track. Instead, the obtained results for each instance were examined to identify any inconsistencies. This allowed us to discover that another solver was incorrect for a sub-track. Unfortunately, this solver could not be fixed in time to participate in the considered sub-track. The same methods could not be used for the approximate track due to the incomplete nature of the algorithms. Therefore, the 2019 version of

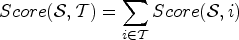

Time [s] taken for each correctly solved instance in the tracks for the semantics

Time [s] taken for each correctly solved instance in the dynamic tracks for the semantics

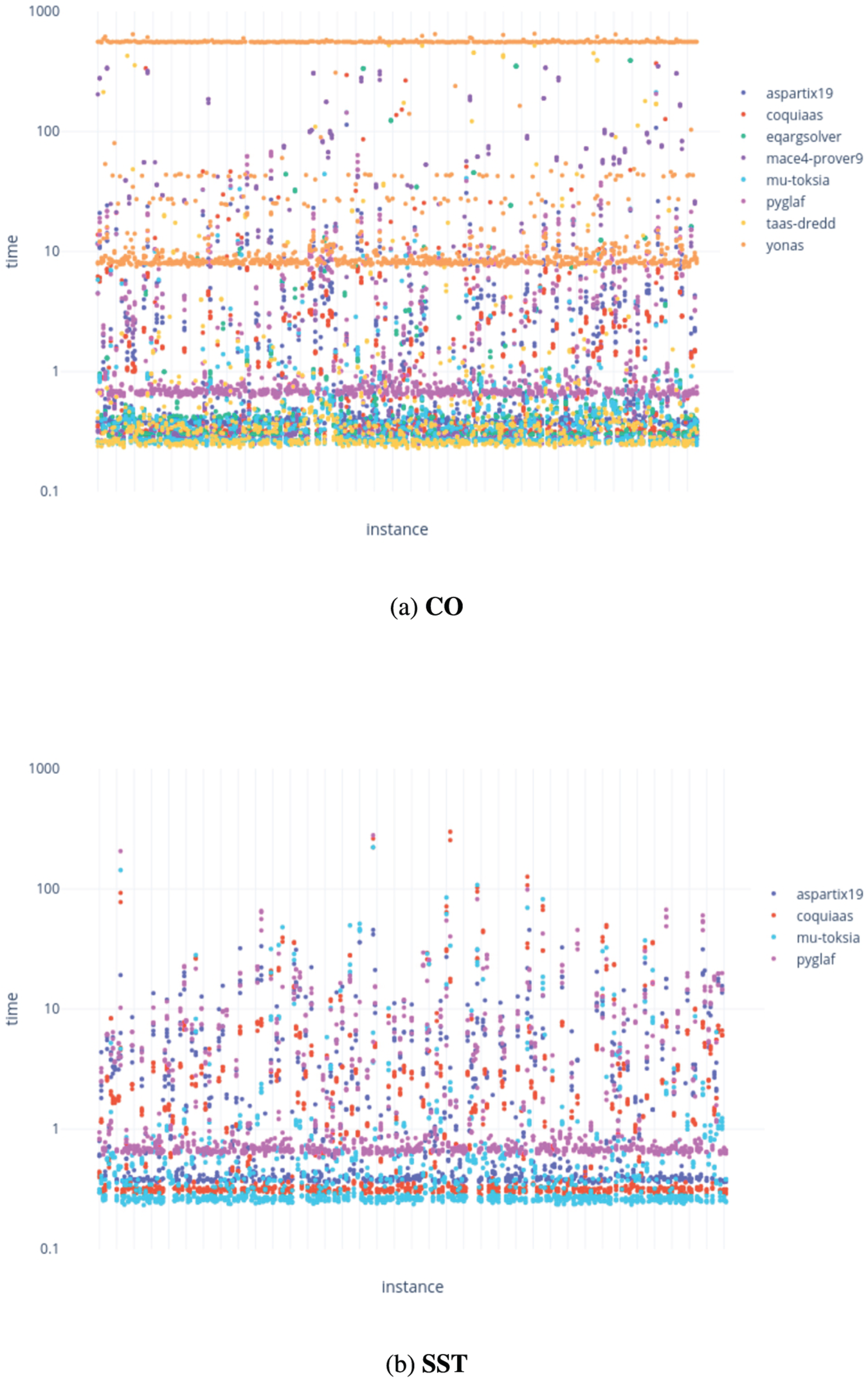

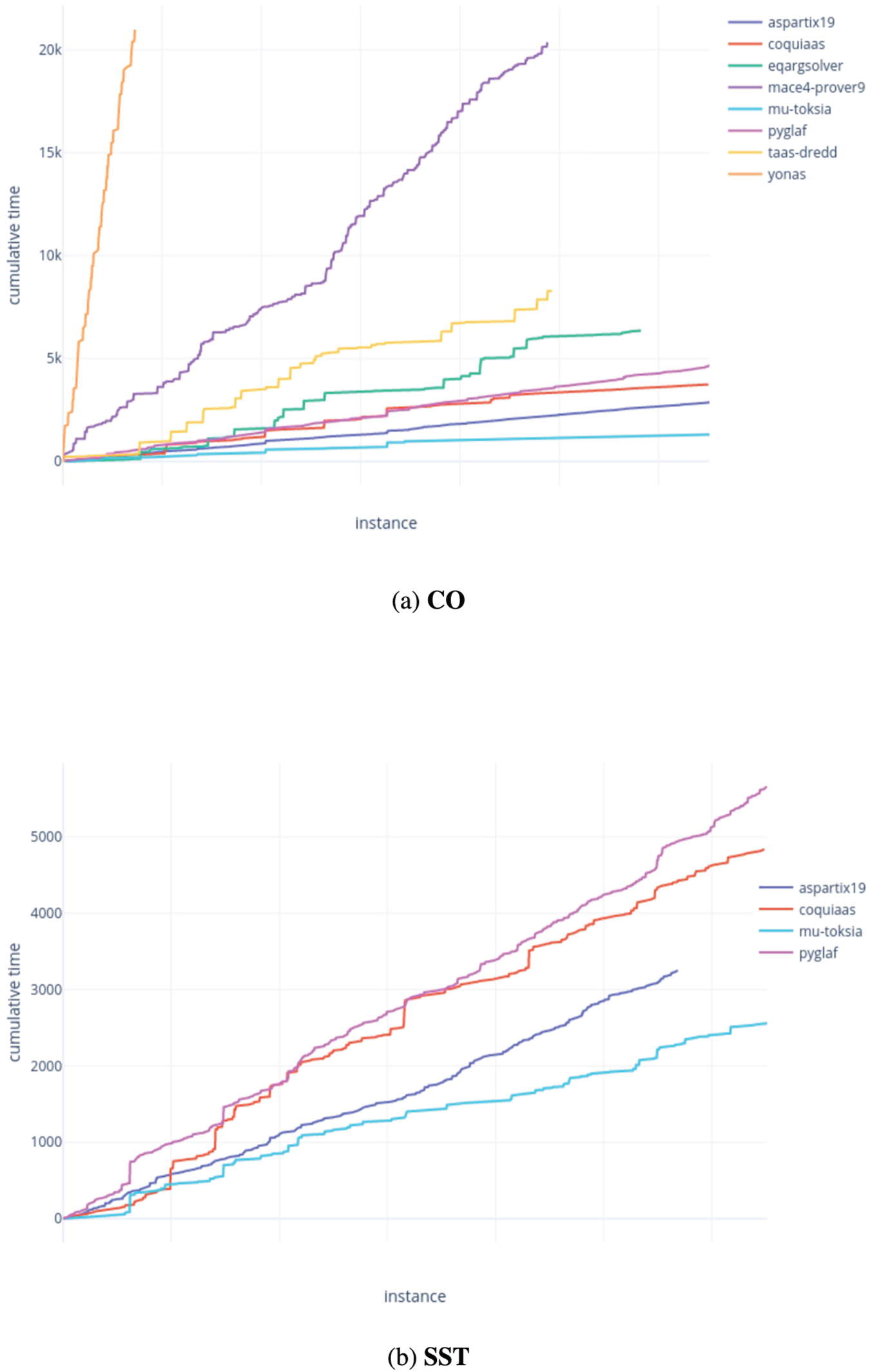

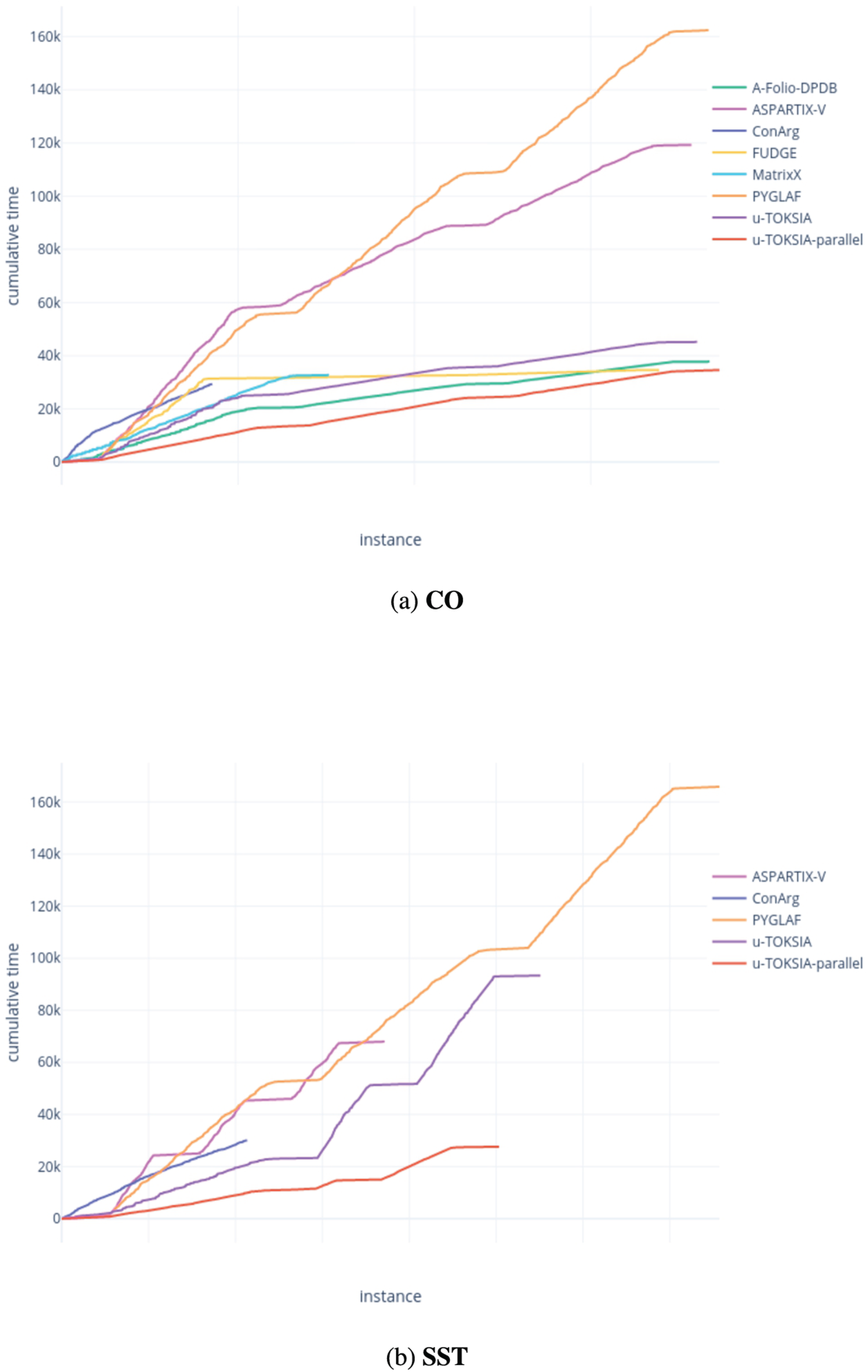

Cumulative time [s] taken to correctly solve all instances in the

Cumulative time [s] taken to correctly solve all instances in the dynamic

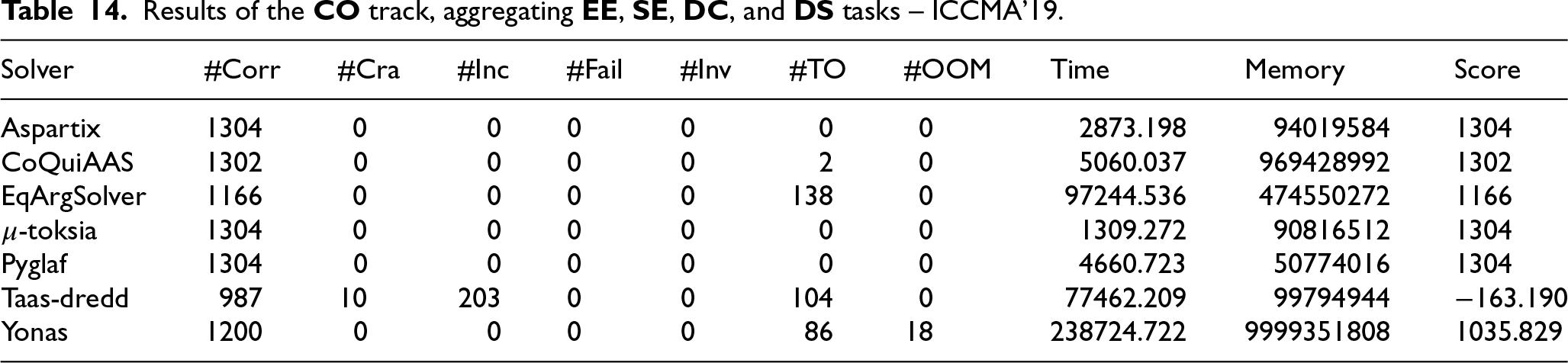

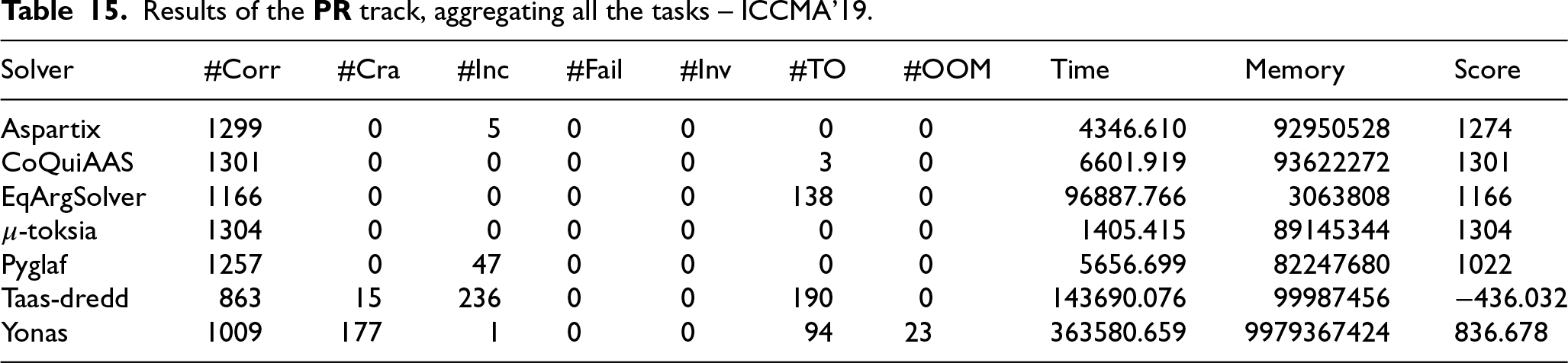

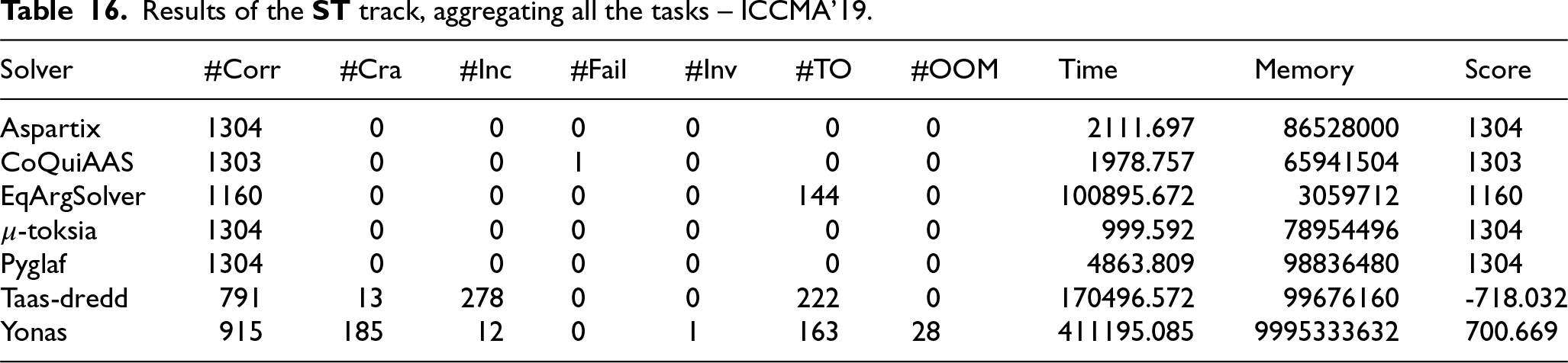

The detailed results and ranking of solvers for all tracks can be found on the dedicated page of the competition website.28 In Appendix B, we report some tables aggregating results per semantics. In the global ranking for the static tracks, the solvers

Figure 8 shows the time (on a logarithmic scale) taken to solve each non-dynamic instance that was solved correctly by each solver;29 failed instances, for example, due to exceeding the memory limit or timeout, are not shown. The results are again aggregated by semantics. We can see that Pyglaf is slightly above the other lines in the case of “easy” instances (less than a second), while Yonas is visibly above all, i.e. it takes significantly longer. The other solvers are very close to each other.

Figure 9 shows the same results this time considering dynamic tracks:

We also show the cumulative amount of time, expressed in seconds, taken to solve each instance (among those correctly solved) of a track. Figure 10 displays the results for the classical tracks, while Fig. 11 reports the results for the dynamic tracks. What emerges for the non-dynamic tracks is that Yonas takes longer than all the other solvers, whose performances are, in turn, more similar to each other. Between the three solvers taking part in the dynamic track,

The hardest instances in the classical tracks were

Concerning the dynamic tracks, in

.Statistical significance of results

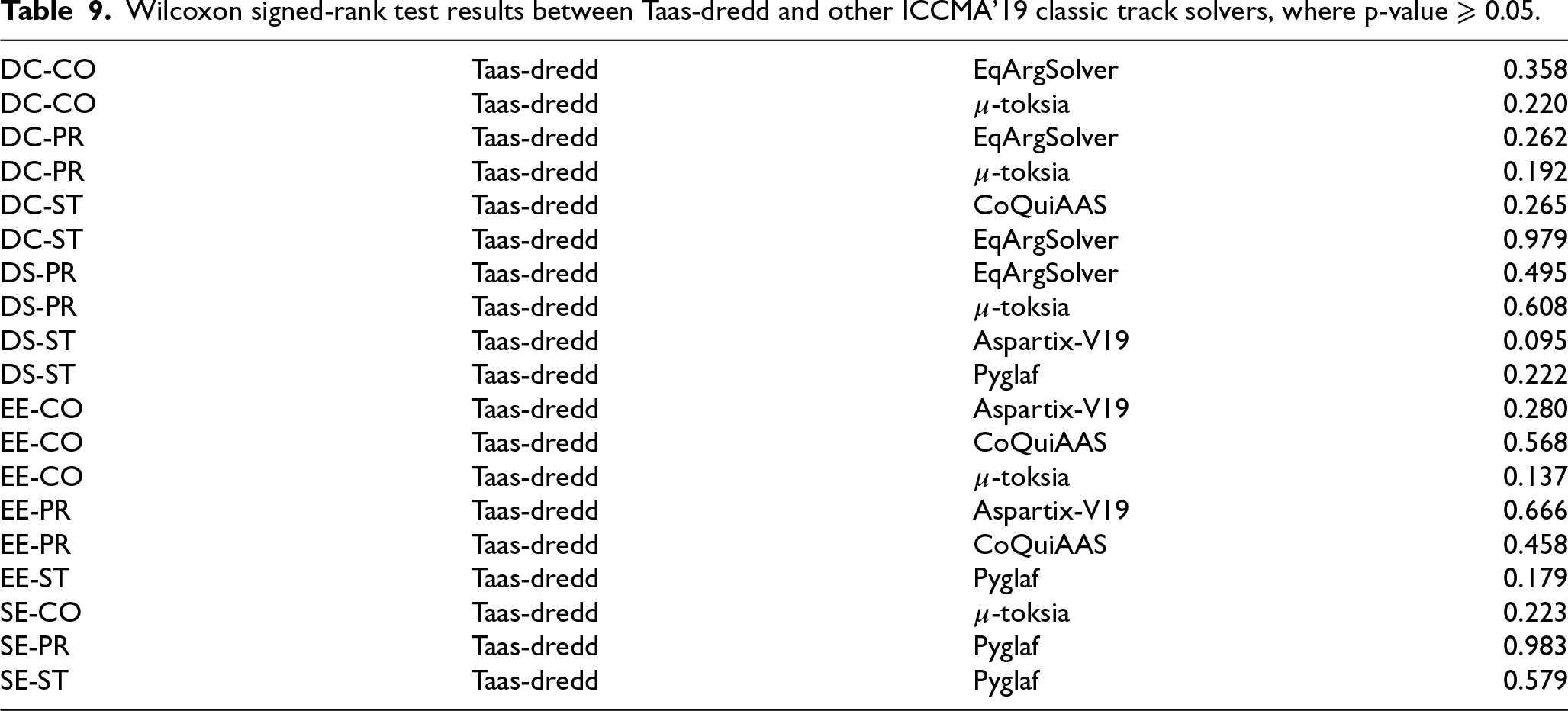

To determine whether the runtime differences of the solvers are significant, we conducted a Wilcoxon signed-rank test [78], a non-parametric test for paired samples which compares the probability that a random value from the first group is greater than its dependent value from the second group. In our case, each group contains the execution time of a solver, given a particular task, against all instances solved by both solvers. The results obtained are trustworthy since the following assumptions are fulfilled: i) the dependent variable is continuous, ii) paired observations are randomly and independently drawn, and iii) paired observations come from the same population. Upon comparison, the test generates a p-value for each sample pair, and statistical significance is determined if the p-value falls below a certain threshold, most commonly 0.05. Performing tests to compare the runtimes for all solvers participating in ICCMA’17, 19, and 21 was not possible due to the different machine configurations on which the performance of the solvers was computed, which results in execution times that are not directly comparable. Therefore, we conducted the test on participant solvers in the ICCMA’19 classic tracks.30 We discuss below the results of tests.

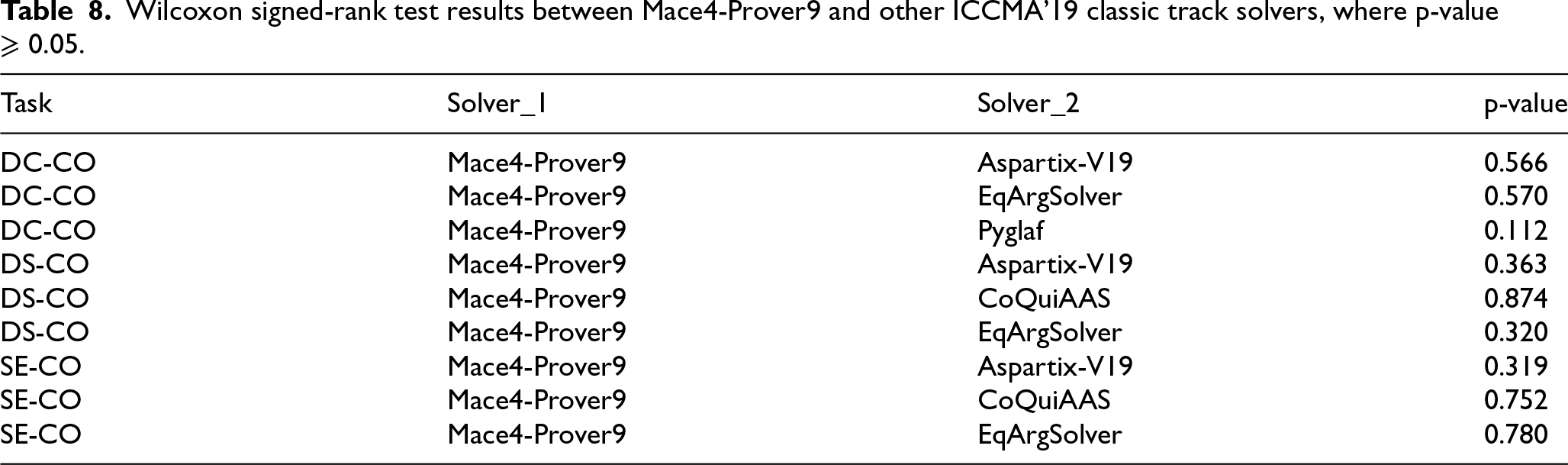

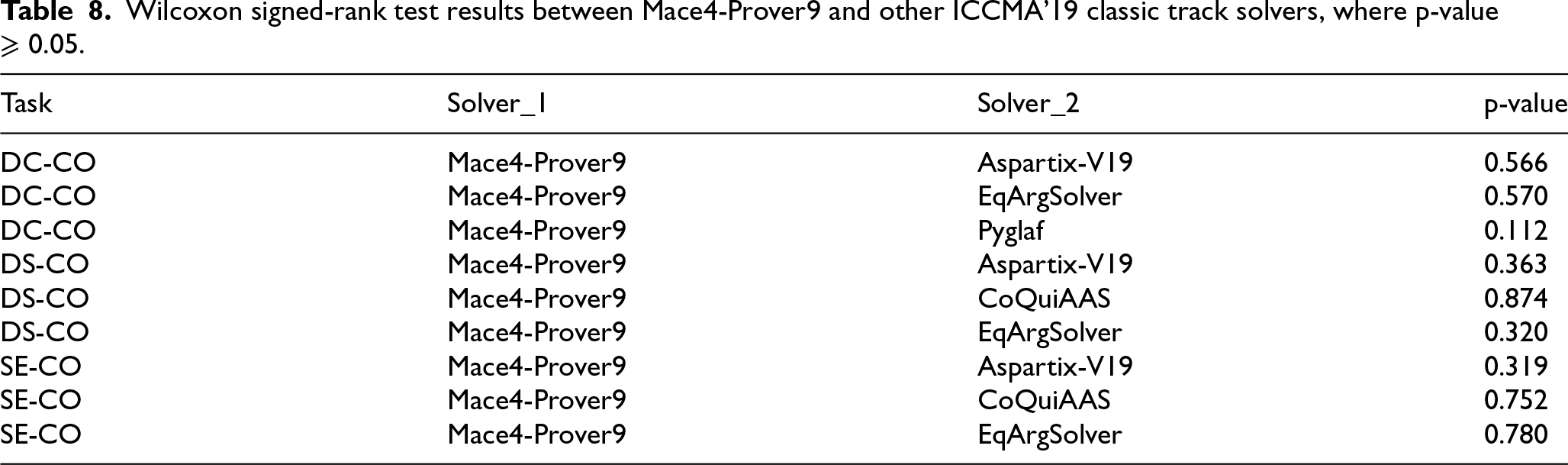

Wilcoxon signed-rank test results between Mace4-Prover9 and other ICCMA’19 classic track solvers, where p-value

.

Wilcoxon signed-rank test results between Mace4-Prover9 and other ICCMA’19 classic track solvers, where p-value

For most of the results (354 out of 383 tests), we obtain p-value

Table 8 shows the results of the Wilcoxon signed-rank tests, conducted between Mace4-Prover9 and other solvers, that presented a p-value

The findings in Table 9 suggest a statistically non-significant difference in performance between

Wilcoxon signed-rank test results between Taas-dredd and other ICCMA’19 classic track solvers, where p-value

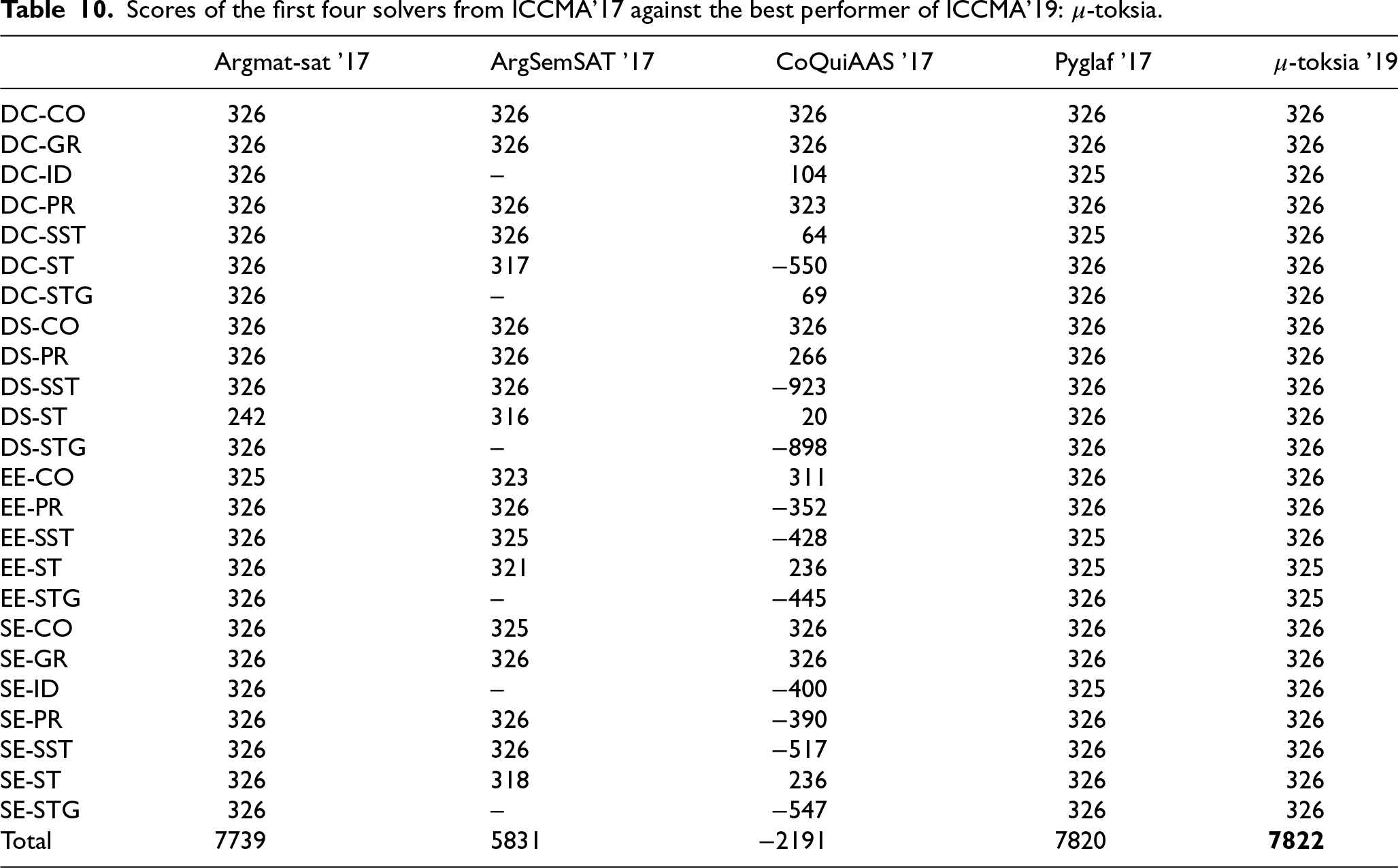

Scores of the first four solvers from ICCMA’17 against the best performer of ICCMA’19:

The first four solvers in ICCMA’17 were compared with the overall winner of ICCMA’19 to quantify the improvements of solvers achieved in two years. The past solvers were also dockerized by asking the participants of ICCMA’17 for those older versions (the participant himself dockerized Pyglaf).

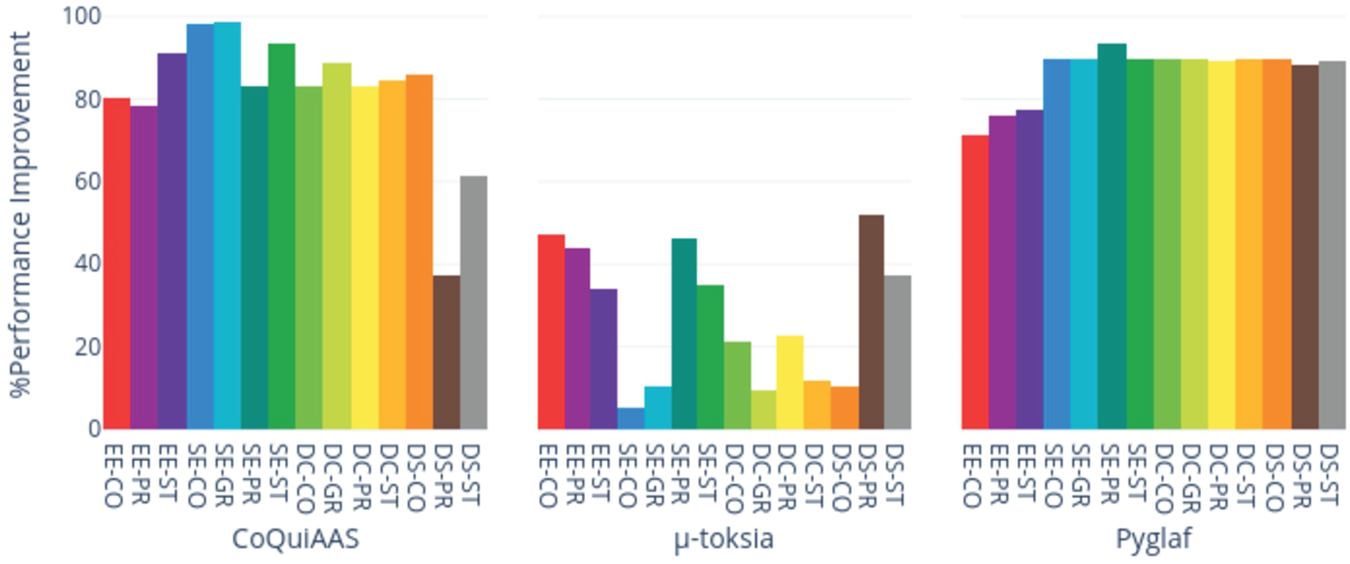

Percentage performance-improvement of a dynamic solver over its non-dynamic version. Values on the ordinate are computed as

Table 10 shows the scores for Argmat-sat, ArgSemSAT, CoQuiAAS’17, Pyglaf’17, and

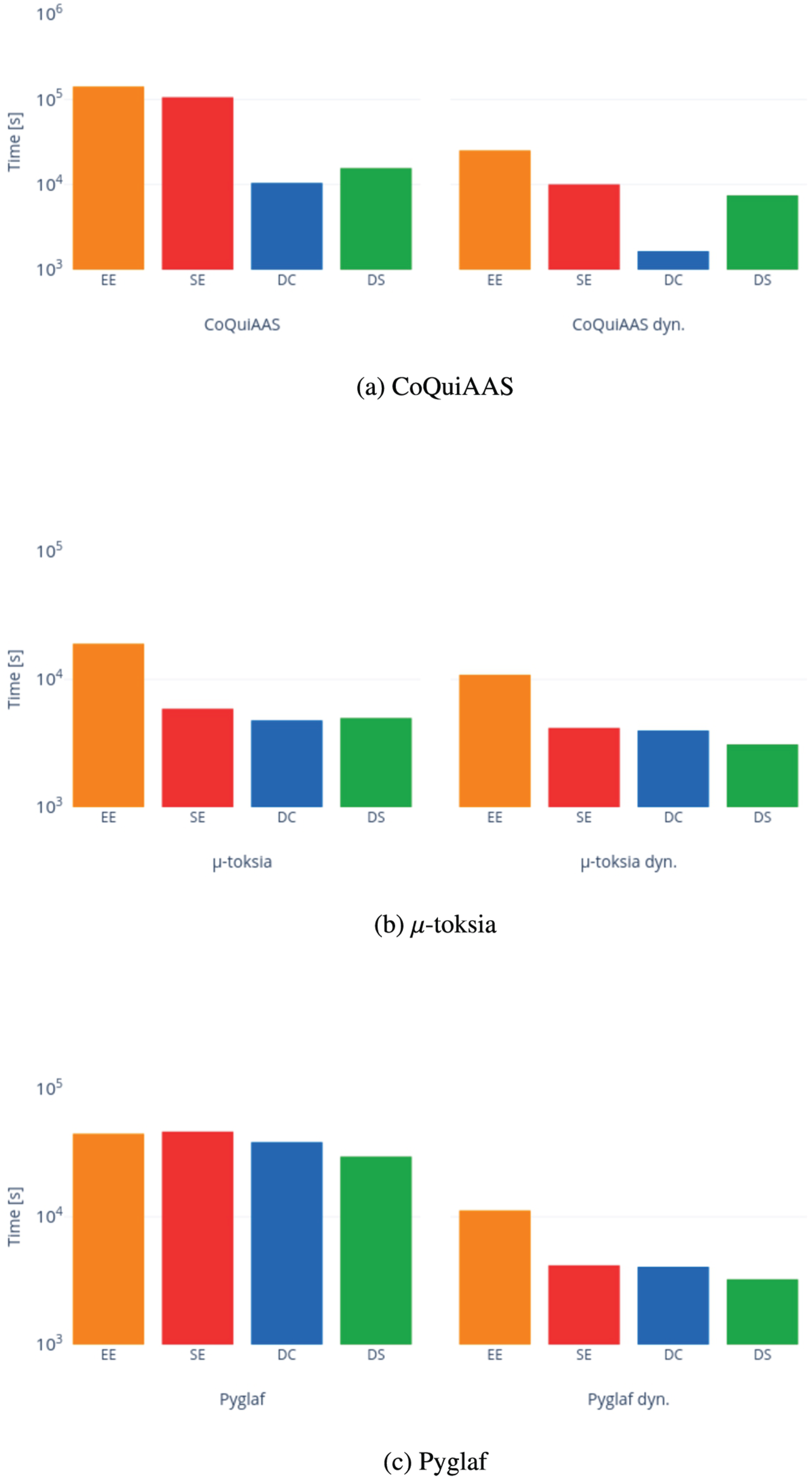

Finally, the performance of dynamic versions of solvers was compared to the performance of their static equivalents. The benchmark instances and eight modifications each were used for comparison (see Section 5.3). Instances suitable for non-dynamic solvers were also generated by applying the modifications. This comparison considered the total time taken to solve each instance and its modifications for the successfully solved instances, and it was performed for the three solvers submitted to dynamic tracks, namely CoQuiAAS,

On average, the dynamic versions of CoQuiAAS,

We also show the differences in performance of CoQuiAAS,

The sum of the time (logarithmic scale) aggregated by the problem of successful instances for each track, considering CoQuiAAS,

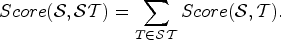

Time [s] taken for each correctly solved instance in the exact track for the semantics

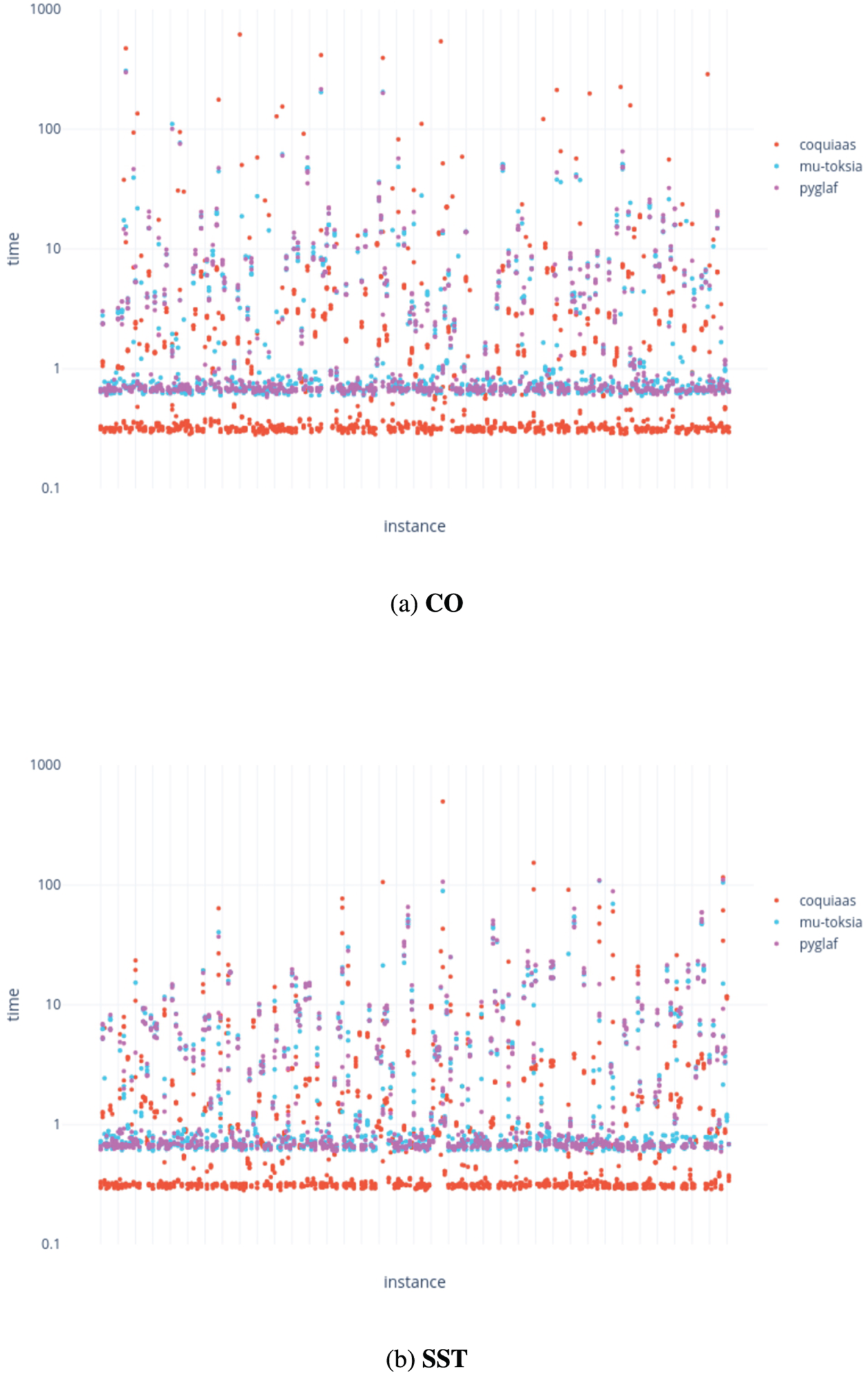

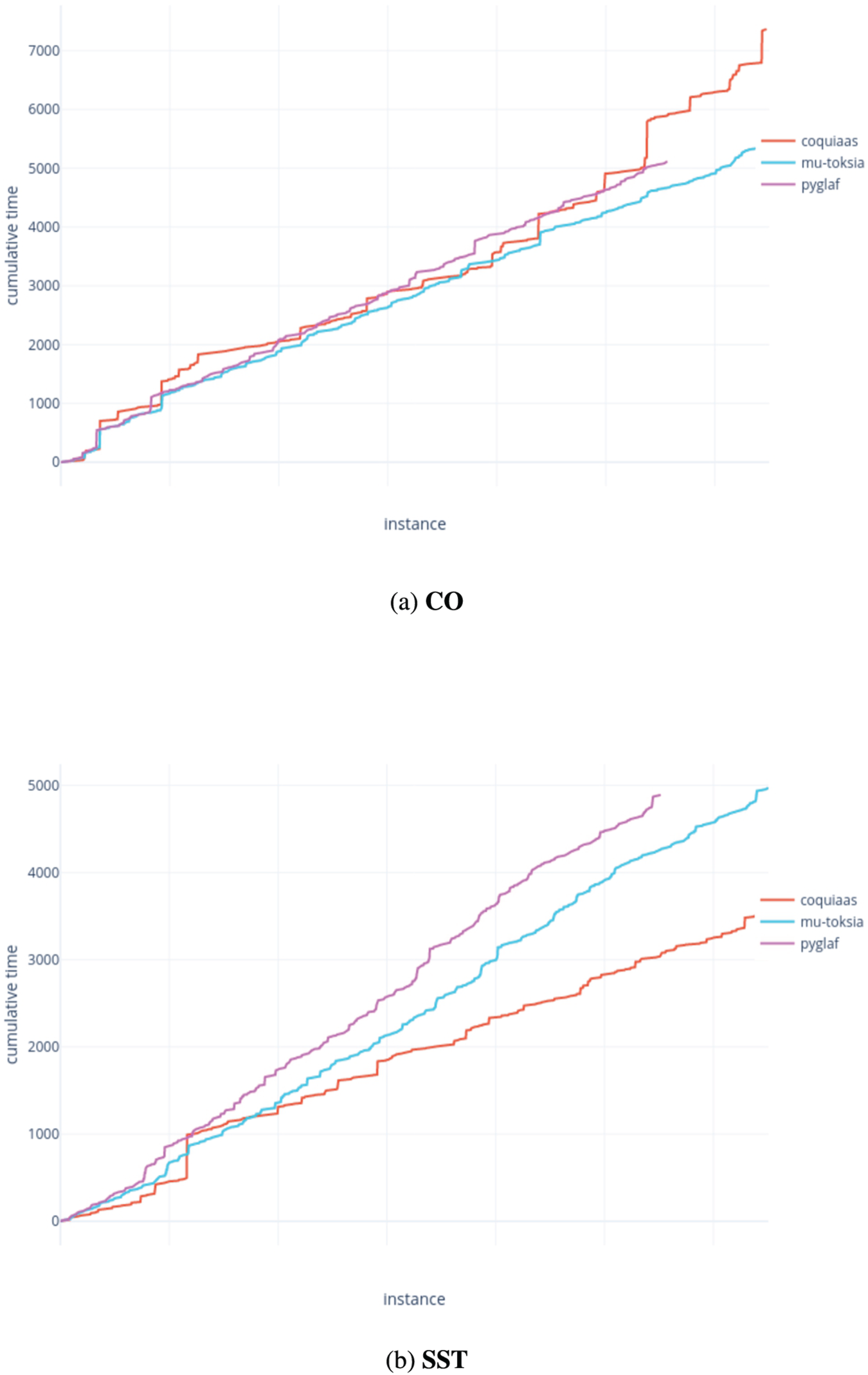

Cumulative time [s] taken to correctly solve all instances in the exact track for the semantics

Now we describe the results of ICCMA’21. More specifically, we focus on the track dedicated to exact algorithms in Section 7.1 and on the track dedicated to approximate algorithms in Section 7.2. The detailed results (ranking and cumulated runtime) for each pair (problem, semantics) can be found in [55].

.Exact track

Compared to the previous edition, the main result of the competition is that there is no global winner (

Figure 14 shows the runtime of solving each instance in the exact track for each solver participating in the sub-tracks dedicated to the complete and semi-stable semantics (other semantics are plotted in C). We observe that some groups of instance seem (in general) easier than some other ones. The data is plotted such that instances are sorted in the lexicographical order. This means that the instances on the left side correspond to some of the instances selected from ICCMA’19 (their benchmark names start with A and B), while the instances at the center correspond mainly to the new instances generated for ICCMA’21. This is in line with the intuition that the new instances should be hard for solvers that do not consider the AFs’ structure. However, notice that the other instances selected from ICCMA’19 (on the right side, with benchmark names starting with M, n, S, or T) are in some cases easy (e.g. Fig. 14a) and in some cases hard, even harder than the new instances from ICCMA’21 (e.g. Fig. 14b).

On Fig. 15, which shows the cumulative runtime (in seconds) for the exact track (under the semantics

.Comparison with ICCMA’19 best solver

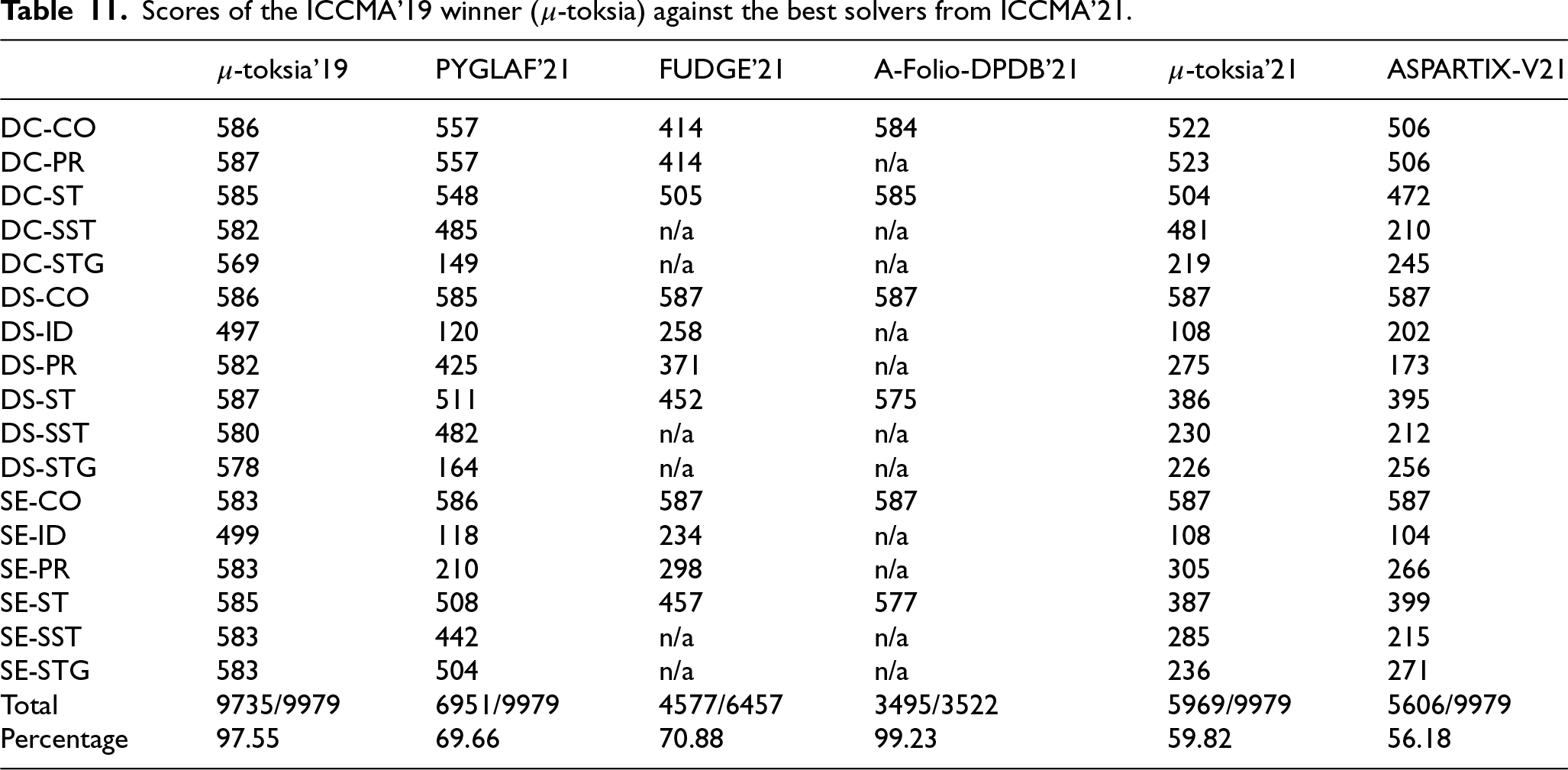

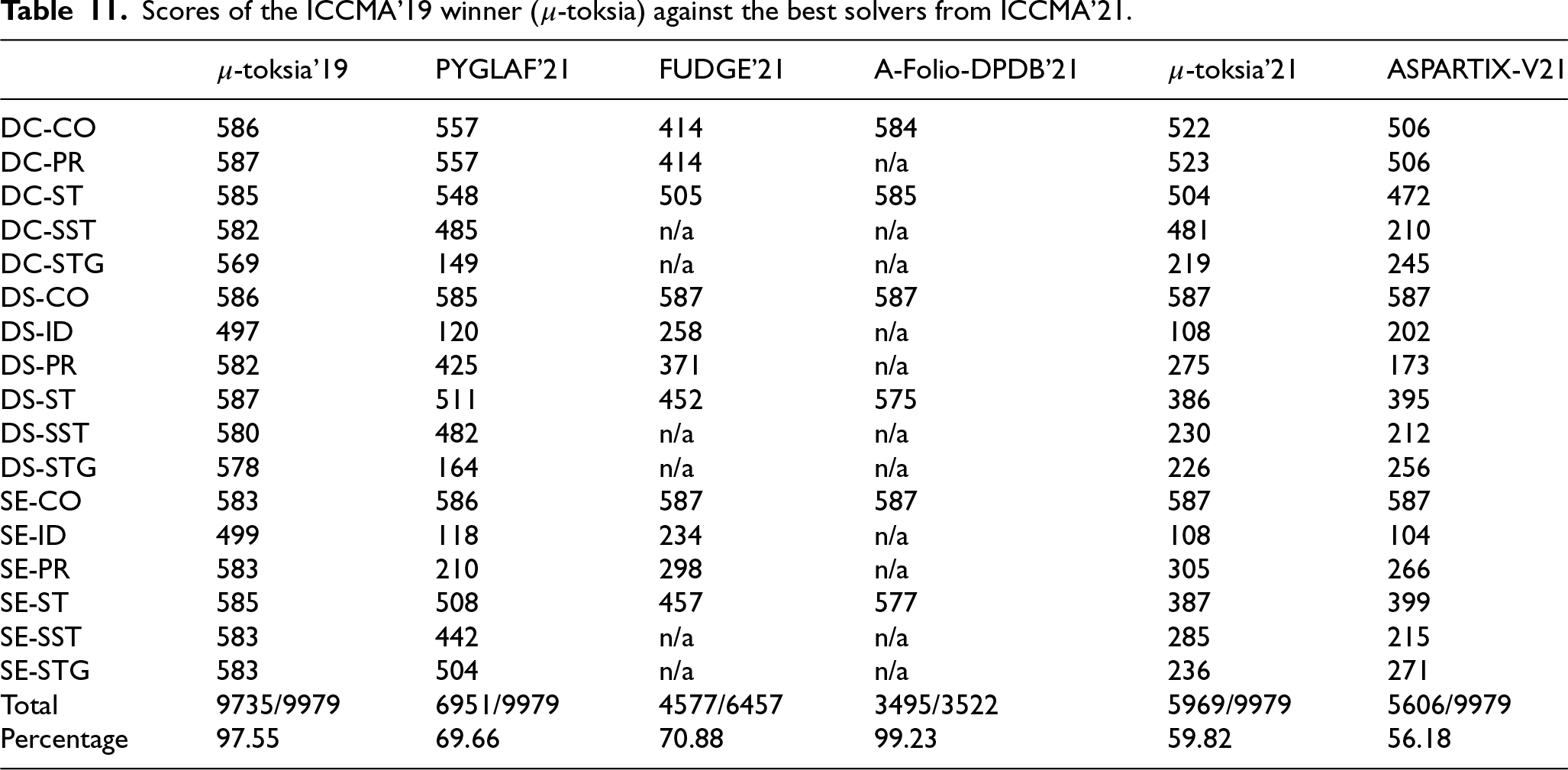

Finally, we have compared

We have only considered

The number of instances to be solved for each pair (problem, semantics) is the same (587). In all the cases,

Scores of the ICCMA’19 winner (μ -toksia) against the best solvers from ICCMA’21.

Scores of the ICCMA’19 winner (

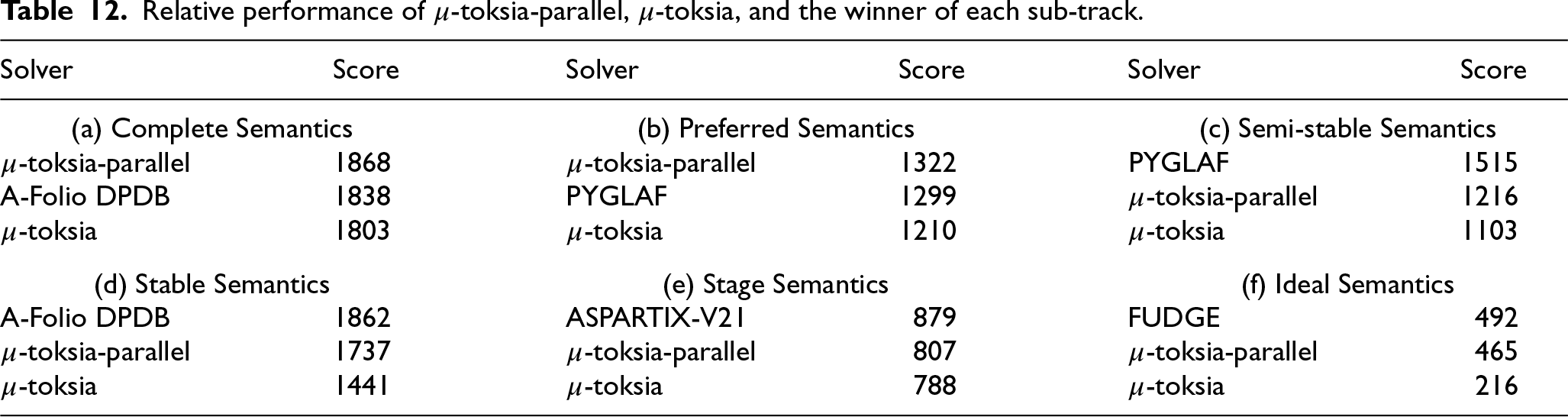

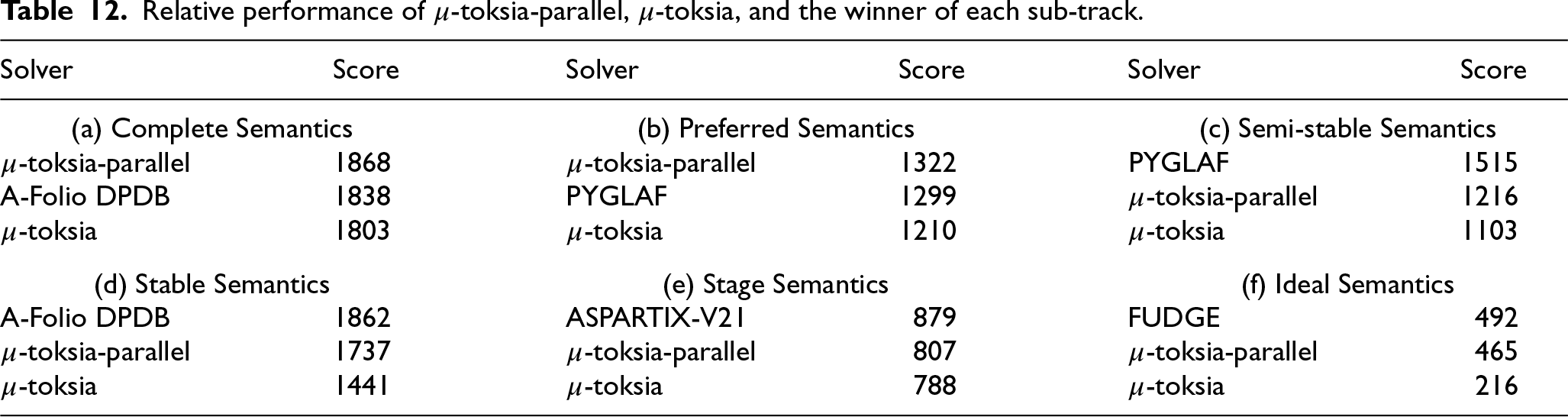

For the first time at ICCMA’21, there was a submission of a solver relying on parallel computing. Indeed,

More precisely, for each sub-track, the score of

Relative performance of μ -toksia-parallel, μ -toksia, and the winner of each sub-track.

Relative performance of

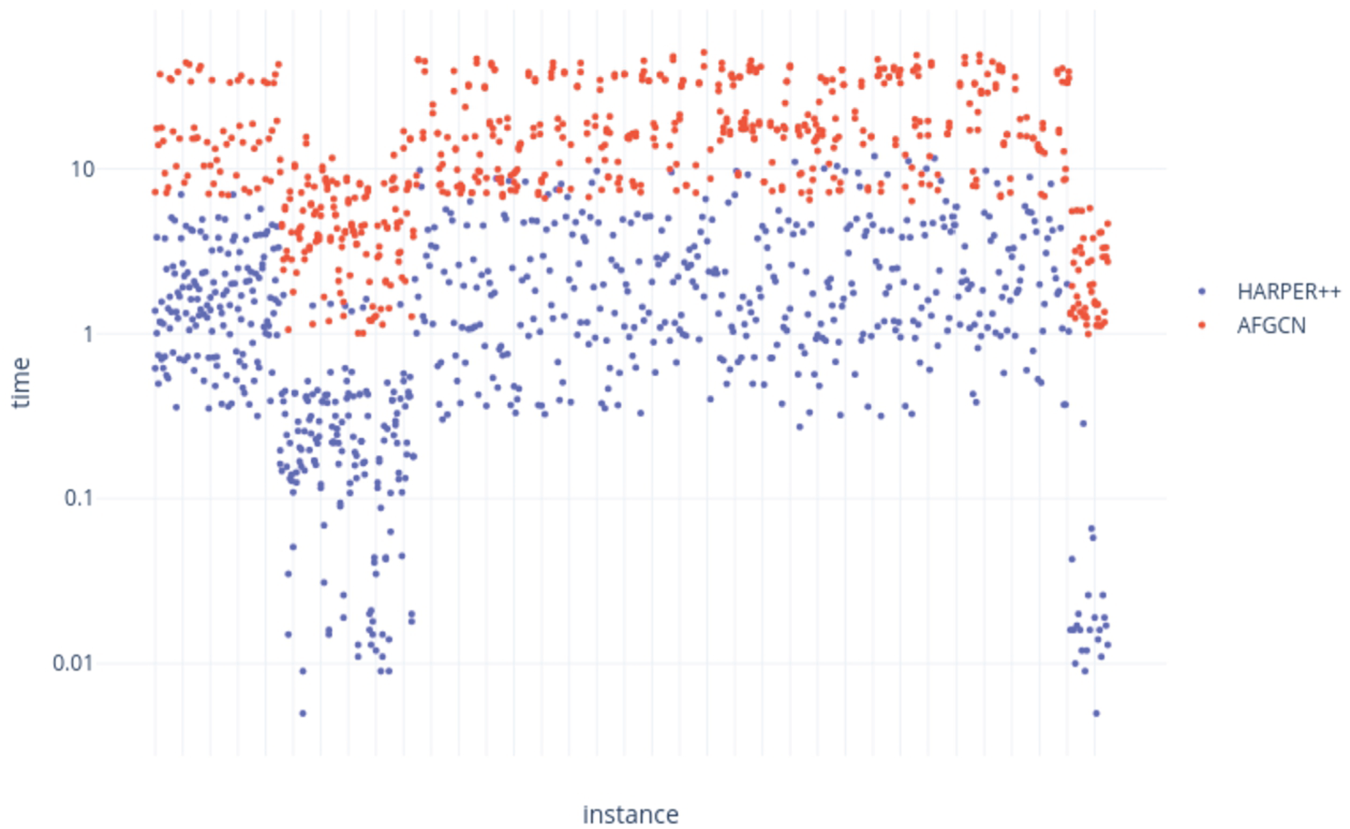

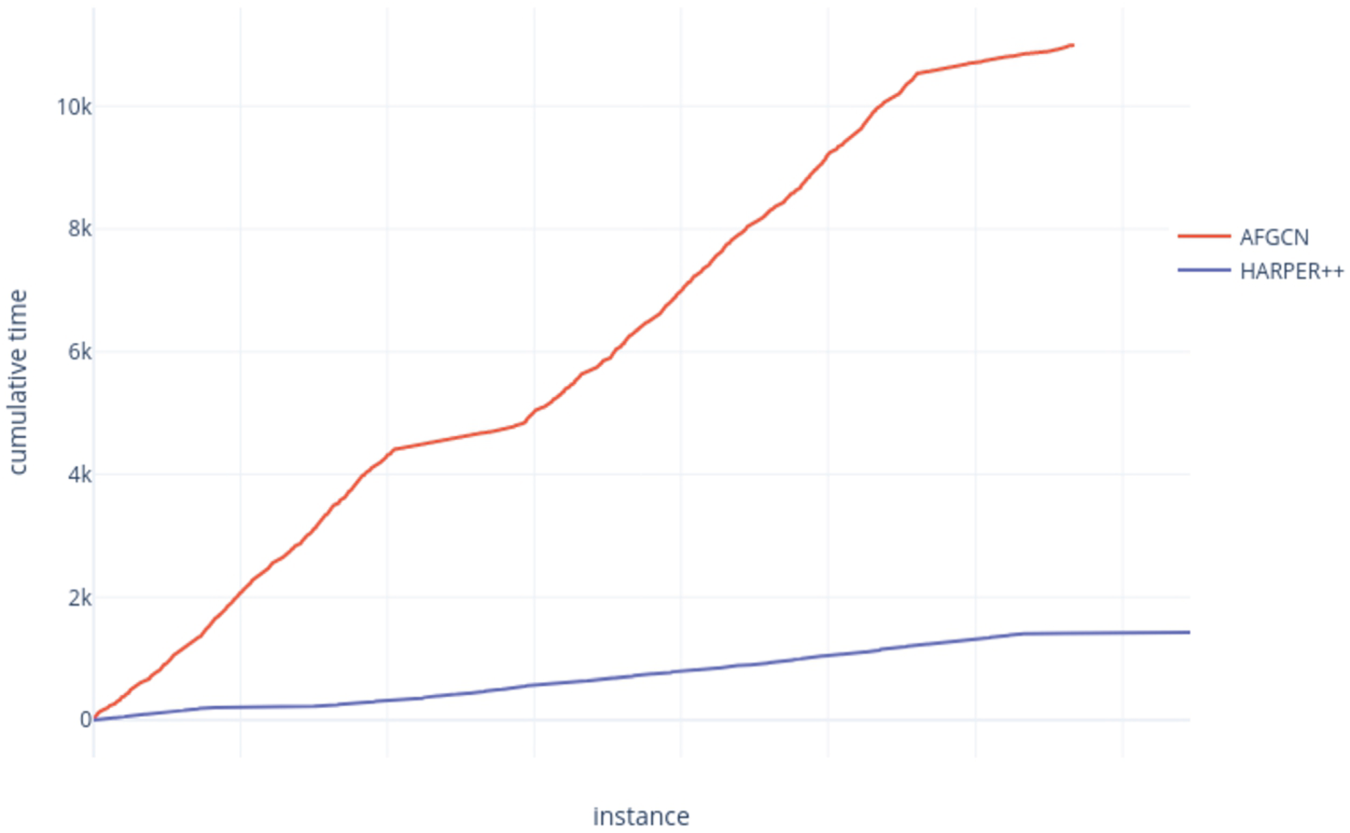

For the track dedicated to approximate algorithms, HARPER

Time [s] taken for each correctly solved instance in the approximate track for the complete semantics – ICCMA’21.

Cumulative time [s] taken to correctly solve all instances in the approximate track for the complete semantics – ICCMA’21.

While the above results show the performance of each solver individually, they do not show how much each solver contributed to the state of the art, as defined by all solvers. While an individual solver may have the best overall performance, it may perform only marginally better than others, albeit consistently. Conversely, a solver may not have outstanding performance across the entire set of instances but beat every other solver on a small subset – without it, the state of the art would be significantly worse for those instances.

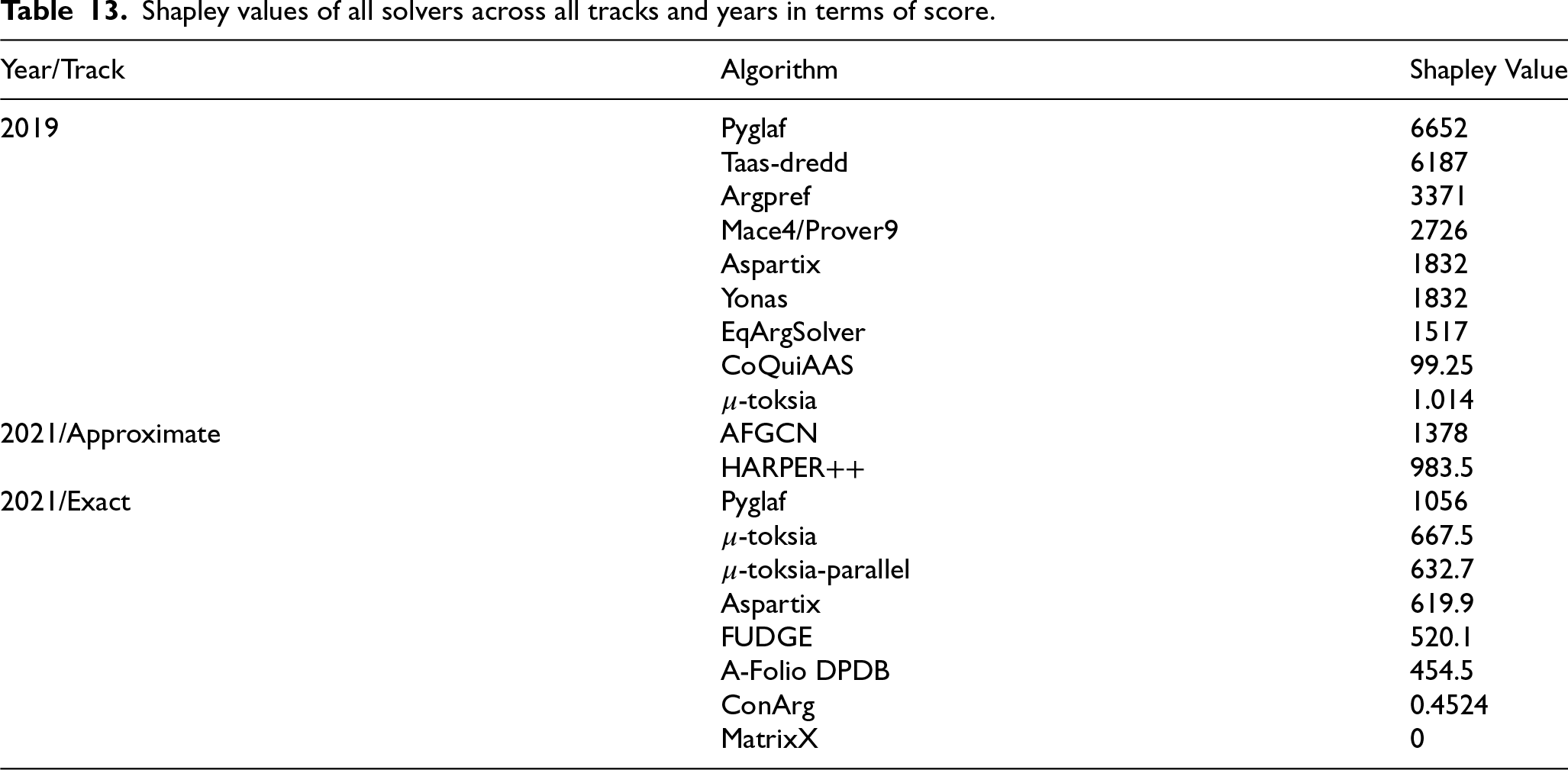

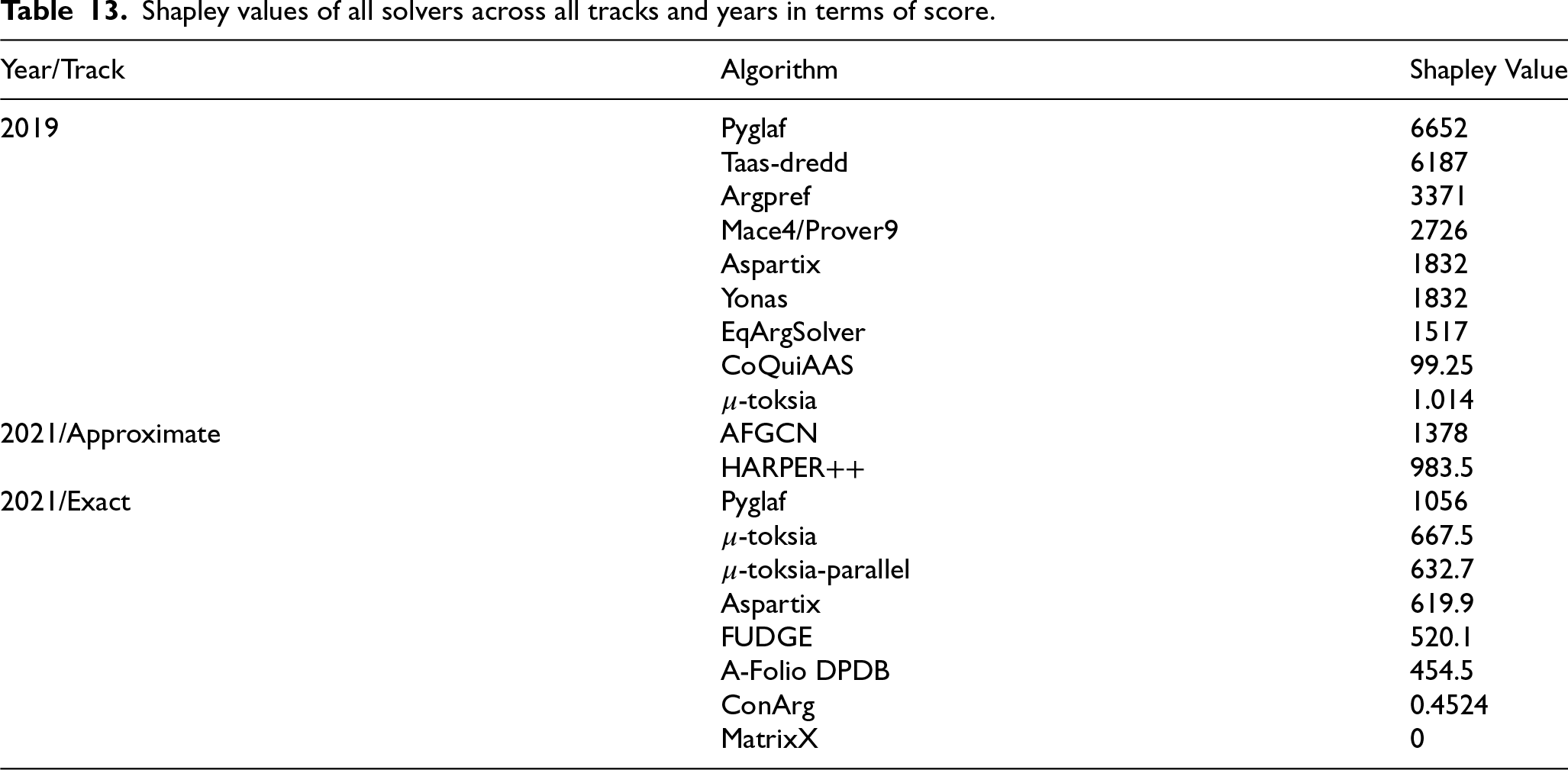

The Shapley value was computed for each solver in each non-dynamic track to assess their contributions to the state of the art [41,50]. Briefly, the Shapley value is the average increase in performance we see by adding the solver in question to any subset of the other solvers. It is a game theory concept with various desirable properties that guarantee a fair credit allocation to each solver. Table 13 shows the results for all solvers across all tracks for both years.

In 2019,

The ranking of solvers for the 2021 competition in terms of Shapley value closely follows the ranking of individual performance when aggregated by sub-track. For the global results, the Shapley value is correlated to both the solver’s individual performance and the number of tracks it participated in (for instance, Pyglaf and

Shapley values of all solvers across all tracks and years in terms of score.

Shapley values of all solvers across all tracks and years in terms of score.

We see a similar picture for most of the individual tracks. These results reveal the recipe for a competition-winning solver – achieve good performance across all instances with a tried-and-tested approach rather than using a new heuristic that works well in only a few cases. They also show the value of the Shapley analysis – we can identify solvers that are much better than anything else on some parts of the competition instances, allowing us to give credit to novel and very different approaches.

Finally, it is worth noting that the solvers in ICCMA’19, and especially ICCMA’21, have shown a significant improvement in terms of correctness in comparison to the 2015 and 2017 editions, where several participants provided incorrect answers.

Organizing and running a competition requires a lot of effort and dedication. In this section, we give some of our insights into what could be improved in the hope that it will be helpful for the organizers of future competitions in AI.

.ICCMA 2019

The requirement for all submitted solvers to use Docker in ICCMA’19 saved much work in setting up solvers and ensuring they ran correctly. However, Docker containers cannot be run directly on most HPC infrastructure because of Docker’s security deficiencies. To leverage our HPC infrastructure to provide the large amount of computational resources required, the Docker containers were converted to Singularity containers,33 which could be run without problems. The underlying virtualization is the same, and the conversion is straightforward; for the purposes of this competition, Docker and Singularity are equivalent.

JSON inspires the standardized output format required for submitted solvers but does not entirely conform to the JSON standard. Various pre-processing steps were implemented in ICCMA’19 that transformed the output format into JSON and handled simple cases directly. While this allowed us to use a standard JSON parser, the additional steps could have been avoided with simple modifications to the output format. It can be recommended that competition organizers require standard formats as far as possible to avoid implementing custom parsers.

As can be seen from the results of the competitions held in 2017 and 2019, the performance of participants relying on SAT varies greatly depending on the implementation of the SAT solver itself. Concerning solvers submitted to ICCMA’19, Argpref implements MiniSAT 2.2.0, a SAT-with-preferences based approach [36],

Selecting the benchmarks to use could lead to errors in the evaluation phase. As shown in [69],

.ICCMA 2021

The organizers of the 2021 edition appreciated the use of Docker in ICCMA’19. However, a part of the community did not receive it well. Furthermore, some technical difficulties were encountered, which prevented the organizers from using Docker again for ICCMA 2021. As a result, various issues arose when setting up the competition environment, such as ensuring that all the submitted solvers were correctly compiling or running.

The exceptional results of

Moreover, beyond the SAT-based approaches, other approaches have been studied in the literature (e.g., based on graph decomposition [57] or backdoors [33]). These approaches seem promising but were mainly absent from ICCMA. However, let us notice that the success of A-FOLIO-DPDB for the counting task (using a technique based on the graph treewidth) confirms the interest of such approaches. So, we believe that one of the main challenges for the near future of the ICCMA competition is to provide challenging benchmarks that could not be solved by “simply” plugging a SAT solver but would require a more fine-grained analysis of the graph properties like those mentioned here. We have started this effort with our community-based benchmark generation approach.

We envision two last directions for improving the competition. Regarding the main track, it seems important to be able to guarantee the results of the solvers, e.g., providing not only a YES/NO answer to decision problems but also completing them with a certificate. This effort has been started by the recent ICCMA 2023, which requires a certificate with positive answers for credulous acceptability and negative answers for skeptical acceptability. Finding certificates for other cases is a challenge, but inspiration could be taken from other communities (e.g. in SAT competitions, UNSAT instances are certified as well35).

Finally, regarding the approximate track, various important questions should be investigated, like the possibility of giving guarantees about the proximity between the correct result and the answer provided by the algorithms or approximate algorithms for computing one extension or the set of (skeptically or credulously) accepted arguments.

.Conclusion

This paper described the 2019 and 2021 editions of the International Competition on Computational Models of Argumentation. In particular, we have outlined the submitted solvers, benchmarks, ranking design, and results obtained by solvers. To evaluate the improvement of solvers over time, we have also compared the top 2019 solvers with the ones that participated in ICCMA’17, and similarly for the best solvers of 2021 with the winner of ICCMA’19. The top solver in 2019, i.e.,

Several changes were implemented between ICCMA’19 and ICCMA’21 to enhance the competition’s significance and meet community expectations. The organizers of ICCMA’21 decided not to utilize Docker as a platform for standardizing solver execution due to technical challenges and reservations from part of the community. Moreover, the absence of participants in the dynamic track led to its removal in ICCMA’21, where an approximate track replaced it. As for individual tasks, ICCMA’21 replaced the enumeration of grounded extensions (which is not a difficult challenge) with a counting task. Finally, contrary to what happened in ICCMA’19, where the organizers used ConArg as a reference solver to evaluate the correctness of the participants, the results of each solver in the exact track of ICCMA’21 were checked for inconsistencies against all the other participants.

ICCMA provides an opportunity to advance research in computational argumentation by fostering the development of efficient solvers capable of addressing real-world problems. The competition’s benchmarks comprise large frameworks that are not typically representative of real-life scenarios involving conflicting information, which can usually be modeled through smaller graphs. Therefore, other crucial factors should also be considered, such as the ability to generalize and ease of use for non-expert end-users. The best solver to use might not be the fastest one, but instead, the solver that comes with APIs for several programming languages, web interfaces, and easy-to-follow manuals or guides. In future competitions, having a criterion that considers these usability parameters would be beneficial. On the other hand, there exist particular applications of argumentation theory (for instance, to produce/enhance explanations for neural networks [9,65]) in which the input data reaches a significant size. In this case, a separate consideration must be made as the solvers’ performance will still play a fundamental role in addressing related problems. In future competitions, it would be interesting to use neural networks as benchmarks to test the behavior of solvers.

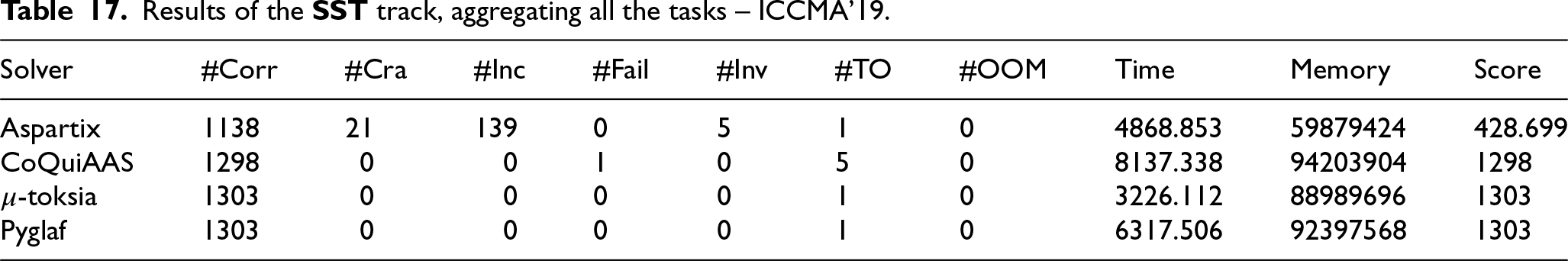

Results of the

Results of the

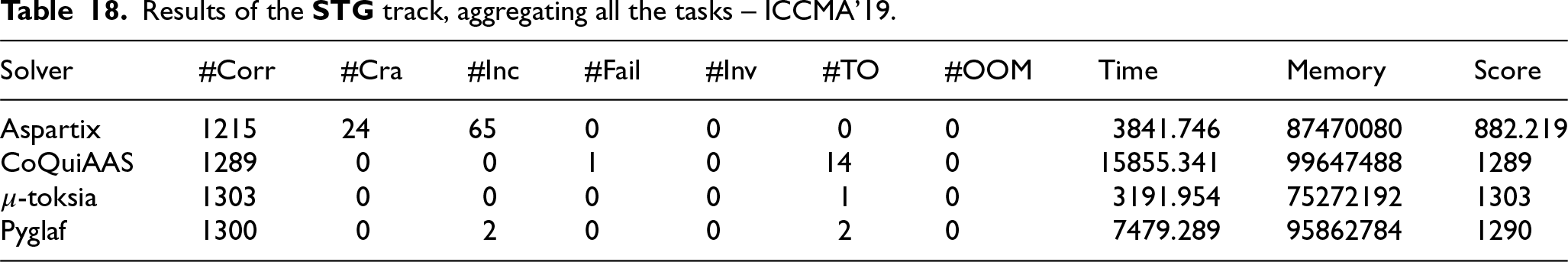

Results of the

Results of the

Results of the

Footnotes

Acknowledgements

We want to thank all the participants of ICCMA’19 and ICCMA’21 for submitting their solvers and benchmarks and thus directly participating in advancing computational models of argumentation. We also thank the ICCMA steering committee for providing support and help in setting up the competition. Finally, we would like to thank Dr. Theofrastos Mantedalis for the help provided in organizing ICCMA’19.

We thank the Advanced Research Computing Center at the University of Wyoming for providing the computational resources used for the 2019 edition and the analysis of the results. Lars Kotthoff is supported by NSF grant 1813537. We thank the Centre de Recherche en Informatique de Lens at the Université d’Artois for running the 2021 edition on its computer cluster, which was funded by the French Ministry of Research and the Région Hauts de France through CPER DATA.

MiniZinc Challenge:

SAT Competition:

Planning competitions:

ICCMA’23 Website:

Docker.com:

The solvers that can “speak” the two format languages were required to select the one they wanted to be tested on in ICCMA’19. For ICCMA’21, the

Trivial graph format:

ICCMA’19 solvers:

Taas project web page:

See

See

See

See

See

ICCMA’17 benchmark list:

ICCMA’17 benchmark selection:

A description of new ICCMA’19 benchmarks:

For this reason, this solver did not participate in ICCMA’19. However, it participated in ICCMA’15, ICCMA’17 and ICCMA’21.

A more detailed description of these benchmark generators can be found on the website of ICCMA’19).

Those graphs were generated through the

See

ICCMA’19 results:

For this and following figures in this section, more semantics are shown in C.

Wilcoxon signed-rank test results for ICCMA’19 classic tracks:

This could mean that Argmast-sat performs better on larger instances due to possible memory exhaustion by

Figures for the other semantics are given in C.

Singularity website:

CaDiCaL documentation:

See

Appendix A. Benchmark selection

Appendix B. Detailed results

Table 21 provides detailed results about Argmat-sat ’17, Pyglaf ’17, and

From Table 22 we extracted Fig. 12: it shows the performance improvement between ICCMA’19 dynamic-solvers and their non-dynamic version.

PYGLAF was removed from the