Abstract

Online debates typically possess a large number of argumentative

Introduction

Background and motivations

The Internet has enabled people to share their views about many topics online, ranging from commenting on important news events (e.g. on BBC News) to online debates (e.g. on Kialo).

Further, comments exchanged over such discussions are often argumentative in nature, and can be modelled with argumentation theory (e.g. [7,31]); this can resolve disagreements arising from such online debates by finding the winning arguments based on normative yet intuitive criteria. Suppose that a user would like to know which arguments have prevailed at a given moment, but does not have time to read every comment. Such a reader may think that a given argument has won when it has been rebutted by further comments which have not yet been read. The goal of this paper is to compare how various comment sorting policies can affect the number of actually winning arguments exposed to a reader, depending on how much of the debate has been read. A precise way to compare such policies will bring visibility to which arguments should win, thus helping readers to better navigate and understand large and argumentative online debates (e.g. [1,8,14,28,32,36]).

We apply argumentation theory, data mining and statistics to build a pipeline that compares comment sorting policies by measuring the number of actually winning arguments each policy displays to a reader who has only read a part of the debate. Intuitively, we mine online debates (e.g. [13,17,35]) and represent them as directed graphs (digraphs), whose nodes are the comments made during the debates and whose edges denote which comment replies to which other comment. As such debates begin with an initial comment, and each subsequent comment replies to exactly one other comment, these debates are trees. We restrict our analysis to trees in this paper, leaving other debate network topologies for future work (see Section 6).

To enable the application of argumentation theory, the pipeline makes two idealised assumptions: (1) each comment qualifies as a self-contained argument, and (2) each reply is either supporting or attacking. Of course, the vast majority of online debates are not so “clean”, but the pipeline does not preclude methods that identify whether a comment qualifies as an argument (as opposed to, e.g., an insult), or whether a reply between comments is an attack or a support (e.g. [3,11]). Assuming that the online debate is relatively “clean”, or that we can incorporate sophisticated data cleaning techniques into the pipeline, we can treat the online debate formally as a bipolar argumentation framework (BAF). Furthermore, if this debate is a tree, then the set of winning arguments is unique [15, Theorem 30].

To model the idea of a comment sorting policy, the pipeline linearly sorts all comments based on the direction of replies, such that if comment

Suppose the debate has

The pipeline then aggregates the

As mentioned earlier, if for simplicity one does not wish to incorporate argument mining techniques, then these calculations can only make sense if we do have a dataset of “clean” online debates, i.e. where each comment qualifies as a self-contained argument, and each reply is classified as either an attack or a support. Fortunately, the online debating platform

In Section 2, we will summarise the relevant background in argumentation theory and related work. In Section 3, we will explain the pipeline and clarify its assumptions and limitations. In Section 4, we overview Kialo, explain how it validates the pipeline’s assumptions, explain how we have mined the data, and offer summary statistics. In Section 5, we articulate the comment sorting policies based on likes and time for Kialo, and have the pipeline measure which policy is more effective. Section 6 concludes with future work.

Technical background and related work

In this section we recap relevant aspects of abstract argumentation theory and bipolar argumentation theory. We then give a brief survey of argument mining and its application to online debates.

Abstract argumentation theory

From [15]: An

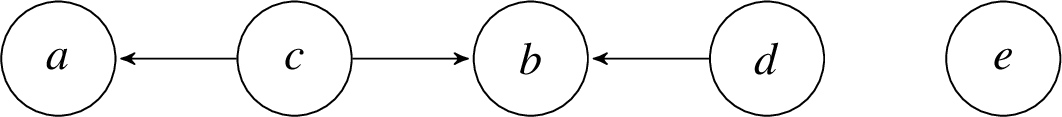

We say Let The AF in Example 2.1; argument

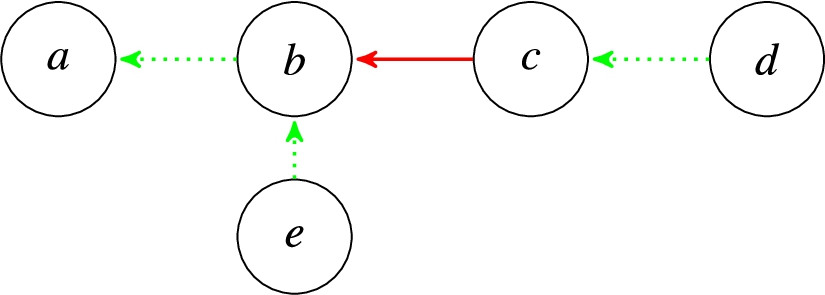

There are principled ways to absorb Figure 2 illustrates the BAF where

The BAF from Example 2.2, where green (dotted) edges denote supports and red (solid) edges denote attacks. The support paths of length 1 in this BAF are (Example 2.1 continued).

In the next section, we will apply ideas from argumentation theory to articulate a pipeline that allows one to rank the effectiveness of various comment sorting policies in online debates, assuming that the input data is already “clean”, either because it is “naturally clean”, or that it has been “cleaned up” by techniques such as those just mentioned. Therefore, the pipeline does not preclude the use of such techniques. We will outline how such techniques can be integrated into this pipeline in Section 6.

A pipeline to evaluate comment sorting policies

In this section, we articulate the pipeline, based in argumentation theory, that can evaluate how best to sort comments for readers who may not have read the entire debate.

The stages of the pipeline

The pipeline consists of the following stages, where the first two stages involve validating our assumptions on the data.

We input to the pipeline an online debate. The nodes are comments made in the debate that possess some

We make the assumption that the digraph is “clean”, in that every comment can be seen as a self-contained argument, and every reply is either an attack or a support. Therefore, the debate digraph is a BAF

We calculate the grounded extension,

We now explain what we mean by

Why is this method of reading comments plausible? We assume that a reader who is interested in the debate would start with the opening argument and read sequentially along the replies without missing any intermediate arguments, in order to fully understand each thread of the debate. Upon reaching an argument with multiple replies, the online debate platform will rank those replies by the chosen attribute (e.g. number of likes), so the reader will continue along the path with the greatest or least value of this attribute. Indeed, this is enforced to some extent in real online discussion platforms, such as comments on articles hosted by Disqus and by the Daily Mail. Disqus (e.g. see https://www.quantamagazine.org/physicists-debate-hawkings-idea-that-the-universe-had-no-beginning-20190606, last accessed 26/8/2020) indents comments in the user interface (UI) to denote the depth of each comment within the debate tree, and sorting the comments from, e.g., most to least liked follows this DFS display pattern. In contrast, Daily Mail comments (e.g. see https://www.dailymail.co.uk/news/article-6894539/Meghan-Markle-snubs-Queens-doctors-birth-doesnt-want-men-suits.html, last accessed 26/8/2020), although sortable by DFS, only allow replies of depth 2 (i.e. replies to the news article, and replies to replies), and display only the first two comments amongst the depth-2 replies by default.
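The reading model described above can be sketched as a depth-first traversal in which the replies to each comment are ranked by the chosen attribute before the reader descends. This is a minimal illustration only: the function name `dfs_reading_order`, the `children` mapping and the toy `likes` and `posted` values are hypothetical and are not taken from the pipeline's implementation.

```python
def dfs_reading_order(children, root, score, descending=True):
    """Return the order in which a reader meets the comments of a debate tree.

    children:   dict mapping a comment to the list of its direct replies
    score:      function giving the sorting attribute of a comment
    descending: True reads the highest-scoring reply first
    """
    order = []
    stack = [root]
    while stack:
        node = stack.pop()
        order.append(node)
        ranked = sorted(children.get(node, []), key=score, reverse=descending)
        # Push in reverse so that the best-ranked reply is popped (read) first.
        stack.extend(reversed(ranked))
    return order


# Toy debate (hypothetical): a is the root, b and c reply to a, d replies to b.
children = {"a": ["b", "c"], "b": ["d"]}
likes = {"a": 0, "b": 1, "c": 5, "d": 2}
posted = {"a": 0, "b": 1, "c": 2, "d": 3}

most_liked_first = dfs_reading_order(children, "a", likes.get, descending=True)
earliest_first = dfs_reading_order(children, "a", posted.get, descending=False)
```

Under these toy values, sorting by likes sends the reader down the thread starting at c before returning to b, whereas sorting by time follows b and its reply d first.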

This example sorts the arguments to represent reading from the earliest to the most recent following DFS. Consider the BAF from Example 2.2, where

A reader would start at the root,

From now, we make the assumption of

(Example 3.1 continued).

This example sorts the arguments to represent reading from the most liked to the least liked following DFS. Consider again the BAF in Example 2.2. Suppose further that argument

As shown in Examples 3.1 and 3.2, comment sorting policies order the arguments in

We quantitatively compare

(Example 3.1 continued).

The results of applying steps (5) and (6) of the pipeline in Example 3.3

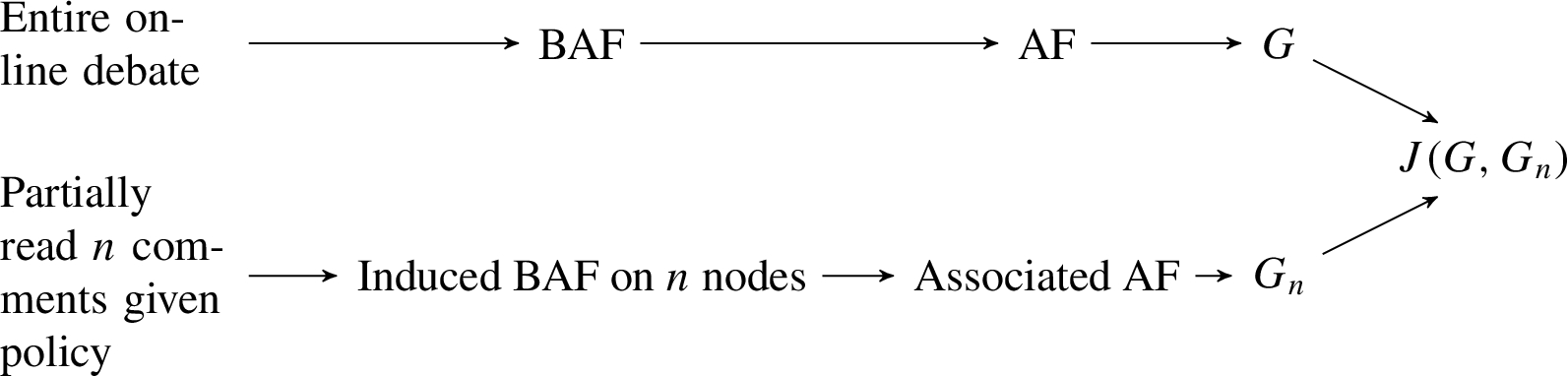

We illustrate the above six steps, for a fixed value of

A schematic of our pipeline used to evaluate comment sorting policies.

The following are straightforward consequences of our pipeline.

If

Note that the converse to Corollary 3.5 is false, as indicated in Example 3.3 for

The induced BAF is the original BAF itself, hence

Intuitively, Corollary 3.5 states that if you read nothing you get nothing, and Corollary 3.6 states that if you read everything you get everything, regardless of the comment sorting policy. One further result concerns the case where all edges are supports and the policy is based on DFS.

(⇒) If

(⇐, contrapositive) Assume that there is some attacking edge

This means that in a debate where there is no disagreement, the more one reads, the more actually winning arguments are obtained, because every additional argument read is actually winning. Further, this strictly monotonically increasing situation is only possible in the case where every reply is supporting.

As discussed so far, the pipeline takes as input a BAF and a comment sorting policy, and returns a vector

Given a policy

Intuitively, as the Jaccard coefficient is a measure of how many actually winning arguments the apparently winning arguments contain, having read the debate up to a certain point,

For the BAF in Example 2.2, sorting from the earliest comment to the most recent comment gives an average Jaccard of

The following corollary of Theorem 3.7 shows that, for the special case of debates where there is no disagreement, every policy based on DFS gives an average Jaccard of 0.5. This makes sense as we are taking the centre of mass of the diagonal line that joins

By Theorem 3.7, for any sorting policy based on DFS, (
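As a worked check of the value 0.5, the following derivation uses notation that is assumed here for illustration rather than taken from the text above: the debate has N arguments, J(n) is the Jaccard coefficient after the first n arguments have been read, and the average is taken as the mean over n = 0, ..., N. When every reply is a support, all n arguments read so far are winning and all N arguments are actually winning, so J(n) = n/N.

```latex
\[
  \frac{1}{N+1}\sum_{n=0}^{N} J(n)
  \;=\; \frac{1}{N+1}\sum_{n=0}^{N}\frac{n}{N}
  \;=\; \frac{1}{N+1}\cdot\frac{1}{N}\cdot\frac{N(N+1)}{2}
  \;=\; \frac{1}{2}.
\]
```

The continuous analogue, the mean height of the diagonal over the unit interval, gives the same value.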

The first contribution of this paper is a pipeline that accepts as input an online debate represented as a BAF, and a comment sorting policy, and calculates how this policy, on average, exposes the reader to the actually winning arguments, especially when the reader has not read the entire debate. We have given a running example of how the pipeline works (Examples 2.1 to 3.9).
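The core of the pipeline can be sketched in a few lines, under simplifying assumptions that should be read as such. The sketch handles attack edges only, so any supports are assumed to have been absorbed beforehand by a method not shown here. The function names are hypothetical, the convention that two empty sets have Jaccard coefficient 0 is a choice made for this sketch, and the final aggregation (the mean of the coefficients over every amount read) is one plausible reading of the average Jaccard.

```python
def grounded_extension(arguments, attacks):
    """Grounded extension of an attack-only framework (least fixed point)."""
    attackers = {a: set() for a in arguments}
    for src, dst in attacks:
        attackers[dst].add(src)
    accepted, defeated = set(), set()
    changed = True
    while changed:
        changed = False
        for a in arguments:
            if a in accepted or a in defeated:
                continue
            if attackers[a] <= defeated:      # every attacker is already defeated
                accepted.add(a)
                changed = True
            elif attackers[a] & accepted:     # attacked by an accepted argument
                defeated.add(a)
                changed = True
    return accepted


def jaccard(a, b):
    """|a & b| / |a | b|, taken to be 0.0 when both sets are empty (assumed convention)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def jaccard_curve(order, attacks):
    """Jaccard coefficient after reading the first n comments, for n = 0..N."""
    actual = grounded_extension(order, attacks)
    curve = []
    for n in range(len(order) + 1):
        seen = set(order[:n])
        # Induced sub-framework: keep only attacks between comments already read.
        induced = [(s, t) for s, t in attacks if s in seen and t in seen]
        apparent = grounded_extension(order[:n], induced)
        curve.append(jaccard(apparent, actual))
    return curve


# Toy chain (hypothetical): b attacks a, c attacks b; the reader sees a, b, c.
curve = jaccard_curve(["a", "b", "c"], [("b", "a"), ("c", "b")])
average = sum(curve) / len(curve)

# A debate with no attacks at all: the curve is the diagonal n / N.
no_disagreement = jaccard_curve(["a", "b", "c", "d"], [])
```

In the toy chain, the reader who stops after two comments believes b has won, although b is rebutted by the unread comment c, so the coefficient drops to zero before recovering once the whole debate is read.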

However, the fundamental assumption of the pipeline – that a real-life online debate can be represented as a BAF – is suspect. One cannot just assume that comments posted on online debates are self-contained arguments, nor that every reply can be classified as an attack or a support. Of course, the pipeline does not preclude the use of argument mining techniques (Section 2.3) to structure online debates into a BAF for our pipeline to ingest (Section 6). In the next section, we present a dataset that we have mined, which seems to satisfy the property of being “clean”, and is thus immediately suitable for input into the pipeline as a proof of concept of how various comment sorting policies might be evaluated.

Kialo

We now overview

What is Kialo and why is Kialo “clean”?

Kialo is an online debating platform that helps people “engage in thoughtful discussion, understand different points of view, and help with collaborative decision-making.”

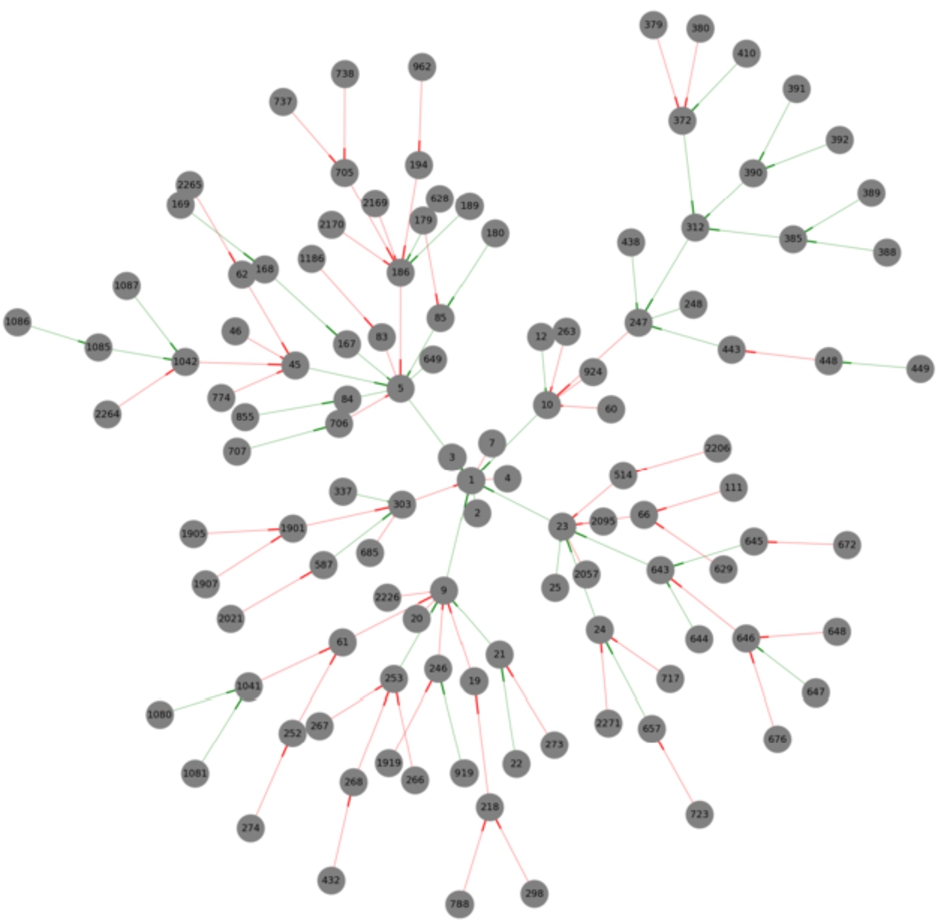

Consider the Kialo discussion with thesis,

Kialo debates are “clean” and easily represented as BAFs without the use of argument mining tools (Section 2.3). Firstly, Kialo has a strict moderation policy, which aims to keep users engaged and promotes a well-structured discussion.

In Kialo, every claim

In summary, Kialo is an online debating platform. Its highly moderated nature validates our assumptions that every claim made in Kialo debates can be treated as self-contained arguments. Further, every reply between two claims in a Kialo debate must either be a support (pro) or an attack (con). Lastly, the structure of Kialo debates guarantees that the underlying digraph is a tree. It is in this sense that Kialo debates are sufficiently “clean” for us to represent them straightforwardly as BAFs without the need for further data cleaning methods.

We scraped all Kialo debates dated up until March 2019 as follows: we used the publicly visible Kialo application programming interface (API), which is used by Kialo’s front end, to acquire all the available tags used to tag individual debates; this resulted in a list of over one thousand unique tags. We then used the same API to perform a tag-based search for debates using the previously acquired list of tags. For each debate we obtained its claims, replies, and various claim attributes such as the username of the author, its text, time of posting, and votes (related to likes, see Section 5.2). This returned 1,056 debates. We then verified this dataset by independent means [4], which gives us a high degree of confidence that this is almost all of the debate activity on Kialo, as of March 2019.

The resulting dataset

When the debates dataset was cleaned, we noticed that some nodes (less than 1% of the total) had empty text and therefore could not be considered arguments; this is possibly because the data stored in Kialo’s back end failed to synchronise with moderators’ requests for a claim to be removed. Further, some reply edges violate time coherence (Section 3.1). We delete both such nodes and edges and focus on the resulting sub-discussions, on the assumption that Kialo’s moderation policy implies that sub-discussions are also self-contained, despite being separated from the thesis, due to the conciseness of each reply. This results in

The anonymised BAF graphs from the Kialo data would be shared upon reasonable request for research and reproducibility.

We can visualise the debate in Fig. 4. This has 115 arguments where

The debate as a BAF from Example 4.2, where red edges are attacks and green edges are supports.

We now input our Kialo dataset (Section 4) into our pipeline (Section 3) and evaluate four policies.

Validating the pipeline assumptions

As stated in Section 4.1, Kialo debates are sufficiently clean that they are readily representable as BAFs, owing to Kialo’s design and moderation policy. Further, in our dataset, all debates are trees, hence all debates (and all induced subgraphs thereof) have a well-defined set of winning arguments via the grounded extension that the pipeline calculates. This verifies Steps (1) to (3) of the pipeline (Section 3.1), apart from defining the policies to sort comments by. Lastly, we do not need to perform further natural language processing, because the BAFs are already given.

Which policies to evaluate?

To apply Step (4) of the pipeline to Kialo data, we still assume that an interested reader would read each replying comment from the root in a DFS manner as this models how the reader is getting whole conversation threads without skipping comments before backtracking to the next possible thread.

For the purposes of illustrating how the pipeline works on “cleaned” real data in this paper, we compare sorting along two attributes:

Time refers to the time of posting the claim, while likes is a measure of how “good” the claim is. In our dataset, each comment in a Kialo debate is already time-stamped, hence time for each comment is well-defined. However, likes is not well-defined. Conceptually, the closest attribute available in Kialo comments is the number of

How can we relate the five-vector of votes

If a claim has

Notice if

We now define the following two

If a claim has

One criticism of rational likes is that this idea of equal weight increases across the five impact categories is unjustified in that it assumes all readers interpret these impact categories rationally. Another approach is to weigh each impact category according to the number of votes each has received across all

If a claim has

Therefore, we enhance all nodes in each BAF of our dataset with rational and empirical likes, based on their votes vector. We define the following four policies to evaluate with our pipeline (Section 3.1):

We now measure the effectiveness of the above four policies as described in Steps (5) and (6) of Section 3.1. For each debate in our dataset, we calculate and plot

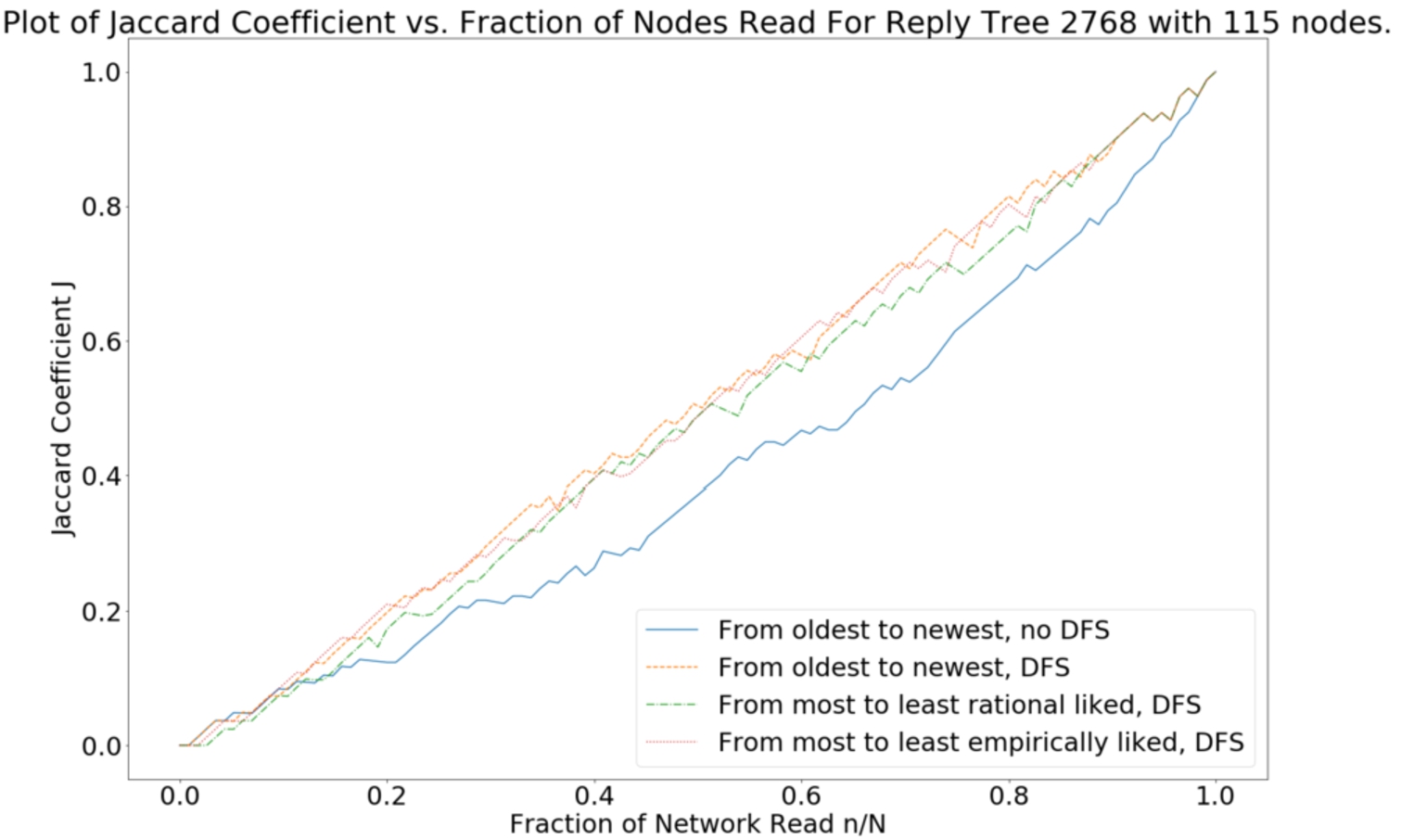

(Example 4.2 continued).

The data cleaning process has assigned the index 2,768 to this debate. As

The

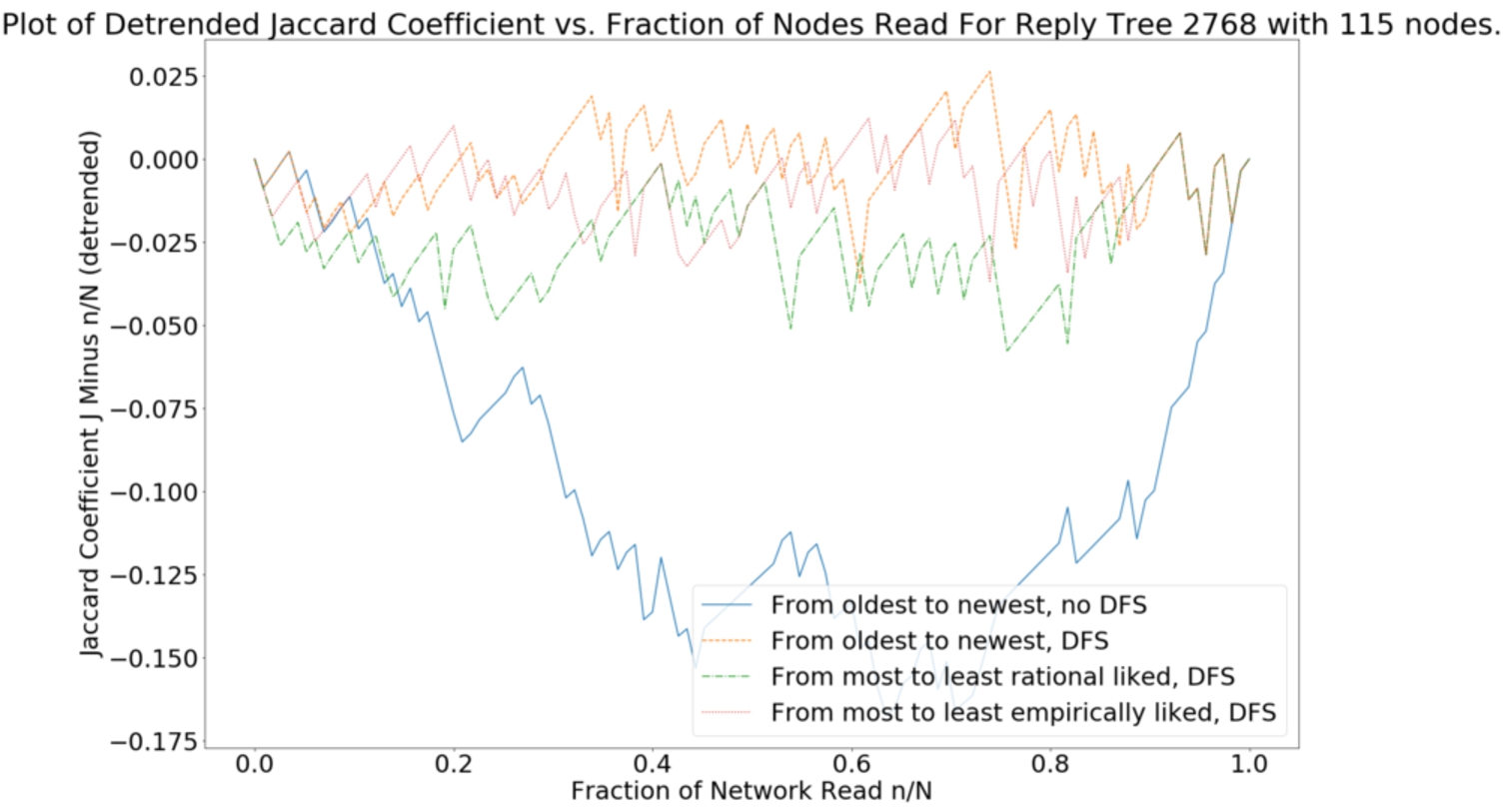

Visually, it is difficult to judge how each policy differs as all cluster around the diagonal. For each debate, we plot the

Figure 6 plots the detrended Jaccard vs.
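One plausible reading of "detrended", stated here as an assumption because the definition is not reproduced above, is that the diagonal n/N is subtracted from each Jaccard coefficient so that policies are compared by their fluctuations about the horizontal. The helper name `detrend` and the input values below are hypothetical.

```python
def detrend(curve):
    """Subtract the diagonal n / N from a Jaccard curve indexed by n = 0..N.

    Assumes the curve has one entry per amount read, including n = 0,
    so that N = len(curve) - 1 and N >= 1.
    """
    total = len(curve) - 1
    return [value - n / total for n, value in enumerate(curve)]


# Hypothetical curve for a three-comment debate.
detrended = detrend([0.0, 0.5, 0.0, 1.0])
```

Positive entries mark amounts of reading at which the reader sees more actually winning arguments than in a debate with no disagreement, and negative entries mark fewer.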

The

Visually, all policies are the same when reading up to

The conclusions we have drawn so far only apply to debate 2,768 (Examples 4.2 to 5.5). We are now interested in using our entire dataset of 4,365 BAFs to determine which of our four policies – Policies 1 and 2 are based on time (with or without DFS) and Policies 3 and 4 are based on likes (rational and empirical, with DFS) – is the most effective, i.e. maximises

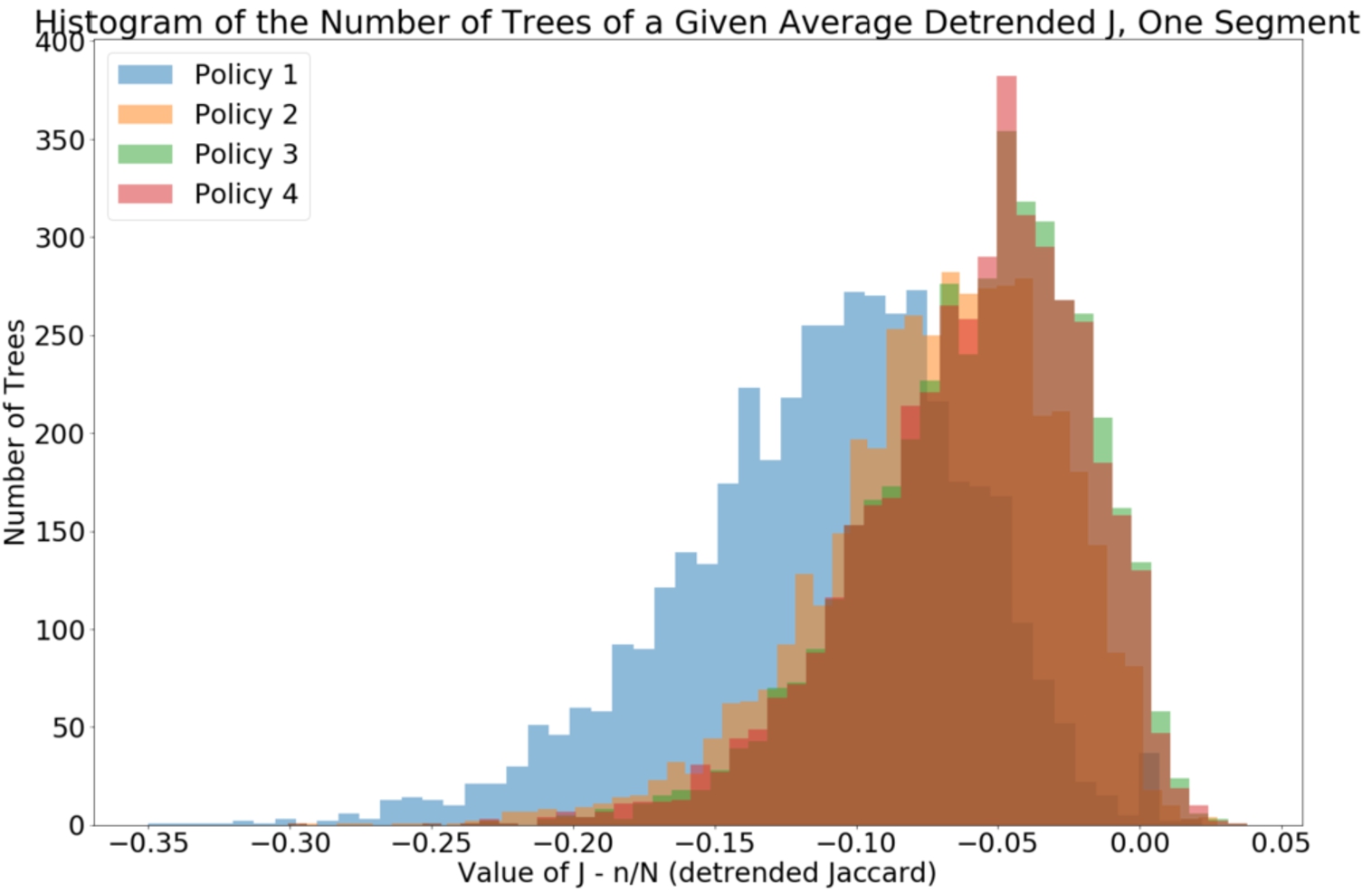

We repeat the calculation in Example 5.5 for all 4,365 BAFs in our dataset. Each version of Fig. 6 for each BAF will have some pattern of fluctuations about the horizontal for each policy. Instead of describing these fluctuations directly, we evaluate the policies over our dataset of debates in two ways: (1) across the entire range of

The

The distribution of the values of

Visually, we can see that Policy 1 is the worst policy to sort by, according to its averaged

The summary statistics for the data behind Fig. 7

We can see that comparing the medians and means of the four policies does support that sorting by likes is better than sorting by time, and further, that time with DFS is better than time without DFS. Let us make this even more precise with an appropriate statistical test. Figure 7 displays skewed distributions, therefore we should make no assumptions on the underlying population distribution for average

As this is a non-parametric situation, we apply the

The results of performing a Mann–Whitney test on the dataset behind Fig. 7, in order to answer the question, “When comparing the four histograms of each policy pairwise, are they sampled from different distributions?” This is answered in the last column of the table, at a significance level of 0.05
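For readers unfamiliar with the test, the following dependency-free sketch computes the Mann–Whitney U statistic for two samples and a two-sided p-value from the normal approximation. It is illustrative only and is not the code used to produce the table: it omits the tie and continuity corrections that statistical libraries (for example `scipy.stats.mannwhitneyu`) typically apply, its pairwise loop is quadratic in the sample sizes, and the function name and sample values are hypothetical.

```python
import math


def mann_whitney_u(x, y):
    """Return (U statistic of x, two-sided p-value by normal approximation)."""
    n, m = len(x), len(y)
    u = 0.0
    for xi in x:
        for yj in y:
            if xi > yj:
                u += 1.0      # x-value beats y-value
            elif xi == yj:
                u += 0.5      # ties count as half
    mean_u = n * m / 2.0
    sd_u = math.sqrt(n * m * (n + m + 1) / 12.0)   # no tie correction
    z = (u - mean_u) / sd_u
    p = math.erfc(abs(z) / math.sqrt(2.0))         # two-sided tail probability
    return u, p


# Hypothetical samples standing in for the per-debate averages of two policies.
u_stat, p_value = mann_whitney_u([1, 2, 3], [4, 5, 6])
reject_null = p_value < 0.05
```

The null hypothesis that both samples come from the same distribution is rejected when the p-value falls below the chosen significance level, which is 0.05 in the tables here.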

For Fig. 7, Policy 2 beats Policy 1 (average

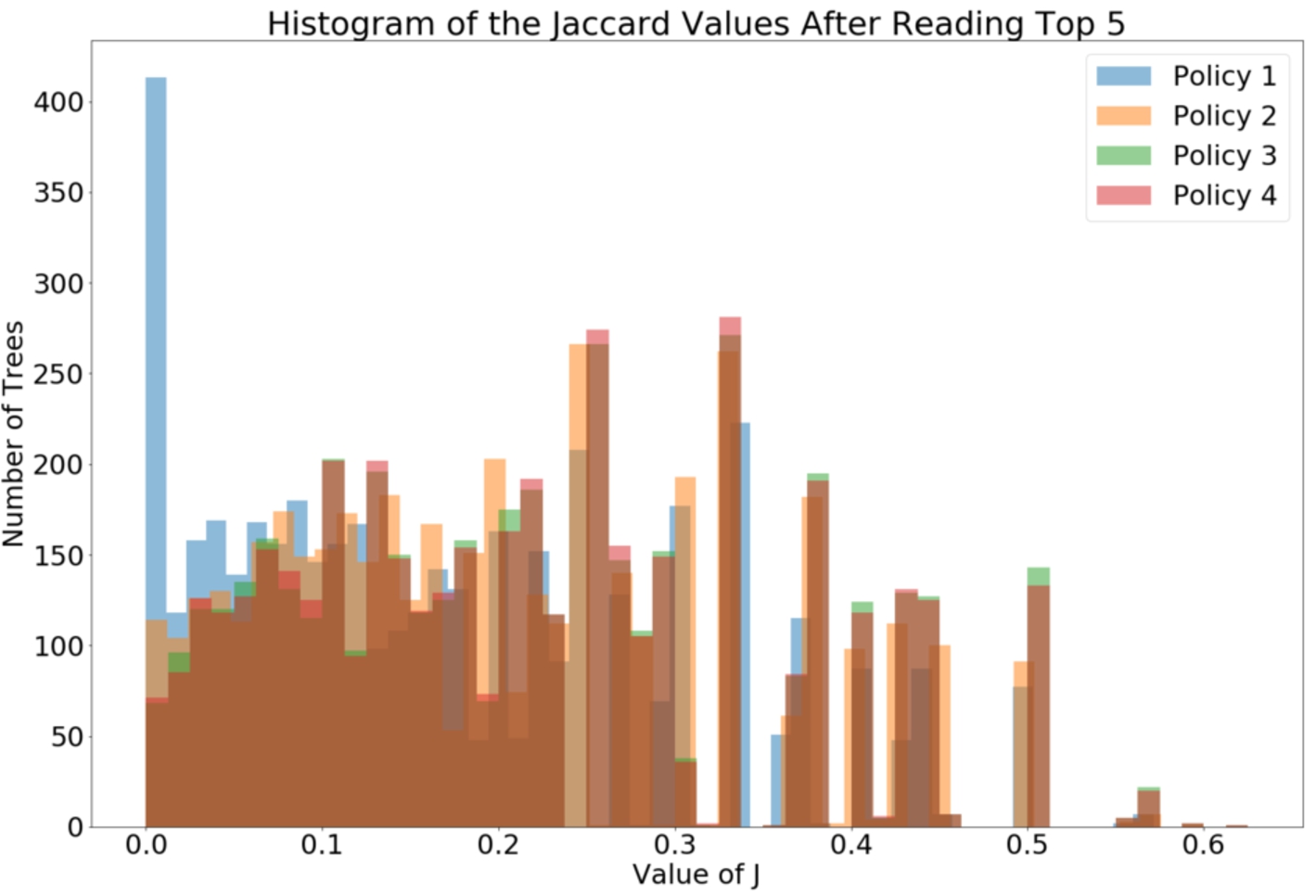

We now perform the same calculation for the case where instead of averaging

The summary statistics of the distributions of

The summary statistics for the data behind Fig. 8

When comparing medians, Policy 1 is again the least effective. Policy 2 is more effective than Policy 1 (i.e. sorting by time with DFS is better than without DFS). Both Policies 3 and 4 are more effective than Policy 2, and they seem as effective as each other. Again, we can make this precise with the same statistical tests, hypotheses and significance levels as for the summary statistics in Fig. 7 (see Table 6).

The results of performing a Mann–Whitney test on the dataset behind Fig. 8, in order to answer the question, “When comparing the four histograms of each policy pairwise, are they sampled from different distributions?” This is answered in the last column of the table, at a significance level of 0.05

We conclude that the ordinal ranking of the four policies by

Modern online debates are often so large in scale that the majority of Internet users cannot read all comments made. Many platforms that host these networks make use of comment sorting policies that can display the most suitable comments to readers who may not have read everything. We are interested in applying argumentation theory to measure how effective these policies are in displaying the actually winning arguments to readers who may not have read the entire network.

Our first contribution (Section 3) is a pipeline that takes as input sufficiently “clean” debates represented as bipolar argumentation trees, each having a unique set of (normatively) winning arguments. A sorting policy specifies the order in which to display the arguments. Each incomplete reading of the debate with respect to a policy has its own set of provisionally winning arguments from the induced sub-framework, and we measure how many actually winning arguments are present in that set using the Jaccard coefficient.

Our second contribution (Sections 4 and 5) is an application of this pipeline to Kialo debates. We argue that Kialo debates are “clean” thanks to Kialo’s moderation policy. Therefore, each debate is readily represented as a bipolar argumentation tree. The pipeline calculates, for each debate and policy, its sequence of Jaccard coefficients, one per amount of the debate read. We then aggregate these coefficients to measure how effective the policy is across all debates. As a starting point we have opted to compare policies based on sorting by likes and time, as these are the most obvious attributes available from Kialo. We find that policies that sort the comments from most to least liked are on average more effective at displaying the actually winning arguments than policies that sort comments from the earliest to the most recent.

This work uses Kialo as a case study on how we can apply argumentation theory to measure the effectiveness of comment sorting policies, taking advantage of Kialo’s “clean” nature. But the pipeline is agnostic to the kinds of debates and policies. In future work, we seek to determine how robust our observation that “sorting by likes is better than sorting by time” is for other debates. As stated in Section 3, we cannot assume that other debates are as “clean” as Kialo, so we seek to integrate some argument mining techniques (e.g. [20], or those summarised in Section 2.3) as a pre-processing step. For example, in BBC News’

We anticipate that for noisier datasets we may have to deal with trolls and bots that respectively make abusive comments or distribute spam (e.g. [5,18,30]). For such networks, it may be problematic to allow for the unrebutted comments to win by default, as they are the furthest away from the root and may be irrelevant or insulting. We have made an attempt at mitigating the effects of the leaf nodes winning by default [4], but other ideas involve changing the debate graph topology to have some leaves be symmetrically attacked. However, the resulting loss of a tree structure may mean our actually winning set of arguments can be non-unique, so which actually winning set of arguments (e.g. preferred or grounded) should we compare against? Or would different sorting policies guide readers to different sets of winners?

We can also consider other sorting policies. For example, in Daily Mail comments, the likes attribute contains both an upvote and a downvote. We may, for example, compare sorting by descending upvote with sorting by ascending downvote. As mentioned in Section 3, Daily Mail depth-2 comments are hidden under a “See all Replies” button. How does selectively revealing such comments affect the visibility of the actually winning arguments and hence the effectiveness of a policy? The four policies we have studied were chosen because they are present in most debates (Section 3), but Kialo’s user interface (UI) also displays breadth-first search, so we can investigate how effective Kialo’s UI is as a “policy”.

As for post-processing, we have alluded to the many ways the sequence of Jaccard coefficients can be aggregated in Section 5.4. We can test the robustness of our observations by considering more fine-grained averages such as averaging over e.g. the first third of

Finally, we seek to answer the question of why sorting by likes should be better than sorting by time. We hypothesise that likes is somehow related to how “good” a point the comment is making, which by standards of civil discourse raises its probability of winning and its force of argument. Future work will make these notions precise.

Acknowledgements

The authors would like to thank the three anonymous reviewers whose suggestions have greatly improved the paper. APY and NS acknowledge funding from the Space for Sharing (S4S) project (Grant No. ES/M00354X/1). SJ was funded by a King’s India scholarship offered by the King’s College London Centre for Doctoral Studies. GB and NS acknowledge funding from the Engineering and Physical Sciences Research Council (EPSRC) through the Centre for Doctoral Training in Cross Disciplinary Approaches to Non-Equilibrium Systems (CANES, Grant No. EP/L015854/1).