Abstract

As humans, we desire simple, causal narratives (Bruner, 1991; Charles & Kendall, 2024). We often want to know not just what happened but also why it happened. We generally dislike ambiguous and overly nuanced explanations (Kahneman, 2011). If only life were so simple. Chains of events unfold in ways that always have some level of uncertainty (Beghetto, 2023) and contingencies (Klass, 2023), some of which we may never know or understand.

Education researchers often try to address uncertainty in more systematic ways by specifying factors and mechanisms that attempt to explain relationships between variables of interest, including variations in outcomes (Raudenbush & Bryk, 2002). Such approaches can be helpful in reducing uncertainty but will always be insufficient in accounting for potentially important but unexplained variations.

What is often treated as “statistical noise” (Andrade, 2023) in research may include important information that we overlook in favor of more tidy explanations. We therefore may be tempted to engage in “satisficing” (Simon, 1956), where we treat moderate, large, and even modest “effect sizes” in quantitative research as indicators of success. Moreover, although identifying consistent, “on-average” effects of educational interventions can help to advance knowledge, it may have little relevance or be insufficient for understanding the effects of educational interventions on individual students in specific contexts and across time (Molenaar, 2004).

Single-subject research offers a promising alternative when it comes to understanding the effects of interventions on specific individuals and over time (Kratochwill, 2013). The more detailed or micro-longitudinal aspects of this type of research can help to identify subtle nuances that might otherwise be overlooked in other forms of quantitative research. Although promising, this approach does have its limitations, including everything from being labor intensive and lack of generalizability to potentially missing important effects, side effects, and contingencies that are not specified as variables being studied.

The same can be said of compelling qualitative narratives and cases. Although narrative accounts and cases often do highlight important idiosyncratic and contextually contingent experiences of educational interventions (Yin, 2018), they may fall short when informing teachers and other educational decision makers of the effects on individual students in other contexts and across time.

Most education researchers are careful not to make definitive claims. Few, however, take the time to actively consider side effects of seemingly successful interventions and practices (Zhao, 2017). There are notable exceptions (e.g., educational evaluators) who often actively explore intended and unintended outcomes. However, such efforts tend to be limited to specific interventions and not their evaluation studies in themselves. Indeed, conducting intensive educational evaluations and the findings thereof likely can also have side effects in specific sites and broader educational practice.

In what follows, we discuss the concept of educational side effects. We then provide examples of how researchers have applied variations on this concept when reviewing different facets of education research. We conclude by discussing how researchers can use this concept to interpret research reviewed in (and beyond) this volume and incorporated into their own programs of research.

Understanding Educational Side Effects

Educational side effects (Zhao, 2017) refer to both expected and unexpected effects of interventions and actions in education, which are influenced by a broad range of contingencies, including different and dynamically changing populations, contexts, times, and other uncertainties. Moreover, these side effects can have both positive and negative impacts on students, teachers, and the broader social project of education itself.

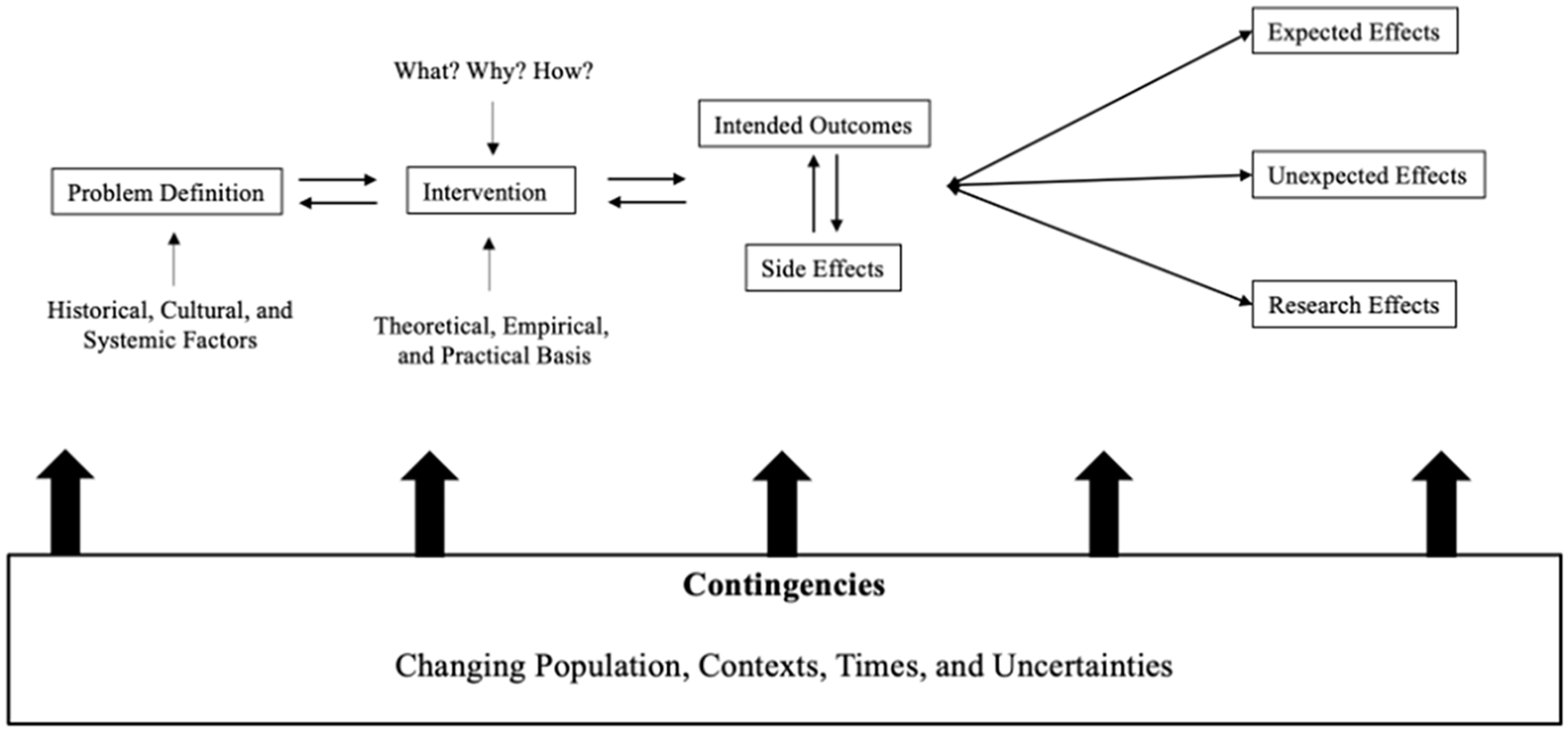

Figure 1 provides a visual process model of educational side effects that can help education researchers more actively anticipate and incorporate this often-missing element when designing their own research and when reviewing and interpreting the research of others.


As illustrated in Figure 1, educational side effects result from the implementation of educational interventions, which are designed to address an educational problem or issue. Anticipating and accounting for side effects start with clearly defining the educational issue, including the role that historical, cultural, and social contextual factors play.

Next, researchers critically consider the theoretical, empirical, or practical basis of the intervention aimed at addressing the problem. This includes assessing the logical argument of the intervention, for example: What is it about this intervention that will address the problem, why do we believe that it will, and how might it work in practice? In other words, on what basis is the design of the intervention justified? This includes attempting to specify the intended outcomes and potential side effects—what they are, why they are desired, and how the intervention will produce these outcomes and effects.

Acknowledging the inherent uncertainty of making changes in specific educational systems requires researchers to also recognize that unanticipated side effects—whether positive or negative—likely will emerge (Levin, 2013; Oakes & Lipton, 2007). Researchers and practitioners therefore need to proactively anticipate these complexities when designing and studying the effects of interventions and innovations in education.

The concept of side effects extends to education research itself because education research always has potential effects on subsequent research, policy, and practice. As mentioned, a focus on measurable, “average” findings risks overlooking idiosyncratic but potentially impactful effects (e.g., changes in creativity, motivation, teacher and student well-being). Indeed, although researchers often attempt to understand typical patterns, practitioners are concerned with how interventions and innovations will affect specific students in particular schools and classrooms.

Even qualitative research carries potential side effects because findings sometimes unintentionally influence policymakers and practitioners in potentially unhelpful ways, particularly when applied in new contexts. Qualitative research descriptions can be helpful when developing knowledge about the unique features of a particular case or set of cases; teachers and educational leaders want to understand whether and how those cases have relevance for their own students and classrooms.

Researchers can strike a better balance between generating new knowledge and meeting practical needs by accounting for contingencies throughout the entire process, that is, how effects will vary depending on even small changes in the intervention, target population’s characteristics, the specific context, evolution of outcomes, and elements of chance. Recognizing these contingencies can help researchers manage their own (and others’) expectations and refine interventions to maximize benefits and minimize negative side effects.

Central to this work is incorporating a continuous feedback loop into studies (denoted by the double-headed arrows in Figure 1). This means that all outcomes and effects, even the unintended ones, can inform modifications or even the reconsideration of an intervention or research. Indeed, our judgments about what is novel and of value in one temporal moment can (and likely will) change across time and contexts (Kaufman & Beghetto, 2023). The meaning and practical implications of research findings likely will also evolve across time, contexts, and populations, requiring researchers to actively reexamine their prior conclusions, going beyond simple replication and emphasizing continued assessment of both applicability and side effects.

We recognize that conducting education research is already challenging (Berliner, 2002). Inviting researchers to add consideration of side effects only intensifies those challenges by introducing additional uncertainties and placing additional time and resource demands on researchers. Indeed, the uncertainty and complexity inherent in education research make it difficult (if not impossible) for researchers to make accurate predictions of side effects.

Moreover, actively considering side effects when designing interventions and research on those interventions runs the risk of “paralysis by analysis” whereby researchers may avoid exploring side effects of new innovations in research and practice because it appears to be too difficult or complex. Fortunately, there is evidence (Reece et al., 2023) that actively considering potential downsides (or side effects) of innovations can address the problem of ignoring potentially negative impacts while still being able to move forward in productive ways.

Despite these challenges, researchers still have a responsibility to take a more nuanced and reflective approach when designing and interpreting results of education research. This includes engaging in both “what if” and “what if not” thinking (Beghetto, 2023) as a way to consider what might be expected from educational interventions and what might be done if our expectations do not meet reality. At this point, it may be helpful to consider some examples of educational side effects, including how other researchers have attempted to take on this challenge.

Examples of Educational Side Effects

According to the results released in 2023, the average Programme for International Student Assessment (PISA) scores in math, reading, and science did not improve; in fact, they have declined over the last 2 decades across Organisation for Economic Co-operation and Development (OECD) countries, which are some of the most economically developed nations (OECD, 2023). Similarly, the long-term National Assessment of Educational Progress (NAEP) results show insignificant improvement from the 1970s to the 2020s, a span of more than 5 decades (NAEP, 2021). Likewise, the Australian national assessment in reading and math (National Assessment Program – Literacy and Numeracy) saw no significant change over the past 10 years, that is, since its first implementation (McGaw, Louden, & Wyatt-Smith, 2020).

We can, of course, question the validity and reliability of these assessments (e.g., Berliner, 2011; Zhao, 2020). These assessments might not have assessed accurately and reliably the competencies of students in these areas. But the assessment authorities have continuously used the results to push for educational changes (e.g., Schleicher, 2018), and the results have generated significant media coverage. Some scholars have even used test scores to predict future economic growth to ask for more educational changes (Hanushek & Woessmann, 2010).

If test scores are truly an indication of educational quality, it seems that education has not gotten any better over the past few decades. Education investment has increased over the past few decades in almost every country. Massive education reforms in curriculum, pedagogy, and school organizations have been implemented. Technology has improved dramatically. Educational innovations have been introduced rigorously. Professional development efforts have bombarded teachers. Schools and communities have been turned around completely by reform efforts. But why has education not improved? Worse, it has declined, and the mental well-being of teachers and students has become worse.

There are plenty of answers. We can continue to blame schools and teachers for their “unwillingness and inability” to make the changes education reformers want. We can blame social media, video games, and other technological tools for their power to distract students from academic learning. We can blame education reformers for their mistaken approach to changing education and their unreasonable demands for improvement. We can also blame parents and society for lacking a universal view of what education should be. And certainly, we can blame a divided, unequal, racist, and unjust society for perpetuating the existing social structure. We can find evidence to support any and all of these criticisms, and education research has done that over the past few decades.

Education research, a social enterprise that is supposed to unveil problems, discover laws, find evidence, point to future directions, and guide policy and practices, is to blame as well. No matter what, education research, which involves tens of thousands of people and produces voluminous publications and countless conference sessions, should have influenced educational practices and policies over the past few decades. In the United States, for example, the influential No Child Left Behind (NCLB) law (No Child Left Behind Act of 2001, 2002) was said to be driven by scientific evidence. The Reading First program under NCLB was supposedly completely based on scientific evidence. To push for more evidence-based practices, the law authorized the establishment of the What Works Clearinghouse (Zhao, 2018b).

In fact, evidence-based practice and policymaking in education have become so popular and well accepted since the 1990s that every educator and policymaker talks about evidence whenever policy and practice are discussed. Education research is supposed to collect and provide the evidence needed to make decisions. Indeed, randomized controlled trials have increased dramatically over the past few decades, as have nonrandomized controlled yet quantitative studies. Major education journals have also favored quantitative studies. An example is the significant growth of meta-analysis studies, which has become the most favored type of research in education (Ginsberg & Zhao, 2023).

It seems apparent that the evidence-hungry and evidence-gathering industry of education research has not produced the guidance needed for improvement. There are a number of reasons. First, education research has attempted to find generalizable laws that would apply to all educational settings and all students. Although there are certainly common laws governing human development and learning and some aspects of schooling, individuals vary too much in cognition, emotion, psychosocial capabilities, and physical capacities for any law to apply to all individual students. Moreover, the diversity in living conditions (socioeconomic conditions, physical environments, family composition, community conditions, etc.) of students, facilities and resources of schools, conditions of school leaders and teachers, school organizations, and systemic requirements, such as curriculum, assessment, and teacher and school leader employment, makes it impossible to have universally applicable laws in education. As a result, any attempt to generalize findings in education research beyond their original setting must be made with extreme care. Evidence gathered in one educational setting may not apply at all to other situations.

Second, very little research in education has challenged the existing “grammar” of schooling (Tyack & Tobin, 1994), let alone questioned whether the way schools have been organized can be improved. Instead, the majority of research has been conducted within the traditional schooling framework despite the fact that today’s students are able to learn beyond classrooms and schools, with or without teachers. If the traditional schooling cannot be improved to bring better learning and more equity for all students, shouldn’t education research look beyond the current schooling framework instead of only collecting evidence within the framework? This is particularly true today, when students are expected to acquire different abilities and learning can be global and supported by technology, especially artificial intelligence, such as ChatGPT.

Third, education research has been collecting evidence, but it has failed to question the evidence. The majority of educational studies tend to collect evidence that supports their programs, policies, procedures, or positions, but they do not typically pay attention to evidence that may go against their treatments, whatever they are. In other words, we only have partial evidence in decision-making (Rigney & Zhao, 2021; Zhao, 2017, 2018b). This is the problem we focus on in this issue of Review of Research in Education.

Effects and Side Effects in Education

Evidence is key in scientific research. Empirical evidence is needed to advance or refute theoretical propositions or practical treatments. Education research, learning from medical research, has increasingly called for randomized controlled trials as the “gold standard” for data collection to ensure the quality of evidence. Although it is extremely difficult to use the gold standard in research in education all the time, there is no question that educational studies have increasingly used more scientific ways for evidence gathering. However, as mentioned before, it has failed to examine the nature of evidence.

An education treatment, be it a policy, a program, or a pedagogical approach, can have many different effects or outcomes. Some of the effects are intended and expected, but some can be unintended or unexpected. We can call the first type the “main effect” or simply “effect” and the latter “side effect.” Although not all side effects are necessarily negative, they exist nonetheless.

Side effects in education are like side effects in medicine. Recognizing side effects and making efforts to create new products that minimize them is one reason for the advancement of medicine. In medicine, there are no “wonder drugs” that cure all diseases without causing damage, but in education there are always claims of “wonder drugs” that solve all educational problems without side effects. The so-called reading and math wars are essentially uninformed battles for a panacea educational approach, as are the battles between progressive and traditional education (Zhao, 2018b). Unless education recognizes side effects and makes efforts to minimize them, it is likely that these wars will continue and that education research will continue to waste time without much improvement.

Side effects occur for a number of reasons (Zhao, 2018b). First, education has to serve a diversity of purposes. For students alone, education is supposed to cultivate academically competent, physically healthy, and socioemotionally competent individuals. But research has shown that education that promotes academic achievement or increases test scores can do harm to students’ physical health and social and emotional well-being. For instance, PISA has found a negative correlation between PISA scores and social and emotional well-being. In the 2018 round of PISA, education systems that obtained higher scores also had lower student life satisfaction (OECD, 2019). The same is true of the Trends in International Mathematics and Science Study (TIMSS), another major international assessment program. TIMSS results have repeatedly shown negative correlations between test scores and social-emotional attributes such as confidence and valuing the subject (Loveless, 2013; Zhao, 2017, 2018b). It was also found that PISA scores are negatively correlated with entrepreneurial confidence (Zhao, 2012a, 2012b, 2012c).

Why do educational systems that seem to be effective in promoting academic achievement measured by test scores harm life satisfaction, confidence, and interest in school subjects? Reasons are many. How time is spent is one. A person’s time is a constant. If a system forces students to spend all their time on academic studies, it is expected their academic achievement would improve. But they do not have time to relax or engage in other activities, which could help them develop better physical and mental health. Teaching methods that constantly rank students based on test scores can pressure them to improve test scores but certainly lower students’ confidence and interest in the subjects. This is why East Asian education systems have been working very hard to change their education to achieve better social and emotional health and have creative and entrepreneurial students (Cheng, 2011; Zhao, 2014).

In the pursuit of evidence of “what works” or evidence of main effects, education researchers have typically neglected the side effects of the same treatment on other outcomes. We have not yet seen any study that honestly and aggressively tracks side effects when it loudly reports the effects of an educational treatment. Yet we know such side effects exist in the same way any medical product, even the most common over-the-counter product such as ibuprofen, has side effects on the stomach.

Second, side effects happen because of the diversity of students education serves. What works for one group of students with certain characteristics may not work for or may even cause harm to other groups of students. For example, a study finds that although teachers’ cognitive and social abilities have little impact on the achievement of average students, they do affect different students significantly. An increase in male teachers’ cognitive abilities is likely to benefit high-achieving students and hurt low-achieving students. Teachers’ social interactive ability benefits low-achieving students (Grönqvist & Vlachos, 2016). This finding goes against the common view that high-achieving teachers are the best teachers for all students (Barber & Mourshed, 2007).
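The arithmetic behind this point can be sketched with a small example. The numbers below are invented purely for illustration and are not drawn from Grönqvist and Vlachos (2016) or any other study; the group labels and function names are likewise hypothetical. The sketch shows how a pooled average effect near zero can coexist with sizable, opposite effects in two subgroups.

```python
from statistics import mean

# Hypothetical score changes (treatment minus control) per student.
# These values are invented for illustration only, not real data.
effects_by_group = {
    "high_achieving": [4.0, 5.0, 6.0],
    "low_achieving": [-6.0, -5.0, -4.0],
}


def average_effect(groups):
    """Pooled mean effect across every student in every group."""
    all_effects = [e for effects in groups.values() for e in effects]
    return mean(all_effects)


def subgroup_effects(groups):
    """Mean effect computed separately for each group."""
    return {name: mean(effects) for name, effects in groups.items()}


overall = average_effect(effects_by_group)
by_group = subgroup_effects(effects_by_group)

print(f"overall: {overall:+.1f}")
for name, value in by_group.items():
    print(f"{name}: {value:+.1f}")
```

Under these assumed numbers, a report of the pooled average alone would suggest the treatment does nothing, while the subgroup view shows one group gaining and the other losing by the same amount.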

Similarly, one treatment may work very well and produce significant improvement in academic achievement for students in wealthy schools but may have no effect for students in disadvantaged schools. Worse, this treatment could cause students in disadvantaged schools to lose interest in school. This is no different from the side effects penicillin may cause in some people while being extremely effective in others. Likewise, the same policy that may have effects on some students can hurt others. For instance, the school choice policy that allows students to attend private schools with funding from public schools may benefit those students who have transportation and supportive families but can hurt students who do not and further hurt public schools (Zhao, 2019).

Furthermore, schools serve more than the student population. There are teachers, school leaders, parents, and the community. Educational policies such as the entire school turnaround policy could have the intended outcome of firing the school principal and half of the staff, but it may negatively affect how the teachers, parents, and community view the school, which can have long-term negative impact on students.

Side effects on different populations and individuals are fairly easy to understand. Educational treatments are designed to solve problems, but not everyone has the same problem. So the treatment may help some people solve a problem, or it can be a waste of time or cause negative impact on those who do not have the same problem. Moreover, the solution or treatment may solve a problem but can create more problems for even those who have the problem. Furthermore, the problem the treatment is designed to solve may actually bring benefits to some other people. Solving the problem actually damages the benefits.

Third, time is a major factor in education. An approach that may work well for students in the short term can result in negative effects in the long term. The decades-long argument about the effects of direct instruction versus inquiry-based instruction is a good example. Since the 1970s, thousands of studies have documented the effectiveness of direct instruction in improving students’ reading and math achievement (Hempenstall, 2012; National Institute for Direct Instruction, 2015; Pearson, 2021; Rosenshine, 1978). But the effectiveness is immediate and limited to academic achievement. Direct instruction seems to hinder the development of curiosity and creativity (Janicki & Peterson, 1981; Peterson, 1979; Schweinhart & Weikart, 1997). Moreover, in comparison to learning outcomes of inquiry-based education, the outcomes of direct instruction tend to disappear in the longer term. Students with inquiry-based learning experiences appear to have deeper understanding of the disciplinary concepts and healthier social and emotional capabilities (Klahr & Nigam, 2004; Schweinhart & Weikart, 1997).

Unproductive successes and productive failures (Kapur, 2016) are useful concepts to think about effects and side effects in terms of time. Some educational approaches are effective in producing immediate intended outcomes but hurt the development of capabilities that are valuable in the long run. The immediate success is thus success for certain, but it is unproductive for human learning. This is true with some reading approaches that emphasize and are effective in forcing younger children to recognize the mechanics of reading in first and second grades, but they are counterproductive in improving students’ reading comprehension at a later stage because reading comprehension requires much more than acquisition of phonemic awareness and basic recognition of mechanical details such as letter recognition (Gamse, Bloom, et al., 2008; Gamse, Jacob, et al., 2008).

In human development and learning, there are also capabilities, mindsets, and psychological attributes that affect a person’s life over a much longer term than short-term acquisition of knowledge. This is especially true today, when capabilities and psychosocial attributes have become extremely important in the age of smart machines (Florida, 2012; Pink, 2006; Trilling & Fadel, 2009; Wagner, 2012). Some educational treatments have been found to increase knowledge acquisition but seriously damage the development of interest, curiosity, and creativity (Bonawitz et al., 2011; Buchsbaum et al., 2011).

Finally, contexts matter. There is a long tradition of borrowing educational practices and policies across national and cultural borders (Zhao, 2018a). In the 1990s, there was a strong interest in the United States in borrowing from Japan’s teacher development policies and practices (Stigler & Hiebert, 1997) and from Singapore math textbooks (Jaciw et al., 2016). But 20 years later, these borrowing practices have not led to better education or greater educational outcomes in math (Jaciw et al., 2016). Similarly, England invested in borrowing math teaching from Shanghai, China. In the 2010s, the country published Chinese math textbooks and flew in teachers from Shanghai to help spread the Shanghai way of teaching math (Haas & Weale, 2017; Satchell, 2014). But the outcomes have not been nearly as great as had been expected (Wang, 2019). There are no studies of potential side effects in these borrowing efforts, but one can imagine the disruptions caused to schools, the financial investments in these programs, and challenges to policymakers and school leaders.

Different schools have different contexts. A country has its own educational governance structures, cultural traditions, curricular expectations, and assessment policies and procedures, plus public views and perceptions of education. Students, parents, and teachers are also different in their views of education. It is almost impossible to just copy and paste educational practices from other countries.

The same is true for schools within an educational system. In the United States, for example, different states have different ways of school governance, different public opinions of school purposes and functions, different teacher contractual agreements, and different education policies. Schools are also located in different communities: rural, inner city, or suburban, for instance. As a result, although there are plenty of commonalities, the differences can make certain treatments work better or worse. A treatment that has great effects in one school can cause unexpected damage in another school.

Consequences of Neglecting Side Effects

Recognizing and paying attention to side effects or the potential dangers of medical treatments is one of the major reasons for the medical field’s advancement. A number of major medical disasters in the world pushed the medical field to consider the safety of drugs and abandon the idea of “cure-all” snake oils. The establishment of government agencies, such as the FDA, that require the examination and disclosure of side effects resulted in significant improvement in medicine (Junod, 2008).

Education has been envying the advancement in medicine and has learned to adopt randomized controlled trials in educational experiments. But it has not learned to pay attention to side effects. There is no tradition in education research to respect the importance of side effects. There is no authority in education that demands education research to examine and disclose side effects. No education journal asks authors to report side effects. The What Works Clearinghouse is only interested in what works but not what may hurt.

This lack of attention to side effects in education has serious consequences (Zhao, 2018b). Because side effects and effects are like the two sides of the same coin, it is impossible to just have effects without side effects. Knowledge of the potential side effects can help consumers make informed decisions. Today, when publishers promote their textbooks, consultants push their professional development methods, technology companies market their products, or legislators advocate their policies, the consumers that include schools, educators, and students are only given information of effects and told how potent they are, possibly with evidence. In no place would one hear such words as “This reading program improves reading scores but can cause children to lose interest in reading,” although such warning is standard in medical products: “This drug cures headache but can cause stomachache.”

Without knowledge of side effects, consumers buy the products and implement them earnestly. But side effects, as said before, exist. Thus, inevitably, they show up. And if there are no actions to minimize the side effects, their impact can be perceived as larger than that of the main effects. The consumers’ reaction may become so strong that they abandon the treatment and search for another one. This is just one possibility. Another possibility is that the treatment may actually have no effect, but the side effects exist. In other words, it does not work but hurts. The Reading First program did not show significant impact on student reading scores, but it caused many unforeseen problems (Gamse, Bloom, et al., 2008; Gamse, Jacob, et al., 2008; Manzo, 2007, 2008). Yet another possibility is that consumers are unable to alter the situation and abandon the treatment. High-stakes standardized testing in the United States is an example. Despite evidence that it lacks the intended consequences (Hout & Elliott, 2011) and has numerous negative side effects (Koretz, 2017; Neal & Schanzenbach, 2010; Nichols & Berliner, 2007), the policy mandating standardized testing continues. The lack of educational improvement can at least be partially attributed to the neglect of side effects in education.

Another consequence is the perpetual pendulum swings of educational thoughts and treatments (Barker, 2010; Kaestle, 1985; Slavin, 1989). It is no secret that every so often, old educational ideas, which have been discarded for newer ones, come back to replace the newer ideas, which become the old ideas and come back again after some years. Although new arguments and ideas may be added, the dominant ideas do not change. Basically, education history is a journey of these swings without working together to develop better ideas.

A major reason for pendulum swings is the refusal to examine, acknowledge, and accept side effects. Although any educational intervention has side effects, the panacea or total waste mindset dominates the thinking of consumers, developers, and researchers of educational interventions. An intervention is accepted in totality as effective based on evidence of main effects or rejected in totality as ineffective based on evidence of side effects, which are often produced by opponents of the treatment as a way to discard the treatment. As a result, when an intervention is accepted, there is tremendous hope, and inevitably, side effects emerge gradually. As side effects mount to a certain level, opponents rise and win. The intervention is discarded and replaced with the old idea that was abandoned before.

Thus, if side effects were considered an inseparable part of all interventions and the proponents of any intervention honestly studied and reported side effects together with effects, all consumers would be aware of side effects, anticipate them, and find ways to accept or minimize them. They would not be shocked by the emerging side effects. If both proponents and opponents worked together to improve the intervention by increasing the main effects and minimizing side effects, which includes developing new interventions, education would have a much better chance of improving.

The lack of significant and transformative innovations in education is another consequence of neglecting side effects. Lagemann’s (2003) book, An Elusive Science: The Troubling History of Education Research, explains many factors that contributed to the “troubling history” of education research but does not point out the neglect of side effects as a factor. Yet understanding side effects is perhaps one of the most important ways to stimulate innovations in education. The purpose of studying side effects is not necessarily to negate main effects but to understand them. In some cases, if side effects overpower main effects, the treatment should be abandoned, which enables researchers to imagine new treatments. If side effects are less severe, efforts can be applied to minimize them. For example, if a treatment works well with some students but could cause damage to others, implementers could avoid applying the treatment to those students and seek new approaches for them, which stimulates more research.

In This Volume

This volume of Review of Research in Education is an attempt to bring attention to side effects in education. Interest in this topic is very strong. We received an unusually large number of proposals. After careful review, however, we found that the majority of the proposals did not address the issue of side effects as a necessary component of effects. Instead, they simply gathered evidence of negative side effects of educational treatments. In the end, we selected 11 proposals and encouraged the authors to develop full chapters. In the process, authors of two proposals informed us that they were unable to complete the review. So in this volume, we have a total of nine chapters. The following is a brief summary of these chapters and how they relate to side effects.

“Anticipating the Side Effects of Educational Reform Using System Dynamics Modeling,” by David Keith, Arya Yadama, Ellen O’Neill, and Saras Chung, proposes the use of system dynamics, a method for “conceptualizing and analyzing complex problems that are characterized by multiple interconnected and often nonlinear feedbacks,” to anticipate side effects in education. Anticipating or predicting side effects is important when studying educational interventions because it helps researchers and intervention developers know what side effects to expect.

The authors explain the method and demonstrate its power in a case study of enrollment decline in St. Louis, suggesting the system dynamics model can “add to existing education research practices by accounting for complex, interrelated feedback relationships and their effect on other areas of the system over time.”

Unlike traditional research methods that may overlook or inadequately predict the broader impact of interventions, the system dynamics method described in this chapter emphasizes the dynamic complexity of educational systems. It emphasizes the interconnectedness of variables within the system and enables researchers and policymakers to systematically explore the consequences—intended and unintended—of their actions. This approach offers a valuable tool for education researchers seeking to better understand a broader spectrum of intervention outcomes.

“The Side Effects of Universal School-Based Mental Health Supports: An Integrative Review,” by Stephen MacGregor, Sharon Friesen, Jennifer Turner, José Domene, Carly McMorris, Sharon Allan, Brenna Mesner, and Dennis Sumara, reviews studies of side effects of mental health supports. Student mental health has become a widely recognized problem, and schools have been tasked with addressing it. Although school-based mental health support programs may have the intended main effect of alleviating mental health problems, they also have side effects.

The authors’ integrative review of published studies found side effects on school staff. Efforts to implement school-based universal mental health support could add time pressure and take away resources from school staff and lead to frustration and resistance to mental health support. Staff also noticed that the outcomes varied in unpredictable ways for different students and in different systems.

This chapter underscores the importance of considering side effects in education research as it systematically identifies and describes intended and unintended outcomes of universal mental health support in schools. The authors explain that interventions can lead to a variety of positive, neutral, and negative outcomes and thereby require a comprehensive evaluation approach in education research and policymaking. Through detailed analysis, they point out gaps in current implementation practices, such as the need for better integration of mental health programs with existing curricula and more supportive organizational and leadership structures. By shedding light on these side effects, the authors advocate for a more nuanced understanding of the dynamics at play in the implementation of school-based mental health supports and encourage a more informed and reflective approach to educational interventions.

In “What Are the Side Effects of School Turnaround? A Systematic Review,” authors Erica Harbatkin, Lam Pham, Christopher Redding, and Alex Moran tackle the side effects of the U.S. school turnaround policy. School turnaround has been advocated as a major policy initiative to dramatically change low-performing schools by changing the school leader and half of the staff. Even such a seemingly promising policy action can have significant side effects.

Although the chapter does not cover studies of the policy’s main effects on student achievement, it found, not surprisingly, significant side effects on school systems, educators, and the local communities. These side effects raise the question of whether the cost warrants the dramatic school turnaround. They also suggest that there is clear evidence that the education field has an interest in exploring side effects of educational interventions.

The authors also highlight how well-intentioned interventions, often designed to address educational inequities, can generate both negative and positive unintended effects that may undermine or enhance their overall impact. For example, the disruptions to the school and local community might result in increased educator turnover or changes in school culture that were not part of the original goals.

Their detailed review, which includes both qualitative and quantitative studies, underscores the importance of taking a thorough and multidimensional approach to evaluating educational interventions. By doing so, the authors contribute to a more nuanced understanding of the impact of education reform, advocating for policies and practices that are informed by a more comprehensive appreciation of potential side effects.

The chapter “The Double-Edged Sword of Role Models: A Systematic Narrative Review of the Unintended Effects of Role Model Interventions on Support for the Status Quo,” by Catherine Verniers, Cristina Aelenei, Thomas Breda, Joseph Cimpian, Lola Girerd, Emma Molina, Laurent Sovet, and Andrei Cimpian, investigates the side effects of role model interventions, which have been a common practice in education, particularly in STEM education. The purpose of such interventions is to inspire students to pursue specific careers.

The authors hypothesized that role models as an intervention could also lead students to accept the status quo, which is inequitable, as natural and acceptable. Their systematic review of the literature found both effects and side effects in that the role model intervention indeed led students to a greater endorsement of the status quo, but it also led to greater awareness of gender bias in STEM. The authors call for more research on both effects and side effects or both edges of the role model sword.

This chapter serves as a significant example of considering side effects in education research by shedding light on the complex impacts that well-intentioned interventions, such as introducing role models to encourage STEM pursuits, can have on students’ broader worldviews. The authors highlight the importance of taking a holistic approach when evaluating educational interventions, moving beyond immediate objectives to consider how such interventions might inadvertently affirm or challenge societal inequalities.

The authors call for more comprehensive research into the broader ideological consequences of role models in STEM education. As the authors demonstrate in their review, doing so can result in a more nuanced understanding of how these interventions shape students’ ideologies about gender, society, and meritocracy in addition to intended motivational outcomes.

In “Advancing Equity and Access: ‘Debugging’ Broadening Participation in Computer Science K–12 Education,” Joshua Childs, Rebecca Zarch, Anne Leftwich, Sarah Dunton, Katelin Trautmann, and Tia Madkins review the side effects and effects of policies and practices to encourage broad participation in computer science (CS) in K–12 schools. CS for K–12 students is not necessarily new, but it has gained a lot more attention in recent years. Various organizations and government agencies have developed policies and standards to make it more accessible to all students. But as the chapter suggests, these efforts have not reached the expected outcomes. Side effects are indeed widespread in CS education and have historically resulted in disparities in access and participation among underrepresented and underserved communities.

The authors discuss the importance of broadening participation by increasing the diversity of students who engage with CS. The authors explain that this requires active examination of various dimensions of CS, such as access, targeted populations, experiences within CS education, and policy efforts at the state level aimed at broadening participation. The authors highlight ongoing barriers and suggest ways to address equity concerns, pointing to the potential positive impacts of comprehensive policy and curricular reforms aimed at making CS education more inclusive and accessible to all students.

This chapter serves as an example of considering side effects in education research by critically examining the unintended, often negative, outcomes of well-intentioned educational policies and practices in CS education. Just as “computer bugs” in CS can include unexpected or undesirable behavior of a system beyond its intended function, the concept is analogously applied to education to understand how policies may inadvertently perpetuate inequities instead of mitigating them.

By “debugging” these side effects, the authors discuss ways to better navigate and address issues of access and equity systematically. This approach acknowledges the complexities of broadening participation in CS and highlights the importance of research in guiding efforts to create more equitable educational practices that address rather than perpetuate disparities.

Mian Wang, Rachel Schuck, Kaitlynn Penner-Baiden, Skyler Olis, Cambell Ingram, and Grace Fisher investigated the side effects and effects of behavior interventions for autistic students in “Assessment of Adverse Events, Side Effects, and Social Validity in Evidence-Based Behavioral Interventions for Autistic Students.” Behavior interventions have been a popular and controversial practice in treating students with autism. This systematic review focuses on studies identified as evidence-based practices for behavioral interventions in autistic students, with a particular focus on assessing the reporting and evaluation of potentially adverse events/side effects and social validity.

Despite the positive impacts reported by these interventions on various educational and behavioral outcomes, the authors reveal a lack of systematic evaluation and reporting of potential negative consequences of the interventions from the perspectives of key stakeholders, including the autistic individuals themselves. The authors demonstrate how overlooking adverse events and side effects limits our broader understanding of the impact of interventions.

The authors discuss methodological shortcomings within the field of autism intervention research, calling for a shift toward more comprehensive programs of research that encompass not only the effectiveness of interventions but also their safety, acceptability, and long-term consequences. By doing so, the authors contribute to the broader discourse on ethical research and intervention practices that prioritize the well-being and perspectives of autistic individuals, emphasizing the need to strike a better balance between beneficial outcomes and careful consideration of potential harms to autistic students.

In “Interrogating Side Effects in Critical and Quantitative Research in Education,” Laura Vernikoff and Emillie Reagan examine the side effects of critical and quantitative research. The authors point out that quantitative research “has historically been (and continues to be) used to cause harm”; their chapter shows that it can also be used to promote equity, primarily through analyses of effects and side effects on different subgroups of the study population. They found that although quantitative research in education has made progress along the lines of racial and ethnic groups, more can be done.

They suggest that critical and quantitative research methods should be used to better understand how religion, dis/ability, home language(s), gender identity, national origin, and other aspects of students’ and educators’ multiple identities and experiences affect education as well, using an assets-based lens to better understand communities’ strengths and ways in which oppression continues to impact specific communities in different ways.

Additionally, they encourage future researchers to “contextualize data within place, space, and history rather than trying to generalize to broader populations” to examine and report side effects.

Their chapter illustrates the importance of thoughtfully considering side effects in education research by explicitly acknowledging and addressing the unintended or potentially harmful consequences of traditional quantitative methodologies. For example, the authors discuss how the rigid categorization of social groups can inadvertently perpetuate stereotypes or erase the nuanced realities of individuals’ lived experiences. They also caution against the reliance on conventional measures of statistical significance that may dismiss the importance of findings relevant to smaller or marginalized groups.

By doing so, the authors contribute to the methodological discourse by highlighting the importance of researchers actively considering the ethical implications of their research practices. In this way, the authors highlight how embracing a critical quantitative approach in education research can result in more responsible ways to handle the side effects of their methodological choices, ultimately moving the field toward more equitable and just educational outcomes.

“A Review of Posthumanist Education Research: Expanded Conceptions of Research Possibility and Responsibility,” by Jerry Rosiek, Mary John Adkins-Cartee, Kevin Donley, and Alexander Pratt, provides a systematic review of literature using posthumanism as a lens to examine education research. As a philosophical review, this chapter brings a unique perspective on education research, effects, and side effects. In particular, the posthumanistic way of thinking blurs effects and side effects.

Posthumanist philosophies challenge binary perspectives of direct realism and social constructionism, opting instead for a view where research is understood not just as a passive exploration of the world but as an act that generates ontological effects. By acknowledging that empirical research influences the world it seeks to understand, this philosophy expands researchers’ responsibility to include the examination of both intended outcomes and the diverse, often unintended side effects of educational policies and practices. These side effects might include changes in subjectivities, relationships, community dynamics, and even material conditions, which often are overlooked in traditional education research.

The chapter serves as an example of considering side effects in education research by advocating for an expanded conception of research responsibility that goes beyond the immediate, visible impacts of educational interventions. In advancing an ontologically generative view, the authors highlight the importance of accounting for the complex ways in which research practices alter the reality of educational environments and experiences.

This involves attending to the intricate, dynamic, and sometimes unexpected consequences of educational policies and practices, thus pushing the boundaries of traditional research accountability to embrace a broader, more ethical engagement with the world. Through this lens, the authors explain how posthumanist education research practices can help us not only understand the world but also actively participate in its ongoing transformation, taking into account side effects that emerge from the interplay between human and more-than-human agents within educational settings.

In “School Governance Through Performance-Based Accountability: A Comparative Analysis of Its Side-Effects Across Different Regulatory Regimes,” authors Antonina Levatino, Antoni Verger, Marjolein Camphuijsen, Andreu Termes, and Lluís Parcerisa tackle the issue of side effects of performance-based accountability (PBA), a policy practice that has gained increasing popularity in school governance over the past 20 years. PBA, endorsed for its potential to enhance the effectiveness and equity of educational systems, was first introduced nationally with the implementation of the No Child Left Behind Act through annual nationwide standardized tests. The authors argue that “PBA motivates school actors to align their objectives and practices with the learning standards and achievement goals outlined in assessment frameworks.” But PBA systems have serious side effects.

The authors systematically reviewed 133 empirical studies with a focus on PBA side effects in five domains: professional, pedagogical, organizational, students, and external contexts. They found a wide range of side effects such as teaching-to-the-test, curriculum narrowing, teacher-centered pedagogies, student marginalization/exclusion, negative effects on student wellbeing, students’ needs not prioritized, less meaningful learning experience, contradicting professional standards, teacher attrition and shortages, reshaping the test pool, channeling resources to test subjects, school segregation, and lowering school quality, among many others.

The authors note that “most side-effects are interconnected and emerge from a complex interplay of factors across these domains.” They also found that “although many side-effects of PBA undermine its intended goals of enhancing efficiency, quality, and equity in education, we also note some positive outcomes, like increased family engagement in education, more focused professional development support and improved formative feedback from school leaders to teachers.”

Conclusion

Education research is a distinct field of study because it is deeply rooted in practice. Indeed, the social project of education is a practice comprised of a broad range of participants, including students, families, educational professionals (e.g., teachers, administrators), allied practitioners (e.g., nurses, social workers, counselors, psychologists), elected policymakers (e.g., school board members, local and federal representatives), educational bureaucrats, consultants, and curricula and testing companies.

Education researchers, thereby, can and often do represent a combination of various disciplinary perspectives (e.g., psychology, economics, sociology, history, philosophy, linguistics, anthropology), including former educational practitioners who have been prepared to become researchers. What education researchers can and do study is vast, as is how they conduct their research (i.e., the methodologies and analytic techniques they employ) and why they are engaging in education research (i.e., the assumptions they hold and constraints they face).

Despite these differences, one element remains constant: Educational interventions (including education research itself) carry side effects. As we have argued in this chapter, anticipating and addressing educational side effects is often a missing or underdeveloped aspect of education research. We hope that this introductory chapter has highlighted the importance of considering side effects when studying educational interventions and related educational efforts.

We also hope that researchers will take on the challenge of incorporating consideration of side effects in their own research. This can occur before, during, and after conducting research studies. Such efforts should be informed by consideration of who might be affected by the research, the potential unexpected impacts of the research, and how the information from studies might be used by different groups (e.g., researchers, practitioners, and policymakers).

In sum, education researchers have a responsibility to develop an applied understanding of educational side effects. Although doing so will add further complexities and challenges, we hope education researchers will find some direction for how they might more actively anticipate and address side effects in their own research and when interpreting their own and others’ research for educational policy and practice.