Abstract

Research has demonstrated positive impacts of behavioral interventions on various educational outcomes for autistic youth, and implementation of these interventions in education settings has been widely advocated. However, recent studies have identified methodological shortcomings in the behavioral intervention evidence base, including lack of reporting on side effects and social validity. This review of 98 studies identified as supporting evidence-based practices by the National Clearinghouse on Autism Evidence and Practice further highlights the lack of evaluation of side effects and social validity in behavioral intervention research. Suggestions are given regarding assessment of side effects, embedding social validity into intervention, and practical takeaways for educators. Future research and practice should prioritize addressing potential side effects and advancing ethical implementation of evidence-based behavioral interventions.

Autism spectrum disorder (ASD) is a neurodevelopmental condition characterized by challenges in social interaction and communication and the presence of repetitive behaviors and/or specialized interests (American Psychiatric Association, 2013). As the prevalence of autism continues to rise (roughly 1 in 36, Maenner et al., 2023; 11.6% of the 6.5 million students with disabilities are classified as having autism; Department of Education, 2023), educators, researchers, and practitioners have made significant efforts to identify effective interventions to support the diverse needs of autistic students. A variety of approaches exist that aim to help individuals on the autism spectrum gain skills and support their ability to navigate daily life and interact with others effectively.

Evidence-Based Practices

Although there are a vast number of interventions designed to support autistic individuals, many interventions have limited (or no) evidence base. Some, in fact, are based purely in pseudoscience (Travers et al., 2016) and can be highly dangerous (e.g., chelation therapy, Davis et al., 2013; chlorine dioxide treatments, Rivera et al., 2014). With so many intervention choices, it can be extremely difficult for parents and professionals to know which interventions are backed by robust scientific evidence and which are not. It is therefore crucial that efforts are made to identify evidence-based practices (EBPs) to guide professionals such as therapists and teachers to employ effective methods (Vivanti, 2022). Although there are different ways to evaluate the evidence supporting a given intervention, a few general principles are widely accepted. These include assessment of study rigor (e.g., randomized group assignment or evidence of experimental control in single-case design studies), risk of bias (e.g., blinding of outcome assessors; objective measures vs. parent reported outcomes), and whether there are statistically or clinically significant changes in relevant outcomes (Horner et al., 2005; Reichow, 2011). Although it is not used as a primary indicator of evidence, social validity, defined as the degree to which an intervention is deemed acceptable by recipients and other stakeholders (Wolf, 1978), is also an important component to consider when identifying EBPs (Vivanti, 2022).

Presently, there are two main groups that have evaluated the extensive literature to determine which interventions and intervention packages, based on these standards, qualify as an EBP: the National Autism Center via the National Standards Project (National Autism Center, 2015) and the National Professional Development Center on Autism Spectrum Disorders via the National Clearinghouse on Autism Evidence and Practice (NCAEP). Most recently, the NCAEP Review Team published an update to their previous 2015 EBP report (Wong et al., 2015) in which they identified 28 nonpharmaceutical EBPs for use with autistic people (Steinbrenner et al., 2020). To be listed as an EBP in this report, an intervention must be supported by two group design studies, five single-case design studies, or a mix of the two designs (note that 83% of the included studies used a single-case design; Steinbrenner et al., 2020). The other main report from the National Autism Center (2015) categorized EBPs into behavioral intervention, cognitive behavioral intervention package, modeling, naturalistic teaching strategies, parent training, peer training, and so on. When comparing these two reports, it is evident that most of the 28 NCAEP EBPs fall under the category of behavioral intervention.

Behavioral Intervention as an EBP

Behavioral interventions are based on the science of applied behavior analysis (ABA). ABA was founded based on B. F. Skinner’s theory of behaviorism that posits that operant learning principles of positive and negative reinforcement (and in some cases punishment) govern our behaviors and can be manipulated to enact meaningful behavior change in individuals’ lives. Some other commonly adopted principles in the field of ABA include stimulus control and generalization, and specific behavioral techniques include extinction, prompting, fading, shaping, and chaining (Granpeesheh et al., 2009). Such intervention can be applied to change behavior across a wide range of contexts, such as improving health and fitness, rehabilitation of individuals with traumatic brain injuries, prevention of child maltreatment, and skill building in those diagnosed with developmental disabilities (Behavior Analyst Certification Board, 2023).

In the case of autism, behavioral intervention gained widespread popularity after Lovaas’s (1987) seminal yet controversial article showing that intensive behavioral intervention led to many study participants no longer qualifying for an autism diagnosis. This led to the massive behavioral intervention industry that exists today (T. Smith & Eikeseth, 2011), with behavioral intervention being one of the most common interventions provided to autistic individuals (Monz et al., 2019). One of the most common ABA interventions used with autistic children is discrete trial training (DTT). In DTT, skills are broken down into smaller, discrete behaviors, and each one is worked on individually over a large number of repeated trials. Within each trial, an adult presents a cue and/or prompt (e.g., “What is this?”), and a consequence is provided based on the child’s response. Typically, this would mean providing reinforcement upon a correct response (e.g., giving oral praise or a toy or food) and withholding reinforcement and implementing an error correction procedure in response to incorrect responses. Although DTT is generally adult-driven and conducted in a clinic setting or distraction-free environment (typically seated at a table), newer forms of behavioral intervention have utilized play-based practices and emphasize following the child’s lead within the natural environment. These interventions, termed “naturalistic developmental behavioral interventions” (NDBIs; Schreibman et al., 2015), represent a shift toward more naturalistic, developmentally appropriate, and child-centered approaches, reflecting a blend of behavioral and developmental principles. Despite still being based on the operant principles of reinforcement, NDBIs call for the use of developmentally informed intervention goals and strategies and emphasize letting the child choose activities during learning opportunities to boost motivation.
Although much behavioral intervention is delivered in clinic or home settings, it has also been identified to be effective in schools (e.g., Spencer et al., 2014), and it is often suggested that special educators incorporate behavioral intervention into the classroom (e.g., Leach, 2010).

In addition to the reviews conducted to identify EBPs (e.g., National Autism Center, 2015; Steinbrenner et al., 2020), multiple systematic reviews and meta-analyses have suggested there are positive impacts of behavioral intervention for autistic children in a variety of domains. For example, in a review of both early intensive behavioral intervention and NDBIs, Landa (2018) reported positive effects across the board, from improved communication, social, and play skills to increased developmental quotient to reduction in autism symptoms. Similarly, in a scoping review of ABA intervention studies including both controlled group design and single-case design, Gitimoghaddam and colleagues (2022) found evidence of increased cognitive, social/communicative, language, and adaptive skills and decreases in problem behavior. Howlin et al. (2009) also found that IQ scores improved after behavioral intervention (although there was considerable individual variability, and we acknowledge that IQ scores may not be the most valid outcome variable due to potential racial bias [Wicherts, 2016] and ableism [Sequenzia, 2018]). Although recent meta-analyses focused exclusively on controlled trials have pinpointed a weaker evidence base than has previously been purported, particularly due to methodological issues such as high risk of bias (e.g., Eckes et al., 2023; Reichow et al., 2018; Sandbank et al., 2020; Whitehouse et al., 2020), these studies have still found evidence of increases in areas such as intelligence and adaptive behavior (Eckes et al., 2023; Reichow et al., 2018), language/communication, and school readiness and academic skills (Whitehouse et al., 2020). It should be highlighted again, however, that all the recent meta-analyses found a weak evidence base for behavioral interventions, indicating that serious methodological improvements are needed in future studies.

Potential methodological issues in the behavioral intervention literature also go beyond intervention effectiveness. For example, single-subject design research has largely fueled the empirical backing and popularity of behavioral interventions (e.g., the majority of studies in Steinbrenner et al., 2020, are single-subject designs). The limited use of group designs in behavioral intervention research has led some to question the true effectiveness of these interventions. Moreover, additional methodological shortcomings have been identified, including the focus on short-term (Wang & Singer, 2016) and proximal (Sandbank et al., 2020) outcomes, the lack of attention to adverse outcomes (Bottema-Beutel et al., 2021a), and the lack of researcher transparency regarding disclosing potential conflicts of interest (Bottema-Beutel et al., 2021b).

Despite these methodological issues, behavioral interventions remain popular for autistic children (Monz et al., 2019) and continue to be designated as evidence-based practices (e.g., National Autism Center, 2015; Steinbrenner et al., 2020). It is likely that they will continue to be recommended clinically even as future research continues to investigate their effectiveness. In the meantime, however, other equally important questions arise, such as: Do autistic individuals and their families find behavioral intervention acceptable? and What about side effects (SEs) from behavioral intervention?

Side Effects in Education

In the medical field, the study and reporting of SEs in clinical trials are vital for patient safety and well-being (Ioannidis et al., 2004). Unfortunately, education programs have generally not adopted similar practices (Zhao, 2018). In education, the exclusive focus on proving or disproving the effectiveness of pedagogical approaches or interventions has contributed to the reality of ignoring SEs. According to Zhao (2017), this trend has led to several issues within the field of education. For example, it fails to acknowledge that some approaches may be more effective but have more significant or concerning SEs, whereas others may be less effective but have fewer adverse effects (AEs). Furthermore, most studies assess only effectiveness, rarely measuring outcomes beyond standardized test scores (Zhao, 2018). Neglecting to measure other outcomes such as personal qualities and opinions, motivation, quality of life, and social-emotional development limits our understanding of the holistic impact of interventions. Zhao (2017, 2018) called for action to adopt a balanced approach that not only probes both positive and negative effects of instruction and intervention models but also entails maximizing the positive effects while minimizing the negative impact of a given model rather than completely replacing it.

By studying and reporting SEs, researchers and educators can make informed decisions and mitigate potential harms. In the context of behavioral interventions for autistic students, it is crucial to evaluate both effectiveness and potential AEs. Concerns regarding the rigidity and prescriptiveness of interventions, inconsistencies with developmental theories, limitations for certain groups of children and contexts, sustainability of effects, suppression of learner autonomy, and hindrance of creativity must be considered. Only through comprehensive evaluations can the field make progress to avoid unintended negative consequences.

Potential Collateral Effects and Positive Side Effects of Behavioral Intervention

When implementing any behavioral intervention, there are always specific outcomes that the intervention is targeting (i.e., the primary outcomes). Although a clinical program or research study may have multiple primary outcomes, they are usually limited to the main area of need, such as language or social skills. However, behavioral interventions for autistic children are also documented to result in collateral effects in areas other than those specifically targeted for intervention. For example, R. L. Koegel and colleagues (2001) discussed how interventions targeting language can lead to decreases in disruptive behavior (e.g., R. L. Koegel et al., 1992) and that those focused on physical activity can have effects on academic and play behaviors (e.g., Kern et al., 1982). In fact, collateral effects form a major basis for pivotal response treatment (PRT; L. K. Koegel et al., 2016), a popular NDBI, wherein targeting “pivotal” behaviors has been shown to lead to improvements in other, nontargeted areas (see L. K. Koegel et al., 2016). Because “side effects” usually refers to an unintended outcome (Due, 2023), such collateral effects are often not considered SEs because it is usually hoped from the outset that outcomes will generalize beyond the specific target at hand. In fact, some interventions (e.g., Early Start Denver Model; Rogers & Dawson, 2020) are considered comprehensive in that they target a wide range of areas wherein a variety of improvements would be expected. Furthermore, collateral effects usually follow a clear developmental pathway (e.g., improved communication skills usually imply that an individual has more effective means of getting their needs met than engaging in disruptive behavior). In any event, it is important to consider how an intervention might positively impact autistic children in ways that were either unintended (positive SE) or developmentally distal from the intervention target (collateral effects).
Additionally, other variables of interest, such as quality of life or child happiness, should be considered, although prior research indicates these variables are rarely assessed (Ledbetter-Cho et al., 2017).

Potential Adverse Events/Negative Side Effects of Behavioral Intervention

Although behavioral interventionists’ goal is to enact positive changes, such interventions have been the subject of criticism due to the possibility of damaging SEs. This has mainly been discussed in nonacademic circles (e.g., social media, blogs). Although little empirical research exists in this area, Bottema-Beutel and colleagues (2021a) found that SEs or AEs were rarely evaluated in nonpharmacological intervention studies for autistic children based on their review of experimental and quasi-experimental studies. Furthermore, even when researchers have conducted systematic reviews specifically on behavioral intervention, assessment of SEs is rarely considered as something to investigate (Whitehouse et al., 2020).

Autistic advocates and allies have voiced concerns regarding both ethical and methodological shortcomings that have led to negative effects of behavioral interventions. They have highlighted issues such as masking/camouflaging to fit into neurotypical standards (Cumming et al., 2020), trauma (Freitas, 2020; McGill & Robinson, 2021), and negative outcomes of the compliance-focused nature of behavioral interventions (Sandoval-Norton & Shkedy, 2019). Moreover, the way that behavioral interventions have been glorified as the “gold standard” for supporting autistic people is a concerning outcome given the methodological issues raised. Indeed, in Sandbank and colleagues’ (2020) meta-analysis of quasi-experimental and experimental group design studies, the impact of behavioral intervention was found to be greatly reduced once study quality was controlled for.

Autistic people have voiced concerns regarding the fact that behavioral intervention predominantly focuses on teaching skills that are especially important for neurotypical—not autistic—people (McGill & Robinson, 2021; Stop ABA, Support Autistics, 2019) and as such (consciously or unconsciously) promotes “camouflaging” or “masking,” terms coined to describe when autistic people learn to engage in certain behaviors and suppress other behaviors in order to fit in or appear neurotypical (see Hull et al., 2017). This is concerning because there is some literature to support the idea that masking is linked to poor mental health outcomes (Cage & Troxell-Whitman, 2019).

Practically speaking, behavioral intervention can focus too much on compliance with adult prompting (Sandoval-Norton & Shkedy, 2019). Many people, including both autistic people and behavior analysts, have voiced concerns that an overemphasis on compliance can put participants at a greater risk for dangerous situations (e.g., risk of sexual assault, bullying, giving in to peer pressure; Lynch, 2019). Although there are no specific studies linking these two things together, the fact that disabled individuals are at a higher risk for being the victim of a violent crime (Harrell & Rand, 2010) and sexual assault (Brown et al., 2017) forces us to think about how overly compliance-focused interventions can, at worst, lead to dangerous situations for participants and, at best, teach them that compliance with others is paramount in most situations. Moreover, there are concerns that overemphasis on compliance can lead to decreased intrinsic motivation, self-confidence, and self-esteem (see Sandoval-Norton & Shkedy, 2019). It is, however, important to point out that compliance in certain situations is a necessary skill for all children to learn (e.g., following safety rules, washing hands, brushing teeth). The issue of compliance is thus a nuanced one, but the main point of this critique is to shed light on the fact that an overemphasis on compliance, particularly as it relates to compliance with nonessential demands and skills that may not be important to the intervention recipient or the autistic community at large, has the potential to be harmful. In other words, the concern is that teaching compliance using behavioral interventions may be done in such a way that ignores the autistic person’s way of being instead of focusing solely on supporting the person’s understanding of and ability to lead a healthy and safe life (Schuck et al., 2022).
Given these concerns about the potential dire consequences of an inappropriate emphasis on compliance, it is critical to bring awareness to this issue because this is in direct opposition to the intended outcomes of behavioral intervention.

Lastly, autistic individuals have reported trauma as a direct result of experiences with behavioral interventions (Freitas, 2020; McGill & Robinson, 2021). In addition to the issues previously described, some individuals report painful experiences with punishment, withholding of their favorite things, and stressful and anxiety-provoking intervention settings (Sandoval-Norton & Shkedy, 2019). One experience that has reportedly caused negative outcomes is that often in behavioral interventions, autistic participants are not allowed to engage in self-stimulatory behaviors, also known as “stimming” (e.g., encouraging “quiet hands” or “whole body listening” where autistic individuals are not allowed to engage in motor movements; Bascom, 2011; Wise, 2022), causing increased stress and leaving them without a regulation strategy or means of expression. Each of these concerns alone is reason to pause and rethink the true effectiveness of behavioral interventions. Even when the intended outcomes are met, if these adverse SEs are also occurring, it is imperative that we carefully ask ourselves at what cost are the benefits of intervention worthwhile and for whom.

Recent research also suggests that even interventions that have been designed to be child-centered may cause negative outcomes. For example, although the authors feel that NDBIs can be reformed to be in alignment with the neurodiversity paradigm (Schuck et al., 2022) and culturally diverse recipients (Wang et al., 2023), part of that reform is ensuring intervention studies assess potential adverse events. This is especially important given recent findings that autistic adults who viewed video clips of autistic children receiving PRT (a common NDBI) pointed out multiple areas of concern, such as too much emphasis on spoken language and reinforcement disrupting child-parent bonding (Schuck et al., 2024). This suggests that intervention acceptability must not be assumed just because an intervention is behavioral (as opposed to pharmaceutical) in nature or just because it is child-led and naturalistic. Nevertheless, despite advocates’ concerns, many autistic advocates are, in fact, in favor of providing supports to autistic individuals when needed (Robertson, 2010). Autistic adult research participants highly endorse certain intervention goals (Baiden et al., 2024; Waddington et al., 2024). Specifically, Waddington and colleagues (2024) found that autistic adults ranked the domains aimed at addressing harmful behavior (e.g., harming self, harming others) and improving quality of life (safety, autonomy, pursuing interests, mental health) to be two of the top intervention priorities, with almost no one indicating that any identified goals in these categories would be deemed inappropriate. In addition, despite certain complaints, when watching the videos of the PRT sessions, participants in Schuck and colleagues’ (2024) study highlighted positive aspects of the intervention, such as reinforcing attempts and following the child’s lead. 
Thus, it is not a question of whether intervention can be beneficial for autistic children—it is a question of how to optimize an intervention’s benefit-to-risk ratio.

Despite the potential benefits behavioral interventions might have for autistic children, the neglect of SE evaluation has hindered progress in the field (Rigney & Zhao, 2022; Zhao, 2018). Given the methodological shortcomings of the evidence base and the autistic community’s concerns that behavioral interventions can lead to negative outcomes, it is necessary to consistently and explicitly assess for AEs/SEs to further understand more about the acceptability (or lack thereof) of a given intervention. This is especially true regarding practices that are regarded as evidence based because this is viewed as the “gold standard” for the field. We therefore must critically assess these issues in the studies identified as EBPs, given that neither the NCAEP report (Steinbrenner et al., 2020) nor the journal article based on this review (Hume et al., 2021) mentioned AEs, SEs, or social validity. Prior systematic reviews have shown that few autism intervention or special education studies have included assessment of social validity (e.g., Bottema-Beutel et al., 2024; Callahan et al., 2017; Carter & Wheeler, 2019; Ledford et al., 2016; Snodgrass et al., 2018). We also know that there is limited evidence of assessment of AE/SEs in behavioral intervention research focused on younger children (e.g., Bottema-Beutel et al., 2021a, focused on 0- to 8-year-olds; Whitehouse et al.’s, 2020, meta-synthesis focused on 0- to 12-year-olds). However, these studies included a range of intervention types and did not exclusively focus on behavioral intervention, which is arguably one of the most criticized autism interventions (Devita-Raeburn, 2016).

Although there is evidence that social validity is being assessed more than AEs/SEs (although still inconsistent) in studies of both children (Carter & Wheeler, 2019; Ledford et al., 2016; Snodgrass et al., 2018) and transition-age youth (Bottema-Beutel et al., 2024), we know little about the assessment of AEs/SEs in this older population. It is imperative that we learn more about assessment of AEs/SEs in this group, especially because they would likely have an easier time self-reporting AEs/SEs than younger children. Also, because many prior reviews included only experimental or quasi-experimental studies, it is possible that inclusion of single-case design studies might shed additional light on the current state of AEs/SEs and social validity of behavioral interventions. Lastly, none of the prior reviews combined looking at AEs/SEs and social validity. Although these two constructs are not synonymous, they can inform one another (e.g., AEs can lead to a lack of social validity; social validity assessment can identify AEs), so it is crucial to evaluate these assessments in one review.

Current Study

In this review, we highlight the significance of studying AEs/SEs and social validity in education, specifically focusing on school-based evidence-based behavioral interventions for autistic students based on the 2020 NCAEP EBP report (Steinbrenner et al., 2020). Although prior work on AEs/SEs and social validity has mostly focused on younger children, we focused on adolescent and transition-age students (i.e., 13- to 21-year-olds). We specifically looked at school-based behavioral interventions because interventions that take place in a classroom or with other students may have AEs/SEs beyond those affecting just the students themselves (e.g., negative effects on the whole class; Pfiffner & DuPaul, 2018). This research is rooted in a disability studies in education (Baglieri et al., 2011) framework because we view assessment of AEs/SEs and social validity as a means of incorporating autistic voices into research. This directly addresses Baglieri and colleagues’ (2011) assertion that much special education research “attempt[s] to determine if an educational intervention ‘works,’ but they cannot address whether the intervention is a good thing to do to children” (p. 275).

The overall research question we ask is: To what extent do evidence-based behavioral interventions for autistic students incorporate procedures of probing and reporting AEs/SEs and social validity?

Method

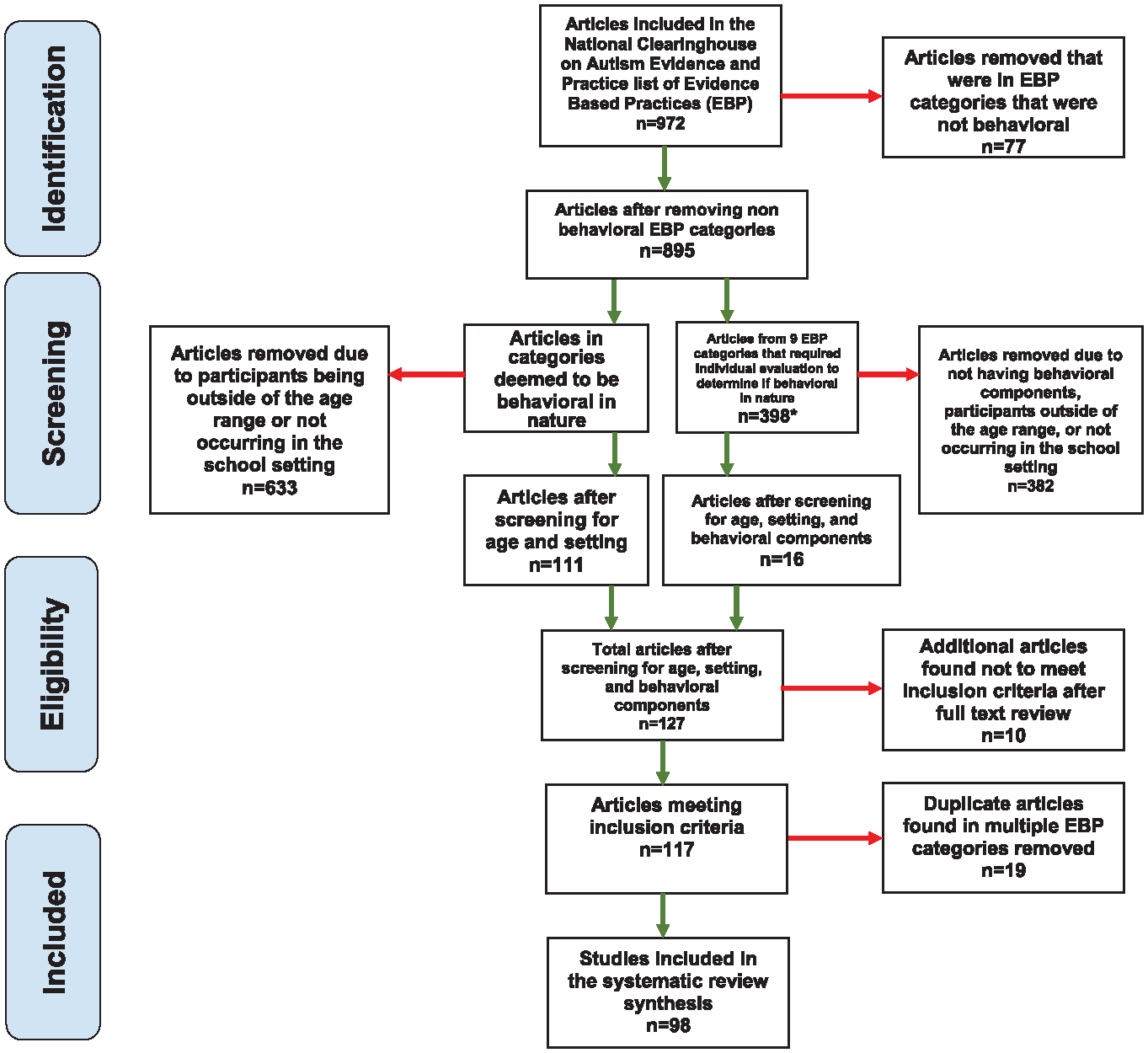

Because this review was particularly concerned with the assessment of adverse events or SEs in evidence-based behavioral interventions, we decided not to conduct our own database search. Instead, we reviewed the Steinbrenner et al. (2020) EBP report for eligible studies. This NCAEP review employed a systematic approach to accessing relevant literature, encompassing both group and single-case design studies. The methodological quality of the included articles was thoroughly evaluated to ensure reliability. As a result, the report identified a specific set of interventions that demonstrate efficacy and are representative of EBPs, which we felt made it a good starting point for identifying eligible studies.

Eligibility

To be considered for inclusion in this systematic review, studies needed to meet the following inclusion criteria: (a) be listed as providing evidence of an EBP in the NCAEP report (Steinbrenner et al., 2020), (b) include adolescent and/or young adult participants in middle school or high school (i.e., 13–21 years old), (c) use an intervention that was behavioral in nature, and (d) have at least some portion of the intervention occur at school (note that studies that reported conducting interventions in research facilities that resembled classrooms, residential treatment facilities, or “schools” that were run solely by behavioral intervention agencies were excluded).

The studies in Steinbrenner et al. (2020) were organized into 28 EBPs. Twelve of the 28 EBPs coincided with behavioral interventions as defined by the National Standards Project (National Autism Center, 2015). Studies from these 12 EBPs were automatically moved into the pool of potentially eligible studies because they met the criterion of using an intervention that was behavioral in nature. We agreed that three additional categories that were not included in the National Autism Center (2015) report were clearly behavioral in nature (i.e., behavioral momentum intervention, functional communication training, functional behavior assessment). We identified three EBPs that were clearly not consistent with behavioral intervention (Ayres sensory integration, exercise and movement therapy, and music-mediated intervention); studies in these categories were considered ineligible. Although cognitive behavioral/instructional strategies (CBISs) are clearly informed by behavioral principles, they were not considered behavioral by the National Autism Center and differ significantly from behavioral interventions rooted in operant conditioning. The Steinbrenner et al. report defined CBISs as “instruction on management or control of cognitive processes that lead to changes in behavioral, social, or academic behavior” (p. 28), highlighting the emphasis on intervening upon cognitive processes as opposed to intervening directly upon the behavior. These studies were thus excluded.

A preliminary look at the studies in the other nine categories revealed that some studies used behavioral techniques, whereas others did not. It was decided that parent-implemented intervention studies should be excluded because even if parents implemented a school-based intervention, this would not be a typical school intervention. Steinbrenner et al.’s (2020) definition of self-management (“teaching learners to discriminate between appropriate and inappropriate behaviors, accurately monitor and record their own behaviors, and reinforce themselves for behaving appropriately,” p. 119) clearly fit under the behavioral umbrella, so studies in this category were included as potentially eligible. All studies in the remaining seven EBP categories were screened individually to see if the intervention used behavioral principles.

Screening

Studies that were deemed potentially eligible based on their status as including behavioral intervention were screened for age of participants and intervention setting. Three undergraduate research assistants (RAs) screened 744 studies using an Excel file. RAs indicated whether the majority of participants were in the target age range (13–21 years old) and whether at least some of the intervention was conducted at school. If the answer was “yes” to both of these questions, the study was deemed eligible. A random 20% of each RA’s coding was reviewed by either the first or second author. Percentage agreement (n agreements / n total codes) ranged from 87% to 93% for the three RAs. Any disagreements were resolved by one of the first two authors.
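The percentage agreement statistic described above (n agreements / n total codes) can be illustrated with a minimal Python sketch. The function name `percent_agreement` and the example codes are hypothetical and are not data from this review; the sketch only shows the arithmetic under the assumption that both coders rated the same studies in the same order.

```python
def percent_agreement(ra_codes, reviewer_codes):
    """Return percentage agreement: n agreements / n total codes * 100."""
    if len(ra_codes) != len(reviewer_codes):
        raise ValueError("Both coders must rate the same set of studies.")
    if not ra_codes:
        raise ValueError("At least one code is required.")
    # Count positions where the two coders gave the same code.
    agreements = sum(a == b for a, b in zip(ra_codes, reviewer_codes))
    return 100 * agreements / len(ra_codes)


# Hypothetical eligibility decisions for ten studies (illustrative only).
ra = ["yes", "no", "yes", "yes", "no", "no", "yes", "no", "yes", "yes"]
author = ["yes", "no", "yes", "no", "no", "no", "yes", "no", "yes", "yes"]
print(percent_agreement(ra, author))  # 90.0
```

In this made-up example the coders disagree on one of ten studies, so agreement is 90%.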

The 398 studies that were reviewed to see if they included behavioral principles followed the same coding process except the Excel file also included a column to indicate whether the intervention was behavioral in nature. Note that NDBIs were included as behavioral interventions; even though NDBIs are also governed by additional developmental principles, such as following the child’s lead, they are ultimately still based on the same principles of operant conditioning. The first two authors screened these studies. After screening, a total of 127 behaviorally based intervention studies were identified as eligible for full-text review.

Full-Text Review

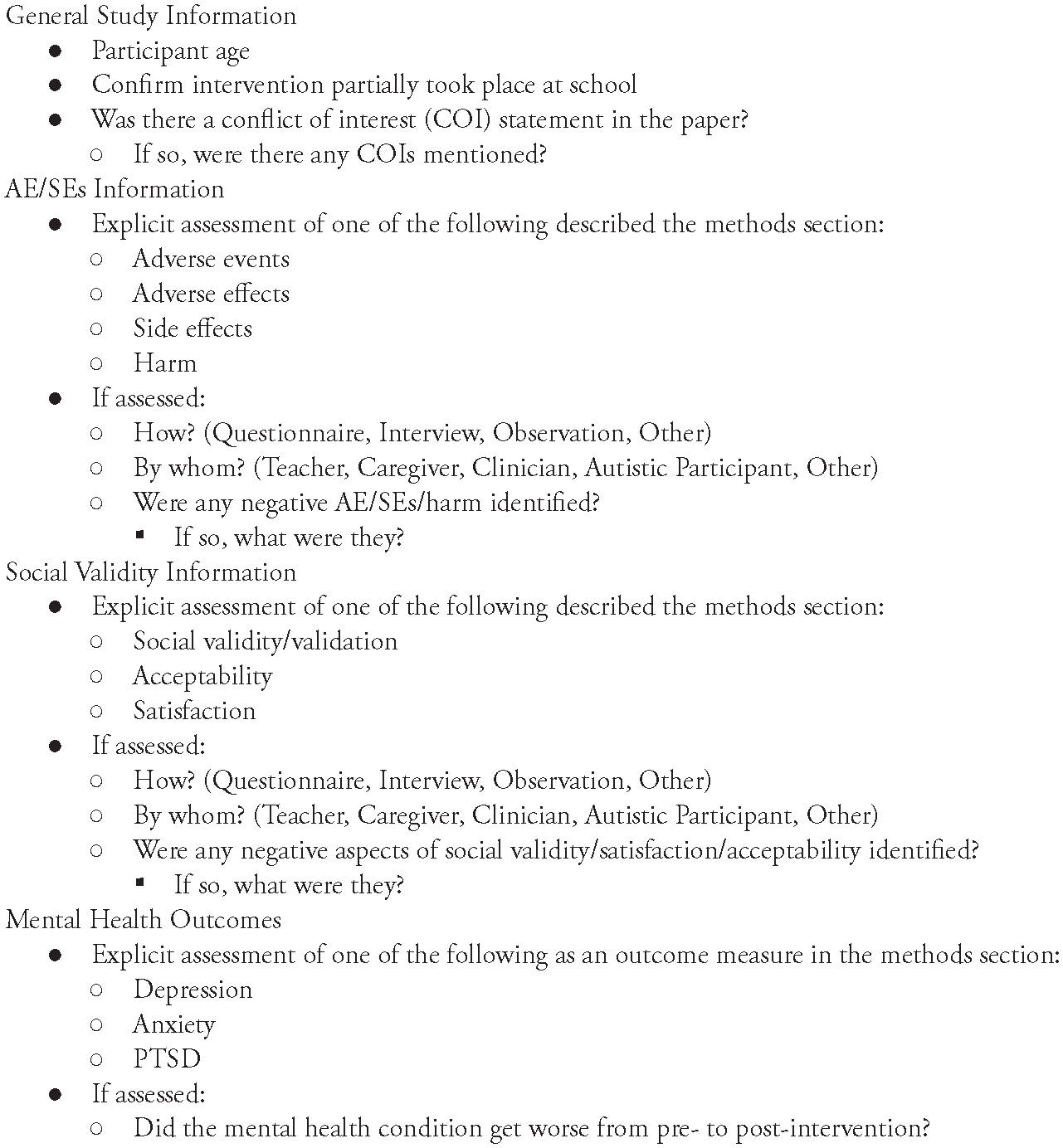

Relevant data were then extracted from the 127 eligible studies and entered into a Qualtrics survey. Extracted data included general study information (e.g., number and age of participants) as well as information on the assessment of AEs/SEs and social validity/satisfaction/acceptability (see Figure 1 for the entire coding scheme).

Figure 1. Full-Text Coding Scheme

Studies were reviewed and coded independently by three RAs. To train RAs on coding, the first author created an example video of how to code an article. After watching this video, RAs were assigned multiple practice articles (which were related to autism intervention but not included in the current review). Feedback on practice coding was provided by the first and second authors, who also coded the practice articles. After RAs were familiar with the coding process, all studies were double-coded: one RA coded all 127 studies; the other two each double-coded a subset. All discrepancies in coding were highlighted and resolved by one of the first two authors. After full-text coding, 10 studies were determined to be ineligible. A further 19 were removed because they were duplicates of other studies (i.e., they were considered as evidence for multiple EBPs). A total of 98 studies were thus included in our final review (see Figure 2 for a PRISMA diagram).
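The study counts reported above can be checked with simple arithmetic. The sketch below is only a worked tally using the numbers given in this section; the variable names are illustrative.

```python
# Counts reported in the full-text review.
full_text_reviewed = 127  # studies eligible for full-text review
ineligible = 10           # determined to be ineligible after full-text coding
duplicates = 19           # counted as evidence for more than one EBP

# Studies remaining in the final review.
included = full_text_reviewed - ineligible - duplicates
print(included)
```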

Figure 2. Screening of Eligible Studies

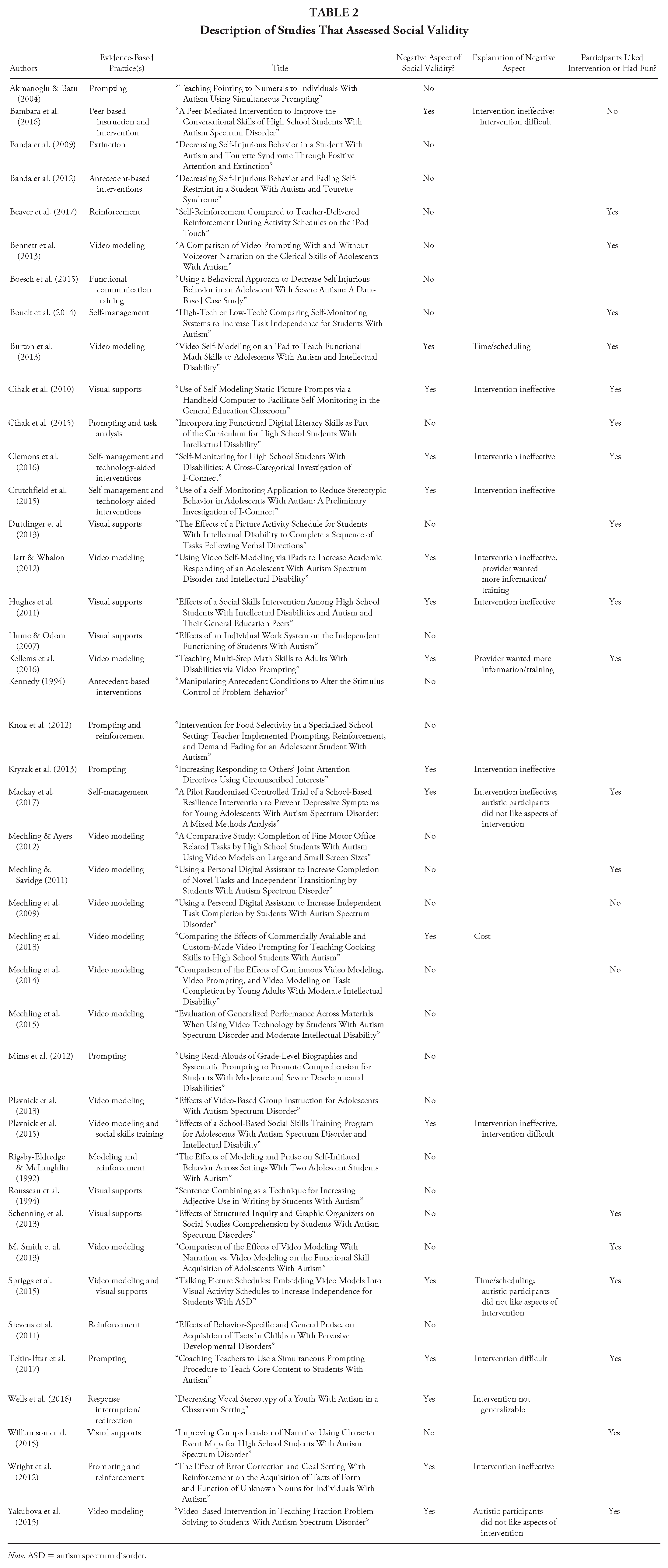

After full-text coding, the first author compiled the reported social validity results from each study that was coded as assessing social validity (i.e., the section of the article that reported on social validity was copied and pasted into an Excel file). These outcomes were then reviewed and qualitatively coded to determine (a) who was asked to provide their perspective, (b) whether the study participants reported enjoying or liking the intervention, (c) whether there was mention of anything negative about the intervention, and (d) if there was something negative, what it was (and whether it could be considered an AE/SE). Content analysis (Stemler, 2015) was used to inductively code the excerpts. To develop codes, the first author read each excerpt and took notes based on the aforementioned four questions. After reading all excerpts, coding categories were then developed for each question, and the first author coded all of the data. This coding was reviewed by the second author. The purpose of this qualitative analysis was to assess whether anything reported as related to social validity could be considered an AE or SE.

Results

All 98 studies included in this review were used in the NCAEP report (Steinbrenner et al., 2020) and are thus examples of studies that should be viewed as demonstrating positive effects of behavioral intervention on academic skill acquisition, social communication, challenging behaviors, and/or vocational skills. However, none of the articles described explicitly assessing “side effects,” “adverse effects,” or “adverse events” in their methods section (see Table 1). Similarly, none of the articles assessed for potential “harm.”

Table 1. Summary of Results

Note. Denominator for AEs/SEs and social validity assessed is the total number of identified studies (N = 98); for the remaining percentages, the denominator is the number of studies assessing social validity (n = 42). AE = adverse effect; SE = side effect.

Forty-two of the 98 articles (42.9%) explicitly assessed “social validity,” “satisfaction,” or “acceptability” of the intervention. The most common persons involved in the social validity assessment were teachers (k = 28 studies), followed by the autistic intervention recipient (k = 22), classroom staff other than the teacher (e.g., paraprofessionals; k = 12), caregivers (k = 11), peers (k = 2), persons external to the study (e.g., undergraduate raters; k = 2), and other personnel (k = 2). Studies most commonly used questionnaires (k = 32 studies) or interviews (k = 18), although some (k = 6) used other techniques, such as observational ratings from naive observers.

Of the 42 articles that assessed social validity (from anyone’s perspective), 17 (40.5%) mentioned something negative in their results. Negative social validity results were coded as follows: the intervention was ineffective (k = 11), the intervention was difficult to implement or the provider wanted more training (k = 5), autistic participants did not like aspects of the intervention (k = 3), the intervention took too much time to implement (k = 2), and there were concerns about generalizability (k = 1) and cost (k = 1). Only one of the three studies that indicated the autistic intervention recipient did not like an aspect of the intervention was coded as mentioning something that could potentially be considered an adverse event: Mackay et al. (2017) reported that some participants may not have liked the intervention because of “the necessity to talk about emotions which may have been uncomfortable for some young adolescents with ASD” (p. 3466). The study itself did not identify this as a potential AE. Eighteen articles (42.9%) mentioned that the autistic intervention recipients reported liking the intervention or finding it fun. See Table 2 for more details regarding the studies that assessed social validity/satisfaction/acceptability.
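Because the percentages above use two different denominators (all 98 studies versus the 42 studies that assessed social validity), a short worked calculation may help readers verify them. The Python sketch below uses only the counts reported in this section; the helper name `pct` and the variable names are illustrative. The share of social validity assessments that included the autistic recipient is not stated as a percentage in the text and is derived here from the reported counts.

```python
def pct(k, n):
    """Percentage of k out of n, rounded to one decimal place."""
    return round(100 * k / n, 1)


N_TOTAL = 98  # all studies included in the review
N_SV = 42     # studies that assessed social validity

assessed_sv = pct(42, N_TOTAL)     # studies assessing social validity
reported_negative = pct(17, N_SV)  # mentioned something negative
reported_liking = pct(18, N_SV)    # recipients liked the intervention
recipient_asked = pct(22, N_SV)    # autistic recipient gave their view

print(assessed_sv, reported_negative, reported_liking, recipient_asked)
```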

Table 2. Description of Studies That Assessed Social Validity

Note. ASD = autism spectrum disorder.

Only one article included a mental health outcome measure. Mackay et al.’s (2017) intervention was designed to prevent depression in autistic adolescents and showed no increase in depression. No other studies included measures of mental health (e.g., depression, anxiety, posttraumatic stress disorder).

Discussion

Prior research has demonstrated that adverse events and SEs are rarely assessed in research on autism interventions for young children (Bottema-Beutel et al., 2021a). A similar construct, social validity (the degree to which stakeholders find an intervention acceptable), is also not assessed as frequently as it should be (Carter & Wheeler, 2019; Ledford et al., 2016; Snodgrass et al., 2018). There are a number of educational practices designated as evidence-based for use with autistic individuals (e.g., National Autism Center, 2015; Steinbrenner et al., 2020), and although EBP designation should include consideration of social validity (Horner et al., 2005; Reichow et al., 2011), recent identification of EBPs has not made mention of social validity or AEs/SEs (e.g., National Autism Center, 2015; Steinbrenner et al., 2020; note that social validity is discussed in the National Autism Center [2015] report but is not used as a metric for determining EBPs). We thus have little understanding of what kind of risk is inherent in these EBPs or whether it is even assessed at all. This is particularly troubling given that a subset of EBPs, behavioral interventions, have been heavily criticized by the autistic community as leading to negative outcomes (e.g., Cumming et al., 2020; Freitas, 2020; McGill & Robinson, 2021). This study therefore critically reviewed the assessment of AEs/SEs and social validity in studies that are considered part of the evidence base for school-based behavioral interventions for autistic adolescents.

We identified 98 studies from Steinbrenner and colleagues’ (2020) report on EBPs for autistic individuals that investigated behavioral interventions with autistic individuals ages 13 to 21 and were implemented at least partially in a school setting. None of these 98 studies assessed AEs/SEs. These findings are in line with the findings from Bottema-Beutel and colleagues’ (2021a) systematic review of interventions for children up to 8 years of age. However, our results extend the prior findings; Bottema-Beutel et al.’s study looked at randomized controlled trials (RCTs) and quasi-experimental designs, whereas the majority of the studies in our review (i.e., those from Steinbrenner et al.’s, 2020, EBP report) used single-case designs. This suggests that the single-case design evidence base has the same problematic lack of risk assessment as the RCTs. It is especially disheartening that this is the case for studies in the adolescent and young adult population because many of these participants would likely have been able to participate in AE/SE assessment themselves (as opposed to younger groups, where self-report is more difficult). Moreover, given that individuals with autism are at an increased risk for mental health issues, such as anxiety and depression (Lai et al., 2019), and that this is the age range where symptoms of these can become more obvious, it is troubling that evaluating potential SEs of intervention has not been made a priority. Autistic people have reported that behavioral intervention can result in mental health challenges, which underscores the need to make assessment of these outcomes a priority in intervention studies moving forward.

Unlike assessment of AEs/SEs, almost half of the 98 selected studies assessed social validity. Over half of these studies included social validity assessment directly from the autistic intervention recipient, which has generally not been the case in other research on autism intervention in both younger children (D’Agostino et al., 2019; Hurley, 2012) and adults (Monahan et al., 2023). Many of the studies discussed positive aspects of the intervention from the perspective of the autistic participants, suggesting that some of these practices are not just effective but also have the capacity to be enjoyable (although it is important to note that we did not assess the methodological quality of these social validity assessments).

The social validity assessments also identified several negative aspects of intervention (e.g., implementation difficulties and concerns with resources). Only one study mentioned something that we considered a potential adverse event: autistic participants potentially being uncomfortable discussing emotions (Mackay et al., 2017). Although this was not identified as an adverse event by the authors of the study and we do not necessarily think that the study participants were in fact adversely affected, it is important to consider how much discomfort is acceptable within the context of an intervention. In some cases, it may be necessary to push through discomfort to learn new, meaningful skills (e.g., if one wants to learn skills to cope with anxiety). However, it is also possible that sometimes the skills being taught are not, in fact, meaningful to the individuals with autism themselves (e.g., in some cases, stopping hand flapping or pushing conversation when the autistic person is not interested). In those cases, the threshold for tolerance of any discomfort is much lower.

Altogether, the results of this review indicate that there are both positive and negative aspects of school-based behavioral interventions for autistic students. However, it is impossible to conclude that there is no risk of AEs/SEs given that no studies systematically assessed them. Although a fairly large percentage of studies assessed social validity, it is hard to draw solid conclusions from that because each social validity assessment varied widely, in line with Bottema-Beutel and colleagues’ (2024) findings regarding unsystematic assessment of social validity in research on transition programs. Indeed, social validity can refer to a number of different things—it can be assessed before, during, and/or after an intervention; it can focus on goals, procedures, and/or outcomes; it can be assessed by a variety of stakeholders; and so on—and even when a study mentions assessing it, it is not always clear exactly what participants were asked. Additionally, multiple studies’ social validity measures, particularly those from the autistic participant perspective, included only one question (i.e., some variation of “Do you like/want to continue this intervention?”). Although this clearly assesses social validity in some capacity, it is not nearly thorough enough to draw broad conclusions about the overall acceptability of the intervention, and it is definitely not thorough enough to rule out potential SEs.

Implications for Future Research and Practice

Given these concerns and echoing other calls for similar reforms (e.g., Bottema-Beutel, 2024), the field of behavioral intervention must do a better job of assessing AEs/SEs and social validity. At present, it is nearly impossible to weigh the risk-to-benefit ratio for behavioral interventions. Although qualitative evidence from autistic adults indicates potential AEs/SEs, there needs to be systematic evaluation of the intervention recipients themselves. This is crucial because worldwide, many autistic children lack appropriate supports and services (Hahler & Elsabbagh, 2015). It is likely that in many cases, the benefits of an intervention program will outweigh potential negative aspects. However, such decisions must be based on solid evidence of what those negative aspects might be. Thus, further research focusing on assessing the potential contribution of current behavioral intervention practices to trauma can enhance the field’s practices, reduce the likelihood of unintended SEs, and improve public perceptions. Based on our findings, we present a few suggestions for future research and practice of behavioral interventions.

Assessment of Adverse Effects, Side Effects, and Social Validity in Behavioral Interventions

First, AEs/SEs and social validity must be assessed in all studies (Bottema-Beutel et al., 2021a). Second, this process should follow guidelines similar to those for assessment of AEs/SEs in pharmacological studies. In particular, AEs/SEs and social validity should be assessed throughout the duration of a study, not just at the end of the study (for a discussion of why social validity assessment only at postintervention is not sufficient, see Schwartz & Baer, 1991). It may be necessary for the field, in collaboration with autistic people with lived experience, to develop a standardized list of potential AEs/SEs to be used across studies that can be updated with more possible AEs/SEs as needed. This would ensure that all AEs/SEs relevant to the autistic community are continually assessed. Such a standardized list should cover both potentially negative and potentially positive outcomes because it is possible that either or both might occur. Then, when researchers are determining whether benefits of an intervention outweigh the risks, all potential outcomes can be evaluated. This list might include items tapping into positive outcomes, such as individual and family quality of life, autonomy and self-determination, and positive affect and self-confidence. Identified potential positive SEs (or collateral gains) could then be investigated more rigorously in future research. Potential negative SEs might include negative affect (e.g., a child consistently crying or eloping during intervention), child and family stress, and evidence of masking (e.g., feeling the need to hide their autistic traits). There may need to be different versions of this assessment based on age and communication style/ability.

Although social validity is currently assessed more frequently than AEs/SEs, reporting practices must improve. At the very least, authors must be clear in how exactly social validity assessments were made. This includes detailing each question asked so that readers can determine whether questions were appropriate, nonleading, and comprehensive. The field also must work toward using and, where needed, developing more standardized social validity measures instead of each researcher creating their own or modifying an existing one because either of these actions can seriously undermine an instrument’s validity (Bottema-Beutel et al., 2024). Development of all new measures should follow established guidelines (e.g., the Patient-Reported Outcome Measurement Information System, PROMIS, 2013; American Educational Research Association et al., 2014) to ensure validity and reliability. This includes careful attention to item development, assessment of participants’ response processes to refine items and ensure construct validity, and psychometrics based on piloting with a large, representative sample.

Although assessment of AEs/SEs and social validity is necessary, there may be barriers to assessing these in certain populations. For example, very young children and/or individuals with communication difficulties may not be able to self-report using a traditional interview or questionnaire. In these cases, parent and teacher/clinician reports are invaluable, and empowering these reporters to carefully consider the possibility of AEs/SEs in behavioral intervention is critical. However, it is still necessary to assess AEs/SEs and social validity from the autistic individual’s point of view even when it is difficult. Such alternative assessments could include behavioral coding of affect as a proxy for intervention acceptability (for an example, see Robinson, 2011). Although this is a good first step toward assessment of negative intervention outcomes, because it can likely identify many situations in which the intervention recipient does not accept the intervention (e.g., if they are always trying to get away from the interventionist or if they are consistently exhibiting negative affect), it should be noted that there may be issues with neurotypical people trying to read autistic individuals’ emotions (Brewer et al., 2016), especially because autistic people’s emotional expressions do not always match the neurotypical “standard” (Keating & Cook, 2021). Other alternatives include Autism Voices, a semistructured interview designed to elicit feedback from autistic research participants who may have limited or no spoken language. The interview includes pictures and specific steps for utilizing the pictures to elicit binary responses (e.g., yes/no) from individuals who are not verbally fluent. Being flexible in the way data are collected (e.g., through spoken, written, or device-assisted language; for an example, see Vincent et al., 2022) is also necessary to ensure all voices are captured.

Furthermore, assessment of all outcomes needs to continue long after the intervention portion of a research study is completed (see Rigney & Zhao, 2022). Even when it comes to assessing maintenance of primary outcomes in interventions for autistic children, there is little standardization and, crucially, little understanding of long-term maintenance (Pokorski & LeJeune, 2022). Therefore, any risk versus benefit assessment is being made with incomplete data because we do not know what any long-term risks or benefits might be. Many of the concerns brought up by autistic advocates are about long-term effects of behavioral intervention (e.g., exhaustion and possible mental health issues due to years of masking, McGill & Robinson, 2021; susceptibility to abuse due to enforced compliance, Lynch, 2019). These long-term issues may not be captured in an end-of-study questionnaire or interview, particularly if the child is young and lacks insight or has yet to form a personal identity. Therefore, researchers must follow up with study participants and their families longitudinally to continually assess their views on the intervention’s goals and distal outcomes (Wang et al., 2023). The adoption of research models like the sequential multiple assignment randomized trial (Collins et al., 2007) can help facilitate the systematic application of personalized approaches within behavioral interventions. Lastly, for both assessment of AEs/SEs and social validity, it will also be important for researchers to critically analyze individual differences; it will not be sufficient to present measures of central tendency when determining potential risk because this could ignore important phenomena that happen for only a subset of participants (see Ioannidis et al., 2004).

Improving the Evidence-Based Practice Designation Process

Changing the process for EBP designation may speed up and improve the quality of assessing AEs/SEs and social validity. Specifically, if large-scale organizations, such as the NCAEP, used high-quality assessment of these outcomes as a criterion for EBP designation (as is suggested by Horner et al., 2005, and Reichow, 2011), this would encourage researchers to make this a priority. Additionally, because group design research is becoming increasingly popular in our field, the standards should be readjusted to reflect what is considered the “gold standard” of research and require the use of RCTs. This would push researchers to continue to prioritize more rigorous research designs. Moreover, the number of studies and participants should be reconsidered. As it stands, the NCAEP criteria for establishing a practice as evidence-based require positive effects in (a) at least two high-quality experimental or quasi-experimental group design studies conducted by two different researchers or research groups or (b) at least five high-quality single-subject design studies conducted by at least three different researchers or research groups, totaling at least 20 participants. Alternatively, a practice can be designated as an EBP if there is one high-quality randomized or quasi-experimental group design study and a minimum of three high-quality single-subject design studies conducted by at least three different researchers or research groups across all studies (Steinbrenner et al., 2020). Requiring additional studies and participants (especially those from diverse backgrounds) from the start could address the fact that interventions are being designated EBPs but are shown to have weaker positive effects in meta-analyses (e.g., Eckes et al., 2023; Reichow et al., 2018; Sandbank et al., 2020; Whitehouse et al., 2020). This indicates that the standards are perhaps too low. Furthermore, in addition to including evaluation of AEs/SEs and social validity, “high quality” studies should also include transparent conflict of interest statements (Bottema-Beutel et al., 2021b).
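To make the designation thresholds summarized above concrete, the following Python sketch encodes the three routes as a simple decision rule. It is a minimal illustration based only on this paragraph's summary of Steinbrenner et al. (2020), not the NCAEP's own operationalization; the `Study` fields and the function name are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Study:
    design: str          # "group" or "single_case" (hypothetical labels)
    high_quality: bool   # met the quality standards
    research_group: str  # researcher or research group that ran the study
    n_participants: int


def meets_designation_thresholds(studies):
    """Check the three evidence routes as summarized in the paragraph above."""
    hq = [s for s in studies if s.high_quality]
    group = [s for s in hq if s.design == "group"]
    single = [s for s in hq if s.design == "single_case"]

    def n_teams(subset):
        return len({s.research_group for s in subset})

    # Route 1: at least two group design studies by two different teams.
    route_group = len(group) >= 2 and n_teams(group) >= 2
    # Route 2: at least five single-case studies, three teams, 20 participants.
    route_single = (
        len(single) >= 5
        and n_teams(single) >= 3
        and sum(s.n_participants for s in single) >= 20
    )
    # Route 3: one group study plus three single-case studies, three teams overall.
    route_mixed = (
        len(group) >= 1 and len(single) >= 3 and n_teams(group + single) >= 3
    )
    return route_group or route_single or route_mixed


# Hypothetical evidence base: five single-case studies from three teams.
example = [
    Study("single_case", True, team, 4)
    for team in ["A", "A", "B", "B", "C"]
]
print(meets_designation_thresholds(example))
```

Note that nothing in this rule refers to AEs/SEs or social validity, which is the gap the recommendations in this section are meant to address.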

Practical Takeaways for Educators

Given that very little is known about AEs/SEs of behavioral EBPs, it will take time before the field has a better understanding of what is acceptable versus unacceptable about such interventions. It is therefore crucial for educators to take steps to actively incorporate socially valid strategies that are likely to reduce AEs/SEs into their practice. For example, one behavioral approach that holds promise for addressing these considerations is NDBIs (Schreibman et al., 2015). As discussed earlier, these interventions emphasize child-centered learning and leveraging student interests to enhance motivation. NDBIs excel in their strengths-based approach, focusing on what students with autism can do rather than their challenges. NDBIs are thus promising in several regards (Schuck et al., 2024; Wang et al., 2023). Although reform is still needed to ensure NDBIs are acceptable to the autistic community, adopting a neurodiversity-aligned NDBI approach may ultimately help promote positive and meaningful outcomes for autistic individuals. However, for this to come to fruition, future studies should take a community-participation approach (e.g., Fletcher-Watson et al., 2019), in which autistic students and their families are involved in all stages of clinical trial or education program development. This approach can lead to the inclusion of diverse perspectives and promote collaborative decision-making, ultimately leading to interventions that better meet the needs and preferences of autistic students and their families.

It may also be useful for educators to incorporate principles of trauma-informed care (TIC) into their practice. This is particularly important given that some autistic adults report trauma from therapy, school, and the diagnostic process (Anderson, 2023; Kerns et al., 2022; Rumball et al., 2020). Based on this information paired with the fact that we have no empirical information on potential AEs of interventions and that it will take time to sufficiently gather this information, clinicians should provide intervention from the assumption that autistic individuals have experienced trauma and allow that to inform their support. According to Rajaraman and colleagues (2022), four core commitments of TIC can guide this integration: (a) acknowledge trauma and its potential impact; (b) create environments that prioritize safety and trust by building rapport with students and identifying alternatives to intrusive restraint procedures, reducing the risk of retraumatization; (c) foster student autonomy and shared decision-making by providing opportunities for choice making and validation throughout the intake and treatment development process; and (d) choose intervention options that focus on teaching adaptive skills.

Incorporating principles of NDBIs and TIC into behavioral EBPs can help ensure that students with autism feel safe during intervention delivery. Emphasizing control, choice, and autonomy for students can minimize the risk of retraumatization and empower their participation in the intervention process. These approaches can also enhance the social validity of EBPs and potentially reduce AEs/SEs.

Limitations

Although this review provides important information regarding the assessment of AEs/SEs and social validity in research on school-based EBPs for autistic individuals, it is necessary to highlight several limitations. First, although our coders who screened Steinbrenner et al.’s (2020) studies were reliable in their coding, it is possible that some studies may have been missed in our initial screen. Similarly, although all articles were double coded, complete coding accuracy cannot be guaranteed. It is also important to note that we did not do our own search of the literature to find relevant articles and instead focused on articles included in the NCAEP review report (Steinbrenner et al., 2020). Although we did want to specifically assess studies that constitute the evidence base for already designated EBPs, it is possible that there are behavioral intervention studies that are superior examples of AE/SE assessment but were not included in our review. Similarly, we focused on whether studies explicitly described assessing AEs/SEs and/or social validity in their method section. We limited our reporting to this explicit assessment because we wanted to focus on the extent to which AEs/SEs and acceptability are a priori prioritized in behavioral EBPs, as opposed to being an afterthought. It is thus possible that some additional studies might have briefly mentioned AEs/SEs or satisfaction/acceptability in the discussion section. Although it is important for researchers to systematically assess AEs/SEs and social validity, these anecdotal comments could be reviewed in future research.

This review also did not examine how studies of behavioral EBPs assessed long-term effects (whether primary or collateral). Future research should evaluate this aspect of EBPs because it is possible that many EBP lists are based on positive results from immediately or shortly after the conclusion of the intervention. Finally, our research team does not have any autistic team members. It is possible that if autistic researchers had coded the social validity outcome results, they would have identified other things that might be considered AEs/SEs.

Conclusion

The findings of this review reveal an alarming reality that AEs/SEs are rarely evaluated or reported in behavioral intervention research. Despite the reported positive effects of behavioral EBPs, the lack of risk assessment and attention to adverse outcomes is concerning. It is crucial for researchers and educators to study and report SEs to make informed decisions and mitigate potential harms.

Several recommendations are made for the future of behavioral interventions. First, enhancing the assessment of AEs/SEs in behavioral interventions is essential. Researchers must systematically evaluate and report on the potential negative consequences of interventions to ensure student safety and well-being. Furthermore, embedding social validity considerations into all phases of implementing EBPs can contribute to a comprehensive understanding of intervention effects and potential SEs. This requires involving diverse stakeholders, considering cultural preferences, and promoting student autonomy and choice throughout the intervention process. Lastly, recognizing the impacts of trauma and incorporating TIC principles in behavioral interventions can minimize the risk of retraumatization and promote student dignity and autonomy.

In conclusion, although there are both positive and negative aspects of school-based behavioral interventions for autistic students, the lack of systematic assessment of SEs is concerning. Future research and practice should focus on enhancing risk assessment, integrating social validity considerations, and embracing trauma-informed approaches. By doing so, the field can advance both the effectiveness and ethical implementation of evidence-based behavioral interventions, ultimately benefiting the well-being and development of autistic students.

Footnotes

Acknowledgements

The authors do not have any direct financial interests to disclose in relation to the publication of this chapter. However, it should be noted that RKS and KMPB have been employed by several university autism centers that deliver naturalistic behavioral intervention. KMPB is also a board-certified behavior analyst and works at a clinical organization based in the community. MW has not been directly involved in the development of naturalistic developmental behavioral intervention models but has some research collaboration with the developers of the pivotal response treatment model.