Abstract

This chapter provides a systematic review of research published between 2006 and 2022 on the use of artificial intelligence (AI) platforms in writing instruction. We theorize writing and platforms as complex ecologies, investigating the interplay of their relations and implications for educational equity. Our findings identified three functional categories of AI platforms in writing instruction (assistive, assessment, and authentication) and revealed a focus on the technical dimensions of platforms and their intersections with the cognitive dimensions of writing. Finally, we found that a focus on equity was notably absent from our corpus. Taken together, these findings suggest an agenda for equity-oriented research and pedagogy that confronts aspects of platform and writing environments that have, to date, been omitted from the empirical record.

Over the last decade, artificial intelligence (AI) has emerged as a pressing topic in education research, policy, and practice, prompting a flurry of white papers, policy briefs, special issues, and new research journals. The urgency of these conversations has been amplified by the rise of new AI-based platforms like ChatGPT, an AI chatbot that generates text in response to users’ prompts, spurring debates about their impact on writing instruction and practice (Shields, 2023; Thaker, 2023). In light of these conversations and alongside burgeoning research on critical platform studies in education (e.g., Decuypere et al., 2021; Nichols & Garcia, 2022; Sefton-Green, 2022), we turn a critical lens on how AI platforms for writing have been studied in education research to date. This timely intervention foregrounds issues of equity in conversations about how new forms of AI may shape the future of writing, teaching, and learning.

Despite AI’s presence in the education literature since the term’s initial popularization in the 1950s, the meaning of “artificial intelligence” can be elusive. Whereas early AI research focused on the development of computers capable of modeling human cognitive states (what theorists call “strong/general AI”; Searle, 1980), the phrase increasingly refers to any data-processing system that generates or responds to predictions using computational inferences (Williamson & Eynon, 2020). Rather than simulating abstract mental processes, these “weak AI” systems are designed to solve concrete application problems, from the daring (e.g., self-driving cars) to the mundane (e.g., text autocorrect), by classifying and correlating large stores of data (Bechmann & Bowker, 2019). The methods for processing this information are varied and travel under a range of imposing names (e.g., neural networks, machine learning, deep learning, natural language processing [NLP], data analytics), but they are packaged in a common architecture, known familiarly as a “platform.”

Although most people associate “platforms” with tech giants like Google/Alphabet, Amazon, and Facebook/Meta, the word applies to any digital app, service, or infrastructure that facilitates social and economic exchange, including exchanges between weak AI systems and the diverse settings of their use (Gillespie, 2010; Nichols & Garcia, 2022). This helps to explain why the escalating interest in AI in education over the last decade has coincided with the widespread integration of data-processing platforms into school administration and instruction, as well as into learning in informal environments (Decuypere et al., 2021; Nichols & Dixon-Román, in press; Pangrazio et al., 2022). In this chapter, we are interested in how this “platformization” (Poell et al., 2019) of education (the ways that the social and technical dimensions of platforms permeate and shape the social practices of teachers and students) has shaped writing in K–12 schools, particularly the role of AI in “platform-mediated writing instruction.” By this, we mean platforms that use AI to support, enhance, or evaluate writing. Although some of these technologies have long histories in educational institutions (e.g., platforms for automated essay assessment or plagiarism detection), the roles of others in teaching and learning are still being negotiated (e.g., “generative AI,” such as text predictions on a smartphone or text generation tools in Gmail or Google Docs).

In keeping with the larger theme of this volume, this chapter aims to consider how an ecological orientation to writing and platforms, one that takes seriously the complex cognitive, social, technical, and political-economic relations that animate each and their dynamic interplay with one another, can intersect with robust conceptualizations of equity to illuminate patterns and elisions in the emerging literature on platform-mediated writing instruction. Although scholars have long framed access to and implementation of emerging technologies as strategies for addressing inequities in educational resources and experiences (cf. Roumell & Salajan, 2016), particularly in terms of closing the “digital divide” (e.g., Dolan, 2016), research has clearly documented that narrow approaches to educational technologies can reinforce and exacerbate inequities (Garcia & Lee, 2020; Reich & Ito, 2017). Consequently, it is imperative to develop more robust approaches to equity that move beyond bias, access, or inclusion in AI research (Mayfield et al., 2019) to acknowledge historically situated systemic and institutional harms and work to counter these harms through “the intentional promotion of thriving across multiple domains for those who experience inequity and injustice” (Osher, Pittman, et al., 2020, p. 18). Such an approach to supporting people to thrive across myriad social, cultural, socioemotional, cognitive, and socioeconomic dimensions challenges narrow, instrumentalist approaches to educational technologies and emphasizes “deeper learning” (Mehta & Fine, 2012) as core to developing equity-centered pedagogy and practice with new technologies (Heinrich et al., 2020; Noguera, 2017). This orientation positions equity not as something “achieved” but as a dynamic process shaped by complex ecological relations among people, places, and things (e.g., de Roock, 2021; Gutiérrez, 2016; Gutiérrez et al., 2017; Pinkard, 2019; Vossoughi et al., 2016).
Drawing on expansive understandings of equity as a dynamic and active relational process, we argue that an ecological orientation to the platformization of writing instruction is necessary for confronting the challenges that AI platforms pose not only for literacy learning and practice but also for research that seeks to address educational inequities.

An Ecological Framework for Examining Writing and Platformization for Educational Equity

We approach the subject of platform-mediated writing instruction from an ecological orientation, attending to “writing” and “platforms” not as discrete objects of study but as relational, multidimensional assemblages (Decuypere et al., 2021; Kucer, 2001). Environmental historians suggest that although the concept of an “ecology” is not always precise, it is useful for examining the multivalent and mutually constitutive relationships that link surroundings to that which they surround (Benson, 2020). This utility is evinced not only in the everyday invocations of ecological metaphors in popular discourse (e.g., “the political environment,” “our media ecosystem”) but also in the explicit uptake of environmental analysis across a wide range of disciplines, including philosophy of science (Canguilhem, 2008), anthropology (Ingold, 1993), geography (Harvey, 1993), human development (Bronfenbrenner, 1986), gender studies (Haraway, 2016), Indigenous/decolonial studies (Whyte, 2018), linguistics (Garner, 2004), and public policy (Bullard, 2018). The field of education, too, has a history of scholarship that draws on environmental perspectives to spotlight the complex relations that contribute to learning processes. Vygotskian learning theory, for instance, is expressly concerned with “the relations between human beings and their environment, both physical and social” (Vygotsky, 1981, p. 19). More recent work, likewise, has continued to parse the multiple dimensions of activity that give shape to diverse learning ecologies (e.g., Barron, 2022; Gutiérrez, 2016; Hornberger, 2003; Pinkard, 2019).

Importantly, ecological orientations have proven to be useful resources for illuminating structural inequities and imagining equitable alternatives in diverse contexts for learning. Scholars have shown that an ecological understanding of learning invites active investigation not just of how a learning subject encounters a given object but also of the wide range of relations that intermediate this encounter (e.g., C. D. Lee, 2017; C. D. Lee & Daiute, 2019; Nasir et al., 2020, 2021; Pinkard, 2019). Nasir and colleagues’ (2021) RISE framework, for instance, takes an expansive view of learning, describing it as an ecological interplay of four dimensions:

(1)

Beyond offering a more holistic understanding of learning, ecological perspectives like the RISE framework provide a map that can help to identify when research, policies, assessments, curricula, or practices veer into provincialism—that is, where one part of the ecology gets conflated with the whole. As Nasir and colleagues (2021) suggested, it is often in such reductions that ableism, racism, and other inequitable practices get reinscribed in the structures of education. In particular, literacy is often reduced to individualized cognitive activity, which has an alienating effect on many students by perpetuating systemic biases including ableism and racism. In oversimplifying the complex ecology of learning, atomizing approaches to literacy learning prioritize certain ways of knowing, being, and learning over others, thereby marginalizing diverse learners and reinforcing existing onto-epistemic hierarchies and inequities (Warren et al., 2020). Ecological approaches focused on the equitable learning and thriving of youth emphasize the dynamic interplay of multiple actors and the need to intentionally counter inequality across multiple domains over time (Osher, Pittman, et al., 2020).

We can recognize ecological orientations as serving (a) a descriptive function, in making visible a set of relations that constitute and animate a phenomenon (e.g., learning); (b) a diagnostic function, in being useful for analyzing (mis)representations of these relations and their implications (e.g., uneven scholarly attention to certain dimensions of learning, leading to racialized constructions of “ability”); and (c) a design function, in providing insights, via diagnostic analysis, into where structural interventions might be most effective in confronting inequities (e.g., instructional practices attuned to the breadth of relations involved in learning). In this chapter, we articulate an ecological model of platform-mediated writing to engage primarily in the first two of these functions (describing the terrain of the topic and characterizing its qualities, emphases, and omissions), and we conclude with suggestions for how this model might contribute to design and intervention addressing educational inequalities in the future.

We draw on these ecological framings of descriptive, diagnostic, and design functions to address the three questions that guided our review:

An Ecological View of Writing

The need for ecologically oriented approaches to integrating AI platforms in writing instruction has been well established in studies of writing and technology, ranging from Warschauer and Ware’s (2006) call for more contextualized understandings of how automated writing evaluation (AWE) is used in teaching and learning to Cotos’s (2014) concern that AWE scholarship continues to ignore the “ecology of implementation” (p. 59). Since the development of Project Essay Grader (PEG) in the 1960s, AWE systems have centrally focused on evaluating the content, structure, and quality of writing, often through automated essay scoring (AES) engines. Even with the development of AI platforms that include qualitative feedback, revision assistance, and adaptive support (e.g., via intelligent tutoring systems), that orientation toward evaluation and assessment has persisted, with writing measured through discrete skills and limited text types (Ware, 2011).

These concerns about the implications of such a pervasive focus on assessment have been outlined in position statements from professional organizations (e.g., Conference on College Composition and Communication, 2014; National Council of Teachers of English, 2013) and echoed in reviews showing how AWE systems focus on formal correctness and error assessment of written products rather than social, multimodal, and contextual understandings of writing processes (e.g., Deane, 2013; Nunes et al., 2022; Stevenson, 2016; Vojak et al., 2011). Although studies suggest affordances associated with AI platforms in writing instruction (e.g., language support, immediate feedback, autonomy, multiple opportunities for revision), concerns regarding how AI platforms frame and shape writing instruction in practice, particularly in relation to structural inequities, raise important questions about how such technologies should be developed, implemented, and studied. Mayfield and colleagues (2019), for example, outlined these equity concerns for machine learning in education, urging researchers to extend the current (and still nascent) focus on fairness and algorithmic bias in AI platforms by looking to lessons drawn from the long history of equity research in education. They reviewed areas that can enrich an equity focus beyond algorithmic bias and fairness, including issues of representational harms (i.e., how technologies represent people and cultures), culturally relevant pedagogies (i.e., how cultural knowledge can be integrated as an asset), linguistic imperialism (i.e., how technologies foreground prestige languages), and surveillance capitalism (i.e., how technologies monitor people’s behavior, often for profit).

The present review responds to such calls to develop expansive, nuanced approaches to equity in AI research and implementation by examining the complex ecological and ideological relations that comprise writing practices. Writing’s dynamic relations have been addressed in transdisciplinary scholarship by evolutionary anthropologists (Leroi-Gourhan, 1993; Rotman, 2008), rhetoric and communication scholars (Haas, 1996; Peters, 2016), and education researchers (Cooper, 1986; Gutiérrez, 2008); an ecological orientation to these relations requires a multiperspectival approach that includes cognitive, sociocultural, critical, semiotic, and embodied dimensions.

In the field of education, cognitive research on writing aims to understand “human performance, learning and development, and individual differences by analyzing thinking or cognitive processes” (MacArthur & Graham, 2016, p. 24). A cognitive orientation typically understands writing as a process that can be observed and measured, involving skills and strategies for regulating the writing process, the writing environment, and writing behaviors (Bereiter & Scardamalia, 1987; Graham & Perin, 2007; Hayes & Flower, 1980; Zimmerman & Risemberg, 1997), often independent of the sociocultural contexts in which a writing task occurs (Freedman et al., 2016).

Sociocultural dimensions of writing situate writers and their writing in particular social, cultural, historical, political, and material contexts (e.g., Gee, 1990; Heath, 1983; New London Group, 1996; Scribner & Cole, 1981; Street, 1984). Researchers working from this perspective understand writing as a social practice, or socially developed and patterned ways of employing technology, knowledge, and skills in activity (Scribner & Cole, 1981, p. 236). The significant role that digital technologies have played in expanding authoring and dissemination opportunities in recent decades (Coiro et al., 2014; Mills, 2016) draws particular attention to complex relations among the cultural, symbolic, and material tools that comprise writing.

Critical perspectives outline writing’s role in perpetuating values and power structures that can create and maintain social inequities (e.g., Brandt, 1998, 2009; Canagarajah, 2023; Kirkland, 2004; Luke, 1995). Research in this tradition has argued that everyday literate activities position subjects in “relations of power” (Luke, 2003) that are determined by social, economic, and historical forces. For example, Brandt’s (1998) concept of literacy sponsorship refers to the range of agents, both individual and institutional, that “enable, support, teach, model, as well as recruit, regulate, suppress, or withhold literacy—and gain advantage by it in some way” (p. 166). Contemporary scholarship demonstrates how writing continues to be bound up with political and economic projects intended to reproduce raced, classed, and gendered social relations (e.g., Baker-Bell, 2020; Randall et al., 2022; Wargo, 2021), and digital composition raises new questions about how literacy is accomplished through technologies that are neither deterministic nor innocent (de Roock, 2021; Dixon-Román et al., 2020; Nichols & Johnston, 2020). Of specific concern are language normalizations, which can perpetuate oppressive ideologies around the need for “correct” spelling and grammar or hegemonic raciolinguistic ideas of standard or academic language varieties (Flores & Rosa, 2015).

A semiotic account of writing assumes that representational practices span a range of linguistic and nonlinguistic meaning-making resources, including image, alphabetic writing, speech, and moving images (Bezemer & Kress, 2008). A social semiotic perspective focuses on the representational affordances and constraints of different communication modes and the epistemological and communicational effects of multimodal designs (Hull & Nelson, 2005). Research on multimodal composition highlights the texts, social worlds, and selves that youth bring to their writing and the ways in which changing text forms and opportunities to circulate texts require writers to analyze and write for diverse rhetorical and social contexts (e.g., de los Ríos, 2020; Higgs & Kim, 2022; Kelly, 2018).

Embodied perspectives in writing research attend to the physical, bodily acts of writing: the manipulation of fine-motor skills; the movement and positioning of hands, arms, and body; and the use of visual, aural, and tactile senses (e.g., Haas, 1996; Haas & Witte, 2001). Within a broader embodied cognition framework, which posits that human cognition emerges from the “interplay of task-specific resources distributed across the brain, body, and environment” (Mangen & Balsvik, 2016, p. 100), viewing writing as an embodied practice makes “the distinction between mind and body . . . a specious one” (Haas & Witte, 2001, p. 416). With the increasing prevalence of digital and networked composition tools, researchers have paid particular attention to how different elements of embodied experience, such as sensation and movement, impact writers’ relationships to their texts (e.g., Ehret & Hollett, 2014).

An ecological orientation to writing assumes that writers both shape and are shaped by the interconnected systems in which they are situated. Interrelated cognitive, sociocultural, critical, embodied, and semiotic dimensions of writing highlighted in foundational and current writing research suggest that composition is irreducible to any one of these dimensions and requires an ecological orientation to capture its complexity. Engaging an ecological perspective of the systems, purposes, and practices that shape writing allows for analyses that illuminate the diversity of human learning and development mediated by writing and the ways in which systemic and ideological biases related to writing can reinforce educational inequities.

An Ecological View of Platforms

As with writing, an expansive transdisciplinary literature has suggested that platforms are not simply digital tools for performing tasks; rather, they are complex environments that consolidate a range of competing interests in their design and use (Bratton, 2016; Garcia & Nichols, 2021; Nichols & LeBlanc, 2021; Williamson, 2018). In her influential framing of platform relations, media theorist José van Dijck (2013) suggested that platform ecologies are best understood as an interplay of social, technical, and political-economic dimensions and called for research into the dynamic intersection of each.

The social dimension refers to the uses and outcomes of platform processes. This is the dimension most frequently taken up in education research. For instance, when scholars or practitioners talk about platforms in terms of a category of use (e.g., administrative, assessment, instructional, communicative), they are describing their social function. Research in this area has focused on both the users and content of digital platforms: the consumers and producers of digital content and inquiry into the nature of what is generated and circulated in digital environments.

The technical dimension refers to technical and algorithmic features that shape how digital platforms function: the code, data processes and algorithms (i.e., weak AI), interfaces, default settings, and interoperability of various technical dynamics that enable, constrain, and condition function on the platform. These elements coax user behaviors in specific ways that accord with their own internal logics. Research in this area includes inquiry into platform interfaces, the amalgam of visual features such as buttons, graphic designs, layout, and so on that mediate our subsequent interaction with the software’s underlying code (Lynch, 2017; Monea, 2020; Vee, 2017). Others have examined the datafication of interaction on platforms, tracing the logic of multisided markets into the positioning and surveillance of students in school (Robinson, 2020; Williamson, 2018). This research has led to efforts to develop frameworks for understanding where and how students and teachers might carve out space for critiquing and resisting these extractive logics (LeBlanc et al., 2023; Nichols & LeBlanc, 2020).

Finally, the political-economic dimension refers to the commercial and regulatory interests that shape how a platform is designed. This includes its governance and ownership and the business model its parent company relies on to turn a profit. Consequently, a host of agents and entities (venture capital firms, corporate boards and directors, government regulations, and lobbying interests) now function as key “sponsors” of literacy (Brandt, 1998) in digital platforms, exerting significant power over even the most mundane features. The political-economic dimension informs activities in the other dimensions: A business model that depends on the generation of salable data, for instance, may be coded at the technical level to harvest excess data from users, and these technical features, in turn, can take on new social meanings as users operate the platform.

An ecological orientation foregrounds that platforms are not reducible to any one of the social, technical, or political-economic dynamics. Instead, platforms as processes emerge from the performative relations among all three (Nichols & LeBlanc, 2021). These competing and agonistic interests at work in platform ecologies, consequently, can create significant challenges for educational equity. Administrative and learning platforms, for example, cede tremendous control to private companies that are largely unaccountable to schools or the public (Kerssens & van Dijck, 2021) while also reorganizing instruction and assessment to match the governing logics of the data processes and commercial interests at work in these systems (Dixon-Román, 2016). Scholars working in critical race science and technology studies have demonstrated how platforms can inherit discriminatory design features from their developers and owners (Chun, 2021), normalize racialized surveillance (Browne, 2015), and enroll marginalized communities into exploitative data processes (McMillan Cottom, 2020).

Learning to Write With AI Platforms: Centering Equity in an Ecological Approach

We situate our inquiry into the platformization of writing in ecological models of learning that center educational equity (e.g., Gutiérrez, 2008; Gutiérrez & Vossoughi, 2010; Ito et al., 2013; Nasir et al., 2021; Osher, Pittman, et al., 2020; Pinkard, 2019), providing a critical lens for understanding the role of AI platforms in education practice as the presence of these new technologies increases in K–12 classrooms. Guided by these models, our analysis is grounded in the assumption that understanding how writing instruction is mediated by AI platforms requires attention to the ecologies of writing and platforms, their interrelations, and their situatedness in broader political, social, cultural, technical, and economic systems that guide their development and use. This ecological orientation invites a broader perspective on what it means to learn with AI platforms, one that is attuned to how learning is facilitated by platforms and how learning and platforms intermediate one another. Because “ecological thinking changes the way we see the ecosystem itself” (Hecht & Crowley, 2020, p. 269), a dynamic, relational framing of platforms and writing can support new understandings of each and new approaches to research and practice that engage robust conceptualizations of educational equity.

The importance of addressing the equity implications of writing with AI platforms is illustrated by scholars representing a wide range of disciplines who have demonstrated that the logics, imperatives, and assumptions embedded in these technologies may reinforce or exacerbate structural inequities in practice (e.g., Benjamin, 2019; Chun, 2021; Dixon-Román, 2016; Macgilchrist, 2019). These scholars contend that processes mediated by digital platforms are always deeply intertwined with broader social, historical, cultural, and political contexts. As such, it is crucial to critically examine and address the equity implications of these processes to ensure that the use of digital platforms in writing education does not reproduce and intensify existing social, racial, and economic disparities (de Roock, 2021; Dixon-Román et al., 2020). For example, Benjamin (2019) explored how platform technologies contribute to the disproportionate surveillance, policing, and incarceration of marginalized youth, including in educational settings. Chun (2021) critiqued the idea of “software determinism,” arguing that the seemingly objective algorithms that drive digital platforms are in fact shaped by social and cultural biases. Aguilera and de Roock (2022) offered the concept of proceduralized ideologies to describe how digital platforms, particularly those used in education, can shape knowledge production in ways that privilege certain perspectives and marginalize others. The robust scholarship in this area underscores the need for a critical approach to the use of digital platforms in educational contexts, one that is attuned to the potential for these technologies to perpetuate or deepen social inequities (Macgilchrist, 2019).

An ecological approach to studying AI platforms in education can surface these tensions while also attending to the potential benefits and risks of platformization for addressing educational inequities, which are considerable and have been the subject of innumerable scholarly articles, tech company sales pitches, and school district initiatives. For example, digital platforms are recognized for their potential to reduce barriers for students with disabilities; foster essential digital literacy skills; offer innovative ways to engage students in learning, such as in STEM education or through digital games; facilitate personalized, flexible, and student-centered teaching approaches; or provide hybrid or online access to resources (e.g., during COVID-19). It is clear that digital platforms can have positive impacts for individual students, classrooms, and even schools (see, e.g., Harris et al., 2023). However, an ecological approach to studying the impacts of these platforms suggests that potential benefits need to be considered in relation to potential harms. For example, educational technologies have become intertwined with accountability movements, standardized testing, and deficit-oriented views of educators toward students and their communities, perpetuating the ongoing exclusion of student identities and abilities from classrooms (Margolis, 2017). The pedagogical approaches that expose marginalized students to simplified and belittling remedial curricula have merely been digitized, further entrenching these issues in the educational landscape (Watkins, 2018) and raising concerns about how educational technologies are implemented and framed in schools (Heinrich et al., 2020).
And although the digital divide is very real, it has often been used to frame marginalized communities, especially African Americans, from a deficit perspective (e.g., Brock et al., 2010; Lewis Ellison & Solomon, 2019) and justify reforms that prioritize investment in educational technologies at the expense of other crucial areas (Cuban, 2001; Greene, 2021; van Dijck, 2021). Amid these complexities, we suggest that ecological orientations to studying AI platforms in writing instruction can center educational equity in research and surface these intersecting, dynamic relations between writing and platforms in teaching and learning contexts.

Methods

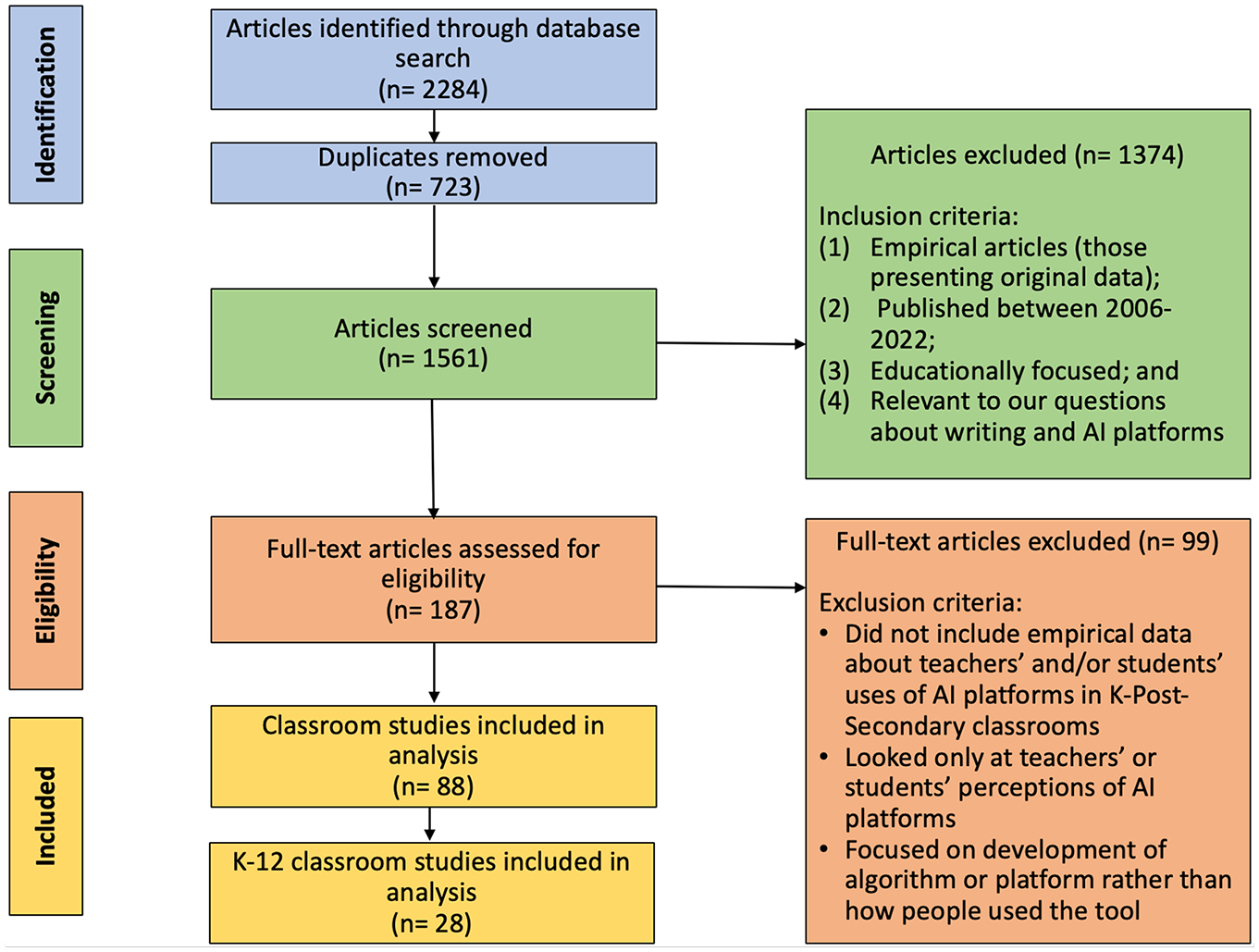

To address our research questions, we conducted a systematic review of empirical education studies published between January 2006 and December 2022. To guide our review process, we turned to the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) Standards (Page et al., 2021) and used its four phases to report our methods: (a) identifying salient articles, (b) screening identified articles, (c) analyzing eligibility for inclusion in an initial corpus, and (d) creating a refined corpus based on additional inclusion/exclusion criteria. We also consulted Welch et al.’s (2012) reporting guidelines for equity-focused systematic reviews, adapting them for our focus on educational equity and using Osher, Pittman, et al.’s (2020) definition of “robust equity” to guide us: “the intentional counter to inequality, institutionalized privilege and prejudice, and systemic deficits and the intentional promotion of thriving across multiple domains for those who experience inequity and injustice” (p. 3). We represented our process in Figure 1.

Search Process Results

Identification

We used four databases salient for literature searches in education (EBSCO-Education Research Complete, ProQuest-ERIC, Scopus, and the Web of Science) to identify journal articles published between January 2006 and December 2022. We chose 2006 as the starting place for our review based on Warschauer and Ware’s (2006) pivotal article outlining a research agenda for automated writing evaluation in education research (a term they introduced). In their review of the landscape of automated writing support at that time, Warschauer and Ware found scant empirical research in education scholarship despite years of discussion and development of AI platforms in other disciplinary contexts. We chose the end date not only to reflect the time when we conducted the searches but also to demarcate what will likely be a new era in AI research in education, given the widespread public uptake of OpenAI’s ChatGPT (built on GPT-3.5) in November 2022 and the subsequent development and integration of that and other advanced models of generative AI into platforms used in teaching and learning in 2023.

We conducted searches in each database using the following Boolean search terms: writ* OR literac* OR compos* AND AI OR “artificial intelligence” OR NLP OR “natural language processing” OR “automated writing” OR “machine learning.” We searched the full text of peer-reviewed journal articles written in English published between 2006 and 2022. Our initial pool returned 2,284 citations; we eliminated 723 duplicates and uploaded the remaining 1,561 articles into a shared Zotero collection.
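The search string above combines a block of writing terms with a block of AI terms. The sketch below shows one way to assemble it with explicit grouping, assuming the intended logic is (writing terms) AND (AI terms); the string as reported shows no parentheses, and some databases evaluate AND before OR unless parentheses are used, so the grouping here is an assumption about intent rather than the authors' documented query. The helper names `or_group` and `build_query` are hypothetical.

```python
# Hypothetical helper for assembling a grouped Boolean search string.
# The parentheses reflect an assumed intent: (writing terms) AND (AI terms).

WRITING_TERMS = ["writ*", "literac*", "compos*"]
AI_TERMS = [
    "AI",
    '"artificial intelligence"',
    "NLP",
    '"natural language processing"',
    '"automated writing"',
    '"machine learning"',
]


def or_group(terms: list[str]) -> str:
    """Join synonyms with OR and wrap them so the block is evaluated first."""
    return "(" + " OR ".join(terms) + ")"


def build_query(*groups: list[str]) -> str:
    """Require every concept block by joining the OR-groups with AND."""
    return " AND ".join(or_group(group) for group in groups)


query = build_query(WRITING_TERMS, AI_TERMS)
print(query)
```

Grouping the synonyms first means a record must match at least one writing term and at least one AI term, which is the reading the review's focus on writing and AI platforms suggests.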

Screening

We screened the 1,561 articles by reading their titles and abstracts to determine relevance, examining whether they were (a) empirical articles (i.e., those presenting original data), (b) published between 2006 and 2022, (c) educationally focused, and (d) relevant to our questions about writing and AI platforms. By “educationally focused empirical articles,” we mean those with data about teaching or learning in formal or informal settings (which meant eliminating articles about model fit or evaluation of algorithms). We resolved uncertainties by consulting AERA’s (2006) Standards for Reporting on Empirical Social Science Research and reaching group consensus. For example, some articles, such as McKnight (2021), initially appeared to meet the criteria but did not include a separate methods section and/or did not have data about AI; in these cases, two authors skimmed the full text to determine eligibility. We eliminated 1,374 articles, leaving 187 articles for further review (see Appendix A for a table of included studies).

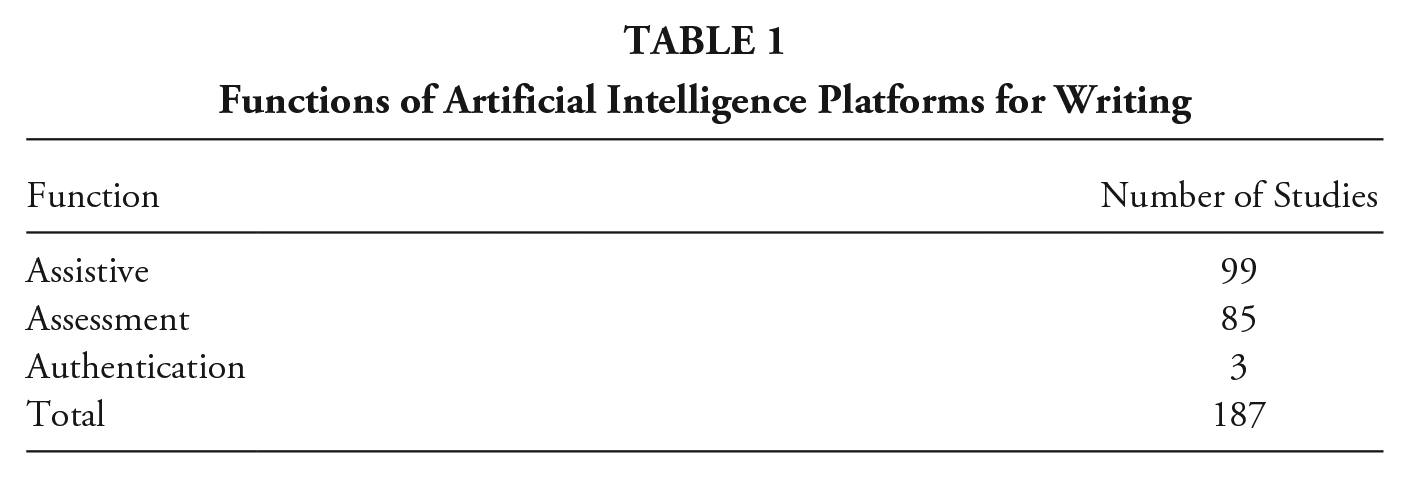

We engaged in a round of descriptive coding (Saldaña, 2016) of the 187 articles to create categories that would help us answer our first research question about the function of AI technologies in writing instruction and practice. We read each study and created a data matrix (Miles et al., 2018) that mapped key criteria: participants, methods, tools, and findings. We coded for different functions, which we collapsed into three categories: assistive, assessment, and authentication. We defined assistive functions as those that support writing instruction, learning, or practice via AI platforms (e.g., text generation, nudges, intelligent tutors, automated feedback). We defined assessment functions as those concerned primarily with how AI evaluates writing (e.g., grading, classifying features, measuring discrete skills). We defined authentication functions as those in which an AI platform certified writing (e.g., detecting plagiarism, surveilling the writing process). We sorted each of the 187 studies into one of the three categories, stored in separate folders in our Zotero database; two authors checked each categorization, and any categorization on which they disagreed was discussed with the full group until consensus was reached (see Table 1).

Functions of Artificial Intelligence Platforms for Writing

Eligibility

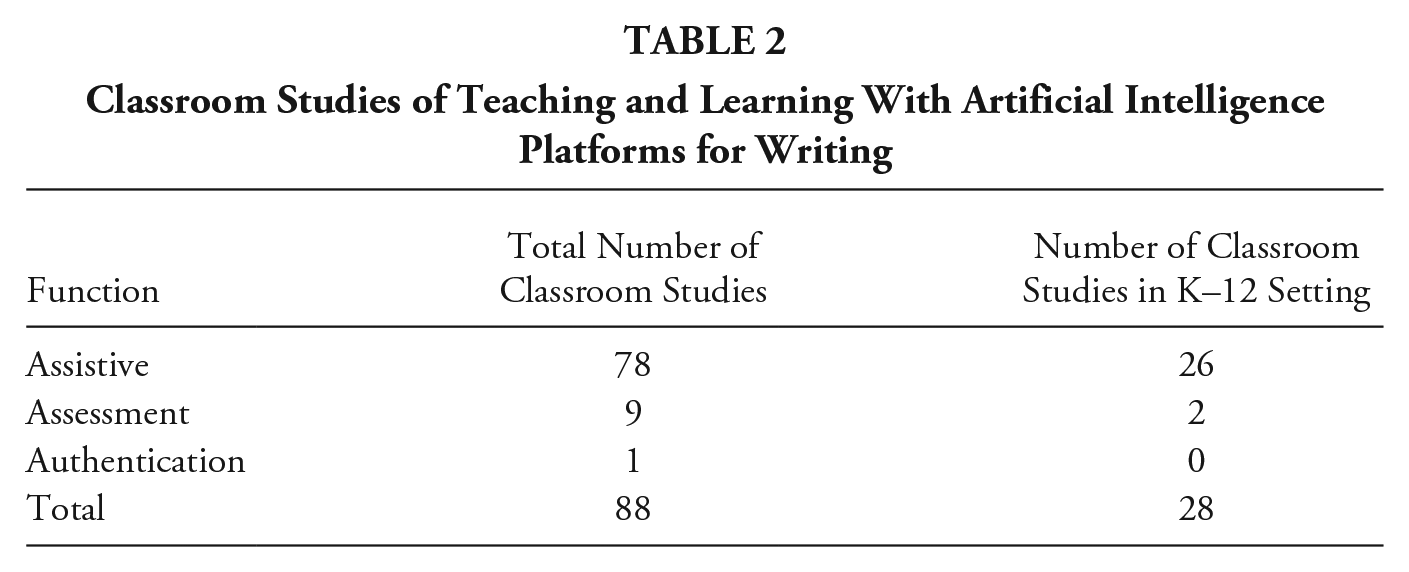

We analyzed the 187 articles to determine eligibility for inclusion in a smaller corpus focused more specifically on teaching and learning in classrooms. Whereas the broader corpus allowed us to look at learning writ large (e.g., tutoring contexts, home use), we wanted to understand how these technologies were implemented pedagogically in school contexts. To be included at this stage of the review, articles had to include empirical data about teachers’ and/or students’ uses of AI platforms in elementary, secondary, or postsecondary classrooms, with observational data or other measures examining how AI platforms were used in teaching or learning. We excluded studies that looked only at teachers’ or students’ perceptions of AI platforms (e.g., Conijn et al., 2022) or that focused on development of the algorithm or platform rather than on how people used the tool (e.g., Roscoe et al., 2013). We identified 88 articles meeting our criteria for further analysis, which we coded descriptively in the data matrix, adding the new emergent codes we describe in the first findings section.

During this analysis, we noted that the 60 postsecondary studies in the classroom corpus focused predominantly on the quality or characteristics of essay writing and/or grammar development (often in second language [L2] settings). Given our interests in educational equity, learning, and pedagogy in education research about AI, we refined our corpus for further qualitative analysis by identifying articles that collected student data from K–12 settings (28 studies).
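Because the corpus narrows across several stages, it can help to check that the reported counts reconcile. The following Python sketch recomputes each stage from the figures given in this section. It assumes that the 88 classroom studies divide only into the 60 postsecondary studies and the K–12 studies; the `Stage` class and `prisma_flow` helper are hypothetical and not part of any PRISMA tooling.

```python
from dataclasses import dataclass


@dataclass
class Stage:
    """One stage of the review and the number of records remaining after it."""
    name: str
    remaining: int


def prisma_flow(identified: int, duplicates: int, excluded_at_screening: int,
                classroom: int, postsecondary: int) -> list[Stage]:
    """Recompute the records remaining at each stage from the reported counts.

    Assumes the classroom corpus splits into postsecondary and K-12 studies only.
    """
    after_dedup = identified - duplicates
    after_screening = after_dedup - excluded_at_screening
    k12 = classroom - postsecondary
    return [
        Stage("identified", identified),
        Stage("after duplicate removal", after_dedup),
        Stage("after title/abstract screening", after_screening),
        Stage("classroom corpus (eligibility)", classroom),
        Stage("refined K-12 corpus", k12),
    ]


for stage in prisma_flow(2284, 723, 1374, 88, 60):
    print(f"{stage.name}: {stage.remaining}")
```

Run with the reported counts, the sketch prints 2284, 1561, 187, 88, and 28 for the five stages, matching the figures stated in the text.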

Classroom Studies of Teaching and Learning With Artificial Intelligence Platforms for Writing

We uploaded the full text of the 28 K–12 studies to the web platform of Atlas.ti (Version 23). To answer our second research question, we developed theoretical codes (Saldaña, 2016) from our conceptual framework, a deductive coding approach that allowed us to map patterns conceptually. First, we identified how studies framed writing across five dimensions: (a) cognitive (writing as a cognitive process mediated by strategies), (b) sociocultural (writing as a socially situated and constructed practice), (c) critical (writing as intertwined with social power), (d) semiotic (writing as a representational practice that draws on linguistic and multimodal communication methods), and (e) embodied (writing as a practice that engages the body, the senses, and physical environments). Next, we identified how studies framed platforms along three dimensions: (a) social (mediating connections between users and with content in the social world, beyond human-platform interaction), (b) technical (how back-end and front-end technical factors like code, algorithms, and interfaces mediate the platform’s use or shape its design), and (c) political-economic (how political and economic concerns, such as commercial or regulatory interests and governance structures, mediate the platform’s use or shape its design). To answer our third research question, we engaged in descriptive coding (Saldaña, 2016), an inductive approach that allowed us to look at the multiple, varied ways equity was discussed or invoked. We coded any paragraph in which the word “equity” (or a related form of it) appeared and any concepts related to equity (e.g., access, diversity). We developed emergent codes to characterize how articles focused on equity, including descriptions of participant demographics, linguistic diversity, bias, and disadvantage.

Two members of the author team engaged in all coding, discussing any disagreements until consensus was reached. For example, while two authors coded for mentions of “platforms,” all five authors discussed examples of student-platform interactions to determine whether they should be classified as social (e.g., students logging in to a platform). Because these mentions were largely quotidian (e.g., students were on the platform for

Findings

We organize our findings related to the ways AI platforms for writing are theorized and studied in education scholarship around our three research questions: the functions of the platforms, the framing of writing and platforms in relation to teaching and learning, and the presence and uptake of equity concerns.

Functions of AI Platforms in Writing Practice

To answer our first research question, we report on the three primary functions of AI platforms for writing in the corpus of 88 classroom-focused studies (see Table 2). We found the most prevalent category to be assistive (78 studies), followed by assessment (9) and authentication (1).

For the authentication category, the lone classroom study in the corpus focused on plagiarism. Akçapinar (2015) examined how undergraduates in a computer hardware course who received feedback about their plagiarism scores halfway through the semester had lower instances of plagiarism at the end of the semester. Of the nine classroom studies of assessment, all involved the grading function of AI platforms. Some were concerned with summative assessments, such as Potter and Wilson’s (2021) study, which looked at statewide implementation of AWE software across Grades 4 through 11 to determine whether AWE use was associated with performance on a state writing assessment and how equity and access issues influenced AWE usage. Although they found positive associations between AWE use and performance on the distal assessment, they concluded that “simply providing access to AWE systems that facilitate desirable pedagogical interactions is insufficient to ensure implementation of those interactions” (p. 1572). Other studies focused on formative assessments drew similar conclusions about the importance of school- and classroom-level factors in influencing how AWE is used for assessment. For example, studies found that formative assessments provided opportunities to practice writing and interpret feedback (e.g., González-Carrillo et al., 2021), that engagement was an important mediating variable in making sense of automated feedback (e.g., Zhang & Hyland, 2018), and that an integrated approach across different feedback types (instructor, peer, automated) was most successful (Zhang & Hyland, 2022). These studies suggest that the grading function of AWE is promising but should be implemented carefully in conjunction with a broader focus on teaching and learning. We note that the broader corpus of assessment studies (

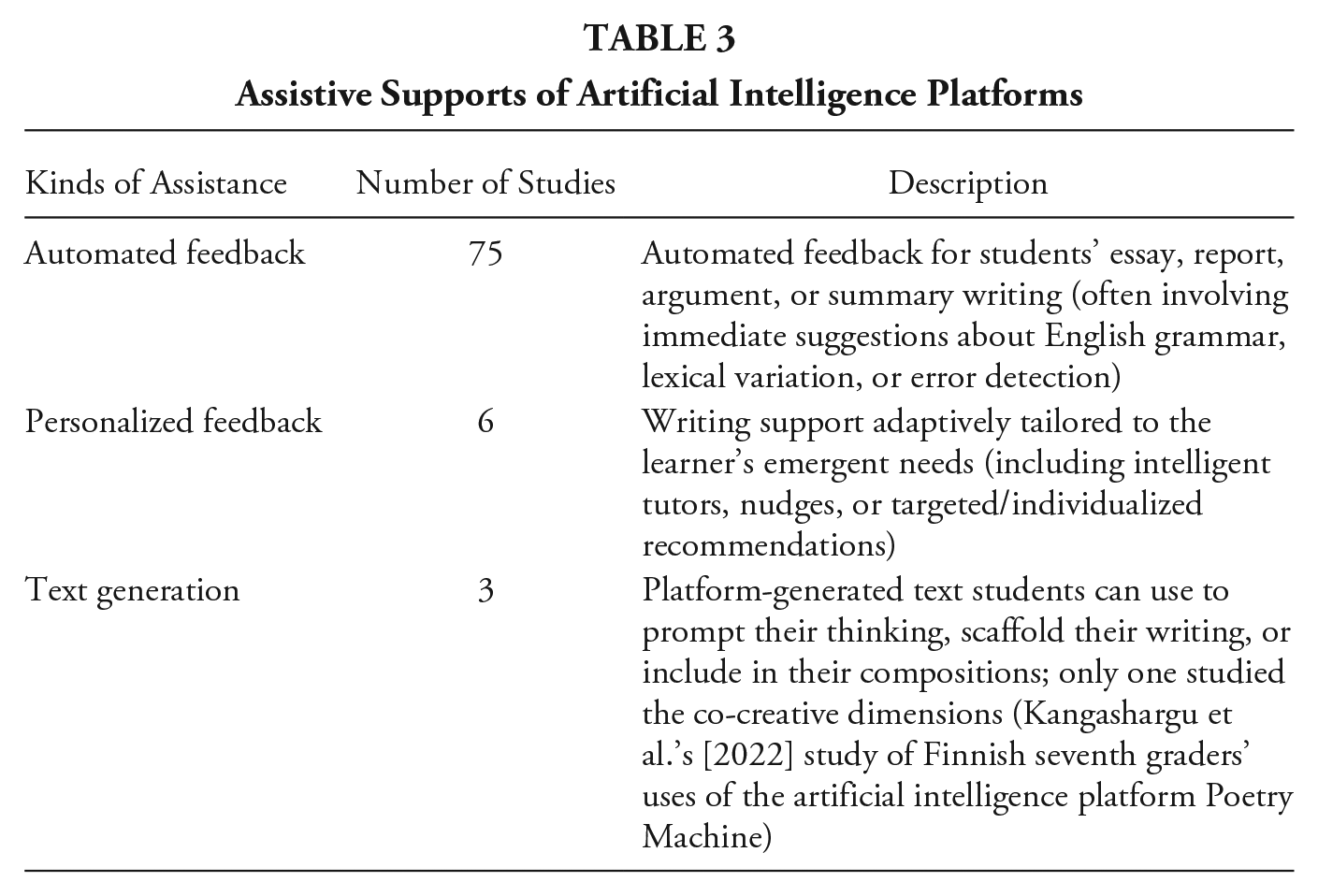

Most of the studies in the classroom corpus (78 of 88) focused on understanding how people used AI platforms for writing instruction or practice (an assistive capacity), especially the use of AWE or AES systems. These included commercially or privately developed platforms that incorporated NLP or machine learning (e.g., PEG, My Access, Criterion, Pigai, iTest, Writing Pal, MI Write, SWoRD) or freely available platforms (e.g., Grammarly). We multiply coded the three primary ways studies characterized AI supports: automated feedback, personalized feedback, and text generation (see Table 3).

Assistive Supports of Artificial Intelligence Platforms

We then coded for how AI platforms assisted teachers or students, identifying seven ways. Almost all studies documented whether and how AWE supported writers in improving their textual output, measuring the (a) quality of the writing by tracking how students’ writing changed from pre- to posttool use (e.g., Lim & Phua, 2019; Liu et al., 2023). Many studies also traced students’ and teachers’ (b) perceptions of the tool’s usefulness (in composing, for revision, for teaching; e.g., Roscoe et al., 2017) or its impact on users’ beliefs (about their writing self-efficacy, motivation to write/revise; e.g., Wilson et al., 2021). Some studies examined how AI platforms assisted writers in developing (c) knowledge about writing (e.g., strategies, text evidence, grammar; e.g., Cotos et al., 2017) or shifting (d) behavior related to writing (e.g., increased revisions after tool use; Wang et al., 2020). Some also looked at the (e) accuracy of the feedback, such as whether the platform identified errors correctly (e.g., Li et al., 2015) or how automated feedback compared to teacher feedback in supporting student learning (e.g., Link et al., 2022). Several traced students’ (f) performance on a future assessment after the intervention (e.g., Wilson & Roscoe, 2020). Finally, only a handful of studies examined what we called participants’ (g) sensemaking with AI, or how various individual and contextual factors shaped the ways they engaged with the tool, feedback, or writing process (e.g., Ranalli, 2021).

Evidence on how assistive the platforms actually were across these seven areas was inconsistent. Many studies reported mixed results, with small shifts in behavior or improvement only on surface-level dimensions of writing (e.g., Wang et al., 2020). Others reported positive effects but only for some students or only in some areas (e.g., Xu & Zhang, 2022). Although some participants reported that they found feedback helpful, often it was helpful only in some conditions, for some users, or for particular purposes (e.g., Bai & Hu, 2017); indeed, a number of participants found AWE feedback too generalized or impersonal (e.g., Sherafati et al., 2020). A number of studies pointed to the importance of instructors, whether in shaping how the AI platform was incorporated into instruction (e.g., Wilson et al., 2022) or in how well they supplemented, integrated, or contextualized the feedback (e.g., Wijekumar et al., 2022). Overall, a principal takeaway across many studies was the importance of context: The same intervention at a different school produced different outcomes, pointing to the complexity involved in how automated feedback is framed and taken up in practice (e.g., Warschauer & Grimes, 2008). The importance of context and instructors is well established in previous reviews (e.g., Nunes et al., 2022; Stevenson, 2016), and we examine it further in our next findings section.

Framing of Writing and Platforms

To answer our second research question about how writing and platforms were framed in the 28 K–12 classroom studies, we used our ecological framework to guide our coding. Analyses suggested that the studies framed writing and platforms in ways that attended to only a few dimensions. Of the five writing dimensions in our framework, the most prevalent was the cognitive dimension, with the majority of studies attending to the knowledge, strategies, and skills required to navigate complex problem-solving processes. Similarly, most studies framed platforms in terms of their technical dimensions, focusing on how the tools worked, processes of use, and user interface interactions.

Framing Writing

Given the array of complex, situated, and interconnected activities that constitute writing, we explored how cognitive, sociocultural, semiotic, critical, and embodied dimensions were taken up in K–12 studies. We found that cognitive theoretical underpinnings predominated. Twenty-four of the 28 studies drew on seminal cognitive models of the writing process (e.g., Bereiter & Scardamalia, 1987; Hayes, 1996; Hayes & Flower, 1980; Zimmerman & Risemberg, 1997) to anchor investigations of writing performance, development, and learning. Writing in these studies was framed as a complex task requiring the coordination of “several cognitive skills and knowledge sources through multiple demanding processes including setting goals, solving problems, and strategically managing cognitive resources” (Butterfuss et al., 2022, p. 698). Researchers noted that the skills required for effective writing were multilevel, including low-level skills (e.g., spelling, punctuation, grammar, sentence structure) and high-level skills (e.g., word choice, organization, idea development, style; e.g., Mørch et al., 2017). Writing was also framed as a process mediated by strategies for planning, drafting, and revising essays (McCarthy et al., 2022; Roscoe et al., 2014, 2015, 2019; Wang et al., 2020) and by the coordination of self-regulation strategies (Nguyen et al., 2017; Palermo & Thomson, 2018; Tansomboon et al., 2017; Wijekumar et al., 2022).

Studies that framed writing as a sociocultural practice were relatively few (Grimes & Warschauer, 2010; Kangasharju et al., 2022; Warschauer & Grimes, 2008; Ware, 2014). These studies characterized writing as a practice influenced by varied social, cultural, material, historical, technological, and relational variables. For example, in their longitudinal study of eight schools’ uses of AWE, Grimes and Warschauer (2010) found that AWE helped students write and revise more and exhibit greater learner autonomy. They attributed the successful use of AWE to local social factors, including a stable AWE technology, strong administrative support, and high-quality professional supports for teachers. They noted that although AWE is often viewed as “a magic bullet” that can solve the problem of writing in schools, writing enacted in an AWE environment is always mediated by complex relationships among social and educational contexts, teacher and student beliefs, other technologies, and prior instructional practices.

Only one of the 28 studies addressed the semiotic and embodied dimensions of writing; it was also the only study to examine the text-generation dimensions of AI platforms (all the others looked exclusively at automated feedback). Kangasharju and colleagues (2022) investigated the impact of an AI-based poetry writing tool on secondary students’ poetry writing and revision processes. Poetry writing was framed as semiotic (allowing experimentation with different communication modes such as sound, image, and words) and embodied (supporting phonological and phonemic awareness). However, the research questions and analysis did not focus on these dimensions.

None of the studies explicitly attended to critical dimensions of writing, although several indirectly acknowledged institutional power by framing writing as a gatekeeper of academic achievement, higher education, and career pathways (Butterfuss et al., 2022; Grimes & Warschauer, 2010; Wilson et al., 2021, 2022). Researchers noted that because “written text is crucial for professional and academic success” (Snow et al., 2015, p. 40), students who “cannot write well are disadvantaged in salary positions and often find coursework too difficult to complete” (Wang et al., 2020). These imagined writing purposes were reflected in the most common written forms featured in the studies, including essay responses to standardized prompts (Allen et al., 2019; Deane et al., 2021), class essays (e.g., Huang & Wilson, 2021; Lim & Phua, 2019; McCarthy et al., 2022), summaries of class readings (e.g., Sung et al., 2016), and explanations of concepts (e.g., Gerard & Linn, 2022; H. S. Lee et al., 2021).

Some studies that framed writing as a cognitive skill also acknowledged where sociocultural considerations could help construct fuller understandings of writing processes (e.g., Allen et al., 2019; Lim & Phua, 2019; Tang & Rich, 2017). Allen and colleagues (2019) noted in their study of linguistic flexibility in student essays that contemporary assessments are highly decontextualized and therefore cannot capture the multidimensionality of writing or the “nuances that constrain students’ behaviors, such as individual differences, the presumed audience, and the nature of the writing assignment” (p. 1628). In a related vein, Lim and Phua (2019) observed that although using AWE can provide important feedback on language accuracy in student writing, it can also “reinforce a mechanistic and formulaic writing” when disconnected from communication in real-world contexts (p. 3). Such considerations underscore the importance of contextualized pedagogical goals that comport with a tool’s affordances and constraints.

Framing Platforms

Returning to van Dijck’s (2013) conceptualization of platforms as an interactive and performative assemblage of social, technical, and political-economic dimensions, we consider here how platforms were framed in the corpus along these dynamics: What do platforms allow their users to do in relationship with others (social)? How do the platforms work (technical)? Who benefits from the platforms’ use, and how (political-economic)?

Most notable was the total absence within the corpus of any discussion of platforms as having political or economic dimensions. Although platforms are “fueled by data” and “organized through algorithms and interfaces,” underlying these technical dynamics is the reality that they are equally “formalized through ownership relations driven by business models, and governed by user agreements” (van Dijck et al., 2018, p. 9). Where specific platforms were named in the research corpus (Writing Pal, MI Write, e-rater, Text Evaluator, Poetry Machine, Annotator, and so on), there was no explicit discussion of ownership, business valuations, data extraction, data surveillance, data monetization, or other key political-economic factors. No portion of any of the articles met the criteria to receive a code for political-economic. This absence is especially noticeable among platform writing products marketed to teachers and students, where end users (in classrooms or universities) may be given free access to technologies in exchange for their data’s aggregation and sale to advertisers or other commercial entities, often without the end users’ knowledge (Williamson, 2021; Yu & Couldry, 2022).

Platform dynamics within this corpus were conceptualized narrowly, exclusively within technical and social dimensions. Technical framing was dominant, representing the majority of codes and the primary way platforms were framed. Technical framing included procedural architecture at the level of student front-end and platform back-end processes (Sung et al., 2016; Wijekumar et al., 2022), parameters of holistic and diagnostic (vocabulary, syntactic complexity, etc.) score reporting (Wilson et al., 2021), lengthy descriptions of modular and lesson organization and themes (Palermo & Thomson, 2018), and software graphic interfaces demonstrated through photos and screen captures (Butterfuss et al., 2022; Roscoe et al., 2014). Nearly all descriptions of the technical dimension of the platform delineated user interfaces, the processes by which students worked with the platform, and the nature of the feedback or scoring they received. As a representative example, Wilson and Roscoe (2020, pp. 94–95) detailed an AWE platform called Project Essay Grade (PEG) Writing, outlining prompts to students (“assigned in one of three genres: narrative, argumentative, or informative”); digital, interactive graphic organizers; postwriting granular ratings (“scored on a 1 to 5 scale: idea development, organization, style, sentence structure, word choice, and conventions”); an “Overall Score” (from 6 to 30); and finally, formative feedback (“on each of the six traits, indicating ways for students to improve their text with respect to that specific trait”). Although these lengthy technical descriptions provide insight into potentials for human interaction, they often overlook the social dynamics that embed platforms in interactional and instructional contexts and the economic dynamics underwriting design choices and back-end logics.
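The reported ranges are internally consistent: six traits rated from 1 to 5 yield a minimum of 6 and a maximum of 30. The following Python sketch illustrates that arithmetic under an explicit assumption, not confirmed by PEG Writing documentation or by the study, that the Overall Score is the simple sum of the six trait ratings. The `overall_score` function is hypothetical, and the trait names are used as dictionary keys only for illustration.

```python
# Trait names as quoted from Wilson and Roscoe (2020); their use as keys is illustrative.
TRAITS = (
    "idea development",
    "organization",
    "style",
    "sentence structure",
    "word choice",
    "conventions",
)


def overall_score(ratings: dict[str, int]) -> int:
    """Combine six trait ratings (each 1 to 5) into an overall score (6 to 30).

    Assumption: the overall score is a simple sum of the trait ratings, which
    matches the reported ranges but is not confirmed by PEG Writing itself.
    """
    missing = set(TRAITS) - set(ratings)
    if missing:
        raise ValueError(f"missing trait ratings: {sorted(missing)}")
    for trait in TRAITS:
        if not 1 <= ratings[trait] <= 5:
            raise ValueError(f"{trait!r} rating must be between 1 and 5")
    return sum(ratings[trait] for trait in TRAITS)


example = dict(zip(TRAITS, [4, 3, 3, 4, 2, 5]))
print(overall_score(example))  # 21
```

Summing is only the simplest rule consistent with the 6 to 30 range; a weighted or rescaled composite could produce the same bounds, which is one reason such technical descriptions reveal little about the design logic behind a score.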

Social dynamics were coded in articles that framed platforms as mediating sociality by facilitating connection among various users. Specifically, we coded research that framed platforms as doing more than facilitating user-to-interface interaction; this additional requirement excluded the majority of descriptions of AWE and writing platforms in classroom contexts. Where social dynamics were indicated, they largely involved descriptions of classroom activities before or after the use of the writing platform (Huang & Wilson, 2021; Palermo & Thomson, 2018), typically platform-suggested student peer review (both identifiable and anonymous) informed by automated grading and text suggestions (Wilson et al., 2021). In other studies, social dynamics included brief accounts of teachers’ instructional use of the platform in the classroom: the number of assignments or prompts facilitated (Huang & Wilson, 2021; Wilson & Roscoe, 2020), assisting students face-to-face in light of AWE-generated feedback (Wijekumar et al., 2022), orally reviewing AWE-generated feedback with students in class (Deane et al., 2021), and providing additional human feedback through the platform (Huang & Wilson, 2021; Tang & Rich, 2017). Whereas most of this framing suggested that platforms had a limited impact on reconfiguring traditional classroom writing instruction and interaction, instead supplementing and facilitating existing writing practices, Grimes and Warschauer (2010) described the value of the writing platform for bolstering classroom management: Students were more engaged and quieter during platform-facilitated writing time than during pencil-and-paper writing, simplifying the teacher’s moment-by-moment work. Overall, social dynamics in the corpus were described largely in instructional terms, with brief narrations of how platforms facilitated conversations and interactions.

Equity in Teaching and Learning

Our third research question asked what issues of equity were raised (or not) in studies of AI-mediated writing. We found that equity was rarely discussed directly in these articles and never robustly (Osher, Pittman, et al., 2020). Conceptual frameworks and research designs seemed to reflect the idea that interventions designed to improve learning will positively impact all students, especially those who are positioned as “disadvantaged.” For many studies, this assumption manifested in ethnically and socioeconomically diverse samples. A few studies empirically examined effects on different types of learners, but such issues of equity were never centered as major concerns even when named in the framing of articles.

Notably, the word “equity” did not appear in any of the 28 articles about AI platforms in K–12 classrooms, nor did the related terms we searched for appear in equity-relevant senses (e.g., “access” was used to describe a teacher’s access to a computer lab rather than a community’s access to particular resources). There was ample opportunity to explore issues of equity, however. The study participants were generally culturally, socially, and linguistically diverse, but this diversity was rarely addressed explicitly through study rationale, design, or analyses. Most of the K–12 studies included participant demographics as routine reporting of methods or to argue for representative samples within a particular school district or city. In a typical example, Deane et al. (2021) stated, “The demographic features were all statistically significant and followed typical patterns for U.S. schools (e.g., slightly lower performance for males and African-American students, and much lower performance for ELLs and special education students)” (p. 75). Such inclusion of diversity through participants, without attention to design and equity, is superficial and even problematic because it normalizes inequities like the “lower performance” of male and African American students and of students labeled English language learners (ELLs) or special education students. Additionally, in some cases, specific populations were excluded, such as “special needs” students in Sung et al. (2016), which reduced the potential for investigating implications for equity. Such mismatches between purported research goals and methodologies highlight the importance of person-centered analyses, in contrast to or at least in addition to variable-centered analyses, when researching issues of equity (Reeping et al., 2023). Person-centered analysis treats the person, viewed holistically, as the unit of analysis and identifies key patterns of values across variables rather than discounting outlier individuals or groups from the data (Bauer & Shanahan, 2007).

Four articles attended to variations among learners beyond descriptions of demographics, but small sample sizes or the lack of control groups make their findings difficult to assess. Huang and Wilson (2021) found between-student variability in first-draft scores and growth rates when revising essays but dismissed demographic differences as explanatory factors. Their analysis found no influence of gender, ELL status, special education status, or race on these differences, concluding that “no student group was systematically advantaged or disadvantaged by MI Write’s feedback: it was equivalently beneficial for all students” (p. 22). However, questions about equity were not explicitly built into their research design, and there was no control group to assess the relationship between growth and their system’s feedback. Tansomboon et al. (2017) reported two studies that similarly addressed issues of equity but without careful consideration in research design. Their first study used a control group and found significant gains among “low prior knowledge students” who received personalized guidance while revising short answers in a science unit. Their second study took an approach similar to Huang and Wilson’s and likewise found no differences in gains between demographic groups by ELL status and gender, but it also lacked a control condition.

Two studies were designed with equity in mind but lacked sample sizes sufficient to support conclusive findings. McCarthy and colleagues (2022) found that non-native English speakers produced essays of similar quality to the rest of the sample, with the exception of mechanics, although the sample was too small to investigate the matter more decisively. They concluded that “further work should be done to examine the combination of [spelling and grammar] feedback for those who have more difficulty with more foundational writing skills including L2 writers and younger, developing writers” (p. 12). Similarly, Ware (2014) sought to better understand automated writing feedback for secondary students in low-income settings who are labeled English language learners. Ware found that “the language status of students did not appear to impact their benefiting from the type of feedback they received” (p. 243). Taken together, these findings suggest the need for rigorous studies designed with equity at the center.

Many of the studies identified contextual factors on which the success of the interventions depended, although without addressing broader implications for marginalized communities. These factors were mostly seen as external to the tools themselves. Nonetheless, two of these studies remain useful for thinking about equity. Warschauer and Grimes (2008) found that “teachers’ use of the software varied from school to school, based partly on students’ socioeconomic status, but more notably on teachers’ prior beliefs about writing pedagogy” (p. 22). Grimes and Warschauer (2010) concluded that the success of AWE implementation in eight schools was the “result of many local factors that are not easy to replicate, including a mature AWE technology, strong administrative support, excellent professional training, teachers ready to experiment with technology, and in [one of the schools] strong peer support among teachers” (p. 34). An implication of these observations is that more poorly resourced schools are much less likely to have the conditions to support successful AWE implementation, even when they bring in targeted interventions like 1:1 laptop initiatives and AWE software. The use and efficacy of the AWE tools depended in part on student socioeconomic status and, more notably, on the multiple factors that typically characterize effective pedagogical environments, which are themselves closely correlated with student social class and racialization. This important observation is in keeping with recent research undertaking critical race examinations of the differential deployment of digital media in schools, which can perpetuate historical inequities without intentional evaluation and resistance (Benjamin, 2019; Dixon-Román et al., 2020; Nichols & Dixon-Román, in press; cf. Reich & Ito, 2017; Watkins, 2018).

Finally, there were a number of instances we coded as “problematic takes” on equity in study narratives, including research design and conceptual approaches. Most of these were statements that grounded study significance in undernuanced analyses of data sets or in deficit assumptions about students and teachers. For example, authors cited NAEP writing data among other large-scale assessment data without troubling it (e.g., Palermo & Thomson, 2018) or issued sweeping deficit diagnoses of writing challenges: “Students struggle with writing as a result of underdeveloped knowledge and skills” (Butterfuss et al., 2022, p. 697). Such interpretations not only oversimplify key issues but also unfairly place the blame on students, ignoring the broader socioeconomic and institutional factors that may impact their learning experiences and outcomes.

Discussion

Given concerns about how evolving AI technologies will impact writing, teaching, and learning, this systematic review addresses an important question about what education research can tell us about writing with “AI platforms,” a term that we suggest includes multiple forms of AI, from automated feedback (e.g., AWE, AES) to adaptive systems (e.g., conversational agents, intelligent tutors, algorithmic nudging) to generative AI (e.g., content generation). Our review of studies of platform-mediated writing instruction began from a holistic and multidimensional perspective, asking how current conceptualizations of AI platforms in education research account for teaching and learning in ways that foreground issues of educational equity. We centered our inquiry in expansive, multidimensional frameworks that emphasize the relationship between equity and learning and frame deeper learning as a core equity concern (Nasir et al., 2021; Noguera, 2017; Osher, Pittman, et al., 2020). These frameworks reflect emerging consensus in the science of learning and development scholarship about the importance of attending to dynamic relations among environmental factors, relationships, learning opportunities, and social, physical, emotional, cognitive, and psychological processes (Darling-Hammond et al., 2020; Osher, Cantor, et al., 2020).

Our findings suggest that the ecological perspectives for which we advocate (and for which there is significant research agreement; Darling-Hammond et al., 2020) have not yet been reflected in education studies about AI platforms, writing, and learning. We found that across the three most prevalent functions of AI platforms in writing instruction—assistive, assessment, and authentication—few studies explored practices of teaching and learning or included data about students’ or teachers’ uses of AI platforms, often focusing instead on tool or text production. Given that prior reviews have pointed out the lack of emphasis on teaching and learning in AWE studies, particularly in K–12 classrooms (e.g., Nunes et al., 2022; Stevenson, 2016; Vojak et al., 2011; Warschauer & Ware, 2006), a finding about the limited focus on how AI platforms are implemented in everyday classroom contexts may not be surprising. However, for over a decade, the scholarly literature has demonstrated that context critically shapes practices with AI writing platforms: How people use the tools in particular contexts makes a significant difference in how they support learning as a humanizing practice (Grimes & Warschauer, 2010). Yet the reviewed K–12 studies as a whole did not address the importance of context, much less engage the notion of context as a dynamic process that is simultaneously intertwined with cultural foundations of learning and structural forms of inequity (Nasir et al., 2020)—a key insight from an ecological approach to studying platform-mediated writing instruction.

Such limited focus on the dynamic interplay of contextual and individual dimensions of learning with educational technologies is perhaps unsurprising given decades of scholarship about the variability in how technologies are taken up in teaching and learning—and the subsequent exacerbation of inequities when framed in narrow or instrumentalist ways (Heinrich et al., 2020). We found that the reviewed studies primarily attended to what AI platforms “do” rather than to the complex and varied ways they are used in teaching and learning. Studies thus focused on the technical and social uses of AI platforms and not their political and economic dimensions (e.g., platforms operating as multisided markets), an elision with troublesome implications for a range of stakeholders: data harvesting, tracing, and monetization (Hakimi et al., 2021; Zuboff, 2019); the ethics of data enrollment for racialized peoples (Chun, 2021); back-end labor of platform technologies, often carried out by marginalized workers (Gray & Suri, 2019); and environmental concerns about the physical nature of seemingly ephemeral cloud technologies that must rely on telecom wiring, server farms, transoceanic cable, and destructive natural resource extraction (Parks & Starosielski, 2015). All of these have political underpinnings, raise questions of oversight, and play out amid legislative environments (Bratton, 2016). The platforms’ commercial nature, as Perrotta and colleagues (2021) noted, configures participation (writing, clicks, drag and drop tools) according to platform logics that seek easy data interoperability, third-party integration (many of the AI platforms we examined were themselves aggregates of other platforms), and general infrastructuralization, with the goal of having writing platforms such as Google Docs serve as the de facto terrain of classroom instruction and textual production. Where writing is increasingly platformized in schools, it is simultaneously commercialized.

In terms of writing’s connection to commercial enterprises, we found that many studies offered a two-part warrant for platform-mediated writing: (a) Academic and professional success demand writing proficiency, and (b) teachers are overburdened and unable to support students who are woefully behind in developing the cognitive skills and knowledge sources needed to become proficient writers. Following this logic, the purpose of writing seems to be to gain a competitive edge in the global economy. However, an extensive literature has established that people engage varied, rich writing practices, often with high degrees of proficiency, for multiple purposes, across social spaces, and with a wide range of tools (Freedman et al., 2016). In the reviewed studies, writing was narrowly construed, with the multidimensionality of writing compressed to a focus on cognitive processes and without attention to longitudinal considerations (i.e., implications for writing development over time). Given the theoretical commitments of cognitive approaches, it is not surprising that the other dimensions in our ecological model of platform-mediated writing went unaddressed. As cognitive writing researchers have noted, existing cognitive models have not yet adequately captured cultural, social, and environmental impacts on writing and writing development (MacArthur & Graham, 2016). Cognitive processes are central to writing, but they work in tandem with embodied, social, cultural, historical, and linguistic processes. The reviewed K–12 studies paid little attention to how processes and practices interact, but this seems a necessary step going forward if platform-mediated writing in schools is to move beyond the limited logics of capitalism.

Although narrow conceptualizations of educational technology, writing, and learning have significant implications for equity-focused AI research, we noted a near-total absence of equity as an explicit area of concern in the reviewed studies. When equity-related issues were alluded to (e.g., concerns over linguistic diversity, access to technologies, bias in the tools), they were often addressed only tangentially. This trend was broadly seen in the full classroom corpus as well. Even more concerning than the elision of equity in the studies is how many of them perpetuated deficit framings, warranting their studies with concerns about students’ low scores, lack of writing ability, and error-laden prose. These deficit framings extended to teachers, who were often positioned as lacking time or capacity to read and provide corrective feedback on students’ flawed writing, and to writing itself as an academic burden that technologies could ameliorate.

One significant, overarching implication of these findings is that the narrow, deficit-laden understandings of writing, writers, and writing teachers in AI research place the focus (and blame) on the very individuals that AI platform developers and decision-makers purport to help rather than on inequitable systems, harmful ideologies, and oppressive labor conditions that shape the teaching and learning of writing. Instead of spotlighting the untenable working conditions of teachers in late capitalism (Skaalvik & Skaalvik, 2020) or advocating for the transformation of systems and structures that continue to fail too many young people (Duncan-Andrade, 2022; Ladson-Billings, 2006; Oakes, 2005), the atomized perspectives espoused in these stances point to technological solutions for social problems in ways that reinforce existing inequities (Benjamin, 2019). We wonder how platform-mediated writing studies might move toward asset-based inquiries and stances that not only acknowledge but also presume the dynamic relations of multiple processes involved in writers’ lives and the expansive possibilities of platform-mediated writing. Such ecological approaches would entail theoretical, methodological, and epistemological shifts in how learning is conceptualized, studied, and supported across multiple dimensions in relation to AI platforms.

This finding about the lack of focus on equity in the reviewed studies also draws attention to the need for educational designs that operationalize robust conceptions of equity that move beyond inclusion (Calabrese Barton & Tan, 2020) and algorithmic bias (Mayfield et al., 2019) to attend explicitly to issues of power and ethics (Vakil & Higgs, 2019). Robust conceptualizations of educational equity not only attend to how broader social and institutional structures and practices may systemically contribute to disparities for historically marginalized individuals and communities but also work to promote thriving across multiple domains (Osher, Pittman, et al., 2020). An ecological framework that articulates the relations between AI platforms, writing, and learning not only expands what education researchers notice and investigate but also offers perspectives on multilevel contextual elements that must be considered in the design and implementation of equitable learning environments, tools, and practices (e.g., Coburn et al., 2013; Penuel, 2019; Pinkard, 2019). These expansive conceptualizations push us to view AI platforms not as neutral communication conduits but as active participants in learning ecologies. They also open avenues for identifying, critiquing, and reimagining the sociopolitical impacts of AI platforms in education.

Conclusion

In reviewing research literature on AI platforms in writing instruction from 2006 to 2022, we have attempted to capture a moment in writing studies and education as emerging, proliferating AI technologies for text generation based on large language models, NLP, and machine learning are poised to transform platform-mediated writing as we know it. This new horizon of responsive writing platforms and their attendant implications for teaching and learning is crystallized in our panicked zeitgeist over commercial technologies such as ChatGPT, which commentators breathlessly predict may render the college essay “dead” (Marche, 2022), destabilize white-collar work (Lowrey, 2023), and overturn traditional notions of assessment, fairness, and originality across the formal schooling system from kindergarten to university (Surovell, 2023). As text-generative technologies rapidly materialize and are monetized, already exerting a substantive and disruptive effect on classroom practice, they may indeed represent a watershed moment for AI platforms in education, one that marks a turning point for thinking about how writing will be supported and practiced in K–12 classrooms and beyond and how to support the thriving of all young people. In thinking of what has been and what may yet be, a robust ecological account of platforms—one that demonstrates the agonistic, performative, and recursive relationship between social, technological, and political-economic dynamics—can point to new opportunities for the design, implementation, and research of learning environments that center robust visions of educational equity.

Appendix

Included Studies Organized by Function Category (Assistive, Assessment, Authentication)

1. Abbas, M. A., Hammad, S., Hwang, G.-J., Khan, S., & Gilani, S. M. M. (2020). An assistive environment for EAL academic writing using formulaic sequences classification.
2. Al-Inbari, F. A. Y., & Al-Wasy, B. Q. M. (2022). The impact of automated writing evaluation (AWE) on EFL learners’ peer and self-editing.
3. *Allen, L. K., Likens, A. D., & McNamara, D. S. (2019). Writing flexibility in argumentative essays: A multidimensional analysis.
4. Bai, L., & Hu, G. (2017). In the face of fallible AWE feedback: How do students respond?
5. *Butterfuss, R., Roscoe, R. D., Allen, L. K., McCarthy, K. S., & McNamara, D. S. (2022). Strategy uptake in Writing Pal: Adaptive feedback and instruction.
6. Chen, C.-F. E., & Cheng, W.-Y. E. (2008). Beyond the design of automated writing evaluation: Pedagogical practices and perceived learning effectiveness in EFL writing classes.
7. Chen, M., & Cui, Y. (2022). The effects of AWE and peer feedback on cohesion and coherence in continuation writing.
8. Cheng, G. (2022). Exploring the effects of automated tracking of student responses to teacher feedback in draft revision: Evidence from an undergraduate EFL writing course.
9. Cheng, G., Chwo, G. S.-M., & Ng, W. S. (2021). Automated tracking of student revisions in response to teacher feedback in EFL writing: Technological feasibility and teachers’ perspectives.
10. Chew, C. S., Idris, N., Loh, E. F., Wu, W. V., Chua, Y. P., & Bimba, A. T. (2019). The effects of a theory-based summary writing tool on students’ summary writing.
11. Conijn, R., Martinez-Maldonado, R., Knight, S., Buckingham Shum, S., Van Waes, L., & van Zaanen, M. (2022). How to provide automated feedback on the writing process? A participatory approach to design writing analytics tools.
12. Cotos, E. (2011). Potential of automated writing evaluation feedback.
13. Cotos, E., Link, S., & Huffman, S. (2017). Effects of DDL technology on genre learning.
14. *Deane, P., Wilson, J., Zhang, M., Li, C., van Rijn, P., Guo, H., Roth, A., Winchester, E., & Richter, T. (2021). The sensitivity of a scenario-based assessment of written argumentation to school differences in curriculum and instruction.
15. Dikli, S., & Bleyle, S. (2014). Automated Essay Scoring feedback for second language writers: How does it compare to instructor feedback?
16. Dizon, G., & Gayed, J. M. (2021). Examining the impact of Grammarly on the quality of mobile L2 writing.
17. Dodigovic, M. (2007). Artificial intelligence and second language learning: An efficient approach to error remediation.
18. El Ebyary, K., & Windeatt, S. (2019). Eye tracking analysis of EAP students’ regions of interest in computer-based feedback on grammar, usage, mechanics, style and organization and development.
19. Fang, Y. (2010). Perceptions of the computer-assisted writing program among EFL college learners.
20. Feng, X., & Tang, J. (2012). Calibrating English language courses with major international and national EFL tests via vocabulary range.
21. Gao, J. (2021). Exploring the feedback quality of an automated writing evaluation system Pigai.
22. García-Gorrostieta, J. M., López-López, A., & González-López, S. (2018). Automatic argument assessment of final project reports of computer engineering students.