Abstract

Social network analysis (SNA) is becoming a prevalent method in education research and practice. However, critics have objected to its heavy reliance on quantification. Recent work suggests combining quantitative and qualitative approaches in SNA, that is, mixed methods social network analysis (MMSNA), as a remedy. MMSNA not only helps address research questions related to the formal or structural side of relationships and networks but also attends to more qualitative questions, such as the meaning of interactions or the variability of social relationships. In this chapter, we describe how researchers have applied and presented MMSNA in publications from the perspective of general mixed methods research. Based on a systematic review, we summarize the different applications within the field of education and learning research, point to potential shortcomings of the methods and their presentation, and develop an agenda to support researchers in conducting future MMSNA research.

Since the 1990s, education researchers, policy makers, and practitioners have become increasingly interested in relationships and collaboration in educational settings. In response, social network analysis (SNA) was proposed as a theoretical and methodological framework, offering theory and tools to explore relationships in depth. Compared with the approaches then available for studying interactions and interdependencies among, for example, teachers or pupils, SNA captures these relationships in a more nuanced way by focusing on the patterns and qualities of relationships (Borgatti et al., 2009). SNA offers valuable insights into whether and to what degree interaction and collaboration take place in education. Another key strength of SNA is that it offers several tools to visualize relationships (Hogan et al., 2007), which creates opportunities for visual analysis and for feedback aimed at improving practice. No other methodological framework is as focused on the in-depth exploration of relationships and structures in learning and instruction (Moolenaar, 2012; Sweet, 2016).

The surge in SNA publications across academic disciplines is largely driven by quantitative SNA studies (Freeman, 2004). Despite the merits of this formalized approach to network analysis, authors such as Fuhse and Mützel (2011) and Hollstein (2011) have criticized it for paying too little attention to the qualitative aspects of relationships. Recent work addresses these concerns by combining quantitative and qualitative approaches in SNA, an approach we call mixed methods social network analysis (MMSNA; Froehlich, Rehm, et al., 2020; Hollstein, 2014). MMSNA not only helps address research questions related to the formal or structural side of relationships and networks but also attends to questions related to the actual content and meaning of interactions, the day-to-day variability of social relationships, the development of nodes and ties, and agency (Crossley, 2010; Crossley & Edwards, 2016).

In this chapter, we describe how researchers have applied and presented MMSNA in publications from the perspective of general mixed methods research. Based on a systematic review, we (a) summarize the different applications within the field of education and learning research, (b) point to potential shortcomings of the methods and their presentation, and (c) develop an agenda to support researchers in conducting future MMSNA research. We tailored the review to a diverse audience. For social network and mixed methods researchers, the review details the use of mixed methods within SNA, proposes a framework for quality reporting practices, and points out potential pitfalls. For education researchers, the review illustrates how MMSNA is being applied to study relationships and collaboration within educational settings.

Background

Social Network Theory and Analysis

Networks are a way of thinking about social systems that focuses our attention on the web of relationships surrounding actors. Networks are composed of actors (nodes) and their relationships (ties). Actors can be individuals, for example, pupils in a classroom or teachers in a school, or collectivities, such as teams, organizations, or countries. Ties include relationships or transfers, such as conversations between pupils or information exchanges among teachers. Social network theory centers on relationships among actors to explain actor and network outcomes. Over the years, scholars have debated whether social network theory is an actual “theory,” a collection of methods, or a metaphor (Wellman, 1988). Initially, many social capital theorists drew on SNA merely as a methodological approach, a means of capturing relevant features of interaction. Today, several scholars argue that this view is no longer tenable (Borgatti et al., 2014; Brass et al., 2014). A solid body of work has been developed, comprising theoretical concepts such as structural holes (Burt, 1992), centrality (Freeman, 1978), the strength of ties (Granovetter, 1973), and structural equivalence (Lorrain & White, 1971). Network researchers use the tools offered by SNA to study the relationships and structural features of social networks (Wellman, 1983).

A Social Network Perspective in Education Research

The urge to capitalize on relationships, interaction, and collaboration within educational settings is reflected by a growing number of concepts, such as communities of practice, organizational shared and collaborative learning, and professional (learning) communities (Louis & Marks, 1998; McLaughlin & Talbert, 2006; Wenger et al., 2002). Indeed, researchers have established the usefulness of SNA in examining the role of relationships for student achievement (Daly et al., 2014; Pil & Leana, 2009), teacher learning (Baker-Doyle, 2015; Fox et al., 2011), reform and improvement (Moolenaar et al., 2012; Penuel et al., 2016), policy implementation (Coburn et al., 2012; Frank et al., 2011), leadership (Daly & Finnigan, 2011; Spillane & Shirrell, 2017), and professional development programs (Baker-Doyle & Yoon, 2010; Hofman & Dijkstra, 2010; Penuel et al., 2012).

These new lines of research have increased interest in studying relationships in education. However, approaches built on these concepts also face conceptual and methodological challenges that SNA attempts to overcome. For example, a focus on particular communities can be limiting, as a student or teacher is often embedded in a network of relationships that spans subgroups and includes individuals inside and outside institutional boundaries (Penuel et al., 2009; Spillane, 2005). Moreover, the growing body of research on relationships has concentrated on social interactions, and one problem of the extant literature is the precision of measurement (Coburn et al., 2012). Often, an “average” of the relationships between one individual and the rest of the group is measured, for instance, how many times a teacher reports a certain interaction, irrespective of the specific interaction partner. A more fine-grained exploration of interaction that takes a relational view could yield a better understanding of these phenomena. 1
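To make the contrast between an actor-level “average” and a relational view concrete, the following minimal Python sketch computes both from the same interaction log. The teacher names and counts are invented for illustration; they do not come from any reviewed study.

```python
from collections import Counter

# Hypothetical interaction log: one (reporting teacher, partner) pair per
# reported interaction. Names and counts are invented for illustration.
interactions = [
    ("Ana", "Ben"), ("Ana", "Ben"), ("Ana", "Ben"), ("Ana", "Ben"),
    ("Cara", "Ben"), ("Cara", "Dev"), ("Cara", "Eli"), ("Cara", "Fay"),
]


def aggregate_counts(log):
    """Actor-level "average" view: total interactions per reporter."""
    return Counter(reporter for reporter, _ in log)


def dyadic_counts(log):
    """Relational view: number of interactions per (reporter, partner) tie."""
    return Counter(log)


def partners(log, actor):
    """Distinct partners with whom an actor reports interacting."""
    return {partner for reporter, partner in log if reporter == actor}


print(aggregate_counts(interactions))  # Ana and Cara both total 4
print(dyadic_counts(interactions))     # Ana's 4 fall on one tie, Cara's on four
print(len(partners(interactions, "Ana")), len(partners(interactions, "Cara")))  # 1 4
```

Both hypothetical teachers report four interactions in total, yet one concentrates them on a single partner while the other spreads them over four, a difference that only the tie-level view reveals.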

Mixed Methods SNA

The position of SNA within discussions about quantitative, qualitative, and mixed methodologies is ambiguous. Most researchers consider SNA a formal or quantitative technique (Crossley & Edwards, 2016; Hollstein, 2014). This view contrasts starkly with the historical roots of SNA, which are qualitative (Freeman, 2004). Onwuegbuzie and Hitchcock (2015) highlight the potential to integrate qualitative and quantitative methods of network research and describe SNA as a quantitative-dominant crossover mixed analysis. Put differently, they view it as an inherently mixed analysis.

An increasing number of social network researchers make use of mixed methods to generate their findings (Froehlich, Rehm, et al., 2020). This surge in interest has come with the realization that quantitative (or formal) and qualitative SNA each have their own strengths and weaknesses (Crossley, 2010). For example, qualitative SNA often lacks an overview of the structural properties of a network. Conversely, quantitative network analysis is often too abstract to consider the content being exchanged, fluctuations in relationships over time, or the role of agency (Crossley, 2010). These complementary strengths make MMSNA relevant for gauging the social complexities found in education and learning research.

We define MMSNA as any SNA study drawing on both qualitative and quantitative data or using both qualitative and quantitative methods of analysis. This definition is in line with definitions of mixed methods in general (Johnson et al., 2007; Johnson & Onwuegbuzie, 2004). Such studies can (a) mix within SNA or (b) include SNA as one element of the overall research design; in either case, the different methods need to be thoughtfully integrated. In the former, qualitative and quantitative relational data are used, or qualitative and quantitative social network analytic approaches are applied. The latter includes any mixed methods study featuring an SNA component, such as a study triangulating quantitative SNA with nonrelational, qualitative data.

Describing MMSNA Studies

In his seminal article “Describing Mixed Methods Research,” Guest (2013) states that “two dimensions are enough [to describe mixed methods designs]—the timing and the purpose of integration” (p. 147). These two dimensions thus form the basis for this review. But for any review, it is important not only to look at what is being done but also to assess the quality of research (cf. Siddaway et al., 2019). Therefore, we assess the quality of mixing in addition to the purpose(s) of mixing and its timing.

Purpose(s) of Mixing in MMSNA

Every use of mixed methods has a specific purpose of mixing, based on the researchers’ wish to increase the scope, power, and/or quality of their study (Schoonenboom et al., 2018). In this review, we follow Schoonenboom et al. (2018) in focusing on “purposes of mixing at a detailed level” (p. 272). To aid the aggregation of the data for this review, we use three major categories of purposes of mixing: follow-up, comparison, and development. If the purpose is follow-up, methods are used to expand on findings, for instance, to obtain more generalizable findings, to explain results, or to replicate findings. For example, researchers might combine a quantitative analysis of survey data with a qualitative analysis of interviews “intended to deepen the understandings of the structural network analysis findings” (Rodway, 2015, p. 8). Comparison as a purpose refers to answering complementary research questions and to triangulation throughout the research process. Last, development as a purpose of mixing aims to inform the main research through a prestudy. For example, researchers may conduct cognitive interviews to improve a survey instrument or a sampling procedure.

Timing in MMSNA

Both mixed methods research in general and MMSNA in particular are characterized by design features related to timing. Timing has two dimensions (Schoonenboom & Johnson, 2017):

Simultaneity: A case of mixing is concurrent (parallel) when both elements involved are performed at the same time (e.g., Dingyloudi & Strijbos, 2018), and it is sequential when one element follows the other (e.g., Liou & Daly, 2014).

Dependence: A case of mixing is independent when both elements involved are performed without mutual influence (e.g., Dingyloudi & Strijbos, 2018), and it is dependent when one element influences the performance or outcome of the other (e.g., Liou & Daly, 2014).

Quality in MMSNA

The Mixed Methods Appraisal Tool (MMAT) was first developed in 2006 as a review tool for mixed studies and has since been repeatedly improved (Hong et al., 2018). The MMAT serves as a validated tool for appraising the methodological quality of qualitative, quantitative, and mixed methods studies. The MMAT questionnaire has two parts. First, two screening questions confirm that a study is suitable for appraisal with the MMAT. Second, five criteria for assessing mixed methods studies are applied to studies that include at least one qualitative and one quantitative method. We present the specifics of the coding procedure in the Method section.

Method

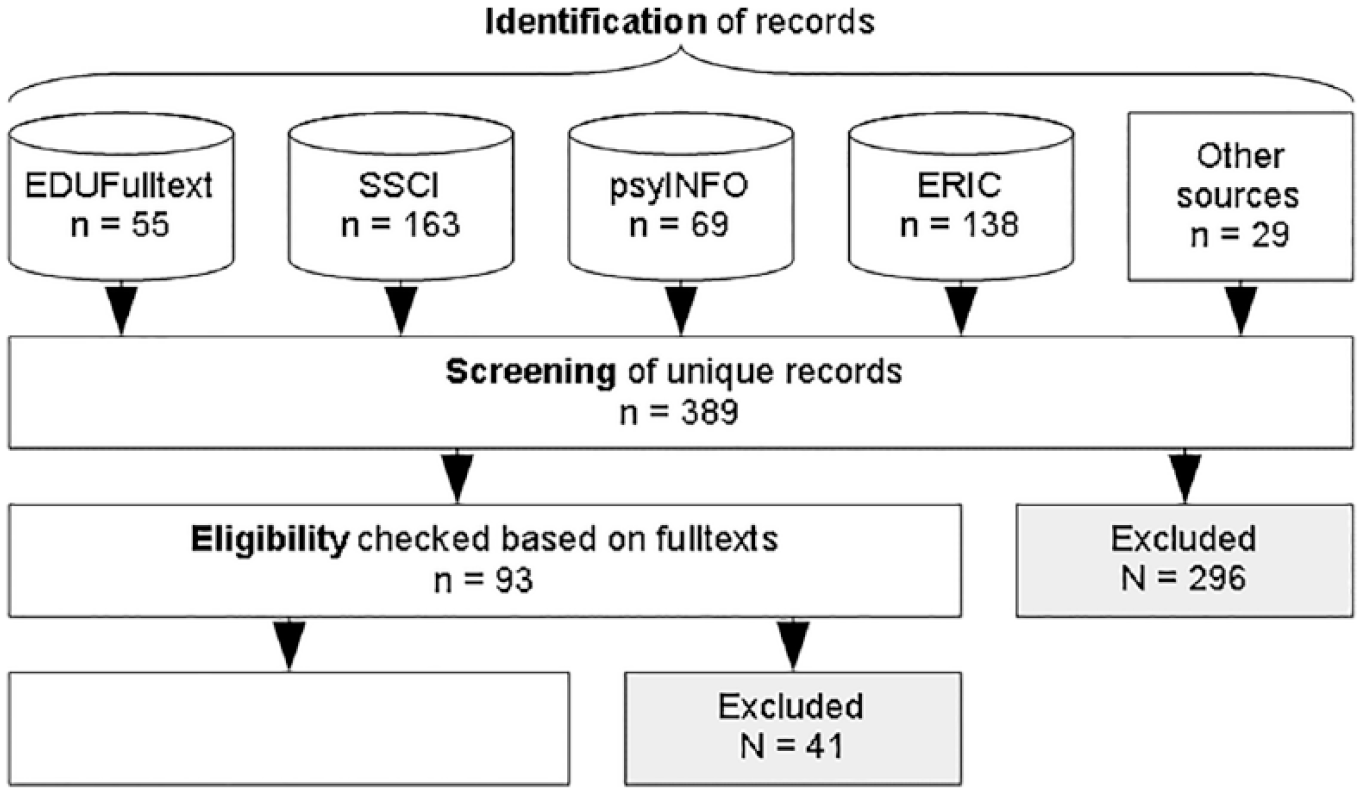

In this review, we follow the PRISMA statement 2 (Moher et al., 2009) and other texts describing the state of the art in conducting literature reviews (Siddaway et al., 2019; Van Tulder et al., 2003) to identify and screen records, check their eligibility, and code them. We then evaluate this literature to answer the guiding question of this chapter: How is MMSNA being applied in contemporary education research? This process is depicted in Figure 1.

Figure 1. Overview of the Search Process

Identification of Records

We followed two major strategies to identify relevant literature. The first, and arguably most important, strategy was a search of scientific databases with a predefined set of keywords. We focused on databases commonly referenced in learning and education: Education Resources Information Center, Education Full Text, PsycARTICLES, PsycINFO, and the Social Sciences Citation Index. PsycARTICLES was later dropped from the search to safeguard consistency, as the search terms turned out to be very imprecise for this database. Discussions with peers in the field confirmed that these are the most relevant databases for this review.

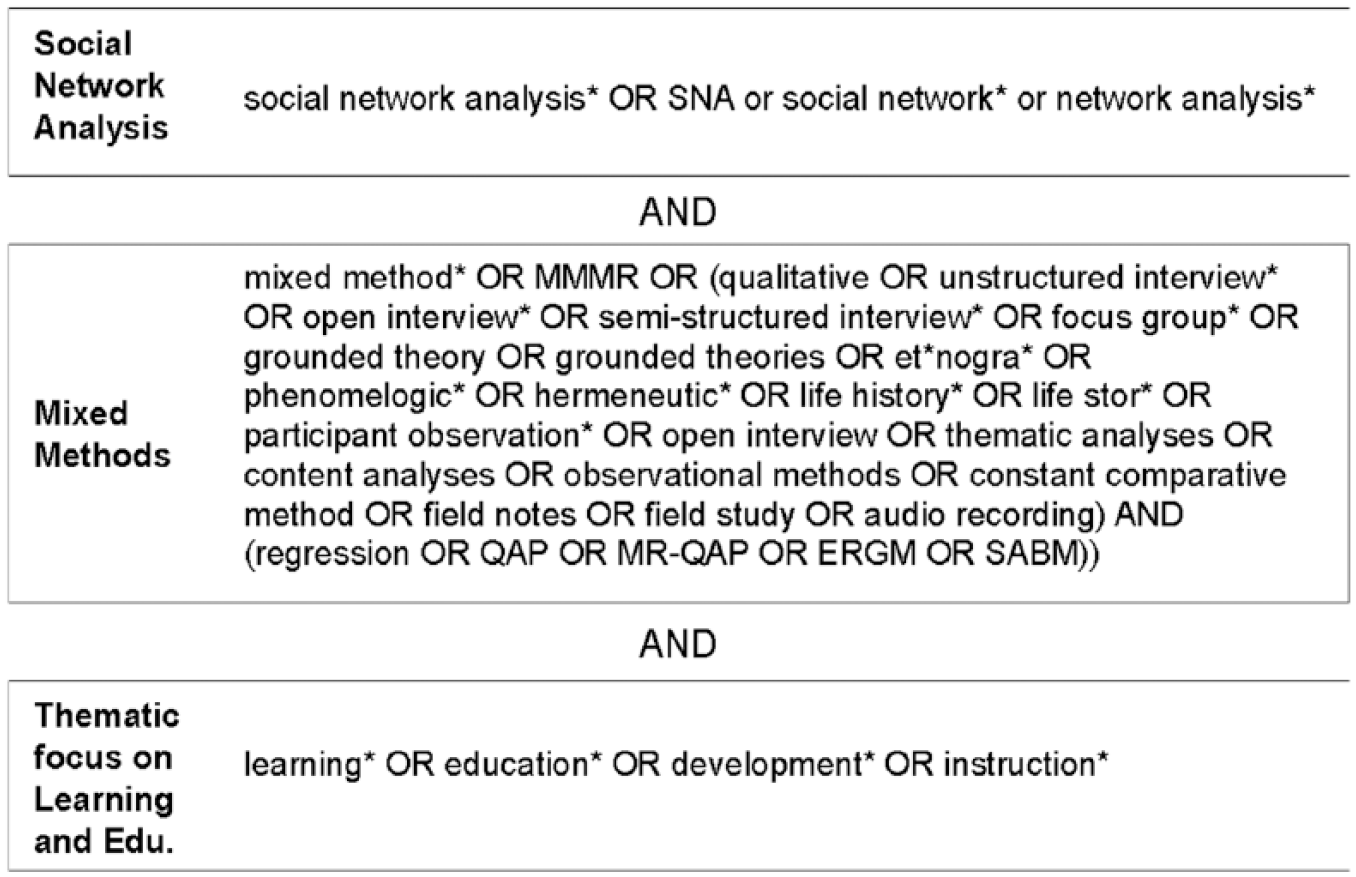

We searched these databases using three blocks of search terms that are connected to each other through “AND” operators. Figure 2 shows the lists of search terms.
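The block logic can be illustrated with a short sketch. It assumes, as is common practice but not stated in the text, that the terms within each block are combined with OR before the three blocks are joined with AND. The terms below are hypothetical placeholders, not the ones listed in Figure 2, and real databases differ in their phrase and truncation syntax.

```python
# Placeholder term blocks (hypothetical); the review's actual terms are in Figure 2.
BLOCKS = [
    ["social network analysis", "sociometric"],
    ["mixed methods", "qualitative"],
    ["education", "school"],
]


def quote(term: str) -> str:
    """Quote multi-word terms so databases treat them as phrases."""
    return f'"{term}"' if " " in term else term


def build_query(blocks):
    """Join terms within a block with OR (assumed), then join blocks with AND."""
    groups = ["(" + " OR ".join(quote(t) for t in block) + ")" for block in blocks]
    return " AND ".join(groups)


print(build_query(BLOCKS))
# ("social network analysis" OR sociometric) AND ("mixed methods" OR qualitative) AND (education OR school)
```

Generating the string from term lists, rather than typing it per database, makes it easier to keep the query identical across databases and to document it for replication.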

Figure 2. Search Terms

In addition to this search, we followed a set of alternative strategies to produce a comprehensive list of records: back-tracking, 3 forward-tracking, 4 and consulting peers. Neither back-tracking nor forward-tracking returned additional records. This is most likely because the review focuses on a method rather than on a content-related theme; a thematic focus would produce a narrative that could be traced through reference lists. Consulting peers, namely colleagues and an international, informal research group on SNA in learning and education, produced a list of 29 records before duplicates were deleted.

Screening of Records

After the initial identification of 454 potentially relevant records, we created one database that contained the meta-information, including titles and abstracts, of all identified texts. First, we screened this list for duplicates and deleted 65 records. For the remaining 389 records, two of the authors screened the titles and abstracts to make an initial decision about their relevance. Relevance was determined based on four inclusion criteria. Specifically, the record needed

To report empirical research; conceptual contributions or reviews were excluded

To apply SNA based on our loose definition of MMSNA described in the background section

To use qualitative and quantitative data or apply qualitative and quantitative methods of data analysis

To be situated in education

During screening, we excluded 296 records, leaving an initial pool of 93 potential studies. To safeguard the reliability of this process, a subset of 14 randomly selected records was coded by all authors and two independent researchers. Conger’s (1980) exact kappa was calculated. The result of κ = .75 (p < .01) indicated substantial agreement among the coders.
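For readers unfamiliar with the statistic, the sketch below shows how Conger’s exact kappa can be computed for several raters and categorical codes. It assumes complete data (every rater codes every record) and returns only the point estimate, not the p value reported above; it illustrates the formula and is not the software used in this review. Observed agreement averages pairwise rater agreement over records, while expected agreement uses each rater’s own marginal distribution, which is what distinguishes Conger’s kappa from Fleiss’ kappa. In R, for example, `irr::kappam.fleiss(..., exact = TRUE)` offers an established implementation to cross-check against.

```python
from collections import Counter
from itertools import combinations


def conger_kappa(ratings):
    """Conger's (1980) exact kappa for m >= 2 raters with complete data.

    ratings: one row per coded record; each row holds one categorical code
    per rater, with raters in the same order in every row.
    """
    n = len(ratings)
    m = len(ratings[0]) if ratings else 0
    if n == 0 or m < 2 or any(len(row) != m for row in ratings):
        raise ValueError("need at least 2 raters and complete, equal-length rows")

    categories = {code for row in ratings for code in row}
    pairs = list(combinations(range(m), 2))  # all unordered rater pairs

    # Observed agreement: share of agreeing rater pairs, averaged over records.
    p_o = sum(
        sum(row[j] == row[k] for j, k in pairs) / len(pairs) for row in ratings
    ) / n

    # Expected agreement: chance agreement for each rater pair, computed from
    # the two raters' own marginal distributions, averaged over pairs.
    marginals = [Counter(row[j] for row in ratings) for j in range(m)]
    p_e = sum(
        sum(marginals[j][c] * marginals[k][c] for c in categories) / n**2
        for j, k in pairs
    ) / len(pairs)

    if p_e == 1:
        raise ValueError("kappa is undefined when expected agreement equals 1")
    return (p_o - p_e) / (1 - p_e)


# Hypothetical codes (1 = include, 0 = exclude) from two raters on four records.
print(conger_kappa([[1, 1], [1, 0], [0, 0], [0, 0]]))  # 0.5
```

With two raters, the statistic reduces to Cohen’s kappa, which offers a quick sanity check on any implementation.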

Eligibility of Records

For all records that passed the screening stage, the complete texts were accessed to confirm eligibility. For the vast majority of records, reading the full texts confirmed the initial assessment made in the screening phase. One important deviation concerned methodologically oriented texts (e.g., Martínez et al., 2006), which, strictly speaking, fulfilled all inclusion criteria but reported their empirical results too superficially for our purpose. Since this made a thorough review infeasible, these records were excluded. In addition, no complete text could be retrieved for two records, so they were excluded from further review. In total, 41 texts were removed after reading the complete texts, leaving a final list of 52 studies for review. With 52 studies retained from an initial pool of 389 records, the precision of the search amounts to 13%. This rather low precision reflects that the term social network is used in very different ways, most notably in the sense of social networking sites; such records could not be excluded from the search because relevant research may be carried out using social networking site data. We kept the search rather broad to have greater confidence that we had not filtered out relevant studies.
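The record counts reported in this section can be checked with a few lines of arithmetic. The sketch below uses only the figures given in the text to reproduce the flow from identification to inclusion and the precision of the search, defined here as the share of deduplicated records that were finally included.

```python
# Record counts as reported in the text for each stage of the review.
identified = 454
duplicates = 65
excluded_at_screening = 296
excluded_at_eligibility = 41

screened = identified - duplicates                    # titles/abstracts read
passed_screening = screened - excluded_at_screening   # full texts assessed
included = passed_screening - excluded_at_eligibility

# Precision: share of screened (deduplicated) records finally included.
precision = included / screened

print(screened, passed_screening, included, f"{precision:.1%}")  # 389 93 52 13.4%
```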

Coding of Included Studies

Three authors coded the following information for each eligible text: publication meta-data, an assessment of the methodological quality of the empirical analysis, and features of the research design and integration. In addition, memos and qualitative comments were written during the coding procedure.

Publication Meta-Data

Information required for documentation purposes, such as the names of the authors, the publication date, and the publication type, was coded. Additionally, we coded the theme and context of each article on a broad level.

Purpose(s) of Mixing in MMSNA

In line with our theoretical background, we categorized the studies by their purpose(s) of mixing in terms of follow-up, comparison, and development (Schoonenboom et al., 2018). This categorization was based on the research design; we did not rely on authors’ statements about what they perceived as the purpose(s) of mixing. 5

Timing in MMSNA

The two dimensions of timing, simultaneity and dependency, were coded for each study. This information was used to form four types of research designs: parallel and dependent, parallel and independent, sequential and dependent, and sequential and independent. Both design features have been defined and described in the Background section. Cross-tabulating the two dimensions helped us examine the relationship between them.
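The cross-tabulation can be sketched as follows. The coded records are hypothetical placeholders, and the two-letter labels follow the abbreviations used in the note to Table 1; the function maps each combination of simultaneity and dependence onto one of the four types and counts the studies in each.

```python
from collections import Counter

# Hypothetical coded studies: (study id, simultaneity, dependence).
# These records are invented to show the procedure, not taken from the review.
coded = [
    ("S01", "sequential", "dependent"),
    ("S02", "parallel", "independent"),
    ("S03", "sequential", "dependent"),
    ("S04", "parallel", "dependent"),
    ("S05", "sequential", "independent"),
]

# Abbreviations follow the note to Table 1 (PD, PI, SD, SI).
LABELS = {
    ("parallel", "dependent"): "PD",
    ("parallel", "independent"): "PI",
    ("sequential", "dependent"): "SD",
    ("sequential", "independent"): "SI",
}


def timing_type(simultaneity, dependence):
    """Map one study's two timing codes onto its design type."""
    return LABELS[(simultaneity, dependence)]


def cross_tab(records):
    """Count studies per timing type, keeping empty cells as zeros."""
    counts = Counter(timing_type(s, d) for _, s, d in records)
    return {label: counts.get(label, 0) for label in LABELS.values()}


print(cross_tab(coded))  # {'PD': 1, 'PI': 1, 'SD': 2, 'SI': 1}
```

Zero-filling the four cells keeps design types that no study used visible in the output rather than silently dropping them.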

Quality in MMSNA

The methodological quality was rated according to the MMAT (Hong et al., 2018). The MMAT provides five methodological quality criteria for evaluating mixed methods studies, each rated as either 1 = present/yes or 0 = not present/no. Specifically, we coded whether

The purpose for applying a mixed methods approach has been stated.

The different components of the study were effectively integrated to answer the research question. As integration is considered an essential component of mixed methods research, the MMAT suggests closely inspecting the methods used to integrate qualitative and quantitative phases, results, and data.

The meta-inference, that is, the overall conclusion arrived at by integrating the inferences of each method (Teddlie & Tashakkori, 2009), added value.

Divergences and inconsistencies between quantitative and qualitative results, where they occurred, were adequately addressed.

The quality criteria of each applied method were adhered to. The MMAT treats high-quality components, without exception, as a requirement for a mixed methods study of good quality.
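As a sketch of how the five binary ratings might be recorded per study, the following Python dataclass stores one value per criterion. The field names are hypothetical labels of our own, and summing the five values into a 0 to 5 total is an assumption about how an overall score such as the one in Table 1 could be formed, not a rule taken from the MMAT.

```python
from dataclasses import dataclass, fields


@dataclass
class MMATRating:
    """Hypothetical record of the five MMAT mixed methods criteria (1 = yes, 0 = no)."""

    purpose_stated: int
    components_integrated: int
    meta_inference_adds_value: int
    # Rated 1 also when no divergences occurred (see the coding rule in Results).
    divergences_addressed: int
    component_quality_met: int

    def __post_init__(self):
        # Reject anything other than the two permitted ratings.
        for f in fields(self):
            if getattr(self, f.name) not in (0, 1):
                raise ValueError(f"{f.name} must be 0 or 1")

    def total(self) -> int:
        """Assumed overall score: number of criteria rated 1 (range 0 to 5)."""
        return sum(getattr(self, f.name) for f in fields(self))


example = MMATRating(1, 1, 0, 1, 1)  # hypothetical study lacking meta-inference
print(example.total())  # 4
```

Keeping the per-criterion values alongside the total preserves information that a single number hides: two studies with the same total can fail on quite different criteria.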

Reliability of the Coding Procedure

The coding was again checked for reliability. This time, three of the authors each coded the same three records. The result of κ = .62 (p < .01) indicated substantial agreement between the coders’ ratings.

Results

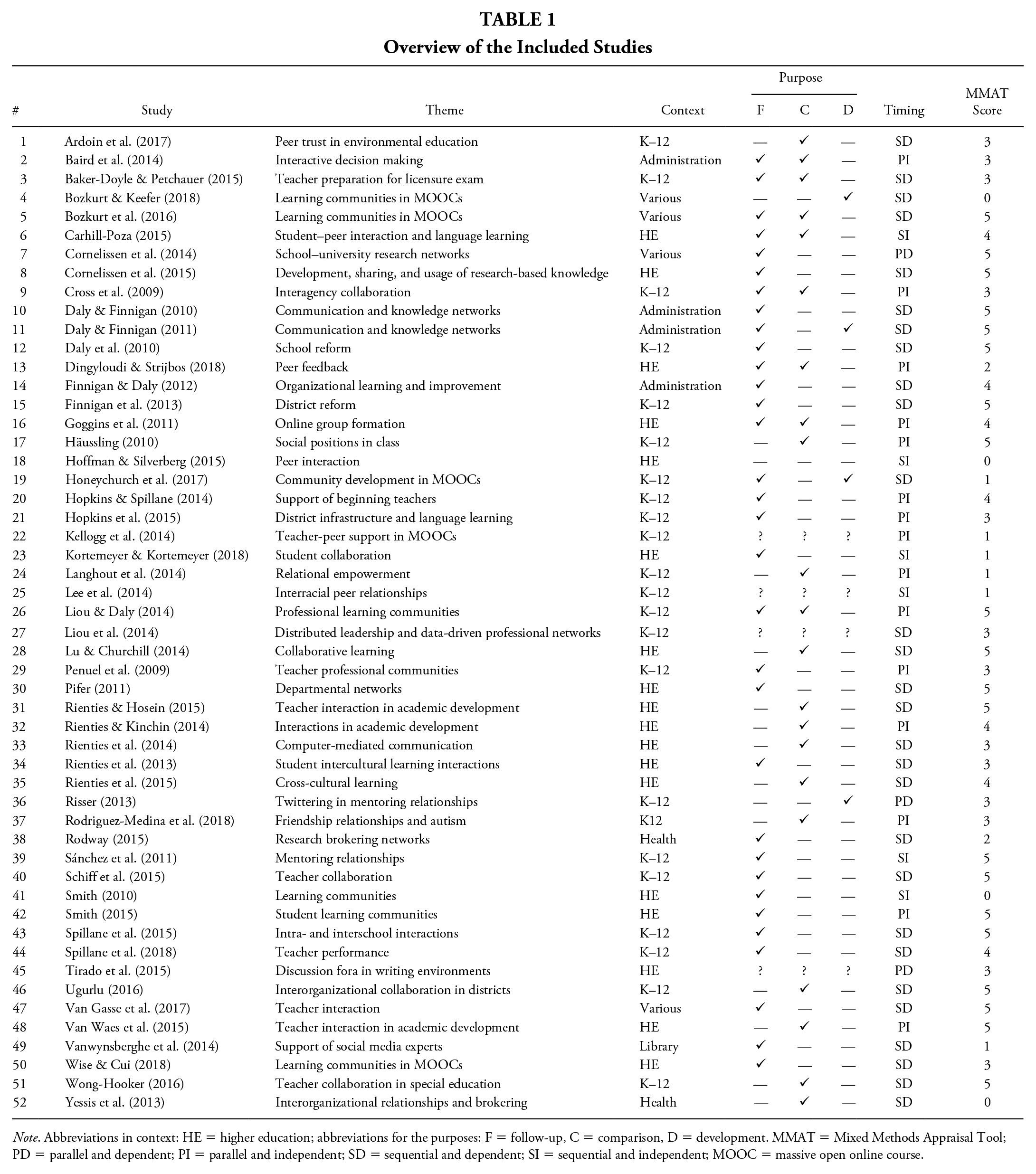

Table 1 summarizes the studies reviewed including information about the theme, identified purpose and timing according to the previously mentioned categories, and the overall score on the MMAT. The table also illustrates the breadth of themes that MMSNA was used to research. In terms of context, it was often applied in K–12 (23 studies, 44%), higher education (18 studies, 35%), or school administration (three studies, 6%).

Table 1. Overview of the Included Studies

Note. Abbreviations in context: HE = higher education; abbreviations for the purposes: F = follow-up, C = comparison, D = development. MMAT = Mixed Methods Appraisal Tool; PD = parallel and dependent; PI = parallel and independent; SD = sequential and dependent; SI = sequential and independent; MOOC = massive open online course.

Purpose(s) of Mixing in MMSNA

We evaluated the major purposes of mixing. 6 In 32 studies (62%), the purpose of mixing was identified as follow-up. Comparison was the second most frequent purpose, identified in 21 studies (40%). In eight studies (15%), follow-up and comparison were both present as purposes of mixing. Four studies (8%) used one method to develop another. In four studies (8%), the purpose remained unclear, even after reading the full text.
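Because a study can serve more than one purpose of mixing, the percentages above add up to more than 100%. The sketch below, using hypothetical purpose codes, shows how such multi-label coding is tallied: each study counts once for every purpose it carries, and the denominator remains the number of studies.

```python
from collections import Counter

# Hypothetical purpose codes; a study can carry more than one purpose.
# F = follow-up, C = comparison, D = development (as in Table 1);
# an empty set marks a study whose purpose remained unclear.
codes = {
    "S01": {"F"},
    "S02": {"F", "C"},
    "S03": {"C"},
    "S04": {"D"},
    "S05": set(),
}


def purpose_shares(codes):
    """Share of studies carrying each purpose; shares can sum to more than 1."""
    n = len(codes)
    counts = Counter(p for purposes in codes.values() for p in purposes)
    counts["unclear"] = sum(1 for purposes in codes.values() if not purposes)
    return {p: counts[p] / n for p in ("F", "C", "D", "unclear")}


shares = purpose_shares(codes)
print(shares, sum(shares.values()))
```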

We also intended to check the congruence between the purpose(s) of mixing we identified and the purpose(s) stated by the respective study authors. Given that the vast majority of studies did not allow us to follow the authors’ thinking and line of argumentation (see Quality in MMSNA below), the alignment between what the authors stated and what they did could not be coded.

Timing in MMSNA

Eighteen studies (35%) contained parallel designs. One example is the study by Dingyloudi and Strijbos (2018), who collected and analyzed quantitative social network data and video data in parallel. An example of a sequential MMSNA design is Rodway (2015), where a quantitative, sociocentric strand of survey research was executed before a qualitative investigation. Thirty-one studies (60%) used methods that were dependent on each other. However, this number for dependency needs to be interpreted with caution, as it represents a frequency count that does not say anything about the strength of the dependency. “Dependency” often referred to a minor interface between the two methods only. For example, researchers used metrics derived from sociocentric network data to inform the qualitative data collection through purposeful sampling procedures or by using network maps as interview material (e.g., Daly & Finnigan, 2010).

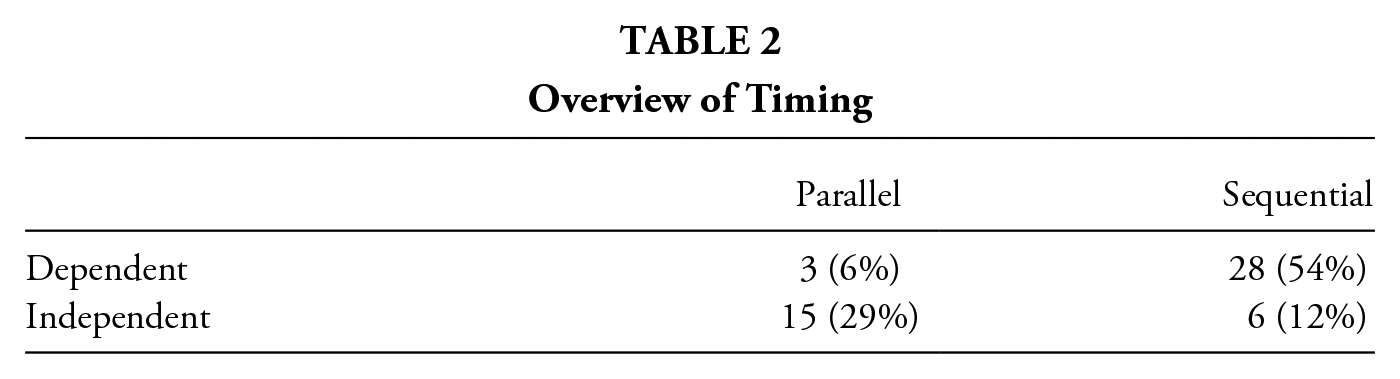

As described above, we aggregated these data into four types (see Table 2). Designs that combined sequential timing with dependency (28 studies, 54%) or parallel timing with independence (15 studies, 29%) made up the vast majority of the sample. This was to be expected: Dependent sequential designs make better use of the information already available in the research project than independent ones, which naturally leads to their higher prevalence. Likewise, parallel designs are more complex to implement when the methods refer to each other (i.e., when they are dependent).

Table 2. Overview of Timing

Quality in MMSNA

Concerning the quality of the studies, we comment on our observations regarding the five aspects of the MMAT. First, we observed whether the purpose of mixing was explicitly stated. Of the 52 studies, 38 (73%) made their purpose(s) of mixing explicit, while in more than one quarter of the reviewed studies, the researchers did not state their reasons for using quantitative and qualitative data and/or methods. Even for those that did, this discussion was often short and most often referred to “triangulation” or a “case study” without more detailed explanations.

Second, regarding the integration of the different methods used in an MMSNA design, we looked at whether the methods were effectively integrated to answer the posed research question. For most studies (42 studies, 81%), this was done in a sufficiently clear manner. Third, compared with this 81% rate of integration, adequate integration of outputs (“meta-inference”) was present in only 27 studies (52%). In other words, the information gained in one strand of research was often not used at all or not sufficiently integrated with the other findings when forming conclusions. This is a missed opportunity to improve the quality of integration and of the overall study.

Fourth, another opportunity to enhance the quality of MMSNA studies lies in discussing how the findings from the quantitative and qualitative data relate to each other. For 36 studies (69%), there were either no divergences to discuss or the divergences were adequately addressed in the discussion. This means that 16 studies (31%) failed to address conflicting findings in meaningful ways. It is important to note that, in line with the coding instructions of the instrument used, we only coded “absence” when differences were observed but not discussed.

Fifth, concerning the overall adherence to quality criteria, we coded 35 studies (67%) as being in line with the quality criteria associated with each of the methods used. While this number is high compared with the other metrics coded in this review, meeting methodological quality criteria is only a baseline indicator of a study’s quality; it says nothing about the effectiveness of integration or whether the research question was actually answered. Related to this criterion, a comment repeated often in the memos we wrote during coding was that journals’ word limits made it difficult for authors to comply with the reporting standards of the underlying methods.

Few studies explicitly addressed convergences, divergences, or (in)consistencies between results. Most studies presented the different methods’ findings side by side, with little or no mixing in the Discussion section. One positive example is the study by Spillane et al. (2018), which integrated the outputs of different data (interviews with teachers, network surveys, student test scores, and teacher performance data) to investigate how teacher performance predicts interaction. The authors discussed how the interview data offered additional interpretative power regarding how the school district’s educational infrastructure influenced teachers’ notions about expert teachers. They also addressed how student test scores did not support the findings on predicting expert teaching performance and how the interview data helped to interpret this result.

Post Hoc Observations

In this section, we present two post hoc observations that were not part of the deductive coding scheme but that nevertheless seem important. During coding, we observed that many of the MMSNA studies used network maps as a way to illustrate the findings. For instance, Hoffman and Silverberg’s (2015) study on peer interactions in a higher education setting used a network map for the single purpose of illustrating instances of joint work in student collaboration. However, visuals were also used in several studies as feedback to participants or as a basis to develop interventions. For example, Rienties and Kinchin (2014) showed network maps to participants in a professional development program and asked participants to reflect on the patterns and structures as part of a free-response exercise. Van Waes et al. (2015) used a concentric circle method (Hogan et al., 2007; Van Waes & Van den Bossche, 2020) to provide feedback about change over time in personal teaching networks during interviews. The visuals of the personal network maps were used to gain insight into supporting and constraining mechanisms for network change as a complement to longitudinal network surveys. This visual approach (also see Shannon-Baker & Edwards, 2018; Shannon-Baker & Hilpert, 2020) to MMSNA may offer a basis for the design of network interventions where the network awareness of participants is being fostered (Palonen & Froehlich, 2020; Van Waes et al., 2018).

Another post hoc observation is related to the point of integration. The point of integration is the stage of research where two methods are brought together (Schoonenboom & Johnson, 2017). Often, two methods were connected at the sampling stage. Specifically, the quantitative, often sociometric data were used to inform the selection of participants for further, in-depth, and often egocentric data collection (e.g., Rienties & Kinchin, 2014). Other prominent points of integration were at the stages of results/analysis (e.g., Rodríguez-Medina et al., 2018) or in the discussion/conclusion (e.g., Hopkins & Spillane, 2014).
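Using sociometric data to select interviewees can be sketched as follows. The nominations are hypothetical, and ranking actors by in-degree centrality (the number of nominations an actor receives) and then selecting the most and least nominated actors is only one of several plausible purposeful sampling strategies; it is not presented as the procedure of any reviewed study.

```python
from collections import Counter

# Hypothetical directed nominations, e.g., "Whom do you turn to for advice?"
nominations = [
    ("T1", "T3"), ("T2", "T3"), ("T4", "T3"), ("T5", "T3"),
    ("T1", "T2"), ("T4", "T2"), ("T3", "T5"), ("T6", "T1"),
]


def in_degree(edges):
    """Nominations received per actor; actors who only nominate get 0."""
    actors = {actor for edge in edges for actor in edge}
    received = Counter(target for _, target in edges)
    return {actor: received.get(actor, 0) for actor in actors}


def purposeful_sample(edges, k=2):
    """Pick the k most and the k least nominated actors for interviews.

    Ties in degree are broken by actor label so the selection is reproducible.
    """
    ranked = sorted(in_degree(edges).items(), key=lambda item: (-item[1], item[0]))
    names = [actor for actor, _ in ranked]
    return {"central": names[:k], "peripheral": names[-k:]}


print(purposeful_sample(nominations))
# {'central': ['T3', 'T2'], 'peripheral': ['T4', 'T6']}
```

Sorting on the negated degree and then on the actor label breaks ties deterministically, so rerunning the selection on the same data yields the same interviewees.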

Discussion and Conclusion

As outlined in the introduction, this systematic review aims to (a) give an overview of the different applications of MMSNA within the field of education and learning research, (b) point to challenges of the methods and their presentation, and (c) develop an agenda to support researchers in conducting future MMSNA research. We will now discuss these three themes.

Applications of MMSNA in Education and Learning Research

Overall, the eligible MMSNA studies mixed a great variety of data sources and analytical methods. For example, Häussling (2010) analyzed video recordings of classroom interactions using SNA to uncover social structures, dynamics such as interaction sequences, and the emotional expressions involved. These data were triangulated with responses to network surveys and interviews. Bokhove (2018) argues that a mixed methods approach to classroom interaction may benefit from an SNA lens; he modeled temporal interactions of students and teachers in the classroom by combining SNA and video data. However, we did not find examples of mixed data obtained with alternative devices, such as sociometric badges that track interactions (Kim et al., 2012). Although we observed some variety in data sources and methods, researchers used network surveys and qualitative interviews most frequently. Similarly, the versatility of MMSNA is illustrated by the range of themes addressed in the reviewed studies, which cover diverse topics such as school reform (Daly & Finnigan, 2010), learning in MOOCs (Wise & Cui, 2018), informal mentoring (Risser, 2013), and trust (Ardoin et al., 2017).

Challenges of MMSNA Linked to a Future Research Agenda

We also identified challenges in conducting and reporting MMSNA research, from which we derive an agenda for further MMSNA research. We recommend that researchers (a) further explore the richness of MMSNA designs, (b) improve reporting practices concerning purpose and design, (c) increase integration, and (d) attend to the validity issues identified in MMSNA research.

Variability of MMSNA Designs

We noted that a few MMSNA designs dominate in terms of timing. This is striking considering the plethora of topics and research questions present in the reviewed studies. It raises the question of whether the designs have indeed been fitted to the specific research questions at stake or whether the designs of published studies have been overused as blueprints. This observation also extends to the purpose(s) of mixing coded in the studies. While the purposes of mixing varied considerably, explanation (a variant of follow-up) and triangulation (a variant of comparison) were especially prevalent. This complements the findings of Froehlich (2020b), which show a similar trend in MMSNA regarding the pairing of specific methods. As such, future research could tap into the richness offered by novel combinations of methods of social network research. For instance, qualitative methods could be used to inform quantitative data collection and analysis. This is especially true for more advanced quantitative techniques such as exponential random graph models or stochastic actor-based models, which play a large role in contemporary quantitative SNA research but did not appear in the reviewed MMSNA studies.

Improving Reporting

We found that over one quarter of the reviewed MMSNA studies did not make their purpose(s) of mixing explicit. Because mixing has no value in itself, it is important to communicate the rationale for using multiple methods to readers. We urge authors of future MMSNA studies to state their purpose(s) of mixing and the reasoning behind them. The studies that did report a purpose of mixing often did so too concisely, using labels such as case study or triangulation without clarifying what these terms meant in the context of the study. We recommend explaining the arguments behind mixing rather than merely labelling them.

As an example, consider the study by Cornelissen et al. (2014). The authors took a social network perspective on partnerships between schools and universities to find out how knowledge generated from students’ research is developed, shared, and used in the school-university network they observed. They describe their approach as a longitudinal multimethod case study design and used purposeful sampling to identify four cases: two students and their respective research advisors. Data were collected via questionnaires, and selected cases were asked about their personal networks at multiple time points. Additionally, logs were collected and interviews conducted. The researchers analyzed the data at the ego, dyad, and whole network levels. Describing this research design as a case study may be appropriate, but the label does not capture the actual richness of the mixing involved. A more detailed account of the reasons that led to the design could help in developing an even more nuanced research design. Specifically, the authors’ interest in studying multiple units of analysis at once is in itself a reason to interweave qualitative and quantitative approaches to network analysis, because the two approaches have complementary strengths and weaknesses. Higher-level questions about network structure cannot easily be answered with qualitative methods, whereas quantitative methods often cannot capture the details at lower levels of analysis, such as why a dyad is functional or not, or what an individual’s history is in relation to the network (Froehlich, Mejeh, et al., 2020).

Design decisions such as the timing of a study should also be made explicit. Consider the study by Spillane et al. (2018), in which the design is stated very clearly: “Our analysis is based on data from a longitudinal, mixed methods study that used a sequential explanatory mixed methods design” (p. 590). The design is sequential because interviewees were sampled based on network survey results. The authors purposefully sampled teaching staff in schools to maximize variation in formal position, social network position (e.g., more or less central in their schools’ mathematics network), and grade level (primary or upper grade teachers). They also state their purpose of mixing explicitly: “We sought to interview a broad range of staff, to maximize opportunities for verification (or contradiction) of our theorizing about school staff interactions about mathematics instruction” (p. 591). These statements allow readers to make inferences about the sequence, the dependence between strands, and the purpose of mixing, and they convey the underlying argumentation.
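
A sequential explanatory design of this kind can be made concrete in a few lines. The Python sketch below is not the procedure Spillane et al. (2018) used; the nomination data, the staff attributes, the use of in-degree (how often a person is named) as a proxy for centrality, and the rule of interviewing the least and the most central person in each combination of formal position and grade level are all hypothetical assumptions chosen for illustration. What it shows is the dependency that makes the design sequential: the qualitative sample cannot be drawn until the quantitative network data have been analyzed.

```python
from collections import defaultdict

# Hypothetical network survey: each pair is (respondent, colleague they turn to
# for advice about mathematics instruction). Invented for illustration only.
nominations = [
    ("Ana", "Ben"), ("Ben", "Ana"), ("Cem", "Ana"), ("Dia", "Ana"),
    ("Eli", "Ben"), ("Fay", "Cem"), ("Gus", "Ana"), ("Hal", "Dia"),
]

# Hypothetical staff attributes: (formal position, grade level).
staff = {
    "Ana": ("coach", "upper"), "Ben": ("teacher", "upper"),
    "Cem": ("teacher", "primary"), "Dia": ("teacher", "primary"),
    "Eli": ("teacher", "upper"), "Fay": ("teacher", "primary"),
    "Gus": ("principal", "upper"), "Hal": ("teacher", "primary"),
}


def in_degree(edges, people):
    """Count how often each person was nominated (an assumed, simple centrality proxy)."""
    counts = {person: 0 for person in people}
    for _, target in edges:
        counts[target] += 1
    return counts


def max_variation_sample(edges, attributes):
    """Select the least and the most central person in each (position, level) stratum."""
    centrality = in_degree(edges, attributes)
    strata = defaultdict(list)
    for person, (position, level) in attributes.items():
        strata[(position, level)].append(person)
    sample = set()
    for members in strata.values():
        # Sort by centrality, breaking ties alphabetically for reproducibility.
        ranked = sorted(members, key=lambda p: (centrality[p], p))
        sample.add(ranked[0])   # least central member of the stratum
        sample.add(ranked[-1])  # most central member (same person if the stratum has one member)
    return sorted(sample)


if __name__ == "__main__":
    print(max_variation_sample(nominations, staff))
```

With these invented data, the sketch selects Ana, Ben, Dia, Eli, Fay, and Gus for interviews. Picking both extremes per stratum is only one simple way to maximize variation; a real study would justify its strata and its centrality measure from its research questions.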

Another observation regarding reporting practices concerns the research questions. Although all sampled studies used mixed methods, not all of them reflected this in their research questions. This is not necessary for every purpose of mixing, but it may point to a lack of alignment between research questions and research design. For some studies, it may help to pose a research question that transcends the scope of a single method or of a single level of analysis. As mentioned in the background section, qualitative and quantitative methods of SNA are complementary, and this should be reflected in the research questions (Plano Clark & Badiee, 2010).

Increasing Integration

Most studies integrated their strands in some form, but MMSNA could be further improved by a more explicit focus on meta-inferences (Teddlie & Tashakkori, 2009), that is, combined interpretations formulated on the basis of both qualitative and quantitative findings. Meta-inferences show the added value of mixing over two separate studies more clearly and emphasize the purpose of mixing. Explicitly discussing divergent findings could also improve integration within MMSNA: the majority of the sampled studies either did not identify any divergent findings worth discussing or did not adequately address such findings. A further suggestion for increasing integration comes from Toraman and Plano Clark (2020), who recommend the thoughtful use of joint displays, in which qualitative and quantitative results are presented in one figure or table.
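
To illustrate what a joint display might look like, the Python sketch below places one hypothetical quantitative result (number of advice ties per teacher) next to one hypothetical qualitative result (the main interview code per teacher) in a single table and flags cases in which the two strands diverge. The data, the cut-off of three ties for calling a teacher central, and the rule for judging convergence are assumptions made for this example; they are not taken from Toraman and Plano Clark (2020) or from any reviewed study.

```python
# Hypothetical quantitative strand: number of advice ties per teacher.
advice_ties = {"T1": 7, "T2": 1, "T3": 6, "T4": 0}

# Hypothetical qualitative strand: main interview code per teacher.
interview_code = {
    "T1": "seeks advice often",
    "T2": "isolated",
    "T3": "isolated",
    "T4": "isolated",
}


def joint_display(ties, codes, threshold=3):
    """Return one row per teacher combining both strands and flagging divergence.

    A teacher counts as 'central' if they have at least `threshold` ties
    (an arbitrary, assumed cut-off). The strands are treated as convergent
    when a central teacher is not coded as isolated, or a peripheral one is.
    """
    rows = []
    for person in sorted(ties):
        quant = "central" if ties[person] >= threshold else "peripheral"
        qual = codes[person]
        agrees = (quant == "central") == (qual != "isolated")
        rows.append((person, ties[person], quant, qual,
                     "convergent" if agrees else "divergent"))
    return rows


if __name__ == "__main__":
    header = ("Teacher", "Ties", "Network", "Interview", "Comparison")
    print("{:<8}{:>5}  {:<11}{:<20}{}".format(*header))
    for row in joint_display(advice_ties, interview_code):
        print("{:<8}{:>5}  {:<11}{:<20}{}".format(*row))
```

Running the script prints a four-row table in which T3 is flagged as divergent: central in the survey data, yet described as isolated in the interview. Such cases are the divergent findings that, as argued above, deserve explicit discussion rather than being set aside.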

Considering Validity

Our coding indicated that one third of all sampled studies did not meet the basic validity requirements of the methods used. Importantly, this refers not to validity requirements for MMSNA, which have yet to be developed and agreed upon, but to those of the individual methods used in each design. We see two pathways for addressing this challenge. First, part of the problem may be competence-related. SNA is a complex method to begin with, and this complexity increases when it is embedded in a mixed methods design. It is therefore advisable to strengthen human capital within author teams, either by increasing individuals’ methodological competencies or by adding co-authors with the specific competencies needed.

The validity problem may also have institutional roots. We noted that the word limits of journals and other publication outlets are one potential reason why the authors of many studies in this review (30%) failed to meet the quality criteria of the underlying qualitative and quantitative methods. This is a frequently discussed problem in the mixed methods literature in general (Leech, 2012). However, we argue that it is exacerbated when presenting MMSNA research, for two major reasons. First, quantitative approaches to SNA tend to be perceived as technically challenging and as requiring more elaborate methodological explanation. This may be traced back to the methodological training of researchers, which often focuses on statistics at the expense of other quantitative methods (cf. Froehlich, Mamas, et al., 2020). Second, reporting on qualitative procedures is no less problematic. Here, in our impression, the problem lies in the rather vague guidelines on how to conduct qualitative SNA. Although some exceptions exist, most notably the work of Herz et al. (2015) on qualitative structural analysis, a common language for conducting and reporting qualitative SNA is absent (Froehlich, 2020a). Further research may mitigate this problem by developing a clearer conceptualization of how to conduct and report qualitative SNA. As SNA becomes increasingly visible, especially in the educational domain (Daly, 2010; Moolenaar, 2012; Sweet, 2016), we hope that basic approaches to analyzing social networks will require less justification, which would alleviate this challenge.

General Conclusion

We wrote this review based on the observation that SNA is becoming a standard method of education research, but that purely quantitative SNA approaches are often found to be too superficial for many of the phenomena studied in this field. Although the call for MMSNA was voiced in the books by Domínguez and Hollstein (2014) and Froehlich, Rehm, et al. (2020), information about why and how MMSNA is used in research practice was lacking. In this review, we studied, among other aspects, the purpose(s) of mixing and the designs used in contemporary education research. Through systematically gathered data and illustrative examples, we elaborated on how researchers may use MMSNA to study relationships and interactions within educational settings. This contributes to further research in two ways. First, we proposed an agenda for further research that tackles the challenges identified through the review; in other words, we laid out a plan for how MMSNA as a research approach could be improved collectively. Second, at the level of the individual researcher, this chapter may provide guidance throughout the research process by showing what has and has not worked well in previous studies.