Abstract

A high level of teacher self-efficacy is considered to be important for a successful and healthy teaching career. This preregistered meta-analysis focuses on whether and to what degree interventions can promote teacher self-efficacy. We included 115 studies representing 11,284 pre-service and in-service teachers in our meta-analysis. Interventions had a significant, positive effect on the promotion of teachers’ self-efficacy (g = 0.47, RVE SE = 0.04, 95% CI = [0.40, 0.54]) with no significant differences between pre- and in-service teachers. A fine-grained coding and systematic review of the targeted sources of self-efficacy according to Bandura’s sociocognitive theory revealed that overall interventions including mastery experiences did not significantly differ from those without. However, interventions targeting only mastery experiences were the most successful for pre-service teachers (g = 0.62, RVE SE = 0.11, CI = [0.35, 0.88]). Based on further moderator analyses, we recommend interventions to integrate reflective elements. Finally, future research should apply stricter study designs and more detailed intervention descriptions.

In times of increasing teacher shortages, concerning rates of teacher attrition, and increasing demands put on teachers, many institutions and research organizations are questioning how teachers’ lifelong learning experiences should be designed to best enable teachers to deal with these challenges (Ball & Forzani, 2009; European Commission/EACEA/Eurydice, 2021; Grossman, Hammerness, & McDonald, 2009; Schleicher, 2018). A central variable in the discourse on dealing with challenges is teachers’ self-efficacy (TSE), which is defined as teachers’ belief in their abilities to successfully perform teaching-specific tasks (e.g., motivating disinterested students or calming a disruptive student down; Skaalvik & Skaalvik, 2007; Tschannen-Moran et al., 1998). A wide range of learning opportunities have emerged in recent years to strengthen teachers’ self-efficacy. There are specific intervention programs targeting teacher self-efficacy in pre- and in-service teacher education (e.g., Chao et al., 2017; Dalioglu & Adiguzel, 2016; Hayes et al., 2020; McCullough et al., 2021; Tschannen-Moran & McMaster, 2009) or more global approaches such as placing core teaching practices and opportunities for practice at the heart of pre-service teacher education (Grossman, Hammerness, & McDonald, 2009; Michigan Department of Education, n.d.). Although these two exemplary approaches may seem very different at first glance, they are quite related when examined more closely. Both offer pre-service and in-service teachers a specific combination of experiences.

Albert Bandura’s (1997) socio-cognitive theory provides a suitable framework to describe these different experiences. He proposes that self-efficacy beliefs are based on four sources of experiences, namely, mastery experiences, vicarious experiences, social persuasion, and physiological reactions. Mastery experiences are seen as having the strongest impact on the level of self-efficacy beliefs. However, empirical evidence for the differential effect of the four sources of self-efficacy on the promotion of teacher self-efficacy is inconclusive (D. B. Morris et al., 2017). Given the relevance of high levels of teacher self-efficacy beliefs (Klassen & Tze, 2014; Zee & Koomen, 2016), it remains an open research question how interventions can support pre-service and in-service teachers to strengthen their self-efficacy (Klassen et al., 2011; Moulding et al., 2014; Pendergast et al., 2011; Tschannen-Moran & Woolfolk Hoy, 2007).

The present meta-analysis systematically summarizes current research on interventions promoting teacher self-efficacy. We investigate which characteristics of the interventions influence their effects and focus specifically on a repeatedly raised research gap, namely, evaluating the role of the experiences captured as sources of self-efficacy (Duffin et al., 2012; Klassen et al., 2011). Within a teacher professional development framework, we evaluate interventions’ effects on different samples of teachers (pre-service teachers and in-service teachers) and examine whether the two groups react differently to different source combinations. Our overall goal is to contribute evidence-based knowledge to the question of how lifelong learning opportunities in teacher education and training should be designed so that teachers can deal with future challenges in a self-efficacious way.

The Concept and Relevance of Teacher Self-Efficacy

The construct of teacher self-efficacy is based on Bandura’s socio-cognitive theory, in which he defined self-efficacy as “the belief in one’s capabilities to organize and execute the course of action required to manage prospective situations” (Bandura, 1995, p. 2). Teacher self-efficacy can, therefore, be defined as an individual teacher’s belief in being able to execute all the actions needed for the job profile of a teacher (for similar definitions, see Holzberger et al., 2013; Tschannen-Moran et al., 1998).

The construct has inspired many studies, meta-analyses, and systematic reviews showing benefits of a high level of teacher self-efficacy for teachers, their teaching quality, and their students (Zee & Koomen, 2016). Teachers with a higher level of teacher self-efficacy report more job satisfaction (Klassen & Chiu, 2010; Stephanou et al., 2013; Toropova et al., 2020), more commitment (Chesnut & Burley, 2015), and less emotional exhaustion and fewer stress symptoms (Aloe et al., 2014; Betoret, 2006; Dicke et al., 2018; Fernet et al., 2012; Skaalvik & Skaalvik, 2010, 2014; Wang et al., 2015). Students and even independent observers rate the teaching of self-efficacious teachers as high-quality instruction (Holzberger et al., 2013; Klassen & Tze, 2014; Ryan et al., 2015). In a longitudinal study, teachers’ level of teacher self-efficacy in the first year was predictive of their instructional quality regarding classroom climate, classroom management, and cognitive activation 10 years later (Künsting et al., 2016). Finally, regarding concrete teaching practices, teacher self-efficacy is considered an important predictor of differentiated instruction (Neve et al., 2015; Suprayogi et al., 2017) and innovative behavior (Thurlings et al., 2015). In turn, students of teachers with higher teacher self-efficacy beliefs report more engagement in class (Zee & Koomen, 2020), better relationships with these teachers (Holzberger et al., 2014), and more interest (Fauth et al., 2019; mediated by teaching quality). Students also show higher academic achievement when taught by teachers with higher levels of teacher self-efficacy (Caprara et al., 2006; Kim & Seo, 2018).

In light of the described evidence on the beneficial outcomes of high levels of teacher self-efficacy, research has consequently started to investigate how teacher self-efficacy can be promoted (e.g., Ansley et al., 2021; Groeschner et al., 2018; Hoogendijk et al., 2018; Junqueira & Matoti, 2013; Ross & Bruce, 2007).

Promoting Teacher Self-Efficacy Through the Four Sources of Self-Efficacy

So far, a huge variety of interventions exist that aim to promote TSE. These interventions differ in the content they deliver (e.g., classroom management skills in Dicke, Elling, et al., 2015; English grammar and vocabulary in S. Chan et al., 2021), the involvement of core teaching practices (e.g., developing positive student-teacher relationships in Aasheim et al., 2020; creating lesson plans in Karalar & Altan, 2018), the ways they are organized (e.g., specific training intervention in Dicke, Elling, et al., 2015; teaching practicum in Holdaway, 2017; individual coaching in Hoogendijk et al., 2018), the activities they implement (e.g., role plays in Dicke, Elling, et al., 2015; video observations in Gold et al., 2017), and the degree of authentic approximations of practice (e.g., interacting with avatars in Bosch & Ellis, 2021; real-time coaching in classroom in Hoogendijk et al., 2018).

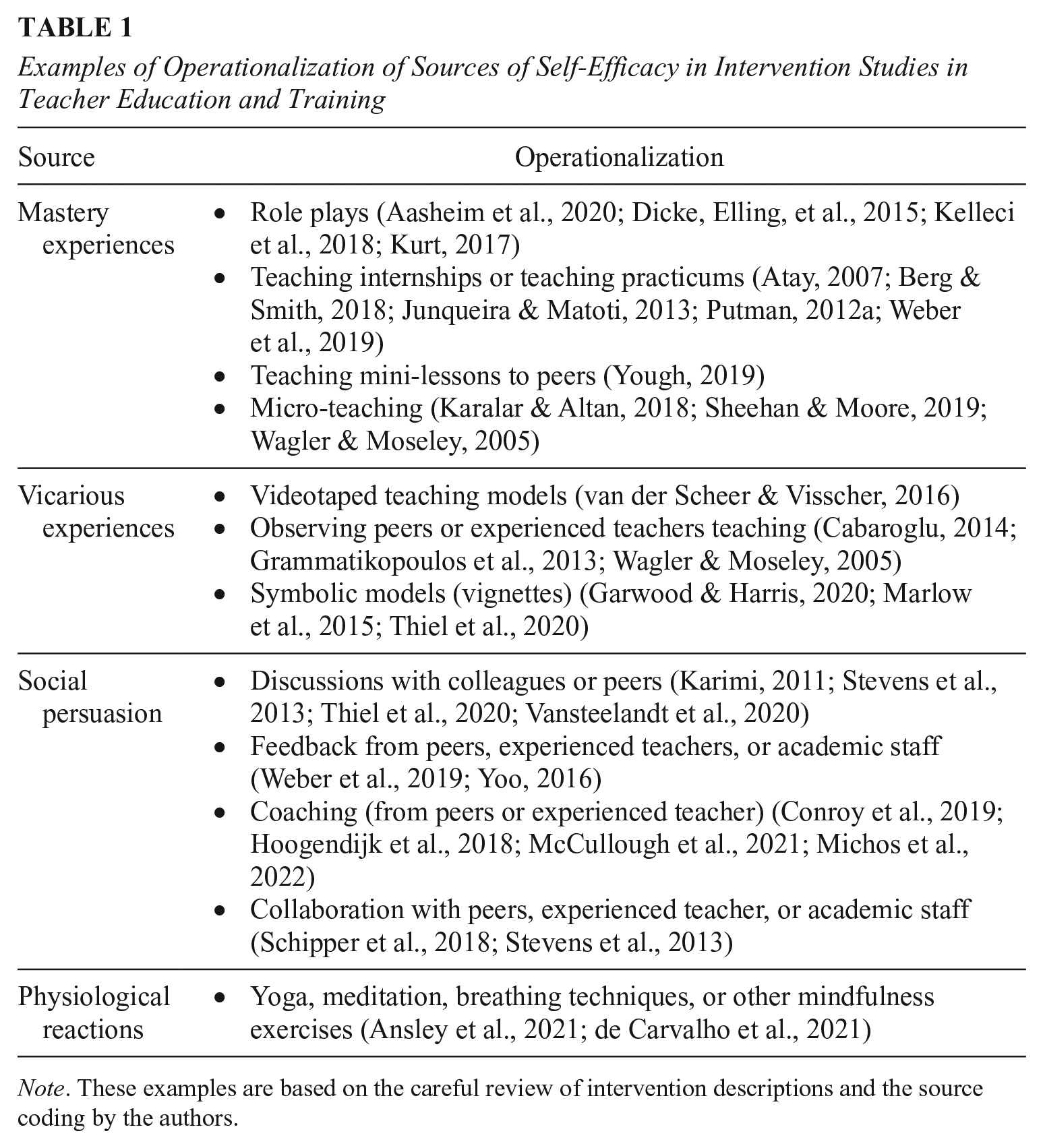

Despite the wide variety of intervention studies aimed at promoting teacher self-efficacy beliefs, the four sources of self-efficacy (i.e., mastery experiences, vicarious experiences, social persuasion, and physiological reactions) described by Bandura (1997) may serve as a feasible and meaningful overall framework for categorizing the diverse interventions. While these sources were originally described outside a specific context, they can be transferred to the teaching context (D. B. Morris et al., 2017; Tschannen-Moran et al., 1998). We therefore carefully reviewed intervention studies on promoting teacher self-efficacy and provide examples of the operationalization of the four sources in teacher education and training in Table 1. Despite their different contents, organizational structures, involved practices, and approximation levels, interventions can offer the same sources of self-efficacy. For example, an intervention specifically fostering classroom management skills (Dicke, Elling, et al., 2015), a student teaching practicum (Holdaway, 2017), and an intervention focusing on different ways of coaching pre-service teachers (Weber et al., 2019) all target the same sources of self-efficacy, namely, mastery experiences, vicarious experiences, and social persuasion. Through coding the different sources of self-efficacy targeted by the interventions, we are able to compare seemingly diverse interventions and to decompose successful strategies for fostering teacher self-efficacy. In the following, we introduce the four sources of self-efficacy according to Bandura’s theory. For each source, we highlight examples from teacher education and training interventions based on our systematic review of relevant studies (see also Table 1).

Table 1. Examples of Operationalization of Sources of Self-Efficacy in Intervention Studies in Teacher Education and Training

Note. These examples are based on the careful review of intervention descriptions and the source coding by the authors.

Mastery experiences take place whenever someone gains operational experience by doing something. In teacher education, mastery experiences may be teaching experiences in internships for pre-service teachers (e.g., Knoblauch & Chase, 2015; Liaw, 2017) or role plays in seminars for in-service teachers (e.g., Aasheim et al., 2020; Dicke, Elling, et al., 2015). Individuals have vicarious experiences whenever they observe a model doing something, for example, pre-service teachers watching classroom videos (e.g., Bowlin et al., 2015; Kumschick et al., 2017; Thiel et al., 2020) or in-service teachers observing their colleagues or experts (e.g., Schipper et al., 2020; Tschannen-Moran & McMaster, 2009; Vansteelandt et al., 2020). Social persuasion covers all situations in which individuals receive verbal support and encouragement for a specific activity. In teacher education, social persuasion may occur when pre-service and in-service teachers receive feedback from their peers or supervisors or are coached by experts (e.g., Hoogendijk et al., 2018; Weber et al., 2019). The fourth source of self-efficacy pertains to physiological and emotional reactions (sometimes also called “physiological and emotional states”). People obtain information about whether they feel capable in certain situations by interpreting their affective reactions, such as an increased heart rate as a reflection of fear versus excitement. For example, when teachers interpret their palpitations as excitement at teaching a new topic, they feel capable and enter the classroom with more energy and enthusiasm. Emotional exhaustion or higher levels of negative emotions also predicted (changes in) teacher self-efficacy (Burić et al., 2020; Dicke, Parker, et al., 2015). In teacher education, meditation or autogenic training workshops, for example, address this source of self-efficacy (e.g., Ansley et al., 2021; de Carvalho et al., 2021).

The Central Role of Mastery Experiences as a Source to Promote Teacher Self-Efficacy

Bandura (1997) claimed that mastery experiences are “the most effective way of creating a strong sense of efficacy” (p. 3). Empirical studies on mastery experiences (e.g., teaching internships or microteaching) mostly found increases in teacher self-efficacy (Fives et al., 2007; Mergler & Tangen, 2010; O’Neill & Stephenson, 2012; Pfitzner-Eden, 2016a; Rupp & Becker, 2021). However, at the same time, some studies found that the level of teacher self-efficacy did not change significantly after having mastery experiences (Haverback & Parault, 2008; Klassen et al., 2021; Knobloch, 2006). In general, the research is inconclusive regarding the differential impact of each of the four sources on the level of teacher self-efficacy (D. B. Morris et al., 2017). Moreover, only a few studies explicitly compare the effect sizes of the four different sources separately. One example is the study by Tschannen-Moran and McMaster (2009), who explicitly tested different combinations of mastery experiences, vicarious experiences, and social persuasion in an intervention introducing a new instructional reading strategy. While there were increases in teacher self-efficacy in all conditions, they found the largest gains in the condition combining all three sources. Given this result, it remains unclear whether mastery experiences alone represent the strongest source for teacher self-efficacy or whether mastery experiences develop their full potential only in combination with other sources.

Further Influencing Factors

Previous research on teacher self-efficacy and the professional development of teachers, in general, has pointed to further characteristics of interventions that might influence the interventions’ effects.

Knowledge

How knowledge impacts teacher self-efficacy, and whether increases in knowledge are significantly associated with increases in self-efficacy, is still debated (Dicke, Parker, et al., 2015; Lauermann & König, 2016; D. B. Morris et al., 2017). In qualitative studies in which teachers were asked what they base their self-efficacy on, knowledge is repeatedly cited as a root of self-efficacy (Bautista & Boone, 2015; Palmer, 2011; Phan & Locke, 2015). It is also suggested that knowledge is especially important for individuals with little access to other sources (Gold et al., 2017; Schwarzer & Warner, 2011). Therefore, in our meta-analysis, we systematically review self-efficacy interventions and investigate whether interventions that target knowledge alone differ from interventions that target at least one of the four sources of self-efficacy, according to Bandura (1997).

Moment of Reflection

Reflection opportunities are seen as an important feature of interventions aiming to change teachers’ attitudes or self-efficacy (Bardach et al., 2021; Dunst et al., 2015; Gaudreau et al., 2013; Kayapinar, 2016; Klassen et al., 2021; Prilop et al., 2019; Weber et al., 2018, 2019). Bauer et al. (2020) showed that reflection opportunities at the university were the only significant predictor of a change in student teachers’ self-efficacy throughout a semester-long internship. As the four sources of self-efficacy exert their effect on self-efficacy beliefs through various cognitive processes such as reflecting, evaluating, and weighting of information (Bandura, 1997), it is plausible that explicit moments of reflection in interventions support these mostly subconscious processes and strengthen the interventions’ effects. In our meta-analysis, we aim to test whether interventions that provide a moment of reflection produce larger effects than interventions without a moment of reflection. This would allow us to determine whether a moment of reflection is an important characteristic for successfully promoting teacher self-efficacy.

Duration of Intervention and Period Between Measurement Points

Research on the characteristics of effective teacher training programs furthermore suggests that a certain amount of time is necessary to effect meaningful change (Desimone, 2009; Piwowar et al., 2013; Yoon et al., 2007). Yoon et al. (2007) even concluded that an intervention should last at least 14 hours to impact students’ achievement. In a study on the implementation of differentiated instruction in the classroom, the teachers who had completed more professional development hours displayed higher self-efficacy beliefs in relation to differentiation (Dixon et al., 2014). Since this study is correlational, it is unclear whether more intervention hours cause increases in teacher self-efficacy or whether teachers with higher levels of self-efficacy participate in more professional development activities. The meta-analysis presented here investigates whether there is a linear relationship between the intervention’s duration in hours and its effects.

In addition to the duration of an intervention, we considered the period between the assessments of the interventions’ effectiveness. In a study with university teachers, the authors pointed out that it takes a year to see positive effects on teacher self-efficacy and that shorter periods may lead to decreases (Postareff et al., 2007). This is in line with the theoretical background that teacher self-efficacy might be rather stable once established (Bandura, 1997) and that an intervention over a short period might compete with a longer period of previous experience. However, Bauer et al. (2020) emphasize that studies investigating different periods of internships could not find any additional effects of longer internships on pre-service teachers’ competencies. We will therefore examine whether longer periods are connected with larger effect sizes.

Domains of Teacher Self-Efficacy

Teacher self-efficacy is considered to be domain-specific (Schulte, 2008; Skaalvik & Skaalvik, 2007; Weber et al., 2019). The Teachers’ Sense of Efficacy Scale (TSES; also known as the Ohio State Teacher Efficacy Scale) developed by Tschannen-Moran and Woolfolk Hoy (2001) contains three domains of teacher self-efficacy, which capture core elements of teachers’ daily life: classroom management, student engagement, and instructional strategies. While teachers might feel competent in one domain, they do not automatically feel competent in another (Bandura, 1997). Empirical findings indicate relationships of different strengths between the domains and different outcomes (e.g., Chen et al., 2020; Kim & Seo, 2018). There are also first indications from intervention studies with pre-service teachers that the domains differ in their malleability due to interventions (Avalos & Bascopé, 2014; Dalioglu & Adiguzel, 2016; Pfitzner-Eden, 2016a). We will therefore check whether the three domains of teacher self-efficacy differ in their malleability due to interventions.

The Promotion of Teacher Self-Efficacy in Different Career Stages

Theoretically, self-efficacy beliefs are assumed to be relatively stable once they are set (Bandura, 1997; Woolfolk Hoy & Spero, 2005). And indeed, longitudinal research indicates that after a significant increase in the first 5 years of teaching, in-service teachers’ self-efficacy tends to be rather stable over several years (Künsting et al., 2016; Savolainen et al., 2022). However, other research shows that self-efficacy remains malleable over the teaching career. In comparative studies, teachers with more years of experience (i.e., in-service teachers compared with pre-service teachers, or in-service teachers with more years in the teaching profession compared with in-service teachers with fewer years of working experience) report higher levels of TSE (D. W. Chan, 2008; Fackler et al., 2021; George et al., 2018; Klassen & Chiu, 2010, 2011; Wolters & Daugherty, 2007; Yeo et al., 2008). This may be because having more years of experience provides teachers with a broader range of experiences in classroom management, student engagement, and instructional strategies on which they can base their self-efficacy ratings. Also, studies evaluating specific interventions or major environmental and societal changes, such as virtual teaching during the Covid-19 pandemic, show changes in in-service teachers’ self-efficacy (e.g., Aasheim et al., 2020; Conroy et al., 2019; Pressley & Ha, 2021). In sum, there is empirical evidence that teachers’ years of experience do not prevent teacher self-efficacy changes due to major events or interventions. Nevertheless, it is plausible that interventions with in-service teachers yield smaller changes than interventions with pre-service teachers. A single intervention represents only one of many experiences for in-service teachers and might not cause an immense shift in their self-efficacy. To empirically evaluate this assumption, we analyze in our meta-analysis whether pre-service and in-service teachers react similarly to interventions promoting teacher self-efficacy.

The Interaction of Sources of Teacher Self-Efficacy and Career Stage

Bandura (1997) further describes that different sources may differ in importance depending on someone’s experience level. Vicarious experiences and social persuasion are assumed to be important when people face new tasks or have little previous experience (Bandura, 1997). In turn, they may be more relevant for pre-service than in-service teachers. This also is in line with the idea of practice-based teacher education that highlights the importance of vicarious experiences (terminology there: representations of practice) and social persuasion (terminology there: approximation) for pre-service teachers as well (Ball & Forzani, 2009; Grossman, Compton, et al., 2009; Grossman, Hammerness, & McDonald, 2009). However, in interventions using video observation (a possible operationalization of vicarious experiences), both pre-service and in-service teachers showed significant increases in self-efficacy and outperformed the comparison groups without video observation (Groeschner et al., 2018; Weber et al., 2019). The picture is equally ambiguous when we look at social persuasion. While in a study by Tschannen-Moran and Woolfolk Hoy (2007), social persuasion (operationalized as support from colleagues) explained variance in teacher self-efficacy only for teachers with less than 3 years of experience, several studies show positive relations between social persuasion (e.g., feedback) and the level of teacher self-efficacy across all career stages (Klassen & Durksen, 2014; Moulding et al., 2014; Smith et al., 2020). Moreover, several researchers emphasize the importance of social persuasion (e.g., feedback by mentors or coaches) after mastery experiences for the development of pre-service teachers’ self-efficacy (Klassen & Durksen, 2014; D. B. Morris et al., 2017; Pfitzner-Eden, 2016b; Tschannen-Moran et al., 1998; Tschannen-Moran & Woolfolk Hoy, 2007; Weß et al., 2020). However, Bardach et al. (2021) could not find an additional influence of social persuasion (operationalized as feedback) beyond mastery experiences on pre-service teachers’ self-efficacy.

Finally, it is also unknown whether mastery experiences, generally highlighted as the most important source, are equally important for pre-service and in-service teachers’ self-efficacy. Unlike pre-service teachers, in-service teachers have daily opportunities for mastery experiences—at least regarding the domains of classroom management, student engagement, and instructional strategies. Similar to the economic principle of “diminishing returns,” it is questionable whether additional mastery experiences in interventions will have any additional effect on in-service teachers. On the other hand, during interventions, in-service teachers might gain mastery experiences in areas they are not yet comfortable with (e.g., use of new technologies; dealing with heterogeneous classes), and those mastery experiences might, therefore, affect in-service teachers’ self-efficacy as well.

In summary, it is still unclear whether the three sources (vicarious experiences, social persuasion, and mastery experiences) have differential effects on pre-service and in-service teachers. It also remains to be examined whether social persuasion combined with mastery experiences is especially beneficial for pre-service compared to in-service teachers.

The Present Study

Although teacher self-efficacy is considered a central variable for teachers’ health (e.g., Aloe et al., 2014), their commitment (e.g., Chesnut & Burley, 2015), their teaching quality (e.g., Klassen & Tze, 2014), and their students’ academic adjustment (e.g., Kim & Seo, 2018), to date, it is not empirically clear how interventions can support teachers’ self-efficacy beliefs and what factors influence the effectiveness of interventions (Klassen et al., 2011). Previous reviews and meta-analyses on teacher self-efficacy and its outcomes have claimed that future research should investigate the role of the four sources of self-efficacy in promoting teacher self-efficacy (Chesnut & Burley, 2015; Klassen et al., 2011; D. B. Morris et al., 2017). Therefore, we will analyze which sources are targeted in each intervention and how the respective combinations of sources influence the effectiveness of the interventions. We will also examine interventions with pre-service and in-service teachers to determine whether there are differences in the promotion of teacher self-efficacy depending on the career stage. Additionally, we will investigate whether the sources of self-efficacy and the career stage of teachers interact in promoting teacher self-efficacy. With this focus on the sources of self-efficacy and the malleability of self-efficacy in different career stages, we aim to contribute further insights into the theoretical tenets of self-efficacy by Bandura (1997). To make it possible for teacher-educators to base their decisions on the best available and relevant evidence, we will rate each study’s quality and consider various intervention characteristics. This will allow us to draw a picture of the quality of the current research in promoting teacher self-efficacy and to identify research gaps.

Our research questions and expected findings are as follows:

Research Question 1: Can interventions promote teachers’ self-efficacy?

We expect interventions to significantly and positively affect teachers’ self-efficacy.

Research Question 2: Are there differences in the effects of the interventions depending on the sources of self-efficacy the intervention targets?

According to Bandura’s (1997) theoretical tenets of mastery experiences as the most effective source, we expect interventions that include mastery experiences to produce larger effects than interventions without mastery experiences.

Research Question 3: Are there differences in the interventions’ effects regarding the investigated sample’s career stage?

Based on the claim from Bandura (1997) that teacher self-efficacy is more malleable in the beginning, we expect larger effects for pre-service teachers than for in-service teachers.

Research Question 4: Are there differences in the effects of the interventions based on the interaction of teachers’ career stage and the source targeted by the intervention?

Based on the claim from Bandura (1997) that vicarious experiences and social persuasion are especially important for beginners, we expect interventions targeting vicarious experiences or social persuasion to produce larger effect sizes for pre-service teachers than for in-service teachers. In the literature, it is assumed that especially pre-service teachers may benefit from a combination of social persuasion and mastery experiences (Klassen & Durksen, 2014; D. B. Morris et al., 2017; Pfitzner-Eden, 2016b; Tschannen-Moran et al., 1998; Tschannen-Moran & Woolfolk Hoy, 2007; Weß et al., 2020). Consequently, we expect larger effects of this combination for pre-service teachers than for in-service teachers. In-service teachers have already gained various mastery experiences through their years of experience. Therefore, it is conceivable that additional mastery experiences will not translate into additional self-efficacy. We also expect that pre-service teachers will report larger changes in their teacher self-efficacy than in-service teachers after interventions targeting mastery experiences alone or in combination.

Research Question 5: Are there differences in the effects of the interventions concerning the study’s quality?

Generally, studies with higher quality, as indicated by a randomized study design, for example, produce smaller effects than studies with lower quality (Cheung & Slavin, 2016). We hypothesize that studies with a higher study quality (indicated by a study design with randomization and a control group, a reliability coefficient higher than .8, and a detailed description of the intervention) will report smaller effect sizes than studies with lower study quality.

All further potential influencing factors that have been mentioned in the literature, that is, intervention aims to solely improve knowledge, moment of reflection included in the intervention, intervention’s duration and period, measure of TSE and domains of teacher self-efficacy used in the study, are analyzed as robustness checks without specified hypotheses.

Method

This meta-analysis follows common guidelines for high-quality systematic reviews and meta-analyses (Alexander, 2020; Page et al., 2021; Pigott & Polanin, 2019). We preregistered the meta-analysis on OSF (https://osf.io/ev7tf/) and report changes after the preregistration along with our justifications and possible effects in Table S1 (in the online version of the journal). Within the preregistration process, we conducted a priori power analyses with the R-package metapower (Griffin, 2021) to identify the required sample size and number of studies for the present meta-analysis. Necessary estimates for the power analysis (i.e., effect size, type of effect size, sample size, number of studies, and amount of heterogeneity) were based on the results from a preliminary search that identified a set of eight studies that seemed eligible for our meta-analysis (Aasheim et al., 2020; Al-Awidi & Alghazo, 2012; Aykaç et al., 2019; Bautista & Boone, 2015; Çelebi et al., 2014; Gold et al., 2017; Kunz et al., 2019; Thurm & Barzel, 2020). The a priori power analysis indicated that a minimum of 20 studies with an average sample size of 60 would be needed to reach 92% power under the conservative assumptions of high heterogeneity (75%) and an overall effect size of d = 0.4.
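The logic of such a power calculation can be illustrated with a minimal sketch. The code below is not the metapower implementation; it uses a common normal-approximation formula for the power of the mean effect in a random-effects meta-analysis. It rests on several assumptions that are not stated in the text: the average sample size of 60 is read as the total per study and split into two equal groups, the 75% heterogeneity is read as I², and the test is two-sided with alpha = .05. The function and parameter names are illustrative only.

```python
import math


def normal_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def random_effects_power(d: float, n_per_study: int, k: int, i2: float,
                         z_crit: float = 1.959964) -> float:
    """Approximate two-sided power for the mean effect in a random-effects model."""
    # Assumption: each study has two equally sized groups.
    n_group = n_per_study / 2.0
    # Approximate sampling variance of a standardized mean difference.
    v = (2.0 * n_group) / (n_group ** 2) + d ** 2 / (2.0 * n_per_study)
    # Between-study variance implied by I^2 = tau^2 / (tau^2 + v).
    tau2 = v * i2 / (1.0 - i2)
    # Standard error of the pooled mean effect across k equally sized studies.
    se_mean = math.sqrt((v + tau2) / k)
    # Expected z statistic under the assumed true effect.
    lam = d / se_mean
    return (1.0 - normal_cdf(z_crit - lam)) + normal_cdf(-z_crit - lam)


# Values taken from the text: d = 0.4, 20 studies, average N = 60, heterogeneity 75%.
power = random_effects_power(d=0.4, n_per_study=60, k=20, i2=0.75)
print(f"approximate power: {power:.3f}")
```

Under these assumptions the approximation lands in the low 0.90s, in the same range as the reported 92%; small differences from the metapower result are to be expected because the software may define the inputs differently.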

Systematic Literature Search

We applied a variety of search strategies to find as many relevant studies as possible. First, we conducted a systematic literature search in the databases FIS Bildung, ERIC, and Web of Science, following the guidelines from Gusenbauer and Haddaway (2020) and Siddaway et al. (2019). Depending on the database, the search terms scanned either database-specific descriptors or abstract, title, and keywords (see exact search strings in Table S2 in the online version of the journal). The final search terms underwent numerous refinements following preliminary scoping searches and consultations with review team members and an information specialist. The structure of our search terms was inspired by the PICO-scheme (i.e., Population, Intervention, Comparison, Outcome; Kugley et al., 2017; Lefebvre et al., 2021). Our target population is pre- and in-service teachers. We looked for all kinds of interventions, such as programs or training. The outcome of interest is self-efficacy. We did not specify a comparison for our search term. The search was conducted on December 15, 2020, and delivered 3,526 hits. We kept the search active and included search alerts until March 1, 2022, which delivered a further 627 results. The search was limited to publications since 1977 because Bandura first described the sources of self-efficacy in that year. The languages of references were limited to German and English. No limitations regarding the publication type were made in order to include grey literature and reduce publication bias.

Second, we screened conference books and one online paper repository from relevant educational societies in Germany, Europe, and the United States, namely, Gesellschaft für empirische Bildungsforschung (GEBF [the Society for Empirical Educational Research]), the European Association for Research on Learning and Instruction (EARLI), and the American Educational Research Association (AERA), resulting in 176 possible studies.

Third, we sent out calls for intervention studies via Twitter and 12 mailing lists (5 special interest groups of EARLI, 6 special interest groups of AERA, and 1 mailing list of the German Psychology Society [DGPS]), resulting in 19 possible studies.

After finishing the first round of eligibility screening with the references yielded by the described search strategies, we further conducted a backward and forward citation search for all included studies via the tool citationchaser (Haddaway et al., 2021). Finally, we screened the reference lists from 12 previous reviews and meta-analyses related to teacher self-efficacy or intervention studies for teachers (Aloe et al., 2014; Chesnut & Burley, 2015; Gegenfurtner et al., 2013; Gesel et al., 2021; Iancu et al., 2018; Kim & Seo, 2018; Klassen & Kim, 2019; Klassen & Tze, 2014; Kraft et al., 2018; Mok & Staub, 2021; D. B. Morris et al., 2017; Zee & Koomen, 2016).

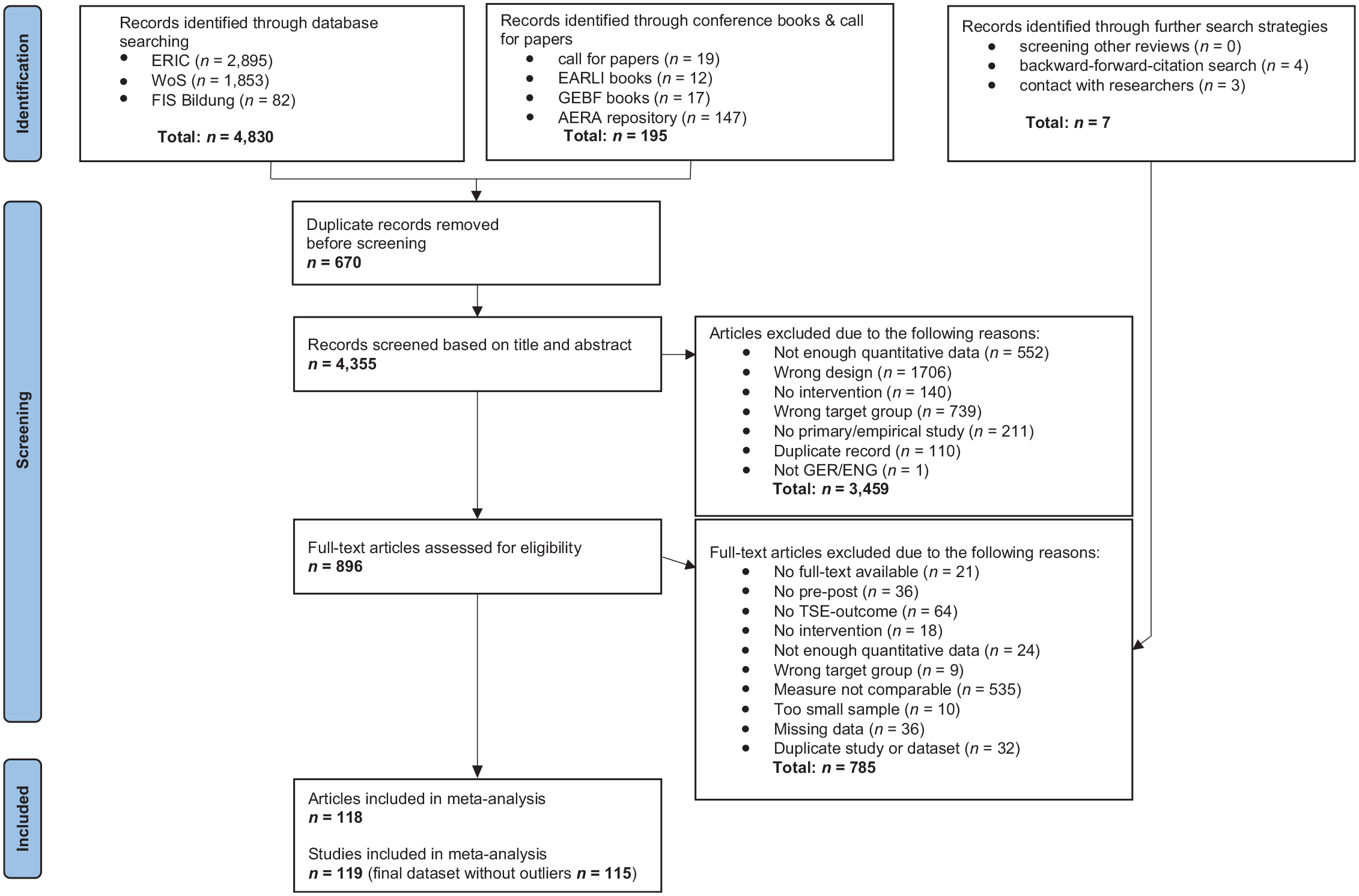

Screening Process

The flowchart (Page et al., 2021) in Figure 1 displays the progress and exclusion reasons from the initially identified references (n = 4,355) to the final data set (n = 119). All studies included in our meta-analysis meet the inclusion criteria listed in Table 2. Studies that did not fulfill one or more inclusion criteria were excluded. All decisions and reasons for exclusion are documented in the dataset SEIMA_inclusion_exclusion.csv on OSF (https://osf.io/65vfq). In Table S3 (in the online version of the journal), we specify exemplary excluded works.

Figure 1. Flowchart representing screening process.

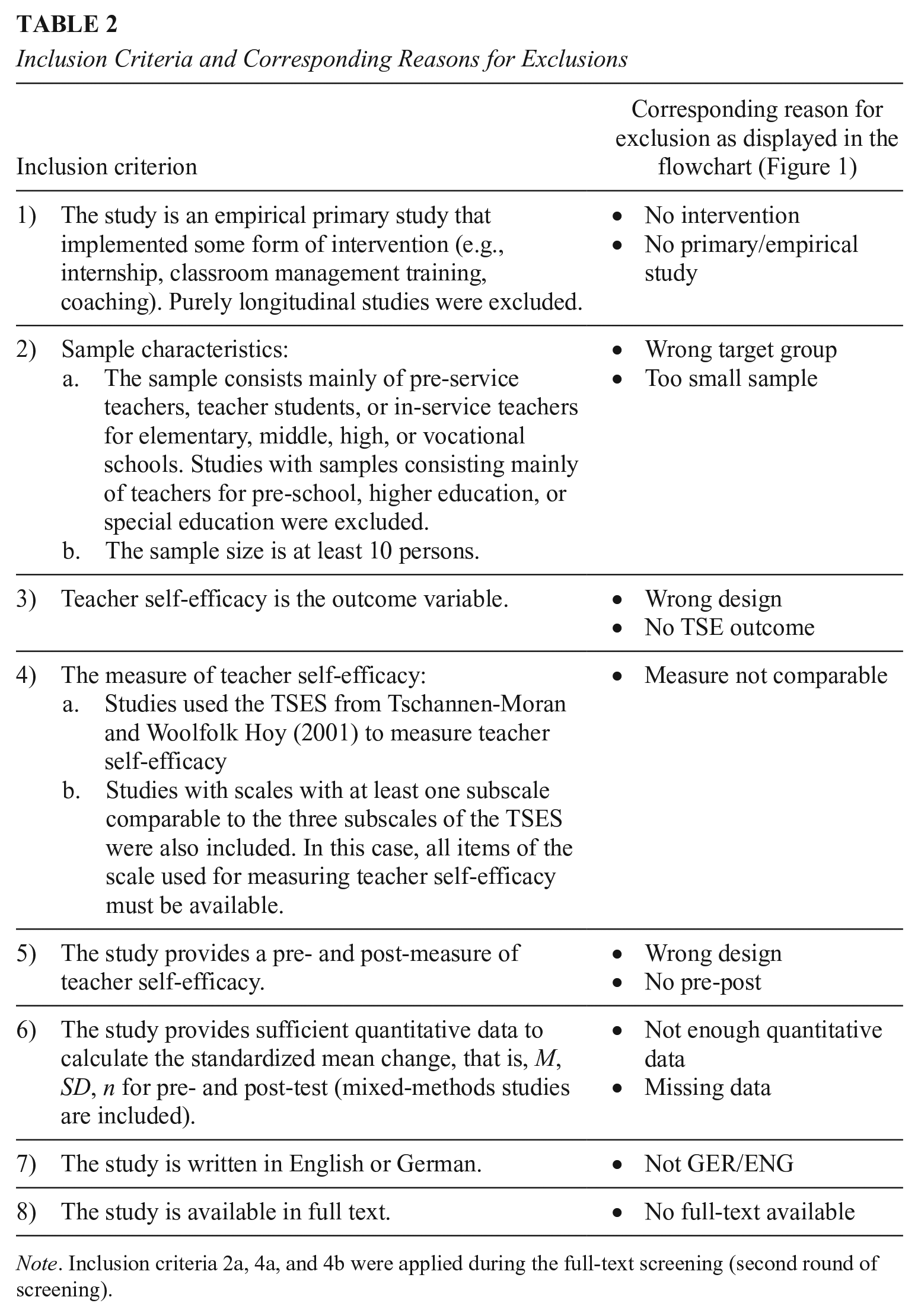

Table 2. Inclusion Criteria and Corresponding Reasons for Exclusions

Note. Inclusion criteria 2a, 4a, and 4b were applied during the full-text screening (second round of screening).

Based on the inclusion criteria, the screening for eligibility was done in two rounds: In the first round, abstracts and titles were checked; in the second, full texts were screened for inclusion. Before the screening started, the first author conducted a training with the other two coders (one of the authors and one independent coder) based on a detailed inclusion manual with examples for each criterion. Forty studies were established as the training set; interrater reliability was checked, and unclear issues were discussed, after the first 20 studies. If the interrater reliability was lower than .8, another round of training with a further 20 studies was done. After sufficient interrater reliability (κ ≥ .8) was reached, the independent coding started. To ensure high interrater reliability and high quality during screening, further questions, tips, refinements of the inclusion criteria, and uncertain cases were discussed in weekly meetings among the three coders. The screening was done with high sensitivity, so that studies were included rather than excluded in unclear cases. Double screening of 462 studies resulted in high interrater reliability (κ = .81 between coder 1 and 2, κ = .83 between coder 1 and 3). Disagreements were resolved through discussion. We contacted the authors of studies that met all the inclusion criteria but lacked the statistical information needed to calculate effect sizes. Responses were included until April 2022. Our final data set exceeds the requirements from our a priori power analyses.

Data Extraction

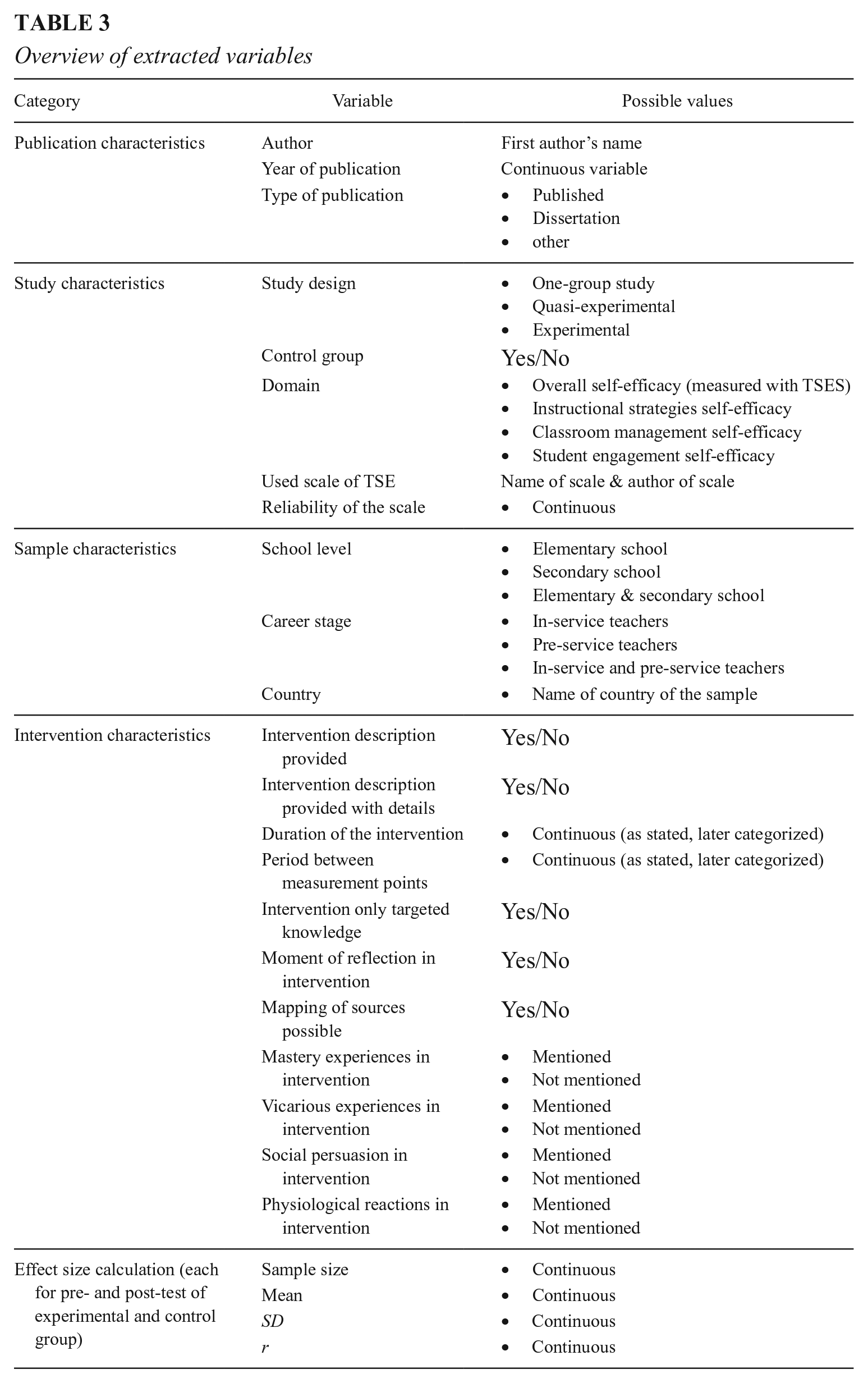

Two of the authors extracted the data necessary to characterize all the studies descriptively and to answer our research questions. A detailed coding manual (see https://osf.io/65vfq) provided instructions regarding the characteristics of (a) the publication, (b) the study, (c) the sample, (d) the intervention, and (e) the calculation of the effect sizes. Table 3 displays an overview of the variables extracted in each category. More detailed information on the study and intervention characteristics is provided below. In cases where information was missing, we coded the variable as not reported.

Table 3. Overview of extracted variables

A trained student assistant double-coded, for 80 studies, all variables that left scope for interpretation. Interrater agreements on single variables ranged from 76.5% (intervention description with details) to 95.1% (intervention targeted only knowledge). The values for Cohen’s kappa ranged from .32 (intervention description) to .88 (career stage). The low value for intervention description was caused by a strongly imbalanced distribution within this variable (Belur et al., 2021). Disagreements were resolved during regular meetings among the three coders.
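The dependence of Cohen’s kappa on the marginal distribution can be illustrated with a short sketch. The two rating tables below are invented toy counts, not data from this meta-analysis, and the helper names are hypothetical. Both tables show the same raw agreement, but kappa drops sharply when almost all cases fall into one category.

```python
from collections import Counter


def percent_agreement(a, b):
    """Share of cases on which two coders assigned the same code."""
    return sum(x == y for x, y in zip(a, b)) / len(a)


def cohen_kappa(a, b):
    """Cohen's kappa: observed agreement corrected for chance agreement."""
    n = len(a)
    p_obs = percent_agreement(a, b)
    freq_a, freq_b = Counter(a), Counter(b)
    # Chance agreement from the product of the two coders' marginal proportions
    p_exp = sum(freq_a[c] * freq_b[c] for c in set(a) | set(b)) / n ** 2
    return (p_obs - p_exp) / (1 - p_exp)


def ratings(both_yes, a_only, b_only, both_no):
    """Expand a 2x2 agreement table into two aligned lists of codes (toy helper)."""
    a = ["yes"] * (both_yes + a_only) + ["no"] * (b_only + both_no)
    b = ["yes"] * both_yes + ["no"] * a_only + ["yes"] * b_only + ["no"] * both_no
    return a, b


# Invented counts: identical raw agreement (87%), different category balance
imbalanced = ratings(both_yes=85, a_only=7, b_only=6, both_no=2)
balanced = ratings(both_yes=44, a_only=7, b_only=6, both_no=43)

for name, (a, b) in {"imbalanced": imbalanced, "balanced": balanced}.items():
    print(name, round(percent_agreement(a, b), 2), round(cohen_kappa(a, b), 2))
```

With the imbalanced table, chance agreement is already very high, so the same 87% raw agreement translates into a much lower kappa than in the balanced table.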

Study Characteristics

The study design was coded as a one-group study (if no other intervention or control group existed), a quasi-experimental study (at least two groups, but no randomized assignment), or an experimental study (at least two groups and randomized assignment). The control group had to be a “no treatment” condition. If there was a group with an alternative treatment in the study, we coded this as a second treatment group and not as a control group. The teacher self-efficacy scale used was coded with author and title as referenced in the study. The reliability coefficients of the scales were extracted as stated in the studies. However, if the authors did not report reliability coefficients for their own sample, we coded the reliability coefficients as not reported.

Intervention Characteristics

Duration of intervention was coded as stated in the study and then recalculated into hours. We subsequently categorized the hours according to the quartiles into interventions lasting 0.5–6 hours, 7–18 hours, 19–30 hours, and 31–210 hours. The period between measurement points was also collected in an open format and later categorized into weeks. A semester was coded as 20 weeks. Periods of less than 1 week were coded as 0 weeks. Regarding the intervention description, we first rated whether there was a description (yes or no). Studies only reporting the title of the intervention received the code “intervention description: no.” Only studies with a general description were eligible for the coding of the sources. Second, we rated whether the studies provided details on the interventions (yes or no), which we used for our study quality rating.

We coded only knowledge for interventions focusing only on knowledge acquisition (e.g., evaluating a lecture in Chao et al., 2017) without mentioning any practical exercises or experiences that could be attributed to the sources. The role of knowledge for self-efficacy is still debated in the literature (D. B. Morris et al., 2017). Since we wanted to find out which characteristics of interventions best promote teacher self-efficacy, we also included studies that focused solely on knowledge acquisition. An intervention coded as only knowledge was not further eligible for coding the four self-efficacy sources. Moment of reflection was coded as “yes” when the intervention explicitly mentioned some form of introspection (e.g., diary, writing reflection papers, using reflection apps).

Coding the Four Sources of Self-Efficacy

One crucial element of the intervention characteristics was the coding of the four sources of self-efficacy. The coding scheme of the four sources was developed according to Bandura’s descriptions (1997), a guide for identifying sources of self-efficacy (Brand & Wilkins, 2007), and a review of the measurement of the sources of self-efficacy (D. B. Morris et al., 2017). Anchor examples for the relevant sources were provided in the coding manual (see https://osf.io/65vfq/). The four sources of self-efficacy were coded individually as “mentioned” or “not mentioned.”

Mastery experiences were coded as “mentioned” when teachers gained actual practical experience on their own (e.g., taught lessons, developed lesson plans). However, when a study only mentioned “teachers were encouraged to practice,” we coded “no mastery experiences mentioned.” Vicarious experiences were coded as “mentioned” when the teachers observed some model (e.g., the instructor of the intervention modeling a method, observing a classroom teacher). When teachers only watched videos transferring knowledge without modeling (e.g., watching a lecture), we coded “no vicarious experiences mentioned.” Social persuasion was coded as “mentioned” whenever there was some kind of social interaction among the teachers (e.g., feedback from supervising teacher, discussing lessons in meetings with mentors or colleagues). Physiological reactions were coded as “mentioned” whenever the intervention mentioned some practice of reducing stress levels or working with attributions (e.g., meditations, autogenic training).

We provide detailed information on each article’s study, sample, and intervention characteristics in Tables S4 and S5 in the online version of the journal.

Statistical Analyses

Computation and Aggregation of Effect Sizes

From our perspective, studies without a control group may also offer valuable information on the sources targeted in their intervention. Hence, our meta-analysis combines effect sizes from two different study designs: studies with a pre- and post-measure from a one-group design (i.e., only a treatment group) and studies with a pre- and post-measure from both a treatment and a control group. The effect sizes for studies without a control group (n = 83) are calculated as the standardized mean change, defined as the difference between the post- and pre-test means divided by the standard deviation of the pre-test (Becker, 1988; S. B. Morris & DeShon, 2002). The effect size for studies with a control group (n = 36) is calculated as the difference between the standardized mean change in the treatment group and the standardized mean change in the control group. In two studies (Gresko, 2013; Liaw, 2017), we transformed the reported t-values into the corresponding effect size measure with the formulas provided by Borenstein et al. (2021) and S. B. Morris and DeShon (2002).
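The two effect size definitions can be written down in a few lines. This is a minimal sketch of the point estimates only, using raw-score (pre-test) standardization as described above. The function names and the example values are hypothetical, and sampling variances (which additionally require the pre-post correlation) and any small-sample correction are deliberately left out.

```python
def standardized_mean_change(mean_pre, mean_post, sd_pre):
    """Standardized mean change with raw-score (pre-test SD) standardization."""
    return (mean_post - mean_pre) / sd_pre


def controlled_mean_change(treatment, control):
    """Difference between the standardized mean changes of two groups.

    Each argument is a (mean_pre, mean_post, sd_pre) tuple.
    """
    return standardized_mean_change(*treatment) - standardized_mean_change(*control)


# Invented example values on a rating scale
one_group = standardized_mean_change(mean_pre=6.0, mean_post=7.0, sd_pre=2.0)
with_control = controlled_mean_change(
    treatment=(6.0, 7.0, 2.0),  # change of 0.50 pre-test SDs
    control=(6.0, 6.5, 2.0),    # change of 0.25 pre-test SDs
)
print(one_group, with_control)
```

If the control group does not change, the second estimate reduces to the first, which is why the absence of change in the control groups matters for combining the two designs.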

S. B. Morris and DeShon (2002) present theoretical and empirical prerequisites to combine effect sizes from different study designs. Following their guidelines, we (a) used raw score standardization for both effect sizes to avoid any bias induced by the interaction between subject and treatment and (b) empirically evaluated whether the effects derived from studies with a control group differed significantly from those derived from studies without a control group. Further, based on the theoretical tenets of self-efficacy, we (c) assumed that teacher self-efficacy might not change spontaneously (Bandura, 1997). Therefore, there should be no significant change in the control group. Thus, we (d) empirically investigated whether there was a significant change in TSE in the control groups. As the comparability of the effect sizes across different study designs is a prerequisite, we first conducted these checks.

We often had multiple correlated effect sizes per study because the studies reported values from various subscales or even several treatment groups. We followed the recommendations from Viechtbauer (n.d.) and computed two-level random effects models. Additionally, we applied cluster robust inference methods with a small sample correction, also known as robust variance estimation (RVE) (Pustejovsky & Tipton, 2022; Tanner-Smith et al., 2016; Tipton & Pustejovsky, 2015). All analyses were conducted in R using the packages metafor and clubSandwich (Pustejovsky, 2022; Viechtbauer, 2010).

Assessment of Outliers

To identify possible outliers, we calculated Cook’s distance and DFBETAS values (Viechtbauer, 2020; Viechtbauer & Cheung, 2010). The interpretation of Cook’s distance is similar to the Mahalanobis distance. We applied a general rule of thumb that every value larger than 4/n (in our case 4/119 = 0.034) is considered an influential study. DFBETAS values show how much the overall effect size would change (in standard deviations) when the study is deleted. We considered any DFBETAS value larger than one as an influential case (Viechtbauer & Cheung, 2010).
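The two cutoffs can be expressed as a simple flagging rule. The sketch below assumes that Cook’s distance and DFBETAS values have already been computed elsewhere (for example, by the meta-analysis software). The function name and the input values are hypothetical, and comparing the absolute DFBETAS value with 1 is an assumption about how the rule was applied.

```python
def flag_influential(cooks_d, dfbetas, n_studies):
    """Apply the rule-of-thumb cutoffs to precomputed influence diagnostics."""
    cook_cutoff = 4 / n_studies
    flags = []
    for d, b in zip(cooks_d, dfbetas):
        flags.append({
            "cook": d > cook_cutoff,   # Cook's distance above 4/n
            "dfbetas": abs(b) > 1,     # assumed: absolute value above 1
        })
    return cook_cutoff, flags


# Invented diagnostic values for two studies
cutoff, flags = flag_influential(
    cooks_d=[0.010, 0.050], dfbetas=[0.2, -1.3], n_studies=119
)
print(round(cutoff, 3), flags)
```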

Moderator Analyses

We applied multiple meta-regression models for the moderator analyses. Omnibus F-tests, implemented in the metafor package, indicated the overall significance of the moderators in each model. All categorical moderators were dummy coded.

We calculated five meta-regression models to analyze the second research question, whether the targeted sources of self-efficacy influence the interventions’ effects. In the first model, we tested interventions including mastery experiences against interventions targeting other sources but not mastery experiences. In the second model, we evaluated all interventions targeting only one single source against interventions targeting only mastery experiences. In the third to fifth model, we tested interventions targeting only one specific source (i.e., only mastery experiences, only vicarious experiences, or only social persuasion) against interventions targeting this specific source in combination with other sources.

To analyze the third research question, whether there are differences in the intervention effects depending on the career stage, we computed one meta-regression model with career stage as a dichotomous moderator.

Concerning research question four, how specific sources interact with different career stages, we calculated four meta-regression models. In the first model, we evaluated whether there is an interaction between interventions targeting vicarious experiences (alone or in combination) and teachers’ career stage (pre-service or in-service). In the second model, we analyzed whether there is an interaction between interventions targeting social persuasion (alone or in combination) and the career stage. The third meta-regression model tested whether there are differences in the intervention effects depending on the career stage within the subgroup of interventions targeting mastery experiences and social persuasion together. In the fourth model, we analyzed whether there is an interaction between interventions targeting mastery experiences (alone or in combination) and teachers’ career stage.

Assessment of Study Quality

Based on existing rating schemes of study quality (e.g., What Works Clearinghouse, 2020), we chose four variables of the study and intervention characteristics to evaluate the quality of the primary studies. As Wedderhoff and Bosnjak (2020) suggested, we evaluated each variable and built a sum score over the four variables (min. = 0, max. = 4). The four variables were (a) randomized assignment (yes = 1, no = 0), (b) existence of a control group (yes = 1, no = 0), (c) reliability of the measure (≥ .8 = 1, < .8 = 0.5, not reported reliability = 0), and (d) details of the intervention described (yes = 1, no = 0). We conducted five meta-regression models evaluating the sum score of study quality as a continuous moderator and each of the four variables as categorical moderators (Research Question 5).
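The scoring rule for the sum score can be sketched as a small function. The function and parameter names are hypothetical; `reliability=None` stands for a reliability coefficient that was not reported.

```python
def study_quality_score(randomized, has_control_group, reliability, details_described):
    """Sum score of study quality (0 to 4) following the four criteria above."""
    if reliability is None:      # reliability not reported
        reliability_points = 0.0
    elif reliability >= 0.8:
        reliability_points = 1.0
    else:
        reliability_points = 0.5
    return (
        float(randomized)
        + float(has_control_group)
        + reliability_points
        + float(details_described)
    )


# Invented example: quasi-experimental study, reliability of .72, detailed description
print(study_quality_score(False, True, 0.72, True))
```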

Assessment of Publication Bias

First, we addressed the problem of publication bias through the literature search by including unpublished literature, such as dissertations and reports, in our meta-analysis (n = 19). Second, we visually checked for asymmetry in a funnel plot, which plots the effect sizes on the x-axis against the corresponding standard errors on the y-axis. We further examined a possible publication bias with statistical tests: We conducted a meta-regression on publication type as well as an Egger’s regression test (Egger et al., 1997). The classical Egger’s regression test uses the standard error as the predictor and is therefore equivalent to the precision effect test (PET) of Stanley and Doucouliagos (2014). We also conducted a precision-effect estimate with standard error (PEESE), where the sampling variance is the predictor. We followed the conditional PET-PEESE procedure (Carter et al., 2019; Stanley & Doucouliagos, 2014) to decide which model to use for a cautious interpretation of its intercept as a publication bias-adjusted overall effect size estimate.
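The difference between the two regressions lies only in the predictor. The following minimal sketch fits both as inverse-variance weighted least squares lines on invented toy data. It ignores the dependency among effect sizes, omits the significance tests on which the conditional procedure is based, and is not the code used for the reported analyses. All names are hypothetical.

```python
from math import sqrt


def weighted_line(x, y, w):
    """Weighted least squares fit of y = intercept + slope * x."""
    sw = sum(w)
    x_bar = sum(wi * xi for wi, xi in zip(w, x)) / sw
    y_bar = sum(wi * yi for wi, yi in zip(w, y)) / sw
    sxx = sum(wi * (xi - x_bar) ** 2 for wi, xi in zip(w, x))
    sxy = sum(wi * (xi - x_bar) * (yi - y_bar) for wi, xi, yi in zip(w, x, y))
    slope = sxy / sxx
    return y_bar - slope * x_bar, slope


def pet_peese(effects, variances):
    """Return (intercept, slope) for PET (predictor: SE) and PEESE (predictor: variance)."""
    weights = [1 / v for v in variances]
    standard_errors = [sqrt(v) for v in variances]
    pet = weighted_line(standard_errors, effects, weights)
    peese = weighted_line(variances, effects, weights)
    return pet, peese


# Invented toy data: effects grow linearly with the standard error
toy_variances = [0.01, 0.04, 0.09, 0.16]
toy_effects = [0.3 + 2 * sqrt(v) for v in toy_variances]
pet, peese = pet_peese(toy_effects, toy_variances)
print(pet, peese)
```

In both models the intercept is the predicted effect for a hypothetical study with no sampling error, which is why it is read as a bias-adjusted estimate. In the toy data the PET line recovers the intercept used to generate the effects.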

Robustness Checks

We conducted six further meta-regression models to check whether our results were robust across the domains of self-efficacy, the used scale of self-efficacy, interventions targeting only knowledge, interventions involving moments of reflection, durations of interventions, and periods between measurement points.

Exploratory Analyses

Several studies also reported follow-up measures. We first calculated a meta-analysis including these effect sizes, too. Second, we ran a meta-regression on the measurement point, which allowed us to test the stability and consistency of the intervention effects over time from pre to post to follow-up measurement. We set the pre-post measures as the reference group and analyzed whether they significantly differed from the effect sizes derived from pre- to follow-up, and post- to follow-up.

Results

Checks of Requirements for Aggregation of Effect Sizes

As effect sizes stemmed from different study designs, we applied two empirical checks to provide evidence that we can combine these effect sizes. Our data showed no significant change in the control groups’ teacher self-efficacy (g = 0.04, RVE SE = 0.07, 95% confidence interval [CI] = [−0.10, 0.17], p = .60) from pre- to post-test. Thus, the effect sizes of studies with a control group mainly represent the change in the treatment groups’ teacher self-efficacy and can be compared to the effect sizes of studies without a control group (only treatment group). We also could not find any significant difference (p = .13) between effect sizes based on studies with a control group (g = 0.43, RVE SE = 0.07, CI = [0.30, 0.56]) and effect sizes retrieved from studies without a control group (g = 0.56, RVE SE = 0.05, CI = [0.45, 0.67]). Therefore, we continued our analyses including effect sizes from both study designs.

Outlier Analyses

Cook’s distance and DFBETAS both identified the same seven studies as influential (Cabaroglu, 2014; de Carvalho et al., 2021; Karalar & Altan, 2018; Kissau & Algozzine, 2015; Peebles & Mendaglio, 2014; Whitley et al., 2019; Yilmaz & Koca, 2017). A detailed inspection of the studies revealed problems with the effect sizes or the general credibility in four cases (Karalar & Altan, 2018; Peebles & Mendaglio, 2014; Whitley et al., 2019; Yilmaz & Koca, 2017). We decided to delete the four problematic studies and calculated all the following results without the four influential studies.

Characteristics of Included Studies

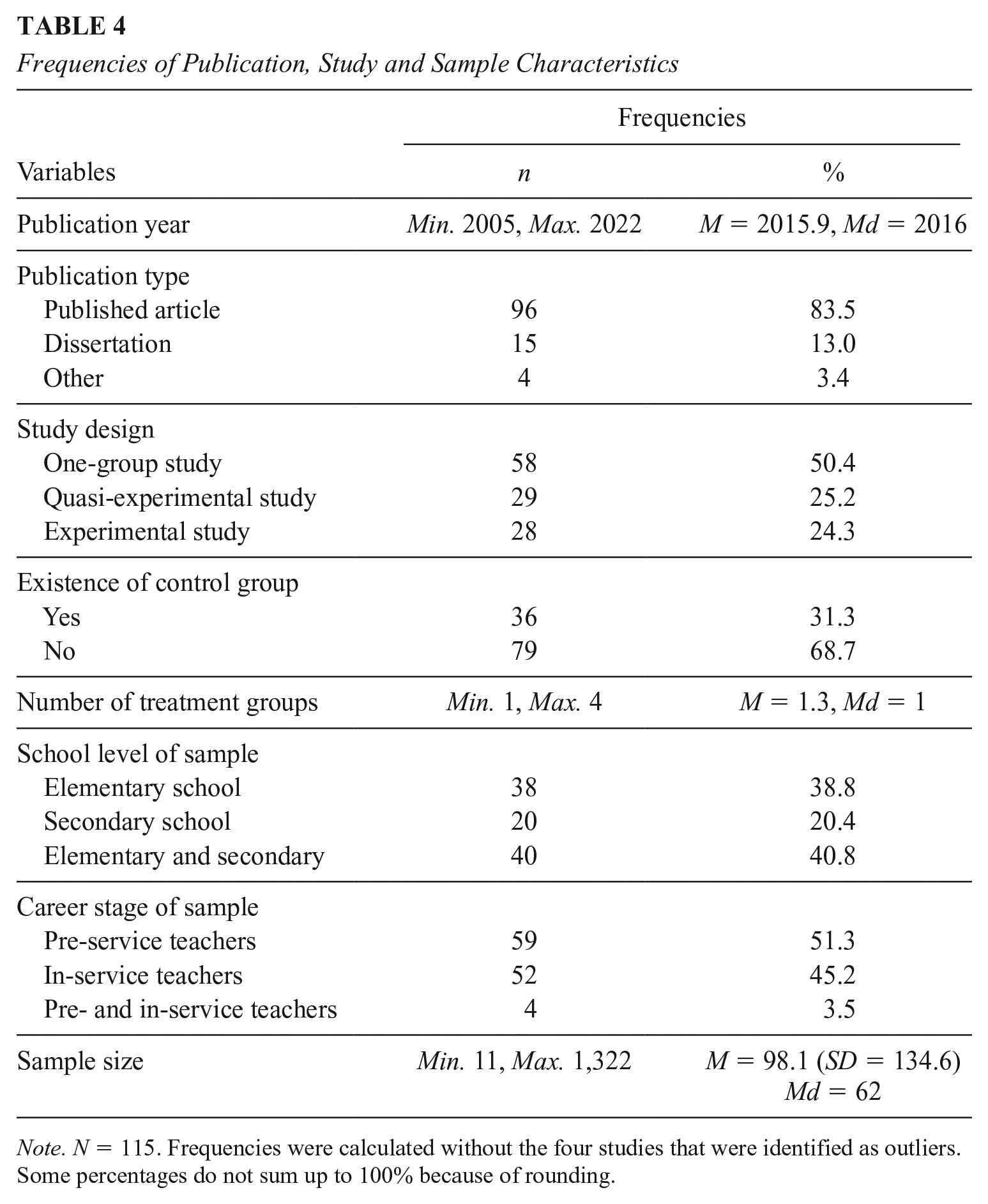

One hundred and nineteen studies published between 2005 and 2022 met our inclusion criteria and reported changes in teacher self-efficacy as a consequence of interventions. Four studies had to be excluded as they were identified as outliers. Table 4 presents an overview of the characteristics of the 115 included studies (i.e., publication year, publication type, study design, existence of control group, number of treatment groups, school level, and career stage). The total sample consisted of 11,284 pre-service and in-service teachers and studies stem from 26 different countries (USA: n = 50, Turkey: n = 13, Germany: n = 11). The majority of studies used the TSES (n = 99). The 115 included studies (n) reported 146 treatment groups (j), and we extracted a total of 318 effect size estimates (k).

Table 4. Frequencies of Publication, Study and Sample Characteristics

Note. N = 115. Frequencies were calculated without the four studies that were identified as outliers. Some percentages do not sum up to 100% because of rounding.

Most of the 318 effect sizes originate from the domain classroom management self-efficacy (k = 94), followed by the domain instructional strategies self-efficacy (k = 82). Seventy-five effect sizes belong to overall teacher self-efficacy, and 67 stem from the domain student engagement self-efficacy. The interventions lasted between 0.5 and 210 hours, and the periods between pre- and post-measurement ranged from less than 1 week to 40 weeks, representing a full academic year. The mean intervention duration was 27.94 hours (median 18 hours), and the mean period between measurements was 16.8 weeks (median 15 weeks).

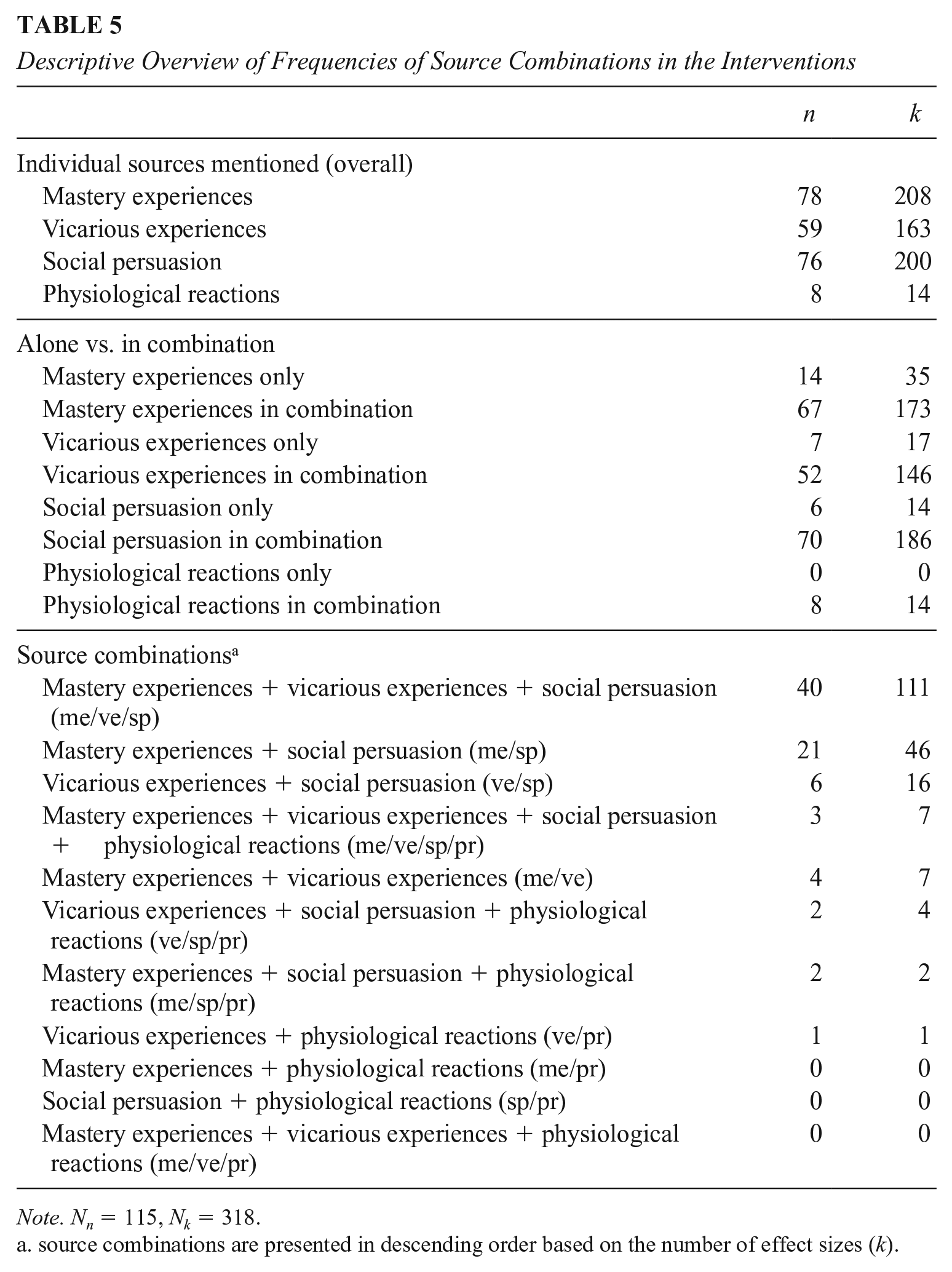

For 93 studies reporting on 123 treatment groups, we could code sources of self-efficacy (k = 260). In nine studies, we could not code sources of self-efficacy because the intervention targeted knowledge only (j = 9, k = 24). Almost half of all treatment groups (j = 66, k = 142) mentioned a moment of reflection. Table 5 displays the frequencies of the individual sources and source combinations in the interventions.

Table 5. Descriptive Overview of Frequencies of Source Combinations in the Interventions

Note. Nn = 115, Nk = 318.

Source combinations are presented in descending order based on the number of effect sizes (k).

Mastery experiences (k = 208) and social persuasion (k = 200) are almost equally targeted in the interventions. Physiological reactions are rarely part of the interventions (k = 14) and are never targeted alone. In general, all four sources appear more often in combination with other sources than alone. The most often targeted sources were mastery experiences, vicarious experiences, and social persuasion in combination (k = 111), followed by mastery experiences and social persuasion (k = 46) and only mastery experiences (k = 35).

Overall Effect of Interventions on Teacher Self-Efficacy (Research Question 1)

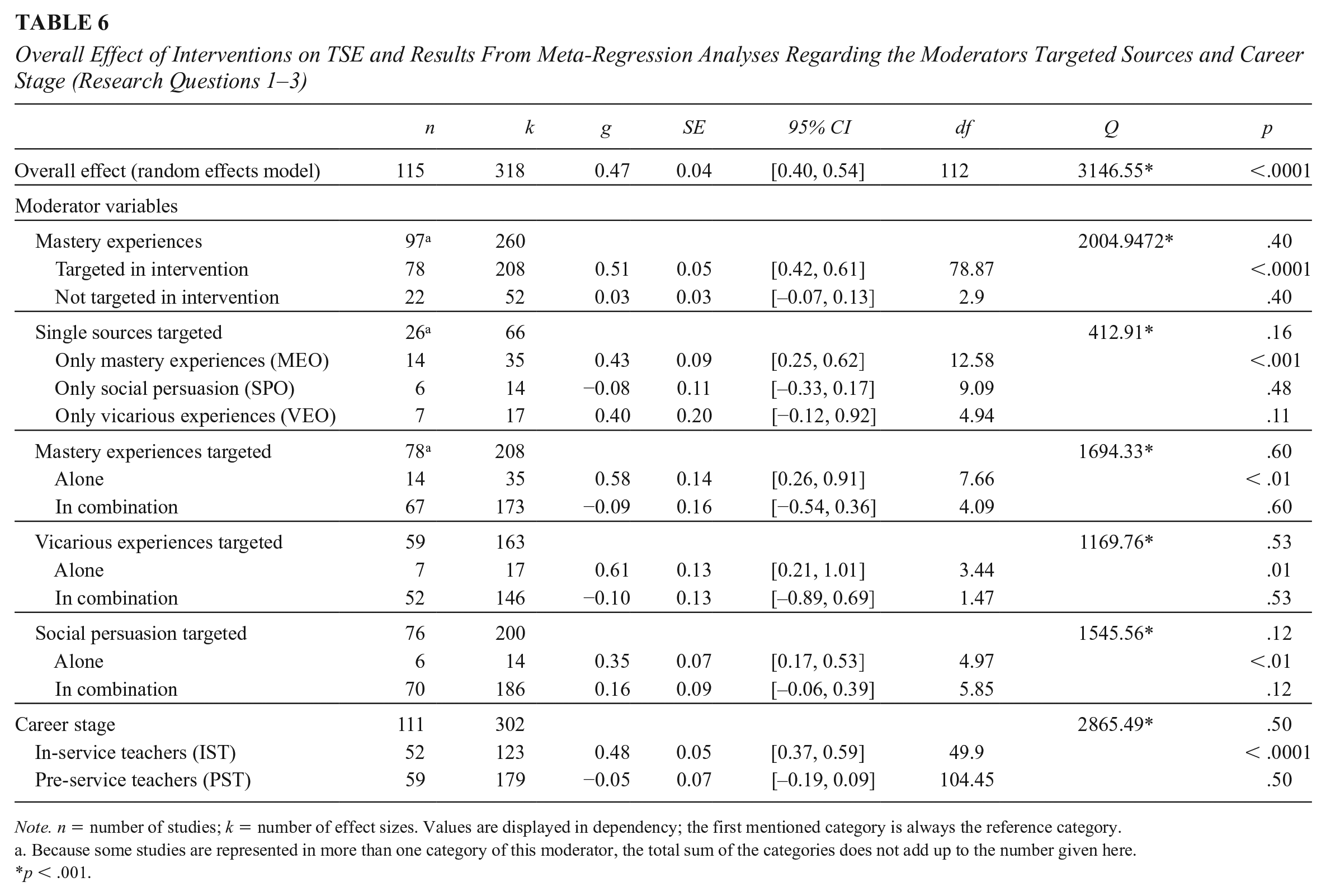

Concerning our first research question about whether interventions can promote teacher self-efficacy at all, we found a positive significant effect of interventions on teachers’ self-efficacy (g = 0.47, RVE SE = 0.04, CI = [0.40, 0.54], p < .0001). The significant Q-statistic (Q [df = 317] = 3146.55, p < .0001) indicates that there is a substantial amount of heterogeneity between the studies. We found a similar overall effect in the exploratory analysis, which also included follow-up measures (g = 0.45, RVE SE = 0.04, CI = [0.38, 0.52], p < .0001). Further results from our exploratory analyses are reported in Table S6 (in the online version of the journal).

The Role of the Sources for Interventions’ Success (Research Question 2)

First, we focused on the role of mastery experiences and evaluated whether, in general, they moderate the interventions’ effects. Interventions that included mastery experiences (g = 0.51, RVE SE = 0.05, CI = [0.42, 0.61]) did not, descriptively or significantly (p = .40), differ from interventions without mastery experiences (g = 0.54, RVE SE = 0.05, CI = [0.43, 0.66]). Next, we analyzed interventions targeting only one source and compared their effect sizes. Interventions targeting only vicarious experiences (k = 17) or only social persuasion (k = 14) did not differ significantly (p = .16) from interventions targeting only mastery experiences (k = 35). Descriptively, interventions targeting only vicarious experiences showed the largest effects (g = 0.83, RVE SE = 0.20, CI = [0.34, 1.33]), followed by interventions targeting only mastery experiences (g = 0.43, RVE SE = 0.09, CI = [0.25, 0.62]) and interventions targeting only social persuasion (g = 0.35, RVE SE = 0.07, CI = [0.17, 0.53]).

In addition, we analyzed whether the interventions’ effects differ between interventions targeting only one specific source and interventions targeting this source in combination with other sources. We found no significant difference for all three models contrasting mastery experiences, vicarious experiences, and social persuasion as single sources and in combination (p = .60, p = .53, p = .12). A descriptively slightly larger effect size can be found for interventions where mastery experiences are not accompanied by other sources (g = 0.58, RVE SE = 0.14, CI = [0.26, 0.91]), in contrast with interventions targeting mastery experiences in combination with other sources (g = 0.49, RVE SE = 0.06, CI = [0.37, 0.62]). The same picture appears when comparing interventions targeting only vicarious experiences (g = 0.61, RVE SE = 0.13, CI = [0.21, 1.01]) and interventions targeting vicarious experiences in combination with other sources (g = 0.51, RVE SE = 0.05, CI = [0.40, 0.62]). In contrast, interventions targeting only social persuasion (g = 0.35, RVE SE = 0.07, CI = [0.17, 0.53]) are less effective than interventions targeting social persuasion in combination (g = 0.51, RVE SE = 0.06, CI = [0.40, 0.63]). All the results from these moderation analyses are reported in Table 6. We report the average effect sizes for each source and source combination in Table S7 (in the online version of the journal).

Table 6. Overall Effect of Interventions on TSE and Results From Meta-Regression Analyses Regarding the Moderators Targeted Sources and Career Stage (Research Questions 1–3)

Note. n = number of studies; k = number of effect sizes. Values are displayed in dependency; the first mentioned category is always the reference category.

Because some studies are represented in more than one category of this moderator, the total sum of the categories does not add up to the number given here.

p < .001.

Promoting Teacher Self-Efficacy in Different Career Stages (Research Question 3)

Four studies had mixed samples with pre-service and in-service teachers without stating separate effect sizes for each group. As we cannot draw any conclusions about the role of the career stage from these studies, these four studies were excluded from the moderator analysis on different career stages. As reported in Table 6, our results showed no significant differences between pre-service and in-service teachers in their gains in teacher self-efficacy after the interventions (p = .50). Descriptively, the interventions’ effects were slightly larger for samples with in-service teachers (g = 0.48, RVE SE = 0.05, CI = [0.37, 0.59]) than for pre-service teachers (g = 0.43, RVE SE = 0.05, CI = [0.34, 0.53]).

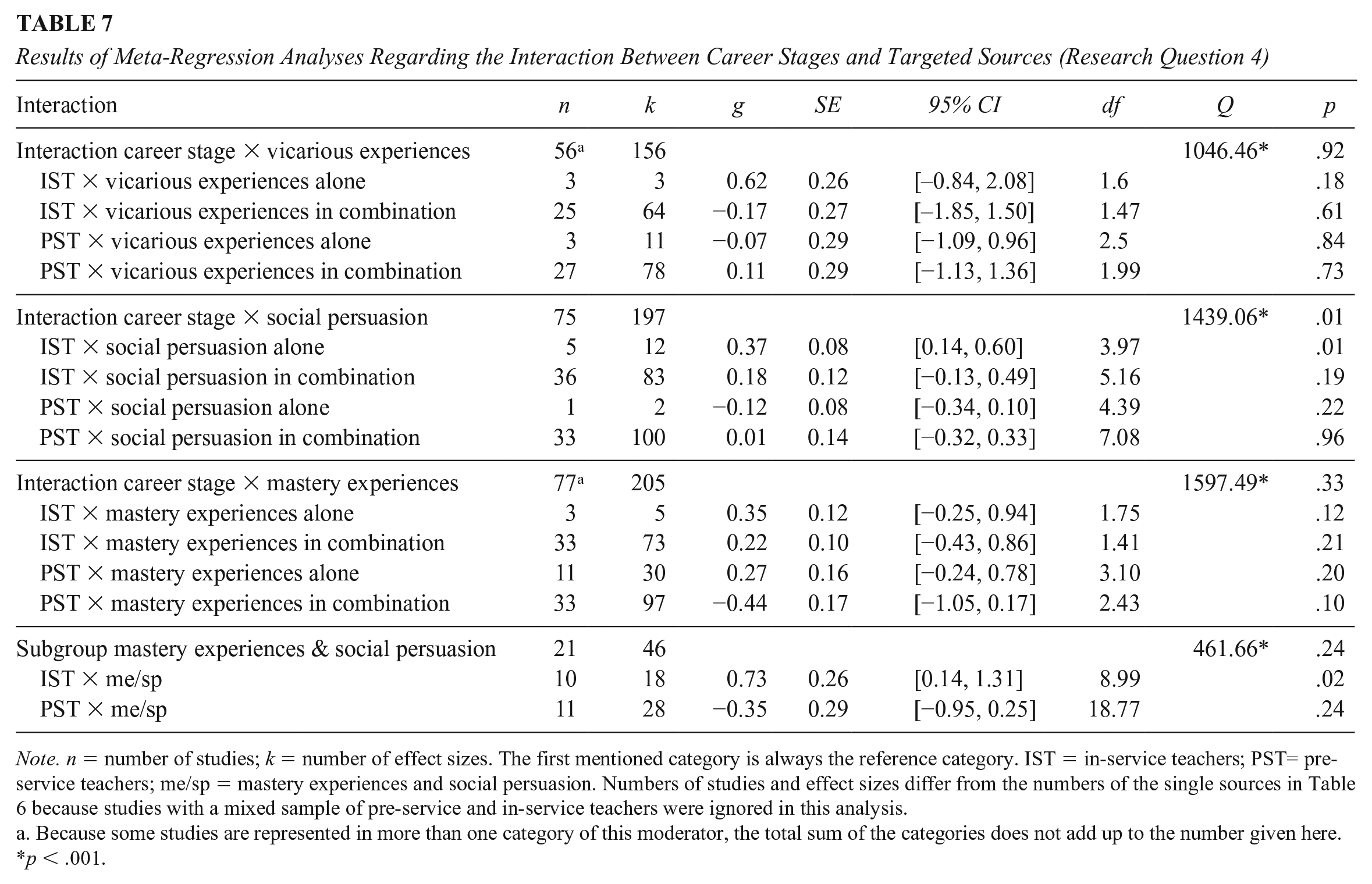

The Interaction of Career Stages and Sources (Research Question 4)

In order to examine whether different sources of self-efficacy may be differently important for teachers depending on their career stage, we checked four interaction models (see Table 7). The first model evaluated whether there is an interaction between career stages and interventions targeting vicarious experiences alone or in combination. There was no significant interaction (p = .92). However, in all categories, the degrees of freedom were below four, which calls for caution in interpretation (Tanner-Smith et al., 2016). Descriptively, in-service teachers profit more from interventions targeting vicarious experiences alone (g = 0.62, RVE SE = 0.26, CI = [−0.84, 2.08]) than from interventions targeting vicarious experiences in combination (g = 0.44, RVE SE = 0.07, CI = [0.31, 0.58]). The same is true for pre-service teachers. However, for them, the difference in teacher self-efficacy gains between interventions targeting vicarious experiences alone (g = 0.55, RVE SE = 0.11, CI = [0.16, 0.94]) and interventions targeting vicarious experiences in combination (g = 0.49, RVE SE = 0.07, CI = [0.35, 0.63]) is much smaller.

In the second model, there was no significant interaction between career stages and interventions targeting social persuasion alone or in combination (p = .96), although the moderator as a whole was significant (p < .05). As the distribution of the effect sizes across the different groups was extremely unbalanced, we do not interpret this significance. Compared with the first model, however, the descriptive results look different. Pre-service and in-service teachers report more gains in teacher self-efficacy in interventions targeting social persuasion in combination (for pre-service teachers: g = 0.44, RVE SE = 0.06, CI = [0.31, 0.57]; for in-service teachers: g = 0.55, RVE SE = 0.09, CI = [0.37, 0.73]). The changes in interventions targeting social persuasion alone are g = 0.25 (RVE SE = 0.00, CI = [0.24, 0.27]) for pre-service teachers and g = 0.37 (RVE SE = 0.08, CI = [0.14, 0.60]) for in-service teachers.

Table 7. Results of Meta-Regression Analyses Regarding the Interaction Between Career Stages and Targeted Sources (Research Question 4)

Note. n = number of studies; k = number of effect sizes. The first mentioned category is always the reference category. IST = in-service teachers; PST= pre-service teachers; me/sp = mastery experiences and social persuasion. Numbers of studies and effect sizes differ from the numbers of the single sources in Table 6 because studies with a mixed sample of pre-service and in-service teachers were ignored in this analysis.

Because some studies are represented in more than one category of this moderator, the total sum of the categories does not add up to the number given here.

p < .001.

In a third step, we evaluated whether pre-service teachers benefit more from the combination of mastery experiences and social persuasion than in-service teachers. We found no significant moderating effect (p = .24). In-service teachers (g = 0.73, RVE SE = 0.26, CI = [0.14, 1.31]) benefited descriptively more from this combination than pre-service teachers (g = 0.38, RVE SE = 0.12, CI = [0.11, 0.65]).

We also did not find any interaction in our fourth model evaluating the role of interventions targeting mastery experiences alone or in combination for the two different career stages (p = .33). Again, the degrees of freedom were below four in all categories, pointing to caution with interpretability (Tanner-Smith et al., 2016). At least descriptively, in-service teachers profit more from combined mastery experiences (g = 0.57, RVE SE = 0.09, CI = [0.38, 0.76]) than from mastery experiences alone (g = 0.35, RVE SE = 0.12, CI = [−0.25, 0.94]). Pre-service teachers, in contrast, benefit more from mastery experiences alone (g = 0.62, RVE SE = 0.11, CI = [0.35, 0.88]) than from combined mastery experiences (g = 0.40, RVE SE = 0.07, CI = [0.25, 0.54]).

Moderating Effect of Study Quality (Research Question 5)

Fewer than half of the 115 studies reached a study quality rating of at least two points out of four possible points (n = 55). Nine studies fulfilled all criteria and received the highest rating of study quality (four points). In comparison, 14 studies fulfilled none and received the lowest rating of study quality (zero points). Table S4 (online only) displays the study-level aggregated study quality rating. Figure S1 (online only) displays the distribution of the study quality ratings of the 318 effect sizes, and Table S8 (online only) displays the results from the meta-regressions.

The sum score of study quality did not significantly moderate the interventions’ effects (p = .18). Descriptively, there was a very small positive relationship between study quality and effect size (b = 0.05). The studies with the highest rating of study quality (four points) reached, on average, an effect of g = 0.59 (RVE SE = 0.10, CI = [0.39, 0.79]), while the studies with the lowest rating of study quality (zero points) reached, on average, an effect of g = 0.37 (RVE SE = 0.07, CI = [0.22, 0.52]). As Wedderhoff and Bosnjak (2020) suggested, we also analyzed the single items of our sum score of study quality. Neither the randomized assignment (p = .35), the existence of a control group (p = .40), nor the reliability of the used measure (p = .66) significantly influenced the effects (see Table 8). Descriptively, studies with randomized assignment (g = 0.54, RVE SE = 0.09, CI = [0.36, 0.72]), without a control group (g = 0.49, RVE SE = 0.04, CI = [0.40, 0.57]), and with either no reported reliability (g = 0.48, RVE SE = 0.07, CI = [0.34, 0.61]) or a reliability coefficient higher than 0.8 (g = 0.49, RVE SE = 0.05, CI = [0.39, 0.58]) found larger gains than studies without randomized assignment (g = 0.45, RVE SE = 0.04, CI = [0.37, 0.53]), with a control group (g = 0.42, RVE SE = 0.06, CI = [0.29, 0.55]), and with reliability coefficients lower than 0.8 (g = 0.40, RVE SE = 0.08, CI = [0.24, 0.57]).

However, the detailed description of interventions was a significant moderator (p < 0.01). Interventions that were described with some details reached significantly larger gains in teacher self-efficacy (g = 0.55, RVE SE = 0.05, CI = [0.45, 0.64]) than interventions without details in the description (g = 0.35, RVE SE = 0.05, CI = [0.25, 0.44]).

Robustness Checks

To adhere to page constraints, we present the results from the robustness checks in Supplement S9 (in the online version of the journal).

Publication Bias

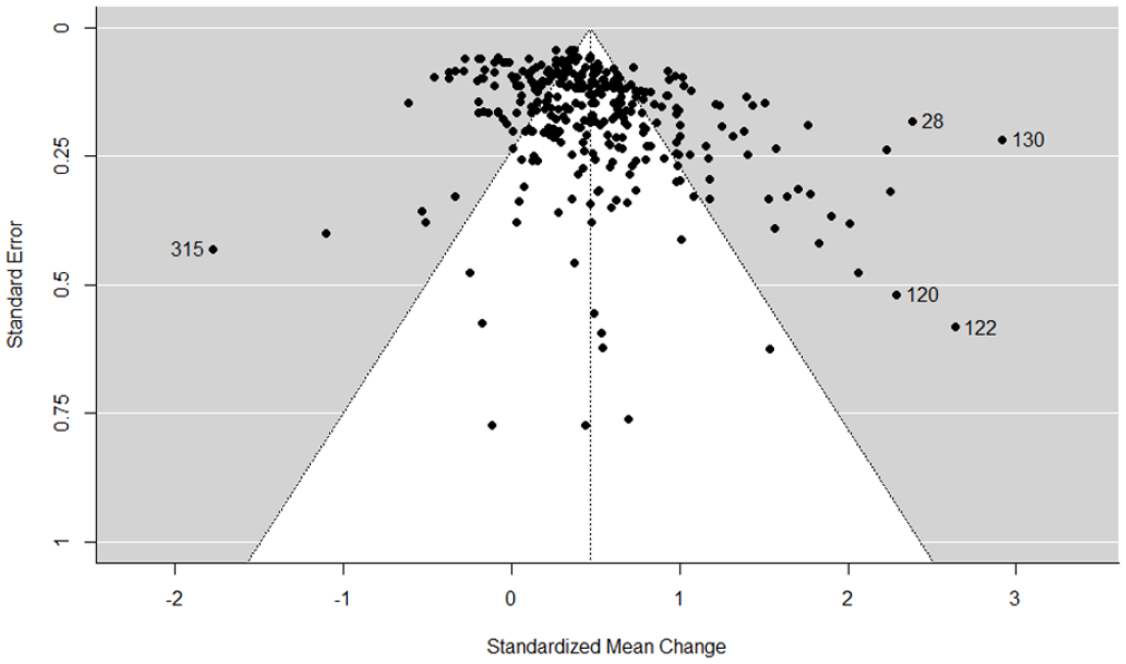

The funnel plot (see Figure 2) looks asymmetrical. Studies with negative effect sizes and larger standard errors seem to be missing. Four of the five most extreme points (indicated by the numbered effect sizes) are located on the funnel plot’s right side. The funnel plot represents the quite large sample sizes (M = 98.1) in the studies and the high heterogeneity among them.

Figure 2. Funnel plot of the effect sizes for interventions promoting teacher self-efficacy.

Although not taking into account the dependency among our correlated effect sizes, we applied precision-effect tests (Stanley & Doucouliagos, 2014) to retrieve statistical information about the funnel plot’s symmetry. The Egger regression test (1997) was significant (p < .0001), indicating asymmetry in the funnel plot. As the Egger regression test was significant, we conducted the PEESE. The PEESE was significant as well (p < .0001). Its limit estimate can be cautiously interpreted as the publication bias-adjusted average true effect (b = 0.29, CI = [0.24, 0.33]).
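The two-step logic of the precision-effect tests can be illustrated with a minimal sketch. It assumes the common formulation in which effect sizes are regressed on their standard errors (PET) or squared standard errors (PEESE) using inverse-variance weights, with the intercept read as the limit estimate. The function names and the simulated data are hypothetical and serve only as an illustration; they are not the data or the software used in this meta-analysis, and, like the tests reported above, the sketch ignores the dependency among effect sizes.

```python
import numpy as np

def wls_fit(y, x, w):
    """Weighted least squares of y on [1, x]; returns (intercept, slope)."""
    X = np.column_stack([np.ones_like(x), x])
    sw = np.sqrt(w)  # scaling rows by sqrt(w) turns WLS into ordinary least squares
    coef, *_ = np.linalg.lstsq(X * sw[:, None], y * sw, rcond=None)
    return coef[0], coef[1]

def pet(g, se):
    """PET: regress effect sizes on their standard errors (weights 1/SE^2)."""
    return wls_fit(g, se, 1.0 / se**2)

def peese(g, se):
    """PEESE: regress effect sizes on their squared standard errors (weights 1/SE^2)."""
    return wls_fit(g, se**2, 1.0 / se**2)

# Simulated illustration only (NOT the study data): a true effect of 0.30
# plus small-study inflation that grows with the sampling variance.
rng = np.random.default_rng(0)
se = rng.uniform(0.05, 0.40, size=200)
g = 0.30 + 1.5 * se**2 + rng.normal(0.0, se)

pet_intercept, pet_slope = pet(g, se)
peese_intercept, peese_slope = peese(g, se)
print(f"PET:   intercept = {pet_intercept:.2f}, slope = {pet_slope:.2f}")
print(f"PEESE: intercept = {peese_intercept:.2f}, slope = {peese_slope:.2f}")
```

In this formulation, a positive slope corresponds to the asymmetry flagged by the Egger-type test, and the PEESE intercept plays the role of the bias-adjusted estimate. The sketch omits the significance tests and standard errors that a full analysis would report.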

The moderator publication type was not significant (p = .66). We found the biggest effect sizes for the publication type “other” (g = 0.55, RVE SE = 0.25, CI = [−0.24, 1.33]). Published articles reported slightly larger effect sizes (g = 0.48, RVE SE = 0.04, CI = [0.40, 0.56]) than dissertations (g = 0.39, RVE SE = 0.09, CI = [0.21, 0.57]).

Discussion

This preregistered meta-analysis (https://osf.io/ev7tf) investigated whether interventions in pre-service teacher education and in-service teacher training can promote teacher self-efficacy. One hundred and fifteen studies identified by systematic search approaches were included to answer this question. We further analyzed whether the sample’s career stage, the interventions’ targeted sources, the interaction between these two, and specific study characteristics (e.g., study quality) moderated the effects. One main finding was that teacher self-efficacy can be promoted in interventions, equally for pre-service and in-service teachers. While the different source combinations did not produce statistically significant differences in the intervention effects, the effects were significantly larger when the intervention was described with details (one aspect of study quality), included moments of reflection, and had a certain duration (between 7 and 30 hours).

Research Question 1: Interventions as Ways to Support Teacher Self-Efficacy Promotion

This meta-analysis shows that teachers report, on average, significantly higher teacher self-efficacy beliefs after interventions than before. To evaluate the importance of this effect size, we first compare it with the results from similar meta-analyses; we then judge it concerning the costs of the interventions; and finally, we highlight its robustness across various methodologically relevant variables.

Compared to the only other meta-analysis on the promotion of teacher self-efficacy to date, our average effect size of g = 0.47 is somewhat smaller than the average effect of g = 0.68 in Mok et al. (2023). However, this difference is quite reasonable as we included a broader range of interventions and career stages in our meta-analysis, unlike Mok et al. (2023) who focused on individual support activities by mentors, coaches, or supervisors and examined only pre-service and beginning teachers’ self-efficacy. As there are no other meta-analyses on the promotion of teacher self-efficacy, we further refer to general teacher professional development and three meta-analyses on interventions for constructs related to and comparable with teacher self-efficacy, namely teacher burnout, teacher stress, and teacher well-being. Iancu et al. (2018) conducted a meta-analysis of 23 intervention studies aimed at reducing teacher burnout. Zarate et al. (2019) analyzed the effects of 18 mindfulness-based interventions on teacher stress and burnout, and Oliveira et al. (2021) evaluated the effects of 43 studies using social and emotional learning on teachers’ well-being and stress. All three meta-analyses included only studies with control groups and in-service teachers. They found significant effects on enhancing well-being (g = 0.35 in Oliveira et al., 2021), reducing teacher burnout (d = 0.18 in Iancu et al., 2018; standardized mean difference (SMD) = 0.33 in Zarate et al., 2019), and reducing teacher stress (SMD = 0.53 in Zarate et al., 2019; g = 0.34 in Oliveira et al., 2021). Our average effect size of g = 0.47 and—for more comparability—the average effect size for studies with a control group of g = 0.42 exceed, with one exception, the effects of the mentioned meta-analyses.

Several authors advise that intervention effect sizes should be considered in relation to their costs (Harris, 2009; Kraft, 2020; Levin & Belfield, 2015). Many interventions in pre-service teacher education, such as internships or specific video-based seminars, were part of the university program and did not incur any extra costs (e.g., Gold et al., 2017; Michos et al., 2022; Weber et al., 2019). Especially if pre-service teachers analyzed videos they took with their own devices, these interventions were almost free of costs. Interventions with in-service teachers incur costs through the hours teachers spend in other classrooms for lesson study, for example, or in paying external coaches. The costs for a lesson spent by teachers in another classroom in the United States can be roughly calculated as follows: Based on the assumptions of public school teachers’ average salary of $65,090 per year (USA Facts, n.d.) and 36 weeks teaching 25 lessons per week, a single lesson costs $72.32. The literature on coaching costs reports ranges from $170 per contact hour to $400 per teacher (Barrett & Pas, 2020; Knight, 2012). These exemplary costs of interventions promoting and enhancing in-service teachers’ self-efficacy are well worth the money when compared with the financial costs caused by teacher drop-out and teacher burnout or the societal costs caused by teaching positions that are not staffed. Studies calculate the costs caused by burnout among physicians to be at least $7,600 per year (Han et al., 2019; Shanafelt et al., 2017). This figure is presumably comparable for teachers, for whom, unfortunately, no specific figures are available yet. The cost of replacing a teaching position is sometimes quoted as up to $20,000 per teacher (Learning Policy Institute, 2017). In this comparison, interventions to increase self-efficacy, and thus presumably to increase teachers’ well-being and job satisfaction, are inexpensive and worth the money.
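The per-lesson cost quoted above follows from simple division. As a minimal sketch using only the assumptions stated in the text (annual salary, teaching weeks, and lessons per week), with a hypothetical function name chosen for illustration:

```python
def cost_per_lesson(annual_salary_usd: float, teaching_weeks: int, lessons_per_week: int) -> float:
    """Rough cost of one lesson: annual salary divided by lessons taught per year."""
    lessons_per_year = teaching_weeks * lessons_per_week
    return annual_salary_usd / lessons_per_year

# Assumptions taken from the text: $65,090 per year, 36 weeks, 25 lessons per week.
lesson_cost = cost_per_lesson(65_090, 36, 25)  # 65,090 / 900 lessons
print(f"${lesson_cost:.2f} per lesson")  # prints "$72.32 per lesson"
```

This is a rough estimate: it spreads the whole salary over taught lessons only and leaves out benefits, preparation time, and any cost of substitute coverage.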

To examine the robustness of our overall effect, we tested a variety of methodologically relevant characteristics (study design, study quality, used scale, and domain of teacher self-efficacy) as moderators in meta-regressions. The effect size was robust across all mentioned methodological moderators, indicating that teacher self-efficacy can be promoted through interventions regardless of specific methodological choices within the studies. Although we included studies with designs of differing rigor, namely, with and without control group comparison, the overall effect is comparable in size to the effect of methodologically rigorous experimental intervention studies (e.g., Thiel et al., 2020). This supports our claim that the overall effect size is credible and that interventions can support teacher self-efficacy development.

Research Question 2: The Role of Specific Sources for Promoting Teacher Self-Efficacy

Before conducting our analyses, each intervention was carefully reviewed with a detailed coding scheme to extract the targeted sources of self-efficacy.

First, it became obvious that some sources have never been targeted in the analyzed interventions. To be more specific, it is noticeable that physiological sources are present in all source combinations that never occurred within the interventions (e.g., only physiological reactions, combination of mastery experiences and physiological reactions). This finding aligns with a general underrepresentation of physiological factors in the research on motivation in education (Martin et al., 2023; Pekrun, 2023). It remains a point for future research to investigate the role of physiological reactions (and their attributions) in promoting teacher self-efficacy.

Within the pool of interventions targeting only one specific source, interventions targeting only vicarious experiences descriptively outperformed those targeting only mastery experiences. Although we must interpret this large effect with caution, as only seven studies contributed to this moderator and the broad corresponding confidence interval indicates high heterogeneity among these studies, we still think this large effect points to the important role of vicarious experiences for the promotion of teacher self-efficacy (e.g., Kumschick et al., 2017). This finding also fits well with other research findings that highlight the general relevance of vicarious experiences in teacher education per se (e.g., representations of teaching practice for core practices of teaching in Ball & Forzani, 2009; Grossman, Compton, et al., 2009; Grossman, Hammerness, & McDonald, 2009; cognitive modeling for pre-service teachers’ planning and instruction skills in Mok & Staub, 2021; or video observations for teachers’ immersion in Seidel et al., 2011).

Contrary to our theoretically based hypothesis (e.g., Bandura, 1997), interventions including mastery experiences did not produce larger effects than interventions targeting any other source combination without mastery experiences. Due to our reliance on the studies’ intervention descriptions, we could only code whether mastery experiences were offered in interventions and not whether and to what extent these experiences were experienced as successful by the teachers. Therefore, it is plausible that some participants experienced failures, which may have reduced the effect sizes of interventions involving mastery experiences. Future research should explore how failure (unsuccessful mastery experiences) contributes to the promotion of teacher self-efficacy. Nevertheless, the effect size of g = .43 for only mastery experiences can still be interpreted as a substantial effect size for practicing various teaching-related tasks.

Third, in comparison with mastery and vicarious experiences, social persuasion plays a minor role in promoting teacher self-efficacy, as proposed in theory (Bandura, 1997; Schunk, 1991). Within the pool of interventions targeting only one specific source, interventions targeting only social persuasion reached the descriptively lowest effect. This result is similar to findings from longitudinal studies reporting no effect of social support, especially purely emotional support, on the development of teacher self-efficacy (e.g., Hartl & Holzberger, 2022). However, the source social persuasion covers a wide range of activities, including, for example, group discussions, mentoring and coaching, or feedback. Whereas the (low) effect size for social persuasion is similar in size to that found in another meta-analysis investigating the effects of coaching, mentoring, and supervision on pre-service teachers’ instruction skills (Mok & Staub, 2021), research further points to several conditions that may be decisive for the effectiveness of feedback, for example, feedback givers’ expertise (Prilop et al., 2021; Weber et al., 2018), the content delivered (Wisniewski et al., 2020), or the addressed level (e.g., task, process, etc.) (Hattie & Timperley, 2007). It could be that some “negative effects of feedback” have lowered the effect size of social persuasion.

The fact that we could not find significant differences between the sources also complements reflections on teacher education in general and studies on teacher self-efficacy showing that different elements of training (here: the different sources of self-efficacy) are intertwined and do not impact teacher self-efficacy independently but are instead all necessary for an overall effect (Bach, 2022; Ball & Forzani, 2009; Bardach et al., 2021; Grossman, Compton, et al., 2009; Grossman, Hammerness, & McDonald, 2009; Pfitzner-Eden, 2016b). As we had to rely on the intervention descriptions in the primary studies, we could only code whether a source was mentioned. However, we could not code the order, the amount, or the intensity of the mentioned sources of self-efficacy in the interventions nor the perceived valence (for example, whether the mastery experiences were perceived as successful mastery experiences). To better understand the underlying mechanisms, future research should apply more experimental studies that systematically investigate different combinations, orders, amounts, and perceived valences of the sources (e.g., Tschannen-Moran & McMaster, 2009).

Research Question 3: Promotion of Teacher Self-Efficacy Works in All Career Stages

Against our hypothesis, we found no significant difference in the promotion of pre-service and in-service teacher self-efficacy. The almost identical effect sizes for in-service and pre-service teachers raise the question of whether teacher self-efficacy is a less inherently stable construct than previously thought (Bauer et al., 2020; Rupp & Becker, 2021).