Abstract

Simulation-based learning offers a wide range of opportunities to practice complex skills in higher education and to implement different types of scaffolding to facilitate effective learning. This meta-analysis includes 145 empirical studies and investigates the effectiveness of different scaffolding types and technology in simulation-based learning environments to facilitate complex skills. The simulations had a large positive overall effect: g = 0.85, SE = 0.08; CIs [0.69, 1.02]. Technology use and scaffolding had positive effects on learning. Learners with high prior knowledge benefited more from reflection phases; learners with low prior knowledge learned better when supported by examples. Findings were robust across different higher education domains (e.g., medical and teacher education, management). We conclude that (1) simulations are among the most effective means to facilitate learning of complex skills across domains and (2) different scaffolding types can facilitate simulation-based learning during different phases of the development of knowledge and skills.

Knowledge application in more or less realistic situations has been shown to be important for the development of complex skills (e.g., Kolodner, 1992). Expertise development theories (e.g., Van Lehn, 1996) suggest that learners acquire high levels of expertise in complex problem-solving tasks if they possess sufficient prior knowledge and engage in a large amount of practice. Practice opportunities ideally include authentic problems related to a professional field (e.g., Barab et al., 2000). However, in higher and further education programs, the opportunity to engage in real-life problem solving is limited. In addition, practice in real-life situations without systematic guidance can be overtaxing for students and come with risks and ethical issues, for example, when working with real students or patients without being systematically prepared. Moreover, real-life situations do not always provide enough practice opportunities as, for example, critical situations appear less frequently or require a lot of time before decisions lead to observable consequences. These limitations make practice in real-life situations a somewhat inaccessible and sometimes suboptimal learning space, particularly for novice learners. Therefore, approximations of practice in which the complexity is reduced (Grossman et al., 2009) can help engage learners in specific aspects of professional practice and are promising in order to avoid confusion and efficiently use resources for learning and instruction. These approximations of practice can be realized in higher education with simulations, which allow students to use authentic problems and also to create a learning environment to practice and facilitate the acquisition of target complex skills (e.g., Cook, 2014).

Simulations are increasingly often used in higher education settings. In STEM (science, technology, engineering, and mathematics) education (e.g., D’Angelo et al., 2014; Wu & Anderson, 2015), they are used to facilitate a deeper understanding of concepts and relationships between them, advance inquiry, problem solving, and decision making. A lot of research has been done in the field of medical education (Cook, 2014; Cook et al., 2013; Hegland et al., 2017), where simulations are used to advance diagnostic competences and motor and technical skills of prospective doctors, nurses, and emergency teams. Simulation-based learning also occurs in other fields, such as teacher education, engineering, and management (e.g., Alfred & Chung, 2011; Brubacher et al., 2015). The present meta-analysis focuses on higher education and, more specifically, on fields that strongly rely on interaction with other people at different levels (physical, cognitive, social, etc.)—for example, medicine, nursing, psychological counseling, management, teacher education, particular areas of engineering, and economics. Regarding this area of interest, little is known about for whom simulations are particularly helpful, what scenarios are effective, and what additional instructional support makes them effective for learners with different learning prerequisites. Synthesized results on the role of different features of simulations (e.g., duration, technology use) and instructional support (e.g., scaffolding) are lacking, especially with regard to effective support for learners with different levels of prior knowledge. This meta-analysis summarizes the effects of scaffolding and technology use in simulation-based learning environments on facilitating a range of complex skills across domains (e.g., medical and teacher education, psychological counseling, care). 
In a previous meta-analysis, it has been found that the effects of instruction across domains of medical and teacher education have similar magnitude for a certain set of skills related to diagnosing; the effects increase in magnitude with the proper use of scaffolding (Chernikova et al., 2019). Other meta-analyses in the field of medical education (e.g., Cook, 2014) support the idea that simulations can be highly effective for advancing specific motor and technical skills. However, knowledge is still scarce with regard to the effective support to advance a variety of complex skills for learners with different levels of prior knowledge. The present meta-analysis aims at advancing this research by summarizing the effects of simulation-based learning on complex skills—going beyond understanding the subject matter and performing technical tasks. In addition, this meta-analysis aims at differentiating the effects for learners on different levels of prior knowledge.

To assess the effects of instructional support, this meta-analysis adopts a scaffolding framework suggested by Chernikova et al. (2019). The framework relies on defining scaffolding as support during working on a task connected with a temporary shift of control over the learning process from a learner to a teacher or learning environment (e.g., Tabak & Kyza, 2018). The framework suggests that learners with different levels of prior knowledge would benefit from different types of scaffolding. More specifically, learners with high levels of prior knowledge would benefit more from scaffolding that affords and requires more self-regulation (e.g., inducing reflection phases), whereas learners with a low level of prior knowledge would rather benefit from more guidance (e.g., through examples).

Complex Skills in Higher Education

In higher education, students need to be prepared for their future profession, and their professional competences should involve a range of complex skills. The importance of 21st-century skills goes beyond secondary education and is also often addressed during higher and further education (e.g., P21, 2019). Critical thinking, problem solving, communication, and collaboration seem to be the most relevant skills that students should acquire during their education in addition to domain-specific knowledge and skills to be able to make professional decisions and implement solutions.

According to Mayer (1992), problem solving in a broader sense is cognitive processing aimed at achieving a goal when no solution path or method is obvious. It involves critical thinking, monitoring, and experimental interactions with the environment (Raven, 2000), as well as directed application of knowledge to the case or problem. Shin et al. (2003) emphasize the differences between well- and ill-structured problems. Well-structured problems present all elements of the problem, engage the application of a limited number of rules and principles, and possess correct, convergent answers. Examples of such problems are finding the predicate in a sentence or calculating drug dosage based on a patient’s weight. However, the real-world problems that professionals across domains deal with are usually ill structured: They fail to present one or more of the problem elements; might have unclear goals; possess multiple solutions, solution paths, or sometimes no solutions at all; or represent uncertainty about which concepts, rules, and principles are necessary for the solution or how they are organized (Funke, 2006; Shin et al., 2003). Problem solving in this case might involve not only diagnosing but also managing critical situations. By diagnosing, we understand collecting case-specific information to reduce uncertainty (Heitzmann et al., 2019), such as diagnosing learning difficulties, identifying the cause of a health problem, or developing a course of action. By managing critical situations, we understand using the set of skills required to behave in situations of emergency or uncertainty, such as classroom management skills or emergency help with disasters or accidents.

If the solution path is already known (e.g., a complex procedure with a set of required steps needed to collect information or make a decision) and needs to be accurately followed, we would rather address it as technical/manual performance (in medical education, an example would be completing an examination or operation; in teacher education, carrying out lesson activities according to a plan prepared in advance).

To implement the decisions and solutions to problems as well as to collect missing information, one also needs communication skills (e.g., to persuade others to help or to collect missing information from other people; Raven, 2000). If multiple professionals are involved in the situation and need to collaborate to solve the problem, make the decision, or take action (e.g., an emergency team), we see it as collaboration/teamwork skills.

To sum up, different professional domains require specific professional knowledge as a prerequisite to enable the implementation of complex skills; however, the complex skills required appear to be similar across domains. One should be able to identify the problem, analyze the context, and apply professional and experiential knowledge to make practical decisions. Fischer et al. (2014) suggested a framework of epistemic activities that is relevant to a broad range of problem-solving and decision-making procedures across domains: identifying the problem, questioning, generating hypotheses, constructing artifacts, generalizing and evaluating evidence, drawing conclusions, and communicating processes and results. In this meta-analysis, a focus on learning outcomes that involve these epistemic activities, critical thinking, and problem solving is among the most important eligibility criteria.

Simulations in Educational Contexts

Simulation-based learning offers learning with approximation of practice, allows limitations of learning in real-life situations to be overcome, and can be an effective approach to develop complex skills. Beaubien and Baker (2004) define a simulation as a tool that reproduces the real-life characteristics of an event or situation. A more specific definition suggested by Cook et al. (2013) states that a simulation is an “educational tool or device with which the learner physically interacts to mimic real life”; the authors emphasize “the necessity of interacting with authentic objects” (p. 876).

What makes simulations educational tools is the opportunity to alter and adjust some aspects of reality in a way that facilitates learning and practicing (e.g., they address less frequent events, shorten response time, provide immediate feedback to the learner, etc.). Although feedback, understood as information about the discrepancy between a current state (or behavior) and a desired goal state (Hattie & Timperley, 2007), plays an important role in designing simulations, there are many more opportunities for instructional support. The present research aims at exploring in detail the opportunities to provide additional information and scaffolding to the learner.

The operational definition of simulation includes interaction with a real or virtual object, device, or person and the opportunity to alter the flow of this interaction with the decisions and actions made by learners (Heitzmann et al., 2019). Thus, all types of interaction, from role plays and standardized patients to highly immersive interactions with virtual objects, can be considered simulations.

The operational definition of simulation also implies that critical thinking and some kind of problem solving are present during learning and that learners take an active role in the skill development processes. Simulations have many features to address the complexity of real-world situations (Davidsson & Verhagen, 2017); for example, they can involve technology aids to better resemble reality or to provide more practice or learning opportunities. However, the idea of simulation tends to focus on the reconstruction of realistic situations and the genuine interactions that participants can engage in. According to Grossman et al. (2009), simulation can be viewed as a simplified version of practice and be used to engage novices in practices that are more or less proximal to the practices of a profession. Therefore, it seems reasonable to additionally focus on the duration of simulation and authenticity in order to determine how realistic the learning environment was and how long the learners were exposed to it.

Another aspect that needs to be considered is the type of simulation, which can be categorized according to what or who learners interact with (real or virtual object or person). The type of simulation is related to the concept of information base (e.g., where the information for decisions comes from) as a context variable (Chernikova et al., 2019; Heitzmann et al., 2019) but provides a further categorization into real or virtual object (e.g., document, tool, model) and real or virtual person (e.g., standardized patients).

Technology use refers to the application of digital media (hardware and software) to establish a learning environment. In class, role plays, simulated discussions, and communication with standardized patients can be seen as simulations without technology use, as no software or hardware is necessary to initiate the interaction (e.g., Davidsson & Verhagen, 2017). Screen-based simulations require computer-supported interfaces and some software, which allows the interaction (e.g., Biese et al., 2009). Another type is interaction supported by combining some hardware with software, such as in a programmed mannequin (Liaw et al., 2014). One more type that requires complex technology is virtual reality, which is likely to facilitate immersion (e.g., Ahlberg et al., 2007). Empirical research on the effects of technology use on learning (comparing the use of computers in the classroom with no technology) provides some supportive evidence of small to moderate positive effects of technology use on learning and achievement (Hattie, 2003; Tamim et al., 2011). Some evidence of no particular effects of technology use comes from medical education comparing high- and low-fidelity simulations for learning (e.g., Ahad et al., 2013). Systematic empirical evidence of the effects of virtual reality is lacking due to the fact that virtual reality is rather new technology and is not yet broadly implemented in classrooms. The aim of this meta-analysis is to summarize the effects of different technologies on acquiring complex skills.

Duration of simulation refers to the time of exposure to a learning environment. In their meta-analysis, Cook et al. (2013) provided supportive evidence of the effectiveness of distributed and repetitive practice (effects above .60) in the acquisition of complex skills in medical education. However, they only focused on comparing simulations with a duration of more than 1 day with simulations of less than 1 day. Therefore, as a next step a meta-analysis might be aimed at capturing the effects of the duration of simulations on a more detailed scale (including simulations lasting for several minutes, hours, days, weeks, or semesters).

Simulation-based learning allows reality to be brought closer into schools and universities. Learners can take over certain roles and act in a hands-on (and heads-on) way in a simulated professional context. Research has shown that full authenticity is not always beneficial for learning (e.g., Henninger & Mandl, 2000). Researchers therefore typically emphasize the opportunity to modify reality for learning purposes with simulated environments. Consequently, it is important to consider the extent to which a simulation represents the actual practice in terms of demands set on the learner, the nature of the simulated situation, and the environment and/or the participants involved (e.g., Allen et al., 1991). Sometimes this relationship is addressed as fidelity of the simulation. However, a recent review by Hamstra et al. (2014) emphasized that there are severe inconsistencies in using this term in different research areas. In line with recommendations given by this group of authors (Hamstra et al., 2014), we focus on functional correspondence between a simulated scenario or the simulator itself and the context of real situations. We address this correspondence by estimating the degree of authenticity, and in this way, we avoid the term fidelity and the uncertainty related to it.

Prior Knowledge and Professional Development

Prior knowledge is an important predictor of learning success (e.g., Ausubel, 1968); it can also define the ability of learners to learn from particular materials and to use learning strategies. There are considerations suggesting that including simulations in a later phase of a higher education program, after students already know theoretical concepts, is promising in order to not overwhelm learners and block too much of their cognitive capacity for solving a problem in a simulation (e.g., Kirschner et al., 2006). Other considerations suggest including simulations at a very early point because this supports the process of restructuring knowledge into higher order concepts that can be used directly to solve problems (e.g., Boshuizen & Schmidt, 2008; Schmidt & Boshuizen, 1993; Schmidt & Rikers, 2007). Thus, a theoretical knowledge base would be stored in a more effective way that is directly linked to cases of application (e.g., Kolodner, 1992). Nonetheless, it seems rather obvious that learners with less theoretical prior knowledge may need more instructional guidance than more advanced learners in order to still possess enough cognitive resources for learning (e.g., Schmidt et al., 2007).

Including opportunities to apply knowledge such as in simulations in higher education programs is crucial. It seems reasonable not to assume a general answer to the question of when a simulation should be used in a higher education program. The type of simulation and the type of instructional support in relation to the prior knowledge of the learners may be more indicative and is therefore explored in the current meta-analysis.

Added Value of Instructional Support

Exposure to ill-structured problems, especially in early stages of expertise development, should be accompanied by scaffolding to maximize learning and avoid cognitive overload, distraction, or focusing on superficial features of a situation (see the discussions of Hmelo-Silver et al., 2007; Kirschner et al., 2006). Scaffolding enables a learner to solve problems through modifying tasks and reducing possible pathways, and through hints helping the learner to coordinate the steps in problem solving or interaction (Quintana et al., 2004), by taking over some elements of learning material (Wood et al., 1976). In meta-analyses, scaffolding has been shown to have medium effects on various learning outcomes (e.g., Gegenfurtner et al., 2014).

One of the scaffolding features frequently mentioned by the researchers is the opportunity to adjust its amount. Scaffolding can be presented during or after the learning situation, be present all the time, or be added or faded gradually (e.g., Belland et al., 2017; Van de Pol et al., 2010). The recent meta-analysis, however, showed that at least in the domains of medical and teacher education, the majority of studies implementing scaffolding to foster diagnostic competences do not employ fading or adding procedures but still report positive effects (Chernikova et al., 2019).

Instructional support can be implemented in many different ways: Learners can obtain a theoretical introduction or some information on how to deal with materials in advance (knowledge convey) or they can be scaffolded in the learning environment. Learners can be guided through procedures step by step (e.g., worked examples or modeling); provided with observation scripts, checklists, or a set of rules or ways to deal with the case in question (e.g., prompts); assigned specific roles (with a prescribed course of action or goals); and asked to reflect on their own problem solving, set goals, and assess progress (e.g., inducing reflection phases).

These types of scaffolding can be positioned on the scale from high levels of instructional guidance and little need for self-regulation to develop skills to high levels of self-regulation with little instructional guidance (Chernikova et al., 2019). In this framework, examples provide solutions or model target behavior (e.g., Renkl, 2014) and can be positioned at a high level of instructional guidance and therefore less self-regulation. Reflection phases, on the other hand, allow learners to think about goals, analyze their own performance, and plan further steps (e.g., Mann et al., 2009), but they do not provide much guidance during the problem solving. Assigning roles can be viewed as prescribing a certain way of solving the problem. Prompts are scaffolds providing hints or additional information about how to handle the task in terms of the actual process required (e.g., Quintana et al., 2004) and might contain higher or lower levels of guidance. All these scaffolding measures were found to be beneficial for developing diagnostic competences (Chernikova et al., 2019). Moreover, the study found an interaction effect between types of scaffolding and prior professional knowledge, suggesting that learners with higher prior knowledge benefit more from scaffolding with less instructional guidance, while learners with low prior knowledge benefit more from scaffolding with more instructional guidance. In this meta-analysis, we aim to replicate the findings on the interaction effect for a broader set of complex skills.

Research Questions

Quite strong empirical evidence supports learning through problem solving in postsecondary education in general (Belland et al., 2017; Dochy et al., 2003). Simulation in turn can be viewed as one of the ways to apply problem solving in close-to-reality settings. There is also empirical evidence in favor of using simulation in medical (e.g., Cook, 2014; Cook et al., 2013) and nursing (Hegland et al., 2017) education to facilitate learning. In line with previous research findings, we expect moderate to large effects of simulation-based learning on the development of complex skills.

We assume that type of simulation, technology use, duration, and authenticity might contribute to the involvement of learners, which in turn might have an effect on the development of complex skills. We expect small to moderate overall effects of technology use (Tamim et al., 2011), positive effects of longer duration (e.g., Cook, 2014), and higher authenticity of simulations (e.g., Hamstra et al., 2014).

Belland et al. (2017) found moderate to large effects of scaffolding in computer-based learning environments. The meta-analysis by Chernikova et al. (2019) reported positive effects of different scaffolding types (e.g., role taking, prompts, reflection phases) on the development of diagnostic competences in medical and teacher education. In line with these findings, we expect that scaffolding would have a positive effect on the advancement of complex skills beyond the effects of simulation-based learning environment. We adopt the scaffolding types categorized in the study (Chernikova et al., 2019) and expect to find a similar pattern of the effects in simulation-based learning environments and for a broader set of complex skills. We expect significant positive added value from the scaffolding compared with simulations with no scaffolding provided.

We expect simulation-based learning to be effective for learners with both low and high prior knowledge when supported by additional instructional measures (e.g., scaffolding). Moreover, in line with the recent meta-analysis by Chernikova et al. (2019), we expect to find an interaction between learners’ prior knowledge and the effectiveness of different scaffolding types, with the scaffolding providing high levels of guidance to be more effective for unfamiliar contexts (a lower level of education), and that relying on high levels of self-regulation to be more effective for familiar contexts (higher levels of education). We expect that the added value of the scaffolding in a simulation-based learning environment will be even more pronounced for learners with low levels of prior knowledge.

Method

Inclusion and Exclusion Criteria

The inclusion criteria were based on the outcome measures reported, research design applied, and statistical information provided in the studies. We discuss these criteria below in more detail.

Complex Skills

The studies eligible for inclusion had to focus on the facilitation of complex skills related to critical thinking, problem solving, communicating, or epistemic activities (Fischer et al., 2014) performed individually or collaboratively. Types of outcomes were first collected as mentioned in primary studies and subsequently categorized. This meta-analysis focuses only on objective measures of learning (written or oral knowledge tests, assessment of performance based on expert rating, or any quantitative measures, including but not limited to frequency of behavior or the number of procedures performed correctly). Studies that reported only learners’ attitudes, beliefs, or self-assessment of learning or competence were excluded from the analysis.

Research Design

The aim of this meta-analysis was to draw causal inferences regarding the effect of a simulation-based learning environment and instructional support on the development of complex skills, so the studies eligible for the analysis had to either have an experimental or quasi-experimental design with at least one treatment and one control condition or report both pre- and postmeasures in the case of a within-subject design. The treatment condition had to include a simulation-based learning environment with instructional support measures, and the control condition should not include active participation in a simulation-based learning environment but could include other instructional methods (i.e., traditional teaching). Studies that did not report any intervention (i.e., studies on tool or measurement validation), studies that reported the comparison of multiple experimental designs (e.g., simulation with few prompts vs. simulation with many prompts, or using best-practice examples vs. erroneous examples within a simulation), and studies that did not provide any control condition (control group or pretest measures) were excluded from the analysis. Study design was used as a control variable in the analysis.

Study Site, Language, and Publication Type

Eligible studies were not limited to any specific study site. To ensure that the concepts and definitions of the core elements coded for the meta-analysis were comparable and relevant, only studies published in English were included in the analysis. However, the origin of the studies and the language in which they were conducted were not restricted. Different sources, both published and unpublished, were considered to ensure the validity and generalizability of the results. There were no limitations regarding publication year. Publication type was used as a control variable.

Effect Sizes

Eligible studies were required to report sufficient data (e.g., sample sizes, descriptive statistics) to compute effect sizes and identify the direction of scoring. If a study reported information about the pretest effect size, it was used to adjust for pretest differences between treatment and control conditions.
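
As an illustration of how such data can be turned into an effect size, the following Python sketch computes Hedges' g (the metric reported in the abstract) from group means, standard deviations, and sample sizes. The function names, the approximate small-sample correction factor, and the subtraction-based pretest adjustment are assumptions made for illustration; the text does not specify the exact formulas that were used.

```python
import math


def hedges_g(mean_t, sd_t, n_t, mean_c, sd_c, n_c):
    """Return (g, variance of g) for a treatment vs. control comparison.

    Assumption: pooled-SD standardized mean difference with the common
    approximate small-sample correction J = 1 - 3 / (4 * df - 1).
    """
    df = n_t + n_c - 2
    pooled_sd = math.sqrt(((n_t - 1) * sd_t ** 2 + (n_c - 1) * sd_c ** 2) / df)
    d = (mean_t - mean_c) / pooled_sd
    j = 1 - 3 / (4 * df - 1)
    # Large-sample variance of d, scaled by J squared to obtain the variance of g.
    var_d = (n_t + n_c) / (n_t * n_c) + d ** 2 / (2 * (n_t + n_c))
    return j * d, j ** 2 * var_d


def pretest_adjusted_g(g_post, g_pre):
    """Assumed adjustment: subtract the pretest effect size from the posttest one."""
    return g_post - g_pre


# Hypothetical numbers, not taken from any primary study.
g_post, var_post = hedges_g(10.0, 2.0, 20, 8.0, 2.0, 20)
g_pre, _ = hedges_g(5.0, 2.0, 20, 4.8, 2.0, 20)
print(round(g_post, 3), round(pretest_adjusted_g(g_post, g_pre), 3))
```

The direction of scoring matters here: if lower scores indicate better performance (e.g., number of errors), the sign of the resulting value would need to be reversed before synthesis.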

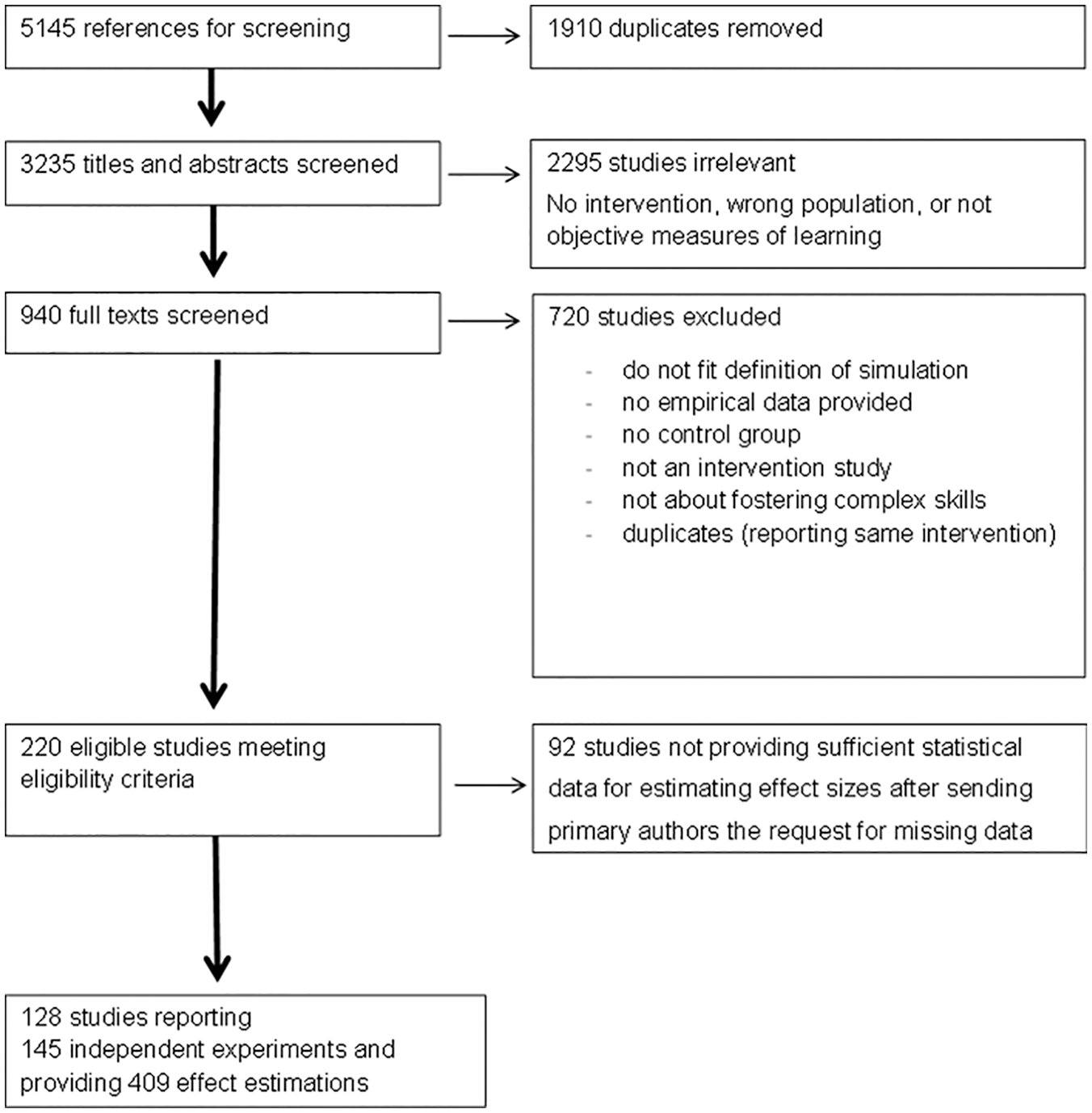

Search Strategies

The search terms were simulat*, competenc*, skill*, teach*, and medic* with no restriction on where the terms occur (title, abstract, descriptor, or full text); we also included experiment* OR quantitative* OR control OR quasi OR effect OR impact in the search string to focus on experimental studies testing the effects of treatment on learning. The search results were obtained on April 13, 2018, and included all articles published before April 2018 in the PsycINFO, PsycARTICLES, ERIC, and MEDLINE databases. After deleting the duplicates, the search resulted in 3,235 articles in medical and teacher education, counseling, engineering, management, and care. During abstract and full-text screening, studies that met the exclusion criteria mentioned in the previous section were excluded; all other studies were included in the next step of the screening and the analysis (Figure 1).

Figure 1. Flow chart of study selection process.

Coding Procedures

The coding scheme for moderators was based on a conceptual model developed by the research group (Heitzmann et al., 2019). First, the studies were coded for eligibility criteria by the first author and three student research assistants using Covidence (online version; Veritas Health Innovation, 2019); the flow chart is presented in Figure 1. If there was any doubt (i.e., exclusion was not obvious), the study was included for further screening. In 95% of cases, the coders agreed about eligibility in the first round. In regular meetings, the coders discussed studies for which there was uncertainty related to eligibility until complete agreement on the inclusion or exclusion of a study was achieved.

Second, within the coder training, 50% of primary studies were double coded (with an interrater agreement above .80). All discrepancies were discussed to reach the final agreement of 100%, and after agreement was reached, all the studies (including training material) were coded by the same author and student research assistants independently. All interrater agreement values can be found in the coding manual, submitted as a supplemental document. For “authenticity,” all the studies were double coded and the initial interrater agreement was 78% (with a Cohen’s kappa of .65, due to strong imbalance in the number of studies in each category). The disagreement was resolved through discussion, and the studies that did not provide enough information for an unambiguous decision (N = 37) were excluded from this part of the analysis.
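
The relation between raw agreement and Cohen's kappa mentioned above can be made concrete with a short Python sketch. It computes both indices for two coders and uses invented codes (not the actual coding data) to show how an imbalanced category distribution can yield a kappa well below the percentage of agreement. The function names are illustrative.

```python
from collections import Counter


def percent_agreement(codes_a, codes_b):
    """Share of units to which both coders assigned the same code."""
    if len(codes_a) != len(codes_b) or not codes_a:
        raise ValueError("Both coders must rate the same non-empty set of units.")
    matches = sum(a == b for a, b in zip(codes_a, codes_b))
    return matches / len(codes_a)


def cohens_kappa(codes_a, codes_b):
    """Cohen's kappa: observed agreement corrected for chance agreement."""
    n = len(codes_a)
    p_observed = percent_agreement(codes_a, codes_b)
    counts_a, counts_b = Counter(codes_a), Counter(codes_b)
    # Chance agreement: product of the two coders' marginal proportions per category.
    p_expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / n ** 2
    if p_expected == 1:
        return 1.0  # both coders used a single identical category throughout
    return (p_observed - p_expected) / (1 - p_expected)


# Invented, strongly imbalanced codes for ten studies.
coder_a = ["high"] * 8 + ["low"] * 2
coder_b = ["high"] * 7 + ["low", "low", "high"]
print(percent_agreement(coder_a, coder_b))
print(round(cohens_kappa(coder_a, coder_b), 3))
```

In this invented example, the coders agree on 8 of 10 studies, yet most of that agreement is expected by chance because both coders assign "high" to most studies, which lowers kappa considerably.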

The domain was coded as medical, teacher education, or other (i.e., psychological counseling, management). Study design was coded as experimental (participants were randomly assigned to conditions), two-group design (no randomization took place), and one-group design (pre–post design). Type of control group was coded for experimental and two-group designs to distinguish between waiting condition, which did not receive any treatment, and instructed control groups, which received instructional support but no simulation. For one-group designs, the type of control was coded as “baseline,” which is the level of complex skill before the intervention as measured in the pretest. Additionally, publication year, information about authors and publication type were retrieved from the primary studies.

Technology use was coded to address the technology support independently from the content of simulation. It was coded as “no” if no specific equipment was used to present the simulation or facilitate the interaction of learners with the problem (e.g., role play), “computer” for screen-based simulations and interacting with virtual or real objects in a computer-supported environment, “simulator” for using specific tools (e.g., low- and high-fidelity mannequins) that learners have physically interacted with, and “virtual reality” for interacting with virtual objects or people in an immersive environment.

For simulation type, we focused on the source of information for learning. The simulations were categorized into document in the case of interaction with written information to make a decision, diagnose, and so on (e.g., disease history or students’ academic achievement tests or homework), virtual object (interaction with a virtual object), role play (interaction with peers, standardized patients, or simulated students), or live model (interaction with real patients, students, or clients). For medical education, we also distinguished between mannequin (a human-like model that shows clinical symptoms) and model (a real object, representing the human body or its parts, that does not show clinical symptoms). In cases where multiple types of simulation were used during treatment, the code “mixed” was used.

Duration of simulation was collected in an open format and subsequently categorized into “very short” (lasting up to 1 hour), “short” (lasting 1 or more hours, up to 1 day), “medium” (lasting 1 or several days, up to a month), or “long” (lasting more than a month).

Authenticity was coded as low if the simulation (task, scenario, or equipment used) resembled reality (real tasks and activities) to a low degree. Authenticity was coded as high if the simulation resembled reality in much detail and in different aspects (e.g., teaching to a simulated classroom, using high-fidelity simulators in medicine, whole facility simulation with different professionals involved in real-time simulation). If only one aspect of the task or situation resembled reality to a high degree and all other aspects were not presented in the way the real task requires, authenticity was coded as selected.

The complex skills were collected in an open format from the primary studies based on target learning outcomes mentioned and were subsequently categorized into the following: “diagnosing” if diagnosing was involved (e.g., diagnosing learning difficulties in teacher education or a particular disease in medical education); “technical performance” for completing complex procedures following known steps or a checklist (performing laparoscopy in medicine or implementing the teaching technique in class for teacher education); “communication skills” for assessment measures of the quality of interaction with other people; “teamwork” for outcome measures related to coordinated performance in a collaborative task; “general problem solving” for problem solving not involving diagnosing (e.g., use of argumentation, identifying or setting goals); and “management” for outcome measures related to managing critical situations (e.g., classroom management or management of critical situations in nursing or medicine).

Prior knowledge was coded in two dimensions: familiarity of context and level of education. Familiarity was coded as low if participants of the study had had little or no exposure to a similar context, and high if learners had already been exposed to a similar context. Level of education was coded as low for undergraduate and graduate students and high for postgraduate students and licensed professionals.

Scaffolding was coded as “included” or “not included” for the following categories: (1) examples (observing modeled behavior or example solutions), (2) prompts (receiving hints on how to work in simulated scenarios), and (3) reflection phases (thinking about the goals of the procedure, analyzing own performance and planning further steps). Additionally, knowledge convey (e.g., lecture or info session prior to simulation-based learning) was coded as “included” or “not included” to control for additional instructional support beyond the scaffolding. In the post hoc analysis, based on the initial coding mentioned above, the scaffolding was recoded to capture the combinations “no scaffolding,” “examples only,” “prompts only,” “reflections only,” “examples + prompts,” “examples + reflections,” “prompts + reflections,” and “all included.”
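The post hoc recoding described above can be sketched as a small mapping from the three binary scaffolding codes to the eight combination labels. This is an illustration only; the function name and the boolean representation are assumptions, not the authors' coding tool.

```python
from itertools import product

def scaffolding_combination(examples: bool, prompts: bool, reflections: bool) -> str:
    """Map three 'included'/'not included' codes to one combination label."""
    used = [
        name
        for name, included in (
            ("examples", examples),
            ("prompts", prompts),
            ("reflections", reflections),
        )
        if included
    ]
    if not used:
        return "no scaffolding"
    if len(used) == 3:
        return "all included"
    if len(used) == 1:
        return f"{used[0]} only"
    return " + ".join(used)

# Enumerate all eight possible codings.
for flags in product([False, True], repeat=3):
    print(flags, "->", scaffolding_combination(*flags))
```

Knowledge convey is deliberately left out of the mapping because the text treats it as a separate control variable rather than a scaffolding type.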

Statistical Analysis

Calculation of the Effect Sizes and Synthesis of the Analysis

For our analyses, we followed the procedure described by Borenstein et al. (2009) for effect size calculation, integration, and moderator analysis. First, the data on the effects of selected studies were gathered with the help of an Excel sheet. In addition to the coding of moderator variables and statistical values, the Excel sheet was used to compute Cohen’s d, variance, and SE of Cohen’s d as well as correction factor J (see Borenstein et al., 2009). Then, R Studio (Version 3.5.0, 2018) was used to calculate Hedges’s g and perform effect aggregation and metaregression (“metafor” and “robumeta” packages). Second, as multiple studies reported multiple outcomes and used several treatment and/or control conditions, the correlated effect sizes were handled by using robust variance estimation correction coefficients, as suggested by Tanner-Smith et al. (2016). Third, the effect sizes were controlled by pretest differences as these differences may increase the share of random effects variance. As prior knowledge has to be regarded as an important predictor of knowledge at posttest (e.g., Ausubel, 1968), it has to be controlled for if possible. If available, pretest data were used to adjust the effect sizes by subtracting the pretest effect sizes from the posttest effect sizes and adding up the variances of both effects. Fourth, preliminary analyses were performed to identify systematic bias among effects from primary studies. Fifth, a random effects model was used to address the research questions. Confidence intervals were employed to assess the significance of an effect. Heterogeneity estimates (Q-statistics) were utilized to determine the variance of the true effect sizes between studies (tau) and the proportion of this variance that could be explained by random factors (I²). The thresholds suggested by Higgins et al. (2003) were used to interpret the I² (25% for low heterogeneity, 50% for medium, 75% for high heterogeneity).
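The effect size steps named above (Cohen's d, its variance, the correction factor J, Hedges's g, and the pretest adjustment) can be sketched as follows. The formulas are the standard ones for two independent groups given by Borenstein et al. (2009); the function names and the descriptive statistics are hypothetical, and the sketch does not reproduce the authors' Excel sheet or the robust variance estimation step.

```python
import math

def hedges_g(mean_t, sd_t, n_t, mean_c, sd_c, n_c):
    """Return (g, variance of g) for two independent groups."""
    df = n_t + n_c - 2
    # Pooled within-group standard deviation.
    s_within = math.sqrt(((n_t - 1) * sd_t**2 + (n_c - 1) * sd_c**2) / df)
    d = (mean_t - mean_c) / s_within
    var_d = (n_t + n_c) / (n_t * n_c) + d**2 / (2 * (n_t + n_c))
    # Small-sample correction factor J.
    j = 1 - 3 / (4 * df - 1)
    return j * d, j**2 * var_d

def adjust_for_pretest(g_post, var_post, g_pre, var_pre):
    """Subtract the pretest effect and add up the variances, as described in the text."""
    return g_post - g_pre, var_post + var_pre

# Hypothetical descriptive statistics for one primary study.
g_post, v_post = hedges_g(80.0, 10.0, 30, 72.0, 10.0, 30)
g_pre, v_pre = hedges_g(60.0, 10.0, 30, 59.0, 10.0, 30)
g_adj, v_adj = adjust_for_pretest(g_post, v_post, g_pre, v_pre)

print(f"posttest g = {g_post:.3f}, SE = {math.sqrt(v_post):.3f}")
print(f"adjusted g = {g_adj:.3f}, SE = {math.sqrt(v_adj):.3f}")
```

Because the variances are summed, the adjusted effect always has a larger standard error than the posttest effect alone, which is the price of removing baseline differences in this way.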

Assessment of Publication Bias

The recent simulation study by Carter et al. (2017) compared the effectiveness of different techniques to estimate and correct for publication bias under different conditions (e.g., high heterogeneity). They suggest not relying only on the results of the random effects model if the probability of publication bias is high. We expect high effects of simulation-based learning (e.g., Cook, 2014) and do not expect high publication bias; however, we expect high heterogeneity of the effects, which according to Carter et al. (2017) can hinder some types of analysis (e.g., p-curve analysis). We have a relatively small sample of empirical studies and apply a range of techniques to identify possible biases (Egger’s test, trim and fill) and improve the generalizability of the results (Sterne & Egger, 2001). If no publication bias were detected, we would rely on random effects model estimation for the effect sizes with robust variance estimation correction.
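As a rough illustration of what Egger's test examines, the sketch below regresses the standardized effect (effect divided by its SE) on precision (1 divided by SE) with ordinary least squares; an intercept far from zero indicates funnel plot asymmetry. The data and the function name are hypothetical, and the sketch stops at the t statistic for the intercept rather than reproducing the authors' R analysis.

```python
import math

def egger_regression(effects, standard_errors):
    """Return (intercept, slope, SE of intercept) of Egger's regression."""
    x = [1 / se for se in standard_errors]                      # precision
    y = [g / se for g, se in zip(effects, standard_errors)]     # standardized effect
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    residual_ss = sum((yi - intercept - slope * xi) ** 2 for xi, yi in zip(x, y))
    residual_var = residual_ss / (n - 2)
    se_intercept = math.sqrt(residual_var * (1 / n + mean_x**2 / sxx))
    return intercept, slope, se_intercept

# Hypothetical studies in which less precise studies report larger effects.
effects = [1.4, 1.1, 0.9, 0.8, 0.7, 0.6]
standard_errors = [0.40, 0.30, 0.25, 0.20, 0.15, 0.10]

intercept, slope, se_intercept = egger_regression(effects, standard_errors)
t_statistic = intercept / se_intercept  # compare with a t distribution, n - 2 df
print(f"intercept = {intercept:.2f}, t = {t_statistic:.2f}")
```

When every study estimates the same effect regardless of its precision, the regression line passes through the origin and the intercept is zero.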

Results

Results of Literature Search

The 145 eligible studies (from 128 articles published in the period 1979‒2018) provided 409 effect estimations (Figure 1). The total sample consisted of 10,532 participants. Most of the studies come from medical education (126 studies), while studies in teacher education are represented by seven independent studies and other domains by 12 independent studies. Most studies focused on general problem solving (51) or the technical performance of a particular complex procedure (56); the other outcomes were communication skills (24), diagnosing (18), managing critical situations (18), and collaboration and teamwork (5). Some studies reported more than one complex skill as learning outcomes.

Out of 409 effects of simulation-based learning, only 270 included complete information with no missing codes on instructional support measures used within the simulation. In 12% of treatments, the simulation was not accompanied by any additional instructional support, while 25% of simulations were accompanied by knowledge convey (i.e., lectures or other expository forms of instruction). It is worth noting that a small number of simulations reported using a single scaffolding type only: 6% used examples with no other support measures, 3% used simulations with additionally induced reflection phases, and less than 1% used solely prompts to support simulation. The most frequent combinations of instructional support measures were knowledge convey together with examples (82 effects), knowledge convey with reflection phases (62 effects), and examples with reflection phases (43 effects). However, the analysis identified that there is a considerable amount of missing data indicating that the instructional support measures were not mentioned explicitly or in sufficient detail in the description of the treatment in primary studies. Additionally, almost all of the studies reported some kind of feedback that participants received from the learning environment or the instructor during or after the simulation, which was not explicitly coded and therefore stayed beyond the focus of the current analysis.

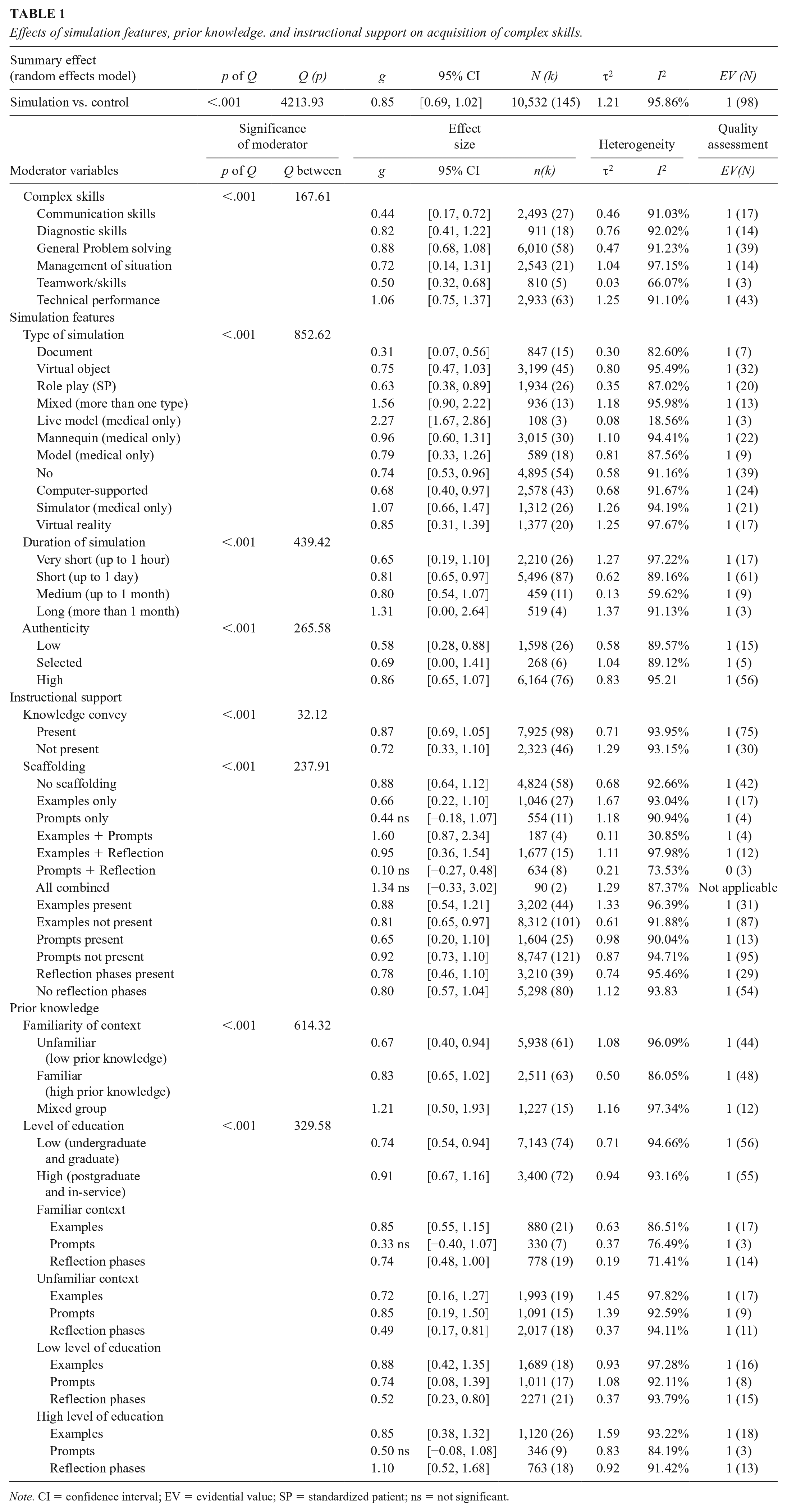

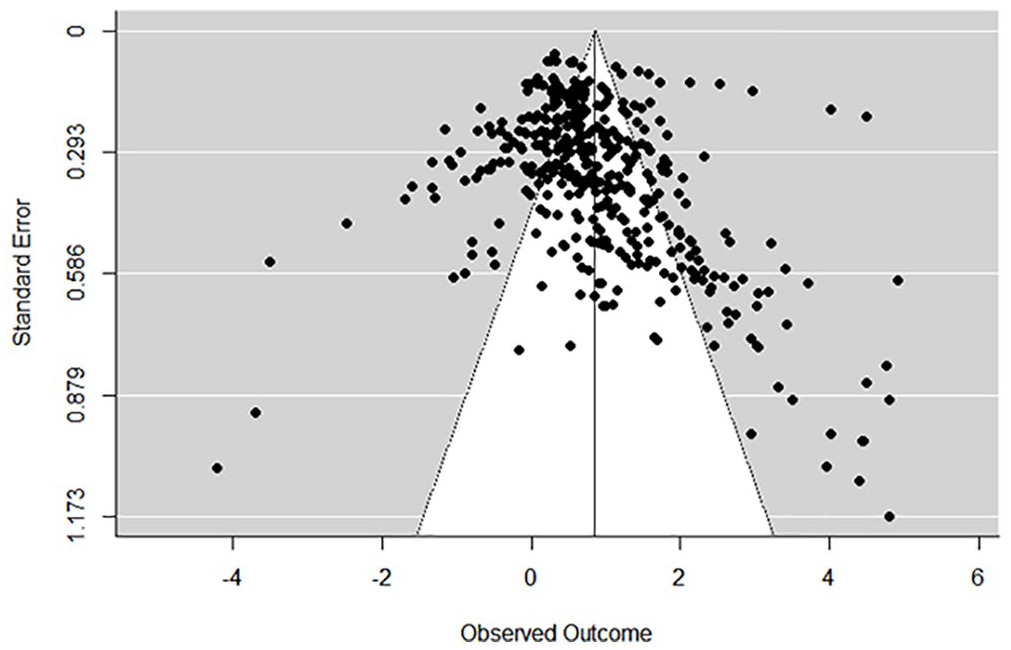

Quality Assessment and Preliminary Analysis

The procedures targeted at assessing the quality of data coming from primary studies (e.g., no linear relationship between effect size and standard error, symmetry of funnel plot) and the generalizability of the summary and moderator effects found in the meta-analysis indicated no evidence of publication bias or questionable research practices (see Figure 2 and Table 1). The metaregression on control variables (year of publication, publication type, study design, type of control, domain) showed that these factors do not explain any statistically significant amount of variance between study effects (p values above .05).

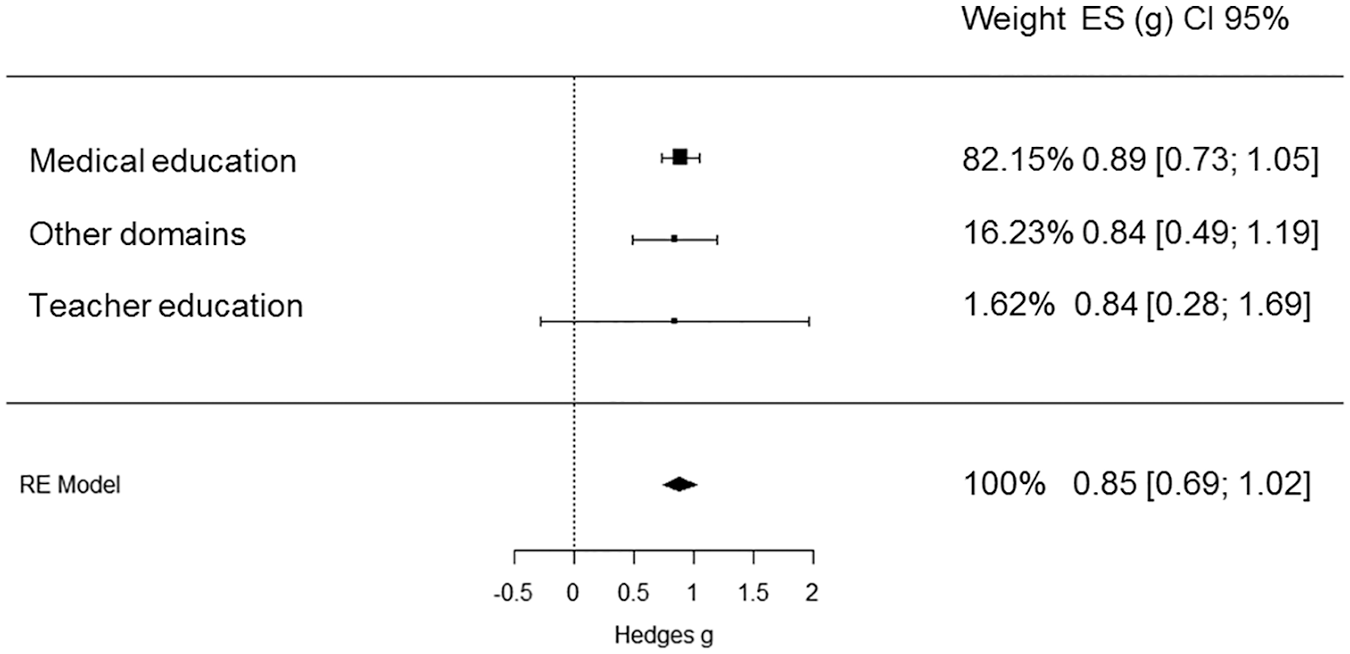

Forest plot of the overall effect of simulations on the acquisition of complex skills across domains.

Effects of simulation features, prior knowledge, and instructional support on acquisition of complex skills.

Note. CI = confidence interval; EV = evidential value; SP = standardized patient; ns = not significant.

Overall Effect of Simulation-Based Learning on Complex Skills (Research Question 1)

With regard to Research Question 1, simulation-based learning had a large positive effect on fostering complex skills compared with (1) conditions without intervention (waiting control: g = 1.02, SE = 0.30, N = 16), (2) differently instructed control conditions (g = 0.82, SE = 0.13, N = 53), or (3) baseline (g = 0.88, SE = 0.10, N = 76). As there were no statistically significant differences among the three control conditions, the overall effect was estimated: g = 0.85, SE = 0.08, N = 145. As expected, the analysis also identified high heterogeneity between studies: Q(409) = 4213.93, p < .0001; τ² = 1.2; I² = 95.86%. This heterogeneity could not be explained by control variables (year of publication, publication type, study design, type of control, domain). The effect sizes found in individual studies, weights, and confidence intervals, as well as the summary effect from the random effects model estimation, are presented in Figure 2 and organized by domains. A funnel plot of effect size distribution and standard errors is presented in Figure 3.
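To show how a summary effect, Q, τ², and I² relate to one another, the following sketch pools a handful of hypothetical effects with the DerSimonian-Laird estimator. This is a simpler, swapped-in technique: the values reported above come from the authors' models with robust variance estimation in R and are not reproduced by this code. All names and data are illustrative.

```python
import math

def random_effects_summary(effects, variances):
    """DerSimonian-Laird random effects pooling with heterogeneity statistics."""
    k = len(effects)
    w = [1 / v for v in variances]                       # fixed effect weights
    fixed_mean = sum(wi * g for wi, g in zip(w, effects)) / sum(w)
    q = sum(wi * (g - fixed_mean) ** 2 for wi, g in zip(w, effects))
    df = k - 1
    c = sum(w) - sum(wi**2 for wi in w) / sum(w)
    tau2 = max(0.0, (q - df) / c)                        # between-study variance
    i2 = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0  # percent
    w_star = [1 / (v + tau2) for v in variances]         # random effects weights
    pooled = sum(wi * g for wi, g in zip(w_star, effects)) / sum(w_star)
    se = math.sqrt(1 / sum(w_star))
    return {"g": pooled, "se": se, "q": q, "df": df, "tau2": tau2, "i2": i2}

def heterogeneity_label(i2):
    """Thresholds after Higgins et al. (2003): 25% low, 50% medium, 75% high."""
    if i2 >= 75:
        return "high"
    if i2 >= 50:
        return "medium"
    if i2 >= 25:
        return "low"
    return "negligible"

# Hypothetical effect sizes and sampling variances from five studies.
effects = [0.30, 0.55, 0.90, 1.40, 2.10]
variances = [0.04, 0.02, 0.05, 0.03, 0.06]

summary = random_effects_summary(effects, variances)
ci = (summary["g"] - 1.96 * summary["se"], summary["g"] + 1.96 * summary["se"])
print(f"g = {summary['g']:.2f}, 95% CI [{ci[0]:.2f}, {ci[1]:.2f}]")
print(f"Q({summary['df']}) = {summary['q']:.2f}, tau2 = {summary['tau2']:.2f}, "
      f"I2 = {summary['i2']:.1f}% ({heterogeneity_label(summary['i2'])})")
```

The label "negligible" for values below 25% is an assumption added for completeness; the thresholds cited in the text only name the low, medium, and high levels.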

Funnel plot of the overall effect of simulations on the acquisition of complex skills.

Communication and collaboration skills (teamwork) are only moderately facilitated by simulation-based learning (g = 0.44, SE = 0.15, and g = 0.50, SE = 0.08, respectively), followed by situation management (g = 0.72, SE = 0.30), diagnostic skills (g = 0.82, SE = 0.21), and problem solving (g = 0.88, SE = 0.11); the highest effects of simulations reported are on technical performance (g = 1.06, SE = 0.15). The number of studies in each group can be found in Table 1.

Features of Simulation (Research Question 2)

Simulation Type

Simulation-based learning had greater effects when presented in the form of live simulations with real patients (g = 2.27, SE = 1.04, N = 3, medical education only), followed by hybrid simulations, where several simulation types were used during learning phases (g = 1.56, SE = 0.17, N = 13). In medical education, mannequins were also highly effective (g = 0.96, SE = 0.11, N = 30). Role plays and using virtual objects had moderate effects on learning (g = 0.63, SE = 0.12, N = 26, and g = 0.75, SE = 0.09, N = 45), and simulations based on documents, although often highly resembling actual tasks (X-rays of patients, students’ homework) were the least effective (g = 0.31, SE = 0.15, N = 15).

Technology Use

Higher levels of technology support during simulation were associated with greater effects on learning outcomes: simulators (e.g., programmed mannequins) in medical education (g = 1.07, SE = 0.18, N = 26) and virtual reality across domains (g = 0.85, SE = 0.24, N = 20) were more effective than screen-based simulations (g = 0.68, SE = 0.13, N = 43) and simulations that did not implement technology support (g = 0.74, SE = 0.11, N = 54).

Authenticity

Simulations that resembled reality at a low level had an effect of g = 0.58, SE = 0.18, N = 26, while high-authenticity simulations, which represented all aspects of highly realistic situations, had an effect of g = 0.86, SE = 0.10, N = 76. Simulations that represented one aspect of a situation in a highly realistic way while all other aspects were less realistic (selected authenticity) also had positive effects (g = 0.69, SE = 0.40). However, the data for this type of authenticity came from a relatively small sample of studies in medical education (N = 6) and were highly heterogeneous.

In a post hoc analysis, we looked at the interaction between authenticity and familiarity of context (learners’ prior knowledge). For unfamiliar contexts, high authenticity simulations had more value (g = 0.74, SE = 0.16) than low authenticity simulations (g = 0.57, SE = 0.28). For familiar contexts, high authenticity simulations also had more value (g = 0.92, SE = 0.14) than low authenticity simulations (g = 0.57, SE = 0.19). No interaction was found between authenticity and level of education as another indicator of prior knowledge. There was an insufficient number of studies to estimate the effects of authenticity for mixed groups.

Duration of Simulation

Very short simulations lasting less than an hour had an effect of g = 0.65, SE = 0.20. Longer simulations were associated with higher effects: simulations lasting for several hours (up to a day)—g = 0.81, SE = 0.09; simulations lasting for several days (up to a month)—g = 0.80, SE = 0.18. A few simulations lasted for more than a month and had an effect of g = 1.31, SE = 0.31, but the number of studies (N = 4) reporting simulations with an extended duration was not sufficient for conclusive results.

Added Value of the Scaffolding (Research Question 3)

To evaluate the added value of scaffolding, the effects of simulations with and without particular scaffolding types were compared. When examples were present (g = 0.88, SE = 0.17), the effects of simulations were descriptively higher than without examples (g = 0.81, SE = 0.09); however, the difference was not statistically significant. The examples across all domains were usually represented by live or recorded demonstrations of how to deal with the simulated environment, particular tool, or situation (e.g., Chen et al., 2015; Damle et al., 2015; Overbaugh, 1995). Some studies mentioned using correct or positive examples together with erroneous or negative examples (e.g., Douglas et al., 2016), but more commonly, only demonstrations of correct target behaviors were used.
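A quick plausibility check for a subgroup contrast such as the one for examples can be sketched with a simple z-test on the difference between two summary effects. This is a simplification: it assumes the two subgroups are independent and uses a normal approximation, whereas the authors' tests rely on models with robust variance estimation. The function name is hypothetical; the input values are the ones reported above for simulations with and without examples.

```python
import math

def subgroup_z_test(g1, se1, g2, se2):
    """Two-sided z-test for the difference between two independent summary effects."""
    difference = g1 - g2
    se_difference = math.sqrt(se1**2 + se2**2)
    z = difference / se_difference
    # Two-sided p value from the standard normal distribution.
    p = math.erfc(abs(z) / math.sqrt(2))
    return z, p

# Reported effects: examples present vs. examples absent.
z, p = subgroup_z_test(0.88, 0.17, 0.81, 0.09)
print(f"z = {z:.2f}, two-sided p = {p:.2f}")
```

Under these assumptions the difference of 0.07 is small relative to its standard error, which agrees with the reported absence of a statistically significant difference for examples.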

The effects of simulation when prompts were presented (g = 0.65, SE = 0.23) were significantly lower than in the absence of prompts (g = 0.92, SE = 0.09). The prompts in the primary studies were represented by short textual hints within the simulation environment, which suggested actions or allowed learners to revisit the conceptual level during the exploration of the simulation (e.g., Dankbaar et al., 2016; Kumar & Sherwood, 2007); another form of prompting consists of questions that may lead the learner to further actions (Alfred & Chung, 2011).

The presence of reflection phases had no added value: the effects with reflection phases (g = 0.78, SE = 0.16) and without reflection phases (g = 0.80, SE = 0.12) were similar. The reflection phases in the medical context introduced in the primary studies usually involved reflecting on positive and negative aspects or strategies used in the demonstration, in one’s own performance, or in a peer’s performance (e.g., Alinier et al., 2006; Cuisinier et al., 2015; Douglas et al., 2016). These phases were held during briefing and debriefing sessions and usually implied that insights from these reflections can be used for further simulation trials or at least to improve one’s performance in a final assessment. In nonmedical contexts, reflection phases were focused on asking learners (1) to write a report, documenting the process and problems occurring and evaluating their own actions (e.g., Newell & Newell, 2018); (2) to fill in worksheets, reflecting on their actions in the simulated environment and the consequences of these actions (e.g., Girod & Girod, 2006); or (3) to reflect on the case scenario and discuss the action plan with peers and supervisors (e.g., Broadbent & Neehan, 1971).

To summarize, the comparison of presence versus absence of particular scaffolding types did not support our hypothesis about an added value of scaffolding; the effects were relatively high in the cases of presence and of absence of examples, prompts, and reflections. Post hoc analysis was performed to clarify the high heterogeneity in the effects, which could have led to a lack of significant differences in “included” versus “not included” scaffolding types.

Post hoc analysis also found that treatments with no scaffolding explicitly mentioned in the description were also connected with high effects of learning (g = 0.88, SE = 0.11), partly due to knowledge convey. But treatments with neither scaffolding nor knowledge convey (N = 19) also resulted in relatively high learning outcomes (g = 0.68, SE = 0.21). Furthermore, different types of scaffolding were usually combined within the study, and some combinations were more effective than others, partly supporting the hypothesis about the added value of scaffolding. For instance, examples were often (N = 15) combined with reflection phases, with an effect of g = 0.95, SE = 0.17. Combinations of examples and prompts showed very high effects in a few studies (N = 4) in medical education (g = 1.60, SE = 0.37). If only prompts were used as scaffolding (g = 0.44, SE = 0.32) or combined with reflection phases (g = 0.10, SE = 0.19), no significant learning effects were found. Combinations of all scaffolding types (N = 2) resulted in very heterogeneous results that did not reach statistical significance (g = 1.34, SE = 0.86). To sum up, the hypothesis of the added value of scaffolding can be partly supported by post hoc analysis (see Table 1).

Prior Professional Knowledge

With regard to learners’ prior knowledge, subgroup analyses (Table 1) indicated that learners showed a lower increase of complex skills in an unfamiliar context (g = 0.67, SE = 0.10) than in a familiar context (g = 0.83, SE = 0.13), but overall learners in both contexts benefited from simulation-based learning. When learners with lower and higher prior knowledge were combined in the same group (mixed group), even higher learning outcomes were reached (g = 1.21, SE = 0.36). If the level of education was taken as a measure of prior knowledge, learners both on a low level of education (g = 0.74, SE = 0.11) and on a high level of education (g = 0.91, SE = 0.07) improved their skills through simulation-based learning with a similar pattern (i.e., learners with higher prior knowledge benefited more from simulations).

Interaction Between Scaffolding Types and Prior Knowledge and Experience

There was a significant interaction effect found between prior knowledge and the effectiveness of different scaffolding types.

Familiarity of Context as an Indicator of Prior Knowledge and Experience

In a familiar context, examples have no added value, but neither do they hinder learning. Learners with higher prior knowledge (as defined by the familiarity with the context) learn equally well if examples are presented (g = 0.85, SE = 0.12) or not (g = 0.83, SE = 0.15). In an unfamiliar context (learners have little prior knowledge of what is learned), examples have more added value; however, the difference between effects if examples are presented (g = 0.72, SE = 0.28) or not presented (g = 0.65, SE = 0.14) does not reach statistical significance.

In contrast, introducing prompts in a familiar context was not beneficial for learning (g = 0.33, SE = 0.38). If no prompts were presented, the average effect of simulation in a familiar context was g = 0.96, SE = 0.10. Prompts had a significant positive effect on learning in an unfamiliar context (g = 0.85, SE = 0.33) compared with no prompts (g = 0.63, SE = 0.16).

Reflection phases induced by educators were more beneficial in familiar (g = 0.74, SE = 0.15) than in unfamiliar contexts (g = 0.49, SE = 0.21). The difference failed to reach statistical significance (p = .13), though.

The mixed group had an insufficient number of studies to perform the analysis of interaction with scaffolding types.

Level of Education as an Indicator of Prior Knowledge and Experience

For postgraduate learners, the presence of examples (g = 0.85, SE = 0.20) showed a smaller effect than if no examples were provided (g = 1.00, SE = 0.11). Thus, postgraduate learners had a pattern different from undergraduate and graduate learners, who benefited more from the presence of examples (g = 0.88, SE = 0.27) than if no examples were provided (g = 0.80, SE = 0.11). The differences, however, did not reach statistical significance due to high heterogeneity within the conditions where examples were present versus where examples were absent.

Similarly, introducing prompts for a high (postgraduate learners and practitioners) level (g = 0.50, SE = 0.36) was not beneficial for learning compared with simulations without prompts (g = 0.91, SE = 0.11). For low-level (undergraduate and graduate) learners, there was no statistically significant difference between the prompts (g = 0.74, SE = 0.24) and no prompts (g = 0.76, SE = 0.09) condition; however, introducing prompts was related to higher learning outcomes in the high-level group.

Reflection phases were highly beneficial for postgraduate learners (g = 1.10, SE = 0.16) compared with no reflection phases (g = 0.86, SE = 0.14). In contrast, graduate and undergraduate learners had better learning outcomes when no reflection phases were used (g = 0.81, SE = 0.14) than in the presence of reflection phases (g = 0.52, SE = 0.13).

In post hoc analysis, we analyzed only the treatments with (1) examples as the only scaffolding method (N = 27), (2) prompts as the only scaffolding method (N = 11), (3) reflections as the only scaffolding method (N = 15), and (4) a combination of examples and reflections as the most frequent combination (N = 15). Other combinations did not include a sufficient number of studies for the analysis.

For low-education-level learners, examples were more beneficial (g = 1.15, SE = 0.58, N = 9) than for learners with a high level of education (g = 0.56, SE = 0.25, N = 18).

For low-education-level learners, prompts were more beneficial (g = 0.69, SE = 0.61, N = 7) than for learners with a high level of education (g = 0.14, SE = 0.08, N = 5), but no significant effects for learning were found in either group.

For low-education-level learners, reflections were more beneficial (g = 1.13, SE = 0.23, N = 7) than for learners with a high level of education (g = 0.69, SE = 0.15, N = 8). Moreover, it was the most beneficial scaffolding for learners with a high level of education compared with the other two (examples and prompts).

The example–reflection phase combination was highly beneficial for learners with a high level of education (g = 1.71, SE = 0.59, N = 8), but it also had a positive effect on learners with a low level of education (g = 0.48, SE = 0.19, N = 7).

Discussion

The results of this meta-analysis show that simulation-based learning has large positive overall effects on the advancement of a broad range of complex skills and across a broad range of different domains in higher education. The size of the effect of simulations on learning even exceeds the expectedly large influence of the learners’ prior knowledge. The effect size is still very large when simulation-based learning is compared with different kinds of instruction instead of “real” control groups, including waiting controls. There is only a very small number of instructional methods for which these relations hold true. These include feedback and formative assessment (see Hattie, 2003; Hattie & Timperley, 2007), which already point to possible interpretations of effects that large. One of the issues in higher education is the lack of feedback in the context of complex authentic activities. Simulations typically address exactly this issue. They often entail providing information to the learner on the discrepancy of currently observable competence indicators and a desired competence goal, which is one of the most common definitions of feedback in the context of learning (Hattie & Timperley, 2007). The potential of simulations for learning has been known for a while in medical education (e.g., Cook, 2014) but is now increasingly transferred (and sometimes reinvented) to other domains of higher education (see Heitzmann et al., 2019). This meta-analysis provides supportive evidence that the large effects of simulation-based learning found for medical knowledge and skills do generalize across domains.

But simulations are more than just feedback as they provide opportunities for meaningful applications of knowledge to professional problems (Grossman et al., 2009). Simulated problems may be tailored to the needs of learners as an approximation of practice and are thus probably often more effective than real practice.

With regard to types of simulation, the analysis shows that combining several types of simulation over the treatment time—for example, role play with practice on a model (Dumont et al., 2016) or virtual reality (Lehmann et al., 2013)—might have greater effects on learning. We have also identified that some types of simulation are more frequently used to target particular complex skills. For example, communication skills are frequently facilitated through role plays, whereas technical performance is frequently addressed by using a simulator or virtual reality. Therefore, we would like to emphasize that the simulation type should not be viewed independently of target skills and instructional support quality. The type of simulation depends a lot on the learning context (e.g., radiologists have to work with images, teachers with students’ tests), but providing different types of simulations can be beneficial across domains.

The present meta-analysis included different types of complex skills as outcome measures. The analysis yielded evidence of differences with respect to facilitating effects that are considerably bigger than the differences between the effects of the domains involved. The biggest effect sizes were obtained for tasks related to technical performance that mainly come from medicine (Araújo et al., 2014; Banks et al., 2007), followed by problem-solving and diagnosing skills. These findings emphasize that if the simulation requires the coordinated use of different mental modes and abilities (for example, motor and sensory skills together with reasoning), the learning gains are larger than for simulations that require the involvement of fewer skills. Despite these differences, simulations had effects that can be categorized as large positive effects for all but one type of skill. The exception is teamwork, where the meta-analysis found a medium positive effect only. This in turn may be due to the high complexity in the case of real team training. Another explanation of low effects is that it might be difficult to find ways to further improve social skills, as they are by far the most trained skills we possess.

There has been a long debate on political and societal levels around the question of an added value of technologies for learning (cf. Rogers, 2001). According to this meta-analysis, simulations still have substantial effects if no digital technology is used at all. Typical computer-and-screen-based simulations do not outperform well-organized no-tech role plays and simulated patients or simulated students. Both the technology-enhanced and the no-tech variants have large effects. However, some more recent technologies that enhance sensory perception (e.g., virtual reality, full-scale simulators) seem to make a difference. With more studies and better theory, it will be possible to identify features and dimensions of these technologies that are responsible for greater learning gains.

Another main finding of this meta-analysis is certainly that simulations with an overall high authenticity do have greater effects than simulations with a lower authenticity. However, it is also very interesting that even simulations with low authenticity still have large effect sizes, exceeding those of many other forms of instruction. This is encouraging for higher education practice as high-authenticity simulations are sometimes very expensive and time-consuming to build. At least for learners with some experience of the real situation, a reduced version might do just as well in low- as in high-authenticity simulations. Moreover, simulations aimed at high authenticity for only one or a small number of objects and processes are associated with effects similar to the effects of simulations aimed at high authenticity with respect to all situational parameters. This can be taken as supportive evidence of the approximation-of-practice approach (Grossman et al., 2009), claiming that the real advantage in simulations is the reduction of task complexity to levels a learner can handle.

A more practical question regarding the use of simulations in higher education concerns the extent to which their effects depend on prior knowledge. In other words, are simulations better suited for beginning students or for more advanced students? In this meta-analysis, the overall effects are large for both familiar and unfamiliar contexts as well as lower and higher levels of university education. However, the effects are greater if learners are familiar with the context and the task (g = 0.83 vs. g = 0.67 in unfamiliar contexts). Taken together, these findings seem to indicate that even more advanced studies in higher education enable effective simulation of professional situations of which learners do not have prior experience. This pattern of findings does not support the claim that simulations as forms of problem-based learning are only applicable in later phases in higher education when learners are familiar with the relevant concepts and procedures (see Dochy et al., 2003).

Based on the findings by Belland et al. (2017) and Chernikova et al. (2019), we expected significant positive effects of the scaffolding. However, a surprisingly small additional effect to the large effects of simulations can be attributed to scaffolding. One explanation could lie in the nature of simulation-based environments, which might already include some level of instructional support built into the scenario (e.g., feedback). This would also explain lower effects than the ones found by Belland et al. (2017) when comparing scaffolding with no scaffolding conditions. For the instructional support, we did not find a single pattern for effective simulation. The very same pedagogies were a success for some complex skills, while simultaneously being a failure for the other skills. We have also found that simulation-based learning implemented in primary studies has strong effects, but we admit that presenting an opportunity to interact with learning material will not improve learning by default. Additional instruction does not seem to add much beyond the effects of simulation; however, in some cases it does. A meta-analysis is not the right method to deliver detailed explanations for these exceptions. Here we need more primary studies. One contributing factor may be that in many simulations learners can find out the correct strategy themselves, by trial and error if needed. A more fine-grained analysis in the primary studies would be needed to test hypotheses stating that learners prefer trying without help instead of using assistance (see Aleven et al., 2003) also for the context of simulations in higher education.

Another important question of this meta-analysis targeted the additional instructional support and the extent to which the effects of this support depended on individual learning prerequisites, in particular, learners’ prior knowledge.

The findings suggest that if learning prerequisites are not considered at all, one could even conclude that scaffolding does not make a real difference. Moreover, scaffolding is even associated with dysfunctional learning processes in some studies. The picture changes once we take the moderating effects of prior knowledge into account. This may be seen as trivial, as the training wheel effects of scaffolding were established a long time ago (Carroll & Carrithers, 1984). The training wheels keep learners away from possible errors and their consequences, which is clearly beneficial at early stages of learning. However, the findings of this meta-analysis extend this established perspective on scaffolding. The findings support the claim made elsewhere (Chernikova et al., 2019) that different types of scaffolding, rather than their mere presence or absence, have a kind of effectiveness curve in relation to learners’ different levels of prior knowledge. Examples and prompts have better effects for learners with low prior knowledge, whereas reflection phases have their highest effectiveness with high prior knowledge.

Put more generally, a certain type of scaffolding may work optimally in interaction with a specific level of prior knowledge, high or low. However, whereas some types of scaffolding just lose their effectiveness at the other knowledge level, others even have detrimental effects at the “nonfitting” prior knowledge level. The latter effects are known as “expertise reversal effects” from cognitive load research (Kalyuga et al., 2003). In this meta-analysis, we found indications of reversal effects for prompts and for reflection phases. Prompts were most effective in unfamiliar contexts and had detrimental effects in familiar contexts. Reflection phases were most effective for postgraduate students and in familiar contexts, whereas graduate and undergraduate students learned better if no reflection phases were implemented.

One possible explanation for why this meta-analysis did not find a negative effect for examples might be that many of the studies included in the sample offered examples as additional options to problem solving. They did not replace problem solving with examples. Thus, more advanced learners may simply not have chosen to use them. In contrast, reflection phases were typically implemented in an intrusive way and made the learners interrupt their problem solving for some time. Prompts appeared during problem solving, attracting the learners’ attention at least for some of the time needed to read and decide that the prompt was irrelevant. Of course, this suggested model of optimal effectiveness of different scaffolding types depending on learners’ prior knowledge and experience needs to be put to the test in primary studies.

Limitations

Simulations are broadly used in different domains to facilitate different kinds of content knowledge and skills. The current meta-analysis puts a particular focus on learning of complex skills connected with interaction with other people, seen as complex systems (on physiological, cognitive, psychological, social, or ethical levels), and its findings do not straightforwardly generalize to other domains like science, mathematics, engineering, or informatics. One of the concerns for generalization is that a large body of the STEM research has been conducted in secondary rather than higher education, and the simulations are often used (1) to advance knowledge and skills related to interaction with mechanisms or abstract systems or (2) to understand complex concepts and their interrelation.

A large proportion of the findings in this meta-analysis comes from medical education. Although the findings and the magnitude of the effects are similar in different domains, and although we additionally performed sensitivity analyses to ensure the generalizability of findings across domains, results for other domains should be interpreted with some caution, especially with regard to the effects of technology, the type of simulation, outcome measures, and some other moderators. We hope that this analysis will instigate future studies in other domains, such as teacher education, to better understand and realize the enormous potential as well as the potential pitfalls that come with simulation-based learning.

Furthermore, there were some limitations caused by the characteristics of some of the primary studies included. First, a large part of the studies provided relatively little description of the treatment, which was insufficient for coding some moderators and for differentiating on a finer level between types of reflection (reflecting on the simulated scenario vs. reflecting on one's own reasoning), examples (modeling vs. worked examples), or prompts (e.g., cognitive, metacognitive). Second, many studies implemented multiple instructional support measures during one treatment, making it difficult to determine the effects of a specific measure.

The study had a particular focus on the effects of different scaffolding types within a self-regulation framework (see Chernikova et al., 2019), which might have left out of scope some other, potentially relevant instructional support measures, such as providing feedback or changing the amount of instruction during the treatment (e.g., fading or adding instruction).

The effects of combinations of instructional support could only partially be investigated due to the lack of primary studies or missing data about scaffolding use in the studies included in the analysis. So while our study demonstrated that simulation-based learning has large positive overall effects on the advancement of a broad range of complex skills, as compared with no simulation, a necessary next step will be to directly compare different types of simulations and scaffolds with each other. However, the current body of existing research may not yet suffice for a meta-analytic approach to these comparisons.

Another limitation is the way prior knowledge was assessed in the meta-analysis. The approach of estimating learners’ prior knowledge through familiarity of context and level of education yielded interesting and fruitful results, but it also has some drawbacks. The level of education was more frequently indicated in the primary studies’ descriptions. However, familiar and unfamiliar topics were presented at all levels. Thus, level of education represented overall experience with learning rather than actual prior knowledge. Familiarity of context, in contrast, addresses prior knowledge of subject matter but is rarely described in the primary studies. Familiarity of context also does not directly address expertise and experience. Thus, there is still room for a better operationalization of prior knowledge to explain parts of the remaining high heterogeneity: We were only able to explain 4% of the heterogeneity with the operationalization we used.
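As an illustration of what "explaining 4% of the heterogeneity" can mean, the following is a minimal sketch of one common convention: the proportional reduction in between-study variance once a moderator enters the model. It is an assumption that this convention matches the computation used in this meta-analysis. The function name `heterogeneity_explained` and the two variance values are hypothetical, chosen only so that the result equals 4%.

```python
def heterogeneity_explained(tau2_total: float, tau2_residual: float) -> float:
    """Share of between-study variance accounted for by moderators.

    tau2_total:    between-study variance estimated without moderators.
    tau2_residual: between-study variance left after adding moderators.
    Both inputs are hypothetical here; they are not taken from the study.
    """
    if tau2_total <= 0:
        raise ValueError("tau2_total must be positive")
    # Truncate at zero: sampling error can make the residual estimate
    # slightly larger than the total estimate.
    return max(0.0, (tau2_total - tau2_residual) / tau2_total)


# Hypothetical values that reproduce a 4% reduction.
r2 = heterogeneity_explained(tau2_total=0.50, tau2_residual=0.48)
print(f"Heterogeneity explained: {r2:.0%}")
```

Under this reading, a value of 4% means that 96% of the between-study variance remains unexplained after prior knowledge is taken into account.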

One more limitation of the current meta-analysis is related to the assumption that all simulations and the instructional measures used to support them were of similar implementation quality. The number of primary studies did not allow us to differentiate the role of simulation features for each particular target skill or to explore the relationships between the features in greater detail.

To sum up, the large remaining (i.e., unexplained) heterogeneity of the effects requires caution when drawing conclusions about the effectiveness of specific types and combinations of scaffolding and technology.

Conclusion

There are hardly any study programs that would not aim to facilitate complex skills involving problem solving, diagnosing, communication, and collaboration. Simulations provide a wide range of practice opportunities and offer one of the most effective ways we know of designing learning environments in higher education. Simulation-based learning can start early in study programs, as it works well for beginners and advanced learners.

Although the analysis shows that social skills are enhanced only modestly, the acquisition of skills involving technical/manual performance can be facilitated considerably. The effect of simulation is greatly enhanced by the use of recent technologies. Higher levels of authenticity are related to greater effects when learning in both familiar and unfamiliar contexts. It is worth noting, however, that higher levels of authenticity do not necessarily involve the use of recent technologies but rather a more precise design of the simulation-based learning environment.

Scaffolding can additionally help, but the relative effect size compared with the effects of simulations is surprisingly small. However, rather than casting doubt on the relevance of scaffolding for simulation-based learning environments, we suggest trying to identify the most effective types, combinations, and sequences of scaffolding for learners with different prior knowledge and experience. Further research on scaffolding may investigate the optimal transitions between different types of scaffolding with increasing levels of complex skills. Including other kinds of instructional support in further research might provide important additional insights for designing effective simulations.

Authors

OLGA CHERNIKOVA currently holds a PhD in learning sciences from Ludwig-Maximilians-Universität in Munich, Leopoldstrasse 13, Munich 80802, Germany; email:

NICOLE HEITZMANN holds a doctoral degree in educational sciences. She is a postdoc research fellow at the Munich Center of the Learning Sciences. She is affiliated with the Department of Psychology and the Institute of Medical Education at Ludwig-Maximilians-Universität in Munich, Leopoldstrasse 13, Munich 80802, Germany; email:

MATTHIAS STADLER is an assistant professor at the chair of Educational Psychology and Educational Sciences, Department of Psychology, at Ludwig-Maximilians-Universität in Munich, Leopoldstrasse 13, Munich 80802, Germany; email:

DORIS HOLZBERGER is an associate professor of research on learning and instruction at the Centre for International Student Assessment, Technical University of Munich, Arcisstrasse 21, Munich 80333, Germany; email:

TINA SEIDEL is a full professor of educational psychology at the Technical University of Munich, Arcisstrasse 21, Munich 80333, Germany; email:

FRANK FISCHER is a full professor of educational psychology and educational sciences, Department of Psychology, at Ludwig-Maximilians-Universität in Munich, Germany, and is Director of the Munich Center of the Learning Sciences, Leopoldstrasse 13, Munich 80802, Germany; email: