Abstract

In recent years, robots have increasingly been implemented as tutors in both first- and second-language education. The field of robot-assisted language learning (RALL) is developing rapidly. Studies have been published targeting different languages, age groups, and aspects of language and using different robots and methodologies. The present review provides an overview of the results obtained so far in RALL research and discusses the current possibilities and limitations of using social robots for first- and second-language learning. Thirty-three studies in which vocabulary, reading skills, speaking skills, grammar, and sign language were taught are discussed. Besides offering insights into learning gains attained in RALL situations, these studies raise more general issues regarding students’ motivation and robots’ social behavior in learning situations. This review concludes with directions for future research on the use of social robots in language education.

Technologies such as computers, tablets, and smartphones offer a wide array of possibilities for first- and second-language learning. These forms of technology, in particular interactive white boards, automatic speech recognition programs, instructive virtual games, chat programs, tablets, and animated books, are increasingly being integrated into language education for both children and adults (Golonka, Bowles, Frank, Richardson, & Freynik, 2014; Takacs, Swart, & Bus, 2015; Young et al., 2012). These technologies allow for forms of language learning that are not always present in traditional classrooms, such as one-to-one and tailored instruction, access to native language input, direct feedback, and the possibility to practice with a virtual agent, which may be less intimidating than practicing with a peer or classmate (Golonka et al., 2014).

One of the newest forms of technology used in education, and the focus of the present review, is the social robot. Social robots are robots that are specifically designed to interact and communicate with people, either semiautonomously or autonomously (i.e., with or without a person controlling the robot in real time), following behavioral norms that are typical for human interaction (Bartneck & Forlizzi, 2004). These robots are different from, for example, robotic arms in factories, which are often designed to perform a specific task and generally do not interact with people. They also differ from virtual agents or computer-based intelligent tutoring systems, as social robots always have a physical body of some sort and are therefore present in the real world, rather than being only virtually present via a screen. The field of robotics has developed rapidly over the past decade, leading to the availability of robots that can be employed for educational purposes. In recent experiments, robots have been used as tutors, for example, in teaching prime numbers (Kennedy, Baxter, Senft, & Belpaeme, 2015), puzzle-solving skills (Leyzberg, Spaulding, Toneva, & Scassellati, 2012), and, even more recently, language (e.g., Alemi, Meghdari, & Ghazisaedy, 2014; Kennedy, Baxter, Senft, & Belpaeme, 2016). The main aims of this review are to present the current state of knowledge about robot-assisted language learning (RALL), discuss advantages and disadvantages of RALL, and identify potential areas for future research on this topic.

Robots are presumed to have at least two advantages over most other forms of technology. First, they allow the learner to interact with the real-life physical environment, which is thought to be important for language development (Barsalou, 2008; Hockema & Smith, 2009; Iverson, 2010; Wellsby & Pexman, 2014). Specifically, both the manipulation of real-life objects (Kersten & Smith, 2002) and the use of whole-body movement and gestures (Mavilidi, Okely, Chandler, Cliff, & Paas, 2015; Rowe & Goldin-Meadow, 2009; Toumpaniari, Loyens, Mavilidi, & Paas, 2015) have been found to help children’s vocabulary learning. Because of the possibility of acting on the physical environment, robots offer possibilities not provided by traditional computer-assisted lessons, such as manipulating objects and using gestures to support language teaching (e.g., Alemi et al., 2014).

The second advantage is that robots allow for more natural interaction than other forms of technology because of their appearance, which is often humanoid or in the shape of an animal. Many robots can use nonverbal cues such as eye gaze, pointing, and other types of gestures. While this also holds for animated characters on a screen, robots are generally perceived as more helpful, credible, informative, and enjoyable to interact with than animated characters (Kidd & Breazeal, 2004; Wainer, Feil-Seifer, Shell, & Matarić, 2007). Furthermore, robots are more likely to be perceived as a typical teacher, peer, or friend rather than as a machine: Both children and adults have a tendency to anthropomorphize robots, that is, to ascribe human-like characteristics and behaviors to robots (Bartneck, Kulić, Croft, & Zoghbi, 2009; Beran, Ramirez-Serrano, Kuzyk, Fior, & Nugent, 2011; Duffy, 2003). Therefore, robots can be programmed to take up a specific role, for example, the role of a teacher or friend, depending on whether the aim of the learning tasks is to instruct or correct students on a task or to have them practice newly learned information with peers.

Even though it is clear which advantages robots potentially have, there are a number of issues that need to be addressed in order for robots to be effective language tutors (see also Kanero et al., 2018, for a review on early language learning). The present review presents the current state of RALL research, with a special focus on affective aspects such as students’ motivation and their responses to robots’ social behavior. The overall goal of our review is to gain insight into the potential of robots as first- and second-language tutors and to identify areas for further research. Studies on preschool children, school-aged children, and adults will be reviewed. Throughout our review, studies will be described in relative detail to allow a thorough evaluation of the studies conducted and the possibilities robots offer for supporting language learners.

Our review is organized as follows. First, we describe our search criteria and the studies that were selected for review. Second, we present studies focusing on the effects of RALL on participants’ language-learning outcomes. In these studies, word learning has been investigated more extensively than other aspects of language and will be discussed first, followed by a discussion of RALL studies on grammar learning, reading skills, speaking skills, and sign language. Third, we describe studies focusing on the role of affective aspects of RALL, addressing how robots may affect learners’ motivation, the role of the robot’s novelty, and the effect of robots’ social behavior on learning. Finally, we discuss the meaning of these findings and offer directions for future research on the use of social robots for first- and second-language learning.

Method

In our review, we take a narrative approach. Specifically, we synthesize the relevant literature in order to provide a comprehensive overview of the work conducted so far (cf. Cronin, Ryan, & Coughlan, 2008). Given the limited number of RALL studies to date, we have adopted an inclusive approach in selecting studies. We did not apply rigorous criteria with respect to the quality of the studies, as due to the emerging nature of the field this could have led to a loss of information (Arksey & O’Malley, 2005).

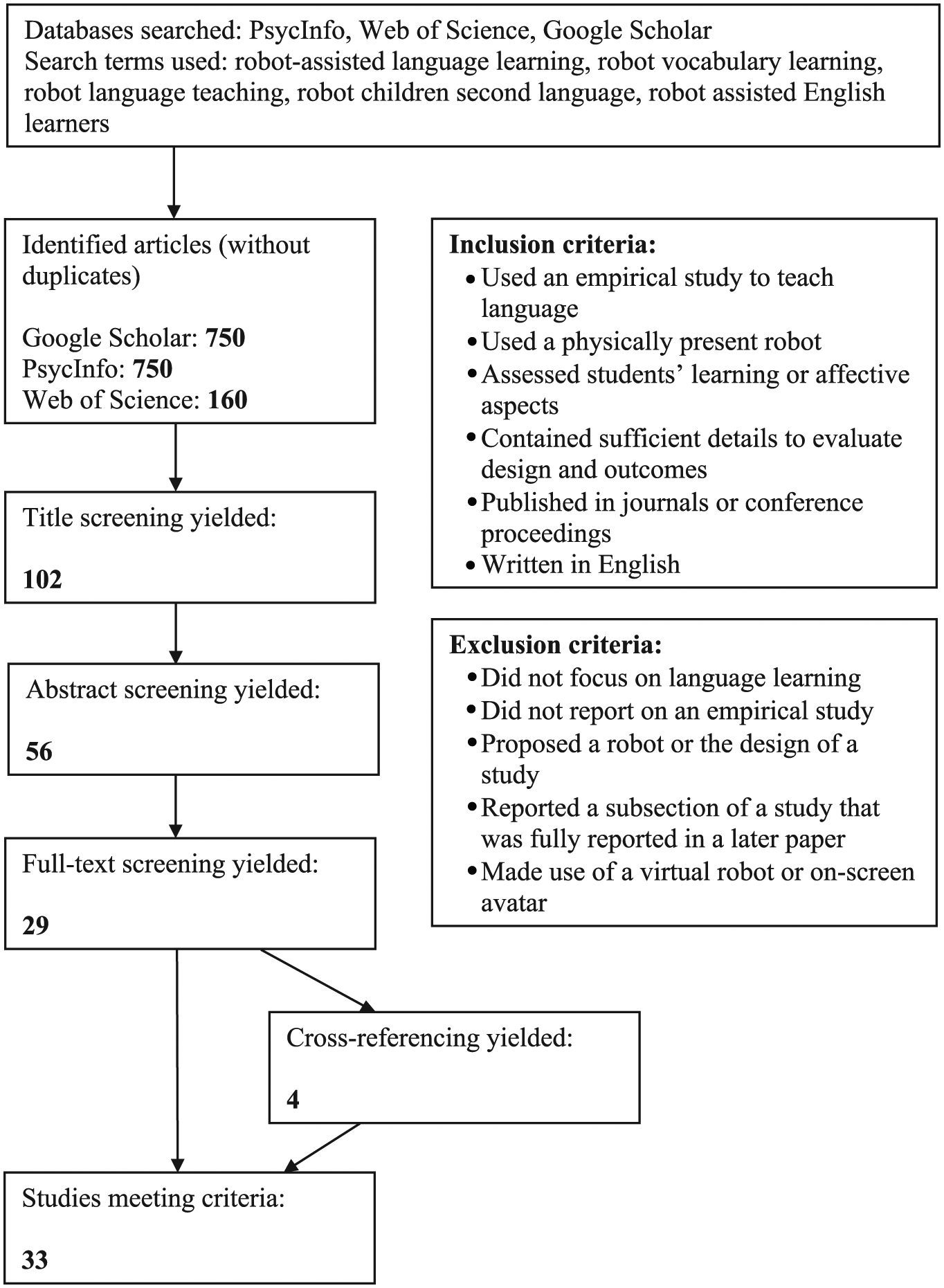

Figure 1 shows the search, screening, and identification procedure. Studies were included if they (a) used an empirical design in which language was taught to children or adults (i.e., reviews and studies in which a specific robot or design of a study was proposed were excluded); (b) used a physically present robot (rather than a virtual robot), as we were interested in physical robots that have an embodied presence during the learning task; (c) assessed students’ language-learning gains or affective aspects; (d) contained sufficient details to evaluate the design and outcomes (i.e., number of participants, number of target words, learning gains); (e) were published papers in journals or conference proceedings; and (f) were written in English.

Study selection process.

Our literature search was conducted using PsycInfo, Web of Science, and Google Scholar. For Google Scholar and PsycInfo, the first 150 results were examined for each search term (cf. Falagas, Pitsouni, Malietzis, & Pappas, 2008; Shultz, 2007). The following five search terms were used: “robot-assisted language learning,” “robot vocabulary learning,” “robot language teaching,” “robot children second language,” and “robot assisted English learners.” A total of 750 papers in Google Scholar, 750 papers in PsycInfo, and 160 papers in Web of Science were examined based on their titles. A total of 102 studies were identified as potentially relevant, as their titles included (parts of) our search terms.

After reading the abstracts of all 102 papers, 46 papers were excluded based on the criteria mentioned above. Specifically, we excluded papers that did not report on an empirical study (N = 14; e.g., Belpaeme et al., 2015), did not focus on language learning (N = 13; e.g., Arsénio, 2014), proposed a specific robot or a design of a study, rather than an empirical study assessing students’ (affective aspects of) learning (N = 14; e.g., Funakoshi, Mizumoto, Nagata, & Nakano, 2011), or reported on a part of a study (e.g., preliminary results or a subset of the data), which was fully described in a later published paper that was included in the review (N = 5; e.g., Tanaka & Ghosh, 2011).

Subsequently, the full texts of the remaining 56 papers were read, and 27 further papers that did not meet the inclusion criteria were excluded. Reasons for exclusion included proposing a specific robot or a design of a study (N = 10; e.g., Nagata, Mizumoto, Funakoshi, & Nakano, 2010), not focusing on language learning (N = 6; e.g., Hood, Lemaignan, & Dillenbourg, 2015), reporting on a part of a study only (N = 9; e.g., Köse et al., 2015), and the use of a virtual robot rather than a physical one (N = 2; e.g., Moriguchi, Kanda, Ishiguro, Shimada, & Itakura, 2011).

Thus, 29 studies met the inclusion criteria. The references of these articles were checked and Google Scholar’s “cited by” function was used for each of these articles to identify other potentially relevant studies. In so doing, four additional studies that met the inclusion criteria were found, yielding a total of 33 studies for our review.
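The counts reported in the selection procedure can be checked with a few lines of arithmetic. The sketch below is only a consistency check on the numbers given in the text above; the variable names and category labels are illustrative assumptions and are not part of the original procedure.

```python
# Counts as reported in the Method section (labels are illustrative).
identified_by_title = 102

excluded_after_abstract = {
    "no empirical study": 14,
    "not about language learning": 13,
    "robot or study design proposal": 14,
    "partial report of a later paper": 5,
}

excluded_after_full_text = {
    "robot or study design proposal": 10,
    "not about language learning": 6,
    "partial report of a study": 9,
    "virtual rather than physical robot": 2,
}

added_via_references_and_cited_by = 4

# Papers remaining after each screening stage.
after_abstracts = identified_by_title - sum(excluded_after_abstract.values())
after_full_text = after_abstracts - sum(excluded_after_full_text.values())
total_included = after_full_text + added_via_references_and_cited_by

print(after_abstracts, after_full_text, total_included)  # 56 29 33
```

The stage totals match the figures reported in the text: 56 papers read in full, 29 meeting the inclusion criteria, and 33 studies in the final review.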

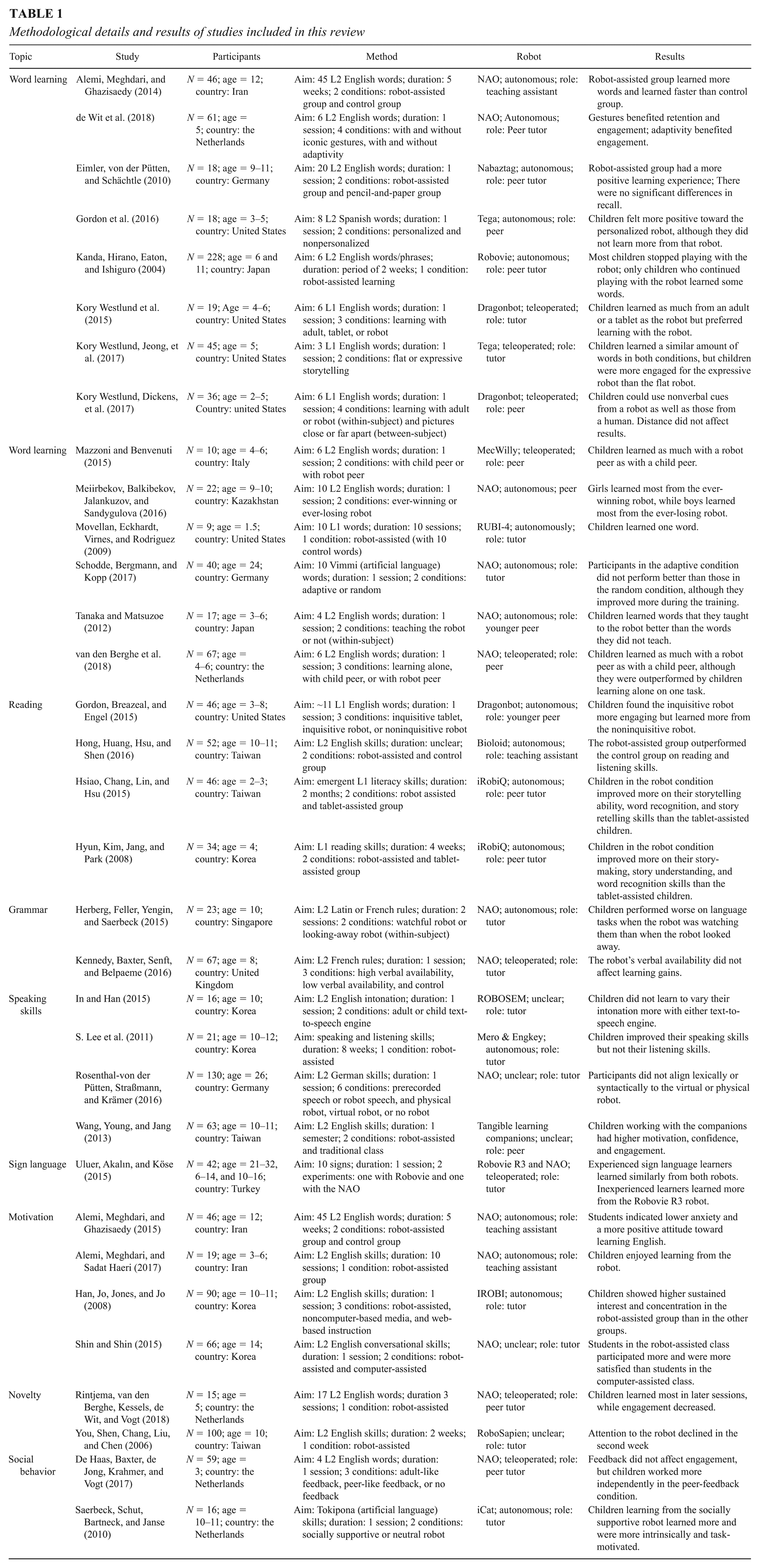

Information on the design, characteristics, and main findings was extracted from all 33 studies. Studies were then assigned to one of two categories: language-learning outcomes or affective aspects of RALL. Studies on learning outcomes were grouped according to whether they addressed word learning or other language skills. Studies on affective aspects were grouped according to whether they focused on motivational aspects, the robot’s novelty, or the robot’s social behavior. For an overview of all the studies and their characteristics, see Table 1. For an overview of the types of robots used in these studies and their main characteristics, see the appendix.

Methodological details and results of studies included in this review

Learning Gains in Robot-Assisted Language Learning Studies

Robot-Assisted Word Learning

Word Learning in Preschool and Young School-Aged Children

Out of all 33 RALL studies included in the review, 13 focused on word learning. Most of these included preschool children or children who had just entered school. In three of these, children were presented with words in a second language (L2) or in their first language (L1) over multiple sessions. Pretests indicated that the children did not yet know these words prior to the studies, and posttests indicated that the children learned only a few words in each of the three studies.

First, in a study on Japanese child learners of English (L2), an English-speaking Robovie robot was put into several classrooms of 6-year-olds and 11-year-olds over a period of 2 weeks (Kanda, Hirano, Eaton, & Ishiguro, 2004). Children were free to choose how much to interact with the robot and could interact with the robot alone or with classmates. Children engaged in various activities with the robot, such as hugging, singing, and playing rock-paper-scissors. The robot used various English sentences, and the authors tested children’s knowledge of six different target words and phrases that were commonly used in the interactions between the robot and the children, for example, “Hello” and “Let’s play together.” The study showed that learning gains were small. On average, the children knew only one or two of the six words or phrases examined in the posttest (Kanda et al., 2004).

These outcomes are similar to those obtained in a second RALL study on preschoolers’ L2 word learning, by Gordon et al. (2016). In this study, a robot that personalized its motivational strategies depending on the child’s affective state was used. Specifically, 3- to 5-year-old English-speaking children played several games on a tablet together with a Tega robot over the course of seven sessions in which they were taught a total of eight L2 (Spanish) words. On average, children learned only one or two out of eight words targeted in this study, as indicated by their scores on a posttest. We will discuss this study’s results for personalized motivational strategies further in a later section on the effects of robots’ social behaviors.

In the last study on preschoolers’ word learning to be reviewed, English-speaking children were taught words in their L1 over multiple sessions (Movellan, Eckhardt, Virnes, & Rodriguez, 2009). Specifically, 2-year-old children interacted with a RUBI-4 robot for 10 sessions over a period of 12 days, in which the robot taught the children 10 words through digital and physical games. As in the other two studies, children showed limited learning in this study, as they learned only 1 or 2 out of 10 words.

To summarize, limited learning was found in each of the three studies. In all three studies, picture selection tasks were used to assess children’s learning gains. In this type of task, children hear a target word and are asked to choose the picture corresponding to this target word out of several pictures (usually three or four). This task measures receptive vocabulary knowledge, that is, understanding of the meaning of a word. This is in contrast to productive knowledge, or the ability to produce a word with its correct meaning. Crucially, as it is a multiple-choice task, children can also obtain the right answer by guessing, and this chance level should be taken into account when interpreting results. If we do so in interpreting the results of the above studies, it appears that although the children in Gordon et al. (2016) improved as compared to their pretest performance, they did not score above chance level for seven out of eight words. Children did score above chance level in Movellan et al. (2009).
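The role of chance in a multiple-choice picture selection task can be made concrete with a short calculation. The sketch below is illustrative only: it assumes four pictures per trial (the text says "usually three or four") and independent guesses on every trial, and the function names are hypothetical. It is not the analysis used in any of the reviewed studies.

```python
from math import comb


def chance_level(n_options: int) -> float:
    """Probability of picking the correct picture by pure guessing."""
    return 1 / n_options


def expected_correct_by_guessing(n_items: int, n_options: int) -> float:
    """Expected number of correct answers if every answer is a guess."""
    return n_items * chance_level(n_options)


def prob_at_least(k: int, n_items: int, n_options: int) -> float:
    """Binomial probability of at least k correct answers from guessing alone."""
    p = chance_level(n_options)
    return sum(
        comb(n_items, i) * p**i * (1 - p) ** (n_items - i)
        for i in range(k, n_items + 1)
    )


# Assumed example: 8 target words, 4 pictures per trial.
print(expected_correct_by_guessing(8, 4))  # 2.0
print(round(prob_at_least(2, 8, 4), 2))    # 0.63
```

Under these assumptions, a child who guesses on every trial is expected to answer two of eight items correctly, and would reach a score of at least two more often than not. A posttest average of one or two correct words therefore cannot be distinguished from guessing without a comparison against chance level.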

Importantly, in the first study reviewed above, by Kanda et al. (2004), children were free to determine whether and for how long they interacted with the robot, and children’s learning was related to the time they had spent interacting with the robot. Interaction time declined for most children already within the first week, and only children who maintained interest during the second week learned some words and phrases. In the studies by Gordon et al. (2016) and Movellan et al. (2009), children’s interaction time with the robot was not recorded, making it unclear how much time children actually spent playing with the robot and how active they were during the sessions. One possible explanation of the limited learning gains in these studies, therefore, is that children did not stay engaged enough over multiple RALL sessions to learn a substantial number of words.

Three other RALL studies found more positive results for robots teaching L1 or L2 words to preschoolers. Each of these studies used a different approach and, crucially, consisted of only one session. Specifically, children were taught (a) L1 words through shared book-reading with a robot (Kory Westlund, Jeong, et al., 2017), (b) L2 words by teaching a robot words rather than vice versa (Tanaka & Matsuzoe, 2012), or (c) L2 words by playing “I spy with my little eye” with a robot (de Wit et al., 2018). In all three studies, children learned a substantial number of new words.

In the first study, preschoolers were read one of two versions of a story by a Tega robot (Kory Westlund, Jeong, et al., 2017). The results indicated that on a receptive vocabulary (picture selection) task, children responded correctly to two out of three L1 target words on average. Moreover, to measure productive knowledge, a story retell task was used, where children had to retell the story to the experimenter. Results indicated that children used the target words from the story they had heard more often than those from the story they had not heard.

In the second study that showed considerable word-learning gains in preschoolers, 17 Japanese-speaking preschoolers were taught four L2 (English) verbs by an experimenter who used objects to illustrate the meaning of the verbs (e.g., a cup for the verb “drink”; Tanaka & Matsuzoe, 2012). Then, the child taught the robot two of these words, randomly chosen, by making it act out the relevant verb. The results indicated that children learned the words that they taught the robot better than the words that they did not teach the robot, as evidenced in a picture selection task. Children demonstrated more knowledge of the verbs that they acted out than those that they did not act out not only in a direct posttest but also in a posttest 3 to 5 weeks after the training.

The last study showing clear word-learning gains in preschool children, conducted by de Wit et al. (2018), tested the effectiveness of a robot’s use of gestures in teaching L2. In this study, 5-year-old children played the game “I spy with my little eye” with a NAO robot that used an iconic gesture to illustrate the meaning of each target word (e.g., it scratched its head and armpit for the word “monkey”) with half of the children but did not produce such a gesture with the other half of the children. The children’s task was to choose a picture of the animal corresponding to the English target word out of several pictures. Immediate posttest results indicated that children learned, on average, almost three out of six words. There was no immediate effect of the robot’s iconic gesture use. However, iconic gestures did benefit retention of the target words: Children who had been presented with iconic gestures in the learning task showed better recall of the words in a delayed posttest a week later than children who had not been presented with iconic gestures.

Overall, these three studies suggest that RALL may benefit children’s word learning (de Wit et al., 2018; Kory Westlund, Jeong, et al., 2017; Tanaka & Matsuzoe, 2012). Crucially, all three studies consisted of one session only, suggesting that effects may differ between single- and multiple-session studies. We will return to this issue later in the section on the novelty effect. Some caution is needed, however, in interpreting the results of these studies. An important limitation of the study by Kory Westlund, Jeong, et al. (2017) is that children’s potential prior knowledge of the words was not assessed. The finding that in this study children recognized not only target words but also control words indicates that they had prior knowledge of these words, as these words were not taught explicitly. This leaves open the possibility that they also had prior knowledge of the target words. A possible limitation of the study by Tanaka and Matsuzoe (2012) is that a human teacher was present in addition to the robot to teach children the L2 words. Since no condition was included in which only a robot was present, we do not know whether children are able to learn from teaching a robot by themselves, or whether the learning in this study was mostly due to being taught by a human adult. Finally, since children are known to learn from teaching someone else (Rohrbeck, Ginsburg-Block, Fantuzzo, & Miller, 2003), it is not clear whether children’s word learning was due to the activity of teaching itself (i.e., the additional opportunity to practice the target words) or due to teaching a robot specifically. Despite their methodological limitations, the results of these three studies show the potential of using shared book-reading, learning by teaching a robot, and performing language games together with a robot for teaching young children new words in L1 or L2.

An important question is how effective robots are in teaching words in comparison to human teachers. Even though robots are typically not developed with the aim of replacing human teachers, comparisons between robot and human teachers or peers are useful to investigate areas in which robots can complement humans. This question was addressed directly in a study comparing learning gains in an L1 (English) vocabulary-training task provided to preschoolers by a human teacher, a tablet, or a Dragonbot robot (Kory Westlund et al., 2015). Children saw pictures of animals on a tablet and were provided with L1 labels by the human teacher, tablet, or robot. The children in this study learned as much from the tablet or the robot as they learned from the human, that is, four out of six words. Similarly, in a more recent study by the same authors, preschoolers could use nonverbal cues (bodily orientation and eye gaze) of either a human teacher or a robot equally well when mapping unfamiliar L1 (English) words onto pictures (Kory Westlund, Dickens, et al., 2017). Two more studies have investigated how a robot peer compares to a human peer in language-learning experiments. Mazzoni and Benvenuti (2015) found that preschoolers learned as much (i.e., two to three out of six L2 words on average) when working either with a human peer or with a MecWilly robot. Similarly, van den Berghe et al. (2018) found that preschoolers generally learned as many L2 words when learning with a child peer or with a robot peer. However, children learning without a peer altogether showed the highest performance. Note that in this last study, children were provided with L2 words by a human experimenter and played games on a tablet with a child peer, with a robot peer, or without a peer. The presence of the experimenter may have attenuated the possible benefits of a (robot) peer.

This review of studies indicates that (robot) peers do not necessarily lead to higher learning gains than learning without such peers. Rather, the findings of the studies described above suggest that children may be able to learn equally well when being taught by a robot or by a human teacher, or when being assisted by a robot or child peer.

Word Learning in School-Aged Children and Adults

As discussed above, RALL studies on word learning in young children show a mixed pattern of results, with some studies reporting small learning gains (Gordon et al., 2016; Kanda et al., 2004; Movellan et al., 2009) and others reporting more substantial learning gains (de Wit et al., 2018; Kory Westlund, Jeong, et al., 2017; Tanaka & Matsuzoe, 2012). Studies with older age groups—older school-aged children and adults—demonstrate a more consistent picture, showing clear word learning across studies. However, very different approaches have been taken across studies, with respect to both the role of the robot (i.e., acting like an assistant vs. a teacher) and whether it was controlled by a human or not, making it difficult to compare results directly.

In a study by Meiirbekov, Balkibekov, Jalankuzov, and Sandygulova (2016), the robot was used as a peer learner. Children’s task in this study was to play a game together with a NAO robot in which they were provided with a letter and had to select images of words starting with that letter. After one lesson, children were on average able to produce over 3 out of the 10 L2 words that they had been taught.

In contrast, in another study (Alemi et al., 2014), the robot was used as a teaching assistant. Here, a NAO robot assisted in teaching young adolescents L2 (English) words by interacting with the students, making gestures depicting the target words, showing pictures, and telling stories. Students were taught a total of 45 words over the course of 10 sessions. The classes incorporating the robot were compared to an English class that did not have a robot assistant but engaged in the same type of activities. Results indicated that the students in the RALL classes learned faster, learned more, and retained more words than the students educated in the traditional class.

Yet another study (Eimler, von der Pütten, & Schächtle, 2010) had 9- to 11-year-old German children play L2 English games with a Nabaztag robot for one session. The results indicated that children learned almost 14 out of 20 words on average. These are very high learning gains. Crucially, however, these learning gains did not significantly differ from those of children who had been taught these words through paper vocabulary lists. This suggests that children of this age may generally be skilled word learners and obtain high learning gains across different types of vocabulary interventions.

Finally, a study on adults learning words in an artificial language used the robot as a teacher (Schodde, Bergmann, & Kopp, 2017). The participants in this study were taught 10 words in the artificial language Vimmi via an “I spy with my little eye” game. In each trial, a NAO robot asked the participant to find the picture of the target word among distractor pictures. Participants’ knowledge of the target words was assessed in an immediate posttest via two translation tasks: one from Vimmi to German and one from German to Vimmi. Participants produced, on average, 7 out of 10 words in the Vimmi-to-German translation task and 3.5 out of 10 words in the German-to-Vimmi translation task. These learning gains are substantial, especially given that (a) translating words is more difficult than a receptive task (Mondria & Wiersma, 2004), (b) there was only one session, and (c) the learning task consisted of only three trials per target word.

Apart from the different roles assigned to the robot, another aspect that makes RALL studies on word learning difficult to compare is that in many of the studies described above, the robot was teleoperated by the experimenters (see Table 1 for information on whether studies used a teleoperated or autonomous robot). Teleoperation refers to a person controlling the robot, often without the participant’s knowledge, in real time. Teleoperation is often the preferred or even the only option for certain tasks, as an autonomous robot (working without teleoperation) would require speech recognition and predefined scripts. Such scripts describe all the steps of an interaction, and the robot cannot deviate from them. Elaborate scripts are needed to have robots respond appropriately to the input, but even then the suitability of the responses cannot be guaranteed due to, among other reasons, the unpredictability of participants’ behavior. Thus, previous studies that used an autonomous robot typically consisted of simple designs (e.g., “I spy with my little eye” games on a tablet) that allow for limited variability in the learners’ responses (de Wit et al., 2018; Schodde et al., 2017). In more complex settings, experimenters can ensure through teleoperation that the robot answers appropriately, as they can simply type in contingent responses. Hence, given the current state of robot technology and the scientific literature, how effective robots are when operating autonomously remains an open question.

Summarizing RALL Studies on Word Learning Across Age Groups

The L1 and L2 word-learning studies discussed above found mixed results regarding the robot’s effectiveness for word learning. Specifically, several studies found only small (Movellan et al., 2009) or no learning gains (Gordon et al., 2016), or learning gains that held only for a subgroup of the children (Kanda et al., 2004). Other small-scale studies with preschool children showed positive effects of the use of robots in word learning and suggest that aspects such as learning by teaching and gestures might improve learning gains (de Wit et al., 2018; Tanaka & Matsuzoe, 2012). However, many studies were based on small samples and/or lacked control conditions and therefore provide only tentative evidence. Studies on school-aged children (Alemi et al., 2014; Eimler et al., 2010; Meiirbekov et al., 2016) and adults (Schodde et al., 2017) suggest that RALL benefits word learning more with these groups than with preschool children. However, direct comparisons between adults and children are needed to support this conclusion. Furthermore, it is important to note that most of the studies showing high word-learning gains employed the robot as a teaching assistant or peer learner rather than as an independent tutor (Alemi et al., 2014; Meiirbekov et al., 2016; Tanaka & Matsuzoe, 2012). Perhaps, in their current form, robots are not sufficiently technologically advanced (e.g., due to difficulties with speech recognition) to be effective tutors on their own. The current evidence base suggests that teleoperation is still required for robots to be effective tutors and that technological advances and research on which robot behaviors are effective for learning are required to develop effective autonomous robot tutors.

Language Skills Beyond Word Learning

Language use comprises more skills than just vocabulary. These other skills, such as reading, speaking, grammatical skills, and sign language, have been studied less extensively in RALL research than word learning; only 11 of the 33 selected studies addressed (one of) these skills.

Reading Skills

RALL studies on reading skills show that a robot may be beneficial in assisting the teaching of reading skills, either in the function of an assistant or as a tutor. Specifically, comparing an L1 robot-assisted digital book-reading program to the same program without a robot, Hyun, Kim, Jang, and Park (2008) found that preschoolers in the robot-assisted program improved more on story-making, story-understanding, and word-recognizing skills over a 4-week period than children who were not assisted by the robot. Similar results were obtained in another study on early L1 reading (Hsiao, Chang, Lin, & Hsu, 2015). In this study, 2-year-old children followed an early L1 reading program over a period of 2 months, supported either by an iRobiQ robot with a screen or by a tablet without a robot. The results indicated that both groups improved on early literacy tests measuring comprehension, storytelling ability, retelling of stories, and word recognition. However, the children who had interacted with the robot improved more on their storytelling ability, word recognition, and story-retelling skills than children who had worked with a tablet only.

While the results of these two studies are promising, a third study on L1 reading in young children did not find such positive results. In this study, a relatively large group of 46 preschoolers performed a learning task in which they had to find out, together with a Dragonbot robot, how to read words (Gordon, Breazeal, & Engel, 2015). On average, the children learned the written word form of only 1 out of 11 new words. As in some of the other word-learning studies reviewed above (Gordon et al., 2016; Kanda et al., 2004; Movellan et al., 2009), these small learning gains were taken as evidence of the robot’s effectiveness by the authors.

The only RALL study on L2 reading that has been performed to date found a positive effect of the presence of a robot teaching assistant on children’s L2 (English) reading skills (Hong, Huang, Hsu, & Shen, 2016). In this study, either a human or robot teacher taught 10- to 11-year-old children reading, speaking, and listening skills by reading stories aloud, encouraging children to read sentences out loud, engaging in act-out games, and engaging in question-answer conversations. Children in the robot-assisted classroom outperformed children in the traditional classroom on a standardized English reading test. Children in the robot-assisted classroom were highly motivated by the robot, which may have benefited their learning as compared to children in the traditional classroom. Overall, these findings suggest that there is potential for robots supporting reading skills.

Grammar

Two RALL studies addressed L2 grammar learning, and both demonstrated positive effects of the robot on children’s learning. First, Kennedy et al. (2016) found that a NAO robot positively affected English-speaking children’s learning of the French articles “le” and “la.” The robot tutor taught 8- to 9-year-old children three rules regarding French articles. The children improved their knowledge of French articles and retained this knowledge in a posttest a week later. In the second RALL study on L2 grammar learning, Herberg, Feller, Yengin, and Saerbeck (2015) investigated children’s learning of Latin and French rules, such as those governing plural and article use, in two separate sessions with a NAO robot. The robot either looked at them or looked away during tasks in which the children had to practice the newly acquired information. The study showed that children learned the rules from the robot. Unexpectedly, however, children performed worse if the robot had looked at them, although the effect was found for difficult items in Latin only. A possible explanation of this finding, proposed by the authors, is that instead of representing a comforting social presence during the task and putting the child at ease (which was the intended outcome), the robot increased pressure and, as such, made the children perform worse. These results indicate not only that the specific learning materials and their difficulty may affect experiment outcomes but also that the robot’s behavior may affect learning in unexpected ways.

Speaking Skills

Studies addressing L2 speaking skills found mixed results. One study used a ROBOSEM robot to teach Korean-speaking children to use English intonation patterns (In & Han, 2015). Native English speakers vary their intonation more than native speakers of Korean, and less varied intonation shows Korean L2 English learners’ nonnativeness. In the study by In and Han (2015), children did not learn to vary their English intonation upon interacting with the robot as compared to their pretest performance. The experimenters concluded that the robot’s speech system (as opposed to human speech) is not effective enough to evoke changes in intonation. However, another study, also conducted in Korea and aimed at improving L2 English speaking and listening skills, did find improvement in other speaking skills (S. Lee et al., 2011). Specifically, this study examined children while they were playing with two robots, the Mero robot and the Engkey robot, with the purpose of improving their L2 (English) speaking and listening skills. The study showed that children’s L2 listening skills did not improve upon interacting with the robots but that L2 speaking skills (measured through pronunciation, vocabulary, grammar, and communicative ability) did improve. Interestingly, the children in this study improved on all four aspects of speaking skills.

Even though both studies involved the same L1 and L2, they show opposing results, as the participants in S. Lee et al. (2011) improved their L2 pronunciation upon interacting with the robot, whereas the children in the study by In and Han (2015) did not. Contradictory results were also found in two studies that compared robot-assisted classrooms to traditional classrooms in teaching L2 English speaking skills to Taiwanese children: In one study, children improved their speaking skills more in the robot-assisted classroom than children in traditional classrooms (Wang, Young, & Jang, 2013), while in another study, children in a robot-assisted classroom outperformed students in a traditional classroom on L2 listening but not on L2 speaking (Hong et al., 2016). This contrast in results may be due to the different scope of the L2 training: The training of Wang et al. (2013) was aimed only at teaching speaking skills, while the training of Hong et al. (2016) was also aimed at teaching listening, reading, and writing.

Conflicting results across studies targeting the same skill (i.e., L2 speaking skills) in very similar participant groups may be due to the various ways in which speaking skills were evaluated. Speaking skills can be assessed in different ways, for example, by measuring intonation, speech rate, pronunciation, vocabulary, and grammatical complexity. Given the very different operationalizations of (L2) speaking skills in earlier work, future work on RALL assessing these different aspects would be useful to identify which speaking skills benefit most from robot tutoring. In pursuing this line of research, an important recommendation is that studies target the same L1s and L2s to test the effectiveness of robots for teaching speaking skills, as most L2 speaking skills are heavily dependent on learners’ L1.

Before we conclude this section on RALL research on L2 speaking, a final study is noteworthy, in which adults’ L2 lexical and syntactic alignment behavior was assessed. Lexical and syntactic alignment refers to the degree to which speakers adapt their words and sentence structures to those of their conversational partner (Rosenthal-von der Pütten, Straßmann, & Krämer, 2016) and thus involves a very different type of learning (implicit vs. explicit) and skill than the type of speaking skills (e.g., pronunciation and intonation) discussed above. Rosenthal-von der Pütten et al. (2016) compared the L2 (German) alignment behavior of adults with various L1s to a physical robot, a virtual robot, and a computer system with prerecorded speech without a (virtual) agent. Contrary to the authors’ expectations, participants showed no alignment to the physical or virtual NAO robot or the computer system (i.e., they did not use similar words and sentence structures). Furthermore, there were no significant differences in the perceived human likeness of the robot’s text-to-speech system (i.e., the system that converts text into spoken voice output) and the prerecorded human speech. This is a striking result, as text-to-speech systems are often of inferior quality to human speech. It may also explain the absence of alignment effects: Participants may not have felt the need to align to a computer with such an advanced speech system. Note that alignment may also not result in implicit learning if the speech system is perceived to be of inferior quality: Learners may not learn advanced vocabulary or grammar from inferior systems. Clearly, more research is needed on how a robot’s text-to-speech system affects L2 language learning in general and the learner’s pronunciation of L2 words in particular.

Sign Language

RALL studies on sign language are nearly absent, and only 1 of the 33 studies in our review addressed this topic. In this study, robots were found to be able to teach sign language successfully to various types of learners (Uluer, Akalın, & Köse, 2015). Uluer et al. (2015) compared the effectiveness of two robots in teaching Turkish sign language to three groups of Turkish participants: hearing adults, hearing children, and hearing-impaired children. The first robot, a Robovie R3 robot, has hands with five independent fingers, allowing for the production of signs that are more accurate than those of most other robots. The second, a NAO robot, has only three fingers that cannot be moved independently. The three participant groups played imitation and act-out games with the robots. The results indicated that all groups learned most of the signs from the robot. Even though there was no difference between the effects of the two robots for the experienced sign language learners, the Robovie R3 robot resulted in significantly higher learning gains than the NAO robot in the inexperienced learners (typically hearing groups, who, unlike the hearing-impaired children, were novices in sign language). Thus, considering the specific characteristics of the robot seems especially relevant in learning situations like these, which rely more on the robot’s physical interaction possibilities.

Summary

Studies on language skills other than word learning are rare in RALL research. Also, they are typically diverse, in the sense that they have looked at different age groups and used very different research designs. The available studies, albeit few in number, suggest that a robot can successfully assist in teaching reading skills (Gordon et al., 2015; Hong et al., 2016; Hsiao et al., 2015; Hyun et al., 2008), grammar learning (Herberg et al., 2015; Kennedy et al., 2016), and sign language (Uluer et al., 2015), either in L1 or in L2. The evidence with respect to speaking skills is more mixed (Hong et al., 2016; In & Han, 2015; S. Lee et al., 2011; Rosenthal-von der Pütten et al., 2016; Wang et al., 2013) and may differ depending on which types of speaking skills are assessed (e.g., pronunciation, intonation, lexical alignment; cf. In & Han, 2015; S. Lee et al., 2011; Wang et al., 2013, for pronunciation; Rosenthal-von der Pütten et al., 2016, for alignment).

Affective Aspects of Robot-Assisted Language Learning

Robots’ Positive Effects on Motivation

Robots not only affect language-learning gains but may also affect students’ learning strategies and motivation to learn. Given that motivation has been found to be positively related to learning achievements (Dörnyei, 1994; Pekrun, Goetz, Titz, & Perry, 2002), it is important to look at how the use of robots in language-learning studies affects students’ motivation. Previous studies indicate that robots generally have a positive effect on students’ motivation in RALL.

A number of studies comparing a robot-assisted classroom to a traditional classroom found higher student motivation in robot-assisted classrooms than in traditional classrooms. In the study by Alemi et al. (2014) on L2 word learning in school-aged students that was reviewed above, robot-assisted students indicated that they felt very positive about learning with a robot. As discussed earlier, learning outcomes in this study indicated that the robot-assisted students learned faster, learned more, and retained more than the students in the traditional class. In fact, the students in the robot-assisted class needed less than a third of the time required by the traditional class to work through the materials.

The effects of RALL on these students’ learning-related emotions were reported in a follow-up article (Alemi, Meghdari, & Ghazisaedy, 2015). Using self-report questionnaires, the authors found that students were less anxious and less self-conscious about making mistakes in the presence of the robot than in the presence of a human teacher. Similar positive effects were found in studies on speaking skills. In Wang et al. (2013), 10- to 11-year-old students who learned together with a robot companion also displayed higher confidence, motivation, and engagement than children in a traditional classroom. A positive effect on students’ motivation was also found by S. Lee et al. (2011), who observed that a robot improved learners’ self-reported satisfaction, interest, confidence, and motivation. Finally, the 9- to 11-year-old children in the study by Eimler et al. (2010) were found to have a more positive learning experience when learning L2 English words with assistance from a robot than when they were taught these words through paper vocabulary lists, even though there were no significant differences in word learning between the two conditions.

Other studies have compared the motivational aspect of the robot to other types of technology. The preschool children in Hsiao et al.’s (2015) reading experiment participated much more actively when assisted by a robot: They engaged more in reading, singing, and replying to questions than when working without a robot. An observational study found that preschoolers in an L2 (English) learning class showed less anxiety, higher motivation, and higher engagement after interacting with a robot multiple times (Alemi, Meghdari, & Sadat Haeri, 2017). Furthermore, in a study comparing the at-home use of the IROBI robot for L2 (English) language learning to noncomputer-based media and web-based instruction, 10- to 12-year-old children working with a robot showed longer sustained interest and concentration than the other groups (Han, Jo, Jones, & Jo, 2008). Similarly, 14-year-old students were found to participate more and to be more satisfied when working with a NAO robot in an L2 (English) conversation class than when working with a computer (Shin & Shin, 2015). The students’ motivation did not differ across conditions. These results must be interpreted with caution, however, as the students working with the robot engaged in an additional group conversation with the robot and thus had more exposure to the technology. Last, in Kory Westlund et al.’s (2015) study, preschoolers’ learning with a robot was compared to learning with a tablet and a human teacher. The authors found that almost all the children preferred learning with the robot to learning with the human teacher or the tablet. Note, however, that this preference did not lead to higher learning outcomes. In summary, robots seem to have a more positive effect on students’ motivation than other types of technology, such as tablets or web-based programs.

The positive effects of robots on learning-related emotions have been found not only in RALL studies but also in studies looking at other types of robot-assisted learning, such as programming and drawing and interpreting graphs (Chin, Hong, & Chen, 2014; E. Lee & Lee, 2008; E. Z. F. Liu, Lin, & Chang, 2010; Mitnik, Recabarren, Nussbaum, & Soto, 2009; Nourbakhsh et al., 2005). Interestingly, the picture that emerges from the literature on affective aspects of robot-assisted learning is much clearer than that on language-learning gains: The assistance and/or presence of social robots has a positive effect on students’ engagement, attitude, and motivation, and this holds across domains (language vs. other domains) and across age groups. This suggests that the potential of robots lies mainly in their ability to motivate students.

Interestingly, such positive effects on motivational aspects are generally not found for other types of technology, such as interactive white boards, blogs, and virtual worlds, for which only weak evidence of positive effects is found (Barrett & Liu, 2016; Golonka et al., 2014). This difference should, however, be studied further, as people are likely to differ in the degree to which they feel intrinsically motivated to make use of technology for language learning (Stockwell, 2008). Future research could investigate the degree to which students are intrinsically motivated to work with robots and whether and how the positive effects of robots on motivation could benefit students’ language learning. One caveat is noteworthy here: It is not completely clear to what extent the boost in motivation is due to the motivational actions of the robot itself or to the novelty of the robot. On the basis of the current state of knowledge, the possibility remains that the robot initially boosts motivation but that this effect fades out over time as people become accustomed to working with robots. This possibility will be discussed further in the next section.

The Novelty Effect in RALL Research

Robots often spark a lot of enthusiasm in their users. This excitement can result in a so-called novelty effect on learning: Learners enjoy the new technology so much that their initial interest leads to higher learning outcomes, which would not have been attained if learners had been more familiar with the robot (cf. S. Liu, Liao, & Pratt, 2009). Once learners become used to the technology, their interest and boost in learning outcomes fade away. This effect might be particularly influential in experiments involving one session or a small number of sessions. In fact, it may, at least in part, explain why one-session word-learning studies found higher learning outcomes than word-learning studies consisting of multiple sessions.

Many authors do not report on how novelty may have affected their results or on how they controlled for a novelty effect. Some one-session experiments have addressed the issue of the novelty effect by having the children play with the robot for a few days before the actual experiment (e.g., Han et al., 2008). It is not clear, however, whether this procedure attenuated the novelty effect in this study, as the experiment itself consisted of only one session.

Studies reporting on students’ interest in robots over time found mixed results. In the previously discussed study on L2 word learning by Kanda et al. (2004), the amount of time in which children wanted to interact with the robot quickly decreased within 2 weeks, and this decrease in interaction time with the robot in turn attenuated the learning effect. Specifically, in this study, learning gains were found only for the children who continued playing with the robot, a subset of about a quarter of the 200 participants in the study. Moreover, the continued interaction was not due to sustained interest. Rather, most children indicated that they continued playing with the robot out of pity (Kanda et al., 2004).

A similar decline in interest in working with the robot is reported in a study in which a RoboSapien robot assisted a teacher in English classes, engaging in several activities such as storytelling, answering questions, cheerleading, gesture games, and pronunciation exercises (You, Shen, Chang, Liu, & Chen, 2006). Overall, the children enjoyed the robot, although the attention they paid to the robot declined in the second week. After two lessons, children had already gotten used to the robot and became less interested in working with it. Language-learning gains were not assessed, so it is not clear whether the decline in children’s motivation affected learning.

Similarly, a decline in task engagement was found over the course of three sessions in a study on preschoolers learning L2 English words with a NAO robot (Rintjema, van den Berghe, Kessels, de Wit, & Vogt, 2018). This decline in task engagement did not affect learning gains, as children learned more in later sessions than in the first session. These results need to be interpreted with caution, as the specific target words taught in the lessons and the type of the lessons were not counterbalanced. Therefore, it is not clear whether changes in engagement and learning were due to a (dissipating) novelty effect of the robot or to differences in the content of the lessons. However, the studies discussed above do show that further development of both technology and content is needed to sustain children’s interest and to make children enjoy interactions over time in order for robots to become effective learning companions or tutors.

In contrast to the studies summarized thus far that showed a decline in participants’ interest in the robot, two previous studies found that participants sustained interest in working with a robot over a longer period. In the first, by Alemi, Meghdari, Mahboub Basiri, and Taheri (2015), a relatively large sample of students reported positive experiences after having worked with a robot for 10 sessions over 5 weeks, suggesting that they maintained their interest in the robot over multiple sessions. A possible explanation is that the robot functioned as an assistant to a human teacher, and that the teacher and robot together could sustain students’ interest for a prolonged time. If a robot is solely responsible for maintaining an interaction, the behavioral and conversational demands on the robot’s social interactional skills are higher than if a human teacher can act as a mediator.

The second study showing sustained interest found that the robot could maintain children’s attention even if it interacted with the child independently, at least in very young children (Hsiao et al., 2015). The toddlers in this study interacted with a robot twice a week for a period of 8 weeks. Children did not lose interest in the robot and participated as actively in the last 4 weeks as in the first 4 weeks. Note, however, that children in the control condition who worked with a tablet also sustained their interest over this period. This suggests that the e-book that was used as teaching material in both conditions and which contained many different activities was interesting enough to sustain interest over a long time period.

Raising and maintaining participants’ interest is crucial to successful interactions, and recent work has addressed the issue of maintaining interest in RALL situations. Specifically, Han, Kang, Park, and Hong (2012) conducted several pilot RALL studies with an iRobiQ robot, and concluded that there are several strategies to encourage sustained interaction between a robot and children. These strategies are mostly focused on making the child seem important to the robot. This can be achieved by having voice- or face-tracking systems recognize and track the child, using pictures of the child on the screen, “remembering” the child’s learning history, or working around quirks (e.g., framing quirks by telling the robot’s “birth story”; Han et al., 2012). Therefore, a key recommendation for future RALL studies, according to these authors, is to teleoperate the robot in order to tailor the robot’s speech and actions to the specific behavior and needs of an individual child. Currently, artificial intelligence, visual recognition systems, and automatic speech recognition systems clearly do not yet allow for robots to interact autonomously with a child in such a way that the child will remain interested in the robot.

This recommendation is in keeping with the conclusion of a review of several (mostly non-RALL) robot studies in which robots interacted with children and adults over longer periods of time (Leite, Martinho, & Paiva, 2013). These studies found varying results, from a clear, short-lived novelty effect (Fernaeus, Håkansson, Jacobsson, & Ljungblad, 2010) to sustained interest over a period of 5 months (Tanaka, Cicourel, & Movellan, 2007). The authors propose several guidelines to encourage long-term interaction with social robots, involving the robot’s appearance, behaviors, affect, memory, and adaptation. For example, one recommendation is that the robot should have both routine behaviors, such as greetings, and new and personalized behaviors over time (e.g., adding new games or suggesting games that match participants’ interests). It is likely that the effectiveness of behaviors aimed at increasing or sustaining learners’ interest differs per target group (e.g., depending on age, gender, or subject), and a robot’s behaviors should be focused on its audience.

In short, the novelty effect is an important issue to be taken into account in robot studies. At least some results on learning-related emotions and learning gains obtained in previous robot studies are likely to stem from the initial excitement when learners work with a robot for the first time. Some ways in which long-term interaction could be fostered involve working around technical limitations (e.g., teleoperating the robot) or increasing (diversity in) the robot’s social behavior. The next section will outline in more detail the outcomes, and concomitant complexities, of earlier work on robots’ social behavior, and in particular on their supportiveness and motivational behavior.

The Complexity of Robots’ Social Behavior

As noted in the Introduction, one of the advantages of robots is their appearance and, consequently, their potential for establishing more natural interactions. Robots can be programmed to behave socially via both nonverbal behaviors (e.g., gaze, body posture) and verbal behaviors (e.g., giving praise, saying someone’s name). This section reviews evidence relating to robot behavior’s ability to increase students’ motivation and learning outcomes.

Several studies have examined how a robot’s social behavior may positively affect learning gains. In one of these, the effect of social support on children’s ability to learn an artificial language was investigated. Specifically, Saerbeck, Schut, Bartneck, and Janse (2010) studied how 10- to 11-year-old children interacted with the iCat to learn an artificial language. The experimenters manipulated the degree to which the robot was socially supportive, such that in one condition the robot engaged in a social dialogue, while in the other condition the robot focused solely on the desired transfer of knowledge. Children interacted with the robot for equally long periods across the two conditions, but children working with the socially supportive robot learned more and were more intrinsically motivated, as they reported having had more fun than children working with the neutral robot. This finding is similar to that of Gordon et al. (2016), who found that children felt more positive toward a personalized robot. Crucially, in this study, the robot adapted its motivational utterances to the child’s affective state (e.g., excited, thinking, or frustrated). Note, however, that this did not lead to higher learning gains, in contrast to the results of a non-RALL study by Leyzberg, Spaulding, and Scassellati (2014), in which a personalized robot tutor resulted in higher learning outcomes than a nonpersonalized tutor in a puzzle-solving task. Such mixed findings indicate that personalization is an important avenue for future research on exactly how robots can be used as effective tutors. Note that the two studies used very different age groups (preschoolers and adults), and personalization may affect age groups differently.

Another type of robot social behavior that may benefit learning is the robot’s expressiveness (Kory Westlund, Jeong, et al., 2017). Using storytelling to teach preschoolers L1 (English) words, these researchers compared an expressive robot to a “flat” robot. The expressive robot spoke in a more expressive way, with changes in its intonation. The flat robot did not vary the intonation of its utterances. Both voices were recorded by a female adult rather than created via text-to-speech systems, as a computer-generated voice cannot reach the same variation in intonation as a human voice. The expressiveness of the robot did not affect how many target words children recognized or how they perceived the robot. However, children in the expressive condition used more target words in their retellings, told longer stories in a delayed posttest 4 to 6 weeks later, and were more likely to imitate the robot’s phrasing in their story retellings. Crucially, concentration, engagement, and surprise (but not attention during the story) were significantly higher for children in the expressive condition than in the flat condition. Thus, although children did not learn more words receptively when interacting with an expressive robot, the expressiveness of the robot had a positive effect on the way in which children were involved in the task and on their production and retention of the target words.

Other aspects of social behavior, however, do not seem to have such positive effects on language learning. Specifically, previous work on the effects of verbal availability, feedback, and adaptivity has shown mixed results. The term verbal availability refers to a robot’s sensitive response toward a student, for example, by using the student’s name, giving praise, or asking for the student’s opinion (Kennedy et al., 2016). In the study by Kennedy et al. (2016) that was also discussed above, a NAO robot taught 8- and 9-year-old children French articles, showing either high or low verbal availability. High verbal availability did not result in greater learning gains. Interestingly, however, another study by the same authors reporting on a math-learning experiment found that the NAO’s nonverbal availability (i.e., the use of nonverbal cues such as gaze and posture to attend to the student) did affect children’s learning gains positively (Kennedy et al., 2015), indicating that the effects of verbal and nonverbal availability may differ depending on the specifics of the tasks used.

Similarly, the effect of a robot’s feedback during RALL tasks is unclear. To date, only one study has directly compared the effects of several types of feedback in a RALL task. In this task, 3-year-old children were taught L2 English count words by a NAO robot (de Haas, Baxter, de Jong, Krahmer, & Vogt, 2017). There were three conditions in which children were given (a) explicit positive and implicit negative feedback (i.e., adult-like feedback), (b) explicit negative feedback (i.e., peer-like feedback), or (c) no feedback. The authors did not assess children’s vocabulary learning gains but studied how feedback affected children’s engagement, as measured via eye gaze and the amount of help children needed from the experimenter. The study showed that children looked more often at the experimenter in the no-feedback condition than in both feedback conditions, and that they needed more help from the experimenter in the explicit positive- and no-feedback conditions than in the explicit negative-feedback condition. There were no differences in the duration of the gaze toward the stimulus materials and the robot across the three conditions. This study indicates that the way in which children engage in a learning task is affected by the feedback the robot provides, but more research is needed to assess how a robot’s feedback affects learning gains and motivation, and ideally to compare how children respond to feedback from robot and human tutors.

A final behavior that may affect learning is adaptivity. This is an area worth exploring, since robots can, at least in theory, be programmed to provide adaptive tutoring. Only two studies to date have examined the effects of robot adaptivity on language learning. In the first study, by Schodde et al. (2017), an adaptive robot system was compared to a random system in teaching German adults words from an artificial language called Vimmi. The adaptive robot system selected which item to teach (depending on the items that the participant showed difficulty with) and the difficulty of the item (i.e., the number of distractors). The adaptive robot did not result in higher scores on two translation tasks (from Vimmi to German and from German to Vimmi) than the random robot, but participants in the adaptive condition improved more within the “I spy with my little eye” game (i.e., they found the right target more often) than participants in the random control condition. Schodde et al. note that the fact that the participants’ greater improvement did not result in higher learning gains could be due to the difficulty of the translation tasks as compared to the learning task. If the participants’ knowledge had been measured receptively, a benefit of adaptivity might have been found.

However, in the second study examining the effects of a robot’s adaptivity on language learning (de Wit et al., 2018), no positive effects of adaptation were found on word knowledge tasks either. In this study, which is also discussed above, de Wit et al. (2018) investigated the effect of adaptivity on Dutch preschoolers’ learning of L2 English animal names. For half of the children, the “I spy with my little eye” game was adapted to the child’s needs (e.g., fewer distractor pictures for difficult target words), and for the other half of the children, the difficulty was not adapted. While children in the adaptivity condition remained engaged during the game, in contrast to the children in the no-adaptivity condition, adaptivity did not result in higher learning gains. As these studies do not convincingly show that adaptivity results in higher learning gains, more research is needed to study the effect of adaptive systems and to confirm the importance of adaptivity in RALL.

Thus, across studies, there are contradictory results with respect to the effects of the robot’s personality and social behavior on learners’ motivation and learning outcomes. Although one could adopt a “no harm in trying” policy with regard to incorporating personalized or social behavior in child-robot interactions, other experiments indicate that caution is needed, as social behavior does not always lead to higher learning gains. In the study by Gordon et al. (2015), for example, a robot that was not curious led to higher learning gains than a curious robot that showed excitement about the learning task and interest in the progression of the story. A possible explanation for this finding is that the curious robot was indeed more engaging but distracted participants from the learning task and therefore resulted in smaller learning gains compared to a less engaging robot.

These results are reminiscent of the study by Herberg et al. (2015) described earlier, which showed that children performed worse when a robot looked at them during tasks than when it looked away. Finally, support for the idea that social behavior may result in lower learning gains comes from an experiment in which prime numbers were taught by a NAO robot to 7- and 8-year-old children (Kennedy et al., 2015). In this study, an antisocial robot (which actively avoided gaze) resulted in greater learning gains than a social robot. In-depth analyses revealed that children spent more time looking at the social robot than the antisocial robot, thus looking less at the educational content provided by the tablet. These findings suggest that when a robot is too social, it can distract the child and actually make the child learn less than when the robot is less social, at least when the educational content is provided by an external medium such as a tablet and not by the robot itself.

A finding that adds to the complexity of the effects of a robot’s social behavior on task interest and learning is that gender can play a role in the beneficial or adverse effects of the robot’s social behavior. As discussed above, the children in Meiirbekov et al.’s (2016) study learned to produce over three new L2 words when working with a robot. The experimenters compared learning gains for children working together with a robot that would always either win or lose the game to assess whether the robot making mistakes would make the child feel more at ease during the learning process. Interestingly, the child’s gender determined which robot version led to the highest outcomes: Girls learned twice as many words as boys from the ever-winning robot, whereas boys learned twice as many words as girls from the ever-losing robot. The experimenters did not offer any possible explanations for this interaction effect of robot condition and gender, but it may suggest that there are differences between girls and boys in empathy or competitiveness, that is, in how they perceive the different robot versions (e.g., they may feel sorry for the robot when it loses or focus on their own wins) and in how they engage with the robot when it always either wins or loses.

To summarize, the existing evidence with respect to the robot’s social behavior is mixed. A robot showing social behavior such as producing the child’s name can increase children’s engagement in learning tasks. At the same time, the social behaviors of the robot can distract children from learning and, as a consequence, result in poorer learning outcomes. Moreover, there may be interaction effects with child characteristics such as gender, and results may differ depending on learners’ sociocultural backgrounds. The studies listed above have been conducted in countries all over the world (e.g., the United States, the United Kingdom, Singapore), and the different contexts may affect how children respond to the robot’s behavior. Moreover, the studies reviewed in this section involved a single session only, and it is not clear whether effects of robots’ social behavior differ when learners interact with robots over multiple sessions. Thus, it is still difficult to disentangle the effects of the robot’s social behavior from the novelty effect discussed above. Future research should try to optimize the social behavior of the robot for different learning tasks (e.g., grammar learning vs. speaking skills) and different groups of learners (e.g., preschool vs. school-aged children, girls vs. boys) and incorporate adaptivity and feedback.

Discussion

The goal of this review was to provide an overview of the current evidence on RALL and to identify potential topics for future research regarding the use of robots for language teaching. Thirty-three studies addressing word learning, reading skills, grammar learning, speaking skills, and sign language have been discussed, focusing on two important aspects: (a) the robot’s effect on children’s L1 and L2 language-learning gains and learning motivation and (b) the way robots should behave to maximize learning outcomes. Below, these aspects will be discussed separately, followed by a discussion of possible avenues for future research.

Mixed results were found with respect to L1 and L2 language learning outcomes. Most studies focused on word learning and did not clearly show whether robots are effective for word learning. More research is needed to determine the most effective role for the robot (e.g., teaching assistant or peer learner), the age-groups for which robots are most beneficial (e.g., preschool children, school-aged children, or adults), and the optimal number of sessions for word learning (one or multiple). The few studies examining reading skills, grammar learning, and sign language showed quite positive results, while the evidence with respect to speaking skills is more mixed. Note that the studies made different comparisons: Studies on grammar learning and sign language compared different robot behaviors or platforms to assess the most effective robot (behavior), while the studies on reading and speaking skills compared the effectiveness of a robot to other types of technology or traditional classrooms. Moreover, the conflicting results between skills may result from differences in demands on the robot’s interactional qualities (e.g., being able to have contingent conversations), which are likely higher in lessons on speaking skills than in lessons on reading or grammar. Lessons on reading and grammar can be mediated through a tablet or other devices that display words or rules (thus combining the robot with other types of technology), while robots cannot fall back on such devices and need more skills (e.g., speech recognition, natural language generation) when practicing speaking skills with learners.

In contrast to the studies on language-learning outcomes, which showed mixed results, the studies addressing participants’ learning-related emotions generally found positive effects and showed that both children and adults often enjoy working with the robot. Given that learning motivation and learning gains are often related (Dörnyei, 1994; Pekrun et al., 2002), the robot’s potential to motivate learners could be a valuable property. However, higher motivation was not always linked to higher learning gains in the RALL studies reviewed, and motivation could, at least in part, be due to the initial novelty effect of robots, which soon disappears. Although addressed in some experiments (e.g., Han et al., 2008), it is not clear how novelty has affected the results of previous studies. A strong recommendation is to carefully consider how to introduce the robot to participants to minimize novelty effects and to see whether the effects found are robust to prolonged exposure or wear off over time.

Conflicting results were especially striking with respect to the social behavior of the robot. Although some studies found positive effects of personalized and/or social behavior on learning gains and enjoyment, other studies found social behavior to negatively affect learning outcomes and behavior. Thus, it is clear that there is a thin line between the robot being social enough to sustain children’s interest and being too social, leading to children being distracted or even intimidated by the robot. Furthermore, adaptivity and feedback have remained understudied and should have a more central role in future studies, given the importance of adapting to the learner’s level and providing helpful feedback in L2 education and education in general (Li, 2010; Vygotsky, 1980).

One of the issues that makes it difficult to compare findings across studies is teleoperation. In the section on word learning, we discussed how teleoperation allows the experimenter to control the robot in real time, which may result in different interactions than when the robot is running autonomously. A teleoperated robot can respond in a contingent manner, while autonomous robots have to rely on predefined scripts and can respond contingently only when the participant behaves as expected. One of the values of RALL research is its contribution to developing autonomous robots that can be placed in classrooms. This does not mean, however, that robots should not be teleoperated during experiments, as teleoperating robots allows researchers to study more advanced interactions than those that can be achieved using autonomous robots. Such interactions are valuable to identify robot behaviors or properties that need to be developed further. However, we recommend that researchers clearly state whether they teleoperated their robot or used it autonomously to make the distinction between the two types of robots clearer and to facilitate comparisons between studies.

A further important issue follows from the newness of the field: Robots constitute a new form of technology, and too few studies have been conducted so far to conclude that robots are effective language tutors. Future studies will allow for firmer conclusions regarding robots’ potential as language tutors. Furthermore, a subset of the previous studies is heavily underpowered and/or suffers from other methodological limitations (e.g., no control group), which warrants caution in interpreting and evaluating their results. These issues often come to the fore in studying the use of technology for language learning: New technologies are often met with great enthusiasm, but research on their effectiveness often does not meet empirical standards and/or does not necessarily provide conclusive evidence of the benefits of these technologies (Salaberry, 2001). However, even with their limitations, the studies reviewed in this article helped us to identify potential areas for future research.

In our Introduction, several advantages of robots over many other forms of technology were discussed. One advantage is that robots provide opportunities for the learner to interact with their real-life environment. They are physically present and make it possible to integrate physical exercises or objects into learning tasks. Thus far, motor activities with robots have rarely been incorporated in learning tasks because of limited feasibility (e.g., walking is undesirable as robots are likely to fall over), with the exception of a few studies (e.g., Tanaka & Matsuzoe, 2012). This is not surprising, because the more a robot acts and moves through space, the more likely it is that technical issues such as falling over or overheating will arise. However, it is possible that the use of objects and exercises could lead to higher learning gains (Kersten & Smith, 2002; Mavilidi et al., 2015; Toumpaniari et al., 2015). As robot technology is developing, motor activities are becoming more feasible to integrate, and this is therefore an area worth exploring.

Another advantage of robots over most other technologies is the interactional possibilities robots provide. Given the mixed evidence on the robot’s social behavior, personalization of child-robot interaction is perhaps the most important line of research for the future. When humans teach a child new word forms and meanings, they carefully monitor the child’s comprehension and, if necessary, adapt the tutoring strategy to the child’s needs. Robots are not yet capable of monitoring the child’s comprehension and adapting the tutoring strategy to individual children in such a careful manner. This makes it difficult to obtain “true” adaptivity, in which the robot adapts its lesson and behavior depending on the child’s comprehension. This is partially due to speech recognition systems, which in their current form are often not suited to recognizing child speech (Kennedy et al., 2017), and to systems not being advanced enough to recognize children’s emotions or comprehension. Furthermore, it is difficult to program robots to respond in a contingent manner, and even more so in interactions with young children, who are much more unpredictable in their verbal responses than older speakers. In other words, the research so far indicates that the important advantages of robots over most forms of technology still exist primarily in theory. Technical limitations prevent regular implementation of these possible advantages, and further technological developments are required to make full use of robots’ potential and to put these theoretical advantages into practice.

A review of research on computer-assisted language learning (Garrett, 2009) mentioned how, in 1991, it was possible for one person to write “an overview . . . of the kinds of technological resources currently available to support language learning and of various approaches to making use of them” (Garrett, 1991, as quoted in Garrett, 2009, p. 719). In 2009, the same author noted that an update would fill an entire journal, requiring contributions from many different areas of expertise (Garrett, 2009). Perhaps in another two decades, we will say the same about RALL. We will go from one review aimed at capturing almost all extant RALL research to a great many possibilities we cannot even imagine at the moment. The use of robots may become such an everyday aspect of life that we will not even wonder about employing them. However, before we reach that point, we first have to find out how exactly robots should interact and behave socially to be effective language tutors.

Footnotes

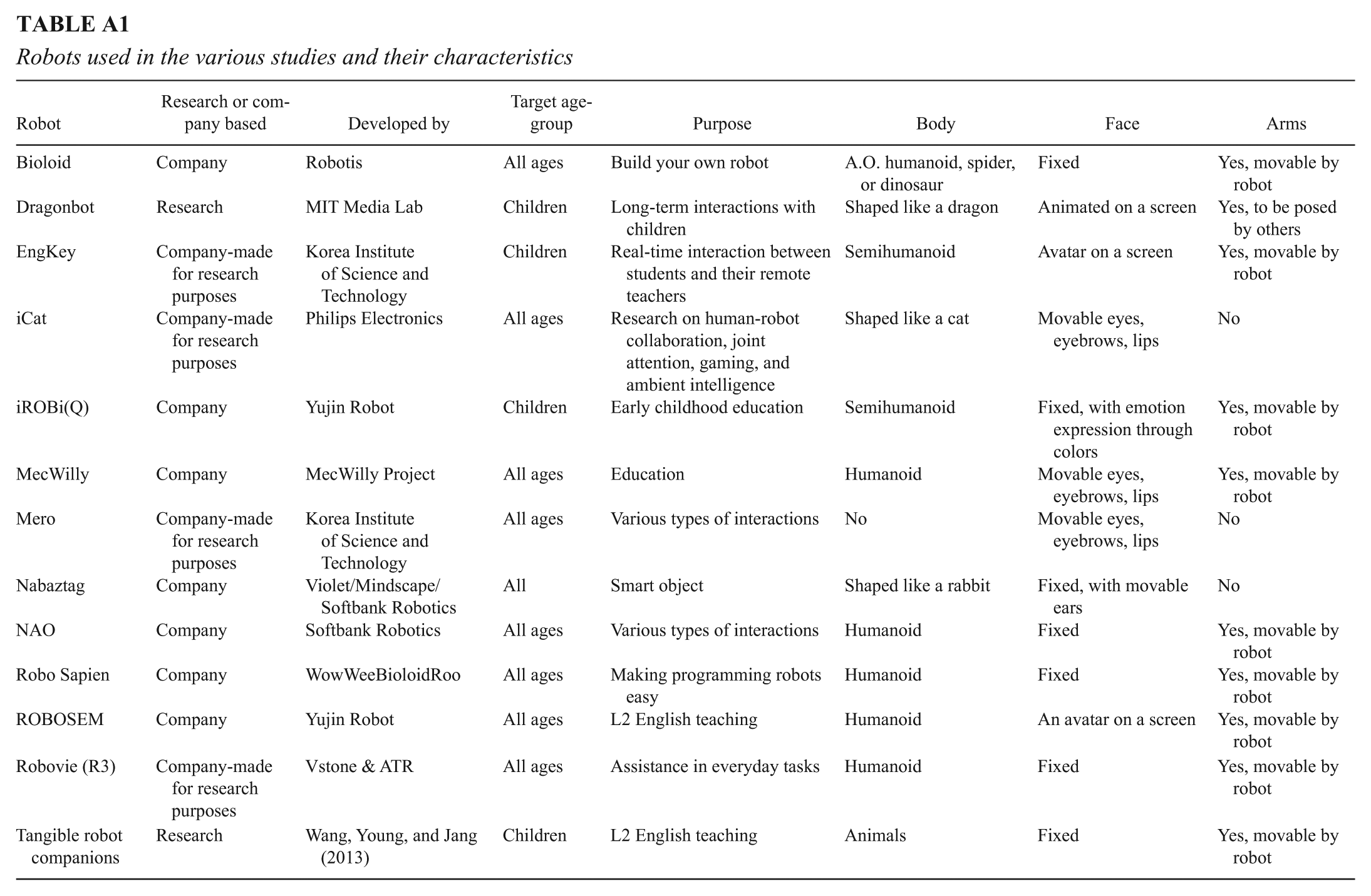

Appendix

Robots used in the various studies and their characteristics

| Robot | Research or company based | Developed by | Target age-group | Purpose | Body | Face | Arms |
|---|---|---|---|---|---|---|---|