Abstract

This study examines the pipeline of evidence for educational interventions, focusing on Institute of Education Sciences-funded research and What Works Clearinghouse (WWC) reviews. Using data from 376 efficacy-focused awards, it tracks evidence progression from grant funding to WWC-reviewed publications. The WWC has played a vital role in synthesizing and disseminating evidence, although challenges remain in expediting the review process. Key recommendations for maintaining the evidence pipeline include research funding that adds to the supply of rigorous evidence entering the pipeline and retaining and adequately funding the work of evidence clearinghouses that vet and disseminate findings for practitioners. Additional recommendations for expediting the movement of evidence to practitioners include rapid cycle evaluations, registered report submissions, and greater collaboration among researchers, funders, and journals.

National legislation in the last decade has placed a strong emphasis on the adoption of evidence-based policies, practices, and interventions. Specifically, the Every Student Succeeds Act (ESSA; 2015) encourages school and district leaders to choose evidence-based interventions shown to improve student outcomes. At the same time, recent orders from the executive branch of the U.S. government have limited the ability of federal education agencies to support school district leaders and other practitioners in identifying and implementing effective interventions. Numerous grants and contracts that sought to generate or validate evidence to inform programmatic decision-making were cancelled, and the federal employees overseeing them were terminated. Although this study examined the pipeline of intervention evidence in education as it stood prior to the recent executive orders, the findings have clear implications for how education decision-making can best be supported moving forward.

Federal agencies, such as the U.S. Department of Education and the National Science Foundation, and philanthropic organizations (e.g., Arnold Ventures) share a mission that is at least in part to provide evidence to decision-makers by funding rigorous trials of education interventions. For example, a goal of the What Works Clearinghouse (WWC; Institute of Education Sciences [IES], 2025g), an investment of the U.S. Department of Education, is to review research, determine which studies meet rigorous standards, summarize findings for practitioners, and provide implementation support for effective interventions through WWC products, such as intervention reports and practice guides (IES, 2025c). In doing so, the WWC seeks to support decision-making through multiple stages of the evidence “pipeline.”

However, regardless of the evidence source, the pipeline to decision-makers is long and fragmented (Neuhoff et al., 2015). An extended period has often been required for funders, researchers, and clearinghouses to generate, validate, and disseminate evidence about interventions to practitioners (Honig & Coburn, 2008; Nelson et al., 2009), including critical information that decision-makers need regarding the implementation requirements of those interventions (Garcia & Davis, 2019). The timelines for decisions about adopting interventions often outpace the production of relevant evidence about intervention effectiveness. Decision-makers, therefore, must proceed with programmatic decisions using the best available evidence, which is often insufficient (Nelson et al., 2009).

Study Purpose

This study examines the pipeline of evidence about the effectiveness of education interventions, focusing on one source of intervention evidence: IES (2025d) grant awards. We focused on IES grants and WWC data for three reasons. First and foremost, overt attention to a progression of events that begins with a grant award, continues through the production and review of evidence, and concludes with the dissemination of implementation guidance for practitioners is a core tenet of IES’s theory of action (Albro, 2024), making it unique among federal agencies in this regard. Second, IES has made large investments in curating databases that link information about funded grants to (a) the scholarly products they generate (e.g., reports/publications in the Education Resources Information Center [ERIC]), (b) WWC reviews and individual findings from such products (e.g., the WWC’s Data From Individual Studies), and (c) WWC products. Third, IES has also made large investments in ensuring that WWC products provide practitioner-friendly resources for implementing effective programs (e.g., in the form of intervention reports and practice guides); indeed, IES (2025e) reported that school and district education leaders consider practice guides the most useful WWC product. Analyzing IES grant and WWC review data together provided a feasible way to understand the pipeline of how evidence on intervention effectiveness can contribute to practitioner-facing resources.

Among IES grants, we focused on award types within the Education Research Grants and Research and Development Center grants programs, both funded by IES’s National Center for Education Research, that are most clearly dedicated to the production of evidence about intervention effectiveness. Historically, in IES funding solicitations, these were referred to as “efficacy and replication,” “effectiveness,” and “scale-up evaluations.” The primary difference between the efficacy award types and the effectiveness/scale-up award types was that efficacy studies sought to test interventions that had not been rigorously evaluated previously, under more idealized conditions, whereas the effectiveness/scale-up studies were seeking to generate evidence about interventions with prior evidence of efficacy but now implemented under routine practice in authentic education settings. More recently, the solicitations for Education Research Grants and Research and Development Centers grants categorized these types of awards as “impact.” Using this specific set of IES-funded awards, we examined how these awards’ publications contributed to evidence in WWC reviews and products.

Overview of Data and Methods

We used three public federal data sources to understand the education evidence pipeline. First, we used IES award data to gather information about 376 impact-focused IES awards that started in 2015 or earlier (also including replication, scale-up evaluations, and impact studies conducted by research and development centers). The percentage of impact-focused grants awarded appears to be relatively stable at approximately 20% to 30% per year (see Table S1, available on the journal website), so we expect the patterns observed in our analysis will hold should future grants be awarded. Second, we searched ERIC, Google Scholar, and IES award pages in August 2024 to find the publications these awards produced. Third, we merged these records with data on WWC reviews and WWC-assigned evidence tiers. The Supplementary Methods Appendix, available on the journal website, provides more detail, including R code and data to reproduce our analyses.

We used these data to examine the number and proportion of awards that (a) produced at least one publication that we could find through our multipronged search, (b) produced at least one publication that was reviewed by the WWC, (c) produced at least one publication with a study that met WWC standards (with or without reservations), and (d) produced a publication describing a study that met WWC standards and had an ESSA Evidence Tier of 1, 2, or 3 (strong, moderate, or promising evidence, respectively; see IES, 2025b). These outcomes reflect various stages of the idealized evidence pipeline from evidence generation to application.
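The four benchmarks above can be read as nested award-level indicators. The following is a minimal sketch of that tabulation, not the authors' R code from the Supplementary Methods Appendix. The `Publication` record, its field names, and the sample awards are all hypothetical and stand in for the merged IES award, publication, and WWC review data.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Publication:
    """One publication linked to an award (hypothetical record layout)."""
    award_id: str
    wwc_reviewed: bool = False
    meets_wwc_standards: bool = False  # with or without reservations
    essa_tier: Optional[int] = None    # 1, 2, or 3 when a tier was assigned


def benchmark_counts(award_ids, publications):
    """Return {benchmark: (number of awards, proportion of awards)}."""
    # Group publications under the award that produced them.
    by_award = {award_id: [] for award_id in award_ids}
    for pub in publications:
        if pub.award_id in by_award:
            by_award[pub.award_id].append(pub)

    def count(predicate):
        # An award meets a benchmark if at least one publication qualifies.
        return sum(
            1 for pubs in by_award.values() if any(predicate(p) for p in pubs)
        )

    counts = {
        "a_any_publication": count(lambda p: True),
        "b_wwc_reviewed": count(lambda p: p.wwc_reviewed),
        "c_meets_standards": count(
            lambda p: p.wwc_reviewed and p.meets_wwc_standards
        ),
        "d_essa_tier_1_to_3": count(
            lambda p: p.wwc_reviewed
            and p.meets_wwc_standards
            and p.essa_tier in (1, 2, 3)
        ),
    }
    n = len(by_award)
    return {name: (k, k / n if n else 0.0) for name, k in counts.items()}


# Illustrative data only: four made-up awards, one with no publications.
awards = ["A1", "A2", "A3", "A4"]
pubs = [
    Publication("A1", wwc_reviewed=True, meets_wwc_standards=True, essa_tier=2),
    Publication("A1"),
    Publication("A2", wwc_reviewed=True),
    Publication("A3"),
]
result = benchmark_counts(awards, pubs)
for name, (k, share) in result.items():
    print(f"{name}: {k} awards ({share:.0%})")
```

Because each benchmark requires the one before it, the counts can only shrink from (a) to (d), which is the narrowing that a pipeline diagram visualizes.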

Results

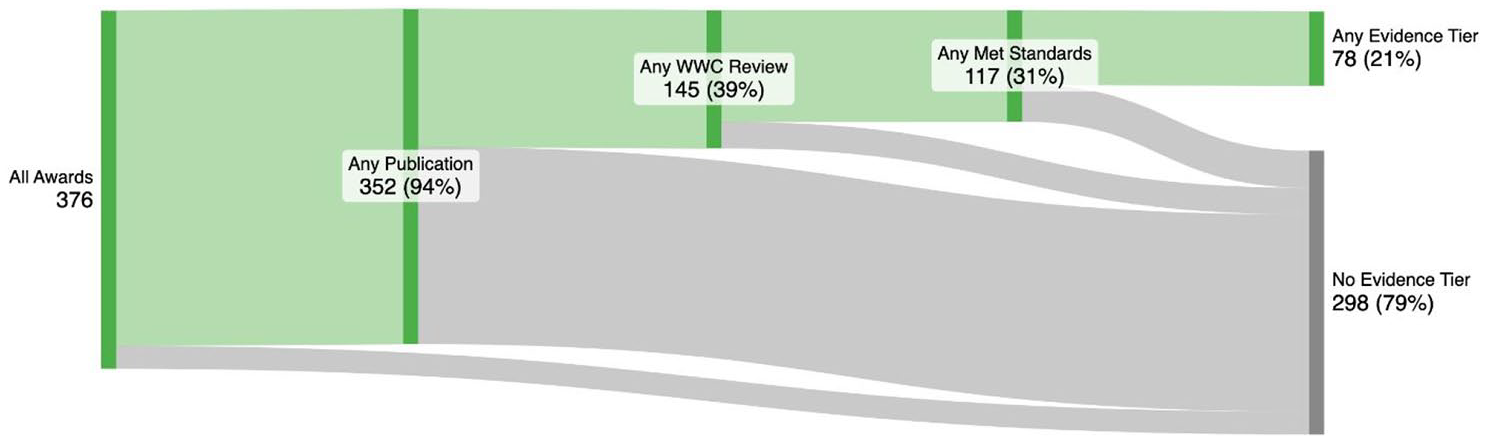

Figure 1 shows the number of awards meeting each benchmark described previously. The amount of evidence that reaches the end of the pipeline is driven by factors that differ based on the pipeline segment. The initial (far left) segment describes the evidence “supply” produced by the awarded grants, via publications. In the middle of the pipeline, we observe queuing of evidence, where some publications produced by awards have not yet been reviewed by the WWC. We note that some awards may not have an associated WWC review because the existing publications from that award, at the time of our analysis, focused on implementation or theoretical considerations, not impact per se, and as such would have been ineligible for WWC review. Regardless of eligibility for WWC review, evidence in the queue is not lost but instead awaiting the opportunity to inform policy and practice. Future pipeline analyses may consider reviewing all study reports, perhaps coding their research questions and/or research designs, to assess WWC review eligibility. Finally, movement through the final segments of the pipeline is driven by the quality of the evidence produced, with only the highest quality studies meeting WWC standards and obtaining an ESSA Evidence Tier. A more detailed version of these results, disaggregated by award characteristics (e.g., IES program area, award period), can be found in Table 1.

Figure 1. Sankey diagram of the evidence pipeline.

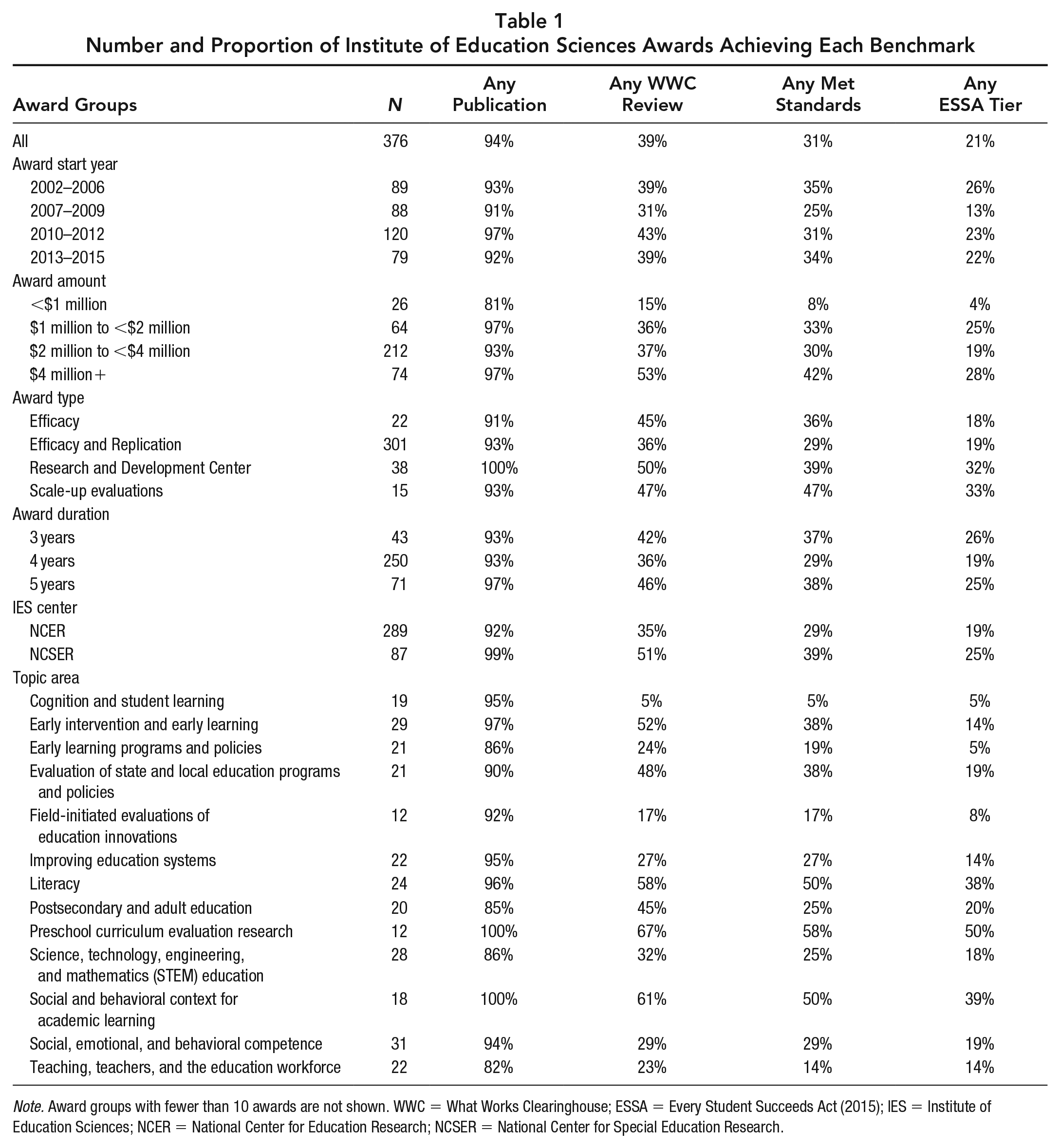

Table 1. Number and Proportion of Institute of Education Sciences Awards Achieving Each Benchmark

Note. Award groups with fewer than 10 awards are not shown. WWC = What Works Clearinghouse; ESSA = Every Student Succeeds Act (2015); IES = Institute of Education Sciences; NCER = National Center for Education Research; NCSER = National Center for Special Education Research.

Table 1 provides the number and proportion of IES awards achieving each benchmark, disaggregated by various award characteristics. Overall, there is little variation in the proportion of awards meeting each benchmark based on the award characteristics. Notable exceptions are scale-up evaluations and studies funded through Research and Development Centers, for which the proportion of awards meeting the pipeline benchmarks tends to be higher than for other award types.

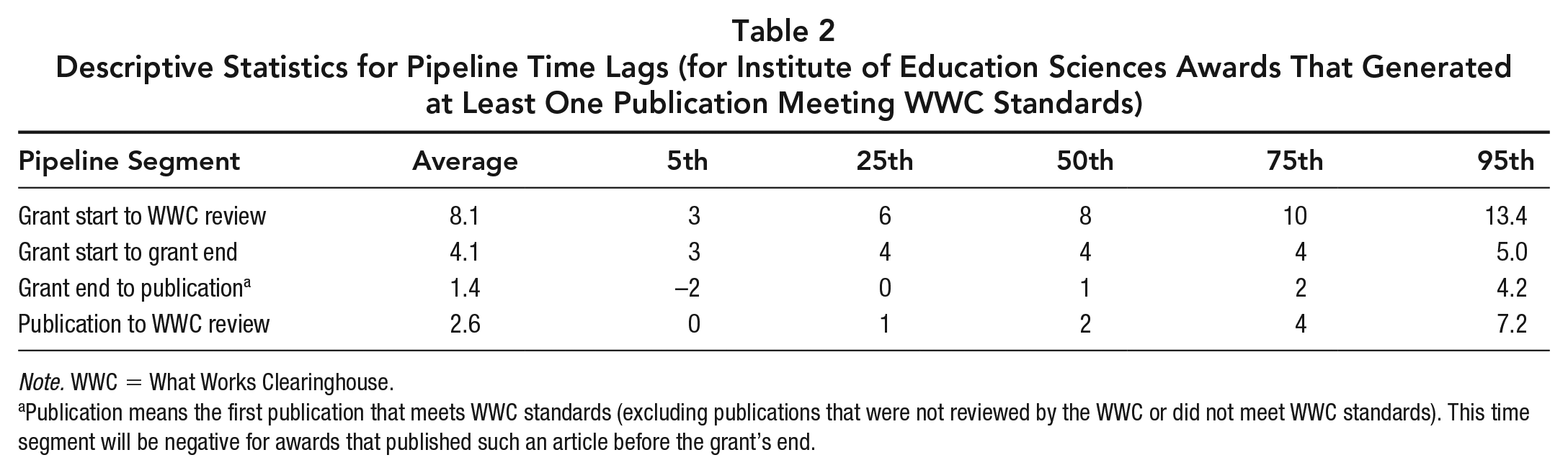

In Table 2, we provide descriptive statistics (mean and percentiles) for both overall and segment-specific timelines within the evidence pipeline. The mean timeline for grants to produce at least one publication that is reviewed by the WWC is approximately 8 years; the largest segment, the duration of the grant itself, averages approximately 4 years.

Table 2. Descriptive Statistics for Pipeline Time Lags (for Institute of Education Sciences Awards That Generated at Least One Publication Meeting WWC Standards)

Note. WWC = What Works Clearinghouse.

In Table 2, “publication” means the first publication that meets WWC standards (excluding publications that were not reviewed by the WWC or did not meet WWC standards). The segment from grant end to publication is negative for awards that published such an article before the grant’s end.
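To make the segment definitions concrete, the sketch below computes the three pipeline segments and the overall lag for each award, then averages them. It is an illustration under stated assumptions, not the authors' procedure: the dictionary keys, the 365.25-day year, and the two sample awards are hypothetical, and the dates are invented.

```python
from datetime import date
from statistics import mean

DAYS_PER_YEAR = 365.25  # assumed conversion; the article does not specify one


def years_between(start, end):
    """Signed elapsed time in years (negative if `end` precedes `start`)."""
    return (end - start).days / DAYS_PER_YEAR


def segment_lags(award):
    """Split one award's timeline into the three pipeline segments."""
    return {
        "grant_duration": years_between(award["grant_start"], award["grant_end"]),
        "grant_end_to_publication": years_between(
            award["grant_end"], award["publication"]
        ),
        "publication_to_wwc_review": years_between(
            award["publication"], award["wwc_review"]
        ),
        "total": years_between(award["grant_start"], award["wwc_review"]),
    }


# Invented dates for two hypothetical awards. The second award published
# before its grant ended, so its middle segment is negative.
sample_awards = [
    {
        "grant_start": date(2010, 7, 1),
        "grant_end": date(2014, 6, 30),
        "publication": date(2015, 9, 1),
        "wwc_review": date(2018, 3, 1),
    },
    {
        "grant_start": date(2011, 7, 1),
        "grant_end": date(2015, 6, 30),
        "publication": date(2014, 12, 1),
        "wwc_review": date(2017, 1, 15),
    },
]

lags = [segment_lags(a) for a in sample_awards]
mean_lags = {name: mean(lag[name] for lag in lags) for name in lags[0]}
for name, value in mean_lags.items():
    print(f"{name}: {value:.2f} years")
```

Using signed differences means the three segments always sum to the total for each award, so a negative middle segment offsets part of the grant duration rather than being dropped.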

The timelines described in Table 2 do not include the time it takes for information from a WWC study review to be used in a WWC product designed for decision-makers (e.g., Intervention Reports and Practice Guides). The timeline required to produce WWC products is driven at least partly by the time required for sufficient evidence (i.e., from multiple studies) to accumulate for an intervention. That is, knowledge accumulation is slowed by the current lack of replication in the education research literature (which is akin to the state of replication in other fields; Chhin et al., 2018) and the extended time needed for replication studies to be conducted (e.g., a 4- or 5-year grant) and to produce reports with study findings.

Limitations

Regarding data sources, we acknowledge that our analysis did not include all sources of impact evidence, thus making our estimates of evidence production somewhat conservative. Other agencies and entities contribute to the supply, including the National Science Foundation and private foundations (e.g., Arnold Ventures), and the WWC has reviewed many of the associated study publications. Similarly, even within the U.S. Department of Education, there are other centers or programs that produced impact studies that were not included in this analysis, such as those funded through the National Center for Educational Evaluation or the Investing in Innovation (i3) program (now called the Education Innovation and Research program). Similarly, although rigorous, large-scale impact studies were not the primary aim of Goal 2: Design and Development studies funded by the National Center for Education Research (subsequently referred to as “development and innovation studies”), a small number of randomized controlled trials (RCTs) were conducted as part of these awards. Our analyses did not include those RCTs.

We also note here that WWC products are not the only sources of evidence and implementation support for interventions. For example, the Blueprints for Healthy Youth Development research registry reviews and disseminates information on interventions with strong evidence of effectiveness in several youth development domains, including education. Similarly, Social Programs That Work reviews and disseminates programs with credible evidence of effectiveness from well-conducted RCTs in K-12 education among other policy areas (for a discussion of the similarities and differences between these three entities, see Taylor et al., 2021). These clearinghouses are among several alternative dissemination mechanisms to the WWC, including long-standing resources, such as research briefs/reports and books, although implementation support has not historically been a focus of these nonclearinghouse alternatives.

Finally, given the available data to conduct this analysis, our methods necessarily underestimate the true lag between an initial call for evidence and eventual practitioner decisions that utilize it. For example, at the beginning of the evidence pipeline, a more appropriate start might be at the opening of the award solicitation funding a program evaluation. At the end of the pipeline, practitioner decision-making would ideally be represented by uptake of WWC products. Furthermore, to analyze the duration of grant awards and subsequent pipeline segments, we used the award period data from the public IES award database. These award period data do not reflect award extensions, blurring the endpoints for certain awards. Because these markers are not consistently tracked and recorded, our analysis likely underestimates the true gap between when guidance is provided and when practitioners apply it in their decisions.

Discussion

The results of our analysis of evidence movement and the time requirements for key segments of the evidence pipeline suggest the need for a multifaceted approach to producing evidence and validating and expediting its availability to practitioners and policymakers. As such, we present in this section potential strategies that can be used to facilitate expedited evidence generation and dissemination at all segments of the pipeline, and we organize our recommendations by these segments.

Study/Grant Duration

Grant durations averaged just over 4 years. This result is expected because large-scale trials of comprehensive education interventions often require significant time for recruitment and implementation and may require multiple cohorts of participants. However, for some studies, shorter timelines are possible, and rapid cycle evaluation designs (see Atukpawu-Tipton & Poes, 2020) should be considered to produce evidence more quickly than traditional evaluation designs. Protracted study durations also slow the accumulation of evidence needed for synthesis and WWC synthesis products.

From Grant End to Publication

This portion of the pipeline is determined by the time required for grantees to produce published or unpublished reports. Publication timelines averaged 1.4 years from the end of the grant. This timeline is not uncommon for publishing education research in journals, especially for those with low acceptance rates. Even if the articles that took an average of 1.4 years after the grant’s end to be published had been submitted a full year before the grant ended, the effective timeline from submission to publication would be nearly 2.5 years (1 + 1.4 = 2.4), which lacks sufficient urgency to meet the needs of decision-makers. Ideally, we would have been able to accurately track the portion of this timeline when manuscripts were in peer review. However, although some journals publish article submission dates, others do not, making systematic tracking difficult.

Current initiatives should help accelerate the timely dissemination of evidence for policy and programmatic decisions. These include the registered report model, wherein the study methods and planned analyses are peer reviewed and (if applicable) provisionally accepted prior to data analysis (i.e., based on the importance of the research question and the rigor of the methods used to address it rather than the results). In addition to discouraging publication and outcome reporting bias against nonsignificant effects (Pigott et al., 2013), registered reports move much of the work of manuscript preparation earlier in the life cycle of a research project, thereby reducing delays between data analysis and manuscript publication. Additionally, a variety of openly accessible research repositories (e.g., EdArXiv and SocArXiv) are now available for researchers to post preprints of their articles prior to undergoing the peer-review process in a traditional scholarly outlet, and journals are increasingly accepting of this practice (e.g., see Evaluation Review, 2025). Such efforts connect research evidence to decision-makers and evidence clearinghouses more quickly by eliminating peer review and copyediting delays and more inclusively by circumventing paywalls imposed by for-profit journals.

From Publication to WWC Review

There is a significant queue of evidence in the pipeline between when a report is published and a potential review by the WWC, with 39% of awards producing a publication that was subsequently reviewed by the WWC. When reviews occurred, they took 2.6 years, on average, from when the study report was published. With regard to expediting WWC review of study reports, it is important to note that study reviews are resource-intensive and the WWC has finite resources to commit to them. In fact, historically, IES’s funding has been limited (see National Academies of Sciences, Engineering, and Medicine, 2022) compared to other scientific funding agencies with a similar mission and goals. Although the number of study reports currently in the queue for WWC review is greater than we would prefer for timely programmatic decision-making, without the WWC or other clearinghouses that review education research, we would see exponential growth in the amount of rigorous evidence that will never be synthesized or will never inform practitioner-facing products. Entities such as the WWC and other education clearinghouses are critical elements in an evidence pipeline that leads to better decision-making, and the rapidity of their functions could be improved with increased funding. Increased funding for IES could allow it to expand the scope and intensity of the WWC review work conducted by its contractors. In a complementary fashion, researchers conducting impact studies can decrease the likelihood that a study report is overlooked by the WWC by submitting the report to ERIC (IES, 2025a). As a critical resource for identifying publications that provide evidence for intervention effectiveness, the functionality of ERIC must be preserved.

Even with increased funding and greater awareness of available reports, the WWC or a successor clearinghouse could not undertake all study reviews of interest to stakeholders, and the timeline for such reviews will inevitably be subject to delays. Historically, whether the WWC reviewed a particular study was driven by a complex set of factors that influenced the urgency of the review, and those factors were not always apparent to the research community or the general public. For example, the study topic area, media attention around the intervention, and whether the study was relevant to grant competitions were all factors in the WWC’s determination of which studies to review, among many other considerations (see IES, 2025h, Appendix A).

Recent changes to WWC procedures sought to expedite evidence review and dissemination. The introduction of the Study Review Protocol (SRP; IES, 2025f) would have expedited WWC reviews because it bypasses delays such as studies being reviewed only when a relevant WWC product (e.g., an Intervention Report or Practice Guide) was being produced. With the SRP in place, WWC reviews would have been completed more quickly than in the past, albeit without implementation support until the study was included in an Intervention Report or Practice Guide. As a long-range goal, evidence clearinghouses like the WWC might consider coupling impact information with initial implementation guidance when presenting the results of an intervention study.

A Holistic Vision for Further Discussion

Beyond these initiatives in isolation, community-wide efforts are needed to accelerate production and dissemination of evidence and implementation support. Such efforts would facilitate integrated approaches to improving the evidence and implementation support pipeline. Specifically, ongoing collaboration among researchers, funders, technical assistance providers, and journal editors could not only increase the proportion of studies that produce rigorous evidence but also expedite the evidence and implementation support that those studies disseminate. Models exist for these collaborations, such as in the previously mentioned evidence repositories and the technical assistance once provided by Abt Global (Abt Global, 2025) to grantees of the U.S. Department of Education’s Education Innovation and Research Program (U.S. Department of Education, 2025), prior to the cancellation of its contract in early 2025.

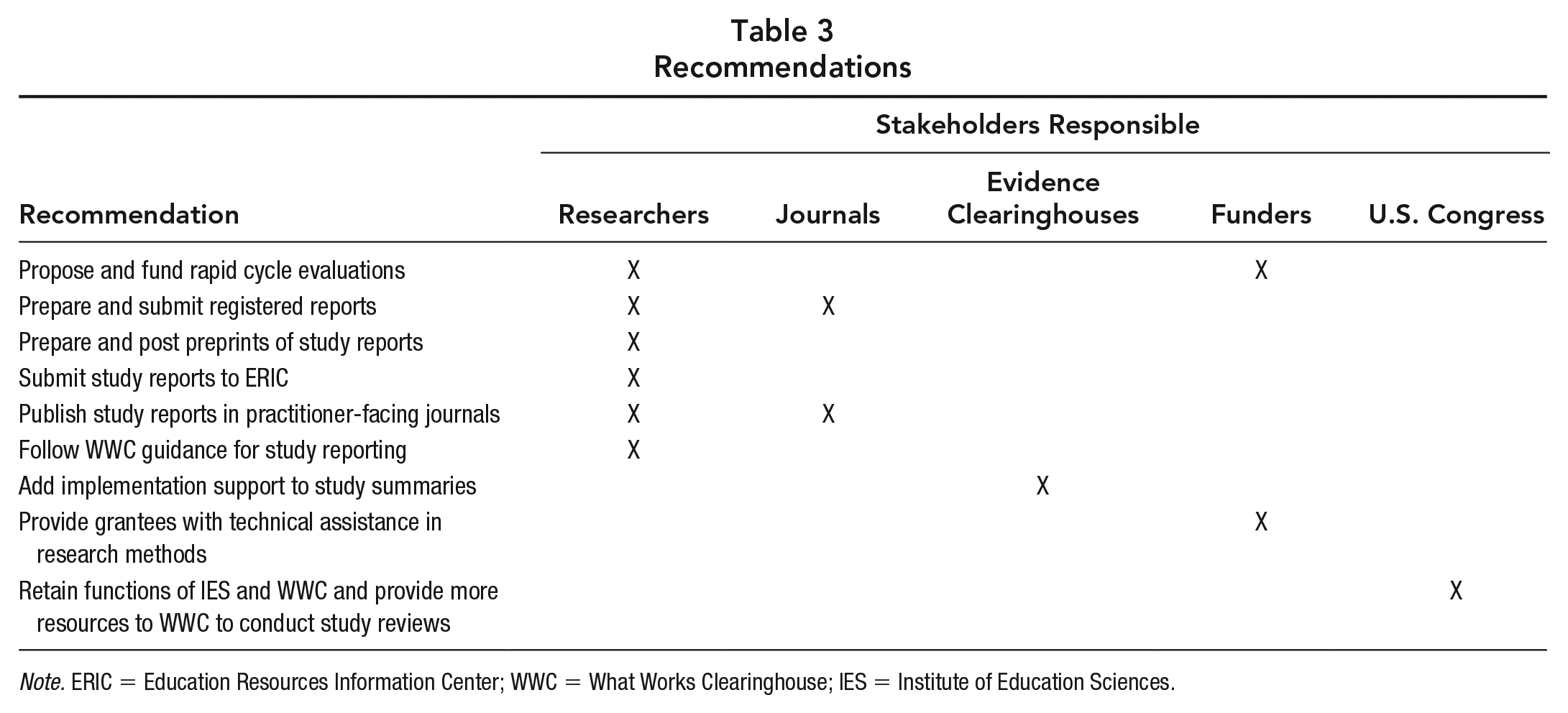

One vision for how multiple stakeholders could collaborate includes the following scenario. A funded grant is immediately assigned a technical assistance expert from the funder, clearinghouse, or journal who supports the conduct of a rigorous study that can meet evidence standards of the WWC or another clearinghouse. The intent here is to reduce the loss of evidence stemming from studies not meeting standards. The study is concurrently registered in an evidence repository, submitted as a registered report to a scholarly journal, and provisionally accepted after undergoing peer review and revision if applicable. The expectation would be that researchers/authors later develop a researcher-facing journal article or report based on their findings, with design and impact reporting conducive both to expedited review by an evidence clearinghouse and to synthesis (see IES, 2025i). This would serve to shorten the pipeline segment between reports becoming available and subsequent evidence clearinghouse review. Additionally, researchers are strongly encouraged to develop a practitioner-facing article/report that provides implementation support. The practitioner article receives a DOI distinct from that of the research article, acknowledging the scholarship and effort that researchers invest in translating findings for decision-makers and other nonresearchers. In Table 3, we provide a summary of the recommendations discussed in this article by stakeholder group. For some recommendations, progress is already underway.

Table 3. Recommendations

Note. ERIC = Education Resources Information Center; WWC = What Works Clearinghouse; IES = Institute of Education Sciences.

Conclusion

Our findings identify multiple factors that influence both the amount of vetted evidence available to education decision-makers and the often protracted timeline for that evidence to be available for practitioner use. We conclude that our vision of a well-supplied and efficient evidence pipeline will require additional resources and greater stakeholder coordination. Specifically, the research community should continue to generate evidence through rigorous impact studies and seek to expeditiously move that evidence to decision-makers. Achieving the vision is much more likely if impact studies continue to be funded with federal, state, and private (e.g., foundation) support; if the critical functions of evidence clearinghouses are retained and adequately funded; and if multiple stakeholders work to build and integrate our existing infrastructures, incentives, and supports (e.g., technical assistance). Many of the component pieces already exist; they just function in isolation. We hope this article stimulates conversation among key stakeholders who can move this vision closer to reality.

Supplemental Material

Supplemental material, sj-docx-1-edr-10.3102_0013189X251364273 for Mapping the Pipeline of Intervention Evidence in Education by Joseph A. Taylor, David I. Miller, Laura E. Michaelson and Kathryn Watson in Educational Researcher
