Abstract

This conceptual article explores shared epistemic agency between humans and generative artificial intelligence (GenAI), emphasizing the need to foster active human epistemic agency through adaptive epistemic stances. We propose a framework incorporating epistemic stances in interactions with GenAI, drawing on Tsai’s (2004) work to explore the reinforcing relationship between them. The article outlines how learners’ epistemic stances affect their interaction with GenAI and suggests strategies for fostering critical engagement and inquiry. We assert that learners with adaptive epistemic stances benefit more from GenAI and that continuous interaction with GenAI can reshape learners’ epistemic development. This article aims to promote a symbiotic relationship where both human and machine contributions are valued, ultimately enhancing the overall learning experience through shared epistemic agency.

Keywords

The release of advanced generative artificial intelligence (GenAI) models (e.g., GPT-4.5, Gemini 2.5, Llama 3.3, and Claude 3.5) has prompted deep concern and interest among academics, educators, and learners. Unlike traditional AI learning systems, which typically rely on rule-based systems, data-driven algorithms, and machine-learning models to provide personalized learning experiences, automated assessments, and adaptive learning paths (Hopgood, 2021), GenAI introduces a more dynamic, multimodal, and interactive approach. These models can engage in open-ended conversations, generate creative content, and perform various roles to support students based on their inputs (Sabzalieva & Valentini, 2023). Moreover, some specialized tutoring systems powered by advanced GenAI (e.g., Khanmigo) incorporate pedagogical frameworks such as Socratic dialogue and use techniques such as retrieval augmented generation (RAG) to deliver accurate and unbiased content (Hicke et al., 2023). These systems are built for educational environments, offering targeted support and personalized learning experiences that align with established educational goals. However, despite their instructional features, specialized GenAI systems are still susceptible to errors due to the data they are trained on. Moreover, most GenAI systems are not engineered for educational contexts, raising questions about their effective application in learning.

Concerns about overreliance on GenAI systems have been raised by students (Chen & Zhu, 2023) and health professionals (Samant et al., 2024), especially regarding issues such as inaccurate information, academic integrity, and fairness in learning assessments (Sok & Heng, 2023). These concerns reflect a broader issue: A potential loss of epistemic agency, which refers to the ability of students to actively contribute to the knowledge and practices within their learning communities (Miller et al., 2018; Scardamalia, 2002). In an era where seemingly coherent outputs can be effortlessly generated by AI, preserving human agency in the pursuit of understanding becomes critical. In fact, the importance of human agency was highlighted by UNESCO (2024) in the proposed AI competency framework. The affordances and limitations of various GenAI systems prompt an important question for educators: How should teaching and learning practices adapt to ensure that students retain agency in their pursuit of knowledge? The danger of losing epistemic agency is not limited to education; it has also been observed when AI influences political knowledge and beliefs (Coeckelbergh, 2022). Both human actors and intelligent machines are now recognized as possessing epistemic agency (Ponti et al., 2024), which challenges traditional ideas of knowledge construction and learning. As GenAI continues to advance, educators and learners must engage in this evolving process to ensure that the human element remains central to education while leveraging the potential of GenAI to enhance learning outcomes.

This conceptual article aims to contribute to this collective effort by first illustrating the nature of shared epistemic agency, in which humans and GenAI work collaboratively on the epistemic tasks at hand. We propose a framework that incorporates epistemic stances (the positions or viewpoints one adopts regarding the methods and criteria for acquiring and validating knowledge; Hofer & Pintrich, 1997; Kuhn et al., 2000) into work with GenAI via two modified assertions based on the conceptual framework proposed by Tsai (2004). Next, we analyze real-life human-GenAI interaction in light of human epistemic stances and possible factors that affect human-GenAI interaction. Finally, we outline the epistemic stances learners may take when working with GenAI and suggest strategies for interacting with GenAI to shape and facilitate learners’ epistemic stances. In essence, we argue that as GenAI becomes more powerful, people should develop more adaptive epistemic responses to augment their role as knowers.

Theoretical Foundation

Shared Epistemic Agency Between Humans and GenAI

Epistemic agency refers to the capacity of individuals or groups to actively shape the knowledge and practices in a community (Scardamalia, 2002). It reflects the degree of autonomy and influence individuals or groups wield across the entire spectrum of knowledge creation components—including goals, strategies, resources, and evaluation of results (Scardamalia & Bereiter, 2006). In classroom settings, promoting epistemic agency means shifting students’ roles from passive recipients of information to active participants who engage in inquiry activities and contribute meaningfully to the learning process (Stroupe, 2014). With the advent of GenAI, the concept of epistemic agency extends beyond its traditional understanding in the social and educational sciences, where it typically refers to the human capacity to act intentionally and influence social relationships or structures. The inclusion of nonhuman actors expands our understanding of how different entities interact to shape outcomes (Latour, 1996), demonstrating that agency is no longer exclusively possessed by humans but shared across both human and technological actors, such as GenAI. The human and GenAI form a network that includes not only the interaction between them but also the underlying algorithms, the data used to train the model, the hardware running the software, and the interface or modality through which the interaction takes place. Even though the interaction occurs overtly between the human actor and GenAI, each component—whether software, hardware, or interface—plays a critical role in the communication process. When users input a query or command, hoping for a specific type of response, GenAI interprets this input based on its programming and the data it has been pretrained on (Ubani et al., 2023). The output GenAI provides is its way of aligning with the user’s request, which might involve clarifying questions, providing information, or performing a task. 
Each step in this process involves a translation of intents and meanings, with both the human and GenAI adjusting and aligning their behaviors to maintain effective communication. Breakdowns occur occasionally, and perhaps often, such as when GenAI misinterprets a query because of the inherent limitations and built-in assumptions of its algorithms and data sets. Similarly, if a human uses the output from GenAI without any verification or modification, this decision might indicate imprudent judgment and a failure to assume epistemic agency on the part of the human actor.

Hence, an effective shared epistemic agency involves the intentional, collaborative effort of group members to create and advance shared knowledge objectives through coordinated knowledge-related and process-oriented actions (Damşa et al., 2010). For example, Khanmigo, the AI tutor powered by GPT-4 in Khan Academy, uses Socratic dialogue to guide students through mastering complex concepts (see https://www.khanacademy.org/khan-labs) by integrating process-oriented actions such as setting goals, monitoring progress, and providing constructive feedback. Simultaneously, it employs knowledge-related actions such as identifying knowledge gaps, facilitating discussions to create shared understanding, and encouraging the generation and negotiation of new ideas. However, the challenge arises: Without such a tailored AI tutor, could humans alone effectively master the cognitive and epistemic construction processes in a group and achieve similarly fruitful learning outcomes? Although general-purpose GenAI, such as ChatGPT, is capable and flexible, it requires adaptation (e.g., fine-tuning, RAG, or prompt engineering) to carry out these knowledge-related and process-oriented actions effectively. Given the autonomy of humans in shaping the entire knowledge spectrum, we argue that humans are the fundamental initiators and drivers of this shared epistemic agency between humans and GenAI.

Therefore, when interacting with GenAI, users who adopt a stance that treats the system as having epistemic agency see it as more than just a tool. They view GenAI as an entity capable of providing suggestions and solutions and clarifying confusion. The interaction between a human and GenAI transcends the simplistic model of a user merely commanding a machine. Instead, it represents a complex, symbiotic relationship where technology and humans influence each other to co-construct in a shared network. In this dynamic, GenAI adapts and responds based on the input it receives. Conversely, the human user should not be a passive operator but an active participant, initiating and shaping GenAI’s learning and output through continuous engagement.

The key component in this interactive dynamic is the fact that the human retains epistemic agency rather than relinquishing it to GenAI. This involves actively acquiring, questioning, verifying, and making sense of the content generated by GenAI. By adopting an epistemic stance that emphasizes critical engagement, users can effectively guide GenAI, helping to refine its responses and ensuring that the generated content is not only relevant but also reliable. This active participation is essential for leveraging GenAI’s capabilities fully while maintaining critical oversight over its operations and outputs, thereby fostering a mutually beneficial relationship that enhances both the technology’s utility and the user’s experience through a shared epistemic agency.

Humans’ Epistemic Stances and Related Factors That Contribute to the Formation of Shared Epistemic Agency With GenAI

People’s epistemic stance, or the position they adopt for acquiring and validating knowledge against specific criteria (Hofer & Pintrich, 1997; Kuhn et al., 2000), can be classified based on their level of epistemic thinking, progressing from an “absolutist” to “multiplist” to “evaluativist” orientation (Kuhn et al., 2000). Absolutists believe that there is a definitive right or wrong answer. Multiplists, on the other hand, argue that representations of the same event can conflict due to individuals’ unique interpretations; therefore, all claims could be considered equally valid because each representation is shaped by different perspectives, contexts, and experiences. Evaluativists, however, acknowledge that although multiple viewpoints exist, some claims hold more merit than others after a critical evaluation of knowledge claims based on established criteria. Epistemic stances influence cognitive processes and interactions. For example, evaluativists generate more diverse ideas during scientific argumentation and outperform absolutists in physics problems (Nussbaum et al., 2008). Multiplists, however, show less interaction and tend to agree with their partners. Additionally, evaluativists excel in juror reasoning and evidence representation compared to multiplists and absolutists (Weinstock, 2009). These findings demonstrate that people adopting different epistemic stances process the information available to them differently and engage with the task distinctly as they draw on underlying concepts and principles that guide their approach.

Building on this foundation, research has identified various factors influencing interactions between humans and GenAI. For example, students’ self-efficacy and trust in GenAI tools mediate the relationship between their GenAI use and learning performance (Shahzad et al., 2025). University students also exhibit greater interest in adopting GenAI for learning compared to their instructors, who tend to express stronger ethical concerns (Chan & Lee, 2023). Additionally, students’ motivational structures may impact their use of GenAI. Specifically, time pressure (e.g., an impending assignment deadline) and sensitivity to rewards (e.g., concerns about academic grades) positively predict GenAI use, whereas sensitivity to quality (e.g., students’ awareness of the quality of their learning) does not show a significant relationship with GenAI use (Abbas et al., 2024). Moreover, cognitive factors, such as prior knowledge, can influence the quality of prompts generated by users (Robertson et al., 2024), potentially resulting in distinct patterns and outcomes of human-GenAI co-construction. These findings suggest that human-GenAI shared epistemic agency may depend on the interplay of various factors. Amid the ongoing debate surrounding GenAI use in educational settings, there is a pressing need to investigate how human epistemic stances affect the dynamics of shared epistemic agency when people work with GenAI. Although a comprehensive exploration of these contributing factors is beyond the scope of this conceptual article, we focus on examining human-GenAI shared epistemic agency through the lens of epistemic stance and on exploring possible factors that may influence students’ epistemic stance when working with GenAI.

Conceptual Interpretation and Exploration

The Symbiotic Framework of Adaptive Epistemic Stances and Learning With GenAI

As GenAI systems become increasingly integrated into educational settings, traditional methods of instruction must evolve to accommodate the dynamic interplay between human learners and intelligent machines. To address this, we propose a symbiotic framework that incorporates an epistemic stance into the use of GenAI, emphasizing a shared epistemic agency between humans and GenAI, building on the work of Tsai (2004).

During the early surge of Internet use in education, Tsai (2004) proposed two key assertions: (a) Learners with different epistemic beliefs benefit differently from Internet-based instruction, and (b) the use of Internet-based instruction will change or reshape learners’ epistemologies.

Tsai’s (2004) assertions are grounded in the flexibility and openness of Internet-based instruction, characterized by self-directed information-seeking behavior using search engines to meet individual learning needs. This process inherently introduces complexities and challenges. Likewise, several key features characterize the development of GenAI, including generative adversarial networks, reinforcement learning, neural networks, large multimodal models, natural language processing, and the utilization of big data (Cao et al., 2023). These components work together to enable GenAI systems or agents to learn, adapt, and perform complex tasks by mimicking human-like cognitive processes. Meanwhile, GenAI advancements have created a more complex and uncertain learning environment than conventional Internet-based instruction, necessitating a novel approach to teaching and learning. Thus, our human-GenAI symbiotic framework incorporates two modified assertions from Tsai to foster shared epistemic agency with adaptive epistemic stances when learning with GenAI. This framework aims to create a learning environment where human and GenAI interactions support and co-construct knowledge, enhancing the overall learning experience.

Modified Assertion 1: Learners with varying epistemic stances benefit differently from learning with GenAI

Regarding the first assertion, Tsai (2004) posits that Internet-based environments can enable students with adequate background knowledge to acquire a more comprehensive understanding of a knowledge domain, which can cater to the needs of students with advanced epistemologies, such as evaluativism or commitment within relativism as described by Perry (1970). In contrast, students with less advanced epistemologies, such as dualism, and limited background knowledge may benefit more from traditional authority-based teaching emphasizing a high degree of certainty in knowledge.

We argue that while engaging with GenAI, learners with different epistemic stances derive varying benefits from their interactions. Unlike Internet-based learning environments where learners actively select from search engine results, learning with GenAI provides a cohesive package of information. Here, GenAI offers a coherent explanation and solution to queries without requiring learners to filter and assemble information from multiple sources. The output generated by GenAI is seemingly a more efficient way of learning, given that GenAI’s responses are curated and do not involve direct access to open Internet sources.

However, maximizing efficiency may not always be achievable when learning with GenAI. Despite GenAI’s ability to generate logical and coherent content, the accuracy and reliability of this information can be questionable. A study testing ChatGPT for literature reviews revealed that two-thirds of the references provided by ChatGPT were fabricated (Haman & Školník, 2024). Additionally, some models have produced content that is nonsensical, hallucinatory, and erroneous (Hannigan et al., 2024; Hicks et al., 2024). Notably, for problems with definitive answers (e.g., formulaic problems and logical puzzles), GenAI can provide the best match for a response; however, for ill-structured problems (e.g., conceptual questions) or problems without definitive answers, GenAI provides the most probable answer based on prompt inputs, available data, and probabilistic models, which may not always be correct (Plevris et al., 2023; Wang, 2023).

Learners’ epistemic stances play a crucial role in determining how effectively they interact with and benefit from learning with GenAI. Inherently, the three epistemic stances depict the evolution of justification for knowing (Bråten et al., 2014). This evolution begins with an absolutist phase, where no justification is deemed necessary; transitions to a multiplist stage, where personal justification forms the primary basis for knowledge claims; and culminates in an evaluativist phase. In the evaluativist phase, individuals possess a deep comprehension of inquiry procedures and recognize legitimate evidence in specific domains, enabling them to discern varying degrees of veracity in knowledge claims (Weinstock, 2009).

Thus, the epistemic stance users hold will affect not only the way they interact with GenAI but also the breadth, depth, and quality of information they can access. Absolutist learners, who consider knowledge as certain and derived from authority, seek clear, unambiguous information (Barzilai & Eshet-Alkalai, 2015). They may benefit from GenAI in straightforward, factual tasks; however, their tendency to seek clear-cut answers might limit their ability to critically evaluate or challenge GenAI’s responses in more complex or nuanced scenarios. Learners with a multiplist stance perceive knowledge as subjective, multifaceted, and justified by personal preferences and judgments (Barzilai & Eshet-Alkalai, 2015). They may view GenAI as an entity for exploring diverse viewpoints rather than verifying facts. As a result, they may be less focused on critically validating the accuracy of the information, potentially leading them to accept probabilistic outputs without sufficient verification. Finally, evaluativists tended to question and verify arguments more critically and consider alternative positions compared to multiplists and absolutists, who were either less critical about inconsistencies and misconceptions or were more likely to engage in argumentation to find the “right” answer (Nussbaum et al., 2008). When working with GenAI, an evaluativist user with adequate background knowledge may choose a centrifugal way of learning and opt to conduct a parallel search using Internet-based search tools to verify the information provided by GenAI consistently before proceeding with their respective task or discussing with other humans, for example, their peers. Thus, the ability to justify information from multiple sources may become a fundamental literacy in working with GenAI (Lee et al., 2024).

Modified Assertion 2: More frequent interaction with GenAI will change and reshape learners’ epistemic stance

As for the second assertion in Tsai (2004), Internet-based learning contains rich information, diverse perspectives, and viewpoints for open-ended exploration or debates on controversial issues. With adequate guidance and sufficient learner reflection, the open environment can facilitate students’ epistemic development via synchronous and asynchronous modes of collaborative learning. Efforts and initiatives promoting epistemic change have continued to grow in recent years (e.g., Kerwer & Rosman, 2020; Muis & Duffy, 2013). Fostering learners’ epistemic development is viewed as the primary goal in education because growing evidence shows that epistemic development is related to better learning strategies, academic achievement, comprehension, critical thinking, and teaching approaches (Guilfoyle et al., 2020; Kuhn, 2018; Lunn Brownlee et al., 2017; Yusuf et al., 2024). Likewise, we claim that learning with GenAI will change and reshape learners’ epistemic stance, especially when instructors intentionally provide the necessary scaffolding and comprehensive prompting.

As GenAI applications become increasingly prevalent, learners’ epistemic stances may evolve based on the extent of their active engagement and exploration in the learning environments facilitated by GenAI. Muis et al. (2006) suggested that epistemic development is intricate and shaped by social interactions as individuals actively construct meaning from their experiences. In particular, the progression of epistemic development is characterized by the dynamic interplay between the individual and the environment or social world (Hofer & Pintrich, 1997). Notably, the respective stances learners assume when working with GenAI will shape the dynamic social network that influences how users actively construct meaning, creating a unique GenAI learning climate. This climate, in turn, will continuously change and reshape users’ epistemic stances. As learners interact with GenAI and each other, their approaches to knowing and understanding evolve, contributing to a collective learning environment that promotes diverse ways of knowing and critical engagement with content. For example, in a creative design task, dyads used GenAI as a collaboration tool to build consensus, allowed discussion for prompting, and gradually reduced their reliance on GenAI (Han et al., 2024).

Particularly, evaluativist learners tend to seek alternative explanations and additional information, reflecting their advanced epistemic development (Nussbaum et al., 2008). However, the fear of seeking help, stemming from the stigma of appearing dumb, asking stupid questions, or being ridiculed, poses a significant obstacle to exploring truth and gaining knowledge (Bornschlegl et al., 2020; Brown et al., 2021; Micari & Calkins, 2021). One advantage of working with GenAI is that learners may be more willing to ask questions even when they lack proficiency in the subject domain. In addition to providing cognitive feedback, GenAI, through its anthropomorphic and affective design, can offer trust and emotional support (Maeda & Quan-Haase, 2024) to aid learners in persistently pursuing their epistemic tasks. GenAI resembles humans in some ways, but learners may feel less threatened when asking GenAI questions than when asking other humans. Therefore, this continuous interaction process can encourage learners to approach their tasks with the goal of mastering knowledge. As a result, learners may achieve better knowledge gains and validate information from different sources and perspectives through multiple conversation turns or parallel searches (Breakstone et al., 2021), leading to a more adaptive epistemic stance.

As suggested in Tsai (2004), with appropriate guidance and learner reflection, Internet-based instruction can foster learners’ advanced epistemology. Similarly, we contend that the dynamic interaction with GenAI supports the development of critical thinking and epistemic reflexivity, essential components of advanced epistemic stances. By continuously engaging with GenAI, learners are encouraged to take proactive steps in resolving doubts and conflicts, fostering a more active and inquiry-based approach to learning. Instructional guidance and learning strategies can be designed to enhance the iterative process of human-GenAI interaction, assisting learners to evolve from basic acceptance of information to more complex and evaluative ways of knowing.

Analysis of Human-GenAI Interaction in Terms of Epistemic Stances and Related Factors According to the Two Modified Assertions

For example, using actual dialogs between students and GenAI (e.g., ChatGPT) from homework assignments in an advanced graduate statistics course, we can analyze students’ epistemic stances based on their interaction processes with GenAI and examine potential factors that influence the dynamic of shared epistemic agency between GenAI and humans. The assignments involve tackling complex, real-world problems, which include formulating research questions or hypotheses, analyzing data using appropriate statistical methods, and interpreting the results while considering alternative explanations. Specifically, we build on the two modified assertions derived from Tsai (2004) to guide learners in completing homework assignments.

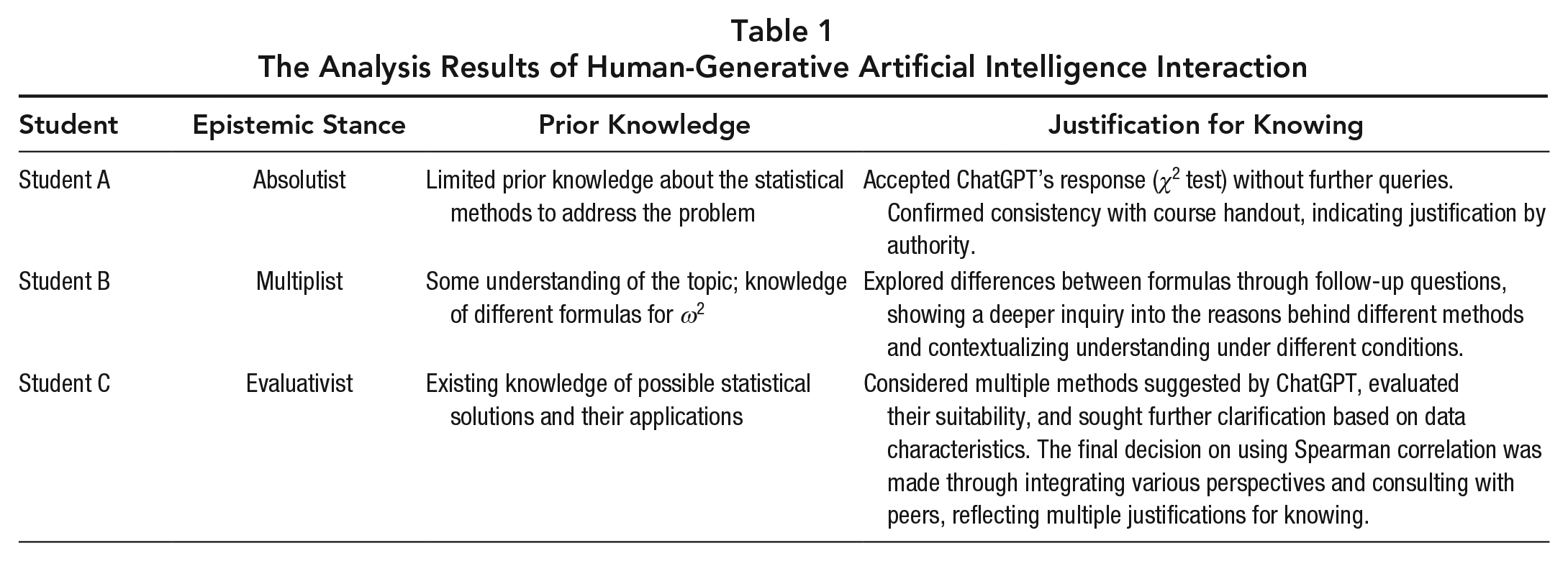

In line with the modified Assertion 1, we acknowledge that learners with varying epistemic stances benefit differently from learning with GenAI given their background knowledge. Thus, we encourage students to consult GenAI in completing their weekly assignments and, in line with the modified Assertion 2, require them to reflect on and document their interactions with ChatGPT and with peers, if any. The results of the human-GenAI interaction analysis are summarized in Table 1.

Table 1. The Analysis Results of Human-Generative Artificial Intelligence Interaction

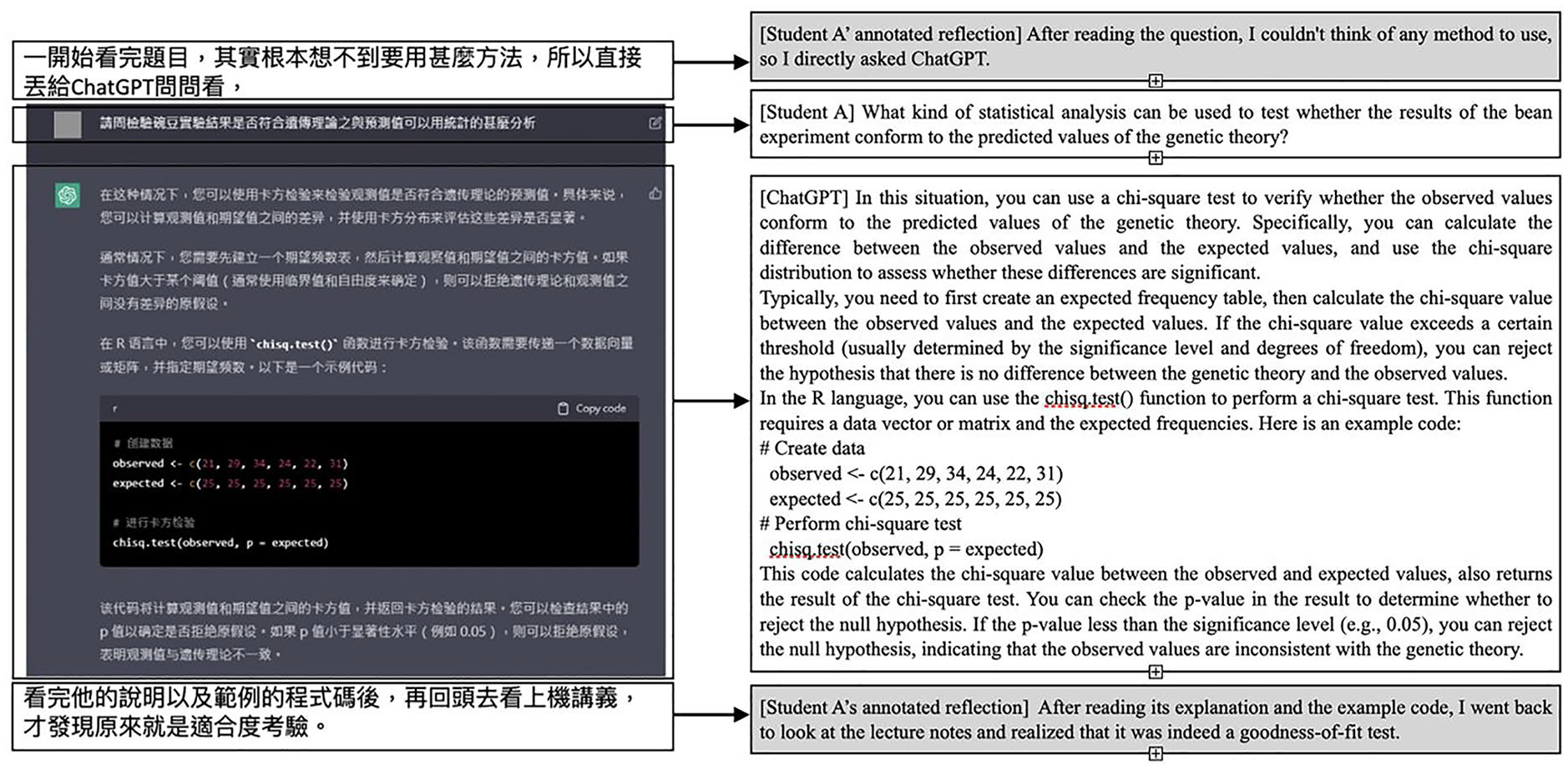

Figure 1 exhibits Student A’s interaction with GenAI. Student A commented he had no idea of the methods to address the statistical problem; thus, he asked ChatGPT what statistical method is appropriate to test whether the result of the pea experiment is aligned with the genetics theory. ChatGPT replied that Student A can use the chi-square test with the chisq.test() function. After reading the responses and examples provided by ChatGPT, Student A reviewed the course handout and found that it was the goodness-of-fit chi-square test, which ended this round of human-GenAI interaction.

Figure 1. Student A’s interaction dialog with ChatGPT (absolutist).

Student A’s interaction can be classified as an absolutist stance, in which Student A accepted the response provided by ChatGPT without further queries. Notably, Student A mentioned that he had no idea of the possible solutions, indicating his limited prior knowledge in solving the statistical problem. After he obtained feedback from ChatGPT, he checked the course handout and found that the ChatGPT response is consistent with what is described in the course handout, signifying his justification of knowing by authority. This interaction between Student A and GenAI showed that students’ prior knowledge, justification of knowing, and epistemic stances possibly affect the shared epistemic agency between human and GenAI. Given Student A’s limited background knowledge and less adaptive epistemic stance, he can benefit more from ChatGPT’s coherent explanations, supplemented by his active verification with authoritative sources.
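The goodness-of-fit check that Student A arrived at can be sketched in a few lines. The block below is a minimal illustration, not the course solution: the phenotype counts, the 9:3:3:1 ratio, and the function name `chi_square_gof` are assumptions introduced here, because the article does not report the assignment data. It computes the Pearson chi-square statistic and compares it with the tabled critical value for df = 3 at the .05 level.

```python
# Pearson chi-square goodness-of-fit statistic, standard library only.
# The counts and the 9:3:3:1 ratio are illustrative assumptions,
# not the data from the course assignment.

def chi_square_gof(observed, expected_ratio):
    """Return (statistic, degrees of freedom) for a goodness-of-fit test."""
    total = sum(observed)
    ratio_sum = sum(expected_ratio)
    # Expected counts if the theory's ratio held exactly
    expected = [total * r / ratio_sum for r in expected_ratio]
    statistic = sum((o - e) ** 2 / e for o, e in zip(observed, expected))
    return statistic, len(observed) - 1


observed_counts = [315, 108, 101, 32]  # hypothetical phenotype counts
theory_ratio = [9, 3, 3, 1]            # ratio predicted by the genetic theory

stat, df = chi_square_gof(observed_counts, theory_ratio)
CRITICAL_05_DF3 = 7.815  # chi-square critical value for alpha = .05, df = 3
consistent_with_theory = stat < CRITICAL_05_DF3
print(f"chi-square = {stat:.3f}, df = {df}, consistent = {consistent_with_theory}")
```

In R, the equivalent call would be along the lines of `chisq.test(observed, p = ratio / sum(ratio))`, which is the function ChatGPT pointed Student A to. Working through the statistic by hand, as above, is one way a learner can verify a GenAI suggestion against the course handout rather than accepting it on authority alone.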

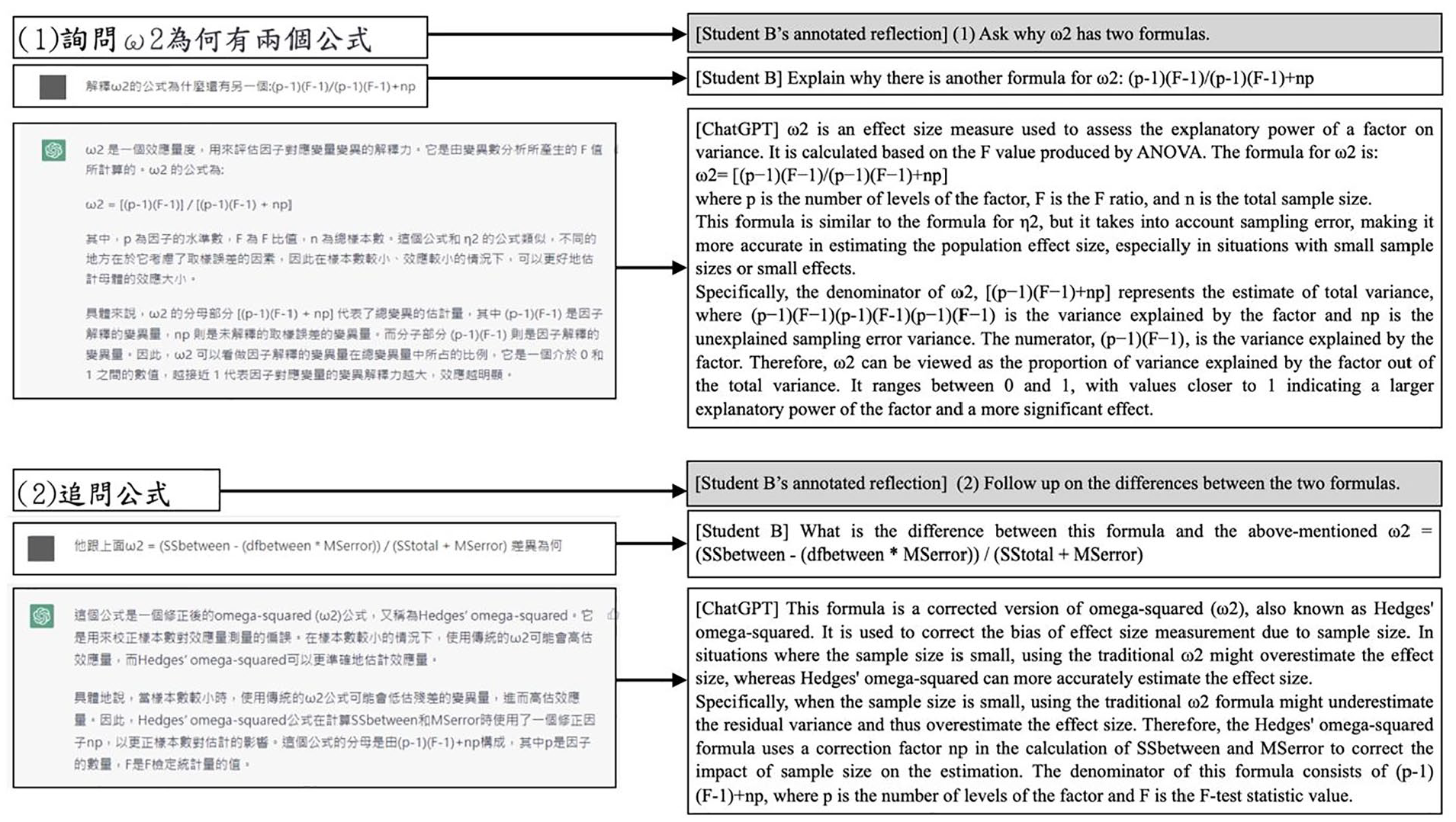

Figure 2 illustrates Student B’s interaction with GenAI. Student B asked ChatGPT why there is an alternative formula for calculating ω² as [(p – 1)(F – 1)] / [(p – 1)(F – 1) + np]. ChatGPT explained the components of the formula and specified the conditions when this formula can be optimally used. Student B further inquired how this formula differed from the original ω² = [SSbetween – df(between) × MSerror] / (SStotal + MSerror). ChatGPT responded that this modified formula could adjust for biased effect size estimates due to the small sample size.

Figure 2. Student B’s interaction dialog with ChatGPT (multiplist).

Instead of assuming there is a right or wrong answer, Student B inquired why two formulas exist for calculating ω². His intention to explore the differences between the formulas reflects a multiplist stance, aiming to contextualize his understanding of the formulas under different conditions. Rather than starting with no idea of the topic under inquiry, Student B asked in his initial question why there are two different formulas, which suggests that he had some understanding of the topic and was making a metacognitive attempt to distinguish its related aspects. His follow-up question delved deeper into the conditions that suit each formula.
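A learner in Student B’s position could also check the two ω² expressions numerically. The sketch below assumes a balanced one-way design with p groups of n observations each, and it takes the F-based form to be ω² = (p – 1)(F – 1) / [(p – 1)(F – 1) + np], the standard balanced-design expression. The data and the function name `omega_squared_both_ways` are hypothetical. Under these assumptions, the two formulas are algebraically the same quantity, so the code should return matching values.

```python
# Compare the sums-of-squares form and the F-based form of omega squared.
# Assumes a balanced one-way design; the scores are hypothetical.

def omega_squared_both_ways(groups):
    """Return (omega2 from sums of squares, omega2 from F) for balanced groups."""
    p = len(groups)          # number of groups
    n = len(groups[0])       # observations per group
    if any(len(g) != n for g in groups):
        raise ValueError("the F-based shortcut assumes equal group sizes")
    total_n = p * n

    grand_mean = sum(sum(g) for g in groups) / total_n
    group_means = [sum(g) / n for g in groups]

    ss_between = n * sum((m - grand_mean) ** 2 for m in group_means)
    ss_total = sum((x - grand_mean) ** 2 for g in groups for x in g)
    ms_error = (ss_total - ss_between) / (total_n - p)
    f_ratio = (ss_between / (p - 1)) / ms_error

    from_ss = (ss_between - (p - 1) * ms_error) / (ss_total + ms_error)
    from_f = ((p - 1) * (f_ratio - 1)) / ((p - 1) * (f_ratio - 1) + total_n)
    return from_ss, from_f


scores = [[4, 5, 6, 5], [6, 7, 8, 7], [9, 8, 10, 9]]  # hypothetical data
omega_ss, omega_f = omega_squared_both_ways(scores)
print(f"from sums of squares: {omega_ss:.4f}, from F: {omega_f:.4f}")
```

The agreement follows from substituting SSbetween = (p – 1) × F × MSerror and SStotal = SSbetween + (np – p) × MSerror into the sums-of-squares form. The F-based version is mainly a convenience when only F, p, and n are reported.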

In contrast to traditional Internet searches that yield identical results for the same queries, posing the same question to GenAI repeatedly prompts it to generate responses that draw on the same core knowledge or training data but paraphrase, summarize, or reorganize it differently. GenAI’s underlying algorithm is primarily designed to generate responses by predicting the most probable sequence of words based on patterns learned from a large and diverse dataset, rather than by retrieving and synthesizing information directly from external sources. If the user provides additional examples or asks for clarification using prompt strategies, such as few-shot in-context learning (H. Liu et al., 2022), the model conditions on this new context and tailors its response accordingly. This may involve offering more detailed explanations, presenting alternative perspectives, or providing additional information relevant to the new context based on its extensive training data. Thus, asking follow-up questions can be a way of exploring multiple perspectives because it encourages deeper inquiry and consideration of different viewpoints.
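The contrast between a deterministic search result and a probabilistic generation can be illustrated with a toy sampler. The following sketch is a deliberate simplification and does not describe how any particular GenAI product is configured: the three candidate answers, their scores, and the function names are invented for illustration. It shows that always taking the most probable option gives the same answer every time, whereas sampling from the probability distribution can give different answers to the same question.

```python
# Toy illustration of greedy selection versus probabilistic sampling.
# The candidate answers and their scores are invented for this example.
import math
import random


def softmax(logits, temperature=1.0):
    """Convert raw scores into probabilities; lower temperature sharpens them."""
    scaled = [value / temperature for value in logits]
    peak = max(scaled)  # subtract the maximum for numerical stability
    weights = [math.exp(value - peak) for value in scaled]
    total = sum(weights)
    return [w / total for w in weights]


def greedy_token(tokens, logits):
    """Always return the single most probable candidate."""
    best = max(range(len(tokens)), key=lambda i: logits[i])
    return tokens[best]


def sample_token(tokens, logits, temperature, rng):
    """Draw one candidate in proportion to its probability."""
    probabilities = softmax(logits, temperature)
    return rng.choices(tokens, weights=probabilities, k=1)[0]


candidate_tokens = ["independence", "homogeneity", "McNemar"]  # hypothetical
toy_logits = [2.0, 1.5, 0.5]                                   # hypothetical

rng = random.Random(0)
draws = [sample_token(candidate_tokens, toy_logits, 1.0, rng) for _ in range(200)]
distinct_answers = set(draws)
print("greedy:", greedy_token(candidate_tokens, toy_logits))
print("sampled answers seen:", sorted(distinct_answers))
```

Read this way, receiving a different suggestion after repeating a question is expected behavior of a probabilistic system, not necessarily a correction, which is why the learner’s own evaluation of each suggestion matters.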

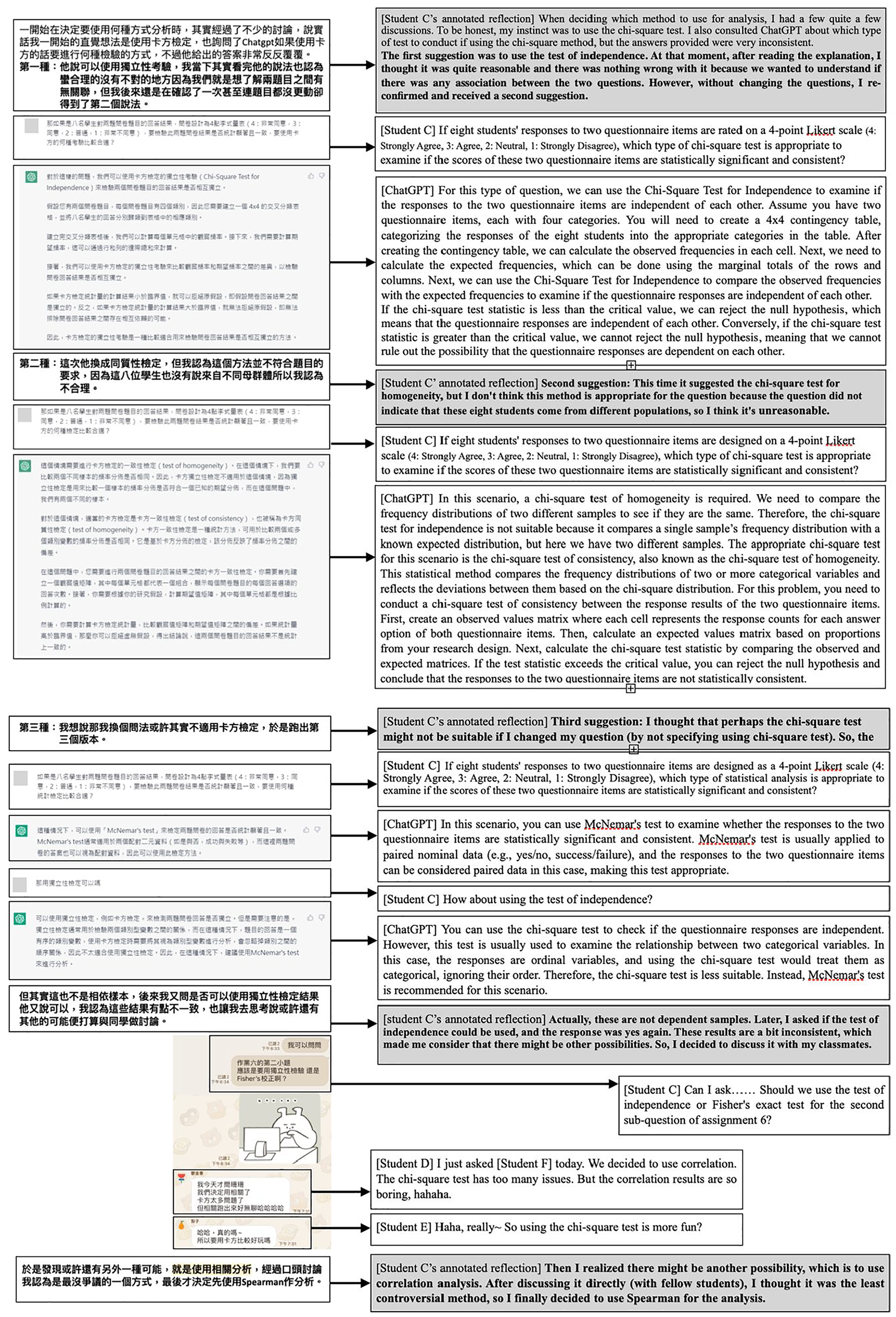

Figure 3 presents Student C’s interaction with GenAI. The homework problem requires students to examine whether eight students’ responses are consistent across two questionnaire items measured on a 4-point Likert scale. Student C started with the idea of using the χ² test and asked ChatGPT whether it was an appropriate method. ChatGPT recommended the χ² test for independence, which confirmed his original thought. However, when Student C asked ChatGPT the same question again, he was given the test of homogeneity as another solution. He questioned this approach because nothing indicated that the eight students were from different populations. Thus, he asked a more general question: which statistical hypothesis testing approach is suitable in this context? ChatGPT replied that McNemar’s test can be applied by treating the two questionnaire items as paired data. Student C followed up and asked whether the test of independence was feasible. ChatGPT replied that the χ² test can be used to test whether responses on questionnaire items are independent when the data are categorical; however, given that responses are measured on an ordinal scale in the current case, McNemar’s test is a more proper approach. Student C evaluated the adequacy of this approach (the data are not a paired sample) and checked with ChatGPT again about the original method (i.e., Is it okay to use the test for independence?). Student C reassured himself that the data are not from a paired sample and decided to discuss other possible approaches with fellow students. Finally, he determined that the Spearman correlation would be the least controversial choice.

Student C’s interaction dialog with ChatGPT (evaluativist).

Student C considers alternative methods suggested by ChatGPT, such as the test of homogeneity and McNemar’s test, and evaluates their suitability based on the specific context of the data. Student C also seeks clarification on the applicability of these methods based on the scale of the data (categorical vs. ordinal) and the specific research question (independence vs. homogeneity). Student C’s self-reflection on the adequacy of these statistical approaches exhibited his existing knowledge base of possible solutions that may be applied in different contexts. The process of resorting to ChatGPT repeatedly reflects a consideration of multiple perspectives and sources of information to arrive at a well-justified decision. In the end, Student C decided to use the Spearman correlation, which he determined to be the least controversial approach. This decision was based on a thorough evaluation of different sources, including both ChatGPT and other human collaborators, and aligned with the multiple justification approach because Student C integrated various perspectives and methods to arrive at a conclusion.
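For readers unfamiliar with the method Student C settled on, the following minimal sketch shows how a Spearman rank correlation could be computed for two ordinal items with tied responses. The eight response pairs are made-up illustrative values, not the homework data, and the function names are our own. The sketch assumes that neither item is constant across respondents, because a constant item would make the denominator zero.

```python
from statistics import mean


def average_ranks(values):
    """Rank values from 1 to n, giving tied values the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j to cover the whole run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        tied_rank = (i + j) / 2 + 1  # mean of the 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = tied_rank
        i = j + 1
    return ranks


def spearman_rho(x, y):
    """Spearman's rho: the Pearson correlation computed on the average ranks."""
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = mean(rx), mean(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    return cov / (var_x * var_y) ** 0.5


# Hypothetical responses of eight students to two 4-point Likert items.
item_1 = [1, 2, 2, 3, 3, 4, 4, 4]
item_2 = [1, 1, 2, 3, 4, 3, 4, 4]
rho = spearman_rho(item_1, item_2)

if __name__ == "__main__":
    print(f"Spearman rho = {rho:.2f}")
```

Because the coefficient is computed on ranks, it uses only the ordering of the response categories, which is consistent with treating Likert responses as ordinal.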

By analyzing students’ interaction process with ChatGPT in completing their homework assignments, we observed that epistemic stance plays a crucial role in how individuals acquire and validate knowledge, significantly influencing their cognitive processes and interactions. Prior knowledge and justification for knowing also emerge as key factors shaping one’s epistemic stance. For instance, an absolutist stance, often associated with limited prior knowledge, involves accepting information as definitive without further inquiry, as shown in Student A’s reliance on ChatGPT’s response. In contrast, a multiplist stance, exemplified by Student B, reflects an understanding that multiple perspectives (e.g., two ω2 formulas) exist and involves exploring these differences. An evaluativist stance, as demonstrated by Student C, incorporates critical evaluation of various knowledge claims (e.g., χ2, McNemar’s test, or Spearman correlation) to determine the most appropriate approach.

Previous research revealed that students with more prior knowledge were more likely to fully understand different claims by evaluating the credibility and veracity of online information (Mason et al., 2011). In contrast, students with limited knowledge are more likely to accept expert testimony without question because they feel they lack the ability to challenge the expert’s claims (Muis et al., 2016). This unquestioning acceptance of authority is influenced by the students’ perceived lack of prior knowledge, leading to greater reliance on experts and more certain beliefs based on authority.

Aligned with the two modified assertions based on Tsai (2004), similar findings were observed in the current human-GenAI interaction examples. For Assertion 1, learners with varying epistemic stances benefit differently from learning with GenAI. More knowledgeable learners (Student C) tended to reflect on the trustworthiness and adequacy of the method proposed by GenAI and demonstrated more adaptive ways of justifying their knowledge, such as combining personal justification, justification by authority, and multiple justifications. Through these justification and verification processes, they discern various similar ideas and come to understand the content fully. In contrast, less knowledgeable learners, like Student A, may directly accept GenAI’s response and rely on authority sources (e.g., course handouts) to build essential knowledge about the course content.

For Assertion 2, as learners actively interact with GenAI—whether through querying, analyzing its responses, or reflecting on its suggestions—their understanding of knowledge claims can deepen. This gradual evolution occurs as learners confront the limitations and strengths of GenAI, particularly when they are required to verify information or source data or evaluate probabilistic answers provided by the system. Such processes can shift learners from an absolutist or multiplist stance toward an evaluativist orientation, where they become more adept at critically evaluating evidence and discerning the quality of information. However, this transformation is not automatic. The impact of frequent GenAI interaction on learners’ epistemic stance is contingent on the presence of appropriate instructional scaffolding and reflection, as indicated by Tsai (2004). In the following, we discuss pedagogical and technical strategies that can promote learners’ epistemic stance when engaging with GenAI.

Implication and Conclusion

The varying epistemic stances, which are contingent on prior knowledge and methods of justification for knowing, underscore the importance of developing instructional strategies that promote human and GenAI interaction. When students have more knowledge, they tend to evaluate the credibility and sufficiency of information critically, using a mix of personal experience, authoritative sources, and multiple forms of justification. Conversely, students with less knowledge often accept information from authoritative sources without question. Even with frequent engagement with GenAI, without proper pedagogical strategies or prompting aids, their epistemic stances may remain less adaptive or deteriorate, leading them to accept arbitrary information without actively evaluating its credibility. Therefore, it is crucial for educators to apply pedagogical strategies in creating learning environments that encourage all students to engage in deeper, more critical analysis of information provided by GenAI. This learning climate can be achieved through activities that stimulate questioning, a comparison of different sources, and the application of diverse justification methods. By doing so, educators can help students develop the skills needed to independently assess the validity of information, fostering a more nuanced and adaptive approach to learning.

Specifically, instructional strategies that encourage verification and sourcing behaviors—such as designing and implementing activities for learners to cross-check GenAI’s responses with credible sources, compare and contrast information from multiple sources, engage in metacognitive reflection, or explore alternative viewpoints (Hannigan et al., 2024; Lee et al., 2024; Robertson et al., 2024; Tankelevitch et al., 2024)—are essential in fostering deeper epistemic development. Although GenAI alone may not directly alter learners’ epistemic stances, the combination of frequent interaction with GenAI, proper instructional scaffolds, and learner reflection can contribute to a gradual evolution of their epistemic stance. This process enhances learners’ ability to critically engage with AI-generated knowledge, leading to more meaningful learning outcomes.

Alternatively, technical strategies, such as role-playing, chain of thought, and RAG, can be employed effectively for students with limited prior knowledge to help them engage in context-aware human-GenAI interactions. With role-playing prompts (X. Liu & Ni, 2024), learners with limited prior knowledge can guide the GenAI to adjust its output to suit their level of understanding. For example, by asking the GenAI to explain the χ2 test as if explaining to a 10-year-old, the learner can receive a simplified and more accessible explanation. This approach not only makes complex concepts easier to grasp but also allows learners to engage with the material in a way that matches their current knowledge and cognitive level. With the chain-of-thought strategy, learners ask the GenAI to reason through its output step by step, which can improve the GenAI’s performance by yielding less biased and more accurate output (Vacareanu et al., 2024; Wei et al., 2022). Instructors are encouraged to provide learners with RAG-based GenAI chatbots. RAG-based chatbots can help by delivering tailored and relevant knowledge that incorporates user profiles and information from reliable sources (Shi et al., 2024), minimizing hallucinations and maintaining consistent responses (Niu et al., 2024; Shuster et al., 2021).
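The three prompting strategies described above can be sketched as simple prompt templates. This is an illustration only: the function names and the exact wording of each template are our own assumptions rather than templates taken from the cited studies, and no GenAI service is called. The RAG template covers only the final augmentation step, assuming that the passages have already been retrieved from a reliable source such as course materials.

```python
def role_play_prompt(question, audience="a 10-year-old"):
    """Role-playing: ask the model to pitch its answer at a stated audience."""
    return f"Explain the following as if you were talking to {audience}: {question}"


def chain_of_thought_prompt(question):
    """Chain of thought: ask for step-by-step reasoning before the answer."""
    return (
        f"{question}\n"
        "Work through your reasoning step by step before giving a final answer."
    )


def rag_prompt(question, passages):
    """RAG (augmentation step only): ground the answer in supplied passages.

    `passages` is assumed to come from a separate retrieval step that is
    not shown here.
    """
    numbered = "\n".join(f"[{i}] {p}" for i, p in enumerate(passages, start=1))
    return (
        "Answer using only the numbered sources below and cite them by number. "
        "If the sources do not contain the answer, say so.\n"
        f"Sources:\n{numbered}\n"
        f"Question: {question}"
    )


if __name__ == "__main__":
    q = "When is the chi-square test of independence appropriate?"
    print(role_play_prompt(q))
    print(chain_of_thought_prompt(q))
    # The passage below is a placeholder, not a quotation from any real handout.
    print(rag_prompt(q, ["(placeholder passage from a course handout)"]))
```

Keeping the strategies as separate templates lets an instructor or learner combine them, for example by passing a role-play prompt as the question of the RAG template.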

In addition to the foremost factors we have explored, such as learners’ epistemic stances, prior knowledge, and justification for knowing, other important characteristics, such as motivational structure, interest, and self-efficacy, may also play a critical role in shaping human-GenAI interactions (Abbas et al., 2024; Chan & Lee, 2023; Shahzad et al., 2025). These learner attributes may significantly affect how individuals engage with and benefit from GenAI systems. Understanding these factors can provide deeper insights into how learners navigate GenAI-facilitated environments. Future research could delve into these characteristics to develop more tailored approaches, optimizing the effectiveness of GenAI systems in educational settings.

This conceptual article emphasizes that integrating GenAI into education necessitates a rethinking of traditional teaching methods and a redefinition of epistemic agency that accounts for both human and machine contributions. In this evolving educational landscape, learners must not passively accept GenAI-generated content but instead actively engage with it through critical questioning, verification, and the use of prompting strategies. By doing so, they can shape GenAI’s responses, ensuring the reliability, relevance, and accuracy of the information provided. This active engagement is key to maintaining human epistemic agency in a setting where machine-generated knowledge plays a significant role.

This shared epistemic agency between humans and GenAI creates opportunities for mutual intellectual growth (Wu et al., 2021). To fully leverage this potential, learners must adopt an adaptive epistemic stance—ways of thinking about and engaging with knowledge that can evolve based on contextual scenarios, new information, and situational feedback—for effective interaction with GenAI. To foster this adaptability, a symbiotic learning environment can be designed that provides real-time detection and representation of human, GenAI, and collective epistemic belief networks combined with diagnosis, scaffolding, and personalized pedagogical feedback from experts (Wu & Tsai, 2022). This personalized feedback is crucial in helping learners critically evaluate AI-generated content, integrate new insights, and grow intellectually alongside GenAI by reflecting on their learning strategies and adjusting to evolving knowledge and complexity. Simultaneously, it is important to acknowledge the challenges and limitations of GenAI in knowledge creation, preparing learners for a future where human-GenAI collaboration plays a central role in learning, problem-solving, and knowledge construction.