Abstract

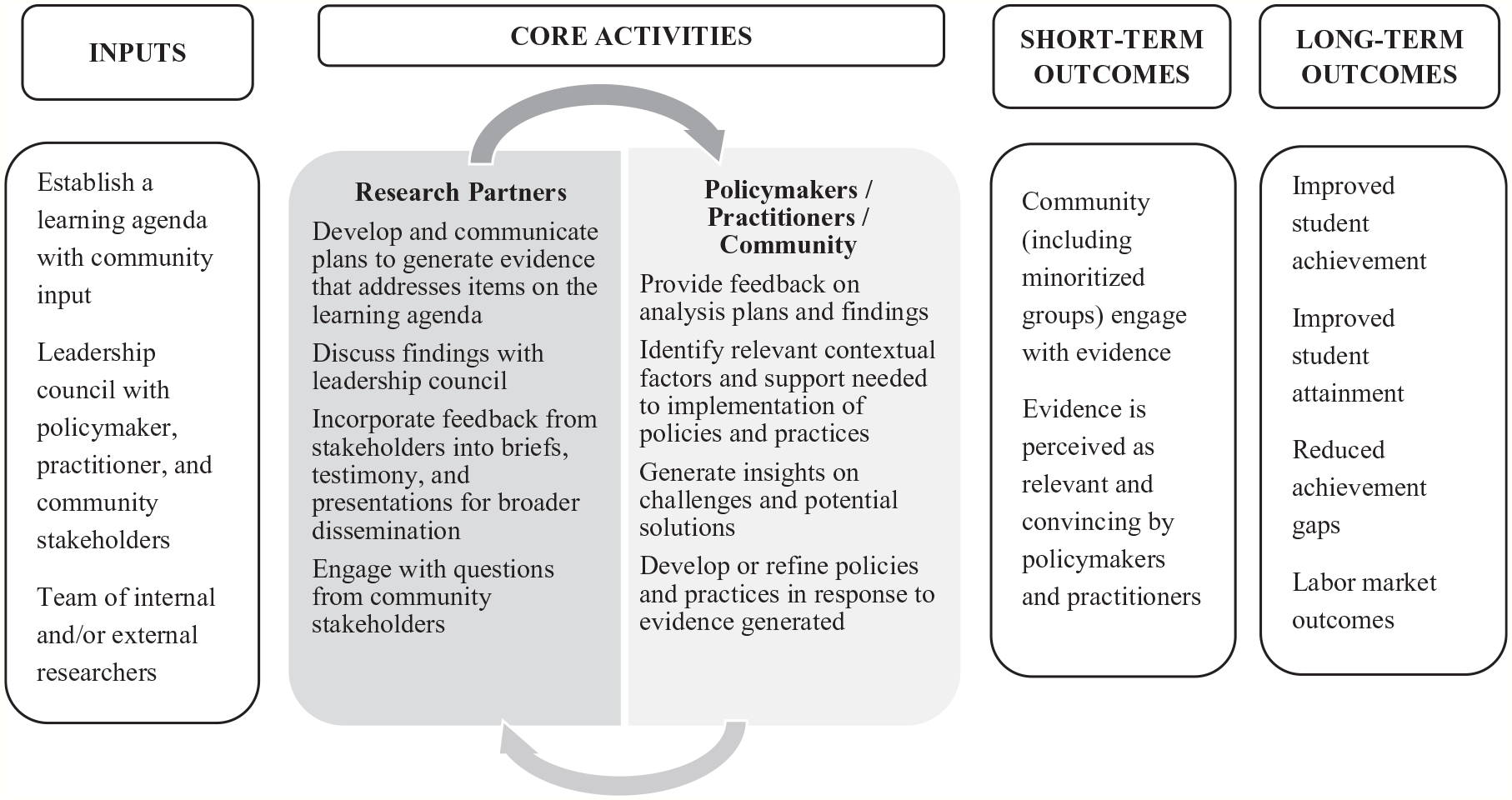

This essay offers a framework for broader community involvement as a means of increasing the relevance and usefulness of evidence developed. This essay begins by defining key concepts related to democratizing the development of evidence. The sections that follow outline a logic model that calls for a bidirectional, iterative set of core activities to draw on insights from practitioners, community stakeholders, and researchers to generate a learning agenda, analysis plans, and findings that can be used to develop or refine policies and practices. The essay concludes with implications for advocates of democratizing the development of evidence and for researchers.

Keywords

With humility, researchers and practitioners can acknowledge the limitations of what they “know,” remain open to the possibility of being wrong, and be willing to learn and improve their own knowledge and work over time.

Introduction

Over the past two decades, federal education policies have spurred the development of research evidence—evidence derived when systematic methods and analyses are used to address questions (William T. Grant Foundation, n.d.). Since its inception in 2002, the Institute of Education Sciences has awarded research grants, provided independent evaluations of federally funded education programs, and synthesized existing evidence about educational practices, programs, and policies through the What Works Clearinghouse (Institute of Education Sciences, 2018). Under the most recent reauthorization of the Elementary and Secondary Education Act, the 2015 Every Student Succeeds Act (ESSA), educational leaders are required to justify certain spending decisions with evidence (U.S. Department of Education, 2016).

The rationale behind encouraging evidence development and use is straightforward: evidence-informed decisions should yield better policies and practices, ultimately resulting in better educational outcomes. Yet this rationale rests on the assumption that educators and policymakers will be open to evidence that suggests a need for improvement or that comes from different settings. Education leaders may feel pressure to avoid appearing to lack confidence in an established vision or set of reforms (Corcoran et al., 2001), and publicly elected school boards and the superintendents they appoint face disincentives to be transparent about evidence that identifies weaknesses, since voters tend to remember bad news over good (Nielsen & Moynihan, 2017). Additionally, policymakers and practitioners have strong preferences for internal sources of evidence (Finnigan et al., 2013; Massell et al., 2012), and thus may be skeptical of the relevance of evidence developed outside their context.

One potential avenue to counter the tendency to disregard or selectively attend to evidence is democratizing evidence—enabling a variety of stakeholders to participate in the production of evidence to inform educational improvement (Tseng et al., 2018). Providing opportunities for stakeholders to offer input on what questions are asked and answered should result in an evidence base that is more relevant to the concerns of stakeholders, including the communities served by educational institutions. Greater transparency in the evidence development process and responsiveness to stakeholder concerns may increase the community’s desire to act on findings (Nelson et al., 2015), creating incentives for leaders to consider evidence in decision-making processes.

The purpose of this essay is to establish a framework for broader community involvement as a means of increasing the relevance and usefulness of evidence developed. Similar to the set of boundaries Collins (2019) established for “deliberative culture,” this essay offers principles for a democratic approach to evidence building. First, a diverse group of stakeholders, including communities marginalized by systemic inequalities, should have the opportunity to share viewpoints. Incorporating the perspectives of stakeholders can heighten attention to systemic racism and disenfranchisement of minoritized communities in the process of generating evidence, yielding an evidence base that can inform efforts to improve equity (Ming & Goldenberg, 2021). Second, those leading the process agree to pursue a common interest over individual self-interests, taking into consideration the extent to which actions are expected to benefit the public as opposed to being used for private gain (Hutt & Polikoff, 2020).

In the education space, adherence to the democratic principles can be seen in school board meetings that involve both constituent participation and deliberation by policymakers (Collins, 2021) and in school district outreach to gather stakeholder input on specific topics. A recent study found that viewing a school board meeting in which the board provided both opportunities for people to comment and responses to the commenters increased respondents’ trust in local officials and their willingness to attend school board meetings in the future (Collins, 2021). Deliberative civic engagement processes, such as participatory budgeting, allow community members to learn, listen, debate, and deliberate about the allocation of public resources, and can increase community trust in leadership and democratic institutions (Roza, 2021).

The remainder of this essay begins with definitions of key concepts related to democratizing the development of evidence. The sections that follow outline a logic model (see Figure 1) that calls for a bidirectional, iterative set of core activities to draw on insights from practitioners, community stakeholders, and researchers to generate a learning agenda, analysis plans, and findings that can be used to develop or refine policies and practices. The essay concludes with implications for advocates of democratizing the development of evidence and for researchers.

Figure 1. Logic model for democratizing evidence use.

Defining Key Concepts Related to Democratizing Evidence

Learning Agendas

Gathering stakeholder input on what evidence is needed, and for what purpose, can inform plans for identifying and addressing priority questions relevant to the programs and policies of an agency. These plans, known as learning agendas, facilitate development of evidence that supports organizational learning and improves decision making (Office of Evaluation Sciences, n.d.). The Evidence Act toolkit encourages frequent internal and external stakeholder engagement throughout the process of setting a learning agenda (Office of Evaluation Sciences, n.d.).

Evidence

Addressing the questions of a learning agenda involves developing research evidence through the systematic collection and analysis of appropriate sources of data. Stakeholders in the education community may view a variety of data sources as evidence. For example, elected school board officials view experience, testimony, examples, law, and policies as types of evidence (Asen et al., 2012), and education leaders and teachers view student performance data as an especially credible source of evidence (Finnigan et al., 2013). To meet the definition of research evidence, these sources of data would need to be appropriate for the questions asked, and systematically collected and analyzed.

To illustrate the distinction between data sources and evidence, consider the question of whether schools are improving student achievement. Education stakeholders may look to proficiency rates for answers, but schools where many students are well below proficiency might show little improvement in proficiency rates, even if students’ underlying test scores improve considerably (Shafer, 2016). Given the question, year-to-year changes in test scores are a more appropriate source of data than proficiency rates, with the appropriate method for analyzing test scores depending on the interpretations stakeholders wish to make (Castellano & Ho, 2013).
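The contrast between proficiency rates and score changes can be made concrete with a small numerical sketch. The scores, the cutoff of 300, and the uniform 15-point gain below are invented for illustration and are not drawn from any of the cited studies; the point is only that a school can post the same proficiency rate in two years while every student's score rises.

```python
# Hypothetical scale scores for one school in two consecutive years.
# The cutoff and all score values are invented for illustration only.
PROFICIENCY_CUTOFF = 300

scores_year1 = [220, 235, 240, 250, 260, 270, 310, 320]
scores_year2 = [s + 15 for s in scores_year1]  # every student gains 15 points


def proficiency_rate(scores, cutoff=PROFICIENCY_CUTOFF):
    """Share of students scoring at or above the proficiency cutoff."""
    return sum(s >= cutoff for s in scores) / len(scores)


def mean_score(scores):
    """Average scale score across students."""
    return sum(scores) / len(scores)


rate_change = proficiency_rate(scores_year2) - proficiency_rate(scores_year1)
mean_change = mean_score(scores_year2) - mean_score(scores_year1)

print(f"Change in proficiency rate: {rate_change:.2f}")
print(f"Change in mean score: {mean_change:.1f}")
```

In this made-up school, most students start well below the cutoff, so none of them crosses it despite the gain. The proficiency rate is flat while the mean score rises by 15 points, which is the pattern the paragraph above warns about.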

Defining Evidence in Light of Democratic Principles

What constitutes relevant and convincing evidence (Gordon & Conaway, 2020), and who gets to decide? Adherence to the democratic principles requires considering stakeholders’ concerns. Practitioners may be interested in evidence that can be used to inform and improve program implementation, while federal policy guidance privileges evidence of causal impacts. An agenda informed by the concerns of stakeholders may include a range of methodological approaches that allow for describing conditions, implementation, or outcomes; comparing conditions, implementation, or outcomes to established criteria or to alternative conditions; or estimating the causal impact of a program or policy on a desired outcome (Office of Evaluation Sciences, n.d.).

Developing relevant and convincing evidence also requires consideration of the outcomes that stakeholders value. Education agencies at the federal, state, and local levels attend to evidence derived from standardized tests, yet community stakeholders may view students’ sense of safety and belonging at school as valued outcomes. Historically, academic concerns have been privileged over practical concerns (Ming & Goldenberg, 2021), and conventional assumptions tend to center white middle-class values, taking a deficit lens to minoritized students (Kirkland, 2019). Navigating differences in what types of evidence stakeholders find relevant and convincing is a challenging but essential aspect of democratizing evidence.

Deliberative democratic structures could add value during the generation of evidence in response to the questions, as stakeholders contribute insights about local context. Democratic structures also offer an opportunity to incorporate a more diverse range of perspectives in evidence interpretation. These three approaches to applying democratic principles to evidence building align to the outputs of the logic model in Figure 1: a learning agenda, an analysis plan, and findings that reflect stakeholder input. In the sections that follow, the key inputs, core activities, and challenges to building evidence in accordance with democratic principles are explored in more detail.

Key Inputs in Democratizing Evidence

Key inputs in the logic model include a leadership council, a team of internal or external researchers and brokers, and time and space for events. In keeping with the principles of democracy, the leadership council should include representation from key stakeholder communities to ensure that the learning agenda reflects the questions of educators seeking to improve their work, as well as parents and community groups invested in improving educational outcomes (Jackson et al., 2021; Tseng et al., 2018). To foster the development of learning agendas that intentionally benefit communities of color (Michener, 2020), minoritized communities that have traditionally had less voice in decision making should be included. While inclusion, by itself, is insufficient to realize democratic ideals, drawing on different perspectives is a step toward an evidence base that is relevant and convincing to a variety of stakeholders.

Developing and enacting a learning agenda is likely to require substantial analytic capacity. While educational agencies often have data-savvy staff, they may have limited time available due to the demands of compliance and accountability reporting. Leaders could reallocate tasks so that these staff have dedicated time to address the learning agenda, or hire additional staff. Advantages to having research partners embedded in an education agency include opportunities to establish relationships and build trust with practitioners, awareness of the intricacies of administrative data, and knowledge of the local context (Conaway et al., 2015). As a result, internal research capacity enables quick-turnaround analyses in a time frame aligned with decision-makers’ needs.

Many education agencies have turned to external research partners or developed research-practice partnerships for additional expertise. External research partners may be better positioned to investigate long-term research questions, conduct resource-intensive data gathering, or take on work for which political independence is needed (Conaway et al., 2015). Research-practice partnerships involve long-term collaborations between researchers and practitioners, such as research alliances, design research partners, and networked improvement communities, as well as the Regional Educational Laboratories funded by the U.S. Department of Education (Tseng et al., 2017). The National Network of Education Research-Practice Partnerships website (National Network of Education Research-Practice Partnerships, n.d.) contains numerous resources to support research partnerships.

The work of creating norms and routines that allow for connections across different stakeholder groups is often carried out by “brokers” who bridge the research and policy/practice worlds (Conaway, 2019). Brokers may serve a variety of roles, such as developing partnership governance and administrative structures, designing processes and communications routines, sharing knowledge of research and practice within organizations, and translating research to increase accessibility for a general audience (Hopkins et al., 2019; Neal et al., 2015; Wentworth et al., 2021).

Time and space for events are crucial inputs for democratizing evidence, particularly in communities where democratic structures are not the norm. The leadership council could host meetings to solicit input from community groups and engage in deliberative discourse. Time and space are also critical for planning, negotiation, and developing trust with research partners. In an example from Detroit, community partners and researchers participate in regular meetings and seasonal retreats for team building, information sharing, and critical reflection (Wilson, 2021). Nelson et al. (2015) found that data managers were often protective of their agency’s data, so research partners established protocols to facilitate data sharing and performed favors such as collecting data or conducting small within-agency analyses to build trust. Involving data managers in leadership council meetings could facilitate enactment of the learning agenda.

Lessons from a partnership in which schools, after-school programs, and public agencies came together to conduct research with university partners suggest that research partners need to demonstrate commitment to prioritizing community agencies’ goals (Nelson et al., 2015). Researchers repositioned themselves as invited collaborators (rather than leaders) in community-driven working groups, taking on the role of coaching agency staff on how to ask questions that were both researchable and actionable. High-quality, relevant research heightened the community’s interest in acting on findings (Nelson et al., 2015).

Codevelopment of Learning Agendas

Including practitioners and community members in the process of developing the learning agenda is key to democratizing evidence (Tseng et al., 2018). The core activities of the logic model depicted in Figure 1 include engagement with practitioners and community stakeholders to generate insights on challenges and potential solutions. While the strategic plans of the state education agency or school district can serve as a starting point for identifying evidence needs, involving stakeholders early in the process is critical, since how problems are framed influences how decision processes unfold, legitimizing some potential responses and not others (Coburn et al., 2009). Furthermore, questions about program and policy effectiveness might be framed differently depending on whether stakeholders’ goals emphasize adequacy, equality, or benefits to the disadvantaged (Brighouse et al., 2018).

Conversations with researchers might include discussion of existing data and research related to the challenges and potential solutions to refine the set of questions for the learning agenda. Review of research syntheses can mitigate the potential for an individual study or researcher to have an outsize influence on the discussion (Ming & Goldenberg, 2021). In addition, school staff appreciate efforts to rule out programs that might prove ineffective (Corcoran et al., 2001), and stakeholders may be especially interested in existing evidence if the options under consideration require large commitments of resources (Weiss, 1980). Programs with a rigorous evidence base may be more likely to successfully replicate. Among programs scaled up under Investing in Innovation grants, two of the four programs with prior experimental evidence of effectiveness had positive impacts on student achievement, while a smaller proportion of the programs with less rigorous prior evidence produced positive results (Boulay et al., 2018).

Sharing data and research should be viewed as a means of sparking dialogue, rather than ending conversations (Kirkland, 2019). The iterative nature of the core activities offers practitioners and community stakeholders an opportunity to discuss the relevance of existing research. The application of existing research may be viewed as a form of “wishful extrapolation” (Manski, 2013), particularly if studies are set in contexts dissimilar from their communities. Practitioners and community members can share insights on the extent to which local contextual factors vary from the study context in ways that could affect a proposed program or policy (Ming & Goldenberg, 2021). Ultimately, evidence from current local data may be more convincing than existing research to address questions (Finnigan et al., 2013; Massell et al., 2012; Yohalem & Tseng, 2015).

To prioritize the questions on the learning agenda, a recently developed framework for public accountability offers two key criteria to consider: actionability of information and the extent to which actions benefit the public as opposed to being used for private gain (Hutt & Polikoff, 2020). Since evidence that is relevant to the current policy agenda and upcoming decisions is more likely to be used (Conaway et al., 2015; Contandriopoulos et al., 2010), it may be worth prioritizing the questions on the learning agenda with these factors in mind. Additionally, by emphasizing benefits to the public, the framework reflects the democratic principle of putting common interests over individual self-interests. Since those with the authority to prioritize items on the learning agenda wield considerable power, granting this authority to the leadership council or an elected school board is more consistent with the democratic principles than leaving these decisions up to education leaders or researchers, as might otherwise be the case.

Analysis Plans

The core activities leading to the analysis plan involve practitioners and community stakeholders providing insight on relevant contextual factors and supports needed for implementation, and researchers identifying the data and methods best suited to answering the research questions. Community stakeholders can contribute to discussions in which the leadership council critically examines the social inequities and racial biases that exist in the context in which evidence is generated (Kirkland, 2019). Practitioners can share insights on the contextual factors that might support or derail implementation of initiatives, such as lack of resources, insufficient time for staff development, or constraints related to existing policies or informal norms. Stakeholder feedback on the analysis plans fosters the production of evidence that reflects “what we want to know, why we want to know it and how we envisage that evidence being used” (Nutley et al., 2013, p. 6).

The choice of what type of data and methods to use should be driven by the questions being asked (Ercikan & Roth, 2006). If practitioners and researchers are collaboratively developing interventions, design-based research would be an appropriate approach to developing knowledge (Penuel & Farrell, 2017). State and district leaders are often interested in understanding the factors that drive variation in the quality of implementation of programs and policies, the resources needed to ensure successful implementation, and drivers of the cost of different options (Conaway et al., 2015; Yohalem & Tseng, 2015). Observations, interviews, and focus groups with community members and practitioners responsible for enacting the programs and policies can be used to address “how” and “why” questions and assess whether programs and policies are working as intended. Interim analyses could support continuous improvement of key initiatives, increasing the likelihood that a new program or policy is implemented successfully.

Research partners could help develop experimental or quasi-experimental analysis plans for questions about causal impact. More complex analysis plans are warranted for causal questions since correlational evidence may be misleading due to selection bias. For example, programs that attract highly motivated students will tend to be positively associated with outcomes, while programs that involve less well-motivated students will appear to be performing poorly. Implying a causal relationship where none exists may direct attention away from levers for change that address underlying causes (Ming & Goldenberg, 2021). Correlational relationships also tend to be substantially larger than causal effects, and failing to distinguish between correlational and causal evidence may result in policymakers dismissing programs with “small” effects that are comparatively large relative to alternatives (Kraft, 2020).
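A toy calculation may help show how selection bias inflates a correlational estimate. Everything in the sketch below is an assumption made for illustration: the eight students, the 20-point outcome advantage for motivated students, and the 2-point true program effect are invented, and the "balanced" enrollment pattern is only a stand-in for what random assignment achieves on average, not a design taken from the studies cited above.

```python
# Hypothetical illustration of selection bias; all numbers are invented.
TRUE_EFFECT = 2.0        # assumed causal effect of the program on the outcome
MOTIVATION_BONUS = 20.0  # assumed outcome advantage of highly motivated students
BASELINE = 60.0          # assumed outcome for an unmotivated, non-enrolled student

# 1 = highly motivated, 0 = less motivated
motivation = [0, 0, 0, 0, 1, 1, 1, 1]


def outcome(motivated, enrolled):
    """Outcome as a simple sum of baseline, motivation, and program effect."""
    return BASELINE + MOTIVATION_BONUS * motivated + TRUE_EFFECT * enrolled


def gap(enrollment):
    """Mean outcome of enrolled students minus mean outcome of the rest."""
    enrolled = [outcome(m, 1) for m, e in zip(motivation, enrollment) if e]
    others = [outcome(m, 0) for m, e in zip(motivation, enrollment) if not e]
    return sum(enrolled) / len(enrolled) - sum(others) / len(others)


self_selected = [0, 0, 0, 0, 1, 1, 1, 1]  # only motivated students enroll
balanced = [0, 1, 0, 1, 0, 1, 0, 1]       # enrollment unrelated to motivation

naive_gap = gap(self_selected)
balanced_gap = gap(balanced)

print(f"Gap when motivated students self-select: {naive_gap:.1f}")
print(f"Gap when enrollment is balanced on motivation: {balanced_gap:.1f}")
```

Under self-selection the enrolled group outscores the rest by 22 points, of which only 2 reflect the program itself. When enrollment is unrelated to motivation, the gap shrinks to the assumed 2-point effect.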

The analysis plan should consider potential limitations and be transparent about them. If certain demographics tend to be underrepresented in the data collected, researchers might use purposeful sampling to guide data gathering (Ming & Goldenberg, 2021). For example, for a qualitative analysis of testimony from school board meetings, researchers might wish to consider how to collect supplementary data if individuals testifying are not reflective of the demographics of families served by the district. An analysis plan could specify when further outreach or alternative data sources are warranted to ensure adequate representation from various subgroups. The leadership council might also consider adherence to the Standards for Excellence in Education Research principles of making findings, methods, and data open to improve transparency to the public.

Findings That Address Stakeholder Concerns and Incorporate Insights

The ideal situation for interpreting evidence is one in which community and practitioner expertise on context and implementation is pooled with researcher and analyst expertise (Contandriopoulos et al., 2010). Stakeholders’ feedback on initial findings helps clarify additional steps needed to transform evidence into “knowledge in practice” (Farley-Ripple et al., 2018, p. 239). Practitioners, community members, and students may have insights on the nuances of how data were collected or how programs were implemented that improve interpretation of the findings. Enacting this process requires adequate time for individuals in different roles to interact in substantive ways with the evidence (Coburn et al., 2009). Enabling engagement with evidence by diverse groups of constituents may require substantial investments in brokerage activities that facilitate interpretation of the evidence (Landry et al., 2003). Discussing evidence may help stakeholders understand challenges and design and improve solutions (Booker et al., 2019).

Inviting stakeholders to weigh in on the interpretation of evidence in priority policy areas is risky for education leaders, given that findings may suggest favored policies and practices are less effective than desired (Conaway et al., 2015). In the partnership described by Nelson et al. (2015), community agencies retained control over the release of findings, which helped the research partners build trust with their community partners. While agency control over the release of findings alleviates agency concerns, it may be antithetical to a public accountability framework if other stakeholders’ concerns go unaddressed. For example, people have been silenced when projects “veered too close to unearthing unflattering realities of racialized discipline practices in schools” (Diamond, 2021). Striking a compromise might involve an agreement that requires that answers to questions are publicly provided, but grants the agency involved some amount of time to review findings before they are released.

A key challenge in evidence interpretation is that practitioners may assume that evidence will either validate their work or help them identify ways to improve; negative findings may be ignored, dismissed, or explained away (Booker et al., 2019). The tendency to explain away findings is consistent with confirmation bias, in which people tend to interpret evidence in ways that align with existing beliefs or expectations (Nickerson, 1998). Additionally, decision-making experiments indicate that individuals disproportionately favor the status quo (Samuelson & Zeckhauser, 1988), which could lead to unduly discounting evidence that contradicts current practices. To counter such biases, education leaders could commit in advance to the criteria that merit reconsidering their previously held positions and communicate how they intend to respond to positive, negative, or inconclusive evidence (Gordon & Conaway, 2020). Prior to conducting the research, working groups might engage in “if . . . then” discussions to specify the actions warranted by different potential findings, following the approach described in Nelson et al. (2015).

Implications for Advocates of Democratizing the Development of Evidence

The democratic principles that underlie the logic model, paired with a focus on actionable items that benefit the public (Hutt & Polikoff, 2020), set the stage for broader stakeholder engagement in establishing and enacting a learning agenda, developing evidence, and interpreting the evidence produced. Diverse stakeholder groups can provide input on what questions should be asked to form a co-constructed learning agenda. Education leaders can foster an inclusive approach at public meetings to encourage stakeholder feedback on challenges and potential solutions. As the evidence base develops, structures and processes that offer opportunities for a variety of stakeholders to engage in interpreting the evidence can shed light on what additional evidence might be needed and what concerns are left to address. The online appendix (available on the journal website) offers questions to consider for each input in the process.

Given the aspirational nature of the democratic principles, and the fact that many education agencies lack the key inputs described in the logic model, it is worth considering what is sufficient to begin working toward the democratization of evidence. It might be most feasible to begin by asking active members of the educational community what questions they would like answered, and examining existing evidence as opposed to building a local evidence base. Publicly available resources, such as Regional Educational Laboratories toolkits and What Works Clearinghouse Practice Guides, may help agencies build capacity to deliberate about evidence. At a minimum, such efforts would require dedicated time for individuals to engage in conversations.

Advocates for democratizing the development of evidence should consider ways of making the development and interpretation of evidence more inclusive. Leaders of state and district agencies could seek input from the teachers, families, students, and communities they serve. Although the process of developing and enacting a community-informed learning agenda likely involves a long time frame and will not be the right approach for every question, the process is intended to build trust and ultimately generate an evidence base that is more relevant and compelling to stakeholders.

A key implication for researchers is to appreciate that methodological expertise alone is insufficient, and that practitioners’ and families’ experiences with the education system and knowledge of local contexts constitute valuable forms of knowledge that can be applied to identifying plausible explanations for findings and interpretations of evidence (Tseng et al., 2018). Researchers also might consider asking practitioners, policymakers, or community stakeholders how they interpret evidence in light of specific political and organizational contexts. Ongoing conversations can encourage depth of evidence use through more robust evidence searches and improved interpretation of evidence (Farley-Ripple et al., 2018).

Supplemental Material

sj-pdf-1-edr-10.3102_0013189X211060357 – Supplemental material for Democratizing the Development of Evidence

Supplemental material, sj-pdf-1-edr-10.3102_0013189X211060357 for Democratizing the Development of Evidence by Cara Jackson in Educational Researcher

