Abstract

The purpose of this study was to observe college students' behavior on five multiple-choice, self-tailored exams of 75 items. Self-tailoring was defined as an option to omit up to five questions from being scored on an exam. Ninety-nine students from two sections of an undergraduate educational psychology course made a statistically significant increase in their mean scores on all exams by using the self-tailoring procedure. Students' efficacy in omitting incorrect answers on self-tailored tests was noted. There was a statistically significant linear increase across the five exams in the percent of omitted questions that had been answered incorrectly. Frequency of omission was statistically significantly and negatively correlated with item difficulty values (the proportion of students answering an item correctly); that is, more difficult questions were omitted more often. Implications for assessment and teaching using self-tailored tests in college classrooms are discussed.

Multiple-choice exams are very common in assessment of academic performance in large college classes but are administered typically with no option to choose questions from a larger pool or to make any changes in the test. Students often complain that a test was either too hard or did not measure their real knowledge. The current study allowed college undergraduates the option to self-tailor their multiple-choice exams by omitting up to five questions from scoring. We defined this option as self-tailored testing.

Morse (1988) investigated a form of self-tailored testing in which undergraduates selected items to answer from a larger set of questions with the constraint that examinees attempt some minimum number of items. When scores were calculated for only student-selected questions, 89% of students improved their scores. In this framework, students' metacognitive skill would be expected to affect their capitalizing on a self-tailoring option. Flavell (1976) offered one of the first definitions of metacognition as “knowledge concerning one's own cognitive processes and products of anything related to them” (p. 232). Students' self-tailoring efficacy served as an indicator of their metacognitive ability to distinguish between correct and incorrect answers on multiple-choice exams and their metacognitive monitoring of test performance. Thus, the proxy for metacognitive ability in the current study was operationalized as the percent of questions students elected to omit from scoring that they were unable to answer correctly. Another aspect of metacognition in this self-tailoring of a test was students' metacognitive monitoring of test performance, which may be affected by different factors. Item difficulty was one factor, defined as “the proportion of examinees who answer the item correctly” (Crocker & Algina, 1986, p. 311). We briefly distinguish self-tailored tests from other adaptive methods, elaborate on metacognition, and address the effects of item difficulty and practice in calibration in the following paragraphs.

Tailored tests

Self-tailored tests are different from adaptive, tailored, computer adaptive, and self-adaptive tests as they have appeared in the literature. The terms “adaptive” and “tailored test” have been used interchangeably (Wright & Stone, 1979; Thorndike, 1982; Weiss, 1982, 1983; Crocker & Algina, 1986). Such tests always involve some extent of individualizing the set of items administered to an examinee. This is done to match the difficulty of items more appropriately to the proficiency of the examinee (Weiss, 1983), to reduce the number of items or time required (Milman, Holland, Kaszniak, D'Agostino, Garrett, & Rapcsak, 2008), or both. Self-adapted tests (Pitkin & Vispoel, 2001) offer examinees the opportunity to specify the difficulty for upcoming items. There is some evidence that allowing examinees some control over the test situation may reduce test anxiety (Wise, 1994; Wise, Roos, Plake, & Nebelsick-Gullett, 1994). Self-tailored tests are different from these other methods in that the examinee selects items for inclusion or exclusion, rather than a computer algorithm or a pre-set difficulty in sorting of items, as in Lord's (1971) flexilevel test or Binet's intelligence test (Weiss, 1982).

Metacognition and metacognitive monitoring

Metacognition can be separated into two areas: knowledge and regulation of cognition (Schraw & Moshman, 1995). Schraw (2009) defined metacognitive judgment as “a probabilistic judgment of one's performance before, during, and after performance” (p. 34). Schraw also defined one's “ability to distinguish between confidence for correct items and confidence for incorrect items” as discrimination (p. 38). Many researchers have examined college students' matching between their perceived and actual performance and referred to it as “monitoring accuracy” or “calibration of performance” (Nietfeld & Schraw, 2002; Nietfeld, Cao, & Osborne, 2005).

Pressley and Ghatala (1988) examined undergraduates' discrimination of correct and incorrect answers on multiple-choice tests of reading comprehension, opposites, and analogies taken from the Scholastic Aptitude Test by rating certainty of correctness of each answer on an Awareness Scale. Options ranged from 20% to 100% certainty. Students were often very certain their answers on comprehension items were right when they were wrong. Students' awareness of performance was not related to their academic ability as an overall score on the Verbal section. Students discriminated better between correct and incorrect answers on easier questions as indexed by the item difficulty. Other factors have also been shown to be related to monitoring accuracy, such as whether one is asked how many questions were answered correctly versus incorrectly (Weinstein & Roediger, 2010) or asked to forecast how many word pairs one would recall versus forget (Finn, 2008).

Item and test difficulty

Item and test difficulty have been reported in numerous studies as important in students' judgment of their performance (Pressley & Ghatala, 1988; Schraw & Roedel, 1994; Sinkavich, 1995; Hacker, Bol, Horgan, & Rakow, 2000; Morse & Morse, 2002; Nietfeld, et al., 2005). However, researchers operationalized item difficulty differently. Morse and Morse (2002) and Pressley and Ghatala (1988) used the proportion of examinees who answered an item correctly as the index of item difficulty. Nietfeld, et al. (2005) defined “easy” items as requiring simple identification and more difficult questions as requiring application of knowledge. Others applied their own qualitative judgment of item challenge (e.g., Sinkavich, 1995).

Both more accurate calibration of easier test items and examinees' tendency to be overconfident on difficult items and under-confident on easy items have been reported (Lichtenstein & Fischhoff, 1977; Pressley & Ghatala, 1988; Schraw & Roedel, 1994; Nietfeld, et al., 2005). Gigerenzer, Hoffrage, and Kleinbolting (1991) referred to this over- or under-confidence differing by difficulty of questions as the “hard-easy effect.” Juslin, Winman, and Olsson (2000) defined the hard-easy effect as “a covariation between over/underconfidence and task difficulty; overconfidence is more common for hard item samples, whereas underconfidence is more common for easy item samples” (p. 1). Flannelly and Flannelly (2000) asked students to provide a confidence judgment on a scale from 1 to 100 for each multiple-choice question and a reason to support or oppose their answer for each question. Providing reasons against one's answers reduced overconfidence on hard items and underconfidence on easy items. Students who did better on the test were more accurate in judging correctness of their answers.

Practice in metacognitive monitoring on tests

Judgment of performance can be affected in several ways, such as the effect of practice on successive exams (Hacker, et al., 2000; Pierce & Smith, 2001; Bol, Hacker, O'Shea, & Allen, 2005; Nietfeld, et al., 2005). Mixed results have been reported. Bol, et al. (2005) examined the effect of online practice on calibration across five quizzes and found no difference in performance on a final exam between students who practiced calibration and those who did not. Calibration was defined as matching perceived with actual performance. Pierce and Smith (2001) reported no improvement of students' metacomprehension accuracy over four successive sets of questions. Nietfeld, et al. (2005) found monitoring accuracy remained stable over four exams in a semester for all students. A contrary result was reported by Hacker, et al. (2000): high-scoring students improved their prediction of performance over three exams in a semester. De Carvalho Filho (2009) reported that college students' confidence and performance significantly improved over four subsequent short-answer tests but not on multiple-choice tests.

Several gaps in the literature are apparent. Firstly, self-tailored exams have not been well investigated since Morse (1988). No study has investigated examinees' behavior on self-tailored exams in the same manner or over as many tests as the current study. Secondly, previous studies have not examined metacognition in the context of a self-tailoring procedure on examinations. Thirdly, studies of whether practice on subsequent exams would improve accuracy of metacognitive monitoring on a test have yielded mixed results; therefore, this question needs further investigation. Fourthly, further investigation of the effect of item difficulty on metacognitive monitoring of test performance is needed.

This study hypothesized that: (a) the option to omit questions from scoring (self-tailor the test) would result in higher mean scores for students on five in-class exams, (b) students' efficacy in self-tailoring (e.g., accurately distinguish their correct and incorrect answers) would improve over five in-class exams, and (c) frequency of omission of items from scoring would correlate with item difficulty.

Method

Participants

Participants were undergraduate students from two sections of an educational psychology course. Sixty-one of 99 students from Section 1 and 38 of 39 students from Section 2 signed the informed consent. Ten of those withdrew from the course, and nine did not take all exams. Therefore, 80 students completed the entire study. Of these, 71 used the option to omit questions on all exams. Class sections were taught by different instructors, which could have affected participation rates. Section 2 was taught by one of the authors, who spent more time explaining the procedure in Section 2 than in Section 1, which was taught by another instructor. Of the 80 students, 55 (69%) were women, and 25 (31%) were men. Mean age was 20.8 yr. By ethnicity, 52 (65%) were Caucasian, 27 (34%) African American, and 1 (1%) Native American. Most were sophomores (24, 30%) or juniors (25, 31%); 18 (23%) were seniors, 12 (15%) were freshmen, and 1 (1%) was “other.” The mean self-reported ACT score was 22.5 (SD = 4.0, n = 71) with a range from 16 to 30. Students reported their ACT scores on a demographic questionnaire after they signed the informed consent. The mean self-reported grade point average was 3.1 (SD = 0.6, n = 72) with a range from 1.4 to 4.0.

Students from different majors were enrolled in the course as a requirement for a degree or as an elective. Twenty-five students (32%) were education majors; 19 (24%) were kinesiology majors, 9 (11%) engineering majors, 8 (10%) biological science majors, and 7 (9%) business majors. Twelve (14%) students were from majors of nursing, animal and dairy science, nutrition, pre-occupational therapy, criminal justice, and undeclared.

Procedure

Five 75-item multiple-choice exams were administered throughout the semester. The items were taken from the test bank for the course textbook (Berger, 2005). Tests were identical across sections with the exception of three items on Exam 1 and one item on Exam 5, due to slight differences in notes and lectures of the two instructors. Three forms of each test were used in Section 1, whereas Section 2 used two forms for Exams 2–5 and a single form for Exam 1. Multiple forms differed only in the ordering of alternatives for items, as a cheating deterrent.

Before Exam 1, students were asked to participate in the study and sign the consent form. Oral and written instructions were given on how to omit questions from scoring with examples of the scoring method and calculations of an adjusted score. Students also completed a demographic form. All students were allowed to use the self-tailoring option on all exams regardless of whether they chose to participate in the study. Therefore, all students in each class had the opportunity to increase their scores on the exams.

When taking each exam, students explicitly marked on the answer sheet up to five questions they wished to have excluded. This arrangement was agreeable to both instructors. Omitting up to five questions from a total number of 75 questions on the test would represent only 7% of the test. Adjusted scores were calculated by the first researcher, and tests were returned to students at the next class. Correct answers were discussed by instructors. Each student was given the original score, the adjusted (self-tailored) score, and how many omitted questions had been answered correctly or incorrectly. After Exam 5, participants answered a 12-item questionnaire on their perception of the scoring, its effect on their performance and changes in study or test-taking behaviors.
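The scoring adjustment described above can be sketched in a few lines. The article does not state the exact formula, so this sketch assumes the adjusted score is the percent correct among the items a student retained; the function name `score_exam` and the data layout are hypothetical and are not taken from the study.

```python
from typing import Dict, Sequence, Set

MAX_OMISSIONS = 5  # limit stated in the procedure


def score_exam(responses: Sequence[str], key: Sequence[str],
               omitted: Set[int]) -> Dict[str, float]:
    """Return original and adjusted percent-correct scores for one student.

    `omitted` holds zero-based positions of items marked for exclusion.
    Assumption (not stated in the article): the adjusted score is the
    percent correct among retained items only.
    """
    if len(responses) != len(key):
        raise ValueError("responses and key must have the same length")
    if len(omitted) > MAX_OMISSIONS:
        raise ValueError("at most five items may be omitted")
    if any(i < 0 or i >= len(key) for i in omitted):
        raise ValueError("omitted positions must refer to items on the exam")

    correct = [r == k for r, k in zip(responses, key)]
    retained = [c for i, c in enumerate(correct) if i not in omitted]
    if not retained:
        raise ValueError("at least one item must remain for scoring")

    omitted_correct = sum(correct[i] for i in omitted)
    return {
        "original_pct": 100.0 * sum(correct) / len(key),
        "adjusted_pct": 100.0 * sum(retained) / len(retained),
        "omitted_correct": omitted_correct,
        "omitted_incorrect": len(omitted) - omitted_correct,
    }
```

The returned dictionary carries the same feedback each student received: the original score, the adjusted score, and how many omitted items had been answered correctly or incorrectly.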

Student survey

Seven items on the student survey used a Likert-type scale with the response options of 1: Strongly disagree, 2: Disagree, 3: Agree, and 4: Strongly agree. One item assessed any reported change in study time. Other items, not reported in this study, included descriptive answers about test-taking strategies, study strategies, and strategies students used to decide which questions to omit. Finally, students were asked how many hours they studied for the class. The estimated internal consistency reliability coefficient for the seven Likert-type items was α = .82.

Results

Descriptive statistics and estimated reliabilities of students' scores and mean number of items omitted on all five exams prior to calculation of the adjusted scores are presented in Table 1. The means of exam performances reported as the percent of correct answers were fairly consistent across exams. Only about five percentage points separated the lowest mean performance on Exam 2 (76%) from the highest mean performance, on Exam 5 (81%). Score variation for the five exams was also consistent, and indicated a fairly wide range of performance. Distributions on all exams were slightly negatively skewed. Performance was above the mean scores for previous groups of students who completed the same course. Mean items omitted from scoring were consistent across exams, about four (Table 1). The range of omissions was 0–5 with the exception of Exam 1 (1–5). The median KR-20 reliability estimates across the forms of each exam varied little across exams, from .87 to .89.

Table 1. Summary statistics for percent correct scores and internal consistency reliability estimates by exam (N = 80)

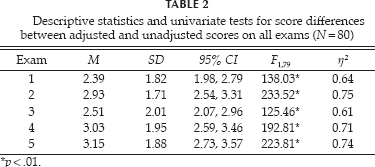
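For readers unfamiliar with the KR-20 estimates reported above, the following is a minimal sketch of the standard Kuder-Richardson Formula 20 for dichotomously scored items. It is not the authors' computation: the function name `kr20` and the students-by-items layout are hypothetical, and using the population variance of total scores is one common convention that is assumed here.

```python
from typing import Sequence


def kr20(item_matrix: Sequence[Sequence[int]]) -> float:
    """KR-20 for a students x items matrix of 0/1 item scores."""
    n_students = len(item_matrix)
    n_items = len(item_matrix[0])

    # Proportion answering each item correctly (p); q is 1 - p.
    p = [sum(row[j] for row in item_matrix) / n_students
         for j in range(n_items)]
    sum_pq = sum(pj * (1.0 - pj) for pj in p)

    # Variance of total scores (population form, an assumed convention).
    totals = [sum(row) for row in item_matrix]
    mean_total = sum(totals) / n_students
    var_total = sum((t - mean_total) ** 2 for t in totals) / n_students
    if var_total == 0:
        raise ValueError("total scores have zero variance")

    return (n_items / (n_items - 1)) * (1.0 - sum_pq / var_total)
```

Because several forms of each exam were used, a value such as this would be computed per form, with the median then taken across forms as the text describes.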

Differences between scores before and after applying the self-tailoring option were calculated for all participants on all exams. The five resulting difference scores (i.e., self-tailored score vs. uncorrected score) were analyzed via a single-sample, multivariate analysis of variance (MANOVA), testing the null hypothesis that self-tailored scores and uncorrected scores were equal for the set of five exams. This analysis was based on students from the combined class sections who completed all five exams during the study (N = 80). There was a statistically significant difference among mean scores due to self-tailoring on the set of five exams (F(5, 75) = 74.93, p < .01, η² = 0.83). Univariate follow-up analyses of variance (ANOVAs) affirmed that these differences were statistically significant on each exam (p < .01) (Table 2). Therefore, students increased mean scores on each of the five exams when omitting questions. On individual exams, 82% (Exam 3) to 94% (Exam 5) of students improved their scores via the self-tailoring option.

Table 2. Descriptive statistics and univariate tests for score differences between adjusted and unadjusted scores on all exams (N = 80)

p<.01.
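The effect size reported for the multivariate test can be checked against its F ratio and degrees of freedom. The sketch below uses the usual conversion from an F statistic to a partial eta squared; the function name is hypothetical, and the assumption that the authors obtained η² this way is ours, not something the article states.

```python
def eta_squared_from_f(f_value: float, df_effect: int, df_error: int) -> float:
    """Partial eta squared implied by an F ratio and its degrees of freedom."""
    effect = f_value * df_effect
    return effect / (effect + df_error)


# Values reported for the set of five difference scores: F(5, 75) = 74.93.
print(round(eta_squared_from_f(74.93, 5, 75), 2))  # prints 0.83
```

The result agrees with the reported value to two decimals, which suggests the statistic and its degrees of freedom were transcribed consistently.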

The percent of omitted items that were incorrectly answered on an exam quantified efficacy of self-tailoring. This value was used as the dependent variable in a simple repeated measures ANOVA, using the exam as the independent variable. Scores from students in the combined sections who omitted questions on all exams were used in this analysis (n = 71). The main effect of exam was statistically significant. Omission efficacy scores on the five exams are summarized in Table 3. Mean self-tailoring efficacy was lowest on Exam 1 and highest on Exam 5. Follow-up tests using the Least Significant Difference (LSD) criterion at the .05 level showed that efficacy scores on Exams 2, 4, and 5 were statistically significantly higher than on Exam 1. The test of trend showed a statistically significant linear increase of percentages over five exams (F(1, 70) = 6.74, MSE = 596.98, p = .01, η² = 0.09). Higher order trends (quadratic, cubic, and quartic) were not statistically significant. Over five successive exams, an increasing percentage of the questions students omitted were ones they had answered incorrectly, and this increase was linear in form. Therefore, students' self-tailoring efficacy improved across exams for students who always used the self-tailoring option.

Table 3. Descriptive statistics for self-tailoring efficacy scores (N = 71)

Note.—Efficacy scores represent the percent of omitted items that the student had answered incorrectly.
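The efficacy score defined in the note above can be expressed as a short function. This is an illustrative sketch only; the function name and input format are hypothetical.

```python
from typing import Optional, Sequence


def self_tailoring_efficacy(omitted_item_correct: Sequence[bool]) -> Optional[float]:
    """Percent of a student's omitted items that had been answered incorrectly.

    Each element is True if the omitted item was answered correctly and
    False if it was answered incorrectly. Returns None when no items were
    omitted, because the percent is undefined in that case.
    """
    if not omitted_item_correct:
        return None
    n_incorrect = sum(1 for was_correct in omitted_item_correct if not was_correct)
    return 100.0 * n_incorrect / len(omitted_item_correct)
```

For example, a student who omitted four items and had answered three of them incorrectly would receive an efficacy score of 75. Since the score is undefined for an exam with no omissions, a repeated measures analysis of it would need students with omissions on every exam.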

Pearson correlations were used to assess whether the frequency of item omissions correlated with item difficulty values. Item difficulties represented the proportion of students who answered each question correctly. Lower values indicated more difficult questions. Frequencies of item omissions were calculated by counting the number of students who omitted each item. There were moderately strong, negative correlations between item difficulties and frequencies of omissions on all exams, varying from -.48 (95% CI = -.63, -.29; Exam 2) to -.73 (95% CI = -.82, -.61; Exam 5). When given the option to omit questions from scoring, students more frequently omitted more difficult questions.
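The item-level analysis above can be sketched as follows: compute each item's difficulty and omission count, correlate the two, and attach a confidence interval. The article does not say how the intervals were obtained; the Fisher z transformation used here is a common choice and is an assumption, as are all function names and the students-by-items data layout.

```python
import math
from typing import List, Sequence, Tuple


def item_statistics(correct: Sequence[Sequence[int]],
                    omitted: Sequence[Sequence[int]]) -> Tuple[List[float], List[int]]:
    """Per-item difficulty (proportion correct) and omission frequency.

    Both inputs are students x items matrices of 0/1 values.
    """
    n_students = len(correct)
    n_items = len(correct[0])
    difficulty = [sum(row[j] for row in correct) / n_students
                  for j in range(n_items)]
    omissions = [sum(row[j] for row in omitted) for j in range(n_items)]
    return difficulty, omissions


def pearson_r(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson product-moment correlation of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def fisher_ci(r: float, n: int, z_crit: float = 1.959964) -> Tuple[float, float]:
    """Approximate 95% confidence interval for r via the Fisher z transform."""
    z = math.atanh(r)
    se = 1.0 / math.sqrt(n - 3)
    return math.tanh(z - z_crit * se), math.tanh(z + z_crit * se)
```

Calling `fisher_ci(-0.48, 75)`, with the 75 items of an exam taken as the number of observations, gives limits close to those reported for Exam 2. They need not agree to the second decimal, since the correlation entered here is already rounded and the authors' exact method is not known.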

Descriptive statistics of students' responses to selected questions from the questionnaire given to students after Exam 5 were compiled to answer how students described their strategies of omitting questions from scoring (Table 4). The analysis included students who completed all five exams during the semester (N = 80).

Table 4. Percent and frequencies of 1: Strongly disagree, 2: Disagree, 3: Agree, and 4: Strongly agree answers on student survey

Students were favorable toward the option to omit questions. Students agreed that the self-tailoring option: (a) helped them to improve their scores, (b) should be used for other multiple-choice exams, (c) offered students more control over their exams, (d) helped them become more confident to distinguish what they knew from what they did not know on exams, and (e) helped identify wrong answers across exams (Table 4).

Questions were asked about study time, study strategies, and test taking strategies. Students reported studying an average of 3.5 hr. per week for class (range 1–12 hr.). The self-tailoring option: (a) did not change reported study time for 75% of students, whereas 21% reported that they studied more as a result; (b) made 59% of students aware of some change in study strategies; and (c) resulted in 77% of students being aware of needed changes in test-taking strategies. More students acknowledged awareness about changes in test taking than study strategies (Table 4).

Discussion

Several points appear warranted from the data presented in this study. Firstly, undergraduate students can apply the self-tailoring procedure so as to improve their scores on in-class exams. The proxy for metacognitive behavior and self-tailoring efficacy showed an increase after Exam 1, and the vast majority of students effectively applied the procedure to increase their scores. Efficacy was better than chance to begin with, and practice did help to improve efficacy. This latter finding is inconsistent with results reported by Bol, et al. (2005), Nietfeld, et al. (2005), and Pierce and Smith (2001), but congruent with Hacker, et al. (2000), who reported improvement for higher achieving students, though these studies used calibration (perceived performance vs actual performance, typically on an item-by-item basis) as the indicator of metacognitive skill. One possible explanation for the disagreement is that self-tailoring only requires that students identify a limited number of items about which they are least certain of their success, as opposed to judging success on all items encountered. It may be the case that improving one's skill in identifying only “worst scenario” performance instances is simpler to effect than is improvement in judgments of all item outcomes. In the present study, students perceived an improvement in self-tailoring efficacy corresponding to actual improvement. Other studies have tended not to measure participants' perceptions.

Secondly, metacognitive ability, as quantified by self-tailoring efficacy, tended to improve across the five exams. The part of metacognition responsible for the self-tailoring procedure was metacognitive monitoring of performance on a test. A factor that might affect monitoring accuracy is whether students received feedback or explicit training on monitoring of performance (Nietfeld, et al., 2005). In the current study, students received oral instruction and written instruction on how to omit questions from scoring before the first exam. After tests were returned from the university testing services and adjusted scores were furnished, students in both sections reviewed their answers on the test including the answers they omitted from scoring. Correct answers for test questions were discussed in class as part of each test debriefing. These practices might have had an influence on students' learning of how to apply the self-tailoring procedure and their increased success in applying the procedure after the first exam.

Thirdly, as hypothesized, there was a relationship between item difficulty and the likelihood that students would elect to omit an item. More difficult items were more often omitted. This result affirms reports of multiple studies (e.g., Pressley & Ghatala, 1988; Schraw & Roedel, 1994; Morse & Morse, 2002). Thus, perceptions of success do correspond systematically to the empirical challenge of test items, and students' accuracy of metacognitive monitoring does depend on the difficulty of the task.

Fourthly, students were favorably disposed towards the self-tailoring option, with many recommending that it be more widely used. Additionally, they perceived the self-tailoring option as giving them more of a sense of control in exams. This is consistent with a finding from Wise, et al. (1994), who reported that lower-performing examinees taking computer adaptive tests preferred to have greater control over the selection of item challenge, generally opting for less difficult questions.

Concerns about self-tailored tests

Among the concerns that would arise with applying a self-tailoring option is that instructors might be reluctant to apply the procedure in a large college classroom if they relied on hand calculation of adjusted scores on exams. An alternative to this would be calculation of an adjusted score by the school testing services, or a simple spreadsheet could be constructed to automate the process. Another option would be to incorporate the scoring adjustment to tests taken on-line, if the on-line testing program could accommodate a self-tailoring option.

Another concern of educators might be whether the procedure encouraged students to study less for exams. The present study results suggest that instructors need not fear that students would reduce their study efforts in preparation for exams, although objective measures would be needed for assurance. A third concern would be that of threats to content validity of the test by selective omission of items by students. The current study did not address content integrity of the tests as a result of self-tailoring, so this question requires investigation.

Limitations

The study involved only college students taking a lower-level behavioral science course in a large lecture format. All of the tests were multiple-choice measures. We have no insight from the current study as to how the results would generalize to other educational levels, college courses in other disciplines, upper level courses, or when applied using other assessment methods. No systematic control or review for item content was incorporated, and therefore the study cannot address the concern mentioned earlier about content validity of the test being affected by allowing the self-tailoring option.

Self-tailoring of examinations can be applied to multiple choice examinations with little difficulty. Since students tend to elect more difficult items for omission, this may serve as useful feedback to instructors as to which topic areas merit more attention or coverage in class. Based on observation of the most frequently omitted questions, an instructor may decide whether the quality of these questions could be improved. Therefore, this information could be used to improve teaching of the course and assessment of students' knowledge in the future.

Students perceived the self-tailoring option favorably. Such a stance by students may seem self-serving, as the self-tailoring option would appear an easy way to evade challenging questions or to opt out of assessment of topics not adequately studied. However, Green and Halpin (1977) and Green, Halpin, and Halpin (1996) reported that students more frequently identified empirically difficult items as of lower perceived quality (e.g., less “fair”) than were easier items. Thus, the self-tailoring procedure would be one simple way to address complaints, whether accurate or not, about tests being unfair.

Self-tailoring efficacy can be used as a crude proxy for metacognitive behavior. The purpose of this study was not to provide a competing indicator of metacognitive skill. Other studies typically require examinees to make judgments about success or confidence of success on each individual item or task. Such a tactic, while useful in a laboratory or study setting, would be quite intrusive and time-consuming to implement in a classroom setting; the efficacy scores used here required much less effort to obtain.

The emphasis of this study was on gauging efficacy and improvement of self-tailoring skill by practice on consecutive exams. In the future, researchers may wish to determine if the improvement in self-tailoring skill differs between lower and higher achieving learners. Instructors might be interested to see whether the option to omit questions from scoring improves or worsens the mastery of course content. Something as simple as a survey of college instructors to assess whether they would find the self-tailoring option palatable would be informative for educators.

A second way to broaden the scope of the current study would be to investigate a self-tailoring option on tests that include other item formats, such as short answer items and true and false items to allow comparison of the frequencies of omissions of different item types. Behavior on on-line or computer-administered tests may also differ, and therefore is deserving of investigation. Similarly, more information on the effect of self-tailoring based on test length, maximum omissions allowed, and comparison of methods to minimize threats to content integrity would be helpful.

The final recommendation would be to involve participants of different ages and educational levels, different course types, and different types of tests, including very low stakes (e.g., practice quizzes) as well as consequential tests. The robustness of the current results is unknown; further research is needed.