Abstract

This paper presents a brief, selective review of technology-aided programs for persons with severe/profound and multiple disabilities. Specifically, it provides detailed summaries of a number of illustrative studies conducted by the authors to assess (a) microswitch-based programs aimed at promoting response engagement and control of environmental stimulation, (b) speech-generating devices and computer-aided programs directed at promoting communication and stimulus choice, (c) orientation technology solutions for promoting indoor travel, and (d) visual- and verbal-instruction technology for promoting performance of complex, multi-step tasks. The studies included in the review provide a specific picture of the technological instruments adopted within the programs, of the participants involved, and of the outcomes obtained. Questions of practical importance left unanswered by those studies and by others in the field are also discussed as possible targets for new research.

Technology-aided programs for persons with severe/profound and multiple disabilities are intervention approaches that use a variety of technological devices to enhance or enable the performance of daily tasks (Lancioni, Bellini, Oliva, Singh, O'Reilly, & Sigafoos, 2010b; Shih & Shih, 2010; Borg, Larsson, & Östergren, 2011; Reichle, 2011; Shih, 2011). Typically, the technological devices are expected to promote forms of adaptive responding (e.g., control of environmental events/stimulation, communication, indoor travel, and constructive activities) by bridging the gap between the person's behavioral repertoire and the abilities required for the targeted adaptive responding (Uslan, Malone, & De l'Aune, 1983; Holburn, Nguyen, & Vietze, 2004; Lancioni, O'Reilly, Singh, Sigafoos, Oliva, Antonucci, et al., 2008a; Lancioni, O'Reilly, Singh, Buonocunto, Sacco, Colonna, et al., 2009a; Bauer, Elsaesser, & Arthanat, 2011).

Microswitch devices combined with a microprocessor-based control system were developed to enable persons with minimal behavioral repertoires, and possibly intellectual and communication disabilities, to control environmental events or stimulation that they would not be able to manipulate directly (Lancioni, Singh, O'Reilly, Sigafoos, Didden, & Oliva, 2009c). Speech-generating devices are instruments that can produce different types of verbal output in response to small, simple non-verbal responses by their users (Sigafoos, Green, Payne, Son, O'Reilly, & Lancioni, 2009). In practice, they allow verbal communication by persons who can emit only largely unintelligible speech, have lost speech and communication abilities, or have never developed sufficient speech, language, or communication abilities (Rispoli, Franco, Van der Meer, Lang, & Camargo, 2010). Orientation technology can provide direct guidance or corrective feedback to help persons with serious wayfinding problems travel independently within their residence or work environments (Lancioni, Singh, O'Reilly, Sigafoos, Alberti, Scigliuzzo, et al., 2010c). Technology-based instruction systems activated via a single input key or other sensor can support the performance of multi-step tasks by persons who would not be able to remember the task steps or their sequence on their own (Lancioni, O'Reilly, Seedhouse, Furniss, & Cunha, 2000).

This paper presents a selective review of studies assessing intervention programs based on the aforementioned types of technology: microswitch-based programs aimed at promoting response engagement and control of environmental stimulation, speech-generating devices and computer-aided programs directed at promoting communication and stimulus choice, orientation technology for promoting indoor travel, and visual and verbal instruction technology for promoting performance of complex, multi-step tasks. In particular, we provide detailed summaries of a number of illustrative studies that we conducted, describing the technological instruments adopted within the programs, the participants involved, and the outcomes obtained. Relevant questions for new research are also examined (see Conclusions).

Microswitch-based Programs for Engagement and Stimulation Control

The development of microswitch-based programs for persons with profound and multiple disabilities requires four basic conditions to be met: (a) a response that is already available in the person's repertoire (although at low frequencies) and appears relatively easy for the person to perform; (b) a microswitch that suits the response identified, that is, one that can monitor it reliably without being excessively invasive; (c) a microprocessor-aided control system that connects the microswitch to environmental stimuli and can regulate brief activations of those stimuli in relation to the person's response performance; and (d) stimuli that are apparently enjoyable for (preferred by) the person and thus sufficiently motivating to increase his or her response frequency and maintain that increase over time (Crawford & Schuster, 1993; Kazdin, 2001; Lancioni et al., 2002, 2004, 2008a, 2010b; Holburn, Nguyen, & Vietze, 2004; Anderton, Standen, & Avory, 2005; Mechling, 2006; Saunders, Questad, Cullinan, & Saunders, 2011; Tam, Phillips, & Mudford, 2011; Lui, Falk, & Chau, 2012). Responses used in microswitch-based programs have commonly included head-turning and hand-pushing or variations thereof (Leatherby, Gast, Wolery, & Collins, 1992; Saunders, Smagner, & Saunders, 2003; Saunders & Saunders, 2011). The microswitches employed to monitor those responses have generally been commercial pressure devices.
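The role of the microprocessor-aided control system described above can be illustrated with a minimal Python sketch. Everything in it is an assumption for illustration, not the authors' software: the function name `schedule_stimulation`, the 5-s episode length, and the rule that a response made while stimulation is already running neither extends nor restarts it. The phase letters follow the ABAB convention used in the studies reviewed here (A = baseline, B = intervention).

```python
from dataclasses import dataclass


@dataclass
class StimulationEpisode:
    start: float  # seconds from session start
    end: float


def schedule_stimulation(response_times, phase, duration=5.0):
    """Map microswitch responses to brief stimulation episodes.

    Hypothetical rules: in a baseline ("A") phase responses produce no
    stimulation; in an intervention ("B") phase each response starts one
    episode of `duration` seconds, and a response made while an episode is
    running neither extends nor restarts it.
    """
    if phase not in ("A", "B"):
        raise ValueError("phase must be 'A' (baseline) or 'B' (intervention)")
    episodes = []
    if phase == "A":
        return episodes  # baseline: responses occur but stimulation is withheld
    for t in sorted(response_times):
        if episodes and t < episodes[-1].end:
            continue  # stimulation already running; no new episode
        episodes.append(StimulationEpisode(start=t, end=t + duration))
    return episodes


episodes = schedule_stimulation([1.0, 3.0, 8.0], phase="B")
print([(e.start, e.end) for e in episodes])  # [(1.0, 6.0), (8.0, 13.0)]
```

With responses at 1, 3, and 8 s in an intervention phase, the sketch yields two episodes; the response at 3 s falls inside the first episode and produces no new one.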

Although those responses and microswitches have been widely and successfully used, there are groups of persons with pervasive disabilities and minimal behavioral (motor) repertoires who cannot reliably produce those responses and thus cannot benefit from those microswitches (Lancioni, Singh, O'Reilly, & Oliva, 2005; Lancioni, et al., 2008a; Lui, et al., 2012; Tam, et al., 2011). For these persons, extensive research efforts have recently been made to identify alternative (minimal) responses and suitable microswitches (Lancioni, Singh, O'Reilly, & Sigafoos, 2011a). For example, Lancioni, Tota, Smaldone, Singh, O'Reilly, Sigafoos, et al. (2007c) adopted chin and eyelid movements as responses for a girl of 12.5 years and a girl of 4.0 years, respectively. Both girls had congenital encephalopathy with pervasive motor impairment, lack of speech or other forms of communication, and intellectual disabilities.

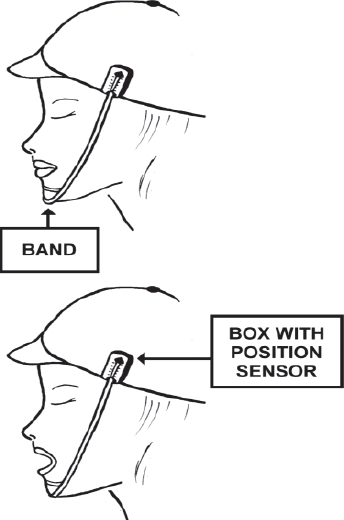

In this case, chin movements consisted of specific increases in the degree of mouth opening. These movements were recorded through a position sensor/microswitch placed inside a small box fixed to the side of a hat that the girl wore. The position microswitch was linked to a band that passed under the girl's chin and was attached to the other side of the hat (see Fig. 1). Downward movements of the chin pulled the band and, consequently, actuated the position microswitch. This triggered a control system that activated brief periods of preferred stimulation during the intervention phases of the study. Preferred stimulation consisted of a variety of stimulus events (e.g., familiar voices and vibratory inputs) selected through stimulus preference screening procedures.

Fig. 1. Schematic representation of a position microswitch (with the position sensor inside the box and the band under the chin).

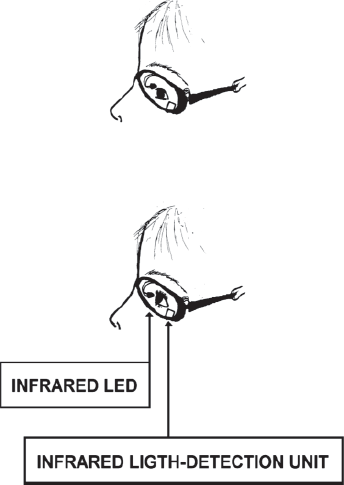

Eyelid movements consisted of the girl raising the eyelid of the left eye or both eyelids (i.e., as if she were looking at the ceiling). This response was monitored via an optic microswitch fixed to an eyeglass frame and connected to a control system. The optic microswitch (see Fig. 2) included an infrared light-emitting diode (LED), placed in front of the girl's left eye, and an infrared light-detection unit. The LED pointed at the girl's left eyelid except when she looked upward, in which case it pointed at her eye; the resulting decrease in infrared light reflection triggered the control system. The control system in turn activated brief periods of preferred stimulation during the intervention phases of the study, which was conducted according to an ABAB sequence (Barlow, Nock, & Hersen, 2009).

Fig. 2. Schematic representation of an optic microswitch mounted on an eyeglass frame (with infrared LED and infrared light-detection unit).
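The triggering logic of such an optic microswitch can be sketched as threshold detection on the reflected-light signal. The sketch below is hypothetical: it assumes the detection unit delivers one intensity reading per sample, that a response corresponds to the reading falling below a fixed fraction of its usual (eyelid-covered) level, and that a sustained upward look should count as one response rather than one per sample. The 30% drop used as the default is an illustrative value, not one reported in the study.

```python
def detect_upward_looks(readings, baseline, drop_fraction=0.3):
    """Return the sample indices at which an eyelid-raising response begins.

    Hypothetical model: `readings` are reflected-infrared intensities. With the
    eyelid in front of the LED the reading stays near `baseline`; when the girl
    looks upward the eye is exposed and reflection drops. A response is counted
    when the reading falls below (1 - drop_fraction) * baseline after having
    been at or above that level, so a sustained look triggers only once.
    """
    threshold = (1.0 - drop_fraction) * baseline
    onsets = []
    below = False
    for i, value in enumerate(readings):
        now_below = value < threshold
        if now_below and not below:
            onsets.append(i)  # falling edge: a new response starts here
        below = now_below
    return onsets


print(detect_upward_looks([100, 98, 60, 55, 95, 65, 100], baseline=100))  # [2, 5]
```

Counting only the falling edge keeps one long upward look from being recorded as many responses, which matters when response frequency is the outcome measure.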

Results showed that both girls had significant increases in response frequency during the intervention phases. That is, both girls apparently learned to use those responses to control (increase) preferred environmental stimulation that they could not have controlled directly.

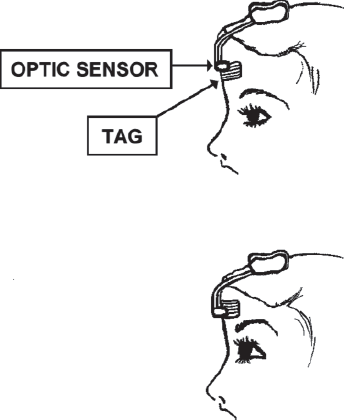

Lancioni, O'Reilly, Singh, Sigafoos, Didden, Oliva, et al. (2007a) investigated the possibility of using small upward or downward movements of the forehead skin as a response for two participants, 6 and 14 years of age, with profound multiple disabilities and minimal motor repertoires. The microswitch setup for monitoring this response consisted of an optic sensor (barcode reader) with an electronic regulation unit and a small tag with horizontal bars (see Fig. 3). The tag was affixed to the participant's forehead, and the optic sensor was held in front of the tag by a light support structure. The study used an ABAB design in which A represented baseline phases and B intervention phases (Barlow, et al., 2009). During the intervention phases, small movements of the tag, which accompanied small upward or downward movements of the forehead skin, triggered the microswitch and, as a consequence, activated a microprocessor-aided control system that delivered brief periods of preferred stimulation (see above). Data indicated that the response and the microswitch were adequate for both participants. Indeed, both showed a strong response increase during the intervention phases of the study (i.e., when the response allowed access to stimulation) compared with their performance during baseline periods, in which no stimulation occurred.

Fig. 3. Schematic representation of an optic sensor (barcode reader) and a small tag with horizontal bars.

Lancioni, Singh, O'Reilly, Sigafoos, Alberti, Oliva, et al. (2011b) investigated the use of eyelid responses or finger/hand movements by three post-coma persons, 67 to 77 years of age, who were reported to be in a minimally conscious state and to present with pervasive motor impairment and lack of any means of communication. The response for the first participant consisted of an eyelid closure, recorded via a new camera-based microswitch. This microswitch was a new technical solution that served to replace the optic microswitch mounted on the eyeglass frame (Fig. 2). The new microswitch involved a computer, a USB video camera with a 16-mm lens, and special software. A blue color spot larger than 1.5 cm² was applied to the participant's left eyelid. Fig. 4 provides a schematic representation of an open eyelid, with the color spot only minimally visible, and of a closed eyelid, with the color spot fully visible. The camera was directed at the participant's face from a distance of about 1 m, and the computer analyzed images of her face at intervals of 200–300 msec. If the camera detected an increase of about 50% in the size of the blue area on her eyelid, the computer recorded an eyelid-closure response and activated a brief period of musical stimulation, supplemented with a light body massage.

Fig. 4. Schematic representation of a color spot applied on the eyelid, which is minimally visible (eyelid open) and fully visible (eyelid closed).
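The camera-based rule for the first participant (an increase of about 50% in the visible blue area, checked on each analyzed image) can be sketched as follows. The pixel color test, the RGB frame representation, and the use of the open-eye area as the reference are assumptions made for illustration; the study's own software is not described at this level of detail.

```python
def blue_area(frame, min_blue=150, max_other=100):
    """Count pixels classified as the blue marker.

    `frame` is a list of rows of (r, g, b) tuples. The color rule is a
    hypothetical stand-in for whatever segmentation the real software used.
    """
    return sum(
        1
        for row in frame
        for (r, g, b) in row
        if b >= min_blue and r <= max_other and g <= max_other
    )


def eyelid_closures(frames, reference_area, increase=0.5):
    """Return indices of frames at which an eyelid-closure response starts.

    A frame counts as "closed" when the blue area is at least
    (1 + increase) times the open-eye reference area; consecutive closed
    frames belong to the same response.
    """
    threshold = reference_area * (1.0 + increase)
    onsets, closed = [], False
    for i, frame in enumerate(frames):
        now_closed = blue_area(frame) >= threshold
        if now_closed and not closed:
            onsets.append(i)
        closed = now_closed
    return onsets


BLUE, SKIN = (0, 0, 255), (200, 150, 120)
open_eye = [[BLUE, BLUE, SKIN, SKIN]]    # marker partly hidden: area 2
closed_eye = [[BLUE, BLUE, BLUE, SKIN]]  # marker fully visible: area 3
frames = [open_eye, closed_eye, closed_eye, open_eye, closed_eye]
print(eyelid_closures(frames, reference_area=blue_area(open_eye)))  # [1, 4]
```

In a real setup each list element would be one image captured at the 200–300 msec. interval; the toy one-row frames only show the relative-area comparison.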

The response for the second participant consisted of minimal changes in the position of her fingers/hand. The microswitch consisted of a touch-sensitive pad on which the participant's hand was placed. Each square inch of the pad functioned as an independent device. Whenever any of the independent devices was triggered, the microswitch and a related control system were activated. This led to brief periods of musical stimulation and body massage.

The response for the third participant consisted of a protracted (i.e., 1-sec.) eyelid closure. The microswitch consisted of an optic sensor mounted on an eyeglass frame as described above. Microswitch activation triggered a control system to present brief stimulation periods. All three participants, who were exposed to an ABAB design (Barlow et al., 2009), managed to use the responses and microswitches selected for them successfully during the B (intervention) phases of the study. During those phases, in fact, they showed significant response increases (with consequent stimulation increases).

Lancioni, et al. (2010a) assessed the possibility of promoting the use of two different responses by a 21-year-old man who had spastic tetraparesis, blindness, epilepsy, lack of any means of communication, and, reportedly, profound intellectual disability. The two responses, eyelid opening and mouth opening, were to enable him to control different sets of stimulus events. Given his serious head-posture problems, optic microswitches fixed on eyeglass frames were neither practical nor even feasible for detecting these responses. To solve this problem, camera-based microswitch technology was employed, with the components mentioned above. Two light-blue color dots about 6 mm in diameter (one at the side of the nose and the other below the lower lip) allowed the technology to detect mouth opening (see Fig. 5). A green color spot larger than 1.5 cm² on the left eyelid allowed the technology to detect eyelid opening (i.e., following a process opposite to the one used for monitoring eyelid closure; see Fig. 4).

Fig. 5. Schematic representation of color dots used at the nose and under the lower lip, respectively.

The study was carried out according to a multiple probe design across responses (Barlow, et al., 2009). Initially, the intervention focused on mouth opening, so the participant was provided only with the color dots at his nose and below his lower lip. If the camera detected an increase of more than 45% in the distance between the two color dots lasting about 500 msec., the computer recorded a mouth-opening response and activated a brief period of the stimulation available for this response (i.e., vocal and instrumental rhythmic sounds). Subsequently, the intervention focused on the eyelid-opening response, so the participant was provided only with the color spot on his eyelid. If the camera detected a decrease of about 60% in the size of the green spot lasting 1 sec., the computer recorded an eyelid-opening response and activated a brief period of the stimulation available for this response (i.e., audio recordings of familiar voices/persons). Finally, the participant was provided with the color dots at his nose and below his lower lip as well as the spot on his eyelid, and the camera was set to monitor both responses, whose occurrence led to the same forms of stimulation described above. Data showed that the man managed to use both responses successfully. When he could choose between the two, he developed a slight preference for mouth opening.
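Both rules in this study combine a relative-change threshold with a minimum duration (more than 45% for about 500 msec. for mouth opening; about 60% for 1 sec. for eyelid opening). A minimal sketch of such a sustained-threshold detector follows, assuming timestamped measurements in milliseconds and a reference value taken with the face at rest; the function names and the once-per-episode counting rule are hypothetical.

```python
import math


def dot_distance(p, q):
    """Euclidean distance between two color-dot centroids (x, y) in pixels."""
    return math.dist(p, q)


def sustained_onsets(samples, reference, change, hold_ms):
    """Return the times (ms) at which a sustained-change response is recorded.

    samples: list of (time_ms, value) pairs in time order.
    change > 0: value must exceed reference * (1 + change), e.g. +0.45;
    change < 0: value must fall below reference * (1 + change), e.g. -0.60.
    The condition must hold continuously for `hold_ms`; each episode in
    which it holds is recorded once.
    """
    limit = reference * (1.0 + change)
    recorded, run_start, fired = [], None, False
    for t, value in samples:
        met = value > limit if change > 0 else value < limit
        if not met:
            run_start, fired = None, False  # condition broken: reset
            continue
        if run_start is None:
            run_start = t
        if not fired and t - run_start >= hold_ms:
            recorded.append(t)
            fired = True
    return recorded


# Mouth opening: resting nose-to-lip distance 20 px, +45% held for 500 ms.
resting = dot_distance((0, 0), (0, 20))
mouth = list(zip(range(0, 1000, 100), [20, 30, 30, 30, 30, 30, 30, 20, 31, 31]))
print(sustained_onsets(mouth, resting, change=0.45, hold_ms=500))  # [600]

# Eyelid opening: green area 100 px with eyelid closed, -60% held for 1000 ms.
eyelid = list(zip(range(0, 1750, 250), [100, 30, 30, 30, 30, 30, 100]))
print(sustained_onsets(eyelid, 100, change=-0.60, hold_ms=1000))  # [1250]
```

The last two mouth samples (31 px) exceed the limit for only 100 ms, so they are not recorded; the duration requirement is what filters out brief, incidental movements.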

Speech-generating Devices and Computer-aided Programs for Communication and Choice

Speech-generating devices give persons without speech the opportunity to make verbal requests that are comprehensible to communication partners who are unfamiliar with persons with disabilities or not in immediate spatial proximity. Speech-generating devices may vary widely in the number of messages (requests) they allow, in the activation response they require the user to perform, and in the formulation of the message (i.e., from simple one-word messages to phrases) (Schepis & Reid, 2003; Sigafoos, Didden, & O'Reilly, 2003; Beck, Stoner, Bock, & Parton, 2008; Sigafoos, et al., 2009; Banda, Copple, Koul, Sancibrian, & Bogschutz, 2010; Van der Meer, Sigafoos, O'Reilly, & Lancioni, 2011). For persons with reasonable fine motor coordination, for example, the activation response may involve pointing to, pressing, or touching specific areas or panels of a device (Rispoli, Franco, Van der Meer, Lang, & Camargo, 2010). These areas or panels can be identified (discriminated) with pictures, drawings, or other graphic symbols familiar to the person using them.

For persons without sufficient fine motor coordination, two alternative forms of activation response may be adopted. One form consists of the use of sensors/microswitches connected to voice output instruments so that the person can activate specific requests via minimal responses detected through microswitch-like technology. For example, a minimal hand closure could activate a microswitch-like device producing a verbal request for caregiver attention, while head-turning could activate another microswitch-like device producing a verbal request for visual stimulation. The second form consists of the use of a single microswitch-like device together with an automatic scanning system applied to a computer screen in front of the person. The scanning highlights, in turn, different request cells available on the screen and identified with pictorial images. In practice, the same response (activating the microswitch-like device) allows the person to produce different request messages, depending on which request cell is being scanned when he or she performs it (Lancioni, Sigafoos, O'Reilly, & Singh, 2012b). For example, activating a touch-sensitive pad while a food item is being scanned may lead the system to verbalize a request for a snack, whereas the same response made while a music item is being scanned may lead the system to verbalize a request for specific music pieces.
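The single-switch scanning arrangement amounts to mapping the time of a response onto the cell highlighted at that moment. The sketch assumes a cyclic scan starting at time 0 with a fixed, hypothetical dwell time of 3 s per cell, and uses illustrative cell labels and messages; real scanning systems may use different timing or highlighting rules.

```python
# Illustrative request cells: (pictorial label, message spoken when selected).
CELLS = [
    ("food", "I would like a snack, please."),
    ("music", "I would like to listen to some music."),
    ("attention", "Please come here."),
]


def scanned_request(response_time, cells=CELLS, dwell=3.0):
    """Return the message for the cell highlighted at `response_time` seconds.

    Assumes cyclic scanning that starts with the first cell at time 0 and
    highlights each cell for `dwell` seconds.
    """
    if response_time < 0:
        raise ValueError("response_time must be non-negative")
    index = int(response_time // dwell) % len(cells)
    return cells[index][1]


print(scanned_request(1.0))   # first cell highlighted: snack request
print(scanned_request(4.5))   # second cell highlighted: music request
print(scanned_request(10.0))  # fourth slot wraps back to the first cell
```

The dwell time is the main design parameter: it must be long enough for the person to perform the response while the intended cell is still highlighted.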

Computer-aided programs for choice are technological solutions designed to present the person with severe/profound and multiple disabilities with samples of various environmental stimuli available for selection, allow him or her to select among those samples and enjoy the presentation of the related stimulus event, and ask for repetitions of a stimulus previously selected and enjoyed (Lancioni, O'Reilly, Singh, Sigafoos, Oliva, & Severini, 2006). Within any given program session, the person would be presented with a brief sample of each of the available stimuli (e.g., songs or videoclips). For each sample, the person could perform a selection response (e.g., activate a sensor or microswitch device) or abstain from responding and thus bypass that stimulus. Such a program arrangement would provide extensive choice opportunities, enhance self-determination, and increase enjoyment (Lancioni, et al., 2012b). It could also be feasible for persons with pervasive motor disabilities, given that a single, minimal response could be used for all choices and repetition requests.

Lancioni, O'Reilly, Singh, Buonocunto, Sacco, Colonna, et al. (2009a) carried out a program with an 18-year-old participant who had suffered severe traumatic brain injury at the age of 14 and was considered to be in a minimally conscious state. He presented with pervasive motor disabilities and lack of any form of communication (or environmental control), and he relied on a gastrostomy tube for nutrition. Given his motor condition, two simple responses were selected, one for each of two verbal requests. The first response consisted of a small movement of the right hand, which activated an optic sensor linked to a voice output device and produced a verbal call for the attention of a research assistant. The second response consisted of a small movement of the right big toe, which activated tilt sensors linked to a voice output device and produced a verbal call for the attention of the mother or grandfather. In response to the calls, the research assistant approached the participant and engaged him in body movements and talking for about 20 sec., while the mother or grandfather approached the participant and talked to him and showed him pictures, or sang and played music, for about 20 sec.

The study was carried out according to a multiple probe design across responses (Barlow, et al., 2009). Initially, intervention focused on the first response. Once the participant was using this response and related technology for calling the attention of the caregiver successfully, the intervention focused on the second response. When the second response had consolidated, the participant was provided with the technology for both responses so he could make both requests freely. Data showed a large increase in each of the responses during the intervention phases in which they were directly targeted. Satisfactory response levels were also present during the last intervention period, suggesting that the participant remained active and motivated to make requests that ensured his contact (positive emotional engagement) with relevant people.

In a recent study, Lancioni, Singh, O'Reilly, Sigafoos, Ricci, Addante, et al. (2011c) assessed the use of a commercial speech-generating device to enable a woman with intellectual disabilities, respiratory problems, and absence of productive verbal communication to request access to various activities. In response to the requests, the caregiver was expected to mediate in two ways, that is, by ensuring the availability of the material for the activities and by allowing choice between different material options. The device was a tablet-like tool with nine cells (Go Talk 9; Special Needs Products of Random Acts Inc., USA), of which only five were used. Each cell was assigned a pictorial representation of one of the available activities. Making a request consisted of applying light pressure to one of those representations (i.e., activating the underlying key area and triggering the verbalization of the related message). The message called the caregiver and asked her for the opportunity to engage in a specific activity. The activities were listening to music or songs, watching videos, using ornamental material, looking at picture cards and completing drawings, and using make-up.

The study was carried out according to an AB1BAB design in which A represented baseline phases and B1 and B represented intervention phases (Kazdin, 2001; Lancioni, et al., 2011c). The B1 phase served to introduce, one at a time, the five pictorial representations used to indicate the available activities. Initially, there was only one representation. When the participant used it consistently, the second representation was introduced; this was used on its own at first and then together with the first one. The process continued in the same way until all five representations had been introduced. The B phases included all five representations, so the participant could request any of the activities. An activity was made available for about 2 min. after each request. Results showed that during the baseline phases only a few requests were made and understood by caregivers and staff. During the intervention phases, in contrast, the participant made an average of about eight requests per 20–25-min. session. The most frequently requested activities were listening to music and songs and watching videos.

Lancioni, Singh, O'Reilly, Sigafoos, Buonocunto, Sacco, et al. (2010d) assessed the possibility of implementing a computer-aided program for choice with three post-coma patients with multiple impairments. The patients were 22, 81, and 51 years old. They had suffered brain injury due to a road accident, ischemic strokes, and rupture of an intracranial aneurysm, respectively. They presented extensive motor impairment that made it impossible for them to reach and manipulate environmental stimuli. They were also characterized by lack of speech and absence of any specific self-help ability.

The first (youngest) patient was rated at the sixth level of the Rancho Levels of Cognitive Functioning (based on the confused-appropriate response dimension; see Hagen, 1998). The other two patients were reported to be in a minimally conscious state with total scores of 11 and 15 in the Coma Recovery Scale-Revised (Kalmar & Giacino, 2005). The response that the patients could use to make their choices and repetition requests consisted of a hand pressure, a double eyelid closure within a 2-sec. interval and/or a light head movement, and a hand-closure movement, respectively. The microswitch technology used to detect responding consisted of a pressure device (first participant), an optic sensor for eyelid closures and a touch sensor to detect head movements (for the second participant), and a touch-pressure device (for the third participant).

Each program session included a set of 16 stimuli: 12 were considered preferred by the participants (e.g., pieces of songs), while the other 4 were considered non-preferred (e.g., distorted sounds) and served as control stimuli. The expectation was that the latter stimuli would be avoided if the participants showed purposeful choice behavior. For each stimulus, the computer presented a 3-s sample combined with verbal expressions such as “and this?” or “want it?” If the participant responded to the sample (i.e., activated the microswitch) within 6 s, the computer turned on the matching stimulus for 20 s. If the participant activated the microswitch within 6 s of the end of a 20-s stimulus episode, the computer extended the presentation of the same stimulus by 20 additional seconds. A pause interval of 6–10 s was placed between the end of a stimulus episode (or the lack of response to a stimulus sample) and the presentation of a new sample. The program was implemented according to a non-concurrent multiple baseline design across participants. Results showed that all participants' response frequencies increased and that they chose a variety of the available stimuli. Their responding concentrated almost exclusively on the preferred stimuli and included multiple requests for extensions or repetitions of several of those stimuli.
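The timing rules of this choice program can be expressed as a small simulation of one stimulus item. The sketch assumes that the 6-s response window opens when the 3-s sample ends, that an extension response starts a further 20-s episode at the moment of the response, and that responses made while a stimulus is playing are ignored; the source text does not specify these details. The 6–10-s pause before the next sample is left to the caller.

```python
SAMPLE_S = 3.0    # length of each stimulus sample
WINDOW_S = 6.0    # response window after a sample or an episode
EPISODE_S = 20.0  # length of each stimulus episode


def first_response_in(responses, start, end):
    """Earliest response time t with start <= t < end, or None."""
    return next((t for t in sorted(responses) if start <= t < end), None)


def run_item(responses, onset):
    """Simulate one stimulus item whose sample starts at `onset` seconds.

    Returns (seconds of stimulation delivered, time the item ends), where
    a bypassed item ends when its unanswered response window closes.
    Assumptions: the selection window opens when the sample ends, each
    extension starts a new 20-s episode at the moment of the response, and
    responses during an episode are ignored.
    """
    sample_end = onset + SAMPLE_S
    start = first_response_in(responses, sample_end, sample_end + WINDOW_S)
    if start is None:
        return 0.0, sample_end + WINDOW_S  # sample bypassed
    stimulated = 0.0
    while start is not None:
        episode_end = start + EPISODE_S
        stimulated += EPISODE_S
        start = first_response_in(responses, episode_end, episode_end + WINDOW_S)
    return stimulated, episode_end


print(run_item([5.0, 27.0, 60.0], onset=0.0))  # (40.0, 47.0)
print(run_item([1.0], onset=0.0))              # (0.0, 9.0): response came during the sample
```

In the first example the participant selects the item at 5 s and requests one extension at 27 s; the response at 60 s comes after the extension window has closed and has no effect on this item.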

Orientation Technology for Indoor Travel

The two most basic forms of orientation technology rely on direct orientation cues and corrective feedback, respectively (Uslan et al., 1983; Lancioni, Bracalente, & Oliva, 1995; Lancioni, O'Reilly, Singh, Sigafoos, Oliva, Baccani, et al., 2007b). A program based on direct orientation cues guides the person's travel by marking the route to the destination through the automatic presentation of auditory (buzzer-like) sounds, verbal messages, or lights.

Such a program is considered appropriate for persons who have a very limited notion of space and virtually no orientation abilities due to combinations of intellectual, neurological, and visual disabilities (Lancioni, et al., 2007b; Lancioni, Singh, O'Reilly, Sigafoos, Alberti, Scigliuzzo, et al., 2010c). A program based on corrective feedback is much less intrusive than one using direct orientation cues and would be appropriate for persons who have functional vision and/or possess a general notion of the spatial reality surrounding them. These persons would be allowed to travel using their self-management and initiative skills (Lancioni, et al., 2009c). The orientation technology would intervene only if the person deviated from or lost the correct direction. In that case, feedback (e.g., a vibration on the chest) would be presented and would continue until the person had found the correct direction again.

Lancioni, et al. (1995) assessed a pilot program based on direct orientation cues with four participants between 12.5 and 15 years of age who were totally blind and functioned within the profound intellectual disability range. The participants were to travel to several indoor destinations for their daily activities. The technology involved a portable electronic control device and a variety of acoustic sources. The portable device was worn by the participants on their chest. The acoustic sources were placed along the routes to the destinations.

Before each trip, the supervising staff person entered the route to the destination (i.e., the route's identification number) in the portable device. Once this was done, the first acoustic source on that route (the closest to the participant's position) was automatically activated; that is, it started emitting a complex sound alternating “on” and “off” phases of 3–5 s. When the participant approached that source, the portable device deactivated it and automatically activated the next one on the route. The process was repeated until the last source (i.e., the one at the destination) was reached. For each participant, the study involved an ABAB design. Results showed that during the baseline (A) phases, the participants' percentages of correct travel ranged between near zero and about 30. During the last section of the second intervention (B) phase, their success rate was near or above 90%.
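The sequential activation of acoustic sources behaves like a simple state machine. In the sketch below, the class name, the source identifiers, and the `on_approach` proximity report are hypothetical; the source does not state how the portable device sensed that the participant had reached a source.

```python
class RouteGuide:
    """Hypothetical model of the portable device's source-sequencing logic."""

    def __init__(self, routes):
        # routes: route id -> ordered list of acoustic-source ids,
        # the last one being the source at the destination.
        self.routes = routes
        self.sequence = []
        self.position = 0

    def select_route(self, route_id):
        """Staff enter a route number; the first source starts sounding."""
        self.sequence = list(self.routes[route_id])
        self.position = 0
        return self.active_source

    @property
    def active_source(self):
        if self.position < len(self.sequence):
            return self.sequence[self.position]
        return None  # no source sounding: destination reached or no route

    @property
    def arrived(self):
        return bool(self.sequence) and self.position >= len(self.sequence)

    def on_approach(self, source_id):
        """Handle a proximity report and return the source now sounding."""
        if source_id == self.active_source:
            self.position += 1  # deactivate this source, activate the next
        return self.active_source


guide = RouteGuide({3: ["S1", "S4", "S7"]})
print(guide.select_route(3))                    # S1
print(guide.on_approach("S1"))                  # S4
print(guide.on_approach("S4"))                  # S7
print(guide.on_approach("S7"), guide.arrived)   # None True
```

Reports about sources other than the one currently sounding are ignored, so passing near a later source does not skip a segment of the route.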

Lancioni, Singh, O'Reilly, Sigafoos, Campodonico, & Oliva (2008b) assessed a new (upgraded and simplified) program based on direct orientation cues with a 28-year-old woman who presented with multiple disabilities, including blindness, motor impairment with rigidity of the lower extremities and clubfoot, spatial disorientation, and intellectual disabilities estimated to be in the moderate range. The program was applied in the participant's apartment and work place. The apartment had an area of about 80 m² and included six target destinations (e.g., bathroom and music corner). The work place had an area of about 100 m² and five destinations (e.g., office desk and entrance). The orientation technology involved a sound source at each of the destinations in the two settings and a portable operation and control device that the participant used to select destinations through specific discriminated keys.

Before traveling to a destination, the participant pressed the key associated with it. This caused the sound source at that destination to emit a sound cue every 15 s until the participant arrived and turned it off. The participant always walked along the walls or the furniture and used them as support and guidance for safe travel. The study was conducted according to a multiple probe design across settings (Barlow, et al., 2009). Intervention was implemented first in the work place and then in the apartment. Finally, the orientation technology was used in both settings. Data collection covered about 10 trips per day. Correct travel in the two settings was below 20% during baseline and about 95% or higher toward the end of the intervention.

Lancioni and Mantini (1999) assessed a program based on corrective feedback with a 30-year-old woman who had a diagnosis of severe visual impairment, deafness, and profound intellectual disability. The orientation technology included a variety of boxes emitting ultrasonic waves and a portable device combined with a feedback case. The device was programmed with a sequence of routes and destinations before each activity session. The participant activated each of those routes by attaching a Velcro® tag, which she found with the related activity material, to the front panel of the device, which worked as an input key. The device and the feedback case remained totally silent while the participant was walking along the route leading to the selected destination. They were activated and provided vibratory feedback whenever the woman's direction diverged by about 20 degrees from the required direction. In response to the feedback, the participant was to turn until the vibration stopped (i.e., until she had re-acquired the correct direction). Thereafter, she could resume walking to the destination.
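The feedback rule depends on comparing the current heading with the required one, which must account for the wrap-around at 0/360 degrees (e.g., headings of 350 and 10 degrees differ by 20 degrees, not 340). The sketch assumes headings in degrees from some hypothetical sensor and uses the approximate 20-degree tolerance reported above; the ultrasonic hardware itself is not modeled.

```python
def heading_error(current_deg, required_deg):
    """Signed smallest angle from the required to the current heading, in (-180, 180]."""
    diff = (current_deg - required_deg) % 360.0
    return diff - 360.0 if diff > 180.0 else diff


def vibration_on(current_deg, required_deg, tolerance_deg=20.0):
    """Feedback is given while the deviation exceeds the tolerance (about 20 degrees)."""
    return abs(heading_error(current_deg, required_deg)) > tolerance_deg


print(heading_error(350.0, 10.0))  # -20.0
print([vibration_on(h, 0.0) for h in (5.0, 30.0, 355.0, 200.0)])  # [False, True, False, True]
```

Because the check is evaluated continuously, the vibration stays on while the person turns and stops as soon as the heading is back within tolerance, which matches the turn-until-silent behavior described in the study.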

The study was conducted according to an ABAB design. During the baseline (A) phases, the participant's percentage of correct travels was close to 0%. During the intervention (B) phases, her percentages increased steadily and eventually settled above 95%. She required a mean of about two error corrections per travel, and each correction took little time (a mean of about 10 s).

Visual- and Verbal-Instruction Programs for Multi-step Tasks

Technology-based instruction programs represent a valuable resource for helping persons with extensive and multiple disabilities manage the performance of complex, socially and vocationally relevant tasks that involve a variety of steps (e.g., cooking and cleaning tasks). The technology can ensure an orderly presentation of the instructions without requiring the participants to manipulate instruction material, which could cause difficulties and errors (Mechling & Ortega-Hurndon, 2007; Mechling & Gustafson, 2009; Cannella-Malone, et al., 2011). Lancioni, et al. (2000) assessed the suitability and effectiveness of a computer-aided system designed to present pictorial instructions for the single steps of the tasks at hand. The study included six adults between 23 and 47 years of age whose intellectual functioning was rated in the severe disability range. The system involved an IBM 110 palm-top computer with a color screen, electronic circuitry, an auditory output box, and a vibration box. The system was housed inside a protective case, which had a single key in addition to the screen for displaying the pictorial instructions.

The participants were to press the key to display a new pictorial instruction on the computer screen. The tasks contained between 25 and 31 pictorial instructions, each referring to a specific task step, as well as four to six additional instructions. The additional instructions, which were interspersed with the step instructions, consisted of representations of smiling faces and represented reinforcing events. Along with each of these representations, the participants received attention from the task supervisor and, occasionally, also tangible events such as a pleasant item. These reinforcers served to motivate the participants' performance. The auditory output box and the vibration box mentioned above served as prompting devices.

Five participants carried the auditory box and received verbal encouragement to continue if they failed to press the system's key within the time intervals preset for the different steps of the task. The sixth participant, who was deaf, carried the vibration box and received a vibratory prompt instead of verbal encouragement when he was late in responding. To avoid errors due to inaccurate use of the key (e.g., repeated key pressing), the system was fitted with a latency function, which made the system temporarily unresponsive after the first key press. All participants used the computer-aided system as well as a card system, according to an alternating treatments design (Barlow, et al., 2009). Each system was used with one of two sets of tasks: four food preparation tasks or four cleaning and table-setting tasks. Sets of tasks and systems were counterbalanced across participants. Finally, there was a crossover test in which the tasks previously carried out with the computer-aided system were scheduled for the card system, and vice versa.
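The single-key presentation, step-specific prompting, and latency function of the computer-aided system can be sketched as follows. All names and values here are hypothetical: `Frame` and `InstructionSystem` are invented, the 2-s lockout is an illustrative choice, and the per-step prompt intervals in any usage are placeholders, since the study reports that such intervals were preset but this sketch does not reproduce them. Whether a prompt is delivered auditorily or by vibration is left to the caller.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Frame:
    """One screen of a hypothetical instruction sequence."""
    label: str
    prompt_after_s: float      # preset time allowed before a prompt is given
    reinforcer: bool = False   # True for the interspersed smiling-face frames


class InstructionSystem:
    """Sketch of single-key instruction presentation with a latency lockout."""

    def __init__(self, frames: List[Frame], lockout_s: float = 2.0):
        self.frames = frames
        self.lockout_s = lockout_s          # hypothetical lockout duration
        self.index = 0                      # first frame is shown at t = 0
        self.shown_at = 0.0
        self._locked_until = float("-inf")

    @property
    def current(self) -> Frame:
        return self.frames[self.index]

    def press(self, now: float) -> bool:
        """Advance one frame; ignore presses during the lockout or at the end."""
        if now < self._locked_until or self.index >= len(self.frames) - 1:
            return False
        self.index += 1
        self.shown_at = now
        self._locked_until = now + self.lockout_s
        return True

    def prompt_due(self, now: float) -> bool:
        """True once the current frame has been shown longer than its preset interval."""
        return now - self.shown_at > self.current.prompt_after_s
```

For example, with a 2-s lockout a second press 0.5 s after the first is ignored, which is the kind of repeated-press error the latency function was meant to prevent.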

All six participants had a higher percentage of correct steps on the tasks carried out with the computer-aided system than on those carried out with the card system. During the crossover test, the participants' performance improved by 14 to 36 percentage points on the tasks transferred from the card system to the computer-aided system and deteriorated by 9 to 30 percentage points on the tasks transferred from the computer-aided system to the card system.

Lancioni, Singh, O'Reilly, Zonno, Flora, Cassano, et al. (2009d) carried out two studies to assess the effects of a technology-based verbal instruction strategy on the performance and mood of persons with moderate Alzheimer's disease (i.e., in a condition of severe social-adaptive impairment; see Folstein, Folstein, & McHugh, 1975). The first study included six participants who were between 68 and 79 years of age and attended an Alzheimer rehabilitation center or a day center where they received supervised activity engagement. The verbal instruction strategy was applied to help them regain a simple, social daily activity such as making and sharing a snack. A preliminary task analysis identified 22 steps for the completion of the activity. The activity setting included the required items, which were stored on a small table, and an activity table next to it. The instruction technology involved radio-frequency photocells, light-reflecting paper, an amplified MP3 player with a USB pen drive connection, a pen drive containing the recordings of the verbal instructions for the task steps, and a microprocessor-based electronic control unit.

The photocells and the light-reflecting paper were placed on opposite sides of the small table holding the activity items. An activity session started with the control unit triggering the MP3 player, which presented the first verbal instruction (e.g., take the tablecloth). Taking the tablecloth led the person to break the photocells' light beam. This initiated a brief (programmable) interval (e.g., 4 s), at the end of which the control unit triggered the MP3 player to present the second instruction (e.g., put the tablecloth on the table). The presentation of this instruction started a new, longer interval (programmed on the basis of the time the participants required to perform the step), at the end of which the control unit triggered the MP3 player to present the third instruction (e.g., take a slice of bread). Taking the bread interrupted the photocells' light beam and started a new brief interval, at the end of which the fourth instruction was presented (e.g., put the bread on the table). The procedure continued in the same way until all instructions had been presented and the task was completed.
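The alternation between beam-triggered and time-triggered instructions can be illustrated by computing when each instruction would be presented, given the times at which the photocell beam is broken. This is a hypothetical sketch rather than the control unit's program: the `Step` structure and `schedule` function are invented, the 4-s delay follows the example in the text, and any longer step intervals used with it (e.g., 20 s or 15 s) are placeholders for the individually programmed values.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Step:
    instruction: str
    advance_on: str   # "beam": next instruction follows a beam break; "timer": a fixed interval
    delay_s: float    # "beam": delay after the break; "timer": interval from presentation


def schedule(steps: List[Step], beam_breaks: List[float]) -> List[Tuple[float, str]]:
    """Return (time, instruction) pairs for the instructions that get presented.

    The first instruction is presented at t = 0. Beam breaks that occur
    before the current instruction was presented are ignored. If a
    beam-triggered step never sees a break, presentation stops there.
    """
    presented: List[Tuple[float, str]] = []
    breaks = iter(sorted(beam_breaks))
    t = 0.0
    for i, step in enumerate(steps):
        presented.append((t, step.instruction))
        if i == len(steps) - 1:
            break
        if step.advance_on == "timer":
            t += step.delay_s
        elif step.advance_on == "beam":
            # Consume breaks in time order until one at or after presentation.
            nxt = next((b for b in breaks if b >= t), None)
            if nxt is None:
                break
            t = nxt + step.delay_s
        else:
            raise ValueError(f"unknown trigger: {step.advance_on}")
    return presented
```

Beam breaks that occur while a timed instruction is running are skipped in this sketch; how the actual control unit handled such breaks is not described in the study.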

The participants were divided into two groups of three, and each group was exposed to a non-concurrent multiple baseline design across participants (Barlow, et al., 2009). The participants' levels of correct steps were between 25% and 60% during baseline and increased by at least 30 percentage points during intervention. Five of the six participants showed mood improvement (i.e., an increase in indices of happiness) during the activity sessions as compared with parallel non-activity periods.

In the second study, two men with moderate Alzheimer's disease, aged 66 and 76 years, were exposed to the same verbal instruction technology to support them in the performance of a shaving activity. The activity was divided into 16 steps, for each of which the participants received a specific instruction. Their baseline percentages of correct responses were around 30% and increased by at least 40 percentage points during intervention. Their mood also benefited: one participant showed an increase in indices of happiness and the other a decrease in indices of unhappiness during the activity sessions as compared with parallel non-activity periods.

Conclusions

The studies reviewed above, as illustrative examples of the various types of technology-based programs, provide an encouraging picture of how such programs might help persons with severe/profound and multiple disabilities improve their achievements. Indeed, the programs seem to help these persons bridge the gap between their repertoire and the adaptive responding and outcomes targeted for them, with large performance and social benefits (Brown, Schalock, & Brown, 2009; Friedman, Wamsley, Liebel, Saad, & Eggert, 2009; Jumisko, Lexell, & Söderberg, 2009; Scherer, Craddock, & Mackeogh, 2011). Despite this fairly promising picture, all the programs are still largely experimental. Consequently, their application and evaluation would need to be extended to gather new evidence on their effectiveness (i.e., on participants' performance and mood), their usability in daily contexts, and their possible (general) drawbacks and risks (Barlow, et al., 2009; Handley, 2009; Lancioni, et al., 2012b; Okoro, Strine, Balluz, Crews, & Mokdad, 2010; Ryan, 2010).

Microswitch-based programs could be further evaluated with participants who present minimal responses that have rarely been targeted, or that differ from those previously targeted, and with new or upgraded microswitch devices that can reliably monitor those responses. With regard to the latter aspect, camera-based microswitch technology may be a practically relevant area of investigation, with potentially extensive implications for low-functioning persons (see Lancioni, et al., 2010a; Leung & Chau, 2010).

New research initiatives regarding speech-generating devices could attempt to integrate them with microswitches within the same intervention programs (Lancioni, O'Reilly, Singh, Sigafoos, Didden, Oliva, et al., 2009b). For example, the participants could have the opportunity to make requests to their caregiver and, at the same time, be able to access particular stimulation events independently. This kind of combination could prove highly beneficial in terms of the participants' overall input and choice opportunities and could facilitate the caregiver's task (Parette, Meadan, Doubet, & Hess, 2010). Indeed, the caregiver would be called upon to satisfy the participants' requests less frequently, which would leave him or her more available for other (educational or practical/organizational) tasks within the daily context (Lancioni, et al., 2009b; Ripat & Woodgate, 2011).

The usability and practicality of orientation systems could also be investigated with persons with neuro-degenerative diseases (e.g., Alzheimer's disease and other forms of dementia) who lose their ability to travel within their daily indoor contexts, with negative consequences for their independence and social status (McGilton, Rivera, & Dawson, 2003; Provencher, Bier, Audet, & Gagnon, 2008; Marquardt & Schmieg, 2009). Additional research efforts could be directed at upgrading the technology so as to reduce the size of its different components and make their programming easier and less time-consuming (Baker & Moon, 2008; Lancioni, et al., 2010c; Malinowsky, Almkvist, Kottorp, & Nygård, 2010).

The applicability and impact of instruction programs could be further evaluated through the use of new (i.e., more flexible and versatile) forms of technology that can be easily adapted to the characteristics of the participants. Different types of pictorial instructions (i.e., static pictorial representations versus dynamic images such as video clips) should be compared for participants with intellectual and multiple disabilities as well as for participants with dementia. Similarly, verbal instructions could be compared with combinations of visual and verbal instructions for persons with neuro-degenerative conditions (Lancioni, Perilli, Singh, O'Reilly, Sigafoos, Cassano, et al., 2012a).