Abstract

This study documented the effect of sample sizes commonly seen in exercise science research on type I and type II errors in statistical tests of numerous correlations. Data from tennis string testing were used to examine zero-order and partial correlations among six variables for the population (N = 198) and three randomly drawn sub-samples of 99, 50, and 25. Both sample size and the statistical analysis procedure affected the rates of statistical errors. Reducing sample size increased type II errors from 7% to 21% in zero-order correlation analysis. Partial correlation analysis of the smaller samples increased type II errors from 29% to 85%. Correlation studies with small sample sizes are likely vulnerable to type I or type II statistical errors and should be interpreted with caution.

Scientists in kinesiology and exercise science must balance ethical, pragmatic, and theoretical constraints in designing research. Given the complexity of the human body and behavior, as well as the kinds of research questions (basic or applied), research design and statistical analysis of the data are challenging tasks. Over the years, several articles have pointed out weaknesses in exercise science research and ways to improve research design, statistical analysis, and reporting (e.g., Thomas, Salazar, & Landers, 1991; Bates, Dufek, & Davis, 1992; James & Bates, 1997; Thomas, Lochbaum, Landers, & He, 1997; Atkinson & Nevill, 2001; Chen & Zhu, 2001; Mullineaux, Bartlett, & Bennett, 2001; Hopkins, Marshall, Batterham, & Hanin, 2009; Knudson, 2009; Kordi, Mansournia, Rostami, & Maffulli, 2011). A shift away from null hypothesis significance testing, toward greater focus on the size of differences or associations, is slowly gaining traction in the social sciences and now in the physical sciences (Hopkins, et al., 2009).

A controversial issue in research design and statistics has been appropriate sample size. Because most research studies use statistical tests of differences or associations of interest, the design must have a sample large enough to give a high probability of detecting a meaningful difference with the statistical test if the effect or association does exist (i.e., statistical power). The sample size, however, should also not be so large that it wastes resources and detects small, meaningless, or trivial effects or associations as “statistically significant.” One of the most common design problems in exercise science research may be small sample sizes with inadequate statistical power (Jones & Brewer, 1972; Christensen & Christensen, 1977; Thomas, et al., 1997; Speed & Andersen, 2000). Pyne and Hopkins (2012) recently described the sample sizes in both comparison and correlation studies presented at the 2012 American College of Sports Medicine Annual Meeting as “woefully inadequate.” Small sample sizes are also common in psychology (Maxwell, 2004). Maxwell also noted that testing multiple hypotheses from one data set contributes to the problem of underpowered studies. A study with a small sample could have weak statistical power to detect a real difference for any specific hypothesis, yet still be likely to make at least one type I error if it tested a large number of dependent variables. Small sample sizes also increase the risk of bias due to sampling error and the influence of outlier observations.
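To make the link between sample size and power concrete for the sample sizes examined in this study, the sketch below approximates the power of a two-sided test of a single correlation using the Fisher z transformation. It is a minimal illustration, assuming bivariate normal data and a hypothetical true correlation of .30; the function name `correlation_power` is ours, and an exact t-based test (as in SPSS) will give slightly different values.

```python
from math import atanh, sqrt
from statistics import NormalDist


def correlation_power(rho: float, n: int, alpha: float = 0.05) -> float:
    """Approximate power of a two-sided test of H0: rho = 0.

    Assumes bivariate normal data, so atanh(r) is roughly normal with
    standard error 1 / sqrt(n - 3) (Fisher z approximation).
    """
    if n <= 3:
        raise ValueError("n must be greater than 3")
    std_normal = NormalDist()
    z_crit = std_normal.inv_cdf(1 - alpha / 2)  # two-sided critical value
    shift = abs(atanh(rho)) * sqrt(n - 3)        # expected standardized z statistic
    # Probability that the statistic lands beyond either critical value
    return std_normal.cdf(shift - z_crit) + std_normal.cdf(-shift - z_crit)


# Hypothetical true correlation of .30 at the sample sizes used in this study
for n in (198, 99, 50, 25):
    print(f"N = {n:3d}: power = {correlation_power(0.30, n):.2f}")
```

Under these assumptions, power falls from nearly 1 at N = 198 to roughly .3 at N = 25, so a real correlation of this size would usually be missed (a type II error) in the smallest sample.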

Unfortunately, estimating appropriate sample sizes is difficult for researchers because of variations between designs (Hopkins, 2006), uncertainty in expected statistical error and power rates (Thomas, et al., 1997), and differences between sampling for statistical hypothesis testing and sampling to estimate magnitudes of differences with confidence intervals (Hopkins, 2007). Justifying sample sizes with statistical power calculations (Cohen, 1988; Gatsonis & Sampson, 1989; Bonett & Wright, 2000) can also be a subjective exercise involving creative adjustments to error rates, variability, and the minimum clinically or pragmatically meaningful difference (Lenth, 2001; Batterham & Atkinson, 2005; Maxwell, Kelley, & Rausch, 2008). Bayesian models that specify all levels of uncertainty, with both conditional and unconditional probabilities, can be solved or simulated to estimate sample sizes. Monte Carlo simulations have recently been employed to determine recommendations for sample sizes in factor analyses (Myers, Ahn, & Jin, 2011).
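A common closed-form power calculation for a single correlation inverts the Fisher z approximation to give the smallest N with the desired power. The sketch below is one such calculation, not necessarily the procedure of the cited authors; it assumes bivariate normal data, a two-sided test, and no correction for multiple tests, and `n_for_correlation` is a hypothetical helper name.

```python
from math import atanh, ceil
from statistics import NormalDist


def n_for_correlation(rho: float, alpha: float = 0.05, power: float = 0.80) -> int:
    """Smallest N giving the requested power for a two-sided test of H0: rho = 0.

    Fisher z approximation: N = ((z_{1-alpha/2} + z_{power}) / atanh(|rho|))**2 + 3.
    """
    if not 0 < abs(rho) < 1:
        raise ValueError("rho must be non-zero and strictly between -1 and 1")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    return ceil(((z_alpha + z_power) / atanh(abs(rho))) ** 2 + 3)


# Hypothetical minimum meaningful correlations
for rho in (0.10, 0.30, 0.50):
    print(f"rho = {rho:.2f}: N = {n_for_correlation(rho)}")
```

Because N scales roughly with 1 / atanh(rho)², the result is very sensitive to the assumed minimum meaningful correlation, which is one reason such calculations can be subjective.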

Correlation designs are also vulnerable to statistical errors in hypothesis testing due to small sample sizes and multiple comparisons. A study designed to correlate numerous variables from a single data set is more likely to yield at least one “statistically significant” correlation by chance alone than a traditional study of only two variables. Unfortunately, fewer models or rules of thumb exist for estimating sample sizes in correlation and regression than in comparison designs. Correlation studies are usually recommended to have samples in the hundreds (Pyne & Hopkins, 2012); however, some researchers have tried to extend the 10-participant rule of thumb from comparison group studies to correlation/regression, using N = 10 for each predictor or variable (Maxwell, 2004).
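The multiple-comparison risk described above can be illustrated with the familywise error rate, the probability of at least one false positive among k tests when every null hypothesis is true. This sketch assumes the k tests are independent, which correlations computed from one data set are not, so the figures are a rough illustration rather than an exact rate; the helper names are hypothetical.

```python
def n_pairwise_correlations(p: int) -> int:
    """Number of distinct zero-order correlations among p variables."""
    return p * (p - 1) // 2


def familywise_error_rate(k: int, alpha: float = 0.05) -> float:
    """P(at least one type I error) across k independent tests of true nulls."""
    return 1 - (1 - alpha) ** k


# Six variables, as in the present study
k = n_pairwise_correlations(6)
print(f"{k} correlations, familywise error rate = {familywise_error_rate(k):.2f}")
```

With six variables there are 15 correlations, and under these assumptions the chance of at least one spurious “significant” correlation is about .54, compared with .05 for a two-variable study.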

The purpose of this study was to document type I and type II errors in correlation studies with the small sample sizes and large numbers of dependent variables typically seen in exercise science. It was hypothesized that the smaller samples common in exercise science would have higher type II error rates than larger samples. The study took advantage of a unique data set from a whole population to examine how the inter-correlations of numerous dependent variables, and their interpretation, might change with smaller sample sizes.

Method

Data from mechanical testing of virtually all of the polyester tennis strings commercially available worldwide in 2012 were analyzed in this study. Tennis Warehouse, a large independent retailer of tennis equipment, performs its own tests on equipment. It tests samples of all tennis strings produced using a protocol (Cross, Lindsey, & Andruczyk, 2000) that measures mechanical response variables with a load cell and laser timing systems (Knudson & Lindsey, 2013). These tests were performed by one of the authors, and the results are available to the public in the string performance database on the company website.²

Data for all the polyester strings (N = 198) tested in the high-speed, 62-lb tension condition were analyzed for six variables: gauge, impact duration, string deflection, peak force, string stiffness, and energy return. These strings essentially represent the entire population of commercially available polyester strings. Polyester strings were selected because that category included the largest number of individual string brands tested in 2012.

The effect of sample size was examined using three additional sub-samples drawn from the original population. Three random samples of decreasing size (99, 50, and 25) were selected using a random number generator. Correlations among the variables were computed for all data sets using SPSS 20. A more rigorous partial correlation analysis was also performed, factoring out the one variable with the highest inter-correlations with the other variables. Each correlation was tested for statistical significance at a type I error rate of p < .05. The correlations identified as statistically significant in each sub-sample were compared with the criterion correlations for the whole population (N = 198) using 95% confidence intervals. Although confidence intervals are not strictly necessary for population data, they provide a uniform magnitude of differences in correlations (Hopkins, 2007). Statistical errors in the smaller sub-samples were classified as false positives (type I errors: a statistically significant sub-sample correlation not observed in the population) and false negatives (type II errors: a non-significant sub-sample correlation when the correlation was significant in the population). Descriptive data for the string mechanical variables from the population and three sub-samples (Table 1) indicated normal distributions (skewness between −.90 and .96). The consistency of the descriptive data across the random samples and the population supports the effectiveness of the random sampling.
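The sub-sampling and error-classification steps described above can be sketched as follows. This is a minimal illustration, not the SPSS procedure used in the study: it assumes each sub-sample is drawn independently from the population without replacement (the text does not say whether the sub-samples were nested), and it takes the significance decision for each variable pair as already computed. All function and variable names are hypothetical.

```python
import random


def draw_subsamples(population, sizes, seed=None):
    """Draw one simple random sample (without replacement) of each requested size."""
    rng = random.Random(seed)
    return {n: rng.sample(population, n) for n in sizes}


def classify_errors(sample_sig, population_sig):
    """Compare a sub-sample's significance decisions with the population criterion.

    Both arguments map a variable pair, e.g. ("gauge", "stiffness"), to True
    if that correlation was statistically significant.
    """
    type_1 = sum(sample_sig[pair] and not population_sig[pair] for pair in population_sig)
    type_2 = sum(not sample_sig[pair] and population_sig[pair] for pair in population_sig)
    n_real = sum(population_sig.values())   # associations present in the population
    n_null = len(population_sig) - n_real   # associations absent in the population
    return {
        "type_I": type_1,
        "type_II": type_2,
        "type_I_rate": type_1 / n_null if n_null else 0.0,
        "type_II_rate": type_2 / n_real if n_real else 0.0,
    }


# Hypothetical example: 198 string IDs, three sub-sample sizes, toy significance flags
subsamples = draw_subsamples(list(range(198)), (99, 50, 25), seed=2012)
population = {("a", "b"): True, ("a", "c"): True, ("b", "c"): False}
sample = {("a", "b"): True, ("a", "c"): False, ("b", "c"): True}
print(classify_errors(sample, population))
```

Type I rates are expressed relative to the correlations that were non-significant in the population, and type II rates relative to those that were significant, matching the definitions above.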

Table 1. Mechanical Variables of Polyester Strings for Various Sample Sizes (Ns = 198, 99, 50, and 25)

Results

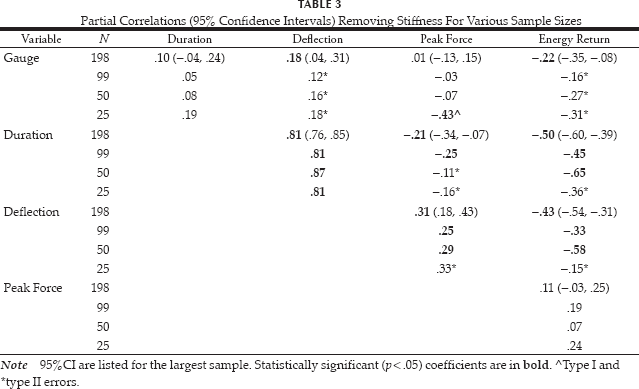

Fourteen of 15 zero-order correlations were statistically significant in the criterion data set (Table 2). The variables had strong inter-correlations, with stiffness most strongly related to the other variables, so partial correlations were performed controlling for stiffness. Seven of 10 partial correlations were statistically significant (Table 3), with a strong association remaining between string deflection and impact duration. Factoring out stiffness weakened most associations among the variables; the exception was a weak inverse association between gauge and energy return (r = −.22), which became statistically significant.
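The partial correlations above remove the part of each association that is shared with stiffness. For a single control variable, the first-order partial correlation can be computed directly from three zero-order correlations, as in the sketch below; its significance test uses n − 3 degrees of freedom rather than n − 2. The correlation values in the example are hypothetical, chosen only to show how controlling for a third variable can shrink a strong zero-order association or reverse the sign of a weak one.

```python
from math import sqrt


def partial_r(r_xy: float, r_xz: float, r_yz: float) -> float:
    """First-order partial correlation of x and y, controlling for z."""
    denom = sqrt((1 - r_xz ** 2) * (1 - r_yz ** 2))
    if denom == 0:
        raise ValueError("partial correlation undefined when |r_xz| or |r_yz| is 1")
    return (r_xy - r_xz * r_yz) / denom


# Hypothetical values: z plays the role of stiffness
print(round(partial_r(0.60, 0.70, 0.80), 2))  # strong zero-order r largely shared with z
print(round(partial_r(0.10, 0.50, 0.60), 2))  # weak positive r becomes negative
```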

Table 2. Zero-Order Correlations Between Polyester String Mechanical Variables for Various Sample Sizes

Table 3. Partial Correlations (95% Confidence Intervals) Removing Stiffness for Various Sample Sizes

Reducing the sample size to 99 resulted in zero-order correlations similar to the population data, with the exception of one false negative (type II error): the weak association between stiffness and energy return was not identified. The more precise partial correlation analysis at this sample size resulted in two type II errors relative to the criterion sample.

The sample size of 50 also had zero-order correlations qualitatively similar to the population data for most variables, but now had two false negative tests (type II errors). The partial correlation analysis had three false negative errors.

The smallest sample size (n = 25) showed greater variation between the zero-order correlation coefficients and the population correlations, and now had three false negative tests. The partial correlation analysis of this smallest sample had six false negative errors and one false positive (type I error). Partial correlation of this small data set therefore produced more statistical errors than correctly identified associations: only one of the seven real associations was detected, along with one false positive association.

Discussion

The present study confirmed theoretical trends of increasing statistical errors with decreasing sample sizes in correlation analyses of multiple dependent variables. The six mechanical impact variables of polyester tennis strings showed a mix of strong, weak, and absent associations. Accurately capturing the size of these associations without statistical errors depended on sample size and the kind of statistical analysis performed.

Zero-order correlations are the most common correlation technique, but conclusions from these tests face several threats to validity. First, this study showed that decreasing sample sizes increased the type II errors of zero-order correlations from 7% to 21%. Second, variables can have statistically significant zero-order correlations that make them appear associated when the associations are actually accidental, erroneous, or spurious (Haig, 2003). Third, correlation coefficients are point estimates on a non-linear scale and should normally be interpreted with confidence intervals (Hopkins, 2007), the coefficient of determination (r²), or absolute magnitudes (Zhu, 2012). Adjusted versions of r² are often reported because sampling error makes the unadjusted sample value an inaccurate (typically inflated) estimate of the population value (Vacha-Haase & Thompson, 2004).
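Because r lies on a bounded, non-linear scale, its confidence interval is usually built on the Fisher z scale and transformed back, which makes the interval asymmetric around r. The sketch below assumes bivariate normal data; for a partial correlation the standard error uses n − 3 − k, where k is the number of controlled variables (k = 1 for the stiffness-adjusted partial correlations here). The function name is hypothetical, and the r = .50 example is illustrative rather than taken from the tables.

```python
from math import atanh, sqrt, tanh
from statistics import NormalDist


def r_confidence_interval(r: float, n: int, level: float = 0.95, k: int = 0):
    """Fisher z confidence interval for a (partial) correlation.

    k is the number of variables partialled out (0 for a zero-order correlation).
    """
    df = n - 3 - k
    if df <= 0:
        raise ValueError("sample too small for this many controlled variables")
    z = atanh(r)
    half_width = NormalDist().inv_cdf(0.5 + level / 2) / sqrt(df)
    # Back-transform the symmetric z-scale limits to the r scale
    return tanh(z - half_width), tanh(z + half_width)


for n in (198, 99, 50, 25):
    lo, hi = r_confidence_interval(0.50, n)
    print(f"N = {n:3d}: r = .50, 95% CI [{lo:.2f}, {hi:.2f}]")
```

At N = 25 the interval for r = .50 spans roughly .13 to .75, compatible with both a weak and a strong association, whereas at N = 198 it is about .39 to .60.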

The more rigorous partial correlation analysis showed even larger negative effects of smaller sample sizes on statistical testing errors. As sample size decreased, type II errors in partial correlations increased from 29% to 85%, and the smallest sample also produced a type I error rate of 33% (one of the three non-significant population partial correlations). It could be concluded, based on these errors, that the N = 50 and N = 25 sample sizes were inadequate for an accurate correlation analysis of the six string performance variables studied. These results also call into question the common rule of thumb of at least 10 observations for every variable of interest in correlation studies: for six variables that rule implies N = 60, yet even the N = 99 sample missed two of the seven real partial correlations. Modern exercise science research equipment is often automated, providing access to numerous variables that increase the temptation for researchers to correlate or compare many variables within a modest sample or data set.
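The percentages in this discussion are simple ratios of the error counts in the Results to the number of opportunities for each error: 14 significant and 1 non-significant zero-order correlations, and 7 significant and 3 non-significant partial correlations, in the population. The sketch below recomputes them; the count of three partial-correlation false negatives at N = 50 is inferred from the 43% reported in the summary, and the dictionary layout is only illustrative. It also sets the 10-observations-per-variable rule of thumb against these results.

```python
# Error counts for each sub-sample size, as reported in the Results
ZERO_ORDER_TYPE_II = {99: 1, 50: 2, 25: 3}  # out of 14 significant population correlations
PARTIAL_TYPE_II = {99: 2, 50: 3, 25: 6}     # out of 7; the N = 50 count is inferred from 43%
PARTIAL_TYPE_I = {25: 1}                    # out of 3 non-significant population correlations


def percent(errors: int, opportunities: int) -> float:
    """Error rate as a percentage, rounded to one decimal place."""
    return round(100 * errors / opportunities, 1)


for n in (99, 50, 25):
    print(f"N = {n:3d}: zero-order type II = {percent(ZERO_ORDER_TYPE_II[n], 14)}%, "
          f"partial type II = {percent(PARTIAL_TYPE_II[n], 7)}%")
print(f"N =  25: partial type I = {percent(PARTIAL_TYPE_I[25], 3)}%")

# Rule of thumb: 10 observations per variable, six variables
rule_of_thumb_n = 10 * 6
print(f"Rule-of-thumb N = {rule_of_thumb_n}; N = 99 still missed "
      f"{PARTIAL_TYPE_II[99]} of 7 real partial correlations")
```

Note that 6 of 7 is 85.7%, which the text reports as 85%.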

Testing numerous hypotheses from a single, small data set is the dominant kind of research design in exercise science, posing a risk to the veracity of the results of these studies (Morrow & Frankiewicz, 1979; Knudson, 2009). In applied biomechanics, for example, 73% to 81% of original research reports test multiple hypotheses from the same data set (Knudson, 2005, 2009), most often without correcting for inflation of the experiment-wise error rate. This presents a serious problem for the development of a coherent body of literature, because even illogical or meaningless effects can appear statistically significant when multiple hypotheses are tested without correction in the same data set (Austin, Mamdani, Juurlink, & Hux, 2006; Knudson, 2007). Many of the inconsistent results in scientific research reports can be attributed to uncorrected testing of multiple hypotheses in the same data (Maxwell, 2004; Ioannidis, 2005), as well as to limited replication and poor review and citation of previous research (Knudson, Elliott, & Ackland, 2012).
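One standard way to correct for the experiment-wise error rate when many correlations are tested from one data set is Holm's step-down adjustment of the p values, a uniformly more powerful variant of the Bonferroni correction. The sketch below is an illustration with hypothetical p values, not a procedure used in the present study; any such correction also lowers power, so in small samples it trades fewer type I errors for more type II errors.

```python
def holm_adjust(p_values):
    """Holm step-down adjusted p values, returned in the original order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])  # indices from smallest p
    adjusted = [0.0] * m
    running_max = 0.0
    for rank, i in enumerate(order):
        # Multiply the rank-th smallest p value by (m - rank), cap at 1,
        # and keep adjusted values non-decreasing in rank order
        running_max = max(running_max, min(1.0, (m - rank) * p_values[i]))
        adjusted[i] = running_max
    return adjusted


# Hypothetical p values from four correlations tested in one data set
raw = [0.010, 0.040, 0.030, 0.005]
adjusted = holm_adjust(raw)
for p, p_adj in zip(raw, adjusted):
    print(f"p = {p:.3f} -> Holm-adjusted p = {p_adj:.3f}, significant: {p_adj < 0.05}")
```

Here all four raw p values are below .05, but only two remain significant after adjustment.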

The present study was limited in that a modest range of sample sizes was examined and the data represent the mechanical response of fairly uniform sports equipment. Associations among other mechanical, physiological, or behavioral variables measured in human participants are likely to differ, as may the extent of the negative effects of smaller sample sizes. Future exercise science research should confirm and extend these results to help researchers interpret previous correlation studies and plan well-powered studies.

In summary, the present study used mechanical data from tennis strings to document the adverse effects of small sample sizes on statistical testing errors in correlation analyses of multiple dependent variables, as commonly seen in exercise science studies. These statistical errors also depended on the kind of correlation analysis performed. Zero-order correlation analyses of small samples (Ns = 25 or 50) resulted in 14% to 21% increases in type II errors, while the corresponding partial correlations had 43% to 85% increases in type II errors. The more precise partial correlation analysis of the smallest sample also resulted in a type I error. Results of previous studies using correlation analysis of small samples in exercise science should be interpreted with caution, given the potentially large percentage of associations not identified as statistically significant, as well as the rarer associations falsely identified as statistically significant. Greater use of larger samples, confidence intervals, and more precise partial correlation or regression analyses will likely improve the accuracy and consistency of the results of exercise science research.