Abstract

There is no doubt that prudence and risk aversion must guide public decisions when the associated adverse outcomes are either serious or irreversible. With any carcinogen, the levels of risk and of needed protection, before and after an event occurs, are determined by dose-response models. Regulatory law should not crowd out the actual beneficial effects of low-dose exposures, when demonstrable, which are inevitably lost when it adopts the linear non-threshold (LNT) model as its causal model. Because regulating exposures requires planning and developing protective measures for future acute and chronic exposures, public management decisions should be based on minimizing costs and harmful exposures. We address the direct and indirect effects of causation when the danger consists of exposure to very low levels of carcinogens and toxicants. The societal consequences of a policy can be deleterious when that policy is based on a risk assumed by the LNT in cases where low exposures are actually beneficial. Our work develops the science and the law of causal risk modeling: both are interwoven. We suggest how their relevant characteristics differ, but do not attempt to keep them separated; as we demonstrate, this union, however unsatisfactory, cannot be severed.

INTRODUCTION

The two natural events (an earthquake and the resulting tsunami) that caused the catastrophes that so greatly affected Japan in early 2011 have also had an immediate impact on the energy policies of countries that depend on nuclear energy. Once again, the development and safety of nuclear power are questioned. The natural events caused partial core meltdowns and accidents involving spent nuclear fuel stored on the site of the six Boiling Water Reactors (BWRs) at the Fukushima Daiichi plant. The loss-of-coolant accidents (LOCAs) caused releases of cancer-causing radionuclides. As with any carcinogen (chemical or radionuclide), the level of risk and protection before and after accidents is determined by dose-response functions based on different studies.

We address causation for the effect of very low exposures to carcinogens on human health. It is here that many can be exposed to low levels of radiation for some periods of time, both near and at great distances from the source of danger. The causal question is exemplified by this question: What is the level of danger, if any, from inhalation or ingestion of radionuclides at levels around milli-Sieverts (mSv) per unit of exposure time? The corollary policy question is: What is a prudent level of tolerable exposure, and what if that exposure is demonstrably benign at low doses or dose rates, contrary to the prevailing hypothesis? Although different age groups respond differently to ionizing radiation (children are more sensitive than adults), the question is important for setting both ambient (routine) and emergency exposure standards. The latter exposures are much less controversial than the former: there is no disputing the acute effects of ionizing radiation or chemicals at high doses.

The correct answers can avoid costly policies that can do more harm than good (at a clearly determinable overall cost to the nation), help to optimize responses to the non-acute consequences, help to address “fear of cancer”, and provide defensible choices of future sources of energy in which nuclear power plays a prominent role. Although the answers we provide are guarded, the essence of our proposal is that, given the necessary (scientific) and sufficient (legal) information for policy-science, a sound choice between alternative causal models of dose-response should be made. That choice should not rest on a mere hypothesis, given the gravity of the consequences to society. More specifically, we will attempt to show that it is not at all clear that the linear, non-threshold dose-response model (LNT) should be assumed without evidence and used to set tolerable levels of exposure. In the longer term, even when the risk of danger disappears (depending on the half-life of the radionuclides emitted from the accident), the imprint of fear can lead to energy policies that create other, perhaps much less uncertain, dangers. Those can include adopting costlier energy sources, depending on inimical suppliers, raising the carbon footprint of a nation, and so on. The correct choice of dose-response model has implications that go well beyond excess cancer incidence: the analysis of trade-offs begins locally but has global impact.

Lack of knowledge argues for prudence and risk aversion. Both are context-specific: it is stupefying that a magnitude 9 earthquake (Richter magnitude scale) was neither considered nor designed against, when it is established that events of this magnitude occur worldwide at a rate of 1 to 3 per century (McCaffrey, 2008). The reason for surprise is not just the lack of foresight in the design choice (a barrier wall able to withstand a tsunami about half as high as the one experienced), but also the number of reactors on site (there were six). With foresight, the stakes in the decision are so great for Japan (and all of us) that a design for magnitude 9 would have been prudent and necessary, given the potential societal damage. It would provide “the ample margin of safety” required for a correct policy choice when the consequences are extremely severe and the direct and indirect costs approach the half-trillion-dollar mark. The correct analysis and optimal choice of protection should normatively be based on the net value of the stakes associated with very low probability events. On the one hand, there will be losses of large magnitude, even though the probabilities are relatively low. On the other, the correct analysis would also account for beneficial effects from low exposure, even though those exposures do kill at much greater doses. In this paper we consider both of these dimensions.

In most countries, regulatory risk analysis uses causation through dose-response models: it is part of the legal basis for justifying the reduction of exposures to hazardous agents likely to cause cancer that are either assumed or presumed 1 to lead to very small individual lifetime risk (relative to background). In the aggregate, the overall expected number of cancers can, however, be significant but still undetectable, given the much larger background number of cancers in the population. Unless cases or deaths can be uniquely associated with a particular exposure (for leukemia, for instance, cigarette smoke and not ionizing radiation, even though the individual was a smoker), the apportionment of risk is unfeasible.

Because regulating exposure to carcinogens requires direct and indirect expenditures measured in billions of dollars per year, a public management decision should be based on minimizing exposures, but also should account for all other (e.g., economic) costs associated with any such minimization; this balancing is inherent to setting environmental and occupational standards in many US federal laws. To set those standards, the law (from tort to environmental to constitutional law) couples legal causation and the “best science” 2 about cause and effect to regulatory policy-making. The potential for judicial review of a regulatory choice is assumed to limit the scope of an agency's freedom to act arbitrarily: the overall system is assumed to yield, on the average, optimal social outcomes. Hazardous exposures are regulated (e.g., via numerical standards limiting hourly exposure) at either acceptable or tolerable risks. For example, a one in a million chance of cancer death yields the corresponding tolerable exposure, for a given causal model of dose-response (Table 1). 3 Moreover, an important function of a public agency is to inform the public and the stakeholders affected about the regulatory intent and the scientific basis of its regulations. These combine elements of policy with a choice of causal model and data.

Table 1. Concentrations of cadmium from alternative cancer risk dose-response models.

The characterization of regulatory risks conditions its estimates on advances in scientific knowledge, provided that this knowledge has been corroborated through independent replications, empirical and theoretical findings, and confirmation by predictions, and is scrutinized via peer review and other examinations, including judicial review. However, this last review can paradoxically result in a policy bias that conflicts with the scientifically best characterization of risks, for instance by allowing far too great latitude to regulatory agencies. More specifically, the consequences of a policy bias towards a high level of safety, as we will demonstrate in this paper, can be deleterious because the bias becomes entrenched. From a seemingly unrelated field, quantum physics, and following Sokal and Bricmont (1999), we recall Heisenberg's (1958) statement that:

Science no longer confronts nature as an objective observer, but sees itself as an actor in this interplay between man and nature … method and object can no longer be separated.

This is particularly true at the macro-state, namely policy-science. It is equally clear that, as Bohr (1928) suggests:

An independent reality in the ordinary physical sense can … neither be ascribed to the phenomenon nor to the agencies of observation.

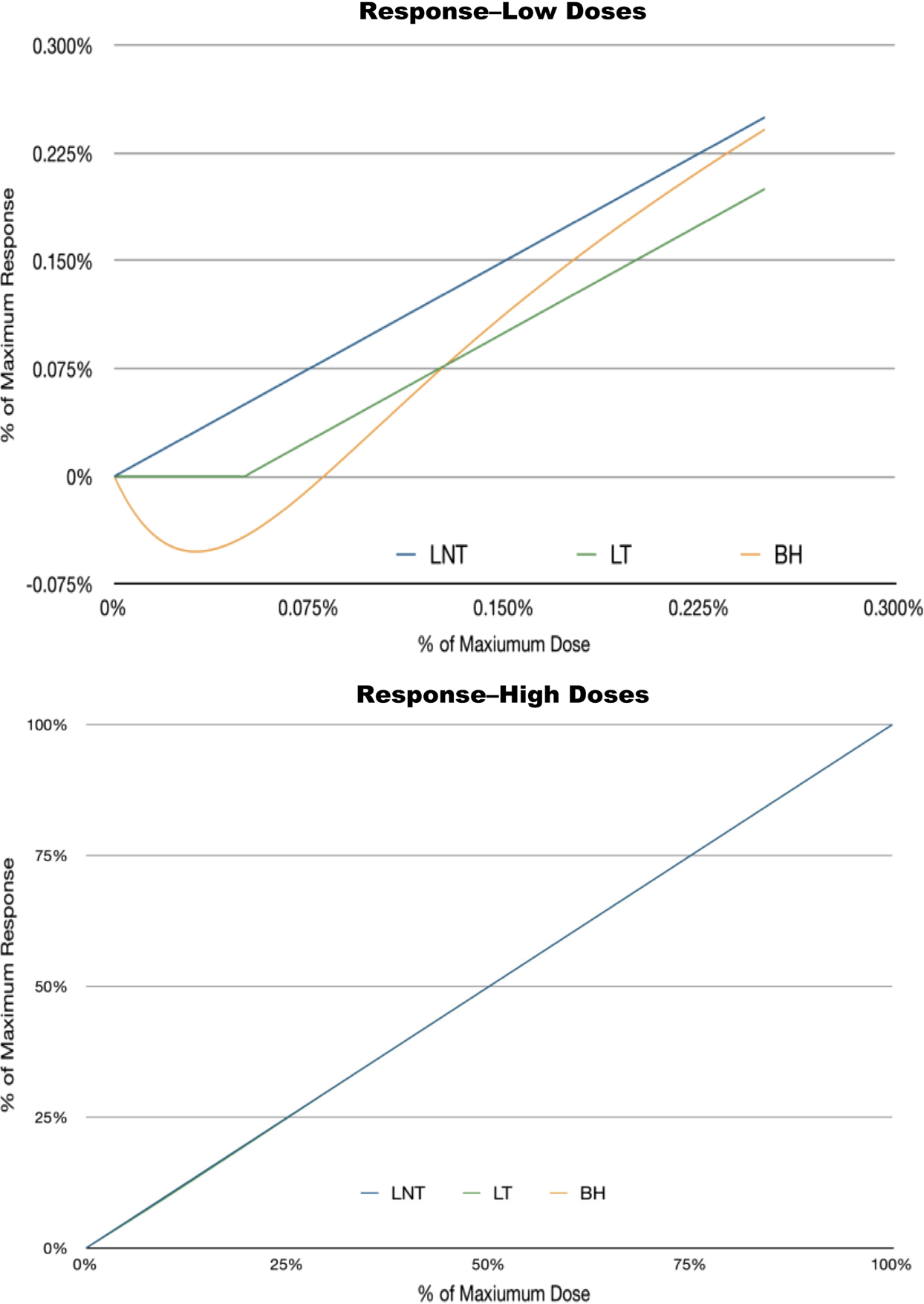

We thus need to confront not only the scientific but also the perceptual (cognitive) aspects of low probability events with large consequences. The modern interaction of science and policy to issue regulatory numbers effectively nullifies the ideal that science can be separated from policy. As we discuss, we see no convincing evidence that this ideal is realized. 4 Rather, we clearly see an application of Bell's result in which an event affects jurisdictions thousands of kilometers away. First, scientific knowledge, Figure 1 (Ricci, 2009), changes with time and, if it is not reflected in regulatory laws, results in an incomplete analysis of the true risks, creating a positive feedback that decreases safety rather than increasing it. Second, regulatory resistance to change and judicial inertia can fail to provide the very protection that the law is designed to provide.

Figure 1. Simultaneity of a societal concern with a hazardous condition, knowledge, truth, and uncertainty about causation.

CAUSATION AT LOW DOSES

The essence of the scientific issue we discuss is exemplified by the differences between the US and the French Academies (i.e., the US National Academies of Science, the French Academy of Sciences, and the French National Academy of Medicine) regarding the effects of ionizing radiation at low doses. Although the US (BEIR VII, Phase 2 Report) supported the LNT, the French Academies raised doubts about its validity. The US used epidemiological studies; France instead included in vitro cell line results. Although these alternative choices are unambiguous, the result for regulatory law is ambiguity: alternative and essentially diametrically opposite views, reached even though the very same level of scientific knowledge is available to all of these institutions, create a difficult regulatory situation that affects environmental choices and energy development, and thus has important implications for geopolitical stability.

It should not be controversial to ask for a decision-theoretic analysis of how those choices are made. This analysis would account for the experimental scientific evidence (e.g., short-term tests, bioassays on live animals, and epidemiological studies) and the biology associated with the cancers, combine them, and point to the correct regulatory choice for a socially acceptable criterion of choice. The advantage is that external reviewers can assess the choice made by those institutions analytically. Specifically, the weights given to alternative biological and experimental information, common to all, would have to be disclosed and could be assessed by unbiased parties, given the criterion for the optimal choice. The policy issue we confront is one of cause and effect via cancer dose-response models. These can yield very different results for the same set of data, as the results shown in Table 1 suggest: each is an alternative cumulative (thus monotonic) distribution function used in current regulatory practice. The setting of the tolerable risk, from which we develop tolerable exposures, is a matter of social policy.

These models are used in current regulatory practice in the US and in those jurisdictions that follow the US approach. In the EU, several Member States use T25, NOAEL, and LOAEL methods (based on the probit model) to develop tolerated exposures or doses. Some use the single-hit and two-hit models of cancer and the 0.99 UCL to develop the tolerable exposure, while others pool scientists to develop a consensus opinion (Seely et al., 2001). Although some countries, for example Australia, modify the method, the foundational regulatory basis is the LNT at low doses. However, those foreign interpretations and uses operate within constitutional and administrative frameworks that differ from those of the original jurisdiction that developed these methods and standards, and are thus of questionable policy use because those affected may not have the recourses for redress available in the original jurisdiction under its laws.

Policy choices not based on sound methods neither resolve ambiguity nor increase protection; less protection may be likely despite the large sums spent to reduce what turns out to be a phantom hazard, created by conservative (erring on the side of precaution) assumptions. For cancer risk assessments, regulatory agencies (e.g., US EPA, 2005) default to linearity at low doses:

… extrapolation is based on extension of a biologically based model if supported by substantial data. Otherwise, default approaches can be applied that are consistent with current understanding of mode(s) of action of the agent, including approaches that assume linearity or nonlinearity of the dose-response relationship, or both. A default approach for linearity extends a straight line from the POD to zero dose/zero response. The linear approach is used when: (1) there is an absence of sufficient information on modes of action or (2) the mode of action information indicates that the dose-response curve at low dose is or is expected to be linear. Where alternative approaches have significant biological support, and no scientific consensus favors a single approach, an assessment may present results using alternative approaches.

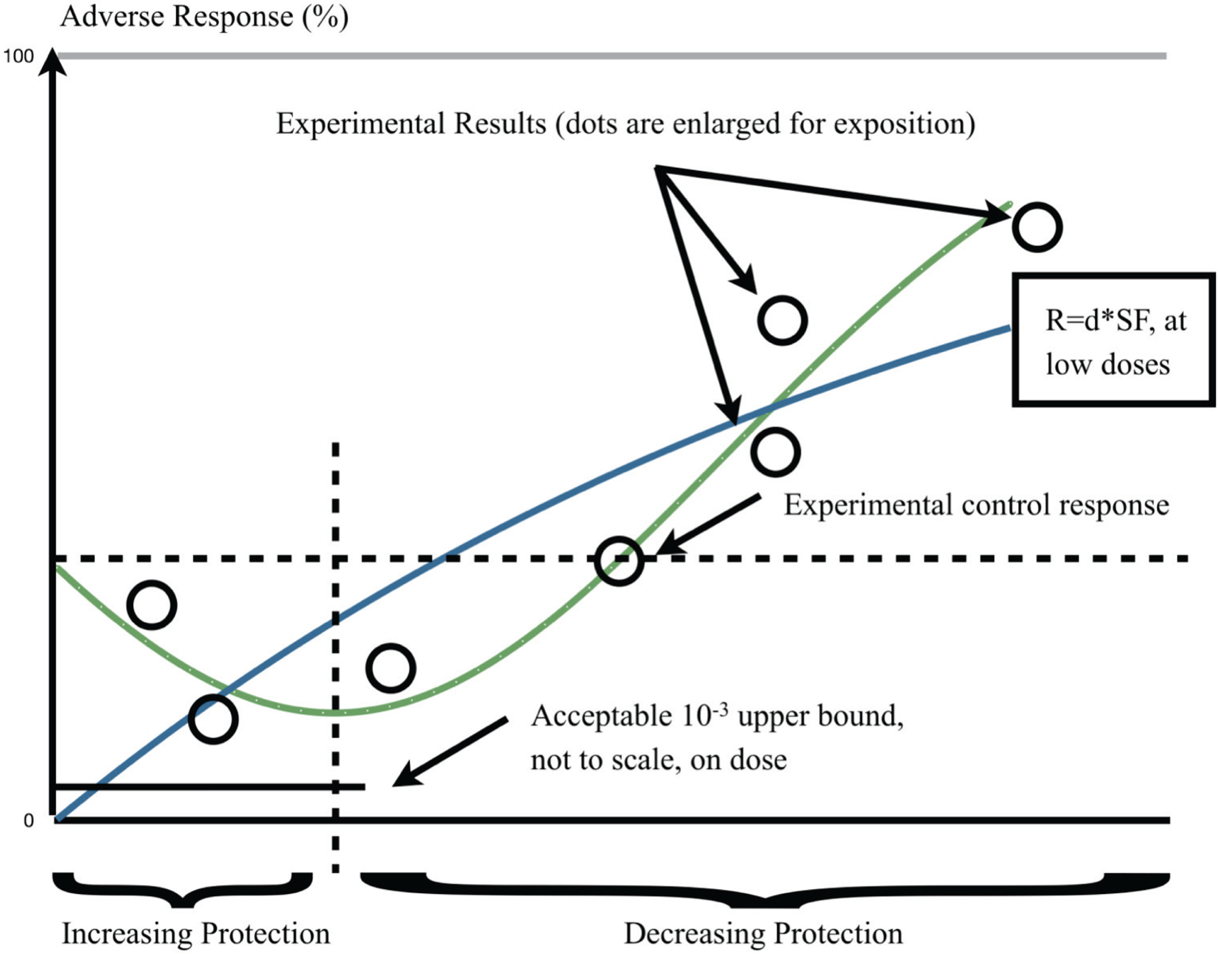

Our overall context is Figure 1, which depicts the two alternatives at issue in this paper: the LNT hypothesis and its biphasic-hormetic (B-H) alternative. The importance of this issue for regulatory science is that, as the depiction shows, the LNT excludes any benefit from any exposure; the B-H allows such benefit to be quantified, when it exists. From an analysis that uses either one or the other causal model, exposure is then regulated to minimize cancer incidence or deaths. However, if the form of dose-response is conjectural (it is an assumption) while its alternative has a fundamental empirical and theoretical basis (it is a presumption), then it would seem, legally and logically, that those who are exposed and those who regulate exposure should have full knowledge of both alternatives. Using one as a policy default is scientifically and legally unjustifiable even though it is done routinely, as we will discuss. By direct implication, fears of low-dose radiation may be misplaced if the B-H were correct, as the B-H predicts beneficial results for those exposures.

Our Figure 1 appears as a mixture of relativisms, empiricism, and other -isms. This is not our intent. We are only concerned with the misallocation of societal resources, given the immediacy of danger. Our figure depicts a situation-specific event that generates societal concerns with a deadly hazard and links that event to actions (such as R&D) taken by society to understand the factual basis of the event and its consequences over time. The goal of society is to reduce or eliminate exposure to that hazard, but such reduction should not create other and possibly greater dangers. We use empirical and theoretical results over time, and predict that the gap between “truth” and the results from theory and empirical studies would eventually narrow. Given these, we also show that, on the average, fear of the hazard and its consequences diminishes as a function of increased understanding and of the coping measures taken as knowledge increases. This reduction in concern is based on two premises: 1) that “theory” is falsifiable and 2) that “truth” will eventually become verifiable. More specifically, we find it difficult to accept a proposition that purports to be conservative when its conservatism is based on a hypothesis that cannot be tested and that both theoretical and empirical knowledge disconfirm. To the extent that the law requires proof of an event (or chain of events leading to damages) and that legal proofs balance the evidence for and against with a specific test (e.g., a balance of probabilities for the more-likely-than-not test, or a factual showing for clear and convincing evidence, and so on), we think that a hypothesis cannot have the same probative value as corroborated factual evidence. Nonetheless, the reason for our ambivalence is that it is probable that a hypothesis has to be made, and policy be based on it, when the situation warrants it. But we hope that, when this occurs, the hypothesis well summarizes what is known at the time that the societal choice is made.

The asymmetries regarding the probable convergence of the trajectories on the scientific “truth” and the possible reduction in average societal concern involve different rates of convergence on the “truth”, should convergence actually occur. The bases for asymmetry and possible non-convergence include: perceptions of the hazard (and thus the level of concern), which change at different rates (e.g., depending on individual age and other factors); knowledge (information and the processing of that information), which changes at yet different rates; and uncertainty (e.g., randomness), which cannot be fully resolved. Even though we show the evolution of different processes continuously, the events characterizing them over time are discrete. Finally, “truth” in this Figure means a scientifically known or discoverable truth.

To sum up, a mathematical (i.e., biostatistical) model is used to determine public or occupational tolerable exposures from a policy-generated acceptable risk number (Table 1). In other words, a policy-mandated probability of cancer, conditional on the causal dose-response model, determines the tolerable exposure and the eventual cost of cleaning up to, or reducing, that exposure. It does not matter that exposure can be measured by alternative metrics, such as dose, dose rate, cumulative exposure, or some other plausible metric of hazard. A policy statement determines the hazard (e.g., zero risk = zero probability of response and thus zero dose, dose rate, or cumulative exposure). US regulatory law (e.g., food, cosmetics, occupational, environmental, and so on) distinguishes between cancer and non-cancer (toxic) dose-response models. We focus on environmental health and cancer risk assessment, in which the effect of exposure is generally assessed using the linearized multistage (LMS) dose-response model (US EPA, 2003). The probability of carcinogenic response is the individual lifetime cumulative probability; the upper 95% confidence limit of the estimated slope (the linear term of the LMS) is used to determine the tolerable dose for a given regulatory level of tolerable risk (e.g., 1×10−6). The LNT, by construction, cannot account for benefits, even when they actually exist empirically, because it is forced through the (0,0) origin of the dose and response axes. More specifically, the cancer dose-response model most often used by the US EPA is the linearized multistage model, LMS, which forces linearity at low doses and does not admit a threshold at low doses (risk is lifetime individual probability). In its standard form, with d the dose and q0, …, qk the fitted coefficients, the multistage model is:

$$P(d) = 1 - \exp\left[-\left(q_0 + q_1 d + q_2 d^2 + \cdots + q_k d^k\right)\right] \qquad (1)$$

For the low-dose linearization, all higher powers of d, with the exception of the zeroth and first powers, are discarded. The US EPA uses q1, i.e., the coefficient of the first power of d, and its upper 95% confidence bound, the so-called q1*. The LMS model constrains the coefficients (parameters in statistical estimation) to be greater than or equal to zero, resulting in a monotonic function (it is a cumulative lifetime distribution). This is why this model cannot account for any B-H theoretical and empirical reality: in the LMS model the exponent in equation (1) is linear at low doses and interpolates to (0, 0).
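The calculation described above can be sketched numerically. The following is a minimal illustration, assuming equation (1) takes the standard multistage form P(d) = 1 − exp[−(q0 + q1 d + … + qk d^k)] with non-negative coefficients, and that the tolerable dose follows from the low-dose linearization risk ≈ q1* · d. The function names and all coefficient values are hypothetical and are not fitted to any data set.

```python
import math

def multistage_probability(dose, q):
    """Lifetime probability of response, P(d) = 1 - exp(-(q0 + q1*d + ... + qk*d**k))."""
    if any(c < 0 for c in q):
        raise ValueError("multistage coefficients are constrained to be non-negative")
    exponent = sum(c * dose**i for i, c in enumerate(q))
    return 1.0 - math.exp(-exponent)

def extra_risk(dose, q):
    """Extra risk over background: (P(d) - P(0)) / (1 - P(0))."""
    p0 = multistage_probability(0.0, q)
    return (multistage_probability(dose, q) - p0) / (1.0 - p0)

def tolerable_dose(tolerable_risk, q1_star):
    """Low-dose linearization: risk ~= q1_star * dose, so dose = risk / q1_star."""
    return tolerable_risk / q1_star

# Hypothetical coefficients (illustrative only, not fitted to any data set).
q = [0.01, 0.05, 0.2]   # q0, q1, q2
q1_star = 0.08          # assumed upper 95% confidence bound on q1

d = tolerable_dose(1e-6, q1_star)
print("tolerable dose:", d)
print("extra risk at that dose (central estimates):", extra_risk(d, q))
```

Because every coefficient is constrained to be non-negative, the extra risk in this sketch can never fall below zero at any dose, which is the structural reason the model cannot represent a J-shaped response.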

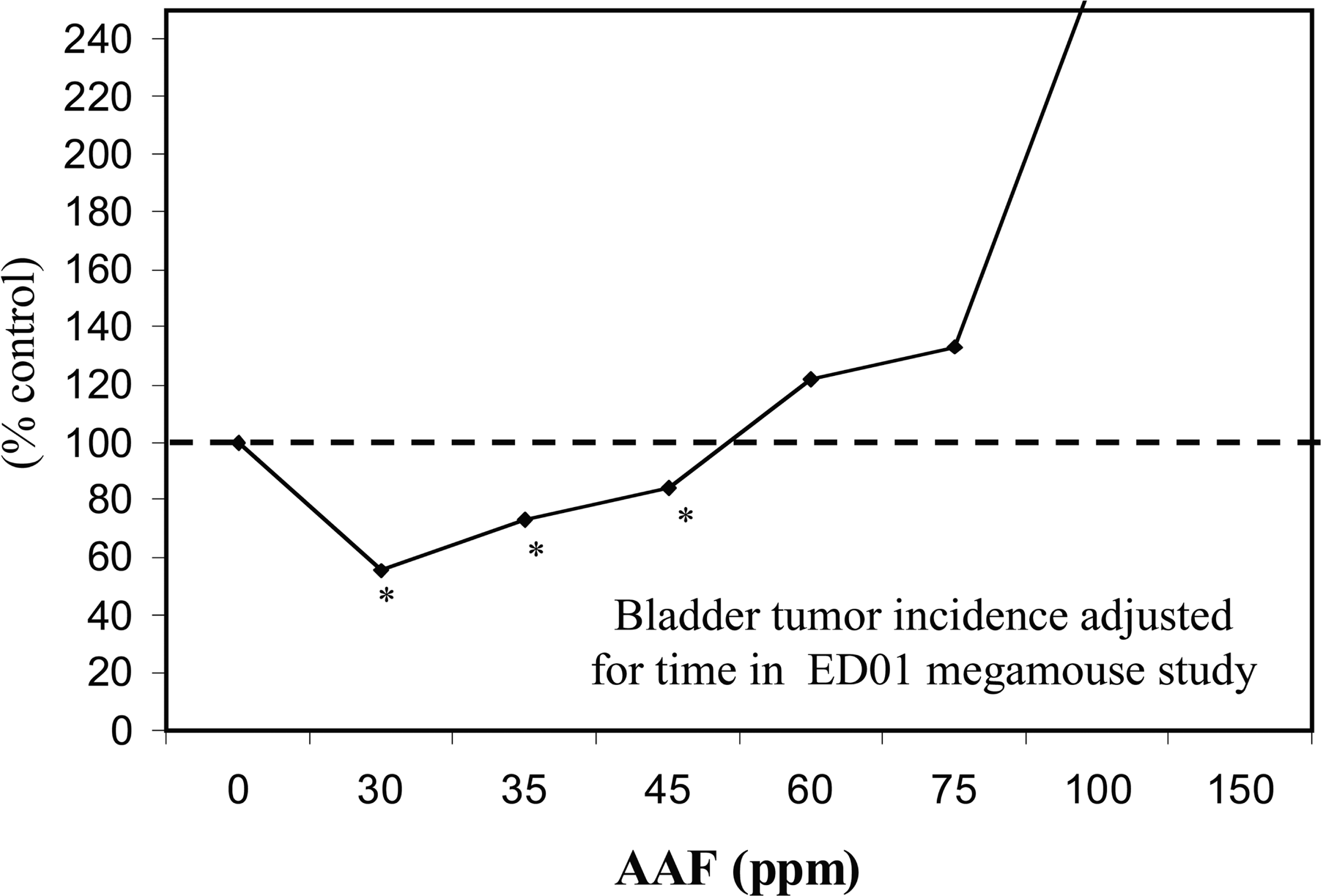

The LMS can be shown to be incorrect when the number of data points used in fitting it is larger than the number of parameters being estimated; however, this is not generally the case because of the design of the experiments to which this model is applied. Nonetheless, there are notable exceptions. One example is the long-term bioassay conducted by the US FDA in the mid-1970s, in which over 24,000 mice were exposed to the carcinogen 2-AAF to determine the shape of the dose-response at low doses (because of its size, this study is known as the mega-mouse experiment). Despite the very large (and as yet unmatched) number of animals used, the results were only sensitive to a risk of 1/100, a risk much higher than the levels used by regulatory agencies to set tolerable doses or exposures. Importantly, the 14-member expert panel of the US Society of Toxicology (SOT, Bruce et al., 1981) reviewed the results of the mega-mouse study and reported that the study supported a B-H dose-response model when the analysis included a time component based on interim sacrifices. The SOT found that 2-AAF had a J-shaped dose-response for bladder cancers that was consistent in each of the six separate rooms in which large numbers of animals were housed, an important internal replication. This study points to a practical problem in many, if not all, whole-animal lifetime cancer studies: the size of the study becomes so overwhelming that it is unfeasible to carry out. Thus: How can anyone demonstrate dose-response behaviors below an excess risk of about 1/100, even though the risks of concern are much lower than this number? The biphasic model of Figures 2 and 3 depicts a low-dose reduction and a high-dose increase in the incidence of the adverse effect. The empirical form of the B-H cancer dose-response is depicted, for the 2-AAF mega-mouse experiment, in Figure 3.
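The sample-size problem raised above can be made concrete with a generic power calculation. The sketch below uses the textbook normal approximation for a one-sided comparison of two proportions. It is not the design of the mega-mouse study, and the 5% background incidence, the significance level, and the power are assumptions chosen only for illustration.

```python
from math import sqrt, ceil
from statistics import NormalDist

def animals_per_group(background, excess, alpha=0.05, power=0.8):
    """Approximate number of animals per group needed to detect `excess` risk
    above `background` in a one-sided, two-group comparison of proportions
    (normal approximation)."""
    p0, p1 = background, background + excess
    p_bar = (p0 + p1) / 2
    z_alpha = NormalDist().inv_cdf(1 - alpha)
    z_beta = NormalDist().inv_cdf(power)
    numerator = (z_alpha * sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * sqrt(p0 * (1 - p0) + p1 * (1 - p1)))
    return ceil((numerator / excess) ** 2)

# Assumed background incidence of 5% (hypothetical).
for excess in (1e-1, 1e-2, 1e-3, 1e-6):
    print(f"excess risk {excess:g}: about {animals_per_group(0.05, excess):,} animals per group")
```

Under these assumptions the required group size grows roughly as the inverse square of the excess risk, so moving from 1/100 to one in a million multiplies the number of animals by a factor on the order of 10^8.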

Figure 2. Alternative dose-response models for cancer: LNT and biphasic (hormetic) models (points are enlarged for representation purposes).

Figure 3. Biphasic Exposure-Response Model (J-shaped) for Exposure to 2-AAF in the ED01 Mouse Experiment; Percent of Control Animal Responses as a Function of Exposure (Bruce et al., 1981).

MODELS OF DAMAGE

The fundamental problem with modeling the risks of extremely low doses is that measuring the response directly becomes exceedingly difficult. When the predicted response is very low, as in cases of (for example) chronic exposure to radiation at levels similar to or slightly above background levels (levels of a few mSv/y), extracting a signal from the noise of the host of confounding factors—other carcinogens, varying levels of healthiness pre-exposure, and a wide array of risk factors other than the carcinogen in question—may well be impossible. This means that the qualitative features of the response (even its sign!) may be unknowable.

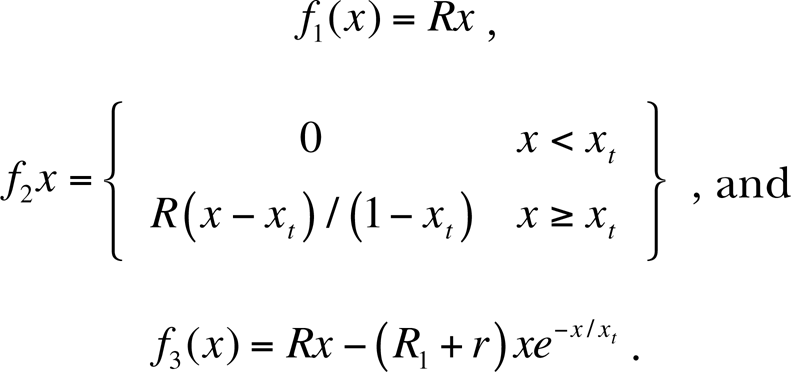

In trying to create public policy around such exposures, we are thus confronted with the worst possible case imaginable: a tiny, unknowable signal that must be multiplied by a vast number of potential cases to determine the possible level of harm involved. Systems in which a tiny error is magnified to create a much larger signal are said to be ill-conditioned. The thoughtless use of an ill-conditioned procedure runs the risk of producing an approximate solution that is nothing of the sort: a solution in which errors can easily grow large enough that even the basic nature of the answer, much less its magnitude, may be fundamentally wrong. To see the problem, consider the following three models for the biological response to a putative carcinogen, where x=0 represents zero dose and x=1 represents the highest dose under consideration, at which we expect a rate of R. In these models, xt may be interpreted as a very low dose, much lower than any expected background dose. Let

The three response curves are nearly indistinguishable for most values of x. At values of a few xt, though, they show strikingly different behavior: f1 continues to show positive harm, f2 exhibits no harm below the threshold exposure xt, and f3 exhibits J-shaped (biphasic hormetic) behavior. Figure 4 plots these functions first at significant fractions of 1, then around xt.

Figure 4. Alternative results and the paradox of high versus low dose. At significant fractions of the maximum tolerated dose, the three curves appear to be identical; at smaller scales, the distinct behavior of the three curves becomes apparent.
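A minimal sketch of three response curves with the qualitative behavior described above. The functional forms of f1, f2, and f3 used here are assumed stand-ins chosen for simplicity, not the paper's own definitions, and the values of R, xt, and the exposed population P are hypothetical.

```python
R = 1.0e-2      # assumed response rate at the highest dose, x = 1
X_T = 1.0e-3    # assumed very low dose x_t

def f1(x):
    """Linear no-threshold stand-in: harm proportional to dose down to zero."""
    return R * x

def f2(x):
    """Threshold stand-in: no harm below x_t, linear above it."""
    return R * max(0.0, x - X_T)

def f3(x):
    """Biphasic (J-shaped) stand-in: benefit below x_t, harm above it."""
    return -R * x if x < X_T / 2 else R * (x - X_T)

def expected_cases(f, x, population):
    """Tiny individual response multiplied by a large exposed population."""
    return population * f(x)

# At significant fractions of the maximum dose the curves nearly coincide...
for x in (0.25, 0.5, 1.0):
    print(x, f1(x), f2(x), f3(x))

# ...but at x_t / 2 they disagree even in sign once scaled by the population.
P = 10_000_000  # hypothetical exposed population
for f in (f1, f2, f3):
    print(f.__name__, expected_cases(f, X_T / 2, P))
```

The relative gap between the curves at x = 0.5 is a fraction of a percent in this sketch, yet the population-scaled predictions at xt/2 range from added cases to none to prevented cases, which is the ill-conditioning the text describes.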

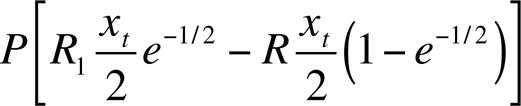

If the measured exposure is xt/2 on a large population P, policymakers are in an unenviable position. According to response function f1, the small exposure should give rise to an expected PRxt/2 cases of cancer; according to f2, none at all; and according to f3, the exposure should actually prevent

cases per year.

Given this sort of uncertainty, and given that measurements of very low-level responses are likely to be extraordinarily difficult to make with any accuracy given the host of possible confounding factors at very small scales, crafting public policy using “the best science” in the traditional way may be impossible.

Let us instead consider the problem in a decision-theoretic framework. There are three components to dealing with an environmental hazard of the sort discussed here. First, the actual dose x must be measured as accurately as possible. Any such measurement will necessarily have some degree of error. Second, the response to this dose must be estimated using the best available science. This is straightforward for large x (in our example, for x > xt), where the linear dose-response curve is well established, but enormously difficult for small x. Finally, the legal consequences of that response must be worked out. This step varies widely across jurisdictions. As a baseline, we assume a model legal system adhering to two principles:

(1) It attempts, by some mechanism, to assign damages to a given entity in proportion to that entity's share of the blame. Thus, if a single entity is shown to have caused some harm largely on its own, it is assigned the damages caused by that harm. The actual mechanism by which damages are apportioned may vary wildly (damages may be assigned first to a single entity, for example, which may then bring its own claims against other parties with which it shares responsibility), but we assume that at the end of legal proceedings damages have been assigned more or less fairly.

(2) It establishes a standard of proof (preponderance of the evidence, beyond a reasonable doubt, or some other standard) that a claim of harm must meet for damages to be assessed.

Let us consider a few characteristic cases:

One polluter, well-determined dose. In this case, although x is well determined, f(x) is not. In fact, as sgn(f(x)) is not known, the science cannot even establish the existence of harm, much less its magnitude. In a heavily populated region, Nf(x), the expected number of cases per year, now potentially covers an enormous range.

In this case, claiming that the “conservative” decision is the one that avoids the greatest possible harm is somewhat dangerous. It invites a sort of arms race between interested parties in which each side attempts to measure the immeasurable in a manner most favorable to their position. Attempts to study phenomena within the noise level of an experiment have historically led to disaster (Langmuir & Hall, 1989).

Many polluters, well-determined collective dose x>>xt, delivered in partial doses such that each polluter Pi causes xi < xt of the total dose. This time f is on the solidly established linear response portion of the curve. The ultimate result depends entirely upon how the specific jurisdiction decides to apportion damages.

If the damages are apportioned according to the fractional responsibility for the dose, then the ultimate damages will be precisely those expected by LNT, albeit with a significantly different justification. The two cases are instructive. The LNT model makes sense as a regulatory device if one expects the number of polluters to be quite high, so that the total dose may be much larger than the dose for which any individual polluter might be responsible. It is a much more dubious proposition in cases in which a sole low-level polluter exists. The key is that it is much more reasonable to scale down a well understood harm to the scale at which individual entities are responsible than to scale up a poorly understood harm by multiplying it by a large population.
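The equivalence between proportional apportionment and LNT in the many-polluter case can be checked with a short sketch. It assumes the collective dose lies on the linear portion of the curve, f(x) = Rx, and that expected cases are shared in proportion to each polluter's fraction of the dose. The number of polluters, their doses, R, xt, and the population are all hypothetical.

```python
R = 1.0e-2      # assumed response rate at the highest dose, x = 1
X_T = 1.0e-3    # assumed very low dose x_t

def linear_response(x):
    """Well-established linear portion of the curve: f(x) = R * x."""
    return R * x

def apportion_cases(doses, response, population):
    """Split the expected cases from the collective dose among polluters
    in proportion to each polluter's share of that dose."""
    total_dose = sum(doses)
    total_cases = population * response(total_dose)
    return [total_cases * d / total_dose for d in doses]

# 200 hypothetical polluters, each contributing half of x_t (collective x = 100 * x_t).
doses = [0.5 * X_T] * 200
population = 1_000_000

shares = apportion_cases(doses, linear_response, population)
lnt_per_polluter = [population * R * d for d in doses]

print("apportioned cases per polluter:", shares[0])
print("LNT applied to individual dose:", lnt_per_polluter[0])
```

The two numbers agree because the harm being divided is measured at the collective dose, where the response is well established, rather than extrapolated upward from each sub-threshold contribution.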

A single polluter polluting near xt; background rate Rb of the adverse event. Here a single polluter is polluting quite near the threshold level. We assume that the background rate of the adverse event is Rb, even in the absence of the pollutant. What is the probability that a specific adverse event has been caused by the pollution? In this case, the total rate of the adverse event is f + Rb, so the fraction of the rate due to the pollution is f/(f + Rb) for positive f. When these claims are addressed by the legal system, then, harm is said to have occurred whenever f/(f + Rb) exceeds some threshold probability T. Figure 5 plots f/(f + Rb) for Rb = 10Rxt, 100Rxt, and 1000Rxt (A, B, and C respectively).

Figure 5. Difference in results due to the gap between dose-response models: (A) Rb = 10Rxt; (B) Rb = 100Rxt; (C) Rb = 1000Rxt.

In this case, if the standard is something like “better than even odds” that the specific harm is due to the low-level exposure, then the difference between LNT and B-H is irrelevant; by that point the danger should be readily measurable. Regulations, however, are usually designed to prevent exposures well below the level at which the rate of adverse effects doubles. This can be problematic in the face of a difficult-to-detect gap between possible dose-response curves at low levels: in an area like the Los Angeles basin, the tiny difference between the curves in the third case might represent the difference between no deaths and a few dozen per year.
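The attributable fraction f/(f + Rb) and the threshold test against T can be sketched as follows. The values of R and xt are hypothetical, the LNT response f = Rx is used only to generate illustrative inputs, and returning zero for non-positive f is an assumption of this sketch, since the text defines the fraction for positive f only.

```python
R = 1.0e-2      # assumed response rate at the highest dose, x = 1
X_T = 1.0e-3    # assumed very low dose x_t

def attributable_fraction(f, background_rate):
    """Fraction of the total event rate due to the pollutant: f / (f + Rb)."""
    if f <= 0:
        return 0.0  # assumption: no attributable harm when the response is not positive
    return f / (f + background_rate)

def meets_standard(f, background_rate, threshold=0.5):
    """True when the attributable fraction exceeds the threshold probability T."""
    return attributable_fraction(f, background_rate) > threshold

for multiple in (10, 100, 1000):              # panels A, B, and C
    rb = multiple * R * X_T
    for dose_in_xt in (0.5, 1, 10, 2 * multiple):
        f = R * dose_in_xt * X_T              # LNT response, for illustration only
        print(multiple, dose_in_xt,
              round(attributable_fraction(f, rb), 4),
              meets_standard(f, rb))
```

With T = 0.5 the test is met only when f exceeds Rb, that is, when the exposure more than doubles the background rate, which is far above the doses at which the alternative models diverge.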

DISCUSSION

Our paradigm is invariant to the jurisdiction using it, provided that science and policy mix to create a regulatory framework in which causation is a necessary, but not sufficient, legal requirement. We are consistent with, and supplement, the US EPA (2005) attempt to:

“[Encourage] risk assessors to be receptive to new scientific information, [following the US National Research Council, NRC, comments] … need for departures from default options when a ‘sufficient showing’ is made. [The NRC] … called on EPA to articulate clearly its criteria for a departure so that decisions to depart from default options would be ‘scientifically credible and receive public acceptance’ [because] [i]t was concerned that ad hoc departures would undercut the scientific credibility of a risk assessment. NRC envisioned that principles for choosing and departing from default options would balance several objectives, including ‘protecting the public health, ensuring scientific validity, minimizing serious errors in estimating risks, maximizing incentives for research, creating an orderly and predictable process, and fostering openness and trustworthiness.’”

This encouragement emphasizes that causation and policy combine and that the ideal of keeping science separate from policy cannot be guaranteed (a result summarized in Figure 1). Nonetheless, our work decouples, to the extent possible, science from policy-based default reasoning and is consistent with scientific skepticism, i.e., that a scientific investigation begins with the null hypothesis as well as parsimonious explanations, according to Occam's Razor. Practically, given the emphasis of the US EPA on the LMS and its use to set cancer guideline numbers in its IRIS database, it is difficult to see how practitioners will take on the costly burdens we discussed and “be receptive to new scientific information,” particularly in the current economy. Litigation and political forces cause the change: a “sufficient showing” may be a tepid (and so far seemingly unused) criterion for any necessary and sufficient evidence of adverse effects, given dose. The reason is that a public choice, unlike a private choice, is a policy act (US EPA, 2005; parenthetic statement added):

“The extent of health protection provided to the public ultimately depends upon what risk managers decide is the appropriate course of regulatory action. When risk assessments are performed using only one set of procedures, it may be difficult for risk managers to determine how much health protectiveness is built into a particular hazard determination or risk characterization. When there are alternative procedures having significant biological support, (one of which is the existence of hormetic mechanisms) the Agency encourages assessments to be performed using these alternative procedures, if feasible, in order to shed light on the uncertainties in the assessment, recognizing that the Agency may decide to give greater weight to one set of procedures than another in a specific assessment or management decision.”

For regulating exposure to carcinogens, the US EPA (2005) also requires a weight-of-evidence narrative that should describe:

the quality and quantity of the data;

all key decisions and the basis for these major decisions; and

any data, analyses, or assumptions that are unusual for or new to EPA.

To account for these, the US EPA (2005) narrative should include:

conclusions about human carcinogenic potential …

a summary of the key evidence supporting these conclusions (for each descriptor used), including information on the type(s) of data (human and/or animal, in vivo and/or in vitro) used to support the conclusion(s),

available information on the epidemiologic or experimental conditions that characterize expression of carcinogenicity (e.g., if carcinogenicity is possible only by one exposure route or only above a certain human exposure level),

a summary of potential modes of action and how they reinforce the conclusions,

indications of any susceptible populations or lifestages, when available, and

a summary of the key default options invoked when the available information is inconclusive.

Additionally, the US EPA approach accounts for the mode of action and other biological aspects of a carcinogen. It provides several dose-response models in its BMDS package, which is freely available online at the US EPA NCEAS site. Unfortunately, this toolbox does not contain models that would allow the analysis of biphasic behaviors. The natural language used in the narrative does not provide a sufficiently integrated method: it is vague and lacks a bright line for determining what is in from what is out. The previous sections have dealt with parts of this point; we now turn to how this bright line could be studied (and be consistent with the US EPA approach for cancer risk assessment).

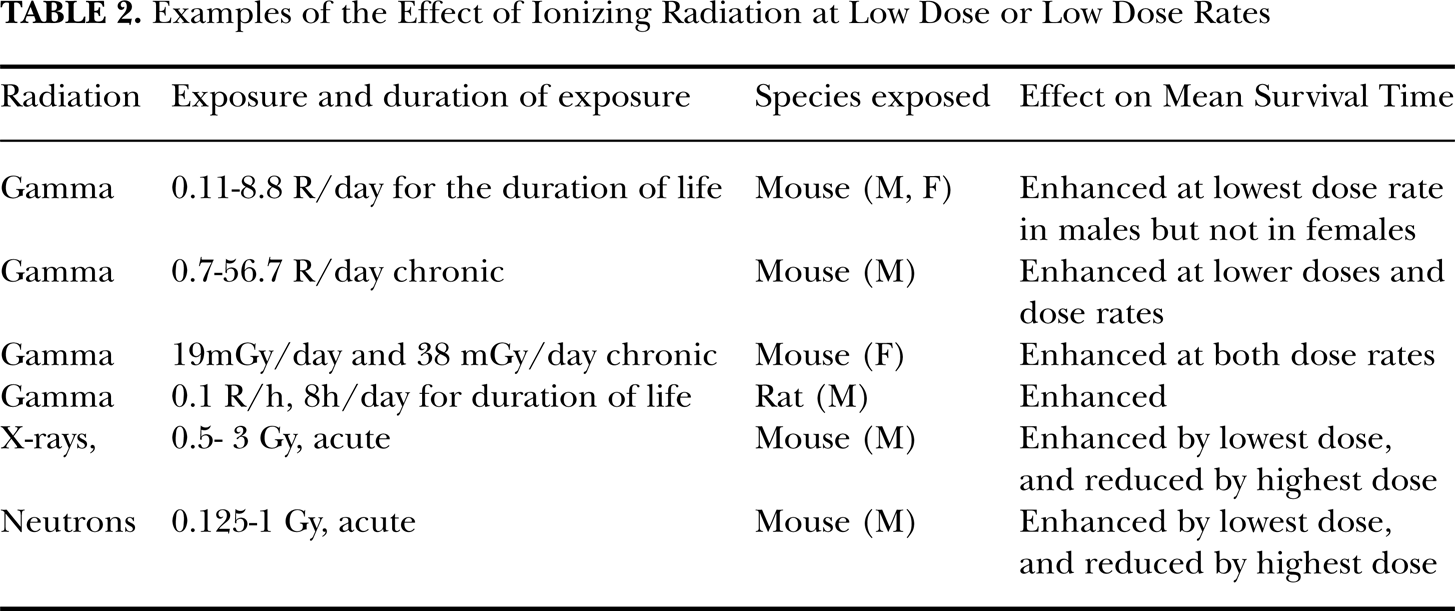

IONIZING RADIATION: EVIDENCE OF B-H CANCER DOSE-RESPONSE

For ionizing radiation there is incontrovertible evidence that, from approximately 300 mGy to 2 Gy, the cumulative probability of damage from exposure is approximately linear (Pollycove and Feinendegen, 2003). High levels of ionizing radiation decrease the mean survival time of those exposed, across all exposed species (UNSCEAR, 1982). This is not the case for low levels of radiation. Upton (2001) has provided a summary of experiments that have detected enhanced mean survival times in, among other species, rats and mice, from whole-body ionizing radiation. We have abstracted these in Table 2.

Examples of the Effect of Ionizing Radiation at Low Dose or Low Dose Rates

The conclusion of a UNSCEAR group of experts that there is no “radiation-associated excess” of either mortality risk or incidence of leukemia adds relevance to these findings. The J-shaped behavior was demonstrated by Vilenchik and Knudsen (2006) with regressions for the excess relative risk of DSB mutations, and compares well with the epidemiological studies. This commonality, because DSBs are a “major mechanisms of leukemogenesis,” is relevant to the findings of the J-shaped dose–response model for IR exposure and leukemia. Bogen et al. (1997) developed a biologically plausible cytodynamic 2-stage model predicting a J-shaped response for ionizing radiation and showed that the model fits the observed uranium miner data. Scott et al. (2007) have developed a model that links different cellular states to the probability of transition from one state to the other as a function of genomic damage induced by different levels of exposure to ionizing radiation. Using an MCMC solution, they find a multi-phasic response that depends on the amount of radiation and repair mechanisms.
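To make the contrast between the two shapes concrete, the following is a minimal sketch and not any of the cited models. It assumes a purely illustrative J-shaped form (the linear term minus a saturating protective term); the function names, the functional form, and every parameter value are hypothetical and chosen only so that the curve dips below zero at low dose and approaches linearity at high dose.

```python
import numpy as np


def lnt_excess_risk(dose, slope):
    """Linear no-threshold: excess risk is proportional to dose."""
    return slope * np.asarray(dose, dtype=float)


def j_shaped_excess_risk(dose, slope, benefit, decay):
    """Illustrative J-shaped curve (an assumption, not a fitted model):
    the linear term minus a protective term that saturates at `benefit`."""
    dose = np.asarray(dose, dtype=float)
    return slope * dose - benefit * (1.0 - np.exp(-dose / decay))


# Hypothetical parameters: dose in mGy, response as excess relative risk.
SLOPE, BENEFIT, DECAY = 0.001, 0.05, 20.0

doses = np.array([0.0, 10.0, 50.0, 100.0, 500.0, 1000.0])
lnt = lnt_excess_risk(doses, SLOPE)
jsh = j_shaped_excess_risk(doses, SLOPE, BENEFIT, DECAY)

for d, a, b in zip(doses, lnt, jsh):
    print(f"{d:7.1f} mGy  LNT={a:+.4f}  J-shaped={b:+.4f}")
```

With these assumed values the J-shaped response is negative (below the control level) at the lowest nonzero dose and positive at the higher doses, whereas the LNT response is never negative. A study whose lowest dose group sits above the crossing point would not observe the dip at all.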

At times, because epidemiological studies may not be designed to test for biphasic dose-response, the finding of responses lower than the controls (the actual J-effect) is interpreted as the healthy worker effect, healthier life styles, and so on. This has been the case for studies at Los Alamos, Hanford, and other locations where workers exposed to ionizing radiation had lower cancer risk than those unexposed. These explanations may be clarified. The hormetic effect of very low levels of exposure stimulates the immune system. Similar findings have also been reported from non-occupational populations in Taiwan (chronic gamma radiation from Cobalt-60) and from indoor radon exposures (Cohen, 1995), and in other studies of nuclear workers (in the US, Canada, and the UK) whose total cancer deaths are lower than those of the unexposed. Unfortunately, even when the J-shape occurs, the policy conclusion relates back to linearity at low doses: evidence is trumped by conjecture (Cardis et al., 2007). A Canadian epidemiological study of female mortality from breast cancer, where the patients had had several fluoroscopies, was reassessed by Pollycove (1988): the original report of a LNT effect from those exposures turned out to be not LNT-like but, rather, J-shaped. Schöllnberger et al. (2007) also comment that the LNT hypothesis is, at low doses, inconsistent with the empirical results from in vitro studies involving exposures to low energy photons (which are used for imaging work, such as mammography). Radiation damage to cellular DNA (pre- or post-exposures to mSv levels) has also been shown to be characterized by J-shaped functions for X- and gamma rays (Upton, 2001):

Although the existence of such adaptive responses is no longer in doubt, it is not clear from the existing data whether the dose-response relationships for mutagenic, clastogenic, and carcinogenic effects of radiation are comparably biphasic in the low-dose domain (citations omitted for brevity). Pending further research to resolve this question, the implication of adaptive responses or the setting of radiation exposure limits will remain uncertain.

As Upton (2001) summarizes, beneficial effects were found for immunological responses (e.g., antibodies, lymphocytes) and apoptosis in cells of rabbits, sheep, mice, and humans, including human lymphocytes and mouse spleen cells, from exposure to gamma and X-rays. UNSCEAR (1994) and recent studies document the stimulatory effect of low-LET background radiation on several cellular functions (Pollycove and Feinendegen, 2005); the latter conclude that the prevention of genetic mutations by increased low-dose radiation is associated with decreased mortality and decreased cancer mortality in human populations…. The natural experiments that occur daily (and have done so for tens of thousands of years) provide additional insights. These experiments occur when people live in areas with relatively high continuous background radiation (either from geology or from altitude). What seems to be occurring is that certain populations in several areas of the world (in China and in the US, for instance) have lower background cancer rates than populations with less exposure: these exposure rates are measured in millirem per year (mrem/year) and the range of exposures is between 400 and 800 mrem/year, or even higher.

POLICY REVISITED

Can science be separated from scientific causal arguments that buttress policy? It turns out that this is no longer the proper question to ask. Instead, it is the use of the science in regulatory law that matters: science qua science is not in dispute. When science justifies policy using or causing fear and relying on ignorance then it becomes suspect, even though the avowed policy end is protection.

Comparability—Jaworowski (2010) reports that the (bogus) six-foot Chernobyl chicken depicted in a 1986 issue of the National Enquirer (with a circulation of 4.4 million in that year and found in most US supermarkets) is a gross, but to many convincing, use of fear of invisible radioactive cancer agents to generate revenues. If the level of expenditures for mitigation or prevention is used to justify public funding, then accidents that can probabilistically generate deaths, illnesses, and damage to property and to the environment have to be ranked by importance. The magnitude and frequency of the outcomes, not necessarily stated as an expected value, should help policy makers and stakeholders decide on the allocation of increasingly scarce resources. The second aspect of the question has to do with the comparability of the causes of an accident. Comparability requires high similarity between the sources of danger (the hazards generating risks). Thus, the Chernobyl accident (due to the type, operations and lack of containment vessels of the nuclear reactor) cannot be compared to accidents at the US and the Fukushima nuclear power plants because these have containment vessels around the reactors. The TMI partial core meltdown and those at the Daiichi plant are, on the other hand, at least in part comparable (regarding mechanisms of failure and releases, but not regarding the initiating events of the accident). The cause and effect, in this situation, are known or knowable.

Policy-making—Radon in drinking water is an example of the difficulties that agency rulemaking encounters when dealing with uncertain causation. A committee of external experts found that the U. S. Environmental Protection Agency's (US EPA) “policy and regulations are frequently perceived as lacking strong scientific foundation.” 5 The regulatory process for radon began in 1974, and the scientific basis for regulating it still had not gone to Congress by late 1993. The US EPA based its risk calculations on an unpublished study, estimating that approximately 200 excess cancer deaths would occur in the US per year, and that the cost of each death averted would be approximately 3 million dollars. However, the US EPA Science Adviser asked the agency to consider the “enormous uncertainty” about the risk, and that the “maximum contamination level” might be in the range of 1500 to 2000 pCi/liter, rather than the agency's 300 pCi/liter. The US EPA Science Advisory Board (SAB) had concluded that “there is no direct epidemiologic or laboratory animal evidence of cancer being caused by ingestion of radon in drinking water,” that “it is not possible to exclude the possibility of zero risk from ingested radon,” and suggested that 3000 pCi/liter would be appropriate.

Judicial review—Late in 1993, the U. S. Supreme Court addressed the extent to which scientific results could be admissible in Daubert. 6 It had been held that, given the epidemiologic evidence of the effect of Bendectin, 7 scientific evidence based on chemical structure activity, in vitro and animal tests, and recalculations that had not been peer-reviewed but were based on epidemiologic results did not meet the Frye test. 8 In Daubert, the unanimous Supreme Court held that the “general acceptance” of a scientific finding is not required for that finding to be admissible into evidence, 9 rejecting the Frye doctrine. 10 The Daubert Court stated that:

“(t)he adjective ‘scientific’ implies a grounding in the methods and procedures of science. Similarly the word ‘knowledge’ connotes more than subjective belief or unsupported speculation.” 11

This Court also added that:

“(o)f course, it would be unreasonable to conclude that the subject of scientific testimony must be ‘known’ to a certainty; arguably there is no certainty in science.” 12

The opinion properly explains the role of peer-review in science policy as a:

“[R]elevant, though not dispositive, consideration in assessing the scientific validity of a particular technique or methodology on which an opinion is premised.” (at 4809).

The Court went on to discuss the difference between legal and scientific analysis, and gave the reason for the difference: “[S]cientific conclusions are subject to perpetual revision. Law, on the other hand, must resolve disputes finally and quickly.” 13 The former is unquestionable; the latter is troublesome because not only is the judicial process notoriously slow, but, if it depends on scientific information, then it should be able to accommodate change. 14

What is the correct risk level for policy?—In Industrial Union Dept., AFL-CIO, v. American Petroleum Institute, 100 S. Ct. 2844 (1980), the U.S. Supreme Court addressed the meaning of “significant risk,” where the Occupational Safety and Health Administration (OSHA) wanted to reduce exposure to benzene in the workplace from 10 ppm to 1 ppm, based on its finding that benzene presented a “significant risk of health impairment” (Occupational Safety and Health Act, 29 U. S. C. § 655(b) (5)). The Court held that the agency had the burden of showing that “it is at least more likely than not that long-term exposure to 10 ppm of benzene presents a significant (a qualifier that does not appear in the statute) risk of material health impairment.” It thus required the agency to develop better evidence of the cause of the adverse effect (leukemia) and concluded that “safe” is not equivalent to “risk-free.” Moreover, the significance of risk is not a “mathematical straitjacket” and OSHA's findings of risk need not approach “anything like scientific certainty.” However, the Court did not reveal what factual findings would demonstrate a “significant risk.” In a footnote it stated that animal studies could support “a conclusion on the significance of the risk” and that epidemiologic evidence, even if insufficient to generate a dose-response model, “would at least be helpful” in deciding whether a risk is significant. The Court based its remand primarily on the fact that meeting the 1 ppm standard would be very costly. As the Court stated:

“The reviewing court must take into account contradictory evidence in the record…, but the possibility of drawing two inconsistent conclusions from the evidence does not prevent an administrative agency's findings from being supported by substantial evidence.”

The Court refused to determine the precise value of “significant risk” and noted that a one-in-a-billion risk of dying of cancer from drinking chlorinated water would not be significant, but a one-in-a-thousand risk of death from inhaling gasoline vapor would be. Other federal decisions have taken the more legally traditional deferential approach in their review of how an agency interprets a statute. For instance, in Chevron v. Natural Resources Defense Council, 467 U. S. 837 (1984), involving the interpretation of a section of the Clean Air Act, the Court stated that:

“[T]he Administrator's interpretation represents a reasonable accommodation of manifestly competing interests and is entitled to deference: the regulatory scheme is technical and complex, the agency considered that matter in detailed and reasoned fashion, and the decision involves reconciling conflicting policies… Judges are not expert in the field, and are not part of either political branch of the Government…When a challenge to an agency construction of a statutory provision, fairly conceptualized, really centers on the wisdom of the agency's policy, rather than whether it is a reasonable choice within a gap left open by Congress, the challenge must fail.” (at 865–866)

Is there a minimum level of risk?—If the statute is relatively clear, the reviewing court will not allow much freedom to the agency. For example, in the controversy surrounding Orange No. 17 and Red No. 19, used in cosmetics, although the Food and Drug Administration (FDA) had determined that these additives were carcinogenic in animals, the individual lifetime estimates of human risk were, respectively, 2.0 × 10⁻¹⁰ and 9.0 × 10⁻⁶ (51 Fed. Reg. 28331 (1986), and 51 Fed. Reg. 28346 (1986)). The FDA had concluded that these risks, developed through what it considered to be state-of-the-art quantitative risk assessment (but with conservative, pessimistic assumptions), were de minimis, and that the additives would therefore not be banned. However, because of the express prohibition against all color additives intended for human consumption that “induce cancer in man or animal” (Color Additives Amendment of 1960, PL 86–618; 21 U. S. C. § 376 (b) (5) (B), the Delaney Clause), the court rejected, albeit with “some reluctance,” the FDA's conclusion of de minimis risk (Public Citizen v. Young, 831 F. 2d 1108 (D. C. Cir. 1987)). See also Alabama Power Co. v. Costle, 636 F. 2d 323 (D. C. Cir. 1979) (the de minimis principle allows judicial discretion to avoid regulations that result in little or no benefit), and Les v. Reilly, 968 F. 2d 985, cert. den'd, 113 S. Ct. 1361 (1992), which affirms the prohibition of carcinogenic food additives mandated by the Delaney Clause.

EU'S “ZERO TOLERANCE” APPROACH TO SAFETY

The MRL regulation (a combination of Regulations and Directives issued by the EU in the past decade), based on the principle of “zero tolerance,” means that no concentration is safe if a substance has not been given a maximum residue limit (MRL) (for Annex 1 substances). Thus, for a subset of pharmacologically active substances, those without an MRL are unacceptable. Because limits of detection are improving (lower and lower concentrations are detected by increasingly accurate instruments), zero tolerance is equivalent to the zero risk of US law. The MRL is a requisite (Directive 2001/82/EC, the “Cascade Provisions”) for the marketing of any veterinary products used in animals that are sources of food for humans: a product containing a listed substance for which there is no MRL cannot be marketed unless it contains zero mass of the pharmacologically active listed substance. Zero tolerance protects human health. The EC Decisions 2002/657/EC and 2005/34/EC have introduced the concept of Minimum Required Performance Limits (MRPLs) as a means to reduce the otherwise draconian impact of the MRLs, when the foodstuffs limits are greater than zero and their use in foodstuffs has been duly authorized by a health agency in a third world country that exports to the EU. The concern is that the stringency of the MRL may not fairly apply to food products from third world exporters to the EU and that risk assessment methods may provide a more equitable way to control unwanted exposures in the EU's importing nations. Even though the single source implied in EU law has been shown to be incorrect, for our purposes the issue is that any blunt prohibition based on a LNT-like assumption can still cause more harm than good. Moreover, as the REACH Regulation specifically places the burden of proof on the registrant (namely, industry), it creates a possibly insurmountable burden because it is asking for proof of a negative.

The zero tolerance principle also applies to GM substances or organisms. The EU Commission staff paper (2007) contains discussions as to substances in which the residues are not harmful per se but can be harmful when incorrectly used or are physiological products of the animals themselves. We note that the approach taken in the EU allows for extrapolations (sic) between species.

The evidence of cancer (either benign or malignant) in humans can consist of epidemiological incidence or prevalence data, in vivo animal tests of different durations, and cellular and genetic tests, all the way to the shape of a molecule or to the form of radiation, to understand how the substance affects an organ or tissue. This evidence can be uniquely informative about the incidence of specific cancers (e.g., asbestos and mesothelioma) in humans, but often cannot be fully explanatory of cause and effect. In general we can think of weight-of-evidence, strength-of-evidence, modes of action and so on to understand how exposure causes cancer in humans. The EU and its Member States have different views of the importance of that evidence and, more importantly, on the type of model of dose-response used to assess the risk associated with exposure. In general, the basis is an LNT (such as a MS model, for instance, in which the interpolation to zero is linear from the lowest measured response) or a threshold model. Notably, none of those approaches allows for beneficial effects at low doses (Seely et al., 2001). If a carcinogen is deemed to act directly on a gene, then there is no threshold for that carcinogen: it is characterized by the LNT behavior (VC and benzene are examples because the evidence is epidemiological). But there are exceptions where the approach involves a tumorigenic dose (TDx%) that has been determined to cause cancer in 25% (or less) of the animals in a study: the tolerable exposure level for humans is 1,000 times lower than the TDx%. When a carcinogen's mode of action is epigenetic, its effect can be characterized by an experimental threshold (and thus the cognizant public agency uses factors of safety to develop a tolerable dose for humans); an example is trichloroethylene. Some Member States do not use these approaches but rely on consensus about the dangerousness of an exposure.

Overall, the variability in the use of dose-response models to set acceptable or tolerable doses is rather extreme: some countries use an LNT model; others do not, and prefer to use the NOAEL (or LOAEL) and thus a threshold approach, in which the experimental exposure is decreased, through a factor of safety, to establish a legally justified acceptable exposure.
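The two routes to a tolerable dose described above reduce to simple divisions, which the following minimal sketch illustrates. The function names, the units (mg/kg-day), the dose values, and the two tenfold safety factors are all hypothetical assumptions introduced for illustration; only the division of the tumorigenic dose by 1,000 and the division of the NOAEL by a factor of safety come from the text.

```python
import math


def tolerable_dose_from_td(td_x, divisor=1000.0):
    """Non-threshold route: tumorigenic dose divided by a fixed factor."""
    if td_x <= 0 or divisor <= 0:
        raise ValueError("dose and divisor must be positive")
    return td_x / divisor


def tolerable_dose_from_noael(noael, safety_factors):
    """Threshold route: NOAEL divided by the product of the safety factors."""
    if noael <= 0 or any(f <= 0 for f in safety_factors):
        raise ValueError("NOAEL and safety factors must be positive")
    return noael / math.prod(safety_factors)


# Hypothetical inputs, in mg/kg-day.
td_25 = 5.0    # assumed tumorigenic dose for 25% of test animals
noael = 10.0   # assumed no-observed-adverse-effect level

print(tolerable_dose_from_td(td_25))
print(tolerable_dose_from_noael(noael, (10.0, 10.0)))
```

Neither calculation can return a dose at which the predicted effect is beneficial: both only scale an observed adverse-effect dose downward, which is the limitation noted in the text.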

Public information—Against this complex background of science and law, the dissemination to the public of biased scientific information by federal agencies became the concern of the Congress and led to the Data Quality Act, DQA, (2002, P.L. No. 106-554, §515), amending the Paperwork Reduction Act (44 USC 3501 et seq.). Congress' concern was raised by the number of requests for data from the public and the fear that the information provided by the agencies that fall under this congressional mandate (executive departments and so on) could be incorrect and possibly cause damage to society. “Information” is defined in the DQA to include “statistical information”. The DQA was formalized through its Guidelines (67 FR 8452) by the Office of Management and Budget (OMB). Specifically, “quality” is a composition of “integrity, objectivity, and utility” of the information (67 FR 8659). On the surface, the US EPA appears to meet the OMB guidelines, which state that information needs to be “objective, realistic, and scientifically balanced” (US OMB, 2002; 67 FR 8452). In addition to reasoning about cause and effect, under the DQA and the OMB's Guidelines, US federal agencies must use “sound statistical methods.” Accordingly, there is no limit to the methods that must be used, other than that they be sound. 15 However, it remains to be proven by the agency that its policy-making results in “objectivity, realism and scientific balance”. For example, it is well known that peer reviews can be biased and that peer-reviewed articles end up being corrected or retracted. In the context of public decision-making, it seems appropriate that the standards of review should be greater than the standard of scientific peer review of journal articles because the stakes for society are far higher than the mere acceptance or rejection of a paper in the literature.

Central to causal reasoning for risk assessment, investigations (e.g., Ottenbacher, 1998) have shown that peer-reviewed articles in epidemiology commonly fall short of good statistical practices that would limit false positives to reported nominal rates. Causal inferences drawn in peer-reviewed epidemiology and risk assessment papers often fall far short of the normative requirements for valid causal inferences (e.g., Ricci and MacDonald, 2006).

How to deal with the issue we have identified may lie in exploring how a risk analysis can find a superior solution, in support of public decision-making, when there is disagreement. Dealing with complex causality involves often-irreducible uncertainties. The very difference in the magnitude of the outcomes (for a given acceptable risk level) between the LNT and the J-shaped dose-response model has value: it informs the decision-makers about what can be expected statistically, and that information can be coupled (depending on the law) with the costs associated with prevention. The rational assumption is that society wishes to maximize its net discounted expected benefits, accounting for direct, indirect, tangible and intangible costs and benefits, and not merely the reduction in risk, if any. As we will discuss, the conjectural reduction in risk associated with the LNT, when it is the incorrect choice, does not reduce risk relative to the alternative J-shaped dose-response model: it actually increases risk precisely in the domain where it should not do so. In this context, asymmetry of information remains important. Even though scientific information and knowledge are open and can be carefully scrutinized and made public through judicial proceedings, by agencies and authorities via Criteria Documents, and so on, much of the relevant research is either unpublished or the databases are unavailable for independent assessment, even when FOI legislation exists (as compliance can be stalled). Moreover, the temporal aspect of open information inherently has some transitory elements: evidence contrary to the published findings may take years to become available (as the vaccine-autism 2011 scandal clearly demonstrates). And, by the time this evidence is discredited, the perception of danger is formed and seemingly unchangeable in people's minds.

A EUROPEAN UNION INSIGHT: REACH REGULATION

In the EU, the REACH Regulation, which has direct force of law in the EU's Member States, has a very specific policy approach in which the “polluter pays” and the “precautionary principle” combine. Nonetheless, because the need for a causal assessment remains, can REACH also be causing more harm than good? The wording of REACH is that:

This Regulation should ensure a high level of protection of human health and the environment as well as the free movement of substances, on their own, in preparations and in articles, while enhancing competitiveness and innovation. This Regulation should also promote the development of alternative methods for the assessment of hazards of substances.

The REACH Regulation:

… is based on the principle that it is for manufacturers, importers and downstream users to ensure that they manufacture, place on the market or use such substances that do not adversely affect human health or the environment. Its provisions are underpinned by the precautionary principle.

This is a very different command from that of US laws because, in Europe, the Precautionary Principle is an expressed concern that has been a fundamental constitutional basis to justify environmental choices: it trumps the effect of scarce information on potential public exposure to agents that could cause serious or irreversible harm (cancer or other adverse health or environmental effects). If the EU were to use the LNT because of a lack of certainty about a mechanism of action, the choice would prima facie appear to be conservative and thus protective (at this point costs of control are irrelevant because of the “polluter pays” principle). Yet, the combination of both principles creates a paradox: at levels of risk below 0.01 the LNT is unknowable, as opposed to being uncertain, but the B-H is, as we have shown, not only knowable but can also be replicated, provided that the experiment is not biased to support linearity (for example, an animal bioassay experiment that uses the MTD and fractions of the MTD, plus a control group, generally fails to provide information about a nonlinear dose-response relationship). Specifically, we cannot find evidence for cancer that supports the LNT at very low doses. Thus the quandary: how can REACH protect when it uses the Precautionary Principle and when that principle is factually based on the LNT? Under REACH, the evidence to be put forward includes:

… The evaluation of non-human information shall comprise:

– the hazard identification for the effect based on all available non-human information;

– the establishment of the quantitative dose (concentration)—response (effect) relationship.

… When it is not possible to establish the quantitative dose (concentration)—response (effect) relationship, then this should be justified and a semi-quantitative or qualitative analysis shall be included. …

The REACH Regulation also states that:

If one study is available then a robust study summary should be prepared for that study. If there are several studies addressing the same effect, then, having taken into account possible variables (e.g. conduct, adequacy, relevance of test species, quality of results, etc.), normally the study or studies giving rise to the highest concern shall be used to establish the DNELs and a robust study summary shall be prepared for that study or studies and included as part of the technical dossier. Robust summaries will be required of all key data used in the hazard assessment. If the study or studies giving rise to the highest concern are not used, then this shall be fully justified and included as part of the technical dossier, not only for the study being used but also for all studies demonstrating a higher concern than the study being used. It is important irrespective of whether hazards have been identified or not that the validity of the study be considered.

REACH Regulation (EC 1907/2006) aims to ensure a high protection of human health and the environment (OJEU, Title I General Issues, Chapter 1, Article 1).

As REACH is a Regulation, every member state of the European Union has to integrate it in its national legislation exactly as it is, unlike a Directive. The question concerning the health risk assessment of chemicals that fall under REACH is: How are the safe dose-levels determined? A corollary question is: what is the flexibility inherent to using alternative dose-response models, including those that go against the orthodox LNT or threshold models? Clearly, REACH, being an administrative tool, suggests little flexibility (to avoid violating the Precautionary Principle) while permitting the implementation and enforcement of REACH at the lowest possible societal costs. According to Chapter 5, Health Risk Assessment of the European environment- state and outlook 2010, “all human health risk assessments of chemicals include hazard identification, dose-response assessment, exposure assessment and risk estimation/characterization”. The REACH Regulation requires that the manufacturer or importer of specific substances submit a registration to the European Chemicals Agency. Nothing is mentioned in the REACH Regulation about this flexibility: the dose-response assessment is defined as “the estimation of the relationship between dose, or level of exposure to a substance, and the incidence and severity of an effect (CEC, 1993)”. REACH requires different procedures to be executed by the manufacturer or transporter in order to “ensure a high level of protection of human health and the environment.”

Part B, Hazard Assessment, of the guidance on information requirements and chemical safety assessment describes the different steps that should be taken in order to guarantee maximum health and environmental protection. Information about human health endpoints such as “acute toxicity, irritation and corrosivity, sensitisation, repeated dose toxicity, mutagenicity, carcinogenicity, and reproductive toxicity as well as any other available information on the toxicity of the substance” should be collected and assessed. The information will be used to set the dose descriptors, which are the equivalent of the well-known toxicological NOAEL. That is: “If necessary, modify the dose descriptor to the correct starting point and calculate the overall assessment factor based on all the uncertainties involved in the assessment.” 16 REACH contains reference and language regarding the Derived No-Effect Level (DNEL), which “represents a level of exposure above which humans should not be exposed.” 17 When no DNEL can be derived, “REACH requires a qualitative assessment to be performed.” Also, the guidance booklet states that “for non-threshold endpoints, if data allow, the development of a (semi) quantitative reference value (the DMEL, derived minimal effect level) may be useful.” The question that arises and is not answered by the REACH Regulation (and thus the purpose of this paper) is the efficiency of such guidance for the application of the REACH Regulation: again more harm is likely to be done, rather than less.

CONCLUSION

When the stakes for society are very high, facts should rule, not assumptions. When an agency focuses exclusively on the harmful side of exposure at low doses, ignoring its beneficial effects, it contradicts the statutory mandate to protect health adequately. At low probabilities, effects can be benign rather than harmful, as the US EPA assumes when it uses the LNT. This situation demonstrably leads to distorted resource allocations and to regulations or guidelines that increase rather than reduce human health risks (Bryer, 1993). The problem is the default use of the LNT (as with the LMS used in cancer regulatory work in the US). Specifically, regulatory policy-science judgments should be scientific judgments, as exemplified by the simplest set of alternatives in our statement below:

“the probability that the LNT cancer model at low doses is correct, for chemical XYZ, is 0.40” against the “probability that a B-H dose-response model at those low doses is correct is 0.60”
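The statement above can be read as a pair of model weights. The following is a minimal sketch, under stated assumptions, of how such weights could combine two dose-response models into a single risk estimate. Only the probabilities 0.40 and 0.60 come from the text. The functional forms, the slope, the zero-equivalent dose, and the reading of B-H as a J-shaped (hormetic) curve are hypothetical and chosen for illustration; they are not estimates for any real chemical.

```python
# Probabilities taken from the statement in the text.
P_LNT = 0.40  # probability that the LNT model is correct
P_BH = 0.60   # probability that the B-H model is correct


def lnt_excess_risk(dose, slope=1e-3):
    """LNT: excess risk proportional to dose (slope is hypothetical)."""
    return slope * dose


def bh_excess_risk(dose, slope=1e-3, zero_equivalent=10.0):
    """Assumed J-shaped curve: excess risk is negative (a benefit) below
    the hypothetical zero-equivalent dose and positive above it."""
    return slope * dose * (dose - zero_equivalent) / zero_equivalent


def model_averaged_excess_risk(dose):
    """Probability-weighted average of the two model predictions."""
    return P_LNT * lnt_excess_risk(dose) + P_BH * bh_excess_risk(dose)
```

Under these assumed curves, the averaged excess risk at a low dose is smaller than the LNT prediction alone, because the B-H model contributes a negative term there.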

Attitude towards risk should not be included in cancer risk analysis, but neutrality should. If it were, risk aversion or proneness should be calculated along with the risk-neutral information, on a case-by-case basis. But then: whose individual aversion or proneness is an agency using?

A US EPA policy position is that this agency will examine and report on the upper end of a range of risks or exposures when it is not sure about where the risk of true concern can be found. However, it is not clear that using defaults helps to assess the (true) risks in a way that improves decisions, based on comparisons that use the same measure of success: number of deaths averted. Rather, default assumptions may simply replace an informative but uncertain estimate of the true risk with a less informative default number that carries a lower value of information (VOI) for decision-makers. When there is insufficient information, a policy default cannot decide that a risk is of trivial magnitude (assuming that all stakeholders agree on what is trivial, a legal issue). Scientific, consensus-based choices must overcome fallacies, long recognized in heuristic reasoning, as well as cognitive biases, on a case-by-case basis, because some fallacies occur in some situations and other fallacies may occur in yet other situations. More importantly, those defaults must change as the state of the information changes. The decision-theoretic approach with loss functions is useful here. Specifically, aside from measures of model performance such as AIC and BIC, the important criterion for model selection is that “a theory or model should be evaluated in terms of the quality of the decisions that are made based on the theory or model.”
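The decision-theoretic idea in the preceding paragraph can be sketched as an expected-loss comparison. This is a minimal illustration, not a method taken from the paper: the two action names and every loss value are hypothetical, and the only quantities taken from the text are the model probabilities 0.40 and 0.60.

```python
# Model probabilities from the paper's illustrative statement.
CURRENT_PROBS = {"LNT": 0.40, "B-H": 0.60}

# LOSS[action][model]: hypothetical societal loss (arbitrary units) if the
# action is taken and the named dose-response model turns out to be true.
LOSS = {
    "regulate_to_near_zero": {"LNT": 1.0, "B-H": 5.0},
    "regulate_to_threshold": {"LNT": 4.0, "B-H": 1.0},
}


def expected_loss(action, probs):
    """Probability-weighted loss of one action over the candidate models."""
    return sum(probs[model] * LOSS[action][model] for model in probs)


def best_action(probs):
    """Action with the smallest expected loss under the given beliefs."""
    return min(LOSS, key=lambda action: expected_loss(action, probs))
```

With these invented losses, `best_action(CURRENT_PROBS)` selects `regulate_to_threshold`. Re-running it with beliefs such as `{"LNT": 0.9, "B-H": 0.1}` selects `regulate_to_near_zero`, which illustrates why a choice should be revisited as the state of the information changes.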

The suggestions and concepts discussed in this paper can be summarized in a diagram (Figure 6) in which each axis measures a degree of evidence; the projections depict the overall societal value of the information, over the period t3 – t1, on the C-axis. This diagram is meant to be a simple way to organize complicated information. The A-axis measures the data (information) and the B-axis measures the causal basis for the risk analysis and thus accounts for the mathematical and statistical aspects of causal modeling as an overall index of outcome from an analysis, given the state of the information at the time that the analysis is completed and peer reviewed. The C-axis, a combination of the results from A and B, can be understood as follows: 1.0 means true causation without doubt, and so on to the lesser standard of science-policy proof that combines uncertainty and variability about the evidence in A and B; 0 means complete unavailability of data or of a causal argument, respectively.

Figure 6. Conceptual assessment of policy-science results in regulatory law.

Figure 6 attempts to deal with an overall measure of variability and uncertainty by depicting a stakeholder's summary set of beliefs, in terms of the US EPA (2004):

“The use of sophisticated uncertainty tools also involves substantial issues of science and mathematics … It is not, however, EPA's intent to suggest that full probabilistic models of cancer risks are generally feasible at this time, or that the role of a qualitative presentation of uncertainties should be diminished.” (p. 49)

We think that the implications of this statement are important for cancer policy. We conclude that dealing with the uncertainty and variability inherent to cancer risk assessment for policy making should:

Not be myopic (or circumvent the admirable research done by and for the US EPA), because Bayesian and other probabilistic and non-probabilistic methods are now so well established in the peer-reviewed literature that their use can withstand the admissibility standard of Daubert and its line of (US federal) cases dealing with the admissibility of evidence.

Be consistent with advancing the state of the art regarding the ranking of alternative choices and, seemingly, with fundamental jurisprudence and administrative law.

Be consistent with the need to avoid making “conservative” assumptions when more accurate information and knowledge is available.

Avoid promoting default reasoning.

Footnotes

ACKNOWLEDGMENTS

The authors thank Oscar Chröisty, HNU, for invaluable research assistance.

1

A legal presumption is “[a] conclusion made as to the existence or nonexistence of a fact that must be drawn from other evidence that is admitted and proven to be true.” If specific facts are established, a judge or jury must assume another fact that the law recognizes as a logical conclusion from the proof that has been introduced. A presumption differs from an inference, which is a conclusion that a judge or jury may draw from the proof of certain facts if such facts would lead a reasonable person of average intelligence to reach the same conclusion. A legal conclusive presumption is one in which the proof of certain facts places the existence of the assumed fact beyond dispute. The presumption cannot be rebutted or contradicted by evidence to the contrary. For example, a child younger than seven is presumed to be incapable of committing a felony. There are very few conclusive presumptions because they are considered to be a substantive rule of law, as opposed to a rule of evidence. A rebuttable presumption is one that can be disproved by evidence to the contrary. An assumption is a taking for granted of a fact or statement without the need for proof (Merriam-Webster on line dictionary): this contradicts the idea that an assumption must be checked after an analysis is done, if that analysis is based on that assumption. This is typically done in statistical analysis: a model is fit to data and the results are then checked against the assumptions on which the estimation procedure is based.

2

The law has a rather specific way of dealing with the admissibility of scientific evidence (e.g., at least three US Supreme Court cases discussed later in this paper), which affects scientific causal arguments.

3

Formally: risk (lifetime cumulative probability of cancer death) = f(d), where d is a suitable dose or exposure. Thus, for a policy risk level, say 10⁻⁶, we can determine the associated tolerable exposure, for example the acceptable exposure to benzene, in micrograms of benzene per cubic meter of ambient air. The f(d) must begin at zero and cannot exceed f(d) = 1.00 because probabilities are numbers between zero and one, including these two values; the function is monotonic.
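As an illustration of this footnote, the sketch below inverts a dose-response function to obtain the exposure associated with a policy risk level. The one-hit form f(d) = 1 − exp(−q·d) is our assumption for the example; it starts at zero, is monotonic, and never exceeds one, as the footnote requires. The potency q is a hypothetical number, not a benzene estimate.

```python
import math


def one_hit_risk(dose, q):
    """Assumed one-hit model: f(d) = 1 - exp(-q * d), with f(0) = 0."""
    return -math.expm1(-q * dose)


def dose_at_risk(target_risk, q):
    """Invert the one-hit model: the dose d such that f(d) = target_risk."""
    if not 0.0 <= target_risk < 1.0:
        raise ValueError("target_risk must lie in [0, 1)")
    return -math.log1p(-target_risk) / q


# Hypothetical potency, in risk per unit of exposure (illustrative only).
Q_HYPOTHETICAL = 2.0e-3
tolerable_dose = dose_at_risk(1e-6, Q_HYPOTHETICAL)
```

At such small risk levels the result is very close to the linear approximation 10⁻⁶ / q, which is why low-dose linear models are often written simply as risk = q·d.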

4

Following ![]() , what is needed goes beyond “some or many believe that…” and results in a categorical, but contingent, statement such as “given all of the relevant and robust information to date, we find that one or more

5

Symmetry of information knowledge and its processing between the parties is higher in regulatory law than in tort law. In a regulatory process, evidence standards are more liberal than in judicial proceedings and public participation enhances the ventilation of ideas and beliefs which tend to result in an equitable information base. In tort law such symmetry is lessened.

6

Daubert et al., v. Merrell Dow Pharmaceuticals, Inc., 61 L. Week 4805. The Frye test resulted in the summary judgment against the plaintiff; see, e. g., Christophersen v. Allied-Signal, No. 89–1995 (5th Cir. 1991), cert. den'd, 112 S. Ct. 1280 (1992), where the scientific testimony about the causal association between exposure to nickel and cadmium and colorectal cancer was excluded because it was not mainstream science. Some state courts have allowed into evidence scientific constructs that would fail the Frye-type test, provided that they are “sound, adequately founded … and … reasonably relied upon by experts,” Rubanick v. Witco Chemical, 593 A. 2d 733 (N. J. 1991). The Supreme Court of New Jersey held that the scope of scientific testimony should be enlarged, relative to traditional tort law, when uncertainty and lack of knowledge are present. Later, that Court also held that there should be a thorough review of “studies and other information to determine whether that information is part of that relied on by experts and then there should be a determination of whether or not those methods are supported by some expert consensus.” Landrigan v. Celotex, 605 A. 2d 1079 (N. J. 1992), at 1086.

7

In Brock v. Merrell Dow Pharmaceuticals (874 F. 2d 307 (5th Cir.), 884 F. 2d 166 (5th Cir.), reh'g en banc den'd, 884 F. 2d 167 (5th Cir. 1989)), the court established its own “scientific standard” for determining causation from the administration of Bendectin and its consequent teratogenic outcomes. The court attempted to establish a “universal” standard (statistically significant epidemiologic results) where there is “no consensus in the medical community” (at 309).

8

The Frye test (Frye v. U. S., 293 F. 1014 (D. C. 1923)) states: “(j)ust when a scientific principle or discovery crosses the line between the experimental and the demonstrable stages is difficult to define. Somewhere in this twilight zone the evidential force of the principle must be recognized, and while the courts will go a long way in admitting expert testimony deduced from a well-recognized scientific principle or discovery, the thing from which the deduction is made must be sufficiently established to have gained general acceptance in the particular field in which it belongs.” (emphasis added by the Court). Under Frye, the courts generally have not scrutinized the scientific reasoning leading to the expert's opinion, but rather have directed their attention principally to the matters (“things”) from which the opinion was derived. “General acceptance” is still a factor in admitting evidence, and a method that “has been able to attract only minimal support within the community” (citing from U. S. v. Downing, 753 F. 2d 1224 (3rd Cir. 1985), at 1238) “may be viewed with skepticism” (at 4809).

9

The US Supreme Court held that: “(t)o summarize, the ‘general acceptance’ test is not a necessary precondition to the admissibility of scientific evidence under the Federal Rules of Evidence, but the Rules of Evidence—especially Rule 702—do assign to the trial judge the task of ensuring that an expert's testimony both rests on a reliable foundation and is relevant to the task at hand. Pertinent evidence based on scientifically valid principles will satisfy those demands.” (at 4810).

10

Federal Rule 403 balances the probative value against the danger of prejudice, confusion, and so on. In general, the assumption is that a jury will hear the evidence proffered by an expert in tort cases (Ballou v. Henri Studios, Inc., 656 F. 2d 1147 (5th Cir. 1981)).

11

Ibid, at 4808.

12

Ibid, at 4808. The Court cites the American Association for the Advancement of Science's amicus brief: “(s)cience is not an encyclopedic body of knowledge about the universe. Instead it represents a process for proposing and refining theoretical explanations about the world that are subject to further testing and refinement.” (Emphasis in the original; at 4808).

13

Ibid, at 4810.

14

Ibid, Chief Justice Rehnquist concurring in part and dissenting in part, at 4810–4811.

15